Spectral Analysis of Random Graphs with Skewed Degrees

- Slides: 33

Spectral Analysis of Random Graphs with Skewed Degrees

Anirban Dasgupta

Joint work with John Hopcroft and Frank McSherry

Motivation

- Clustering: partitioning data into subsets such that the elements in each part share a common ‘trait’.
- Our interest is in clustering graph-structured data, e.g. the web or linked databases.
- The data can be represented by the adjacency matrix of the graph.

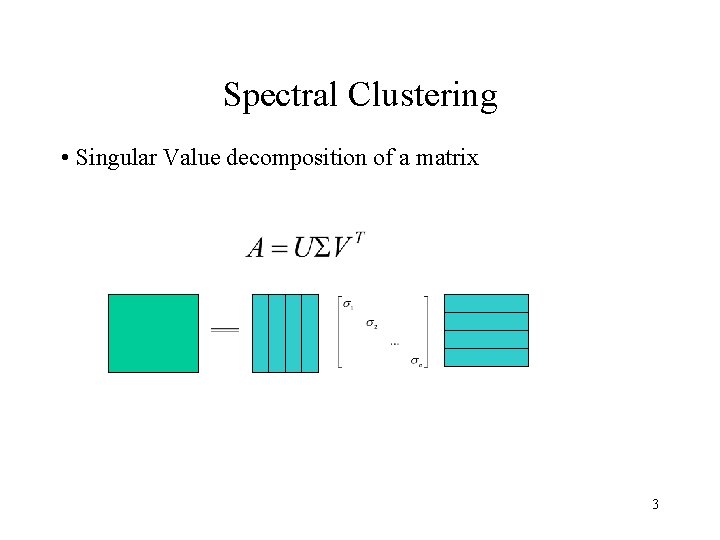

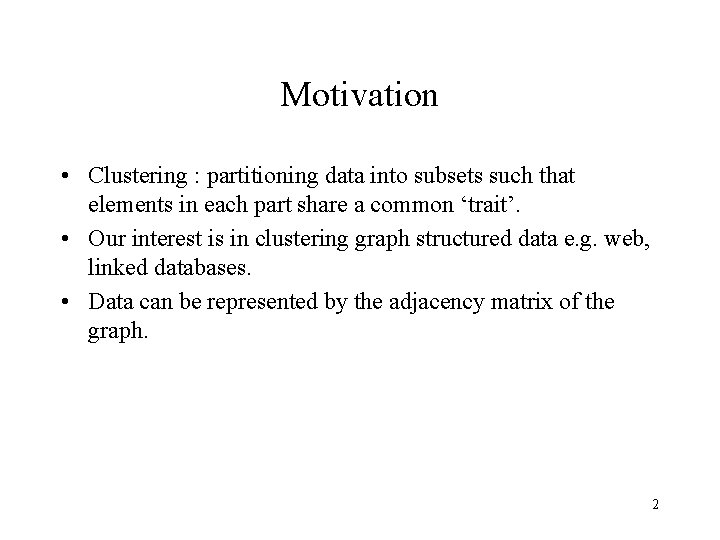

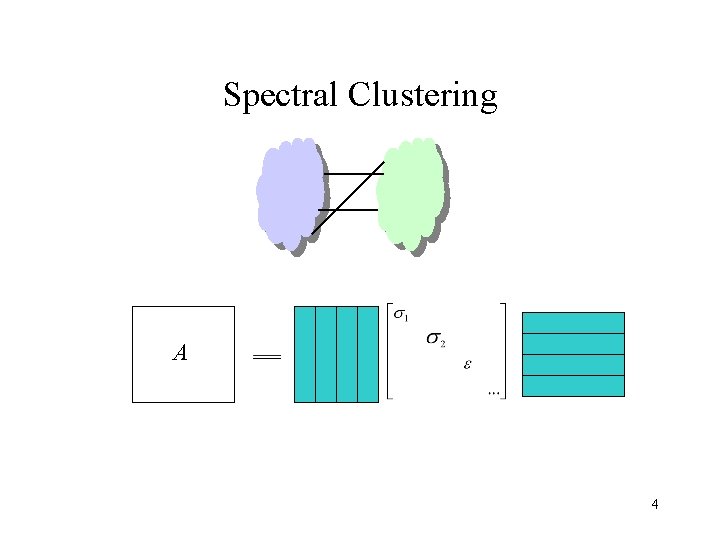

Spectral Clustering

- Singular value decomposition of a matrix

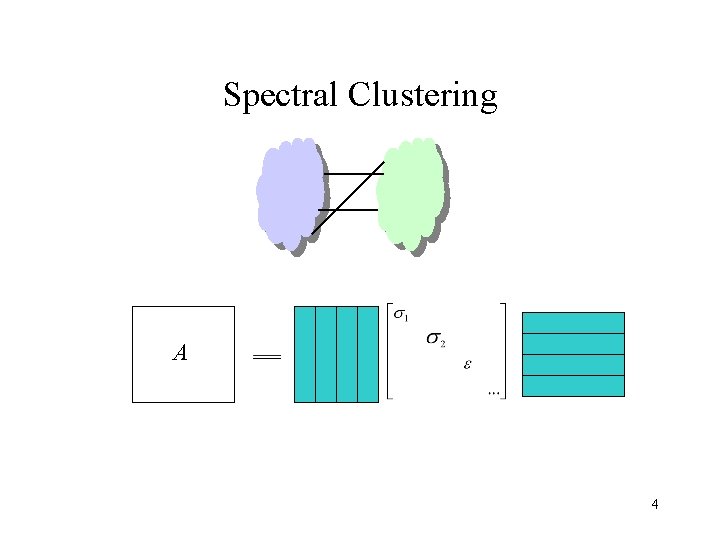

Spectral Clustering

- Matrix A

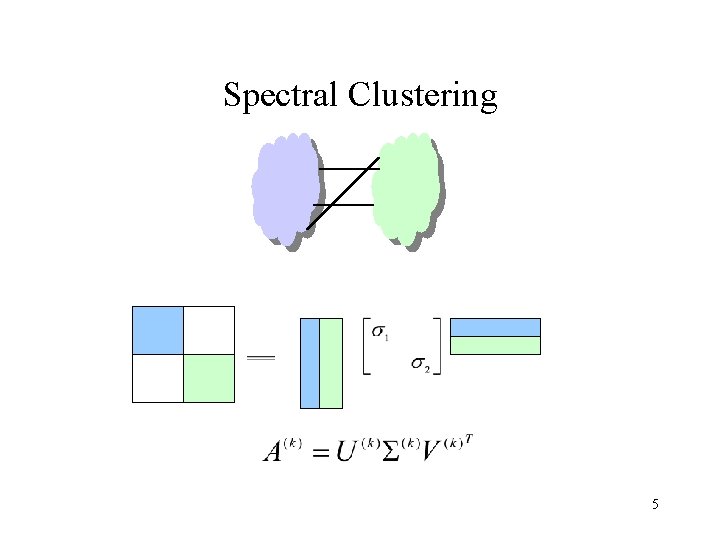

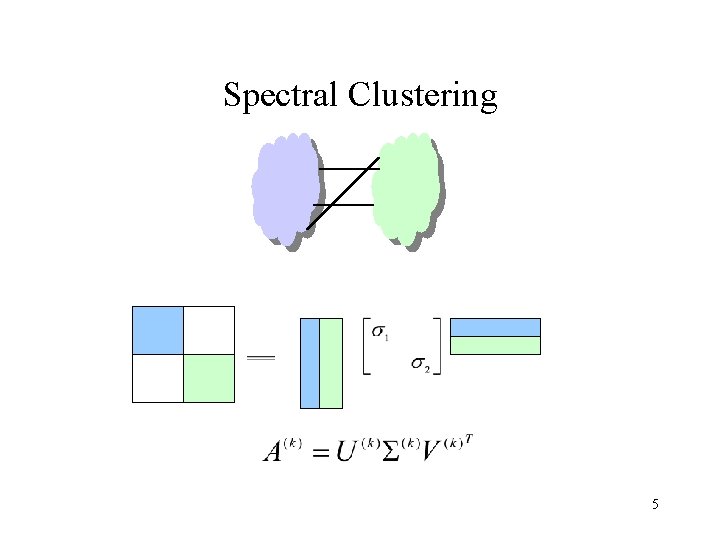

Spectral Clustering

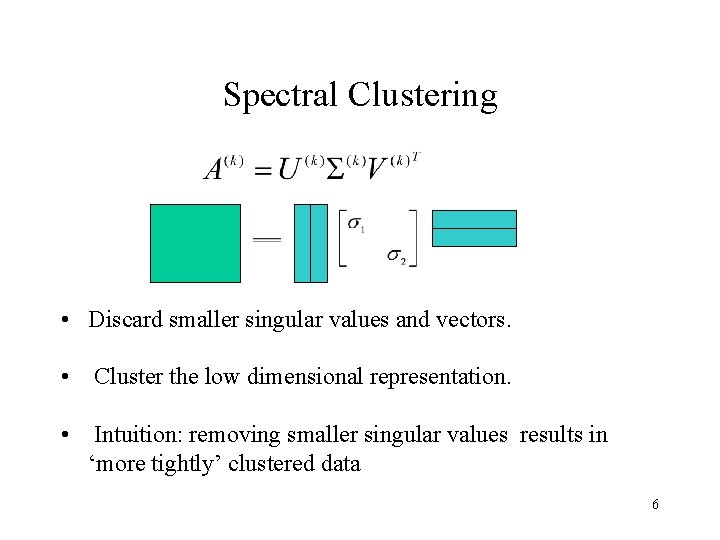

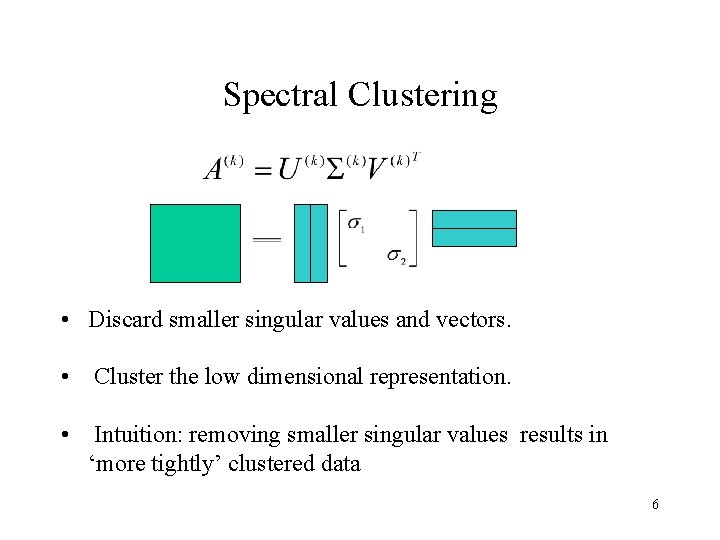

Spectral Clustering

- Discard the smaller singular values and their singular vectors.
- Cluster the resulting low-dimensional representation.
- Intuition: removing the smaller singular values results in ‘more tightly’ clustered data.
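
The three steps on this slide can be sketched in Python with NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation: the two-group test matrix, the probabilities 0.7 and 0.1, the helper names, and the greedy distance threshold are all hypothetical choices made for the example.

```python
import numpy as np

def rank_k_approximation(A, k):
    """Keep the k largest singular values and vectors of A, discard the rest."""
    U, s, Vt = np.linalg.svd(A, full_matrices=False)
    return (U[:, :k] * s[:k]) @ Vt[:k, :]

def greedy_cluster_columns(X, threshold):
    """Hypothetical helper: assign each column to the first cluster whose
    representative column lies within `threshold`, else start a new cluster."""
    representatives, labels = [], []
    for j in range(X.shape[1]):
        column = X[:, j]
        for c, rep in enumerate(representatives):
            if np.linalg.norm(column - rep) <= threshold:
                labels.append(c)
                break
        else:
            representatives.append(column)
            labels.append(len(representatives) - 1)
    return np.array(labels)

# Assumed toy data: two groups of 200 vertices, edges more likely inside a group.
rng = np.random.default_rng(0)
n, k = 400, 2
truth = np.repeat([0, 1], n // 2)
G = np.where(truth[:, None] == truth[None, :], 0.7, 0.1)
upper = np.triu((rng.random((n, n)) < G).astype(float), 1)
A = upper + upper.T                      # symmetric 0/1 adjacency matrix

A_k = rank_k_approximation(A, k)         # discard the smaller singular values
labels = greedy_cluster_columns(A_k, threshold=6.0)

# Distance between two columns of the same group, before and after truncation.
raw_spread = np.linalg.norm(A[:, 0] - A[:, 1])
reduced_spread = np.linalg.norm(A_k[:, 0] - A_k[:, 1])
```

In this toy setting the columns of the same group sit much closer together after truncation, which is the ‘more tightly’ clustered intuition from the slide.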

Spectral Clustering

- Analyze the heuristic under a probabilistic model of the data.
  - G(n, p): the Erdős–Rényi model for random graphs.
  - Trivial clustering methods perform well in this space.
- We need a model of random graphs with embedded structure.

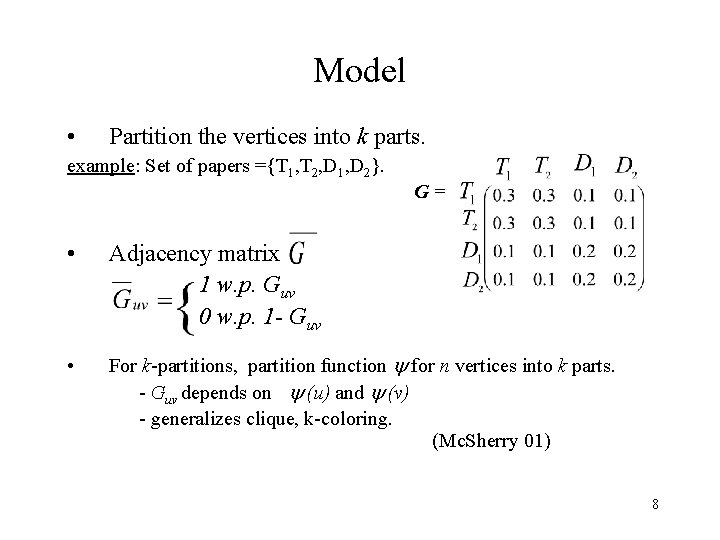

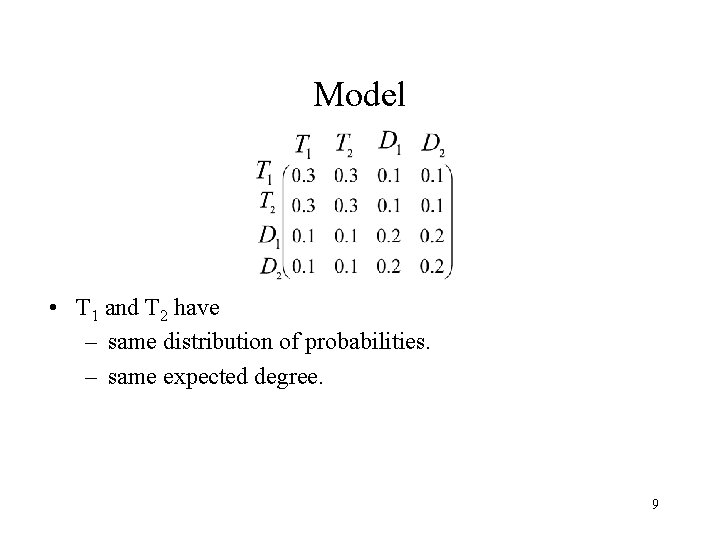

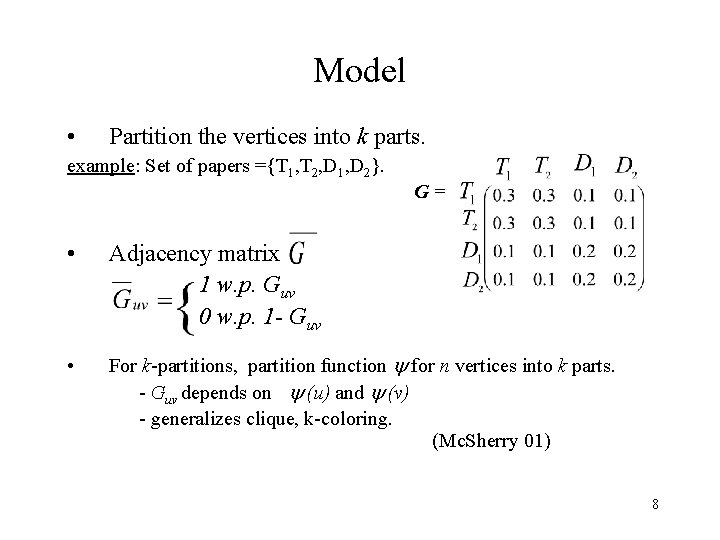

Model

- Partition the vertices into k parts.
  - Example: set of papers = {T1, T2, D1, D2}, with the probability matrix G as shown in the figure.
- Adjacency matrix: $\hat{G}_{uv} = 1$ with probability $G_{uv}$, and $0$ with probability $1 - G_{uv}$.
- For k-partitions: a partition function $\psi$ maps the n vertices into k parts.
  - $G_{uv}$ depends only on $\psi(u)$ and $\psi(v)$.
  - Generalizes clique and k-coloring (McSherry 01).

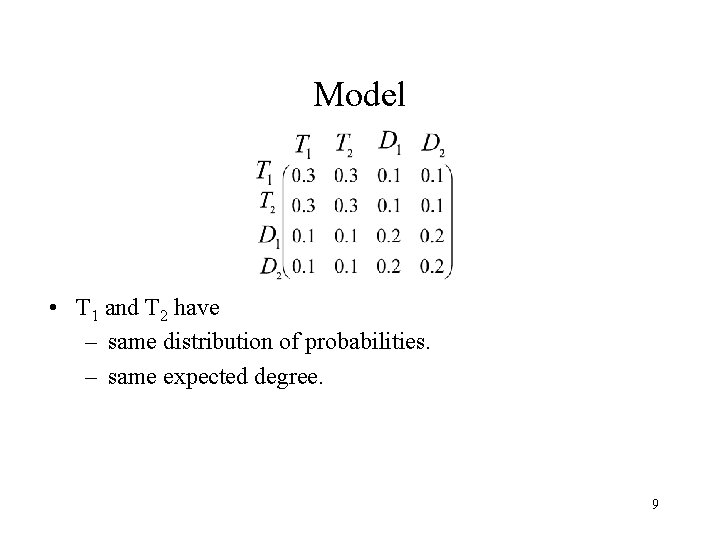
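
A small sketch of sampling from this model, in Python with NumPy. The block probabilities, the assignment of T1, T2 to one part and D1, D2 to the other, the function name, and the choice to leave the diagonal at zero (no self-loops) are assumptions made for illustration; the slide's own matrix G is only available as an image.

```python
import numpy as np

def sample_planted_partition(block_probs, psi, rng):
    """Return (G, G_hat): G[u, v] = block_probs[psi[u], psi[v]], and G_hat is a
    symmetric 0/1 matrix with G_hat[u, v] = 1 with probability G[u, v]."""
    G = block_probs[np.ix_(psi, psi)]
    n = len(psi)
    # Draw the strict upper triangle and mirror it; the diagonal stays 0.
    upper = np.triu((rng.random((n, n)) < G).astype(float), 1)
    return G, upper + upper.T

# Hypothetical example: papers T1, T2 in part 0 and D1, D2 in part 1.
psi = np.array([0, 0, 1, 1])
block_probs = np.array([[0.9, 0.1],
                        [0.1, 0.9]])
G, G_hat = sample_planted_partition(block_probs, psi, np.random.default_rng(0))
```

Because `G[u, v]` depends only on the parts of `u` and `v`, two vertices in the same part (here T1 and T2) have identical rows in `G`.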

Model

- T1 and T2 have
  - the same distribution of probabilities;
  - the same expected degree.

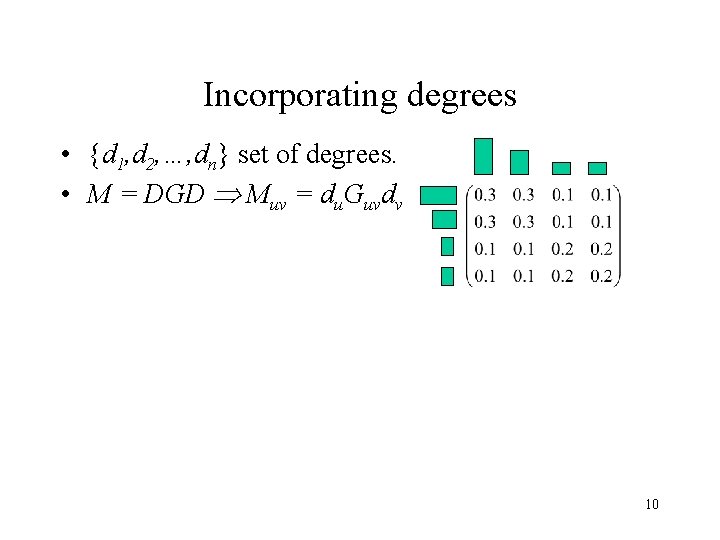

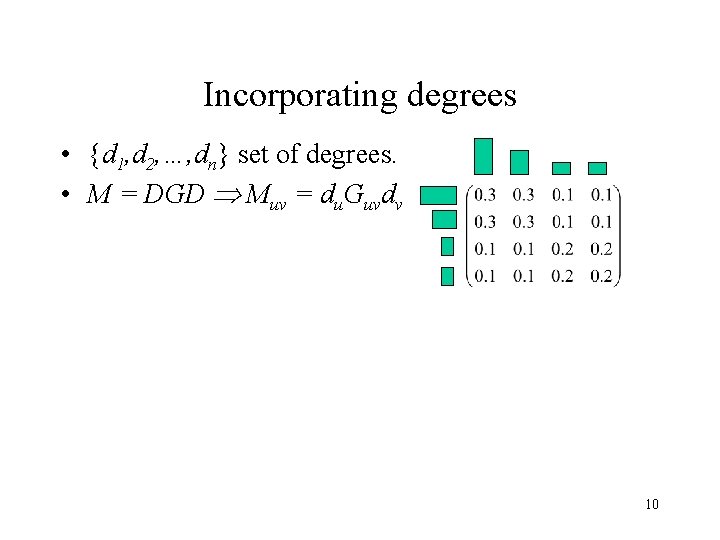

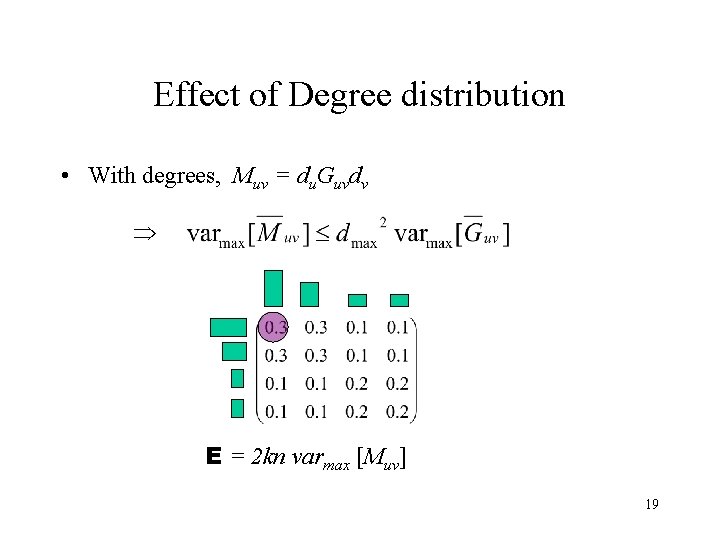

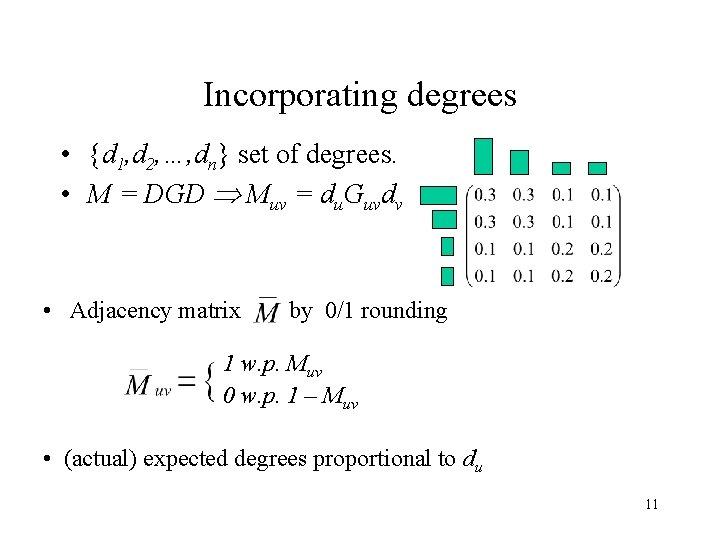

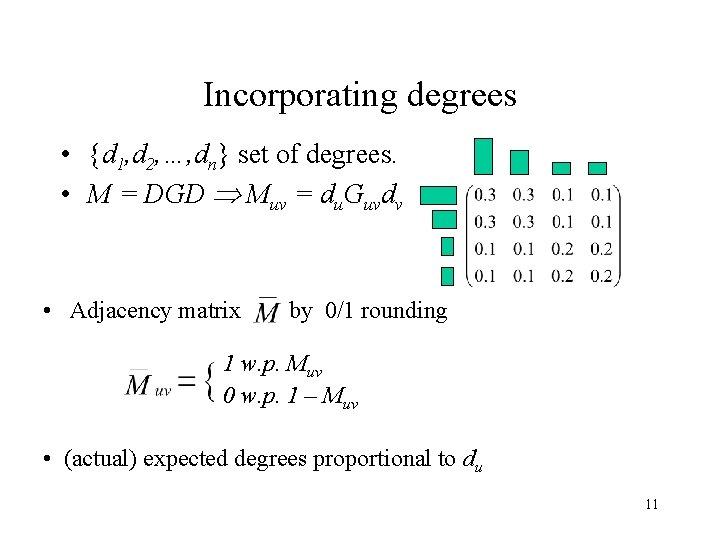

Incorporating degrees

- $\{d_1, d_2, \ldots, d_n\}$: set of degrees.
- $M = DGD$, i.e. $M_{uv} = d_u G_{uv} d_v$.
- Adjacency matrix by 0/1 rounding: $\hat{M}_{uv} = 1$ with probability $M_{uv}$, and $0$ with probability $1 - M_{uv}$.
- The (actual) expected degrees are proportional to $d_u$.

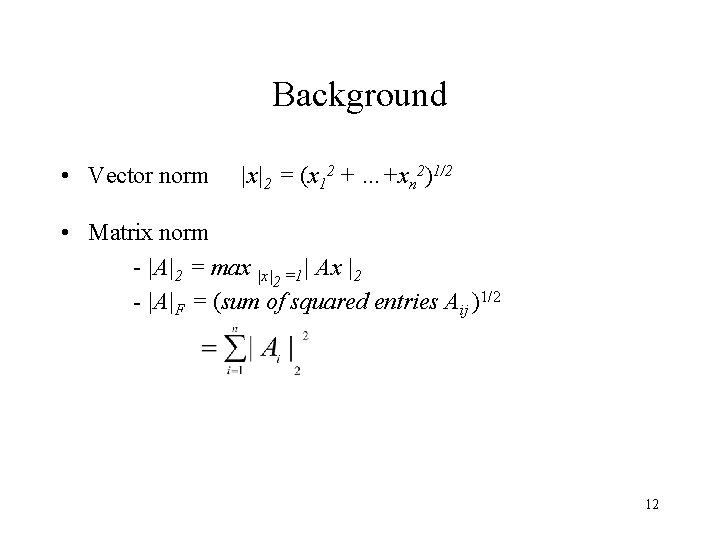

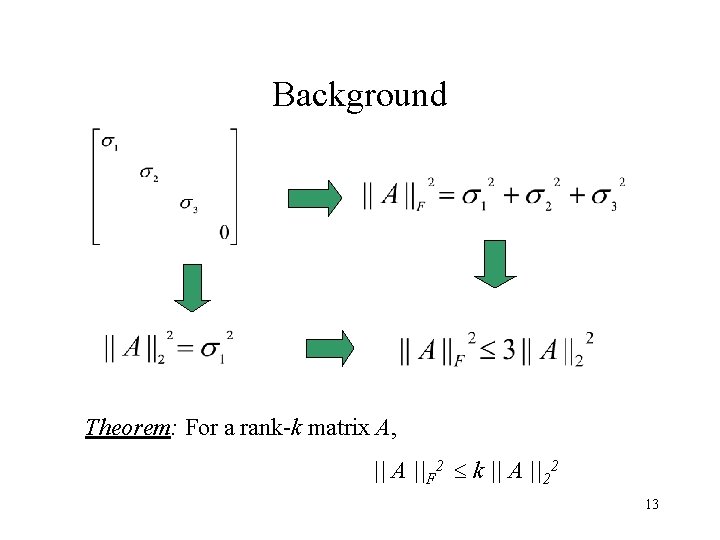

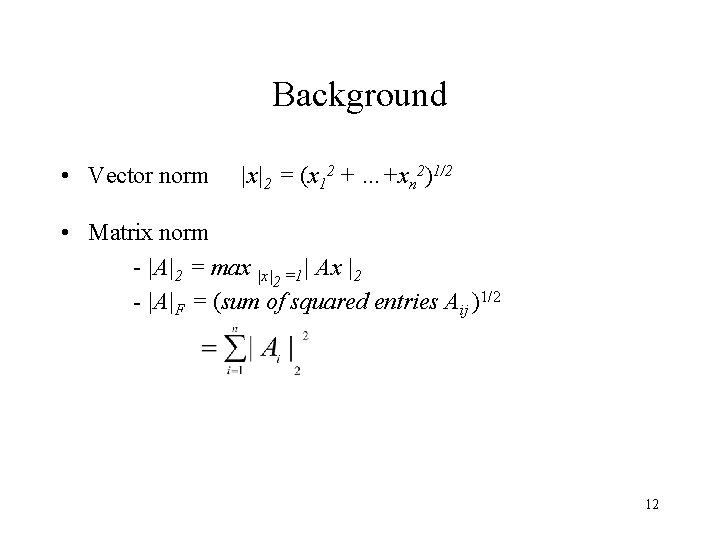
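
The construction $M = DGD$ followed by 0/1 rounding can be sketched as follows. The block probabilities, the uniform range for the weights `d` (kept at most 1 so that every entry of `M` is a valid probability), and the variable names are assumptions for this example only.

```python
import numpy as np

rng = np.random.default_rng(1)
n = 300
psi = np.repeat([0, 1], n // 2)
G = np.array([[0.8, 0.2],
              [0.2, 0.8]])[np.ix_(psi, psi)]

d = rng.uniform(0.2, 1.0, size=n)        # hypothetical degree weights d_u
M = d[:, None] * G * d[None, :]          # M_uv = d_u * G_uv * d_v, i.e. M = D G D

# 0/1 rounding: M_hat_uv = 1 with probability M_uv (symmetric, no self-loops).
upper = np.triu((rng.random((n, n)) < M).astype(float), 1)
M_hat = upper + upper.T

# Row sums of M: the expected degrees, up to the small diagonal term.
expected_degree = M.sum(axis=1)
ratio = expected_degree / d              # constant within each part
observed_degree = M_hat.sum(axis=1)
correlation = np.corrcoef(observed_degree, d)[0, 1]
```

Within a part, `expected_degree / d` is the same for every vertex, which is the sense in which expected degrees are proportional to $d_u$ here.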

Background

- Vector norm: $\|x\|_2 = (x_1^2 + \cdots + x_n^2)^{1/2}$
- Matrix norms:
  - $\|A\|_2 = \max_{\|x\|_2 = 1} \|Ax\|_2$
  - $\|A\|_F = \left(\sum_{i,j} A_{ij}^2\right)^{1/2}$ (square root of the sum of squared entries)

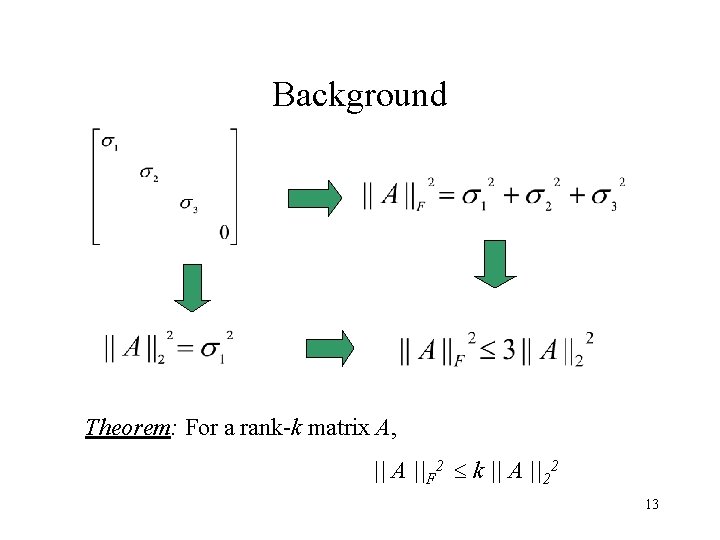

Background

Theorem: For a rank-k matrix A, $\|A\|_F^2 \le k\,\|A\|_2^2$.

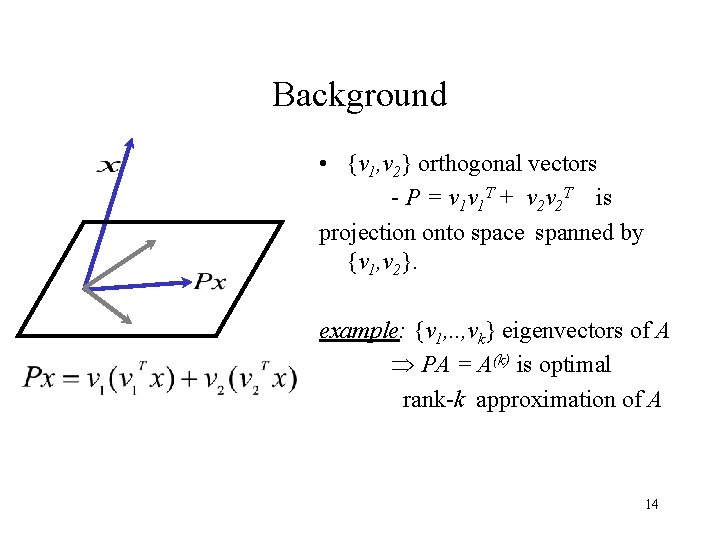

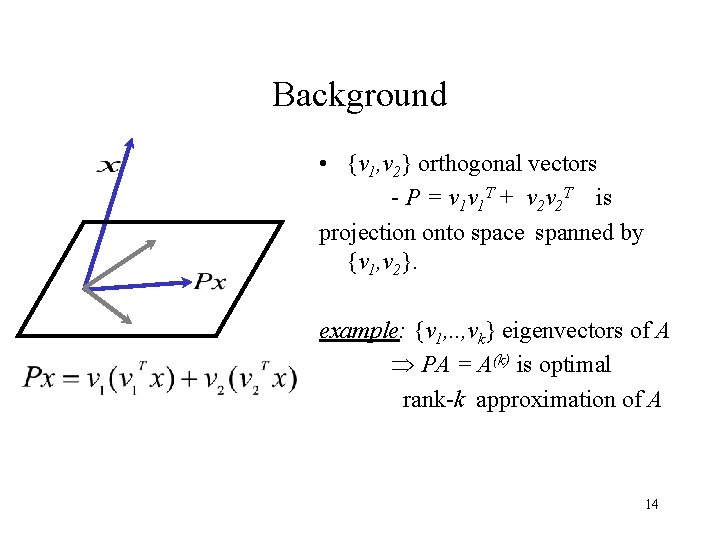
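
The inequality follows in one line from the singular values. Writing $\sigma_1 \ge \cdots \ge \sigma_k > 0$ for the nonzero singular values of the rank-k matrix A (notation assumed here, not taken from the slide), the Frobenius norm sums all of them while the 2-norm is the largest one:

```latex
\|A\|_F^2 \;=\; \sum_{i=1}^{k} \sigma_i^2 \;\le\; k\,\sigma_1^2 \;=\; k\,\|A\|_2^2
```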

Background

- $\{v_1, v_2\}$: orthonormal vectors.
  - $P = v_1 v_1^T + v_2 v_2^T$ is the projection onto the space spanned by $\{v_1, v_2\}$.
- Example: if $\{v_1, \ldots, v_k\}$ are the top k eigenvectors of A, then $PA = A^{(k)}$ is the optimal rank-k approximation of A.

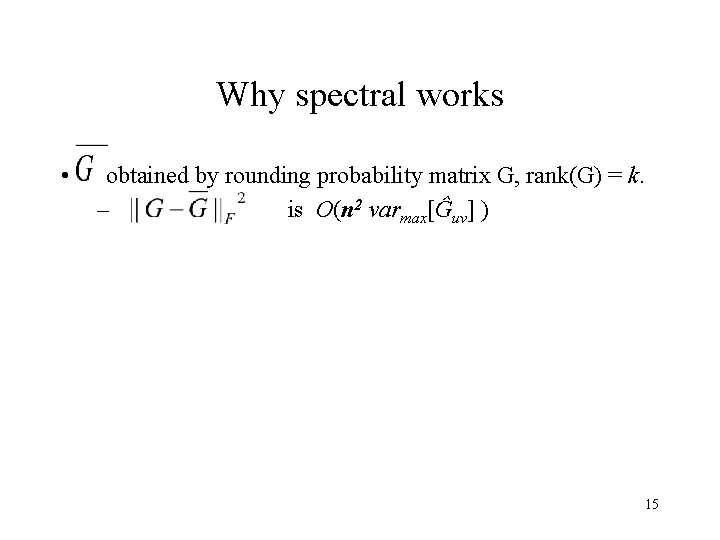

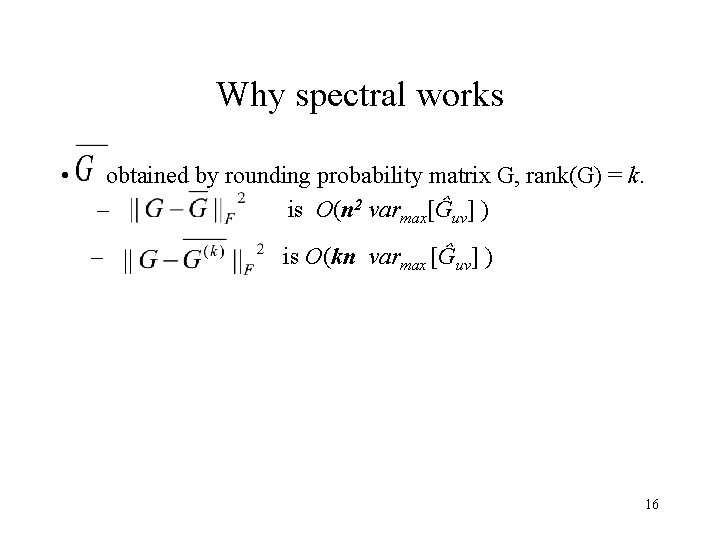

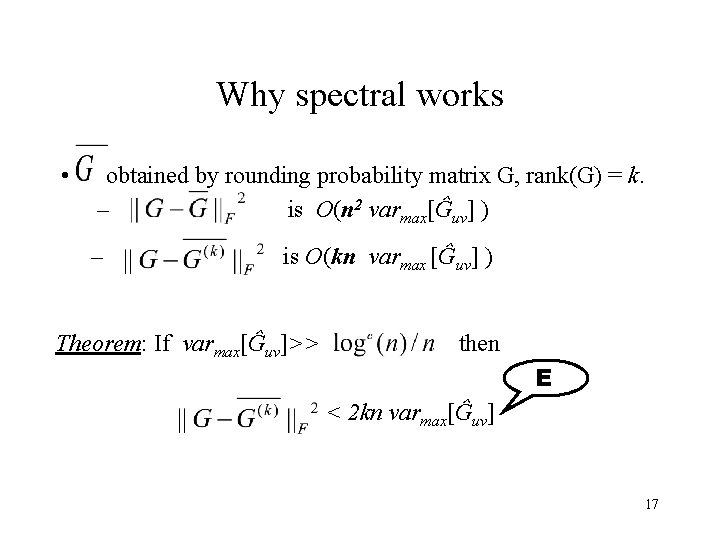

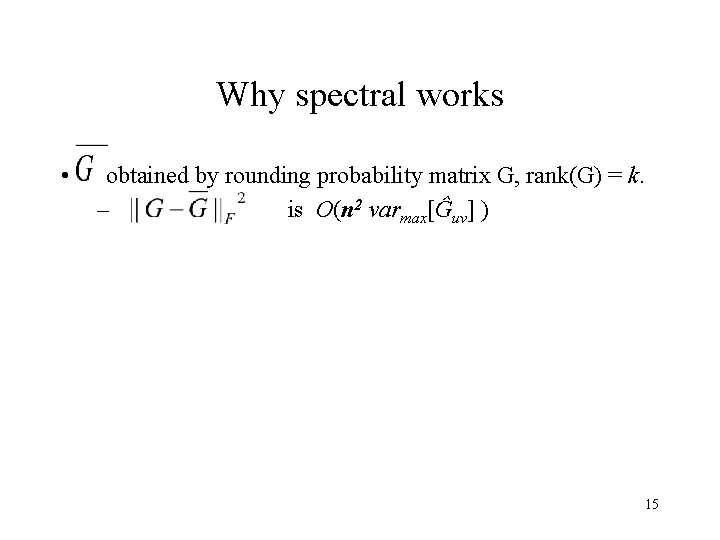

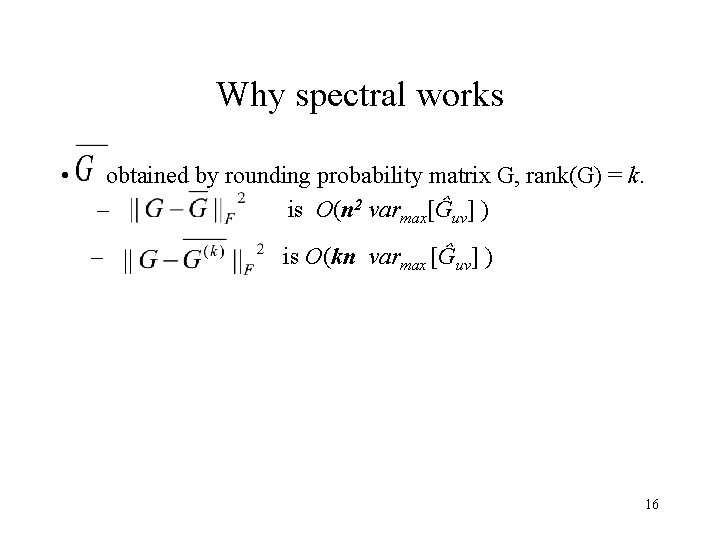

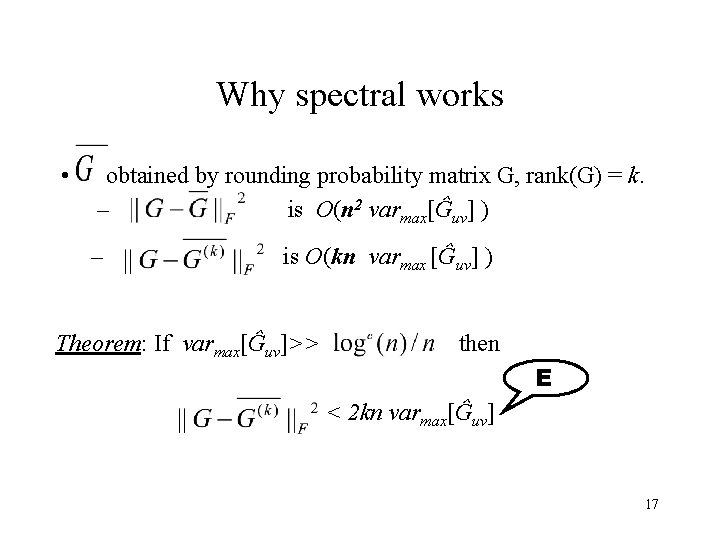
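
A quick numerical check of the projection statement, as a sketch in Python with NumPy. It assumes a symmetric matrix A (as for an undirected graph) and takes "top k" to mean the k eigenvalues of largest magnitude; the random test matrix is purely illustrative.

```python
import numpy as np

rng = np.random.default_rng(2)
B = rng.standard_normal((6, 6))
A = (B + B.T) / 2                        # symmetric, so eigenvectors are orthonormal
k = 2

eigvals, eigvecs = np.linalg.eigh(A)
top = np.argsort(-np.abs(eigvals))[:k]   # indices of the k largest |eigenvalues|
V = eigvecs[:, top]
P = V @ V.T                              # P = v1 v1^T + v2 v2^T
A_k = P @ A                              # projection of the columns of A

# Reference: rank-k truncation of the singular value decomposition.
U, s, Vt = np.linalg.svd(A)
A_k_svd = (U[:, :k] * s[:k]) @ Vt[:k, :]
```

`P` is idempotent and symmetric, and `P @ A` agrees with the truncated SVD, which is the optimal rank-k approximation in both the 2-norm and the Frobenius norm.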

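Why spectral works

- $\hat{G}$ is obtained by rounding the probability matrix $G$, with $\mathrm{rank}(G) = k$.
  - The total squared error of $\hat{G}$ as an estimate of $G$ is $O(n^2\,\mathrm{var}_{\max}[\hat{G}_{uv}])$.
  - The total squared error of its rank-k approximation $\hat{G}^{(k)}$ is $O(kn\,\mathrm{var}_{\max}[\hat{G}_{uv}])$.

Theorem: If $\mathrm{var}_{\max}[\hat{G}_{uv}] \gg$ … then $E < 2kn\,\mathrm{var}_{\max}[\hat{G}_{uv}]$.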

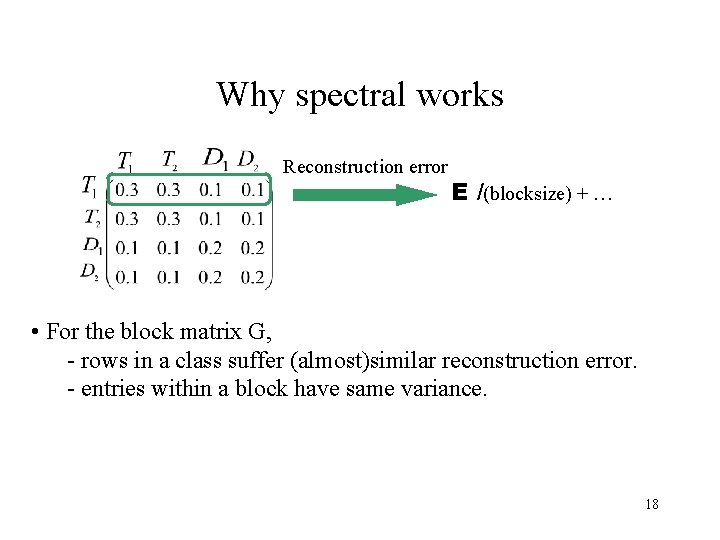

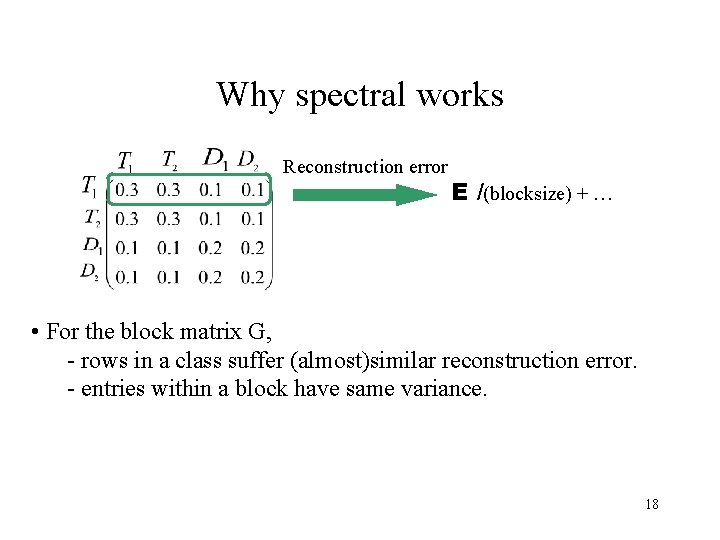
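
The gap between the $n^2$ and $kn$ error scales can be observed numerically. The sketch below assumes the errors are measured as squared Frobenius distances to $G$, and uses a hypothetical two-block $G$ with probabilities 0.6 and 0.1; it does not check the constant in the theorem, only the orders of magnitude.

```python
import numpy as np

rng = np.random.default_rng(3)
n, k = 400, 2
psi = np.repeat([0, 1], n // 2)
G = np.array([[0.6, 0.1],
              [0.1, 0.6]])[np.ix_(psi, psi)]   # rank(G) = k = 2

# G_hat: 0/1 rounding of G (symmetric, no self-loops).
upper = np.triu((rng.random((n, n)) < G).astype(float), 1)
G_hat = upper + upper.T

U, s, Vt = np.linalg.svd(G_hat)
G_hat_k = (U[:, :k] * s[:k]) @ Vt[:k, :]       # rank-k approximation of G_hat

var_max = (G * (1 - G)).max()                  # largest entry variance
err_full = np.linalg.norm(G - G_hat, "fro") ** 2
err_rank_k = np.linalg.norm(G - G_hat_k, "fro") ** 2

print(f"n^2 * var_max = {n * n * var_max:.0f}, error of G_hat     = {err_full:.0f}")
print(f"k n * var_max = {k * n * var_max:.0f}, error of G_hat^(k) = {err_rank_k:.0f}")
```

The rank-k approximation is far closer to $G$ than $\hat{G}$ itself, even though it is computed from $\hat{G}$ alone.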

Why spectral works

Reconstruction error: E / (blocksize) + …

- For the block matrix G:
  - rows in a class suffer (almost) the same reconstruction error;
  - entries within a block have the same variance.

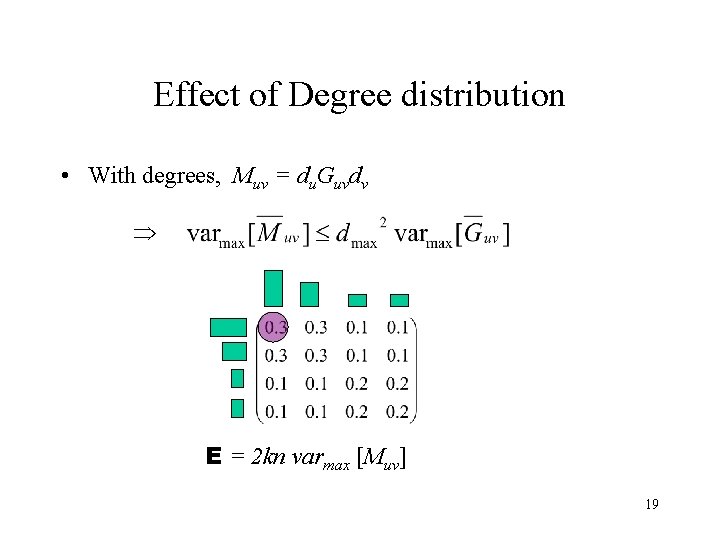

Effect of Degree Distribution

- With degrees, $M_{uv} = d_u G_{uv} d_v$.
- $E = 2kn\,\mathrm{var}_{\max}[M_{uv}]$

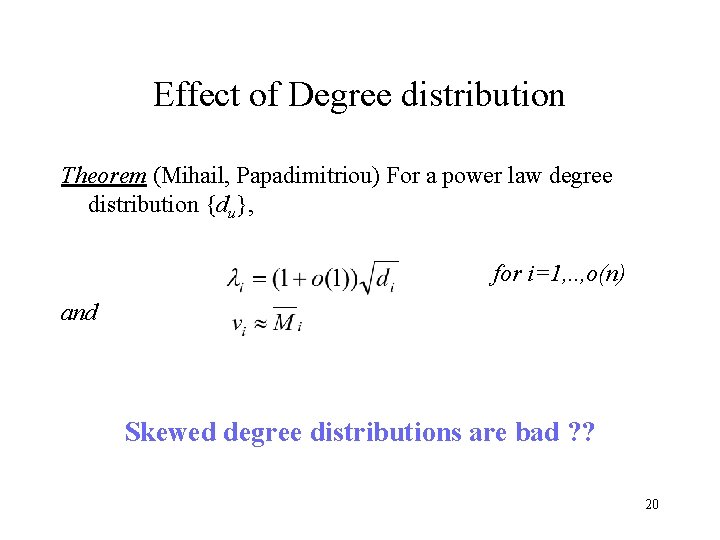

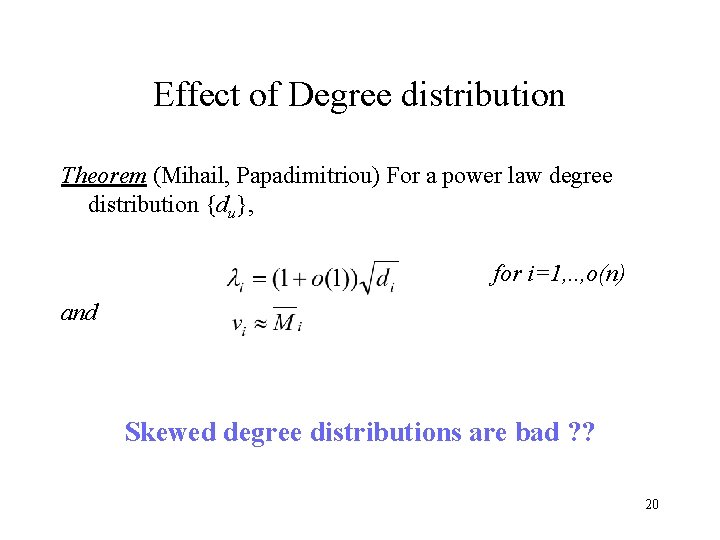

Effect of Degree Distribution

Theorem (Mihail, Papadimitriou): For a power-law degree distribution $\{d_u\}$, for $i = 1, \ldots, o(n)$ and …

Skewed degree distributions are bad??

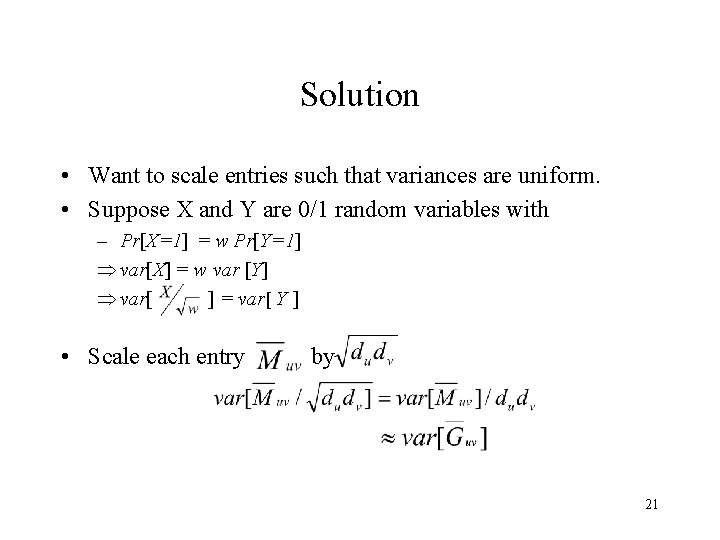

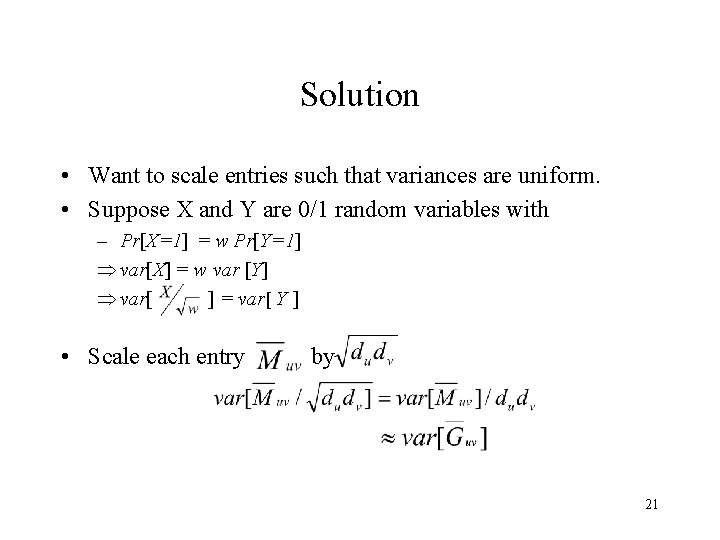

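Solution

- Want to scale the entries so that the variances are uniform.
- Suppose X and Y are 0/1 random variables with $\Pr[X=1] = w \Pr[Y=1]$. Then
  - $\mathrm{var}[X] \approx w\,\mathrm{var}[Y]$ (for small probabilities);
  - $\mathrm{var}[X/\sqrt{w}] \approx \mathrm{var}[Y]$.
- Scale each entry by: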

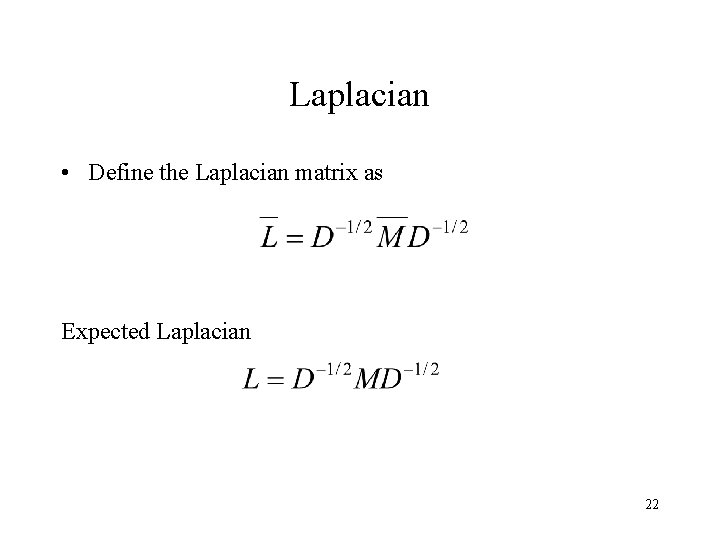

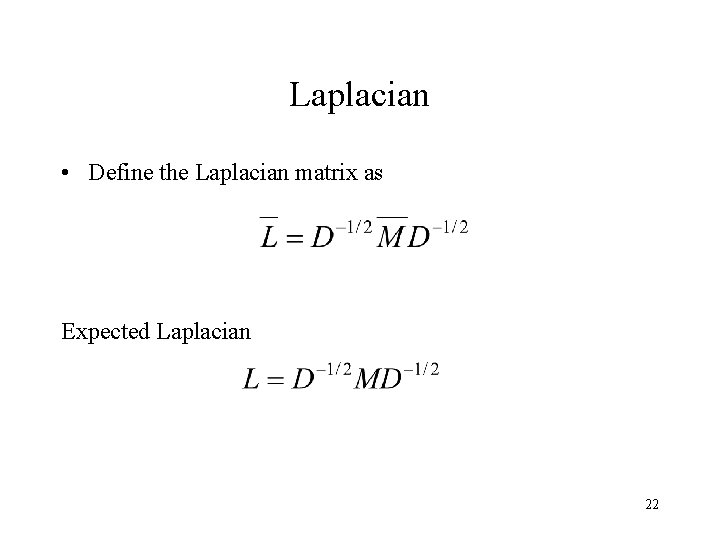
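
The scaling argument can be written out explicitly. Here $p = \Pr[Y=1]$ is notation introduced for this derivation, and the approximation assumes both $p$ and $wp$ are small:

```latex
\begin{aligned}
\Pr[Y=1] &= p, \qquad \Pr[X=1] = wp,\\
\operatorname{var}[Y] &= p(1-p), \qquad
\operatorname{var}[X] = wp(1-wp) \approx w\,\operatorname{var}[Y],\\
\operatorname{var}\!\left[X/\sqrt{w}\right] &= \frac{\operatorname{var}[X]}{w} \approx \operatorname{var}[Y].
\end{aligned}
```

With $M_{uv} = d_u G_{uv} d_v$, the factor $w$ corresponds to $d_u d_v$, which suggests dividing entry $(u, v)$ by $\sqrt{d_u d_v}$. This is an inference from the slide's setup; the slide's own scaling factor is only present as an image.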

Laplacian

- Define the Laplacian matrix as:
- Expected Laplacian:

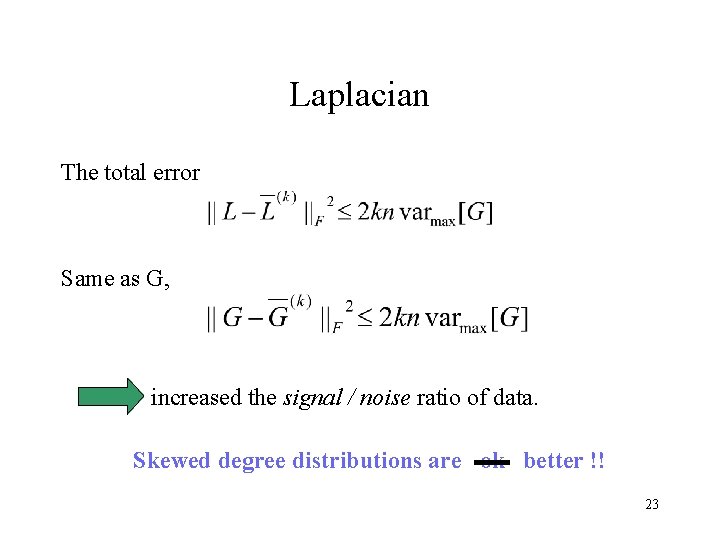

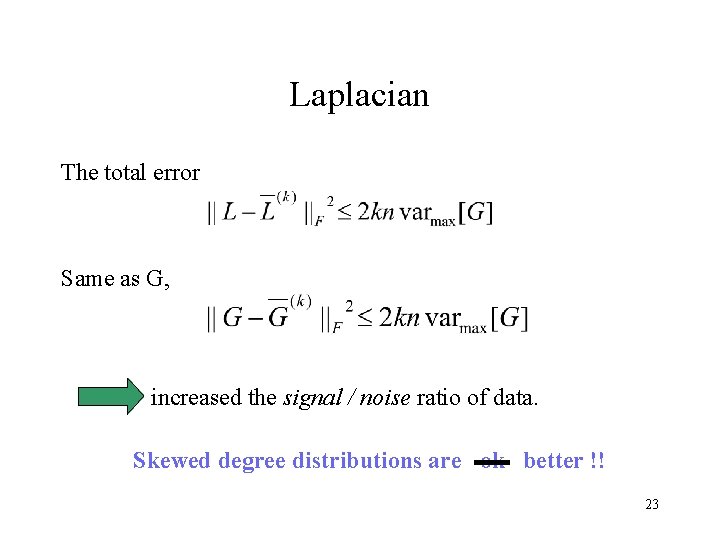

Laplacian

- The total error is the same as for G, while the signal / noise ratio of the data is increased.
- Skewed degree distributions are OK, even better!!
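
The effect of the normalization on the entry variances can be checked directly from the model, without sampling. The sketch assumes the Laplacian divides entry $(u, v)$ by $\sqrt{d_u d_v}$ (the formula itself is not in the extracted text), and uses hypothetical sparse block probabilities and linearly spaced weights `d`.

```python
import numpy as np

n = 200
psi = np.repeat([0, 1], n // 2)
G = np.array([[0.10, 0.02],
              [0.02, 0.10]])[np.ix_(psi, psi)]
d = np.linspace(0.1, 1.0, n)                     # hypothetical unequal weights
M = d[:, None] * G * d[None, :]                  # M = D G D

var_M_hat = M * (1 - M)                          # variance of a 0/1 entry with mean M_uv
var_L = var_M_hat / (d[:, None] * d[None, :])    # variance after dividing by sqrt(d_u d_v)

# Compare the largest and smallest variance inside one block (part 0 x part 0).
block = (psi[:, None] == 0) & (psi[None, :] == 0)
spread_before = var_M_hat[block].max() / var_M_hat[block].min()
spread_after = var_L[block].max() / var_L[block].min()
```

Before scaling, variances inside a single block differ by more than an order of magnitude; after scaling they are nearly uniform and close to those of the degree-free matrix G.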

Related Work

- McSherry 2001
- Chung et al.
- Use of the Laplacian for normalized graph cuts.
- Other normalizations used in IR.

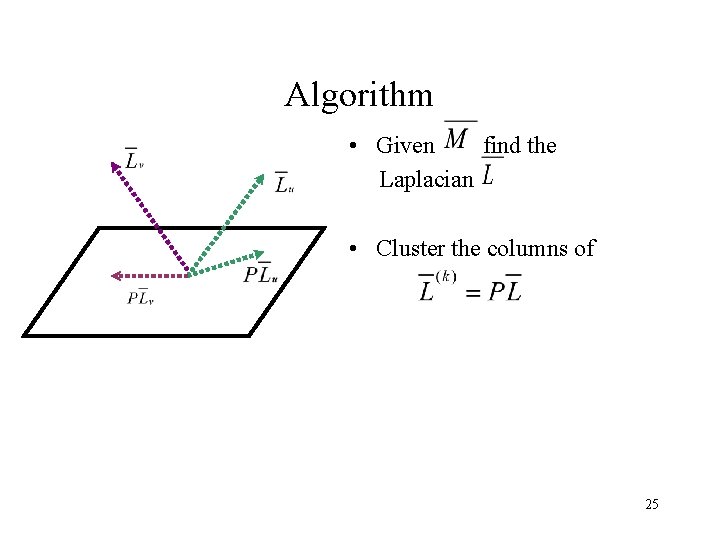

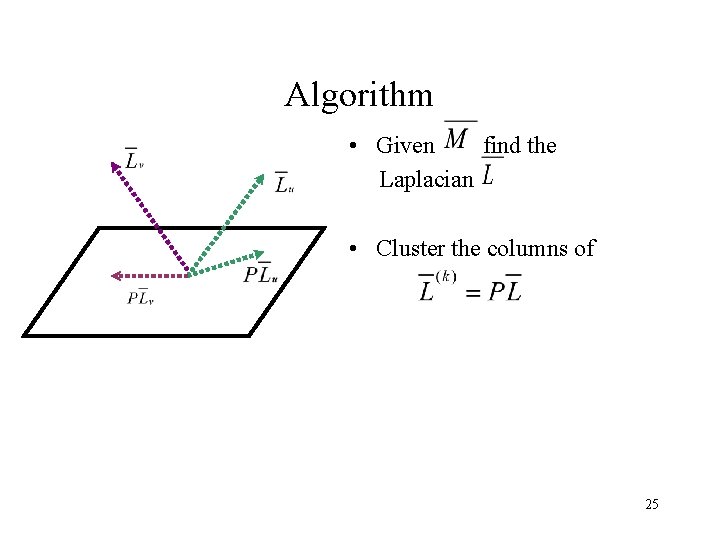

Algorithm

- Given …, find the Laplacian.
- Cluster the columns of …

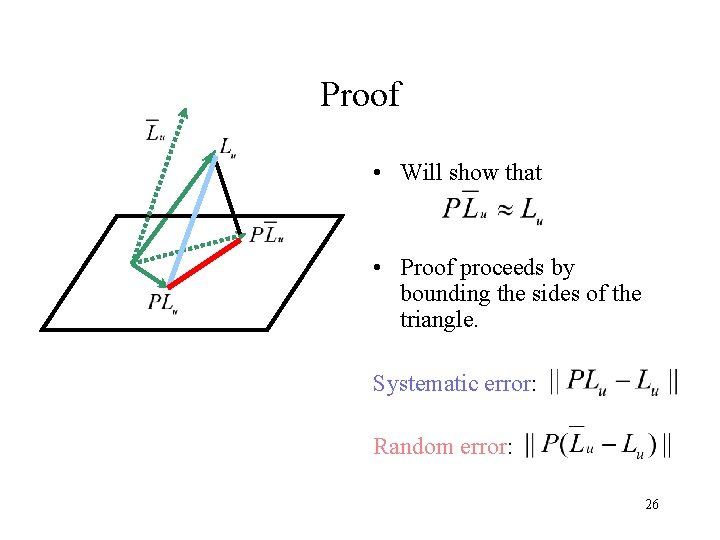

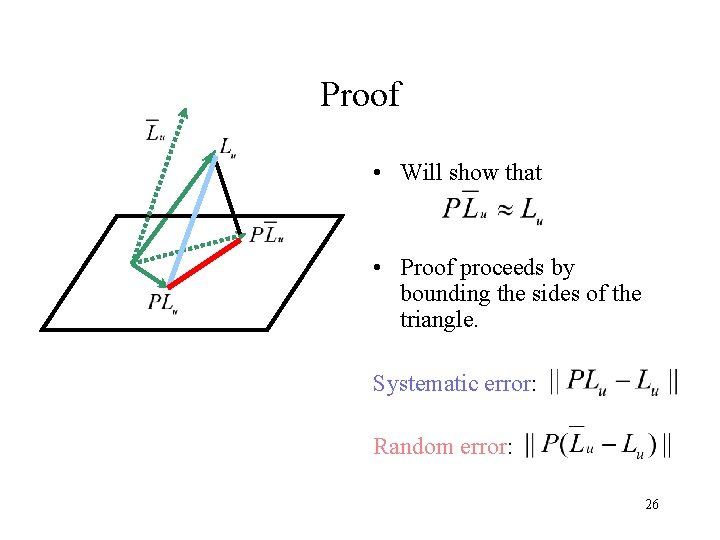
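
One possible reading of these two steps is sketched below in Python with NumPy. Several pieces are assumptions rather than the authors' algorithm: the Laplacian is taken as $D^{-1/2} \hat{M} D^{-1/2}$ with observed degrees, the clustered matrix is the rank-k approximation with column $v$ rescaled by $1/\sqrt{\deg_v}$ (so that columns of the same part agree in expectation), and a plain k-means routine stands in for the clustering step. The toy parameters are hypothetical.

```python
import numpy as np

def normalized_laplacian(M_hat):
    """Assumed form D^{-1/2} M_hat D^{-1/2}, with D from observed degrees."""
    degrees = np.maximum(M_hat.sum(axis=1), 1.0)   # guard against isolated vertices
    scale = 1.0 / np.sqrt(degrees)
    return scale[:, None] * M_hat * scale[None, :], degrees

def rank_k_approximation(A, k):
    """Keep only the k largest singular values and vectors of A."""
    U, s, Vt = np.linalg.svd(A, full_matrices=False)
    return (U[:, :k] * s[:k]) @ Vt[:k, :]

def kmeans_columns(X, k, iterations=25):
    """Stand-in clusterer: Lloyd iterations with farthest-point seeding."""
    points = X.T.copy()
    centers = [points[0]]
    for _ in range(1, k):
        dist = np.min([np.linalg.norm(points - c, axis=1) for c in centers], axis=0)
        centers.append(points[np.argmax(dist)])
    centers = np.array(centers)
    for _ in range(iterations):
        dist = np.linalg.norm(points[:, None, :] - centers[None, :, :], axis=2)
        labels = np.argmin(dist, axis=1)
        for c in range(k):
            if np.any(labels == c):
                centers[c] = points[labels == c].mean(axis=0)
    return labels

# Hypothetical toy instance of M = D G D with two parts.
rng = np.random.default_rng(4)
n, k = 600, 2
truth = np.repeat([0, 1], n // 2)
G = np.array([[0.6, 0.1], [0.1, 0.6]])[np.ix_(truth, truth)]
d = rng.uniform(0.3, 1.0, size=n)
M = d[:, None] * G * d[None, :]
upper = np.triu((rng.random((n, n)) < M).astype(float), 1)
M_hat = upper + upper.T

L, degrees = normalized_laplacian(M_hat)
L_k = rank_k_approximation(L, k)
X = L_k / np.sqrt(degrees)[None, :]     # assumed column rescaling
labels = kmeans_columns(X, k)
agreement = max(np.mean(labels == truth), np.mean(labels != truth))
```

`agreement` is the fraction of vertices placed consistently with the planted parts, up to swapping the two labels.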

Proof

- We will show that:
- The proof proceeds by bounding the sides of the triangle.
  - Systematic error:
  - Random error:

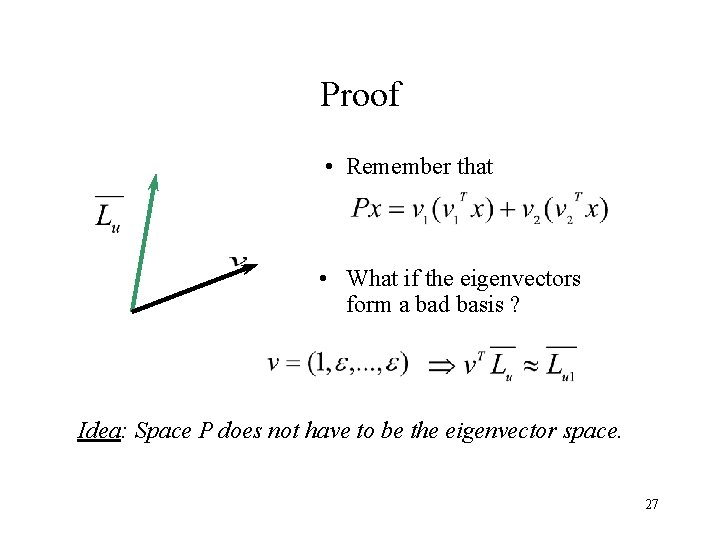

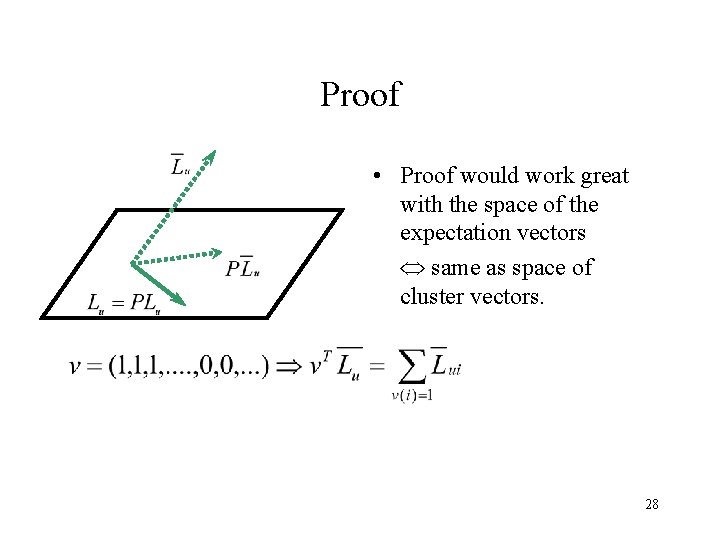

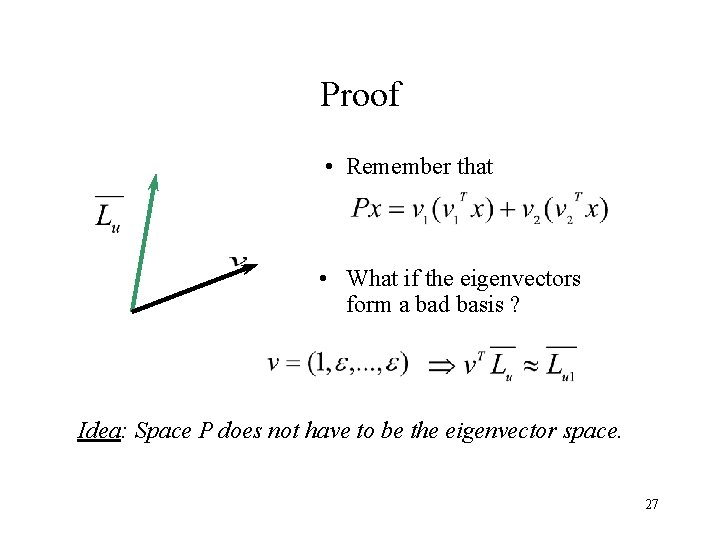

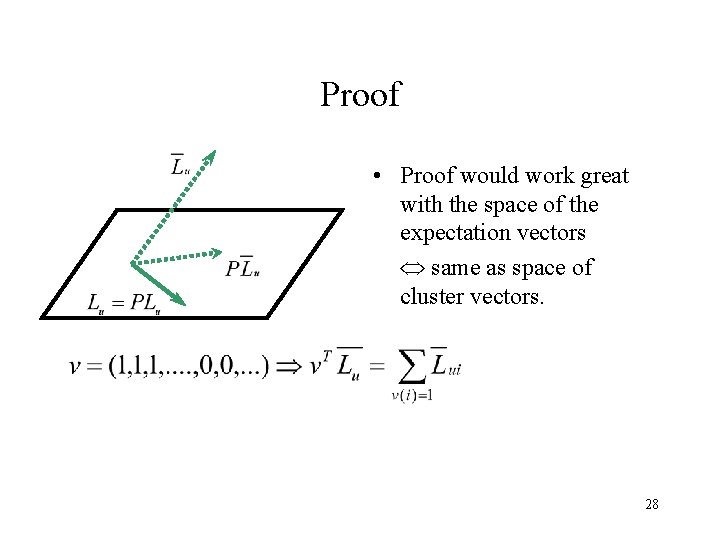

Proof

- Remember that …
- What if the eigenvectors form a bad basis?
- Idea: the space P does not have to be the eigenvector space.

Proof

- The proof would work well with the space of the expectation vectors, which is the same as the space of the cluster vectors.

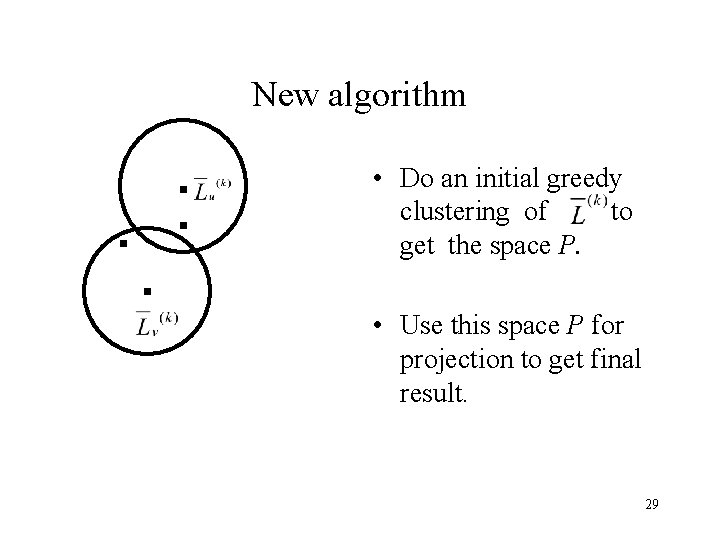

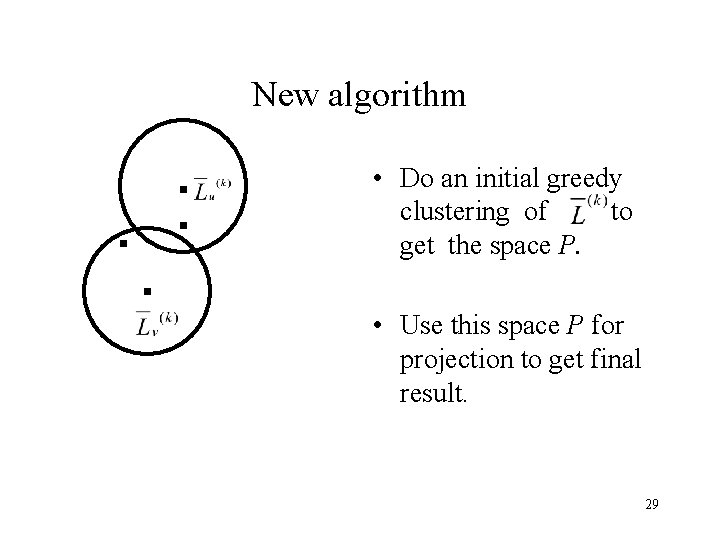

New algorithm

- Do an initial greedy clustering of … to get the space P.
- Use this space P for projection to get the final result.

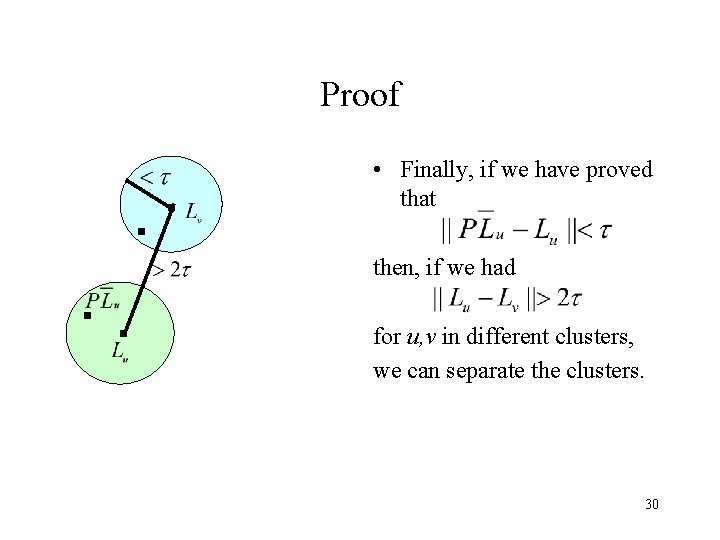

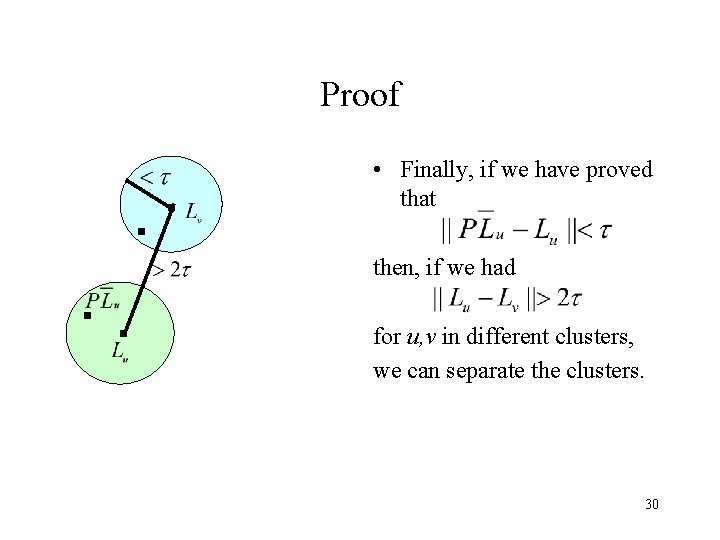
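
One way to build a projection space P from an initial clustering is to span it by the clusters' indicator vectors, optionally weighted per vertex. The slides do not spell out the construction, so the function below, its name, and its `weights` parameter are a hypothetical sketch of that idea rather than the authors' procedure.

```python
import numpy as np

def projection_from_clusters(labels, weights=None):
    """Orthogonal projection onto the span of (weighted) cluster indicator vectors.

    `weights` must be strictly positive; disjoint clusters give orthogonal
    vectors, so summing the normalized outer products yields a projection.
    """
    labels = np.asarray(labels)
    n = labels.shape[0]
    w = np.ones(n) if weights is None else np.asarray(weights, dtype=float)
    P = np.zeros((n, n))
    for c in np.unique(labels):
        v = np.where(labels == c, w, 0.0)    # weighted indicator of cluster c
        v = v / np.linalg.norm(v)
        P += np.outer(v, v)
    return P

initial_labels = [0, 0, 1, 1, 1]             # e.g. output of a greedy first pass
P = projection_from_clusters(initial_labels)

noisy_column = np.array([1.0, 3.0, 4.0, 5.0, 6.0])
projected = P @ noisy_column                 # each entry becomes its cluster mean
```

With unit weights the projection replaces each entry by the mean over its cluster, so a column that is already constant on clusters is left unchanged. Projecting a whole data matrix is then `P @ A_hat` for a hypothetical observed matrix `A_hat`.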

Proof

- Finally, if we have proved that … then, provided that … for u, v in different clusters, we can separate the clusters.

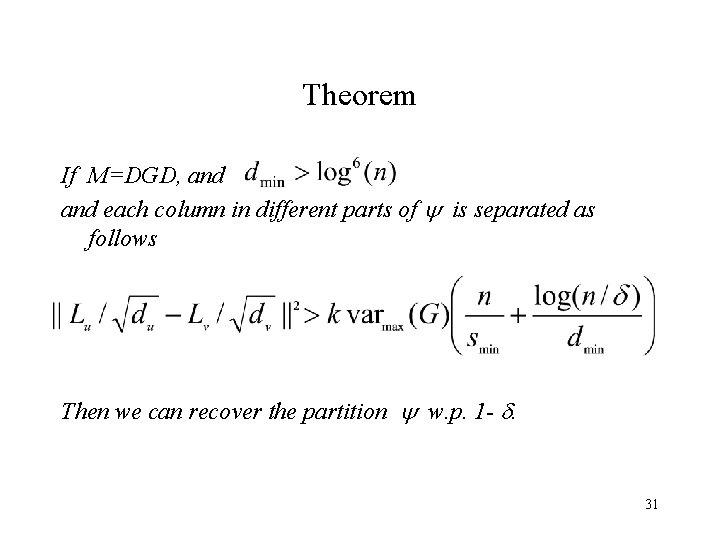

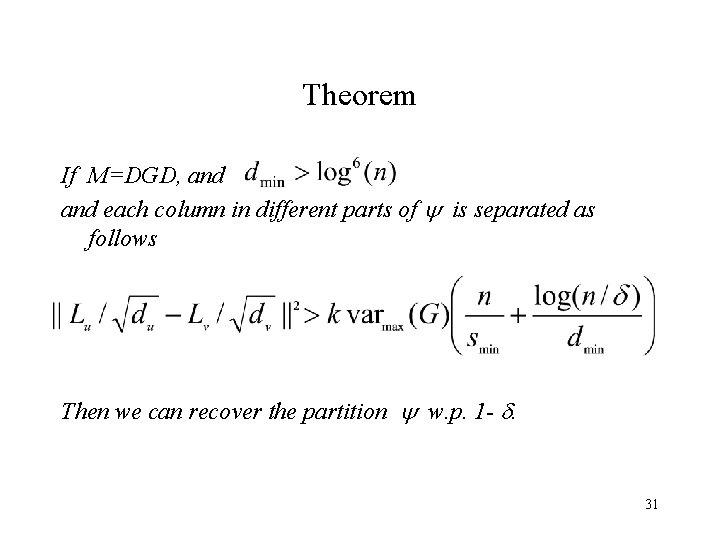

Theorem

If $M = DGD$, and columns in different parts of … are separated as follows: … then we can recover the partition with probability 1 − …

Recap

- To undo the effects of degree on variance, we need to take the Laplacian.
- The rank-k approximation boosts the ‘signal / noise’ ratio, more so for skewed degrees.
- The analysis is broken into systematic and random errors.
- A different projection space is constructed.

Future Work

- This can be extended to bipartite graphs, but needs both row and column separations.
- A better matrix inequality is needed to extend to sparse graphs.
- Can we represent the a priori weights in collaborative filtering using this degree formulation?
- Extending the random graph model?