
Special Topics in Computer Science
The Art of Information Retrieval
Chapter 7: Text Operations
Alexander Gelbukh
www.Gelbukh.com

Previous chapter: Conclusions

- Modeling of text helps predict the behavior of systems
  - Zipf's law, Heaps' law
- Formally describing the structure of documents makes it possible to handle part of their meaning automatically, e.g., in search
- Languages to describe document syntax
  - SGML: too expensive
  - HTML: too simple
  - XML: a good combination

Text operations

- Linguistic operations
- Document clustering
- Compression
- Encryption (not discussed here)

Linguistic operations

Purpose: convert words to “meanings”

- Synonyms or related words
  - Different words, same meaning. Morphology
  - foot / feet, woman / female
- Homonyms
  - Same words, different meanings. Word senses
  - river bank / financial bank
- Stopwords
  - Words with no meaning of their own. Function words
  - the

For good or for bad?

- More exact matching
  - Less noise, better recall
- Unexpected behavior
  - Difficult for users to grasp
  - Harmful if it introduces errors
- More expensive
  - Adds a whole new technology
  - Maintenance; language-dependent
  - Slows the system down

Good if done well, harmful if done badly

Document preprocessing

- Lexical analysis (punctuation, case)
  - Simple, but requires care
- Stopwords. Removing them reduces index size and processing time
- Stemming: connected, connections, ...
  - Multiword expressions: hot dog, B-52
  - Here, all the power of linguistic analysis can be used
- Selection of index terms
  - Often nouns; noun groups: computer science
- Construction of a thesaurus
  - Synonymy: network of related concepts (words or phrases)

Stemming

- Methods
  - Linguistic analysis: complex, expensive to maintain
  - Table lookup: simple, but needs data
  - Statistical (Avetisyan): needs no data, but imprecise
  - Suffix removal
- Suffix removal
  - Porter algorithm. Martin Porter. Ready-to-use code on his website
  - Substitution rules: sses → ss, s → ∅
  - stresses → stress
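The substitution rules above can be sketched in a few lines. This is only an illustration of the first step (step 1a) of the Porter algorithm, not a full stemmer; the function name `porter_step_1a` is an assumption made for this example, and the `ies` and `ss` rules come from Porter's published step 1a rather than from the slide.

```python
def porter_step_1a(word):
    """Apply the plural-suffix rules of step 1a of the Porter algorithm.

    Only this single step is shown; the complete algorithm has several
    further steps that are not covered by this sketch.
    """
    # Rules are tried in order, and only the first matching suffix is applied.
    rules = [
        ("sses", "ss"),  # stresses -> stress
        ("ies", "i"),    # ponies -> poni
        ("ss", "ss"),    # caress -> caress (protects "ss" from the next rule)
        ("s", ""),       # cats -> cat
    ]
    for suffix, replacement in rules:
        if word.endswith(suffix):
            return word[: len(word) - len(suffix)] + replacement
    return word


if __name__ == "__main__":
    for w in ["stresses", "ponies", "caress", "cats"]:
        print(w, "->", porter_step_1a(w))
```

Trying the longest suffix first matters: without the `ss` rule, the final `s` rule would turn "caress" into "cares".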

Better stemming

The whole range of problems of computational linguistics

- POS disambiguation
  - well: adverb or noun? Oil well.
  - Statistical methods. Brill tagger
  - Syntactic analysis. Syntactic disambiguation
- Word sense disambiguation
  - bank 1 and bank 2 should be different stems
  - Statistical methods
  - Dictionary-based methods. Lesk algorithm
  - Semantic analysis
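As an illustration of the dictionary-based approach, here is a minimal sketch of a simplified Lesk-style disambiguator: it picks the sense whose dictionary gloss shares the most words with the context. The glosses in `BANK_GLOSSES` and the sense labels `bank_1` / `bank_2` are made up for this example; a real system would take them from a dictionary and would at least filter stopwords.

```python
def simplified_lesk(context, glosses):
    """Return the sense whose gloss overlaps most with the context words.

    `glosses` maps a sense label to its dictionary definition (a string).
    Ties are resolved in favor of the sense listed first.
    """
    context_words = set(context.lower().split())
    best_sense, best_overlap = None, -1
    for sense, gloss in glosses.items():
        overlap = len(context_words & set(gloss.lower().split()))
        if overlap > best_overlap:
            best_sense, best_overlap = sense, overlap
    return best_sense


# Hypothetical glosses, written only for this illustration.
BANK_GLOSSES = {
    "bank_1": "sloping land beside a river or lake",
    "bank_2": "financial institution that accepts deposits and lends money",
}

if __name__ == "__main__":
    print(simplified_lesk("he sat on the bank of the river", BANK_GLOSSES))
    print(simplified_lesk("the bank lends money to customers", BANK_GLOSSES))
```

Once a sense is chosen, the index can store the sense label instead of the surface word, so that the two meanings of "bank" are treated as different terms.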

Thesaurus

- Terms (controlled vocabulary) and relationships
- Terms
  - Used for indexing
  - Represent a concept. One word or a phrase. Usually nouns
  - Sense. Definition or notes to distinguish senses: key (door)
- Relationships
  - Paradigmatic: synonymy, hierarchical (is-a, part), non-hierarchical
  - Syntagmatic: collocations, co-occurrences
- WordNet. EuroWordNet
  - synsets

Use of a thesaurus

- To help the user formulate the query
  - Navigation in the hierarchy of words
  - Yahoo!
- For the program, to collate related terms
  - woman ≈ female
  - Fuzzy comparison: woman ≈ 0.8 * female. Path length
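One possible reading of the fuzzy comparison is that the weight of a match decays with the path length between the two terms in the thesaurus hierarchy. The sketch below assumes exactly that: the tiny `PARENT` hierarchy, the function names, and the choice of `0.8 ** path_length` as the weighting formula are all assumptions for illustration, not something the slides specify.

```python
# Hypothetical is-a hierarchy: each term points to its parent concept.
PARENT = {
    "woman": "female",
    "girl": "female",
    "female": "person",
    "man": "male",
    "male": "person",
}


def ancestors(term):
    """Return {concept: distance} for the term itself and all its ancestors."""
    result, distance = {}, 0
    while term is not None:
        result[term] = distance
        term = PARENT.get(term)
        distance += 1
    return result


def path_length(a, b):
    """Length of the shortest path between a and b via a common ancestor."""
    up_a, up_b = ancestors(a), ancestors(b)
    common = set(up_a) & set(up_b)
    if not common:
        return None  # the terms are not connected in this hierarchy
    return min(up_a[c] + up_b[c] for c in common)


def similarity(a, b, decay=0.8):
    """Assumed weighting: each step in the hierarchy multiplies by `decay`."""
    distance = path_length(a, b)
    return 0.0 if distance is None else decay ** distance


if __name__ == "__main__":
    print(similarity("woman", "female"))
    print(similarity("woman", "man"))
```

With this weighting, a query term matches itself with weight 1, its direct parent with weight 0.8, and more distant terms with progressively smaller weights.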

Yahoo! vs. thesaurus

- The book says Yahoo! is based on a thesaurus. I disagree
- Thesaurus: words of the language organized in a hierarchy
- Document hierarchy: documents attached to a hierarchy
- This is word sense disambiguation
- I claim that Yahoo! is based on (manual) WSD
- It also uses a thesaurus for navigation

Text operations

- Linguistic operations
- Document clustering
- Compression
- Encryption (not discussed here)

Document clustering

- Operation on the whole collection
- Global vs. local
- Global: whole collection
  - At compile time, a one-time operation
- Local
  - Cluster the results of a specific query
  - At runtime, with each query
- It is more of a query transformation operation
  - Already discussed in Chapter 5

Text operations

- Linguistic operations
- Document clustering
- Compression
- Encryption (not discussed here)

Compression

- Gain: storage, transmission, search
- Cost: time spent on compressing/decompressing
- In IR: need for random access
  - Blocks do not work
- Also: pattern matching on compressed text

Compression methods

Statistical

- Huffman: a fixed code for each symbol
  - More frequent symbols get shorter codes
  - Allows starting decompression from any symbol
- Arithmetic: dynamic coding
  - Need to decompress from the beginning
  - Not for IR

Dictionary

- Pointers to previous occurrences. Lempel-Ziv
  - Again, not for IR

Compression ratio

- Size compressed / size uncompressed
- Huffman, units = words: up to 2 bits per char
  - Close to the limit = entropy. Only for large texts!
  - Other methods: similar ratio, but no random access
- Shannon: optimal code length for a symbol with probability p is $-\log_2 p$
- Entropy: limit of compression
  - Average length with optimal coding
  - Property of the model
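Shannon's formula and the entropy can be checked numerically. The sketch below assumes the simplest possible model, in which each symbol's probability is its relative frequency in the text (a zero-order model); the function names are chosen for this example only.

```python
import math
from collections import Counter


def optimal_code_length(p):
    """Optimal code length, in bits, for a symbol with probability p."""
    return -math.log2(p)


def entropy_bits_per_symbol(symbols):
    """Average optimal code length under a zero-order frequency model.

    `symbols` can be a string (character-based model) or a list of words
    (word-based model); probabilities are relative frequencies.
    """
    counts = Counter(symbols)
    total = sum(counts.values())
    return sum(
        (c / total) * optimal_code_length(c / total) for c in counts.values()
    )


if __name__ == "__main__":
    print(optimal_code_length(0.25))         # a symbol seen 1 time in 4
    print(entropy_bits_per_symbol("aabb"))   # two equally likely symbols
    print(entropy_bits_per_symbol("to be or not to be".split()))
```

Because the entropy is a property of the model, the same text yields different values when modeled by characters and by words.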

Modeling

- Find the probability of the next symbol
- Adaptive, static, semi-static
  - Adaptive: good compression, but decoding must start from the beginning
  - Static (for the language): poor compression, random access
  - Semi-static (for a specific text; two-pass): both OK
- Word-based vs. character-based
  - Word-based: better compression and search

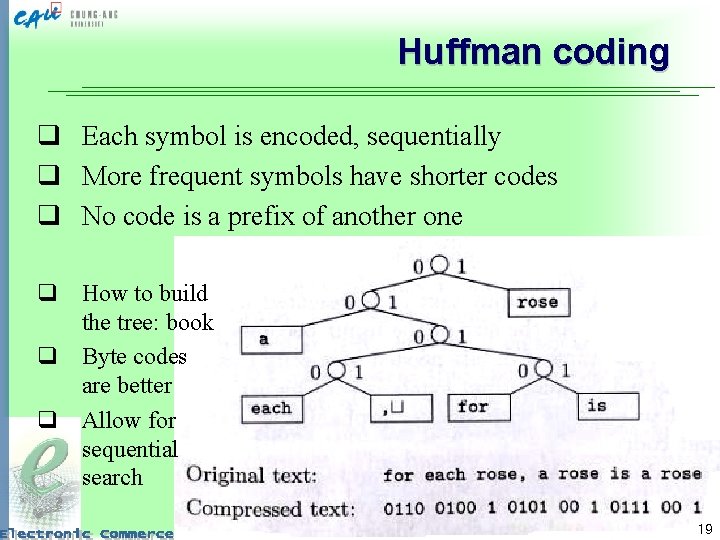

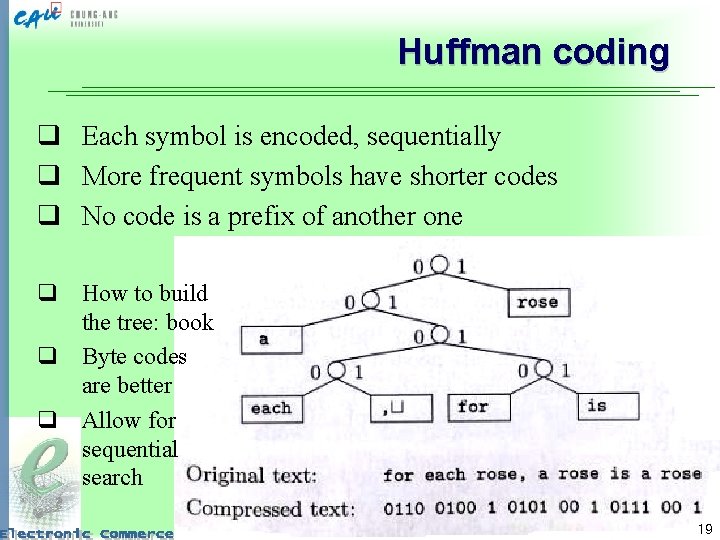

Huffman coding

- Each symbol is encoded, sequentially
- More frequent symbols have shorter codes
- No code is a prefix of another one
- How to build the tree: see the book
- Byte codes are better
- Allow for sequential search
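For readers without the book at hand, the standard tree construction can be sketched as follows: repeatedly merge the two least frequent subtrees until one tree remains. This is a bit-oriented, semi-static sketch (frequencies are counted in a first pass over the text); it does not implement the byte-oriented codes mentioned above, and the function name `huffman_codes` is an assumption for this example.

```python
import heapq
from collections import Counter
from itertools import count


def huffman_codes(symbols):
    """Build a binary Huffman code for a sequence of symbols.

    `symbols` can be characters or words. Returns {symbol: bit string}.
    """
    freq = Counter(symbols)
    if not freq:
        return {}
    if len(freq) == 1:
        # A single distinct symbol still needs a one-bit code.
        return {next(iter(freq)): "0"}

    tiebreak = count()  # keeps heap entries comparable when frequencies tie
    # Each heap entry is (frequency, tiebreak, {symbol: code so far}).
    heap = [(f, next(tiebreak), {s: ""}) for s, f in freq.items()]
    heapq.heapify(heap)

    while len(heap) > 1:
        # Merge the two least frequent subtrees into one.
        f1, _, codes1 = heapq.heappop(heap)
        f2, _, codes2 = heapq.heappop(heap)
        merged = {s: "0" + c for s, c in codes1.items()}
        merged.update({s: "1" + c for s, c in codes2.items()})
        heapq.heappush(heap, (f1 + f2, next(tiebreak), merged))

    return heap[0][2]


if __name__ == "__main__":
    words = "to be or not to be to".split()
    for word, code in sorted(huffman_codes(words).items()):
        print(word, code)
```

Passing a list of words instead of a string gives the word-based variant, in which the most frequent words receive the shortest codes.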

Dictionary-based methods

- Static (simple, poor compression), dynamic, semi-static
- Lempel-Ziv: references to previous occurrences
  - Adaptive
- Disadvantages for IR
  - Need to decode from the very beginning
  - New statistical methods perform better

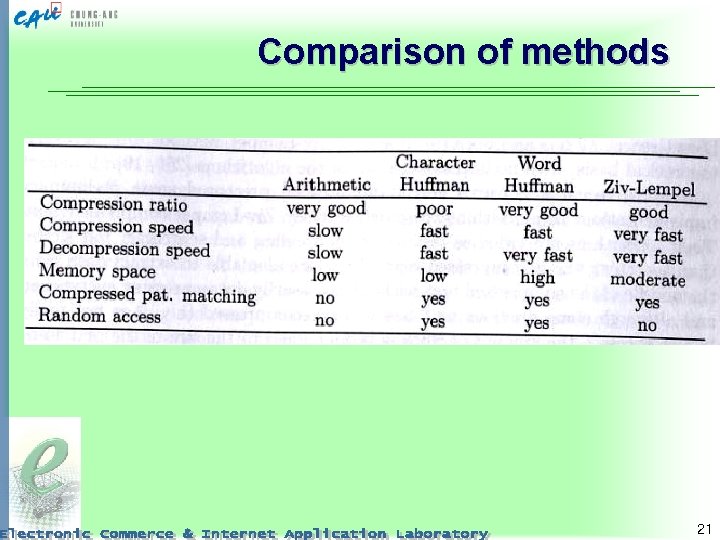

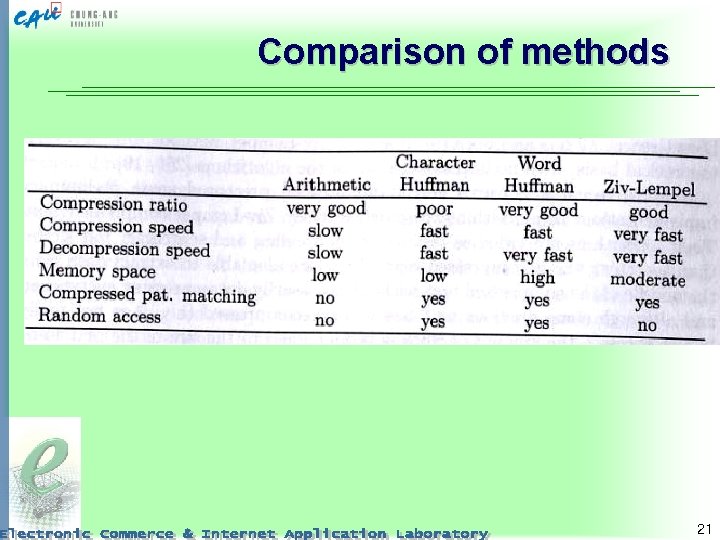

Comparison of methods

Compression of inverted files

- Inverted file: words + lists of docs where they occur
- Lists of docs are ordered, so they can be compressed
- Seen as lists of gaps
  - Short gaps occur more frequently
  - Statistical compression
- Our work: order the docs for better compression
  - We code runs of docs
  - Minimize the number of runs
  - Distance: # of different words
  - TSP
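The gap representation can be illustrated with a short sketch. It converts an ordered list of document numbers into gaps and then stores each gap in as few bytes as possible. Note that variable-byte coding is used here as a simple stand-in, chosen for this example; it is not the statistical coding the slide refers to, and all function names are assumptions.

```python
def to_gaps(doc_ids):
    """Turn an ascending list of document numbers into a list of gaps."""
    gaps, previous = [], 0
    for doc_id in doc_ids:
        gaps.append(doc_id - previous)
        previous = doc_id
    return gaps


def from_gaps(gaps):
    """Inverse of to_gaps: rebuild the document numbers by summing gaps."""
    doc_ids, total = [], 0
    for gap in gaps:
        total += gap
        doc_ids.append(total)
    return doc_ids


def vbyte_encode(numbers):
    """Variable-byte coding: 7 data bits per byte, low-order bits first.

    The high bit is set only on the last byte of each number.
    """
    out = bytearray()
    for n in numbers:
        while n >= 128:
            out.append(n & 0x7F)
            n >>= 7
        out.append(n | 0x80)
    return bytes(out)


def vbyte_decode(data):
    """Inverse of vbyte_encode."""
    numbers, n, shift = [], 0, 0
    for byte in data:
        if byte & 0x80:  # last byte of the current number
            numbers.append(n | ((byte & 0x7F) << shift))
            n, shift = 0, 0
        else:
            n |= byte << shift
            shift += 7
    return numbers


if __name__ == "__main__":
    postings = [3, 7, 8, 150, 1000]
    gaps = to_gaps(postings)
    encoded = vbyte_encode(gaps)
    print(gaps, len(encoded), "bytes")
    print(from_gaps(vbyte_decode(encoded)))
```

Small gaps fit into a single byte each, which is why an ordering of documents that produces many short gaps compresses better.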

Research topics

- All computational linguistics
  - Improved POS tagging
  - Improved WSD
- Uses of thesaurus
  - For user navigation
  - For collating similar terms
- Better compression methods
  - Searchable compression
  - Random access

Conclusions

- Text transformation: meaning instead of strings
  - Lexical analysis
  - Stopwords
  - Stemming
    - POS, WSD, syntax, semantics
    - Ontologies to collate similar stems
- Text compression
  - Searchable
  - Random access
  - Word-based statistical methods (Huffman)
- Index compression

Thank you! Until the make-up lecture