Spatially Supervised Recurrent Neural Networks for Visual Object Tracking
Authors: Guanghan Ning, Zhi Zhang, Chen Huang, Xiaobo Ren, Haohong Wang, Canhui Cai, Zhihai (Henry) He

The Problem: Object Tracking
Visual object tracking is the process of localizing a single target in a video or image sequence, given the target's position in the first frame. Its significance lies in two aspects:
1. It has a wide range of applications, such as motion analysis, activity recognition, surveillance, and human-computer interaction.
2. It can be a prerequisite for, or a necessary component of, other systems.
2 ICCAS 2017: Spatially Supervised Recurrent Neural Networks for Visual Object Tracking 11/9/2020 7: 17 PM

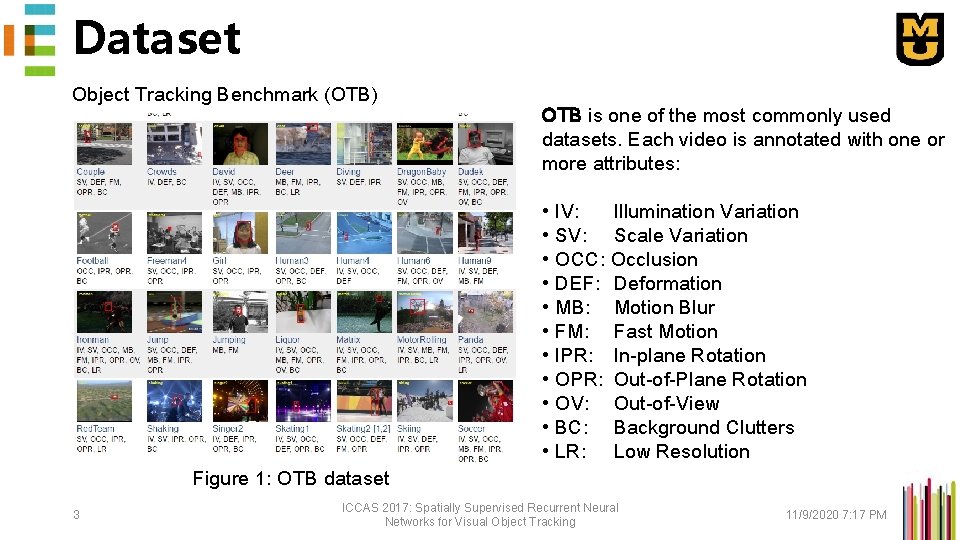

Dataset: Object Tracking Benchmark (OTB)
OTB is one of the most commonly used tracking datasets. Each video is annotated with one or more of the following attributes:
• IV: Illumination Variation
• SV: Scale Variation
• OCC: Occlusion
• DEF: Deformation
• MB: Motion Blur
• FM: Fast Motion
• IPR: In-plane Rotation
• OPR: Out-of-Plane Rotation
• OV: Out-of-View
• BC: Background Clutters
• LR: Low Resolution
Figure 1: OTB dataset

Evaluation
1. How do we measure performance?
• By OPE (one-pass evaluation): each sequence is run once, initialized from the ground-truth position in the first frame, and the [average precision] or [success rate] is reported.
2. How are the [average precision] and [success rate] calculated?
• Average precision is the average overlap score over all frames.
• A frame counts as a success when its overlap score (the intersection over union of the tracked and ground-truth boxes) is above a threshold.
3. How do we evaluate over a range of thresholds?
• The [success plot] shows the ratio of successful frames as the threshold varies from 0 to 1.
• We use the [area under curve (AUC)] of each success plot to rank the tracking algorithms.
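The evaluation quantities above can be sketched in a few lines of Python. This is a minimal illustration, not the official OTB toolkit: it assumes boxes are given as (x, y, w, h) with (x, y) the top-left corner, takes the overlap score to be intersection over union, and assumes the AUC is the mean success rate over 21 evenly spaced thresholds from 0 to 1. The toy boxes are made up for illustration.

```python
from typing import List, Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x, y, w, h), (x, y) = top-left corner (assumed convention)


def overlap(a: Box, b: Box) -> float:
    """Overlap score: intersection over union of two boxes."""
    ax, ay, aw, ah = a
    bx, by, bw, bh = b
    # Intersection width/height, clamped to zero when the boxes do not overlap
    iw = max(0.0, min(ax + aw, bx + bw) - max(ax, bx))
    ih = max(0.0, min(ay + ah, by + bh) - max(ay, by))
    inter = iw * ih
    union = aw * ah + bw * bh - inter
    return inter / union if union > 0 else 0.0


def average_overlap(scores: Sequence[float]) -> float:
    """Mean overlap score over all frames of a sequence."""
    return sum(scores) / len(scores)


def success_rate(scores: Sequence[float], threshold: float) -> float:
    """Fraction of frames whose overlap score is above the threshold."""
    return sum(s > threshold for s in scores) / len(scores)


def success_plot(scores: Sequence[float], num_thresholds: int = 21) -> Tuple[List[float], List[float], float]:
    """Success rates over thresholds in [0, 1]; the AUC is taken as their mean."""
    thresholds = [i / (num_thresholds - 1) for i in range(num_thresholds)]
    rates = [success_rate(scores, t) for t in thresholds]
    return thresholds, rates, sum(rates) / len(rates)


# One-pass evaluation of a single toy sequence, initialized from the ground truth
ground_truth = [(0, 0, 10, 10), (2, 0, 10, 10), (4, 0, 10, 10)]
tracked = [(0, 0, 10, 10), (5, 0, 10, 10), (20, 20, 10, 10)]
scores = [overlap(p, g) for p, g in zip(tracked, ground_truth)]
_, _, auc = success_plot(scores)
print(average_overlap(scores), success_rate(scores, 0.5), auc)
```

Averaging the success rates over a fixed threshold grid is a simple way to approximate the area under the success curve; a finer grid only changes the approximation, not the ranking idea.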

Challenges
1. Appearance variations:
• Target deformations
• Illumination variations
• Scale changes
• Background clutter
• Fast and abrupt motion
2. Occlusion:
• Partial occlusion
• Full occlusion
3. Difficulties introduced by the camera:
• Uneven lighting
• Illumination
• Blur
• Low resolution
• Perspective distortion

Related Works
What are the major related works?
1. Regression-based object recognition
• YOLO is a regression-based CNN that detects generic objects; it inspired us to investigate the regression capabilities of LSTM.
2. LSTM
• LSTM is a temporally deep RNN module with memory.
• We investigate combining a CNN and an LSTM to interpret high-level visual features both spatially and temporally, and propose regressing these features into object locations.

Related Works
Our proposed network is the first work that combines CNN and LSTM for object tracking on real-world datasets. Two prior papers [1, 2] are closely related to this work:
• [1] Quan Gan, Qipeng Guo, Zheng Zhang, and Kyunghyun Cho. First step toward model-free, anonymous object tracking with recurrent neural networks. arXiv preprint arXiv:1511.06425, 2015.
• Uses a traditional RNN, not an LSTM
• Focuses on artificially generated sequences and synthesized data, not real-world videos

Related Works
• [2] Samira Ebrahimi Kahou, Vincent Michalski, and Roland Memisevic. RATM: Recurrent Attentive Tracking Model. arXiv preprint arXiv:1510.08660, 2015.
• Uses a traditional RNN as an attention scheme
• In contrast, we directly regress coordinates or heatmaps instead of using sub-region classifiers.
• We use the LSTM for end-to-end spatio-temporal regression with a single evaluation.


Related Works
Many recent works [3, 4, 5] have appeared since our work was posted on arXiv in July 2016.
• Some works [3, 4] extend our proposed YOLO + LSTM scheme with multi-target tracking and reinforcement learning.
• One work [4] appears to be built upon our open-sourced code.
• Another work [5] is similar but independent.
[3] Dan Iter et al. Target Tracking with Kalman Filtering, KNN and LSTMs. December 2016.
[4] Da Zhang et al. Deep Reinforcement Learning for Visual Object Tracking in Videos. April 2017.
[5] Anton Milan et al. Online Multi-Target Tracking Using Recurrent Neural Networks. December 2016.

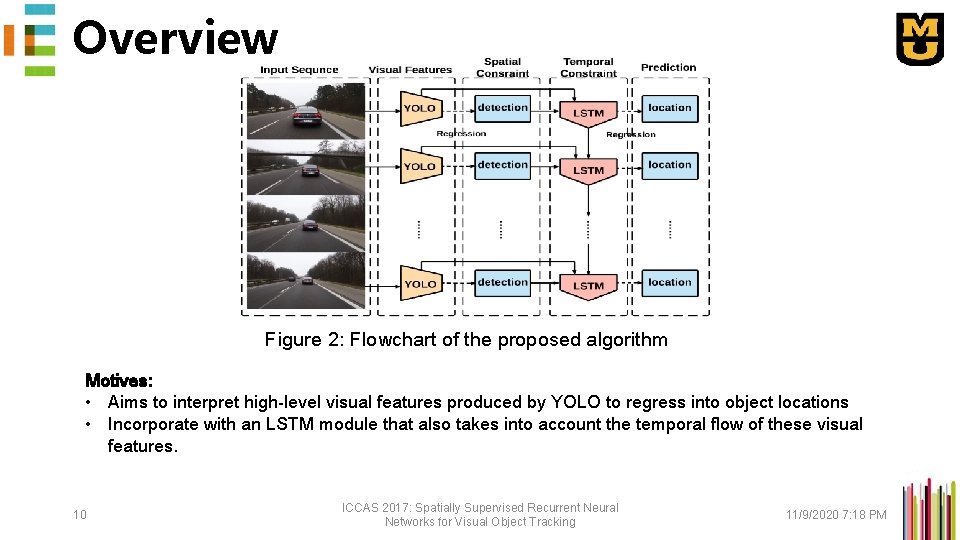

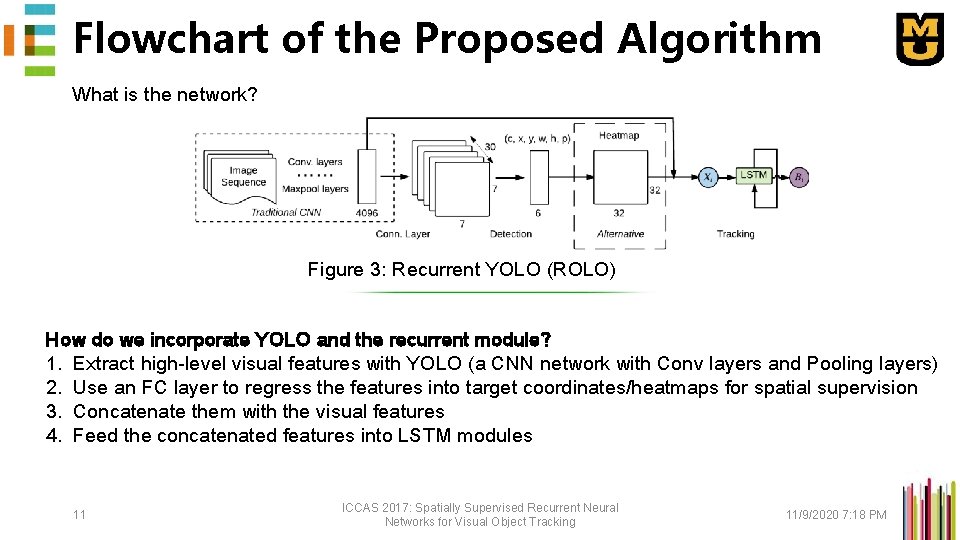

Overview
Figure 2: Flowchart of the proposed algorithm
Motivation:
• Interpret the high-level visual features produced by YOLO and regress them into object locations.
• Incorporate an LSTM module that also takes into account the temporal flow of these visual features.

Flowchart of the Proposed Algorithm
What is the network?
Figure 3: Recurrent YOLO (ROLO)
How do we combine YOLO with the recurrent module?
1. Extract high-level visual features with YOLO (a CNN with convolutional and pooling layers).
2. Use an FC layer to regress the features into target coordinates/heatmaps for spatial supervision.
3. Concatenate the regressed outputs with the visual features.
4. Feed the concatenated features into the LSTM modules.
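The four steps above can be sketched as an untrained forward pass in NumPy. This is an illustrative sketch under stated assumptions, not the authors' released code: YOLO itself is not implemented (random vectors stand in for its per-frame features), the feature size is shrunk to 64 for illustration, and the 6-d location vector layout, the hidden size, and the linear read-out of a box from the LSTM state are all hypothetical choices. Training (spatially supervising the FC output and the tracker output against ground truth) is omitted.

```python
import numpy as np

FEAT_DIM = 64   # stand-in for YOLO's high-level feature size (shrunk for illustration)
LOC_DIM = 6     # hypothetical location vector: (class, x, y, w, h, confidence)
HIDDEN = 32     # hypothetical LSTM hidden size


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


class LSTMCell:
    """Standard LSTM cell: input, forget and output gates plus a memory cell."""

    def __init__(self, in_dim, hidden_dim, rng):
        scale = 1.0 / np.sqrt(in_dim + hidden_dim)
        # All four gate pre-activations come from one stacked weight matrix
        self.W = rng.normal(0.0, scale, (4 * hidden_dim, in_dim + hidden_dim))
        self.b = np.zeros(4 * hidden_dim)
        self.hidden_dim = hidden_dim

    def step(self, x, h, c):
        z = self.W @ np.concatenate([x, h]) + self.b
        i, f, o, g = np.split(z, 4)
        c = sigmoid(f) * c + sigmoid(i) * np.tanh(g)  # memory carries information across frames
        h = sigmoid(o) * np.tanh(c)
        return h, c


class RoloSketch:
    """Hypothetical ROLO-style tracker head with random (untrained) weights."""

    def __init__(self, rng):
        self.W_fc = rng.normal(0.0, 0.01, (LOC_DIM, FEAT_DIM))  # step 2: FC regression layer
        self.b_fc = np.zeros(LOC_DIM)
        self.cell = LSTMCell(FEAT_DIM + LOC_DIM, HIDDEN, rng)
        self.W_out = rng.normal(0.0, 0.1, (4, HIDDEN))          # hypothetical box read-out
        self.b_out = np.zeros(4)

    def track(self, frame_features):
        h, c = np.zeros(HIDDEN), np.zeros(HIDDEN)
        boxes = []
        for feat in frame_features:                    # step 1 output: one vector per frame
            loc = self.W_fc @ feat + self.b_fc         # step 2: regress a location vector
            x = np.concatenate([feat, loc])            # step 3: concatenate with the features
            h, c = self.cell.step(x, h, c)             # step 4: LSTM over time
            boxes.append(self.W_out @ h + self.b_out)  # predicted (x, y, w, h)
        return np.stack(boxes)


rng = np.random.default_rng(0)
model = RoloSketch(rng)
features = rng.normal(size=(10, FEAT_DIM))  # stand-in for YOLO features of 10 frames
print(model.track(features).shape)
```

The point of the sketch is the data flow: the LSTM never sees the raw frame, only the concatenation of visual features and a spatially supervised location estimate, and its memory cell `c` is what lets the prediction at one frame depend on earlier frames.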

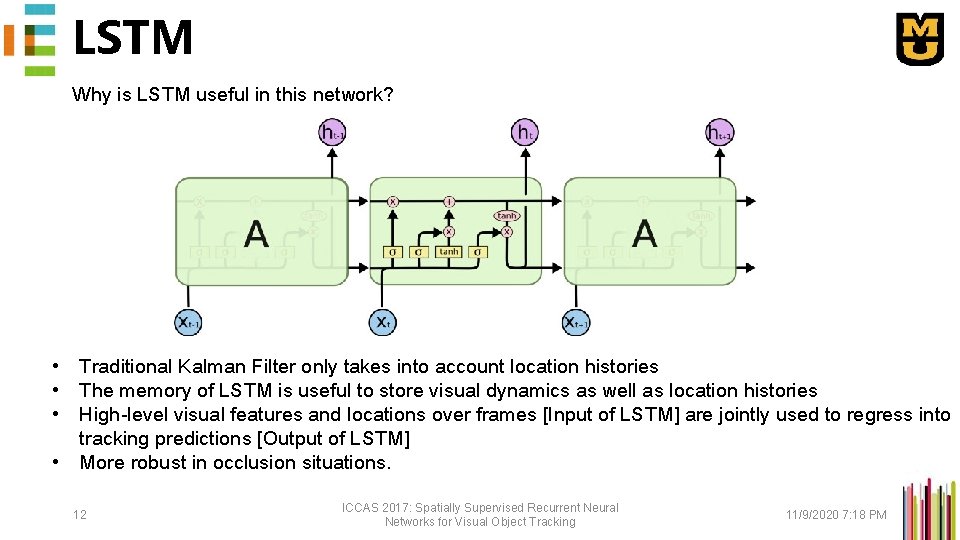

LSTM
Why is LSTM useful in this network?
• A traditional Kalman filter takes into account only location histories.
• The memory of the LSTM can store visual dynamics as well as location histories.
• High-level visual features and locations over frames [input of the LSTM] are jointly regressed into tracking predictions [output of the LSTM].
• This makes the tracker more robust in occlusion situations.
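For contrast with the LSTM, a minimal constant-velocity Kalman filter over a box centre is sketched below. It is an assumed, textbook-style baseline, not the exact configuration used in the experiments: its state holds only position and velocity, so when the detector misses the target (passing `None`), all it can do is extrapolate the location history. The noise parameters `q` and `r` and the toy trajectory are hypothetical.

```python
import numpy as np


class ConstantVelocityKalman:
    """Kalman filter over a box centre; the state is location history only:
    position (x, y) and velocity (vx, vy)."""

    def __init__(self, x0, y0, dt=1.0, q=0.1, r=1.0):
        self.s = np.array([x0, y0, 0.0, 0.0])          # state estimate
        self.P = np.eye(4)                              # state covariance
        self.F = np.array([[1, 0, dt, 0],
                           [0, 1, 0, dt],
                           [0, 0, 1, 0],
                           [0, 0, 0, 1]], dtype=float)  # constant-velocity motion model
        self.H = np.array([[1, 0, 0, 0],
                           [0, 1, 0, 0]], dtype=float)  # only the position is measured
        self.Q = q * np.eye(4)                          # process noise (hypothetical value)
        self.R = r * np.eye(2)                          # measurement noise (hypothetical value)

    def step(self, z=None):
        """Advance one frame; z is a detected centre (x, y), or None if detection failed."""
        # Predict from the motion model alone
        self.s = self.F @ self.s
        self.P = self.F @ self.P @ self.F.T + self.Q
        # Correct only when a detection is available
        if z is not None:
            innovation = np.asarray(z, dtype=float) - self.H @ self.s
            S = self.H @ self.P @ self.H.T + self.R
            K = self.P @ self.H.T @ np.linalg.inv(S)
            self.s = self.s + K @ innovation
            self.P = (np.eye(4) - K @ self.H) @ self.P
        return self.s[:2].copy()


kf = ConstantVelocityKalman(x0=0.0, y0=50.0)
for t in range(1, 21):                        # target moves +2 px per frame in x
    kf.step((2.0 * t, 50.0))
occluded = [kf.step(None) for _ in range(5)]  # detector misses the target
print(occluded[-1])
```

During the `None` frames the filter keeps moving the box along the last estimated velocity, regardless of what the image shows; an LSTM that also receives visual features has, at least in principle, more information to draw on in the same situation.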

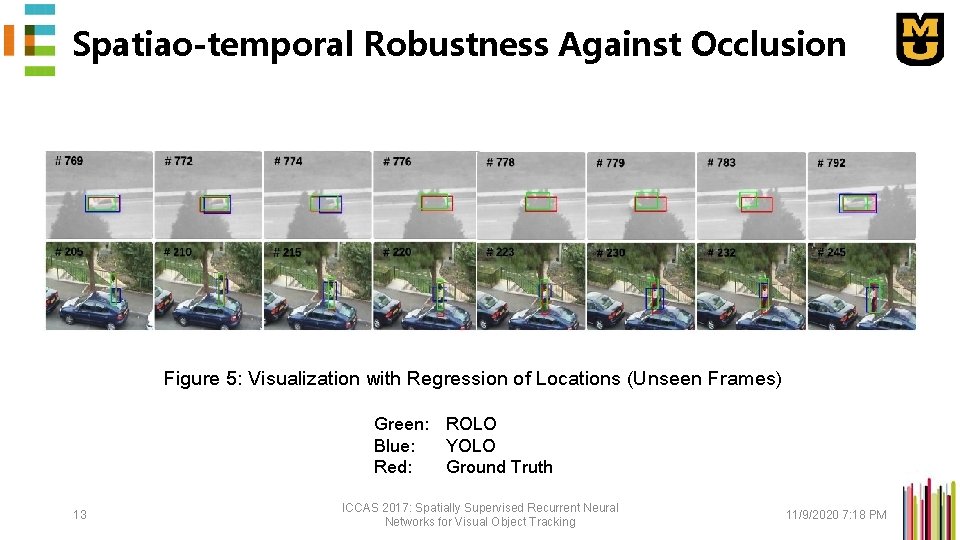

Spatio-temporal Robustness Against Occlusion
Figure 5: Visualization with regression of locations (unseen frames). Green: ROLO; Blue: YOLO; Red: ground truth

Spatio-temporal Robustness Against Occlusion
ROLO is effective for several reasons:
• (1) the representation power of the high-level visual features from convolutional networks;
• (2) the feature interpretation power of the LSTM, and therefore its ability to detect visual objects;
• (3) spatial supervision by a location or heatmap vector;
• (4) the capability of the LSTM to regress effectively with spatio-temporal information.

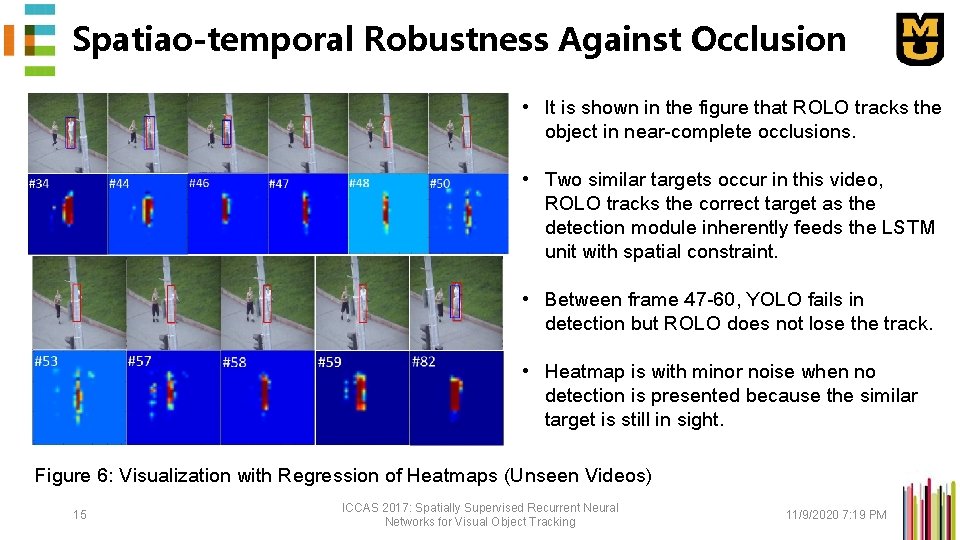

Spatio-temporal Robustness Against Occlusion
• As the figure shows, ROLO tracks the object through near-complete occlusions.
• Two similar targets appear in this video; ROLO tracks the correct one because the detection module inherently feeds the LSTM unit with a spatial constraint.
• Between frames 47 and 60, YOLO fails to detect the target, but ROLO does not lose track.
• When no detection is present, the heatmap shows minor noise because the similar target is still in sight.
Figure 6: Visualization with regression of heatmaps (unseen videos)
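Heatmap regression replaces the four box coordinates with a coarse occupancy map of the target. The sketch below shows one plausible way to build such a regression target and to decode a predicted heatmap back into a box; the 32x32 grid, the binary 1-inside/0-outside encoding, and the 0.5 decoding threshold are assumptions for illustration, not details taken from these slides.

```python
import numpy as np

GRID = 32  # assumed heatmap resolution: GRID x GRID cells over the whole frame


def box_to_heatmap(box, img_w, img_h, grid=GRID):
    """Encode a box (x, y, w, h) in pixels as a flattened grid x grid map:
    1.0 in every cell the box touches, 0.0 elsewhere."""
    x, y, w, h = box
    heat = np.zeros((grid, grid))
    # Map pixel extents to cell indices, clipped to the image
    c0 = int(np.floor(max(x, 0) * grid / img_w))
    c1 = int(np.ceil(min(x + w, img_w) * grid / img_w))
    r0 = int(np.floor(max(y, 0) * grid / img_h))
    r1 = int(np.ceil(min(y + h, img_h) * grid / img_h))
    heat[r0:r1, c0:c1] = 1.0
    return heat.ravel()  # a grid*grid vector the network can regress


def heatmap_to_box(vec, img_w, img_h, threshold=0.5, grid=GRID):
    """Decode a (possibly noisy) predicted heatmap vector into a coarse box,
    or return None if no cell exceeds the threshold."""
    heat = np.asarray(vec).reshape(grid, grid)
    rows, cols = np.nonzero(heat > threshold)
    if rows.size == 0:
        return None
    cell_w, cell_h = img_w / grid, img_h / grid
    x0, y0 = cols.min() * cell_w, rows.min() * cell_h
    w = (cols.max() + 1 - cols.min()) * cell_w
    h = (rows.max() + 1 - rows.min()) * cell_h
    return (float(x0), float(y0), float(w), float(h))


target = box_to_heatmap((64, 32, 128, 64), img_w=320, img_h=320)
print(target.shape, heatmap_to_box(target, 320, 320))
```

The round trip is quantized to the grid cells (10 pixels here), so the decoded box is slightly larger than the original. Thresholding also suppresses low-level noise in a predicted map, such as the minor noise described above when no detection is present.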

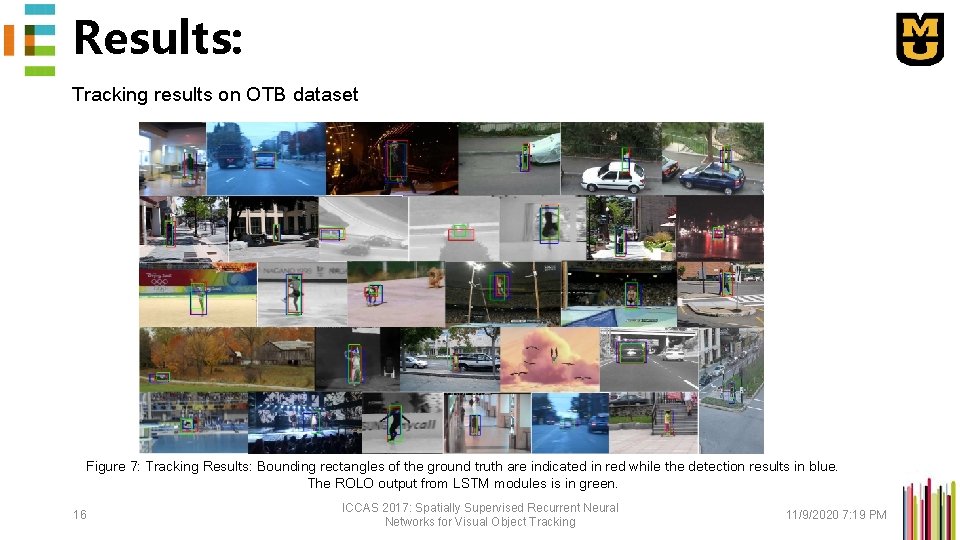

Results: Tracking Results on the OTB Dataset
Figure 7: Tracking results. Ground-truth bounding rectangles are shown in red and detection results in blue; the ROLO output from the LSTM modules is shown in green.

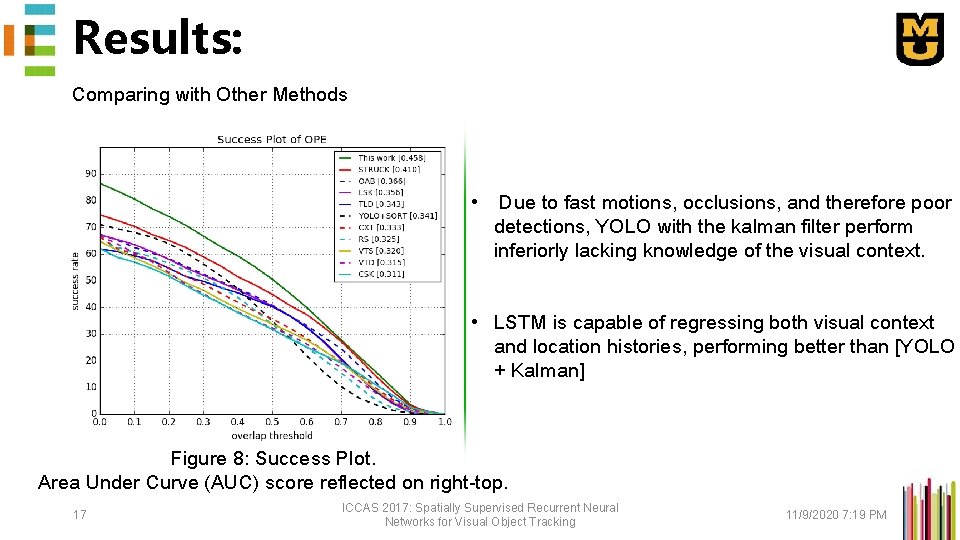

Results: Comparison with Other Methods
• Because of fast motion, occlusion, and the resulting poor detections, YOLO with a Kalman filter performs worse, as it lacks knowledge of the visual context.
• The LSTM can regress both visual context and location histories, and therefore performs better than [YOLO + Kalman].
Figure 8: Success plot. The area under curve (AUC) score is shown at the top right.

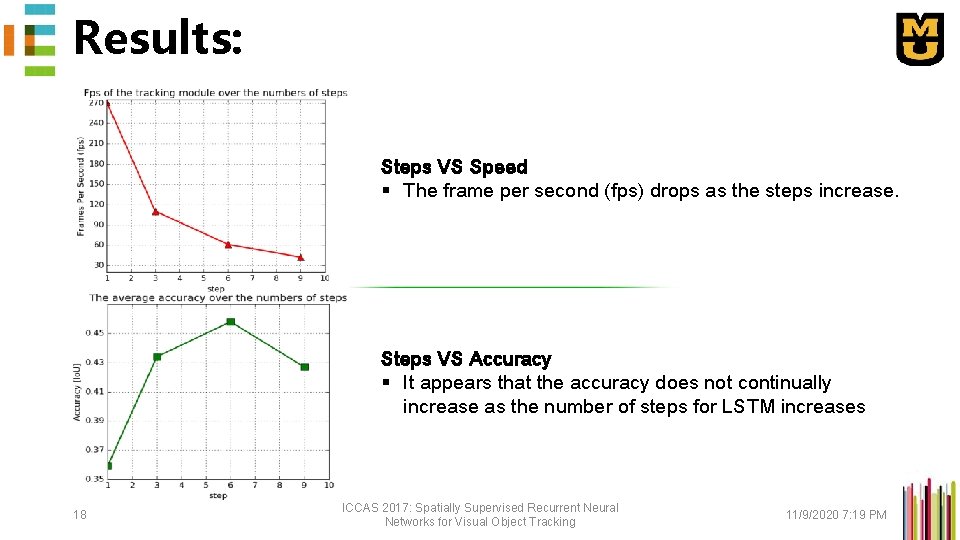

Results:
Steps vs. speed
§ The frame rate (frames per second, fps) drops as the number of steps increases.
Steps vs. accuracy
§ Accuracy does not appear to increase continually as the number of LSTM steps increases.
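One possible reason the frame rate falls as steps increase: if each prediction re-runs the LSTM over a sliding window of the last `steps` frames (an assumption about the inference scheme, not a detail stated on this slide), the number of LSTM cell evaluations per frame grows linearly with `steps`. The hypothetical helper below only counts those evaluations.

```python
def lstm_cell_evaluations(num_frames: int, steps: int) -> int:
    """Total LSTM cell evaluations for a sequence, assuming (hypothetically) that
    every frame's prediction unrolls the LSTM over the last `steps` frames."""
    total = 0
    for t in range(num_frames):
        # Early frames have fewer than `steps` frames of history available
        total += min(steps, t + 1)
    return total


for steps in (1, 3, 6):
    print(steps, lstm_cell_evaluations(100, steps))
```

Under this assumption the per-frame cost is roughly proportional to `steps`, which matches the direction of the speed trend; the count says nothing about accuracy, which the slide reports does not keep improving with more steps.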

Conclusion
Contributions of this paper:
§ Our proposed ROLO method extends deep neural network learning and analysis into the spatio-temporal domain.
§ We have also studied the LSTM's ability to interpret and regress high-level visual features.
§ Our proposed tracker is deep both spatially and temporally, and can effectively tackle major occlusion and severe motion blur.

THANKS!
