Sparse & Redundant Representations by Iterated-Shrinkage Algorithms, Michael Elad

Sparse & Redundant Representations by Iterated-Shrinkage Algorithms

Michael Elad, The Computer Science Department, The Technion – Israel Institute of Technology, Haifa 32000, Israel

Wavelet XII (6701), 26–30 August 2007, San Diego Convention Center, San Diego, CA, USA

Joint work with: Boaz Matalon, Joseph Shtok, and Michael Zibulevsky

A Wide-Angle View Of Iterated-Shrinkage Algorithms, by Michael Elad, Technion, Israel

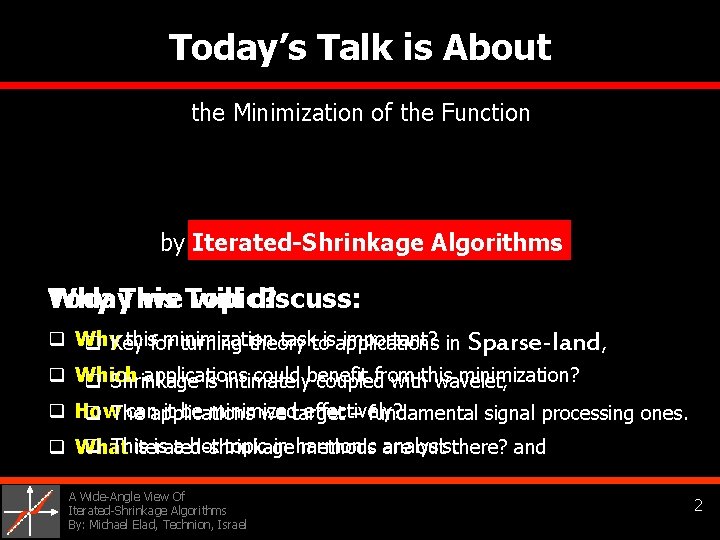

Today's Talk is About the Minimization of the Function

$$f(\alpha)=\frac{1}{2}\|\mathbf{D}\alpha-\mathbf{x}\|_2^2+\lambda\rho(\alpha)$$

by Iterated-Shrinkage Algorithms

Today we will discuss:

- Why is this minimization task important?
- Which applications could benefit from this minimization?
- How can it be minimized effectively?
- What iterated-shrinkage methods are out there?

Why this topic?

- It is key for turning theory into applications in Sparse-land.
- Shrinkage is intimately coupled with wavelets.
- The applications we target are fundamental signal processing ones.
- This is a hot topic in harmonic analysis.

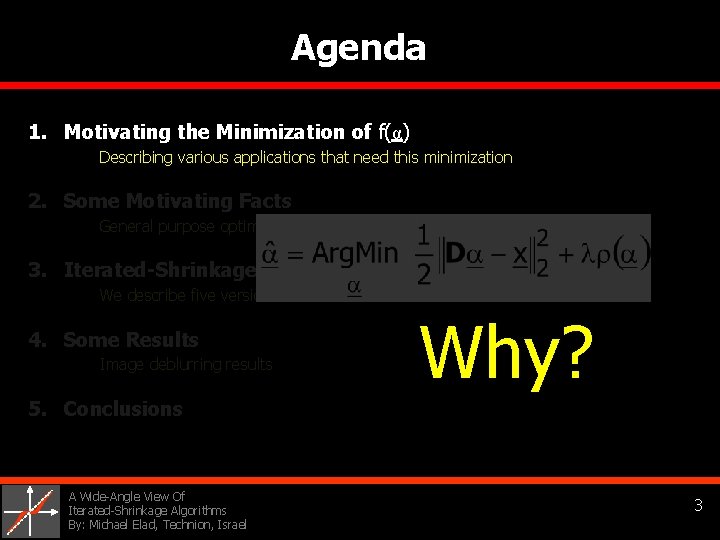

Agenda

1. Motivating the Minimization of f(α): describing various applications that need this minimization
2. Some Motivating Facts: general-purpose optimization tools, and the unitary case
3. Iterated-Shrinkage Algorithms: we describe five versions of those in detail
4. Some Results: image deblurring results
5. Conclusions

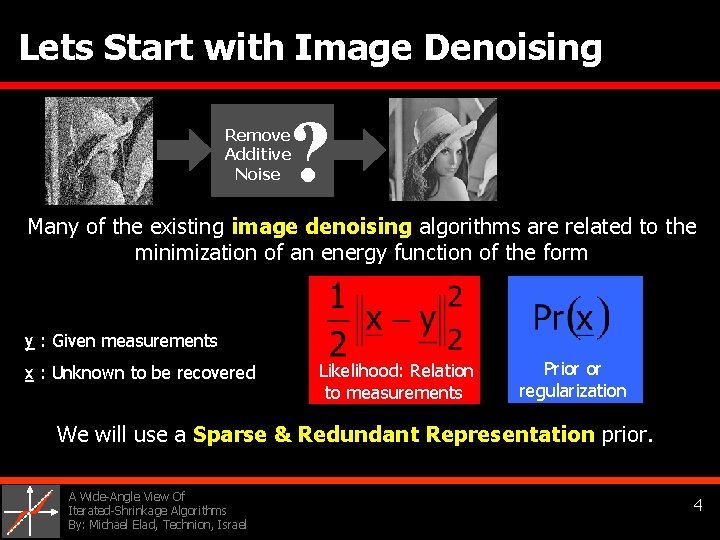

Let's Start with Image Denoising

Goal: remove additive noise. We are given measurements $\mathbf{y}$ and wish to recover the unknown $\mathbf{x}$. Many of the existing image denoising algorithms are related to the minimization of an energy function of the form

$$f(\mathbf{x})=\frac{1}{2}\|\mathbf{x}-\mathbf{y}\|_2^2+\Pr(\mathbf{x}),$$

where the first term is the likelihood (the relation to the measurements) and $\Pr(\mathbf{x})$ is the prior, or regularization.

We will use a Sparse & Redundant Representation prior.

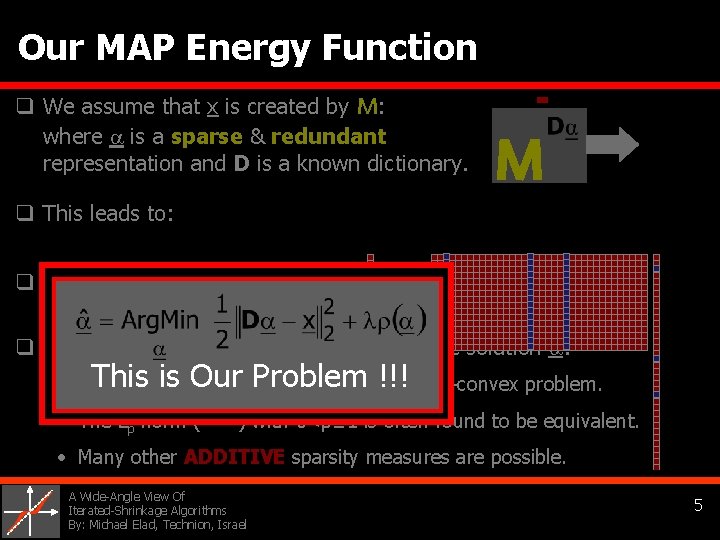

Our MAP Energy Function

- We assume that $\mathbf{x}$ is created by the model M: $\mathbf{x}=\mathbf{D}\alpha$, where $\alpha$ is a sparse & redundant representation and $\mathbf{D}$ is a known dictionary.
- This leads to:

$$\hat{\alpha}=\arg\min_{\alpha}\frac{1}{2}\|\mathbf{D}\alpha-\mathbf{y}\|_2^2+\lambda\rho(\alpha),\qquad \hat{\mathbf{x}}=\mathbf{D}\hat{\alpha}.$$

  This is Our Problem!!!
- This MAP denoising algorithm is known as Basis Pursuit Denoising [Chen, Donoho, Saunders 1995].
- The term $\rho(\alpha)$ measures the sparsity of the solution $\alpha$:
  - The $L_0$-norm ($\|\alpha\|_0$) leads to a non-smooth & non-convex problem.
  - The $L_p$ norm ($\|\alpha\|_p^p$) with $0<p\le 1$ is often found to be equivalent.
  - Many other ADDITIVE sparsity measures are possible.

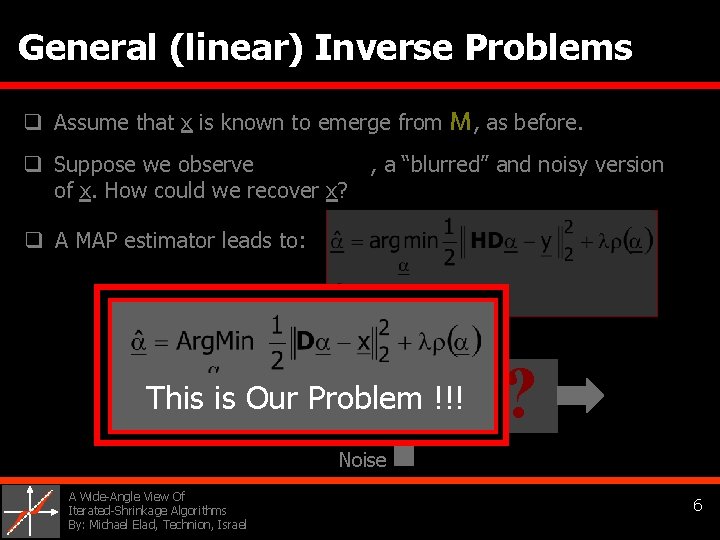

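General (linear) Inverse Problems

- Assume that $\mathbf{x}$ is known to emerge from M, as before.
- Suppose we observe $\mathbf{y}=\mathbf{H}\mathbf{x}+\mathbf{v}$, a "blurred" and noisy version of $\mathbf{x}$, where $\mathbf{v}$ is noise. How could we recover $\mathbf{x}$?
- A MAP estimator leads to:

$$\hat{\alpha}=\arg\min_{\alpha}\frac{1}{2}\|\mathbf{H}\mathbf{D}\alpha-\mathbf{y}\|_2^2+\lambda\rho(\alpha),\qquad \hat{\mathbf{x}}=\mathbf{D}\hat{\alpha}.$$

  This is Our Problem!!! ($\mathbf{H}\mathbf{D}$ plays the role of the dictionary.)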

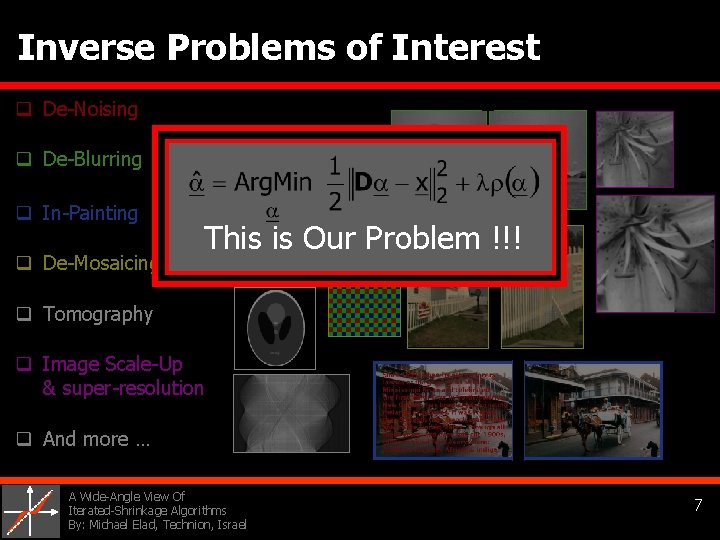

Inverse Problems of Interest

- De-Noising
- De-Blurring
- In-Painting
- De-Mosaicing
- Tomography
- Image Scale-Up & super-resolution
- And more …

This is Our Problem!!!

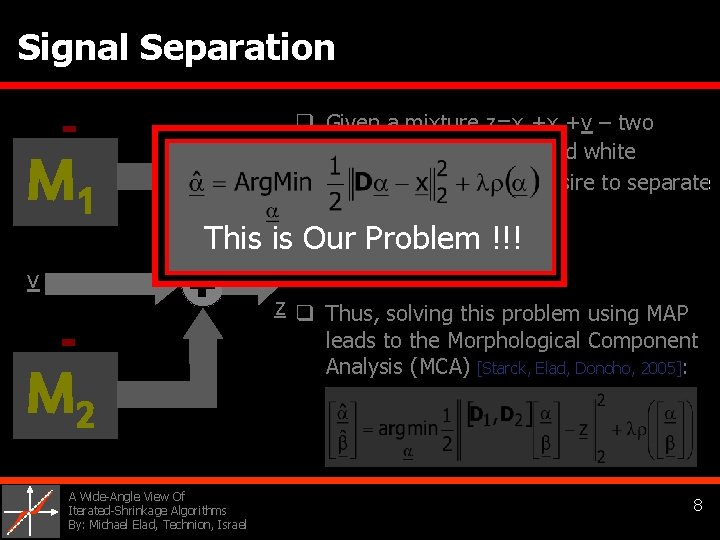

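Signal Separation

- We are given a mixture $\mathbf{z}=\mathbf{x}_1+\mathbf{x}_2+\mathbf{v}$ of two sources, emerging from the models M1 and M2, plus white Gaussian noise $\mathbf{v}$. We want to separate the mixture into its ingredients.
- Written differently:

$$\mathbf{z}=\mathbf{D}_1\alpha_1+\mathbf{D}_2\alpha_2+\mathbf{v}=\left[\mathbf{D}_1\;\mathbf{D}_2\right]\begin{bmatrix}\alpha_1\\ \alpha_2\end{bmatrix}+\mathbf{v}.$$

- Thus, solving this problem using MAP leads to the Morphological Component Analysis (MCA) [Starck, Elad, Donoho, 2005]:

$$\hat{\alpha}_1,\hat{\alpha}_2=\arg\min_{\alpha_1,\alpha_2}\frac{1}{2}\|\mathbf{D}_1\alpha_1+\mathbf{D}_2\alpha_2-\mathbf{z}\|_2^2+\lambda\rho(\alpha_1)+\lambda\rho(\alpha_2).$$

  This is Our Problem!!!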

![Compressed-Sensing slide](http://slidetodoc.com/presentation_image/11cb3c22810e5265d62278f68ac74e7c/image-9.jpg)

Compressed-Sensing [Candes et al. 2006], [Donoho, 2006]

- In compressed-sensing we compress the signal $\mathbf{x}$ by exploiting its origin (the model M). This is done by $p\ll n$ random projections.
- The core idea: $\mathbf{y}=\mathbf{P}\mathbf{x}$, where $\mathbf{P}$ has size $p\times n$, holds all the information about the original signal $\mathbf{x}$, even though $p\ll n$.
- Reconstruction? Use MAP again and solve

$$\hat{\alpha}=\arg\min_{\alpha}\frac{1}{2}\|\mathbf{P}\mathbf{D}\alpha-\mathbf{y}\|_2^2+\lambda\rho(\alpha),\qquad \hat{\mathbf{x}}=\mathbf{D}\hat{\alpha}.$$

  This is Our Problem!!!

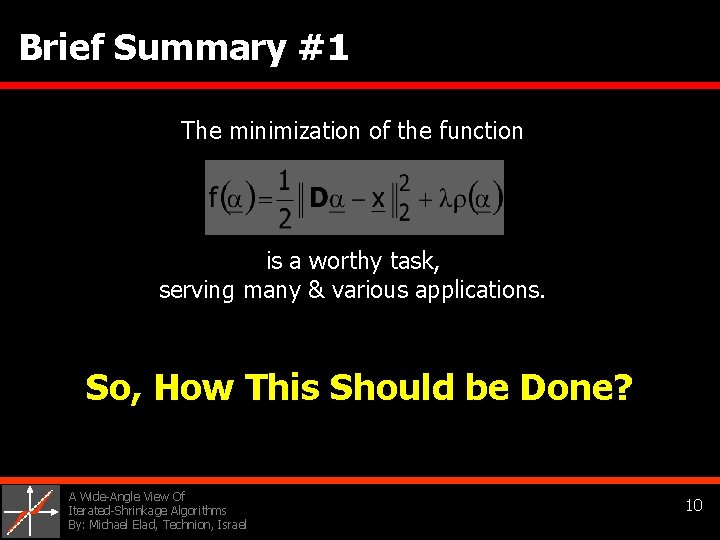

Brief Summary #1

The minimization of the function $f(\alpha)=\frac{1}{2}\|\mathbf{D}\alpha-\mathbf{x}\|_2^2+\lambda\rho(\alpha)$ is a worthy task, serving many & various applications.

So, How Should This Be Done?


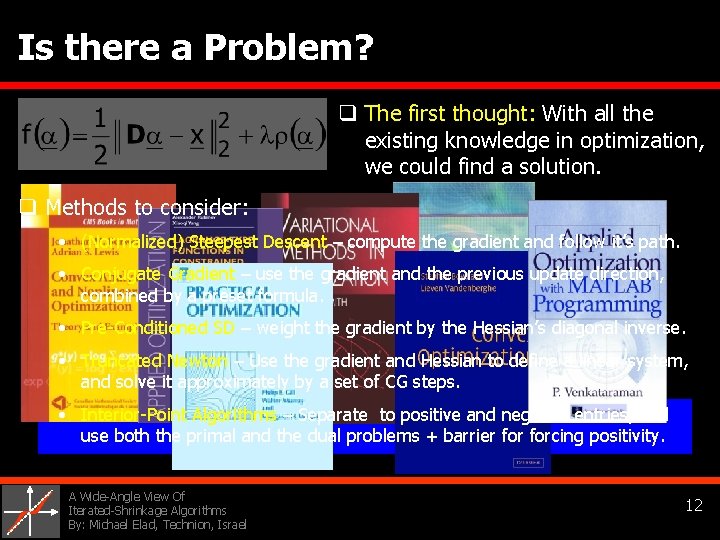

Is there a Problem?

- The first thought: with all the existing knowledge in optimization, we should be able to find a solution.
- Methods to consider:
  - (Normalized) Steepest Descent: compute the gradient and follow its path.
  - Conjugate Gradient: use the gradient and the previous update direction, combined by a preset formula.
  - Pre-Conditioned SD: weight the gradient by the inverse of the Hessian's diagonal.
  - Truncated Newton: use the gradient and Hessian to define a linear system, and solve it approximately by a set of CG steps.
  - Interior-Point Algorithms: separate the unknowns into positive and negative entries, and use both the primal and the dual problems, plus a barrier forcing positivity.

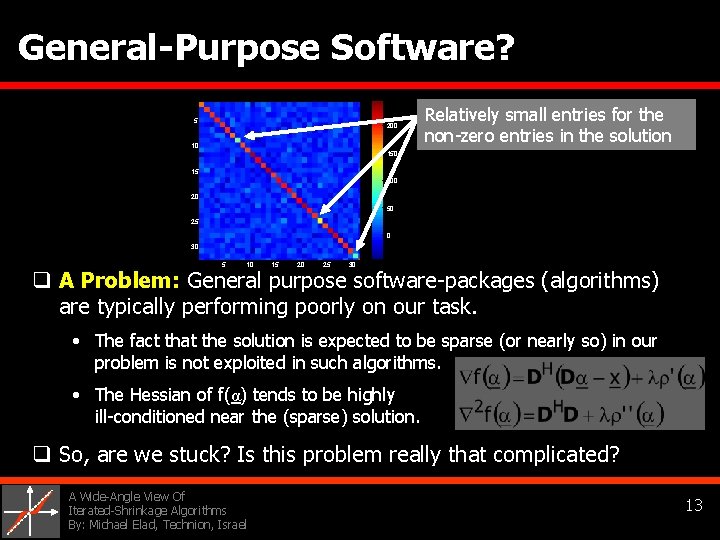

General-Purpose Software?

- So, simply download one of the many general-purpose packages:
  - L1-Magic (interior-point solver),
  - SparseLab (interior-point solver),
  - MOSEK (various tools),
  - Matlab Optimization Toolbox (various tools), …
- A Problem: general-purpose software packages (algorithms) typically perform poorly on our task. Possible reasons:
  - These algorithms do not exploit the fact that the solution is expected to be sparse (or nearly so).
  - The Hessian of f(α) tends to be highly ill-conditioned near the (sparse) solution.
- So, are we stuck? Is this problem really that complicated?

[Figure: a 30×30 matrix image (color scale 0 to 200), annotated "Relatively small entries for the non-zero entries in the solution".]

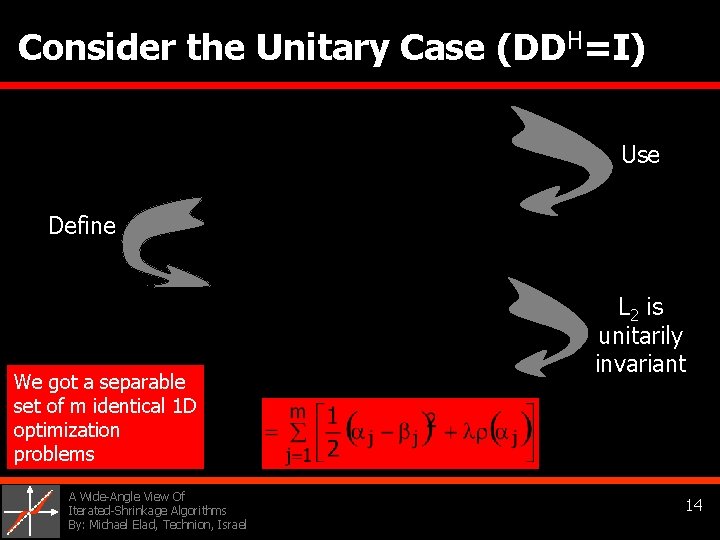

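Consider the Unitary Case ($\mathbf{D}\mathbf{D}^H=\mathbf{I}$)

Use the fact that the $L_2$ norm is unitarily invariant:

$$\|\mathbf{D}\alpha-\mathbf{x}\|_2^2=\|\mathbf{D}^H(\mathbf{D}\alpha-\mathbf{x})\|_2^2=\|\alpha-\mathbf{D}^H\mathbf{x}\|_2^2.$$

Define $\beta=\mathbf{D}^H\mathbf{x}$. Then

$$f(\alpha)=\frac{1}{2}\|\alpha-\beta\|_2^2+\lambda\rho(\alpha)=\sum_{j=1}^{m}\left[\frac{1}{2}|\alpha_j-\beta_j|^2+\lambda\rho(\alpha_j)\right].$$

We got a separable set of $m$ identical 1D optimization problems.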

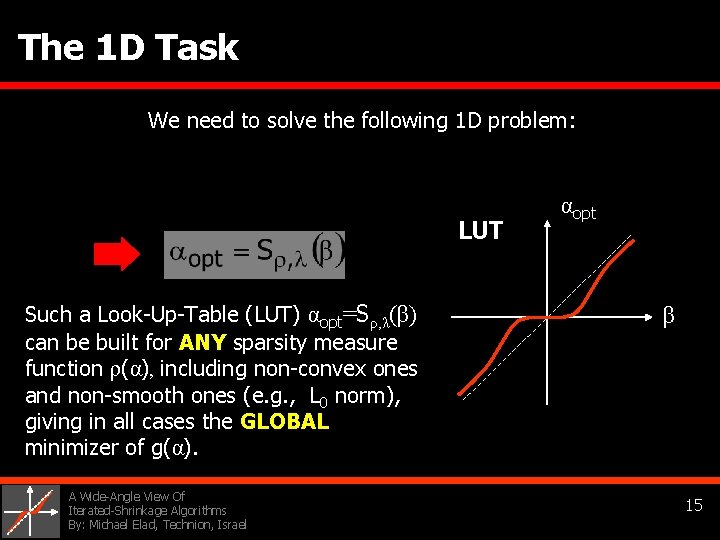

The 1D Task

We need to solve the following 1D problem:

$$\alpha_{opt}=\arg\min_{\alpha}\;g(\alpha)=\frac{1}{2}(\alpha-\beta)^2+\lambda\rho(\alpha).$$

Such a Look-Up-Table (LUT) $\alpha_{opt}=S_{\rho,\lambda}(\beta)$ can be built for ANY sparsity measure function $\rho(\alpha)$, including non-convex and non-smooth ones (e.g., the $L_0$ norm). In all cases it gives the GLOBAL minimizer of $g(\alpha)$.

[Figure: the LUT curve, $\alpha_{opt}$ versus $\beta$.]
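A minimal NumPy sketch of building such a LUT by brute force. It assumes nothing about ρ beyond being evaluable on a grid. For each input β, it searches a fine grid of candidate outputs for the global minimizer of g. The helper name `build_lut` and the grid sizes are illustrative choices, not part of the original method. For ρ = |α| the table should reproduce soft thresholding at λ. For the $L_0$ count it should reproduce hard thresholding at $\sqrt{2\lambda}$.

```python
import numpy as np

def build_lut(rho, lam, betas, grid):
    """Tabulate alpha_opt(beta) = argmin_a 0.5*(a - beta)**2 + lam*rho(a).

    The minimization is an exhaustive search over `grid`, so it returns the
    global minimizer (up to grid resolution) even for a non-convex rho.
    """
    # cost[i, k] = 0.5*(grid[k] - betas[i])**2 + lam*rho(grid[k])
    cost = 0.5 * (grid[None, :] - betas[:, None]) ** 2 + lam * rho(grid)[None, :]
    return grid[np.argmin(cost, axis=1)]

lam = 1.0
betas = np.linspace(-4.0, 4.0, 81)                         # LUT inputs
grid = np.union1d(np.linspace(-6.0, 6.0, 12001), [0.0])    # candidate outputs, 0 included

l1_lut = build_lut(np.abs, lam, betas, grid)                            # rho(a) = |a|
l0_lut = build_lut(lambda a: (a != 0).astype(float), lam, betas, grid)  # rho(a) = L0 count

# Closed forms that the tables should match
soft = np.sign(betas) * np.maximum(np.abs(betas) - lam, 0.0)    # soft threshold at lam
hard = np.where(np.abs(betas) > np.sqrt(2.0 * lam), betas, 0.0) # hard threshold at sqrt(2 lam)
```

In practice the table would be built once, off-line, and then applied entry by entry to any vector β.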

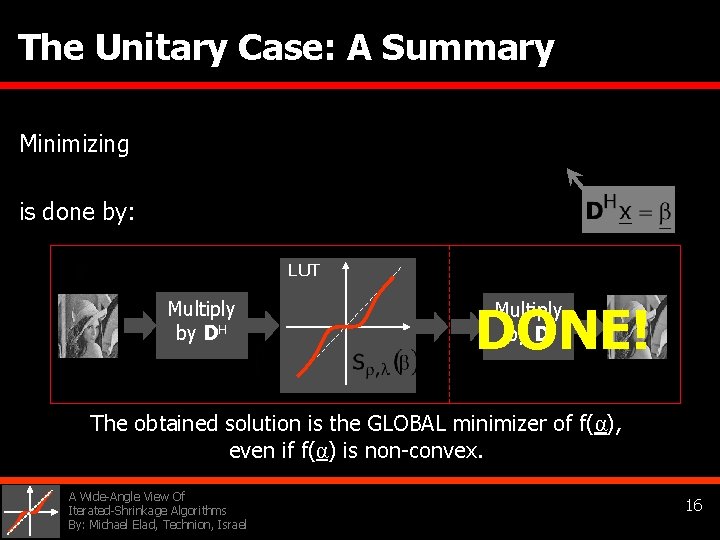

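The Unitary Case: A Summary

Minimizing $f(\alpha)=\frac{1}{2}\|\mathbf{D}\alpha-\mathbf{x}\|_2^2+\lambda\rho(\alpha)$ takes three steps:

1. Multiply $\mathbf{x}$ by $\mathbf{D}^H$.
2. Apply the LUT, entry by entry.
3. Multiply by $\mathbf{D}$ to obtain the signal. DONE!

$$\hat{\alpha}=S_{\rho,\lambda}\left(\mathbf{D}^H\mathbf{x}\right),\qquad\hat{\mathbf{x}}=\mathbf{D}\hat{\alpha}.$$

The obtained solution is the GLOBAL minimizer of f(α), even if f(α) is non-convex.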
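A minimal NumPy sketch of this three-step recipe. It rests on two assumptions that the slide does not fix: D is a real orthogonal matrix (so $\mathbf{D}^H=\mathbf{D}^T$), and ρ(α) = |α|, whose LUT is soft thresholding. The helper names `soft` and `f` are hypothetical. The accompanying check perturbs the solution at random. Because the problem separates into independent 1D problems, no perturbation should lower f.

```python
import numpy as np

def soft(beta, t):
    """Soft thresholding: the LUT S_{rho,lambda} for rho = |.| (L1 assumed)."""
    return np.sign(beta) * np.maximum(np.abs(beta) - t, 0.0)

def f(alpha, D, x, lam):
    """f(alpha) = 0.5*||D alpha - x||^2 + lam*||alpha||_1."""
    return 0.5 * np.sum((D @ alpha - x) ** 2) + lam * np.sum(np.abs(alpha))

rng = np.random.default_rng(0)
n = 32
D, _ = np.linalg.qr(rng.standard_normal((n, n)))   # random real orthogonal (unitary) D
x = rng.standard_normal(n)
lam = 0.5

beta = D.T @ x               # step 1: multiply by D^H
alpha_hat = soft(beta, lam)  # step 2: scalar LUT, entry by entry
x_hat = D @ alpha_hat        # step 3: multiply by D
```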

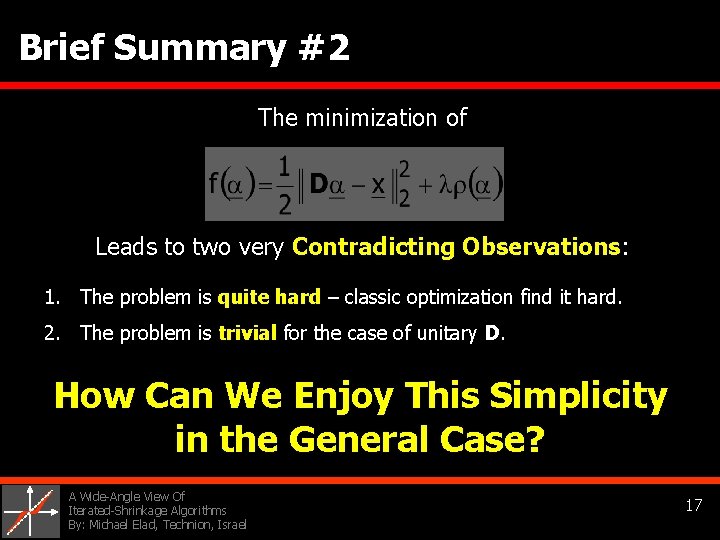

Brief Summary #2

The minimization of $f(\alpha)$ leads to two very contradictory observations:

1. The problem is quite hard: classic optimization finds it hard.
2. The problem is trivial for the case of unitary $\mathbf{D}$.

How Can We Enjoy This Simplicity in the General Case?


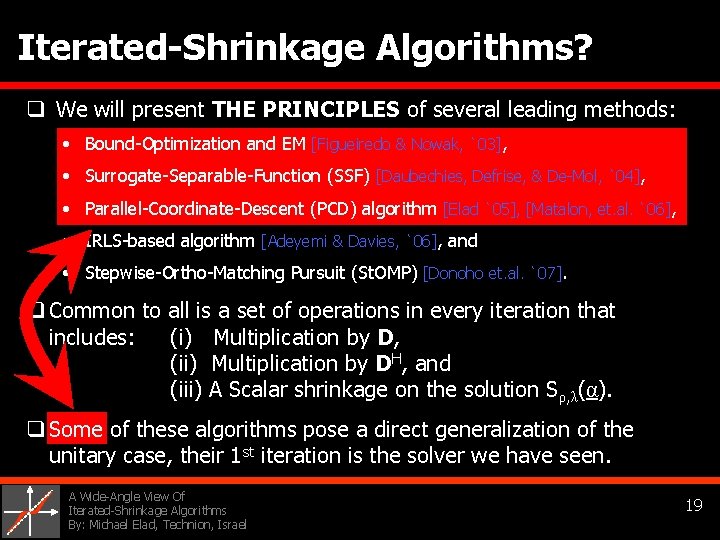

Iterated-Shrinkage Algorithms?

- We will present THE PRINCIPLES of several leading methods:
  - Bound-Optimization and EM [Figueiredo & Nowak, '03],
  - Surrogate-Separable-Function (SSF) [Daubechies, Defrise, & De-Mol, '04],
  - Parallel-Coordinate-Descent (PCD) algorithm [Elad '05], [Matalon et al. '06],
  - IRLS-based algorithm [Adeyemi & Davies, '06], and
  - Stagewise Orthogonal Matching Pursuit (StOMP) [Donoho et al. '07].
- Common to all is a set of operations in every iteration that includes: (i) multiplication by $\mathbf{D}$, (ii) multiplication by $\mathbf{D}^H$, and (iii) a scalar shrinkage on the solution, $S_{\rho,\lambda}(\alpha)$.
- Some of these algorithms are a direct generalization of the unitary case: their first iteration is the solver we have just seen.

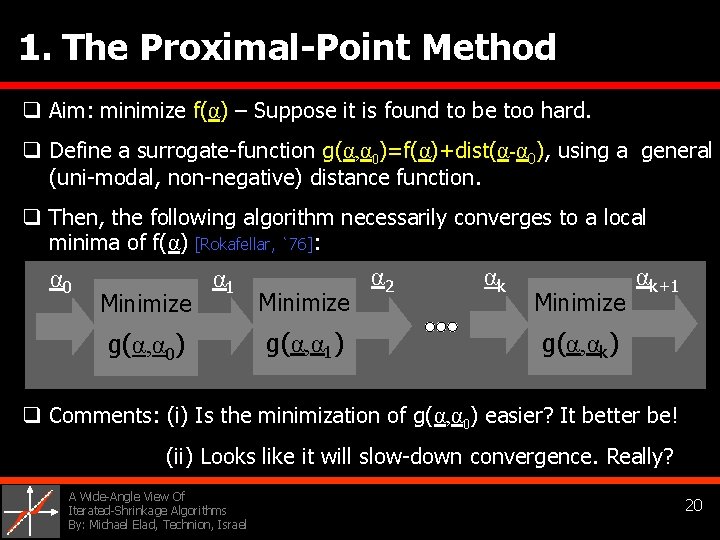

1. The Proximal-Point Method

- Aim: minimize f(α). Suppose it is found to be too hard.
- Define a surrogate function $g(\alpha,\alpha_0)=f(\alpha)+\mathrm{dist}(\alpha-\alpha_0)$, using a general (uni-modal, non-negative) distance function.
- Then, the following algorithm necessarily converges to a local minimum of f(α) [Rockafellar, '76]: starting from $\alpha_0$, iterate

$$\alpha_{k+1}=\arg\min_{\alpha}\;g(\alpha,\alpha_k),\qquad k=0,1,2,\ldots$$

- Comments: (i) Is the minimization of $g(\alpha,\alpha_0)$ easier? It had better be! (ii) It looks like this will slow down convergence. Really?

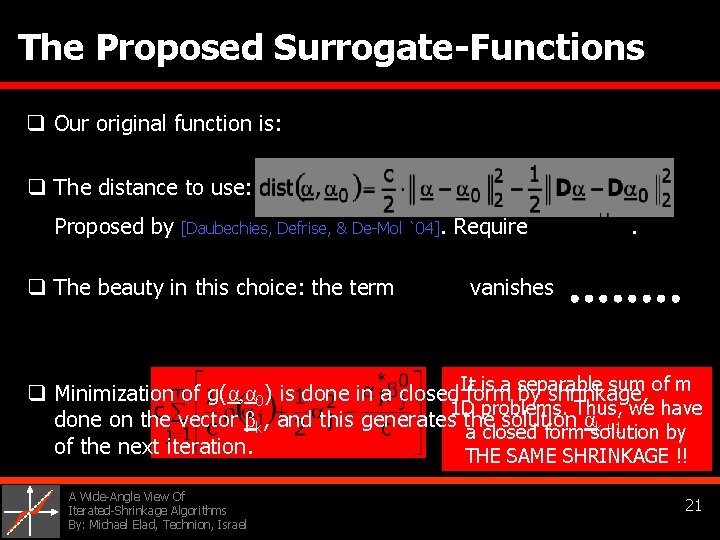

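The Proposed Surrogate-Functions

- Our original function is $f(\alpha)=\frac{1}{2}\|\mathbf{D}\alpha-\mathbf{x}\|_2^2+\lambda\rho(\alpha)$.
- The distance to use, proposed by [Daubechies, Defrise, & De-Mol '04]:

$$\mathrm{dist}(\alpha,\alpha_0)=\frac{c}{2}\|\alpha-\alpha_0\|_2^2-\frac{1}{2}\|\mathbf{D}\alpha-\mathbf{D}\alpha_0\|_2^2.$$

  This requires $c>r(\mathbf{D}^H\mathbf{D})$, the spectral radius (largest eigenvalue) of $\mathbf{D}^H\mathbf{D}$, so that the distance is non-negative.
- The beauty in this choice: the term $\alpha^H\mathbf{D}^H\mathbf{D}\alpha$ vanishes, leaving

$$g(\alpha,\alpha_0)=\frac{c}{2}\|\alpha-\beta_0\|_2^2+\lambda\rho(\alpha)+\text{const},\qquad \beta_0=\frac{1}{c}\mathbf{D}^H(\mathbf{x}-\mathbf{D}\alpha_0)+\alpha_0.$$

  This is a separable sum of $m$ 1D problems. Thus, we have a closed-form solution by THE SAME SHRINKAGE!!
- The minimization of $g(\alpha,\alpha_k)$ is done in closed form by shrinkage on the vector $\beta_k$. This generates the solution of the next iteration, $\alpha_{k+1}=S_{\rho,\lambda/c}(\beta_k)$.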
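The claims on this slide can be checked numerically. The sketch below assumes a real random D, ρ = L1, and c set 1% above the largest eigenvalue of $\mathbf{D}^T\mathbf{D}$ (computed as the squared spectral norm). All names are illustrative. It builds $g(\alpha,\alpha_0)=f(\alpha)+\mathrm{dist}(\alpha,\alpha_0)$ and verifies four properties:

- g touches f at $\alpha_0$;
- g lies above f elsewhere;
- g differs from $\frac{c}{2}\|\alpha-\beta_0\|_2^2+\lambda\|\alpha\|_1$ only by a constant;
- g is minimized by shrinking $\beta_0$ with threshold λ/c.

```python
import numpy as np

rng = np.random.default_rng(1)
n, m, lam = 20, 50, 0.3
D = rng.standard_normal((n, m))
x = rng.standard_normal(n)
c = 1.01 * np.linalg.norm(D, 2) ** 2        # c > r(D^T D): largest eigenvalue of D^T D

def f(a):
    """f(a) = 0.5||D a - x||^2 + lam*||a||_1  (L1 sparsity measure assumed)."""
    return 0.5 * np.sum((D @ a - x) ** 2) + lam * np.sum(np.abs(a))

def dist(a, a0):
    """(c/2)||a - a0||^2 - 0.5||D(a - a0)||^2, non-negative because c > r(D^T D)."""
    d = a - a0
    return 0.5 * c * (d @ d) - 0.5 * np.sum((D @ d) ** 2)

def g(a, a0):
    """Surrogate function g(a, a0) = f(a) + dist(a, a0)."""
    return f(a) + dist(a, a0)

def separable_part(a, beta0):
    """(c/2)||a - beta0||^2 + lam*||a||_1: a sum of m independent 1D problems."""
    return 0.5 * c * np.sum((a - beta0) ** 2) + lam * np.sum(np.abs(a))

a0 = rng.standard_normal(m)
beta0 = (D.T @ (x - D @ a0)) / c + a0                            # vector fed to the shrinkage
a1 = np.sign(beta0) * np.maximum(np.abs(beta0) - lam / c, 0.0)   # S_{rho, lam/c}(beta0)
```

Since $f(\alpha_1)\le g(\alpha_1,\alpha_0)\le g(\alpha_0,\alpha_0)=f(\alpha_0)$, each such step cannot increase f.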

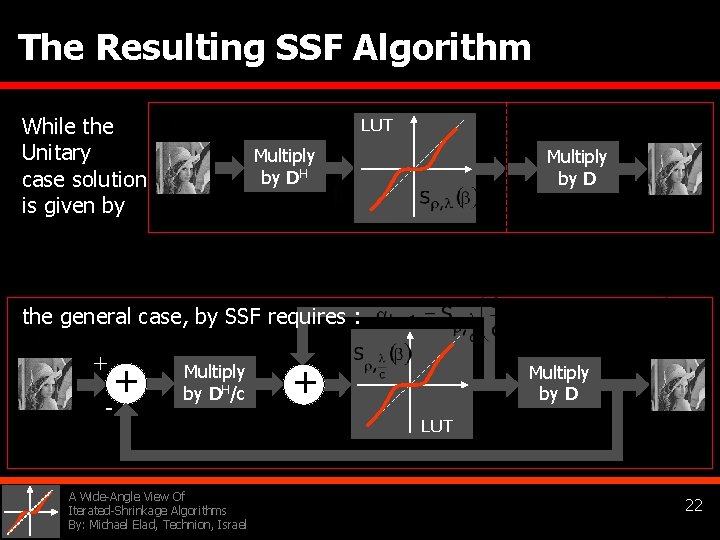

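The Resulting SSF Algorithm

While the unitary case solution is given by

$$\hat{\alpha}=S_{\rho,\lambda}\left(\mathbf{D}^H\mathbf{x}\right)$$

(multiply by $\mathbf{D}^H$, apply the LUT, multiply by $\mathbf{D}$), the general case, by SSF, requires

$$\alpha_{k+1}=S_{\rho,\lambda/c}\left(\frac{1}{c}\mathbf{D}^H(\mathbf{x}-\mathbf{D}\alpha_k)+\alpha_k\right).$$

Each iteration multiplies $\alpha_k$ by $\mathbf{D}$, subtracts the result from $\mathbf{x}$, multiplies by $\mathbf{D}^H/c$, adds $\alpha_k$, and applies the LUT.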
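A short NumPy sketch of the SSF iteration. It assumes a real D (so $\mathbf{D}^H=\mathbf{D}^T$), ρ = L1 (soft thresholding as the LUT), a zero initialization, and c set 1% above $r(\mathbf{D}^T\mathbf{D})$ unless given. The function name `ssf` is hypothetical. With an orthogonal D and c = 1, a single iteration reduces to the unitary-case solver.

```python
import numpy as np

def soft(beta, t):
    """Soft thresholding: the shrinkage S_{rho,t} for rho = |.| (L1 assumed)."""
    return np.sign(beta) * np.maximum(np.abs(beta) - t, 0.0)

def ssf(D, x, lam, n_iter=200, c=None):
    """SSF iterations a_{k+1} = S_{lam/c}( D^T (x - D a_k)/c + a_k ), starting from a_0 = 0.

    Returns the final estimate and the objective value after every iteration.
    """
    if c is None:
        c = 1.01 * np.linalg.norm(D, 2) ** 2   # c > r(D^T D)
    a = np.zeros(D.shape[1])
    history = []
    for _ in range(n_iter):
        residual = x - D @ a                   # multiply by D, subtract from x
        beta = D.T @ residual / c + a          # multiply by D^T / c, add a_k
        a = soft(beta, lam / c)                # scalar LUT, entry by entry
        # Objective evaluation is for monitoring only (it costs an extra product by D)
        history.append(0.5 * np.sum((D @ a - x) ** 2) + lam * np.sum(np.abs(a)))
    return a, history

rng = np.random.default_rng(2)
D = rng.standard_normal((30, 60))
x = rng.standard_normal(30)
alpha, hist = ssf(D, x, lam=0.2)
```

Because each step minimizes the surrogate of the previous slide, the recorded objective values should never increase.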

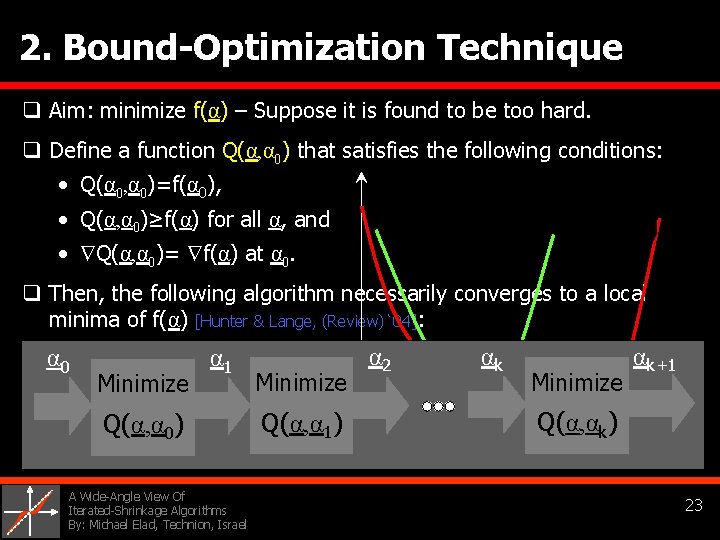

2. Bound-Optimization Technique

- Aim: minimize f(α). Suppose it is found to be too hard.
- Define a function $Q(\alpha,\alpha_0)$ that satisfies the following conditions:
  - $Q(\alpha_0,\alpha_0)=f(\alpha_0)$,
  - $Q(\alpha,\alpha_0)\ge f(\alpha)$ for all $\alpha$, and
  - $\nabla Q(\alpha,\alpha_0)=\nabla f(\alpha)$ at $\alpha=\alpha_0$.
- Then, the following algorithm necessarily converges to a local minimum of f(α) [Hunter & Lange (Review) '04]:

$$\alpha_{k+1}=\arg\min_{\alpha}\;Q(\alpha,\alpha_k).$$

- Well, regarding this method …
  - The above is closely related to the EM algorithm [Neal & Hinton, '98].
  - Figueiredo & Nowak's method ('03) uses the BO idea to minimize f(α). They use the VERY SAME surrogate functions we saw before.

[Figure: f(α) with the successive bounds Q(α, α0), Q(α, α1), …, Q(α, αk) and the iterates α0, α1, α2, ….]

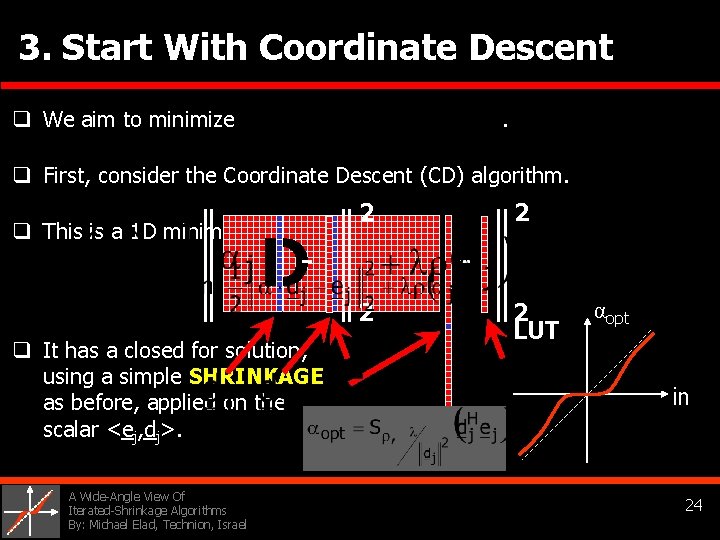

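3. Start With Coordinate Descent

- We aim to minimize $f(\alpha)=\frac{1}{2}\|\mathbf{D}\alpha-\mathbf{x}\|_2^2+\lambda\rho(\alpha)$.
- First, consider the Coordinate Descent (CD) algorithm, which updates one entry $\alpha_j$ at a time. Let $\mathbf{d}_j$ be the $j$-th atom and $\mathbf{e}_j=\mathbf{x}-\mathbf{D}\alpha+\mathbf{d}_j\alpha_j$ the residual without the contribution of atom $j$. Each update is then a 1D minimization problem:

$$\min_{z}\;\frac{1}{2}\|\mathbf{e}_j-\mathbf{d}_j z\|_2^2+\lambda\rho(z).$$

- It has a closed-form solution, using a simple SHRINKAGE as before, applied to the scalar $\langle\mathbf{e}_j,\mathbf{d}_j\rangle$:

$$\alpha_j^{opt}=S_{\rho,\lambda/\|\mathbf{d}_j\|_2^2}\left(\frac{\mathbf{d}_j^H\mathbf{e}_j}{\|\mathbf{d}_j\|_2^2}\right).$$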
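A minimal cyclic-CD sketch in NumPy. It assumes a real D and ρ = L1 (so the 1D shrinkage is soft thresholding with threshold $\lambda/\|\mathbf{d}_j\|_2^2$). It keeps the residual up to date so that each coordinate update costs one column of D. Function names are hypothetical. Since every coordinate step is an exact 1D minimization, more sweeps can only lower f.

```python
import numpy as np

def soft(beta, t):
    """Soft thresholding (the L1 shrinkage)."""
    return np.sign(beta) * np.maximum(np.abs(beta) - t, 0.0)

def objective(D, x, a, lam):
    """f(a) = 0.5||D a - x||^2 + lam*||a||_1."""
    return 0.5 * np.sum((D @ a - x) ** 2) + lam * np.sum(np.abs(a))

def coordinate_descent(D, x, lam, n_sweeps=50):
    """Cyclic CD for f(a) = 0.5||D a - x||^2 + lam*||a||_1 (L1 rho assumed)."""
    m = D.shape[1]
    a = np.zeros(m)
    norms2 = np.sum(D ** 2, axis=0)           # ||d_j||^2 for every atom
    r = x.copy()                              # residual x - D a (a = 0 initially)
    for _ in range(n_sweeps):
        for j in range(m):
            d = D[:, j]
            e = r + d * a[j]                  # e_j: residual without atom j
            b = (d @ e) / norms2[j]           # normalized <e_j, d_j>
            a[j] = soft(b, lam / norms2[j])   # exact 1D minimizer via shrinkage
            r = e - d * a[j]                  # keep the residual up to date
    return a
```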

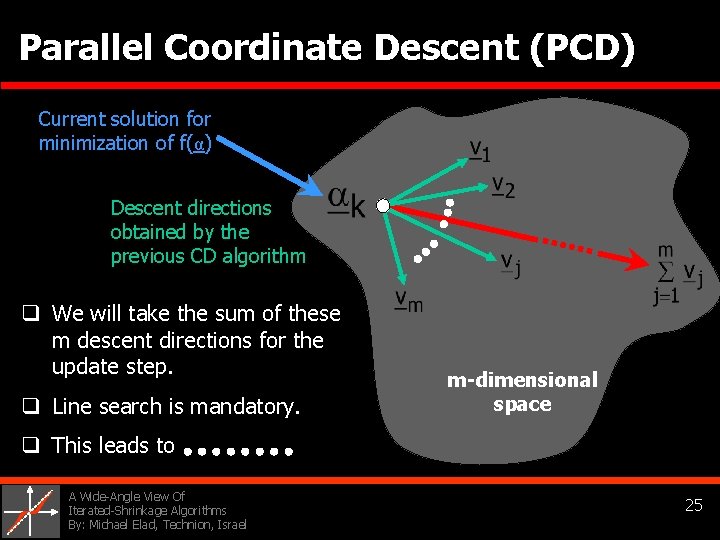

Parallel Coordinate Descent (PCD)

- Starting from the current solution $\alpha_k$ for the minimization of f(α), the previous CD algorithm yields $m$ descent directions $\mathbf{v}_j$, one per coordinate of the $m$-dimensional space.
- We will take the sum of these $m$ descent directions for the update step.
- Line search is mandatory.
- This leads to

$$\alpha_{k+1}=\alpha_k+\mu\sum_{j=1}^{m}\mathbf{v}_j.$$

![The PCD Algorithm slide](http://slidetodoc.com/presentation_image/11cb3c22810e5265d62278f68ac74e7c/image-26.jpg)

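The PCD Algorithm [Elad, '05] [Matalon, Elad, & Zibulevsky, '06]

$$\alpha_{k+1}=\alpha_k+\mu\left[S_{\rho,\mathbf{Q}\lambda}\left(\mathbf{Q}\mathbf{D}^H(\mathbf{x}-\mathbf{D}\alpha_k)+\alpha_k\right)-\alpha_k\right],$$

where $\mathbf{Q}=\mathrm{diag}(\mathbf{D}^H\mathbf{D})^{-1}$ (so entry $j$ is shrunk with threshold $\lambda Q_{jj}$) and $\mu$ represents a line search (LS). In block-diagram terms, each iteration:

1. Multiplies $\alpha_k$ by $\mathbf{D}$ and subtracts the result from $\mathbf{x}$.
2. Multiplies by $\mathbf{Q}\mathbf{D}^H$ and adds $\alpha_k$.
3. Applies the LUT.
4. Performs the line search.

Note: $\mathbf{Q}$ can be computed quite easily off-line. Its storage is just like storing the vector $\alpha_k$.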
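A sketch of PCD in NumPy. It assumes a real D and ρ = L1, with $\mathbf{Q}$ stored as a vector of per-atom weights. The line search is a golden-section search for μ on [0, 2]. This is a generic choice made here for illustration, not necessarily the line search used by the authors. A safeguard rejects any step that would increase f. The names `pcd` and `golden_section` are hypothetical, and the repeated `f` evaluations inside the line search are written for clarity, not efficiency.

```python
import numpy as np

def soft(beta, t):
    """Soft thresholding (L1 shrinkage); t may be a vector of per-entry thresholds."""
    return np.sign(beta) * np.maximum(np.abs(beta) - t, 0.0)

def golden_section(phi, lo=0.0, hi=2.0, n_iter=60):
    """Minimize a unimodal scalar function phi on [lo, hi] by golden-section search."""
    ratio = (np.sqrt(5.0) - 1.0) / 2.0
    a, b = lo, hi
    c1, c2 = b - ratio * (b - a), a + ratio * (b - a)
    f1, f2 = phi(c1), phi(c2)
    for _ in range(n_iter):
        if f1 < f2:                      # minimum lies in [a, c2]
            b, c2, f2 = c2, c1, f1
            c1 = b - ratio * (b - a)
            f1 = phi(c1)
        else:                            # minimum lies in [c1, b]
            a, c1, f1 = c1, c2, f2
            c2 = a + ratio * (b - a)
            f2 = phi(c2)
    return 0.5 * (a + b)

def pcd(D, x, lam, n_iter=30):
    """PCD sketch for f(a) = 0.5||D a - x||^2 + lam*||a||_1 (L1 rho assumed)."""
    f = lambda z: 0.5 * np.sum((D @ z - x) ** 2) + lam * np.sum(np.abs(z))
    q = 1.0 / np.sum(D ** 2, axis=0)          # diagonal of Q = diag(D^T D)^{-1}
    a = np.zeros(D.shape[1])
    history = [f(a)]
    for _ in range(n_iter):
        beta = q * (D.T @ (x - D @ a)) + a    # Q D^T (x - D a_k) + a_k
        v = soft(beta, lam * q) - a           # sum of the m CD directions
        mu = golden_section(lambda t: f(a + t * v))
        if f(a + mu * v) > f(a):              # safeguard: never accept an increase
            mu = 0.0
        a = a + mu * v
        history.append(f(a))
    return a, history
```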

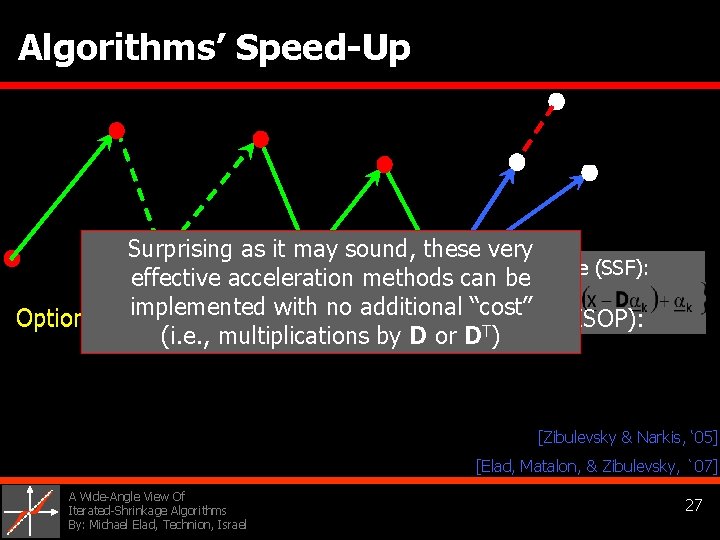

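Algorithms' Speed-Up

For example (SSF), define the direction $\mathbf{v}_k=S_{\rho,\lambda/c}\left(\frac{1}{c}\mathbf{D}^H(\mathbf{x}-\mathbf{D}\alpha_k)+\alpha_k\right)-\alpha_k$. There are three options for using it:

- Option 1: use $\mathbf{v}_k$ as is: $\alpha_{k+1}=\alpha_k+\mathbf{v}_k$.
- Option 2: use a line search along $\mathbf{v}_k$: $\alpha_{k+1}=\alpha_k+\mu\mathbf{v}_k$.
- Option 3: use Sequential Subspace OPtimization (SESOP) [Zibulevsky & Narkis, '05] [Elad, Matalon, & Zibulevsky, '07], which minimizes f over the subspace spanned by $\mathbf{v}_k$ and the most recent propagation directions $\alpha_k-\alpha_{k-1},\ldots$

Surprising as it may sound, these very effective acceleration methods can be implemented with no additional "cost" (i.e., no additional multiplications by $\mathbf{D}$ or $\mathbf{D}^H$).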
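A sketch of Option 2 that illustrates the "no additional cost" claim. It assumes a real D and ρ = L1. The idea is to carry $\mathbf{D}\alpha_k$ along. Once $\mathbf{D}\mathbf{v}_k$ is computed, every trial value $f(\alpha_k+\mu\mathbf{v}_k)$ needs only vector operations, and $\mathbf{D}\alpha_{k+1}=\mathbf{D}\alpha_k+\mu\mathbf{D}\mathbf{v}_k$ comes for free. Each iteration therefore uses exactly one product by $\mathbf{D}^T$ and one by $\mathbf{D}$, as plain SSF does. The golden-section line search and the names `ssf_ls` and `golden_section` are illustrative assumptions. SESOP (Option 3) is not shown.

```python
import numpy as np

def soft(beta, t):
    """Soft thresholding (L1 shrinkage)."""
    return np.sign(beta) * np.maximum(np.abs(beta) - t, 0.0)

def golden_section(phi, lo=0.0, hi=2.0, n_iter=60):
    """Minimize a unimodal scalar function phi on [lo, hi] by golden-section search."""
    ratio = (np.sqrt(5.0) - 1.0) / 2.0
    a, b = lo, hi
    c1, c2 = b - ratio * (b - a), a + ratio * (b - a)
    f1, f2 = phi(c1), phi(c2)
    for _ in range(n_iter):
        if f1 < f2:
            b, c2, f2 = c2, c1, f1
            c1 = b - ratio * (b - a)
            f1 = phi(c1)
        else:
            a, c1, f1 = c1, c2, f2
            c2 = a + ratio * (b - a)
            f2 = phi(c2)
    return 0.5 * (a + b)

def ssf_ls(D, x, lam, n_iter=50):
    """SSF with a line search along v_k (Option 2); L1 rho assumed."""
    c = 1.01 * np.linalg.norm(D, 2) ** 2     # c > r(D^T D), computed once off-line
    a = np.zeros(D.shape[1])
    Da = np.zeros(D.shape[0])                # D @ a, updated incrementally
    history = []
    for _ in range(n_iter):
        beta = D.T @ (x - Da) / c + a        # the single product by D^T
        v = soft(beta, lam / c) - a          # SSF direction v_k
        Dv = D @ v                           # the single product by D
        r = Da - x
        # f(a + mu v) = 0.5||r + mu Dv||^2 + lam||a + mu v||_1: no further products needed
        phi = lambda mu: 0.5 * np.sum((r + mu * Dv) ** 2) + lam * np.sum(np.abs(a + mu * v))
        mu = golden_section(phi)
        if phi(mu) > phi(1.0):               # never do worse than the plain SSF step (mu = 1)
            mu = 1.0
        f_new = phi(mu)                      # evaluate before a is overwritten
        a = a + mu * v
        Da = Da + mu * Dv
        history.append(f_new)
    return a, Da, history
```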

Brief Summary #3

For an effective minimization of the function $f(\alpha)$, we saw several iterated-shrinkage algorithms, built using:

1. Proximal Point Method
2. Bound Optimization
3. Parallel Coordinate Descent
4. Iterative Reweighted LS
5. Fixed Point Iteration
6. Greedy Algorithms

How Are They Performing?


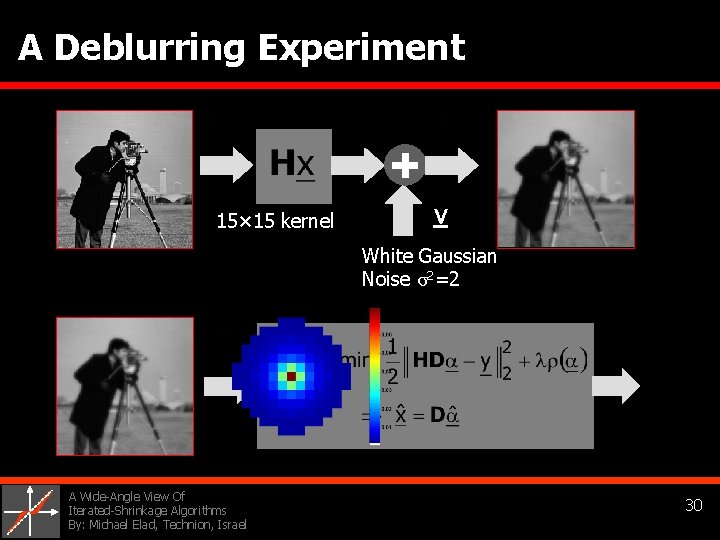

A Deblurring Experiment

The original image is blurred by a 15×15 kernel, and white Gaussian noise $\mathbf{v}$ with $\sigma^2=2$ is added.

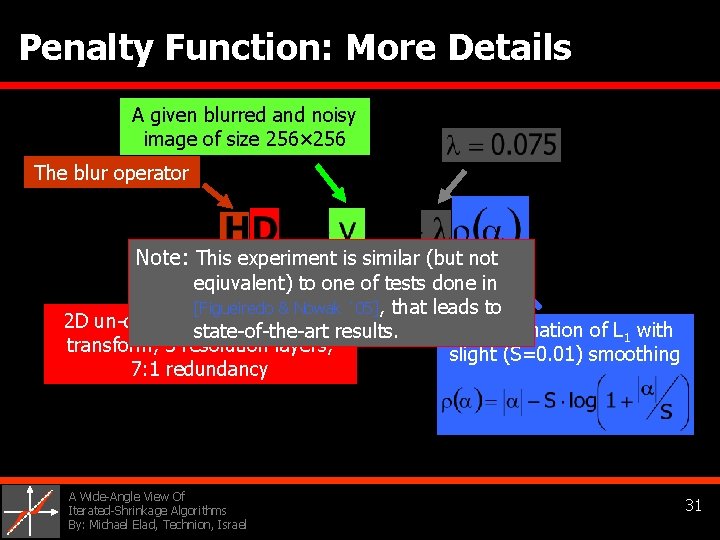

Penalty Function: More Details

$$f(\alpha)=\frac{1}{2}\|\mathbf{H}\mathbf{D}\alpha-\mathbf{y}\|_2^2+\lambda\rho(\alpha)$$

- $\mathbf{y}$: a given blurred and noisy image of size 256×256.
- $\mathbf{H}$: the blur operator.
- $\mathbf{D}$: a 2D un-decimated Haar wavelet transform with 3 resolution layers (7:1 redundancy).
- $\rho$: an approximation of $L_1$ with slight ($S=0.01$) smoothing.

Note: This experiment is similar (but not equivalent) to one of the tests done in [Figueiredo & Nowak '05], which leads to state-of-the-art results.

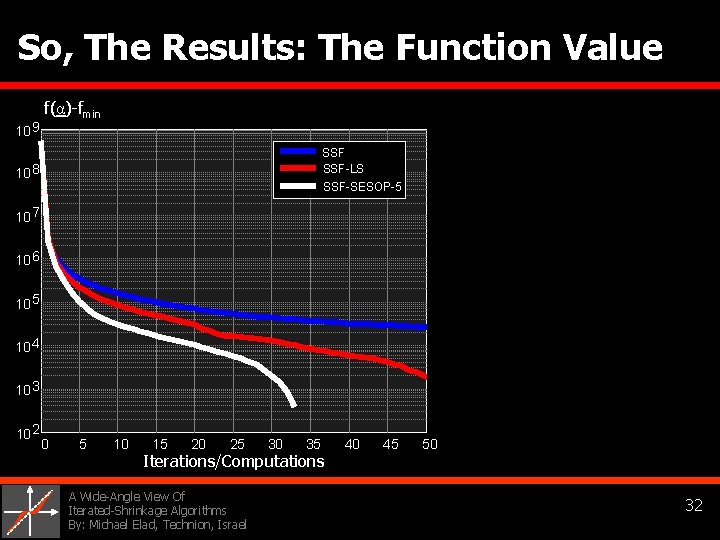

So, The Results: The Function Value

[Figure: $f(\alpha)-f_{min}$ (log scale, $10^2$ to $10^9$) versus iterations/computations (0 to 50), for SSF-LS and SSF-SESOP-5.]

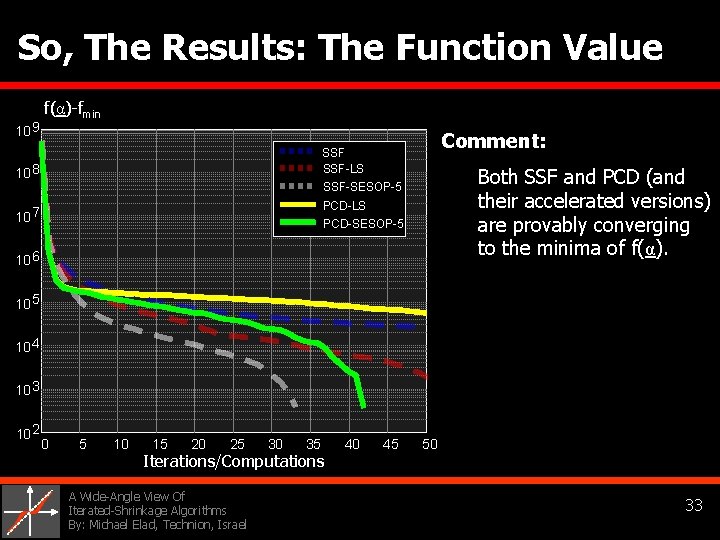

So, The Results: The Function Value

[Figure: $f(\alpha)-f_{min}$ (log scale, $10^2$ to $10^9$) versus iterations/computations (0 to 50), for SSF-LS, SSF-SESOP-5, PCD-LS, and PCD-SESOP-5.]

Comment: Both SSF and PCD (and their accelerated versions) provably converge to the minimum of f(α).

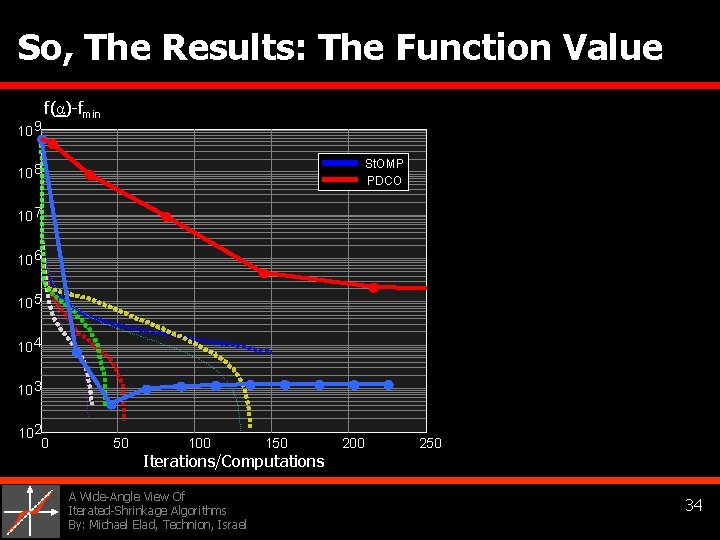

So, The Results: The Function Value

[Figure: $f(\alpha)-f_{min}$ (log scale, $10^2$ to $10^9$) versus iterations/computations (0 to 250), for StOMP and PDCO.]

![ISNR results: SSF-LS and SSF-SESOP-5](http://slidetodoc.com/presentation_image/11cb3c22810e5265d62278f68ac74e7c/image-35.jpg)

So, The Results: ISNR [dB]

[Figure: ISNR in dB (−20 to 10) versus iterations/computations (0 to 50), for SSF-LS and SSF-SESOP-5; annotated 6.41 dB.]

![ISNR results: SSF and PCD variants](http://slidetodoc.com/presentation_image/11cb3c22810e5265d62278f68ac74e7c/image-36.jpg)

So, The Results: ISNR [dB]

[Figure: ISNR in dB (−20 to 10) versus iterations/computations (0 to 50), for SSF-LS, SSF-SESOP-5, PCD-LS, and PCD-SESOP-5; annotated 7.03 dB.]

![ISNR results: StOMP and PDCO](http://slidetodoc.com/presentation_image/11cb3c22810e5265d62278f68ac74e7c/image-37.jpg)

So, The Results: ISNR [dB]

[Figure: ISNR in dB (−20 to 10) versus iterations/computations (0 to 250), for StOMP and PDCO.]

Comments:

- StOMP is inferior in speed and final quality (ISNR = 5.91 dB) due to an over-estimated support.
- PDCO is very slow due to the numerous inner least-squares iterations done by CG. It is not competitive with the iterated-shrinkage methods.

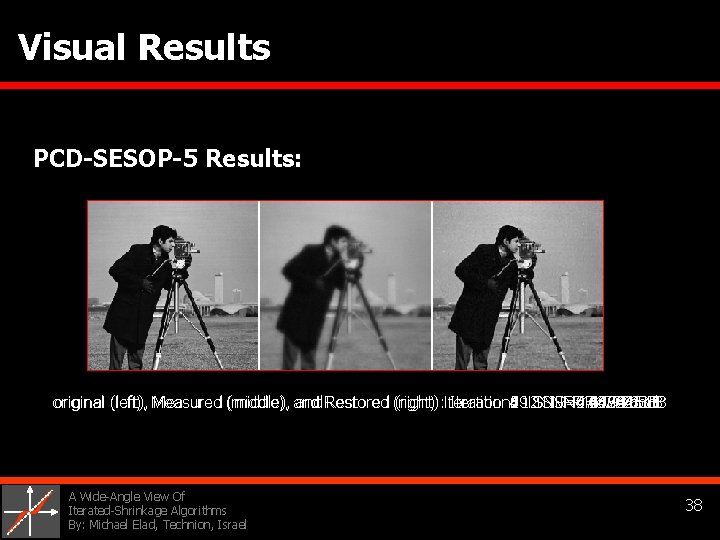

Visual Results

PCD-SESOP-5 results: original (left), measured (middle), and restored (right). ISNR of the restored image along the iterations:

| Iteration | ISNR [dB] |
|---|---|
| 0 | -16.7728 |
| 1 | 0.069583 |
| 2 | 2.46924 |
| 3 | 4.1824 |
| 4 | 4.9726 |
| 5 | 5.5875 |
| 6 | 6.2188 |
| 7 | 6.6479 |
| 8 | 6.6789 |
| 12 | 6.9416 |
| 19 | 7.0322 |


Conclusions: The Bottom Line

If your work leads you to the need to minimize the problem

$$f(\alpha)=\frac{1}{2}\|\mathbf{D}\alpha-\mathbf{x}\|_2^2+\lambda\rho(\alpha),$$

then:

- We recommend that you use an Iterated-Shrinkage algorithm.
- SSF and PCD are preferred: both provably converge to the (local) minima of f(α), and their performance is very good, reaching a reasonable result in a few iterations.
- Use SESOP acceleration: it is very effective and comes at hardly any cost.
- There is room for more work on various aspects of these algorithms; see the accompanying paper.

Thank You for Your Time & Attention

This field of research is very hot … More information, including these slides and the accompanying paper, can be found on my web-page: http://www.cs.technion.ac.il/~elad

THE END!!


![The IRLS-Based Algorithm slide (1)](http://slidetodoc.com/presentation_image/11cb3c22810e5265d62278f68ac74e7c/image-43.jpg)

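3. The IRLS-Based Algorithm

- Use the following principles [Adeyemi & Davies '06]: (1) Iterative Reweighted Least-Squares (IRLS) and (2) Fixed-Point Iteration.
- (1) IRLS: write the gradient of $\lambda\rho(\alpha)$ as $\lambda\mathbf{W}(\alpha)\alpha$, with the diagonal matrix $\mathbf{W}(\alpha)=\mathrm{diag}\{\rho'(\alpha_j)/\alpha_j\}$. Setting the gradient of f to zero and freezing the weights at $\alpha_k$ gives

$$\mathbf{D}^H(\mathbf{D}\alpha-\mathbf{x})+\lambda\mathbf{W}(\alpha)\alpha=\mathbf{0}\quad\Longrightarrow\quad\alpha_{k+1}=\left(\mathbf{D}^H\mathbf{D}+\lambda\mathbf{W}(\alpha_k)\right)^{-1}\mathbf{D}^H\mathbf{x}.$$

  This is the IRLS algorithm, used in FOCUSS [Gorodnitsky & Rao '97].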
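A FOCUSS-style IRLS sketch in NumPy. It rests on one key assumption: ρ is taken as the smoothed $L_1$ measure $\rho(\alpha)=\sqrt{\alpha^2+\varepsilon^2}$, so the weights $\rho'(\alpha_j)/\alpha_j=1/\sqrt{\alpha_j^2+\varepsilon^2}$ stay finite. D is real, and the start is the minimum-norm least-squares solution. The names `irls` and `f_eps` are hypothetical. With this ρ, each reweighted solve minimizes a quadratic upper bound of the smoothed objective, so the recorded values should not increase. The price is one full linear solve per iteration.

```python
import numpy as np

def f_eps(D, x, a, lam, eps):
    """Smoothed objective 0.5||D a - x||^2 + lam*sum(sqrt(a_j^2 + eps^2)) (assumed rho)."""
    return 0.5 * np.sum((D @ a - x) ** 2) + lam * np.sum(np.sqrt(a ** 2 + eps ** 2))

def irls(D, x, lam, eps=1e-3, n_iter=30):
    """IRLS: freeze W = diag(rho'(a_j)/a_j) at a_k and solve (D^T D + lam W) a = D^T x."""
    G = D.T @ D
    Dtx = D.T @ x
    a = np.linalg.lstsq(D, x, rcond=None)[0]    # minimum-norm least-squares start
    history = [f_eps(D, x, a, lam, eps)]
    for _ in range(n_iter):
        w = 1.0 / np.sqrt(a ** 2 + eps ** 2)    # rho'(a_j)/a_j for the smoothed L1
        a = np.linalg.solve(G + lam * np.diag(w), Dtx)
        history.append(f_eps(D, x, a, lam, eps))
    return a, history
```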

![The IRLS-Based Algorithm slide (2): Fixed-Point Iteration](http://slidetodoc.com/presentation_image/11cb3c22810e5265d62278f68ac74e7c/image-44.jpg)

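The IRLS-Based Algorithm (continued)

- Use the following principles [Adeyemi & Davies '06]: (2) Fixed-Point Iteration.
- The Fixed-Point-Iteration method. Task: solve the system $\mathbf{D}^H(\mathbf{D}\alpha-\mathbf{x})+\lambda\mathbf{W}(\alpha)\alpha=\mathbf{0}$.
- Idea: add $c\alpha$ to both sides and assign iteration indices, so that only a matrix with diagonal entries needs to be inverted:

$$\left(c\mathbf{I}+\lambda\mathbf{W}(\alpha_k)\right)\alpha_{k+1}=\mathbf{D}^H(\mathbf{x}-\mathbf{D}\alpha_k)+c\alpha_k.$$

- The actual algorithm:

$$\alpha_{k+1}=\left(\mathbf{I}+\frac{\lambda}{c}\mathbf{W}(\alpha_k)\right)^{-1}\left(\frac{1}{c}\mathbf{D}^H(\mathbf{x}-\mathbf{D}\alpha_k)+\alpha_k\right).$$

- Notes: (1) For convergence, we should require $c>r(\mathbf{D}^H\mathbf{D})/2$. (2) This algorithm cannot guarantee a local minimum.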
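The fixed-point iteration replaces the linear solve of IRLS by a diagonal division. It acts on the same vector $\beta_k$ that SSF shrinks. The NumPy sketch below assumes a real D and the smoothed $L_1$ measure $\rho(\alpha)=\sqrt{\alpha^2+\varepsilon^2}$. It also chooses, by default, c slightly above $r(\mathbf{D}^T\mathbf{D})$. That is stricter than the slide's $c>r(\mathbf{D}^H\mathbf{D})/2$, and it is picked here because it makes every step a majorize-minimize step for the smoothed objective. The names `fixed_point_irls` and `f_eps` are hypothetical.

```python
import numpy as np

def f_eps(D, x, a, lam, eps):
    """Smoothed objective 0.5||D a - x||^2 + lam*sum(sqrt(a_j^2 + eps^2)) (assumed rho)."""
    return 0.5 * np.sum((D @ a - x) ** 2) + lam * np.sum(np.sqrt(a ** 2 + eps ** 2))

def fixed_point_irls(D, x, lam, eps=1e-3, n_iter=200, c=None):
    """a_{k+1} = (I + (lam/c) W(a_k))^{-1} (D^T (x - D a_k)/c + a_k),
    with W(a) = diag(1/sqrt(a_j^2 + eps^2)) from the smoothed L1.
    """
    if c is None:
        # Stricter than the slide's c > r(D^T D)/2: with c > r(D^T D), each step minimizes
        # an upper bound of f_eps, so the smoothed objective cannot increase
        c = 1.01 * np.linalg.norm(D, 2) ** 2
    a = D.T @ x / c                             # non-zero starting point
    history = [f_eps(D, x, a, lam, eps)]
    for _ in range(n_iter):
        beta = D.T @ (x - D @ a) / c + a        # the same beta_k as in SSF
        w = 1.0 / np.sqrt(a ** 2 + eps ** 2)    # diagonal entries of W(a_k)
        a = beta / (1.0 + (lam / c) * w)        # diagonal inverse: an entry-wise "shrinkage"
        history.append(f_eps(D, x, a, lam, eps))
    return a, history
```

Each step costs one product by D and one by $\mathbf{D}^T$, like the other iterated-shrinkage methods.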

![Stagewise-OMP slide](http://slidetodoc.com/presentation_image/11cb3c22810e5265d62278f68ac74e7c/image-45.jpg)

4. Stagewise-OMP [Donoho, Drori, Starck, & Tsaig, '07]

Each stage:

1. Multiplies the current solution by $\mathbf{D}$ and subtracts the result from $\mathbf{x}$.
2. Multiplies the residual by $\mathbf{D}^H$.
3. Applies a LUT (threshold) to add entries to the support $S$.
4. Minimizes $\|\mathbf{D}\alpha-\mathbf{x}\|_2^2$ on $S$.

- StOMP was originally designed to find the sparsest solution of $\mathbf{D}\alpha=\mathbf{x}$, especially for a random dictionary $\mathbf{D}$ (Compressed-Sensing).
- Nevertheless, it is used elsewhere (restoration) [Fadili & Starck, '06].
- If S grows by one item at each iteration, this becomes OMP.
- The LS step uses $K_0$ CG steps, each equivalent to one iterated-shrinkage step.
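A compact StOMP-style sketch in NumPy. It assumes a real D with unit-norm columns and a threshold rule of $t\,\|\mathbf{r}\|_2/\sqrt{n}$. That rule mirrors the formal-noise-level rule described for StOMP as we understand it, and t = 2.5 is only an illustrative value. Exact least squares via `numpy.linalg.lstsq` stands in for the $K_0$ CG steps mentioned above. The function name `stomp` is hypothetical. The accompanying check relies only on a property that holds for any support: after the least-squares fit, the residual is orthogonal to every selected atom.

```python
import numpy as np

def stomp(D, x, t=2.5, n_stages=10):
    """Stagewise OMP sketch (unit-norm columns in D assumed).

    Each stage adds every atom whose correlation with the residual exceeds
    t * ||r|| / sqrt(n), then re-fits x by least squares on the support S.
    """
    n, m = D.shape
    support = np.zeros(m, dtype=bool)
    a = np.zeros(m)
    r = x.copy()
    for _ in range(n_stages):
        corr = D.T @ r                                   # multiply the residual by D^T
        thresh = t * np.linalg.norm(r) / np.sqrt(n)      # hard-threshold level (the "LUT")
        new = (np.abs(corr) > thresh) & ~support
        if not new.any():
            break                                        # no new atom survives: stop
        support |= new
        coef, *_ = np.linalg.lstsq(D[:, support], x, rcond=None)  # min ||D a - x||^2 on S
        a = np.zeros(m)
        a[support] = coef
        r = x - D @ a
    return a, support, r
```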

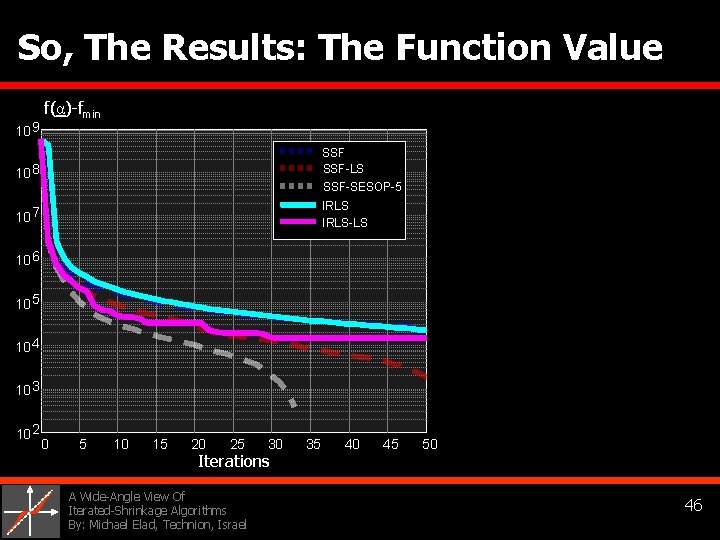

So, The Results: The Function Value

[Figure: $f(\alpha)-f_{min}$ (log scale, $10^2$ to $10^9$) versus iterations (0 to 50), for SSF-LS, SSF-SESOP-5, and IRLS-LS.]

![ISNR results: SSF-LS, SSF-SESOP-5, and IRLS-LS](http://slidetodoc.com/presentation_image/11cb3c22810e5265d62278f68ac74e7c/image-47.jpg)

So, The Results: ISNR [dB]

[Figure: ISNR in dB (−20 to 10) versus iterations (0 to 50), for SSF-LS, SSF-SESOP-5, and IRLS-LS.]
