Some Recent Progress in Combinatorial Statistics Elchanan Mossel

Some Recent Progress in Combinatorial Statistics Elchanan Mossel, UC Berkeley + Weizmann Institute http: //www. stat. berkeley. edu/~mossel

What is Combinatorial Statistics • “Combinatorial Statistics” deals with Inference of • Discrete parameters such as Graph, Trees or Partitions. • With the goal of providing algorithms with guarantees on: • Sampling Complexity, Computational Complexity and Success Probability. • Alternatively: Sampling or Complexity Lower bounds.

Example 1: Graphical Model reconstruction

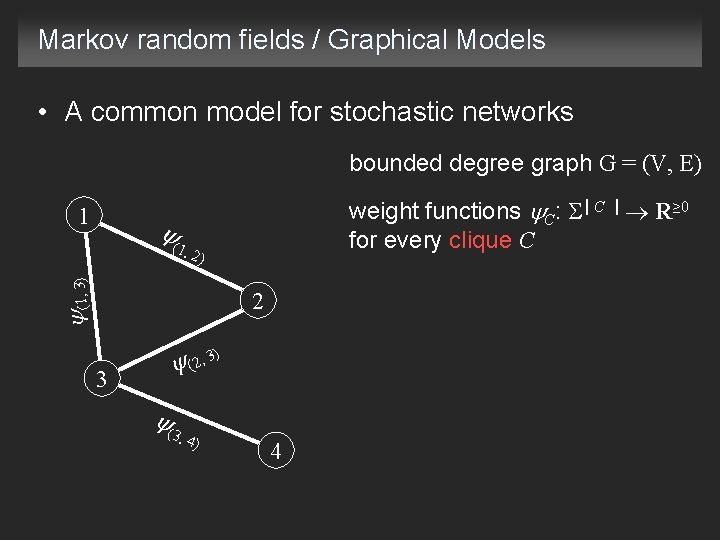

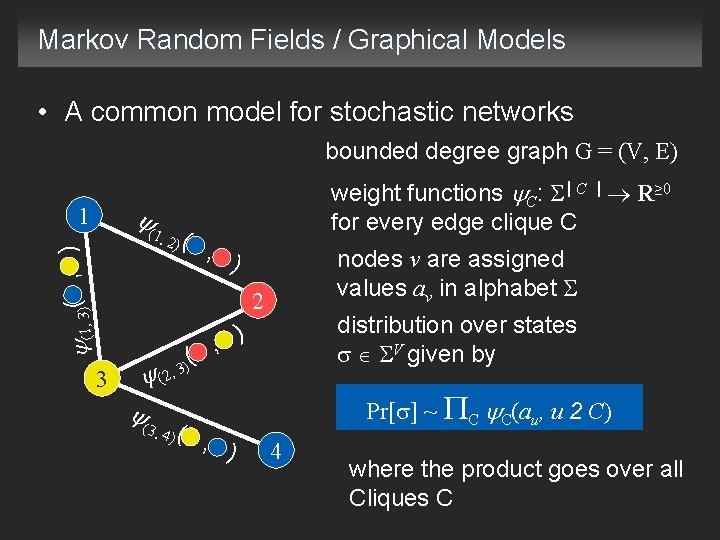

Markov random fields / Graphical Models • A common model for stochastic networks bounded degree graph G = (V, E) 1 weight functions C: | C | R≥ 0 for every clique C ( (1, 3) 1, 2 ) 2 3 ( 3) (2, 3, 4 ) 4

Markov Random Fields / Graphical Models • A common model for stochastic networks bounded degree graph G = (V, E) ( 1 )( ) (1, 3)( , 1, 2 weight functions C: | C | R≥ 0 for every edge clique C , ) nodes v are assigned values av in alphabet 2 3 (2, ( distribution over states V given by ) , ( 3) 3, 4 ( ) , ) Pr[ ] ~ C C(au, u 2 C) 4 where the product goes over all Cliques C

Reconstruction task for Markov random fields • Suppose we can obtain independent samples from the Markov random field • Given observed data at the nodes, is it possible to reconstruct the model (network)? • Important: Do we see data at all of the nodes or only a subset?

Reconstruction problem Problem: Given k independent samples of = ( 1, … n) at all the nodes, find the graph G (Given activity at all the nodes, can network be reconstructed? ) • Restrict attention to graphs with max degree d: • A structure estimator is a map Questions: 1. How many samples k are required (asymptotically) in order to reconstruct MRFs with number of nodes n, max degree d with probability approaching 1, I. e. 2. Want an efficient algorithm for reconstruction.

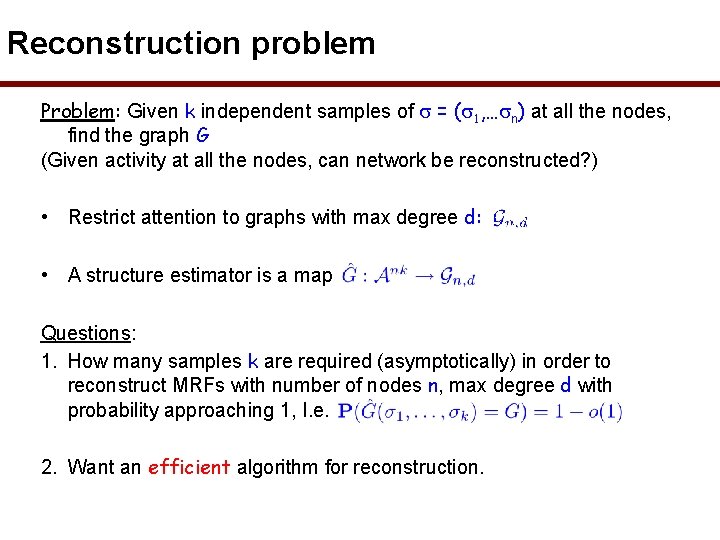

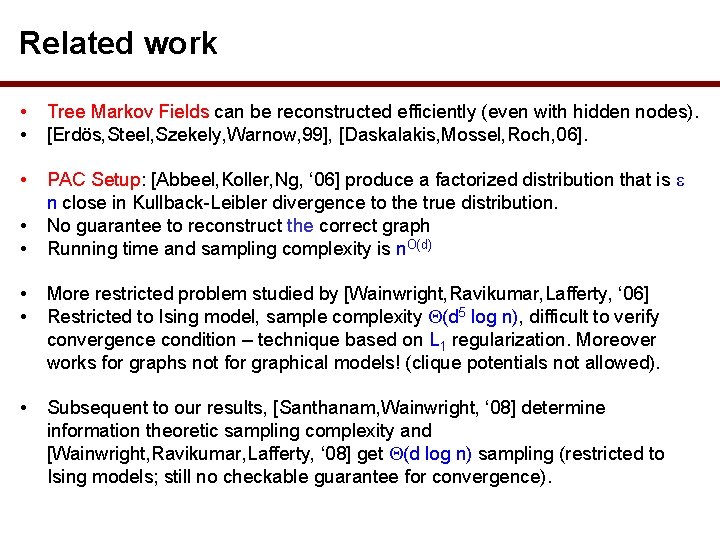

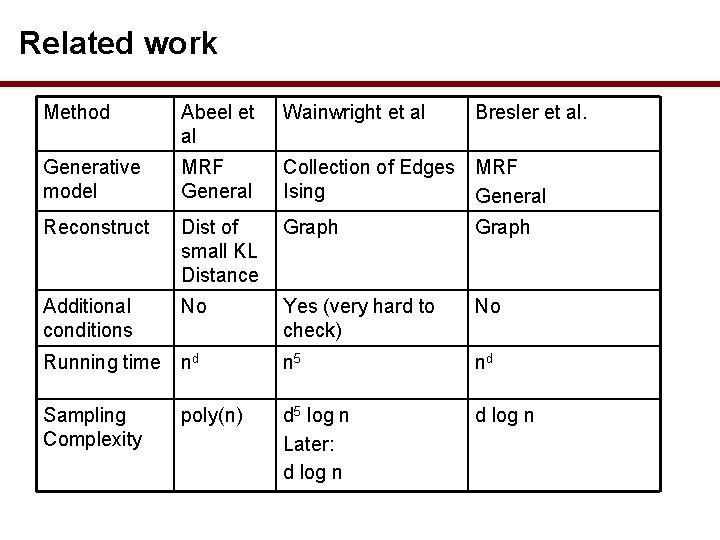

Related work • • Tree Markov Fields can be reconstructed efficiently (even with hidden nodes). [Erdös, Steel, Szekely, Warnow, 99], [Daskalakis, Mossel, Roch, 06]. • PAC Setup: [Abbeel, Koller, Ng, ‘ 06] produce a factorized distribution that is n close in Kullback-Leibler divergence to the true distribution. No guarantee to reconstruct the correct graph Running time and sampling complexity is n. O(d) • • More restricted problem studied by [Wainwright, Ravikumar, Lafferty, ‘ 06] Restricted to Ising model, sample complexity (d 5 log n), difficult to verify convergence condition – technique based on L 1 regularization. Moreover works for graphs not for graphical models! (clique potentials not allowed). • Subsequent to our results, [Santhanam, Wainwright, ‘ 08] determine information theoretic sampling complexity and [Wainwright, Ravikumar, Lafferty, ‘ 08] get (d log n) sampling (restricted to Ising models; still no checkable guarantee for convergence).

Related work Method Abeel et al Wainwright et al Bresler et al. Generative model MRF General Collection of Edges MRF Ising General Reconstruct Dist of small KL Distance Graph Additional conditions No Yes (very hard to check) No Running time nd n 5 nd Sampling Complexity poly(n) d 5 log n Later: d log n

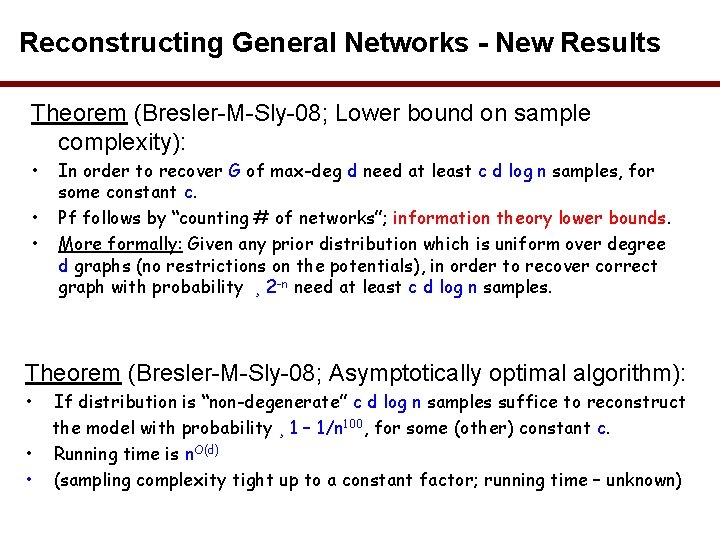

Reconstructing General Networks - New Results Theorem (Bresler-M-Sly-08; Lower bound on sample complexity): • • • In order to recover G of max-deg d need at least c d log n samples, for some constant c. Pf follows by “counting # of networks”; information theory lower bounds. More formally: Given any prior distribution which is uniform over degree d graphs (no restrictions on the potentials), in order to recover correct graph with probability ¸ 2 -n need at least c d log n samples. Theorem (Bresler-M-Sly-08; Asymptotically optimal algorithm): • • • If distribution is “non-degenerate” c d log n samples suffice to reconstruct the model with probability ¸ 1 – 1/n 100, for some (other) constant c. Running time is n. O(d) (sampling complexity tight up to a constant factor; running time – unknown)

Intuition Behind Algorithms • • • Observation: Knowing graph is same as knowing neighborhoods But neighborhood is determined by Markov property Same intuition behind work of Abeel et. al. “Algorithm”: Step 1. Compute empirical probabilities, which are concentrated around true probabilities Step 2. For each node, simply test Markov property of each candidate neighborhood Main Challenge: Show nondegeneracy ) algorithm works

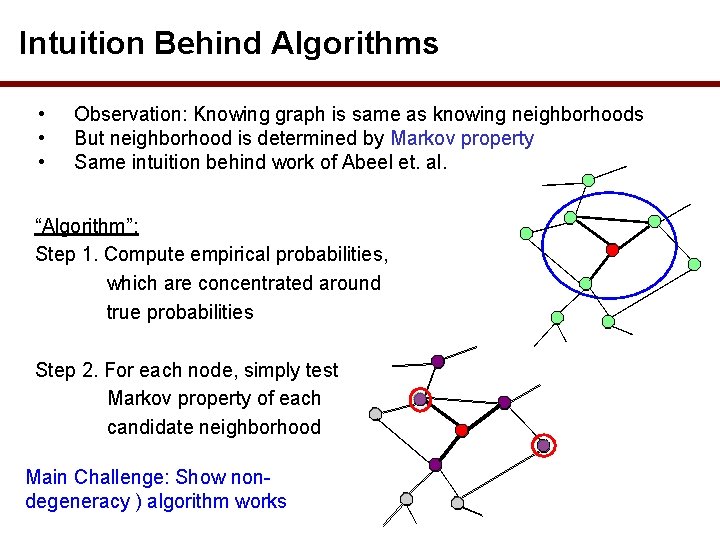

Example 2: Reconstruction of MRF with Hidden nodes • In many applications only some of the nodes can be observed ( 1 )( ) (1, 3)( , 1, 2 visible nodes W V , ) Markov random field over visible nodes is 2 ) , ( 3) W = ( w : w W) (2, ( • Is reconstruction still possible? 3, 4 ( ) 3 , ) 4 • What does “reconstruction” even mean?

Reconstruction versus distinguishing • Easy to construct many models that lead to the same distribution (statistical unidentifiable) • Assuming “this is not a problem” are there computational obstacles for reconstruction? • In particular: how hard is it to distinguish statistically different models?

Distinguishing problems • Let M 1, M 2 be two models with hidden nodes PROBLEM 1 • Can you tell if M 1 and M 2 are statistically close or far apart (on the visible nodes)? PROBLEM 2 • Assuming M 1 and M 2 are statistically far apart and given access to samples from one of them, can you tell where the samples came from?

Hardness result with hidden nodes • In Bogdanov-M-Vadhan-08: Problems 1 and 2 are intractable (in the worst case) unless NP = RP • Conversely, if NP = RP then distinguishing (and other forms of reconstruction) are achievable

A possible objection • The “hard” models M 1, M 2 describe distributions that are not efficiently samplable • But if nature is efficient, we never need to worry about such distributions!

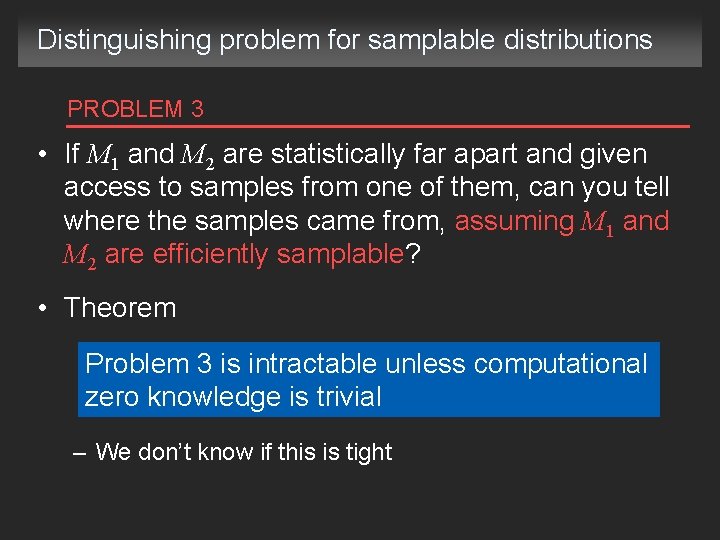

Distinguishing problem for samplable distributions PROBLEM 3 • If M 1 and M 2 are statistically far apart and given access to samples from one of them, can you tell where the samples came from, assuming M 1 and M 2 are efficiently samplable? • Theorem Problem 3 is intractable unless computational zero knowledge is trivial – We don’t know if this is tight

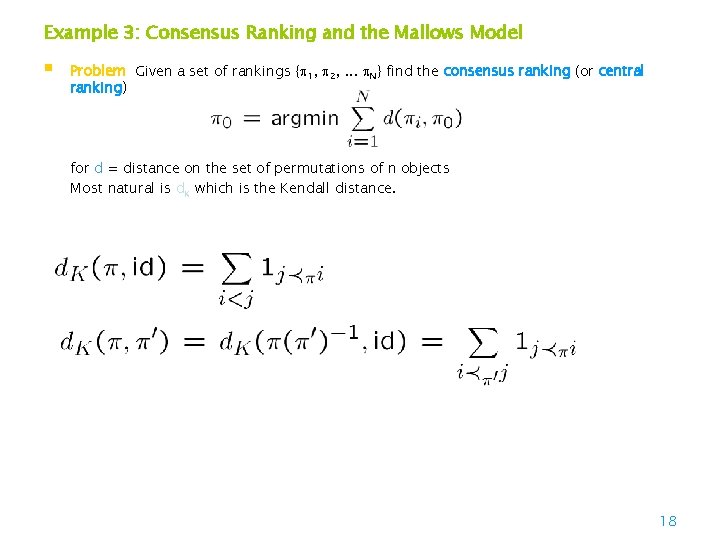

Example 3: Consensus Ranking and the Mallows Model § Problem Given a set of rankings { 1, 2, . . . N} find the consensus ranking (or central ranking) for d = distance on the set of permutations of n objects Most natural is d. K which is the Kendall distance. 18

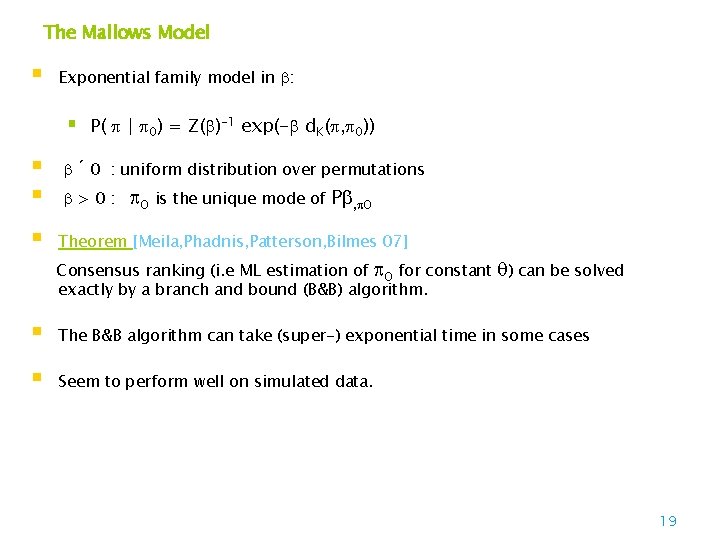

The Mallows Model § Exponential family model in : § P( | 0) = Z( )-1 exp(- d. K( , 0)) § § § ´ 0 : uniform distribution over permutations >0: 0 is the unique mode of P , 0 Theorem [Meila, Phadnis, Patterson, Bilmes 07] Consensus ranking (i. e ML estimation of 0 for constant ) can be solved exactly by a branch and bound (B&B) algorithm. § The B&B algorithm can take (super-) exponential time in some cases § Seem to perform well on simulated data. 19

![Related work ML estimation [Fligner&Verducci 86] introduce generalized Mallows model, ML estimation [Fligner&Verducci 88] Related work ML estimation [Fligner&Verducci 86] introduce generalized Mallows model, ML estimation [Fligner&Verducci 88]](http://slidetodoc.com/presentation_image_h2/0ddc1cd05927f82f6b39d9e0e636903c/image-20.jpg)

Related work ML estimation [Fligner&Verducci 86] introduce generalized Mallows model, ML estimation [Fligner&Verducci 88] (FV) heuristic for 0 estimation § § Consensus ranking [Cohen, Schapire, Singer 99] Greedy algorithm (CSS) § + improvement by finding strongly connected components § + missing data (not all i rank all n items) [Ailon, Newman, Charikar 05] Randomized algorithm § guaranteed 11/7 factor approximation (ANC) § § § [Mathieu, 07] (1+ ) approximation, time O(n 6/ +2^2 O(1/ )) [Davenport, Kalagnanan 03] Heuristics based on edge-disjoint cycles [Conitzer, D, K 05] Exact algorithm based on integer programming, better bounds 20

![Efficient Sorting of the Mallow’s model • [Braverman-Mossel-09]: • Given r independent samples from Efficient Sorting of the Mallow’s model • [Braverman-Mossel-09]: • Given r independent samples from](http://slidetodoc.com/presentation_image_h2/0ddc1cd05927f82f6b39d9e0e636903c/image-21.jpg)

Efficient Sorting of the Mallow’s model • [Braverman-Mossel-09]: • Given r independent samples from the Mallows Model, find ML solution exactly! in time nb, where • b = 1 + O(( r)-1), • where r is the number of samples • with high probability (say ¸ 1 -n-100)

![Sorting the Mallow’s Model • [Braverman-M-09]: Optimal order can be found in polynomial time Sorting the Mallow’s Model • [Braverman-M-09]: Optimal order can be found in polynomial time](http://slidetodoc.com/presentation_image_h2/0ddc1cd05927f82f6b39d9e0e636903c/image-22.jpg)

Sorting the Mallow’s Model • [Braverman-M-09]: Optimal order can be found in polynomial time and O(n log n) queries. • Proof Ingredient 1: “statistical properties” of generated permutations i in terms of the original order 0 : • With high probability: x | i(x)- (x)| = O(n), max | i(x)- (x)| = O(log n) • Additional ingredient: A dynamic programming algorithm to find given a starting point where each elements is at most k away with running time O(n 26 k)

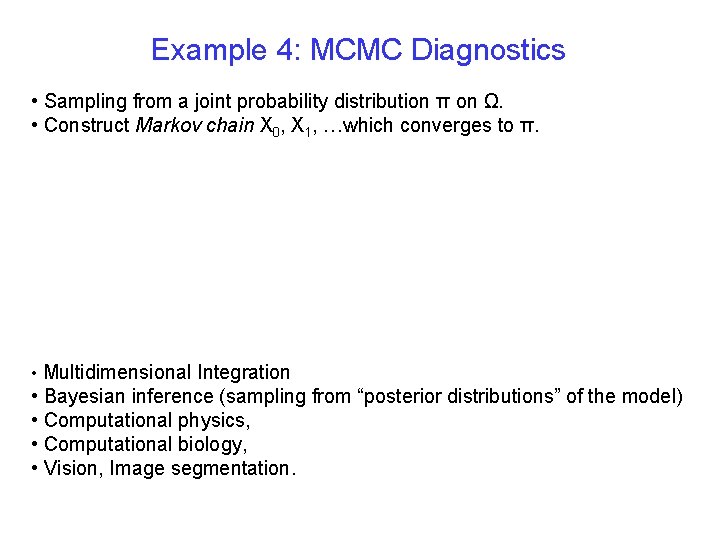

Example 4: MCMC Diagnostics • Sampling from a joint probability distribution π on Ω. • Construct Markov chain X 0, X 1, …which converges to π. • Multidimensional Integration • Bayesian inference (sampling from “posterior distributions” of the model) • Computational physics, • Computational biology, • Vision, Image segmentation.

Convergence Diagnostics • A method to determine t such that Pt(X 0, ) is “sufficiently close” to π. - MCMC in Practice, Gilks, Richardson & Spiegelhalter Are we there yet? •

Convergence Diagnostics in the Literature Visual inspection of functions of samples. - MCMC in Practice, Gilks, Richardson & Spiegelhalter [Raftery-Lewis] Bounding the variance of estimates of quantiles of functions of parameters. [Gelman-Rubin] Convergence achieved if the separate empirical distributions are approximately the same as the combined distribution. [Cowles-Carlin] • Survey of 13 diagnostics designed to identify specific types of convergence failure. • Recommend combinations of strategies – running parallel chains, computing autocorrelations.

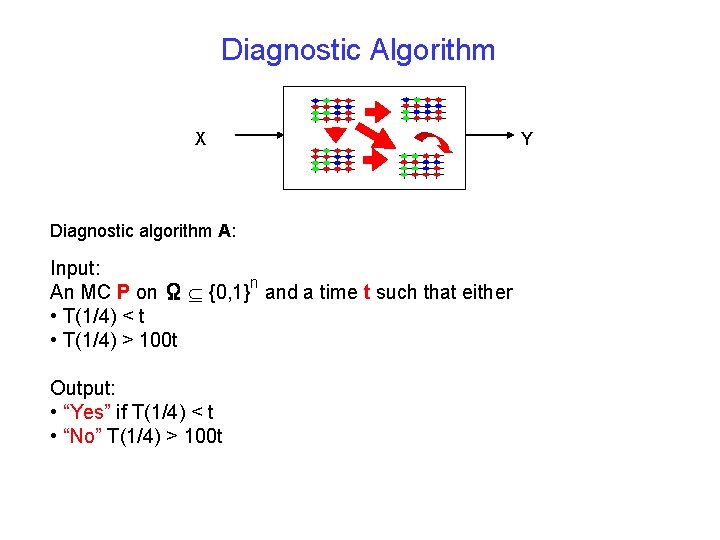

Diagnostic Algorithm X Diagnostic algorithm A: Input: n An MC P on Ω {0, 1} and a time t such that either • T(1/4) < t • T(1/4) > 100 t Output: • “Yes” if T(1/4) < t • “No” T(1/4) > 100 t Y

![Results - The General Case Theorem [Bhatnagar-Bogdanov-M-09]: Given n 1. A MC P on Results - The General Case Theorem [Bhatnagar-Bogdanov-M-09]: Given n 1. A MC P on](http://slidetodoc.com/presentation_image_h2/0ddc1cd05927f82f6b39d9e0e636903c/image-27.jpg)

Results - The General Case Theorem [Bhatnagar-Bogdanov-M-09]: Given n 1. A MC P on Ω {0, 1} 2. A time t such that either • T(1/4) < t • T(1/4) > 100 t It is a PSPACE-complete problem to distinguish the two.

![PSPACE complete problems [Crasmaru-Tromp] Ladder problems in GO – does black have a winning PSPACE complete problems [Crasmaru-Tromp] Ladder problems in GO – does black have a winning](http://slidetodoc.com/presentation_image_h2/0ddc1cd05927f82f6b39d9e0e636903c/image-28.jpg)

PSPACE complete problems [Crasmaru-Tromp] Ladder problems in GO – does black have a winning strategy? PSPACE hard problems are “much harder” the NP-hard problems.

![Results - Polynomial Mixing Theorem 2 [Bhatnagar-Bogdanov-M-09]: Given n 1. A MC P on Results - Polynomial Mixing Theorem 2 [Bhatnagar-Bogdanov-M-09]: Given n 1. A MC P on](http://slidetodoc.com/presentation_image_h2/0ddc1cd05927f82f6b39d9e0e636903c/image-29.jpg)

Results - Polynomial Mixing Theorem 2 [Bhatnagar-Bogdanov-M-09]: Given n 1. A MC P on Ω {0, 1} with a promise that T(1/4) < poly(n) 2. A time t such that either • T(1/4) < t • T(1/4) > 100 t • It is as hard as any co. NP problem to distinguish the two. (x 0+x 3+x 7)^(x 2+x 7+xn) …^(…) T(1/4) < t (x 6+x 3+x 2)^(x 0+x 7+x 1) …^(…) T(1/4) > 100 t

![Results - Mixing from a Given State Theorem 3 [Bhatnagar-Bogdanov-M-09]: : Given n 1. Results - Mixing from a Given State Theorem 3 [Bhatnagar-Bogdanov-M-09]: : Given n 1.](http://slidetodoc.com/presentation_image_h2/0ddc1cd05927f82f6b39d9e0e636903c/image-30.jpg)

Results - Mixing from a Given State Theorem 3 [Bhatnagar-Bogdanov-M-09]: : Given n 1. A MC P on Ω {0, 1} such that T(1/4) < poly(n) 2. A starting state X 0, 3. A time t such that either • TX 0(1/4) < t • TX 0(1/4) > 100 t A diagnostic algorithm can be used to distinguish whether two efficiently sampleable distributions are close or far in statistical distance. [Sahai-Vadhan] “Statistical Zero Knowledge” - complete … … r 1 rn r’ 1 r’n C 1 a 1 … C 2 am a 1 … am

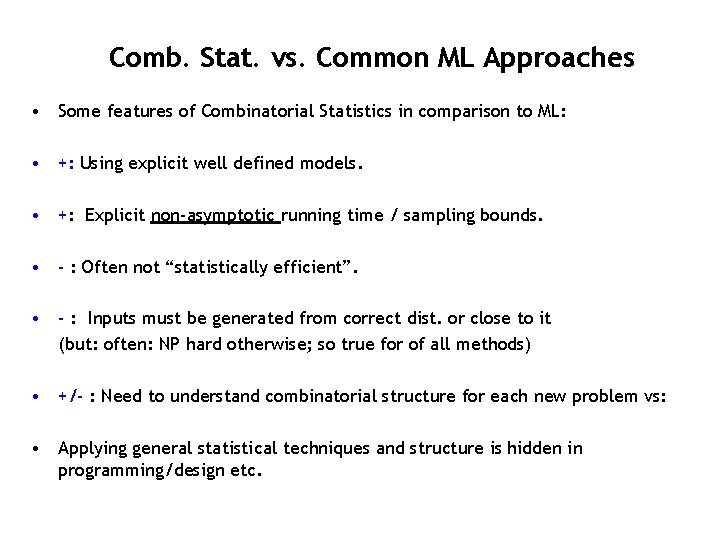

Comb. Stat. vs. Common ML Approaches • Some features of Combinatorial Statistics in comparison to ML: • +: Using explicit well defined models. • +: Explicit non-asymptotic running time / sampling bounds. • - : Often not “statistically efficient”. • - : Inputs must be generated from correct dist. or close to it (but: often: NP hard otherwise; so true for of all methods) • +/- : Need to understand combinatorial structure for each new problem vs: • Applying general statistical techniques and structure is hidden in programming/design etc.

![Conclusions and Questions • [Cowles-Carlin] Survey of 13 diagnostics designed to identify specific types Conclusions and Questions • [Cowles-Carlin] Survey of 13 diagnostics designed to identify specific types](http://slidetodoc.com/presentation_image_h2/0ddc1cd05927f82f6b39d9e0e636903c/image-32.jpg)

Conclusions and Questions • [Cowles-Carlin] Survey of 13 diagnostics designed to identify specific types of convergence failure. Each can fail to detect the type of convergence it was designed for. • [Arora-Barak] Efficient algorithms are not believed to exist for PSPACEcomplete, co. NP-complete or SZK-complete problems. • Diagnostic algorithms do not exist for large classes of MCMC algorithms, like Gibbs samplers unless there are efficient algorithms for PSPACE or co. NP or SZK. • For what classes of samplers are convergence diagnostics possible? • Hardness of testing convergence for more restricted classes of samplers.

Collaborators Nayantara Bhatnagar, Berkeley Allan Sly Berkeley Guy Bresler Berkeley Andrej Bogdanov: Hong-Kong Mark Braverman Microsoft Salil Vadhan: Harvard

Thanks !!

- Slides: 34