SOME BASIC MPI ROUTINES With formal datatypes specified


Preliminaries

![Initialize MPI environment](http://slidetodoc.com/presentation_image_h2/faef7085f6c0aa40338550925176561a/image-3.jpg)

Initialize MPI environment

int MPI_Init(int *argc, char **argv[])

Parameters:
- *argc: argument count, passed from main()
- **argv[]: argument vector, passed from main()

Terminate MPI execution environment

int MPI_Finalize(void)

Parameters: None.

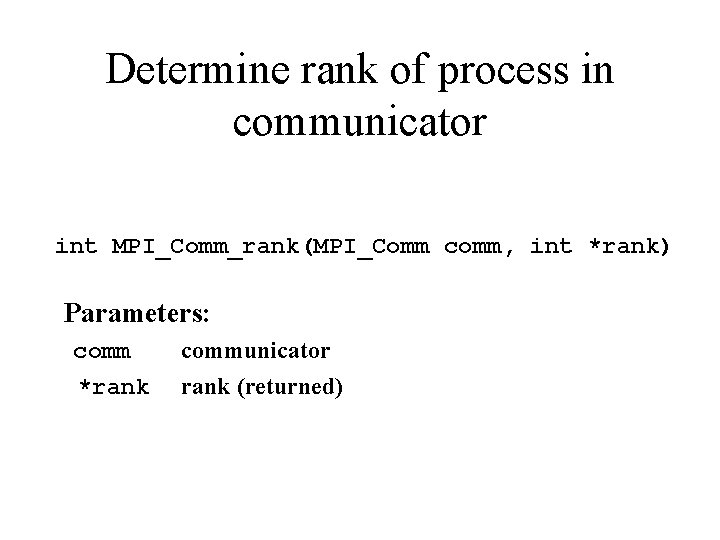

Determine rank of process in communicator

int MPI_Comm_rank(MPI_Comm comm, int *rank)

Parameters:
- comm: communicator
- *rank: rank of the calling process in comm (returned)

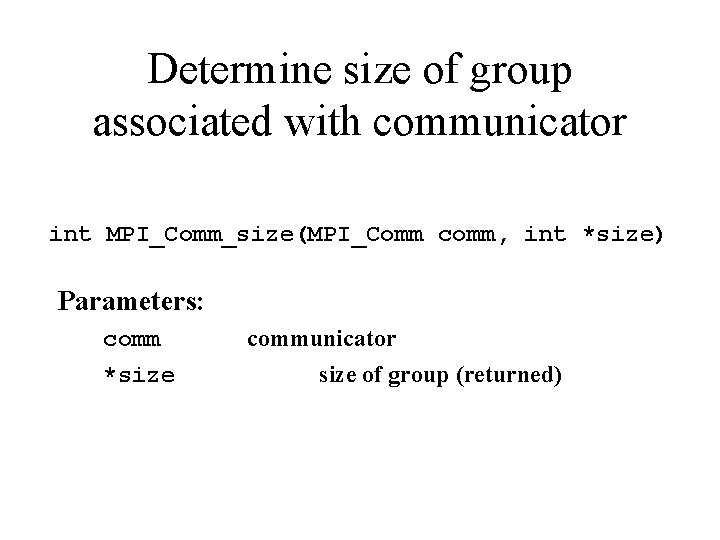

Determine size of group associated with communicator

int MPI_Comm_size(MPI_Comm comm, int *size)

Parameters:
- comm: communicator
- *size: size of group (returned)

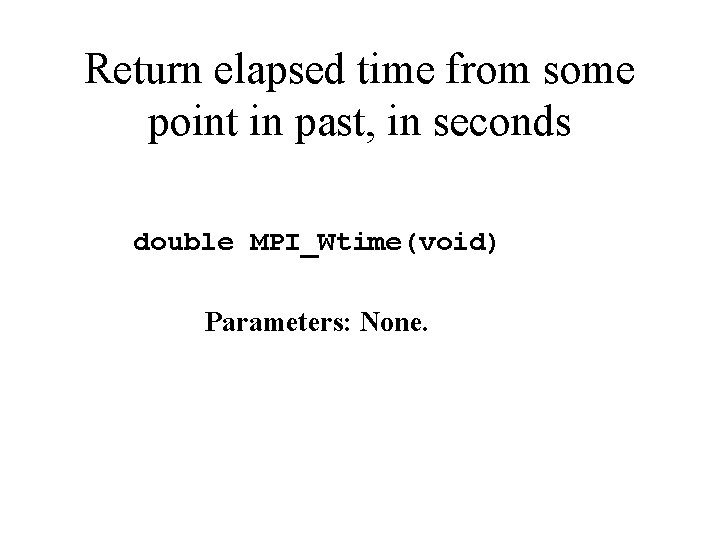

Return elapsed time, in seconds, from some point in the past

double MPI_Wtime(void)

Parameters: None.

Basic Blocking Point-to-Point Message Passing

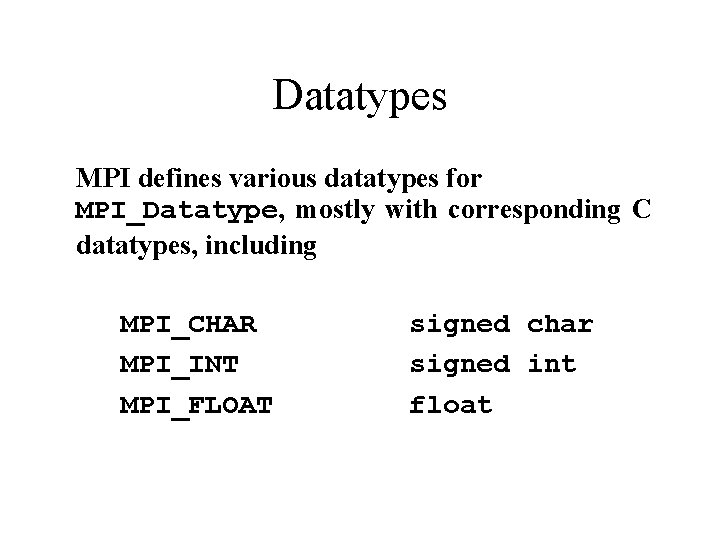

Datatypes

MPI defines various datatypes for MPI_Datatype, most with corresponding C datatypes, including:
- MPI_CHAR: signed char
- MPI_INT: signed int
- MPI_FLOAT: float

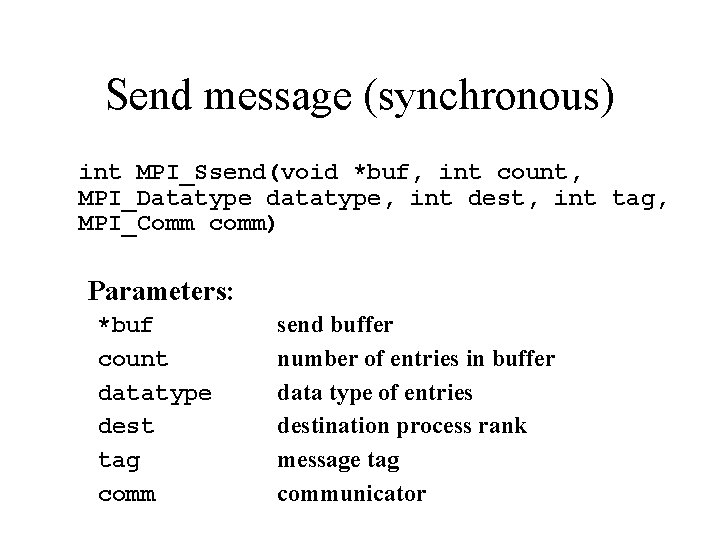

Send message (synchronous: does not complete until the matching receive has started)

int MPI_Ssend(void *buf, int count, MPI_Datatype datatype, int dest, int tag, MPI_Comm comm)

Parameters:
- *buf: send buffer
- count: number of entries in buffer
- datatype: data type of entries
- dest: destination process rank
- tag: message tag
- comm: communicator

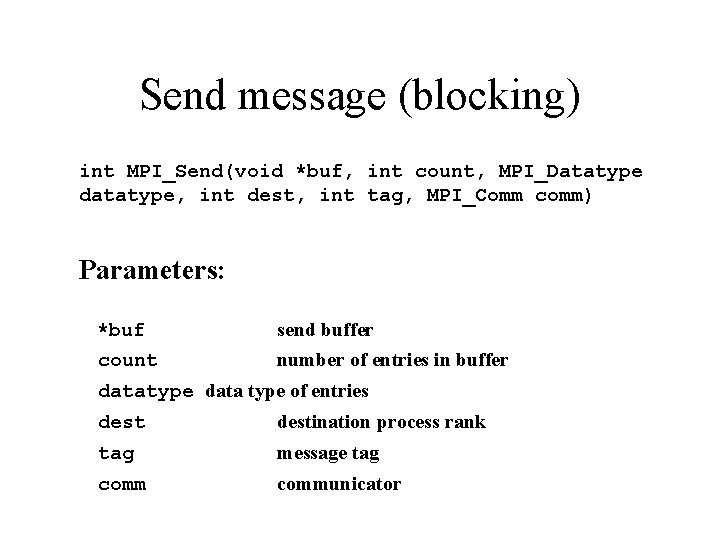

Send message (blocking: returns once buf can safely be reused, which may happen before the message is received)

int MPI_Send(void *buf, int count, MPI_Datatype datatype, int dest, int tag, MPI_Comm comm)

Parameters:
- *buf: send buffer
- count: number of entries in buffer
- datatype: data type of entries
- dest: destination process rank
- tag: message tag
- comm: communicator

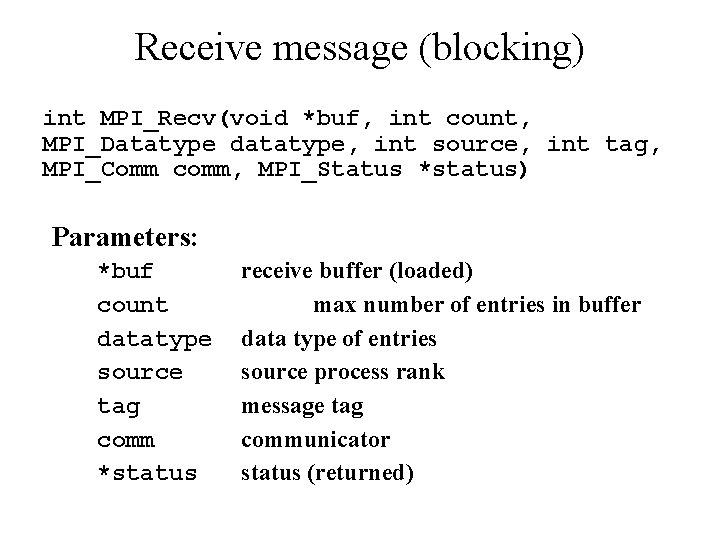

Receive message (blocking)

int MPI_Recv(void *buf, int count, MPI_Datatype datatype, int source, int tag, MPI_Comm comm, MPI_Status *status)

Parameters:
- *buf: receive buffer (loaded)
- count: max number of entries in buffer
- datatype: data type of entries
- source: source process rank
- tag: message tag
- comm: communicator
- *status: status (returned)

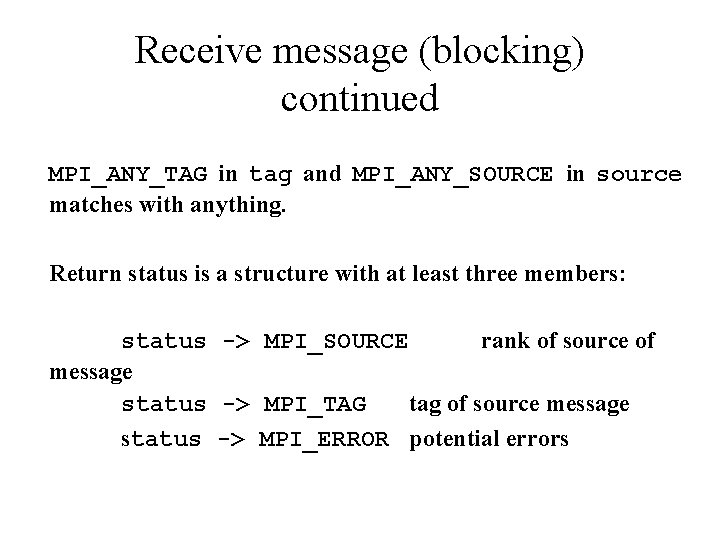

Receive message (blocking), continued

MPI_ANY_TAG in tag matches any tag, and MPI_ANY_SOURCE in source matches any source. The returned status is a structure with at least three members:
- status->MPI_SOURCE: rank of source of message
- status->MPI_TAG: tag of message
- status->MPI_ERROR: error code

Basic Group Routines

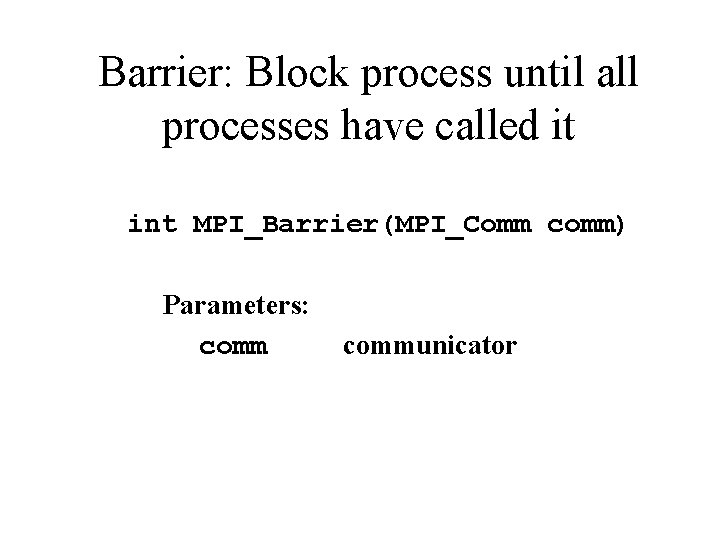

Barrier: block each process until all processes in the communicator have called it

int MPI_Barrier(MPI_Comm comm)

Parameters:
- comm: communicator

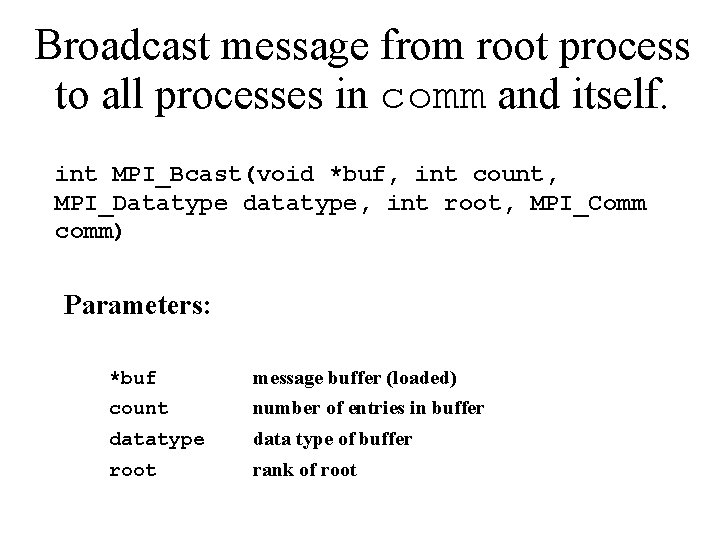

Broadcast message from root process to all processes in comm, including itself.

int MPI_Bcast(void *buf, int count, MPI_Datatype datatype, int root, MPI_Comm comm)

Parameters:
- *buf: message buffer (loaded)
- count: number of entries in buffer
- datatype: data type of buffer entries
- root: rank of root
- comm: communicator

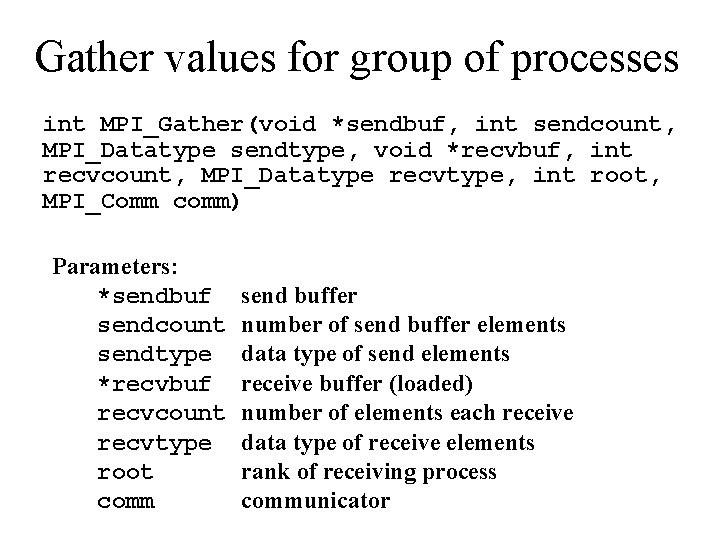

Gather values from a group of processes

int MPI_Gather(void *sendbuf, int sendcount, MPI_Datatype sendtype, void *recvbuf, int recvcount, MPI_Datatype recvtype, int root, MPI_Comm comm)

Parameters:
- *sendbuf: send buffer
- sendcount: number of send buffer elements
- sendtype: data type of send elements
- *recvbuf: receive buffer (loaded; significant only at root)
- recvcount: number of elements received from each process
- recvtype: data type of receive elements
- root: rank of receiving process
- comm: communicator

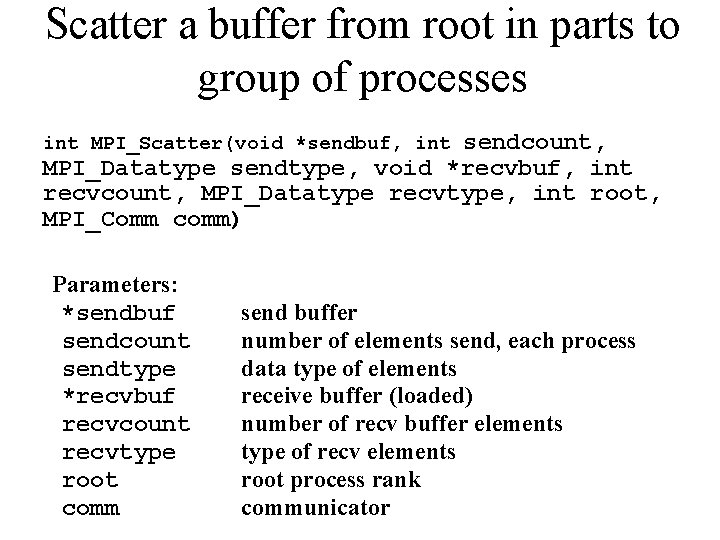

Scatter a buffer from root in parts to a group of processes

int MPI_Scatter(void *sendbuf, int sendcount, MPI_Datatype sendtype, void *recvbuf, int recvcount, MPI_Datatype recvtype, int root, MPI_Comm comm)

Parameters:
- *sendbuf: send buffer
- sendcount: number of elements sent to each process
- sendtype: data type of elements
- *recvbuf: receive buffer (loaded)
- recvcount: number of recv buffer elements
- recvtype: type of recv elements
- root: root process rank
- comm: communicator

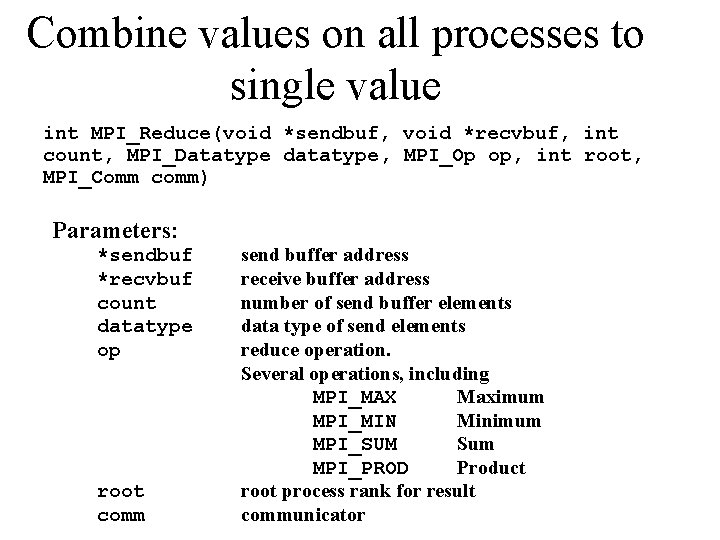

Combine values on all processes into a single value at the root

int MPI_Reduce(void *sendbuf, void *recvbuf, int count, MPI_Datatype datatype, MPI_Op op, int root, MPI_Comm comm)

Parameters:
- *sendbuf: send buffer address
- *recvbuf: receive buffer address
- count: number of send buffer elements
- datatype: data type of send elements
- op: reduce operation. Several operations, including:
  - MPI_MAX: maximum
  - MPI_MIN: minimum
  - MPI_SUM: sum
  - MPI_PROD: product
- root: process rank for result
- comm: communicator

