
Software Testing

Michael P. Rupen
EVLA Project Scientist for Software

EVLA Advisory Committee Meeting, May 8-9, 2006

Connecting Scientists and Software
• Software is both complex and important
  – defines the telescope (e.g., achievable rms noise)
  – programmers are professionals
  – cannot assume they have astronomical knowledge or an astronomer’s point of view (e.g., declination -00:02)
  – still learning (e.g., user interface; implications of dynamic range goals)

Connecting Scientists and Software
  – organizational split: much higher walls between scientists and implementation
      • sheer size of project
      • accounting requirements
      • history of aips++
⇒ requires close connections between astronomers and programmers
  • at all levels, from overall priorities to tests of well-defined sections of code

Connecting Scientists and Software: EVLA Approach
• EVLA Proj. Sci. for SW: Rupen
  – responsible for requirements, timescales, project book, inter-project interactions, …
• EVLA SW Science Reqts. Committee
  – Rupen, Butler, Chandler, Clark, McKinnon, Myers, Owen, Perley (Brogan, Fomalont, Hibbard)
  – consultation for scientific requirements
  – source group for more targeted work

Connecting Scientists and Software: EVLA Approach
• Subsystem Scientists
  – scientific guidance for individual subsystems
      • day-to-day contacts for programmers
      • interpret scientific requirements for programmers
      • oversee (and are heavily involved in) tests
  – heavily involved in user documentation
  – consult with other scientific staff
  – duties vary with subsystem development

Connecting Scientists and Software: EVLA Approach
• Subsystem Scientists (cont’d)
  – Currently:
      • Proposal Submission Tool: Wrobel
      • Obs. Prep: Chandler (“daughter of JOBSERVE”)
      • WIDAR: Rupen/Romney
      • Post-processing: Rupen/Owen
  – TBD: Scheduler, Obs. Status Screens, Archive

Connecting Scientists and Software: EVLA Approach
• Less formal, more direct contacts
  – e.g., Executor (reference pointing priorities and testing)
  – e.g., Greisen developing plotting programs based on Perley’s hardware testing
• Testers
  – on-going tests: small in-house group (fast turn-around, very focused)
  – pre-release tests: larger group of staff across sites and projects
  – external tests: staff + outside users

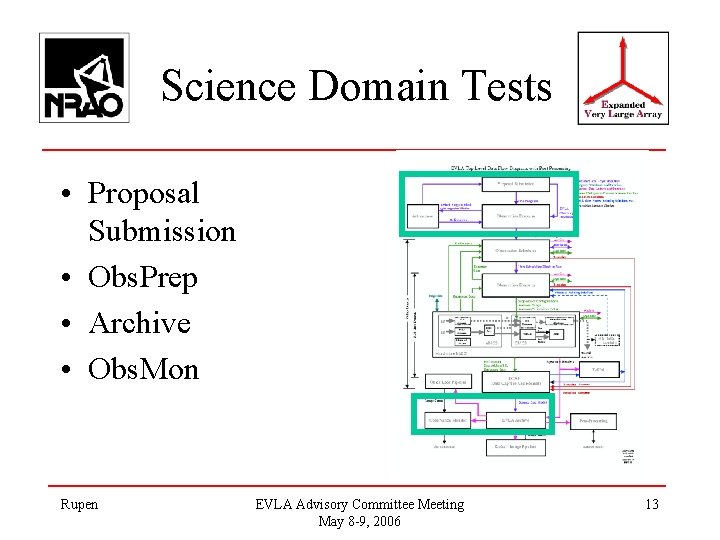

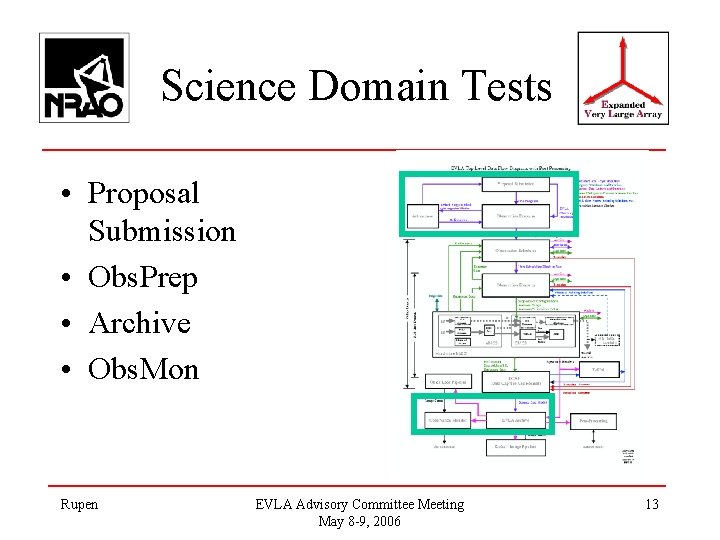

Science Domain Tests
• Proposal Submission
• Obs. Prep
• Archive
• Obs. Mon

Science Domain Tests: Prop. Submission Tool
• Testing timetable for this release:
  – Feb 10: post-mortem based on user comments
  – Mar 1: internal test-I (van Moorsel, Butler, Rupen)
  – Mar 20: internal test-II (+Frail)
  – Apr 18: NRAO-wide test (14, incl. CV/GB)
  – May 3: internal test-III
  – May 7: public release

Science Domain Tests: Prop. Submission Tool
  – rest of May: fix up & test post-submission tools
  – early June: gather user comments
  – June 7: post-mortem, set next goals

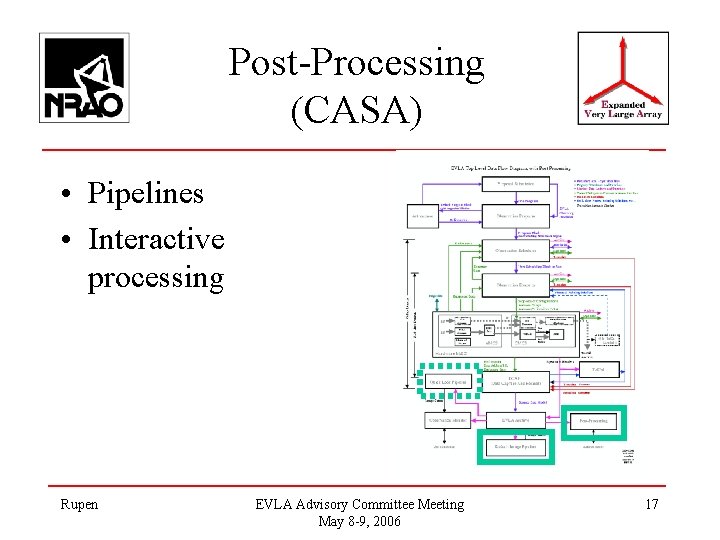

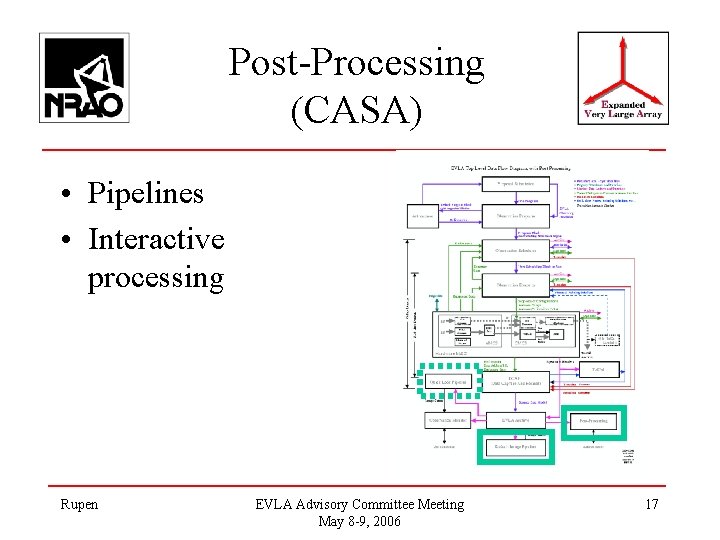

Post-Processing (CASA)
• Pipelines
• Interactive processing

Post-Processing: On-going Interactions
• NAUG/NAWG
  – each meets once a month
  – track and test current progress & algorithmic developments
  – Scistaff members: Myers, Brisken, Brogan, Chandler, Fomalont, Greisen, Hibbard, Owen, Rupen, Shepherd, Whysong

Post-Processing: On-going Interactions
• ALMA formal tests
  – ALMA testers themselves often have VLA experience (e.g., Testi)
  – ALMA 2006.01-4 included Brisken, Owen, Rupen; first look at Python
  – EVLA goals: gain experience; learn how ALMA tests work; influence the interface

Post-Processing: On-going Interactions
• User Interface Working Group (Apr 06)
  – goal: refine requirements for the user interface
  – Myers, Brogan, Brisken, Greisen, Hibbard, Owen, Rupen, Shepherd, Whysong
  – draft results on the Web

EVLA CASA Tests: Philosophy
• Planned opportunities for collaboration
  – testing for mutual benefit, not an opportunity to fail
      • important to repair the aips++ programmer/scientist rift
  – allows us to reserve the time of very useful, very busy people (Brisken, Owen, …)
• Set priorities
• Check current approaches and progress

EVLA CASA Tests: Philosophy
• Provide real deadlines for moving software over to the users
• Prepare for scientific and hardware deadlines (e.g., WIDAR)
⇒ joint decisions on topics
⇒ feedback going both ways (e.g., which requirements are most useful [cf. DR])

EVLA CASA Tests: Philosophy
⇒ less formal than ALMA
  • avoid duplication
  • fewer resources
  • EVLA-specific work is often either research or requires much programmer/scientist interaction (e.g., how to deal with RFI)
  • the learning curve for CASA is still steep; ALMA’s formal tests involve, e.g., rewriting the cookbook, which is likely beyond our resources

EVLA CASA Tests: Current Status
• May-July 2005 test: w-projection
  – first step in the Big Imaging Problem
  – generally good performance (speed, robustness) but many ease-of-use issues
  – Myers, Brisken, Butler, Fomalont, van Moorsel, Owen
• Currently concentrating on
  – long lead-time items (e.g., high-DR imaging)
  – hardware-driven items (e.g., prototype correlator)
  – shift to a user-oriented system

EVLA CASA Tests: Current Status
• Feb/Mar 2006 ALMA test
  – first with Python interface
  – in-line help
• March 2006 User Interface Working Group
  – tasks
  – documentation
  – scripting vs. “ordinary” use

EVLA CASA Tests: Fall 2006
• User interface
  – revised UI (tasks, etc.)
  – revamped module organization
  – new documentation system
• Reading and writing SDM
  – ASDM → CASA MS → UVFITS → AIPS
• Basic calibration, incl. calibration of weights

EVLA CASA Tests: Fall 2006
• Data examination
  – basic plots (mostly a survey of existing code)
  – first look at stand-alone viewer (qtview)
• Imaging
  – wide-field imaging (w-term, multiple fields)
  – full-polarization imaging
  – pointing self-cal “for pundits”
• Some of this may be NAUG-tested instead

EVLA CASA Tests: 2007
• Test scheduled for early 2007
  – probably focused on calibration and data examination
• Driven by the need to support initial basic modes (e.g., big spectral-line cubes) and to learn from the new hardware (e.g., WIDAR + wide-band feeds)
• Currently working on associated requirements

Involving Outside Users
• Science domain
  – fast turn-around and active discussion make this a substantial commitment
  – reluctance to show undocumented, unfinished products
  – current involvement is mostly at the end of the testing process

Involving Outside Users
• CASA
  – focus has been on functionality, reliability, and speed
      • major advances, but documentation & UI have lagged
  – learning CASA for a test is a little painful, esp. since the UI is evolving rapidly

Involving Outside Users
  – algorithmic development is special:
      • many public documents & talks
      • interactions with other developers
      • student involvement: Urvashi; two from Baum
  – focus is shifting to user interactions
      • as this happens, we will involve more non-NRAO astronomers