Software Testing and Reliability Preliminaries Aditya P Mathur

Software Testing and Reliability: Preliminaries
Aditya P. Mathur, Purdue University
August 12-16 @ Guidant Corporation, Minneapolis/St Paul, MN
Graduate Assistants: Ramkumar Natarajan, Baskar Sridharan
Last update: August 3, 2002
Software Testing and Reliability, Aditya P. Mathur, 2002

Preliminaries: Learning Objectives
- What is testing? How does it differ from verification?
- How and why does testing improve our confidence in program correctness?
- What is coverage and what role does it play in testing?
- What are the different types of testing?
- What are the formalisms for specification and design used as sources for test and oracle generation?

Testing: Preliminaries
- What is testing?
  - The act of checking if a part or a product performs as expected.
- Why test?
  - Gain confidence in the correctness of a part or a product.
  - Check if there are any errors in a part or a product.

What to test?
- During the software lifecycle several products are generated.
- Examples:
  - Requirements document
  - Design document
  - Software subsystems
  - Software system

Test all!
- Each of these products needs testing.
- Methods for testing various products are different.
- Examples:
  - Test a requirements document using scenario construction and simulation.
  - Test a design document using simulation.
  - Test a subsystem using functional testing.

What is our focus?
- We focus on testing programs.
- Programs may be subsystems or complete systems.
- These are written in a formal programming language.
- There is a large collection of techniques and tools to test programs.

A few basic terms
- Program: A collection of functions, as in C, or a collection of classes, as in Java.
- Specification: Description of requirements for a program. This might be formal or informal.

A few basic terms (continued)
- Test case or test input: A set of values of input variables of a program. Values of environment variables are also included.
- Test set: Set of test inputs.
- Program execution: Execution of a program on a test input.

A few basic terms (continued)
- Oracle: A function that determines whether or not the results of executing a program under test are as per the program’s specifications.

Correctness
- Let P be a program (say, an integer sort program).
- Let S denote the specification for P.
- For sort let S be:

Sample Specification
- P takes as input an integer N>0 and a sequence of N integers called elements of the sequence.
- Let K denote any element of this sequence, 0 ≤ K ≤ e-1 for some e.
- P sorts the input sequence in descending order and prints the sorted sequence.

Correctness again
- P is considered correct with respect to a specification S if and only if, for each valid input, the output of P is in accordance with the specification S.
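
As a rough illustration of an oracle for the sort example, the following Python sketch checks one output against the sample specification. The names `sort_descending` and `oracle` are hypothetical stand-ins introduced here, not part of the original material, and the program under test is assumed to return its result rather than print it.

```python
from collections import Counter

def sort_descending(seq):
    """Hypothetical program under test P: returns seq sorted in descending order."""
    return sorted(seq, reverse=True)

def oracle(test_input, output):
    """Return True if output agrees with the sample specification for test_input."""
    # The output must contain exactly the elements of the input...
    same_elements = Counter(test_input) == Counter(output)
    # ...and they must appear in descending order.
    descending = all(output[i] >= output[i + 1] for i in range(len(output) - 1))
    return same_elements and descending

# A small test set: each test input is one sequence.
test_set = [[], [0], [2, 1], [1, 2]]
verdicts = [oracle(t, sort_descending(t)) for t in test_set]
```

Passing the oracle on every input in `test_set` raises confidence but does not by itself establish correctness, which is defined over every valid input.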

Errors, defects, faults
- Error: A mistake made by a programmer. Example: Misunderstood the requirements.
- Defect/fault: Manifestation of an error in a program. Example:
  - Incorrect code: if (a<b) {foo(a, b); }
  - Correct code: if (a>b) {foo(a, b); }

Failure
- Incorrect program behavior due to a fault in the program.
- Failure can be determined only with respect to a set of requirement specifications.
- A necessary condition for a failure to occur is that execution of the program forces the erroneous portion of the program to be executed. What is the sufficiency condition?
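
The sketch below is one way to explore the question, using the a<b versus a>b fault as an assumed example. The functions `foo`, `correct`, `faulty`, and `fails` are hypothetical. Every input executes the faulty predicate, yet not every input produces a failure, which suggests that executing the fault is necessary but not sufficient.

```python
def foo(a, b):
    """Hypothetical stand-in for the call guarded by the predicate."""
    return "foo called"

def correct(a, b):
    if a > b:              # correct predicate
        return foo(a, b)
    return "foo skipped"

def faulty(a, b):
    if a < b:              # fault: wrong relational operator
        return foo(a, b)
    return "foo skipped"

def fails(a, b):
    """A failure is observed when the faulty output differs from the correct one."""
    return faulty(a, b) != correct(a, b)

# The faulty predicate is executed on all three inputs,
# but only the first two lead to an observable failure.
observed = {(1, 2): fails(1, 2), (2, 1): fails(2, 1), (3, 3): fails(3, 3)}
```

For the input (3, 3) both predicates evaluate to false, so the fault is executed without affecting the output.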

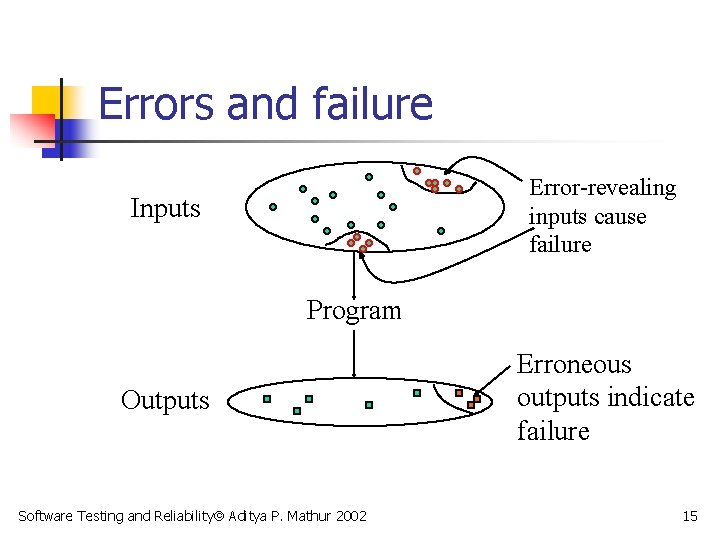

Errors and failure
- Figure: Inputs, Program, Outputs.
- Error-revealing inputs cause failure.
- Erroneous outputs indicate failure.

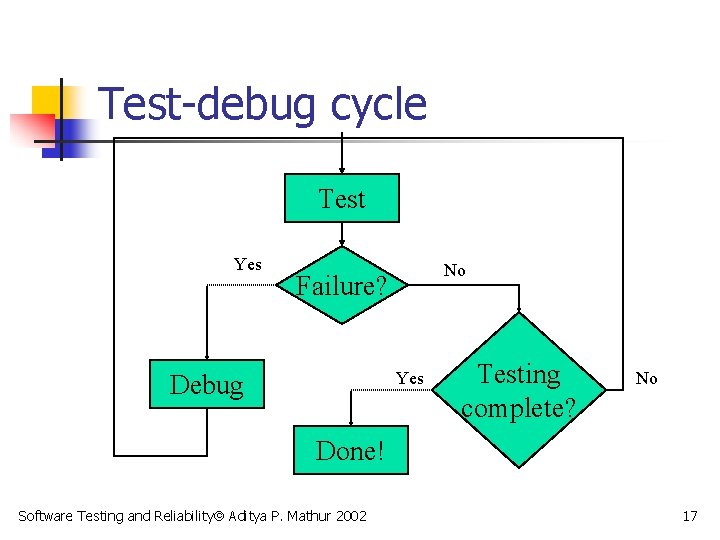

Debugging
- Suppose that a failure is detected during the testing of P.
- The process of finding and removing the cause of this failure is known as debugging.
- The word bug is slang for fault.
- Testing usually leads to debugging.
- Testing and debugging usually happen in a cycle.

Test-debug cycle
- Test. Failure? If yes, debug and test again.
- If no failure: Testing complete? If no, test again. If yes, done!

Testing and code inspection
- Code inspection is a technique whereby the source code is inspected for possible errors.
- Code inspection is generally considered complementary to testing. Neither is more important than the other!
- One is not likely to replace testing by code inspection or by verification.

Testing for correctness?
- Identify the input domain of P.
- Execute P against each element of the input domain.
- For each execution of P, check if P generates the correct output as per its specification S.

What is an input domain?
- The input domain of a program P is the set of all valid inputs that P can expect.
- The size of an input domain is the number of elements in it.
- An input domain could be finite or infinite.
- Finite input domains might be very large!

Identifying the input domain
- For the sort program: N: size of the sequence, K: each element of the sequence.
- Example: For N<3, e=3, some sequences in the input domain are:
  - [ ]: An empty sequence (N=0).
  - [0]: A sequence of size 1 (N=1).
  - [2 1]: A sequence of size 2 (N=2).

Size of an input domain
- Suppose that $0 \le N \le N_{\max}$ and that each element takes one of e distinct values.
- The size of the input domain is the number of all sequences of size 0, 1, 2, and so on.
- The size can be computed as: $\sum_{i=0}^{N_{\max}} e^{i}$

Testing for correctness? Sorry!
- To test for correctness P needs to be executed on all inputs.
- For our example, it would take an impractically long time to execute the program on all inputs, even on the most powerful computers of today!

Exhaustive Testing
- This form of testing is also known as exhaustive testing, as we execute P on all elements of the input domain.
- For most programs exhaustive testing is not feasible.
- What is the alternative?
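
For the tiny case N<3, e=3, exhaustive testing is still possible. The following Python sketch enumerates the whole input domain and runs a program on each element. It assumes that elements take the values 0 to e-1, and the helper names (`input_domain`, `domain_size`, `exhaustive_test`) are hypothetical.

```python
from itertools import product

def input_domain(max_len, e):
    """Yield every sequence of length 0..max_len over the values 0..e-1."""
    for length in range(max_len + 1):
        for seq in product(range(e), repeat=length):
            yield list(seq)

def domain_size(max_len, e):
    """Number of sequences of length 0..max_len with e possible values each."""
    return sum(e ** i for i in range(max_len + 1))

def sort_descending(seq):
    """Hypothetical program under test."""
    return sorted(seq, reverse=True)

def exhaustive_test(program, max_len, e):
    """Run program on every input and return the inputs on which it fails."""
    failures = []
    for seq in input_domain(max_len, e):
        expected = sorted(seq, reverse=True)  # reference result for integers
        if program(seq) != expected:
            failures.append(seq)
    return failures

all_inputs = list(input_domain(2, 3))
failures = exhaustive_test(sort_descending, 2, 3)
```

Because `domain_size` grows exponentially with the maximum length, the same loop quickly becomes impractical for realistic bounds.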

Verification
- Verification for correctness is different from testing for correctness.
- There are techniques for program verification which we will not discuss.

Partition Testing
- In this form of testing the input domain is partitioned into a finite number of sub-domains.
- P is then executed on a few elements of each sub-domain.
- Let us go back to the sort program.

Sub-domains
- Suppose that N<3 and e=3. The total size of the partitions is: $3^0 + 3^1 + 3^2 = 13$
- We can divide the input domain into three sub-domains, one per sequence length, of sizes 1, 3, and 9.

Fewer test inputs
- Now sort can be tested on one element selected from each sub-domain.
- For example, one set of three inputs is:
  - []: Empty sequence from sub-domain 1.
  - [2]: Sequence from sub-domain 2.
  - [2 0]: Sequence from sub-domain 3.
- We have thus reduced the number of inputs used for testing from 13 to 3!
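
A minimal sketch of this selection, assuming the sub-domains are formed by sequence length and elements take the values 0 to e-1. The helper names are hypothetical, and the choice of the last element of each sub-domain is arbitrary; any other element would do.

```python
from itertools import product

def sub_domains(max_len, e):
    """Partition the input domain by sequence length: one sub-domain per length."""
    return {
        length: [list(seq) for seq in product(range(e), repeat=length)]
        for length in range(max_len + 1)
    }

def pick_representatives(partition):
    """Select one test input per sub-domain (here simply its last element)."""
    return [members[-1] for members in partition.values()]

def program_under_test(seq):
    """Hypothetical sort program."""
    return sorted(seq, reverse=True)

def meets_spec(test_input, output):
    """Check that output is a descending rearrangement of test_input."""
    is_permutation = sorted(test_input) == sorted(output)
    is_descending = all(output[i] >= output[i + 1] for i in range(len(output) - 1))
    return is_permutation and is_descending

partition = sub_domains(2, 3)
tests = pick_representatives(partition)
results = [meets_spec(t, program_under_test(t)) for t in tests]
```

The reduction from 13 inputs to 3 rests on the assumption that all inputs in a sub-domain behave alike with respect to failures.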

Confidence in your program
- Confidence is a measure of one’s belief in the correctness of the program.
- Correctness is not measured in binary terms: a correct or an incorrect program.
- Instead, it is measured as the probability of correct operation of a program when used in various scenarios.

Measures of confidence
- Reliability: Probability that a program will function correctly in a given environment over a certain number of executions. We do not plan to cover reliability.
- Test completeness: The extent to which a program has been tested and errors found have been removed.

Example: Increase in Confidence
- We consider a non-programming example to illustrate what is meant by “increase in confidence.”
- Example: A rectangular field has been prepared to certain specifications.
  - One item in the specifications is: “There should be no stones remaining in the field.”

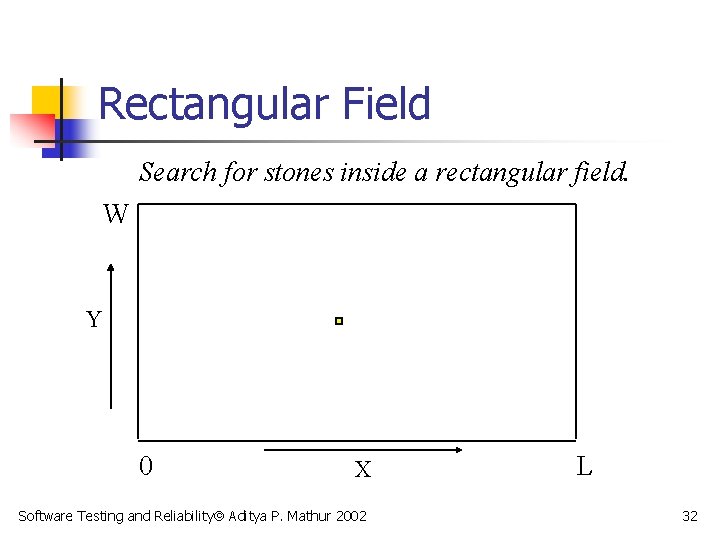

Rectangular Field
- Search for stones inside a rectangular field.
- Figure: a field of length L along the X axis and width W along the Y axis, with origin 0.

Testing the rectangular field
- The field has been prepared and our task is to test it to make sure that it has no stones.
- How should we organize our search?

Partitioning the field
- We divide the entire field into smaller search rectangles.
- The length and breadth of each search rectangle are one half those of the smallest stone.

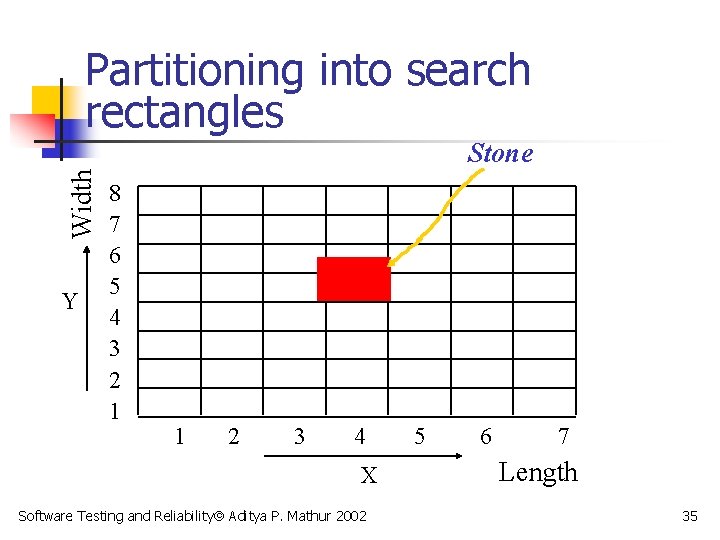

Partitioning into search rectangles
- Figure: the field divided into a grid of search rectangles, numbered 1 to 7 along the length (X) and 1 to 8 along the width (Y), with a stone marked.

Input domain
- The input domain is the set of all possible inputs to the search process. In our example this is the set of all points in the field. Thus, the input domain is infinite!
- To reduce the size of the input domain we partition the field into finite-size rectangles.

Rectangle size
- The length and breadth of each search rectangle are one half those of the smallest stone.
- This ensures that each stone covers at least one rectangle. (Is this always true?)

Constraints
- Testing must be completed in less than H hours.
- Any stone found during testing is removed.
- Upon completion of testing the probability of finding a stone must be less than p.

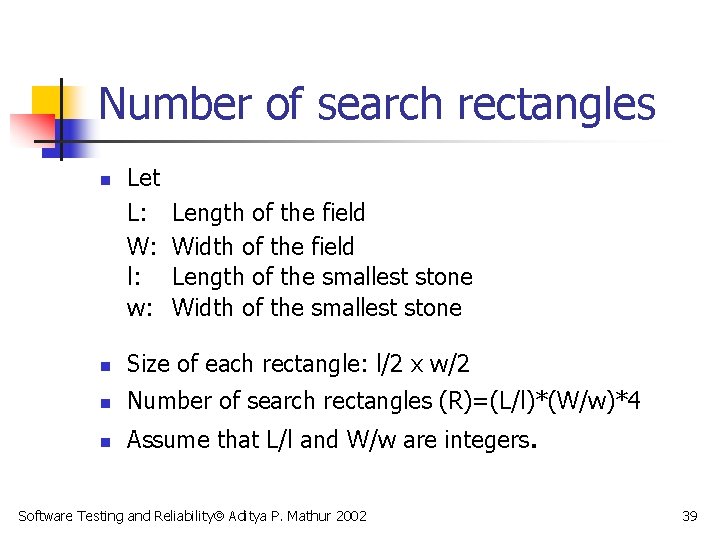

Number of search rectangles
- Let
  - L: Length of the field
  - W: Width of the field
  - l: Length of the smallest stone
  - w: Width of the smallest stone
- Size of each rectangle: l/2 x w/2
- Number of search rectangles (R) = (L/l)*(W/w)*4
- Assume that L/l and W/w are integers.

Time to test
- Let t be the time to look inside one search rectangle. No rectangle is examined more than once.
- Let o be the overhead in moving from one search rectangle to another.
- Total time to search (T) = R*t + (R-1)*o
- Testing with R rectangles is feasible only if T<H.
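
The two formulas, R = (L/l)*(W/w)*4 and T = R*t + (R-1)*o, can be sketched in Python as follows. The function names are hypothetical, the sample numbers are made up for illustration, and t, o, and H are assumed to be expressed in the same time unit.

```python
def num_search_rectangles(L, W, l, w):
    """R = (L/l) * (W/w) * 4, assuming L/l and W/w are integers."""
    if L % l != 0 or W % w != 0:
        raise ValueError("L/l and W/w are assumed to be integers")
    return (L // l) * (W // w) * 4

def total_search_time(R, t, o):
    """T = R*t + (R-1)*o for R >= 1: R scans and R-1 moves between rectangles."""
    return R * t + (R - 1) * o

def is_feasible(R, t, o, H):
    """Scanning all R rectangles is feasible only if T < H."""
    return total_search_time(R, t, o) < H

# Illustrative (made-up) numbers: a 100 x 50 field, smallest stone 2 x 1.
R = num_search_rectangles(100, 50, 2, 1)
T = total_search_time(R, t=2, o=1)
```

With these numbers a budget of H = 30000 time units would just suffice, while H = 29999 would not.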

Partitioning the input domain
- The partitioned input domain consists of all search rectangles (R).
- The number of partitions of the input domain is finite (=R).
- However, if T≥H then the number of partitions is too large and scanning each rectangle once is infeasible.
- What should we do in such a situation?

Option 1: Do a limited search
- Of the R search rectangles we examine only r, where r is such that (t*r+o*(r-1)) < H.
- This limited search will satisfy the time constraint.
- Will it satisfy the probability constraint?
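
One way to pick r is to take the largest value that still satisfies the time constraint. The sketch below rearranges t*r + o*(r-1) < H into r < (H+o)/(t+o). The function name is hypothetical, and t+o is assumed to be positive.

```python
def max_rectangles_within_budget(R, t, o, H):
    """Largest r <= R with t*r + o*(r-1) < H, or 0 if not even one scan fits."""
    # t*r + o*(r-1) < H  is equivalent to  r < (H + o) / (t + o)
    bound = -(-(H + o) // (t + o))   # ceiling of (H + o) / (t + o)
    r = int(bound) - 1               # largest integer strictly below the bound
    return max(0, min(R, r))

# Illustrative (made-up) numbers: t=2, o=1, H=10 allows three scans (2*3 + 1*2 = 8 < 10).
r = max_rectangles_within_budget(R=100, t=2, o=1, H=10)
```

Choosing the largest feasible r maximizes the number of rectangles examined within the time limit, but says nothing yet about the probability constraint.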

Distribution of stones
- To satisfy the probability constraint we must scan enough search rectangles so that the probability of finding a stone, after testing, remains less than p.
- Let us assume that there are $S_i$ stones remaining after i test cycles.

Distribution of stones
- There are $R_i$ search rectangles remaining after i test cycles.
- Stones are distributed uniformly over the field.
- An estimate of the probability of finding a stone in a randomly selected remaining search rectangle is $S_i / R_i$, the number of stones remaining divided by the number of search rectangles remaining.

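Probability constraint
- We will stop looking into rectangles if the estimated probability of finding a stone in a remaining rectangle falls below p: $S_i / R_i < p$, where $S_i$ stones and $R_i$ search rectangles remain after i test cycles.
- Can we really apply this test method in practice?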

Confidence
- The number of stones in the field is not known in advance.
- Hence we cannot compute the probability of finding a stone after a certain number of rectangles have been examined.
- The best we can do is to scan as many rectangles as we can and remove the stones found.

Coverage
- After a rectangle has been scanned for a stone and any stone found has been removed, we say that the rectangle has been covered.
- Suppose that r rectangles have been scanned from a total of R. Then we say that the coverage is r/R.

Coverage and confidence
- What happens when coverage increases? As coverage increases so does our confidence in a “stone-free” field.
- In this example, when the coverage reaches 100%, all stones have been found and removed. Can you think of a situation when this might not be true?
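
A toy simulation of coverage, assuming small search rectangles so that a stone lying in a scanned rectangle is always found and removed. Rectangles are identified by index, and the stone positions are made-up illustrative values. The names `scan` and `coverage` are hypothetical.

```python
def scan(stones, rectangles_to_scan):
    """Scan the given rectangles and remove any stone found in them.

    stones: set of rectangle indices that contain a stone.
    Returns the set of stones that remain after the scan.
    """
    return stones - set(rectangles_to_scan)

def coverage(r, R):
    """Coverage is the fraction r/R of rectangles scanned so far."""
    return r / R

R = 20                       # total number of search rectangles
stones = {3, 7, 15}          # unknown to the tester in practice
remaining_half = scan(stones, range(10))   # scan the first 10 rectangles
remaining_full = scan(stones, range(R))    # scan all rectangles
```

At 50% coverage one stone remains in this example. At 100% coverage none remain, but only because detection and removal were assumed to be perfect.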

Option 2: Reduce number of partitions
- If the number of rectangles to scan is too large, we can increase the size of a rectangle. This reduces the number of rectangles.
- Increasing the size of a rectangle also implies that there might be more than one stone within a rectangle. Is it good for a tester?

Rectangle size
- As a stone may now be smaller than a rectangle, detecting a stone inside a rectangle is not guaranteed.
- Despite this fact our confidence in a “stone-free” field increases with coverage.
- However, when the coverage reaches 100% we cannot guarantee a “stone-free” field.

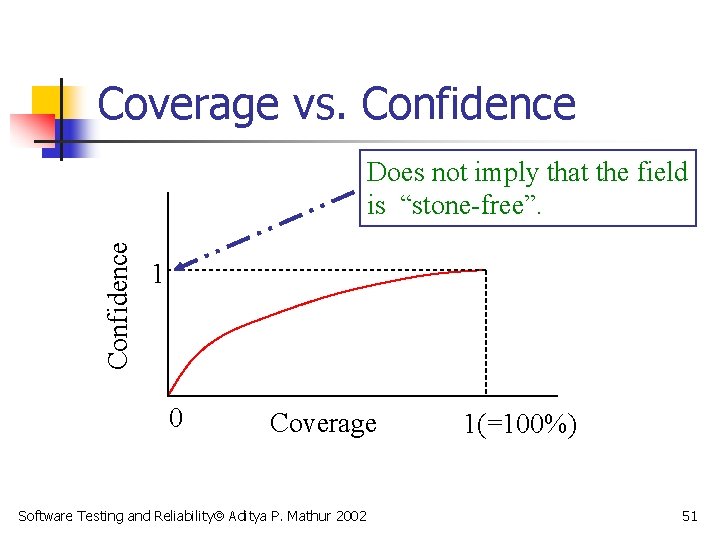

Coverage vs. Confidence
- Figure: confidence (0 to 1) plotted against coverage (0 to 1, where 1 = 100%).
- Full coverage does not imply that the field is “stone-free”.

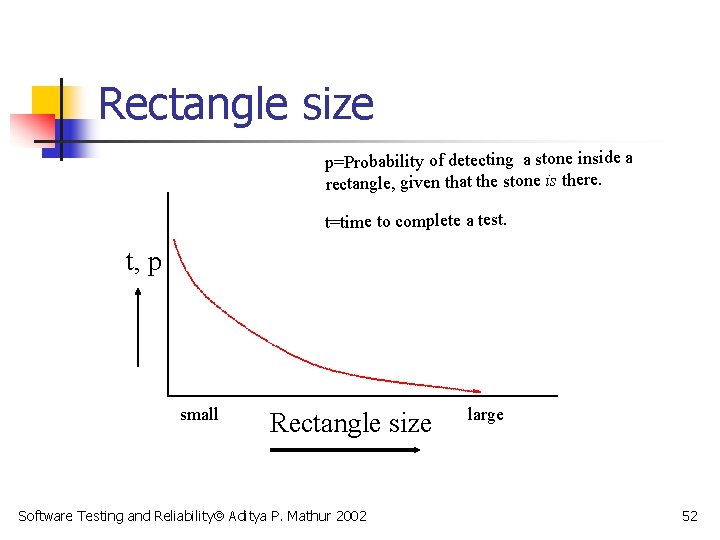

Rectangle size
- p = probability of detecting a stone inside a rectangle, given that the stone is there.
- t = time to complete a test.
- Figure: t and p plotted against rectangle size, from small to large.

Analogy
- Field: Program
- Stone: Error
- Scan a rectangle: Test program on one input
- Remove stone: Remove error
- Partition: Subset of input domain
- Size of stone: Size of an error
- Rectangle size: Size of a partition

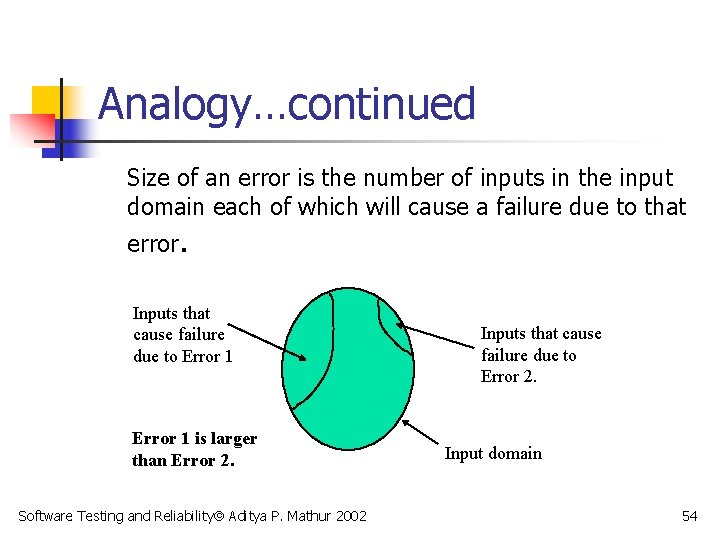

Analogy (continued)
- The size of an error is the number of inputs in the input domain each of which will cause a failure due to that error.
- Figure: the input domain, containing the set of inputs that cause failure due to Error 1 and the smaller set of inputs that cause failure due to Error 2. Error 1 is larger than Error 2.
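
To make the notion of error size concrete, the sketch below counts failure-causing inputs for two hypothetical faulty sort programs over the small domain with sequence length below 3 and element values 0 to 2. Both faults and all helper names are invented for illustration.

```python
from itertools import product

def input_domain(max_len, e):
    """All sequences of length 0..max_len over the values 0..e-1."""
    return [list(seq)
            for length in range(max_len + 1)
            for seq in product(range(e), repeat=length)]

def spec(seq):
    """Expected result: the sequence in descending order."""
    return sorted(seq, reverse=True)

def fault_ascending(seq):
    """Hypothetical Error 1: sorts in ascending instead of descending order."""
    return sorted(seq)

def fault_identity(seq):
    """Hypothetical Error 2: returns the input unchanged."""
    return list(seq)

def error_size(program, domain):
    """Number of inputs in the domain on which the program fails."""
    return sum(1 for seq in domain if program(seq) != spec(seq))

domain = input_domain(2, 3)
size_1 = error_size(fault_ascending, domain)
size_2 = error_size(fault_identity, domain)
```

Here the first fault fails on every pair of distinct elements and the second only on pairs already in ascending order, so the first error is the larger of the two.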

Confidence and probability
- Increase in coverage increases our confidence in a “stone-free” field.
- It might not increase the probability that the field is “stone-free”.
- Important: Increase in confidence is NOT justified if detected stones are not guaranteed to be removed!

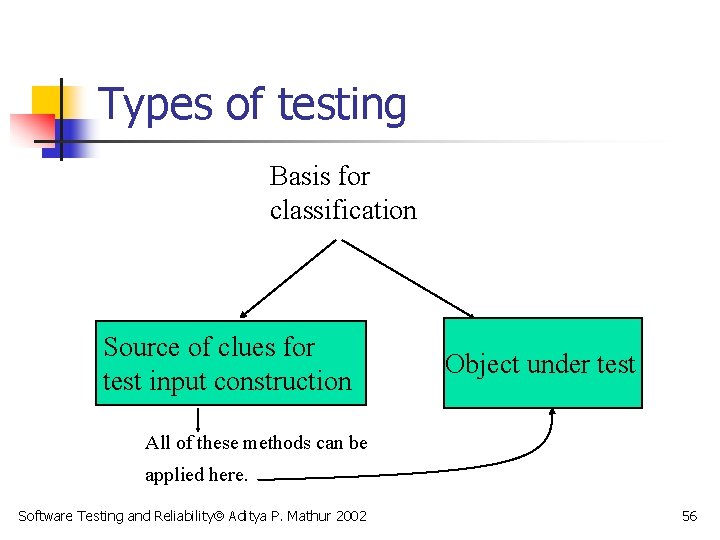

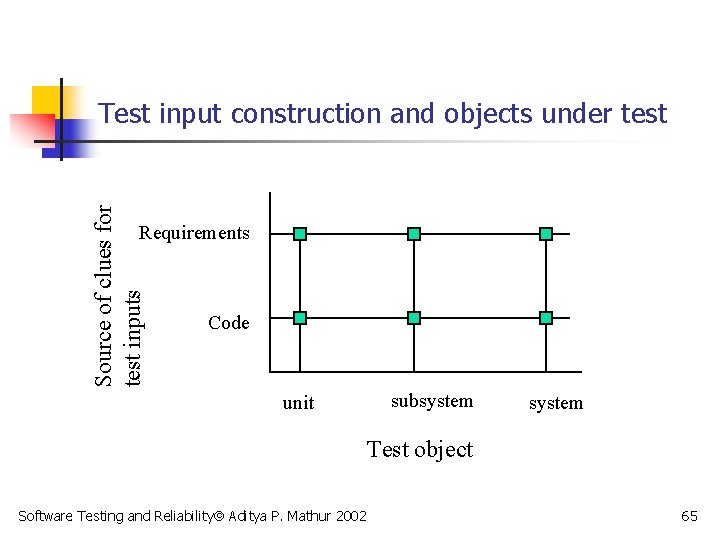

Types of testing
- Basis for classification:
  - Source of clues for test input construction
  - Object under test
- All of these methods can be applied here.

Testing: based on source of test inputs
- Functional testing/specification testing/black-box testing/conformance testing: Clues for test input generation come from requirements.
- White-box testing/coverage testing/code-based testing: Clues come from program text.

Testing: based on source of test inputs
- Stress testing: Clues come from “load” requirements. For example, a telephone system must be able to handle 1000 calls over any 1-minute interval. What happens when the system is loaded or overloaded?

Testing: based on source of test inputs
- Performance testing: Clues come from performance requirements. For example, each call must be processed in less than 5 seconds. Does the system process each call in less than 5 seconds?
- Fault- or error-based testing: Clues come from the faults that are injected into the program text or are hypothesized to be in the program.

Testing: based on source of test inputs
- Random testing: Clues come from requirements. Tests are generated randomly using these clues.
- Robustness testing: Clues come from requirements. The goal is to test a program under scenarios not stipulated in the requirements.
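
Random testing of the sort example might look like the sketch below, in which the requirements supply the clues (sequence length and element range) and a seeded generator produces the inputs. All names and default parameter values are hypothetical choices for illustration.

```python
import random

def random_tests(count, max_len, e, seed=0):
    """Generate count random sequences of length 0..max_len over values 0..e-1."""
    rng = random.Random(seed)          # seeded so that runs are repeatable
    tests = []
    for _ in range(count):
        length = rng.randint(0, max_len)
        tests.append([rng.randrange(e) for _ in range(length)])
    return tests

def satisfies_spec(test_input, output):
    """Oracle: output must be a descending rearrangement of test_input."""
    is_permutation = sorted(test_input) == sorted(output)
    is_descending = all(output[i] >= output[i + 1] for i in range(len(output) - 1))
    return is_permutation and is_descending

def run_random_testing(program, count=100, max_len=5, e=10, seed=0):
    """Return the randomly generated inputs on which the program fails."""
    return [t for t in random_tests(count, max_len, e, seed)
            if not satisfies_spec(t, program(t))]

failures = run_random_testing(lambda seq: sorted(seq, reverse=True))
```

An automated oracle matters here, since checking a large number of randomly generated results by hand is rarely practical.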

Testing: based on source of test inputs
- OO testing: Clues come from the requirements and the design of an OO program.
- Protocol testing: Clues come from the specification of a protocol, as, for example, when testing a communication protocol.

Testing: based on item under test
- Unit testing: Testing of a program unit. A unit is the smallest testable piece of a program. One or more units form a subsystem.
- Subsystem testing: Testing of a subsystem. A subsystem is a collection of units that cooperate to provide a part of system functionality.

Testing: based on item under test
- Integration testing: Testing of subsystems that are being integrated to form a larger subsystem or a complete system.
- System testing: Testing of a complete system.

Testing: based on item under test
- Regression testing: Test a subsystem or a system on a subset of the set of existing test inputs to check if it continues to function correctly after changes have been made to an older version.
- And the list goes on and on!
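
A minimal sketch of regression testing, under the assumption that the older version behaved correctly on the selected inputs, so that its outputs can serve as the expected results. The two versions and the function `regression_failures` are hypothetical.

```python
def old_version(seq):
    """Older version, assumed to behave correctly on the existing tests."""
    return sorted(seq, reverse=True)

def new_version(seq):
    """Changed version with a hypothetical regression: duplicates are dropped."""
    return sorted(set(seq), reverse=True)

def regression_failures(old, new, existing_tests, selected):
    """Re-run the selected subset of existing tests and compare the two versions."""
    failures = []
    for index in selected:
        test_input = existing_tests[index]
        if new(list(test_input)) != old(list(test_input)):
            failures.append(test_input)
    return failures

existing_tests = [[], [2], [2, 0], [1, 1], [0, 2, 1]]
selected = [0, 2, 3]                   # subset chosen for the regression run
failures = regression_failures(old_version, new_version, existing_tests, selected)
```

Which subset to select is a separate question. A regression revealed only by an unselected input would go unnoticed in this run.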

Test input construction and objects under test
- Figure: source of clues for test inputs (requirements, code) plotted against test object (unit, subsystem).

The Unified Modeling Language
- Unified Modeling Language (UML) is a notation to express requirements and designs of software systems.
- Requirements are represented using:
  - a collection of use cases, each use case being a brief description of a major function provided by the application to its user, be it internal or external to the application, and
  - a collection of system sequence diagrams that explain the interaction between a user and the application for each use case.

Summary: Terms
- Testing and debugging
- Specification
- Correctness
- Input domain
- Exhaustive testing
- Confidence

Summary: Terms
- Reliability
- Coverage
- Error, defect, fault, failure
- Debugging, test-debug cycle
- Types of testing, basis for classification

Summary: Questions
- What is the effect of reducing the partition size on the probability of finding errors?
- How does coverage affect our confidence in program correctness?
- Does 100% coverage imply that a program is fault-free?
- What decides the type of testing?

- Slides: 69