
Software Productivity Research, an Artemis company

Software Benchmarking: What Works and What Doesn’t?

Capers Jones, Chief Scientist
6 Lincoln Knoll Drive, Burlington, Massachusetts 01803
Tel.: 781.273.0140 Fax: 781.273.5176
http://www.spr.com

Copyright © 2000 by SPR. All Rights Reserved.
November 27, 2000

Basic Definitions of Terms

- Assessment: A formal analysis of software development practices against standard criteria.
- Baseline: A set of quality, productivity, and assessment data at a fixed point in time, to be used for measuring future progress.
- Benchmark: A formal comparison of one organization’s quality, productivity, and assessment data against similar data points from similar organizations.

Major Forms of Software Benchmarks

- Staff compensation and benefits benchmarks
- Staff turnover and morale benchmarks
- Software budgets and spending benchmarks
- Staffing and specialization benchmarks
- Process assessments (SEI, SPR, etc.)
- Productivity and cost benchmarks
- Quality and defect removal benchmarks
- Customer satisfaction benchmarks

TWELVE CRITERIA FOR BENCHMARK SUCCESS

The benchmark data should:

1. Benefit the executives who fund it
2. Benefit the managers and staff who use it
3. Generate positive ROI within 12 months
4. Meet normal corporate ROI criteria
5. Be as accurate as financial data
6. Explain why projects vary
7. Explain how much projects vary
8. Link assessment and quantitative results
9. Support multiple metrics
10. Support multiple kinds of software
11. Support multiple activities and deliverables
12. Lead to improvement in software results

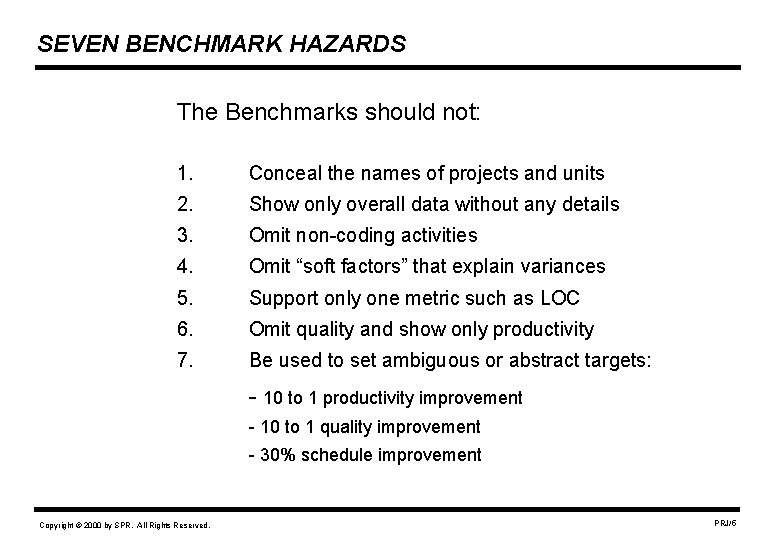

SEVEN BENCHMARK HAZARDS

The benchmarks should not:

1. Conceal the names of projects and units
2. Show only overall data without any details
3. Omit non-coding activities
4. Omit “soft factors” that explain variances
5. Support only one metric, such as LOC
6. Omit quality and show only productivity
7. Be used to set ambiguous or abstract targets, such as:
   - 10 to 1 productivity improvement
   - 10 to 1 quality improvement
   - 30% schedule improvement

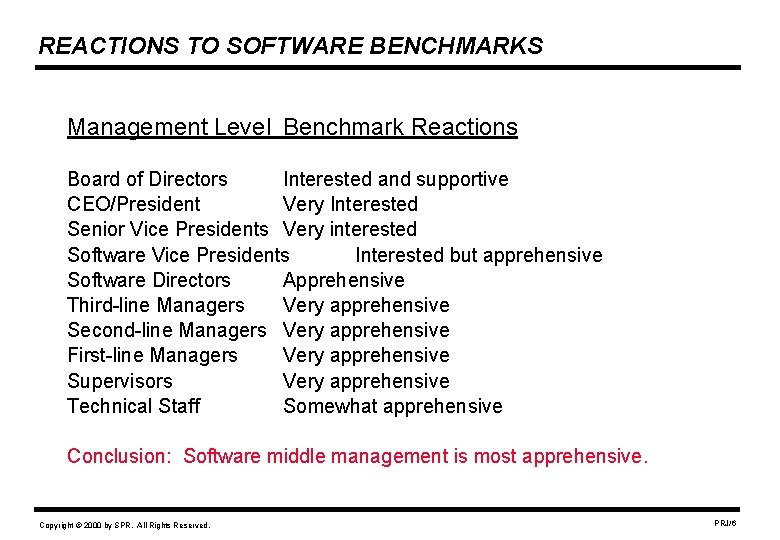

REACTIONS TO SOFTWARE BENCHMARKS

| Management level | Benchmark reaction |
|---|---|
| Board of Directors | Interested and supportive |
| CEO/President | Very interested |
| Senior Vice Presidents | Very interested |
| Software Vice Presidents | Interested but apprehensive |
| Software Directors | Apprehensive |
| Third-line Managers | Very apprehensive |
| Second-line Managers | Very apprehensive |
| First-line Managers | Very apprehensive |
| Supervisors | Very apprehensive |
| Technical Staff | Somewhat apprehensive |

Conclusion: Software middle management is the most apprehensive.

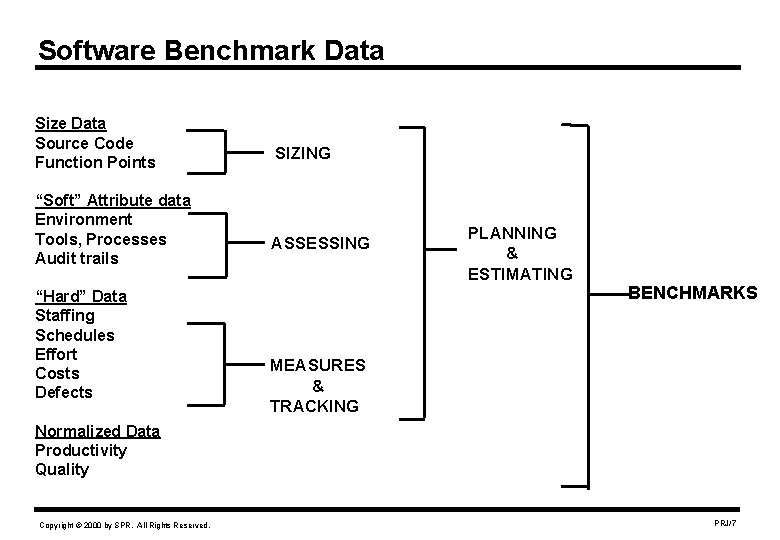

Software Benchmark Data

Figure: the data categories that support sizing, assessing, planning and estimating, measures and tracking, and benchmarks.

- Size data: source code, function points
- “Soft” attribute data: environment, tools, processes, audit trails
- “Hard” data: staffing, schedules, effort, costs, defects
- Normalized data: productivity, quality

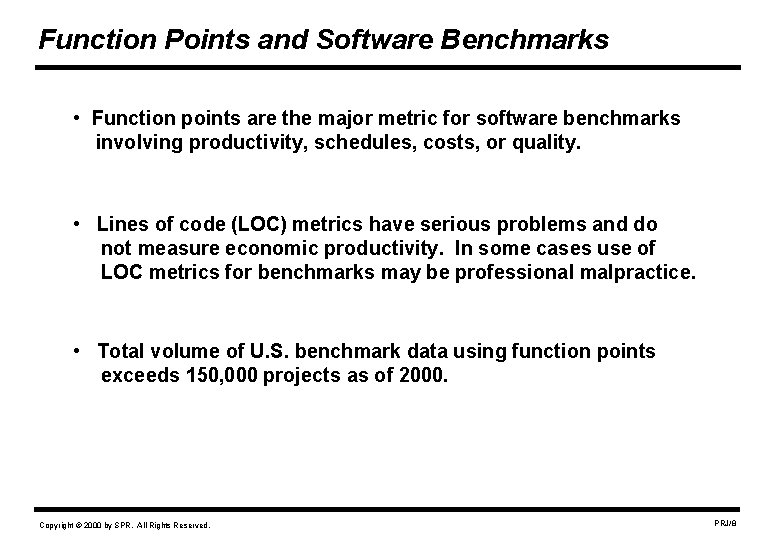

Function Points and Software Benchmarks

- Function points are the major metric for software benchmarks involving productivity, schedules, costs, or quality.
- Lines of code (LOC) metrics have serious problems and do not measure economic productivity. In some cases, using LOC metrics for benchmarks may be professional malpractice.
- As of 2000, the total volume of U.S. benchmark data using function points exceeds 150,000 projects.

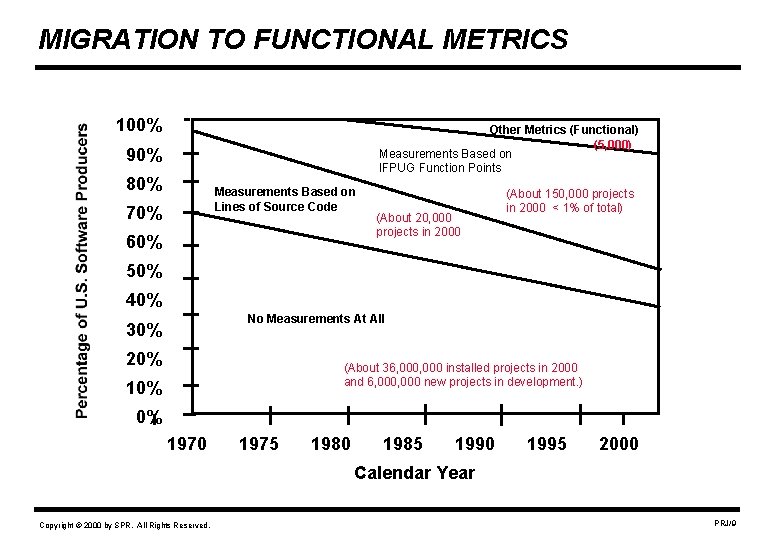

MIGRATION TO FUNCTIONAL METRICS

Figure: share of projects by measurement approach, 1970 to 2000 (0% to 100% by calendar year). Regions: other functional metrics (about 5,000 projects); measurements based on IFPUG function points (about 150,000 projects in 2000); measurements based on lines of source code; no measurements at all. Other annotations for 2000: about 20,000 projects, < 1% of total; about 36,000 installed projects and 6,000 new projects in development.

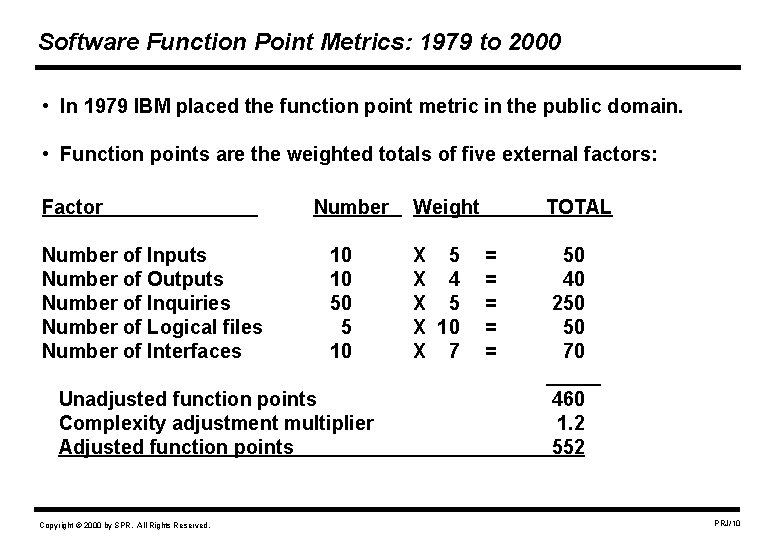

Software Function Point Metrics: 1979 to 2000

- In 1979, IBM placed the function point metric in the public domain.
- Function points are the weighted totals of five external factors:

| Factor | Number | Weight | Total |
|---|---:|---:|---:|
| Number of inputs | 10 | × 5 | 50 |
| Number of outputs | 10 | × 4 | 40 |
| Number of inquiries | 50 | × 5 | 250 |
| Number of logical files | 5 | × 10 | 50 |
| Number of interfaces | 10 | × 7 | 70 |
| Unadjusted function points | | | 460 |
| Complexity adjustment multiplier | | | 1.2 |
| Adjusted function points | | | 552 |
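
The arithmetic on this slide can be reproduced with a short sketch. The weights are the slide's illustrative values, and the names (`SLIDE_WEIGHTS`, `unadjusted_function_points`, `adjusted_function_points`) are hypothetical. Actual IFPUG counting grades each component by complexity before weighting, so treat this as a worked example rather than a counting tool.

```python
# Illustrative weights copied from the slide's example (not a full IFPUG count).
SLIDE_WEIGHTS = {
    "inputs": 5,
    "outputs": 4,
    "inquiries": 5,
    "logical_files": 10,
    "interfaces": 7,
}


def unadjusted_function_points(counts: dict[str, int]) -> int:
    """Sum each factor's count multiplied by its weight."""
    return sum(counts[factor] * weight for factor, weight in SLIDE_WEIGHTS.items())


def adjusted_function_points(counts: dict[str, int], multiplier: float) -> float:
    """Apply the complexity adjustment multiplier to the unadjusted total."""
    return unadjusted_function_points(counts) * multiplier


example_counts = {
    "inputs": 10,
    "outputs": 10,
    "inquiries": 50,
    "logical_files": 5,
    "interfaces": 10,
}

print(unadjusted_function_points(example_counts))     # 460
print(adjusted_function_points(example_counts, 1.2))  # 552.0
```

Keeping the weights in one table makes it easy to swap in a different weighting scheme without touching the summation.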

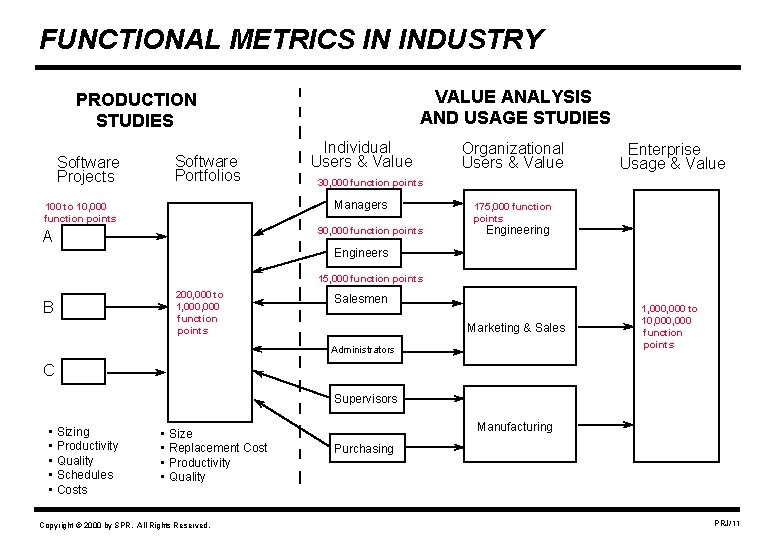

FUNCTIONAL METRICS IN INDUSTRY

Figure: function points applied to value analysis and usage studies (individual, organizational, and enterprise users and value, across roles such as managers, engineers, salesmen, administrators, and supervisors, and functions such as engineering, marketing and sales, manufacturing, and purchasing) and to production studies of software projects (sizing, productivity, quality, schedules, costs) and software portfolios (size, replacement cost, productivity, quality). Annotated sizes range from 100 to 200,000 function points.

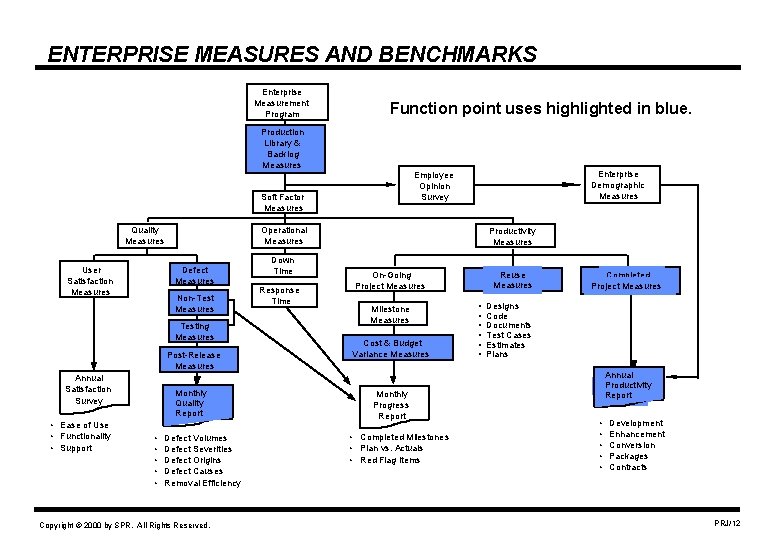

ENTERPRISE MEASURES AND BENCHMARKS

Figure: components of an enterprise measurement program (in the original figure, function point uses are highlighted in blue).

- Production library and backlog measures
- Soft factor measures
- Enterprise demographic measures
- Employee opinion survey
- Quality measures
  - Defect measures: non-test, testing, and post-release measures
  - Monthly quality report: defect volumes, defect severities, defect origins, defect causes, removal efficiency
- User satisfaction measures
  - Annual satisfaction survey: ease of use, functionality, support
- Operational measures: down time, response time
- Productivity measures
  - On-going project measures: milestone measures, cost and budget variance measures
  - Monthly progress report: completed milestones, plan vs. actuals, red flag items
  - Completed project measures
  - Annual productivity report: development, enhancement, conversion, packages, contracts
- Reuse measures: designs, code, documents, test cases, estimates, plans

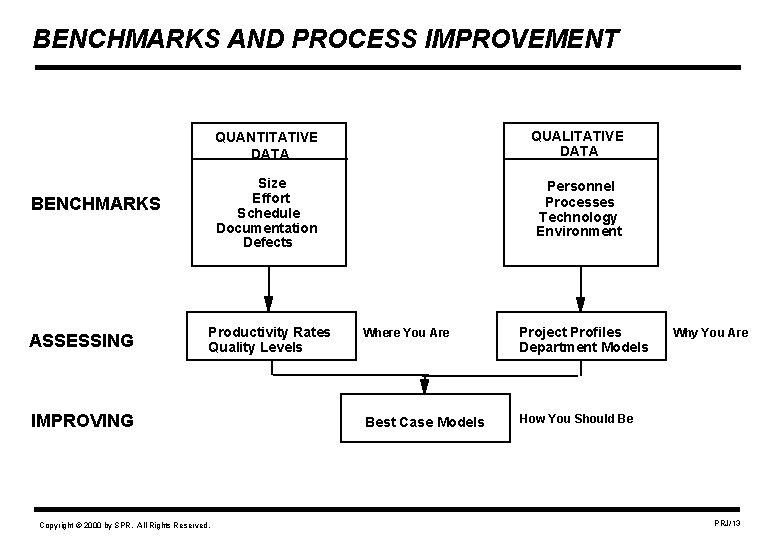

BENCHMARKS AND PROCESS IMPROVEMENT

Figure: how benchmarks, assessments, and improvement fit together.

- Benchmarks (where you are): quantitative data on size, effort, schedule, documentation, and defects, normalized into productivity rates and quality levels
- Assessing (why you are): qualitative data on personnel, processes, technology, and environment
- Improving (how you should be): project profiles, department models, best case models

SPR BENCHMARK EXAMPLES

- Software Productivity Research (SPR) has been performing assessments and benchmark studies since 1985.
- As of 2000, SPR data covers more than 10,000 projects from more than 600 enterprises.
- The following data points are samples of “lessons learned” during software assessment and benchmark studies.

BENCHMARKS AND SOFTWARE CLASSES

- Systems software
  - Best software quality
  - Best quality measurements
- Information systems
  - Best productivity measurements
  - Best use of function point metrics
- Outsource vendors
  - Best benchmark measurements
  - Best baseline measurements
  - Shortest delivery schedules
- Commercial software
  - Best testing metrics
  - Best user satisfaction measurements
- Military software
  - Best software reliability
  - Most SEI process assessments

FUNCTION POINT RULES OF THUMB

- Function points raised to the 0.40 power = calendar months in schedule
- Function points raised to the 1.15 power = pages of paper documents
- Function points raised to the 1.20 power = number of test cases
- Function points raised to the 1.25 power = software defect potential
- Function points / 150 = development technical staff
- Function points / 1,500 = maintenance technical staff

NOTE: These rules assume IFPUG Version 4.1 counting rules.
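
As a rough sketch, the rules of thumb translate directly into code. The function name `rules_of_thumb` is hypothetical, and the outputs are only as good as the rules themselves: order-of-magnitude estimates for a size counted under IFPUG 4.1, not a replacement for a calibrated estimating tool.

```python
def rules_of_thumb(function_points: float) -> dict[str, float]:
    """Apply the slide's function point rules of thumb to one application size."""
    fp = function_points
    return {
        "schedule_months": fp ** 0.40,    # calendar months in schedule
        "document_pages": fp ** 1.15,     # pages of paper documents
        "test_cases": fp ** 1.20,         # number of test cases
        "defect_potential": fp ** 1.25,   # estimated total defects (defect potential)
        "development_staff": fp / 150,    # development technical staff
        "maintenance_staff": fp / 1_500,  # maintenance technical staff
    }


for name, value in rules_of_thumb(1_000).items():
    print(f"{name:>18}: {value:,.1f}")
```

For a 1,000 function point application this gives roughly 16 calendar months, about 2,800 pages, 4,000 test cases, 5,600 potential defects, 6.7 development staff, and 0.7 maintenance staff.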

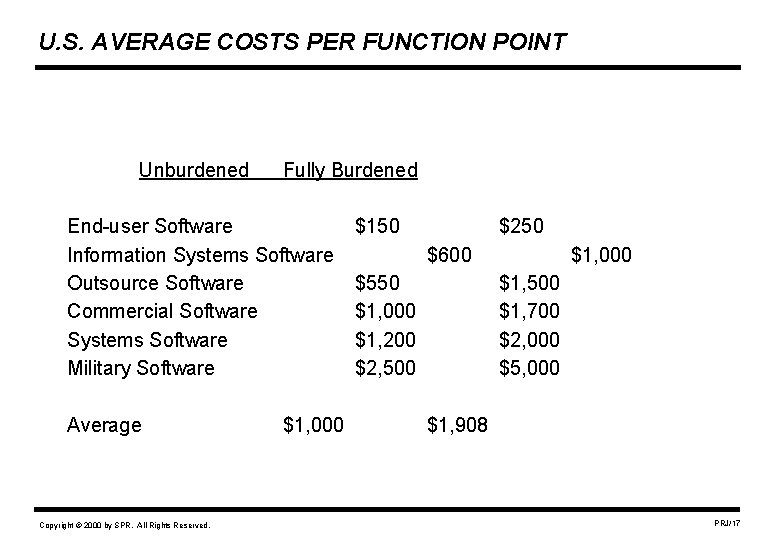

U.S. AVERAGE COSTS PER FUNCTION POINT

| Software class | Unburdened | Fully burdened |
|---|---:|---:|
| End-user software | $150 | $250 |
| Information systems software | $600 | $1,000 |
| Outsource software | $550 | $1,500 |
| Commercial software | $1,000 | $1,700 |
| Systems software | $1,200 | $2,000 |
| Military software | $2,500 | $5,000 |
| Average | $1,000 | $1,908 |
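
The Average row appears to be a simple unweighted mean of the six software classes. The sketch below checks that reading and prices a project at a flat rate. The dictionary and function names are hypothetical, and averaging across classes ignores how many projects fall into each class.

```python
# Cost per function point in U.S. dollars: (unburdened, fully burdened), from the table.
COST_PER_FP = {
    "End-user": (150, 250),
    "Information systems": (600, 1_000),
    "Outsource": (550, 1_500),
    "Commercial": (1_000, 1_700),
    "Systems": (1_200, 2_000),
    "Military": (2_500, 5_000),
}


def unweighted_average(costs: dict[str, tuple[int, int]], column: int) -> float:
    """Mean of one column (0 = unburdened, 1 = fully burdened) across all classes."""
    values = [pair[column] for pair in costs.values()]
    return sum(values) / len(values)


def project_cost(function_points: float, cost_per_fp: float) -> float:
    """Total project cost at a flat cost per function point."""
    return function_points * cost_per_fp


print(unweighted_average(COST_PER_FP, 0))               # 1000.0
print(round(unweighted_average(COST_PER_FP, 1)))        # 1908
print(project_cost(1_000, COST_PER_FP["Military"][1]))  # 5000000
```

Both averages match the table, which supports the unweighted-mean reading; a portfolio-weighted average would need project counts per class.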

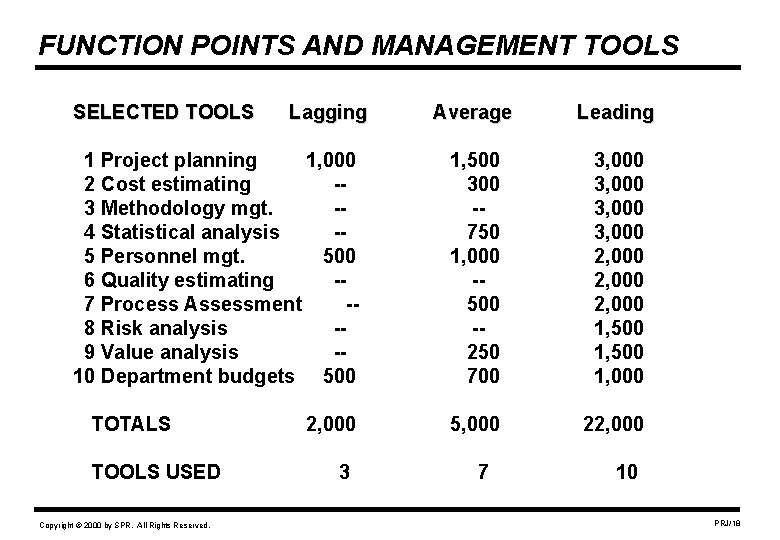

FUNCTION POINTS AND MANAGEMENT TOOLS

| Selected tools | Lagging | Average |
|---|---:|---:|
| 1. Project planning | 1,000 | 1,500 |
| 2. Cost estimating | - | 300 |
| 3. Methodology mgt. | - | - |
| 4. Statistical analysis | - | 750 |
| 5. Personnel mgt. | 500 | 1,000 |
| 6. Quality estimating | - | - |
| 7. Process assessment | - | 500 |
| 8. Risk analysis | - | - |
| 9. Value analysis | - | 250 |
| 10. Department budgets | 500 | 700 |
| Totals | 2,000 | 5,000 |
| Tools used | 3 | 7 |

Leading organizations use all 10 tools, with a total of 22,000; their per-tool values range from 1,000 to 3,000.

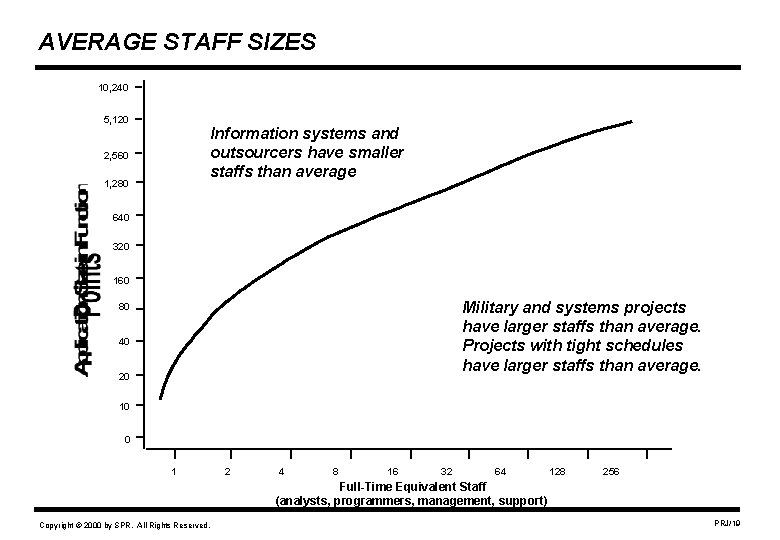

AVERAGE STAFF SIZES

Figure: full-time equivalent staff (analysts, programmers, management, support; 1 to 256) by application size.

- Information systems and outsourcers have smaller staffs than average.
- Military and systems projects have larger staffs than average.
- Projects with tight schedules have larger staffs than average.

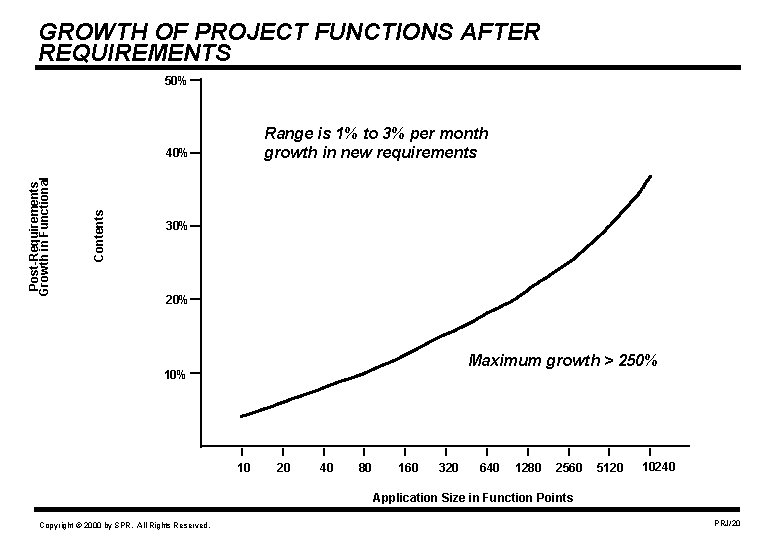

GROWTH OF PROJECT FUNCTIONS AFTER REQUIREMENTS

Figure: post-requirements growth in functional contents (0% to 50%) by application size in function points (10 to 10,240).

- Range is 1% to 3% per month growth in new requirements.
- Maximum growth > 250%.
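
To see what 1% to 3% monthly growth means for a project, here is a sketch that assumes growth accrues linearly against the size counted at the end of requirements (the slide does not say whether growth compounds). The function name and the 1,000 function point, 12-month example are hypothetical.

```python
def size_after_growth(initial_fp: float, monthly_rate: float, months: int) -> float:
    """Size after post-requirements growth, assuming simple (non-compounding) monthly growth."""
    return initial_fp * (1 + monthly_rate * months)


# A hypothetical 1,000 function point project, 12 months from requirements to delivery.
for rate in (0.01, 0.02, 0.03):
    print(f"{rate:.0%} per month -> {size_after_growth(1_000, rate, 12):,.0f} function points")
```

Under these assumptions the project grows by 12% to 36% before delivery, which is the scale of change an estimate made at the end of requirements has to absorb.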

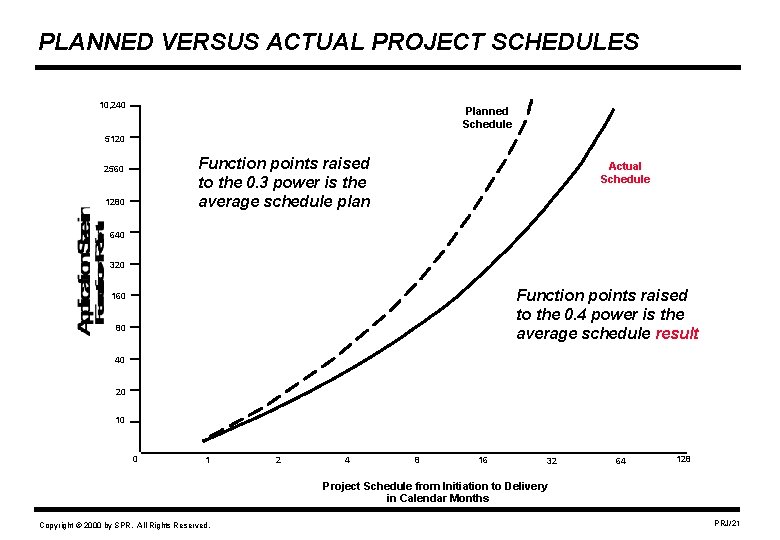

PLANNED VERSUS ACTUAL PROJECT SCHEDULES

Figure: application size (10 to 10,240 function points) versus project schedule from initiation to delivery in calendar months (1 to 128).

- Planned schedule: function points raised to the 0.3 power is the average schedule plan.
- Actual schedule: function points raised to the 0.4 power is the average schedule result.
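
The two exponents make the typical gap easy to quantify: dividing the actual schedule by the planned one gives function points raised to the 0.1 power. A minimal sketch, with a hypothetical function name:

```python
def schedule_gap(function_points: float) -> tuple[float, float, float]:
    """Return (planned months, actual months, actual / planned) from the slide's exponents."""
    planned = function_points ** 0.3
    actual = function_points ** 0.4
    return planned, actual, actual / planned


for size in (100, 1_000, 10_000):
    planned, actual, ratio = schedule_gap(size)
    print(f"{size:>6} FP: planned {planned:5.1f} months, actual {actual:5.1f} months, ratio {ratio:.2f}")
```

The ratio grows with size: about 1.6 at 100 function points, 2.0 at 1,000, and 2.5 at 10,000, so larger projects tend to miss their plans by a wider margin.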

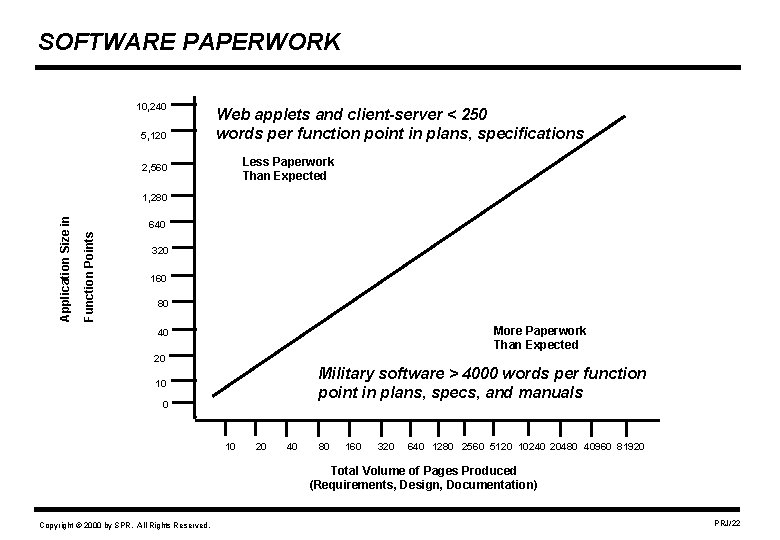

SOFTWARE PAPERWORK

Figure: application size in function points (10 to 10,240) versus total volume of pages produced for requirements, design, and documentation (10 to 81,920).

- Web applets and client-server: < 250 words per function point in plans and specifications (less paperwork than expected)
- Military software: > 4,000 words per function point in plans, specs, and manuals (more paperwork than expected)

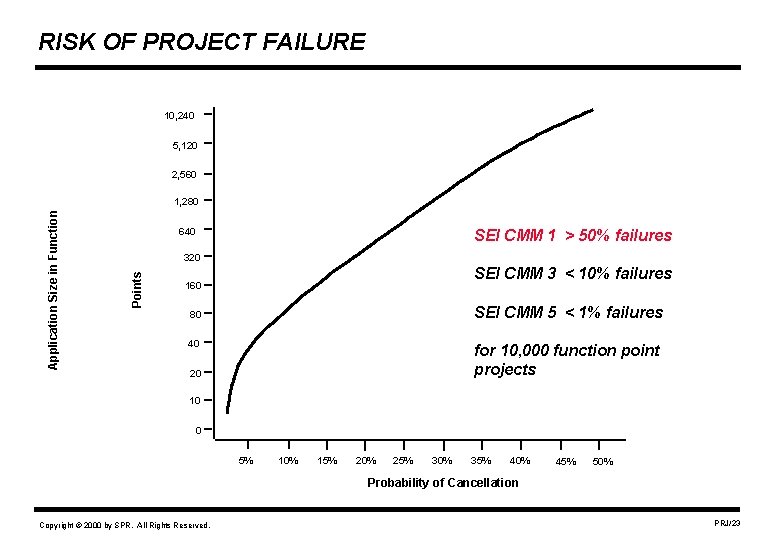

RISK OF PROJECT FAILURE

Figure: probability of cancellation (0% to 50%) by application size in function points (10 to 10,240). For 10,000 function point projects:

- SEI CMM 1: > 50% failures
- SEI CMM 3: < 10% failures
- SEI CMM 5: < 1% failures

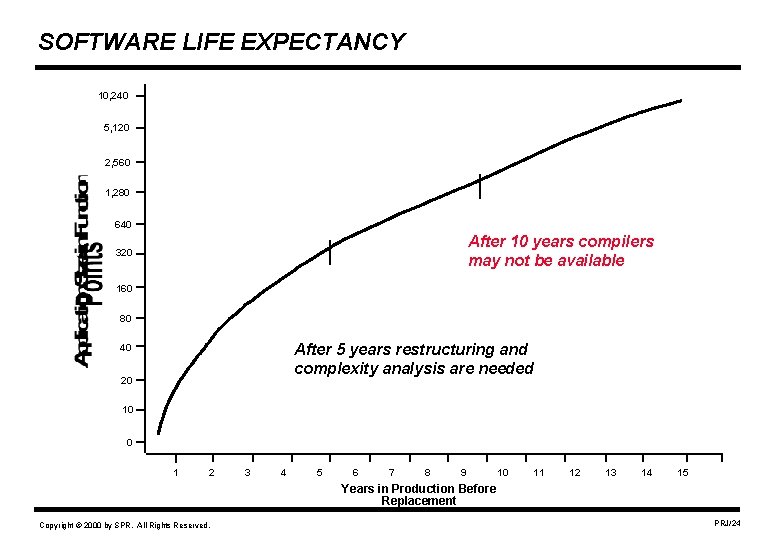

SOFTWARE LIFE EXPECTANCY

Figure: years in production before replacement (0 to 15) by application size.

- After 5 years, restructuring and complexity analysis are needed.
- After 10 years, compilers may not be available.

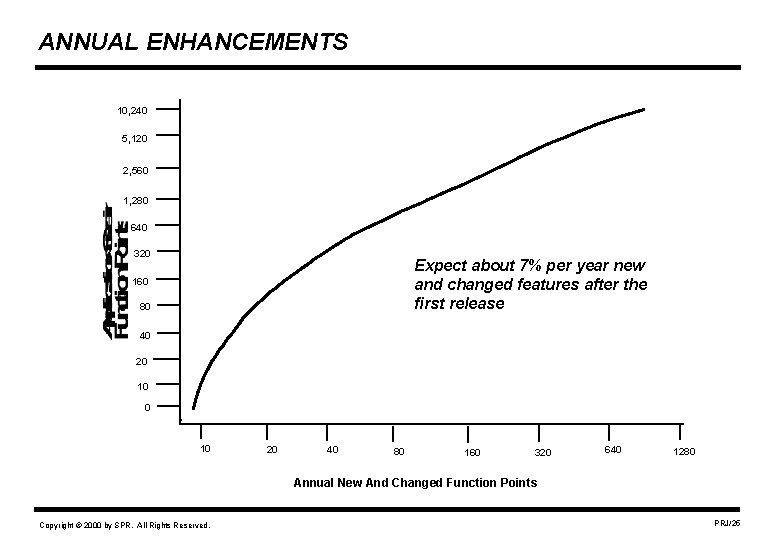

ANNUAL ENHANCEMENTS

Figure: annual new and changed function points (10 to 1,280) by application size.

Expect about 7% per year new and changed features after the first release.
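
At about 7% per year, enhancements add up over an application's life. The sketch below assumes the 7% applies to the current size each year and that all of it is net growth; in practice some "new and changed" function points modify existing features rather than add size, so this is an upper bound. The function name and the 1,000 function point example are hypothetical.

```python
def size_history(initial_fp: float, years: int, annual_rate: float = 0.07) -> list[float]:
    """Application size at each year-end, assuming enhancements compound on current size."""
    sizes = [initial_fp]
    for _ in range(years):
        sizes.append(sizes[-1] * (1 + annual_rate))
    return sizes


history = size_history(1_000, 10)
for year, size in enumerate(history):
    print(f"year {year:>2}: {size:,.0f} function points")
```

After ten years, a 1,000 function point first release would be close to double its original size under these assumptions.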

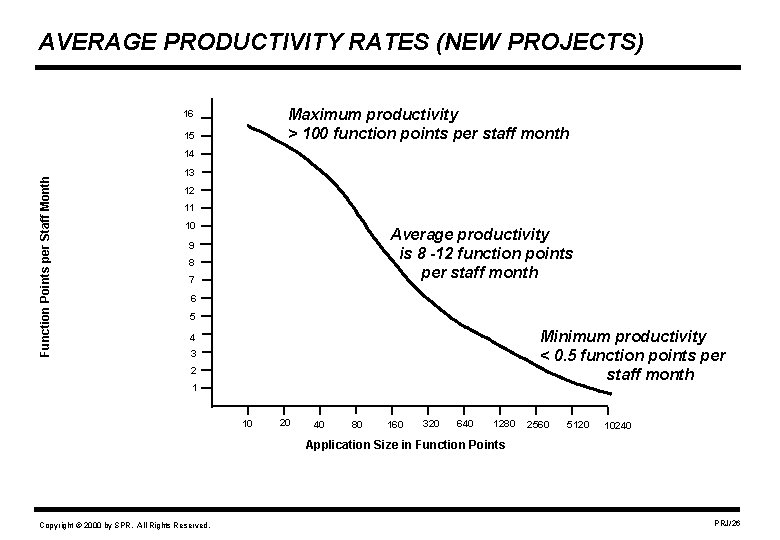

AVERAGE PRODUCTIVITY RATES (NEW PROJECTS)

Figure: function points per staff month (1 to 16) by application size in function points (10 to 10,240).

- Maximum productivity: > 100 function points per staff month
- Average productivity: 8 to 12 function points per staff month
- Minimum productivity: < 0.5 function points per staff month
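
Two of the rules of thumb offer a rough cross-check on the 8 to 12 range. If development staff is about function points / 150 and the schedule is about function points raised to the 0.4 power, then effort is their product and productivity works out to 150 divided by function points raised to the 0.4 power. This combination is a derivation for illustration, not a rule stated on the slides, and the function names are hypothetical.

```python
def implied_productivity(function_points: float) -> float:
    """FP per staff month implied by staff = FP / 150 and schedule = FP ** 0.4."""
    staff = function_points / 150
    schedule_months = function_points ** 0.4
    effort_staff_months = staff * schedule_months
    return function_points / effort_staff_months


def effort_range(function_points: float, low_rate: float = 8, high_rate: float = 12) -> tuple[float, float]:
    """Staff months needed at the high and low ends of the average productivity band."""
    return function_points / high_rate, function_points / low_rate


print(f"{implied_productivity(1_000):.1f}")  # 9.5
print(effort_range(1_000))
```

For a 1,000 function point project, the implied rate of about 9.5 function points per staff month falls inside the average band, and the band itself corresponds to roughly 83 to 125 staff months of effort.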

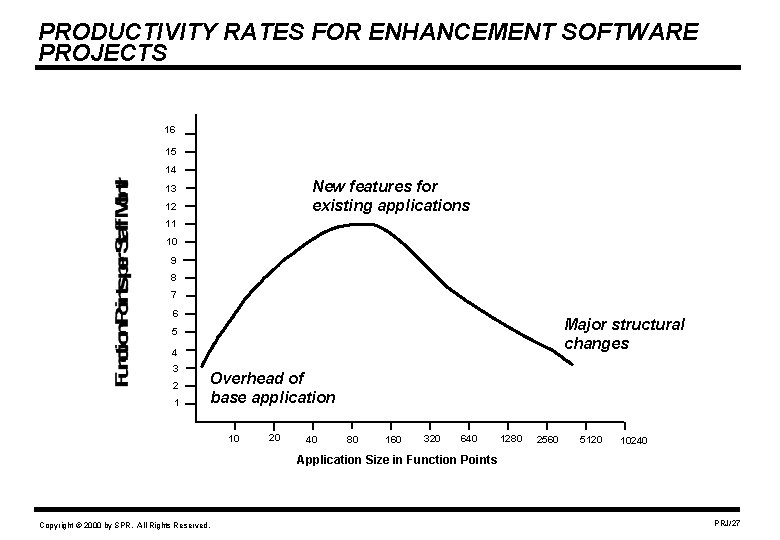

PRODUCTIVITY RATES FOR ENHANCEMENT SOFTWARE PROJECTS

Figure: productivity in function points per staff month (1 to 16) by application size in function points (10 to 10,240). Curves: new features for existing applications; major structural changes; overhead of base application.

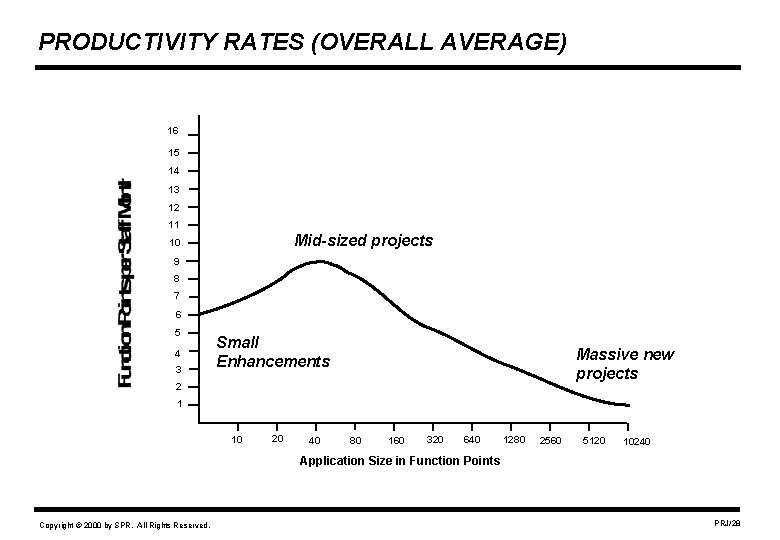

PRODUCTIVITY RATES (OVERALL AVERAGE)

Figure: productivity in function points per staff month (1 to 16) by application size in function points (10 to 10,240). Labeled regions: small enhancements, mid-sized projects, massive new projects.

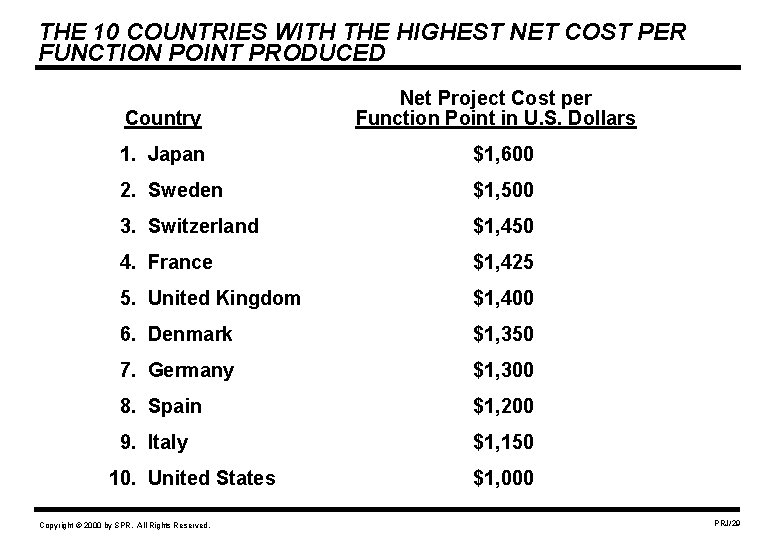

THE 10 COUNTRIES WITH THE HIGHEST NET COST PER FUNCTION POINT PRODUCED

| Rank | Country | Net project cost per function point (U.S. dollars) |
|---:|---|---:|
| 1 | Japan | $1,600 |
| 2 | Sweden | $1,500 |
| 3 | Switzerland | $1,450 |
| 4 | France | $1,425 |
| 5 | United Kingdom | $1,400 |
| 6 | Denmark | $1,350 |
| 7 | Germany | $1,300 |
| 8 | Spain | $1,200 |
| 9 | Italy | $1,150 |
| 10 | United States | $1,000 |

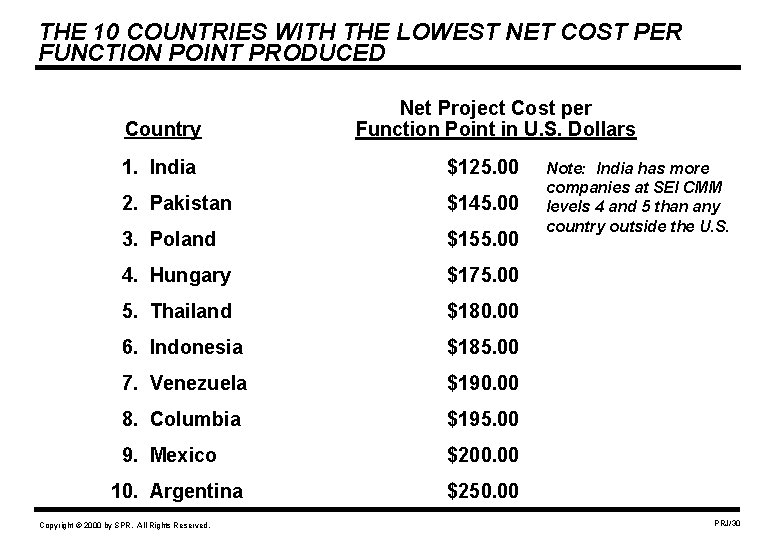

THE 10 COUNTRIES WITH THE LOWEST NET COST PER FUNCTION POINT PRODUCED

| Rank | Country | Net project cost per function point (U.S. dollars) |
|---:|---|---:|
| 1 | India | $125.00 |
| 2 | Pakistan | $145.00 |
| 3 | Poland | $155.00 |
| 4 | Hungary | $175.00 |
| 5 | Thailand | $180.00 |
| 6 | Indonesia | $185.00 |
| 7 | Venezuela | $190.00 |
| 8 | Colombia | $195.00 |
| 9 | Mexico | $200.00 |
| 10 | Argentina | $250.00 |

Note: India has more companies at SEI CMM levels 4 and 5 than any country outside the U.S.

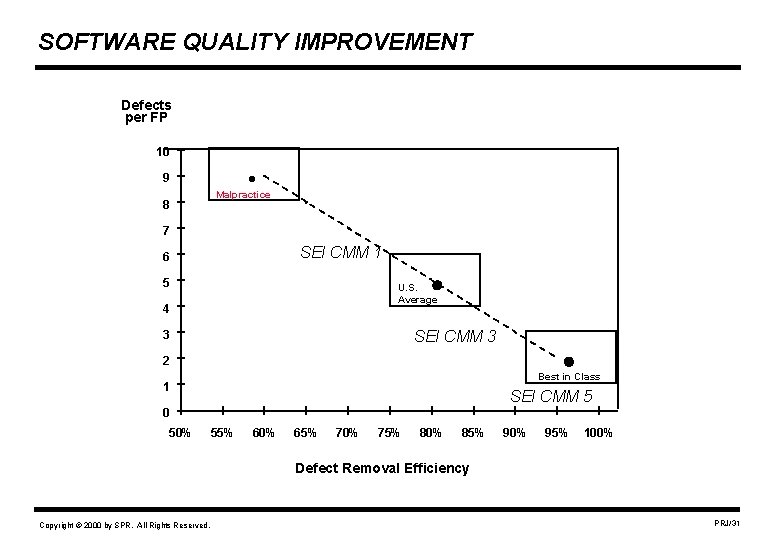

SOFTWARE QUALITY IMPROVEMENT

Figure: defects per function point (0 to 10) versus defect removal efficiency (50% to 100%). Labeled points, from most defects and lowest efficiency to fewest defects and highest efficiency: malpractice, SEI CMM 1, U.S. average, SEI CMM 3, best in class, SEI CMM 5.

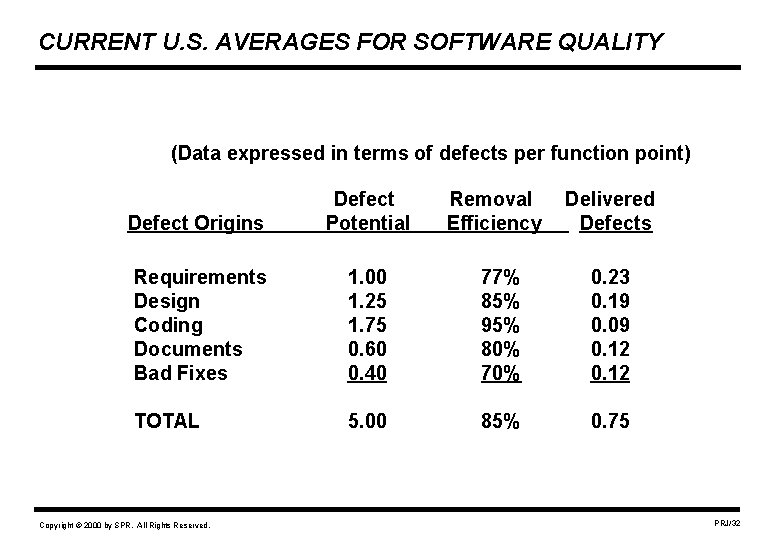

CURRENT U.S. AVERAGES FOR SOFTWARE QUALITY

(Data expressed in terms of defects per function point)

| Defect origins | Defect potential | Removal efficiency | Delivered defects |
|---|---:|---:|---:|
| Requirements | 1.00 | 77% | 0.23 |
| Design | 1.25 | 85% | 0.19 |
| Coding | 1.75 | 95% | 0.09 |
| Documents | 0.60 | 80% | 0.12 |
| Bad fixes | 0.40 | 70% | 0.12 |
| TOTAL | 5.00 | 85% | 0.75 |
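
Each row of the quality table follows one relationship: delivered defects equal defect potential multiplied by the share of defects that escape removal, that is, potential × (1 − removal efficiency). The sketch below recomputes the U.S. averages from the first two columns; the names are hypothetical, and small differences from the table are rounding.

```python
# (defect potential per FP, removal efficiency) for each origin, from the table.
US_AVERAGES = {
    "Requirements": (1.00, 0.77),
    "Design": (1.25, 0.85),
    "Coding": (1.75, 0.95),
    "Documents": (0.60, 0.80),
    "Bad fixes": (0.40, 0.70),
}


def delivered_defects(potential: float, removal_efficiency: float) -> float:
    """Defects per function point that escape removal and reach users."""
    return potential * (1 - removal_efficiency)


total_potential = sum(p for p, _ in US_AVERAGES.values())
total_delivered = sum(delivered_defects(p, e) for p, e in US_AVERAGES.values())
overall_efficiency = 1 - total_delivered / total_potential

for origin, (potential, efficiency) in US_AVERAGES.items():
    print(f"{origin:<12} {delivered_defects(potential, efficiency):.3f}")
print(f"Total: {total_potential:.2f} potential, {total_delivered:.3f} delivered, "
      f"{overall_efficiency:.0%} removal efficiency")
```

The recomputed total is 0.745 delivered defects per function point, which the table rounds to 0.75, and the overall removal efficiency is about 85%.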

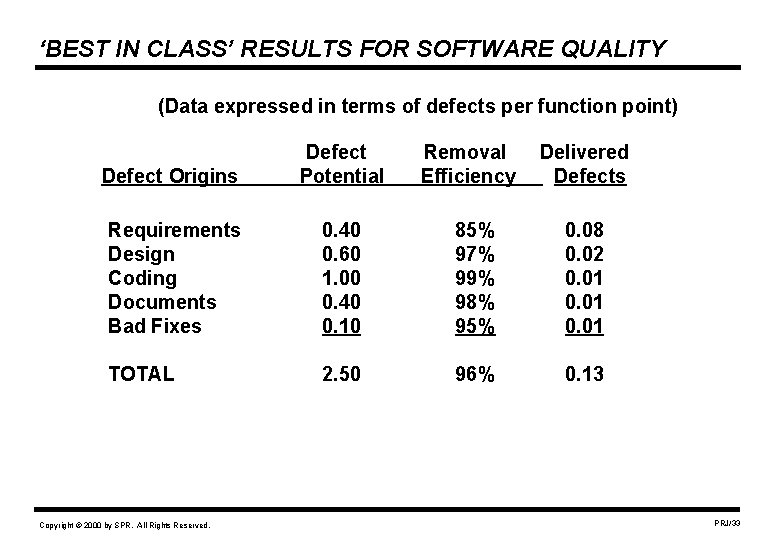

‘BEST IN CLASS’ RESULTS FOR SOFTWARE QUALITY

(Data expressed in terms of defects per function point)

| Defect origins | Defect potential | Removal efficiency | Delivered defects |
|---|---:|---:|---:|
| Requirements | 0.40 | 85% | 0.08 |
| Design | 0.60 | 97% | 0.02 |
| Coding | 1.00 | 99% | 0.01 |
| Documents | 0.40 | 98% | 0.01 |
| Bad fixes | 0.10 | 95% | 0.01 |
| TOTAL | 2.50 | 96% | 0.13 |

IMPROVING SOFTWARE PRODUCTIVITY AND QUALITY

- Start with an assessment to find out what is right and wrong with current practices.
- Commission a benchmark study to compare your performance with best practices in your industry.
- Stop doing what is wrong.
- Do more of what is right.
- Set targets: best in class, better than average, better than today.
- Develop a three-year technology plan.
- Include capital equipment, offices, tools, methods, education, culture, languages, and return on investment (ROI).

QUANTITATIVE AND QUALITATIVE GOALS

What it means to be best in class:

1. Software project cancellations due to cost or schedule overruns = zero
2. Software cost overruns < 5% compared to formal budgets
3. Software schedule overruns < 3% compared to formal plans
4. Development productivity > 50 function points per staff month
5. Software reuse of design, code, and test cases averages > 75%
6. Development cost < $250 per function point at delivery
7. Software development schedules average 15% shorter than average

QUANTITATIVE AND QUALITATIVE GOALS (cont.)

8. Software defect potentials average < 2.5 per function point
9. Software defect removal efficiency averages > 97% for all projects
10. Software delivered defects average < 0.075 per function point
11. Software maintenance assignment scopes > 3,500 function points
12. Annual software maintenance < $75 per function point
13. Customer service: best of any similar corporation
14. User satisfaction: highest of any similar corporation
15. Staff morale: highest of any similar corporation
16. Compensation and benefits: best in your industry
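
Goals 8 to 10 are linked by the same arithmetic as the quality tables: a defect potential of 2.5 per function point with 97% removal efficiency leaves 2.5 × (1 − 0.97) = 0.075 delivered defects per function point. A quick check, with a hypothetical function name:

```python
def delivered_per_fp(defect_potential: float, removal_efficiency: float) -> float:
    """Delivered defects per function point = potential * (1 - efficiency)."""
    return defect_potential * (1 - removal_efficiency)


# Best-in-class targets from goals 8 and 9.
target = delivered_per_fp(2.5, 0.97)
print(f"{target:.3f}")  # 0.075
```

Because goals 8 and 9 are bounds (potential below 2.5, efficiency above 97%), meeting both yields delivered defects below 0.075 per function point, which is goal 10.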

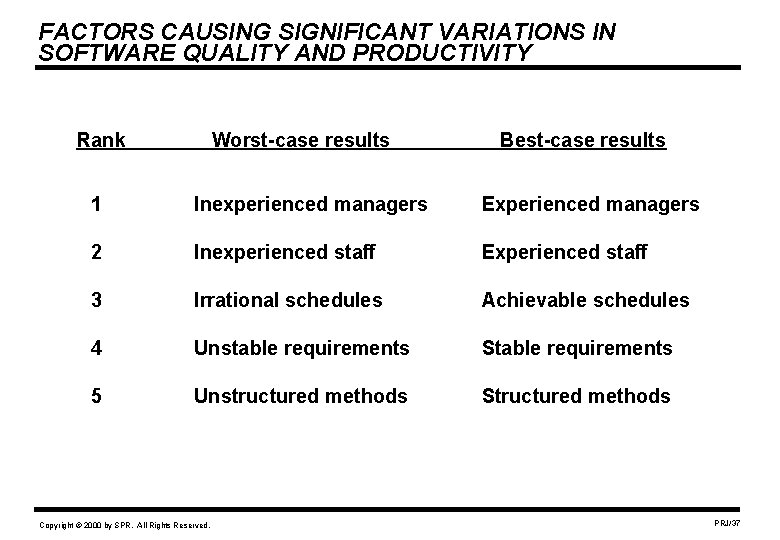

FACTORS CAUSING SIGNIFICANT VARIATIONS IN SOFTWARE QUALITY AND PRODUCTIVITY

| Rank | Worst-case results | Best-case results |
|---:|---|---|
| 1 | Inexperienced managers | Experienced managers |
| 2 | Inexperienced staff | Experienced staff |
| 3 | Irrational schedules | Achievable schedules |
| 4 | Unstable requirements | Stable requirements |
| 5 | Unstructured methods | Structured methods |

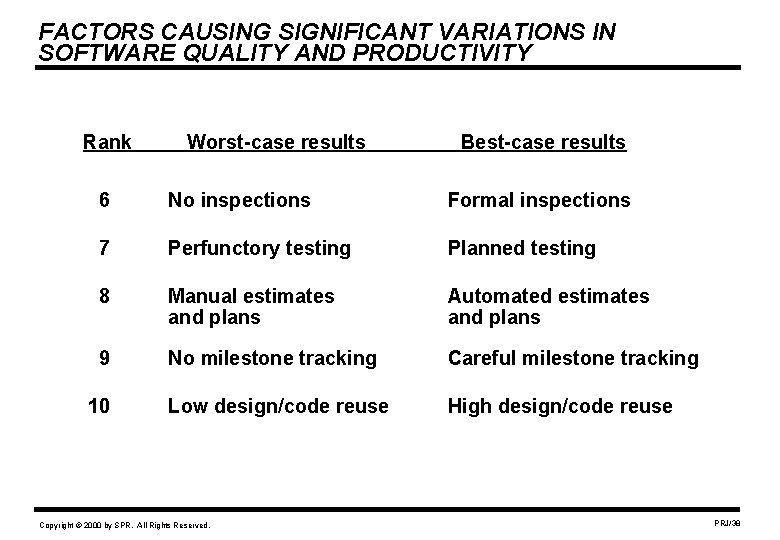

FACTORS CAUSING SIGNIFICANT VARIATIONS IN SOFTWARE QUALITY AND PRODUCTIVITY

| Rank | Worst-case results | Best-case results |
|---:|---|---|
| 6 | No inspections | Formal inspections |
| 7 | Perfunctory testing | Planned testing |
| 8 | Manual estimates and plans | Automated estimates and plans |
| 9 | No milestone tracking | Careful milestone tracking |
| 10 | Low design/code reuse | High design/code reuse |

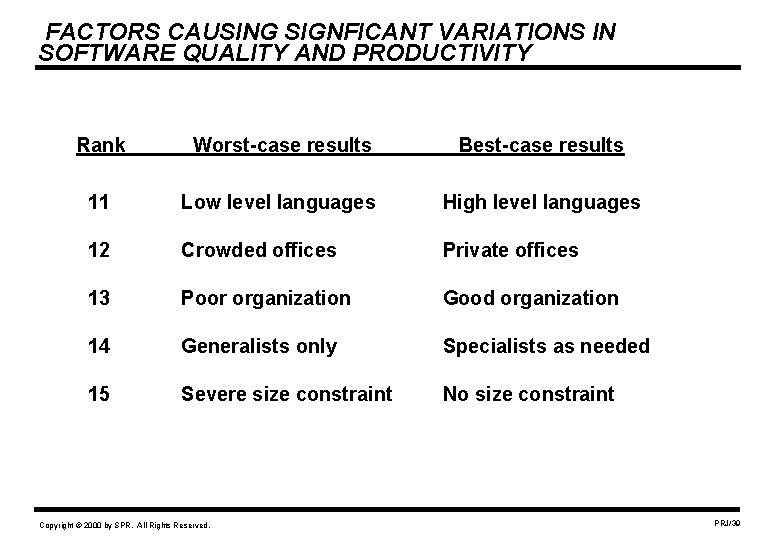

FACTORS CAUSING SIGNIFICANT VARIATIONS IN SOFTWARE QUALITY AND PRODUCTIVITY

| Rank | Worst-case results | Best-case results |
|---:|---|---|
| 11 | Low level languages | High level languages |
| 12 | Crowded offices | Private offices |
| 13 | Poor organization | Good organization |
| 14 | Generalists only | Specialists as needed |
| 15 | Severe size constraint | No size constraint |

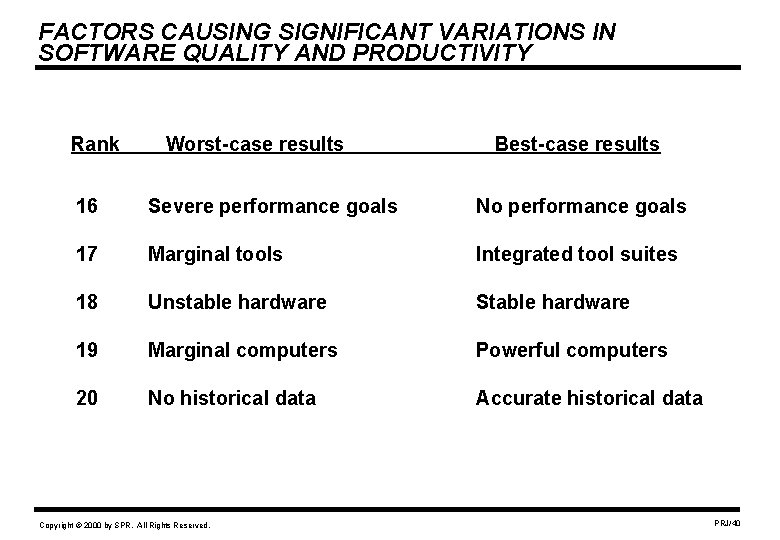

FACTORS CAUSING SIGNIFICANT VARIATIONS IN SOFTWARE QUALITY AND PRODUCTIVITY

| Rank | Worst-case results | Best-case results |
|---:|---|---|
| 16 | Severe performance goals | No performance goals |
| 17 | Marginal tools | Integrated tool suites |
| 18 | Unstable hardware | Stable hardware |
| 19 | Marginal computers | Powerful computers |
| 20 | No historical data | Accurate historical data |

ATTRIBUTES OF BEST IN CLASS COMPANIES

1. Good project management
2. Good technical staffs
3. Good support staffs
4. Good measurements
5. Good organization structures
6. Good methodologies
7. Good tool suites
8. Good environments

GOOD PROJECT MANAGEMENT

- Without good project management, the rest is unachievable.
- Attributes of good project management:
  - Fairness to staff
  - Desire to be excellent
  - Strong customer orientation
  - Strong people orientation
  - Strong technology orientation
  - Understands planning and estimating tools
  - Can defend accurate estimates to clients and executives
  - Can justify investments in tools and processes

GOOD SOFTWARE ENGINEERING TECHNICAL STAFFS

- Without good engineering technical staffs, tools are not effective.
- Attributes of good technical staffs:
  - Desire to be excellent
  - Good knowledge of applications
  - Good knowledge of development processes
  - Good knowledge of quality and defect removal methods
  - Good knowledge of maintenance methods
  - Good knowledge of programming languages
  - Good knowledge of software engineering tools
  - Like to stay at the leading edge of software engineering

GOOD SUPPORT STAFFS

- Without good support staffs, technical staffs and managers are handicapped.
- In leading companies, support staffs make up more than 30% of software personnel.
- Attributes of good support staffs:
  - Planning and estimating skills
  - Measurement and metric skills
  - Writing and communication skills
  - Quality assurance skills
  - Database skills
  - Network, internet, and web skills
  - Graphics and web-design skills
  - Testing and integration skills
  - Configuration control and change management skills

GOOD SOFTWARE MEASUREMENTS

- Without good measurements, progress is unlikely.
- Attributes of good measurements:
  - Function point analysis of entire portfolio
  - Annual function point benchmarks
  - Life-cycle quality measures
  - User satisfaction measures
  - Development and maintenance productivity measures
  - Soft factor assessment measures
  - Hard factor measures of costs, staffing, effort, schedules
  - Measurements used as management tools

GOOD ORGANIZATION STRUCTURES

- Without good organization structures, progress is unlikely.
- Attributes of good organization structures:
  - Balance of line and staff functions
  - Balance of centralized and decentralized functions
  - Organizations are planned
  - Organizations are dynamic
  - Effective use of specialists for key functions
  - Able to integrate “virtual teams” at remote locations
  - Able to integrate telecommuting

GOOD PROCESSES AND METHODOLOGIES

- Without good processes and methodologies, tools are ineffective.
- Attributes of good methodologies:
  - Flexible and useful for both new projects and updates
  - Scalable from small projects up to major systems
  - Versatile and able to handle multiple kinds of software
  - Efficient and cost-effective
  - Evolutionary and able to handle new kinds of projects
  - Unobtrusive and not viewed as bureaucratic

GOOD TOOL SUITES

- Without good tool suites, management and staffs are handicapped.
- Attributes of good tool suites:
  - Both project management and technical tools
  - Functionally complete
  - Mutually compatible
  - Easy to learn
  - Easy to use
  - Tolerant of user errors
  - Secure

GOOD ENVIRONMENTS AND ERGONOMICS

- Without good office environments, productivity is difficult.
- Attributes of good environments and ergonomics:
  - Private office space for knowledge workers (> 90 square feet, about 8.4 square meters)
  - Avoid small or crowded cubicles with 3 or more staff
  - Adequate conference and classroom facilities
  - Excellent internet and intranet communications
  - Excellent communication with users and clients

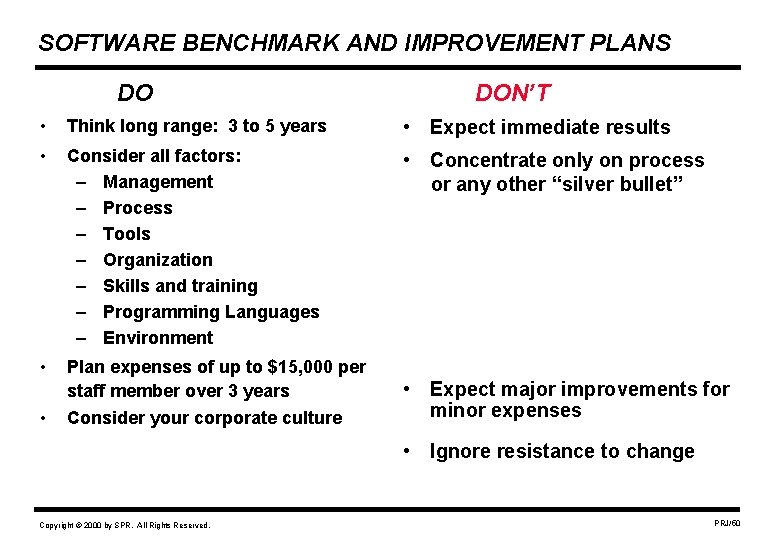

SOFTWARE BENCHMARK AND IMPROVEMENT PLANS

DO:

- Think long range: 3 to 5 years
- Consider all factors:
  - Management
  - Process
  - Tools
  - Organization
  - Skills and training
  - Programming languages
  - Environment
- Plan expenses of up to $15,000 per staff member over 3 years
- Consider your corporate culture

DON’T:

- Expect immediate results
- Concentrate only on process or any other “silver bullet”
- Expect major improvements for minor expenses
- Ignore resistance to change

Software Benchmark Information Sources

- Capers Jones, Software Assessments, Benchmarks, and Best Practices. Addison Wesley Longman, 2000.
- David Herron and David Garmus, Measuring the Software Process: A Guide to Functional Measurement. Prentice Hall, 1995.
- Dr. Brian Dreger, Function Point Analysis. Prentice Hall, 1989.
- http://www.IFPUG.org (International Function Point Users Group)
- http://www.SPR.com (Software Productivity Research web site)
