
Software attacks: .NET security

The problem
• Buffer overflow: a simple but very common bug
• It can allow a determined attacker to take control of the program
• Components: building an application from third-party DLLs makes the problem even worse:
  – the attacker has plenty of time to discover the exploitable gaps in each DLL in use
  – a bogus or malicious component could be used

What we want
A user who runs a process built from components wants to be sure that he is protected from malicious software.
=> Prevent buffer overflows by verifying managed code
=> Prevent the execution of malicious software: Code Access Security (CAS)
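Managed code gets this protection because every array access is bounds-checked at run time (an out-of-range index throws IndexOutOfRangeException instead of overwriting memory). A rough C++ analogue, as a minimal sketch: the hypothetical helper `checked_copy` below refuses to write past the end of its destination, where a `strcpy`-style loop would silently overflow. It illustrates the idea only; it is not how the CLR implements its checks.

```cpp
#include <cassert>
#include <cstddef>
#include <stdexcept>
#include <string>
#include <vector>

// Hypothetical helper: copy `src` into a fixed-size buffer, checking bounds
// the way managed array accesses are checked, instead of trusting the caller.
void checked_copy(std::vector<char>& dest, const std::string& src) {
    if (src.size() + 1 > dest.size()) {  // +1 for the terminating '\0'
        throw std::out_of_range("source does not fit in destination buffer");
    }
    for (std::size_t i = 0; i < src.size(); ++i) {
        dest.at(i) = src[i];             // at() is itself bounds-checked
    }
    dest.at(src.size()) = '\0';
}
```

An oversized input raises an exception before a single byte lands outside the buffer, which is the behavior the verifier-plus-runtime combination guarantees for managed code.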

.NET
• CLR: Common Language Runtime
• CTS: Common Type System
• IL: Intermediate Language
• Metadata: self-describing information about classes
• Assembly: unit of code
• Manifest: description of the assembly

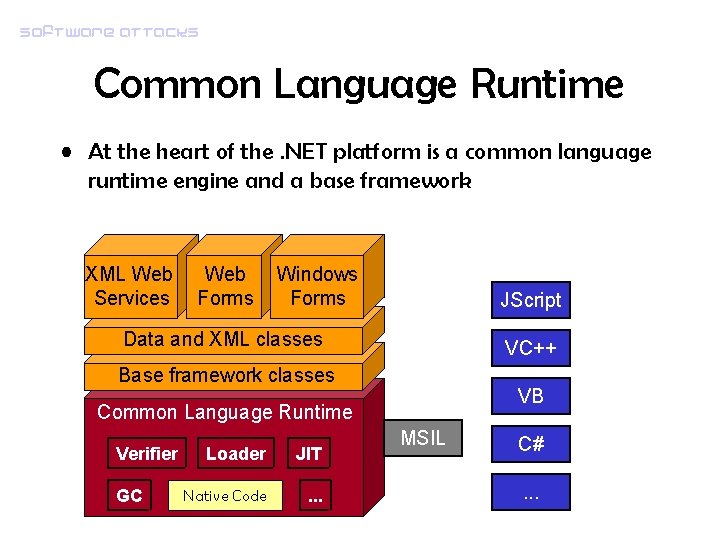

Common Language Runtime
• At the heart of the .NET platform are the Common Language Runtime (CLR) engine and a base framework
[Figure: the .NET platform. Source languages (C#, VB, VC++, JScript, ...) compile to MSIL; framework layers: base framework classes, Data and XML classes, Web Forms, Windows Forms, XML Web Services; the CLR contains the loader, verifier, JIT compiler and garbage collector (GC), and produces native code.]

MSIL
• No .NET language is compiled to machine code targeting a specific CPU. Instead, the compiler generates Microsoft Intermediate Language (MSIL)
• MSIL is much higher level than most CPU machine languages:
  – it understands object types
  – it has instructions that create and initialize objects
  – it calls virtual methods on objects
  – it manipulates array elements directly
  – it has instructions that raise and catch exceptions

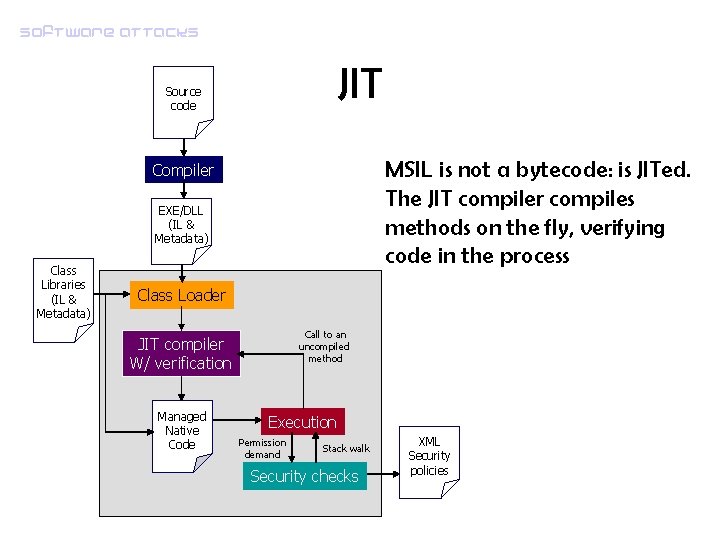

JIT
• MSIL is not interpreted like a bytecode: it is JIT-compiled. The JIT compiler compiles methods on the fly, verifying the code in the process
[Figure: source code → compiler → EXE/DLL (IL & metadata); the class loader loads it together with the class libraries (IL & metadata); a call to an uncompiled method invokes the JIT compiler with verification, which produces managed native code; during execution, a permission demand triggers a stack walk and security checks against the XML security policies.]

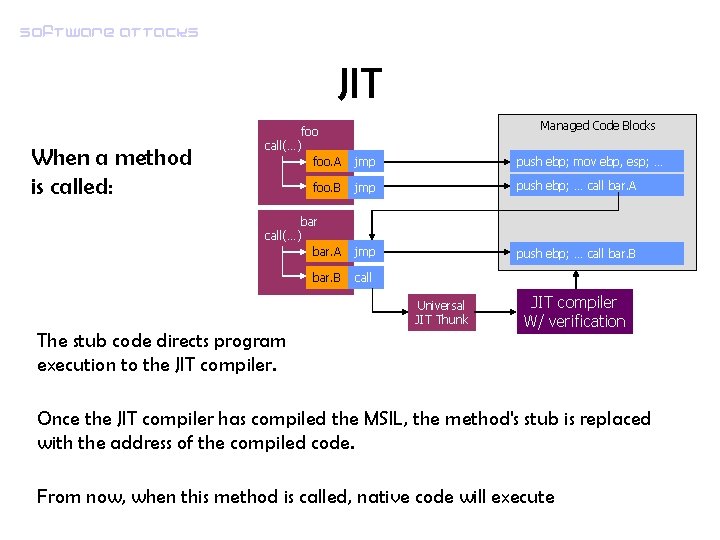

JIT
When a method is called:
[Figure: managed code blocks for foo and bar; each method entry (foo.A, foo.B, bar.A, bar.B) is reached through a jmp stub; compiled methods contain native code (push ebp; mov ebp, esp; …), while calls to uncompiled methods go through the universal JIT thunk.]
• The stub code directs program execution to the JIT compiler (with verification)
• Once the JIT compiler has compiled the MSIL, the method's stub is replaced with the address of the compiled code. From then on, when this method is called, native code executes directly
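The stub back-patching described above can be modeled in a few lines. This is a toy sketch, assuming one method slot per method and a plain function pointer in place of the real stub and thunk machinery; `foo_stub`, `foo_native`, `call_foo` and `jit_count` are hypothetical names, and no actual compilation or verification happens.

```cpp
#include <cassert>

using NativeCode = int (*)(int);

// One slot per method: call sites always jump through `entry`.
struct MethodSlot {
    NativeCode entry;
};

int foo_stub(int x);
int foo_native(int x);

MethodSlot foo_slot{foo_stub};  // before the first call: points at the stub
int jit_count = 0;              // how many times the "JIT" ran

// Stand-in for the native code the JIT would emit for foo.
int foo_native(int x) { return x * 2; }

int foo_stub(int x) {
    ++jit_count;                  // verification + compilation would happen here
    foo_slot.entry = foo_native;  // back-patch the slot with the compiled code
    return foo_slot.entry(x);     // finish the original call
}

// What a call site does: an indirect call through the slot.
int call_foo(int x) { return foo_slot.entry(x); }
```

The first call to `call_foo` goes through the stub and patches the slot; every later call jumps straight to `foo_native`.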

Metadata
• The Microsoft .NET platform uses metadata and assemblies to store information about components, enabling:
  – cross-language programming
  – locating and loading class types in the file
  – resolving method calls and field references
  – solving the infamous DLL Hell problem (side-by-side, SxS, execution)
  – easy linking and loading of assemblies
  – easy versioning
  – reflection

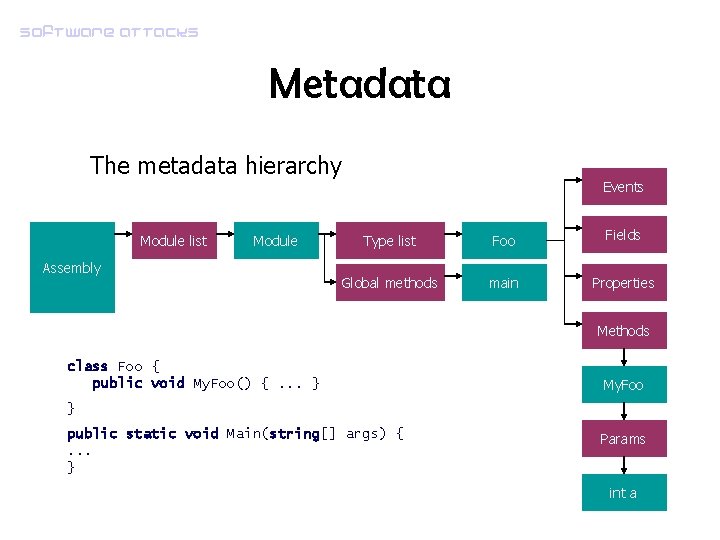

Metadata
The metadata hierarchy
[Figure: an assembly contains a module list; each module has a type list (e.g. Foo) and global methods; each type has fields, properties, methods (e.g. MyFoo) and events; each method has params (e.g. int a).]

    class Foo {
        public void MyFoo() { ... }
        public static void Main(string[] args) { ... }
    }

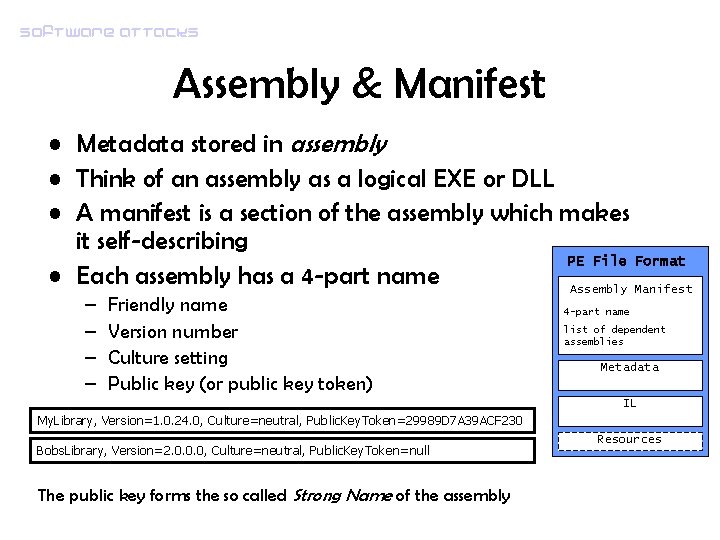

Assembly & Manifest
• Metadata is stored in the assembly
• Think of an assembly as a logical EXE or DLL
• A manifest is a section of the assembly that makes it self-describing
• Each assembly has a 4-part name:
  – Friendly name
  – Version number
  – Culture setting
  – Public key (or public key token)
• Examples:

    MyLibrary, Version=1.0.24.0, Culture=neutral, PublicKeyToken=29989D7A39ACF230
    BobsLibrary, Version=2.0.0.0, Culture=neutral, PublicKeyToken=null

• The public key (together with a digital signature) is what makes the name a so-called Strong Name
[Figure: an assembly in PE file format: assembly manifest (4-part name, list of dependent assemblies), metadata, IL, resources.]

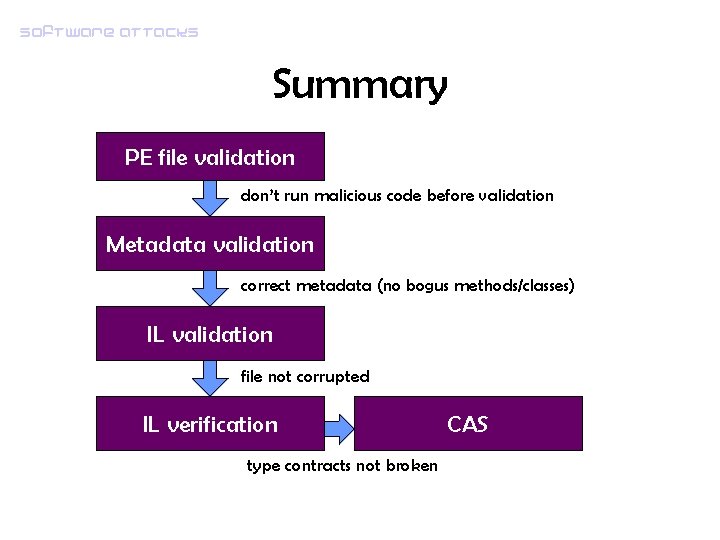
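To make the 4-part name concrete, here is a minimal sketch that splits a display name like the ones above into its parts. It assumes the simple comma-separated form shown on the slide (no quoting or escaping, which real assembly names allow), and `AssemblyName`/`parse_assembly_name` are illustrative names, not the .NET `System.Reflection.AssemblyName` API.

```cpp
#include <cassert>
#include <map>
#include <sstream>
#include <string>

// Friendly name plus the key=value parts (Version, Culture, PublicKeyToken).
struct AssemblyName {
    std::string friendly_name;
    std::map<std::string, std::string> parts;
    bool is_strong_named() const {
        auto it = parts.find("PublicKeyToken");
        return it != parts.end() && it->second != "null";
    }
};

static std::string trim(const std::string& s) {
    auto b = s.find_first_not_of(' ');
    auto e = s.find_last_not_of(' ');
    return b == std::string::npos ? "" : s.substr(b, e - b + 1);
}

// Only handles "Name, Key=Value, Key=Value, ..." with no quoting.
AssemblyName parse_assembly_name(const std::string& display) {
    AssemblyName n;
    std::stringstream ss(display);
    std::string field;
    bool first = true;
    while (std::getline(ss, field, ',')) {
        field = trim(field);
        if (first) { n.friendly_name = field; first = false; continue; }
        auto eq = field.find('=');
        if (eq != std::string::npos)
            n.parts[trim(field.substr(0, eq))] = trim(field.substr(eq + 1));
    }
    return n;
}
```

With `PublicKeyToken=null`, as for BobsLibrary, the assembly carries no public key and therefore has no strong name.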

Phase I: Verification and validation
• Even before the CLR checks whether an assembly has the permission to access a resource, the assembly must pass a set of rigorous checks
• Each related set of checks is called a validation
• Three major validations are performed:
  – PE file format validation
  – Metadata validation
  – IL validation
• These checks ensure that later security checks on the assembly are meaningful

PE file format validation
• Assemblies are PE files
• They contain a special slot (pointing to a header with the relevant addresses) plus a single x86 instruction (for older systems)
  – cf. the Donut virus
• Time of check: right after CLR initialization
  – All relevant addresses (metadata location, IL location, etc.) must point inside the PE file
  – This ensures assembly isolation
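The "all relevant addresses point inside the PE file" check boils down to a range test per header entry. A minimal sketch, assuming each entry has been reduced to an (offset, size) pair; the `Region` type is hypothetical, and real PE parsing (RVAs, section mapping) is considerably more involved. Note the overflow-safe comparison: a naive `offset + size <= file_size` can wrap around and accept a bogus entry.

```cpp
#include <cassert>
#include <cstdint>
#include <vector>

// An address range taken from the header, e.g. the metadata or IL location.
struct Region {
    std::uint64_t offset;
    std::uint64_t size;
};

bool region_inside_file(const Region& r, std::uint64_t file_size) {
    // Equivalent to offset + size <= file_size, but cannot overflow.
    return r.offset <= file_size && r.size <= file_size - r.offset;
}

// Reject the file if any relevant address points outside it.
bool validate_pe_regions(const std::vector<Region>& regions, std::uint64_t file_size) {
    for (const Region& r : regions)
        if (!region_inside_file(r, file_size)) return false;
    return true;
}
```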

Metadata validation
• As we saw, metadata is a set of linked tables
• Metadata is used for many crucial functions (recall, for example, the loading of types):
  – Structural validation (the table structure must adhere to the format rules)
    – Ex: a field table pointing to a buffer (data section)
  – Semantic validation (CLR rules: type invariants, type relations)
    – Ex: if B is a subclass of A, B cannot also be a superclass of A
• These validations are done before the metadata is used (i.e. before IL validation)

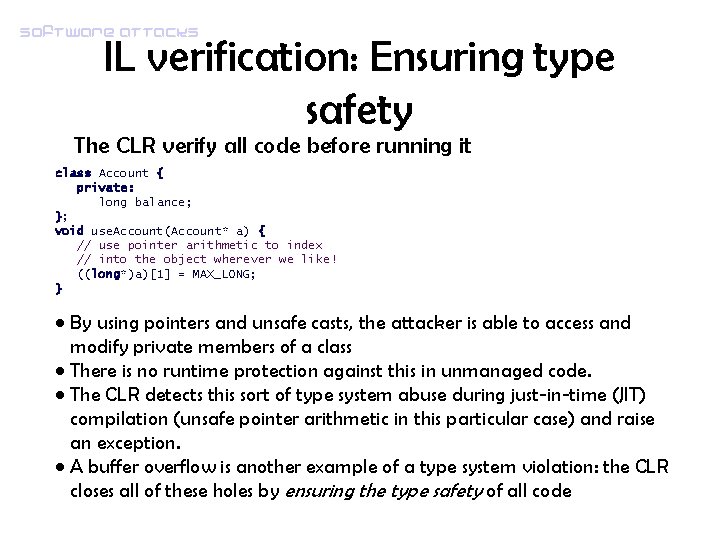
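The semantic rule in the example (if B derives from A, A cannot also derive from B) generalizes to "the inheritance chain must not loop". A minimal sketch, assuming the relevant metadata has already been reduced to a map from each type to its base type; the real check works on the TypeDef table, and the names here are illustrative.

```cpp
#include <cassert>
#include <map>
#include <set>
#include <string>

// type -> base type; a type missing from the map has no (checked) base.
using BaseTable = std::map<std::string, std::string>;

bool has_inheritance_cycle(const BaseTable& extends) {
    for (const auto& entry : extends) {
        std::set<std::string> seen;
        std::string t = entry.first;
        while (!t.empty()) {
            if (!seen.insert(t).second) return true;  // revisited a type: cycle
            auto it = extends.find(t);
            t = (it == extends.end()) ? "" : it->second;
        }
    }
    return false;
}
```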

IL validation & verification
• As we saw, IL is not interpreted but compiled method by method
• Before a method is compiled, its IL is validated
• Then it is verified, because:
  – native code has direct memory access and can subvert isolation
  – type contracts can be broken (C-style casts, access to private members, …)
• The JIT checks that the IL is not used in a way that could generate unsafe native code

IL validation
• All bytes in the IL stream form valid IL instructions
• All jumps land inside the same method
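The two IL validation rules can be sketched as a single pass over a method body. This is a simplified model, assuming instructions are already decoded and branch targets are instruction indices; real IL uses byte offsets (so a validator must also reject jumps into the middle of an instruction) and a much larger opcode table. The opcode list is a small illustrative subset.

```cpp
#include <cassert>
#include <set>
#include <string>
#include <vector>

struct Instr {
    std::string op;
    long operand = 0;  // for "br": index of the target instruction
};

bool validate_il(const std::vector<Instr>& body) {
    static const std::set<std::string> known = {"ldloc", "stloc", "ldc.i4", "add", "br", "ret"};
    for (const Instr& in : body) {
        if (known.count(in.op) == 0) return false;  // not a valid IL instruction
        if (in.op == "br" &&
            (in.operand < 0 || in.operand >= static_cast<long>(body.size())))
            return false;                           // jump leaves the method
    }
    return true;
}
```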

IL verification: Ensuring type safety
The CLR verifies all code before running it.

    #include <climits>

    class Account {
    private:
        long balance;
    };

    void useAccount(Account* a) {
        // use pointer arithmetic to index
        // into the object wherever we like!
        ((long*)a)[0] = LONG_MAX;  // overwrites the private 'balance'
    }

• By using pointers and unsafe casts, the attacker can access and modify private members of a class
• There is no runtime protection against this in unmanaged code
• The CLR detects this kind of type-system abuse during just-in-time (JIT) compilation (unsafe pointer arithmetic, in this case) and raises an exception
• A buffer overflow is another example of a type-system violation: the CLR closes all of these holes by ensuring the type safety of all code

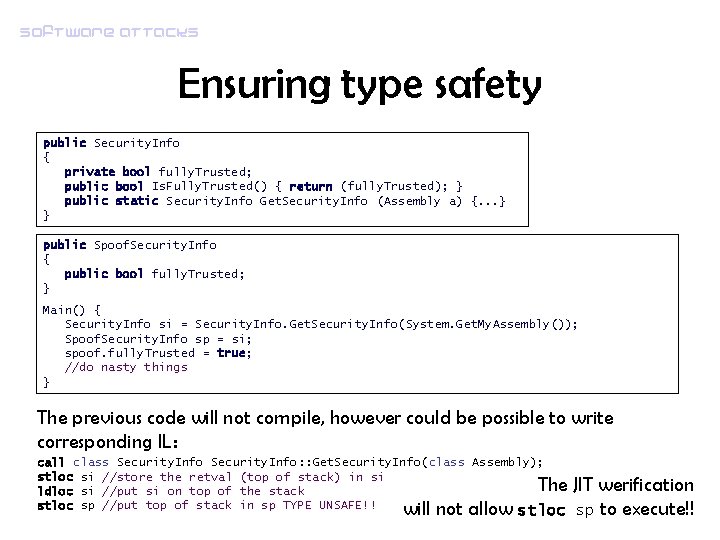

Ensuring type safety

    public class SecurityInfo {
        private bool fullyTrusted;
        public bool IsFullyTrusted() { return fullyTrusted; }
        public static SecurityInfo GetSecurityInfo(Assembly a) { ... }
    }

    public class SpoofSecurityInfo {
        public bool fullyTrusted;
    }

    Main() {
        SecurityInfo si = SecurityInfo.GetSecurityInfo(System.GetMyAssembly());
        SpoofSecurityInfo sp = si;   // rejected by the compiler
        sp.fullyTrusted = true;
        // do nasty things
    }

The previous code will not compile, but it is possible to write the corresponding IL:

    call class SecurityInfo::GetSecurityInfo(class Assembly)
    stloc si    // store the return value (top of stack) in si
    ldloc si    // push si on top of the stack
    stloc sp    // store the top of the stack in sp: TYPE UNSAFE!

The JIT verification will not allow stloc sp to execute!

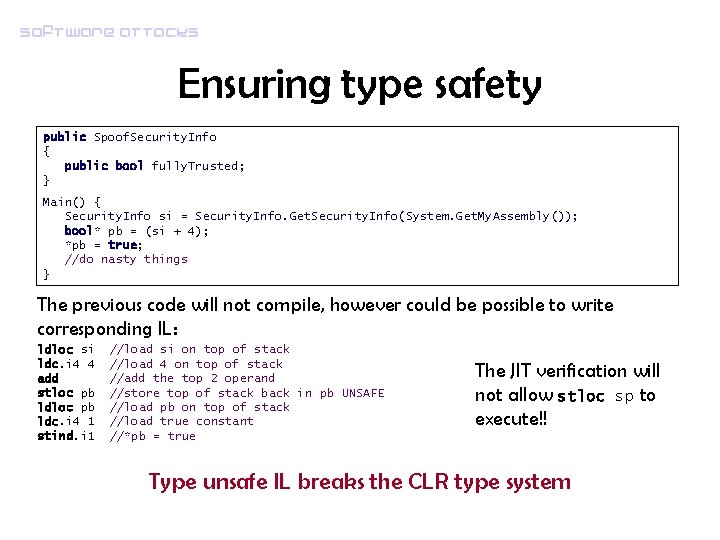

Ensuring type safety

    public class SpoofSecurityInfo {
        public bool fullyTrusted;
    }

    Main() {
        SecurityInfo si = SecurityInfo.GetSecurityInfo(System.GetMyAssembly());
        bool* pb = (si + 4);
        *pb = true;
        // do nasty things
    }

The previous code will not compile, but it is possible to write the corresponding IL:

    ldloc si     // load si on top of the stack
    ldc.i4 4     // load 4 on top of the stack
    add          // add the top two operands
    stloc pb     // store the top of the stack in pb: UNSAFE
    ldloc pb     // load pb on top of the stack
    ldc.i4 1     // load the constant true
    stind.i1     // *pb = true

The JIT verification will not allow stloc pb to execute! Type-unsafe IL breaks the CLR type system.

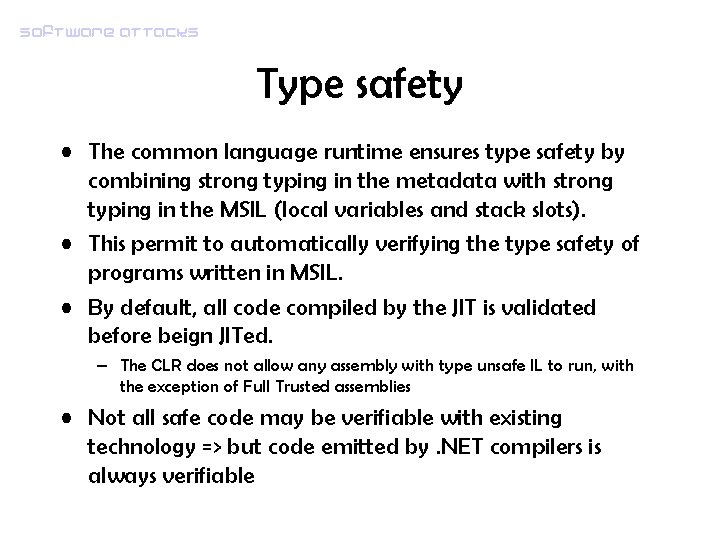

Type safety
• The common language runtime ensures type safety by combining strong typing in the metadata with strong typing in the MSIL (local variables and stack slots)
• This makes it possible to verify automatically the type safety of programs written in MSIL
• By default, all code is verified before being JIT-compiled
  – The CLR does not allow any assembly with type-unsafe IL to run, except fully trusted assemblies
• Not all type-safe code is verifiable with existing technology, but the code emitted by .NET compilers (e.g. C# without unsafe blocks) is verifiable
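The idea of typing locals and stack slots can be shown with a toy verifier. It is a minimal sketch, assuming a hypothetical instruction set (`push` stands for any instruction that leaves a value of a known type on the stack, such as the `call` in the earlier example) and a hand-written base-type map; it tracks only static types, never values, and rejects the `stloc sp` from the SecurityInfo/SpoofSecurityInfo example.

```cpp
#include <cassert>
#include <map>
#include <string>
#include <vector>

struct Op { std::string code; std::string arg; };
using TypeMap = std::map<std::string, std::string>;

// `from` is assignable to `to` if `to` appears on from's base-type chain.
bool assignable(const std::string& from, const std::string& to, const TypeMap& base_of) {
    for (std::string t = from; !t.empty();) {
        if (t == to) return true;
        auto it = base_of.find(t);
        t = (it == base_of.end()) ? "" : it->second;
    }
    return false;
}

bool verify(const std::vector<Op>& body, const TypeMap& local_types, const TypeMap& base_of) {
    std::vector<std::string> stack;  // static types of the stack slots
    for (const Op& op : body) {
        if (op.code == "push") {
            stack.push_back(op.arg);
        } else if (op.code == "ldloc") {
            stack.push_back(local_types.at(op.arg));
        } else if (op.code == "stloc") {
            if (stack.empty()) return false;  // stack underflow
            if (!assignable(stack.back(), local_types.at(op.arg), base_of))
                return false;                 // type-unsafe store
            stack.pop_back();
        } else {
            return false;                     // unknown instruction
        }
    }
    return true;
}
```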

Summary
• PE file validation: don't run malicious code before validation
• Metadata validation: correct metadata (no bogus methods/classes)
• IL validation: file not corrupted
• IL verification: type contracts not broken
• Next: CAS

Security models
• Traditional OS security provides isolation and access control based on user accounts
• This is useful for organizations, but assumes that all code run by a user is equally trustworthy
• This assumption is broken by the increasing reliance on mobile code (Web services and components, e-mail attachments, …)
• There is a need for finer-grained control of application behavior

User-based security model
• Programs have the same rights as the user who executes them, so they can do everything the user can do
  – Often users don't even know what a program can do!
• Too many users run as administrator, and too many programs require admin rights to install and run
  – Even when a program runs with standard rights, the same concept holds!

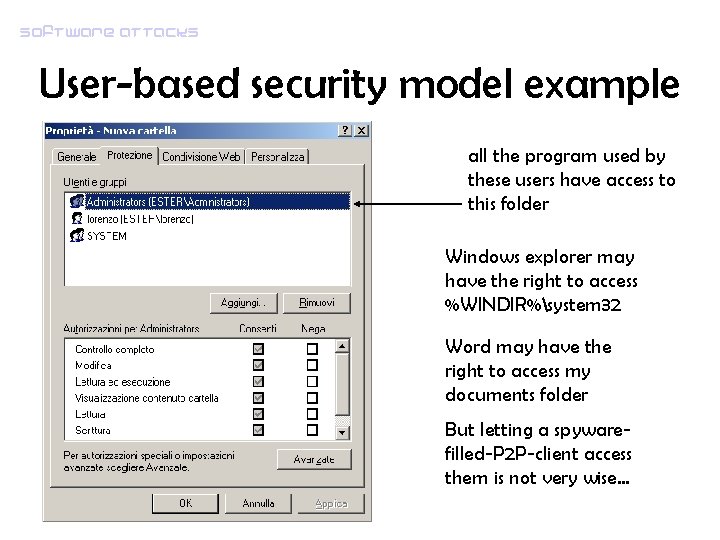

User-based security model example
[Figure: all the programs used by these users have access to this folder.]
• Windows Explorer may legitimately need access to %WINDIR%\system32
• Word may legitimately need access to the My Documents folder
• But letting a spyware-filled P2P client access them is not very wise…

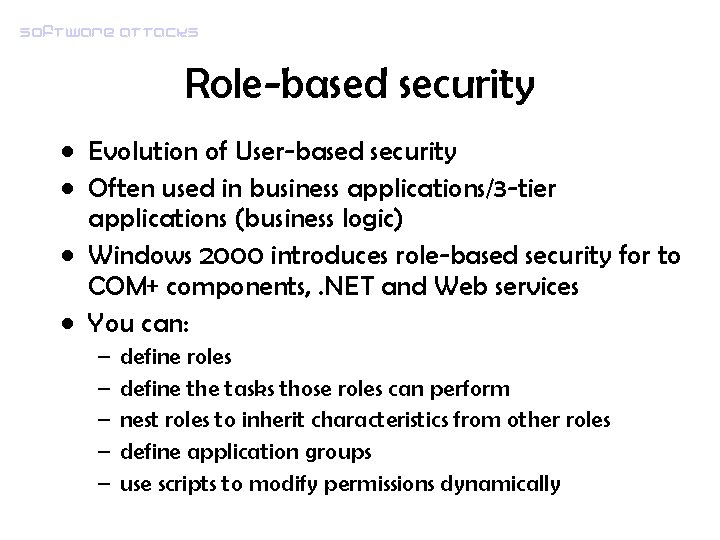

Role-based security
• An evolution of user-based security
• Often used in business applications / 3-tier applications (business logic)
• Windows 2000 introduced role-based security for COM+ components; it is also available for .NET and Web services
• You can:
  – define roles
  – define the tasks those roles can perform
  – nest roles to inherit characteristics from other roles
  – define application groups
  – use scripts to modify permissions dynamically

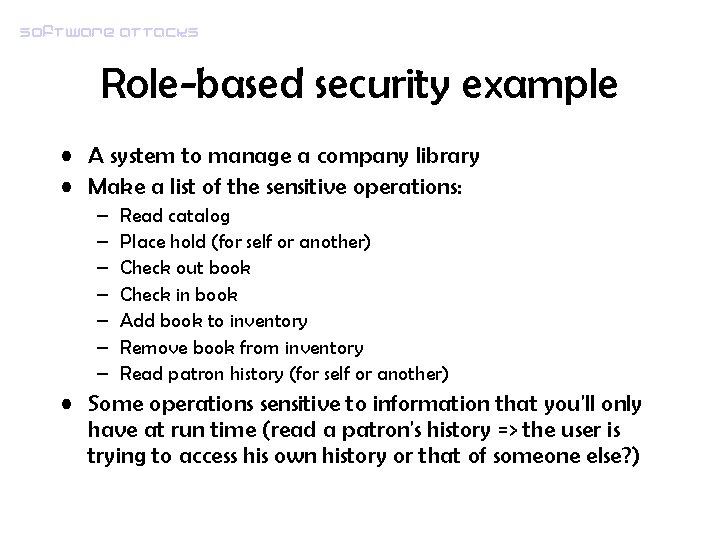

Role-based security example
• A system to manage a company library
• Make a list of the sensitive operations:
  – Read catalog
  – Place hold (for self or another)
  – Check out book
  – Check in book
  – Add book to inventory
  – Remove book from inventory
  – Read patron history (for self or another)
• Some operations depend on information that is only available at run time (reading a patron's history: is the user trying to access his own history or someone else's?)

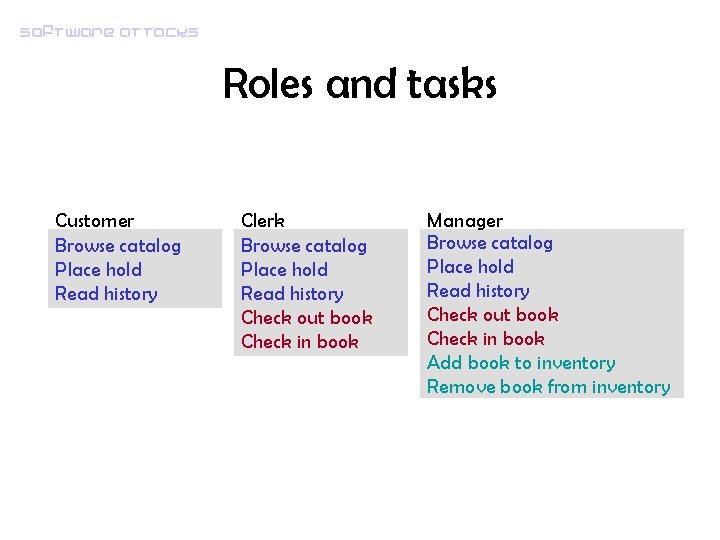

Roles and tasks
• Customer: Browse catalog, Place hold, Read history
• Clerk: Browse catalog, Place hold, Read history, Check out book, Check in book
• Manager: Browse catalog, Place hold, Read history, Check out book, Check in book, Add book to inventory, Remove book from inventory
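The table above, with Clerk including Customer's tasks and Manager including Clerk's, is exactly what role nesting expresses. A minimal sketch of such a check, assuming a hand-written role table rather than any COM+ or .NET role API; `can_perform` is a hypothetical helper that walks up the chain of parent roles.

```cpp
#include <cassert>
#include <map>
#include <set>
#include <string>

struct Role {
    std::string parent;               // "" if the role inherits nothing
    std::set<std::string> own_tasks;  // tasks added at this level
};

// Nested roles: each role lists only the tasks it adds to its parent.
const std::map<std::string, Role> kRoles = {
    {"Customer", {"", {"Browse catalog", "Place hold", "Read history"}}},
    {"Clerk",    {"Customer", {"Check out book", "Check in book"}}},
    {"Manager",  {"Clerk", {"Add book to inventory", "Remove book from inventory"}}},
};

bool can_perform(const std::string& role, const std::string& task) {
    for (std::string r = role; !r.empty(); r = kRoles.at(r).parent) {
        if (kRoles.at(r).own_tasks.count(task)) return true;
    }
    return false;
}
```

Checks that depend on run-time data, such as whether a patron is reading his own history, would need an extra argument beyond the role and task.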

Code-based security model
• Authenticate code, not the user running it => code identity
• Authorize code, not the user => security policies and permission sets
• Enforce code authorization => CAS

Evidence
• Code identity is based on evidence
• Using evidence, the security policy can control the privileges granted to an application
• The standard types of evidence include:
  – ApplicationDirectory: from which directory was this assembly executed?
  – Site: from which site was this assembly downloaded?
  – URL: what is the URL of origin of this assembly?
  – Zone: from which zone was this assembly obtained?
  – Hash: what is the hash of this assembly?
  – StrongName: what is the strong name of this assembly?
  – Publisher: who signed this assembly?

Code identity
• There are only two ways to execute code:
  – through the class loader
  – through the interoperability services
• Both are services provided by the CLR and are part of the security perimeter
• The class loader keeps track of the source of every class it loads
• The class loader provides some of the evidence on which code identity is based

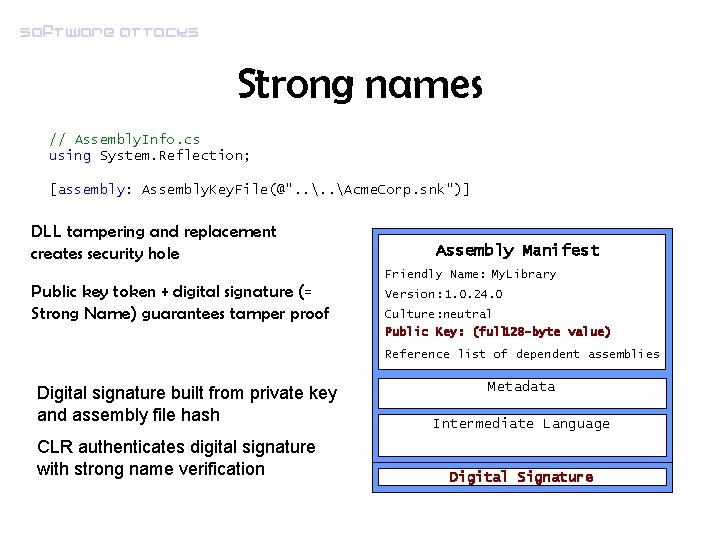

Strong names

    // AssemblyInfo.cs
    using System.Reflection;
    [assembly: AssemblyKeyFile(@"..\AcmeCorp.snk")]

• DLL tampering and replacement create a security hole
• The public key plus a digital signature (= Strong Name) guarantee that tampering is detected
• The digital signature is built from the private key and a hash of the assembly file
• The CLR authenticates the digital signature with strong-name verification
[Figure: assembly layout: manifest (friendly name: MyLibrary; version: 1.0.24.0; culture: neutral; public key: full 128-byte value; reference list of dependent assemblies), metadata, Intermediate Language, digital signature.]

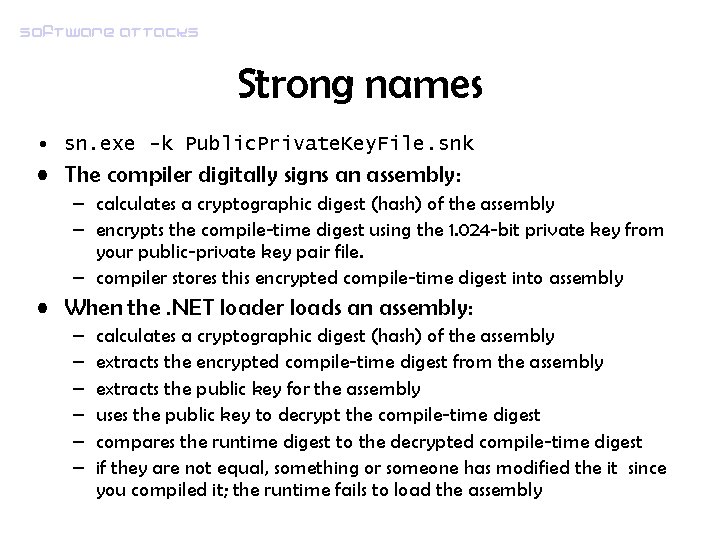

Strong names
• Generate a key pair: sn.exe -k PublicPrivateKeyFile.snk
• The compiler digitally signs an assembly:
  – it calculates a cryptographic digest (hash) of the assembly
  – it encrypts the compile-time digest using the 1024-bit private key from your public-private key pair file
  – it stores this encrypted compile-time digest in the assembly
• When the .NET loader loads an assembly, it:
  – calculates a cryptographic digest (hash) of the assembly
  – extracts the encrypted compile-time digest from the assembly
  – extracts the public key of the assembly
  – uses the public key to decrypt the compile-time digest
  – compares the runtime digest to the decrypted compile-time digest
  – if they are not equal, something or someone has modified the assembly since it was compiled, and the runtime refuses to load it
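The sign/verify sequence above can be traced with a deliberately tiny example. This sketch is insecure by design: FNV-1a stands in for the cryptographic hash and textbook RSA with the classic toy key (n = 3233, e = 17, d = 2753) stands in for the 1024-bit key pair. Only the order of operations mirrors what the compiler and loader do; none of the names are part of sn.exe or the CLR.

```cpp
#include <cassert>
#include <cstdint>
#include <string>

constexpr std::uint64_t kN = 3233;  // p * q with p = 61, q = 53
constexpr std::uint64_t kE = 17;    // public exponent
constexpr std::uint64_t kD = 2753;  // private exponent: e * d = 1 mod (p-1)(q-1)

// Stand-in for the cryptographic digest of the assembly.
std::uint64_t fnv1a(const std::string& bytes) {
    std::uint64_t h = 14695981039346656037ull;
    for (unsigned char c : bytes) { h ^= c; h *= 1099511628211ull; }
    return h;
}

std::uint64_t mod_pow(std::uint64_t base, std::uint64_t exp, std::uint64_t mod) {
    std::uint64_t result = 1;
    base %= mod;
    while (exp > 0) {
        if (exp & 1) result = result * base % mod;
        base = base * base % mod;
        exp >>= 1;
    }
    return result;
}

// "Compiler": hash the assembly, encrypt the digest with the private key.
std::uint64_t sign(const std::string& assembly) {
    return mod_pow(fnv1a(assembly) % kN, kD, kN);
}

// "Loader": recompute the digest, decrypt the stored one with the public
// key, and refuse to load if they differ.
bool verify(const std::string& assembly, std::uint64_t signature) {
    return mod_pow(signature, kE, kN) == fnv1a(assembly) % kN;
}
```

A tampered signature always fails here, because toy RSA still maps distinct signatures to distinct digests; with a 12-bit modulus, though, tampered content could collide by chance, which is exactly why real strong names use large keys and real hashes.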

Strong names
• Strong names during the development cycle:
  – Build the assembly with delay signing enabled
  – Enable strong-name verification skipping for the assembly (sn.exe -Vr)
  – Debug and test the assembly
  – Obfuscate the assembly
  – Debug and test the obfuscated version
  – Re-sign the delay-signed assembly with the private key (sn.exe -R)

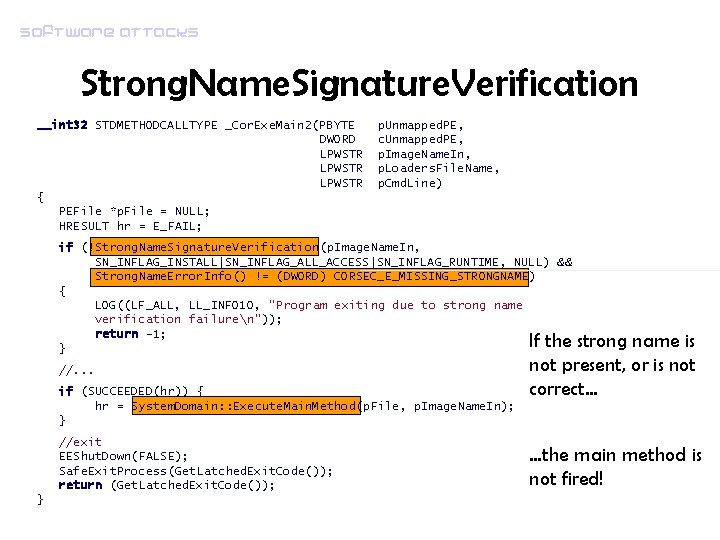

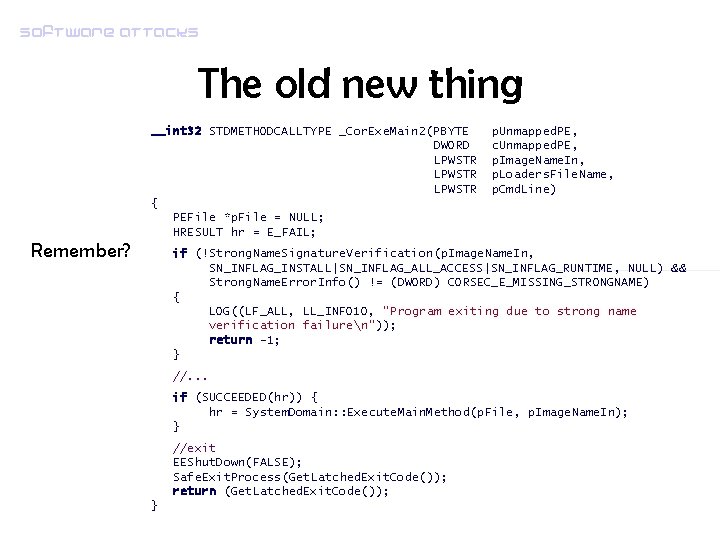

StrongNameSignatureVerification

    __int32 STDMETHODCALLTYPE _CorExeMain2(
        PBYTE   pUnmappedPE,
        DWORD   cUnmappedPE,
        LPWSTR  pImageNameIn,
        LPWSTR  pLoadersFileName,
        LPWSTR  pCmdLine)
    {
        PEFile *pFile = NULL;
        HRESULT hr = E_FAIL;

        // Exit if a strong name is present but fails verification
        if (!StrongNameSignatureVerification(pImageNameIn,
                SN_INFLAG_INSTALL | SN_INFLAG_ALL_ACCESS | SN_INFLAG_RUNTIME,
                NULL) &&
            StrongNameErrorInfo() != (DWORD) CORSEC_E_MISSING_STRONGNAME)
        {
            LOG((LF_ALL, LL_INFO10, "Program exiting due to strong name verification failure\n"));
            return -1;
        }
        // ...
        if (SUCCEEDED(hr)) {
            hr = SystemDomain::ExecuteMainMethod(pFile, pImageNameIn);
        }
        // exit
        EEShutDown(FALSE);
        SafeExitProcess(GetLatchedExitCode());
        return (GetLatchedExitCode());
    }

If the strong name is present but not correct, the main method is never executed! (An assembly with no strong name at all, CORSEC_E_MISSING_STRONGNAME, is allowed to continue.)

Authorization & Enforcement
• The two are tightly coupled
• Security decisions can be:
  – coded in software (dynamic, hard-coded)
  – declared in assemblies (declarative security)
  – inserted in the security policy (static, configurable)

Setting permissions
• The CLR discovers permissions by gathering evidence from the assembly and passing it to the security policy manager, which converts it into permissions
• However, we may often want to restrict the set of permissions at run time. Let's see an example.

Runtime security request
• Imagine a highly trusted assembly calling a user-provided script. The assembly invoking the script might want to restrict the effective permissions before making the call
• This calls for dynamic permission-set management:
  – PermissionSet
  – Demand
  – Deny and RevertDeny, PermitOnly and RevertPermitOnly
  – Assert

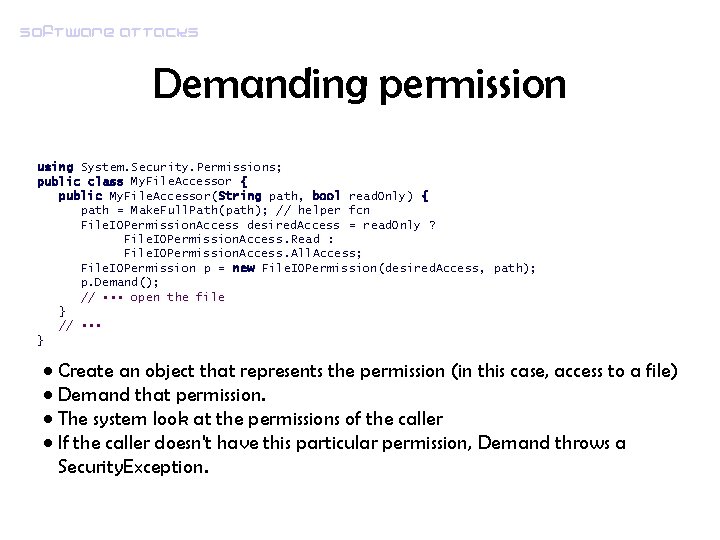

Demanding permission

    using System;
    using System.Security.Permissions;

    public class MyFileAccessor {
        public MyFileAccessor(String path, bool readOnly) {
            path = MakeFullPath(path); // helper function (not shown)
            FileIOPermissionAccess desiredAccess = readOnly
                ? FileIOPermissionAccess.Read
                : FileIOPermissionAccess.AllAccess;
            FileIOPermission p = new FileIOPermission(desiredAccess, path);
            p.Demand();
            // ... open the file
        }
        // ...
    }

• Create an object that represents the permission (in this case, access to a file)
• Demand that permission
• The system checks the permissions of the callers
• If a caller doesn't have this particular permission, Demand throws a SecurityException

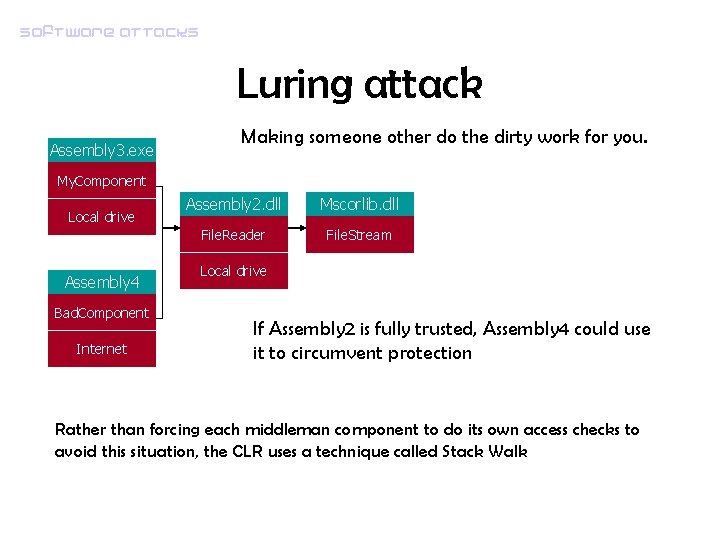

Luring attack
Making someone else do the dirty work for you.
[Figure: Assembly3.exe (MyComponent, from the local drive) and Assembly4 (BadComponent, from the Internet) both call Assembly2.dll (FileReader, from the local drive), which uses FileStream in mscorlib.dll.]
• If Assembly2 is fully trusted, Assembly4 could use it to circumvent protection
• Rather than forcing each middleman component to do its own access checks to avoid this situation, the CLR uses a technique called stack walk

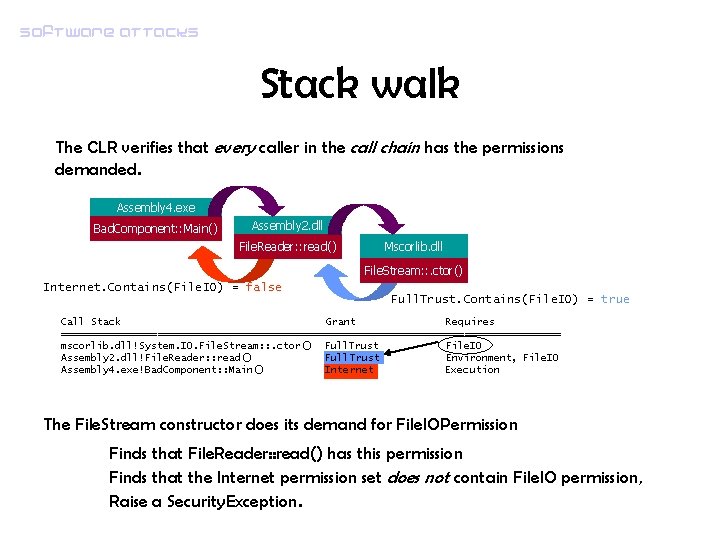

Stack walk
The CLR verifies that every caller in the call chain has the demanded permissions.
Call chain: Assembly4.exe (BadComponent::Main()) → Assembly2.dll (FileReader::read()) → mscorlib.dll (FileStream::.ctor())

    Call stack                                   Grant       Requires
    ==================================================================
    mscorlib.dll!System.IO.FileStream::.ctor()   FullTrust   FileIO
    Assembly2.dll!FileReader::read()             FullTrust   Environment, FileIO
    Assembly4.exe!BadComponent::Main()           Internet    Execution

• The FileStream constructor demands FileIOPermission
• The walk finds that FileReader::read() has this permission (FullTrust.Contains(FileIO) = true)
• It then finds that the Internet permission set does not contain FileIO permission (Internet.Contains(FileIO) = false) and raises a SecurityException

Stack walk
When the local component Assembly3.exe uses FileReader to read a file, it is granted access because all the assemblies in the call chain are local. However, when BadComponent attempts to use FileReader, FileStream calls Demand, and the CLR walks up the stack and discovers that one of the callers in the call chain does not have the required permission: Demand throws a SecurityException.

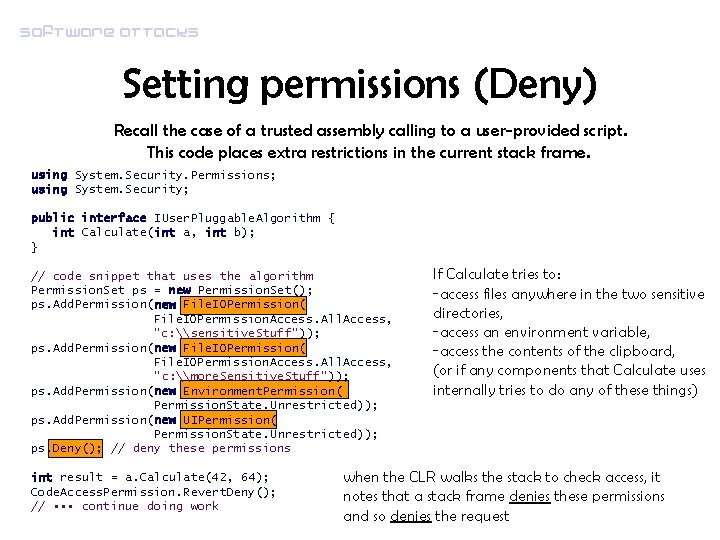
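The stack walk can be modeled as a loop over the callers of the demanding method. This is a toy sketch, assuming permissions are plain names (real FileIOPermission objects are path-scoped) and that each frame carries its assembly's grant set; `Frame`, `demand`, `kFullTrust` and `kInternet` are hypothetical names modeled on the call-stack table of the luring attack.

```cpp
#include <cassert>
#include <set>
#include <string>
#include <vector>

// Simplified grant sets (names only, no paths or access flags).
const std::set<std::string> kFullTrust = {"Environment", "Execution", "FileIO", "UI"};
const std::set<std::string> kInternet = {"Execution"};

struct Frame {
    std::string method;
    std::set<std::string> granted;  // grant set of the frame's assembly
};

// `callers` lists the callers of the demanding method, innermost first.
// The demand succeeds only if every one of them holds the permission.
bool demand(const std::vector<Frame>& callers, const std::string& permission) {
    for (const Frame& f : callers) {
        if (f.granted.count(permission) == 0) return false;  // SecurityException
    }
    return true;
}
```

The fully trusted FileReader in the middle does not help BadComponent: the walk keeps going until it reaches the Internet frame.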

Setting permissions (Deny)
Recall the case of a trusted assembly calling a user-provided script. This code places extra restrictions on the current stack frame:

    using System.Security;
    using System.Security.Permissions;

    public interface IUserPluggableAlgorithm {
        int Calculate(int a, int b);
    }

    // code snippet that uses the algorithm
    // (a: the user-supplied IUserPluggableAlgorithm instance)
    PermissionSet ps = new PermissionSet(PermissionState.None);
    ps.AddPermission(new FileIOPermission(
        FileIOPermissionAccess.AllAccess, @"c:\sensitiveStuff"));
    ps.AddPermission(new FileIOPermission(
        FileIOPermissionAccess.AllAccess, @"c:\moreSensitiveStuff"));
    ps.AddPermission(new EnvironmentPermission(PermissionState.Unrestricted));
    ps.AddPermission(new UIPermission(PermissionState.Unrestricted));
    ps.Deny();                      // deny these permissions
    int result = a.Calculate(42, 64);
    CodeAccessPermission.RevertDeny();
    // ... continue doing work

If Calculate tries to:
  – access files anywhere in the two sensitive directories,
  – access an environment variable,
  – access the contents of the clipboard
(or if any component that Calculate uses internally tries to do any of these things), then when the CLR walks the stack to check access, it notes that a stack frame denies these permissions and so denies the request.

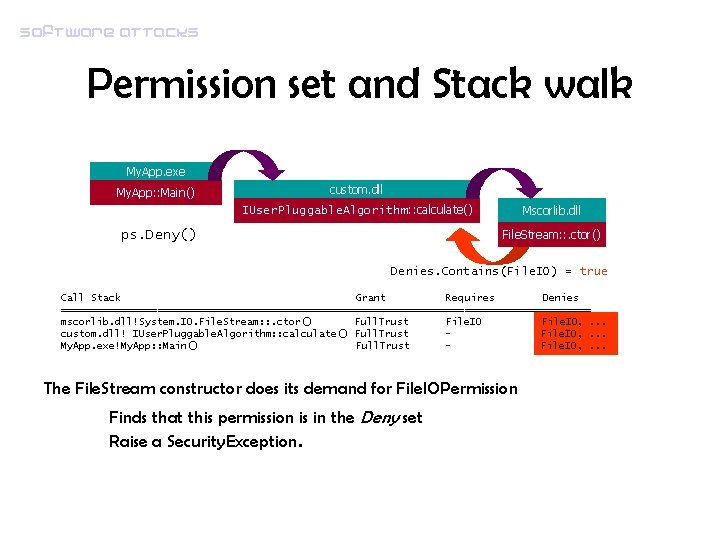

Permission set and stack walk
Call chain: MyApp.exe (MyApp::Main(), which calls ps.Deny()) → custom.dll (IUserPluggableAlgorithm::Calculate()) → mscorlib.dll (FileStream::.ctor())

    Call stack                                        Grant       Requires     Denies
    =================================================================================
    mscorlib.dll!System.IO.FileStream::.ctor()        FullTrust   FileIO, ...
    custom.dll!IUserPluggableAlgorithm::Calculate()   FullTrust   FileIO, ...
    MyApp.exe!MyApp::Main()                           FullTrust                FileIO, ...

• The FileStream constructor demands FileIOPermission
• The walk finds that this permission is in the Deny set (Denies.Contains(FileIO) = true)
• It raises a SecurityException

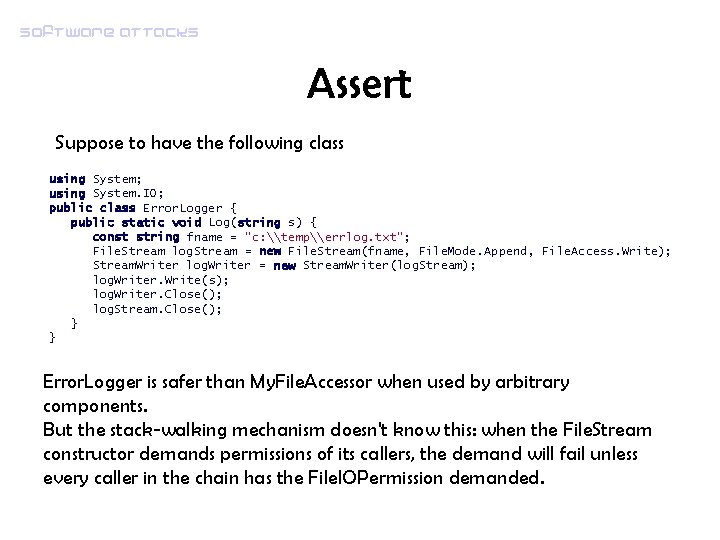
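Deny fits into the same kind of toy model as an extra set carried by the frame that called `Deny()`. A sketch, assuming plain permission names instead of path-scoped permissions; `Frame` and `demand` are hypothetical. The walk fails as soon as it meets a frame that denies the demanded permission, even though every frame here is fully trusted, and `RevertDeny()` corresponds to clearing that set.

```cpp
#include <cassert>
#include <set>
#include <string>
#include <vector>

struct Frame {
    std::string method;
    std::set<std::string> granted;
    std::set<std::string> denied;  // empty unless the frame called Deny()
};

// `callers` lists the callers of the demanding method, innermost first.
bool demand(const std::vector<Frame>& callers, const std::string& permission) {
    for (const Frame& f : callers) {
        if (f.denied.count(permission)) return false;    // denied on this frame
        if (!f.granted.count(permission)) return false;  // not granted at all
    }
    return true;
}
```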

Assert
Suppose we have the following class:

    using System;
    using System.IO;

    public class ErrorLogger {
        public static void Log(string s) {
            const string fname = @"c:\temp\errlog.txt";
            FileStream logStream = new FileStream(fname, FileMode.Append, FileAccess.Write);
            StreamWriter logWriter = new StreamWriter(logStream);
            logWriter.Write(s);
            logWriter.Close();
            logStream.Close();
        }
    }

ErrorLogger is safer than MyFileAccessor when used by arbitrary components, because it can only append to one fixed file. But the stack-walking mechanism doesn't know this: when the FileStream constructor demands permissions of its callers, the demand fails unless every caller in the chain has the demanded FileIOPermission.

Assert
• Suppose the ErrorLogger class is installed locally, and its assembly is granted full access to the file system by the code access security policy
• But this class was designed to provide a service to other assemblies, some of which aren't granted permission to write to the local file system!
• This obstacle can be removed by having ErrorLogger assert its own authority to write to the log file

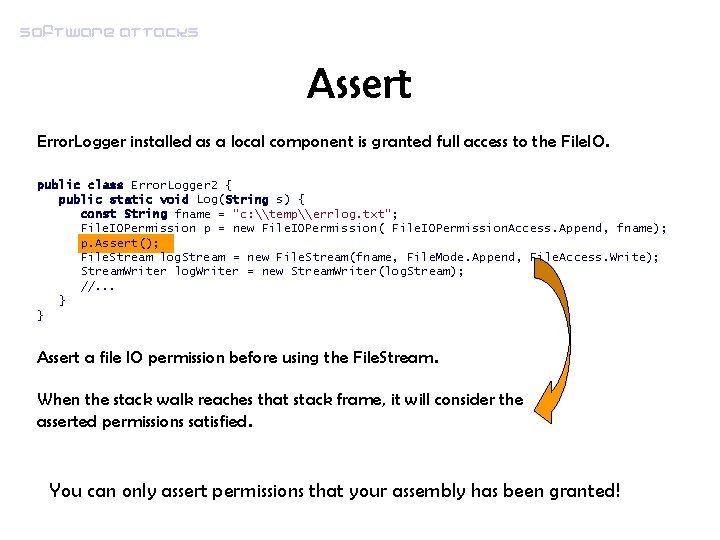

Assert
ErrorLogger, installed as a local component, is granted full FileIO access.

    public class ErrorLogger2 {
        public static void Log(String s) {
            const String fname = @"c:\temp\errlog.txt";
            FileIOPermission p = new FileIOPermission(
                FileIOPermissionAccess.Append, fname);
            p.Assert();
            FileStream logStream = new FileStream(fname, FileMode.Append, FileAccess.Write);
            StreamWriter logWriter = new StreamWriter(logStream);
            // ...
        }
    }

Assert a FileIO permission before using the FileStream. When the stack walk reaches that stack frame, it considers the asserted permissions satisfied. You can only assert permissions that your assembly has been granted!

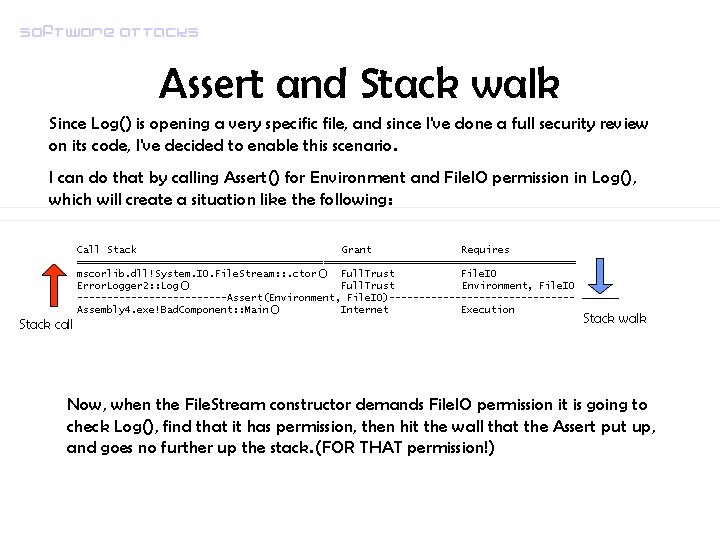

Assert and stack walk
Since Log() opens a very specific file, and since I've done a full security review of its code, I've decided to enable this scenario. I can do that by calling Assert() for the Environment and FileIO permissions in Log(), which creates the following situation:

    Call stack                                   Grant       Requires
    ==================================================================
    mscorlib.dll!System.IO.FileStream::.ctor()   FullTrust   FileIO
    ErrorLogger2::Log()                          FullTrust   Environment, FileIO
    ------------------ Assert(Environment, FileIO) ------------------
    Assembly4.exe!BadComponent::Main()           Internet    Execution

Now, when the FileStream constructor demands FileIO permission, the stack walk checks Log(), finds that it has the permission, then hits the wall that the Assert put up and goes no further up the stack (for that permission only!).
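Assert can be added to the same toy model: a frame that asserts a permission ends the walk successfully for that permission only, provided its own assembly was granted it. A sketch under the usual assumptions (plain permission names, hypothetical `Frame`/`demand`, callers listed innermost first).

```cpp
#include <cassert>
#include <set>
#include <string>
#include <vector>

struct Frame {
    std::string method;
    std::set<std::string> granted;
    std::set<std::string> asserted;  // empty unless the frame called Assert()
};

bool demand(const std::vector<Frame>& callers, const std::string& permission) {
    for (const Frame& f : callers) {
        if (!f.granted.count(permission)) return false;  // this frame lacks it
        if (f.asserted.count(permission)) return true;   // the wall: stop here
    }
    return true;
}
```

A demand for a permission that was not asserted still walks past `ErrorLogger2::Log()` and fails at BadComponent's frame, and a frame cannot assert its way past a permission it was never granted.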

Declarative security
• This powerful mechanism lets you insert code access security checks into your code by annotating assemblies, classes, or methods
• The declarations are encoded in the assembly metadata and enforced by the .NET security system

    [SecurityPermission(SecurityAction.Demand, UnmanagedCode = true)]
    void aMethod() { ... }

Security policy
• Permissions are assigned on a per-assembly basis by the class loader in the CLR. The steps are:
  – gather evidence
  – present the evidence to the security policy
  – discover the assigned permission set
• It is possible to fine-tune the permission set based on the assembly's requirements

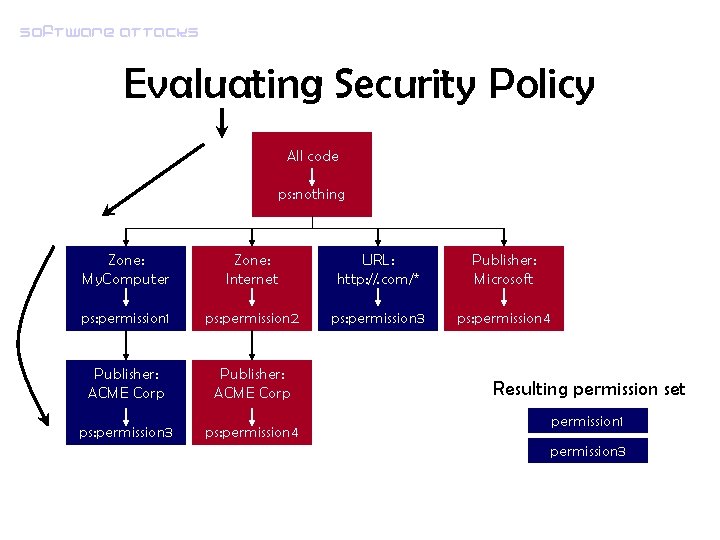

Evaluating security policy
• To discover the permission set assigned to an assembly, a graph (a tree of code groups) is traversed
• The root node is really just a starting point for the traversal:
  – it matches all code
  – by default it refers to the permission set named Nothing, which contains no permissions
• The traversal of the graph is governed by a couple of rules:
  – if a parent node doesn't match, none of its children are tested for matches
  – each node can also have the Exclusive attribute, which influences the traversal: if an Exclusive node matches, only the permission set of that node is used

Evaluating security policy
[Figure: example code-group tree rooted at All code (ps: Nothing), with membership conditions Zone: MyComputer, Zone: Internet, URL: http://.com/*, Publisher: Microsoft and Publisher: ACME Corp, each mapped to one of the permission sets permission 1 to permission 4. Resulting permission set for the example assembly: permission 1, permission 3.]

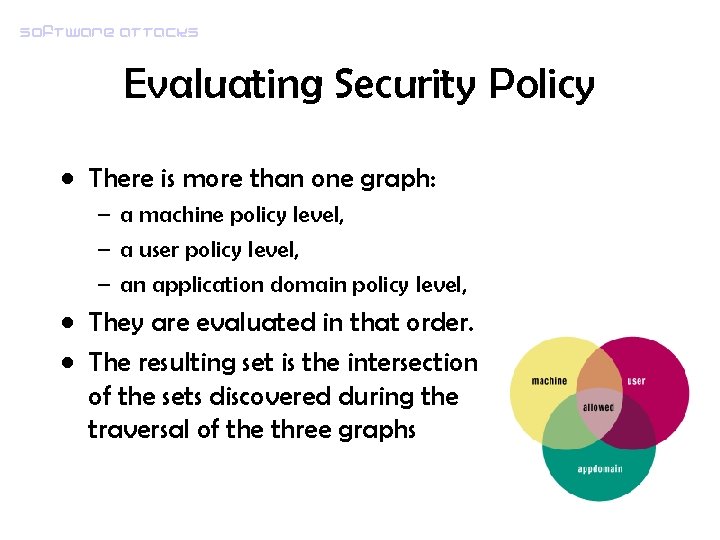
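The traversal rules can be sketched as a recursive walk over a tree of code groups. This assumes evidence is reduced to key/value strings and that, within one level, the permission sets of all matching groups are united unless a matching group is Exclusive. `example_policy()` builds a hypothetical tree in the spirit of the figure (its exact shape is an assumption), for which an assembly from MyComputer published by Microsoft gets permission 1 and permission 3. None of these names are the .NET policy API.

```cpp
#include <cassert>
#include <functional>
#include <map>
#include <set>
#include <string>
#include <vector>

using Evidence = std::map<std::string, std::string>;  // e.g. "Zone" -> "MyComputer"
using PermissionSet = std::set<std::string>;
using Condition = std::function<bool(const Evidence&)>;

struct CodeGroup {
    Condition matches;               // membership condition over the evidence
    PermissionSet permissions;       // granted if the condition matches
    bool exclusive = false;          // Exclusive attribute
    std::vector<CodeGroup> children; // tested only if this group matches
};

// Condition "evidence[key] == value".
Condition when(std::string key, std::string value) {
    return [key, value](const Evidence& ev) {
        auto it = ev.find(key);
        return it != ev.end() && it->second == value;
    };
}

static void collect(const CodeGroup& g, const Evidence& ev,
                    PermissionSet& out, const CodeGroup*& exclusive_hit) {
    if (!g.matches(ev)) return;  // parent does not match: skip the subtree
    if (g.exclusive && exclusive_hit == nullptr) exclusive_hit = &g;
    out.insert(g.permissions.begin(), g.permissions.end());
    for (const CodeGroup& child : g.children) collect(child, ev, out, exclusive_hit);
}

PermissionSet evaluate_level(const CodeGroup& root, const Evidence& ev) {
    PermissionSet united;
    const CodeGroup* exclusive_hit = nullptr;
    collect(root, ev, united, exclusive_hit);
    // A matching Exclusive group overrides everything else at this level.
    return exclusive_hit ? exclusive_hit->permissions : united;
}

// Hypothetical tree loosely modeled on the figure; the root ("All code")
// matches everything and grants nothing.
CodeGroup example_policy() {
    Condition all_code = [](const Evidence&) { return true; };
    return CodeGroup{all_code, {}, false, {
        CodeGroup{when("Zone", "MyComputer"), {"permission1"}, false, {
            CodeGroup{when("Publisher", "Microsoft"), {"permission3"}, false, {}},
        }},
        CodeGroup{when("Zone", "Internet"), {"permission2"}, false, {
            CodeGroup{when("Publisher", "ACME Corp"), {"permission4"}, false, {}},
        }},
    }};
}
```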

Evaluating security policy
• There is more than one graph:
  – a machine policy level
  – a user policy level
  – an application domain policy level
• They are evaluated in that order
• The resulting set is the intersection of the sets discovered during the traversal of the three graphs
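Combining the levels is a plain set intersection, which is why a level can only take permissions away, never add them. A minimal sketch, assuming each level has already been evaluated to a set of permission names; `intersect_levels` is a hypothetical helper.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <iterator>
#include <set>
#include <string>
#include <utility>
#include <vector>

using PermissionSet = std::set<std::string>;

// Final grant = intersection of the machine, user and AppDomain results.
PermissionSet intersect_levels(const std::vector<PermissionSet>& levels) {
    if (levels.empty()) return {};
    PermissionSet result = levels.front();
    for (std::size_t i = 1; i < levels.size(); ++i) {
        PermissionSet next;
        std::set_intersection(result.begin(), result.end(),
                              levels[i].begin(), levels[i].end(),
                              std::inserter(next, next.begin()));
        result = std::move(next);
    }
    return result;
}
```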

AppDomains
• A last enforcement technique, independent of permissions
• An AppDomain is conceptually similar to a process, but much more lightweight
• When a process hosts the CLR, it always has a default AppDomain where managed types are loaded
• When multiple AppDomains are created, types in one AppDomain cannot freely access types in any other AppDomain
  – Sharing references between AppDomains requires remoting: if A and B are in separate AppDomains and A wants to access B's objects, B has to pass a reference to A
  – A can't poke around without being invited

Against CAS
• Misuse of Assert
• Unmanaged code security permissions:
  – code with permission to call unmanaged code can bypass all CAS checks
  – e.g. code with no permission to the file system, but allowed to call unmanaged code, can simply call the Win32 API
  – no protection if an attacker executes unmanaged code as admin
• Permission escalation by change of location:
  – assembly used from the Internet => low permissions
  – convince the victim to install a copy of the assembly on his machine => permission escalation

Against CAS
• Fully trusted assemblies:
  – can cross AppDomain boundaries
  – can override an IStackWalk.Deny with new PermissionSet(PermissionState.Unrestricted).Assert();
  – can access private members (via reflection)
  – can turn off security checks altogether: SecurityManager.SecurityEnabled = false;
• Remember the principle of least privilege, and design and code with it in mind

The old new thing
• How does an .exe assembly get turned into running code?
• _CorExeMain2 starts the process:
  – it verifies the signature on the assembly (StrongNameSignatureVerification)
  – it gets the execution engine (EE) ready (CoInitializeEE); EEStartup starts up all the supporting services: garbage collector, JIT, thread pool manager
  – SystemDomain::ExecuteMainMethod makes the class loader load and JIT the application's main method

The old new thing
Remember? This is the same _CorExeMain2 code shown earlier: the strong name signature is verified (StrongNameSignatureVerification) before SystemDomain::ExecuteMainMethod runs the application's main method.

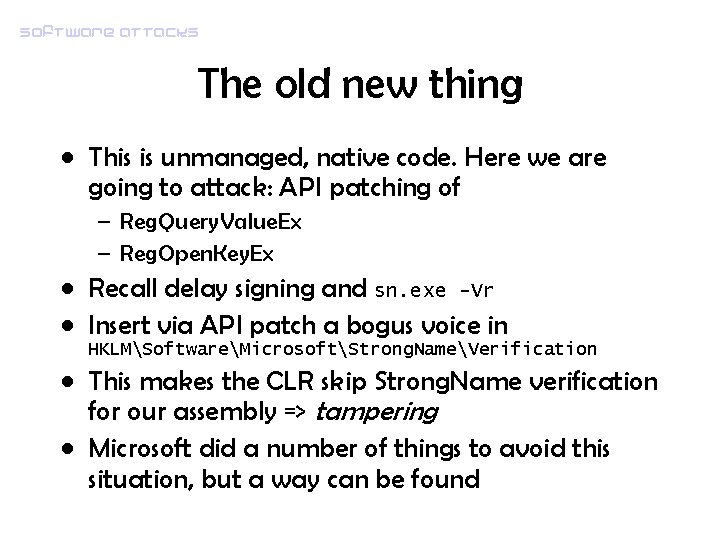

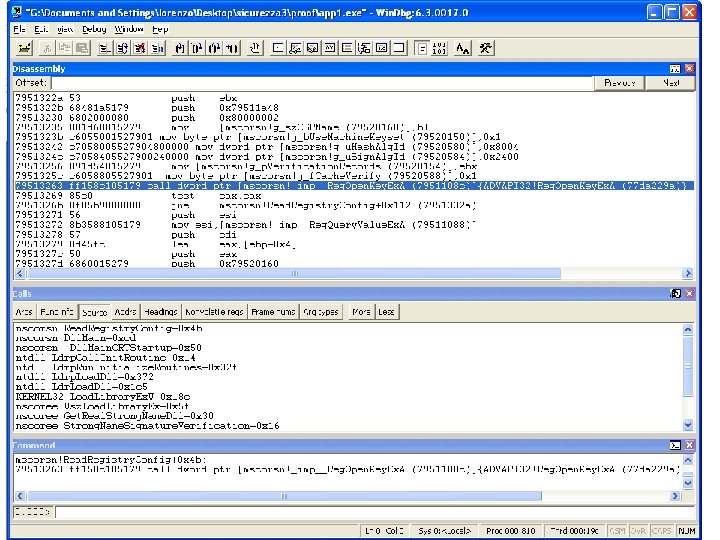

The old new thing
• This is unmanaged, native code. This is where we attack, by API patching of:
  – RegQueryValueEx
  – RegOpenKeyEx
• Recall delay signing and sn.exe -Vr
• Insert, via API patching, a bogus entry in HKLM\Software\Microsoft\StrongName\Verification
• This makes the CLR skip strong-name verification for our assembly => tampering
• Microsoft did a number of things to prevent this, but a way around them can be found
