Skeletons and Asynchronous RPC for Embedded Data- and Task Parallel Image Processing
IAPR Conference on Machine Vision Applications, May 16-18, 2005
Wouter Caarls, Pieter Jonker, Henk Corporaal
Quantitative Imaging Group, Department of Imaging Science & Technology

Overview
• Introduction & Motivation
• Approach
  • Algorithmic skeletons
  • Asynchronous RPC
• Implementation
  • Run-time system
  • Prototype architecture
• Results
• Conclusions & Future work

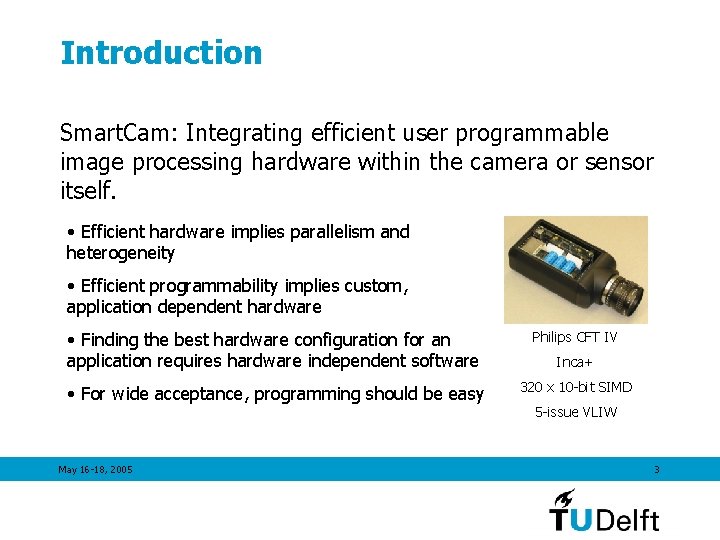

Introduction
SmartCam: integrating efficient, user-programmable image processing hardware within the camera or sensor itself.
• Efficient hardware implies parallelism and heterogeneity
• Efficient programmability implies custom, application-dependent hardware
• Finding the best hardware configuration for an application requires hardware-independent software
• For wide acceptance, programming should be easy
Figure labels: Philips CFT IV; Inca+; 320 x 10-bit SIMD; 5-issue VLIW

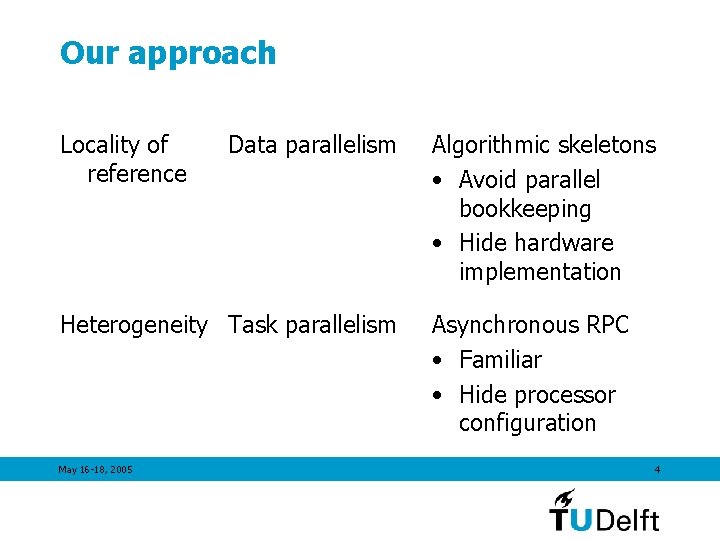

Our approach
Locality of reference, data parallelism: Algorithmic skeletons
• Avoid parallel bookkeeping
• Hide hardware implementation
Heterogeneity, task parallelism: Asynchronous RPC
• Familiar
• Hide processor configuration

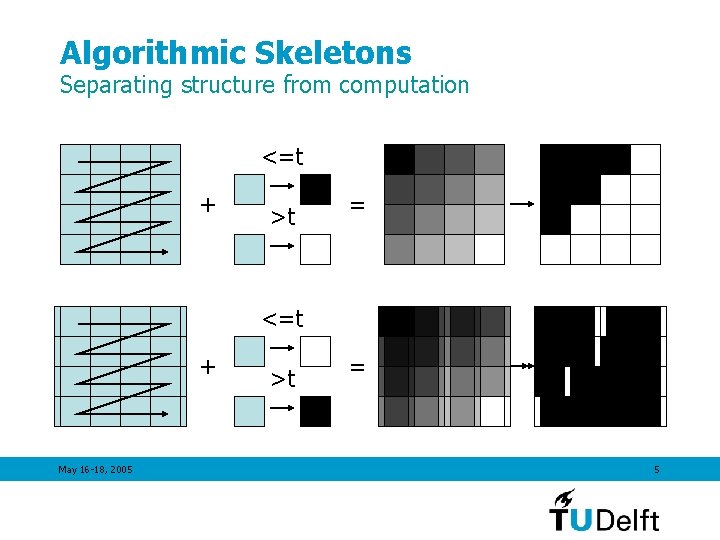

Algorithmic Skeletons
Separating structure from computation
Figure: structure + computation = operation, illustrated with the threshold computations <= t and > t

Algorithmic Skeletons
Implicit parallel programming
• Choice of skeleton implies a set of constraints (dependencies)
• System is free as long as constraints are not violated
  • Distribution
  • Scanning order
• Consistent library interface facilitates between-skeleton dependency analysis
  • No side effects
  • Well-defined inputs and outputs
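To make the split between structure and computation concrete, here is a minimal sequential sketch of a pixel-to-pixel skeleton. The names `pixel_fn`, `iso_pixel_op`, and the signature of `binarize` are assumptions made for illustration; the slides do not show the library's real interface.

```c
#include <assert.h>
#include <stddef.h>

/* Per-pixel computation supplied by the programmer (hypothetical signature). */
typedef int (*pixel_fn)(int pixel, int arg);

/* One possible computation: 1 where the pixel exceeds threshold t, else 0. */
int binarize(int pixel, int t)
{
    return pixel > t ? 1 : 0;
}

/* Sketch of a pixel-to-pixel skeleton. The skeleton owns the iteration
 * structure. Each output depends only on the matching input pixel, so an
 * implementation may pick any distribution or scanning order; this one
 * simply scans sequentially. */
void iso_pixel_op(pixel_fn op, const int *in, int *out, size_t n, int arg)
{
    assert(op != NULL && in != NULL && out != NULL);
    for (size_t i = 0; i < n; i++)
        out[i] = op(in[i], arg);
}
```

A call such as `iso_pixel_op(binarize, in, out, n, 50)` never mentions how the pixels are visited, which is what would let a parallel implementation replace the loop without touching application code.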

Algorithmic Skeletons
Disadvantages
• Inability to parallelize algorithms that cannot be expressed using one of the skeletons in the library
• Inability to specify certain algorithmic optimizations
• Inability to specify architecture-dependent optimizations
• Solution: allow programmers to add their own (application-specific or architecture-specific) skeletons to the library

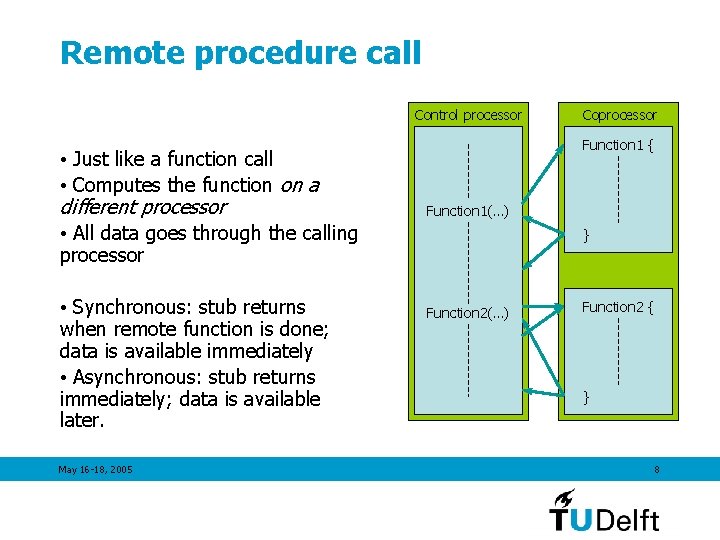

Remote procedure call
• Just like a function call
• Computes the function on a different processor
• All data goes through the calling processor
• Synchronous: stub returns when the remote function is done; data is available immediately
• Asynchronous: stub returns immediately; data is available later
Figure: the control processor calls Function1(…) and Function2(…); the coprocessor executes Function1 { } and Function2 { }
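The difference between the two stub flavours can be sketched in a single-threaded simulation, where an in-memory queue stands in for the link to the coprocessor. Everything here (`request`, `call_async`, `call_sync`, `coprocessor_step`, the function ids) is hypothetical and only illustrates when the result becomes available.

```c
#include <assert.h>
#include <stddef.h>

enum { FUNC_SQUARE, FUNC_NEGATE };   /* hypothetical remote function ids */
enum { MAX_REQUESTS = 8 };           /* capacity of this toy queue */

/* A marshalled call: which function to run, its argument, where the result goes. */
typedef struct {
    int func_id;
    int arg;
    int *result;
    int done;
} request;

static request queue[MAX_REQUESTS];  /* stands in for the link to the coprocessor */
static size_t head, tail;

/* Coprocessor side: execute the oldest pending request. Returns 1 if one ran. */
int coprocessor_step(void)
{
    if (head == tail)
        return 0;
    request *r = &queue[head++];
    *r->result = (r->func_id == FUNC_SQUARE) ? r->arg * r->arg : -r->arg;
    r->done = 1;
    return 1;
}

/* Asynchronous stub: marshal the call and return immediately. */
request *call_async(int func_id, int arg, int *result)
{
    assert(tail < MAX_REQUESTS);
    request *r = &queue[tail++];
    r->func_id = func_id;
    r->arg = arg;
    r->result = result;
    r->done = 0;
    return r;
}

/* Synchronous stub: same marshalling, but wait until the remote side is done. */
int call_sync(int func_id, int arg)
{
    int result = 0;
    request *r = call_async(func_id, arg, &result);
    while (!r->done)
        coprocessor_step();
    return result;
}
```

In a real system the coprocessor would run concurrently instead of being stepped by the caller; the sketch only shows that the synchronous stub is the asynchronous one followed by a wait.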

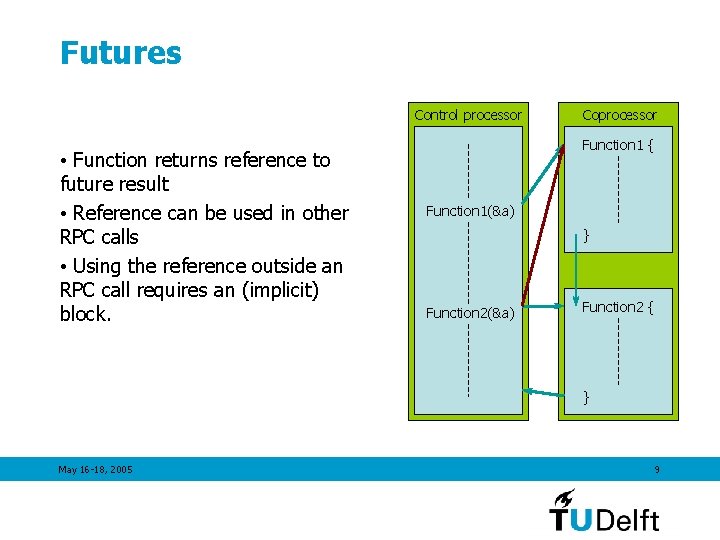

Futures
• Function returns a reference to the future result
• Reference can be used in other RPC calls
• Using the reference outside an RPC call requires an (implicit) block
Figure: the control processor calls Function1(&a) and Function2(&a); the coprocessor executes Function1 { } and Function2 { }
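A rough sketch of how a future can be passed from one call to the next without the caller waiting in between. The types and functions (`future`, `rpc_async`, `block`, `produce`, `add_one`) are invented for this example, and the block is explicit here, whereas the slide mentions that it may be implicit.

```c
#include <assert.h>
#include <stddef.h>

/* Placeholder for a value that a remote call will produce later. */
typedef struct { int ready; int value; } future;

typedef int (*remote_fn)(int input);

/* A deferred call: run fn on the value of `in` (if any) and store it in `out`. */
typedef struct { remote_fn fn; const future *in; future *out; } pending_op;

enum { MAX_OPS = 16 };
static pending_op ops[MAX_OPS];
static size_t next_op, num_ops;

/* Asynchronous call: only records the operation; `out` is not ready yet. */
void rpc_async(remote_fn fn, const future *in, future *out)
{
    assert(num_ops < MAX_OPS);
    out->ready = 0;
    ops[num_ops++] = (pending_op){ fn, in, out };
}

/* Block until f is resolved by running pending operations in call order.
 * In-order execution guarantees an input future is ready before it is read. */
int block(future *f)
{
    while (!f->ready) {
        assert(next_op < num_ops);          /* f must come from an earlier call */
        pending_op *op = &ops[next_op++];
        assert(op->in == NULL || op->in->ready);
        op->out->value = op->fn(op->in ? op->in->value : 0);
        op->out->ready = 1;
    }
    return f->value;
}

/* Two toy remote functions. */
int produce(int unused) { (void)unused; return 20; }
int add_one(int x) { return x + 1; }
```

With these definitions, `rpc_async(produce, NULL, &a); rpc_async(add_one, &a, &b);` returns at once both times, and only `block(&b)` forces the chain to be evaluated.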

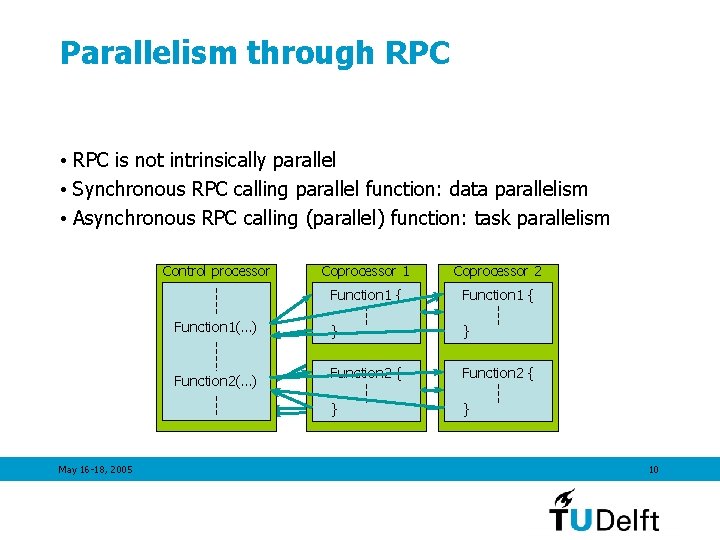

Parallelism through RPC
• RPC is not intrinsically parallel
• Synchronous RPC calling a parallel function: data parallelism
• Asynchronous RPC calling a (parallel) function: task parallelism
Figure: the control processor calls Function1(…) and Function2(…); Function1 { } and Function2 { } execute on Coprocessor 1 and Coprocessor 2

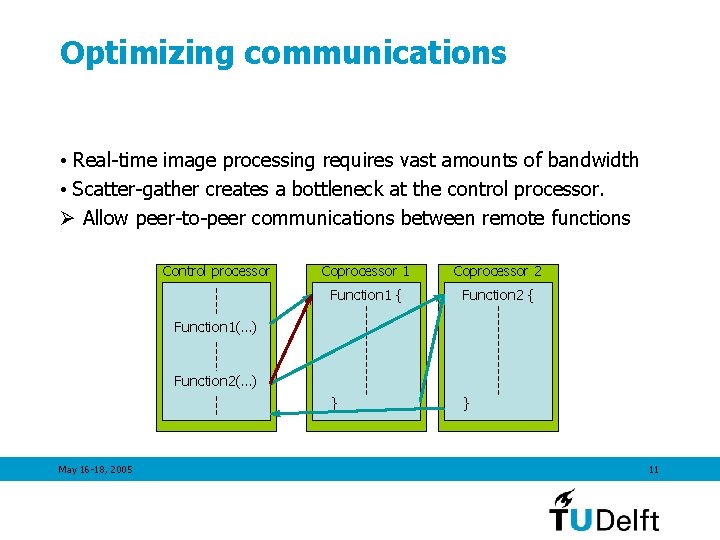

Optimizing communications
• Real-time image processing requires vast amounts of bandwidth
• Scatter-gather creates a bottleneck at the control processor
→ Allow peer-to-peer communications between remote functions
Figure: the control processor calls Function1(…) and Function2(…); Function1 { } runs on Coprocessor 1 and Function2 { } on Coprocessor 2

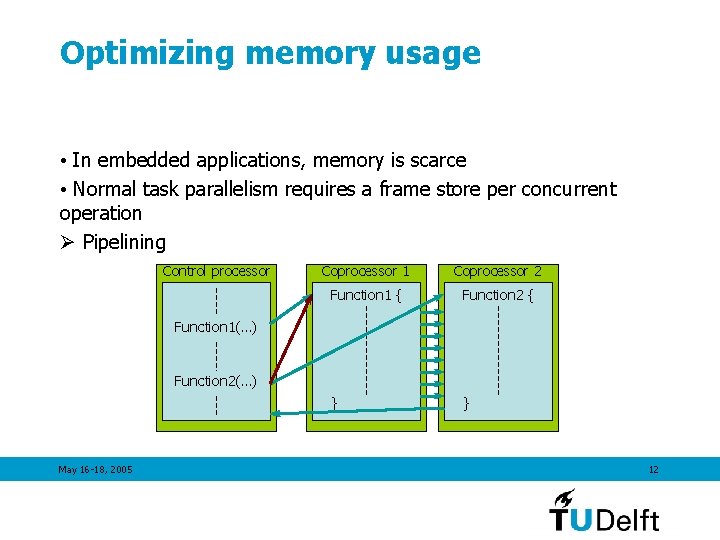

Optimizing memory usage
• In embedded applications, memory is scarce
• Normal task parallelism requires a frame store per concurrent operation
→ Pipelining
Figure: the control processor calls Function1(…) and Function2(…); Function1 { } runs on Coprocessor 1 and Function2 { } on Coprocessor 2
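The memory argument can be illustrated with two chained pixel operations that exchange one image line at a time instead of a whole intermediate frame. This is a sequential sketch under assumed names (`stage_invert`, `stage_binarize`, `run_pipelined`, `MAX_WIDTH`); in the architecture described here the stages would run on different processors connected by FIFOs.

```c
#include <assert.h>
#include <stddef.h>

enum { MAX_WIDTH = 640 };   /* assumed upper bound on the line length */

/* Stage 1: invert one line of 8-bit pixels. */
void stage_invert(const unsigned char *in, unsigned char *out, size_t width)
{
    for (size_t x = 0; x < width; x++)
        out[x] = (unsigned char)(255 - in[x]);
}

/* Stage 2: threshold one line, writing 1 where the pixel exceeds t. */
void stage_binarize(const unsigned char *in, unsigned char *out,
                    size_t width, unsigned char t)
{
    for (size_t x = 0; x < width; x++)
        out[x] = in[x] > t ? 1 : 0;
}

/* Run both stages line by line. The only intermediate storage is one line
 * buffer, not a width * height frame store between the two operations. */
void run_pipelined(const unsigned char *in, unsigned char *out,
                   size_t width, size_t height, unsigned char t)
{
    unsigned char line[MAX_WIDTH];
    assert(width <= MAX_WIDTH);
    for (size_t y = 0; y < height; y++) {
        stage_invert(in + y * width, line, width);
        stage_binarize(line, out + y * width, width, t);
    }
}
```

Window operations would need a few lines of history per stage rather than one, but the intermediate storage would still scale with the line length and not with the frame size.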

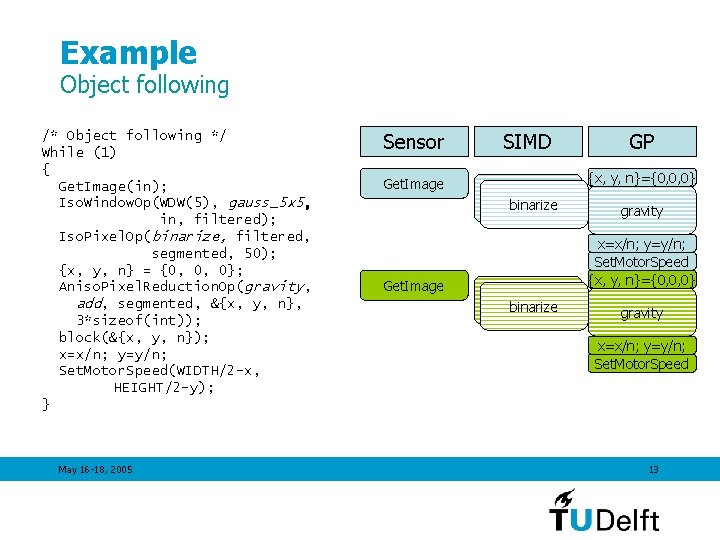

Example
Object following

    /* Object following */
    while (1) {
        GetImage(in);
        IsoWindowOp(WDW(5), gauss_5x5, in, filtered);    /* smooth with a 5x5 window */
        IsoPixelOp(binarize, filtered, segmented, 50);   /* segment with parameter 50 */
        {x, y, n} = {0, 0, 0};
        AnisoPixelReductionOp(gravity, add, segmented, &{x, y, n}, 3*sizeof(int));
        block(&{x, y, n});                               /* wait for the reduction result */
        x = x/n; y = y/n;
        SetMotorSpeed(WIDTH/2 - x, HEIGHT/2 - y);
    }

Figure: mapping of the operations onto processors. Sensor: GetImage. SIMD: gauss_5x5, binarize, gravity. GP: {x, y, n} = {0, 0, 0}; x = x/n; y = y/n; SetMotorSpeed.
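The reduction step in the listing can be pictured as follows. This is a sequential sketch with assumed signatures: `gravity` and `add` borrow their names from the slide, but the `moments` type, the function-pointer types, and the strip-wise splitting are illustrative guesses at how partial results might be combined.

```c
#include <assert.h>

/* Accumulated sums: x and y coordinates of foreground pixels, and their count. */
typedef struct { int x, y, n; } moments;

typedef void (*pixel_reduce_fn)(int px, int py, int pixel, moments *acc);
typedef void (*combine_fn)(moments *into, const moments *part);

/* Position-dependent pixel operation: foreground pixels add their coordinates. */
void gravity(int px, int py, int pixel, moments *acc)
{
    if (pixel) {
        acc->x += px;
        acc->y += py;
        acc->n += 1;
    }
}

/* Combine two partial results. */
void add(moments *into, const moments *part)
{
    into->x += part->x;
    into->y += part->y;
    into->n += part->n;
}

/* Reduction skeleton sketch: split the image into horizontal strips, reduce
 * each strip to a partial result (as one processing element might), then
 * merge the partial results with `combine`. */
void aniso_pixel_reduction(pixel_reduce_fn op, combine_fn combine,
                           const int *img, int width, int height,
                           int strips, moments *result)
{
    assert(strips > 0);
    for (int s = 0; s < strips; s++) {
        moments partial = {0, 0, 0};
        int y0 = s * height / strips;
        int y1 = (s + 1) * height / strips;
        for (int y = y0; y < y1; y++)
            for (int x = 0; x < width; x++)
                op(x, y, img[y * width + x], &partial);
        combine(result, &partial);
    }
}
```

Dividing the accumulated `x` and `y` by `n` then gives the centre of gravity, as in the loop above. A caller would presumably need to skip that division when `n` is zero, that is, when no pixel was segmented.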

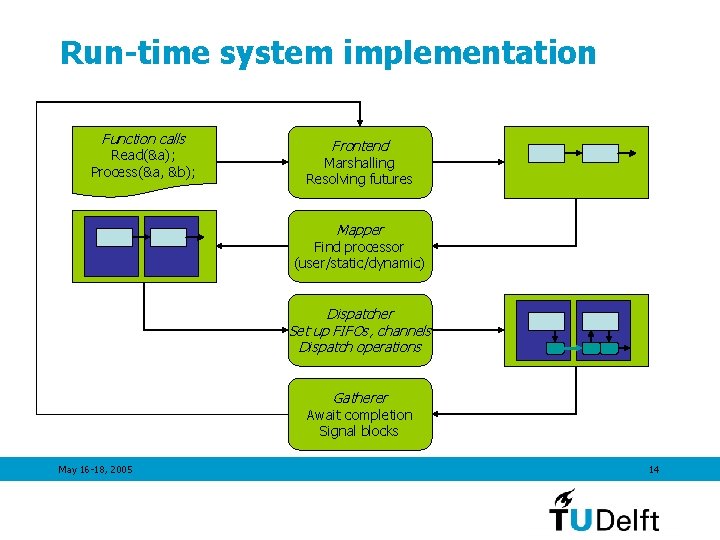

Run-time system implementation
Function calls: Read(&a); Process(&a, &b);
• Frontend: marshalling; resolving futures
• Mapper: find processor (user/static/dynamic)
• Dispatcher: set up FIFOs and channels; dispatch operations
• Gatherer: await completion; signal blocks
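The Mapper stage names three ways of finding a processor (user, static, dynamic) without giving details. As one possible reading of the dynamic case, the sketch below picks the least-loaded processor that supports the requested operation. The `processor` record, the capability bits, and the load metric are all assumptions, not the authors' design.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical capability bits for the kinds of operation a processor supports. */
enum { OP_PIXEL = 1, OP_WINDOW = 2, OP_REDUCE = 4 };

typedef struct {
    const char *name;        /* label only, e.g. "SIMD" */
    unsigned supported_ops;  /* OR of the capability bits above */
    int queued;              /* operations already dispatched to it */
} processor;

/* Dynamic mapping sketch: among the processors that support `op`, pick the
 * one with the fewest queued operations (first one wins ties) and charge the
 * new operation to it. Returns its index, or -1 if none supports `op`. */
int map_dynamic(processor *procs, int count, unsigned op)
{
    int best = -1;
    assert(procs != NULL);
    for (int i = 0; i < count; i++) {
        if (!(procs[i].supported_ops & op))
            continue;
        if (best < 0 || procs[i].queued < procs[best].queued)
            best = i;
    }
    if (best >= 0)
        procs[best].queued++;
    return best;
}
```

A user or static mapping would replace the search with a fixed table from operation to processor index.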

Prototype architecture

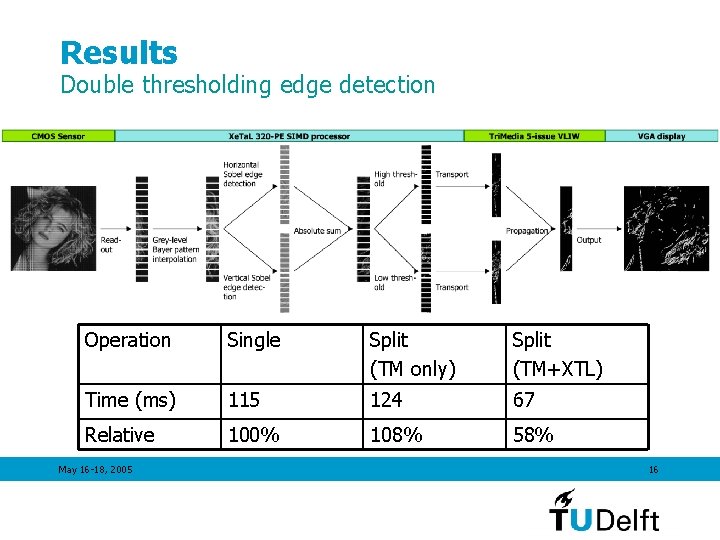

Results
Double thresholding edge detection

| Operation | Time (ms) | Relative |
|---|---|---|
| Single | 115 | 100% |
| Split (TM only) | 124 | 108% |
| Split (TM+XTL) | 67 | 58% |
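The Relative column appears to be each time divided by the time of the Single configuration. Checking that reading against the reported numbers:

```latex
\frac{t_{\text{TM only}}}{t_{\text{Single}}} = \frac{124}{115} \approx 1.08,
\qquad
\frac{t_{\text{TM+XTL}}}{t_{\text{Single}}} = \frac{67}{115} \approx 0.58,
\qquad
\frac{t_{\text{Single}}}{t_{\text{TM+XTL}}} = \frac{115}{67} \approx 1.72
```

Under that reading, the TM-only split costs about 8% more time than Single, while the TM+XTL split is roughly 1.7 times faster.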

Conclusion
The proposed programming model
• Is easy to use
  • Skeletons hide data-parallel bookkeeping
  • RPC hides task-parallel implementation
• Is architecture independent
  • A skeleton can be implemented for different architectures
  • RPC can map to a heterogeneous system
• Is optimized for embedded usage
  • Peer-to-peer communication: no scatter/gather bottleneck
  • Pipelined: no frame stores

Future work
• Skeletons
  • Skeleton Definition Language
  • Skeleton merging
• Mapping
  • Memory
  • Scalar dependencies
• Evaluation
  • New prototype architecture
  • Dynamic, complex application
