
Site Lightning Report: MWT2
Mark Neubauer, University of Illinois at Urbana-Champaign
US ATLAS Facilities Meeting @ UC Santa Cruz, Nov 14, 2012

Midwest Tier-2
Three-site Tier-2 consortium
The Team: Rob Gardner, Dave Lesny, Mark Neubauer, Sarah Williams, Ilija Vukotic, Lincoln Bryant, Fred Luehring

Midwest Tier-2
Focus of this talk: Illinois Tier-2

Tier-2 @ Illinois
History of the project:
– Fall 2007 onward: Development/operation of T3gs
– 08/26/10: Torre’s US ATLAS IB talk
– 10/26/10: Tier2@Illinois proposal submitted to US ATLAS Computing Mgmt
– 11/23/10: Proposal formally accepted
– 10/5/11: First successful test of ATLAS production jobs run on Campus Cluster (CC)
  • Jobs read data from our Tier3gs cluster

Tier-2 @ Illinois
History of the project (cont):
– 03/1/12: Successful T2@Illinois pilot
  • Squid proxy cache, Condor head node, job flocking from UC
– 4/4/12: First hardware into Taub cluster
  • 16 compute nodes (dual X5650, 48 GB memory, 160 GB disk, IB), 196 cores
  • 60 2 TB drives in DDN array, 120 TB raw
– 4/17/12: perfSONAR nodes online

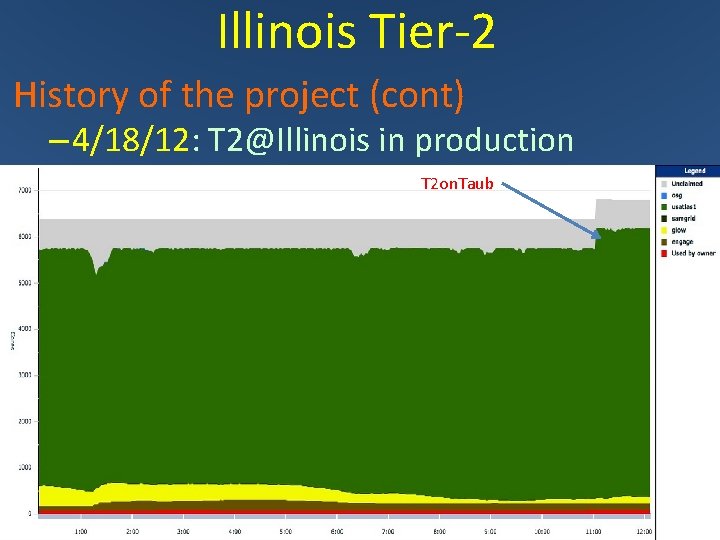

Illinois Tier-2
History of the project (cont):
– 4/18/12: T2@Illinois in production
T2 on Taub

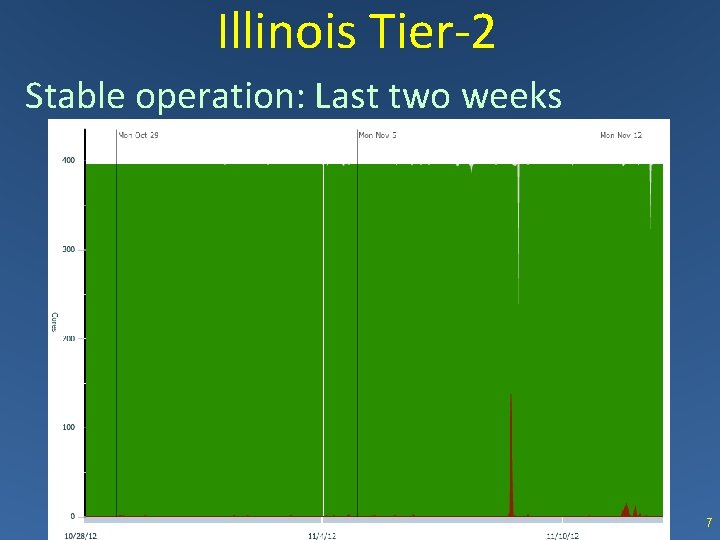

Illinois Tier-2
Stable operation: last two weeks

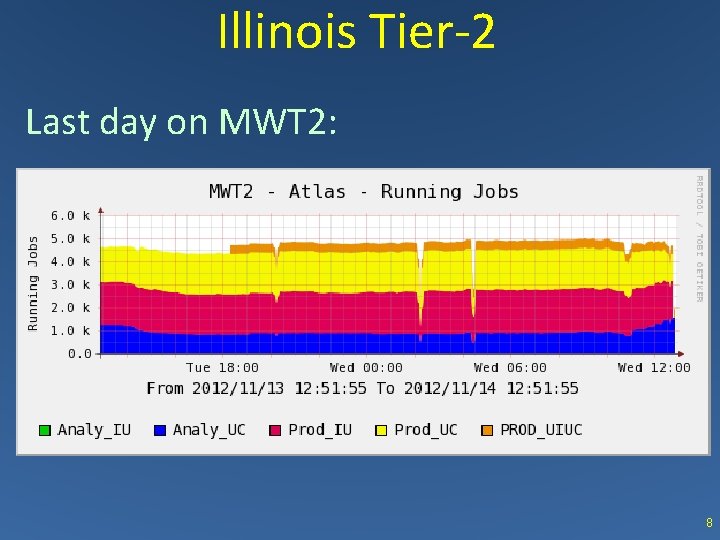

Illinois Tier-2
Last day on MWT2:

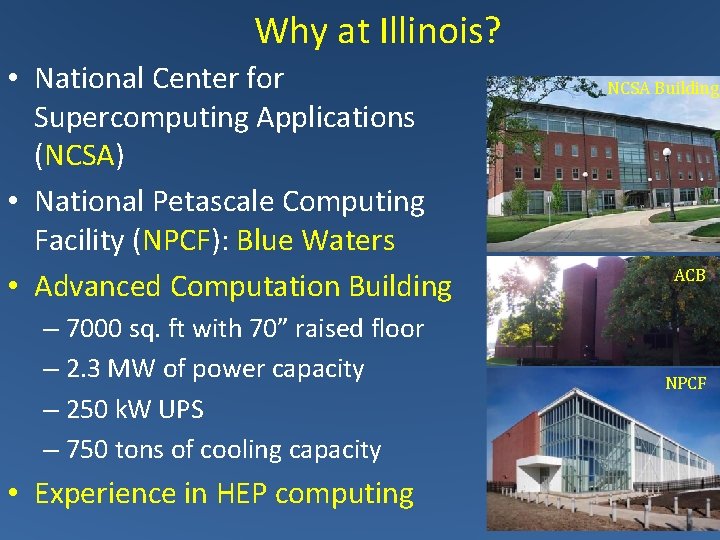

Why at Illinois?
• National Center for Supercomputing Applications (NCSA)
• National Petascale Computing Facility (NPCF): Blue Waters
• Advanced Computation Building (ACB)
  – 7000 sq. ft with 70” raised floor
  – 2.3 MW of power capacity
  – 250 kW UPS
  – 750 tons of cooling capacity
• Experience in HEP computing
(Photo labels: NCSA Building, ACB, NPCF)

Tier-2 @ Illinois
• Deployed in a shared campus cluster (CC) in ACB
  – “Taub” is the first instance of CC
  – Tier2@Illinois on Taub in production within MWT2
• Pros (ATLAS perspective)
  – Free building, power, cooling, and core infrastructure support, with plenty of room for future expansion
  – Pool of expertise, heterogeneous HW
  – Bulk pricing, important given DDD (Dell Deal Demise)
  – Opportunistic resources
• Challenges
  – Constraints on hardware, pricing, architecture, timing

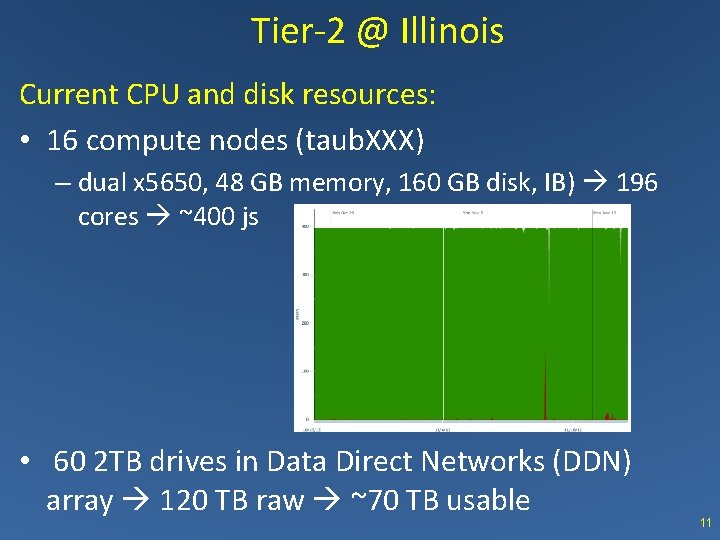

Tier-2 @ Illinois
Current CPU and disk resources:
• 16 compute nodes (taubXXX)
  – dual X5650, 48 GB memory, 160 GB disk, IB; 196 cores, ~400 job slots
• 60 2 TB drives in Data Direct Networks (DDN) array: 120 TB raw, ~70 TB usable
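
The disk figures on this slide can be cross-checked with a few lines of arithmetic. The following is a minimal sketch that uses only the quoted numbers; the variable names are illustrative, and because the usable capacity is given only approximately, the resulting fraction is approximate as well.

```python
# Disk figures quoted on the slide; the usable capacity is approximate.
DRIVES = 60
TB_PER_DRIVE = 2
USABLE_TB = 70  # quoted as "~70 TB usable"

# Raw capacity of the DDN array.
raw_tb = DRIVES * TB_PER_DRIVE

# Share of the raw capacity that remains usable.
usable_fraction = USABLE_TB / raw_tb

print(f"raw: {raw_tb} TB, usable: ~{USABLE_TB} TB ({usable_fraction:.0%} of raw)")
```

The raw figure reproduces the 120 TB on the slide; the slides do not itemize where the remaining capacity goes.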

Tier-2 @ Illinois
• Utility nodes / services (.campuscluster.illinois.edu):
  – Gatekeeper (mwt2-gt)
    • Primary schedd for Taub Condor pool
      – Flocks other jobs to UC and IU Condor pools
  – Condor head node (mwt2-condor)
    • Collector and Negotiator for Taub Condor pool
      – Accepts flocked jobs from other MWT2 gatekeepers
  – Squid (mwt2-squid)
    • Proxy cache for CVMFS, Frontier for Taub (backup for IU/UC)
  – CVMFS replica server (mwt2-cvmfs)
    • CVMFS replica server for master CVMFS server
  – dCache s-node (mwt2-s1)
    • Pool node for GPFS data storage (installed, dCache in progress)
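
The utility nodes listed above can be captured as a small inventory, which is handy when scripting checks against them. This is only a sketch: the short host names, roles, and domain suffix are taken from the slide, while the `SERVICES` table, the `fqdn` helper, and the assumption that each short name is a host directly under the quoted domain suffix are illustrative and not part of the talk.

```python
# Domain suffix quoted on the slide.
DOMAIN = "campuscluster.illinois.edu"

# Short host name -> role, as listed on the slide.
SERVICES = {
    "mwt2-gt": "Gatekeeper: primary schedd for the Taub Condor pool",
    "mwt2-condor": "Condor head node: collector and negotiator for the Taub pool",
    "mwt2-squid": "Squid proxy cache for CVMFS and Frontier",
    "mwt2-cvmfs": "CVMFS replica server",
    "mwt2-s1": "dCache s-node: pool node for GPFS data storage",
}


def fqdn(short_name: str) -> str:
    """Build a full host name, ASSUMING hosts sit directly under DOMAIN."""
    return f"{short_name}.{DOMAIN}"


for name, role in SERVICES.items():
    print(f"{fqdn(name):<45} {role}")
```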

Next CC Instance (to be named): Overview
• Mix of Ethernet-only and Ethernet + InfiniBand connected nodes
  – assume 50-100% will be IB enabled
• Mix of CPU-only and CPU+GPU nodes
  – assume up to 25% of nodes will have GPUs
• New storage device and support nodes
  – added to shared storage environment
  – allow for other protocols (SAMBA, NFS, GridFTP, GPFS)
• VM hosting and related services
  – persistent services and other needs directly related to use of compute/storage resources

Next CC Instance (basic configuration)
• Dell PowerEdge C8220, 2-socket Intel Xeon E5-2670
  – 8-core Sandy Bridge processors @ 2.60 GHz
  – 1 “sled”: 2 SB processors
  – 8 sleds in 4U: 128 cores
• Memory configuration options:
  – 2 GB/core, 4 GB/core, 8 GB/core
• Options:
  – InfiniBand FDR (GigE otherwise)
  – NVIDIA M2090 (Fermi GPU) accelerators
  – Storage via DDN SFA12000
    • can add in 30 TB (raw) increments
(Image label: Dell C8220 compute sled)
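
The core count per chassis and the memory implied by each per-core option follow directly from the figures on this slide. The sketch below works through that arithmetic; the constant names are illustrative, and the per-sled memory totals are derived here rather than quoted in the talk.

```python
# Figures quoted on the slide.
CORES_PER_SOCKET = 8       # 8-core Sandy Bridge (Xeon E5-2670)
SOCKETS_PER_SLED = 2       # 1 sled = 2 processors
SLEDS_PER_CHASSIS = 8      # 8 sleds in 4U
GB_PER_CORE_OPTIONS = (2, 4, 8)

cores_per_sled = CORES_PER_SOCKET * SOCKETS_PER_SLED
cores_per_chassis = cores_per_sled * SLEDS_PER_CHASSIS

# Derived (not quoted on the slide): total memory per sled for each option.
memory_per_sled_gb = {gb: gb * cores_per_sled for gb in GB_PER_CORE_OPTIONS}

print(f"cores per sled: {cores_per_sled}, cores per 4U chassis: {cores_per_chassis}")
for gb_per_core, total_gb in memory_per_sled_gb.items():
    print(f"{gb_per_core} GB/core -> {total_gb} GB per sled")
```

The chassis total matches the 128 cores stated on the slide.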

Summary and Plans
• New Tier-2 @ Illinois
  – Modest (currently) resource integrated into MWT2 and in production use
  – Cautious optimism: deploying a Tier-2 within a shared campus cluster has been a success
• Near-term plans
  – Buy into 2nd campus cluster instance
    • $160k of FY12 funds with 60/40 CPU/disk split
  – Continue dCache deployment
  – LHCONE @ Illinois due to turn on 11/20/12
  – Virtualization of Tier-2 utility services
  – Better integration into MWT2 monitoring
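
The planned buy-in can be broken down into dollar amounts. This is a minimal sketch, assuming the 60/40 split reads as 60% CPU and 40% disk (the order given on the slide); the names are illustrative.

```python
# Figures quoted on the slide.
BUDGET_USD = 160_000
CPU_PERCENT = 60    # ASSUMPTION: the first number of "60/40" is the CPU share
DISK_PERCENT = 40

# Integer arithmetic keeps the dollar amounts exact.
cpu_usd = BUDGET_USD * CPU_PERCENT // 100
disk_usd = BUDGET_USD * DISK_PERCENT // 100

print(f"CPU:  ${cpu_usd:,}")
print(f"Disk: ${disk_usd:,}")
```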

- Slides: 15