Simple Linear Regression Least squares line Interpreting coefficients


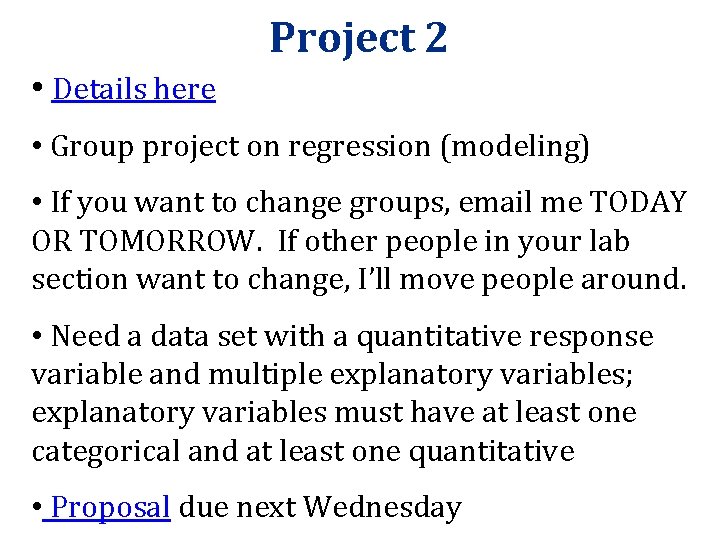

Simple Linear Regression • Least squares line • Interpreting coefficients • Prediction • Cautions • The formal model Sections 2.6, 9.1, 9.2 Professor Kari Lock Morgan Duke University

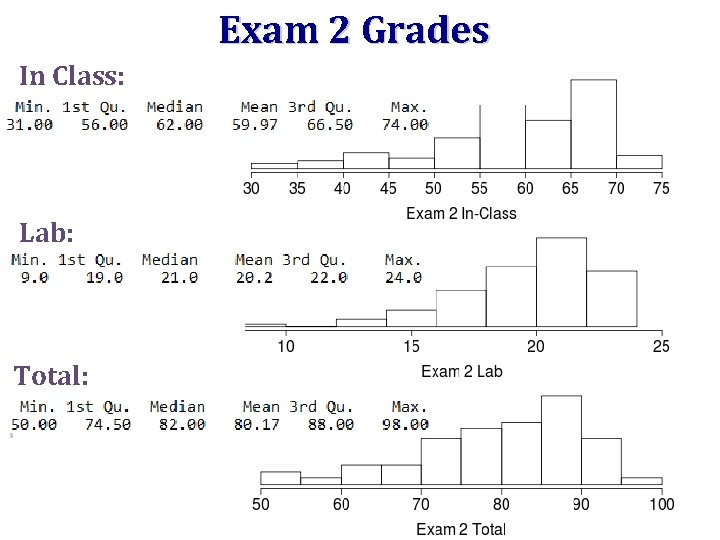

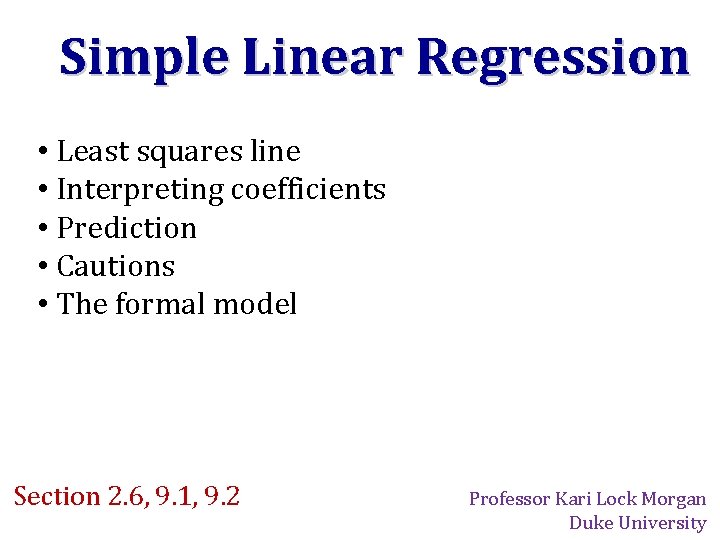

Exam 2 Grades In Class: Lab: Total:

Comments on In-Class Exam • Test whether this data provides evidence that Melanoma is found significantly more often on the left side of the body: one categorical variable -> single proportion • 2011 Hollywood movies: If the sample is the same as the population, then no need for inference! • Standard deviation of a bootstrap distribution is the standard error

Comments on Lab Exam • Most common reason for points off: applying the wrong method • The first step should ALWAYS be asking yourself: What is/are the variable(s)? Are they categorical or quantitative? • Always plot/visualize your data. Outliers can strongly affect the results; you should either explain why they are left in, or else remove them

Simulation Methods • For any one or two variables, resample( ) gives a confidence interval • For any two variables, reallocate( ) tests for an association between the variables • No conditions to check! • Automatically deals with missing data! • Only two commands to remember! • No distributions to remember!

MODELING

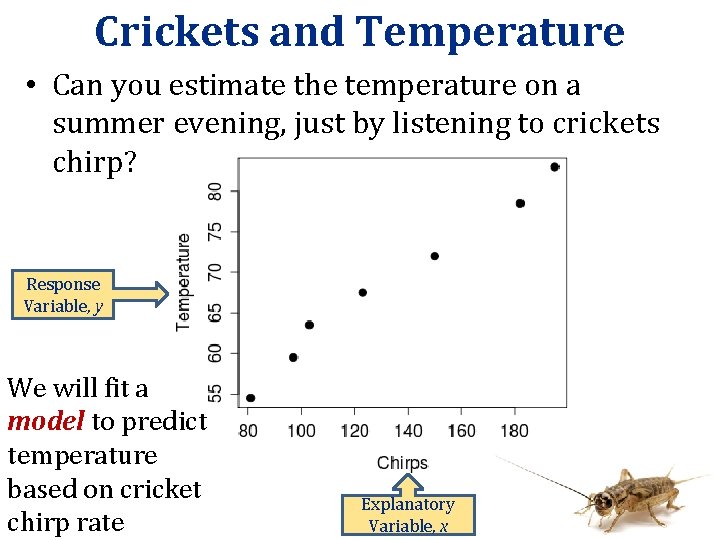

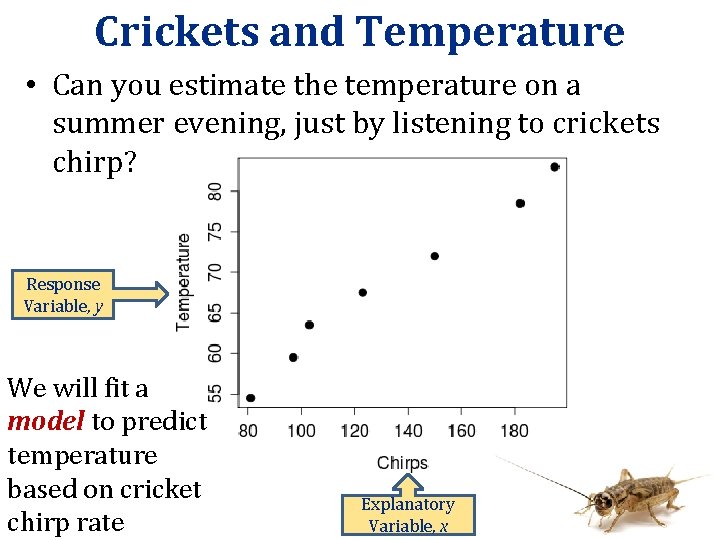

Crickets and Temperature • Can you estimate the temperature on a summer evening, just by listening to crickets chirp? Response Variable, y We will fit a model to predict temperature based on cricket chirp rate Explanatory Variable, x

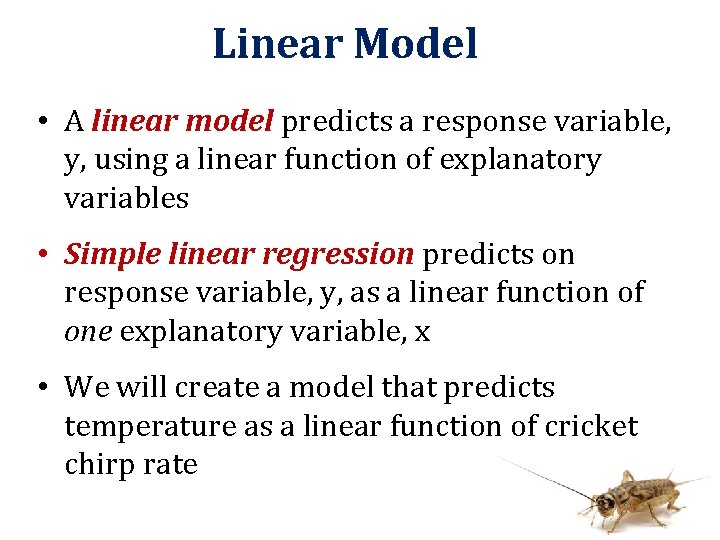

Linear Model • A linear model predicts a response variable, y, using a linear function of explanatory variables • Simple linear regression predicts one response variable, y, as a linear function of one explanatory variable, x • We will create a model that predicts temperature as a linear function of cricket chirp rate

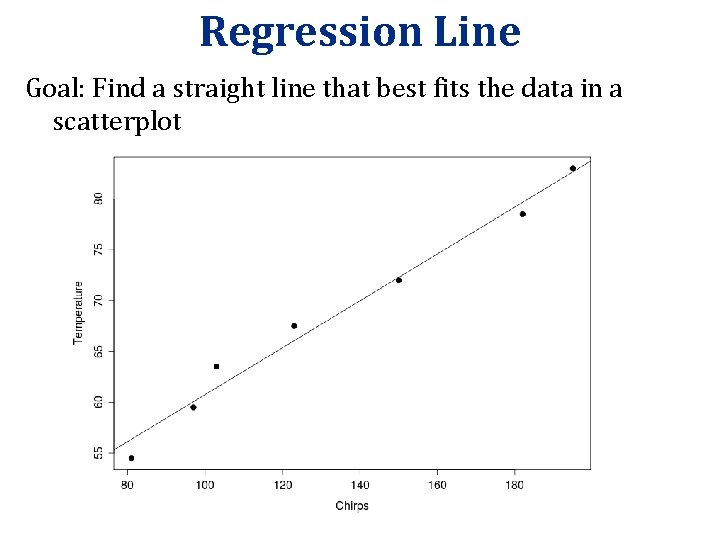

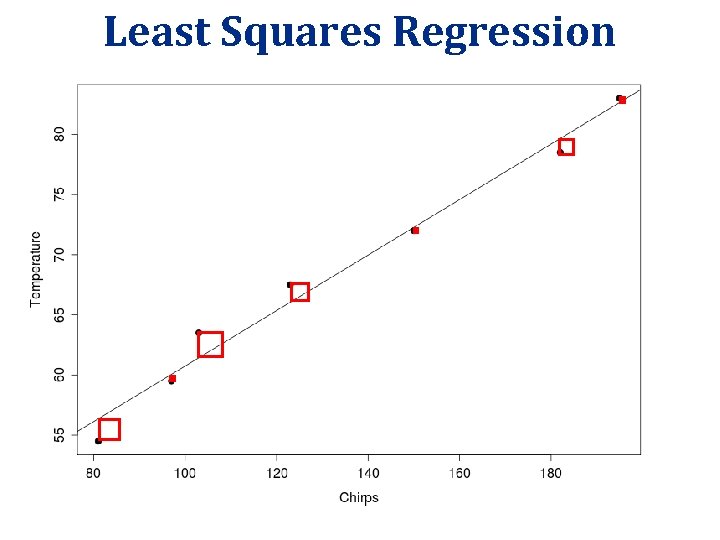

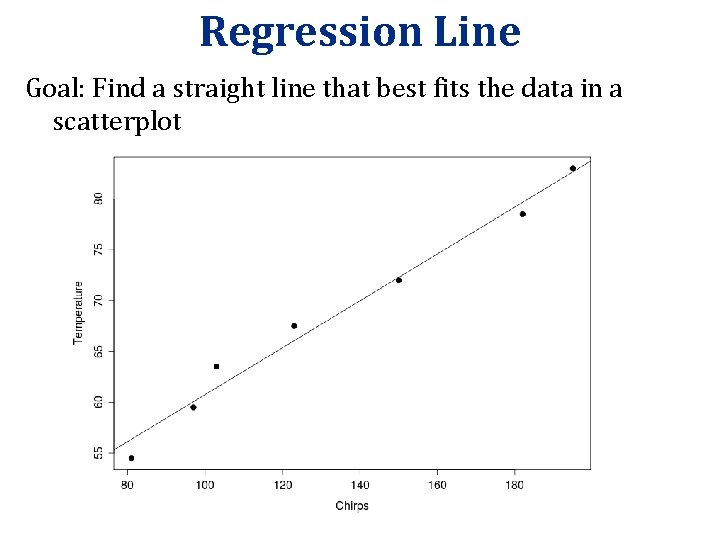

Regression Line Goal: Find a straight line that best fits the data in a scatterplot

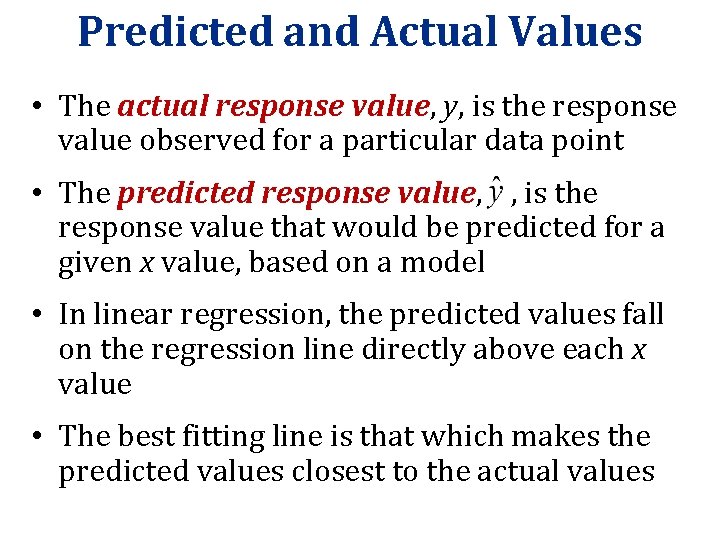

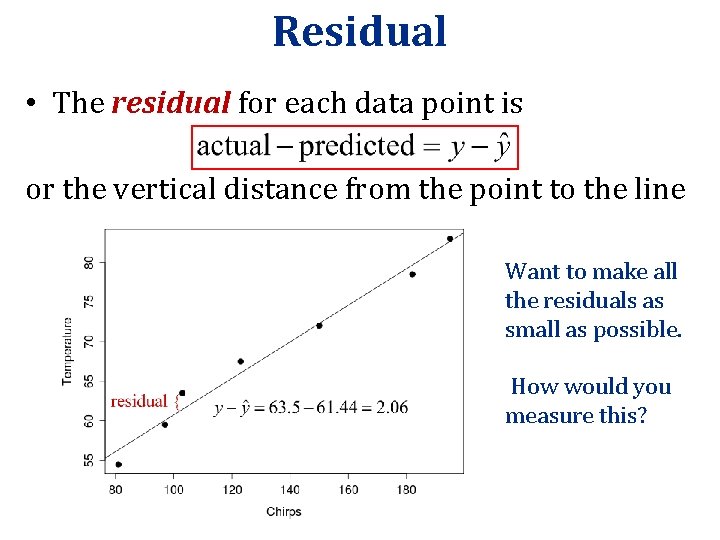

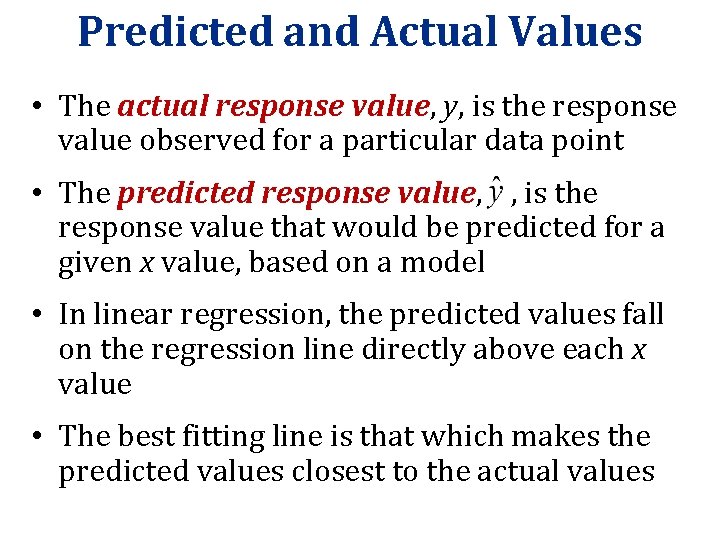

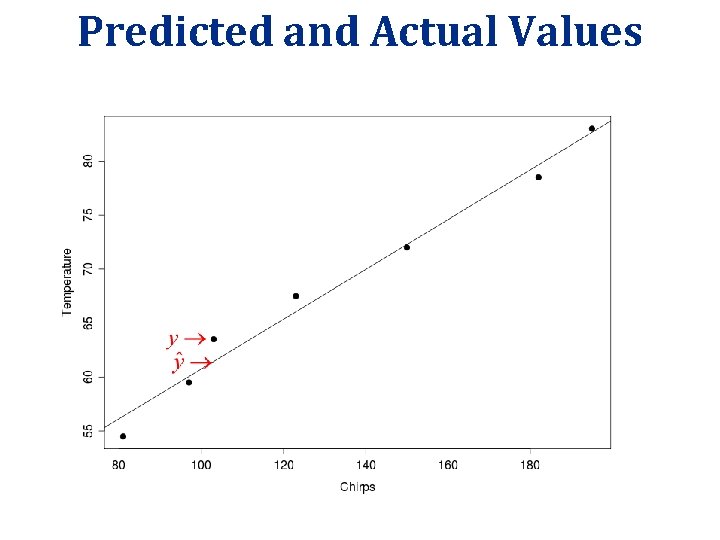

Predicted and Actual Values • The actual response value, y, is the response value observed for a particular data point • The predicted response value, $\hat{y}$, is the response value that would be predicted for a given x value, based on a model • In linear regression, the predicted values fall on the regression line directly above each x value • The best fitting line is the one that makes the predicted values closest to the actual values

Predicted and Actual Values

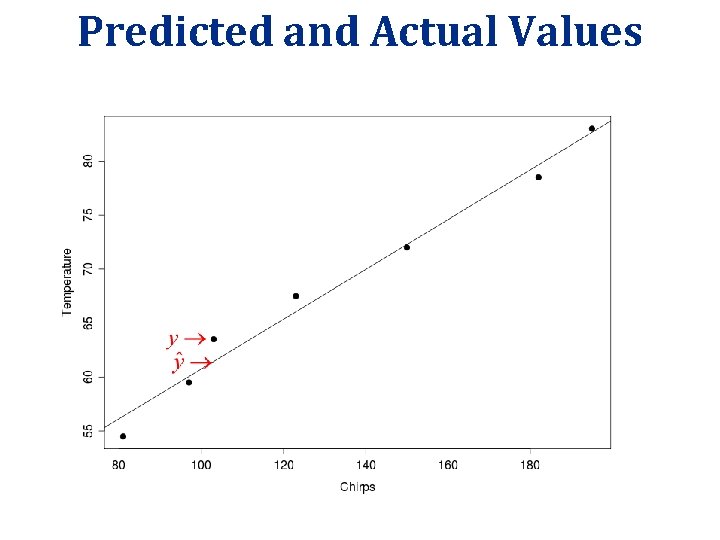

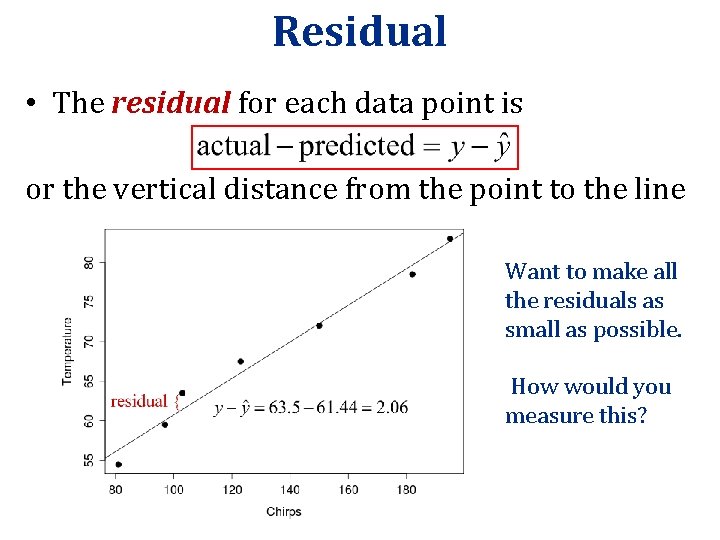

Residual • The residual for each data point is $y - \hat{y}$ (actual minus predicted), or the vertical distance from the point to the line • We want to make all the residuals as small as possible. How would you measure this?

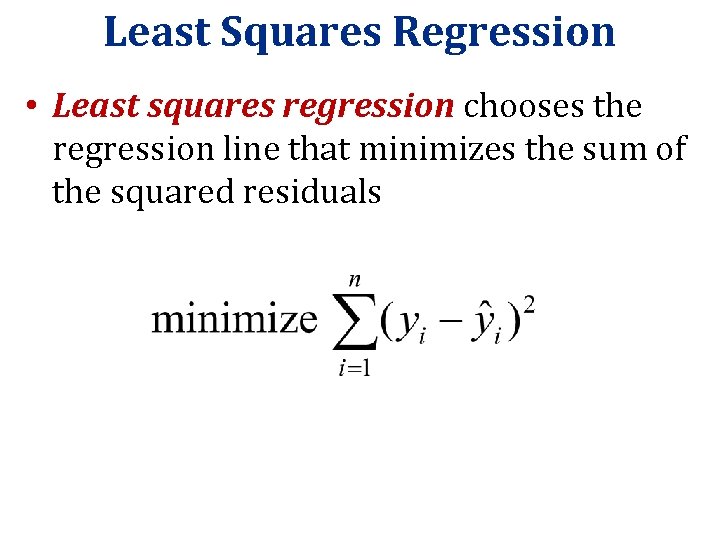

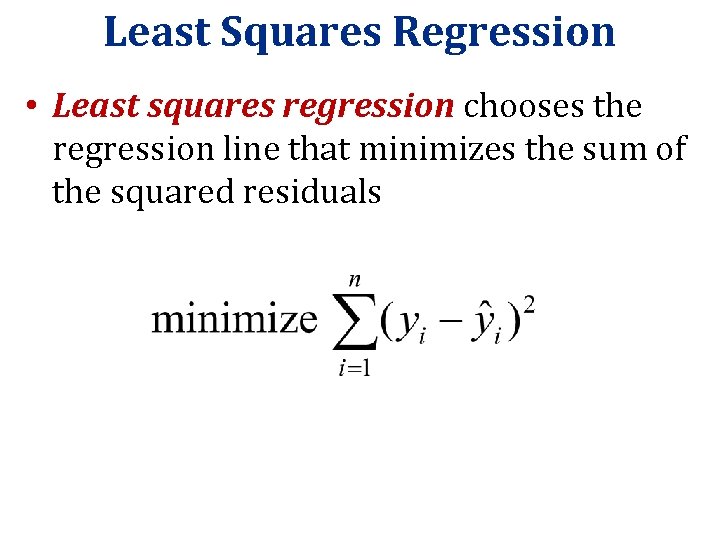

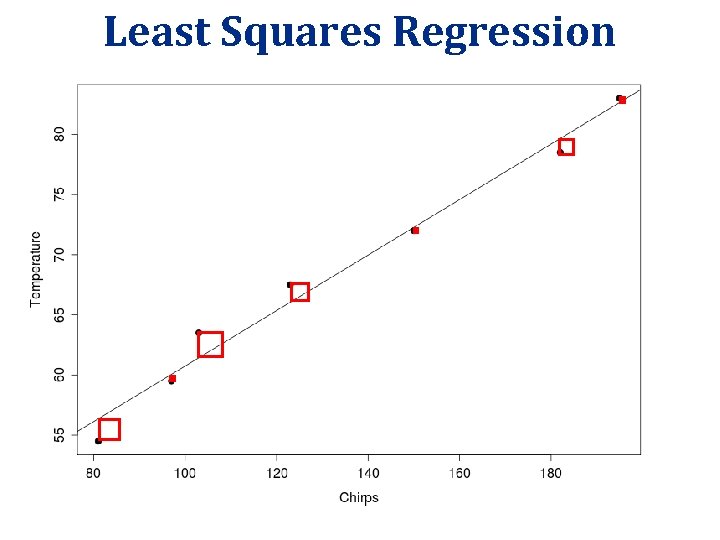

Least Squares Regression • Least squares regression chooses the regression line that minimizes the sum of the squared residuals
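To make "minimizes the sum of the squared residuals" concrete, here is a minimal R sketch. It uses the built-in `cars` data (stopping distance versus speed) as a stand-in, since the cricket data are not reproduced in these notes; the helper `ssr()` is a hypothetical name introduced only for this illustration.

```r
# Built-in example data (NOT the cricket data): stopping distance vs. speed
x <- cars$speed
y <- cars$dist

# Hypothetical helper: sum of squared residuals for the line y-hat = b0 + b1 * x
ssr <- function(b0, b1) sum((y - (b0 + b1 * x))^2)

# Least squares estimates as computed by lm()
fit <- lm(y ~ x)
b0 <- unname(coef(fit)[1])
b1 <- unname(coef(fit)[2])

ssr_best <- ssr(b0, b1)

# Nudging the intercept or the slope away from the lm() values increases the SSR
ssr_shift_intercept <- ssr(b0 + 1, b1)
ssr_shift_slope <- ssr(b0, b1 + 0.1)

c(best = ssr_best, shifted_intercept = ssr_shift_intercept, shifted_slope = ssr_shift_slope)
```

Any other line you try should give a larger sum of squared residuals than the one `lm()` reports.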

Least Squares Regression

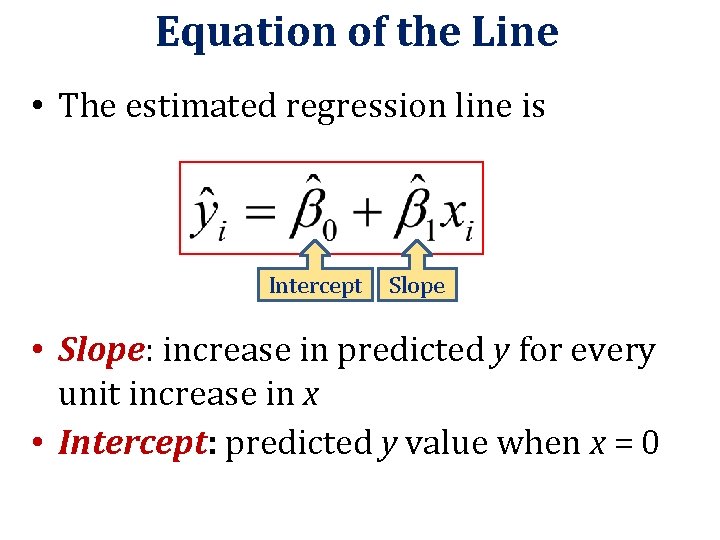

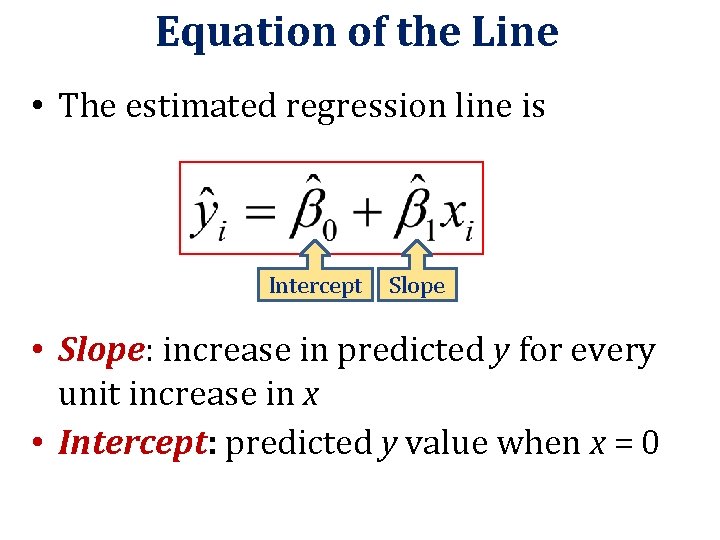

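Equation of the Line • The estimated regression line is $\hat{y} = \hat{\beta}_0 + \hat{\beta}_1 x$, where $\hat{\beta}_0$ is the intercept and $\hat{\beta}_1$ is the slope • Slope: increase in predicted y for every unit increase in x • Intercept: predicted y value when x = 0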
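For reference, the least squares slope and intercept have closed forms. The notation here is an assumption of this note rather than something shown on the slide: $\hat{\beta}_0$ and $\hat{\beta}_1$ are the estimated intercept and slope, $r$ is the sample correlation, $s_x$ and $s_y$ are the sample standard deviations, and $\bar{x}$ and $\bar{y}$ are the sample means.

$$
\hat{\beta}_1 = r\,\frac{s_y}{s_x}, \qquad \hat{\beta}_0 = \bar{y} - \hat{\beta}_1\,\bar{x}
$$

The second formula implies that the least squares line always passes through the point $(\bar{x}, \bar{y})$.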

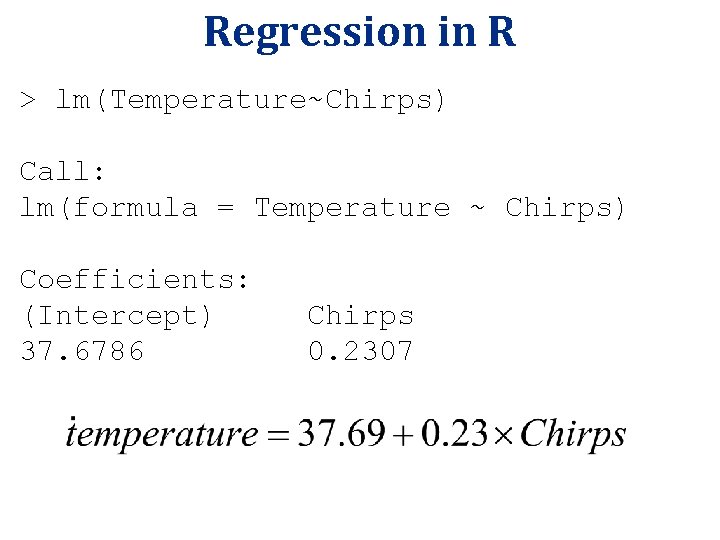

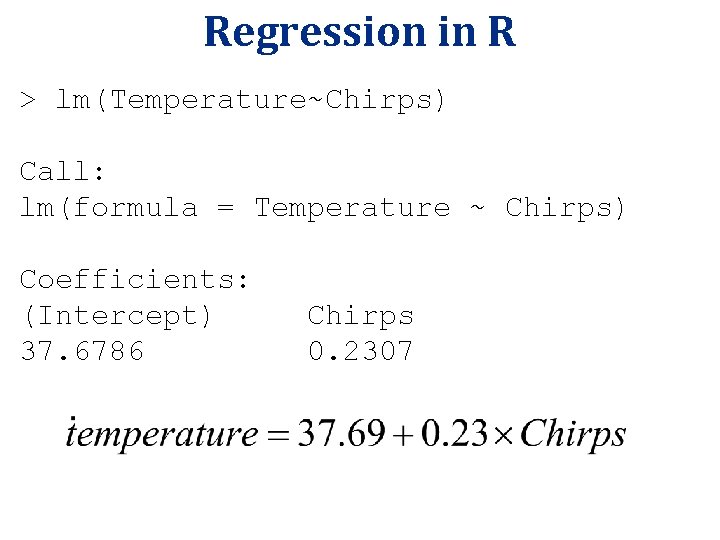

Regression in R > lm(Temperature ~ Chirps) Call: lm(formula = Temperature ~ Chirps) Coefficients: (Intercept) 37.6786, Chirps 0.2307

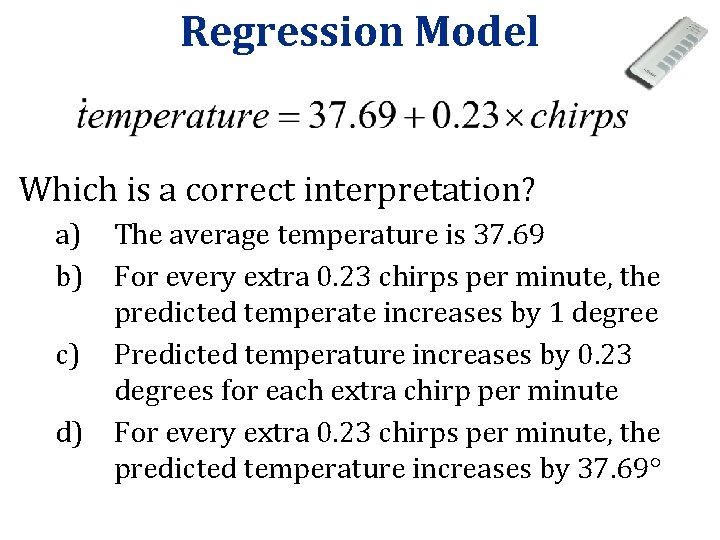

Regression Model Which is a correct interpretation? a) The average temperature is 37.69 b) For every extra 0.23 chirps per minute, the predicted temperature increases by 1 degree c) Predicted temperature increases by 0.23 degrees for each extra chirp per minute d) For every extra 0.23 chirps per minute, the predicted temperature increases by 37.69

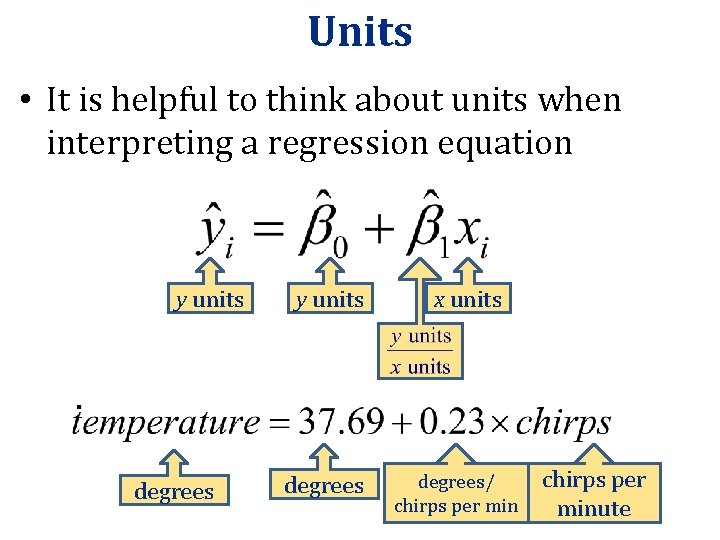

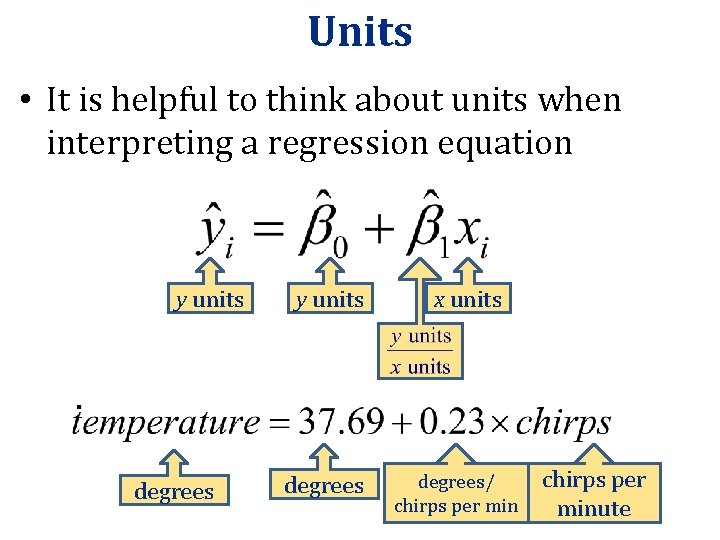

Units • It is helpful to think about units when interpreting a regression equation • y units: degrees • x units: chirps per minute • Slope units: degrees per (chirp per minute)

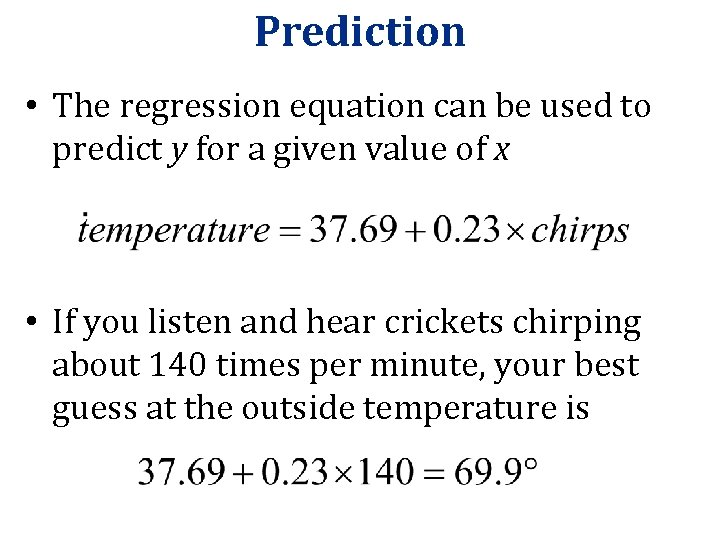

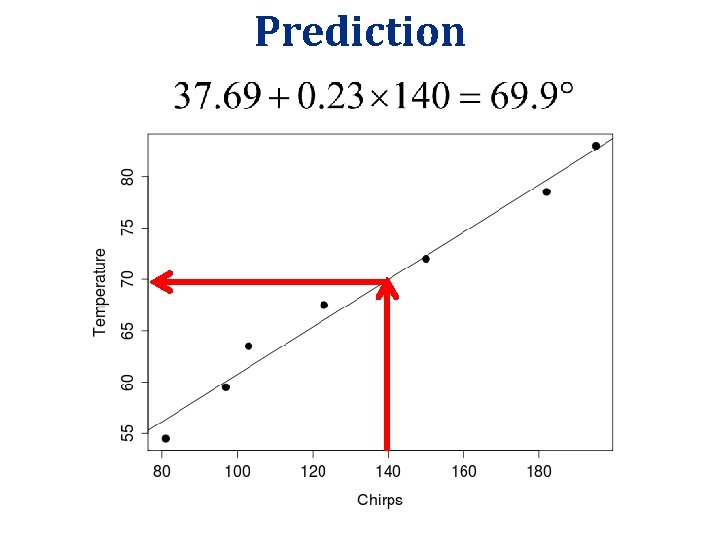

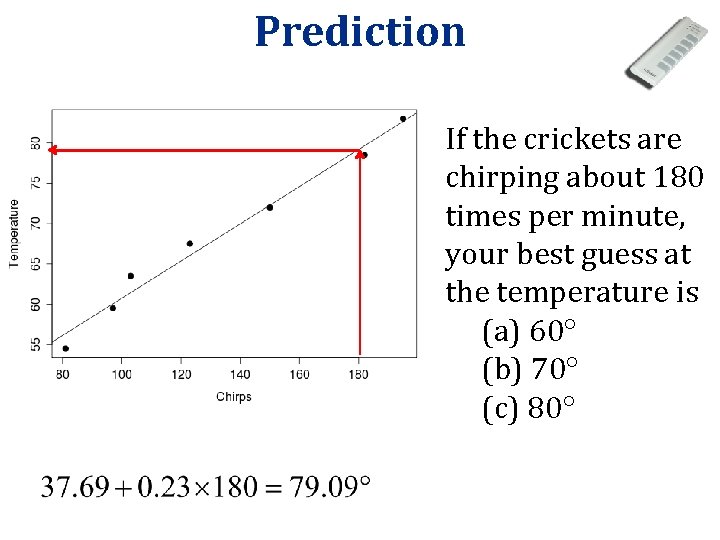

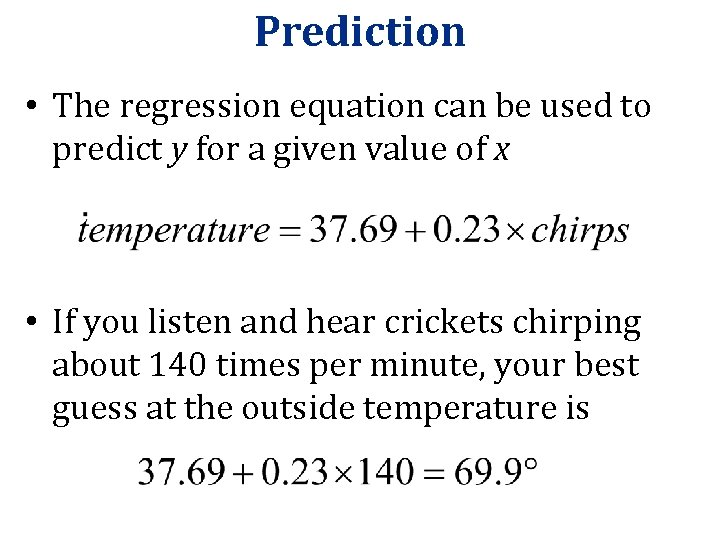

Prediction • The regression equation can be used to predict y for a given value of x • If you listen and hear crickets chirping about 140 times per minute, your best guess at the outside temperature is the predicted value at x = 140: 37.6786 + 0.2307(140), or about 70 degrees
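As a small sketch, the same prediction can be computed in R directly from the coefficients reported by `lm(Temperature ~ Chirps)`. The function name `predict_temp` is hypothetical and introduced only for this illustration.

```r
# Coefficients as reported in the lm() output on the slides
intercept <- 37.6786   # degrees
slope <- 0.2307        # degrees per (chirp per minute)

# Hypothetical helper: predicted temperature for a given chirp rate
predict_temp <- function(chirps_per_minute) intercept + slope * chirps_per_minute

pred_140 <- predict_temp(140)
pred_140   # about 70 degrees
```

With the fitted model object itself, `predict(fit, newdata = data.frame(Chirps = 140))` would be the more usual route; the hand computation is shown because only the coefficients are available here.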

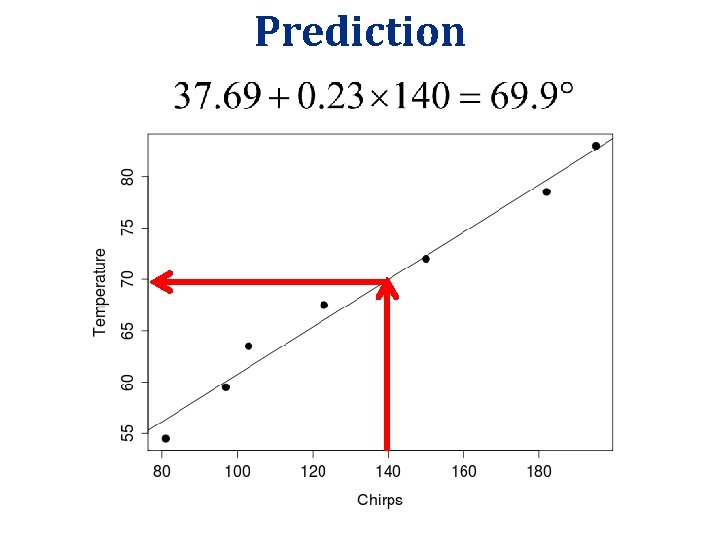

Prediction

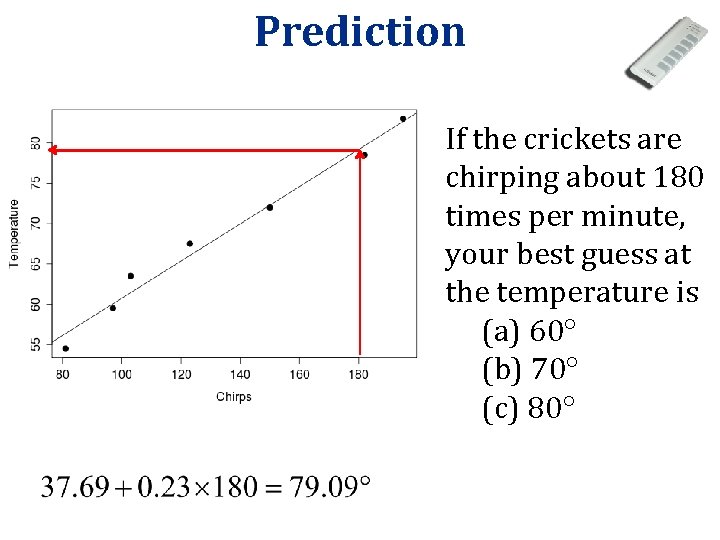

Prediction If the crickets are chirping about 180 times per minute, your best guess at the temperature is (a) 60 (b) 70 (c) 80

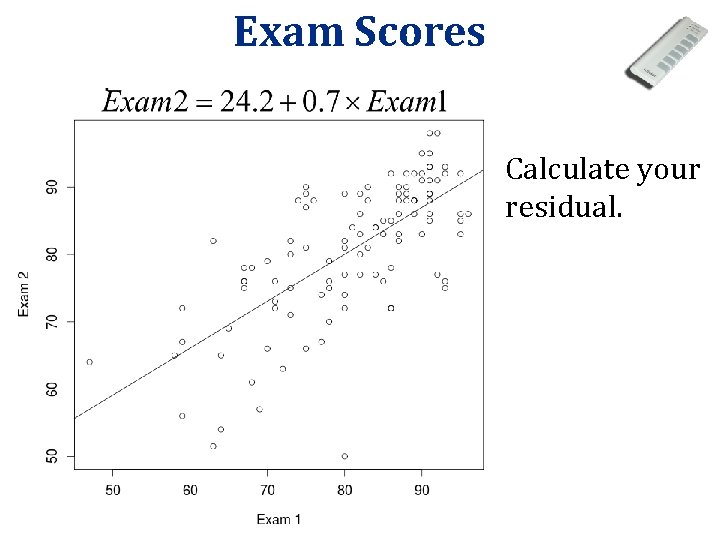

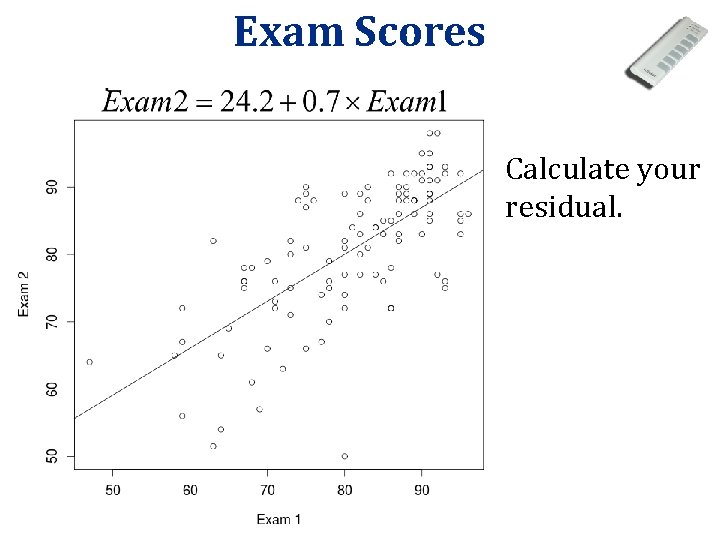

Exam Scores Calculate your residual.

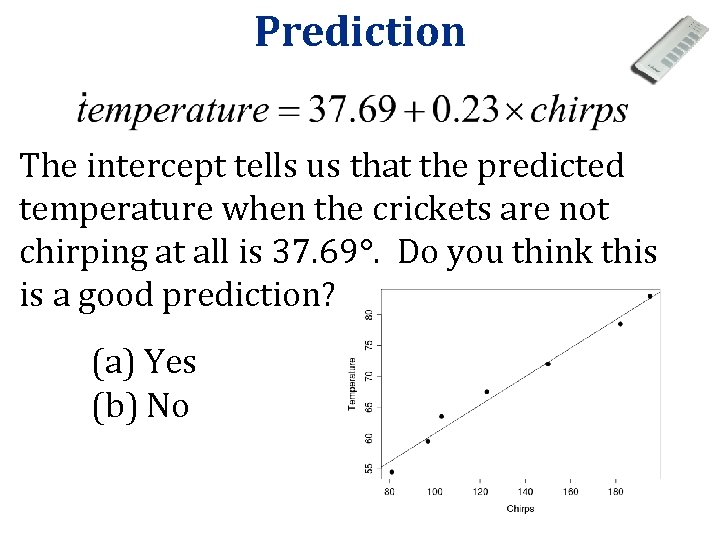

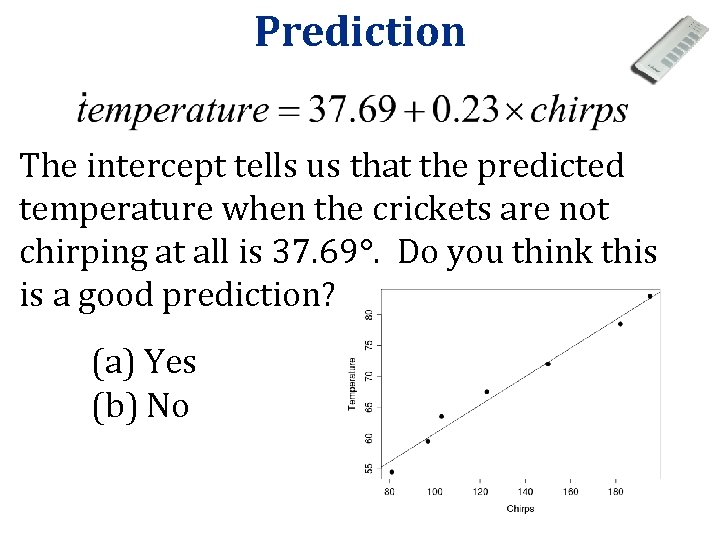

Prediction The intercept tells us that the predicted temperature when the crickets are not chirping at all is 37.69. Do you think this is a good prediction? (a) Yes (b) No

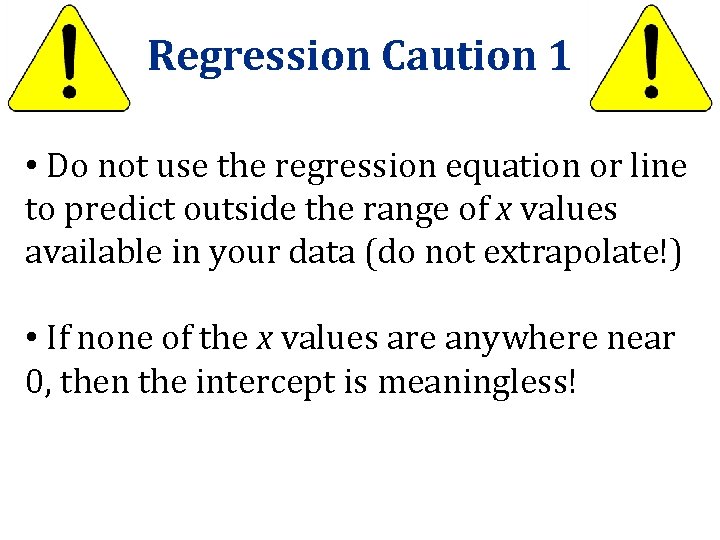

Regression Caution 1 • Do not use the regression equation or line to predict outside the range of x values available in your data (do not extrapolate!) • If none of the x values are anywhere near 0, then the intercept is meaningless!
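One way to guard against accidental extrapolation is to refuse to predict outside the observed range of x. The sketch below is an assumption of this note, not part of the course code: `predict_within_range` is a hypothetical helper, it uses the built-in `cars` data rather than the cricket data, and it only handles a simple `y ~ x` formula with no transformations.

```r
# Built-in example data (NOT the cricket data); speed ranges from 4 to 25
fit <- lm(dist ~ speed, data = cars)

# Hypothetical helper: return NA instead of a prediction when x_new is out of range
predict_within_range <- function(fit, x_new) {
  mf <- model.frame(fit)        # data actually used in the fit
  x_name <- names(mf)[2]        # name of the single explanatory variable
  x_obs <- mf[[2]]              # its observed values
  inside <- x_new >= min(x_obs) & x_new <= max(x_obs)
  newdata <- setNames(data.frame(x_new), x_name)
  pred <- unname(predict(fit, newdata = newdata))
  pred[!inside] <- NA           # blank out extrapolated predictions
  pred
}

in_range <- predict_within_range(fit, 10)   # inside the observed speeds
out_range <- predict_within_range(fit, 0)   # x = 0 is outside the data
```

Here the prediction at x = 0 (the intercept) comes back as `NA`, which mirrors the warning that the intercept is not meaningful when no x values are near 0.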

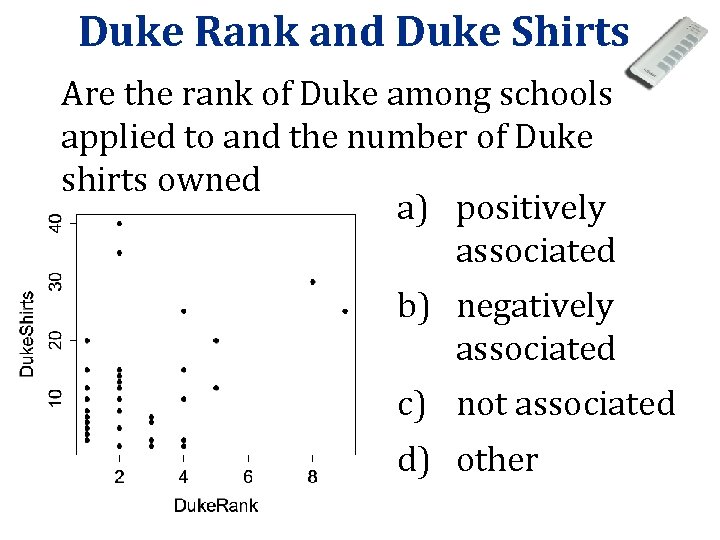

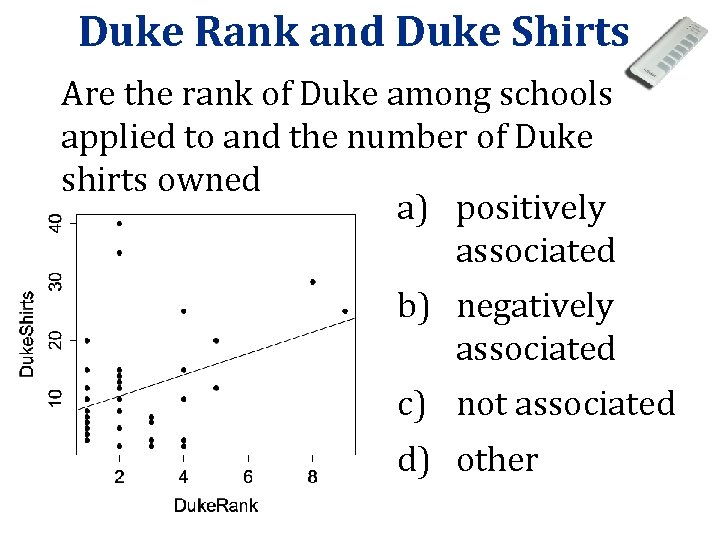

Duke Rank and Duke Shirts Are the rank of Duke among schools applied to and the number of Duke shirts owned a) positively associated b) negatively associated c) not associated d) other

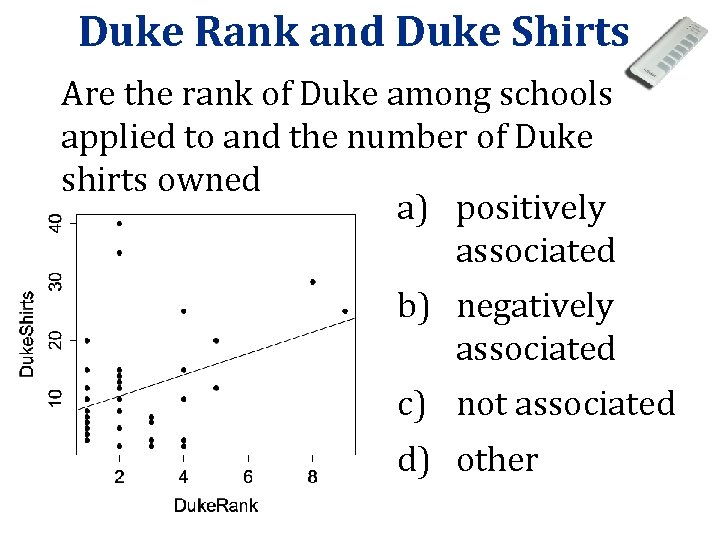


Regression Caution 2 • Computers will calculate a regression line for any two quantitative variables, even if they are not associated or if the association is not linear • ALWAYS PLOT YOUR DATA! • The regression line/equation should only be used if the association is approximately linear
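A quick illustration of why plotting matters, using the `anscombe` data that ships with base R (not a data set from these slides): two scatterplots with very different shapes produce nearly the same fitted line.

```r
# anscombe is a built-in data set in R's datasets package
fit1 <- lm(y1 ~ x1, data = anscombe)   # roughly linear scatter
fit2 <- lm(y2 ~ x2, data = anscombe)   # clearly curved scatter

# The fitted coefficients are nearly identical...
coef(fit1)
coef(fit2)

# ...and only the plots reveal that a line is a poor description of the second set
op <- par(mfrow = c(1, 2))
plot(y1 ~ x1, data = anscombe, main = "Set 1"); abline(fit1)
plot(y2 ~ x2, data = anscombe, main = "Set 2"); abline(fit2)
par(op)
```

The regression output alone gives no hint that the second relationship is not linear.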

Regression Caution 3 • Outliers (especially outliers in both variables) can be very influential on the regression line • ALWAYS PLOT YOUR DATA! http://illuminations.nctm.org/LessonDetail.aspx?ID=L455

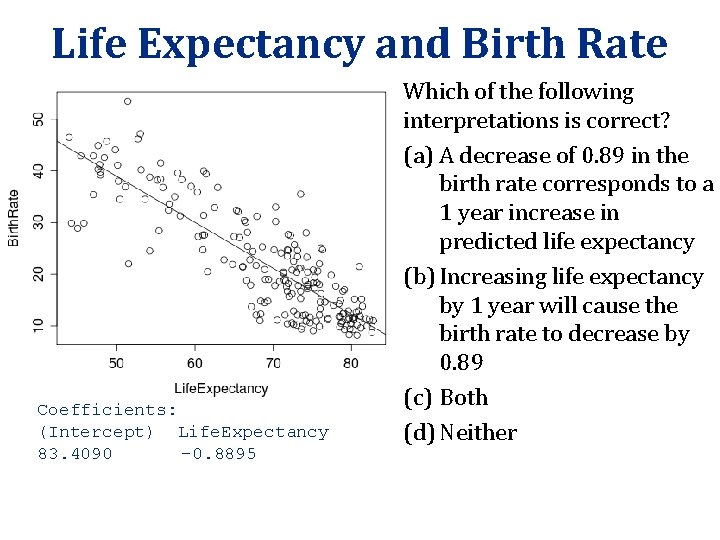

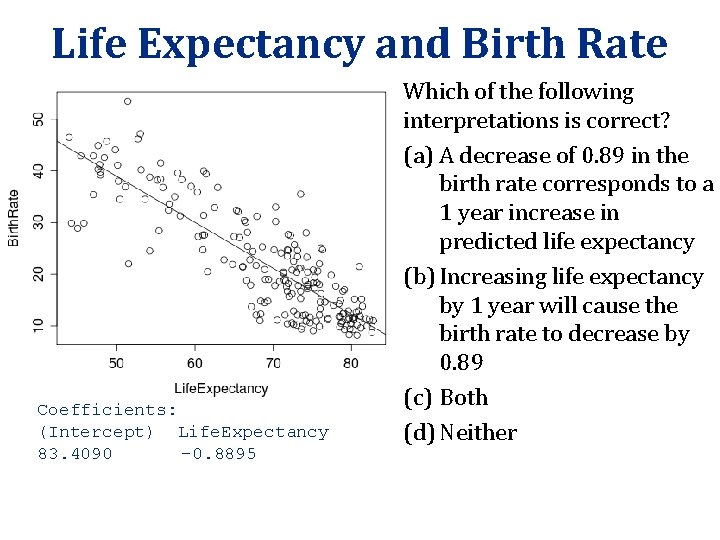

Life Expectancy and Birth Rate Coefficients: (Intercept) 83.4090, LifeExpectancy -0.8895 Which of the following interpretations is correct? (a) A decrease of 0.89 in the birth rate corresponds to a 1 year increase in predicted life expectancy (b) Increasing life expectancy by 1 year will cause the birth rate to decrease by 0.89 (c) Both (d) Neither

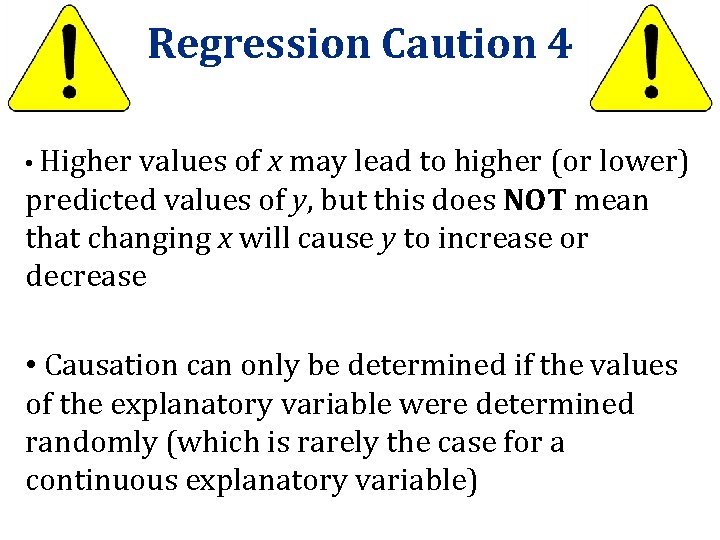

Regression Caution 4 • Higher values of x may lead to higher (or lower) predicted values of y, but this does NOT mean that changing x will cause y to increase or decrease • Causation can only be determined if the values of the explanatory variable were determined randomly (which is rarely the case for a continuous explanatory variable)

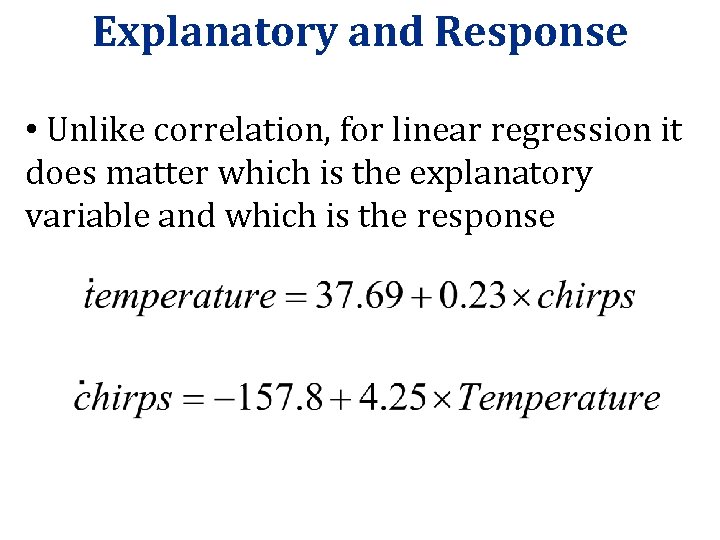

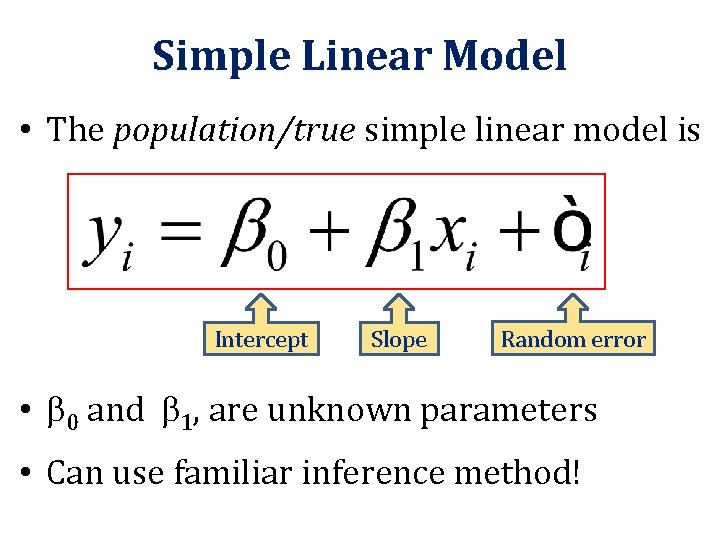

Explanatory and Response • Unlike correlation, for linear regression it does matter which is the explanatory variable and which is the response
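A short sketch of this asymmetry, again on the built-in `cars` data rather than a data set from the slides: the correlation is the same in either direction, but the two regression lines differ.

```r
x <- cars$speed
y <- cars$dist

# Correlation does not care which variable comes first
r_xy <- cor(x, y)
r_yx <- cor(y, x)

# Regression does: these are two different lines
slope_y_on_x <- unname(coef(lm(y ~ x))[2])   # predicts dist from speed
slope_x_on_y <- unname(coef(lm(x ~ y))[2])   # predicts speed from dist

# slope_x_on_y is NOT simply 1 / slope_y_on_x (that would require |r| = 1);
# instead, the product of the two slopes equals r^2
c(slope_y_on_x = slope_y_on_x,
  inverse_of_x_on_y = 1 / slope_x_on_y,
  product = slope_y_on_x * slope_x_on_y,
  r_squared = r_xy^2)
```

Swapping explanatory and response therefore changes the fitted line, not just how the equation is written.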

r=0 Challenge: If the correlation between x and y is 0, what would the regression line be?

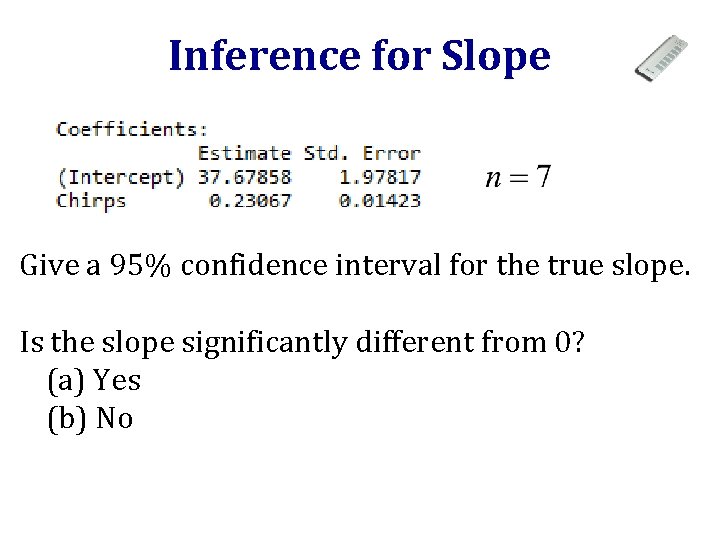

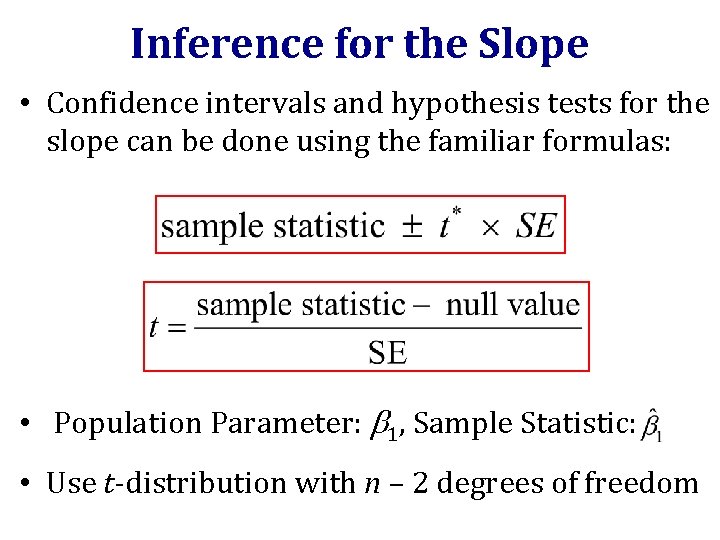

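Simple Linear Model • The population/true simple linear model is $y = \beta_0 + \beta_1 x + \varepsilon$, where $\beta_0$ is the intercept, $\beta_1$ is the slope, and $\varepsilon$ is the random error • $\beta_0$ and $\beta_1$ are unknown parameters • Can use familiar inference methods!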

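Inference for the Slope • Confidence intervals and hypothesis tests for the slope can be done using the familiar formulas: sample statistic ± t* × SE for the interval, and t = (sample statistic - null value) / SE for the test • Population parameter: $\beta_1$; sample statistic: $\hat{\beta}_1$ • Use the t-distribution with n – 2 degrees of freedom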

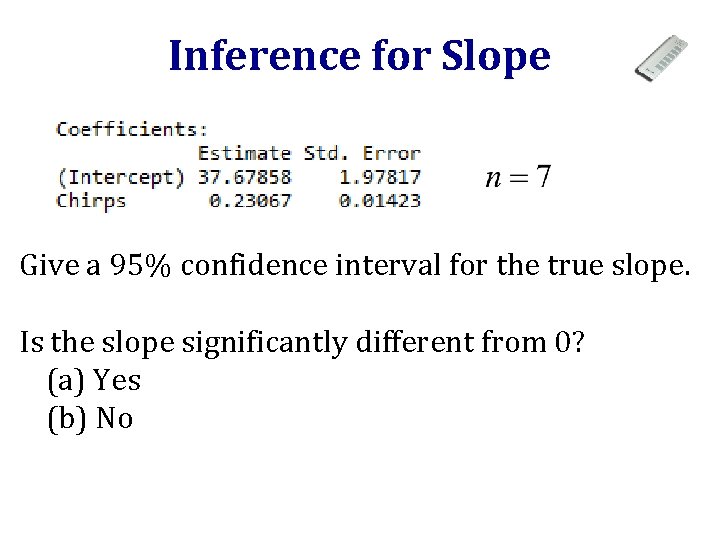

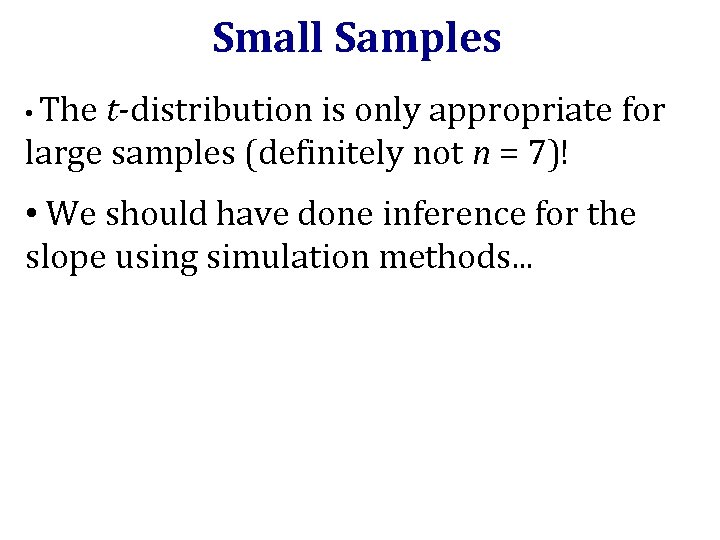

Inference for Slope Give a 95% confidence interval for the true slope. Is the slope significantly different from 0? (a) Yes (b) No


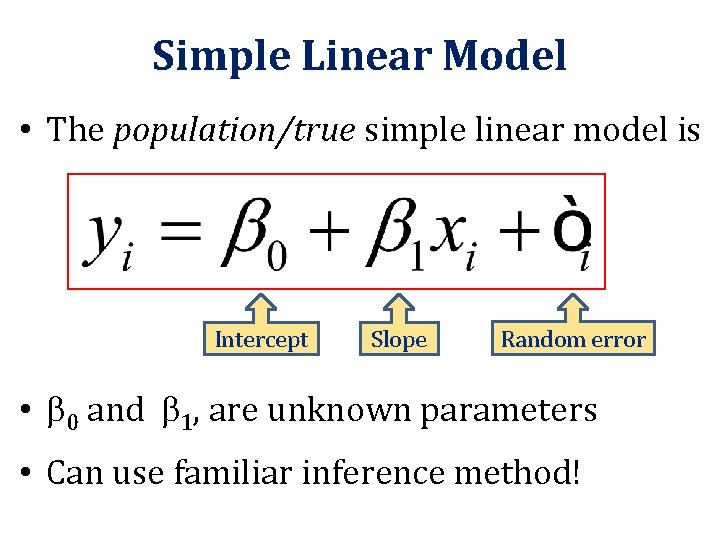

Confidence Interval > qt(.975, 5) [1] 2.570582 We are 95% confident that the true slope, regressing temperature on cricket chirp rate, is between 0.194 and 0.266 degrees per chirp per minute.
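The interval can be roughly reproduced in R as a sketch. The standard error is not stated in the text of these notes, so it is backed out here from the reported slope and the t statistic of 16.21 used in the hypothesis test; that step is an assumption, and because the inputs are rounded the endpoints may differ from the slide's in the third decimal place.

```r
slope <- 0.2307        # from the lm() output on the slides
t_stat <- 16.21        # t statistic used in the slides' hypothesis test
se <- slope / t_stat   # ASSUMPTION: SE recovered from t = slope / SE

# Critical value with n - 2 = 5 degrees of freedom
t_star <- qt(0.975, df = 5)

# statistic +/- t* x SE
ci <- slope + c(-1, 1) * t_star * se
round(ci, 3)
```

Since the interval does not contain 0, it agrees with the hypothesis test in finding the slope significantly different from 0.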


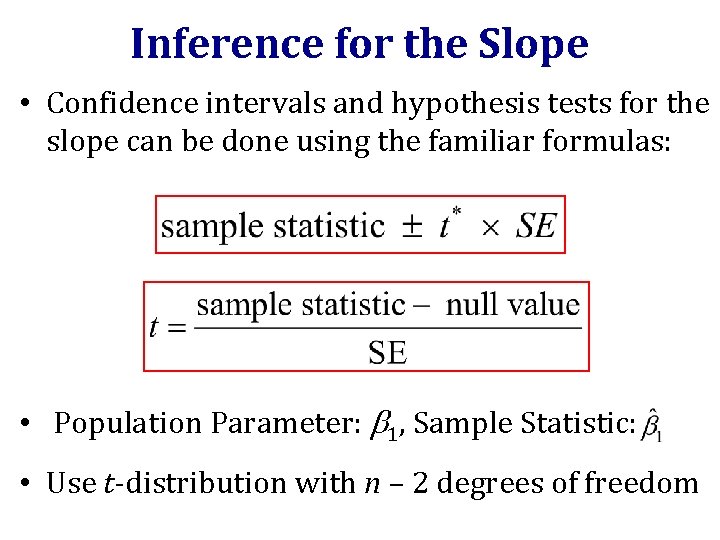

Hypothesis Test > 2*pt(16.21, 5, lower.tail=FALSE) [1] 1.628701e-05 There is strong evidence that the slope is significantly different from 0, and that there is an association between cricket chirp rate and temperature.

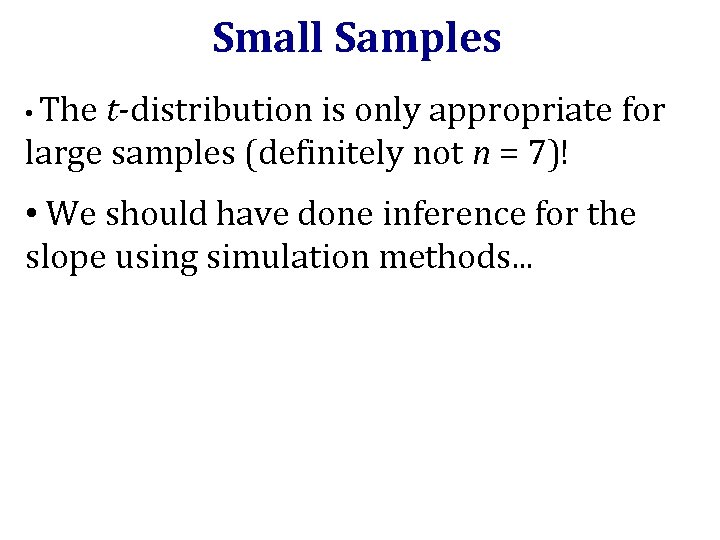

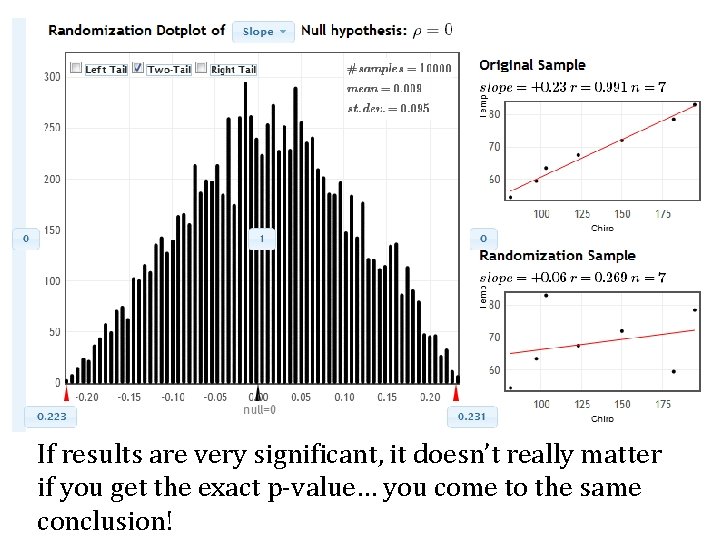

Small Samples • The t-distribution is only appropriate for large samples (definitely not n = 7)! • We should have done inference for the slope using simulation methods...
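As a rough sketch of what simulation-based inference for a slope looks like, the code below bootstraps the slope in base R. It is not the course's `resample( )` function, whose interface is not shown in these notes, and it uses the built-in `cars` data as a stand-in for the cricket data.

```r
set.seed(1)   # fixed seed so the illustration is reproducible

n <- nrow(cars)

# Resample rows with replacement, refit the line, and keep the slope each time
boot_slopes <- replicate(2000, {
  idx <- sample(n, replace = TRUE)
  unname(coef(lm(dist ~ speed, data = cars[idx, ]))[2])
})

# The standard deviation of the bootstrap distribution estimates the standard error
boot_se <- sd(boot_slopes)

# Percentile 95% bootstrap interval for the slope
boot_ci <- unname(quantile(boot_slopes, c(0.025, 0.975)))
boot_ci
```

No t-distribution is involved: the interval comes directly from the middle 95% of the resampled slopes.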

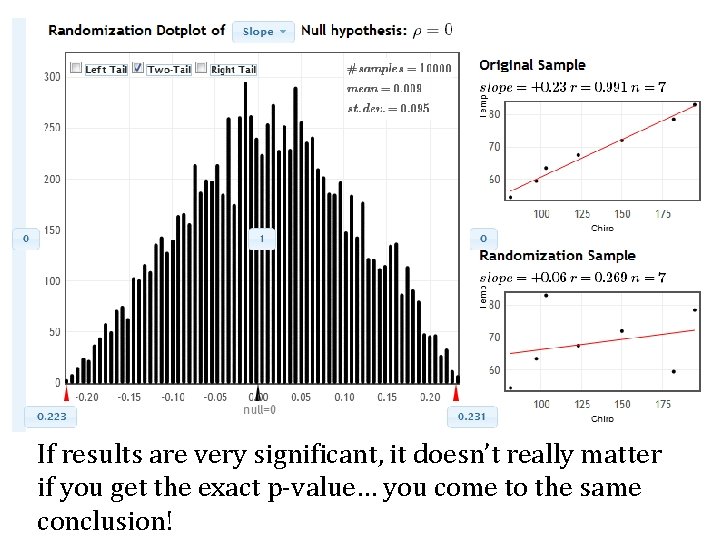

If results are very significant, it doesn’t really matter if you get the exact p-value… you come to the same conclusion!

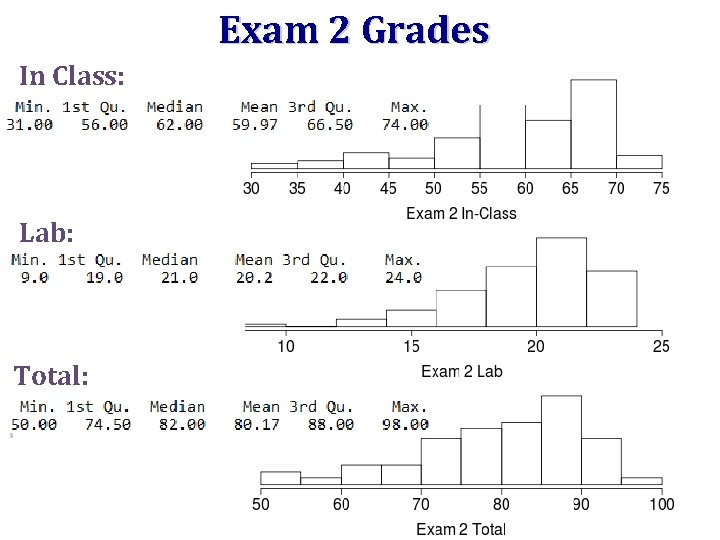

Project 2 • Details here • Group project on regression (modeling) • If you want to change groups, email me TODAY OR TOMORROW. If other people in your lab section want to change, I’ll move people around. • Need a data set with a quantitative response variable and multiple explanatory variables; explanatory variables must have at least one categorical and at least one quantitative • Proposal due next Wednesday