Shared Memory Programming for Large Scale Machines


Shared Memory Programming for Large Scale Machines

C. Barton (1), C. Cascaval (2), G. Almasi (2), Y. Zheng (3), M. Farreras (4), J. Nelson Amaral (1)

(1) University of Alberta, (2) IBM Watson Research Center, (3) Purdue, (4) Universitat Politecnica de Catalunya

IBM Research Report RC 23853, January 27, 2006

CS 6091, 2006, Michigan Technological University, 3/15/6

Abstract
- UPC is scalable and competitive with MPI on hundreds of thousands of processors.
- This paper discusses the compiler and runtime system features that achieve this performance on the IBM BlueGene/L.
- Three benchmarks are used:
  - HPC RandomAccess
  - HPC STREAMS
  - NAS Conjugate Gradient (CG)

1. BlueGene/L
- 65,536 x 2-way 700 MHz processors (low power)
- 280 Tflops sustained on HPL Linpack
- 64 x 32 x 32 3D packet-switched torus network
- XL UPC compiler and UPC runtime system (RTS)

2.1 XL Compiler Structure
- UPC source is translated to W-code.
- An early version worked as MuPC does: calls to the RTS were inserted directly into W-code. This prevents optimizations such as copy propagation and common subexpression elimination.
- The current version delays the insertion of RTS calls. W-code is extended to represent shared variables and the memory access mode (strict or relaxed).

XL Compiler (cont’d)
- The Toronto Portable Optimizer (TPO) can “apply all the classical optimizations” to shared memory accesses.
- UPC-specific optimizations are also performed.

2.2 UPC Runtime System
- The RTS targets:
  - SMPs using Pthreads
  - Ethernet and LAPI clusters using LAPI
  - BlueGene/L using the BlueGene/L message layer
- TPO does link-time optimizations between the user program and the RTS.
- Shared objects are accessed through handles.

Shared objects
- The RTS identifies five shared object types:
  - shared scalars
  - shared structures/unions/enumerations
  - shared arrays
  - shared pointers [sic] with shared targets
  - shared pointers [sic] with private targets
- “Fat” pointers increase remote access costs and limit scalability.
- (Optimizing remote accesses is discussed soon.)

Shared Variable Directory (SVD)
- Each thread on a distributed memory machine contains a two-level SVD containing handles pointing to all shared objects.
- The SVD in each thread has THREADS+1 partitions.
- Partition i contains handles for shared objects in thread i, except the last partition, which contains handles for statically declared shared arrays.
- Local sections of shared arrays do not have to be mapped to the same address on each thread.
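
The partition layout above can be sketched as a small Python model. This is only an illustration, not the RTS code: the `SVD` class, its `register` and `resolve` methods, the `(partition, index)` handle tuple, and the addresses are all hypothetical. Only the THREADS+1 partition layout and the per-thread local addresses come from the slide.

```python
THREADS = 4  # assumed thread count, for illustration only


class SVD:
    """Toy model of one thread's Shared Variable Directory (hypothetical API)."""

    def __init__(self, threads):
        # Partitions 0..threads-1 hold handles for objects owned by each
        # thread; the last partition holds statically declared shared arrays.
        self.partitions = [[] for _ in range(threads + 1)]

    def register(self, partition, local_address):
        """Store a local address and return a (partition, index) handle."""
        self.partitions[partition].append(local_address)
        return (partition, len(self.partitions[partition]) - 1)

    def resolve(self, handle):
        """Translate a handle into this thread's local address."""
        partition, index = handle
        return self.partitions[partition][index]


# Every simulated thread keeps its own SVD replica.
svds = [SVD(THREADS) for _ in range(THREADS)]

# A statically declared shared array goes into the last partition on every
# thread. The local sections sit at different (made-up) addresses.
handles = [svds[t].register(THREADS, 0x1000 + 0x40 * t) for t in range(THREADS)]

# The handle is identical everywhere; only resolve() is thread specific.
same_handle = all(h == handles[0] for h in handles)
local_addresses = [svds[t].resolve(handles[t]) for t in range(THREADS)]
```

In this model a remote thread only ever needs the handle; the owner alone turns it into an address, which is the scalability argument made on the next slide.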

SVD benefits
- Scalability: Pointers-to-shared do not have to span all of shared memory. Only the owner knows the addresses of its shared objects. Remote accesses are made via handles.
- Each thread mediates access to its shared objects, so coherence problems are reduced. (Runtime caching is beyond the scope of this paper.)
- Only nonblocking synchronization is needed for upc_global_alloc(), for example.

2.3 Messaging Library
- This topic is beyond the scope of this talk.
- Note, however, that the network layer does not support one-sided communication.

3. Compiler Optimizations
- 3.1 upc_forall(init; limit; incr; affinity)
- 3.2 local memory optimizations
- 3.3 update optimizations

3.1 upc_forall
- The affinity parameter may be:
  - pointer-to-shared
  - integer type
  - continue
- If the (unmodified) induction variable is used as the affinity parameter, the conditional is eliminated.
- This is the only optimization technique used.
- “... even this simple optimization captures most of the loops in the existing UPC benchmarks.”
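
A minimal sketch of what eliminating the conditional can mean, assuming an integer affinity equal to the induction variable `i`, a loop that starts at 0, and unit stride. The function names are hypothetical, and this models the idea in Python rather than reproducing the code the XL compiler generates.

```python
def forall_with_conditional(n, mythread, threads):
    """Every thread walks all n iterations and tests affinity each time."""
    executed = []
    for i in range(n):
        if i % threads == mythread:  # affinity test on every iteration
            executed.append(i)
    return executed


def forall_strided(n, mythread, threads):
    """Same iterations, with the affinity test folded into the loop bounds."""
    return list(range(mythread, n, threads))
```

Both functions return the same iteration set for a given thread, but the second performs roughly n / threads loop trips instead of n and has no branch in the loop body.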

Observations
- upc_forall loops cannot be meaningfully nested.
- upc_forall loops must be inner loops for this optimization to pay off.

3.2 Local Memory Operations
- Try to turn dereferences of fat pointers into dereferences of ordinary C pointers.
- Optimization is attempted only when affinity can be statically determined.
- Move the base address calculation to the loop preheader (initialization block).
- Generate code to access intrinsic types directly; otherwise use memcpy.
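
The effect of hoisting the base address can be illustrated with a toy model. Everything here is an assumption made for the sketch: the `CyclicSharedArray` class, the cyclic (block size 1) layout, and the `translations` counter that stands in for the cost of a fat-pointer dereference.

```python
class CyclicSharedArray:
    """Toy shared array, cyclic layout: element i lives on thread i % threads."""

    def __init__(self, n, threads):
        self.threads = threads
        self.sections = [[] for _ in range(threads)]
        for i in range(n):
            self.sections[i % threads].append(i)  # element i starts as value i
        self.translations = 0  # counts fat-pointer style address translations

    def locate(self, i):
        """Fat-pointer path: map a global index to (local section, offset)."""
        self.translations += 1
        return self.sections[i % self.threads], i // self.threads

    def local_base(self, thread):
        """One translation that yields the base of a thread's local section."""
        self.translations += 1
        return self.sections[thread]


def double_generic(arr, n, mythread):
    """Translate the shared address on every access."""
    for i in range(mythread, n, arr.threads):
        section, offset = arr.locate(i)
        section[offset] *= 2


def double_privatized(arr, mythread):
    """Hoist the base address out of the loop, then index it directly."""
    base = arr.local_base(mythread)  # done once, in the loop preheader
    for k in range(len(base)):
        base[k] *= 2
```

With 10 elements on 4 threads, the generic version performs 10 translations in total and the privatized version performs 4, one per thread, while producing the same array contents.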

3.3 Update Optimizations
- Consider operations of the form r = r op B, where r is a remote shared object and B is local or remote.
- Implement this as an active message [Culler, UC Berkeley].
- Send the entire instruction to the thread with affinity to r.
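
A hedged sketch of the difference between a get/put update and an active-message update. The `OwnerThread` class and its handler names are hypothetical, and the message counts describe this toy model only, not the BlueGene/L message layer.

```python
import operator


class OwnerThread:
    """Toy model of the thread that has affinity to r."""

    def __init__(self, size):
        self.memory = [0] * size
        self.messages_received = 0

    def handle_get(self, index):
        self.messages_received += 1
        return self.memory[index]

    def handle_put(self, index, value):
        self.messages_received += 1
        self.memory[index] = value

    def handle_update(self, op, index, operand):
        """Active message: the owner applies r = r op B itself."""
        self.messages_received += 1
        self.memory[index] = op(self.memory[index], operand)


def update_get_put(owner, index, op, b):
    """Fetch r, combine it locally, then write it back: two requests."""
    r = owner.handle_get(index)
    owner.handle_put(index, op(r, b))


def update_active_message(owner, index, op, b):
    """Ship the whole operation to the owner: one request."""
    owner.handle_update(op, index, b)
```

For example, `update_active_message(owner, 2, operator.xor, 5)` leaves the same value in `owner.memory[2]` as the get/put version, but the owner sees one request instead of two and the requester never waits for the old value of r.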

4. Experimental Results
- 4.1 Hardware
- 4.2 HPC RandomAccess benchmark
- 4.3 Embarrassingly Parallel (EP) STREAM triad
- 4.4 NAS CG
- 4.5 Performance evaluation

4.1 Hardware
- Development done on 64-processor node cards.
- TJ Watson: 20 racks, 40,960 processors
- LLNL: 64 racks, 131,072 processors

4.2 HPC RandomAccess
- 111 lines of code
- Read-modify-write randomly selected remote objects.
- Use 50% of memory.
- [Seems a good match for the update optimization.]
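
The read-modify-write pattern can be sketched in a few lines. This follows the XOR update rule of the public HPC Challenge reference code as we understand it; the seed value, the function names, and the single-process table are assumptions for illustration, and the 111-line UPC version is not reproduced here.

```python
MASK64 = (1 << 64) - 1
POLY = 0x7  # feedback polynomial, as in the HPC Challenge reference code


def next_random(ran):
    """Advance the 64-bit shift-register sequence by one step."""
    high_bit_set = ran >> 63
    return ((ran << 1) & MASK64) ^ (POLY if high_bit_set else 0)


def random_access(table, updates, seed=1):
    """XOR pseudo-random values into pseudo-random slots of table."""
    size = len(table)  # assumed to be a power of two
    ran = seed
    for _ in range(updates):
        ran = next_random(ran)
        table[ran & (size - 1)] ^= ran  # read-modify-write of one slot
    return table
```

Because XOR is its own inverse, applying the same update stream twice restores the original table, which gives a simple correctness check. In the distributed benchmark most slots are remote, so each `^=` is exactly the r = r op B form targeted by the update optimization.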

4.3 EP STREAM Triad
- 105 lines of code
- All computation is done locally within a upc_forall loop.
- [Seems like a good match for the loop optimization.]
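
For reference, the triad kernel is a[i] = b[i] + scalar * c[i]. The sketch below models the embarrassingly parallel structure under stated assumptions: the function names, the initial values, and the per-thread section size are hypothetical, and the threads are simulated sequentially.

```python
def triad_local(a, b, c, scalar):
    """a[i] = b[i] + scalar * c[i] over one thread's local section."""
    for i in range(len(a)):
        a[i] = b[i] + scalar * c[i]


def run_triad(threads, local_n, scalar=3.0):
    """Each simulated thread updates only the section it owns."""
    sections = []
    for _ in range(threads):
        b = [1.0] * local_n
        c = [2.0] * local_n
        a = [0.0] * local_n
        triad_local(a, b, c, scalar)  # no data from other threads is needed
        sections.append(a)
    return sections
```

No thread reads another thread's data, so once the affinity test and the fat-pointer dereferences are removed, the loop body is ordinary local arithmetic.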

4.4 NAS CG
- GW’s translation of MPI code into UPC.
- Uses upc_memcpy in place of MPI sends and receives.
- It is not clear whether IBM used GW’s hand-optimized version.
- IBM mentions that they manually privatized some pointers, which is what is done in GW’s optimized version.

4.5 Performance Evaluation
- Table 1:
  - FE is the MuPC-style front end containing some optimizations.
  - All others use the TPO front end:
    - no optimizations
    - “indexing” is the shared-to-local pointer reduction
    - “update” is active messages
    - “forall” is the upc_forall affinity optimization
  - Speedups are relative to no TPO optimization.
  - The maximum speedup for random and stream is 2.11.

Combined Speedup
- The combined stream speedup is 241!
- This is attributed to the shared-to-local pointer reductions.
- This seems inconsistent with “indexing” speedups of 1.01 and 1.32 for the random and streams benchmarks, respectively.

Table 2: Random Access
- This is basically a measurement of how many asynchronous messages can be started up.
- It is not known whether the network can do combining.
- Beats MPI (0.56 vs. 0.45) on 2048 processors.

Table 3: Streams
- This is EP.

CG
- Speedup tracks MPI through 512 processors.
- Speedup exceeds MPI on 1024 and 2048 processors.
- This is a fixed-problem-size benchmark, so network latency eventually dominates.
- The improvement over MPI is explained: “In the UPC implementation, due to the use of one-sided communication, the overheads are smaller” compared to MPI two-sided overhead. [But the BlueGene/L network does not implement one-sided communication.]
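
Why latency eventually dominates a fixed-size problem can be seen with a deliberately simple cost model, T(p) = work / p + latency. The model and its parameter values are assumptions made for this sketch; they are not taken from the paper and are not fitted to the CG results.

```python
def modeled_speedup(p, work=1000.0, latency=1.0):
    """Speedup of a fixed-size problem under T(p) = work / p + latency.

    Both parameters are illustrative time units, not measurements.
    """
    serial_time = work  # one processor does all the work, no communication
    parallel_time = work / p + latency
    return serial_time / parallel_time


speedups = {p: modeled_speedup(p) for p in (64, 512, 2048)}
```

In this model the speedup keeps growing with p but can never exceed work / latency, and parallel efficiency (speedup divided by p) falls steadily as the per-processor share of the work shrinks toward the fixed latency term.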

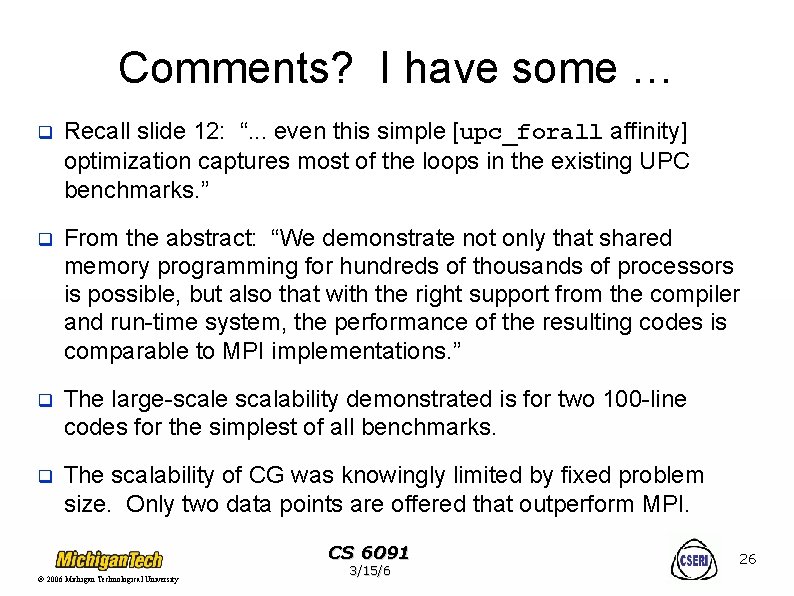

Comments? I have some ...
- Recall slide 12 (3.1 upc_forall): “... even this simple [upc_forall affinity] optimization captures most of the loops in the existing UPC benchmarks.”
- From the abstract: “We demonstrate not only that shared memory programming for hundreds of thousands of processors is possible, but also that with the right support from the compiler and run-time system, the performance of the resulting codes is comparable to MPI implementations.”
- The large-scale scalability demonstrated is for two 100-line codes for the simplest of all benchmarks.
- The scalability of CG was knowingly limited by fixed problem size. Only two data points are offered that outperform MPI.

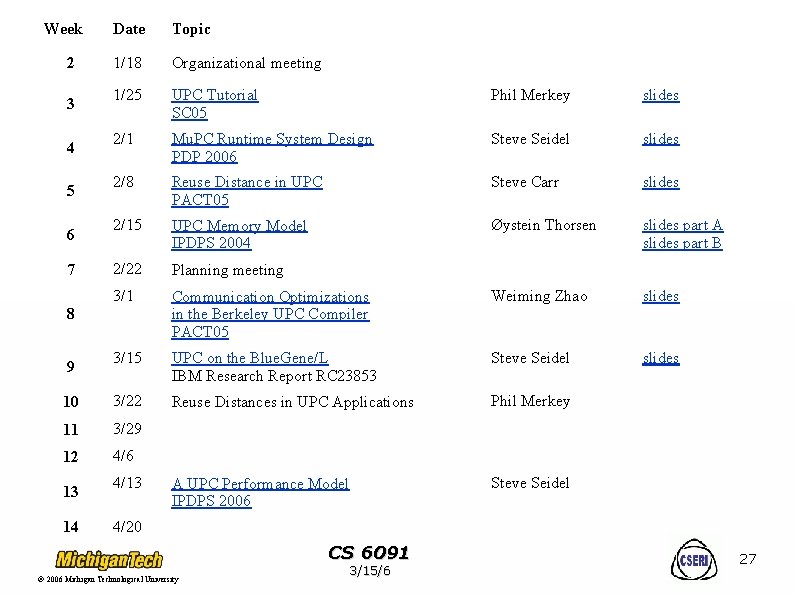

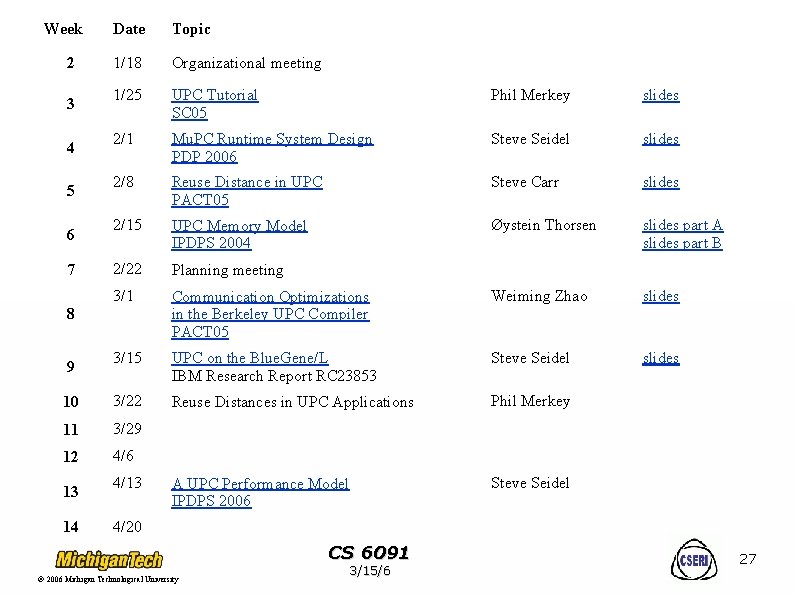

| Week | Date | Topic | Source | Presenter |
|------|------|-------|--------|-----------|
| 2 | 1/18 | Organizational meeting | | |
| 3 | 1/25 | UPC Tutorial | SC 05 | Phil Merkey |
| 4 | 2/1 | MuPC Runtime System Design | PDP 2006 | Steve Seidel |
| 5 | 2/8 | Reuse Distance in UPC | PACT 05 | Steve Carr |
| 6 | 2/15 | UPC Memory Model | IPDPS 2004 | Øystein Thorsen |
| 7 | 2/22 | Planning meeting | | |
| 8 | 3/1 | Communication Optimizations in the Berkeley UPC Compiler | PACT 05 | Weiming Zhao |
| 9 | 3/15 | UPC on the BlueGene/L | IBM Research Report RC 23853 | Steve Seidel |
| 10 | 3/22 | Reuse Distances in UPC Applications | | Phil Merkey |
| 11 | 3/29 | | | |
| 12 | 4/6 | A UPC Performance Model | IPDPS 2006 | Steve Seidel |
| 13 | 4/13 | | | |
| 14 | 4/20 | | | |