Shared Memory Consistency Models: A Tutorial. Based on the research of Sarita V. Adve and Kourosh Gharachorloo.

Memory Consistency Models • What does a programmer expect from shared memory? • What is important? • How can the hardware guarantee it? • What relaxations can be allowed?

Sequential Consistency

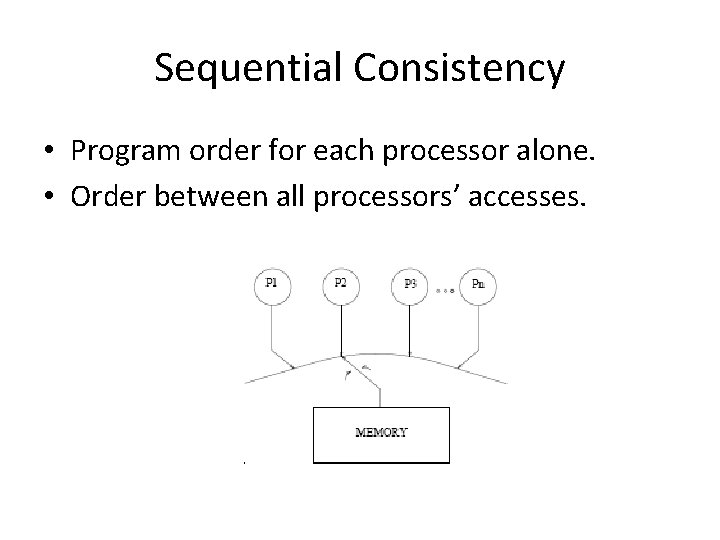

Sequential Consistency • Each processor's accesses appear in its own program order. • The accesses of all processors appear in a single total order.

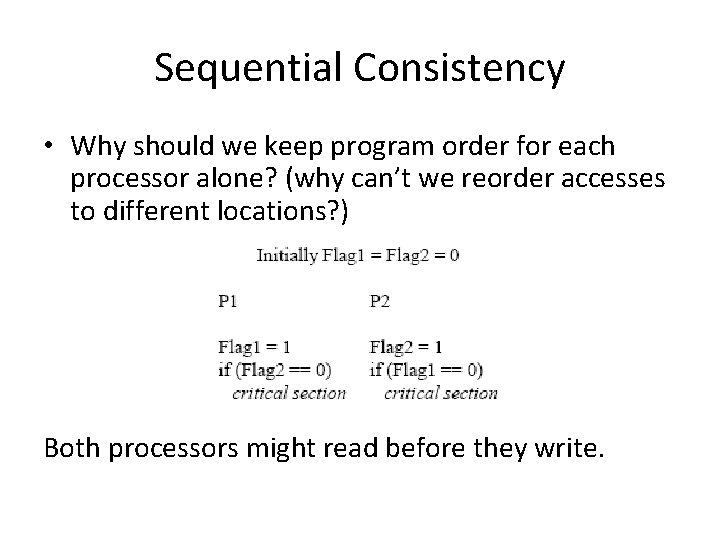

Sequential Consistency • Why should we keep program order for each processor alone (why can’t we reorder accesses to different locations)? Otherwise, both processors might perform their reads before their writes take effect.
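
The question above can be checked by brute force. The following is a minimal sketch, assuming a two-processor example in which each processor writes its own flag and then reads the other's; the names `Flag1`, `Flag2`, `r1` and `r2` are illustrative assumptions, not taken from the slide image. It enumerates every interleaving that keeps program order and collects the values the two reads can return.

```python
from itertools import combinations

# Each operation is (kind, location, value-to-write or register-name).
P1 = [("write", "Flag1", 1), ("read", "Flag2", "r1")]
P2 = [("write", "Flag2", 1), ("read", "Flag1", "r2")]

def interleavings(a, b):
    """Yield every merge of a and b that keeps each list's internal order."""
    n = len(a) + len(b)
    for slots in combinations(range(n), len(a)):
        ia, ib = iter(a), iter(b)
        yield [next(ia) if i in slots else next(ib) for i in range(n)]

def run(schedule):
    """Execute one total order against a single shared memory."""
    memory = {"Flag1": 0, "Flag2": 0}
    regs = {}
    for kind, loc, arg in schedule:
        if kind == "write":
            memory[loc] = arg
        else:
            regs[arg] = memory[loc]
    return regs["r1"], regs["r2"]

def sc_outcomes(p1, p2):
    """All (r1, r2) results reachable under sequential consistency."""
    return {run(s) for s in interleavings(p1, p2)}

outcomes = sc_outcomes(P1, P2)
print(sorted(outcomes))  # [(0, 1), (1, 0), (1, 1)]
```

With program order kept, the result (0, 0) never appears. Passing the reversed lists (`P1[::-1]`, `P2[::-1]`), which models each read being moved ahead of its processor's write, makes (0, 0) reachable.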

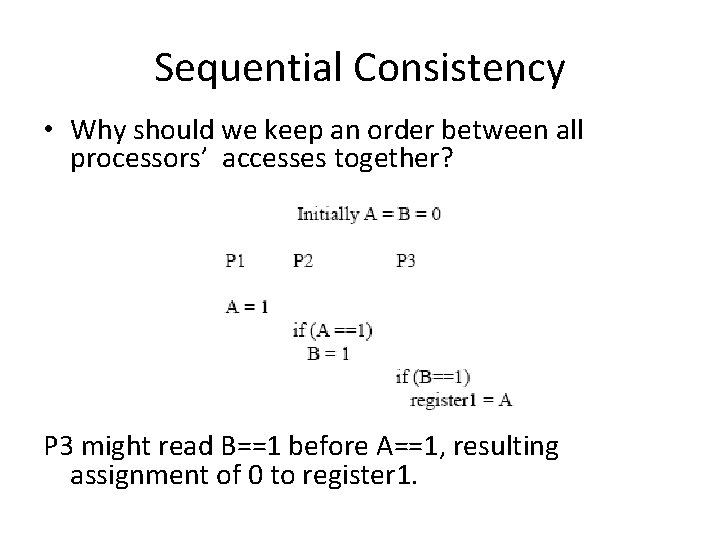

Sequential Consistency • Why should we keep a single order between all processors’ accesses? Otherwise P3 might see B == 1 before it sees A == 1, so that 0 is assigned to register1.

Implementing Sequential Consistency • Hardware optimizations can violate Sequential Consistency. • Extra communication can be used to prevent those violations. • We will consider two types of architectures: – Without caches – With caches

Architectures Without Caches

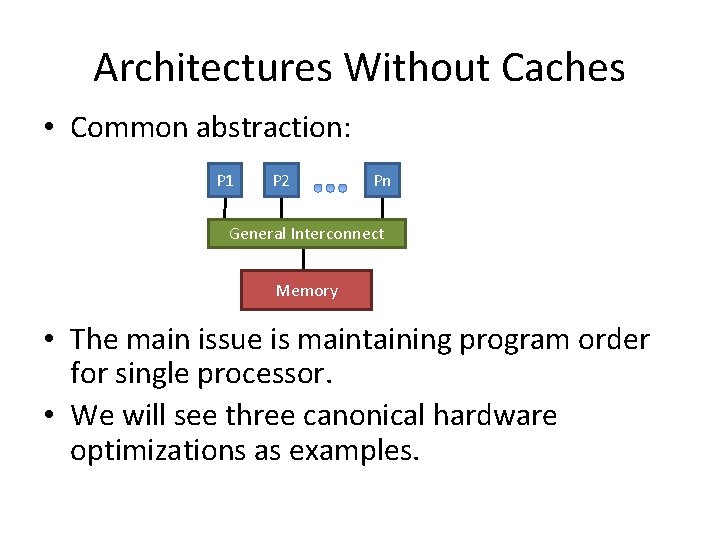

Architectures Without Caches • Common abstraction: processors P1, P2, ..., Pn connected to memory through a general interconnect. • The main issue is maintaining program order for each single processor. • We will see three canonical hardware optimizations as examples.

Architectures Without Caches • Write buffers with bypassing capability: • On both processors, a write might be delayed until after a later read. • On a single processor such an optimization would be fine. • To preserve Sequential Consistency we must enforce write-read order.
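
The effect of a bypassing write buffer can be sketched with a small deterministic model. This is an illustration under assumptions, not a description of any real machine: `BufferedProcessor`, `dekker`, `Flag1` and `Flag2` are hypothetical names, and draining the buffer before the read stands in for whatever mechanism enforces write-read order.

```python
class BufferedProcessor:
    """A processor with a FIFO write buffer that later reads may bypass."""

    def __init__(self, memory):
        self.memory = memory
        self.buffer = []  # pending (location, value) writes

    def write(self, loc, value):
        self.buffer.append((loc, value))  # not yet visible to other processors

    def read(self, loc):
        # Forward from our own buffer if we have a pending write to loc.
        for pending_loc, value in reversed(self.buffer):
            if pending_loc == loc:
                return value
        return self.memory[loc]  # otherwise bypass the buffered writes

    def drain(self):
        """Make all buffered writes visible in memory, oldest first."""
        for loc, value in self.buffer:
            self.memory[loc] = value
        self.buffer.clear()


def dekker(drain_before_read):
    memory = {"Flag1": 0, "Flag2": 0}
    p1, p2 = BufferedProcessor(memory), BufferedProcessor(memory)
    p1.write("Flag1", 1)
    p2.write("Flag2", 1)
    if drain_before_read:  # enforce write-read order
        p1.drain()
        p2.drain()
    r1 = p1.read("Flag2")
    r2 = p2.read("Flag1")
    p1.drain()
    p2.drain()
    return r1, r2


print(dekker(drain_before_read=False))  # (0, 0): both reads bypass the writes
print(dekker(drain_before_read=True))   # (1, 1)
```

The first call produces the outcome that Sequential Consistency forbids; the second shows that completing the write before the read removes it, at the cost of stalling the processor.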

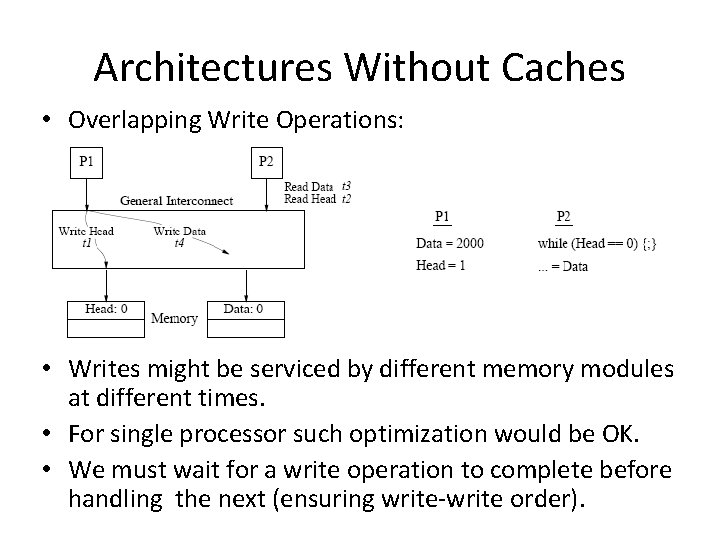

Architectures Without Caches • Overlapping write operations: • Writes might be serviced by different memory modules at different times. • On a single processor such an optimization would be fine. • To preserve Sequential Consistency we must wait for each write to complete before issuing the next one (ensuring write-write order).
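
A rough sketch of the overlapping-writes problem, assuming P1 writes `Data` and then `Head` to two memory modules with different (made-up) latencies while P2 spins on `Head` and then reads `Data`. The latencies, the value 2000 and the function name `run` are assumptions chosen for illustration.

```python
import heapq


def run(wait_for_ack):
    """Return the Data value P2 observes once it has seen Head == 1."""
    latency = {"Data": 5, "Head": 1}  # hypothetical per-module delays
    memory = {"Data": 0, "Head": 0}
    arrivals = []  # heap of (arrival_time, issue_order, location, value)

    # P1 issues its two writes in program order.
    now = 0
    for order, (loc, value) in enumerate([("Data", 2000), ("Head", 1)]):
        done = now + latency[loc]
        heapq.heappush(arrivals, (done, order, loc, value))
        if wait_for_ack:
            now = done  # stall until this write has completed

    # P2 polls Head once per time step, then reads Data.
    t = 0
    while True:
        while arrivals and arrivals[0][0] <= t:
            _, _, loc, value = heapq.heappop(arrivals)
            memory[loc] = value
        if memory["Head"] == 1:
            return memory["Data"]
        t += 1


print(run(wait_for_ack=False))  # 0: Head overtook Data
print(run(wait_for_ack=True))   # 2000
```

When the writes overlap, the write to `Head` reaches its module first and P2 reads stale `Data`. Waiting for each acknowledgment restores write-write order.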

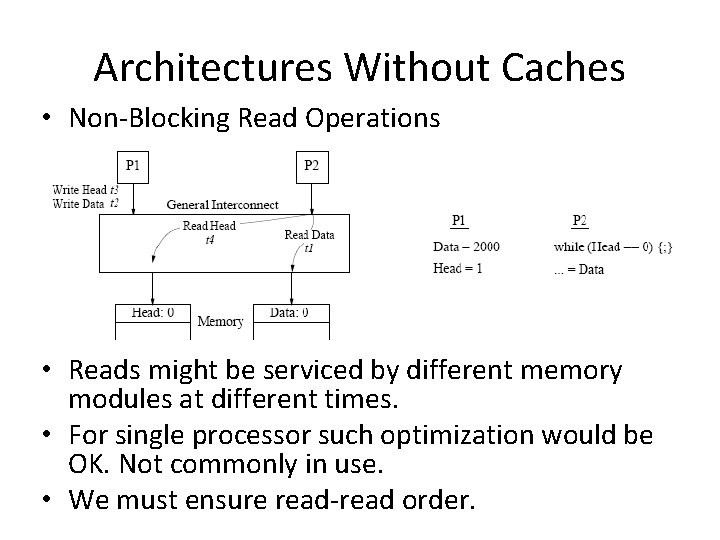

Architectures Without Caches • Non-blocking read operations: • Reads might be serviced by different memory modules at different times. • On a single processor such an optimization would be fine. Not commonly used. • To preserve Sequential Consistency we must enforce read-read order.

Architectures With Caches

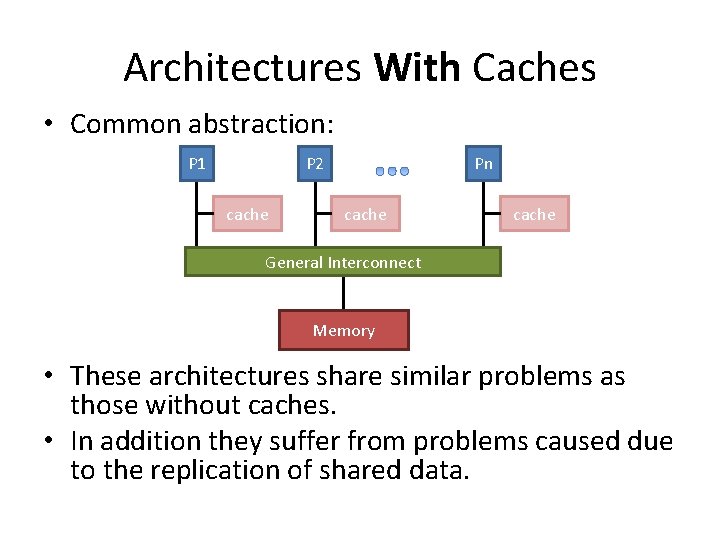

Architectures With Caches • Common abstraction: processors P1, P2, ..., Pn, each with its own cache, connected to memory through a general interconnect. • These architectures share the problems of those without caches. • In addition, they suffer from problems caused by the replication of shared data.

Cache Coherence and Sequential Consistency • A common definition of cache coherence: 1. A write is eventually seen by all processors. 2. Writes to the same location appear to be seen in the same order by all processors. • This is not enough for Sequential Consistency, which also requires writes to all locations (not only to the same one) to be seen in the same order by all processors, and each processor's operations to appear in program order. • Cache coherence can be considered a mechanism that propagates writes to the cached copies. • It can be used by a memory consistency model.

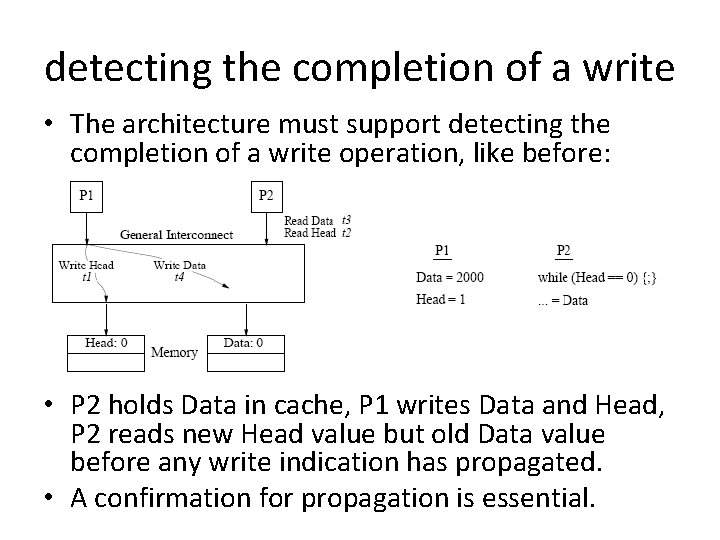

Detecting the Completion of a Write • The architecture must support detecting the completion of a write operation. As before: • P2 holds Data in its cache; P1 writes Data and then Head; P2 reads the new Head value but the old Data value, because the write to Data has not yet propagated to its cache. • An acknowledgment that the write has propagated is essential.

Writes’ Atomicity • The illusion of atomic writes can be maintained by enforcing two conditions. • For each condition we will see: – The motivation (why we need it) – The description (what we should enforce) – The implementation (how we can enforce it)

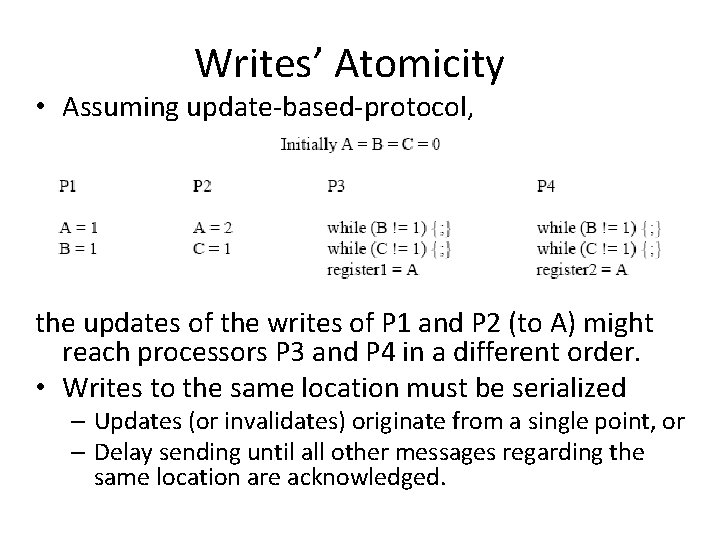

Writes’ Atomicity • Assuming an update-based protocol, the updates for the writes of P1 and P2 (to A) might reach processors P3 and P4 in different orders. • Writes to the same location must be serialized: – Updates (or invalidates) originate from a single point, or – Sending is delayed until all other messages regarding the same location have been acknowledged.

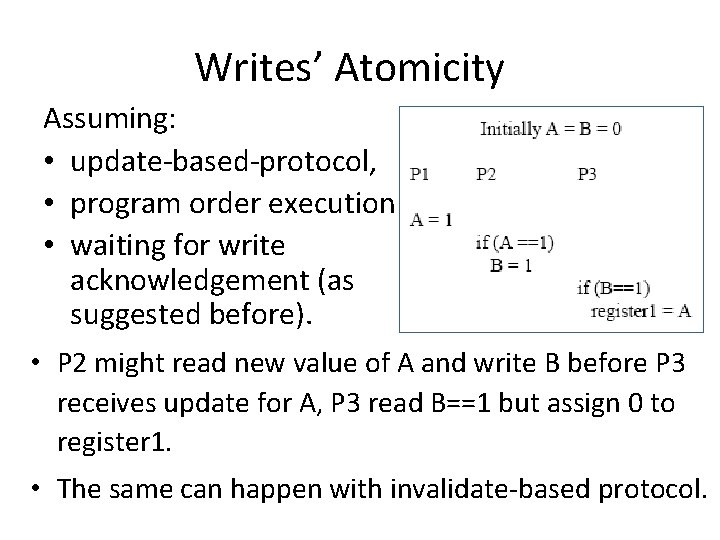

Writes’ Atomicity • Assuming: – an update-based protocol, – execution in program order, – waiting for write acknowledgments (as suggested before). • P2 might read the new value of A and write B before P3 receives the update for A; P3 then reads B == 1 but assigns 0 to register1. • The same can happen with an invalidate-based protocol.
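
The scenario can be replayed step by step. The sketch below assumes the three-processor program implied by the slide (P1: `A = 1`; P2: `if A == 1: B = 1`; P3: `if B == 1: register1 = A`) and models each cache as a plain dictionary; the function name `run` and its parameter are hypothetical.

```python
def run(delay_a_to_p3):
    """Replay the example; return what P3 assigns to register1."""
    # Each processor's cached copy of the shared locations.
    caches = {p: {"A": 0, "B": 0} for p in ("P1", "P2", "P3")}

    def update(loc, value, targets):
        """Deliver an update message to the listed caches."""
        for p in targets:
            caches[p][loc] = value

    # P1: A = 1. The update reaches P2 at once, P3 possibly later.
    update("A", 1, ["P1", "P2"])
    if not delay_a_to_p3:
        update("A", 1, ["P3"])

    # P2: if (A == 1) B = 1
    if caches["P2"]["A"] == 1:
        update("B", 1, ["P1", "P2", "P3"])

    # P3: if (B == 1) register1 = A
    register1 = None
    if caches["P3"]["B"] == 1:
        register1 = caches["P3"]["A"]

    update("A", 1, ["P3"])  # the delayed update finally arrives
    return register1


print(run(delay_a_to_p3=True))   # 0: P3 saw B == 1 before the new A
print(run(delay_a_to_p3=False))  # 1
```

Every processor here executes in program order, yet the result 0 still appears, because P2 was allowed to read the new value of A before P3 had received it.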

Writes’ Atomicity • We should prohibit a read from returning a newly written value until all cached copies have acknowledged receipt of the invalidation or update message generated by the write. • With update-based protocols this is not straightforward. • A two-phase update scheme can be employed: – Phase 1: send the update and receive the acknowledgments. – Phase 2: send a confirmation to all updated caches.
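
A minimal sketch of the two-phase update scheme, assuming that a cache which has received an update but not yet the confirmation simply refuses to serve reads of that location. The class `Cache` and the helpers `phase_one` and `phase_two` are hypothetical names, and returning `None` stands in for stalling the read.

```python
class Cache:
    def __init__(self):
        self.value = 0
        self.readable = True  # False between the update and its confirmation

    def receive_update(self, value):
        self.value = value
        self.readable = False  # phase 1: hold the new value back
        return "ack"

    def receive_confirmation(self):
        self.readable = True  # phase 2: reads may now return the new value

    def read(self):
        if not self.readable:
            return None  # a real cache would stall the read instead
        return self.value


def phase_one(caches, value):
    """Send the update to every cache and collect the acknowledgments."""
    return [cache.receive_update(value) for cache in caches]


def phase_two(caches):
    """Confirm to every cache that all acknowledgments have arrived."""
    for cache in caches:
        cache.receive_confirmation()


caches = [Cache(), Cache(), Cache()]
acks = phase_one(caches, 1)
during = [cache.read() for cache in caches]  # no cache exposes the new value yet
if all(ack == "ack" for ack in acks):
    phase_two(caches)
after = [cache.read() for cache in caches]
print(during, after)  # [None, None, None] [1, 1, 1]
```

No read can return the new value until every copy has been updated, which is the condition stated above.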

Compilers • Compilers also apply optimizations. • For example: – Register allocation – Software pipelining • Such optimizations might reorder shared memory operations and cause violations similar to those caused by hardware, or even worse.

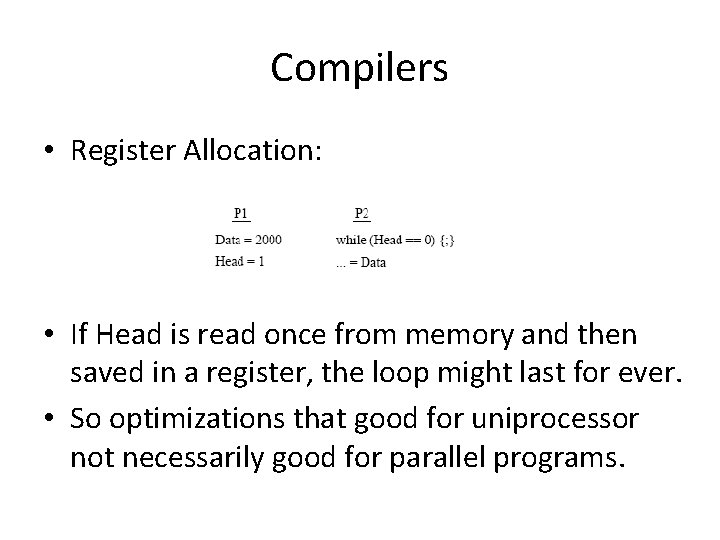

Compilers • Register allocation: • If Head is read from memory once and then kept in a register, the loop might run forever. • So optimizations that are good for a uniprocessor are not necessarily good for parallel programs.
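
The register-allocation hazard can be mimicked in a single-threaded sketch. It assumes a spin loop that waits for `Head` to become non-zero, with the other processor's write injected at a chosen iteration; the function names, the `writer_step` parameter and the iteration bound are illustrative assumptions.

```python
def spin_reloading(max_iterations, writer_step):
    """Re-read Head from memory on every iteration."""
    memory = {"Head": 0}
    for i in range(max_iterations):
        if i == writer_step:
            memory["Head"] = 1  # the other processor's write lands here
        if memory["Head"] != 0:
            return i  # the loop exits at this iteration
    return None  # never exited within the bound


def spin_register_allocated(max_iterations, writer_step):
    """Read Head once and keep the value in a local 'register'."""
    memory = {"Head": 0}
    head = memory["Head"]  # hoisted out of the loop by the optimizer
    for i in range(max_iterations):
        if i == writer_step:
            memory["Head"] = 1  # memory changes, the register does not
        if head != 0:
            return i
    return None


print(spin_reloading(100, writer_step=3))           # 3
print(spin_register_allocated(100, writer_step=3))  # None
```

The second version is a legal transformation for a sequential program, since nothing inside the loop body writes `Head`, but it never observes the other processor's write.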

Sequential Consistency (Summary) • Many hardware and compiler optimizations can violate Sequential Consistency. • Hardware optimizations typically need to satisfy the following requirements: – Program order: a processor must ensure that one memory access completes before continuing to the next (through an acknowledgment from memory, or from all processors in architectures with caches). – Write atomicity: writes to the same location should be serialized, and the value of a write should not be returned by a read until all invalidates or updates are acknowledged.

Sequential Consistency (Summary) • Some hardware optimizations do not violate Sequential Consistency: – Prefetching ownership for delayed writes (for invalidation-based protocols) – Speculatively servicing delayed reads • There are compiler algorithms that detect when memory operations can be reordered without violating Sequential Consistency. – They can also be used for hardware optimizations.

Relaxed Memory Models

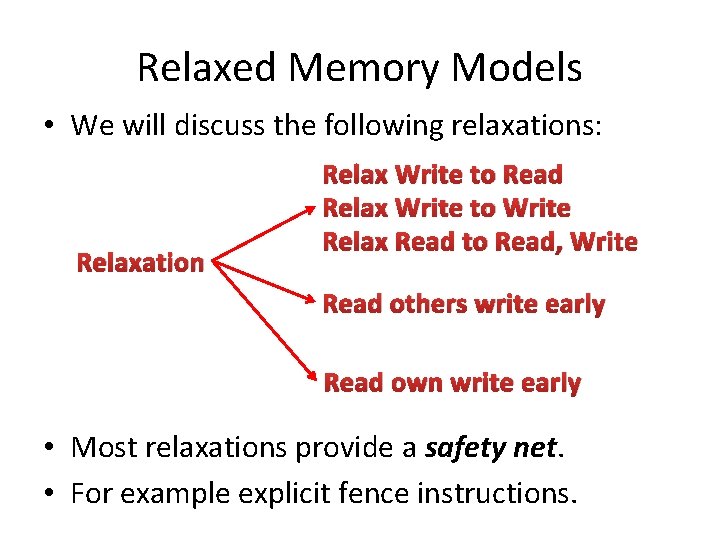

Relaxed Memory Models • Relaxing Sequential Consistency can improve the performance of hardware shared-memory systems. • We categorize relaxed memory consistency models by how they relax: – the program order requirement, and – the write atomicity requirement.

Relaxed Memory Models • We will discuss the following relaxations: – Relax Write to Read program order – Relax Write to Write program order – Relax Read to Read and Read to Write program order – Read others’ write early – Read own write early • Most relaxations come with a safety net. • For example, explicit fence instructions.

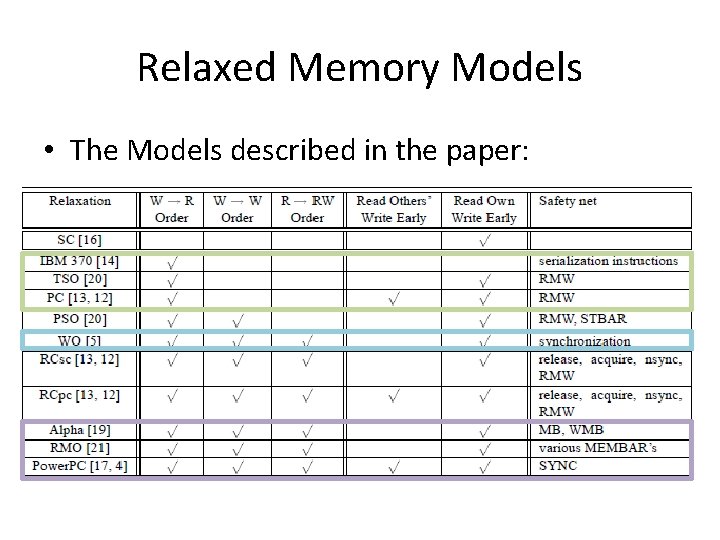

Relaxed Memory Models • The Models described in the paper:

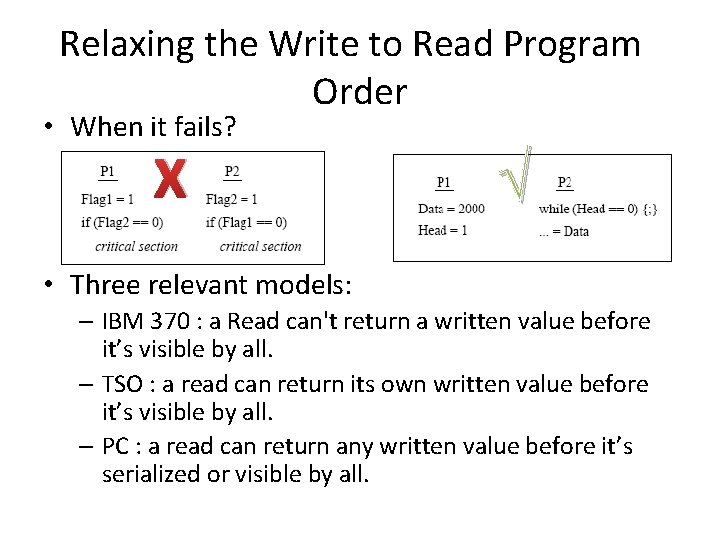

Relaxing the Write to Read Program Order • When does it fail? • Three relevant models: – IBM 370: a read cannot return a written value before it is visible to all processors. – TSO (Total Store Ordering): a read can return its own processor's write before it is visible to all. – PC (Processor Consistency): a read can return any processor's write before it is serialized or visible to all.

Relaxing the Write to Read (Examples) • Left: under TSO and PC, reads need not wait and can return old values. • Right: only under PC, when P2 reads A it need not wait for the new value of A to be visible to P3 as well.

Relaxing the Write to Read (Safety Nets) • Safety nets for program order: – IBM 370: special serialization instructions. – TSO: none explicit; use a read-modify-write instruction. – PC: none explicit; use a read-modify-write instruction.

Relaxing the Write to Read (Safety Nets) • Safety nets for write atomicity: – IBM 370: not needed. – TSO: use read-modify-write (for a read that follows a write to the same location). – PC: use read-modify-write. • Disadvantages of using read-modify-write: – Not every implementation allows an ordinary access to be replaced easily. – Replacing a read with a read-modify-write hurts performance.

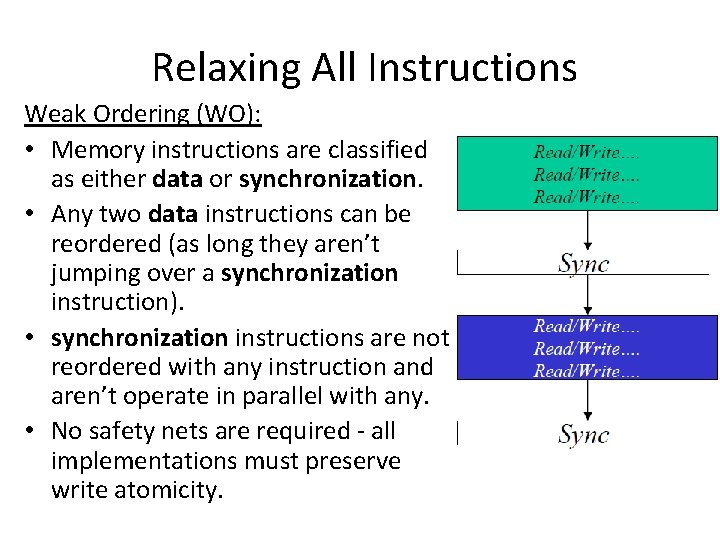

Relaxing All Instructions • Weak Ordering (WO): • Memory instructions are classified as either data or synchronization. • Any two data instructions can be reordered (as long as neither moves across a synchronization instruction). • Synchronization instructions are not reordered with any other instruction and do not execute in parallel with any. • No safety nets are required: all implementations must preserve write atomicity.

Relaxing All Instructions • Possible implementation: – Increment a counter when a data instruction starts. – Decrement it when the instruction finishes. – A synchronization instruction can start only when the counter is zero. – No other instruction can start until the synchronization instruction has finished.
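
The four rules above can be sketched as a small issue-control model. This is only an illustration of the counter idea; the class `WeakOrderingProcessor`, its method names and the use of an exception to represent a stall are assumptions.

```python
class WeakOrderingProcessor:
    """Tracks outstanding data operations to decide when a sync may start."""

    def __init__(self):
        self.outstanding = 0  # data operations issued but not yet completed
        self.sync_in_flight = False

    def can_issue(self, kind):
        if self.sync_in_flight:
            return False  # nothing starts while a synchronization is pending
        if kind == "sync":
            return self.outstanding == 0  # all earlier data ops must be done
        return True  # data operations may overlap freely

    def issue(self, kind):
        if not self.can_issue(kind):
            raise RuntimeError(f"{kind} operation must stall")
        if kind == "sync":
            self.sync_in_flight = True
        else:
            self.outstanding += 1  # counter goes up when a data op starts

    def complete(self, kind):
        if kind == "sync":
            self.sync_in_flight = False
        else:
            self.outstanding -= 1  # counter goes down when a data op finishes


p = WeakOrderingProcessor()
p.issue("data")
p.issue("data")             # two data operations overlap
print(p.can_issue("sync"))  # False: counter is 2
p.complete("data")
p.complete("data")
print(p.can_issue("sync"))  # True: counter is back to zero
```

Data operations between two synchronization points are free to overlap; only the synchronization instruction pays the cost of waiting.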

Relaxing All Instructions: Alpha, RMO and PowerPC • All provide explicit fence instructions as safety nets: • Alpha: – Memory barrier (MB) and write memory barrier (WMB). – No safety net is required for write atomicity. • SPARC V9 RMO: a generic MEMBAR can be used to enforce any combination of read/write orderings. • PowerPC: a SYNC instruction, similar to MB, but it does not order two reads to the same location. – Read-modify-write must be used to enforce order between such reads and to provide write atomicity (the model allows a written value to be read early).

Alternate Abstraction for Relaxed Memory Models

Programmer-centric • The relaxed models we saw provide system-centric specifications. • They increase programming complexity: – It is hard to identify the ordering constraints needed for correctness. – It is hard to port code from one model to another. • A higher-level, programmer-centric specification should be used.

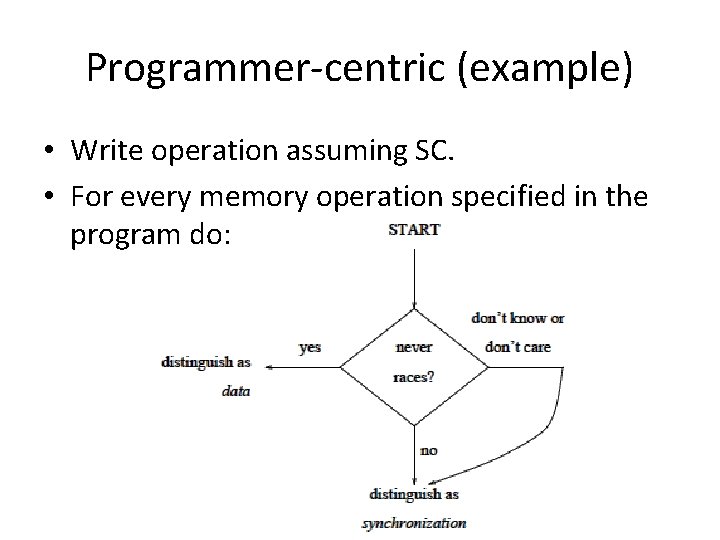

Programmer-centric (example) • The Data-race-free-0 model: • Weak Ordering is quite simple, but we still have to distinguish data instructions from synchronization instructions. • We do that using data races. • Two operations form a data race if: – both access the same location, – at least one of them is a write, and – they are adjacent (in some SC order).
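
The three conditions can be checked mechanically on one sequentially consistent execution. The sketch below is illustrative: the tuple format `(processor, kind, location)`, the function name `races` and the sample execution are assumptions, and the extra requirement that the two operations come from different processors is added here because a race involves two processors. A full check would have to consider every SC execution of the program, not just one.

```python
def races(execution):
    """Return adjacent conflicting pairs in one SC execution order."""
    found = []
    for first, second in zip(execution, execution[1:]):
        proc1, kind1, loc1 = first
        proc2, kind2, loc2 = second
        same_location = loc1 == loc2
        one_is_write = "write" in (kind1, kind2)
        if proc1 != proc2 and same_location and one_is_write:
            found.append((first, second))
    return found


# Hypothetical execution: P1 publishes Data, then sets Flag; P2 reads both.
execution = [
    ("P1", "write", "Data"),
    ("P1", "write", "Flag"),
    ("P2", "read", "Flag"),
    ("P2", "read", "Data"),
]

for first, second in races(execution):
    print(first, second)
# ('P1', 'write', 'Flag') ('P2', 'read', 'Flag')
```

In this execution only the accesses to `Flag` race, so they would be the ones to label as synchronization, while the accesses to `Data` can stay ordinary data operations.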

Programmer-centric (example) • Write the program assuming SC. • For every memory operation specified in the program do:

Mechanism for Distinguishing Memory Operations

Conveying Information at the Programming Language Level • Some languages offer high-level parallel constructs, for example doall loops. • Languages can provide a library of common synchronization routines. • The programmer may be allowed to use memory operations directly (at the program level) for synchronization, for example by using a memory location as a flag. This requires a way to: – Identify code sections as data or synchronization. – Associate a data or synchronization attribute with a shared variable or address, perhaps using an additional type declaration (what is the default type?).

Conveying Information to the Hardware • Information from the programming language must be passed to the underlying hardware. • Information about a memory operation may be associated with address ranges or with memory instructions. • Association with address ranges can be done by treating operations on specific pages as data or synchronization operations. • Association with memory instructions can be done by: – Using different versions of instructions (extra opcodes). – Using the high-order bits of the virtual memory address. – Treating some instructions as synchronization by default (for example compare-and-swap).

Conveying Information to the Hardware • Most commercial systems don’t provide these mechanisms and instead provide explicit fence instructions. • It is the compiler's duty to insert them and so provide synchronization.

Summary • Sequential Consistency • Optimizations to SC • Relaxed Models • Programmer-centric view
