SGI Contributions to Supercomputing by 2010 Steve Reinhardt

SGI Contributions to Supercomputing by 2010

Steve Reinhardt, Director of Engineering, spr@sgi.com

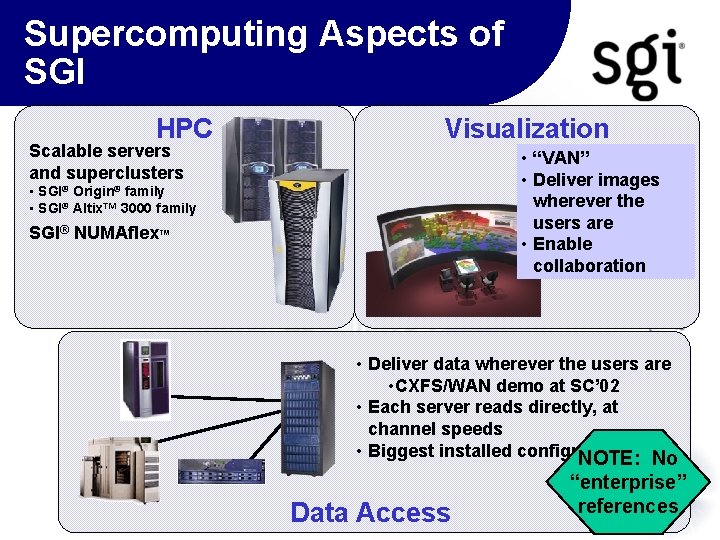

Supercomputing Aspects of SGI

• HPC: scalable servers and superclusters
  • SGI® Origin® family
  • SGI® Altix™ 3000 family
  • SGI® NUMAflex™
• Visualization
  • “VAN”
  • Deliver images wherever the users are
  • Enable collaboration
• Data Access
  • Deliver data wherever the users are
  • CXFS/WAN demo at SC’02
  • Each server reads directly, at channel speeds
  • Biggest installed configuration: 0.5 PB

NOTE: No “enterprise” references

SGI in HPC

• Memory is the unifying theme
  • globally addressable up to O(PB)
  • incorporating varied processing types
  • latency (-> 500 ns for 10 KP)
  • bandwidth
    • local stride-1 B:F -> 2.0+
    • local gather/scatter B:F 0.5-1.0
    • remote bisection BW B:F -> 0.3
• Sustained performance
  • differentiated scaling (latency & bandwidth)
  • better memory interface
  • new synchronization substrate
• Raise the level of programming abstraction
  • UPC/CAF (near-term)
  • parallel Matlab (radical)

SGI in HPC

• SGI Origin® family
  • MIPS processors, Irix OS
  • exploit low power consumption, ISA control
• SGI Altix™ family
  • IPF processors, Linux OS
  • exploit SGI interconnect, with industry-standard ISA and rapid OS maturation

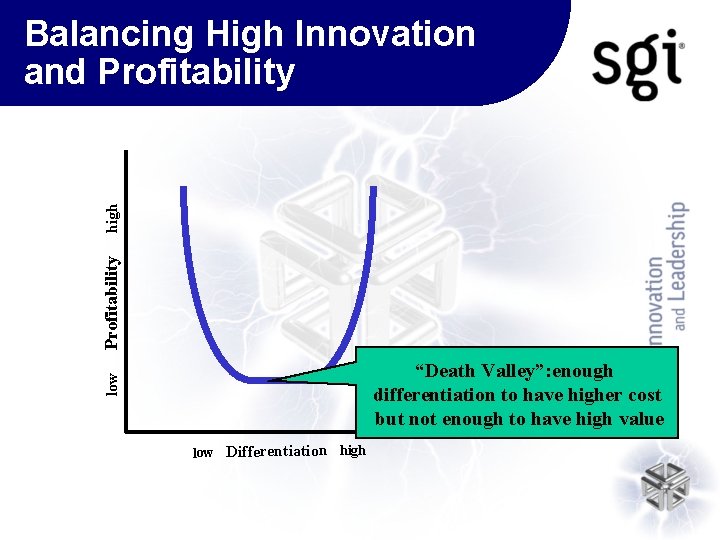

Balancing High Innovation and Profitability

Chart axes: Differentiation (low to high) versus Profitability (low to high).

“Death Valley”: enough differentiation to have higher cost but not enough to have high value

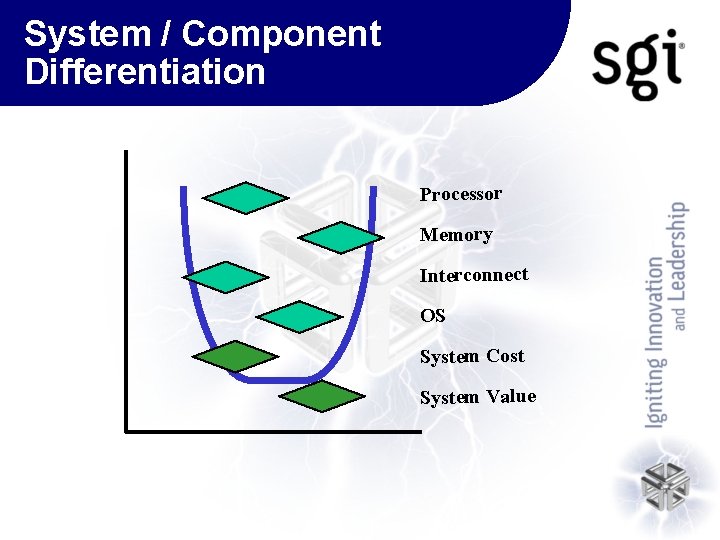

System / Component Differentiation

Chart categories: Processor, Memory, Interconnect, OS, System Cost, System Value

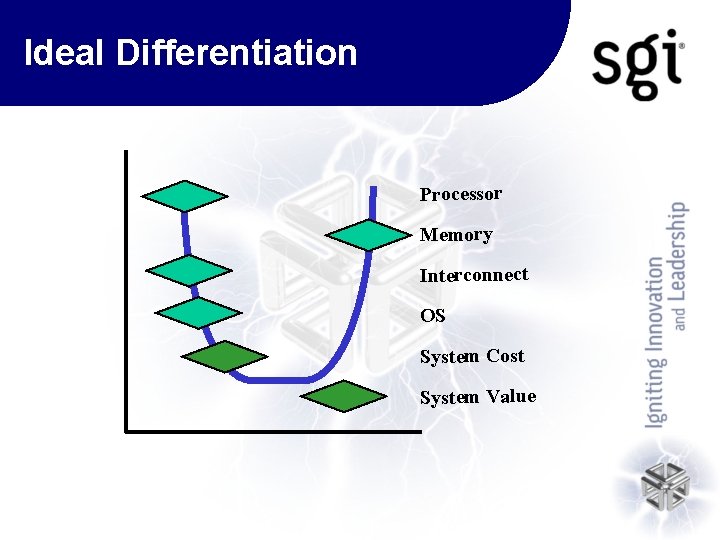

Ideal Differentiation

Chart categories: Processor, Memory, Interconnect, OS, System Cost, System Value

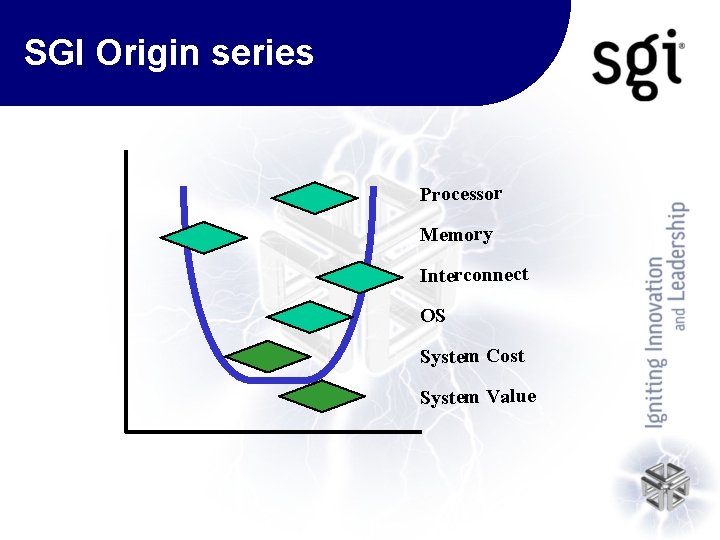

SGI Origin series

Chart categories: Processor, Memory, Interconnect, OS, System Cost, System Value

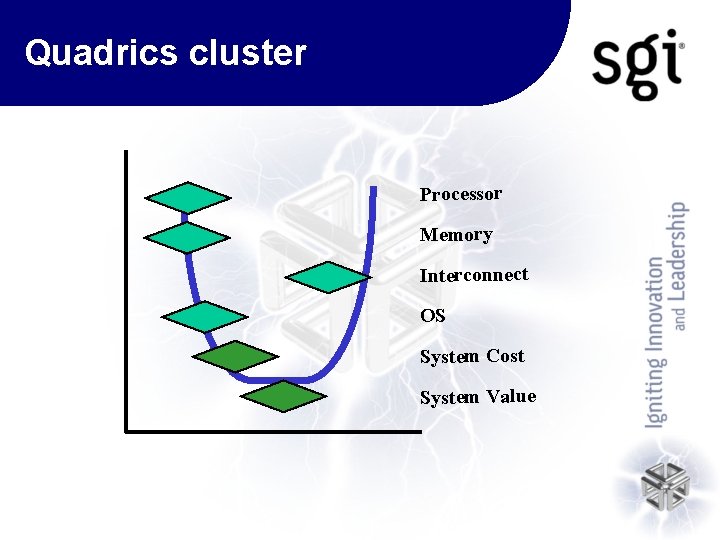

Quadrics cluster

Chart categories: Processor, Memory, Interconnect, OS, System Cost, System Value

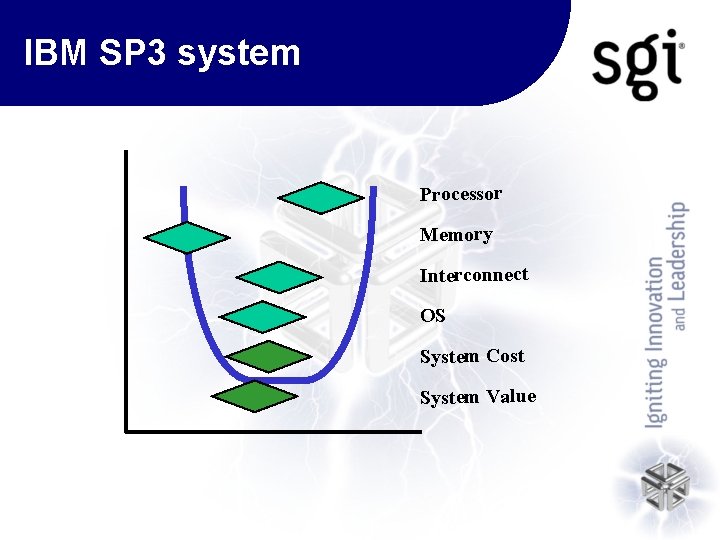

IBM SP 3 system

Chart categories: Processor, Memory, Interconnect, OS, System Cost, System Value

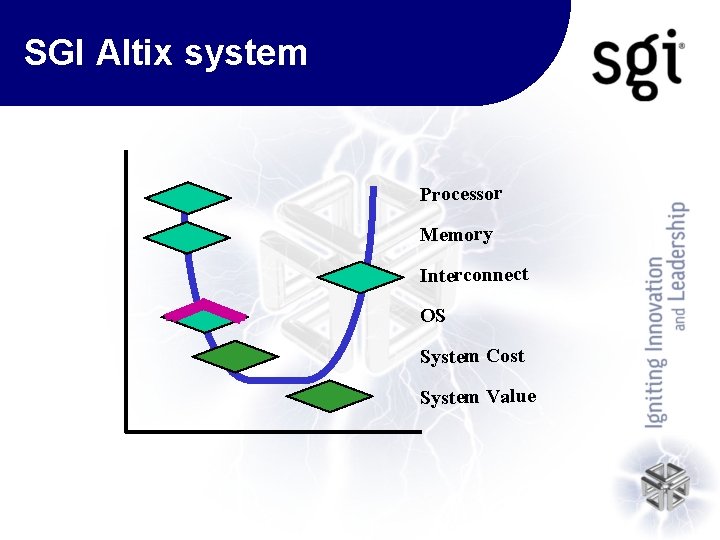

SGI Altix system

Chart categories: Processor, Memory, Interconnect, OS, System Cost, System Value

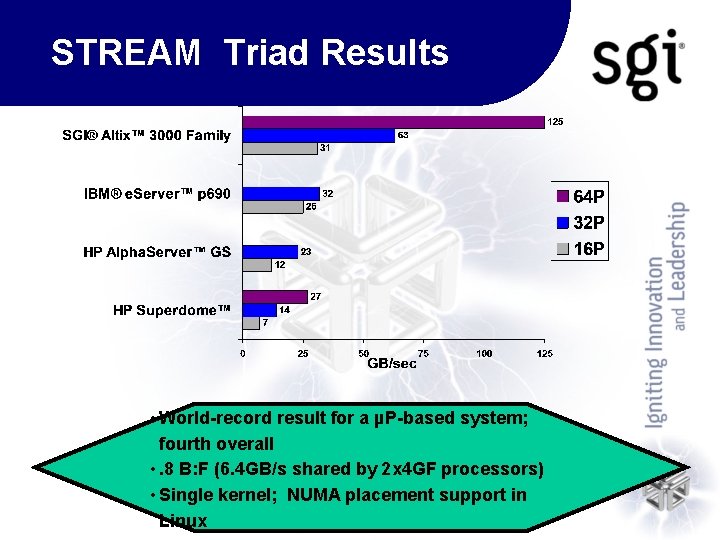

STREAM Triad Results

• World-record result for a µP-based system; fourth overall
• 0.8 B:F (6.4 GB/s shared by 2 x 4 GF processors)
• Single kernel; NUMA placement support in Linux
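The B:F (bytes-per-flop) figure on this slide can be reproduced with simple arithmetic. The following is a minimal sketch, not anything taken from the presentation: the function and constant names are illustrative assumptions, and the Triad kernel is written in plain Python only to show its shape (`a[i] = b[i] + q * c[i]`), assuming 8-byte double-precision operands as STREAM uses.

```python
def bytes_per_flop(bandwidth_gb_s, processors, gflops_per_processor):
    """Ratio of shared memory bandwidth (GB/s) to combined peak rate (GFLOP/s)."""
    peak_gflops = processors * gflops_per_processor
    return bandwidth_gb_s / peak_gflops


def triad(b, c, q):
    """STREAM Triad kernel: a[i] = b[i] + q * c[i] (illustrative, not tuned)."""
    return [bi + q * ci for bi, ci in zip(b, c)]


# Figures from the slide: 6.4 GB/s shared by 2 processors of 4 GF each.
ratio = bytes_per_flop(6.4, 2, 4.0)
print(f"Machine balance B:F = {ratio:.1f}")

# Triad's own demand by STREAM's counting: two 8-byte loads and one 8-byte
# store (24 bytes) against one multiply and one add (2 flops) per iteration.
TRIAD_BYTES_PER_ITER = 3 * 8
TRIAD_FLOPS_PER_ITER = 2
demand = TRIAD_BYTES_PER_ITER / TRIAD_FLOPS_PER_ITER
print(f"Triad demand = {demand:.0f} bytes per flop")
```

Because the kernel asks for far more bytes per flop than the machine balance provides, Triad is limited by memory bandwidth rather than by peak floating-point rate, which is why the slide reports it as a memory-system result.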

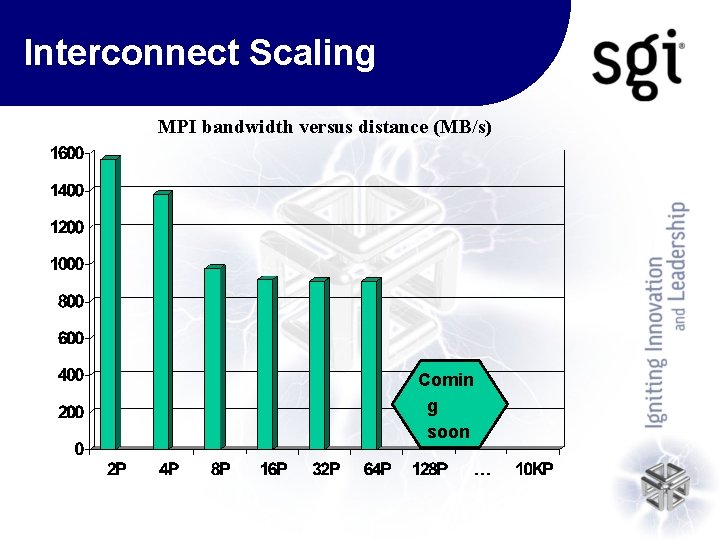

Interconnect Scaling

MPI bandwidth versus distance (MB/s)

Coming soon

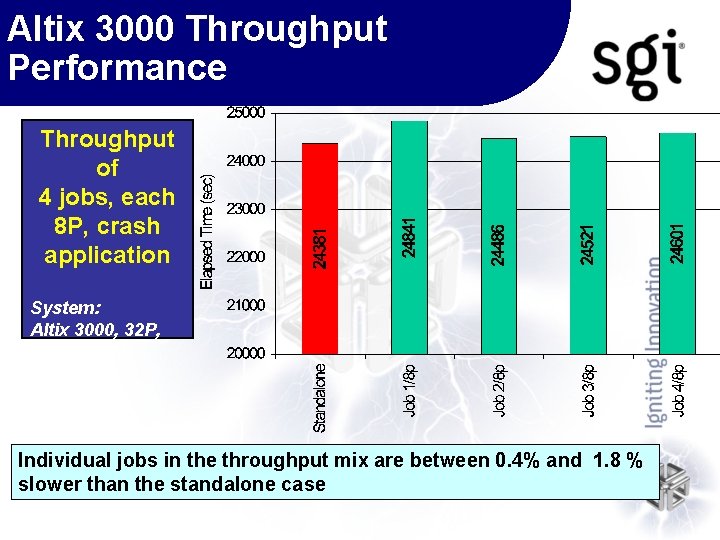

Altix 3000 Throughput Performance

Throughput of 4 jobs, each 8 P, crash application

System: Altix 3000, 32 P, 64 GB, XVM, TP 900

Individual jobs in the throughput mix are between 0.4% and 1.8% slower than the standalone case
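The "slower than the standalone case" figures are relative slowdowns. As a rough sketch of how such a percentage is derived, the snippet below compares a job's run time inside the 4-job mix with its run time alone. The function name and all timings are hypothetical placeholders chosen for illustration; the slide does not give the measured run times.

```python
def slowdown_percent(standalone_seconds, in_mix_seconds):
    """Percentage by which a job runs slower in the mix than when run alone."""
    return (in_mix_seconds - standalone_seconds) / standalone_seconds * 100.0


# Hypothetical timings (NOT from the slide): one standalone run and the same
# 8 P job measured four times inside a 4-job mix on a 32 P system.
standalone = 1000.0
in_mix = [1004.0, 1009.0, 1013.0, 1018.0]

slowdowns = [slowdown_percent(standalone, t) for t in in_mix]
print(", ".join(f"{s:.1f}%" for s in slowdowns))
```

A small spread like this suggests the jobs barely interfere with one another when they share the machine.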

Summary: SGI for HPC

• Long-term directions
  – Memory: globally addressable, high BW, low latency
  – Strong delivered performance
    • differentiated scaling (latency & bandwidth)
    • better memory interface
    • new synchronization substrate
  – Raise the level of programming abstraction
    • UPC/CAF (near-term); parallel Matlab (radical)
• Near-term deliverables
  – Altix 3000 system
    • distinguished performance

- Slides: 17