Session Objectives And Takeaways

A word on Perf & VDI architecture

VDI load during various phases

Primary focus of today’s talk

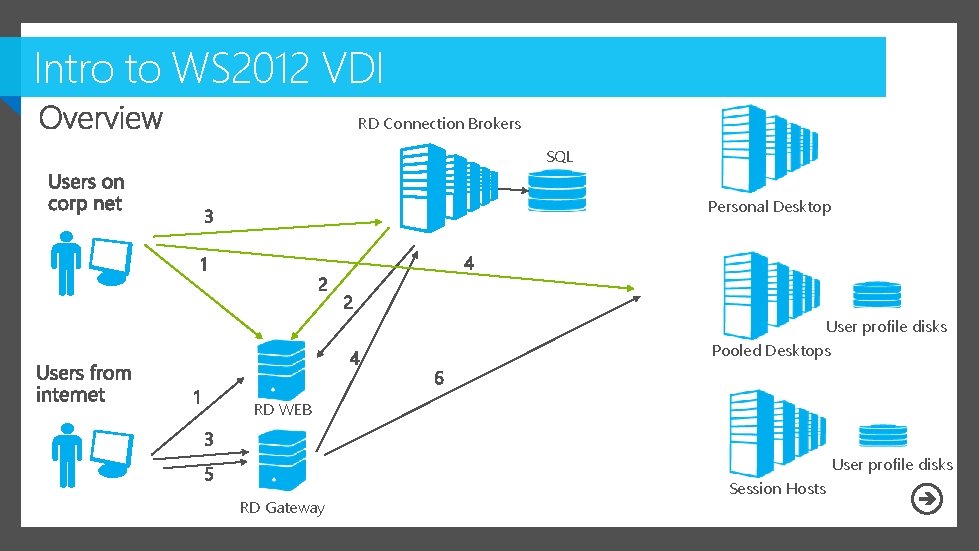

Intro to WS 2012 VDI

Architecture diagram components:

- RD Gateway
- RD Web
- RD Connection Brokers
- SQL
- Session Hosts
- Pooled Desktops
- Personal Desktop
- User profile disks

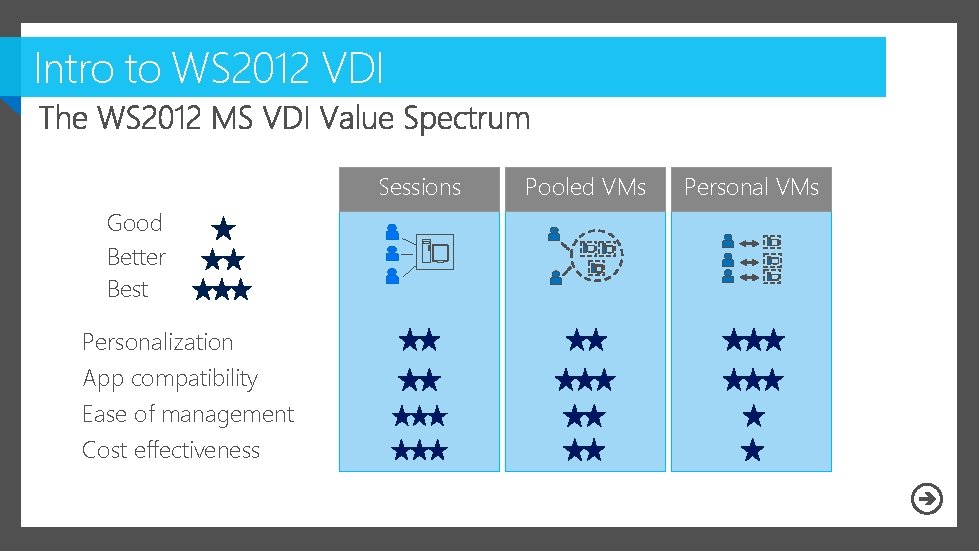

Intro to WS 2012 VDI

Deployment options compared on a Good / Better / Best scale:

- Options: Sessions, Pooled VMs, Personal VMs
- Criteria: Personalization, App compatibility, Ease of management, Cost effectiveness

Designing a large scale MS VDI deployment

- 80% of users running on LAN
- 20% connecting from internet

Designs for a large scale VDI deployment

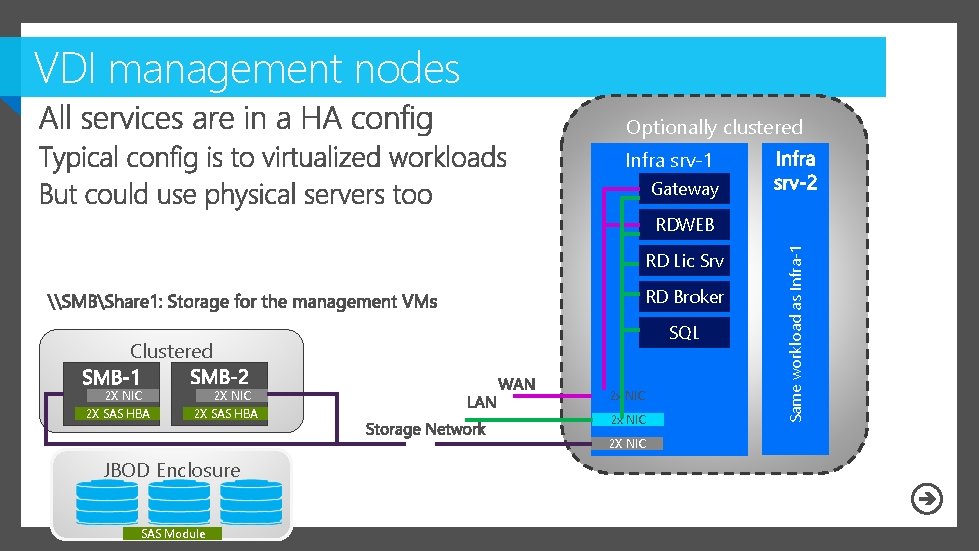

VDI management nodes

- Infra srv-1 (optionally clustered): Gateway, RDWEB, RD Lic Srv, RD Broker, SQL
- Other infra server: same workload as Infra-1
- Hardware labels: Clustered, 2 x NIC, 2 x SAS HBA, JBOD enclosure, SAS module

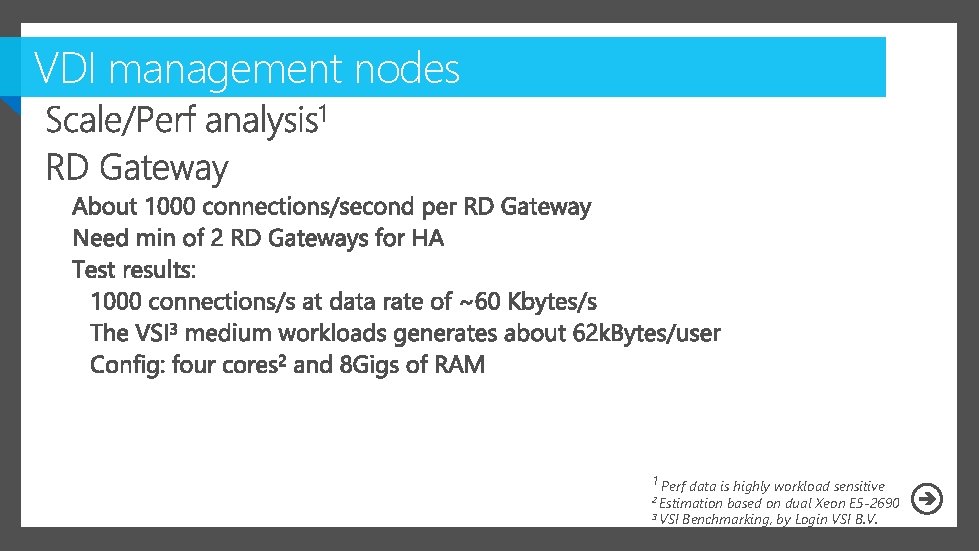

VDI management nodes

1. Perf data is highly workload sensitive.
2. Estimation based on dual Xeon E5-2690.
3. VSI benchmarking, by Login VSI B.V.

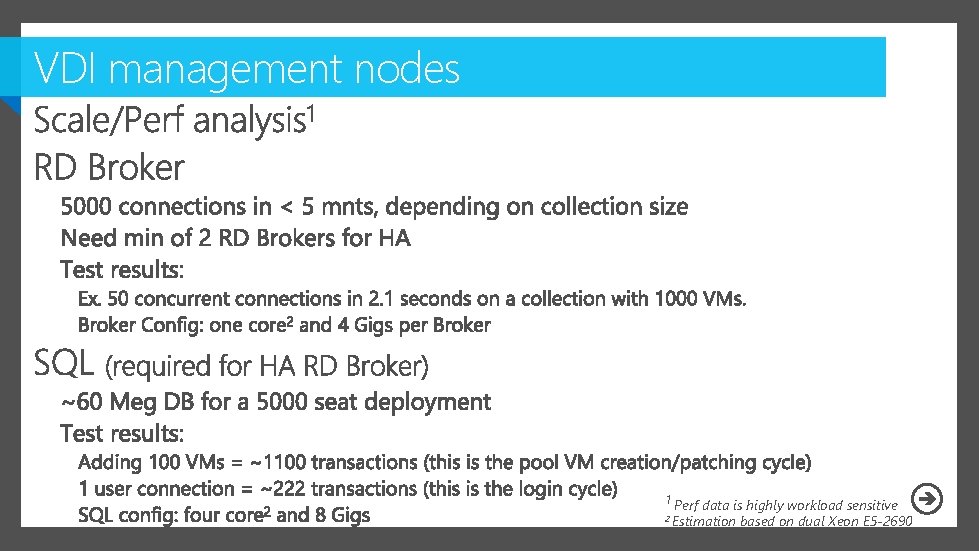

Designs for a large scale VDI deployment

VDI compute and storage nodes

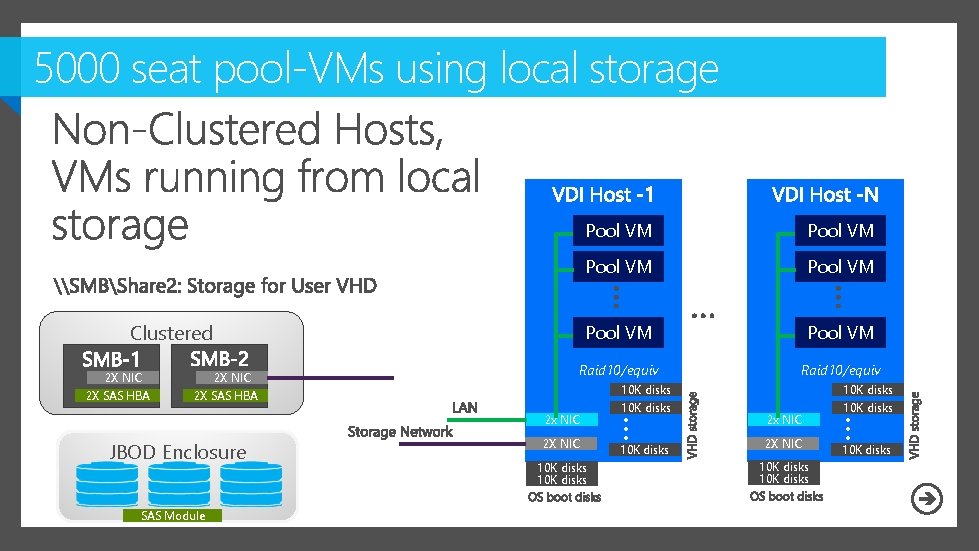

5000 seat pool-VMs using local storage

Diagram labels:

- VDI hosts: Pool VMs, 2 x NIC, local 10K disks (RAID 10 or equivalent)
- VHD storage: Clustered, 2 x NIC, 2 x SAS HBA, JBOD enclosure, SAS module

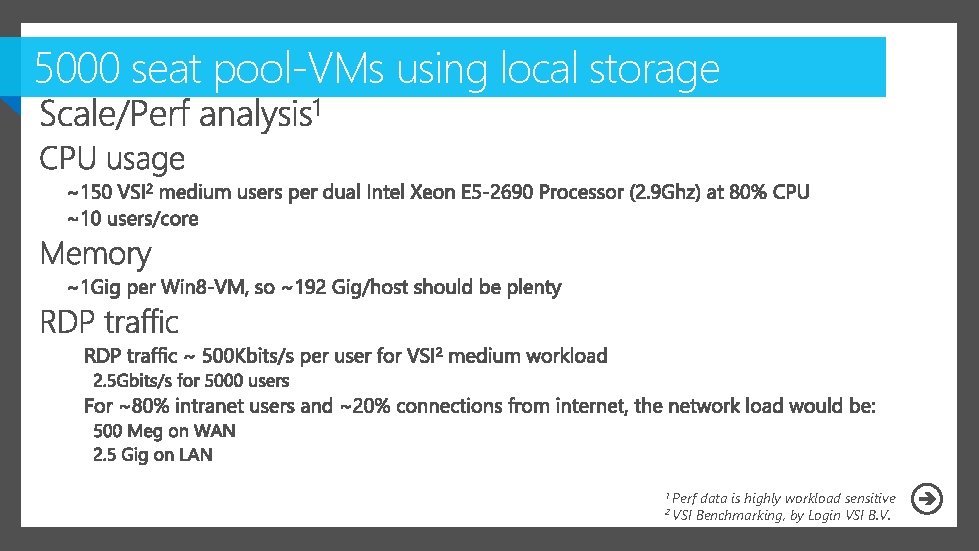

5000 seat pool-VMs using local storage

1. Perf data is highly workload sensitive.
2. VSI benchmarking, by Login VSI B.V.

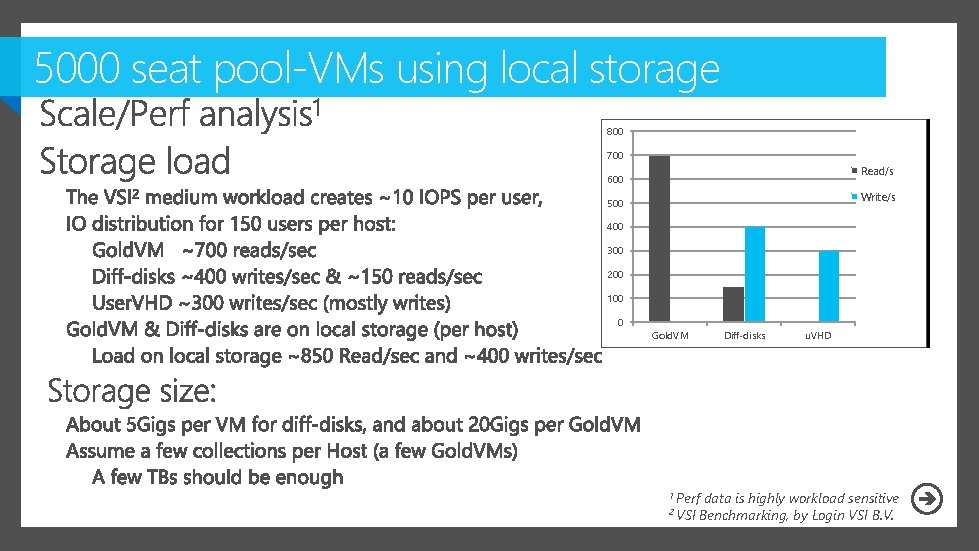

5000 seat pool-VMs using local storage

Chart: Read/s and Write/s (axis 0 to 800) for Gold VM, Diff-disks, and User VHD.

1. Perf data is highly workload sensitive.
2. VSI benchmarking, by Login VSI B.V.

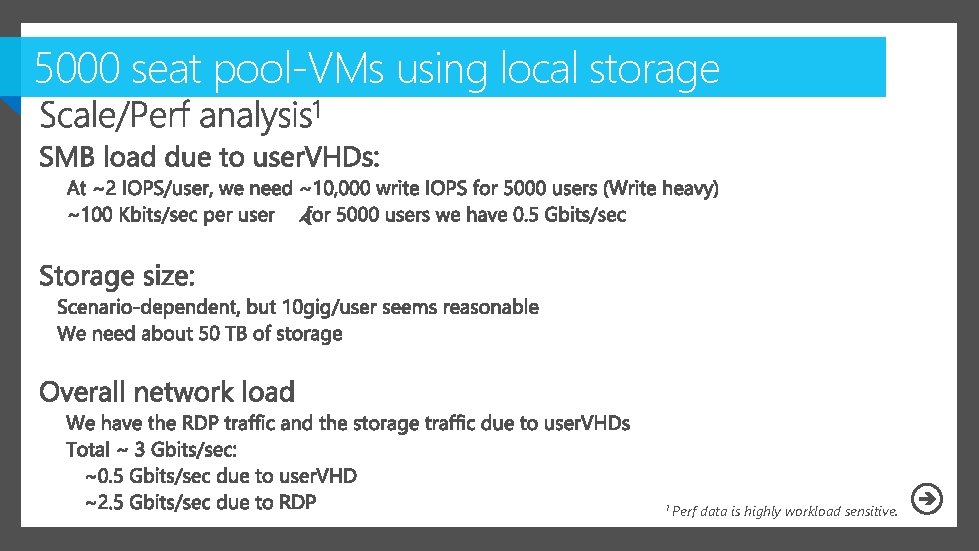

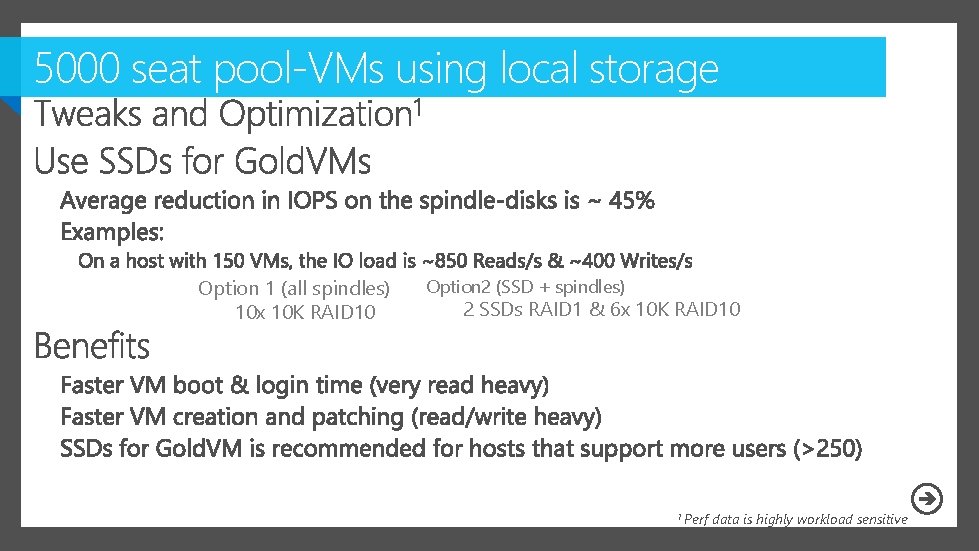

5000 seat pool-VMs using local storage

- Option 1 (all spindles): 10 x 10K RAID 10
- Option 2 (SSD + spindles): 2 SSDs RAID 1 & 6 x 10K RAID 10

1. Perf data is highly workload sensitive.

VDI compute and storage nodes

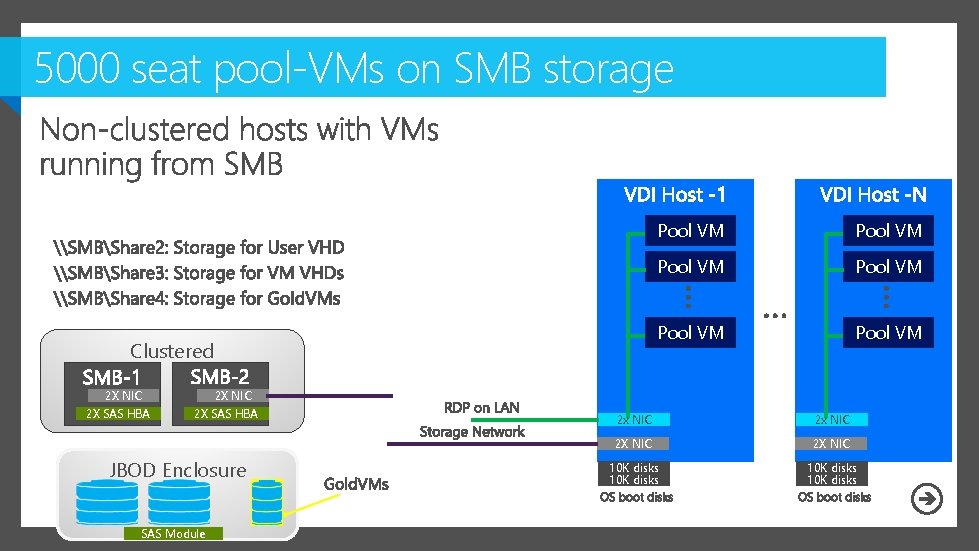

5000 seat pool-VMs on SMB storage

Diagram labels:

- VDI hosts: Pool VMs, 2 x NIC
- SMB storage: Clustered, 2 x NIC, 2 x SAS HBA, JBOD enclosure, SAS module, 10K disks

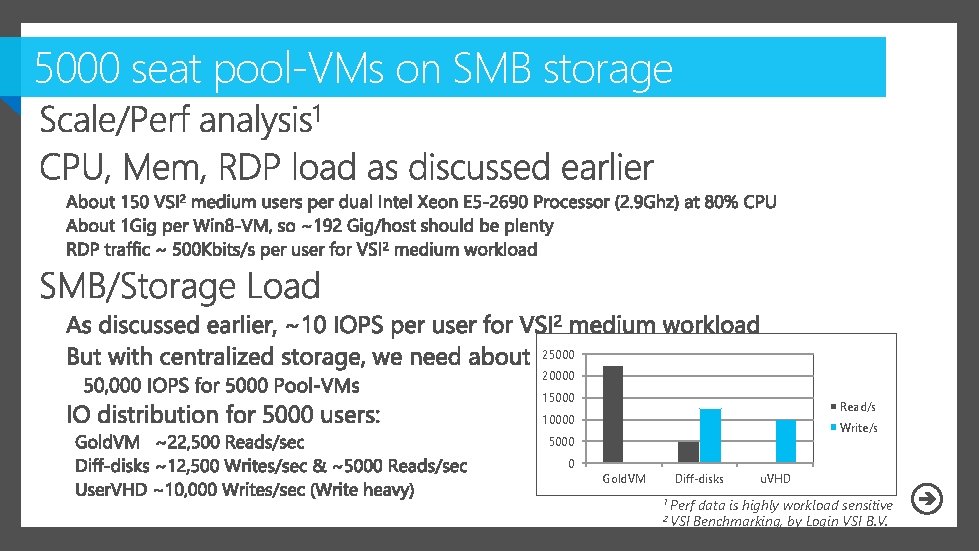

5000 seat pool-VMs on SMB storage

Chart: Read/s and Write/s (axis 0 to 25000) for Gold VM, Diff-disks, and User VHD.

1. Perf data is highly workload sensitive.
2. VSI benchmarking, by Login VSI B.V.

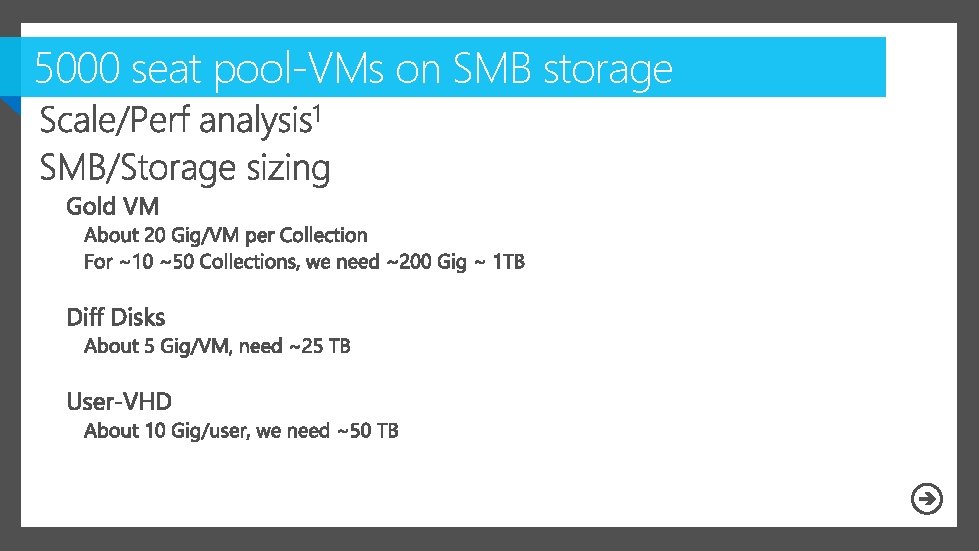

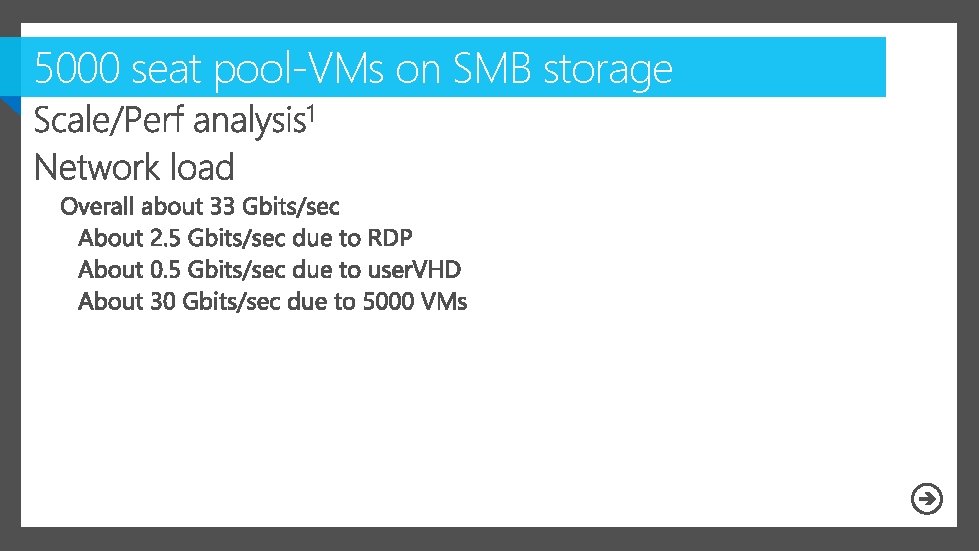

5000 seat pool-VMs on SMB storage

1. Perf data is highly workload sensitive.

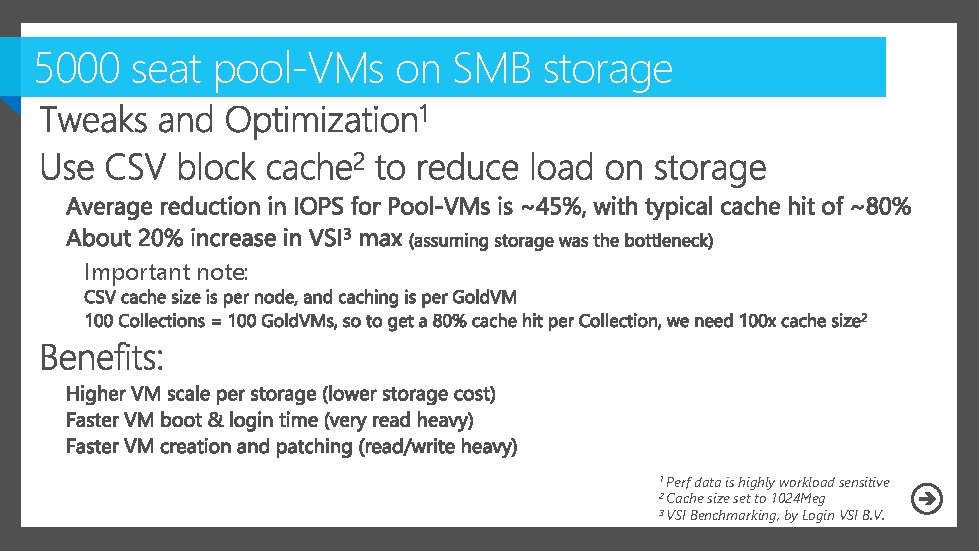

5000 seat pool-VMs on SMB storage

Important note:

1. Perf data is highly workload sensitive.
2. Cache size set to 1024 MB.
3. VSI benchmarking, by Login VSI B.V.

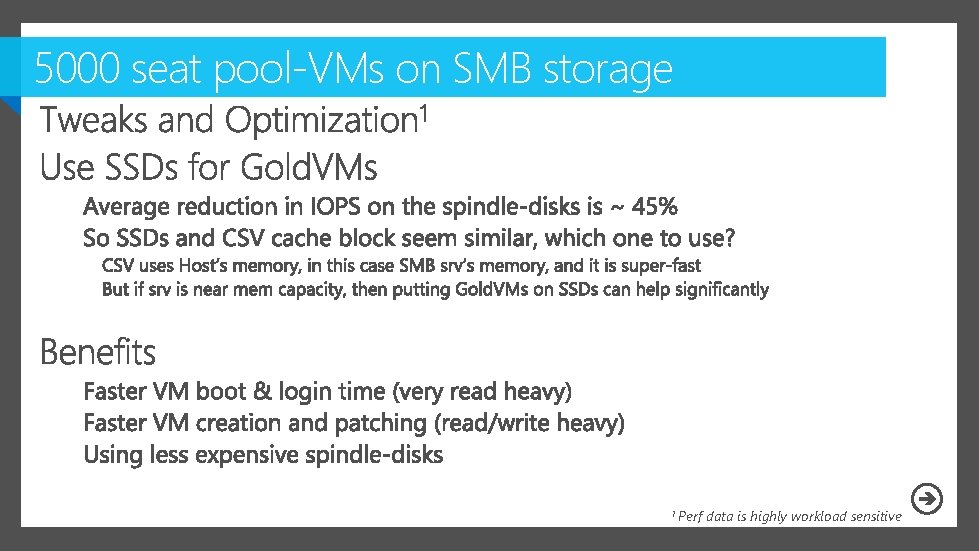

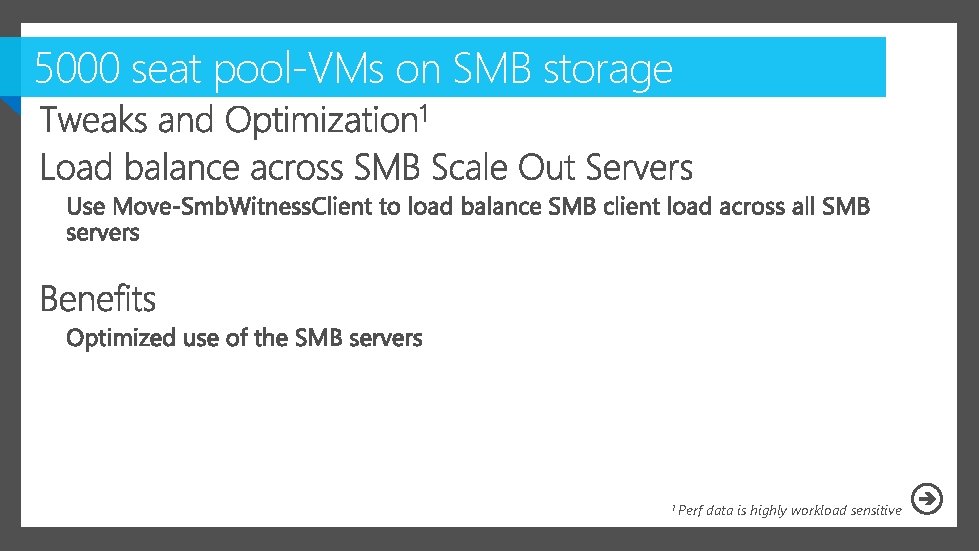

VDI compute and storage nodes

- 4000 Pool-VMs
- 1000 PD-VMs

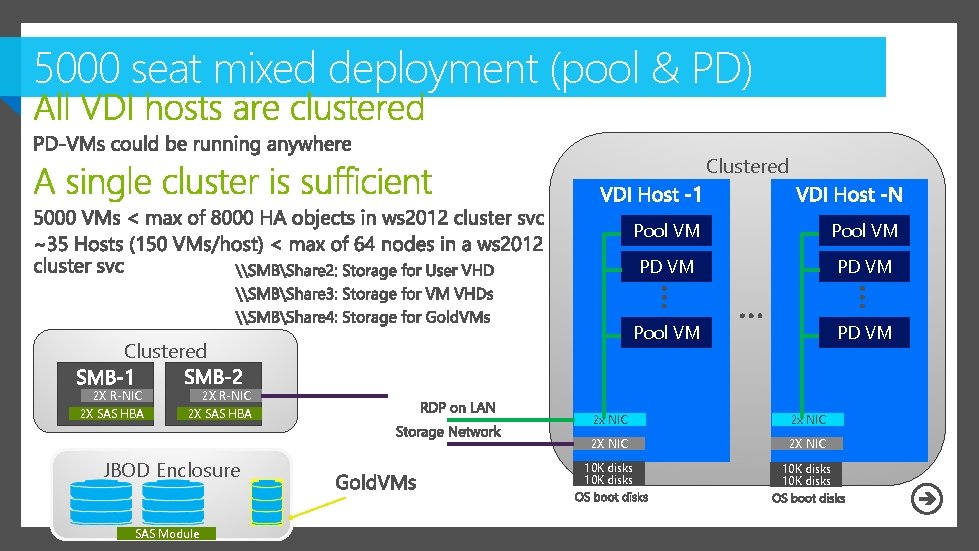

5000 seat mixed deployment (pool & PD)

Diagram labels:

- VDI hosts: Pool VM, PD VM, 2 x NIC
- Storage: Clustered, 2 x R-NIC, 2 x SAS HBA, JBOD enclosure, SAS module, 10K disks

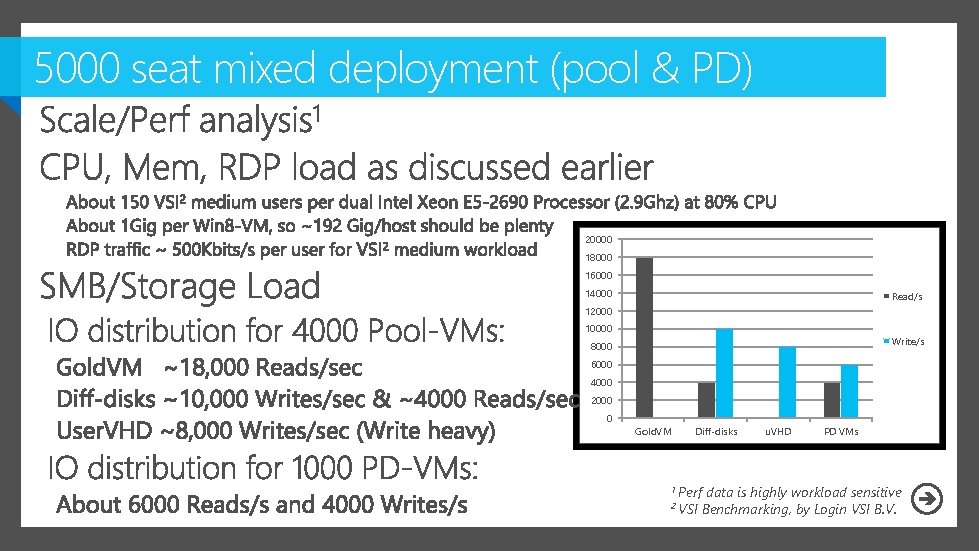

5000 seat mixed deployment (pool & PD)

Chart: Read/s and Write/s (axis 0 to 20000) for Gold VM, Diff-disks, User VHD, and PD VMs.

1. Perf data is highly workload sensitive.
2. VSI benchmarking, by Login VSI B.V.

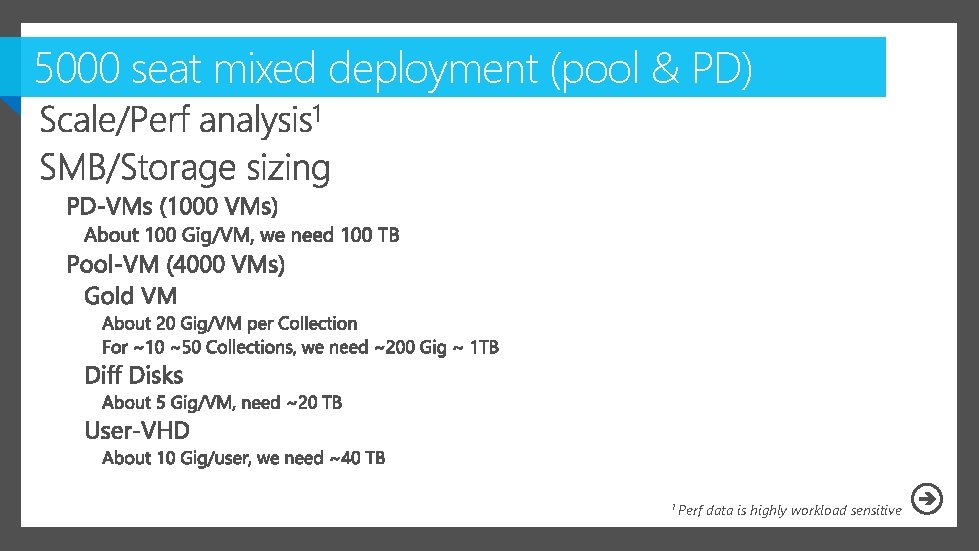

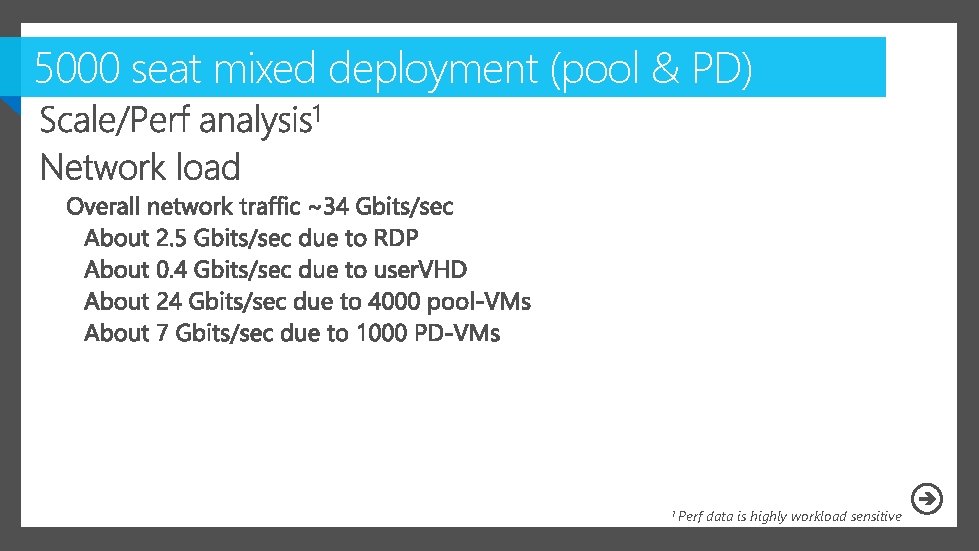

5000 seat mixed deployment (pool & PD)

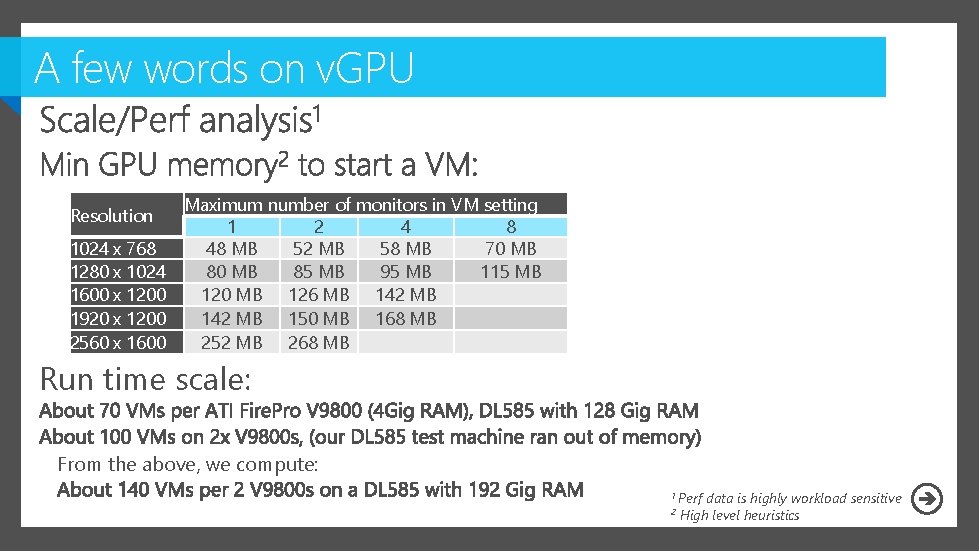

A few words on vGPU

Table axes and values:

- Resolution: 1024 x 768, 1280 x 1024, 1600 x 1200, 1920 x 1200, 2560 x 1600
- Maximum number of monitors in VM setting: 1, 2, 4, 8
- Values, in extraction order: 48 MB, 52 MB, 58 MB, 70 MB, 85 MB, 95 MB, 115 MB, 120 MB, 126 MB, 142 MB, 150 MB, 168 MB, 252 MB, 268 MB

Run time scale:

From the above, we compute:

1. Perf data is highly workload sensitive.
2. High level heuristics.

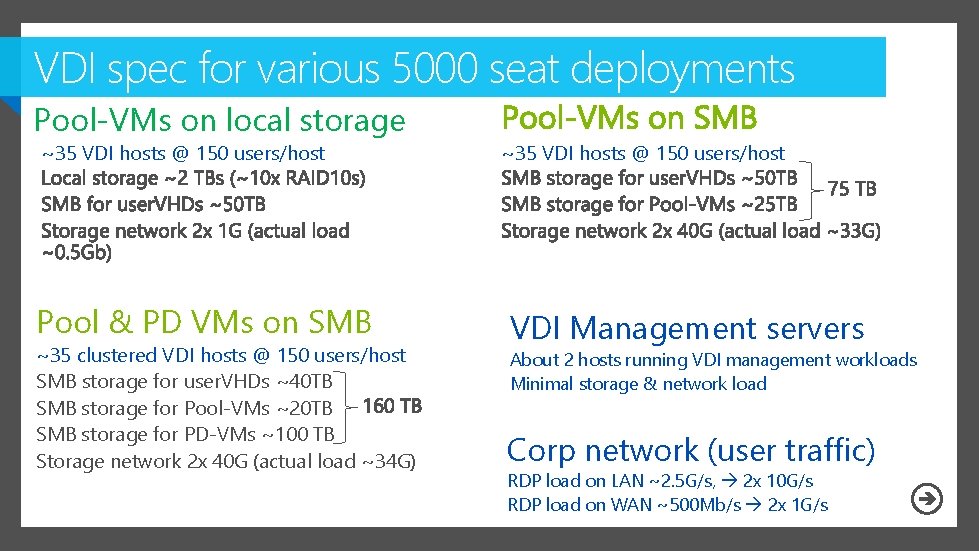

VDI spec for various 5000 seat deployments

- Pool-VMs on local storage: ~35 VDI hosts @ 150 users/host
- Pool & PD VMs on SMB: ~35 clustered VDI hosts @ 150 users/host
- SMB storage for user VHDs: ~40 TB
- SMB storage for Pool-VMs: ~20 TB
- SMB storage for PD-VMs: ~100 TB
- Storage network: 2 x 40 G (actual load ~34 G)
- VDI hosts: ~35 @ 150 users/host
- VDI management servers: about 2 hosts running VDI management workloads; minimal storage & network load
- Corp network (user traffic), RDP load on LAN: ~2.5 G/s, 2 x 10 G/s
- Corp network (user traffic), RDP load on WAN: ~500 Mb/s, 2 x 1 G/s
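The host counts and link figures in this spec follow from simple division. The sketch below redoes that arithmetic, assuming the 80% LAN / 20% internet user split stated earlier in the deck and reading "G" as Gb/s. The function names are illustrative only and are not part of any Microsoft tooling.

```python
import math


def hosts_needed(seats: int, users_per_host: int) -> int:
    """Smallest host count that seats every user, with no spare hosts."""
    return math.ceil(seats / users_per_host)


def utilization(load: float, links: int, link_capacity: float) -> float:
    """Fraction of the aggregate link capacity consumed by the load."""
    return load / (links * link_capacity)


SEATS = 5000
USERS_PER_HOST = 150

# 5000 / 150 is a little over 33, so the floor is below the slide's ~35,
# which leaves some headroom.
min_hosts = hosts_needed(SEATS, USERS_PER_HOST)

# Storage network: ~34 Gb/s of load over 2 x 40 Gb/s links (units assumed).
storage_util = utilization(34.0, 2, 40.0)

# Corp network: per-user RDP bandwidth, assuming the 80/20 LAN/internet split.
lan_users = SEATS * 80 // 100
wan_users = SEATS - lan_users
lan_mbps_per_user = 2.5 * 1000 / lan_users  # ~2.5 Gb/s shared by LAN users
wan_mbps_per_user = 500 / wan_users         # ~500 Mb/s shared by WAN users

print("minimum hosts:", min_hosts)
print("storage network utilization:", round(storage_util, 3))
print("LAN Mb/s per user:", lan_mbps_per_user)
print("WAN Mb/s per user:", wan_mbps_per_user)
```

Changing `USERS_PER_HOST` is the quickest way to see how sensitive the host count is to density, which matters because the perf data is highly workload sensitive.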

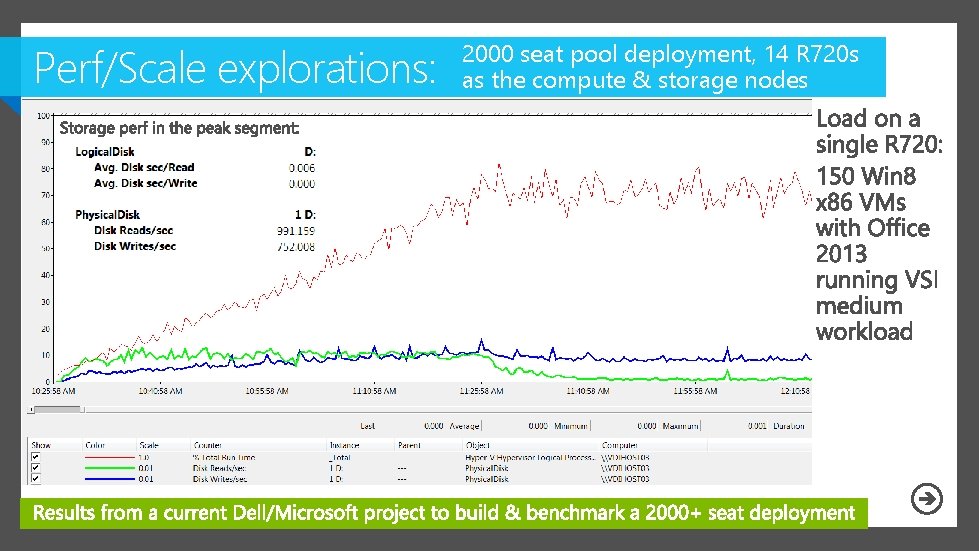

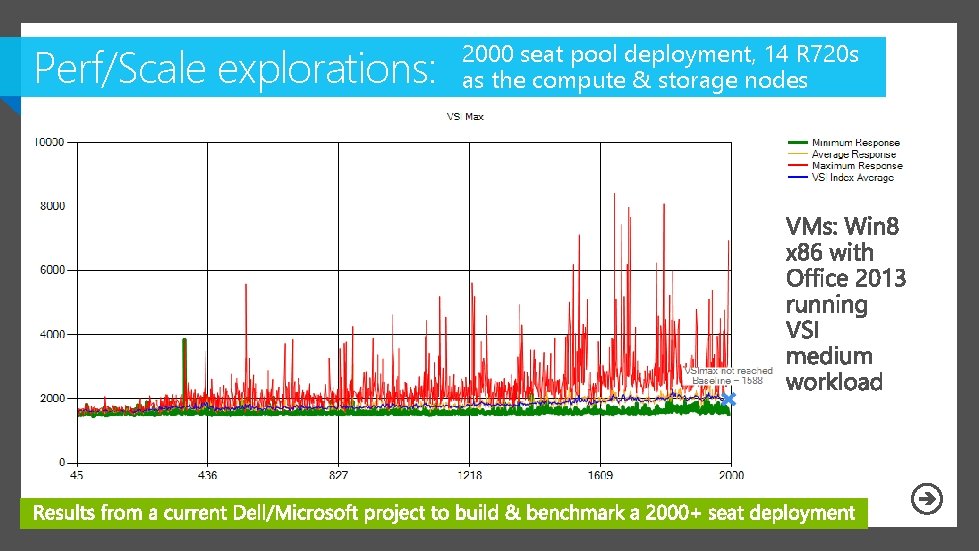

Perf/Scale explorations: 2000 seat pool deployment, 14 R720s as the compute & storage nodes

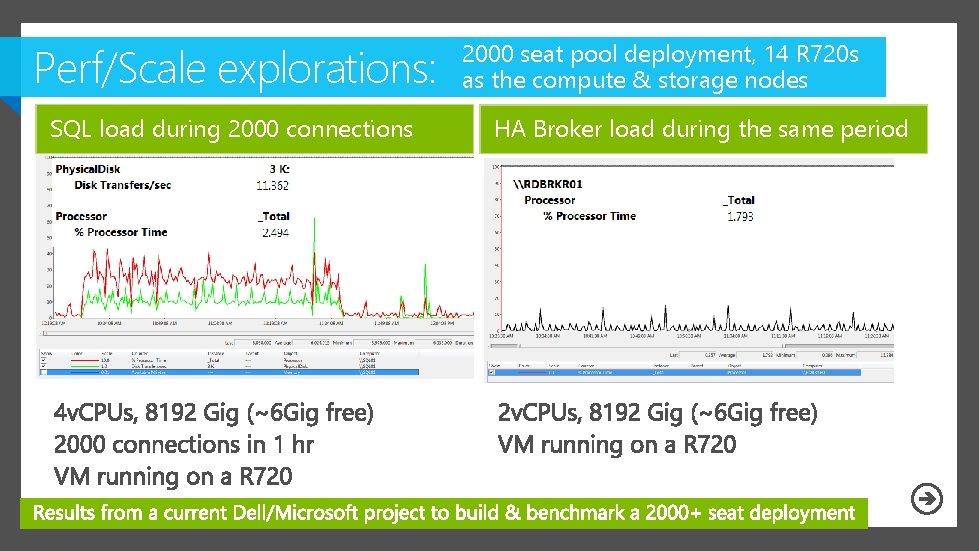

Perf/Scale explorations: 2000 seat pool deployment, 14 R720s as the compute & storage nodes

- SQL load during 2000 connections
- HA Broker load during the same period

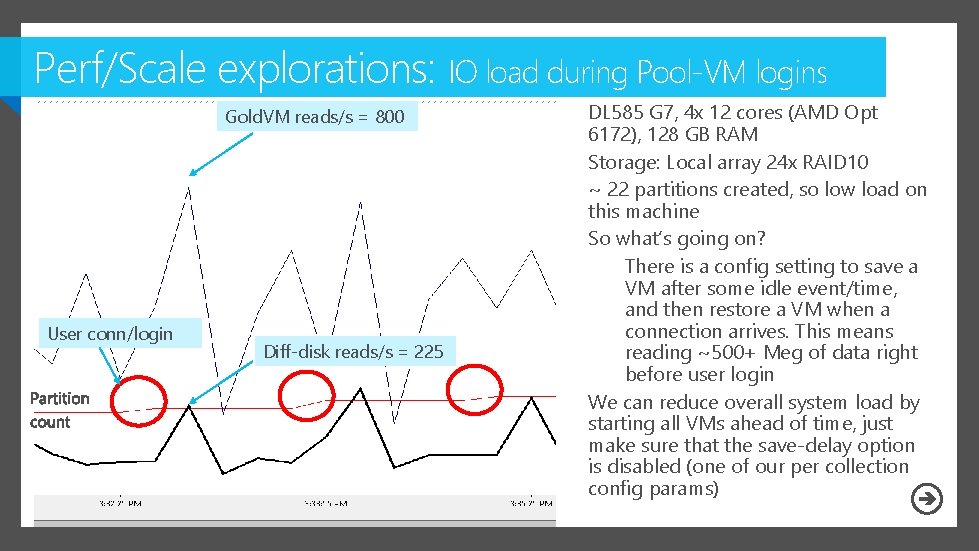

Perf/Scale explorations: IO load during Pool-VM logins

Host: DL585 G7, 4 x 12 cores (AMD Opt 6172), 128 GB RAM. Storage: local array, 24 x RAID 10.

- At user conn/login: Gold VM reads/s = 800, Diff-disk reads/s = 225
- Only ~22 partitions created, so low load on this machine

So what’s going on? There is a config setting to save a VM after some idle event/time, and then restore the VM when a connection arrives. This means reading ~500+ MB of data right before user login.

We can reduce overall system load by starting all VMs ahead of time; just make sure that the save-delay option is disabled (one of our per-collection config params).
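To get a feel for why restore-on-connect hurts, the sketch below multiplies the ~500 MB read per restored VM by the number of logins that land on a saved VM. The 150-login figure borrows the users-per-host density quoted elsewhere in the deck; the function and parameter names are hypothetical, and the model ignores caching and treats 500 MB as a lower bound.

```python
RESTORE_READ_MB = 500  # slide figure: "~500+" MB read per restored VM


def restore_read_volume_mb(
    logins: int,
    saved_fraction: float,
    read_mb_per_restore: float = RESTORE_READ_MB,
) -> float:
    """Extra data read when some logins must first restore a saved VM.

    saved_fraction is the share of logins that hit a VM in saved state
    (1.0 = every VM was saved, 0.0 = every VM was already running).
    """
    if not 0.0 <= saved_fraction <= 1.0:
        raise ValueError("saved_fraction must be between 0 and 1")
    return logins * saved_fraction * read_mb_per_restore


# Worst case for one host: all 150 VMs were saved when users arrive.
worst_case_mb = restore_read_volume_mb(150, 1.0)

# VMs started ahead of time with the save-delay disabled: nothing to restore.
prestarted_mb = restore_read_volume_mb(150, 0.0)

print("worst case extra reads (MB):", worst_case_mb)
print("pre-started extra reads (MB):", prestarted_mb)
```

The gap between the two numbers is the read load that pre-starting the VMs takes out of the login storm.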

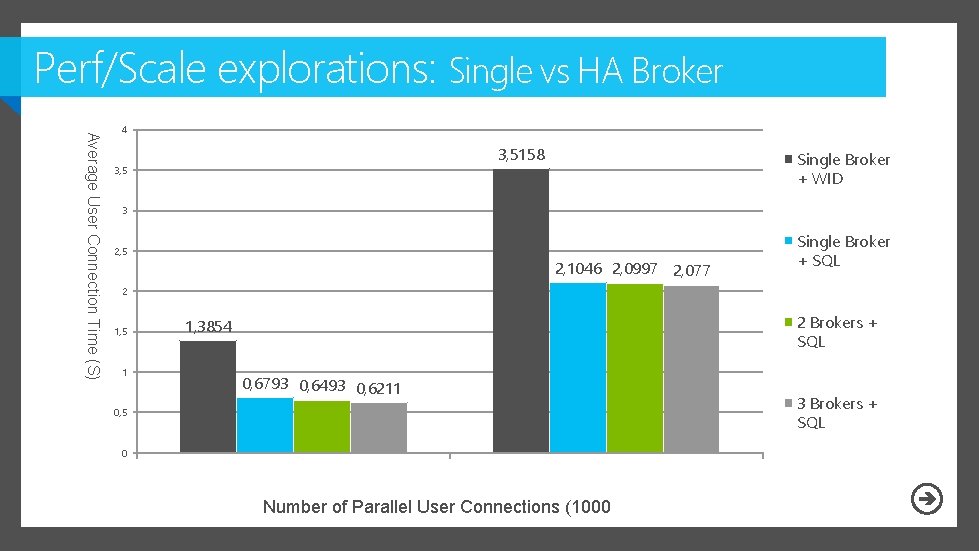

Perf/Scale explorations: Single vs HA Broker

Chart: Average User Connection Time (s) by Number of Parallel User Connections

- Series: Single Broker + WID, Single Broker + SQL, 2 Brokers + SQL, 3 Brokers + SQL
- Y axis: 0 to 4 s
- Data labels, in extraction order: 3.5158, 2.1046, 2.0997, 2.077, 1.3854, 0.6793, 0.6493, 0.6211

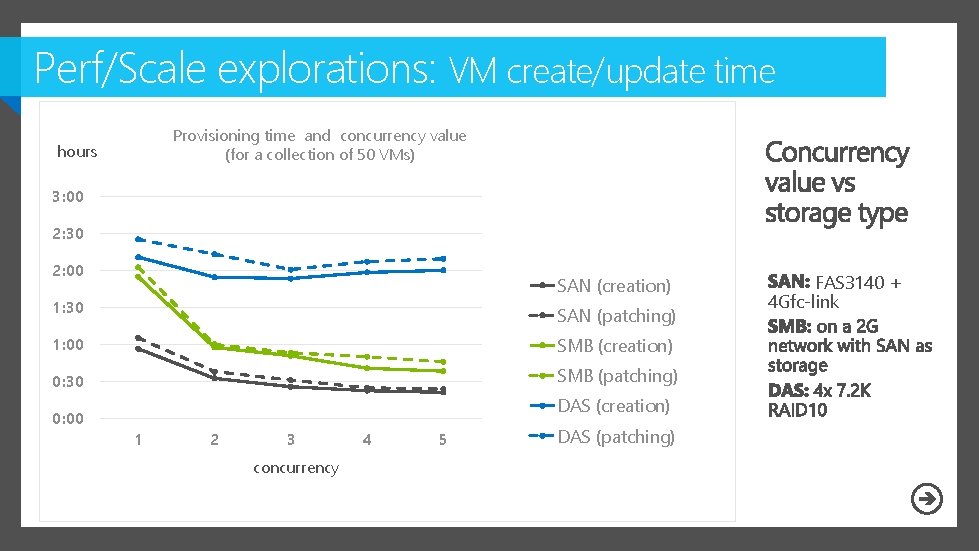

Perf/Scale explorations: VM create/update time

Chart: Provisioning time and concurrency value (for a collection of 50 VMs)

- Y axis: hours, 0:00 to 3:00
- X axis: concurrency, 1 to 5
- Series: SAN (creation), SAN (patching), SMB (creation), SMB (patching), DAS (creation), DAS (patching)
- Storage note: FAS3140 + 4 Gfc-link

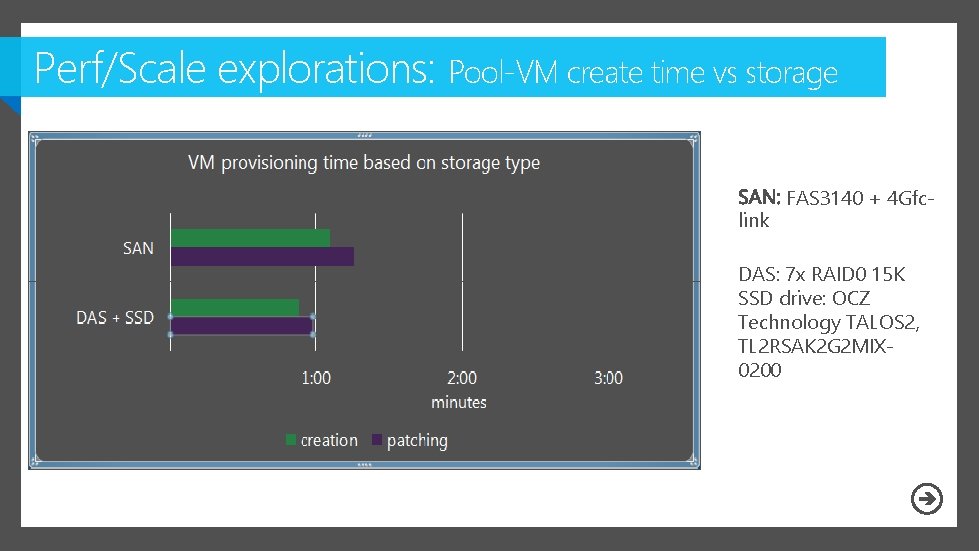

Perf/Scale explorations: Pool-VM create time vs storage type

- FAS3140 + 4 Gfc-link
- DAS: 7 x RAID 0 15K
- SSD drive: OCZ Technology TALOS 2, TL2RSAK2G2MIX0200

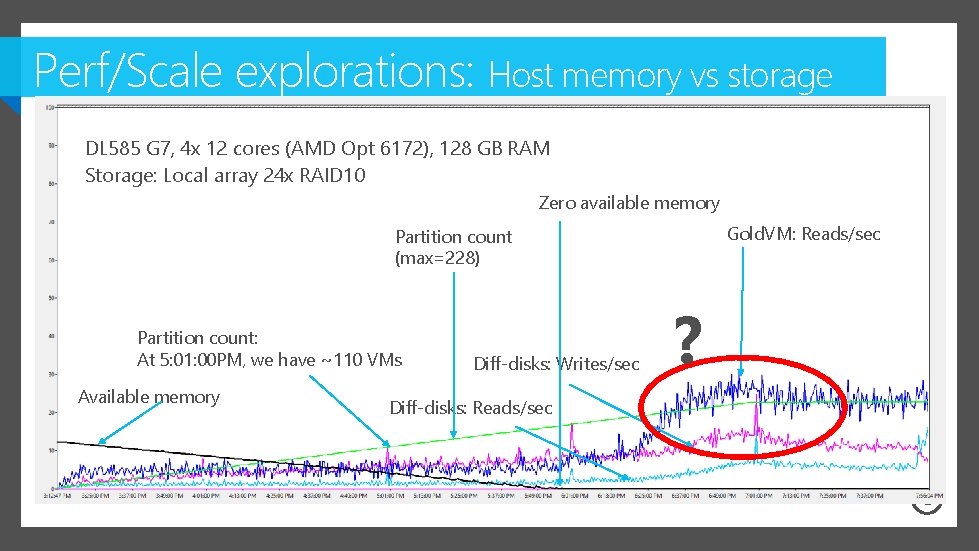

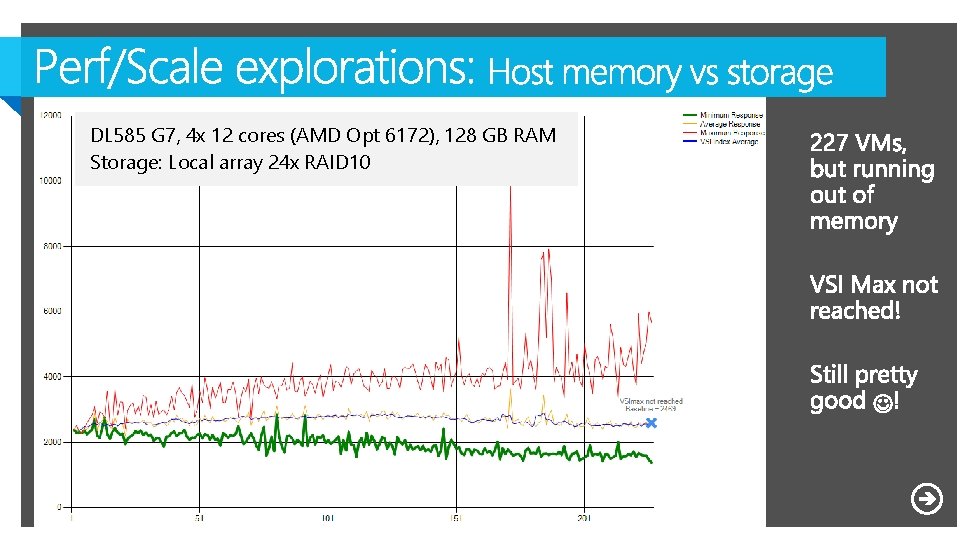

Perf/Scale explorations: Host memory vs storage load

Host: DL585 G7, 4 x 12 cores (AMD Opt 6172), 128 GB RAM. Storage: local array, 24 x RAID 10.

Chart series and annotations:

- Available memory
- Zero available memory
- Partition count (max=228); at 5:01:00 PM, we have ~110 VMs
- Gold VM: Reads/sec
- Diff-disks: Writes/sec
- Diff-disks: Reads/sec

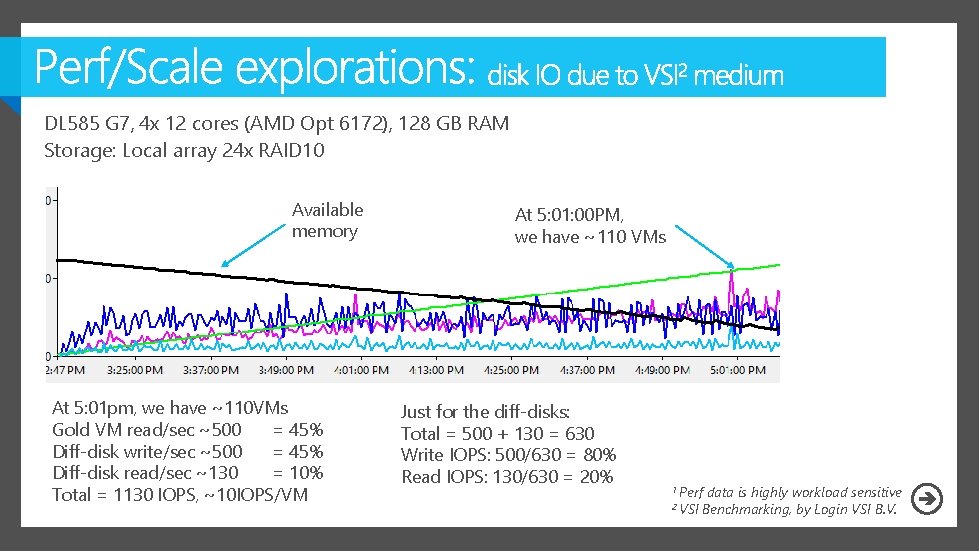

Host: DL585 G7, 4 x 12 cores (AMD Opt 6172), 128 GB RAM. Storage: local array, 24 x RAID 10.

At 5:01 PM, we have ~110 VMs (chart series: Available memory):

- Gold VM reads/sec: ~500 = 45%
- Diff-disk writes/sec: ~500 = 45%
- Diff-disk reads/sec: ~130 = 10%
- Total = 1130 IOPS, ~10 IOPS/VM

Just for the diff-disks:

- Total = 500 + 130 = 630
- Write IOPS: 500/630 = 80%
- Read IOPS: 130/630 = 20%

1. Perf data is highly workload sensitive.
2. VSI benchmarking, by Login VSI B.V.
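The percentages on this slide are rounded. A minimal sketch of the same breakdown, using only the counter values quoted on the slide (the dictionary keys and function name are made up for illustration), shows the unrounded shares:

```python
def shares(counters: dict) -> dict:
    """Return each counter's fraction of the combined total."""
    total = sum(counters.values())
    return {name: value / total for name, value in counters.items()}


# Approximate IOPS read off the chart at 5:01 PM with ~110 VMs running.
sample = {
    "gold_vm_reads": 500,
    "diff_disk_writes": 500,
    "diff_disk_reads": 130,
}
vm_count = 110

total_iops = sum(sample.values())
iops_per_vm = total_iops / vm_count
overall = shares(sample)

# Restrict to the differencing disks to get their read/write mix.
diff_only = {k: v for k, v in sample.items() if k.startswith("diff_disk")}
diff_mix = shares(diff_only)

print("total IOPS:", total_iops)
print("IOPS per VM:", round(iops_per_vm, 2))
for name, frac in overall.items():
    print(f"{name}: {frac:.1%} of all IOPS")
for name, frac in diff_mix.items():
    print(f"{name}: {frac:.1%} of diff-disk IOPS")
```

The write-heavy mix on the differencing disks is the figure to carry into storage sizing, since RAID 10 pays a penalty on writes rather than reads.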

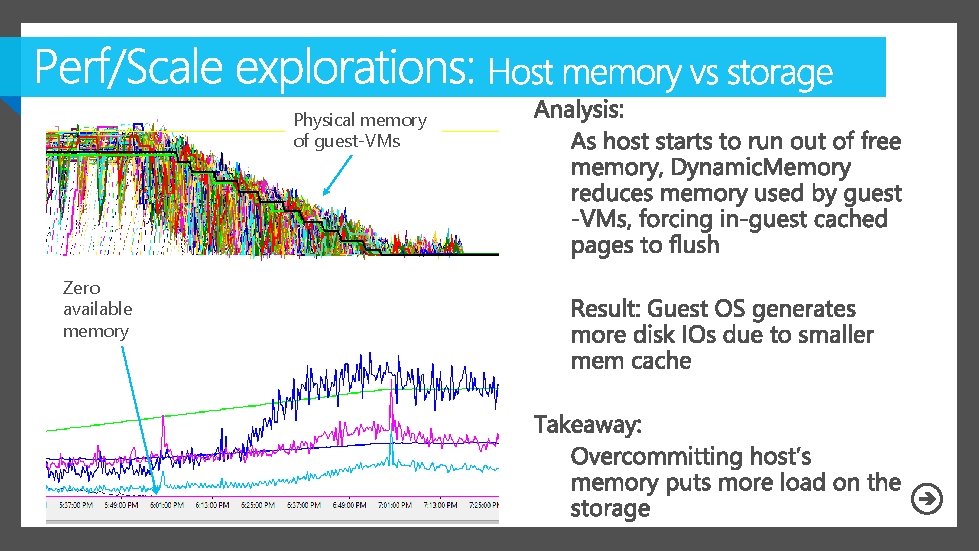

Chart labels: Physical memory of guest-VMs; Zero available memory

Host: DL585 G7, 4 x 12 cores (AMD Opt 6172), 128 GB RAM. Storage: local array, 24 x RAID 10.

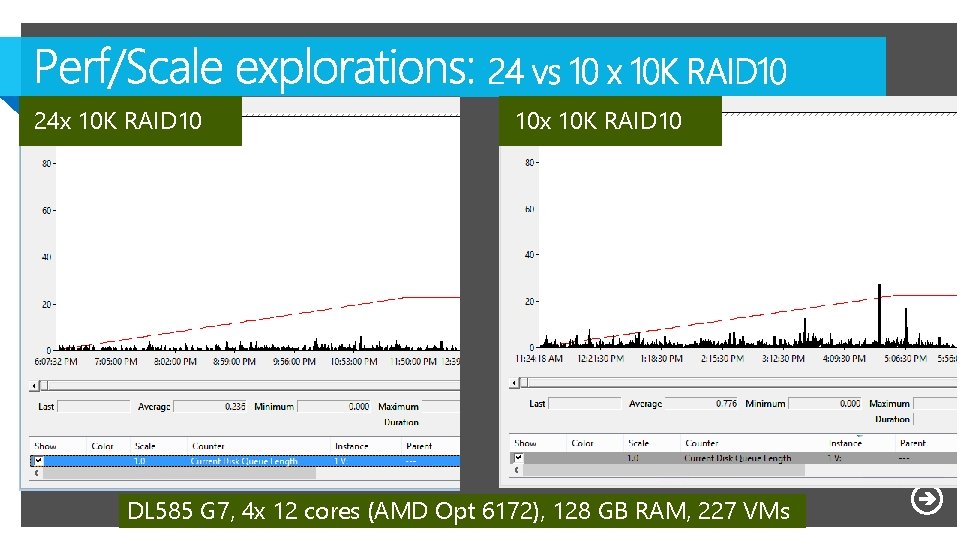

Comparison: 24 x 10K RAID 10 vs 10 x 10K RAID 10

Host: DL585 G7, 4 x 12 cores (AMD Opt 6172), 128 GB RAM, 227 VMs

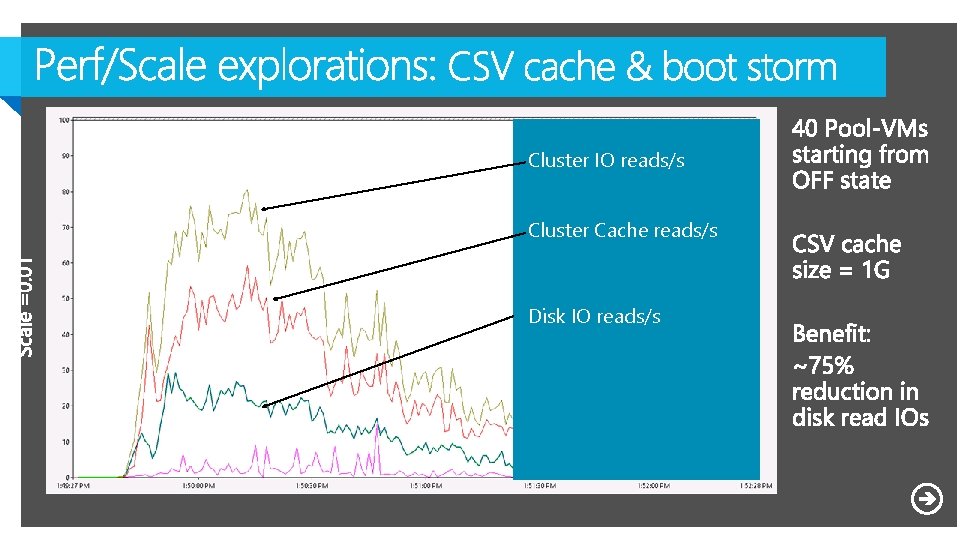

Chart series: Cluster IO reads/s, Cluster Cache reads/s, Disk IO reads/s

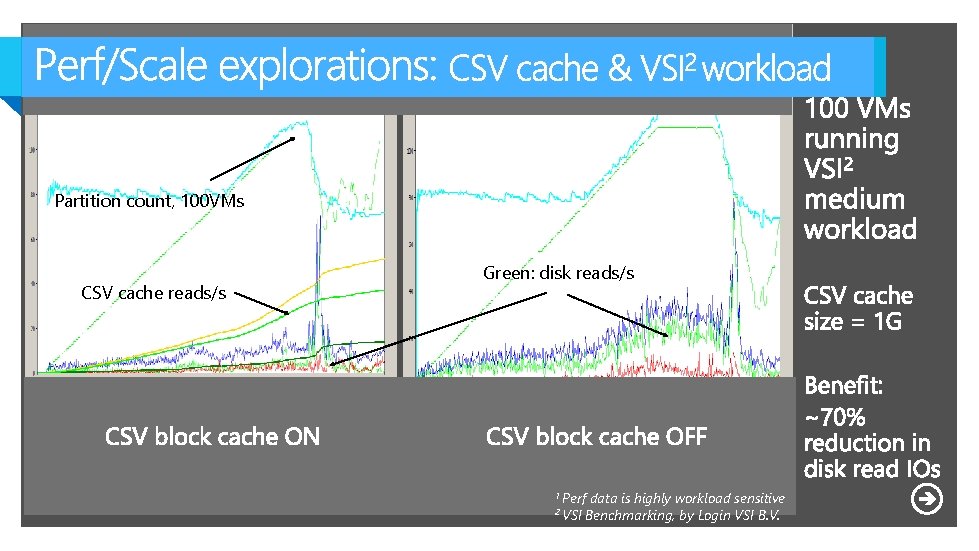

Chart: Partition count, 100 VMs

- CSV cache reads/s
- Green: disk reads/s

1. Perf data is highly workload sensitive.
2. VSI benchmarking, by Login VSI B.V.

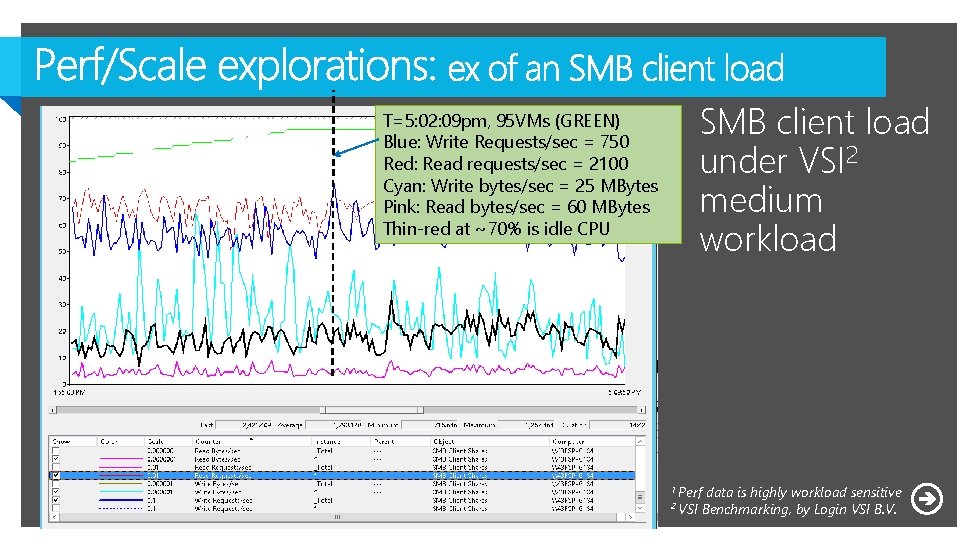

SMB client load under VSI 2 medium workload

At T=5:02:09 PM, 95 VMs (green):

- Blue: Write requests/sec = 750
- Red: Read requests/sec = 2100
- Cyan: Write bytes/sec = 25 MBytes
- Pink: Read bytes/sec = 60 MBytes
- Thin red at ~70% is idle CPU

1. Perf data is highly workload sensitive.
2. VSI benchmarking, by Login VSI B.V.

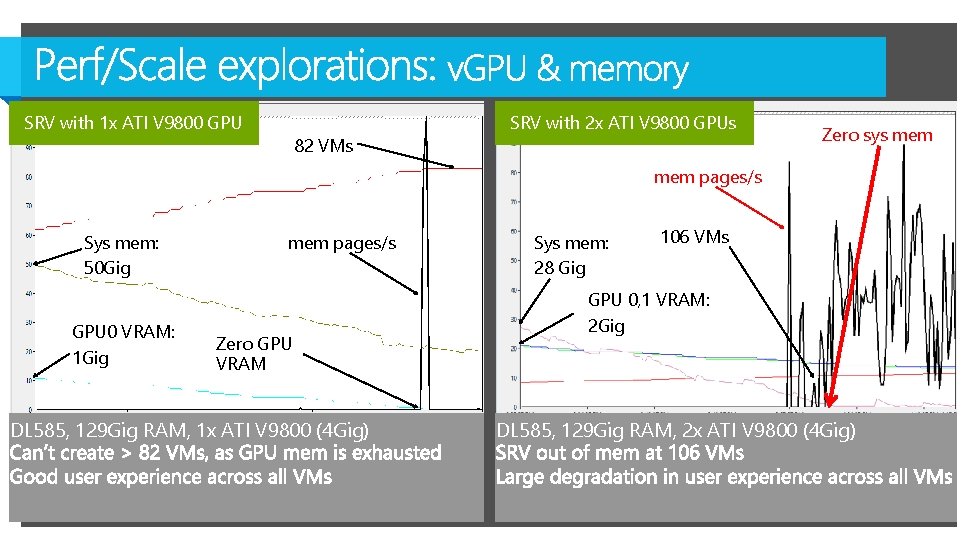

- SRV with 1 x ATI V9800 GPU (DL585, 129 Gig RAM, 1 x ATI V9800 (4 Gig)): 82 VMs; Sys mem: 50 Gig; GPU 0 VRAM: 1 Gig
- SRV with 2 x ATI V9800 GPUs (DL585, 129 Gig RAM, 2 x ATI V9800 (4 Gig)): 106 VMs; Sys mem: 28 Gig; GPU 0, 1 VRAM: 2 Gig
- Chart annotations: Zero sys mem pages/s; mem pages/s; Zero GPU VRAM

A few closing words

Session Objectives And Takeaways

Related Content

Further Reading and Info: http://blogs.msdn.com/b/rds/

Complete your session evaluations today and enter to win prizes daily. Provide your feedback at a CommNet kiosk or log on at www.2013mms.com. Upon submission you will receive instant notification if you have won a prize. Prize pickup is at the Information Desk located in Attendee Services in the Mandalay Bay Foyer. Entry details can be found on the MMS website.
