
(Semi-)Supervised Probabilistic Principal Component Analysis
Shipeng Yu
University of Munich, Germany
Siemens Corporate Technology
http://www.dbs.ifi.lmu.de/~spyu
Joint work with Kai Yu, Volker Tresp, Hans-Peter Kriegel, Mingrui Wu

Dimensionality Reduction
- We are dealing with high-dimensional data
  - Texts: e.g. bag-of-words features
  - Images: color histogram, correlogram, etc.
  - Web pages: texts, linkages, structures, etc.
- Motivations
  - Noisy features: how to remove or down-weight them?
  - Learnability: "curse of dimensionality"
  - Inefficiency: high computational cost
  - Visualization
- A pre-processing step for many data mining tasks

Unsupervised versus Supervised
- Unsupervised Dimensionality Reduction
  - Only the input data are given
  - PCA (principal component analysis)
- Supervised Dimensionality Reduction
  - Should be biased by the outputs
  - Classification: FDA (Fisher discriminant analysis)
  - Regression: PLS (partial least squares)
  - RVs: CCA (canonical correlation analysis)
- More general solutions?
- Semi-Supervised?

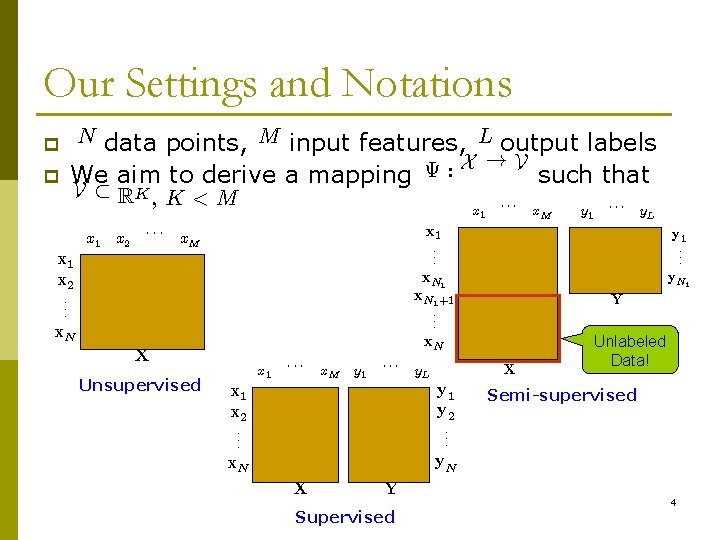

Our Settings and Notations
- $N$ data points, $M$ input features, $L$ output labels
- We aim to derive a mapping $\Psi: \mathcal{X} \to \mathcal{V}$ such that $\mathcal{V} \subset \mathbb{R}^K$, $K < M$

[Figure: data matrices for the three settings. Unsupervised: inputs $X$ ($N \times M$) only. Supervised: inputs $X$ ($N \times M$) with outputs $Y$ ($N \times L$). Semi-supervised: inputs $X$ ($N \times M$) with outputs $Y$ for the first $N_1$ points only; points $x_{N_1+1}, \ldots, x_N$ are unlabeled data.]

Outline
- Principal Component Analysis
- Probabilistic PCA
- Supervised Probabilistic PCA
- Related Work
- Conclusion

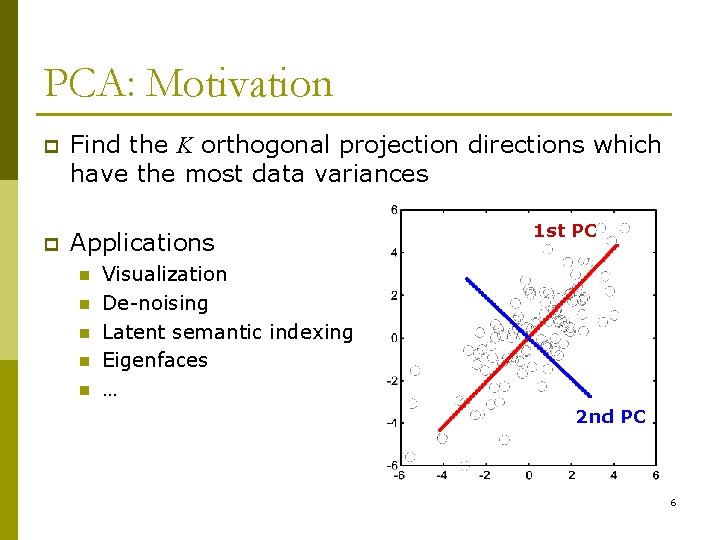

PCA: Motivation
- Find the K orthogonal projection directions that capture the most data variance
- Applications
  - Visualization
  - De-noising
  - Latent semantic indexing
  - Eigenfaces
  - …

[Figure labels: 1st PC, 2nd PC]

PCA: Algorithm
- Basic algorithm
  - Center the data: $x_i \leftarrow x_i - \bar{x}$, with $\bar{x} = \frac{1}{N} \sum_{i=1}^{N} x_i$
  - Compute the sample covariance matrix: $S = \frac{1}{N} X^\top X = \frac{1}{N} \sum_{i=1}^{N} x_i x_i^\top$
  - Do eigen-decomposition (sort eigenvalues decreasingly): $S = U \Lambda U^\top$
- PCA directions are given in $U_K$, the first $K$ columns of $U$
- The PCA projection of a test data point $x^*$ is $v^* = U_K^\top x^*$
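The three steps on this slide can be sketched in a few lines of NumPy. This is a minimal illustration, not code from the talk: the function names `pca_fit` and `pca_project` and the toy data are assumptions made for the example.

```python
import numpy as np

def pca_fit(X, K):
    """Return the data mean, the top-K directions U_K, and all sorted eigenvalues."""
    mean = X.mean(axis=0)
    Xc = X - mean                       # center the data
    S = Xc.T @ Xc / X.shape[0]          # sample covariance S = X^T X / N
    eigvals, U = np.linalg.eigh(S)      # eigh returns eigenvalues in ascending order
    order = np.argsort(eigvals)[::-1]   # re-sort in decreasing order
    return mean, U[:, order[:K]], eigvals[order]

def pca_project(X_new, mean, U_K):
    """Project test points (one per row): v* = U_K^T (x* - mean)."""
    return (X_new - mean) @ U_K

# Hypothetical toy data: three features with very different variances.
rng = np.random.default_rng(0)
X = rng.normal(size=(500, 3)) * np.array([5.0, 1.0, 0.2])
mean, U_K, eigvals = pca_fit(X, K=2)
V = pca_project(X, mean, U_K)
```

The variance of each projected coordinate equals the corresponding eigenvalue of S, which is why sorting the eigenvalues decreasingly picks the directions with the most variance.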

Supervised PCA?
- PCA is unsupervised
- When output information is available:
  - Classification labels: 0/1
  - Regression responses: real values
  - Ranking orders: rank labels / preferences
  - Multi-outputs: output dimension > 1
  - Structured outputs, …
- Can PCA be biased by outputs?
- And how?


![Latent Variable Model for PCA](http://slidetodoc.com/presentation_image/091a0167fc5f02bda1923c828b7515ea/image-10.jpg)

Latent Variable Model for PCA
- Another interpretation of PCA [Pearson 1901]
  - PCA is minimizing the reconstruction error of $X$: $\min_{A, V} \|X - VA\|_F^2$ s.t. $V^\top V = I$
  - $V \in \mathbb{R}^{N \times K}$ are latent variables: PCA projections of $X$
  - $A \in \mathbb{R}^{K \times M}$ are factor loadings: PCA mappings
  - Equivalent to PCA up to a scaling factor
- Leads to the idea of PPCA
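The reconstruction-error view can be checked numerically. The sketch below assumes the standard result that a minimizer is obtained from the truncated SVD of the centered data; the random data and variable names are illustrative only. Note that `Vt` is NumPy's name for the right singular vectors and is unrelated to the latent matrix V of the slide.

```python
import numpy as np

rng = np.random.default_rng(1)
X = rng.normal(size=(100, 6))
X = X - X.mean(axis=0)              # centered data, N x M
K = 2

U, s, Vt = np.linalg.svd(X, full_matrices=False)
V = U[:, :K]                        # latent variables, satisfies V^T V = I
A = V.T @ X                         # factor loadings, K x M
err = np.linalg.norm(X - V @ A, "fro") ** 2

# Ordinary PCA projections onto the top-K directions (rows of Vt).
pca_proj = X @ Vt[:K].T
```

Here `pca_proj` equals `V * s[:K]`, so the latent variables and the PCA projections differ only by a per-component scaling, and `err` equals the sum of the squared discarded singular values.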

![Probabilistic PCA [TipBis 99]](http://slidetodoc.com/presentation_image/091a0167fc5f02bda1923c828b7515ea/image-11.jpg)

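Probabilistic PCA [TipBis 99]
- Latent variable model: $x = W_x v + \mu_x + \epsilon_x$
  - Latent variables $v \sim \mathcal{N}(0, I)$
  - Mean vector $\mu_x$
  - Noise process $\epsilon_x \sim \mathcal{N}(0, \sigma_x^2 I)$
- Conditional independence: $x \mid v \sim \mathcal{N}(W_x v + \mu_x, \sigma_x^2 I)$
- If $\sigma_x^2 \to 0$, PPCA leads to the PCA solution (up to a rotation and scaling factor)
- $x$ is Gaussian distributed: $P(x) = \int P(x \mid v) P(v) \, dv$

[Figure: graphical model with latent variable $v$, observed $x$, parameters $W_x$ and $\sigma_x$, and a plate over the $N$ data points]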
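A small simulation makes the generative model concrete. The sketch below samples from the PPCA model with made-up parameters, compares the sample covariance with the marginal covariance W_x W_x^T + σ_x² I, and then applies the well-known closed-form maximum-likelihood estimate of Tipping and Bishop with the rotation fixed to the identity. All parameter values are assumptions chosen for illustration.

```python
import numpy as np

rng = np.random.default_rng(2)
M, K, N = 5, 2, 20000
W_true = rng.normal(size=(M, K))    # hypothetical factor loadings
mu_true = rng.normal(size=M)        # hypothetical mean vector
sigma2_true = 0.1                   # hypothetical noise variance

# Generative process: v ~ N(0, I), x = W v + mu + eps, eps ~ N(0, sigma^2 I)
V = rng.normal(size=(N, K))
X = V @ W_true.T + mu_true + np.sqrt(sigma2_true) * rng.normal(size=(N, M))

# Marginal distribution of x: N(mu, W W^T + sigma^2 I)
C_model = W_true @ W_true.T + sigma2_true * np.eye(M)
C_sample = np.cov(X, rowvar=False, bias=True)

# Closed-form ML estimate: noise variance is the mean discarded eigenvalue,
# W = U_K (Lambda_K - sigma^2 I)^{1/2} (rotation R taken as the identity).
lam, U = np.linalg.eigh(C_sample)
lam, U = lam[::-1], U[:, ::-1]      # decreasing order
sigma2_ml = lam[K:].mean()
W_ml = U[:, :K] * np.sqrt(lam[:K] - sigma2_ml)
C_fit = W_ml @ W_ml.T + sigma2_ml * np.eye(M)
```

The fitted covariance `C_fit` keeps the top K eigenvalues of the sample covariance and replaces the remaining ones by their average, which is the sense in which PPCA reduces to PCA as the noise variance goes to zero.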

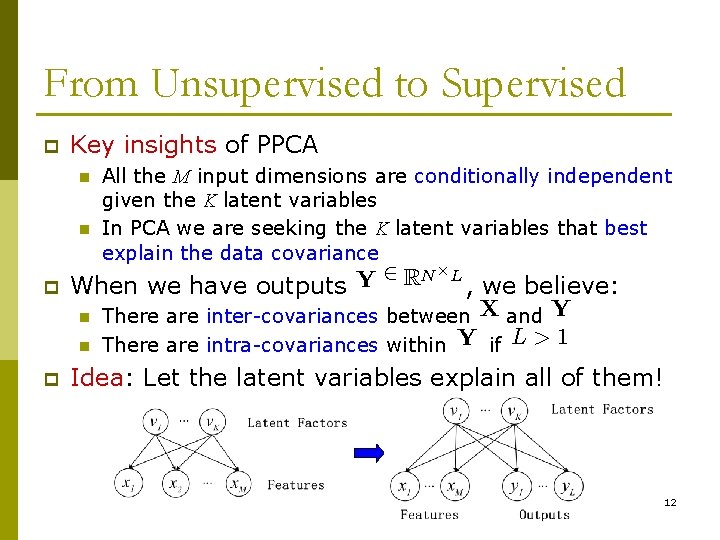

From Unsupervised to Supervised
- Key insights of PPCA
  - All the M input dimensions are conditionally independent given the K latent variables
  - In PCA we are seeking the K latent variables that best explain the data covariance
- When we have outputs $Y \in \mathbb{R}^{N \times L}$, we believe:
  - There are inter-covariances between X and Y
  - There are intra-covariances within Y if L > 1
- Idea: Let the latent variables explain all of them!


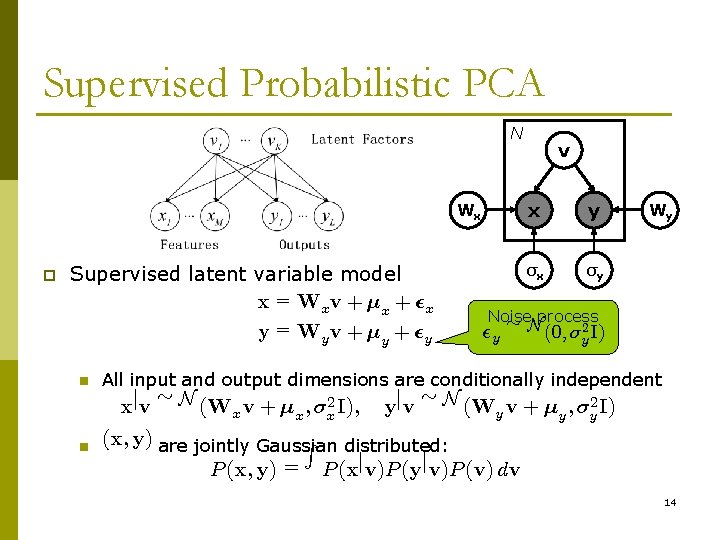

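Supervised Probabilistic PCA
- Supervised latent variable model:
  - $x = W_x v + \mu_x + \epsilon_x$
  - $y = W_y v + \mu_y + \epsilon_y$
  - Noise process $\epsilon_y \sim \mathcal{N}(0, \sigma_y^2 I)$
- All input and output dimensions are conditionally independent: $x \mid v \sim \mathcal{N}(W_x v + \mu_x, \sigma_x^2 I)$, $y \mid v \sim \mathcal{N}(W_y v + \mu_y, \sigma_y^2 I)$
- $(x, y)$ are jointly Gaussian distributed: $P(x, y) = \int P(x \mid v) P(y \mid v) P(v) \, dv$

[Figure: graphical model with latent variable $v$, observed $x$ and $y$, parameters $W_x$, $\sigma_x$, $W_y$, $\sigma_y$, and a plate over the $N$ data points]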
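Carrying out the integral over v gives the joint Gaussian explicitly. The worked form below follows from the model equations alone (v has unit covariance and the two noise terms are independent); it is written out here as a reading aid and is not taken from the slide.

```latex
\begin{pmatrix} x \\ y \end{pmatrix}
\sim \mathcal{N}\!\left(
\begin{pmatrix} \mu_x \\ \mu_y \end{pmatrix},
\begin{pmatrix}
W_x W_x^\top + \sigma_x^2 I & W_x W_y^\top \\
W_y W_x^\top & W_y W_y^\top + \sigma_y^2 I
\end{pmatrix}
\right)
```

The off-diagonal block $W_x W_y^\top$ is the inter-covariance between inputs and outputs, and $W_y W_y^\top$ carries the intra-covariance within the outputs, so the same latent variables account for both.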

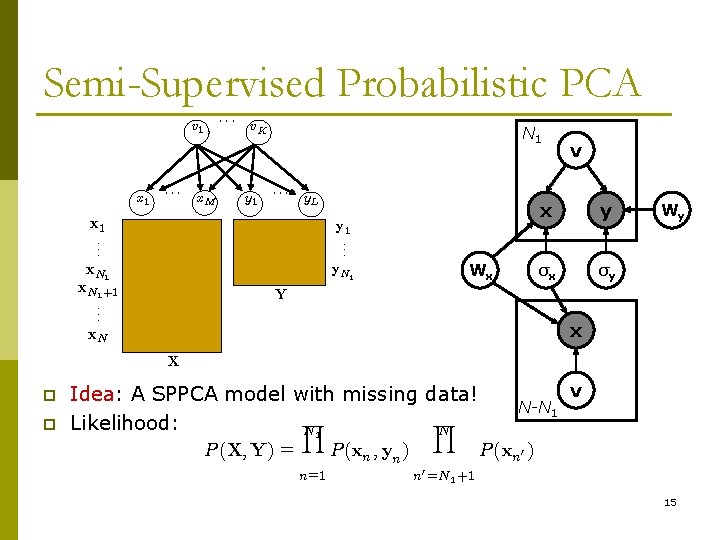

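Semi-Supervised Probabilistic PCA
- Idea: A SPPCA model with missing data!
- Likelihood: $P(X, Y) = \prod_{n=1}^{N_1} P(x_n, y_n) \prod_{n'=N_1+1}^{N} P(x_{n'})$

[Figure: graphical model with latent variables $v_1, \ldots, v_K$, inputs $x_1, \ldots, x_M$ and outputs $y_1, \ldots, y_L$, parameters $W_x$, $\sigma_x$, $W_y$, $\sigma_y$; the outputs $Y$ are observed only for the first $N_1$ of the $N$ data points in $X$]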

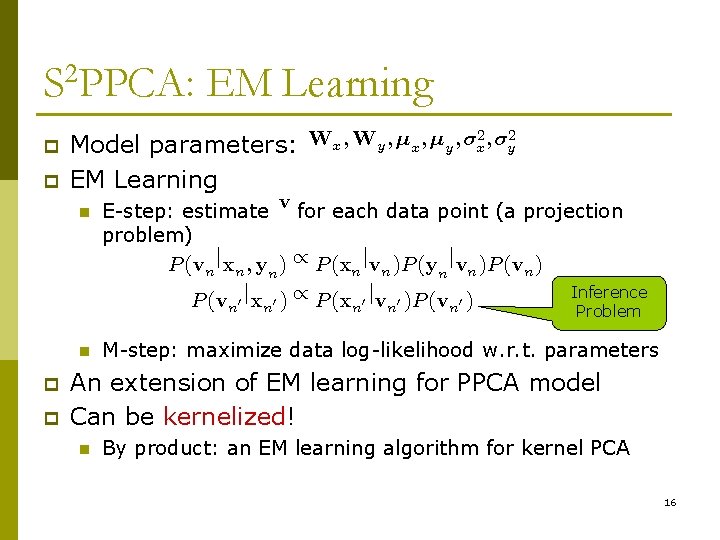

S²PPCA: EM Learning
- Model parameters: $W_x, W_y, \mu_x, \mu_y, \sigma_x^2, \sigma_y^2$
- EM Learning
  - E-step: estimate $v$ for each data point (a projection problem; this is the inference problem)
    - Labeled points: $P(v_n \mid x_n, y_n) \propto P(x_n \mid v_n) P(y_n \mid v_n) P(v_n)$
    - Unlabeled points: $P(v_{n'} \mid x_{n'}) \propto P(x_{n'} \mid v_{n'}) P(v_{n'})$
  - M-step: maximize data log-likelihood w.r.t. parameters
- An extension of EM learning for the PPCA model
- Can be kernelized!
  - By-product: an EM learning algorithm for kernel PCA
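Because every factor in the E-step is Gaussian, the posterior over v is Gaussian with a closed-form mean and covariance. The sketch below writes out that standard linear-Gaussian result; the function name `e_step_posterior`, its argument names, and the example parameters are assumptions for illustration, and the M-step updates are not shown.

```python
import numpy as np

def e_step_posterior(x, Wx, mu_x, s2x, y=None, Wy=None, mu_y=None, s2y=None):
    """Posterior mean and covariance of v given x, and also y when it is observed."""
    K = Wx.shape[1]
    precision = np.eye(K) + Wx.T @ Wx / s2x      # prior N(0, I) plus input evidence
    rhs = Wx.T @ (x - mu_x) / s2x
    if y is not None:                            # labeled point: add output evidence
        precision = precision + Wy.T @ Wy / s2y
        rhs = rhs + Wy.T @ (y - mu_y) / s2y
    cov = np.linalg.inv(precision)
    return cov @ rhs, cov

# Hypothetical parameters and one data point.
rng = np.random.default_rng(4)
M, L, K = 4, 2, 2
Wx, Wy = rng.normal(size=(M, K)), rng.normal(size=(L, K))
mu_x, mu_y = np.zeros(M), np.zeros(L)
s2x, s2y = 0.5, 0.2
x, y = rng.normal(size=M), rng.normal(size=L)

mean_labeled, cov_labeled = e_step_posterior(x, Wx, mu_x, s2x, y, Wy, mu_y, s2y)
mean_unlabeled, cov_unlabeled = e_step_posterior(x, Wx, mu_x, s2x)
```

Labeled and unlabeled points share the same code path: an unlabeled point simply contributes no output term, which is how the missing-data view of the semi-supervised model shows up in the E-step.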

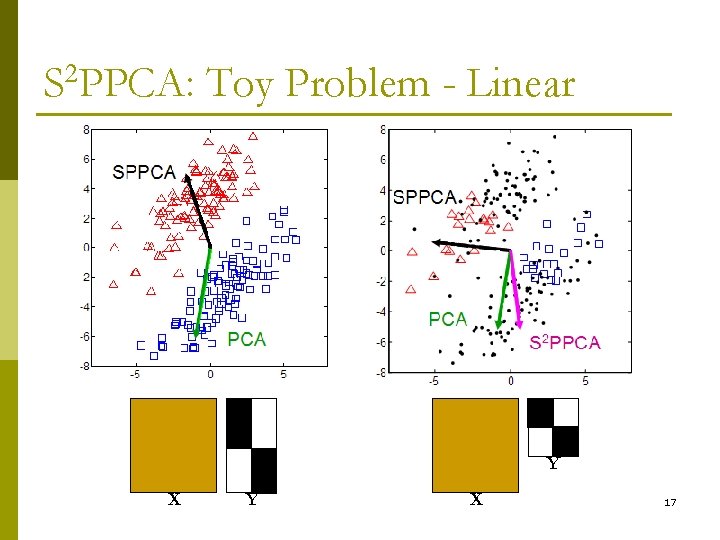

S²PPCA: Toy Problem - Linear

[Figure labels: Y, X]

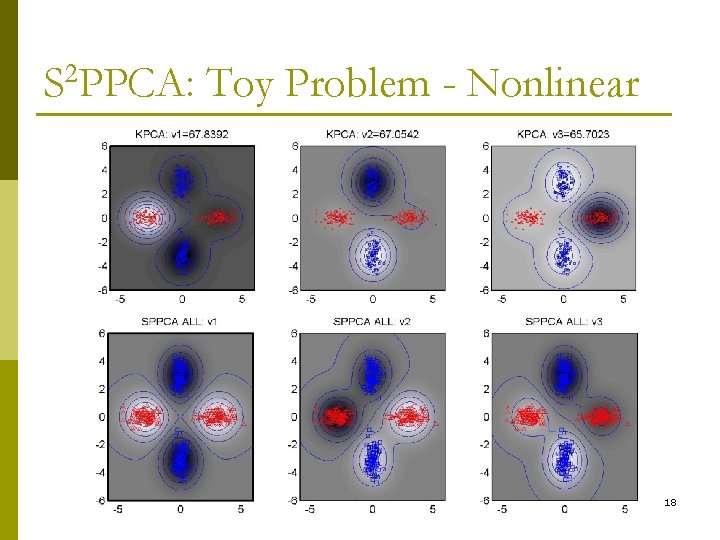

S²PPCA: Toy Problem - Nonlinear

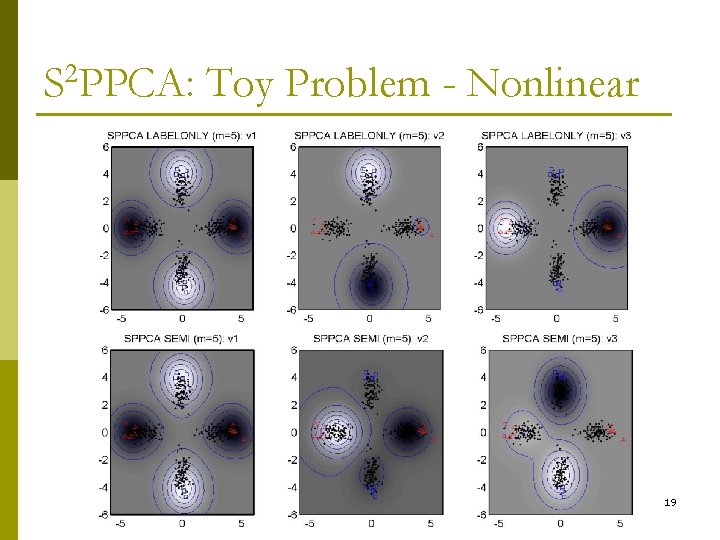

S²PPCA: Toy Problem - Nonlinear

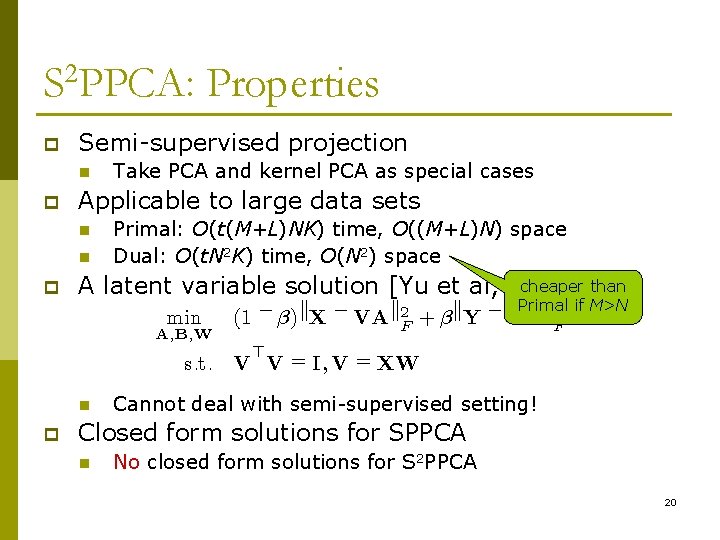

S²PPCA: Properties
- Semi-supervised projection
  - Takes PCA and kernel PCA as special cases
- Applicable to large data sets
  - Primal: $O(t(M+L)NK)$ time, $O((M+L)N)$ space
  - Dual: $O(tN^2K)$ time, $O(N^2)$ space; cheaper than Primal if $M > N$
- A latent variable solution [Yu et al, SIGIR'05]
  - $\min_{A, B, W} \; (1 - \beta) \|X - VA\|_F^2 + \beta \|Y - VB\|_F^2$ s.t. $V^\top V = I$, $V = XW$
  - Cannot deal with semi-supervised setting!
- Closed form solutions for SPPCA
  - No closed form solutions for S²PPCA

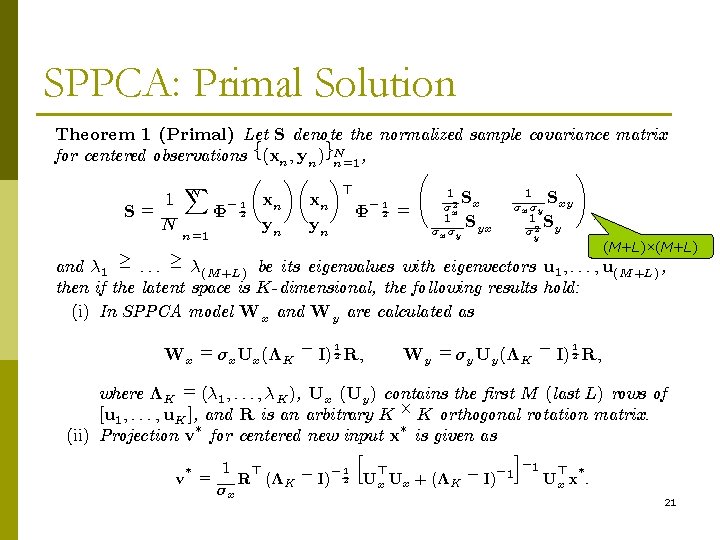

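SPPCA: Primal Solution

Theorem 1 (Primal) Let $S$ denote the normalized sample covariance matrix for centered observations $\{(x_n, y_n)\}_{n=1}^{N}$,

$$S = \frac{1}{N} \sum_{n=1}^{N} \begin{pmatrix} \frac{1}{\sigma_x} x_n \\ \frac{1}{\sigma_y} y_n \end{pmatrix} \begin{pmatrix} \frac{1}{\sigma_x} x_n \\ \frac{1}{\sigma_y} y_n \end{pmatrix}^{\top} = \begin{pmatrix} \frac{1}{\sigma_x^2} S_x & \frac{1}{\sigma_x \sigma_y} S_{xy} \\ \frac{1}{\sigma_x \sigma_y} S_{yx} & \frac{1}{\sigma_y^2} S_y \end{pmatrix} \in \mathbb{R}^{(M+L) \times (M+L)},$$

and $\lambda_1 \geq \ldots \geq \lambda_{M+L}$ be its eigenvalues with eigenvectors $u_1, \ldots, u_{M+L}$. Then, if the latent space is $K$-dimensional, the following results hold:

(i) In the SPPCA model, $W_x$ and $W_y$ are calculated as

$$W_x = \sigma_x U_x (\Lambda_K - I)^{\frac{1}{2}} R, \qquad W_y = \sigma_y U_y (\Lambda_K - I)^{\frac{1}{2}} R,$$

where $\Lambda_K = \mathrm{diag}(\lambda_1, \ldots, \lambda_K)$, $U_x$ ($U_y$) contains the first $M$ (last $L$) rows of $[u_1, \ldots, u_K]$, and $R$ is an arbitrary $K \times K$ orthogonal rotation matrix.

(ii) The projection $v^*$ for a centered new input $x^*$ is given as

$$v^* = \frac{1}{\sigma_x} R^\top (\Lambda_K - I)^{-\frac{1}{2}} \left[ U_x^\top U_x + (\Lambda_K - I)^{-1} \right]^{-1} U_x^\top x^*.$$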
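The primal theorem translates fairly directly into NumPy. The sketch below is one possible reading of it under explicit assumptions: the noise levels σ_x and σ_y are treated as known inputs, the rotation R is fixed to the identity, and the top K eigenvalues of S are required to exceed 1 so that Λ_K − I is positive. The function names and the synthetic data are hypothetical.

```python
import numpy as np

def sppca_primal(X, Y, K, sigma_x=1.0, sigma_y=1.0):
    """Closed-form SPPCA loadings from the primal theorem (R = I, sigmas given)."""
    X = X - X.mean(axis=0)
    Y = Y - Y.mean(axis=0)
    N, M = X.shape
    Z = np.hstack([X / sigma_x, Y / sigma_y])    # stacked, noise-normalized data
    S = Z.T @ Z / N                              # normalized sample covariance
    lam, U = np.linalg.eigh(S)
    order = np.argsort(lam)[::-1][:K]            # indices of the K largest eigenvalues
    lam_K, U_K = lam[order], U[:, order]
    if np.any(lam_K <= 1.0):
        raise ValueError("top-K eigenvalues must exceed 1 for (Lambda_K - I)^(1/2)")
    d = lam_K - 1.0                              # diagonal of Lambda_K - I
    Ux, Uy = U_K[:M], U_K[M:]                    # first M rows and last L rows
    Wx = sigma_x * Ux * np.sqrt(d)
    Wy = sigma_y * Uy * np.sqrt(d)
    return Wx, Wy, Ux, d

def sppca_project(X_new, Ux, d, sigma_x=1.0):
    """Part (ii): projections of centered inputs, one per row of X_new."""
    A = Ux.T @ Ux + np.diag(1.0 / d)
    V = np.linalg.solve(A, Ux.T @ X_new.T) / np.sqrt(d)[:, None] / sigma_x
    return V.T

# Hypothetical data driven by K shared latent factors.
rng = np.random.default_rng(3)
N, M, L, K = 300, 6, 2, 2
latent = rng.normal(size=(N, K))
X = latent @ rng.normal(size=(K, M)) * 3 + rng.normal(size=(N, M))
Y = latent @ rng.normal(size=(K, L)) * 3 + rng.normal(size=(N, L))

Wx, Wy, Ux, d = sppca_primal(X, Y, K)
V_star = sppca_project(X - X.mean(axis=0), Ux, d)
```

With R = I, the projection in part (ii) coincides with the posterior mean of v given x alone under the fitted model, so new inputs can be projected without their outputs.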

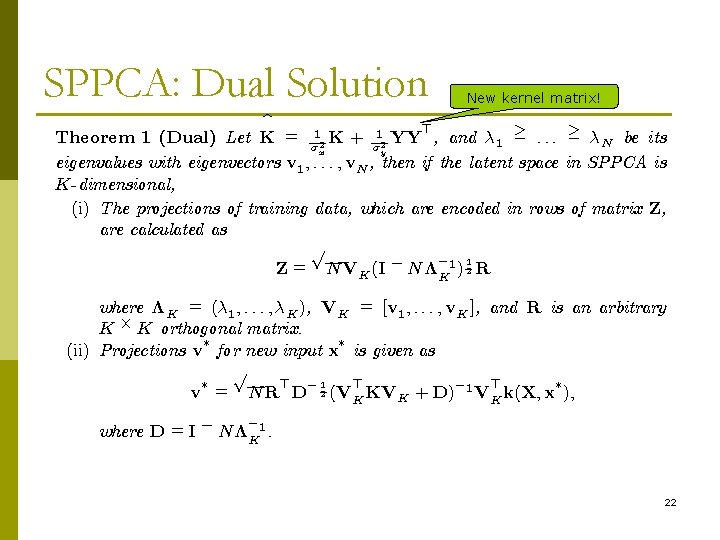

SPPCA: Dual Solution

Theorem 1 (Dual) Let $\hat{K} = \frac{1}{\sigma_x^2} K + \frac{1}{\sigma_y^2} Y Y^\top$ (a new kernel matrix!), and $\lambda_1 \geq \ldots \geq \lambda_N$ be its eigenvalues with eigenvectors $v_1, \ldots, v_N$. Then, if the latent space in SPPCA is $K$-dimensional:

(i) The projections of training data, which are encoded in rows of matrix $Z$, are calculated as

$$Z = \sqrt{N} \, V_K (I - N \Lambda_K^{-1})^{\frac{1}{2}} R,$$

where $\Lambda_K = \mathrm{diag}(\lambda_1, \ldots, \lambda_K)$, $V_K = [v_1, \ldots, v_K]$, and $R$ is an arbitrary $K \times K$ orthogonal matrix.

(ii) The projection $v^*$ for a new input $x^*$ is given as

$$v^* = \sqrt{N} \, R^\top D^{-\frac{1}{2}} (V_K^\top K V_K + D)^{-1} V_K^\top k(X, x^*),$$

where $D = I - N \Lambda_K^{-1}$.

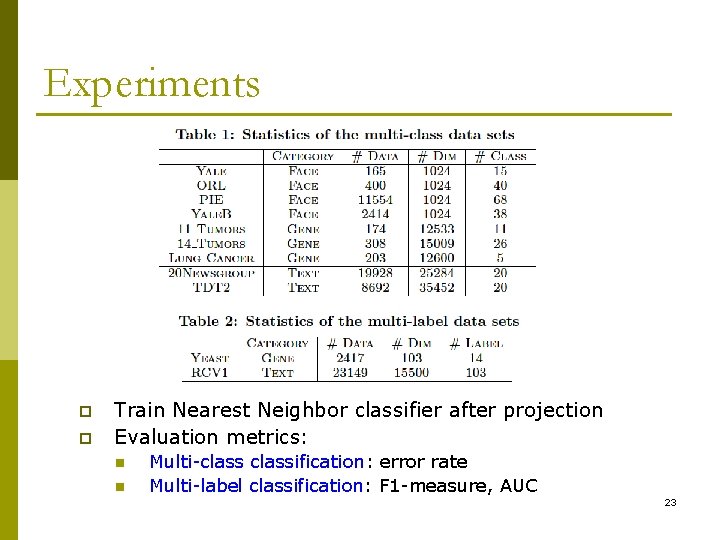

Experiments
- Train a Nearest Neighbor classifier after projection
- Evaluation metrics:
  - Multi-class classification: error rate
  - Multi-label classification: F1-measure, AUC
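The evaluation protocol for the multi-class case can be sketched as a 1-nearest-neighbor classifier applied to the projected points. The function name `nn_error_rate` and the tiny hand-made data are assumptions for illustration; the slides do not specify the distance or the number of neighbors, so Euclidean distance and a single neighbor are assumed here.

```python
import numpy as np

def nn_error_rate(V_train, labels_train, V_test, labels_test):
    """Error rate of a 1-nearest-neighbor classifier in the projected space."""
    # Squared Euclidean distance between every test and every training projection.
    d2 = ((V_test[:, None, :] - V_train[None, :, :]) ** 2).sum(axis=2)
    pred = labels_train[d2.argmin(axis=1)]       # label of the closest training point
    return float((pred != labels_test).mean())

# Hypothetical projections: two well-separated classes in a 2-D latent space.
V_train = np.array([[0.0, 0.0], [0.0, 1.0], [10.0, 10.0], [10.0, 11.0]])
labels_train = np.array([0, 0, 1, 1])
V_test = np.array([[0.2, 0.1], [9.5, 10.2]])
labels_test = np.array([0, 1])

error = nn_error_rate(V_train, labels_train, V_test, labels_test)
```

In practice `V_train` and `V_test` would be the outputs of the projection method under comparison, so the same classifier is used to judge every projection.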

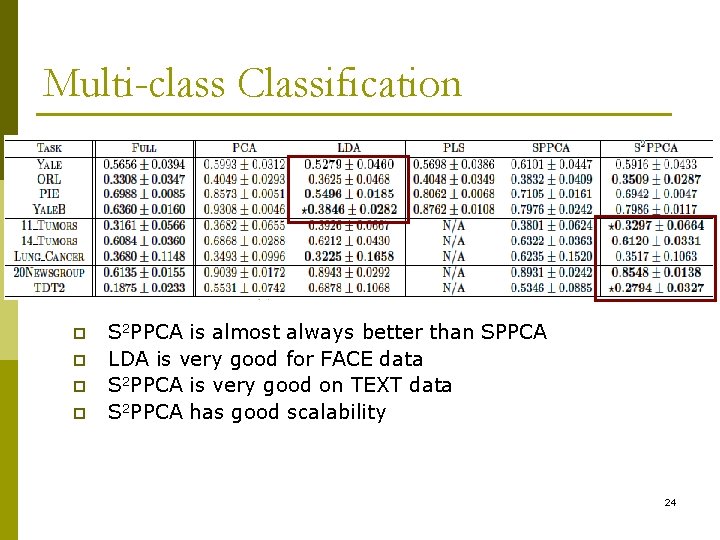

Multi-class Classification
- S²PPCA is almost always better than SPPCA
- LDA is very good for FACE data
- S²PPCA is very good on TEXT data
- S²PPCA has good scalability

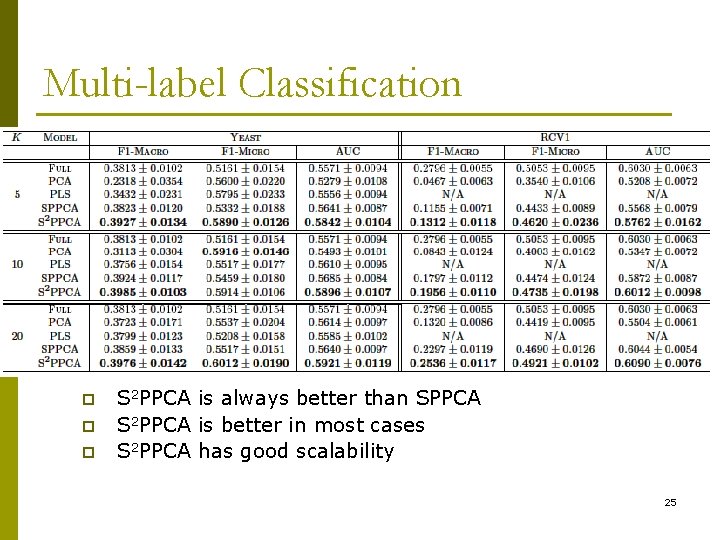

Multi-label Classification
- S²PPCA is always better than SPPCA
- S²PPCA is better in most cases
- S²PPCA has good scalability

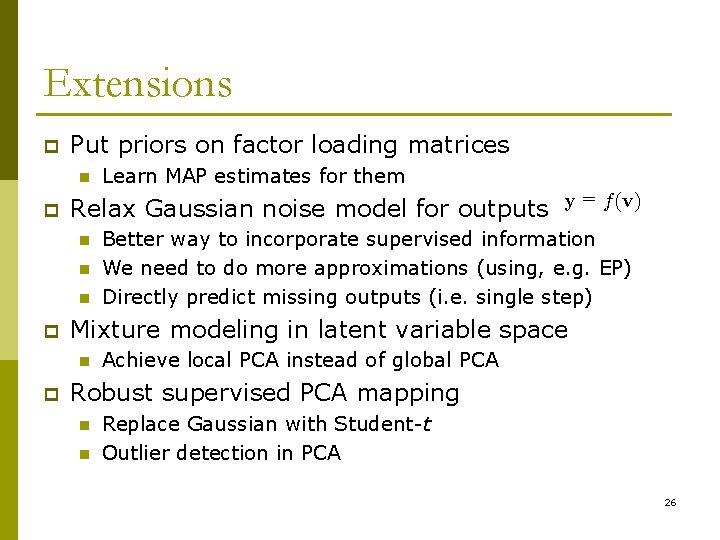

Extensions
- Put priors on factor loading matrices
  - Learn MAP estimates for them
- Relax Gaussian noise model for outputs: $y = f(v)$
  - Better way to incorporate supervised information
  - We need to do more approximations (using, e.g., EP)
  - Directly predict missing outputs (i.e. single step)
- Mixture modeling in latent variable space
  - Achieve local PCA instead of global PCA
- Robust supervised PCA mapping
  - Replace Gaussian with Student-t
  - Outlier detection in PCA

Related Work
- Fisher discriminant analysis (FDA)
  - Goal: Find directions to maximize between-class distance while minimizing within-class distance
  - Only deals with outputs of multi-class classification
  - Limited number of projection dimensions
- Canonical correlation analysis (CCA)
  - Goal: Maximize the correlation between inputs and outputs
  - Ignores intra-covariance of both inputs and outputs
- Partial least squares (PLS)
  - Goal: Sequentially find orthogonal directions to maximize covariance with respect to outputs
  - A penalized CCA; poor generalization on new output dimensions

Conclusion
- A general framework for (semi-)supervised dimensionality reduction
- We can solve the problem analytically (EIG) or via iterative algorithms (EM)
- Trade-off
  - EIG: optimization-based, easily extended to other loss
  - EM: semi-supervised projection, good scalability
- Both algorithms can be kernelized
- PCA and kernel PCA are special cases
- More applications expected

Thank you! Questions?
http://www.dbs.ifi.lmu.de/~spyu
