
Semi-Supervised learning Mining the Web Chakrabarti & Ramakrishnan

Need for an intermediate approach
§ Unsupervised and supervised learning
  • Two extreme learning paradigms
  • Unsupervised learning
    ◦ collection of documents without any labels
    ◦ easy to collect
  • Supervised learning
    ◦ each object tagged with a class
    ◦ laborious job
§ Semi-supervised learning
  • Real-life applications are somewhere in between.

Motivation
§ Document collection D
§ A subset DK of D (with |DK| << |D|) has known labels
§ Goal: to label the rest of the collection, DU.
§ Approach
  • Train a supervised learner using DK, the labeled subset.
  • Apply the trained learner on the remaining documents.
§ Idea
  • Harness information in DU to enable better learning.

The Challenge
§ Unsupervised portion of the corpus, DU, adds to
  • Vocabulary
  • Knowledge about the joint distribution of terms
  • Unsupervised measures of inter-document similarity
    ◦ E.g.: site name, directory path, hyperlinks
§ Put together multiple sources of evidence of similarity and class membership into a label-learning system.
  • Combine different features with partial supervision

Hard Classification
§ Train a supervised learner on the available labeled data
§ Label all documents in DU
§ Retrain the classifier using the new labels for documents where the classifier was most confident
§ Continue until the labels do not change any more.
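The loop on this slide can be sketched as follows. This is a minimal sketch, not the authors' implementation: it assumes a small multinomial naive Bayes model as the supervised learner, and the names `train_nb`, `predict`, `self_train`, the confidence `threshold`, and the toy documents are all illustrative assumptions.

```python
import math
from collections import Counter, defaultdict

def train_nb(docs, labels):
    """Collect multinomial naive Bayes counts; docs are lists of tokens."""
    vocab = {t for d in docs for t in d}
    term_counts = defaultdict(Counter)
    for d, c in zip(docs, labels):
        term_counts[c].update(d)
    return vocab, term_counts, Counter(labels)

def predict(model, doc):
    """Return (most likely class, its posterior probability)."""
    vocab, term_counts, class_counts = model
    n_docs = sum(class_counts.values())
    logp = {}
    for c, n_c in class_counts.items():
        denom = sum(term_counts[c].values()) + len(vocab)  # Laplace smoothing
        logp[c] = math.log(n_c / n_docs) + sum(
            math.log((term_counts[c][t] + 1) / denom) for t in doc if t in vocab)
    top = max(logp.values())
    z = sum(math.exp(v - top) for v in logp.values())
    best = max(logp, key=logp.get)
    return best, math.exp(logp[best] - top) / z

def self_train(labeled, unlabeled, threshold=0.6, max_rounds=20):
    """Hard classification: retrain on confident guesses until labels settle."""
    docs_k = [d for d, _ in labeled]
    labels_k = [c for _, c in labeled]
    guesses = [None] * len(unlabeled)          # None = not confident yet
    for _ in range(max_rounds):
        extra = [(d, g) for d, g in zip(unlabeled, guesses) if g is not None]
        model = train_nb(docs_k + [d for d, _ in extra],
                         labels_k + [g for _, g in extra])
        new = []
        for d in unlabeled:
            c, p = predict(model, d)
            new.append(c if p >= threshold else None)
        if new == guesses:                     # labels no longer change
            break
        guesses = new
    return [predict(model, d)[0] for d in unlabeled]

# Toy data (assumed): two labeled documents, three unlabeled ones.
labeled = [(["goal", "football"], "sport"), (["election", "vote"], "politics")]
unlabeled = [["football", "match"], ["vote", "parliament"], ["match", "goal"]]
labels = self_train(labeled, unlabeled)
```

The threshold stands in for "most confident"; a ranking-based cutoff (top k per round) would be an equally reasonable reading of the slide.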

Expectation maximization
§ Softer variant of the previous algorithm
§ Steps
  • Set up some fixed number of clusters with some arbitrary initial distributions
  • Alternate the following steps
    ◦ Re-estimate Pr(c|d) for each cluster c and each document d, based on the current parameters of the distribution that characterizes c
    ◦ Re-estimate the parameters of the distribution for each cluster

Experiment: EM
§ Set up one cluster for each class label
§ Estimate a class-conditional distribution which includes information from D
§ Simultaneously estimate the cluster memberships of the unlabeled documents.

Experiment: EM (contd.)
§ Example:
  • EM procedure + multinomial naive Bayes text classifier
  • Laplace's law for parameter smoothing
  • For EM, unlabeled documents belong to clusters probabilistically
    ◦ Term counts weighted by the probabilities
  • Likewise, modify class priors
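The combination described above (one cluster per class, probability-weighted term counts, Laplace smoothing) can be sketched in a few lines. This is an illustrative sketch under assumptions: labeled documents keep a fixed one-hot membership, the first parameter estimate uses only the labeled documents, and the function names and toy corpus are hypothetical.

```python
import math

def m_step(docs, resp, classes, vocab):
    """Laplace-smoothed priors and term probabilities from fractional counts."""
    total_weight = sum(sum(r.values()) for r in resp)
    prior, theta = {}, {}
    for c in classes:
        class_weight = sum(r[c] for r in resp)
        prior[c] = (class_weight + 1) / (total_weight + len(classes))
        counts = dict.fromkeys(vocab, 0.0)
        for d, r in zip(docs, resp):
            for t in d:
                counts[t] += r[c]              # term count weighted by Pr(c|d)
        denom = sum(counts.values()) + len(vocab)
        theta[c] = {t: (n + 1) / denom for t, n in counts.items()}
    return prior, theta

def e_step(doc, prior, theta):
    """Pr(c|d) under the current multinomial naive Bayes parameters."""
    logp = {c: math.log(prior[c]) + sum(math.log(theta[c][t]) for t in doc)
            for c in prior}
    top = max(logp.values())
    z = sum(math.exp(v - top) for v in logp.values())
    return {c: math.exp(v - top) / z for c, v in logp.items()}

def semi_supervised_em(labeled, unlabeled, classes, rounds=10):
    docs = [d for d, _ in labeled] + unlabeled
    vocab = sorted({t for d in docs for t in d})
    fixed = [{c: 1.0 if c == y else 0.0 for c in classes} for _, y in labeled]
    # Assumption: unlabeled documents carry zero weight in the first estimate.
    resp = fixed + [{c: 0.0 for c in classes} for _ in unlabeled]
    for _ in range(rounds):
        prior, theta = m_step(docs, resp, classes, vocab)
        resp = fixed + [e_step(d, prior, theta) for d in unlabeled]
    return resp[len(labeled):]                 # soft memberships of DU

labeled = [(["football", "goal"], "sport"), (["vote", "election"], "politics")]
unlabeled = [["football", "match"], ["match", "league"], ["vote", "parliament"]]
post = semi_supervised_em(labeled, unlabeled, ["sport", "politics"])
```

In the toy run the document `["match", "league"]` shares no term with any labeled document, yet it drifts toward "sport" because "match" co-occurs with "football" in an unlabeled document; that is the kind of information the unlabeled set contributes.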

EM: Issues
§ For each labeled document d in DK, we know the class label cd
  • Question: how to use this information?
  • Will be dealt with later
§ Using the Laplace estimate instead of the ML estimate
  • Not strictly EM
  • Convergence takes place in practice

EM: Experiments
§ Take a completely labeled corpus D, and randomly select a subset as DK.
§ Also use the remaining documents, with their labels concealed, as the unlabeled set DU in the EM procedure.
§ Correct classification of a document => concealed class label = class with largest probability
§ Accuracy with unlabeled documents > accuracy without unlabeled documents
  • Keeping the labeled set the same size
§ EM beats naïve Bayes with the same size of labeled document set
  • Largest boost for small labeled sets
  • Comparable or poorer performance of EM for large labeled sets

Belief in labeled documents
§ Depending on one's faith in the initial labeling
  • Before the 1st iteration, set the class probabilities Pr(c|d) of each labeled document d to reflect that faith
  • With each iteration
    ◦ Let the class probabilities of the labeled documents `smear'

EM: Reducing belief in unlabeled documents
§ Problems due to
  • Noise in the term distribution of documents in DU
  • Mistakes in the E-step
§ Solution
  • Attenuate the contribution from documents in DU
  • Add a damping factor in the E-step for the contribution from DU

Increasing DU while holding DK fixed also shows the advantage of using large unlabeled sets in the EM-like algorithm.

EM: Reducing belief in unlabeled documents (contd.)
§ No theoretical justification
  • Accuracy is indeed influenced by the choice of the damping factor
§ What value of the damping factor to choose?
§ An intuitive recipe (to be tried)

EM: Modeling labels using many mixture components
§ There need not be a one-to-one correspondence between EM clusters and class labels.
§ Mixture modeling of
  • Term distributions of some classes
  • Especially “the negative class”
§ E.g.: for the two-class case “football” vs. “not football”
  • Documents not about “football” are actually about a variety of other things

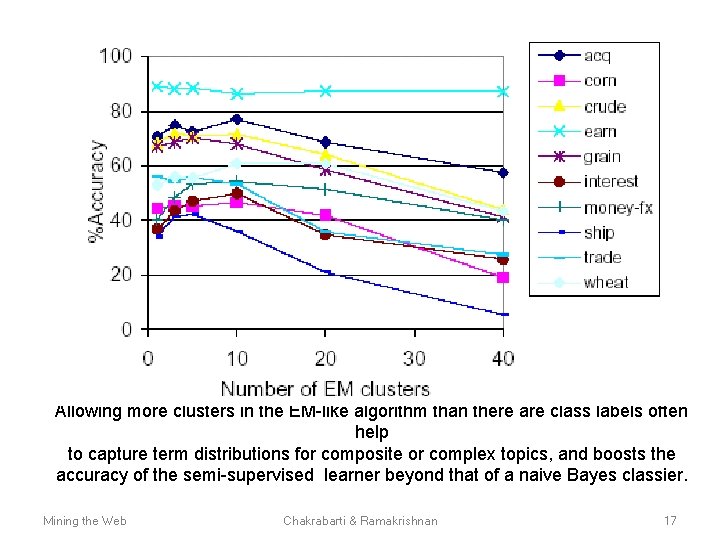

EM: Modeling labels using many mixture components
§ Experiments: comparison with naïve Bayes
  • Lower accuracy with one mixture component per label
  • Higher accuracy with more mixture components per label
  • Overfitting and degradation with too large a number of clusters

Allowing more clusters in the EM-like algorithm than there are class labels often helps to capture term distributions for composite or complex topics, and boosts the accuracy of the semi-supervised learner beyond that of a naive Bayes classifier.

Labeling hypertext graphs
§ More complex features than exploited by EM
  • Test document is cited directly by a training document, or vice versa
  • Short path between the test document and one or more training documents
  • Test document is cited by a named category in a Web directory
    ◦ Target category system could be somewhat different
  • Some category of a Web directory co-cites one or more training documents along with the test document

Labeling hypertext graphs: Scenario
§ Snapshot of the Web graph, G = (V, E)
§ Set of topics
§ Small subset of nodes VK labeled
§ Use the supervision to label some or all nodes in V - VK


Hypertext models for classification
§ c = class, t = text, N = neighbors
§ Text-only model: Pr[t|c]
§ Using neighbors' text to judge my topic: Pr[t, t(N) | c]
§ Better model: Pr[t, c(N) | c]
§ Non-linear relaxation

Absorbing features from neighboring pages
§ Page u may have little text on it to train or apply a text classifier
§ u cites some second-level pages
§ Often second-level pages have usable quantities of text
§ Question: how to use these features?

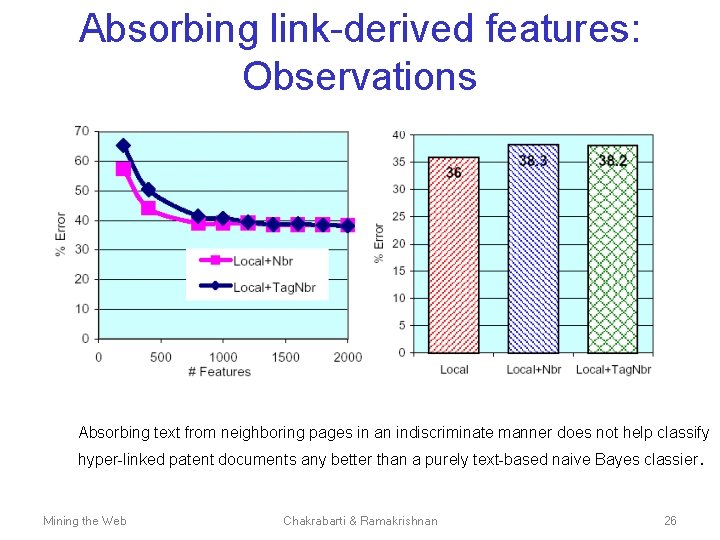

Absorbing features: indiscriminate absorption of neighborhood text
§ Does not help. At times deteriorates accuracy
§ Reason: implicit assumption
  • Topic of a page u is likely to be the same as the topic of a page cited by u.
  • Not always true
  • Topic may be “related” but not “same”
§ Distribution of topics of the pages cited could be quite distorted compared to the totality of contents available from the page itself
§ E.g.: university page with little textual content
  • Points to “how to get to our campus” or “recent sports prowess”

Absorbing link-derived features
§ Key insight 1
  • The classes of hyperlinked neighbors are a better representation of hyperlinks.
  • E.g.:
    ◦ use the fact that u points to a page about athletics to raise our belief that u is a university homepage
    ◦ learn to systematically reduce the attention we pay to the fact that a page links to the Netscape download site
§ Key insight 2
  • Class labels are from an is-a hierarchy.
    ◦ evidence at the detailed topic level may be too noisy
    ◦ coarsening the topic helps collect more reliable data on the dependence between the class of the homepage and the link-derived feature

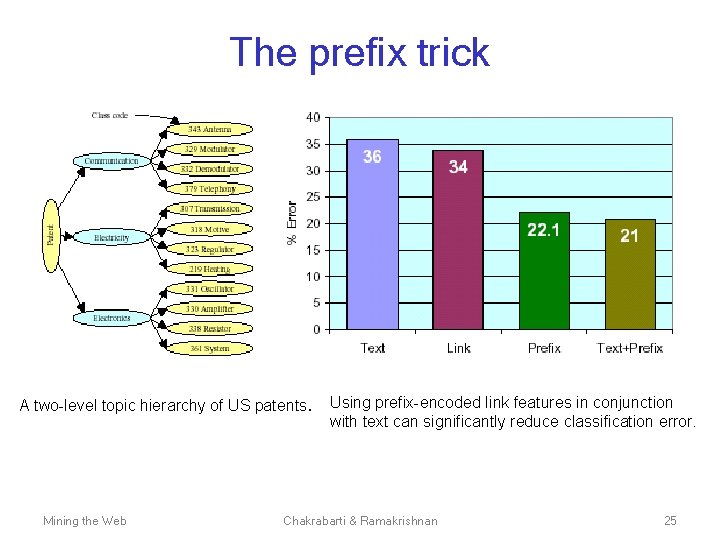

Absorbing link-derived features
§ Add all prefixes of the class path to the feature pool
§ Do feature selection to get rid of noise features
§ Experiment
  • Corpus of US patents
  • Two-level topic hierarchy
    ◦ three first-level classes
    ◦ each has four children
  • Each leaf topic has 800 documents
  • Experiment with
    ◦ Text
    ◦ Link
    ◦ Prefix
    ◦ Text+Prefix
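Adding all prefixes of a neighbor's class path can be illustrated as below. This is a sketch under assumptions: the slash-separated path syntax, the `L@` feature prefix, the helper names, and the example class names are hypothetical and are not taken from the slides.

```python
from collections import Counter

def class_path_prefixes(path, sep="/"):
    """All prefixes of a class path, from the coarsest topic to the full path."""
    parts = [p for p in path.strip(sep).split(sep) if p]
    return [sep + sep.join(parts[:i]) for i in range(1, len(parts) + 1)]

def link_features(neighbor_class_paths):
    """Count one link-derived feature per prefix of each neighbor's class path."""
    feats = Counter()
    for path in neighbor_class_paths:
        for prefix in class_path_prefixes(path):
            feats["L@" + prefix] += 1
    return feats

# A page that cites two pages from the same first-level class (assumed names).
feats = link_features(["/Electricity/Antenna", "/Electricity/Motors"])
```

Here the coarse feature `L@/Electricity` is seen twice while each leaf-level feature is seen once, which is why coarsening gives more reliable counts; feature selection can then discard whichever level turns out to be noise.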

The prefix trick
A two-level topic hierarchy of US patents.
Using prefix-encoded link features in conjunction with text can significantly reduce classification error.

Absorbing link-derived features: Observations
Absorbing text from neighboring pages in an indiscriminate manner does not help classify hyperlinked patent documents any better than a purely text-based naive Bayes classifier.

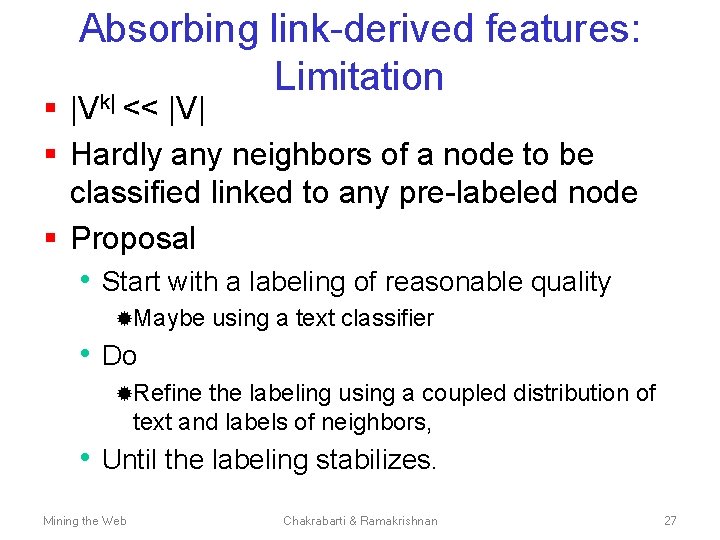

Absorbing link-derived features: Limitation
§ |VK| << |V|
§ Hardly any neighbors of a node to be classified are linked to any pre-labeled node
§ Proposal
  • Start with a labeling of reasonable quality
    ◦ Maybe using a text classifier
  • Do
    ◦ Refine the labeling using a coupled distribution of text and labels of neighbors
  • Until the labeling stabilizes.

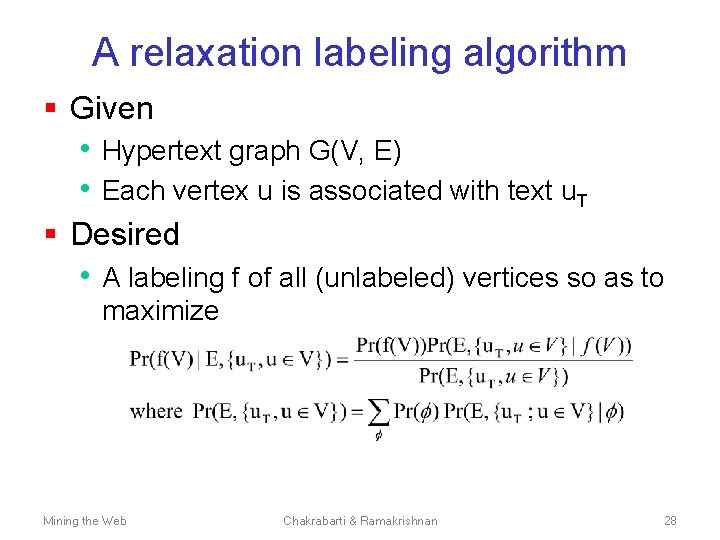

A relaxation labeling algorithm
§ Given
  • Hypertext graph G(V, E)
  • Each vertex u is associated with text u.T
§ Desired
  • A labeling f of all (unlabeled) vertices so as to maximize the probability of the labeling given the edges E and the text of every vertex

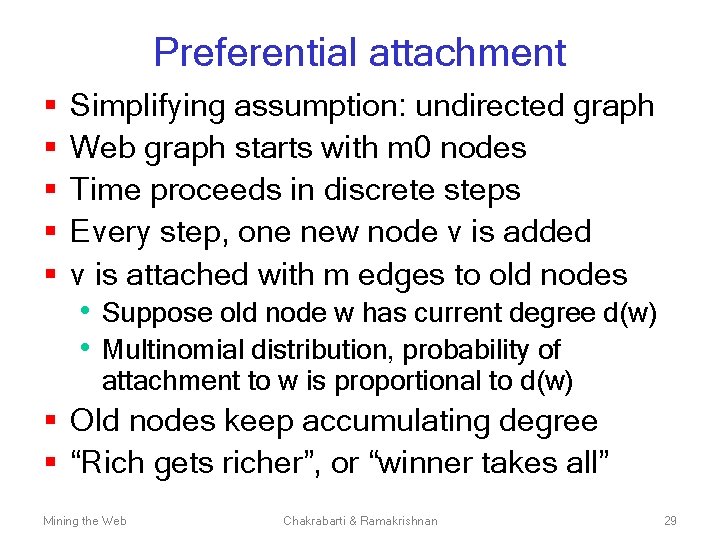

Preferential attachment
§ Simplifying assumption: undirected graph
§ Web graph starts with m0 nodes
§ Time proceeds in discrete steps
§ Every step, one new node v is added
§ v is attached with m edges to old nodes
  • Suppose old node w has current degree d(w)
  • Multinomial distribution: the probability of attachment to w is proportional to d(w)
§ Old nodes keep accumulating degree
§ “Rich gets richer”, or “winner takes all”
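The growth process above is easy to simulate. The sketch below makes two assumptions that the slide does not state: the m0 starting nodes form a clique (so every starting degree is positive), and the m targets of a new node are distinct. The function name and parameters are illustrative.

```python
import random

def preferential_attachment(n, m, m0, seed=0):
    """Grow an undirected graph to n nodes; returns the edge list."""
    if not (2 <= m0 <= n and 1 <= m <= m0):
        raise ValueError("need 2 <= m0 <= n and 1 <= m <= m0")
    rng = random.Random(seed)
    # Assumption: the m0 initial nodes are fully connected.
    edges = [(u, v) for u in range(m0) for v in range(u + 1, m0)]
    # Each node appears in `endpoints` once per incident edge, so a uniform
    # draw from this list picks node w with probability proportional to d(w).
    endpoints = [x for e in edges for x in e]
    for v in range(m0, n):
        targets = set()
        while len(targets) < m:                # assumption: distinct targets
            targets.add(rng.choice(endpoints))
        for w in sorted(targets):
            edges.append((v, w))
            endpoints.extend((v, w))
    return edges

edges = preferential_attachment(n=200, m=2, m0=3, seed=1)
degree = {}
for u, v in edges:
    degree[u] = degree.get(u, 0) + 1
    degree[v] = degree.get(v, 0) + 1
```

Sorting the degrees from such a run typically shows a few early nodes with far more edges than the rest, which is the "rich gets richer" effect.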

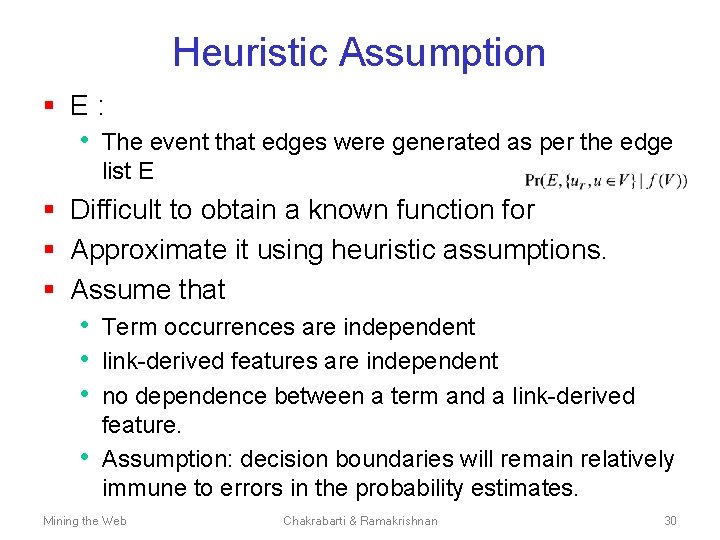

Heuristic Assumption
§ E:
  • The event that edges were generated as per the edge list E
§ Difficult to obtain a known function for the required probability
§ Approximate it using heuristic assumptions.
§ Assume that
  • Term occurrences are independent
  • Link-derived features are independent
  • There is no dependence between a term and a link-derived feature
  • Assumption: decision boundaries will remain relatively immune to errors in the probability estimates.

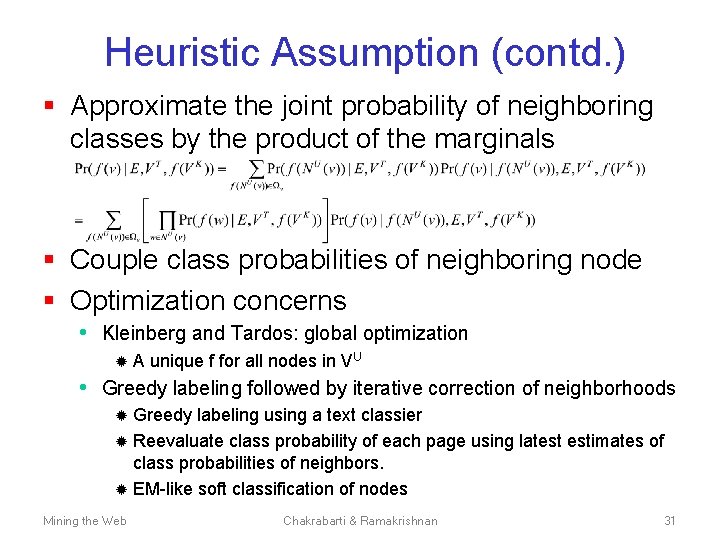

Heuristic Assumption (contd.)
§ Approximate the joint probability of neighboring classes by the product of the marginals
§ Couple class probabilities of neighboring nodes
§ Optimization concerns
  • Kleinberg and Tardos: global optimization
    ◦ A unique f for all nodes in VU
  • Greedy labeling followed by iterative correction of neighborhoods
    ◦ Greedy labeling using a text classifier
    ◦ Reevaluate the class probability of each page using the latest estimates of class probabilities of neighbors
    ◦ EM-like soft classification of nodes

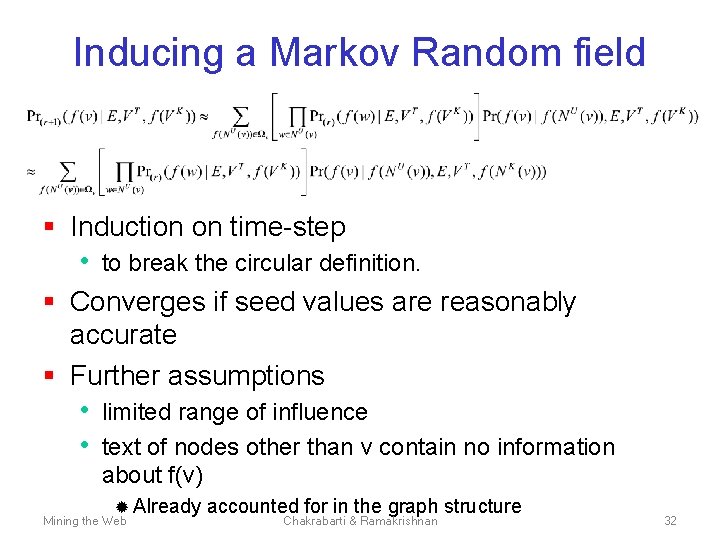

Inducing a Markov random field
§ Induction on time-step
  • to break the circular definition.
§ Converges if seed values are reasonably accurate
§ Further assumptions
  • limited range of influence
  • text of nodes other than v contains no information about f(v)
    ◦ Already accounted for in the graph structure

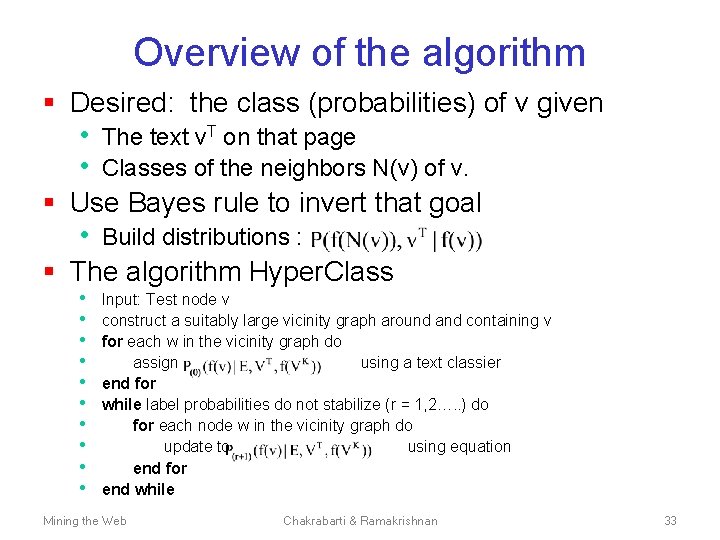

Overview of the algorithm
§ Desired: the class (probabilities) of v given
  • The text v.T on that page
  • Classes of the neighbors N(v) of v
§ Use Bayes rule to invert that goal
  • Build the class-conditional distributions that the inverted form needs
§ The algorithm HyperClass
  • Input: test node v
  • Construct a suitably large vicinity graph around and containing v
  • for each w in the vicinity graph do
    ◦ assign initial class probabilities to w using a text classifier
  • end for
  • while label probabilities do not stabilize (r = 1, 2, ...) do
    ◦ for each node w in the vicinity graph do
      - update the class probabilities of w from round r to round r+1 using the update equation
    ◦ end for
  • end while
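The iterate-until-stable structure of the algorithm can be sketched as below. The update rule here is an assumption, not the slide's equation: each round multiplies a node's text-based class probability by, for every neighbor, the expected compatibility of the classes under the neighbor's current estimate (the product-of-marginals approximation). The names `text_post`, `compat`, `clamped`, and the toy graph are hypothetical.

```python
def relaxation_labeling(graph, text_post, compat, clamped=None,
                        max_rounds=50, tol=1e-9):
    """graph: node -> list of neighbors; text_post: node -> {class: Pr(c|text)};
    compat[c][c2]: assumed probability-like compatibility of linked classes."""
    clamped = clamped or {}
    classes = list(compat)
    belief = {w: dict(text_post[w]) for w in graph}     # text-only start
    for w, c in clamped.items():                        # known labels stay fixed
        belief[w] = {k: 1.0 if k == c else 0.0 for k in classes}
    for _ in range(max_rounds):
        new = {}
        for w in graph:
            if w in clamped:
                new[w] = belief[w]
                continue
            score = {}
            for c in classes:
                s = text_post[w][c]
                for u in graph[w]:
                    # Expected compatibility with neighbor u's current estimate.
                    s *= sum(compat[c][c2] * belief[u][c2] for c2 in classes)
                score[c] = s
            z = sum(score.values())
            new[w] = {c: s / z for c, s in score.items()}
        delta = max(abs(new[w][c] - belief[w][c]) for w in graph for c in classes)
        belief = new
        if delta < tol:                                 # labels have stabilized
            break
    return belief

# Path a - b - c: a is known to be "red"; b's text weakly suggests "blue".
graph = {"a": ["b"], "b": ["a", "c"], "c": ["b"]}
text_post = {"a": {"red": 0.5, "blue": 0.5},
             "b": {"red": 0.45, "blue": 0.55},
             "c": {"red": 0.5, "blue": 0.5}}
compat = {"red": {"red": 0.8, "blue": 0.2}, "blue": {"red": 0.2, "blue": 0.8}}
belief = relaxation_labeling(graph, text_post, compat, clamped={"a": "red"})
```

In the toy run the known label on `a` overrides the weak text evidence on `b`, and the effect propagates one more hop to `c`, which has no text evidence of its own.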

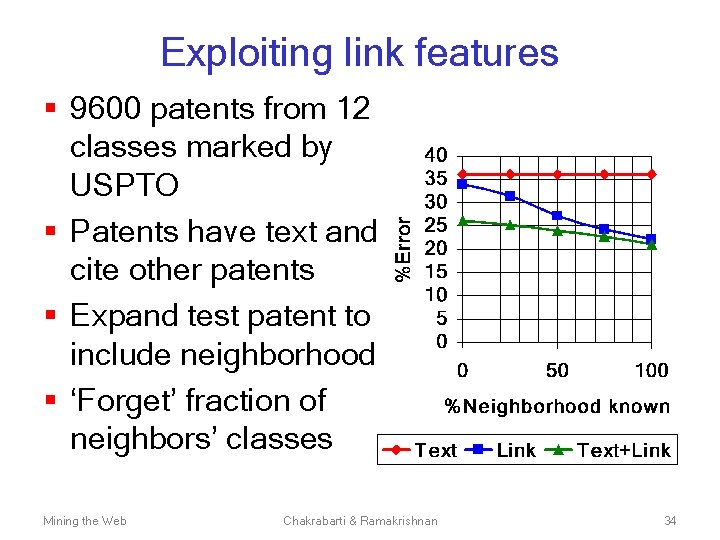

Exploiting link features
§ 9600 patents from 12 classes marked by USPTO
§ Patents have text and cite other patents
§ Expand the test patent to include its neighborhood
§ ‘Forget’ a fraction of the neighbors’ classes

Relaxation labeling: Observations
§ When the test neighborhood is completely unlabeled
  • `Link' performs better than the text-based classifier
  • Reason: model bias
    ◦ Pages tend to link to pages with a related class label.
§ Relaxation labeling
  • An approximate procedure to optimize a global objective function on the hypertext graph being labeled.
  • A metric graph-labeling problem

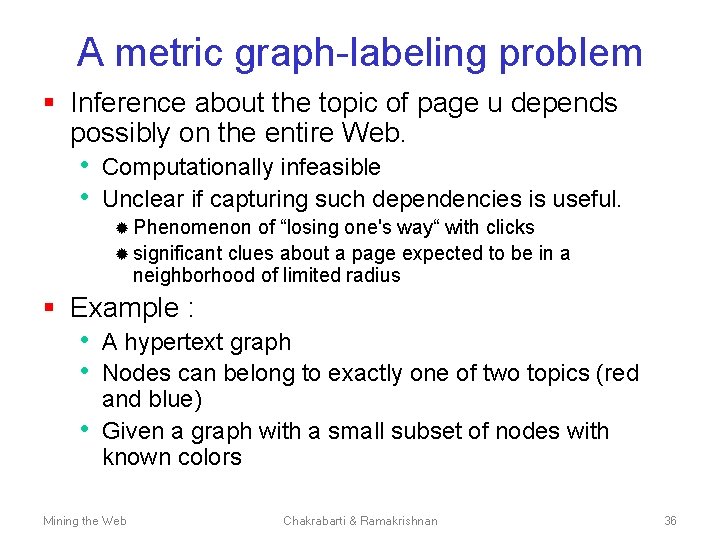

A metric graph-labeling problem
§ Inference about the topic of page u depends possibly on the entire Web.
  • Computationally infeasible
  • Unclear if capturing such dependencies is useful.
    ◦ Phenomenon of “losing one's way” with clicks
    ◦ significant clues about a page expected to be in a neighborhood of limited radius
§ Example:
  • A hypertext graph
  • Nodes can belong to exactly one of two topics (red and blue)
  • Given a graph with a small subset of nodes with known colors

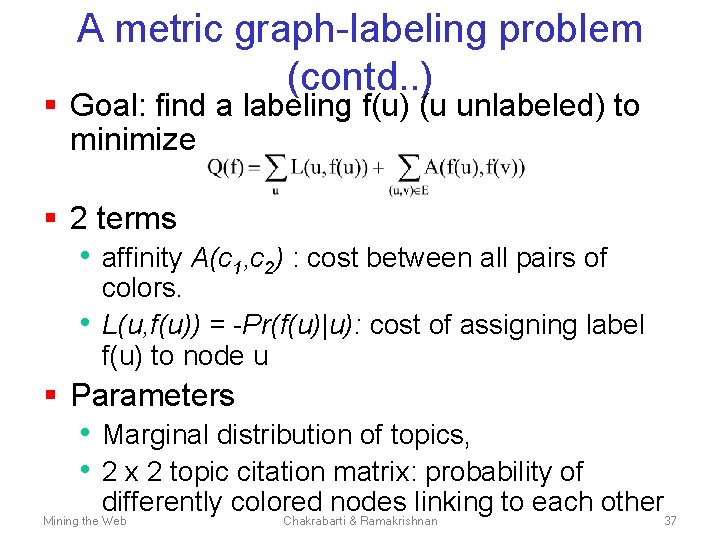

A metric graph-labeling problem (contd.)
§ Goal: find a labeling f(u) (u unlabeled) to minimize a total cost
§ 2 terms
  • affinity A(c1, c2): cost between all pairs of colors
  • L(u, f(u)) = -Pr(f(u)|u): cost of assigning label f(u) to node u
§ Parameters
  • Marginal distribution of topics
  • 2 x 2 topic citation matrix: probability of differently colored nodes linking to each other
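The two cost terms can be made concrete with a small sketch. It assumes the total cost is the sum of L(u, f(u)) over nodes plus A(f(u), f(v)) over edges, which is one natural way to combine the two terms and is not spelled out in this transcript. The exhaustive search is only for illustration on a tiny graph; the function names and numbers are hypothetical.

```python
from itertools import product

def labeling_cost(labels, edges, assign_cost, affinity):
    """Assumed objective: node assignment costs plus edge affinity costs."""
    node_cost = sum(assign_cost[u][c] for u, c in labels.items())
    edge_cost = sum(affinity[labels[u]][labels[v]] for u, v in edges)
    return node_cost + edge_cost

def best_labeling(nodes, edges, known, colors, assign_cost, affinity):
    """Try every completion of the known colors (exponential in free nodes)."""
    free = [u for u in nodes if u not in known]
    best, best_cost = None, float("inf")
    for combo in product(colors, repeat=len(free)):
        labels = {**known, **dict(zip(free, combo))}
        cost = labeling_cost(labels, edges, assign_cost, affinity)
        if cost < best_cost:
            best, best_cost = labels, cost
    return best, best_cost

nodes = ["a", "b", "c"]
edges = [("a", "b"), ("b", "c")]
# L(u, c) = -Pr(c|u), with assumed text-based probabilities.
assign_cost = {"a": {"red": -1.0, "blue": 0.0},
               "b": {"red": -0.4, "blue": -0.6},
               "c": {"red": -0.5, "blue": -0.5}}
# A(c1, c2): linking differently colored nodes costs 1 (assumed values).
affinity = {"red": {"red": 0.0, "blue": 1.0}, "blue": {"red": 1.0, "blue": 0.0}}
best, cost = best_labeling(nodes, edges, {"a": "red"}, ["red", "blue"],
                           assign_cost, affinity)
```

Node `b` would be blue on text evidence alone, but the cost of a differently colored edge to the known red node `a` outweighs that preference in this example.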

Semi-supervised hypertext classification represented as a problem of completing a partially colored graph subject to a given set of cost constraints.


A metric graph-labeling problem: NP-Completeness
§ NP-complete [Kleinberg and Tardos]
§ Approximation algorithms
  • Within an O(log k) multiplicative factor of the minimal cost, where k = number of distinct class labels.

Problems with approaches so far
§ Metric or relaxation labeling
  • Representing accurate joint distributions over thousands of terms
    ◦ High space and time complexity
§ Naïve models
  • Fast: assume class-conditional attribute independence
  • Dimensionality of textual sub-problem >> dimensionality of link sub-problem
  • Pr(v.T|f(v)) tends to be lower in magnitude than Pr(f(N(v))|f(v))
  • Hacky workaround: aggressive pruning of textual features


Co-Training [Blum and Mitchell]
§ Classifiers with disjoint feature spaces.
§ Co-training of classifiers
  • Scores used by each classifier to train the other
  • Semi-supervised EM-like training with two classifiers
§ Assumptions
  • Two sets of features per document d, dA and dB, used by learners LA and LB
  • There must be no instance d for which dA and dB imply different labels
  • Given the label, dA is conditionally independent of dB (and vice versa)

Co-training
§ Divide features into two class-conditionally independent sets
§ Use labeled data to induce two separate classifiers
§ Repeat:
  • Each classifier is “most confident” about some unlabeled instances
  • These are labeled and added to the training set of the other classifier
§ Improvements for text + hyperlinks
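The repeat loop above can be sketched with two small naive Bayes classifiers, one per feature set. This is an illustrative sketch under assumptions: each classifier hands over one instance per round, the final class is chosen by maximizing the product of the two posteriors, and all names and toy documents are hypothetical.

```python
import math
from collections import Counter, defaultdict

def train_nb(docs, labels):
    """Collect multinomial naive Bayes counts; docs are lists of tokens."""
    vocab = {t for d in docs for t in d}
    term_counts = defaultdict(Counter)
    for d, c in zip(docs, labels):
        term_counts[c].update(d)
    return vocab, term_counts, Counter(labels)

def posterior(model, doc):
    """Pr(c|doc) for every class, with Laplace-smoothed term probabilities."""
    vocab, term_counts, class_counts = model
    n_docs = sum(class_counts.values())
    logp = {}
    for c, n_c in class_counts.items():
        denom = sum(term_counts[c].values()) + len(vocab)
        logp[c] = math.log(n_c / n_docs) + sum(
            math.log((term_counts[c][t] + 1) / denom) for t in doc if t in vocab)
    top = max(logp.values())
    z = sum(math.exp(v - top) for v in logp.values())
    return {c: math.exp(v - top) / z for c, v in logp.items()}

def fit_view(train, view):
    """Train on one feature set (view 0 = dA, view 1 = dB)."""
    return train_nb([x[view] for x, _ in train], [y for _, y in train])

def co_train(labeled, unlabeled, rounds=5):
    """labeled: [((dA, dB), label)]; unlabeled: [(dA, dB)]."""
    train = [list(labeled), list(labeled)]     # training sets of A and B
    pool = list(unlabeled)
    for _ in range(rounds):
        models = [fit_view(train[v], v) for v in (0, 1)]
        for v in (0, 1):
            if not pool:
                break
            # Classifier v finds the pool instance it is most confident about.
            best_i, best_c, best_p = 0, None, -1.0
            for i, x in enumerate(pool):
                post = posterior(models[v], x[v])
                c = max(post, key=post.get)
                if post[c] > best_p:
                    best_i, best_c, best_p = i, c, post[c]
            # The instance and its predicted label go to the other classifier.
            train[1 - v].append((pool.pop(best_i), best_c))
    return fit_view(train[0], 0), fit_view(train[1], 1)

def combined_class(model_a, model_b, x):
    """Pick the class c that maximizes Pr(c|dA) * Pr(c|dB)."""
    pa, pb = posterior(model_a, x[0]), posterior(model_b, x[1])
    return max(pa, key=lambda c: pa[c] * pb[c])

labeled = [((["football", "goal"], ["sports", "news"]), "sport"),
           ((["vote", "election"], ["politics", "today"]), "politics")]
unlabeled = [(["football", "league"], ["sports", "league"]),
             (["vote", "debate"], ["politics", "debate"])]
model_a, model_b = co_train(labeled, unlabeled)
```

In the toy data, dA plays the role of the page's own words and dB the role of anchor text; the two views share no features, as the disjoint feature spaces assumption requires.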

Co-Training: Performance
§ dA = bag of words
§ dB = bag of anchor texts from HREF tags
§ Reduces the error below the levels of both LA and LB individually
§ Pick a class c by maximizing
  • Pr(c|dA) Pr(c|dB)

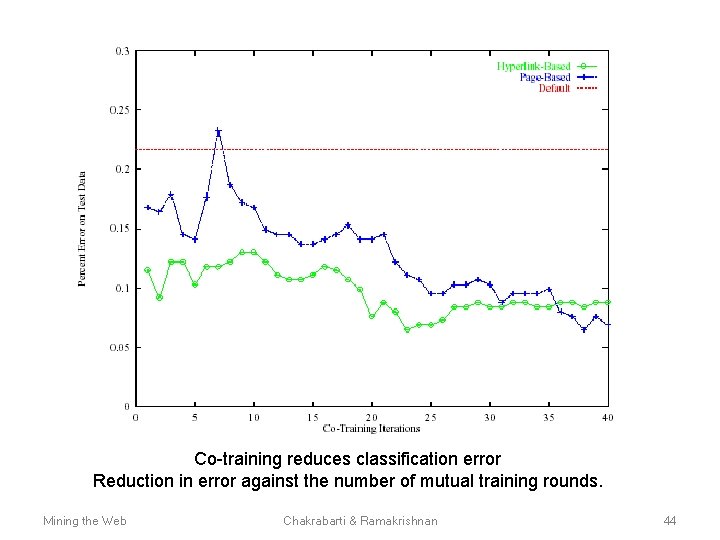

Co-training reduces classification error
Reduction in error against the number of mutual training rounds.
