Seminar in Computer Architecture, Meeting 3a: Memory Channel Partitioning

- Slides: 54

Seminar in Computer Architecture, Meeting 3a: Memory Channel Partitioning
Prof. Onur Mutlu, ETH Zürich, Fall 2020, 1 October 2020

Example Paper Presentation

We Will Briefly Review This Paper
- Sai Prashanth Muralidhara, Lavanya Subramanian, Onur Mutlu, Mahmut Kandemir, and Thomas Moscibroda, "Reducing Memory Interference in Multicore Systems via Application-Aware Memory Channel Partitioning," Proceedings of the 44th International Symposium on Microarchitecture (MICRO), Porto Alegre, Brazil, December 2011. Slides (pptx)

Application-Aware Memory Channel Partitioning
Sai Prashanth Muralidhara§, Lavanya Subramanian†, Onur Mutlu†, Mahmut Kandemir§, Thomas Moscibroda‡
§Pennsylvania State University, †Carnegie Mellon University, ‡Microsoft Research

Background, Problem & Goal

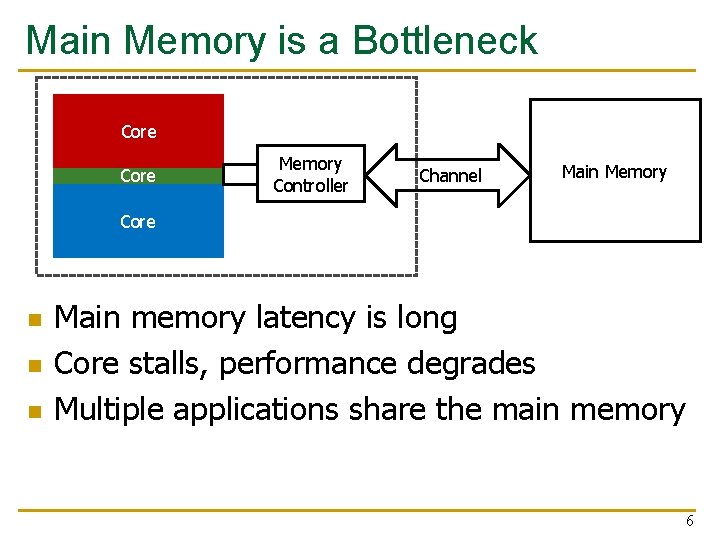

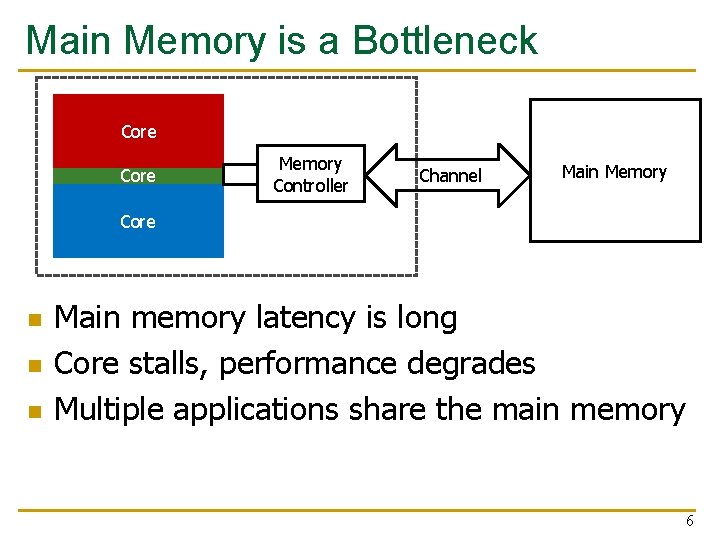

Main Memory is a Bottleneck

Figure: cores share a memory controller, which reaches main memory over a channel.

- Main memory latency is long
- Cores stall and performance degrades
- Multiple applications share the main memory

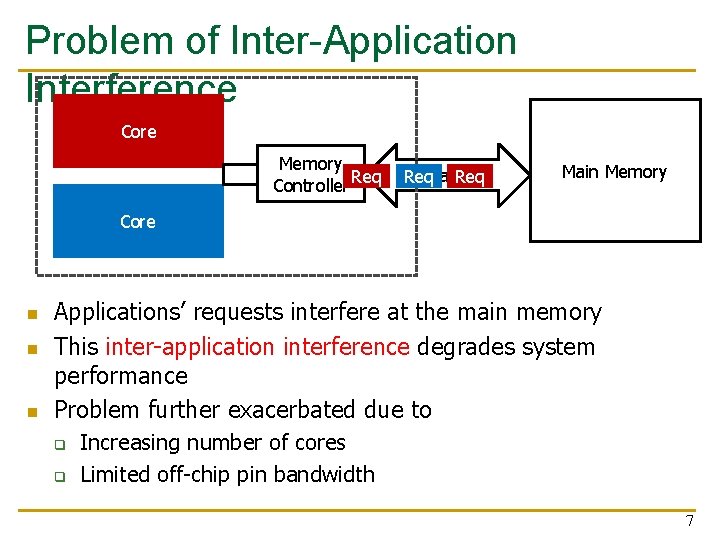

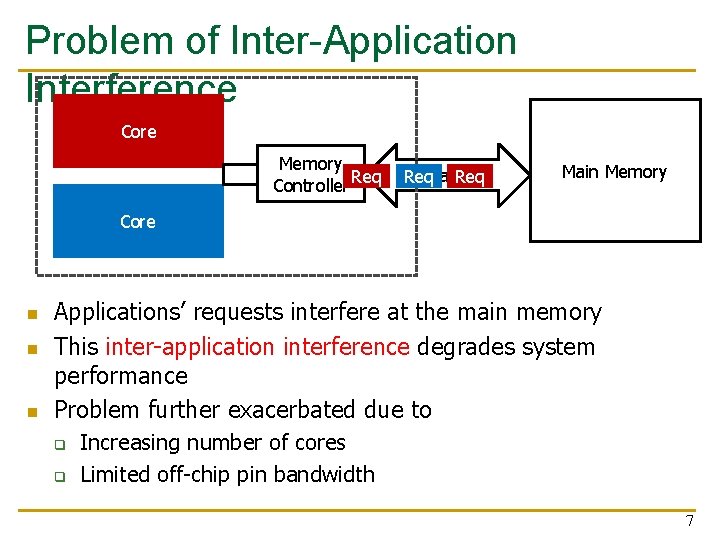

Problem of Inter-Application Interference

Figure: requests (Req) from two cores contend at the shared memory controller, channel, and main memory.

- Applications’ requests interfere at the main memory
- This inter-application interference degrades system performance
- The problem is further exacerbated by:
  - Increasing number of cores
  - Limited off-chip pin bandwidth

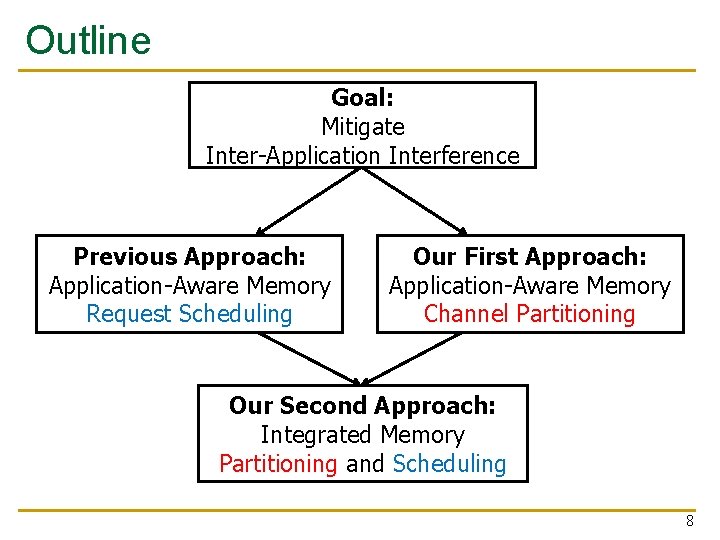

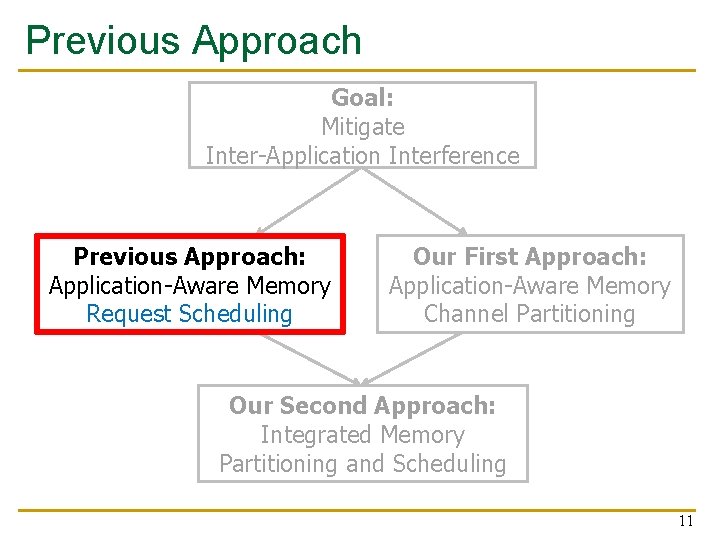

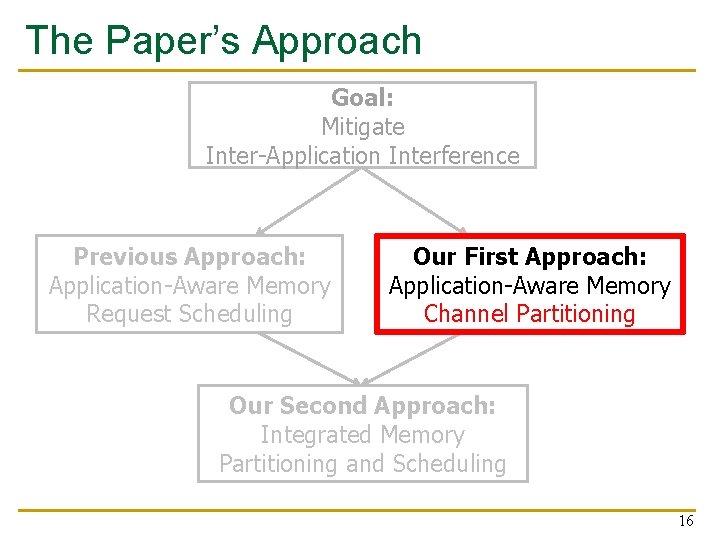

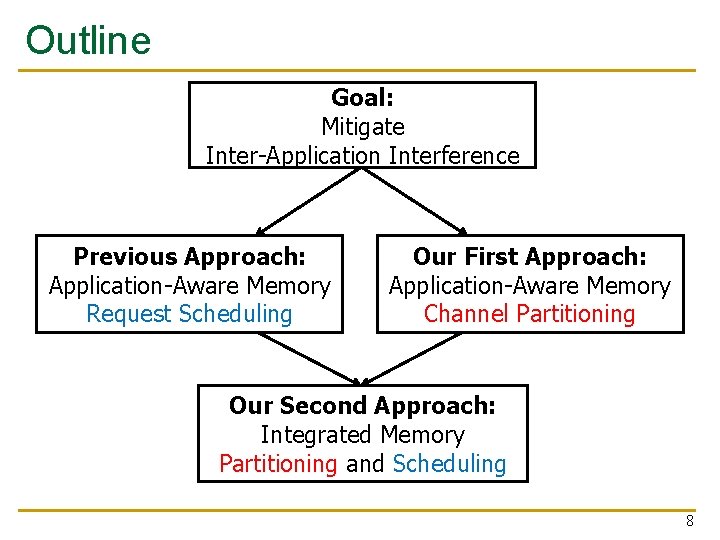

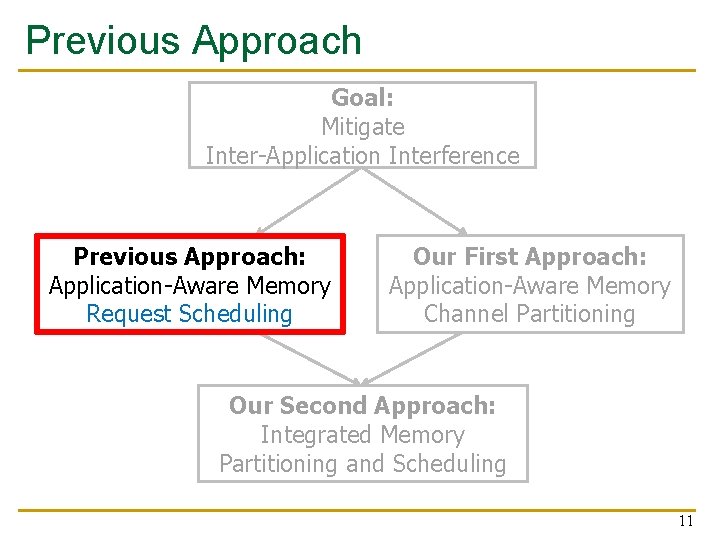

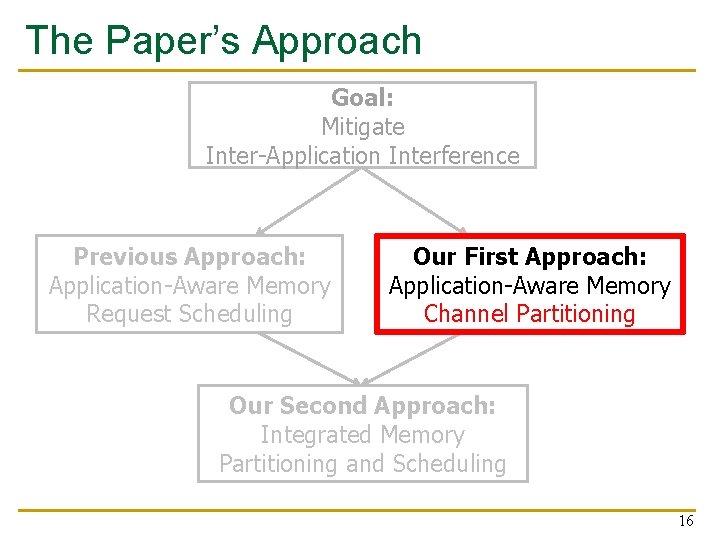

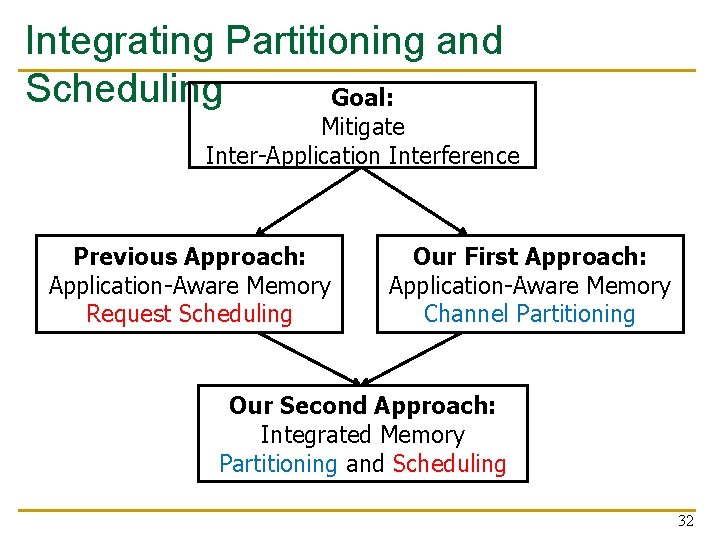

Outline
- Goal: Mitigate Inter-Application Interference
- Previous Approach: Application-Aware Memory Request Scheduling
- Our First Approach: Application-Aware Memory Channel Partitioning
- Our Second Approach: Integrated Memory Partitioning and Scheduling

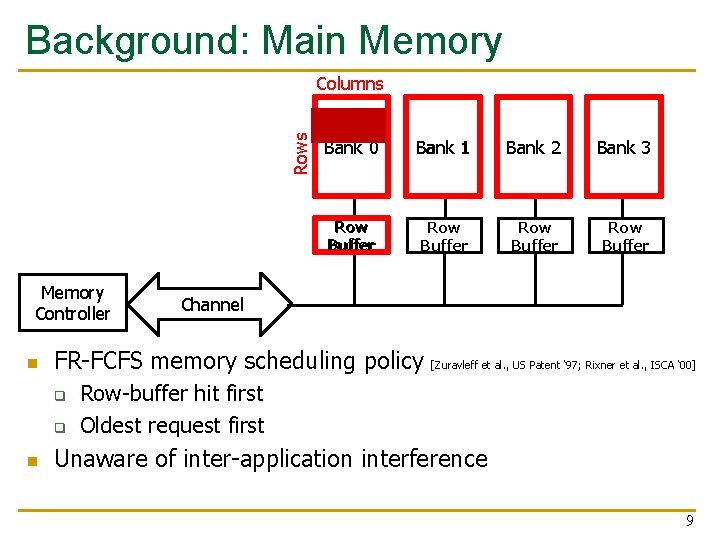

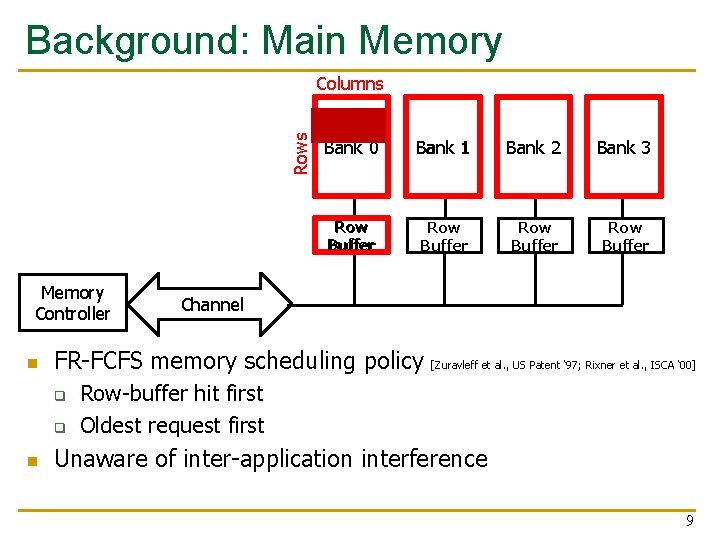

Background: Main Memory

Figure: a memory controller connected over a channel to Banks 0 to 3; each bank is organized in rows and columns and has a row buffer.

- FR-FCFS memory scheduling policy [Zuravleff et al., US Patent '97; Rixner et al., ISCA '00]
  - Row-buffer hit first
  - Oldest request first
- Unaware of inter-application interference
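A minimal Python sketch of the FR-FCFS selection rule, for illustration only: the `Request` record and the `open_rows` map (bank to currently open row) are hypothetical stand-ins for controller state, not the paper's or any simulator's interface.

```python
from dataclasses import dataclass


@dataclass
class Request:
    # Hypothetical request record held in the memory controller's queue.
    app: str      # issuing application (ignored by FR-FCFS)
    bank: int     # target bank
    row: int      # target row within the bank
    arrival: int  # arrival time; smaller means older


def fr_fcfs_pick(queue, open_rows):
    """Select the next request: row-buffer hits first, then oldest first.

    open_rows maps bank -> currently open row; a missing bank has no open row.
    """
    if not queue:
        return None

    def priority(req):
        is_hit = open_rows.get(req.bank) == req.row
        # `not is_hit` is False for hits, and False sorts before True.
        return (not is_hit, req.arrival)

    return min(queue, key=priority)


queue = [
    Request("blue", bank=0, row=7, arrival=1),  # oldest, but a row-buffer miss
    Request("red", bank=0, row=3, arrival=2),   # hits the open row 3 in bank 0
    Request("red", bank=1, row=5, arrival=3),   # bank 1 has no open row
]
print(fr_fcfs_pick(queue, open_rows={0: 3}))  # the red row hit (arrival=2) wins
```

The sort key never looks at `app`, which is why FR-FCFS is unaware of inter-application interference: a stream of row hits from one application can keep delaying an older request from another.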

Novelty

Previous Approach: Application-Aware Memory Request Scheduling

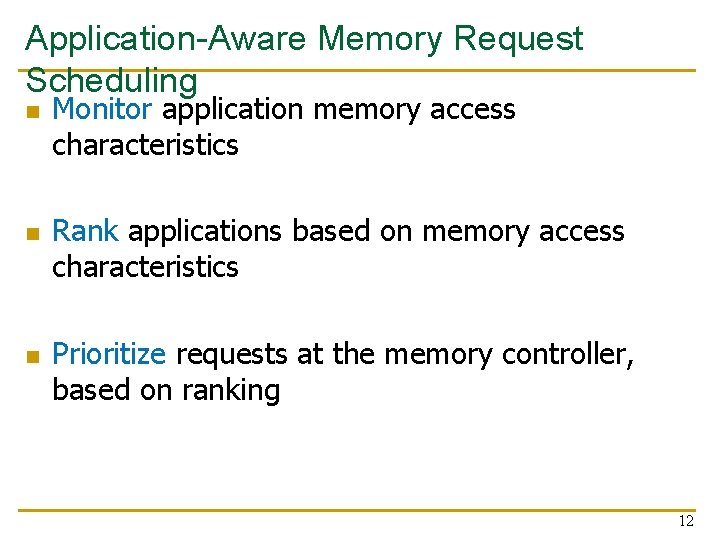

Application-Aware Memory Request Scheduling
- Monitor application memory access characteristics
- Rank applications based on memory access characteristics
- Prioritize requests at the memory controller, based on ranking
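The three steps can be sketched as follows. This is a generic illustration, not any specific published scheduler: the ranking rule (lower memory intensity, measured as last-level cache misses per kilo-instruction or MPKI, ranks higher) is an assumption in the spirit of the intensity-based schedulers discussed later, and the `Request` record is hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Request:
    # Hypothetical request record held in the memory controller's queue.
    app: str
    bank: int
    row: int
    arrival: int  # smaller means older


def rank_applications(mpki):
    """Rank applications by monitored memory intensity (assumed rule).

    mpki maps app -> misses per kilo-instruction. Returns app -> rank,
    where rank 0 is the highest priority (least memory-intensive app).
    """
    ordered = sorted(mpki, key=lambda app: mpki[app])
    return {app: rank for rank, app in enumerate(ordered)}


def app_aware_pick(queue, open_rows, ranks):
    """Prioritize by application rank, then row-buffer hit, then age."""
    if not queue:
        return None

    def priority(req):
        is_hit = open_rows.get(req.bank) == req.row
        return (ranks[req.app], not is_hit, req.arrival)

    return min(queue, key=priority)


ranks = rank_applications({"red": 40.0, "blue": 2.0})  # {'blue': 0, 'red': 1}
queue = [
    Request("red", bank=0, row=3, arrival=1),   # row-buffer hit from red
    Request("blue", bank=1, row=9, arrival=2),  # miss, but blue ranks higher
]
print(app_aware_pick(queue, {0: 3}, ranks).app)  # blue
```

Compared with FR-FCFS, the only change to the sort key is the leading `ranks[req.app]` term, but computing and applying the ranking requires extra logic in the memory controller.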

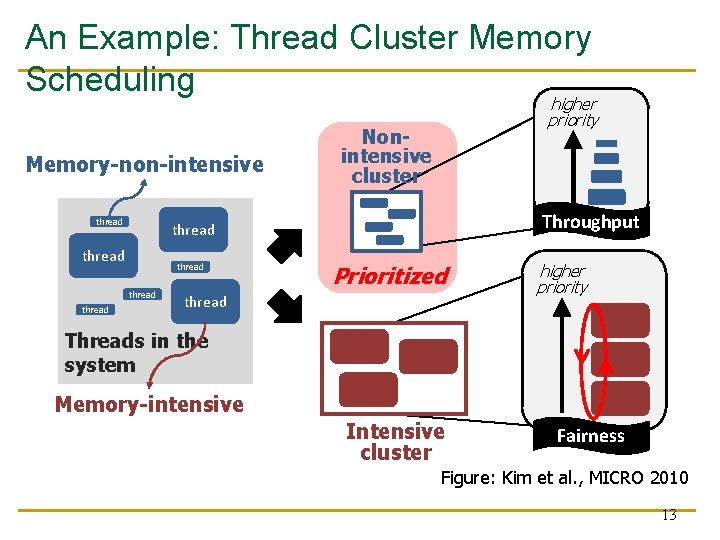

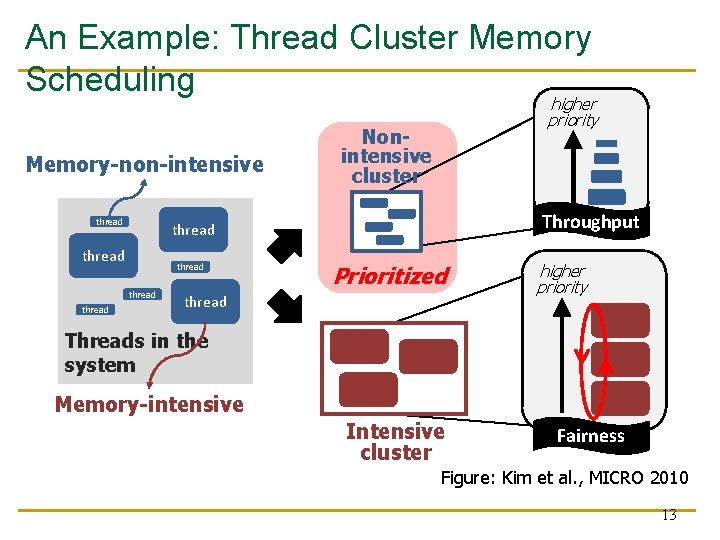

An Example: Thread Cluster Memory Scheduling

Figure (Kim et al., MICRO 2010): the threads in the system are divided into a memory-non-intensive cluster, which receives higher priority and targets throughput, and a memory-intensive cluster, which is managed for fairness.

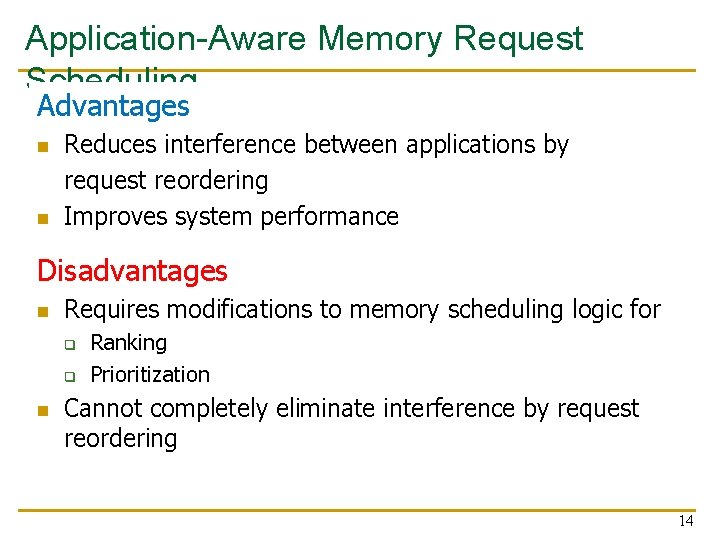

Application-Aware Memory Request Scheduling
- Advantages
  - Reduces interference between applications by request reordering
  - Improves system performance
- Disadvantages
  - Requires modifications to memory scheduling logic for
    - Ranking
    - Prioritization
  - Cannot completely eliminate interference by request reordering

Key Approach and Ideas

The Paper’s Approach: Application-Aware Memory Channel Partitioning

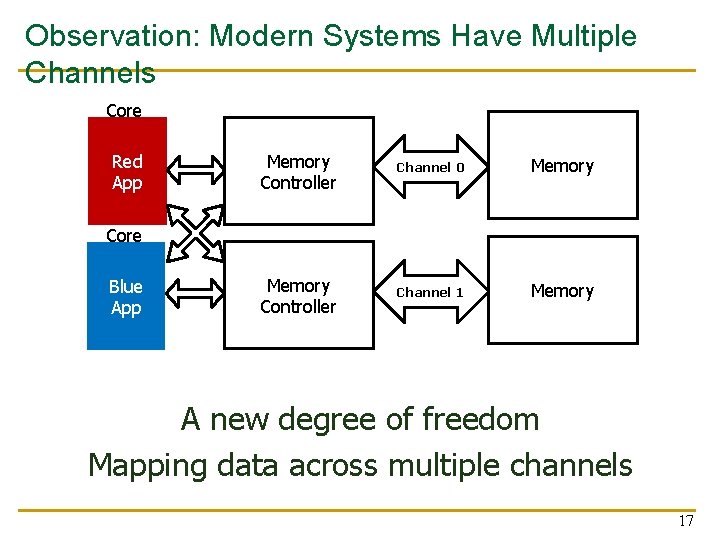

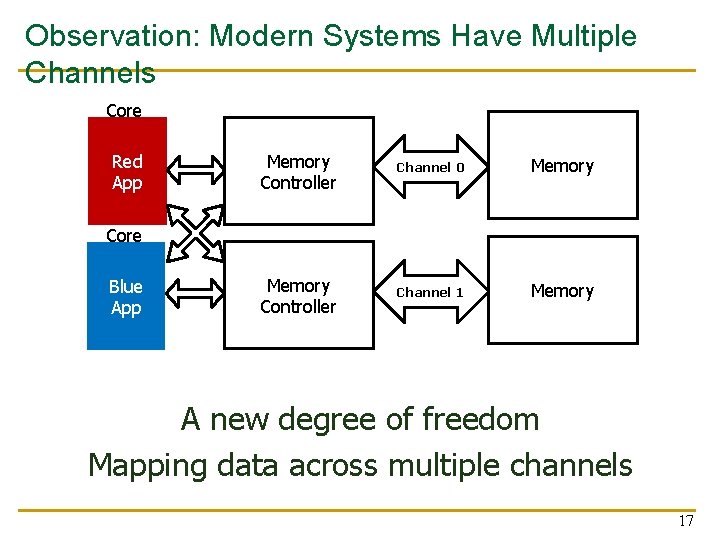

Observation: Modern Systems Have Multiple Channels

Figure: cores running a Red App and a Blue App, with two memory controllers, each driving its own channel (Channel 0 and Channel 1) to memory.

- A new degree of freedom: mapping data across multiple channels

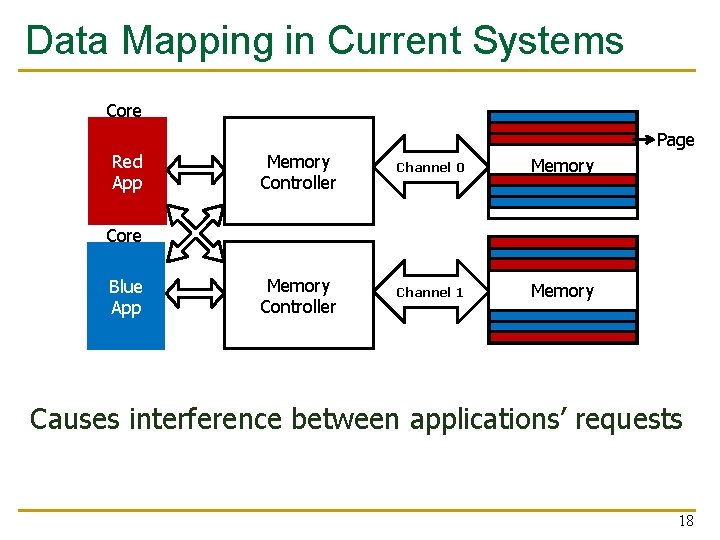

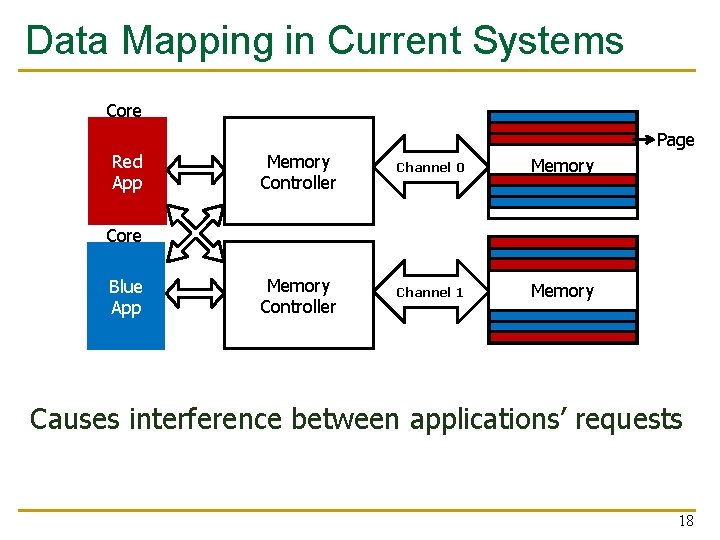

Data Mapping in Current Systems

Figure: pages of both the Red App and the Blue App are mapped to both Channel 0 and Channel 1.

- Causes interference between applications’ requests

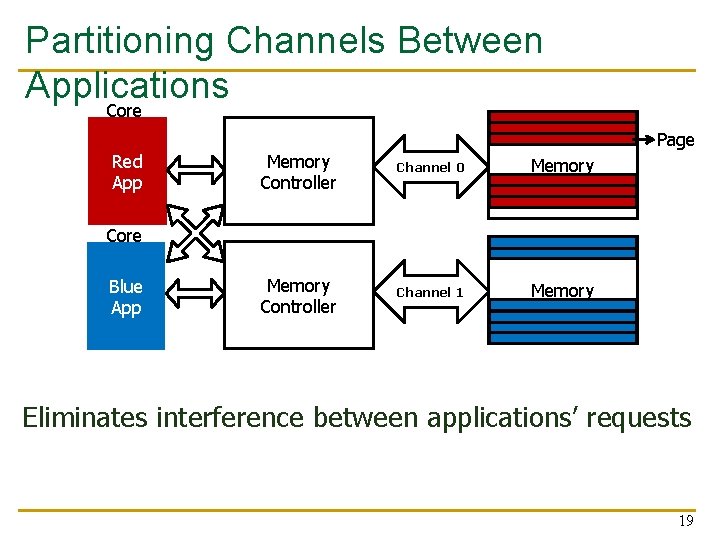

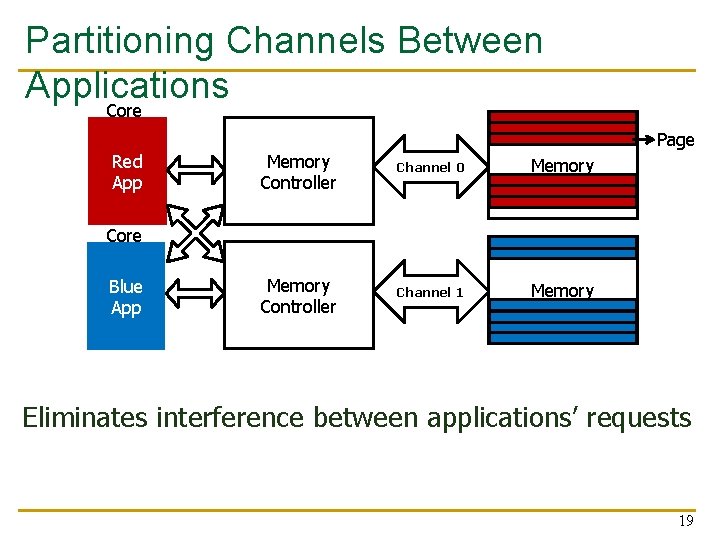

Partitioning Channels Between Applications

Figure: the Red App's pages are mapped to one channel and the Blue App's pages to the other.

- Eliminates interference between applications’ requests

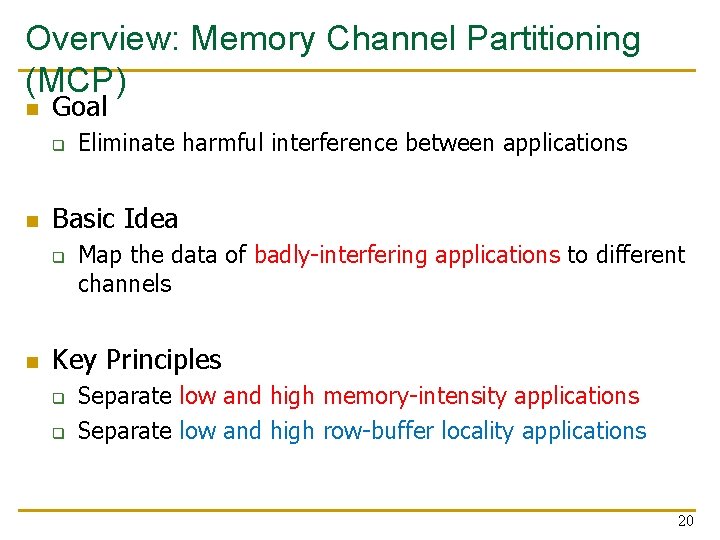

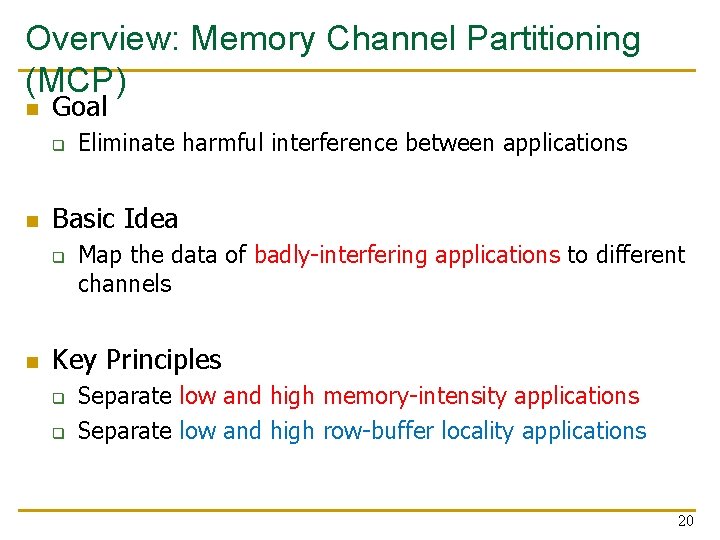

Overview: Memory Channel Partitioning (MCP)
- Goal
  - Eliminate harmful interference between applications
- Basic Idea
  - Map the data of badly-interfering applications to different channels
- Key Principles
  - Separate low and high memory-intensity applications
  - Separate low and high row-buffer locality applications

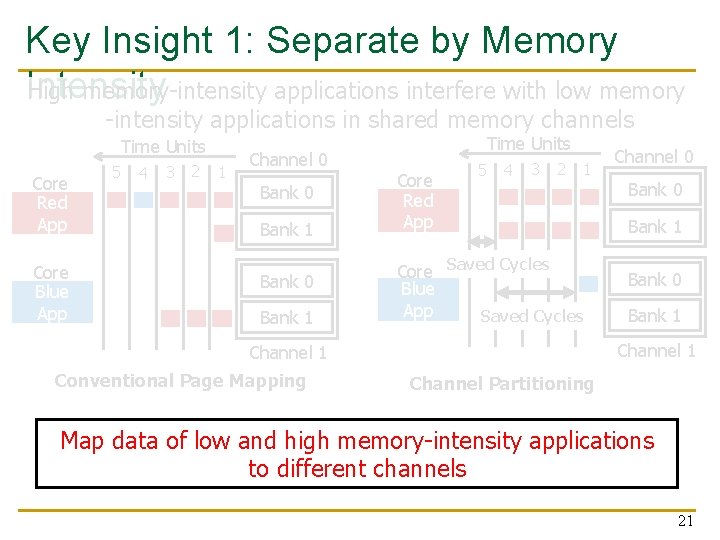

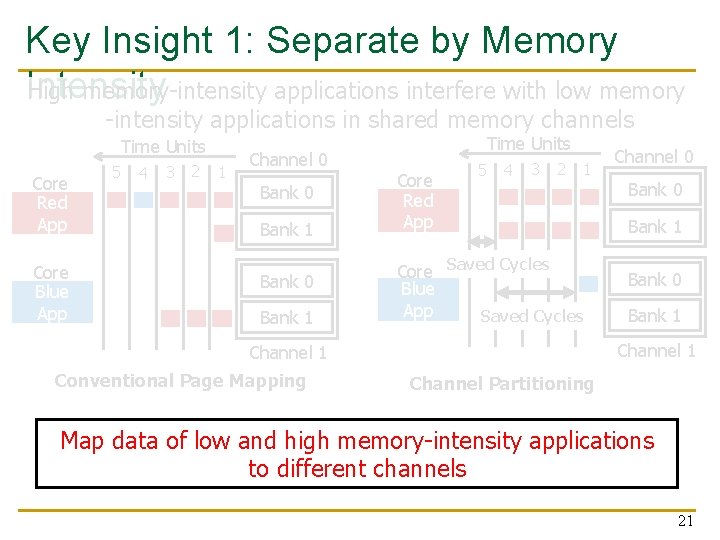

Key Insight 1: Separate by Memory Intensity

- High memory-intensity applications interfere with low memory-intensity applications in shared memory channels

Figure: timelines (time units 1 to 5) of the Red App's and Blue App's requests to Banks 0 and 1 of Channels 0 and 1, under Conventional Page Mapping versus Channel Partitioning; partitioning yields saved cycles.

- Map data of low and high memory-intensity applications to different channels

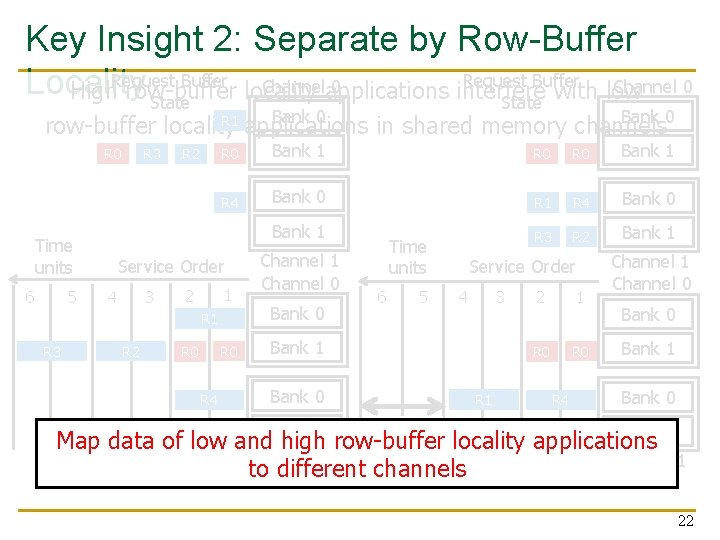

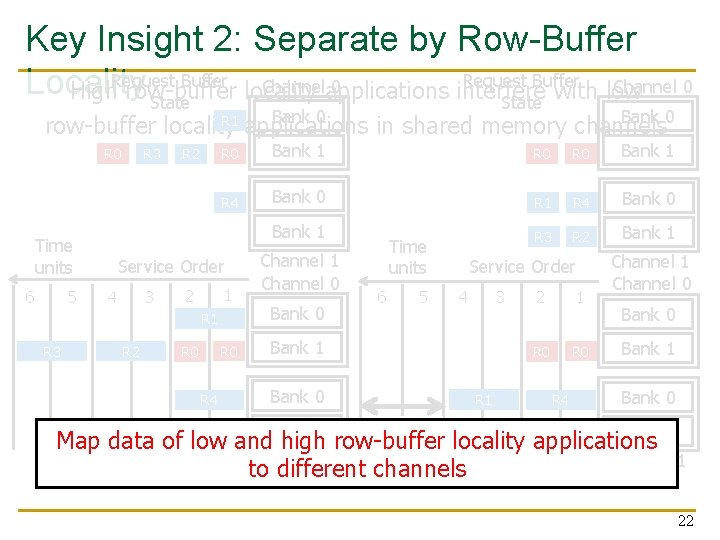

Key Insight 2: Separate by Row-Buffer Locality

- High row-buffer locality applications interfere with low row-buffer locality applications in shared memory channels

Figure: request buffer state, service order, and timelines (time units 1 to 6) for requests R0 to R4 to Banks 0 and 1 of Channels 0 and 1, under Conventional Page Mapping versus Channel Partitioning; partitioning yields saved cycles.

- Map data of low and high row-buffer locality applications to different channels

Mechanisms (in some detail)

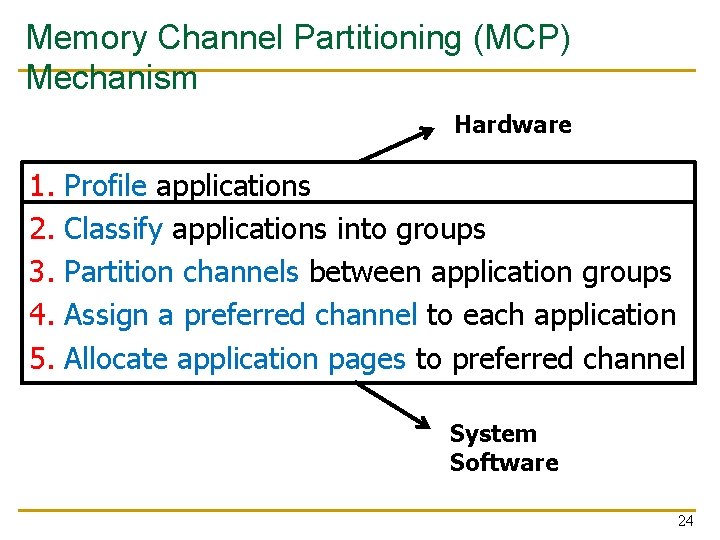

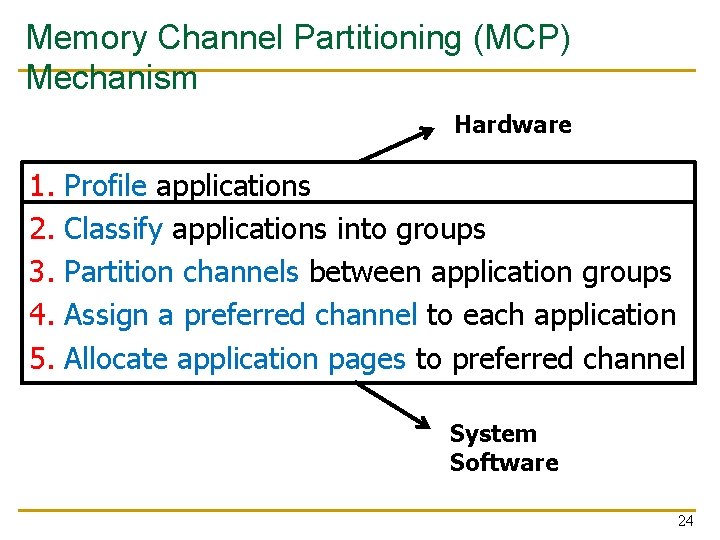

Memory Channel Partitioning (MCP) Mechanism
1. Profile applications
2. Classify applications into groups
3. Partition channels between application groups
4. Assign a preferred channel to each application
5. Allocate application pages to preferred channel

Step 1 is performed in hardware; steps 2 to 5 are performed by system software.

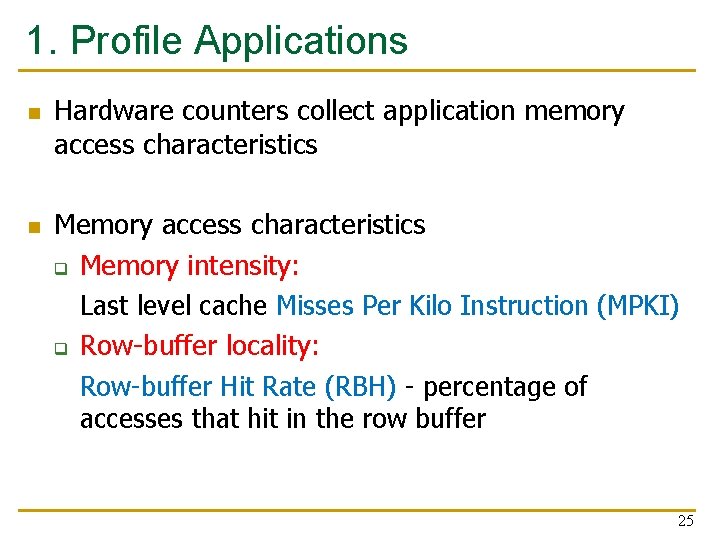

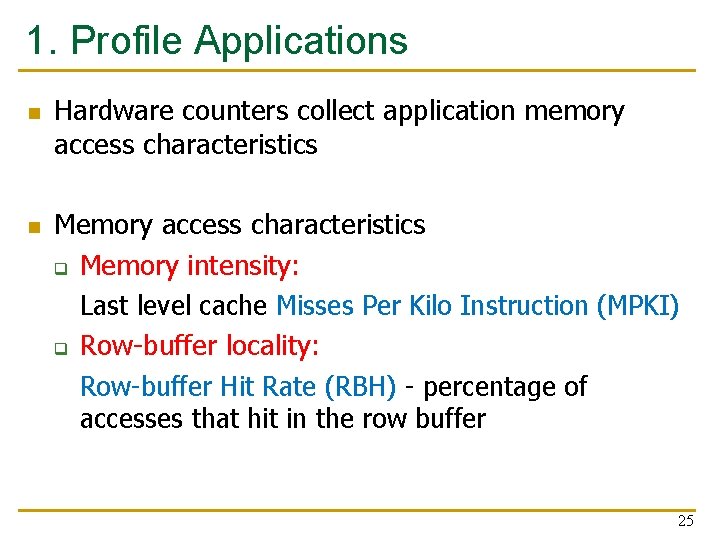

1. Profile Applications
- Hardware counters collect application memory access characteristics
- Memory access characteristics
  - Memory intensity: Last-Level Cache Misses Per Kilo Instruction (MPKI)
  - Row-buffer locality: Row-Buffer Hit Rate (RBH), the percentage of accesses that hit in the row buffer
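Both metrics reduce to simple ratios over per-application counters. A minimal sketch; the `AppCounters` fields are hypothetical names for the hardware counters, which the slide does not specify. RBH is returned as a fraction (multiply by 100 for a percentage).

```python
from dataclasses import dataclass


@dataclass
class AppCounters:
    # Hypothetical per-application counters sampled over one profiling interval.
    instructions: int   # instructions retired
    llc_misses: int     # last-level cache misses
    row_hits: int       # memory accesses that hit in the open row buffer
    mem_accesses: int   # all memory accesses


def mpki(c):
    """Memory intensity: last-level cache misses per 1000 instructions."""
    return c.llc_misses * 1000 / c.instructions if c.instructions else 0.0


def rbh(c):
    """Row-buffer locality: fraction of memory accesses that hit in the row buffer."""
    return c.row_hits / c.mem_accesses if c.mem_accesses else 0.0


app = AppCounters(instructions=10_000_000, llc_misses=50_000,
                  row_hits=30_000, mem_accesses=50_000)
print(mpki(app), rbh(app))  # 5.0 0.6
```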

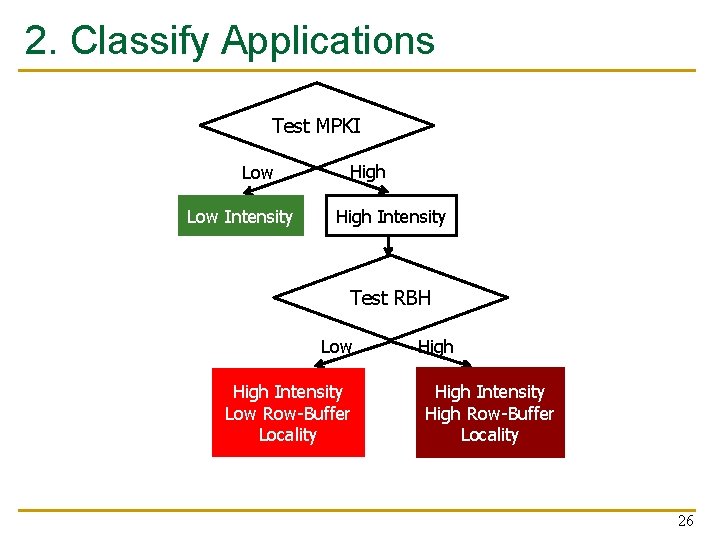

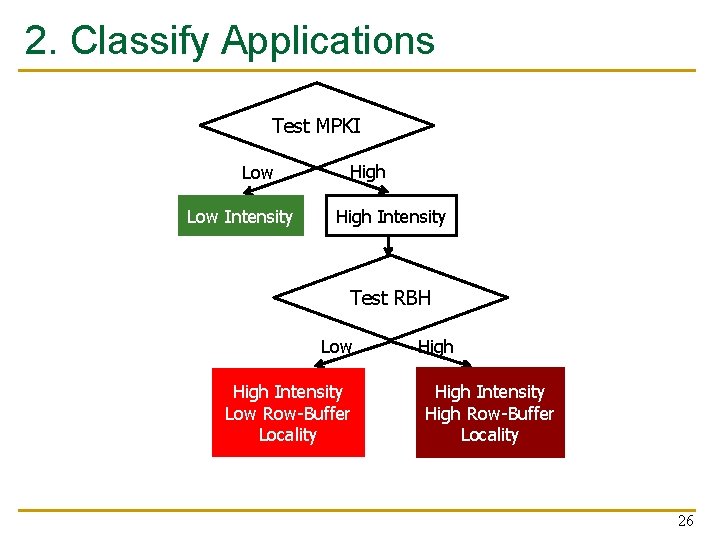

2. Classify Applications
- Test MPKI
  - Low MPKI: Low Intensity
  - High MPKI: High Intensity, then test RBH
    - Low RBH: High Intensity, Low Row-Buffer Locality
    - High RBH: High Intensity, High Row-Buffer Locality
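The decision tree maps directly onto two threshold tests. In this sketch the threshold values `MPKI_THRESHOLD` and `RBH_THRESHOLD` are hypothetical placeholders chosen only for illustration; the slide does not give them.

```python
from enum import Enum


class Group(Enum):
    LOW_INTENSITY = "Low Intensity"
    HIGH_INTENSITY_LOW_RBH = "High Intensity, Low Row-Buffer Locality"
    HIGH_INTENSITY_HIGH_RBH = "High Intensity, High Row-Buffer Locality"


# Hypothetical thresholds for illustration only.
MPKI_THRESHOLD = 10.0
RBH_THRESHOLD = 0.5


def classify(mpki, rbh, mpki_threshold=MPKI_THRESHOLD, rbh_threshold=RBH_THRESHOLD):
    """Test MPKI first; only high-intensity applications are then tested on RBH."""
    if mpki < mpki_threshold:
        return Group.LOW_INTENSITY
    if rbh < rbh_threshold:
        return Group.HIGH_INTENSITY_LOW_RBH
    return Group.HIGH_INTENSITY_HIGH_RBH


for mpki_value, rbh_value in [(1.0, 0.9), (40.0, 0.2), (40.0, 0.8)]:
    print(mpki_value, rbh_value, classify(mpki_value, rbh_value).value)
```

As in the tree, row-buffer locality is never consulted for low-intensity applications.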

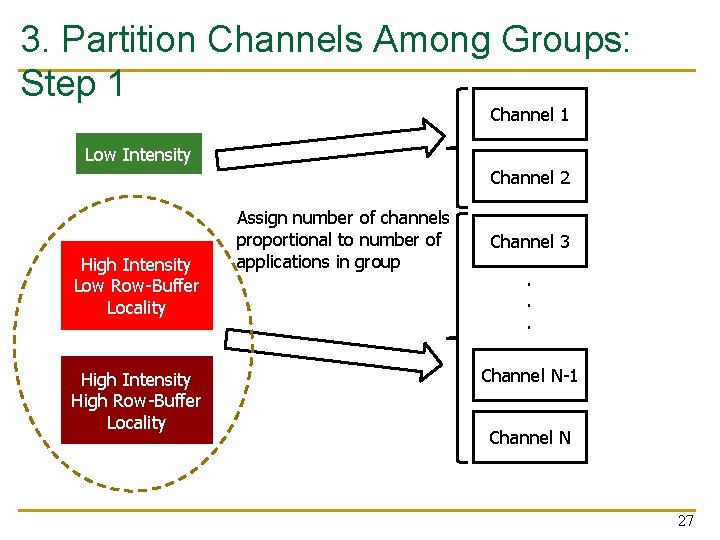

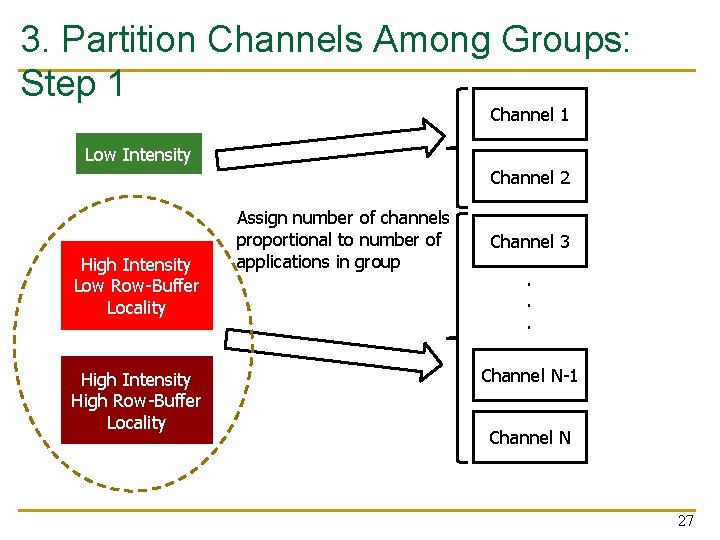

3. Partition Channels Among Groups: Step 1
- Assign a number of channels to each group proportional to the number of applications in the group

Figure: Channel 1 is assigned to the Low Intensity group; Channels 2 through N are assigned to the high-intensity applications (the High Intensity Low Row-Buffer Locality and High Intensity High Row-Buffer Locality groups together).
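Step 1 can be sketched as a proportional split of the channels between the low-intensity group and the high-intensity applications, assuming, as the figure suggests, that both high-intensity groups are handled together at this step. The rounding rule and the at-least-one-channel guarantee for a non-empty group are assumptions of this sketch, and the 6-of-24 application mix in the example is hypothetical.

```python
def partition_by_app_count(num_channels, num_low_apps, num_high_apps):
    """Return (channels for low-intensity group, channels for high-intensity apps).

    Assumes num_channels >= 2 when both groups are non-empty, so each
    non-empty group can receive at least one channel.
    """
    if num_low_apps == 0:
        return 0, num_channels
    if num_high_apps == 0:
        return num_channels, 0
    fraction = num_low_apps / (num_low_apps + num_high_apps)
    # Python's round() breaks .5 ties to the nearest even integer.
    low = round(num_channels * fraction)
    low = min(max(low, 1), num_channels - 1)  # keep both groups non-empty
    return low, num_channels - low


# 4 channels, 24 applications of which 6 are low intensity (hypothetical mix).
print(partition_by_app_count(4, 6, 18))  # (1, 3)
```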

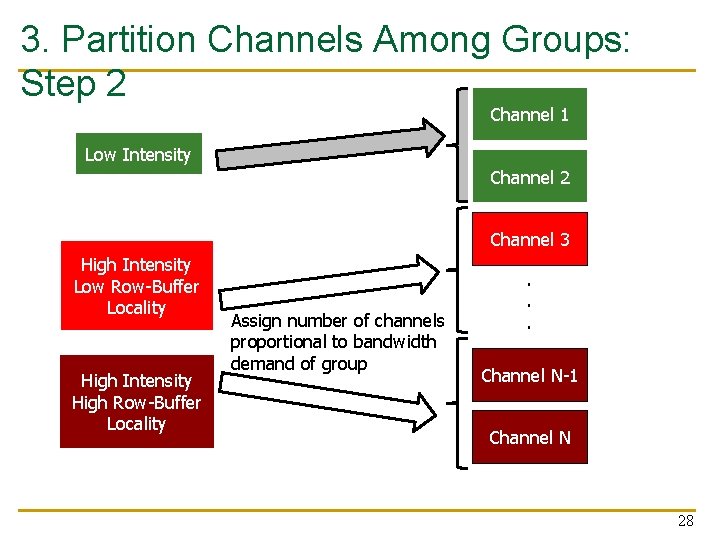

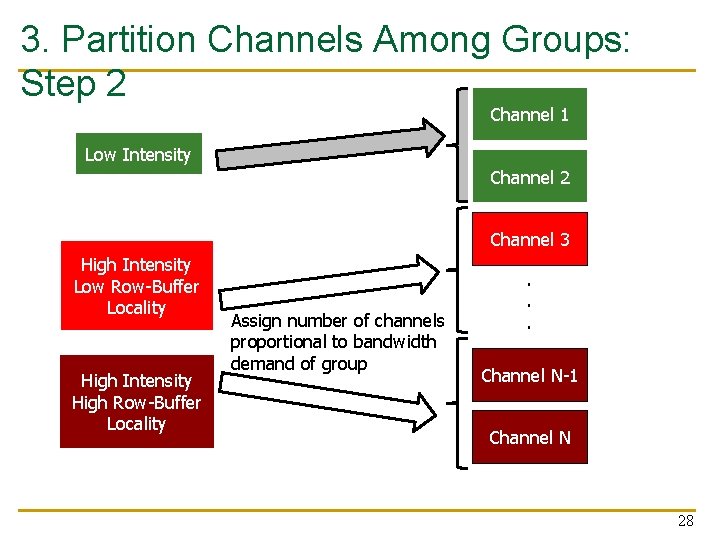

3. Partition Channels Among Groups: Step 2
- Assign a number of channels to each group proportional to the group's bandwidth demand

Figure: Channel 1 remains with the Low Intensity group; the remaining channels (Channel 2 through Channel N) are divided between the High Intensity Low Row-Buffer Locality group and the High Intensity High Row-Buffer Locality group.
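Step 2 then divides the high-intensity channels between the two high-intensity groups by bandwidth demand. This sketch assumes, as a labeled simplification, that a group's bandwidth demand can be approximated by the sum of its applications' MPKI values; the slide does not say how demand is measured, and the rounding rule is again an assumption.

```python
def partition_by_bandwidth(num_channels, low_rbh_mpki, high_rbh_mpki):
    """Split num_channels between the low-RBH and high-RBH high-intensity groups.

    low_rbh_mpki / high_rbh_mpki: MPKI of each application in the group.
    Bandwidth demand is approximated as summed MPKI (an assumption).
    Returns (channels for low-RBH group, channels for high-RBH group).
    """
    if not low_rbh_mpki:
        return 0, num_channels
    if not high_rbh_mpki:
        return num_channels, 0
    demand_low, demand_high = sum(low_rbh_mpki), sum(high_rbh_mpki)
    total = demand_low + demand_high
    fraction = demand_low / total if total else 0.5
    low = round(num_channels * fraction)
    low = min(max(low, 1), num_channels - 1)  # assumes num_channels >= 2
    return low, num_channels - low


# 3 high-intensity channels; the low-RBH group demands twice as much bandwidth.
print(partition_by_bandwidth(3, [30.0, 30.0], [15.0, 15.0]))  # (2, 1)
```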

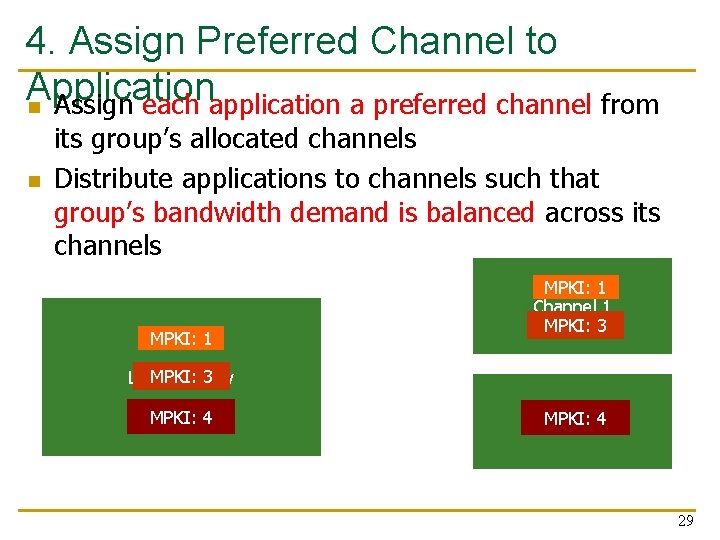

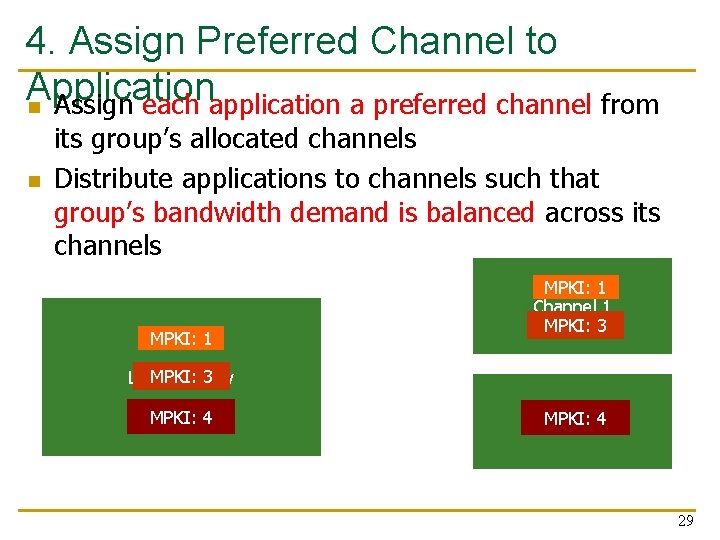

4. Assign Preferred Channel to Application
- Assign each application a preferred channel from its group's allocated channels
- Distribute applications to channels such that the group's bandwidth demand is balanced across its channels

Figure (example): Low Intensity applications with MPKI 1, 3, and 4 are distributed across Channel 1 and Channel 2.
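One way to balance a group's bandwidth demand across its channels is the greedy largest-first heuristic sketched below: place the most memory-intensive application first, always onto the currently least-loaded channel. The heuristic and the use of MPKI as the load measure are assumptions; the slide states only the balancing goal. The example uses three hypothetical low-intensity applications with MPKI 1, 3, and 4 on two channels.

```python
import heapq


def assign_preferred_channels(app_mpki, channels):
    """Greedy largest-first balancing of summed MPKI across a group's channels.

    app_mpki: app -> MPKI; channels: channel ids allocated to the group.
    Returns app -> preferred channel.
    """
    heap = [(0.0, ch) for ch in channels]  # (accumulated MPKI, channel id)
    heapq.heapify(heap)
    preferred = {}
    # Most memory-intensive application first.
    for app in sorted(app_mpki, key=app_mpki.get, reverse=True):
        load, ch = heapq.heappop(heap)  # least-loaded channel
        preferred[app] = ch
        heapq.heappush(heap, (load + app_mpki[app], ch))
    return preferred


print(assign_preferred_channels({"A": 1, "B": 3, "C": 4}, [1, 2]))
# {'C': 1, 'B': 2, 'A': 2}: each channel carries a total MPKI of 4
```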

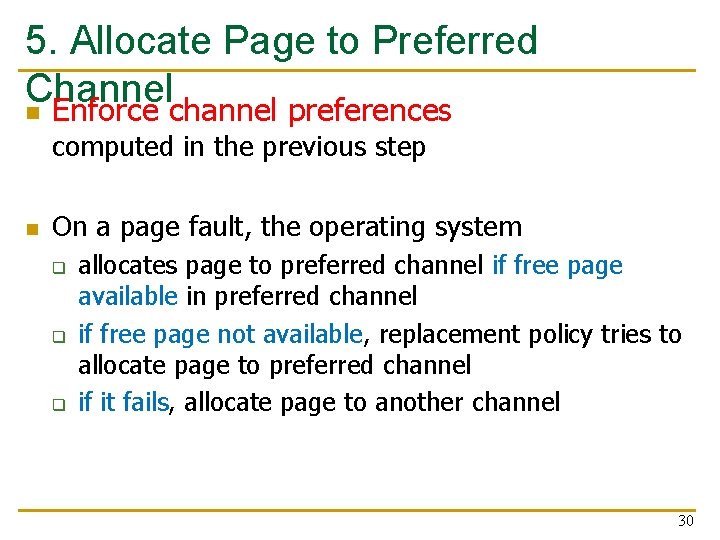

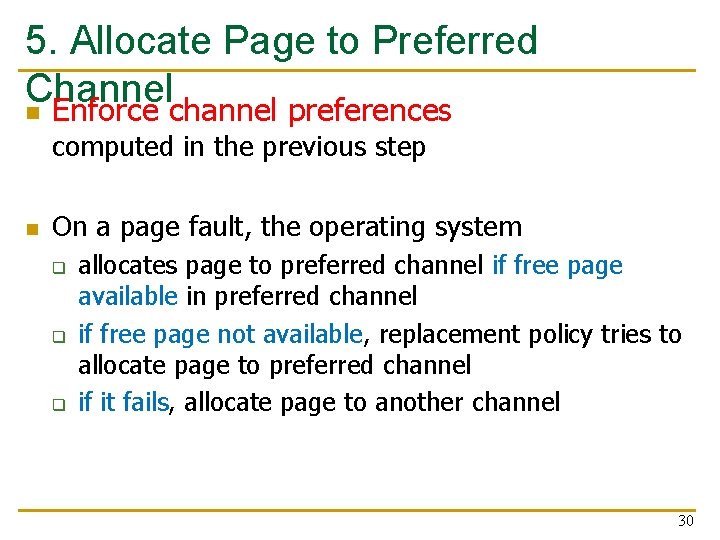

5. Allocate Page to Preferred Channel
- Enforce channel preferences computed in the previous step
- On a page fault, the operating system
  - allocates the page to the preferred channel if a free page is available in the preferred channel
  - if no free page is available, the replacement policy tries to allocate the page to the preferred channel
  - if that fails, allocates the page to another channel
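The three-level fallback can be sketched as below. The page-frame model is hypothetical: `free_pages` counts free frames per channel and `reclaimable` counts frames the replacement policy could evict in each channel; a real operating system tracks this in its own page allocator.

```python
def allocate_page(preferred, free_pages, reclaimable):
    """Return the channel that receives the faulting page, or None.

    free_pages:  channel -> number of free page frames (hypothetical model)
    reclaimable: channel -> number of frames the replacement policy could evict
    """
    # 1. A free page is available in the preferred channel.
    if free_pages.get(preferred, 0) > 0:
        free_pages[preferred] -= 1
        return preferred
    # 2. The replacement policy evicts a page in the preferred channel;
    #    the freed frame is used immediately.
    if reclaimable.get(preferred, 0) > 0:
        reclaimable[preferred] -= 1
        return preferred
    # 3. Fall back to another channel with a free page.
    for ch, count in free_pages.items():
        if ch != preferred and count > 0:
            free_pages[ch] -= 1
            return ch
    return None


free = {0: 1, 1: 5}
reclaimable = {0: 0, 1: 0}
print(allocate_page(0, free, reclaimable))  # 0: a free frame in the preferred channel
print(allocate_page(0, free, reclaimable))  # 1: channel 0 is exhausted, fall back
```

Returning `None` stands in for the case where no channel has a free frame; a real allocator would then reclaim memory elsewhere.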

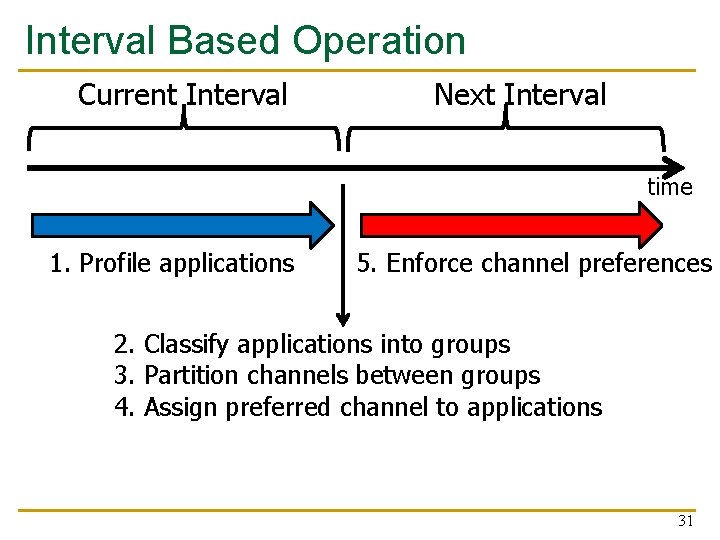

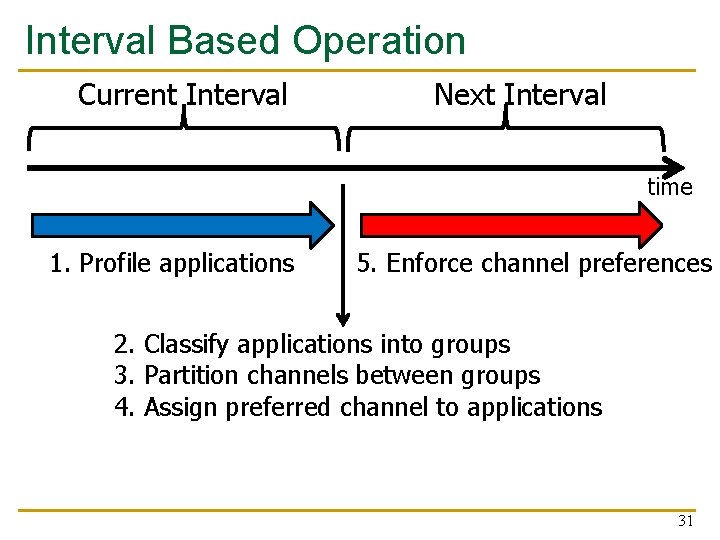

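Interval-Based Operation

Figure: a timeline split into the current interval and the next interval.

- Current interval: profile applications (step 1)
- At the end of the current interval: classify applications into groups (step 2), partition channels between groups (step 3), and assign a preferred channel to each application (step 4)
- Next interval: enforce channel preferences (step 5)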

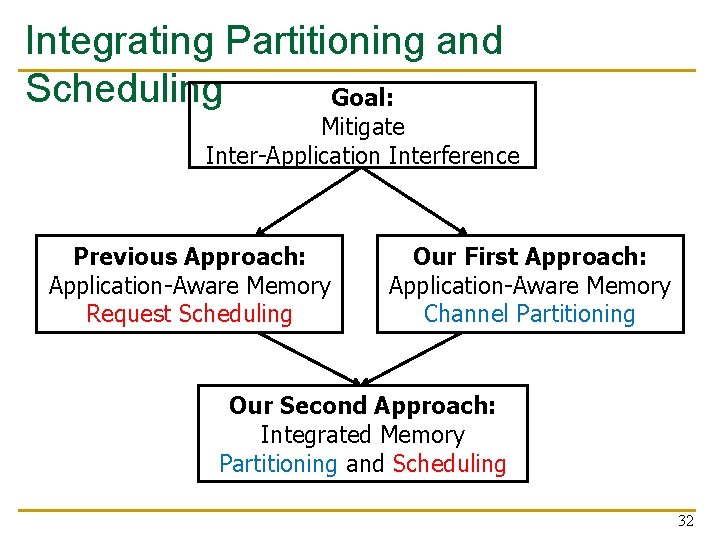

Integrating Partitioning and Scheduling

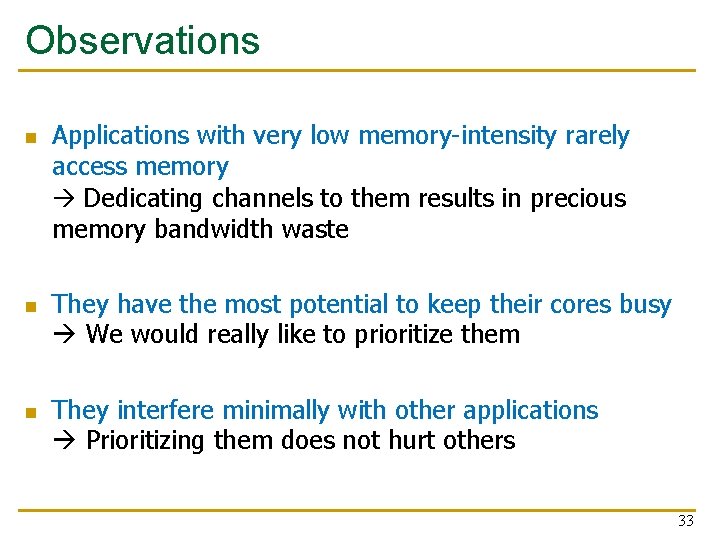

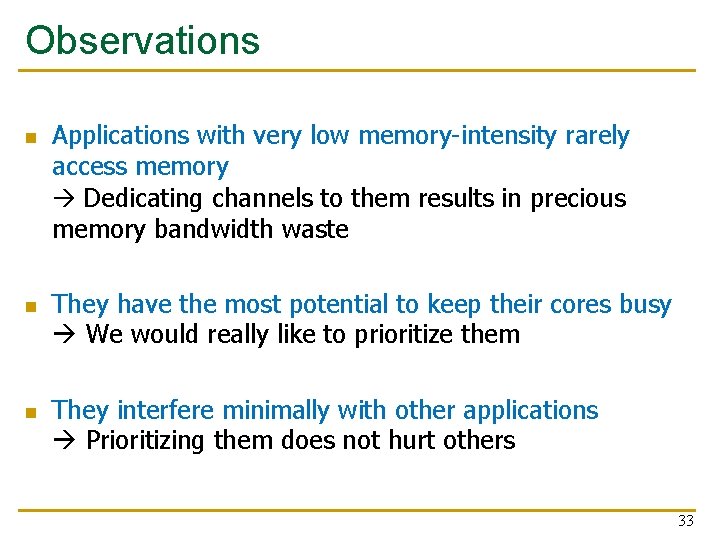

Observations
- Applications with very low memory intensity rarely access memory
  - Dedicating channels to them wastes precious memory bandwidth
- They have the most potential to keep their cores busy
  - We would really like to prioritize them
- They interfere minimally with other applications
  - Prioritizing them does not hurt others

Integrated Memory Partitioning and Scheduling (IMPS)
- Always prioritize very low memory-intensity applications in the memory scheduler
- Use memory channel partitioning to mitigate interference between other applications
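In scheduler terms, IMPS adds one priority bit per request and otherwise keeps FR-FCFS ordering. A minimal sketch, assuming a hypothetical cutoff `VERY_LOW_MPKI` below which an application counts as very low memory intensity (the slide gives no value), a hypothetical helper `priority_bit_for`, and a hypothetical `Request` record carrying the bit.

```python
from dataclasses import dataclass

# Hypothetical cutoff for "very low memory intensity" (not given on the slide).
VERY_LOW_MPKI = 1.0


@dataclass
class Request:
    app: str
    bank: int
    row: int
    arrival: int        # smaller means older
    priority_bit: bool  # set for very low memory-intensity applications


def priority_bit_for(app_mpki):
    """Hypothetical rule for setting the single per-request bit."""
    return app_mpki < VERY_LOW_MPKI


def imps_pick(queue, open_rows):
    """Priority-bit requests first, then FR-FCFS (row-buffer hit, then oldest)."""
    if not queue:
        return None

    def priority(req):
        is_hit = open_rows.get(req.bank) == req.row
        return (not req.priority_bit, not is_hit, req.arrival)

    return min(queue, key=priority)


queue = [
    Request("red", bank=0, row=3, arrival=1, priority_bit=priority_bit_for(40.0)),
    Request("green", bank=1, row=9, arrival=5, priority_bit=priority_bit_for(0.2)),
]
print(imps_pick(queue, open_rows={0: 3}).app)  # green, despite being a younger row miss
```

All other applications keep plain FR-FCFS ordering; their interference is handled by channel partitioning rather than by the scheduler.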

Key Results: Methodology and Evaluation

Hardware Cost
- Memory Channel Partitioning (MCP)
  - Only profiling counters in hardware
  - No modifications to memory scheduling logic
  - 1.5 KB storage cost for a 24-core, 4-channel system
- Integrated Memory Partitioning and Scheduling (IMPS)
  - A single bit per request
  - Scheduler prioritizes based on this single bit

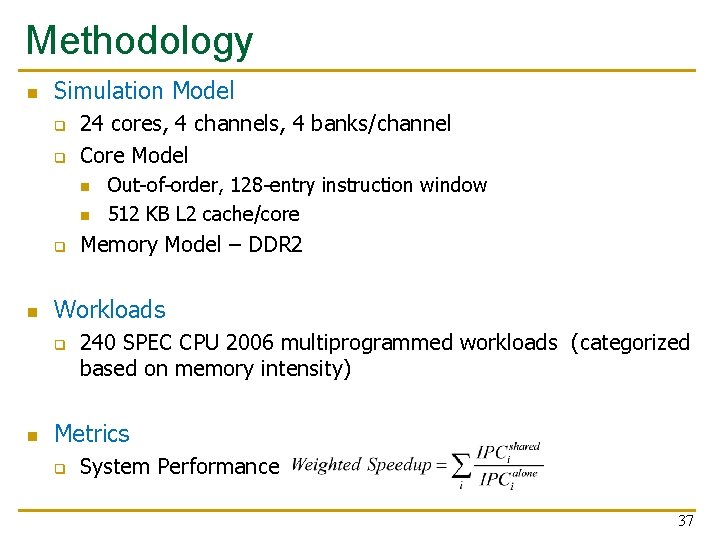

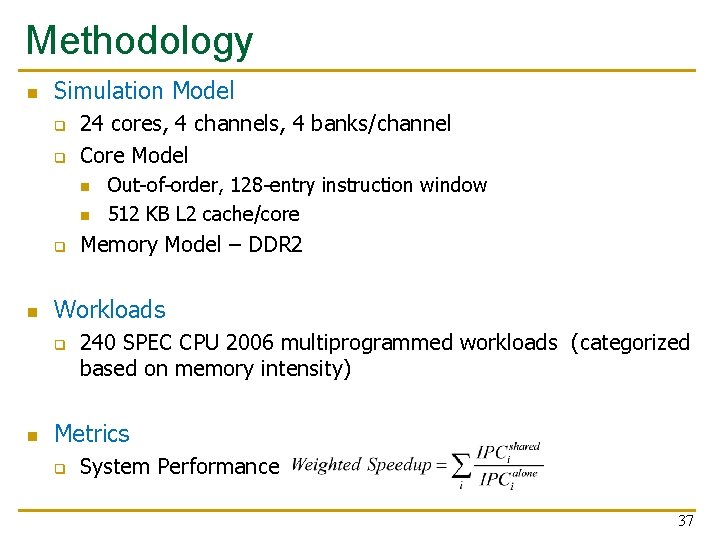

Methodology
- Simulation Model
  - 24 cores, 4 channels, 4 banks/channel
  - Core Model
    - Out-of-order, 128-entry instruction window
    - 512 KB L2 cache/core
  - Memory Model: DDR2
- Workloads
  - 240 SPEC CPU 2006 multiprogrammed workloads (categorized based on memory intensity)
- Metrics
  - System Performance

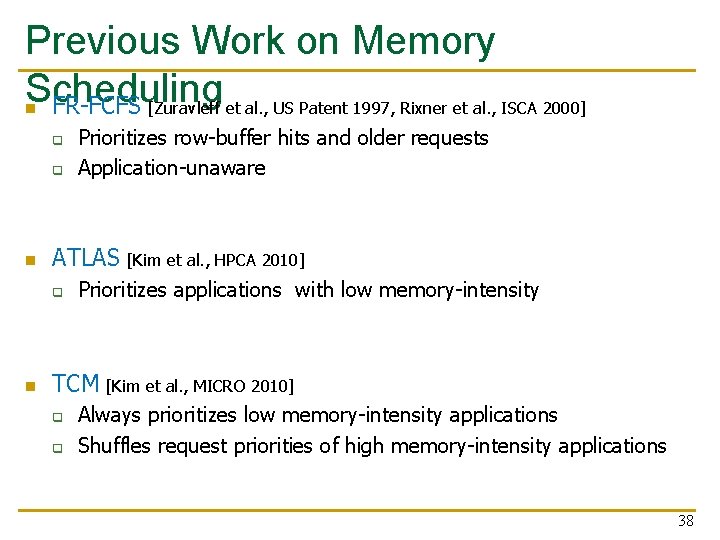

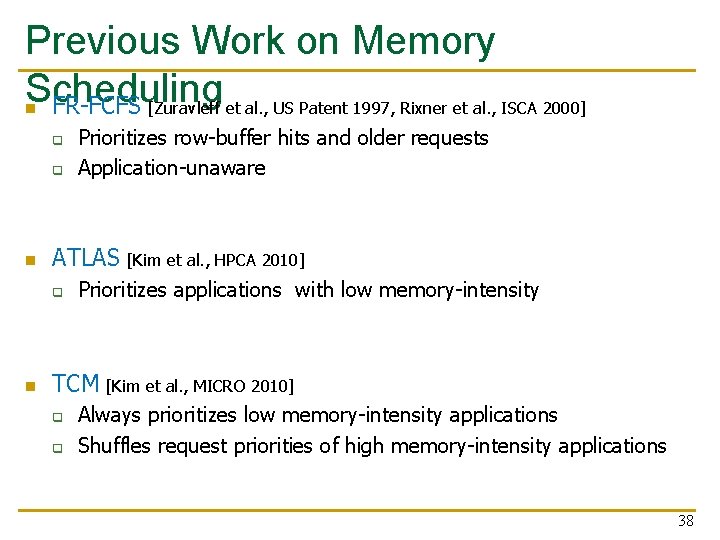

Previous Work on Memory Scheduling
- FR-FCFS [Zuravleff et al., US Patent 1997; Rixner et al., ISCA 2000]
  - Prioritizes row-buffer hits and older requests
  - Application-unaware
- ATLAS [Kim et al., HPCA 2010]
  - Prioritizes applications with low memory intensity
- TCM [Kim et al., MICRO 2010]
  - Always prioritizes low memory-intensity applications
  - Shuffles request priorities of high memory-intensity applications

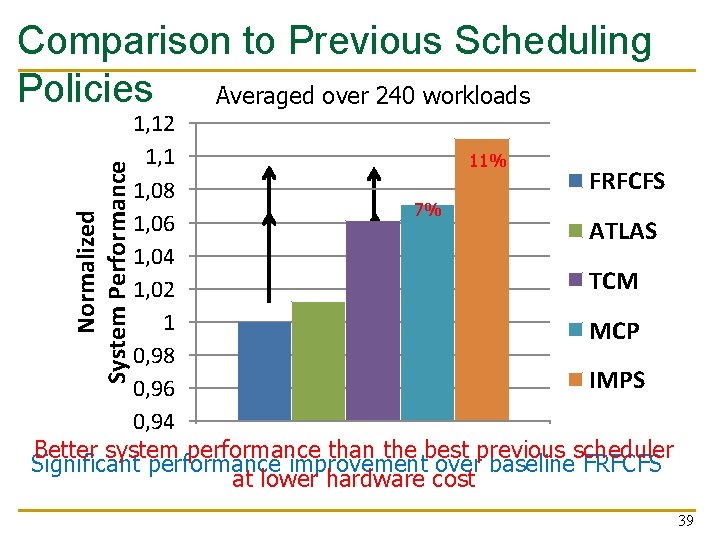

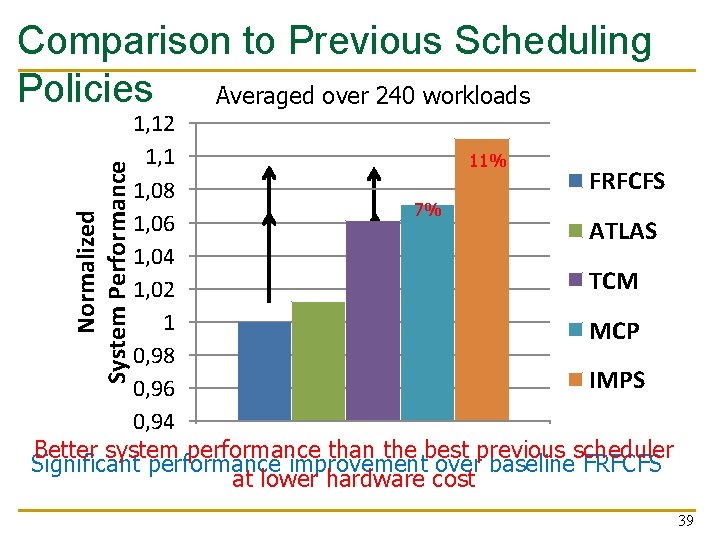

Comparison to Previous Scheduling Policies

Figure: normalized system performance, averaged over 240 workloads (y-axis from 0.94 to 1.12), for FRFCFS, ATLAS, TCM, MCP, and IMPS; the chart is annotated with improvements of 11%, 5%, 7%, and 1%.

- Better system performance than the best previous scheduler
- Significant performance improvement over baseline FRFCFS at lower hardware cost

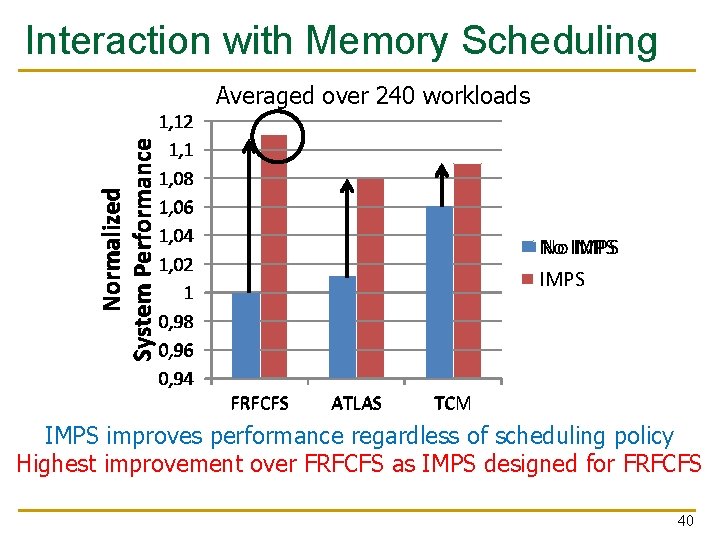

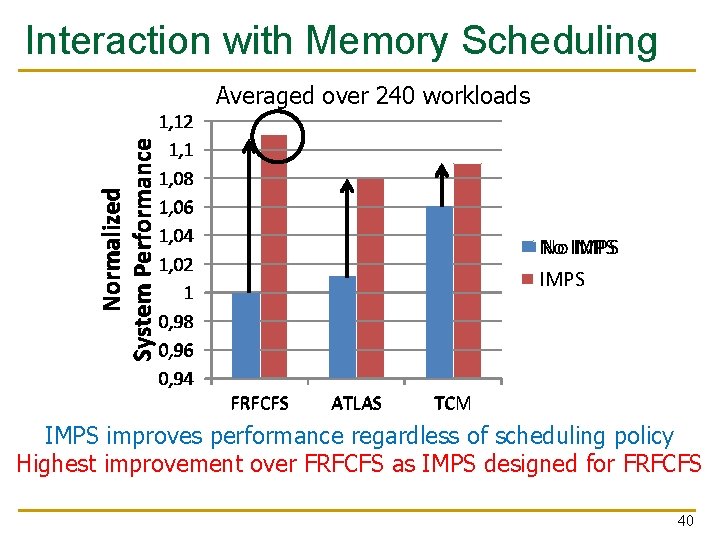

Interaction with Memory Scheduling

Figure: normalized system performance, averaged over 240 workloads (y-axis from 0.94 to 1.12), for FRFCFS, ATLAS, and TCM, each with and without IMPS.

- IMPS improves performance regardless of scheduling policy
- Highest improvement over FRFCFS, as IMPS is designed for FRFCFS

Summary

Summary
- Uncontrolled inter-application interference in main memory degrades system performance
- Application-aware memory channel partitioning (MCP)
  - Separates the data of badly-interfering applications into different channels, eliminating interference
- Integrated memory partitioning and scheduling (IMPS)
  - Prioritizes very low memory-intensity applications in the scheduler
  - Handles other applications’ interference by partitioning
- MCP/IMPS provide better performance than application-aware memory request scheduling at lower hardware cost

Strengths

Strengths of the Paper
- Novel solution to a key problem in multi-core systems, memory interference; the importance of the problem will increase over time
- Keeps the memory scheduling hardware simple
- Combines multiple interference reduction techniques
- Can provide performance isolation across applications mapped to different channels
- General idea of partitioning can be extended to smaller granularities in the memory hierarchy: banks, subarrays, etc.
- Well-written paper
- Thorough simulation-based evaluation

Weaknesses

Weaknesses/Limitations of the Paper
- Mechanism may not work effectively if workload changes behavior after profiling
- Overhead of moving pages between channels restricts mechanism’s benefits
- Small number of memory channels reduces the scope of partitioning
- Load imbalance across channels can reduce performance
  - The paper addresses this and compares to another mechanism
- Software-hardware cooperative solution might not always be easy to adopt
- Evaluation is done solely in simulation
- Evaluation does not consider multi-chip systems
- Are these the best workloads to evaluate?

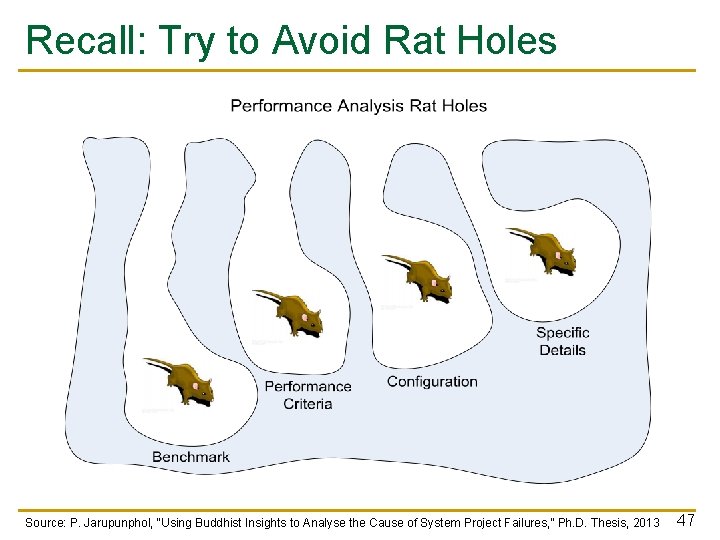

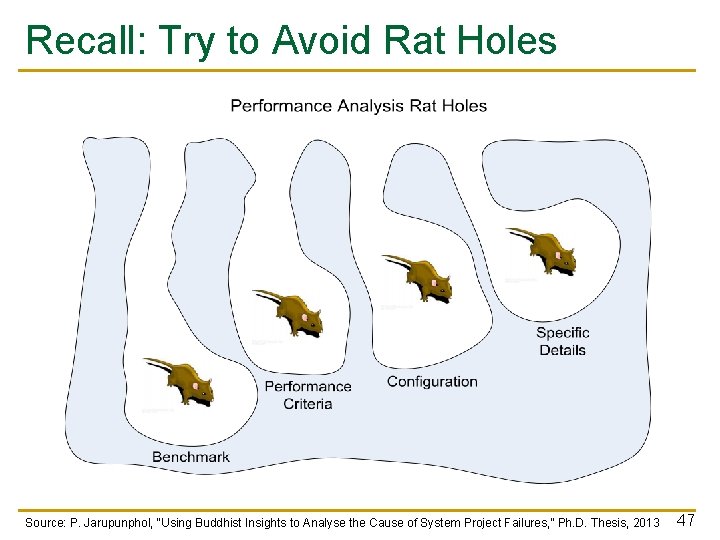

Recall: Try to Avoid Rat Holes

Source: P. Jarupunphol, “Using Buddhist Insights to Analyse the Cause of System Project Failures,” Ph.D. Thesis, 2013

Thoughts and Ideas

Extensions (I)
- Can this idea be extended to different granularities in memory?
  - Partition banks, subarrays, mats across workloads
- Can this idea be extended to provide performance predictability and performance isolation?
- How can MCP be combined effectively with other interference reduction techniques?
  - E.g., source throttling methods [Ebrahimi+, ASPLOS 2010]
  - E.g., thread scheduling methods
- Can this idea be evaluated on a real system? How?

Takeaways

Key Takeaways
- A novel method to reduce memory interference
- Simple and effective
- Hardware/software cooperative
- Good potential for work building on it to extend it
  - To different structures
  - To different metrics
  - Multiple works have already built on the paper (see bank partitioning works in PACT 2012, HPCA 2012)
- Easy to read and understand paper

Open Discussion

Discussion Starters
- Thoughts on the previous ideas?
- How practical is this?
- Will the problem become bigger and more important over time?
- Will the solution become more important over time?
- Are other solutions better?
- Is this solution clearly advantageous in some cases?
