Search-Based Approaches to Accelerate Deep Learning

Zhihao Jia, Stanford University, 10/31/2020

Deep Learning is Everywhere: Convolutional Neural Networks, Neural Architecture Search, Recurrent Neural Networks, Reinforcement Learning.

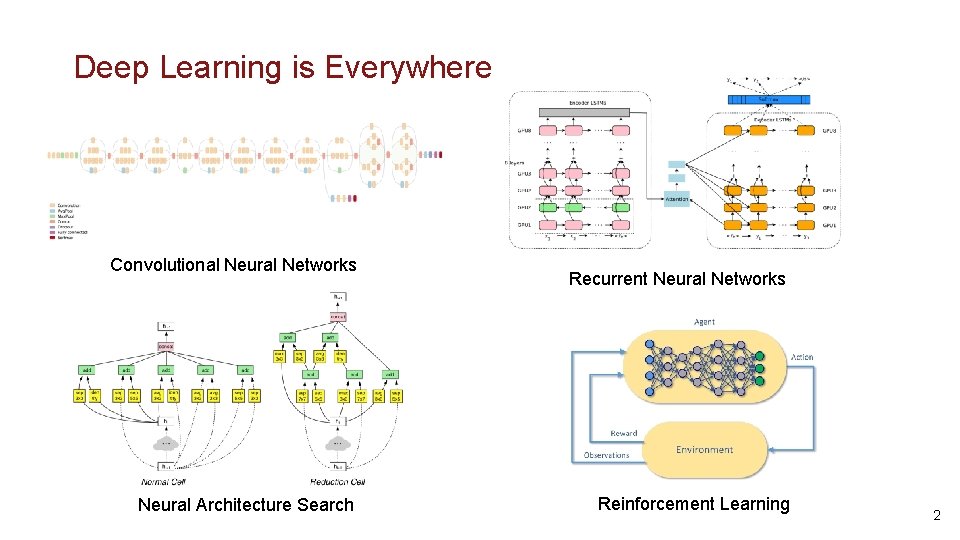

Deep Learning Deployment is Challenging
• Diverse and complex DNN models: what operators to execute?
• Distributed heterogeneous hardware platforms: how to distribute these operators?

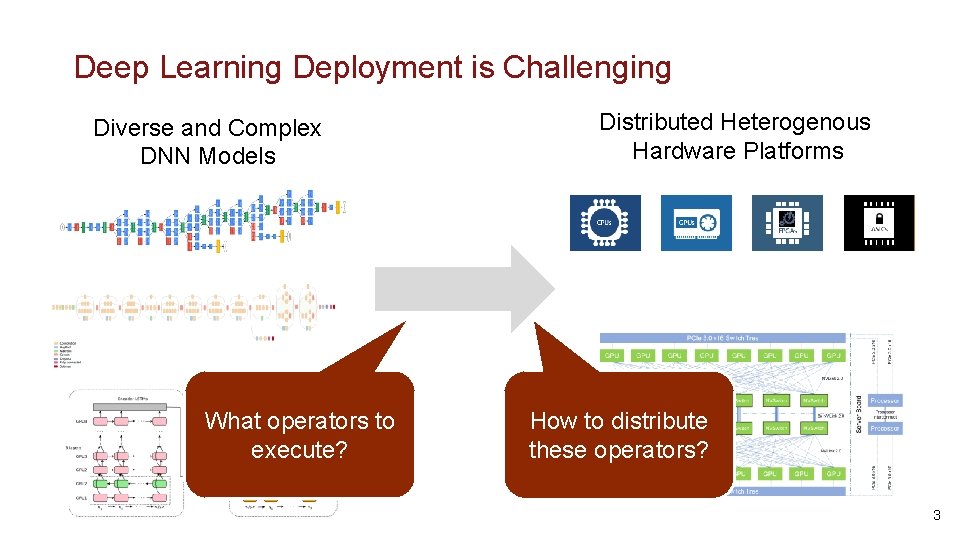

Existing Approach: Heuristic Optimizations. A DNN architecture is first transformed by graph optimizations (rule-based operator fusion) and then parallelized (data/model parallelism) across Device 1 to Device N.
• Misses model- and hardware-specific optimizations
• Performance is suboptimal

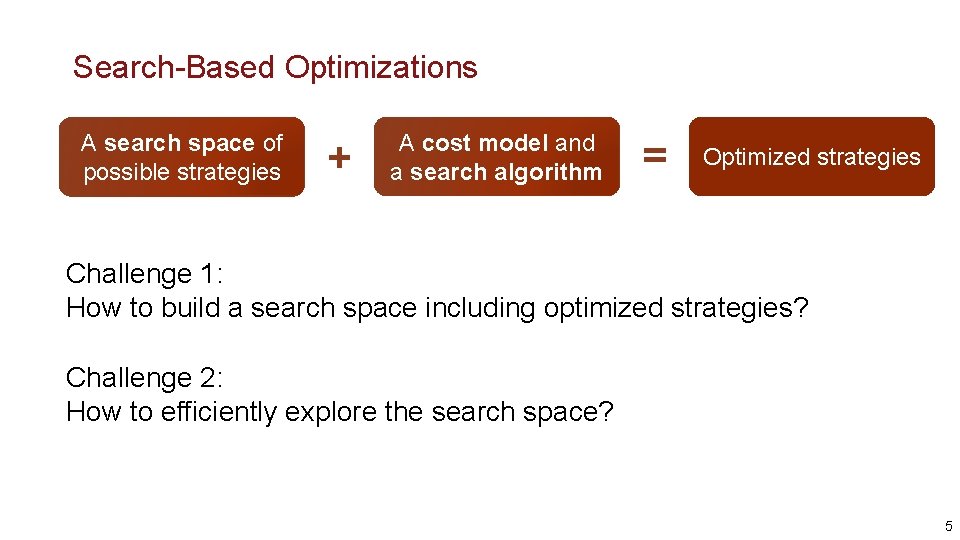

Search-Based Optimizations: a search space of possible strategies + a cost model and a search algorithm = optimized strategies.
• Challenge 1: How to build a search space that includes optimized strategies?
• Challenge 2: How to efficiently explore the search space?

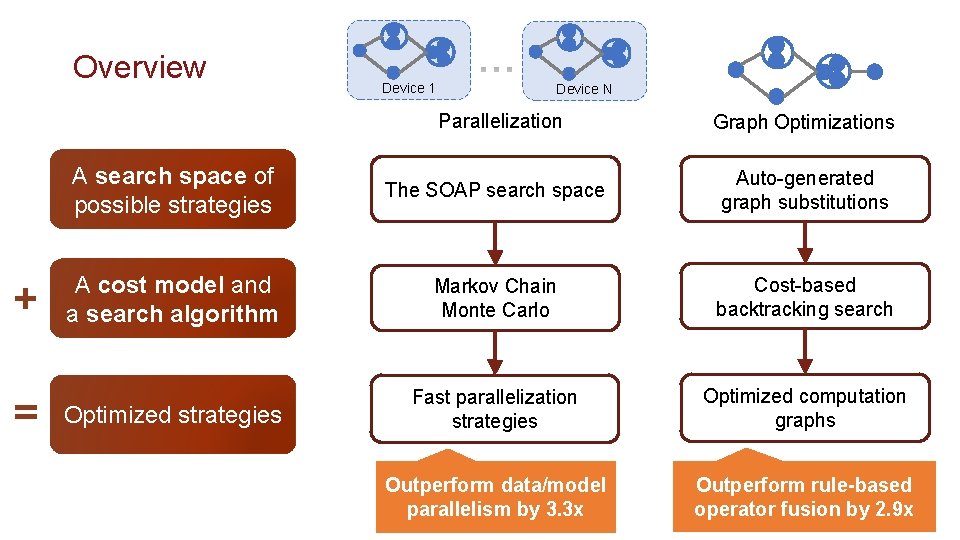

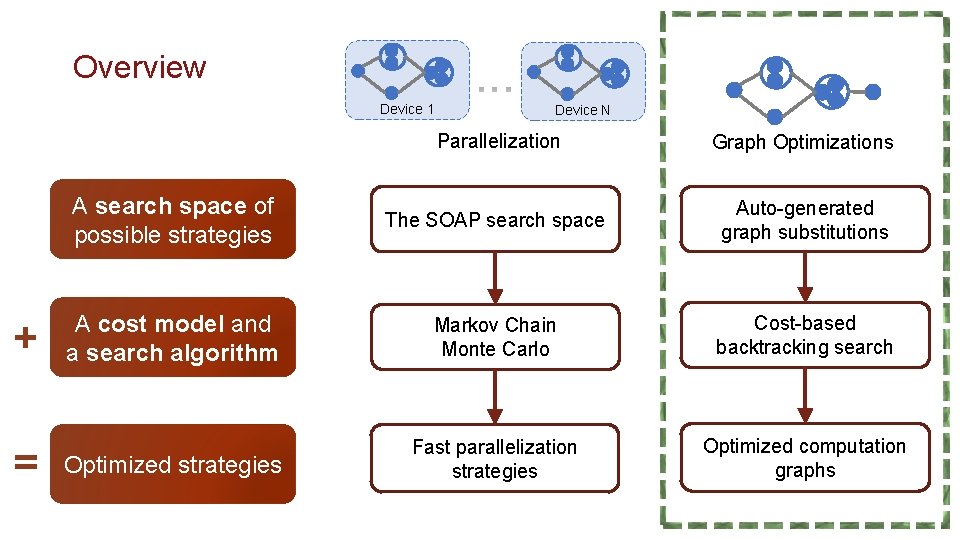
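The recipe above can be made concrete with a deliberately tiny example. This is a minimal sketch under assumptions that are ours, not the talk's: a strategy assigns one of four hypothetical configs to each of three operators, and the cost model (`OP_COST`, `SWITCH_PENALTY`) is made up, charging a per-operator cost plus a penalty whenever neighboring operators choose different configs. Exhaustive enumeration stands in as the simplest possible search algorithm:

```python
import itertools

CONFIGS = ("data", "model", "attr", "param")  # hypothetical per-operator choices

# Hypothetical cost table: OP_COST[i][c] is the cost of operator i under config c.
OP_COST = [
    {"data": 4.0, "model": 6.0, "attr": 3.0, "param": 5.0},
    {"data": 2.0, "model": 1.5, "attr": 2.5, "param": 3.0},
    {"data": 5.0, "model": 4.5, "attr": 6.0, "param": 2.0},
]
SWITCH_PENALTY = 1.0  # made-up cost of re-partitioning data between operators


def cost_model(strategy):
    """Estimate the run time of a strategy (one config per operator)."""
    compute = sum(OP_COST[i][c] for i, c in enumerate(strategy))
    switches = sum(a != b for a, b in zip(strategy, strategy[1:]))
    return compute + SWITCH_PENALTY * switches


def exhaustive_search(num_ops):
    """Enumerate the whole search space and keep the cheapest strategy."""
    best, best_cost = None, float("inf")
    for strategy in itertools.product(CONFIGS, repeat=num_ops):
        c = cost_model(strategy)
        if c < best_cost:
            best, best_cost = strategy, c
    return best, best_cost


best, best_cost = exhaustive_search(len(OP_COST))
print(best, best_cost)
```

The space holds `len(CONFIGS) ** num_ops` strategies. With four configs and 50 operators that is 4**50, roughly 1.3e30, so exhaustive enumeration stops being an option almost immediately; that is Challenge 2.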

Overview: a search space of possible strategies + a cost model and a search algorithm = optimized strategies.
• Parallelization (across Device 1 to Device N): the SOAP search space + Markov Chain Monte Carlo = fast parallelization strategies, which outperform data/model parallelism by 3.3x.
• Graph optimizations: auto-generated graph substitutions + cost-based backtracking search = optimized computation graphs, which outperform rule-based operator fusion by 2.9x.

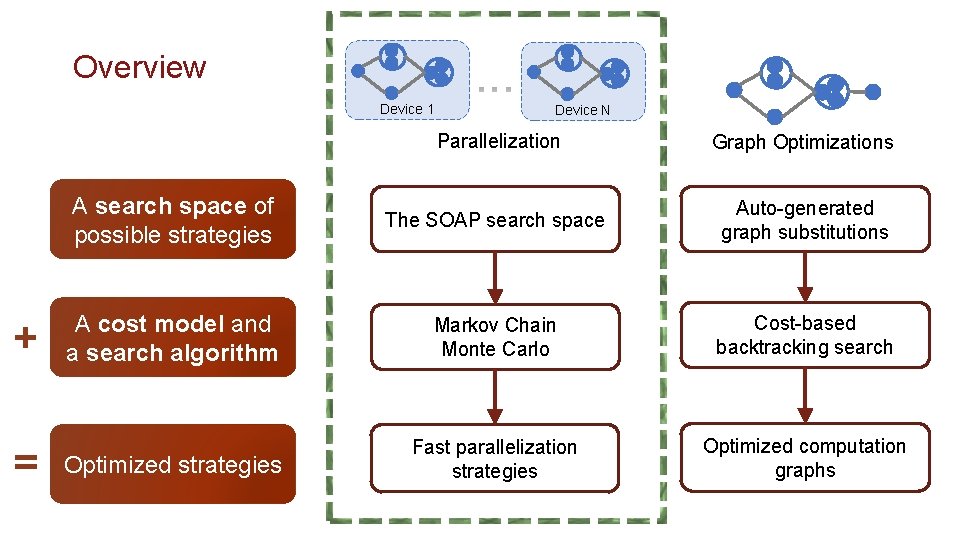
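The graph-optimization half of the overview pairs substitutions with a cost-based backtracking search. A minimal sketch of that idea, with simplifications that are ours: the "graph" is a linear tuple of operator names, substitutions are hypothetical pattern rewrites, and the costs in `COST` are made up. The relaxation factor `alpha` (our name) lets the search keep a slightly slower graph, which is what allows it to reach a much faster one afterwards, mirroring the "enlarge convs" step discussed later in the talk:

```python
import heapq

# Hypothetical per-operator costs; "conv3x3_pair" stands for two 3x3 convs
# that read the same input and have been merged into one larger conv.
COST = {"conv1x1": 1.0, "conv3x3": 1.04, "conv3x3_pair": 1.5, "relu": 0.5}

# Hypothetical substitutions written as (pattern, replacement) rewrites.
SUBSTITUTIONS = [
    (("conv1x1",), ("conv3x3",)),                 # enlarge: slightly slower
    (("conv3x3", "conv3x3"), ("conv3x3_pair",)),  # merge: much faster
]


def cost(graph):
    return sum(COST[op] for op in graph)


def rewrites(graph):
    """Yield every graph reachable by applying one substitution once."""
    for pattern, replacement in SUBSTITUTIONS:
        n = len(pattern)
        for i in range(len(graph) - n + 1):
            if graph[i:i + n] == pattern:
                yield graph[:i] + replacement + graph[i + n:]


def backtracking_search(graph, alpha=1.05, budget=1000):
    """Explore cheapest-first; keep candidates within alpha of the best cost."""
    best = graph
    queue = [(cost(graph), graph)]
    seen = {graph}
    while queue and budget > 0:
        budget -= 1
        c, g = heapq.heappop(queue)
        if c < cost(best):
            best = g
        for new_g in rewrites(g):
            # alpha > 1 admits slightly slower graphs, enabling later wins.
            if new_g not in seen and cost(new_g) < alpha * cost(best):
                seen.add(new_g)
                heapq.heappush(queue, (cost(new_g), new_g))
    return best


start = ("conv3x3", "conv1x1", "relu")
print(backtracking_search(start, alpha=1.0))   # ('conv3x3', 'conv1x1', 'relu')
print(backtracking_search(start, alpha=1.05))  # ('conv3x3_pair', 'relu')
```

With `alpha=1.0` the search behaves greedily and never takes the costlier "enlarge" step, so it cannot discover the merge.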


Beyond Data and Model Parallelism for Deep Neural Networks (ICML’18, SysML’19)

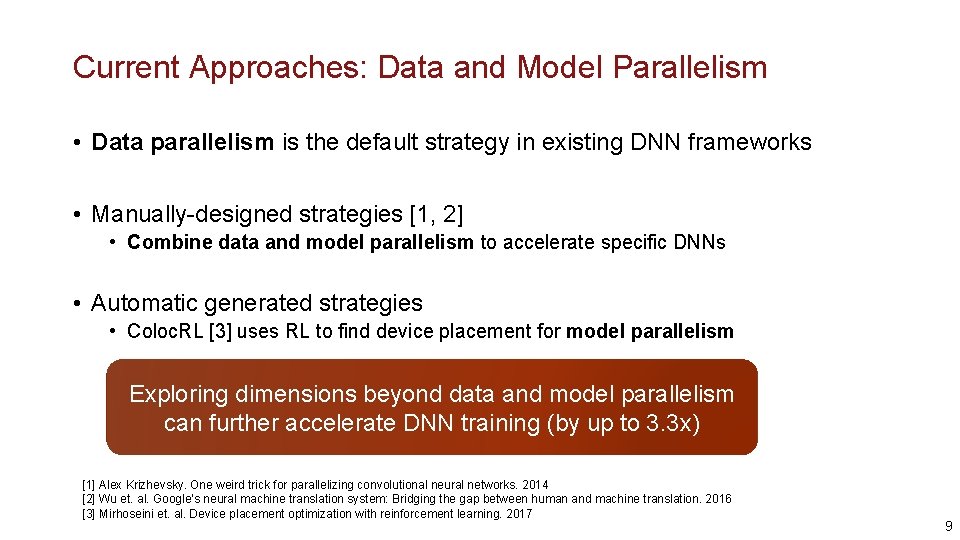

Current Approaches: Data and Model Parallelism
• Data parallelism is the default strategy in existing DNN frameworks
• Manually designed strategies [1, 2] combine data and model parallelism to accelerate specific DNNs
• Automatically generated strategies: ColocRL [3] uses reinforcement learning to find device placements for model parallelism
Exploring dimensions beyond data and model parallelism can further accelerate DNN training (by up to 3.3x).

[1] Alex Krizhevsky. One weird trick for parallelizing convolutional neural networks. 2014.
[2] Wu et al. Google’s neural machine translation system: Bridging the gap between human and machine translation. 2016.
[3] Mirhoseini et al. Device placement optimization with reinforcement learning. 2017.

The SOAP Search Space: Samples, Operators, Attributes, Parameters

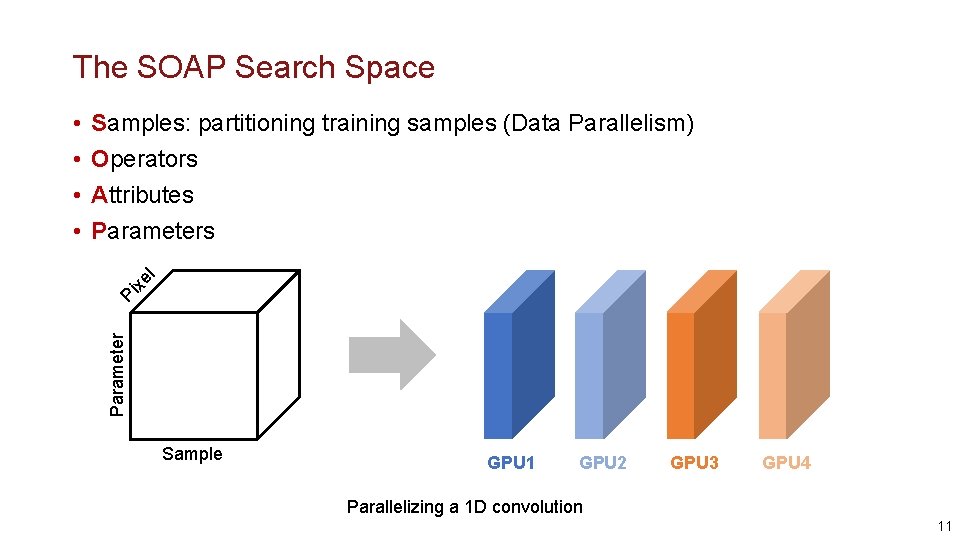

The SOAP Search Space
• Samples: partitioning training samples (data parallelism)
• Operators
• Attributes
• Parameters
[Figure: parallelizing a 1D convolution across GPU 1 to GPU 4; axes: Sample, Pixel, Parameter.]

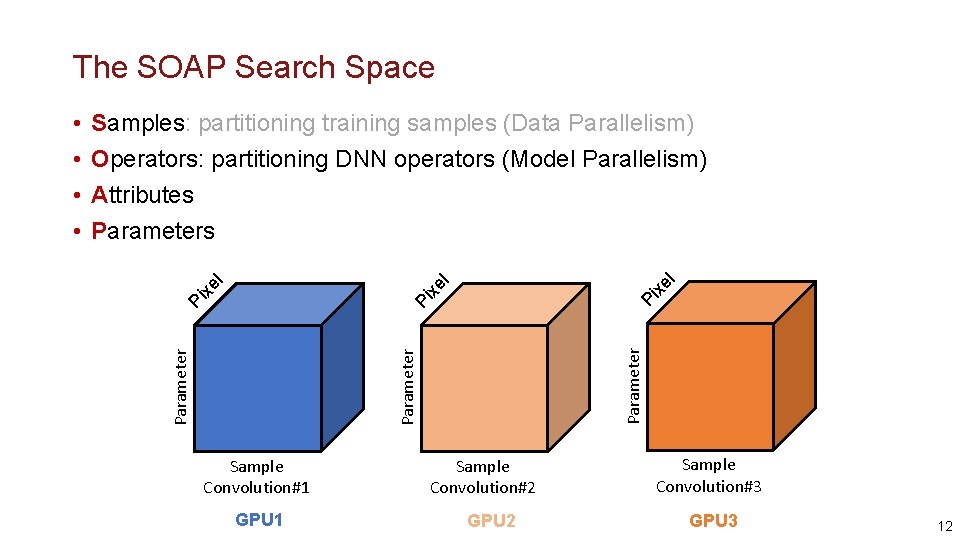
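A minimal pure-Python sketch of partitioning along the sample dimension, assuming a valid (unpadded) 1D convolution and simulating the four GPUs as list slices. `conv1d` and `data_parallel` are hypothetical helpers for illustration, not FlexFlow APIs:

```python
def conv1d(x, w):
    """Valid 1D convolution (cross-correlation, as in DNN frameworks).

    x: [N][C_in][L] input batch; w: [C_out][C_in][K] filters.
    Returns an [N][C_out][L - K + 1] output batch.
    """
    k = len(w[0][0])
    out_len = len(x[0][0]) - k + 1
    return [[[sum(f[c][j] * xn[c][p + j] for c in range(len(f)) for j in range(k))
              for p in range(out_len)]
             for f in w]
            for xn in x]


def data_parallel(x, w, num_gpus=4):
    """Sample partitioning: each simulated GPU gets a chunk of samples
    and a full replica of the filters w."""
    chunk = -(-len(x) // num_gpus)  # ceiling division
    per_gpu = [conv1d(x[i:i + chunk], w) for i in range(0, len(x), chunk)]
    # Concatenate per-GPU outputs along the sample dimension.
    return [y for part in per_gpu for y in part]


N, C_IN, C_OUT, L, K = 8, 2, 3, 6, 3
x = [[[(n + 2 * c + 3 * p) % 7 for p in range(L)] for c in range(C_IN)] for n in range(N)]
w = [[[(o + c - j) % 3 - 1 for j in range(K)] for c in range(C_IN)] for o in range(C_OUT)]
print(data_parallel(x, w) == conv1d(x, w))  # True
```

In training, the replicas' weight gradients would also have to be summed across GPUs; this forward-only sketch omits that step.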

The SOAP Search Space
• Samples: partitioning training samples (data parallelism)
• Operators: partitioning DNN operators (model parallelism)
• Attributes
• Parameters
[Figure: Convolution#1, Convolution#2, and Convolution#3 assigned to GPU 1, GPU 2, and GPU 3; axes: Sample, Pixel, Parameter.]

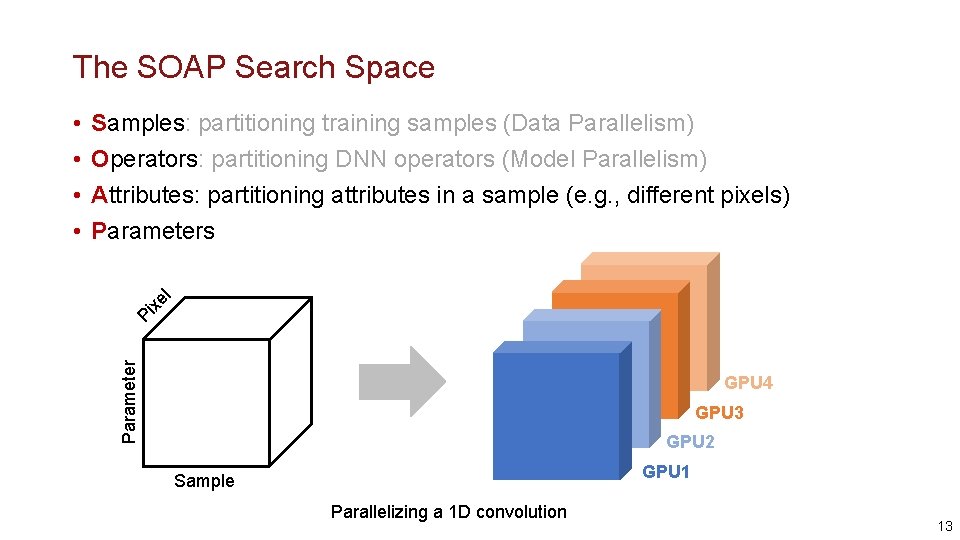
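Partitioning along the operator dimension assigns each whole operator to one device. A minimal sketch, assuming a chain of three 1D convolutions and hypothetical device names; the devices are only labels here, and the function reports the activation transfers that a given placement implies:

```python
from functools import partial


def conv1d(x, w):
    """Valid 1D convolution: x [N][C_in][L], w [C_out][C_in][K] -> [N][C_out][L-K+1]."""
    k = len(w[0][0])
    out_len = len(x[0][0]) - k + 1
    return [[[sum(f[c][j] * xn[c][p + j] for c in range(len(f)) for j in range(k))
              for p in range(out_len)]
             for f in w]
            for xn in x]


def run_model_parallel(layers, x, placement):
    """Run a chain of operators, each assigned whole to one (simulated) device.

    placement[i] names the hypothetical device of layers[i]. Returns the output
    and the activation transfers that the placement implies."""
    transfers = []
    for i, layer in enumerate(layers):
        if i > 0 and placement[i] != placement[i - 1]:
            # The previous operator's output must move to this operator's device.
            transfers.append((i, placement[i - 1], placement[i]))
        x = layer(x)
    return x, transfers


w1 = [[[1, 0, -1]]]                  # 1 -> 1 channel
w2 = [[[1, 1, 1]], [[0, 1, 0]]]      # 1 -> 2 channels
w3 = [[[1, -1, 1], [2, 0, 1]]]       # 2 -> 1 channel
layers = [partial(conv1d, w=w) for w in (w1, w2, w3)]
x = [[[3, 1, 4, 1, 5, 9, 2, 6]]]     # one sample, one channel, 8 pixels
y, transfers = run_model_parallel(layers, x, ["GPU 1", "GPU 2", "GPU 3"])
print(y, transfers)
```

Unlike the other three dimensions, this one never splits a single operator, so its cost comes from moving activations between devices rather than from replicating or slicing tensors.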

The SOAP Search Space
• Samples: partitioning training samples (data parallelism)
• Operators: partitioning DNN operators (model parallelism)
• Attributes: partitioning attributes in a sample (e.g., different pixels)
• Parameters
[Figure: parallelizing a 1D convolution across GPU 1 to GPU 4; axes: Sample, Pixel, Parameter.]

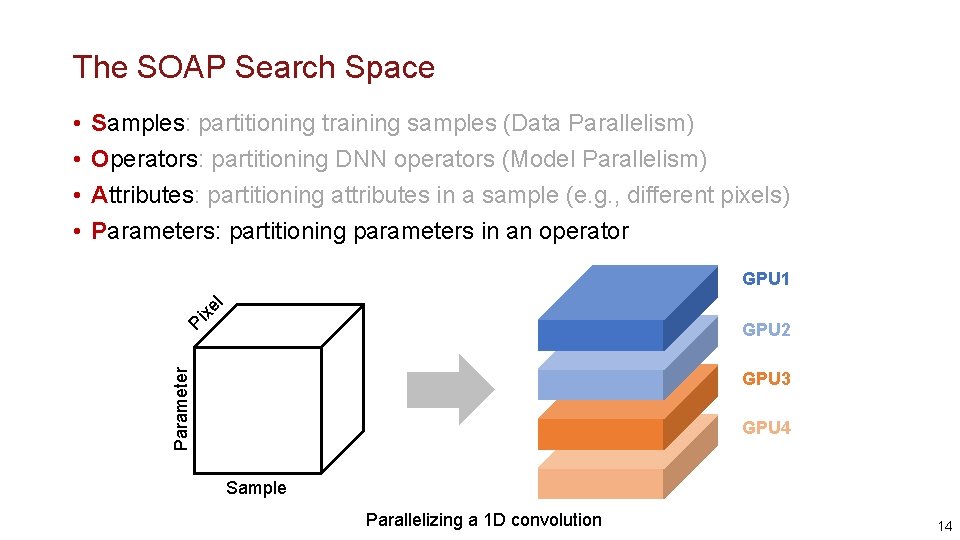
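Partitioning along an attribute (here, the pixel dimension) gives each device a range of output pixels. A minimal sketch, assuming a valid 1D convolution and simulated GPUs; `attribute_parallel` is a hypothetical helper. Each device must read K - 1 extra input pixels (a halo) that overlap its neighbor's slice:

```python
def conv1d(x, w):
    """Valid 1D convolution: x [N][C_in][L], w [C_out][C_in][K] -> [N][C_out][L-K+1]."""
    k = len(w[0][0])
    out_len = len(x[0][0]) - k + 1
    return [[[sum(f[c][j] * xn[c][p + j] for c in range(len(f)) for j in range(k))
              for p in range(out_len)]
             for f in w]
            for xn in x]


def attribute_parallel(x, w, num_gpus=4):
    """Pixel partitioning: each simulated GPU computes a contiguous range of
    output pixels for every sample, using full copies of the filters."""
    k = len(w[0][0])
    out_len = len(x[0][0]) - k + 1
    chunk = -(-out_len // num_gpus)  # ceiling division
    pieces = []
    for start in range(0, out_len, chunk):
        end = min(start + chunk, out_len)
        # Output pixels [start, end) need input pixels [start, end + k - 1):
        # the k - 1 extra "halo" pixels overlap with the next GPU's slice.
        x_slice = [[row[start:end + k - 1] for row in xn] for xn in x]
        pieces.append(conv1d(x_slice, w))
    # Stitch the pixel ranges back together per sample and output channel.
    return [[[v for piece in pieces for v in piece[n][o]] for o in range(len(w))]
            for n in range(len(x))]


N, C_IN, C_OUT, L, K = 2, 2, 3, 10, 3
x = [[[(n + 2 * c + 3 * p) % 7 for p in range(L)] for c in range(C_IN)] for n in range(N)]
w = [[[(o + c - j) % 3 - 1 for j in range(K)] for c in range(C_IN)] for o in range(C_OUT)]
print(attribute_parallel(x, w) == conv1d(x, w))  # True
```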

The SOAP Search Space
• Samples: partitioning training samples (data parallelism)
• Operators: partitioning DNN operators (model parallelism)
• Attributes: partitioning attributes in a sample (e.g., different pixels)
• Parameters: partitioning parameters in an operator
[Figure: parallelizing a 1D convolution across GPU 1 to GPU 4; axes: Sample, Pixel, Parameter.]

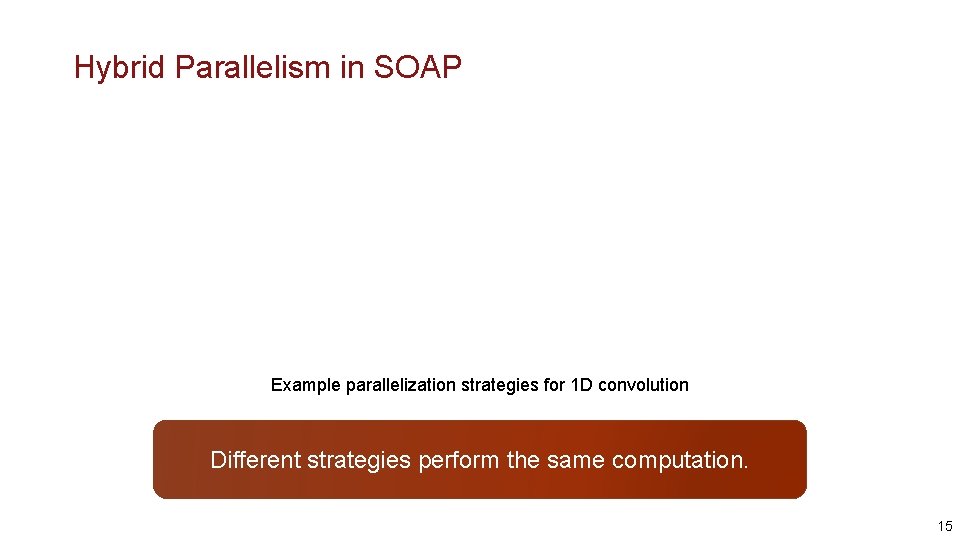
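Partitioning the parameters of a convolution can be sketched by giving each device a slice of the filters (output channels) while every device sees the full input. A minimal sketch under the same assumptions as before (valid 1D convolution, simulated GPUs, hypothetical helper names):

```python
def conv1d(x, w):
    """Valid 1D convolution: x [N][C_in][L], w [C_out][C_in][K] -> [N][C_out][L-K+1]."""
    k = len(w[0][0])
    out_len = len(x[0][0]) - k + 1
    return [[[sum(f[c][j] * xn[c][p + j] for c in range(len(f)) for j in range(k))
              for p in range(out_len)]
             for f in w]
            for xn in x]


def parameter_parallel(x, w, num_gpus=4):
    """Parameter partitioning: each simulated GPU holds a slice of the filters
    (output channels) and reads the full input."""
    chunk = -(-len(w) // num_gpus)  # ceiling division
    pieces = [conv1d(x, w[i:i + chunk]) for i in range(0, len(w), chunk)]
    # Concatenate the output channels produced by each GPU, per sample.
    return [[ch for piece in pieces for ch in piece[n]] for n in range(len(x))]


N, C_IN, C_OUT, L, K = 2, 2, 4, 6, 3
x = [[[(n + 2 * c + 3 * p) % 7 for p in range(L)] for c in range(C_IN)] for n in range(N)]
w = [[[(o + c - j) % 3 - 1 for j in range(K)] for c in range(C_IN)] for o in range(C_OUT)]
print(parameter_parallel(x, w) == conv1d(x, w))  # True
```

Here no GPU stores the whole weight tensor, which is the point of this dimension for operators with large parameters; the trade-off is that the full input must reach every GPU.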

Hybrid Parallelism in SOAP: example parallelization strategies for a 1D convolution. Different strategies perform the same computation.

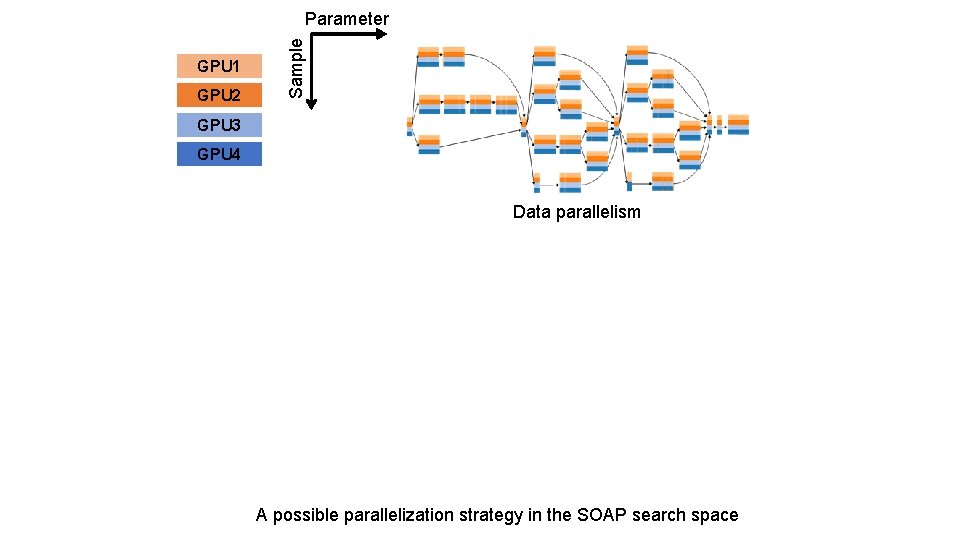

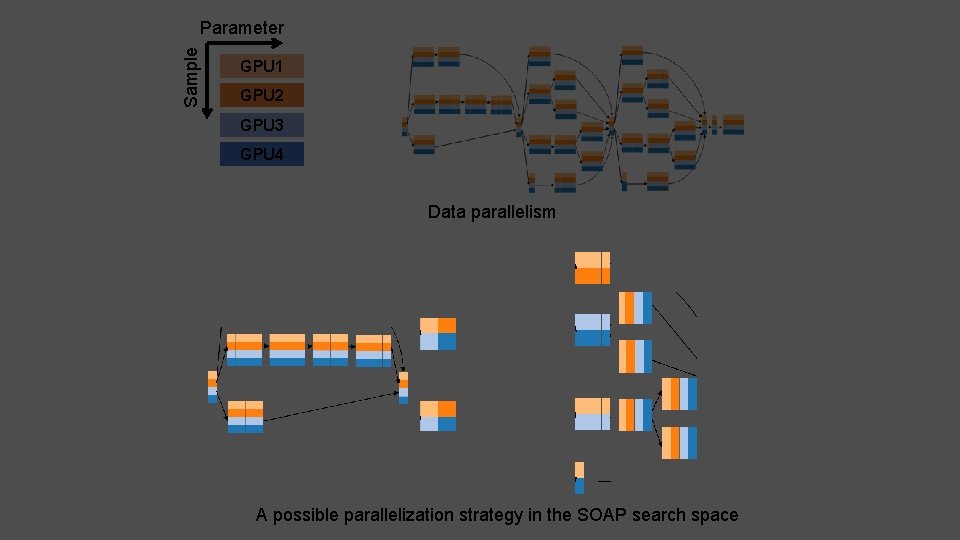
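Because different strategies perform the same computation, dimensions can be combined. A minimal sketch of one hybrid strategy, assuming four simulated GPUs arranged as a 2 x 2 grid that splits both samples and filters; `hybrid_parallel` and its grid parameters are hypothetical:

```python
def conv1d(x, w):
    """Valid 1D convolution: x [N][C_in][L], w [C_out][C_in][K] -> [N][C_out][L-K+1]."""
    k = len(w[0][0])
    out_len = len(x[0][0]) - k + 1
    return [[[sum(f[c][j] * xn[c][p + j] for c in range(len(f)) for j in range(k))
              for p in range(out_len)]
             for f in w]
            for xn in x]


def hybrid_parallel(x, w, sample_parts=2, param_parts=2):
    """Hybrid strategy on a sample_parts x param_parts grid of simulated GPUs:
    GPU (i, j) computes sample chunk i with filter chunk j."""
    s = -(-len(x) // sample_parts)  # samples per grid row (ceiling division)
    p = -(-len(w) // param_parts)   # filters per grid column
    out = []
    for i in range(0, len(x), s):
        xs = x[i:i + s]
        tiles = [conv1d(xs, w[j:j + p]) for j in range(0, len(w), p)]
        # Join output channels across the row, then append the row's samples.
        out.extend([[ch for tile in tiles for ch in tile[n]] for n in range(len(xs))])
    return out


N, C_IN, C_OUT, L, K = 4, 2, 4, 6, 3
x = [[[(n + 2 * c + 3 * p) % 7 for p in range(L)] for c in range(C_IN)] for n in range(N)]
w = [[[(o + c - j) % 3 - 1 for j in range(K)] for c in range(C_IN)] for o in range(C_OUT)]
print(hybrid_parallel(x, w) == conv1d(x, w))  # True
```

Setting `sample_parts=4, param_parts=1` recovers pure data parallelism and `sample_parts=1, param_parts=4` pure parameter partitioning; all of them compute the same output.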

[Figure: data parallelism compared with a possible parallelization strategy in the SOAP search space, on GPU 1 to GPU 4; axes: Sample, Parameter.]


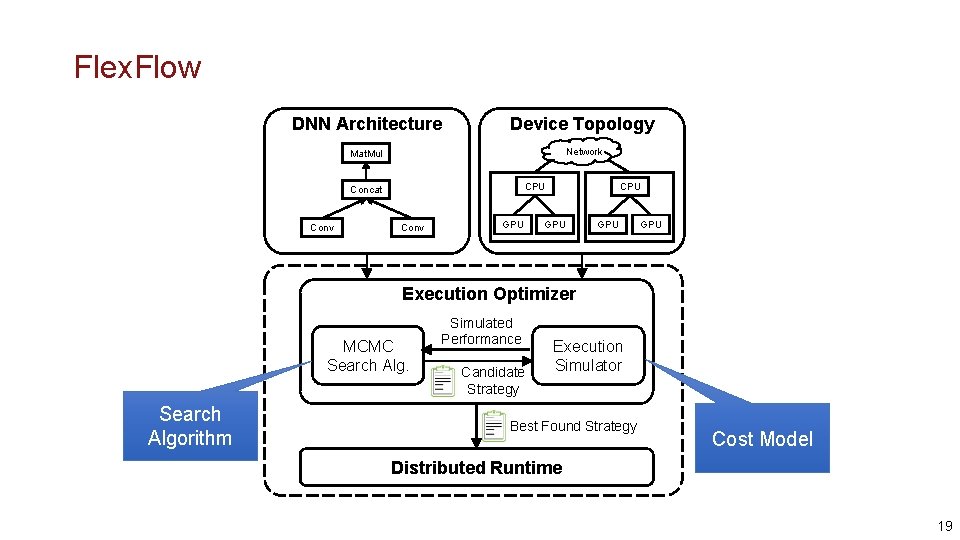

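FlexFlow: the inputs are a DNN architecture (a graph of operators such as Conv, Concat, and MatMul) and a device topology (CPUs and GPUs connected by a network). The execution optimizer runs an MCMC search algorithm: it sends each candidate strategy to an execution simulator, which serves as the cost model and returns the simulated performance. The best found strategy is executed by the distributed runtime.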

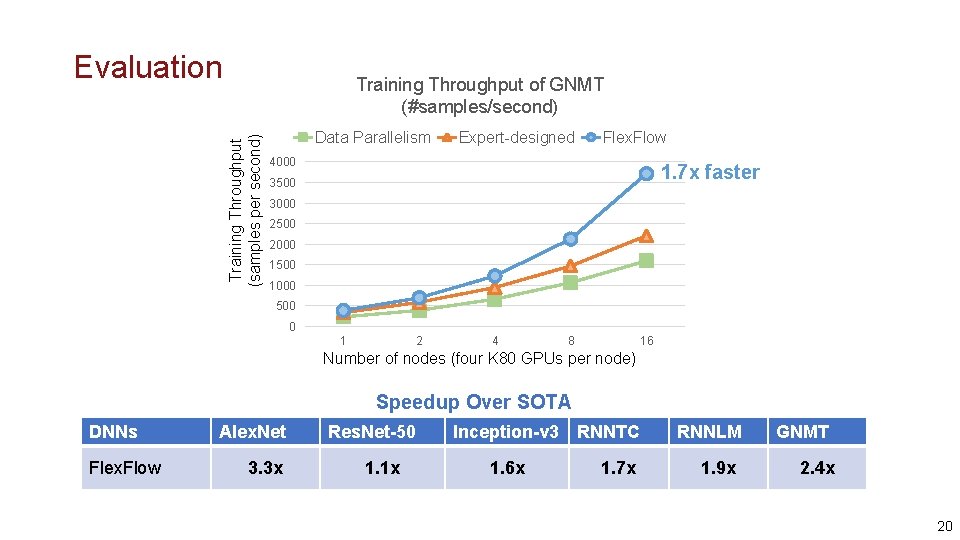
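The optimizer loop above can be sketched as Metropolis-style MCMC sampling. This is a minimal sketch, not FlexFlow's implementation: `simulate` is a toy stand-in for the execution simulator (a made-up per-operator cost table plus a penalty when neighboring operators use different configs), and the proposal rule (re-sample one operator's config) and `beta` are our choices:

```python
import math
import random

CONFIGS = ("data", "model", "attr", "param")  # hypothetical per-operator choices
# Hypothetical simulator inputs: OP_COST[i][c] = time of operator i under config c.
OP_COST = [
    {"data": 4.0, "model": 6.0, "attr": 3.0, "param": 5.0},
    {"data": 2.0, "model": 1.5, "attr": 2.5, "param": 3.0},
    {"data": 5.0, "model": 4.5, "attr": 6.0, "param": 2.0},
    {"data": 3.0, "model": 3.5, "attr": 2.0, "param": 4.0},
]
SWITCH_PENALTY = 1.0  # made-up cost of re-partitioning between operators


def simulate(strategy):
    """Toy stand-in for an execution simulator: estimated iteration time."""
    compute = sum(OP_COST[i][c] for i, c in enumerate(strategy))
    switches = sum(a != b for a, b in zip(strategy, strategy[1:]))
    return compute + SWITCH_PENALTY * switches


def mcmc_search(num_ops, iters=2000, beta=2.0, seed=0):
    rng = random.Random(seed)
    current = ["data"] * num_ops  # start from plain data parallelism
    cur_cost = simulate(current)
    best, best_cost = list(current), cur_cost
    for _ in range(iters):
        # Propose: re-sample the config of one randomly chosen operator.
        proposal = list(current)
        proposal[rng.randrange(num_ops)] = rng.choice(CONFIGS)
        new_cost = simulate(proposal)
        # Metropolis rule: always accept improvements; accept a slowdown
        # of delta with probability exp(-beta * delta).
        delta = new_cost - cur_cost
        if delta <= 0 or rng.random() < math.exp(-beta * delta):
            current, cur_cost = proposal, new_cost
            if cur_cost < best_cost:
                best, best_cost = list(current), cur_cost
    return best, best_cost


best, best_cost = mcmc_search(len(OP_COST))
print(best, best_cost)
```

Occasionally accepting a slower strategy lets the chain escape local minima, while the best strategy seen so far is always retained.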

Evaluation

[Figure: training throughput of GNMT (samples/second) versus number of nodes (1, 2, 4, 8, 16; four K80 GPUs per node) for data parallelism, an expert-designed strategy, and FlexFlow; FlexFlow is annotated as 1.7x faster.]

FlexFlow's speedup over SOTA:

| DNN | Speedup |
|---|---|
| AlexNet | 3.3x |
| ResNet-50 | 1.1x |
| Inception-v3 | 1.6x |
| RNNTC | 1.7x |
| RNNLM | 1.9x |
| GNMT | 2.4x |


Optimizing DNN Computation with Automated Generation of Graph Substitutions (SysML’19)

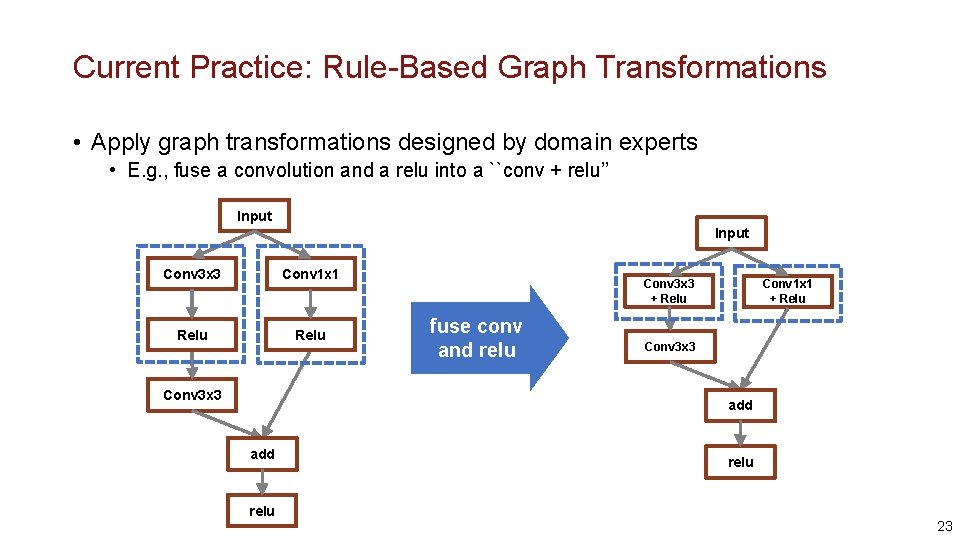

Current Practice: Rule-Based Graph Transformations
• Apply graph transformations designed by domain experts
• E.g., fuse a convolution and a relu into a "conv + relu" operator
[Figure: a graph with Input, Conv 3x3, Conv 1x1, Relu, Conv 3x3, add, and relu operators, before and after "fuse conv and relu" produces Conv 3x3 + Relu and Conv 1x1 + Relu.]

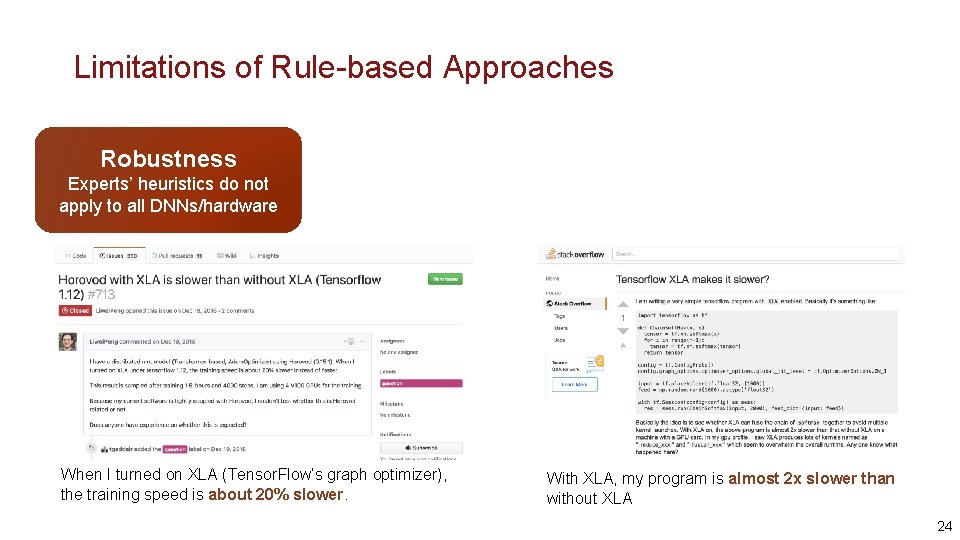
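A minimal sketch of one such expert-written rule, assuming a hypothetical graph IR in which each node is a `(name, op, inputs)` tuple listed in topological order (a simplification of the slide's graph, not any framework's API). The rule fuses a conv into its relu only when the relu is the conv's sole consumer; otherwise the unfused conv output would still be needed:

```python
def fuse_conv_relu(nodes):
    """Rule-based pass: rewrite conv -> relu into a single 'conv+relu' node."""
    # Count how many nodes consume each tensor.
    num_consumers = {}
    for _, _, inputs in nodes:
        for src in inputs:
            num_consumers[src] = num_consumers.get(src, 0) + 1
    producer = {name: (op, inputs) for name, op, inputs in nodes}

    absorbed, fused = set(), {}
    for name, op, inputs in nodes:
        if op == "relu" and len(inputs) == 1:
            src = inputs[0]
            src_op, src_inputs = producer.get(src, (None, []))
            if src_op == "conv" and num_consumers[src] == 1:
                absorbed.add(src)
                # Keep the relu's name so downstream references stay valid.
                fused[name] = ("conv+relu", src_inputs)
    return [(name, *fused.get(name, (op, inputs)))
            for name, op, inputs in nodes if name not in absorbed]


graph = [
    ("c1", "conv", ["input"]),
    ("r1", "relu", ["c1"]),
    ("c2", "conv", ["input"]),
    ("r2", "relu", ["c2"]),
    ("c3", "conv", ["input"]),
    ("a", "add", ["r2", "c3"]),
    ("r3", "relu", ["a"]),
]
for node in fuse_conv_relu(graph):
    print(node)
```

The pass recognizes exactly one pattern; every new operator or fusion opportunity needs another hand-written rule like it.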

Limitations of Rule-based Approaches
• Robustness: experts’ heuristics do not apply to all DNNs/hardware. Users report: "When I turned on XLA (TensorFlow’s graph optimizer), the training speed is about 20% slower." and "With XLA, my program is almost 2x slower than without XLA."

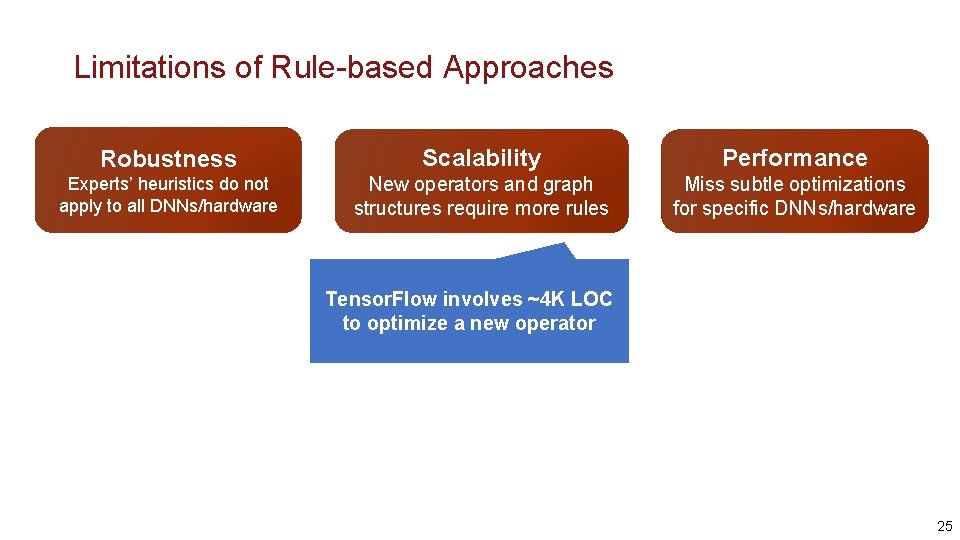

Limitations of Rule-based Approaches
• Robustness: experts’ heuristics do not apply to all DNNs/hardware
• Scalability: new operators and graph structures require more rules (TensorFlow involves ~4K LOC to optimize a new operator)
• Performance: rules miss subtle optimizations for specific DNNs/hardware

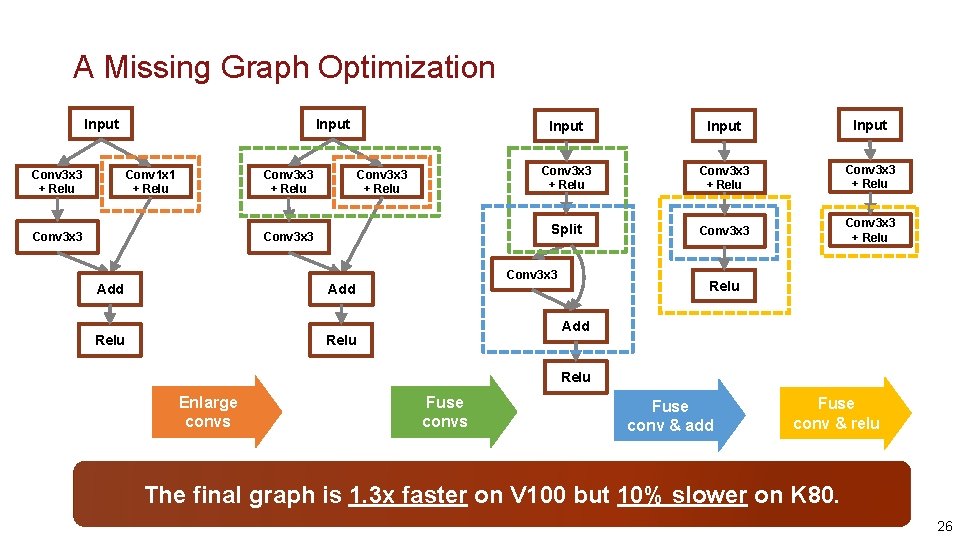

A Missing Graph Optimization
[Figure: a sequence of graph substitutions (enlarge convs, fuse conv & add, fuse conv & relu) applied to a graph containing Input, Conv 3x3 + Relu, Conv 1x1 + Relu, Conv 3x3, Add, and Relu; the resulting graph contains Conv 3x3 + Relu, Split, and Conv 3x3 operators.]
The final graph is 1.3x faster on V100 but 10% slower on K80.
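The "enlarge convs" step is valid because zero-padding a kernel, together with matching "same" padding of the input, does not change the result. A minimal 1D sketch of that equivalence (a kernel of size 1 stands in for a 1x1 conv, size 3 for a 3x3 conv); `conv1d_same` and `enlarge` are hypothetical helpers:

```python
def conv1d_same(x, w):
    """Single-channel 1D cross-correlation with zero 'same' padding (odd-size w)."""
    pad = len(w) // 2
    xp = [0] * pad + list(x) + [0] * pad
    return [sum(w[j] * xp[p + j] for j in range(len(w))) for p in range(len(x))]


def enlarge(w, size):
    """Zero-pad an odd-size kernel on both sides to a larger odd size."""
    extra = (size - len(w)) // 2
    return [0] * extra + list(w) + [0] * extra


x = [1, 2, 3, 4]
w1 = [5]                   # 1D analogue of a 1x1 conv
w3 = enlarge(w1, 3)        # [0, 5, 0], the analogue of a 3x3 conv
print(conv1d_same(x, w1))  # [5, 10, 15, 20]
print(conv1d_same(x, w3))  # [5, 10, 15, 20]
```

On its own, the enlarged conv does more arithmetic; the payoff comes only when it now matches a neighboring 3x3 conv and can be fused with it, which is why a search that looks a few steps ahead is needed.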

Can we automatically find these optimizations? Automatically generated graph substitutions.

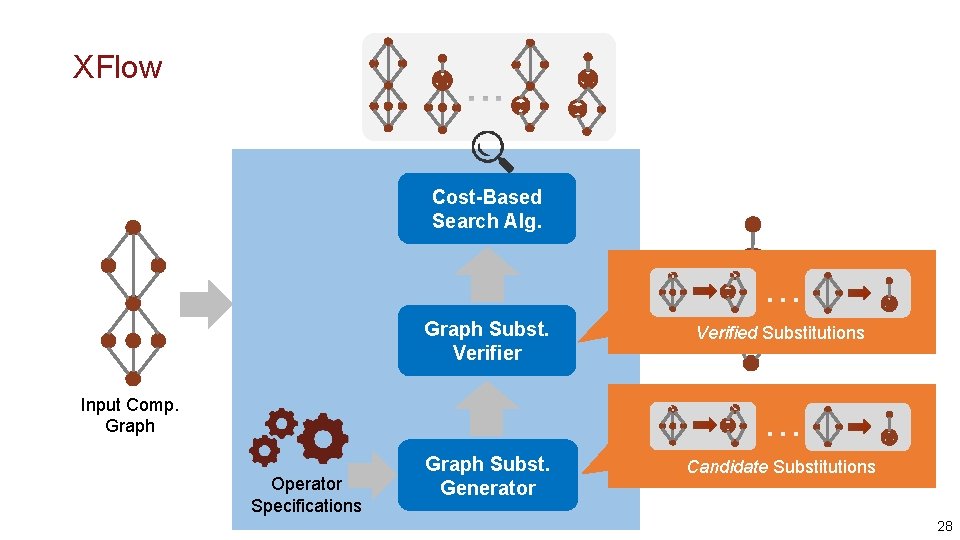

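XFlow: starting from operator specifications, a graph substitution generator produces candidate substitutions, and a graph substitution verifier keeps only the verified substitutions. A cost-based search algorithm then applies the verified substitutions to an input computation graph to produce an optimized computation graph.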

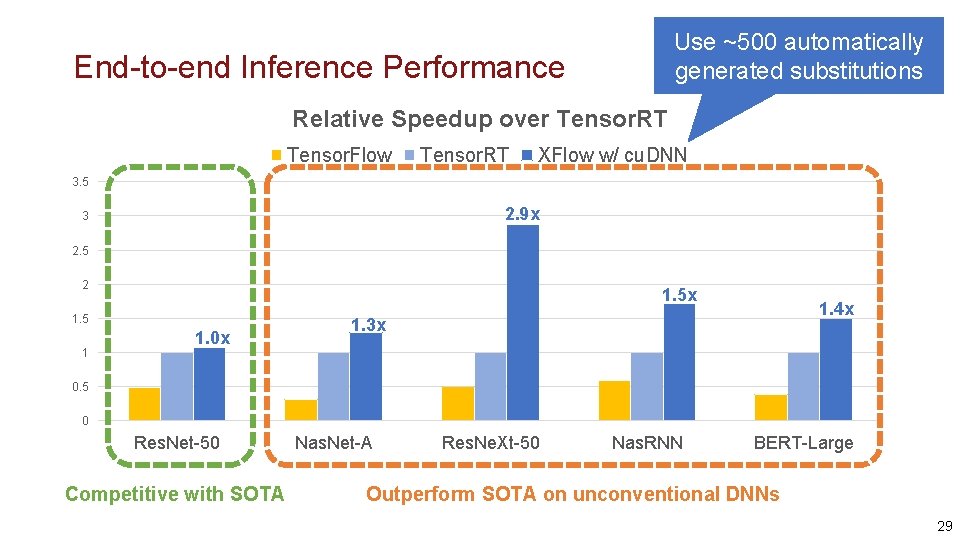
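A minimal sketch of the generate-then-verify idea, with assumptions that are ours rather than XFlow's: expressions are built from two scalar operators (`add`, `mul`) over three variables, the generator groups expressions by their outputs on a few random inputs (a fingerprint), and the "verifier" is randomized testing on fresh inputs. Randomized testing is only a stand-in here; unlike a formal check, it does not prove equivalence:

```python
import itertools
import random

OPS = {"add": lambda a, b: a + b, "mul": lambda a, b: a * b}
VARS = ("x", "y", "z")


def evaluate(expr, env):
    """expr is a variable name or an (op, lhs, rhs) tuple."""
    if isinstance(expr, str):
        return env[expr]
    op, lhs, rhs = expr
    return OPS[op](evaluate(lhs, env), evaluate(rhs, env))


def enumerate_exprs():
    """All expressions with at most two operators over VARS."""
    small = [(op, a, b) for op in OPS for a in VARS for b in VARS]
    big = [(op, e, v) for op in OPS for e in small for v in VARS]
    big += [(op, v, e) for op in OPS for v in VARS for e in small]
    return list(VARS) + small + big


def random_envs(n, seed):
    rng = random.Random(seed)
    return [{v: rng.randint(-100, 100) for v in VARS} for _ in range(n)]


def generate_candidates(exprs, num_inputs=4, seed=0):
    """Group expressions by outputs on a few random inputs (a fingerprint);
    every ordered pair in a group is a candidate substitution src -> dst."""
    envs = random_envs(num_inputs, seed)
    groups = {}
    for e in exprs:
        fingerprint = tuple(evaluate(e, env) for env in envs)
        groups.setdefault(fingerprint, []).append(e)
    return [pair for group in groups.values()
            for pair in itertools.permutations(group, 2)]


def verify(candidates, trials=100, seed=1):
    """Keep candidates that agree on many fresh random inputs (not a proof)."""
    envs = random_envs(trials, seed)
    return [(src, dst) for src, dst in candidates
            if all(evaluate(src, env) == evaluate(dst, env) for env in envs)]


exprs = enumerate_exprs()
verified = verify(generate_candidates(exprs))
print(len(exprs), len(verified))
```

The surviving pairs include familiar rewrites such as commutativity (`x + y -> y + x`) and associativity (`(x + y) + z -> x + (y + z)`), found without anyone writing them down.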

XFlow uses ~500 automatically generated substitutions.
[Figure: end-to-end inference performance, as relative speedup over TensorRT, of TensorFlow, TensorRT, and XFlow w/ cuDNN on ResNet-50, NasNet-A, ResNeXt-50, NasRNN, and BERT-Large; the annotated speedups are 1.0x, 1.3x, 1.4x, 1.5x, and 2.9x.]
XFlow is competitive with SOTA on ResNet-50 and outperforms SOTA on unconventional DNNs.

Open Problems
• Can we design better search spaces for parallelization and graph optimizations?
• Can we find more efficient search algorithms?
• Can we use search-based optimizations in other domains?

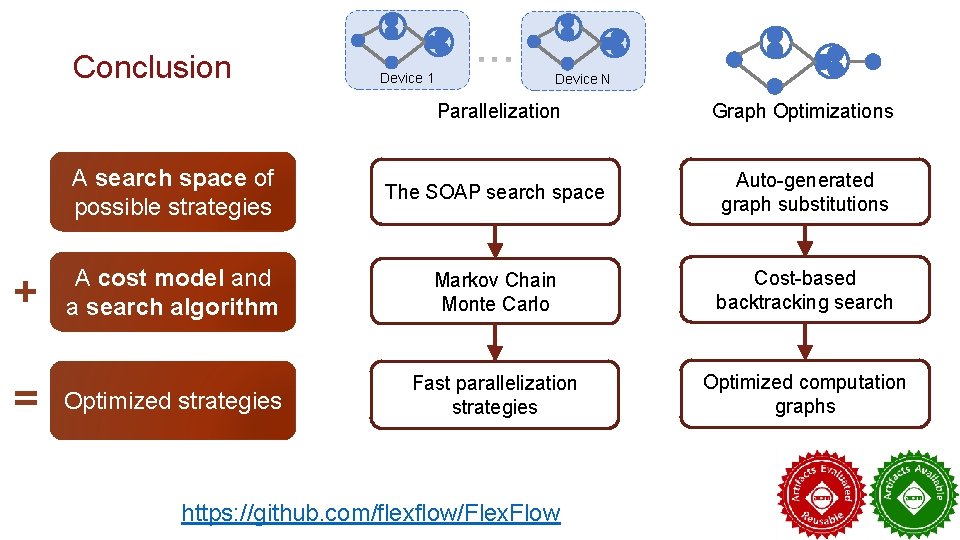

Conclusion: a search space of possible strategies + a cost model and a search algorithm = optimized strategies.
• Parallelization (across Device 1 to Device N): the SOAP search space + Markov Chain Monte Carlo = fast parallelization strategies
• Graph optimizations: auto-generated graph substitutions + cost-based backtracking search = optimized computation graphs
https://github.com/flexflow/FlexFlow
