SCICOMP, IBM, and TACC: Then, Now, and Next

SCICOMP, IBM, and TACC: Then, Now, and Next Jay Boisseau, Director Texas Advanced Computing Center The University of Texas at Austin August 10, 2004

Precautions • This presentation contains some historical recollections from over 5 years ago. I can’t usually recall what I had for lunch yesterday. • This presentation contains some ideas on where I think things might be going next. If I can’t recall yesterday’s lunch, it seems unlikely that I can predict anything. • This presentation contains many tongue-in-cheek observations, exaggerations for dramatic effect, etc. • This presentation may cause boredom, drowsiness, nausea, or hunger.

Outline 1. Why Did We Create SCICOMP 5 Years Ago? 2. What Did I Do with My Summer (and the Previous 3 Years)? 3. What is TACC Doing Now with IBM? 4. Where Are We Now? Where Are We Going?

Why Did We Create SCICOMP 5 Years Ago?

The Dark Ages of HPC • In the late 1990s, most supercomputing was accomplished on proprietary systems from IBM, HP, SGI (including Cray), etc. – User environments were not very friendly – Limited development environment (debuggers, optimization tools, etc.) – Very few cross-platform tools – Difficult programming tools (MPI, OpenMP… some things haven’t changed)

Missing Cray Research… • Cray was no longer the dominant company, and it showed – Trend towards commoditization had begun – Systems were not balanced • Cray T3Es were used longer than any production MPP – Software for HPC was limited, not as reliable • Who doesn’t miss real checkpoint/restart, automatic performance monitoring, no weekly PM downtime, etc.? – Companies were not as focused on HPC/research customers as on larger markets

1998-99: Making Things Better • John Levesque hired by IBM to start the Advanced Computing Technology Center – Goal: ACTC should provide to customers what Cray Research used to provide • Jay Boisseau became first Associate Director of Scientific Computing at SDSC – Goal: Ensure SDSC helped users migrate from Cray T3E to IBM SP and do important, effective computational research

Creating SCICOMP • John and Jay hosted workshop at SDSC in March 1999 open to users and center staff – to discuss current state, issues, techniques, and results in using IBM systems for HPC – SP-XXL already existed, but was exclusive and more systems-oriented • Success led to first IBM SP Scientific Computing User Group meeting (SCICOMP) in August 1999 in Yorktown Heights – Jay as first director • Second meeting held in early 2000 at SDSC • In late 2000, John & Jay invited international participation in SCICOMP at IBM ACTC workshop in Paris

What Did I Do with My Summer (and the Previous 3 Years)?

Moving to TACC? • In 2001, I accepted job as director of TACC • Major rebuilding task: – Only 14 staff – No R&D programs – Outdated HPC systems – No visualization, grid computing or data-intensive computing – Little funding – Not much profile – Past political issues

Moving to TACC! • But big opportunities – Talented key staff in HPC, systems, and operations – Space for growth – IBM Austin across the street – Almost every other major HPC vendor has large presence in Austin – UT Austin has both quality and scale in sciences, engineering, CS – UT and Texas have unparalleled internal & external support (pride is not always a vice) – Austin is a fantastic place to live (and recruit)

Moving to TACC! • TEXAS-SIZED opportunities – Talented key staff in HPC, systems, and operations – Space for growth – IBM Austin across the street – Almost every other major HPC vendor has large presence in Austin – UT Austin has both quality and scale in sciences, engineering, CS – UT and Texas have unparalleled internal & external support (pride is not always a vice) – Austin is a fantastic place to live (and recruit) • I got the chance to build something else good and important

TACC Mission To enhance the research & education programs of The University of Texas at Austin and its partners through research, development, operation & support of advanced computing technologies.

TACC Strategy To accomplish this mission, TACC: • Resources & Services – Evaluates, acquires & operates advanced computing systems – Provides training, consulting, and documentation to users • Research & Development – Collaborates with researchers to apply advanced computing techniques – Conducts research & development to produce new computational technologies

TACC Advanced Computing Technology Areas • High Performance Computing (HPC): numerically intensive computing; produces data • Scientific Visualization (SciVis): rendering data into information & knowledge • Data & Information Systems (DIS): managing and analyzing data for information & knowledge • Distributed and Grid Computing (DGC): integrating diverse resources, data, and people to produce and share knowledge

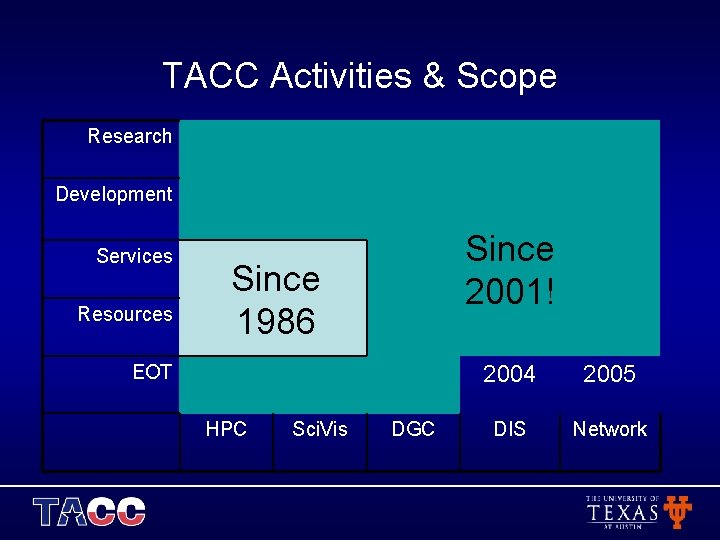

TACC Activities & Scope [Diagram: Research, Development, Services, and Resources across EOT, HPC, SciVis, DGC, DIS, and Network; timeline labels: Since 1986, Since 2001!, 2004, 2005]

TACC Applications Focus Areas • TACC advanced computing technology R&D must be driven by applications • TACC Applications Focus Areas – Chemistry -> Biosciences – Climate/Weather/Ocean -> Geosciences – CFD

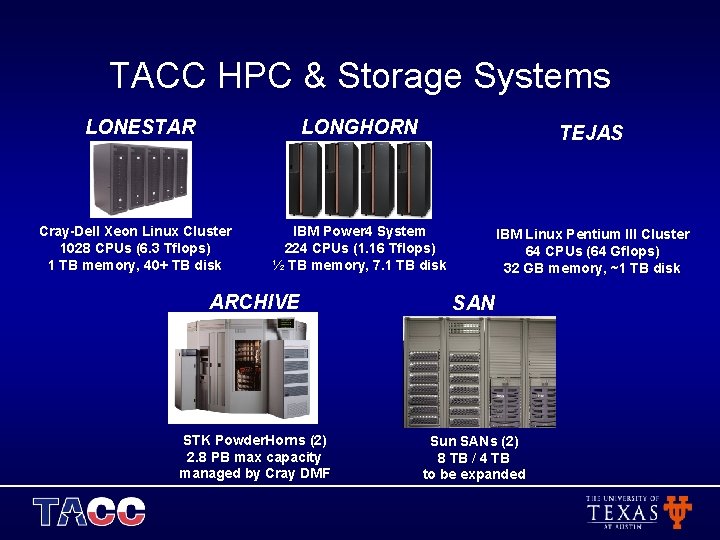

TACC HPC & Storage Systems • LONESTAR: Cray-Dell Xeon Linux Cluster, 1028 CPUs (6.3 Tflops), 1 TB memory, 40+ TB disk • LONGHORN: IBM Power4 System, 224 CPUs (1.16 Tflops), ½ TB memory, 7.1 TB disk • TEJAS: IBM Linux Pentium III Cluster, 64 CPUs (64 Gflops), 32 GB memory, ~1 TB disk • ARCHIVE: STK PowderHorns (2), 2.8 PB max capacity, managed by Cray DMF • SAN: Sun SANs (2), 8 TB / 4 TB, to be expanded

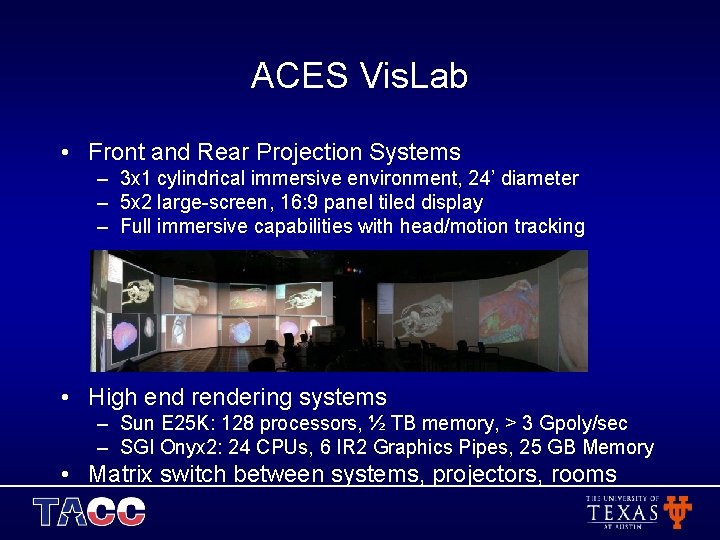

ACES VisLab • Front and Rear Projection Systems – 3x1 cylindrical immersive environment, 24’ diameter – 5x2 large-screen, 16:9 panel tiled display – Full immersive capabilities with head/motion tracking • High-end rendering systems – Sun E25K: 128 processors, ½ TB memory, > 3 Gpoly/sec – SGI Onyx2: 24 CPUs, 6 IR2 Graphics Pipes, 25 GB Memory • Matrix switch between systems, projectors, rooms

TACC Services • TACC resources and services include: – Consulting – Training – Technical documentation – Data storage/archival – System selection/configuration consulting – System hosting

TACC R&D – High Performance Computing • Scalability, performance optimization, and performance modeling for HPC applications • Evaluation of cluster technologies for HPC • Portability and performance issues of applications on clusters • Climate, weather, ocean modeling collaboration and support of DoD • Starting CFD activities

TACC R&D – Scientific Visualization • Feature detection / terascale data analysis • Evaluation of performance characteristics and capabilities of high-end visualization technologies • Hardware accelerated visualization and computation on GPUs • Remote interactive visualization / grid-enabled interactive visualization

TACC R&D – Data & Information Systems • Newest technology group at TACC • Initial R&D focused on creating/hosting scientific data collections • Interests / plans – Geospatial and biological database extensions – Efficient ways to collect/create metadata – DB clusters / parallel DB I/O for scientific data

TACC R&D – Distributed & Grid Computing • Web-based grid portals • Grid resource data collection and information services • Grid scheduling and workflow • Grid-enabled visualization • Grid-enabled data collection hosting • Overall grid deployment and integration

TACC R&D – Networking • Very new activities: – Exploring high-bandwidth (OC-12, GigE, OC-48, OC-192) remote and collaborative grid-enabled visualization – Exploring network performance for moving terascale data on 10 Gbps networks (TeraGrid) – Exploring GigE aggregation to fill 10 Gbps networks (parallel file I/O, parallel database I/O) • Recruiting a leader for TACC networking R&D activities
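To give a feel for the scale behind these networking items, here is a minimal back-of-the-envelope sketch in Python. The function names, the 1 TB example size, and the `efficiency` parameter are illustrative assumptions, not figures from TACC; real transfers would see protocol overhead and disk limits that this ignores.

```python
import math


def transfer_time_s(data_bytes: float, link_gbps: float, efficiency: float = 1.0) -> float:
    """Ideal time in seconds to move data_bytes over a link of link_gbps.

    efficiency is a hypothetical fudge factor (0 < efficiency <= 1) for the
    fraction of line rate actually achieved; 1.0 means a perfect link.
    """
    if link_gbps <= 0 or not 0 < efficiency <= 1:
        raise ValueError("link_gbps must be positive and efficiency in (0, 1]")
    bits = data_bytes * 8
    return bits / (link_gbps * 1e9 * efficiency)


def links_needed(target_gbps: float, link_gbps: float) -> int:
    """Number of slower links that must be aggregated to fill a faster one."""
    return math.ceil(target_gbps / link_gbps)


if __name__ == "__main__":
    one_terabyte = 1e12  # assumed example data set size, in bytes
    print("1 TB over 10 Gbps (ideal):", transfer_time_s(one_terabyte, 10.0), "s")
    print("1 TB over 1 Gbps (ideal):", transfer_time_s(one_terabyte, 1.0), "s")
    print("GigE links to fill 10 Gbps:", links_needed(10.0, 1.0))
```

Under these idealized assumptions a terabyte takes on the order of minutes at 10 Gbps but hours at GigE speeds, which is why aggregating parallel GigE streams (parallel file or database I/O) is an interesting way to fill a 10 Gbps path.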

TACC Growth • New infrastructure provides UT with comprehensive, balanced, world-class resources: – 50x HPC capability – 20x archival capability – 10x network capability – World-class VisLab – New SAN • New comprehensive R&D program with focus on impact – Activities in HPC, SciVis, DIS, DGC – New opportunities for professional staff • 40+ new, wonderful people in 3 years, adding to the excellent core of talented people who have been at TACC for many years

Summary of My Time with TACC Over Past 3 Years • TACC provides terascale HPC, SciVis, storage, data collection, and network resources • TACC provides expert support services: consulting, documentation, and training in HPC, SciVis, and Grid • TACC conducts applied research & development in these advanced computing technologies • TACC has become one of the leading academic advanced computing centers in 3 years • I have the best job in the world, mainly because I have the best staff in the world (but also because of UT and Austin)

And one other thing kept me busy the past 3 years…

What is TACC Doing Now with IBM?

UT Grid: Enable Campus-wide Terascale Distributed Computing • Vision: provide high-end systems, but move from ‘island’ to hub of campus computing continuum – provide models for local resources (clusters, vislabs, etc.), training, and documentation – develop procedures for connecting local systems to campus grid • single sign-on, data space, compute space • leverage every PC, cluster, NAS, etc. on campus! – integrate digital assets into campus grid – integrate UT instruments & sensors into campus grid • Joint project with IBM

Building a Grid Together • UT Grid: Joint Project Between UT and IBM – TACC wants to be leader in e-science – IBM is a leader in e-business – UT Grid enables both to • Gain deployment experience (IBM Global Services) • Have an R&D testbed – Deliverables/Benefits • Deployment experience • Grid Zone papers • Other papers

UT Grid: Initial Focus on Computing • High-throughput parallel computing – Project Rodeo – Use CSF to schedule to LSF, PBS, SGE clusters across campus – Use Globus 3.2 -> GT4 • High-throughput serial computing – Project Roundup uses United Devices software on campus PCs – Also interfacing to Condor flock in CS department

UT Grid: Initial Focus on Computing • Develop CSF adapters for popular resource management systems through collaboration: – LSF: done by Platform Computing – Globus: done by Platform Computing – PBS: partially done – SGE – LoadLeveler – Condor

UT Grid: Initial Focus on Computing • Develop CSF capability for flexible job requirements: – Serial vs parallel: no diff, just specify Ncpus – Number: facilitate ensembles – Batch: whenever, or by priority – Advanced reservation: needed for coupling, interactive – On-demand: needed for urgency • Integrate data management for jobs into CSF – SAN makes it easy – GridFTP is somewhat simple, if crude – Avaki Data Grid is a possibility

UT Grid: Initial Focus on Computing • Completion time in a compute grid is a function of – data transfer times • Use NWS for network bandwidth predictions, file transfer time predictions (Rich Wolski, UCSB) – queue wait times • Use new software from Wolski for prediction of start of execution in batch systems – application performance times • Use Prophesy (Valerie Taylor) for applications performance prediction • Develop CSF scheduling module that is data, network, and performance aware
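The idea of a data-, network-, and performance-aware scheduling decision can be sketched in a few lines of Python. This is only an illustration of the "completion time = transfer + queue wait + run time" model on this slide, not the CSF module itself: the class, the function, and every number below are hypothetical, and in practice the three estimates would come from tools such as NWS, a queue-wait predictor, and Prophesy.

```python
from dataclasses import dataclass


@dataclass
class ResourceEstimate:
    """Predicted costs of running one job on one candidate resource (all hypothetical)."""
    name: str
    input_bytes: float            # data that must be staged to the resource
    bandwidth_bytes_per_s: float  # forecast network bandwidth to the resource
    queue_wait_s: float           # predicted wait before execution starts
    run_time_s: float             # predicted application run time

    def completion_time_s(self) -> float:
        # Completion time is modeled as the simple sum of the three terms.
        transfer_s = self.input_bytes / self.bandwidth_bytes_per_s
        return transfer_s + self.queue_wait_s + self.run_time_s


def pick_resource(candidates: list[ResourceEstimate]) -> ResourceEstimate:
    """Return the candidate with the smallest predicted completion time."""
    return min(candidates, key=lambda r: r.completion_time_s())


# Made-up example: a slower, nearly idle cluster versus a faster, busy one.
candidates = [
    ResourceEstimate("cluster_a", input_bytes=100e9, bandwidth_bytes_per_s=100e6,
                     queue_wait_s=600.0, run_time_s=3600.0),
    ResourceEstimate("cluster_b", input_bytes=100e9, bandwidth_bytes_per_s=1e9,
                     queue_wait_s=7200.0, run_time_s=1800.0),
]
best = pick_resource(candidates)
print(best.name, best.completion_time_s())
```

In this made-up case the slower cluster wins because its short queue outweighs its longer transfer and run times, which is exactly the kind of trade-off a scheduler that looks only at CPU speed would miss.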

UT Grid: Full Service! • UT Grid will offer a complete set of services: – Compute services – Storage services – Data collections services – Visualization services – Instruments services • But this will take 2 years, so focusing on compute services now

UT Grid Interfaces • Grid User Portal – Hosted, built on GridPort – Augment developers by providing info services – Enable productivity by simplifying production usage • Grid User Node – Hosted, software includes GridShell plus client versions of all other UT Grid software – Downloadable version enables configuring local Linux box into UT Grid (eventually, Windows and Mac)

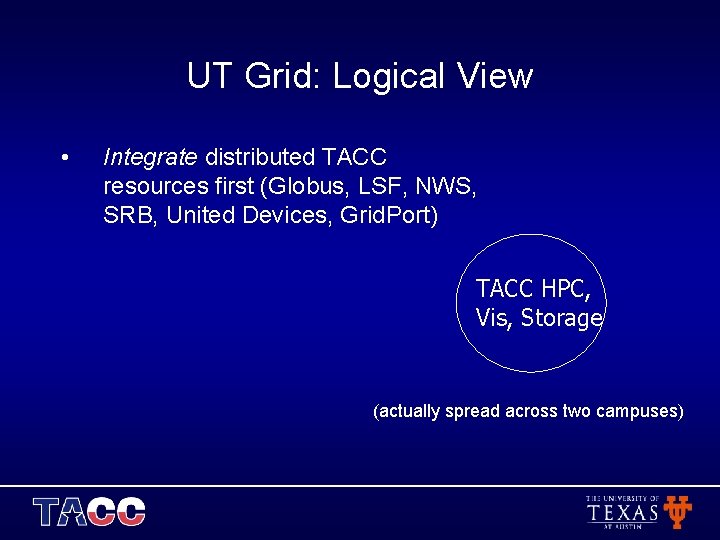

UT Grid: Logical View • Integrate distributed TACC resources first (Globus, LSF, NWS, SRB, United Devices, GridPort) [Diagram: TACC HPC, Vis, Storage (actually spread across two campuses)]

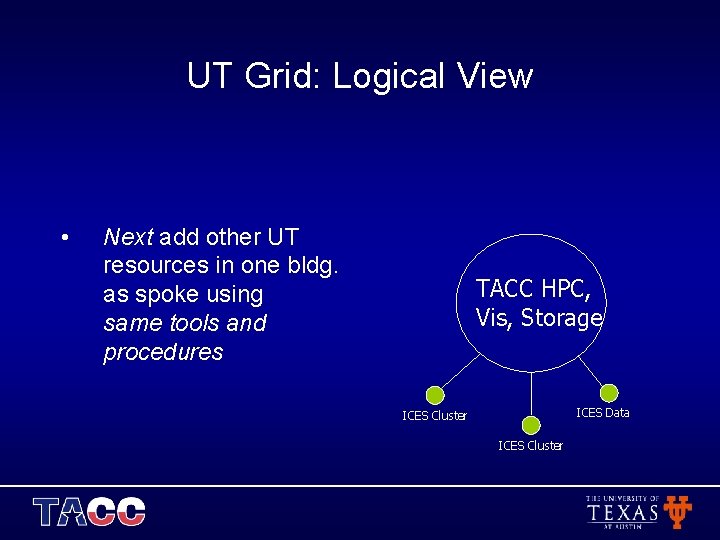

UT Grid: Logical View • Next add other UT resources in one bldg. as spoke using same tools and procedures [Diagram: TACC HPC, Vis, Storage; ICES Data; ICES Cluster]

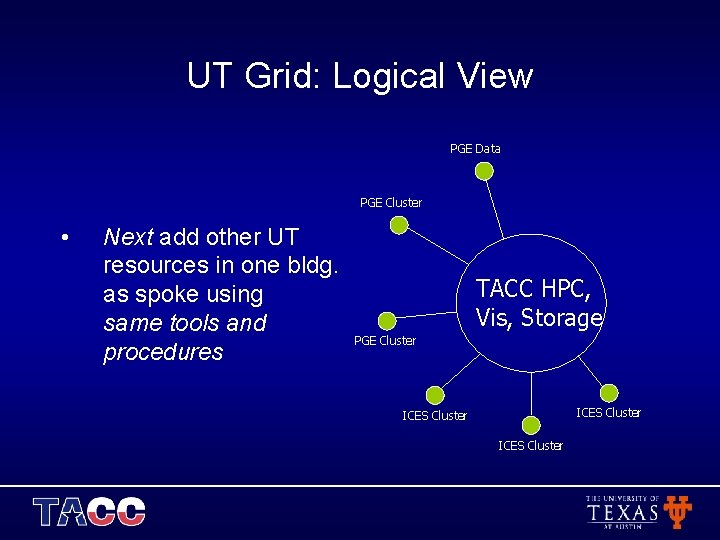

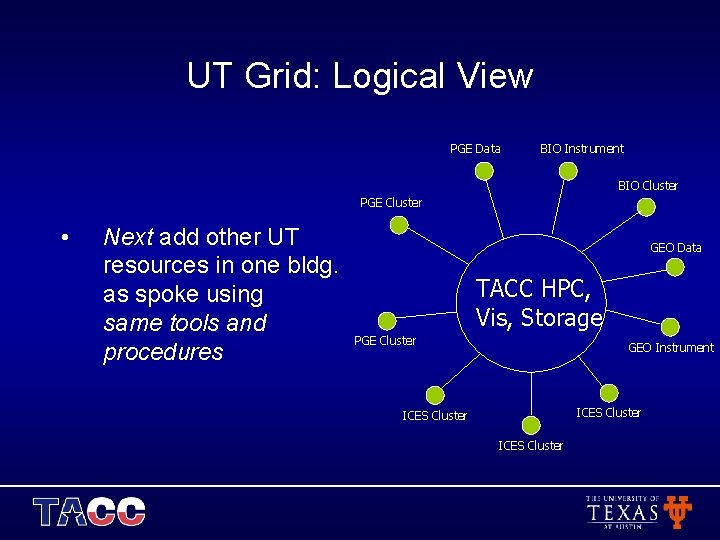

UT Grid: Logical View • Next add other UT resources in one bldg. as spoke using same tools and procedures [Diagram: TACC HPC, Vis, Storage; ICES Cluster; PGE Data; PGE Cluster]

UT Grid: Logical View • Next add other UT resources in one bldg. as spoke using same tools and procedures [Diagram: TACC HPC, Vis, Storage; ICES Cluster; PGE Data; PGE Cluster; BIO Instrument; BIO Cluster; GEO Data; GEO Instrument]

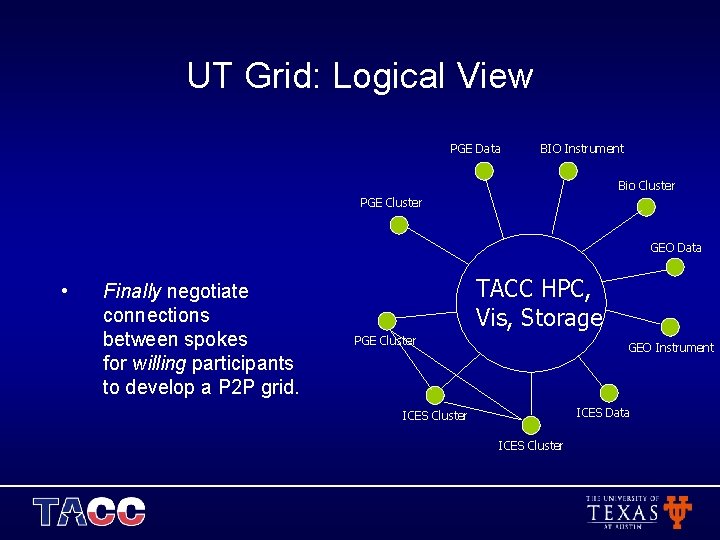

UT Grid: Logical View • Finally negotiate connections between spokes for willing participants to develop a P2P grid. [Diagram: TACC HPC, Vis, Storage; ICES Data; ICES Cluster; PGE Data; PGE Cluster; BIO Instrument; BIO Cluster; GEO Data; GEO Instrument]

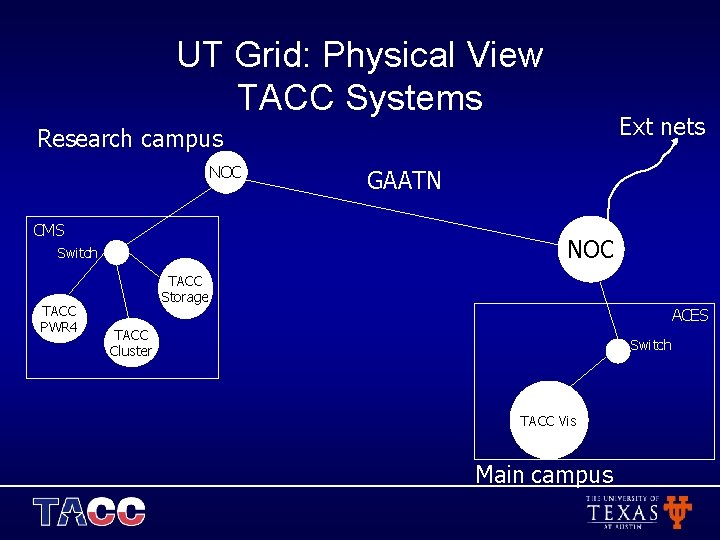

UT Grid: Physical View • TACC Systems [Diagram: Ext nets; Research campus; Main campus; NOC; CMS; GAATN; ACES; Switch; TACC PWR4; TACC Storage; TACC Cluster; TACC Vis]

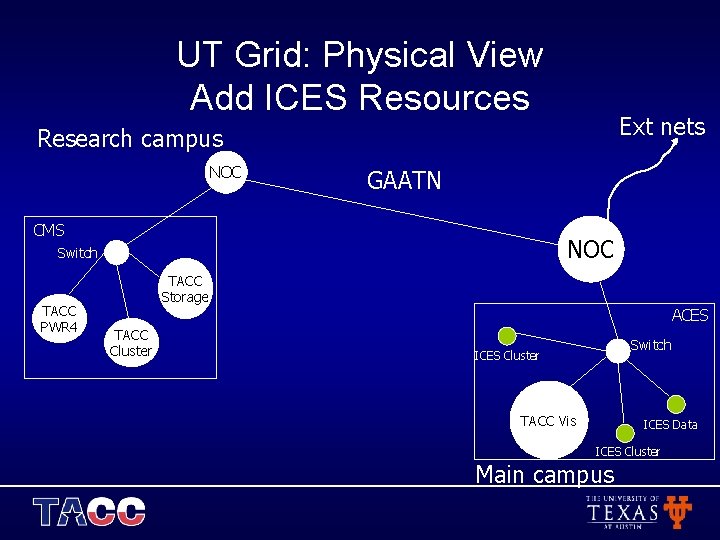

UT Grid: Physical View • Add ICES Resources [Diagram: Ext nets; Research campus; Main campus; NOC; CMS; GAATN; ACES; Switch; TACC PWR4; TACC Storage; TACC Cluster; TACC Vis; ICES Cluster; ICES Data]

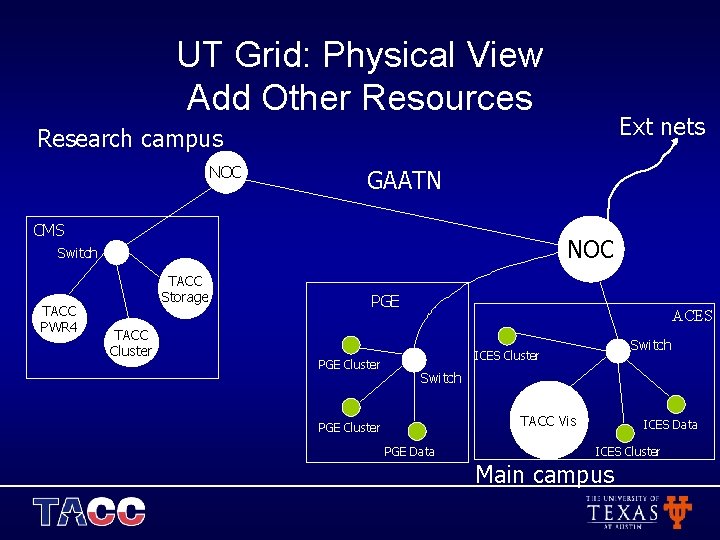

UT Grid: Physical View • Add Other Resources [Diagram: Ext nets; Research campus; Main campus; NOC; CMS; GAATN; ACES; Switch; TACC PWR4; TACC Storage; TACC Cluster; TACC Vis; ICES Cluster; PGE Cluster; PGE Data]

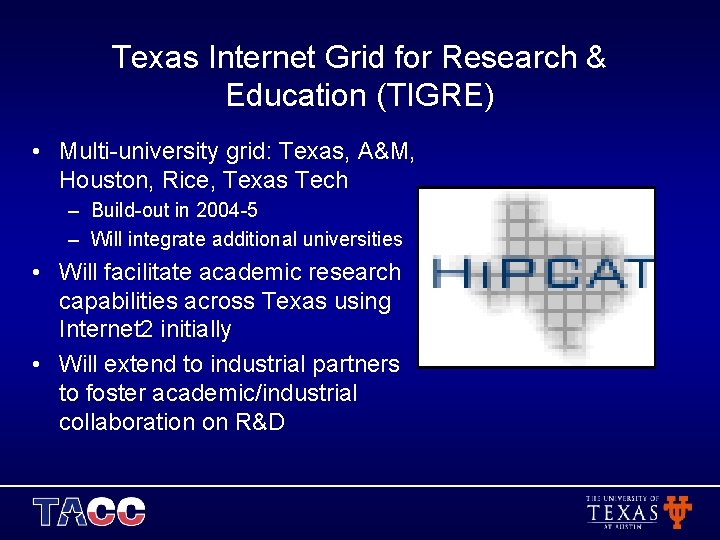

Texas Internet Grid for Research & Education (TIGRE) • Multi-university grid: Texas, A&M, Houston, Rice, Texas Tech – Build-out in 2004-5 – Will integrate additional universities • Will facilitate academic research capabilities across Texas using Internet2 initially • Will extend to industrial partners to foster academic/industrial collaboration on R&D

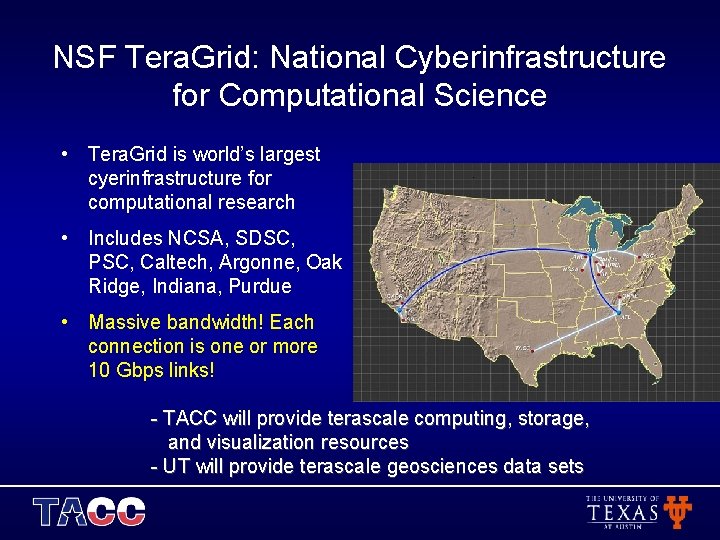

NSF TeraGrid: National Cyberinfrastructure for Computational Science • TeraGrid is world’s largest cyberinfrastructure for computational research • Includes NCSA, SDSC, PSC, Caltech, Argonne, Oak Ridge, Indiana, Purdue • Massive bandwidth! Each connection is one or more 10 Gbps links! • TACC will provide terascale computing, storage, and visualization resources • UT will provide terascale geosciences data sets

Where Are We Now? Where Are We Going?

The Buzz Words • Clusters, Clusters • Grids & Cyberinfrastructure • Data, Data

Clusters, Clusters • No sense in trying to make long-term predictions here – 64-bit is going to be more important (duh), but is not yet (for most workloads) – Evaluate options, but differences are not so great (for diverse workloads) – Pricing is generally normalized to performance (via sales) for commodities

Grids & Cyberinfrastructure Are Coming… Really! • ‘The Grid’ is coming… eventually – The concept of a Grid was ahead of the standards – But we all use distributed computing anyway, and the advantages are just too big not to solve the issues – Still have to solve many of the same distributed computing research problems (but at least now we have standards to develop to) • ‘grid computing’ is here… almost – WSRF means finally getting the standards right – Federal agencies and companies alike are investing heavily in good projects and starting to see results

TACC Grid Tools and Deployments • Grid Computing Tools – GridPort: transparent grid computing from Web – GridShell: transparent grid computing from CLI – CSF: grid scheduling – GridFlow / GridSteer: for coupling vis, steering simulations • Cyberinfrastructure Deployments – TeraGrid: national cyberinfrastructure – TIGRE: state-wide cyberinfrastructure – UT Grid: campus cyberinfrastructure for research & education

Data, Data • Our ability to create and collect data (computing systems, instruments, sensors) is exploding • Availability of data is even driving new modes of science (e.g., bioinformatics) • Data availability and the need for sharing and analysis are driving the other aspects of computing – Need for 64-bit microprocessors, improved memory systems – Parallel file I/O – Use of scientific databases, parallel databases – Increased network bandwidth – Grids for managing, sharing remote data

Renewed U.S. Interest in HEC Will Have Impact • While clusters are important, ‘non-clusters’ are still important!!! – Projects like IBM Blue Gene/L, Cray Red Storm, etc. address different problems than clusters – DARPA HPCS program is really important, but only a start – Strategic national interests require national investment!!! – I think we’ll see more federal funding for innovative research into computer systems

Visualization Will Catch Up • Visualization often lags behind HPC, storage – Flops get publicity – Bytes can’t get lost – Even Rainman can’t get insight from terabytes of 0’s and 1’s • Explosion in data creates limitations requiring – Feature detection (good) – Downsizing problem (bad) – Downsampling data (ugly)

Visualization Will Catch Up • As PCs impacted HPC, so are graphics cards impacting visualization – Custom SMP systems using graphics cards (Sun, SGI) – Graphics clusters (Linux, Windows) • As with HPC, still a need for custom, powerful visualization solutions on certain problems – SGI has largely exited this market – IBM left long ago; please come back! – Again, requires federal investment

What Should You Do This Week?

Austin is Fun, Cool, Weird, & Wonderful • Mix of hippies, slackers, academics, geeks, politicos, musicians, and cowboys • “Keep Austin Weird” • Live Music Capital of the World (seriously) • Also great restaurants, cafes, clubs, bars, theaters, galleries, etc. – http://www.austinchronicle.com/ – http://www.austin360.com/xl/content/xl/index.html – http://www.research.ibm.com/arl/austin/index.html

Your Austin To-Do List • Eat barbecue at Rudy’s, Stubb’s, Iron Works, Green Mesquite, etc. • Eat Tex-Mex at Chuy’s, Trudy’s, Maudie’s, etc. • Have a cold Shiner Bock (not Lone Star) • Visit 6th Street and Warehouse District at night • See sketch comedy at Esther’s Follies • Go to at least one live music show • Learn to two-step at The Broken Spoke • Walk/jog/bike around Town Lake • See a million bats emerge from Congress Ave. bridge at sunset • Visit the Texas State History Museum • Visit the UT main campus • See movie at Alamo Drafthouse Cinema (arrive early, order beer & food) • See the Round Rock Express at the Dell Diamond • Drive into Hill Country, visit small towns and wineries • Eat Amy’s Ice Cream • Listen to and buy local music at Waterloo Records • Buy a bottle each of Rudy’s Barbecue Sause and Tito’s Vodka

Final Comments & Thoughts • I’m very pleased to see SCICOMP is still going strong – Great leaders and a great community make it last • Still a need for groups like this – technologies get more powerful, but not necessarily simpler, and impact comes from effective utilization • More importantly, always a need for energetic, talented people to make a difference in advanced computing – Contribute to valuable efforts – Don’t be afraid to start something if necessary – Change is good (even if “the only thing certain about change is that things will be different afterwards”) • Enjoy Austin! – Ask any TACC staff about places to go and things to do

More About TACC: Texas Advanced Computing Center www.tacc.utexas.edu info@tacc.utexas.edu (512) 475-9411
