Scheduling CEG 4131 Computer Architecture III Miodrag Bolic

Slides developed by Dr. Hesham El-Rewini. Copyright Hesham El-Rewini.

Outline
• Scheduling models
• Scheduling without considering communication
• Including communication in scheduling
• Heuristic algorithms

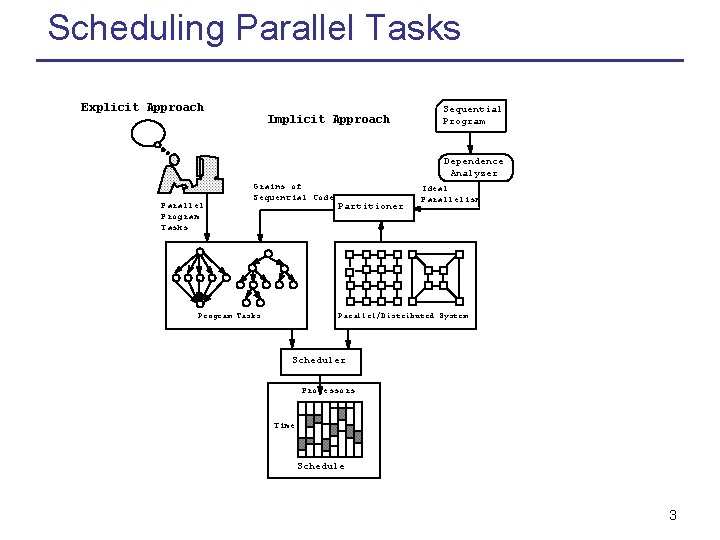

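Scheduling Parallel Tasks
[Figure: two routes to program tasks. Explicit approach: a parallel program supplies the tasks. Implicit approach: a sequential program passes through a dependence analyzer (ideal parallelism) and a partitioner (grains of sequential code). A scheduler then maps the program tasks onto the processors of a parallel/distributed system, producing a schedule of processors over time.]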

Program Tasks
Task Notation: (T, <, D, A)
• T: set of tasks
• <: partial order on T
• D: communication data
• A: amount of computation

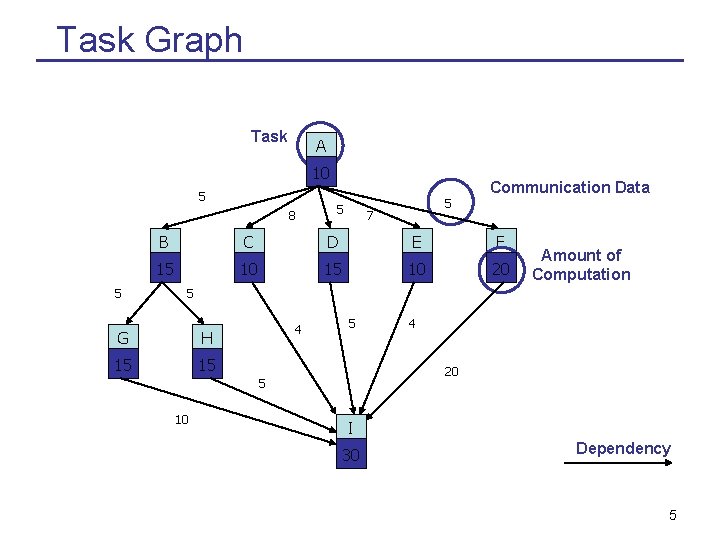

Task Graph
[Figure: task graph with tasks A through I. Node weights give the amount of computation, edge weights give the communication data, and edges represent dependencies.]

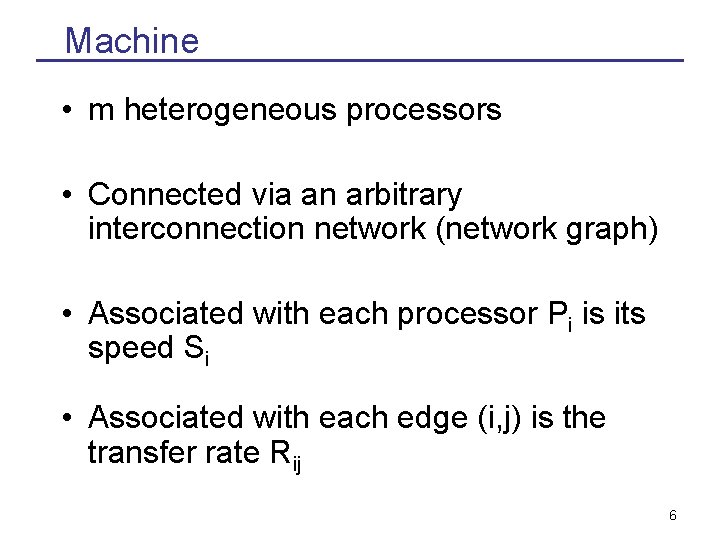

Machine
• m heterogeneous processors
• Connected via an arbitrary interconnection network (network graph)
• Associated with each processor Pi is its speed Si
• Associated with each edge (i, j) is the transfer rate Rij

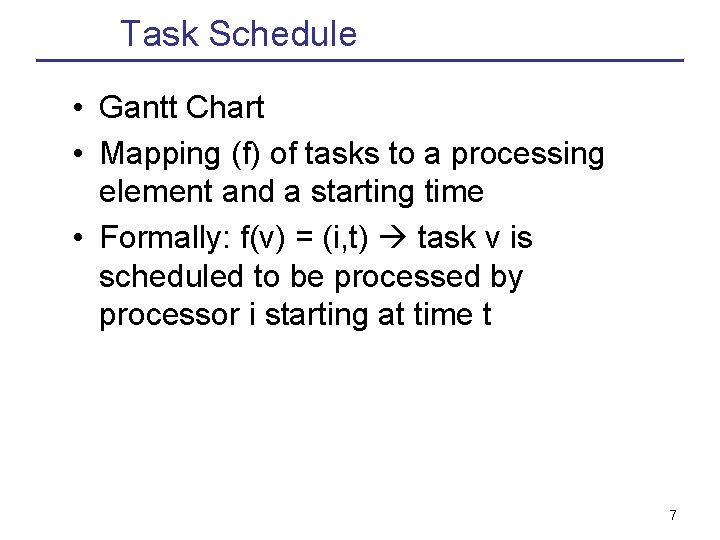

Task Schedule
• Gantt Chart
• Mapping (f) of tasks to a processing element and a starting time
• Formally: f(v) = (i, t) means that task v is scheduled to be processed by processor i starting at time t

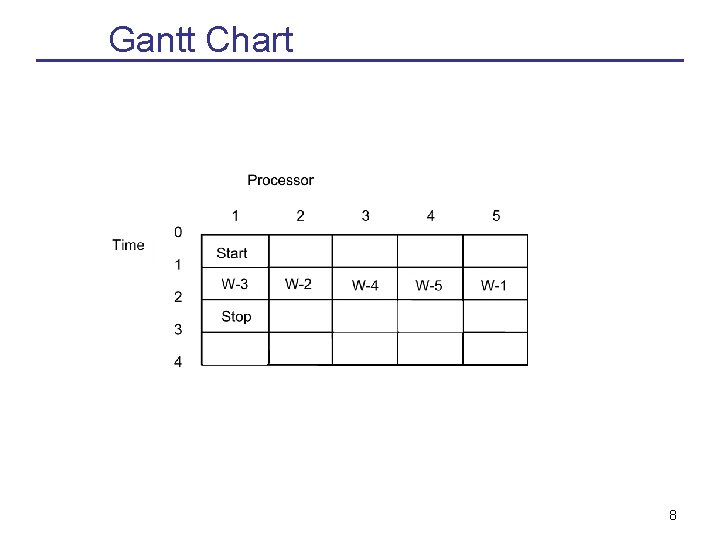

Gantt Chart

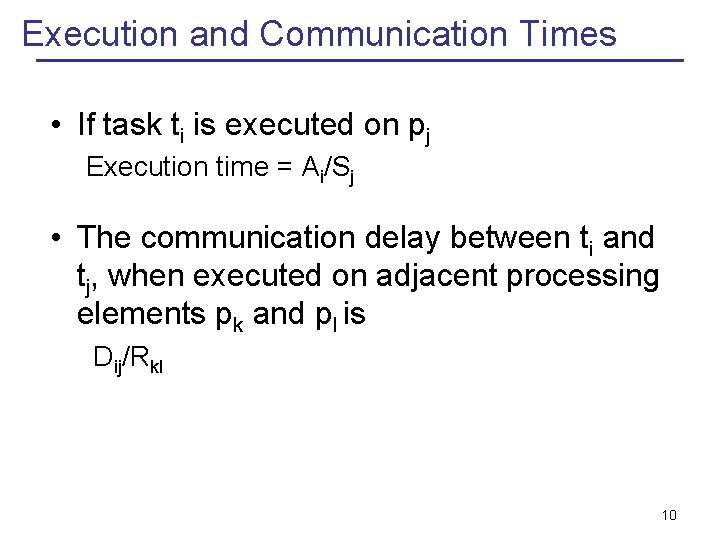

Execution and Communication Times
• If task ti is executed on pj: Execution time = Ai/Sj
• The communication delay between ti and tj, when executed on adjacent processing elements pk and pl, is Dij/Rkl
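The two ratios above can be sketched in a few lines of Python. This is a minimal illustration, not part of the original slides: the dictionaries `A`, `S`, `D`, `R` and all the numbers in them are hypothetical values chosen only to exercise the formulas.

```python
def execution_time(A, S, task, proc):
    """Execution time of `task` on `proc`: A_i / S_j."""
    return A[task] / S[proc]


def communication_delay(D, R, src_task, dst_task, src_proc, dst_proc):
    """Delay for sending D_ij over the link (k, l): D_ij / R_kl."""
    return D[(src_task, dst_task)] / R[(src_proc, dst_proc)]


# Hypothetical data (assumed for illustration only).
A = {"t1": 10, "t2": 20}        # amount of computation per task
S = {"p1": 2.0, "p2": 4.0}      # processor speeds
D = {("t1", "t2"): 8}           # communication data per task-graph edge
R = {("p1", "p2"): 4.0}         # transfer rate per network link

exec_t1_on_p1 = execution_time(A, S, "t1", "p1")                      # 10 / 2.0
delay_t1_t2 = communication_delay(D, R, "t1", "t2", "p1", "p2")       # 8 / 4.0
print(exec_t1_on_p1, delay_t1_t2)
```

Running the same task on the faster processor `p2` would halve its execution time, which is why the processor choice matters on a heterogeneous machine.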

Complexity
• Computationally intractable in general
• A small number of polynomial-time optimal algorithms in restricted cases
• A large number of heuristics in more general cases
• Quality of the schedule vs. quality of the scheduler

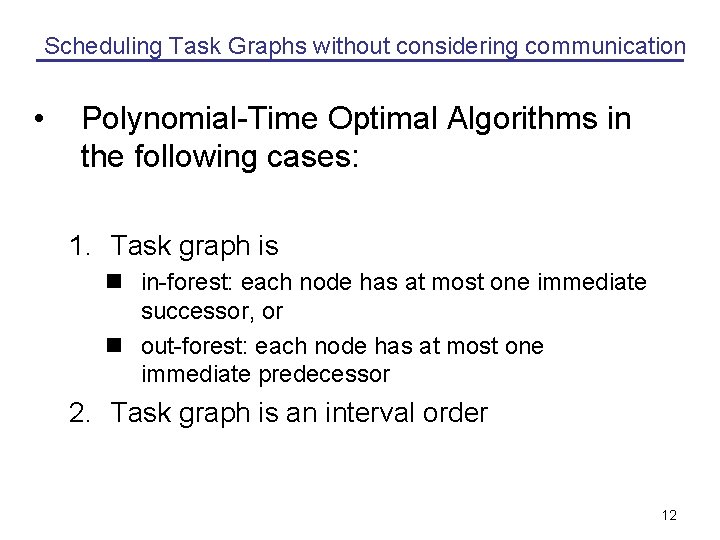

Scheduling Task Graphs without considering communication
• Polynomial-time optimal algorithms exist in the following cases:
1. The task graph is an in-forest (each node has at most one immediate successor) or an out-forest (each node has at most one immediate predecessor)
2. The task graph is an interval order
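The in-forest and out-forest conditions translate directly into degree checks. The sketch below assumes the task graph is given as a list of `(predecessor, successor)` edges of an acyclic graph with no duplicate edges; the function names and the sample graph are illustrative, not from the slides.

```python
from collections import Counter


def is_in_forest(edges):
    """True if every node has at most one immediate successor."""
    out_degree = Counter(u for u, _ in edges)
    return all(d <= 1 for d in out_degree.values())


def is_out_forest(edges):
    """True if every node has at most one immediate predecessor."""
    in_degree = Counter(v for _, v in edges)
    return all(d <= 1 for d in in_degree.values())


# Hypothetical graph: A and B both feed C, and C feeds D.
edges = [("A", "C"), ("B", "C"), ("C", "D")]
print(is_in_forest(edges), is_out_forest(edges))
```

Here `C` has two immediate predecessors, so the graph is an in-forest but not an out-forest; reversing every edge swaps the two answers.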

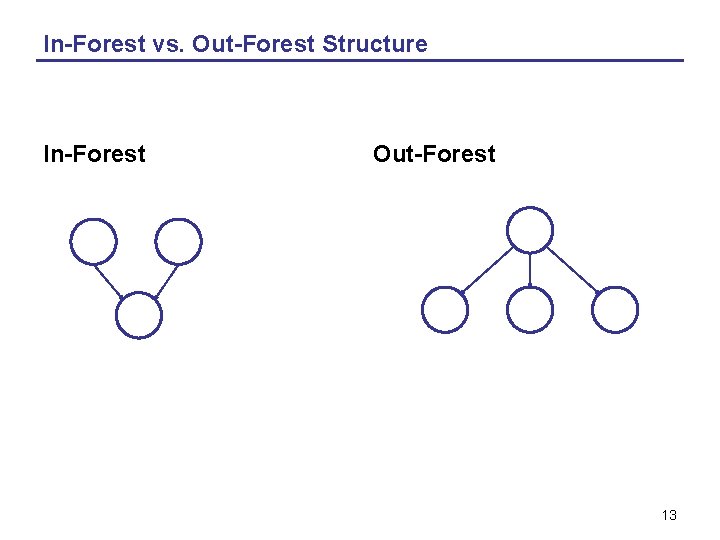

In-Forest vs. Out-Forest Structure
[Figure: an in-forest and an out-forest.]

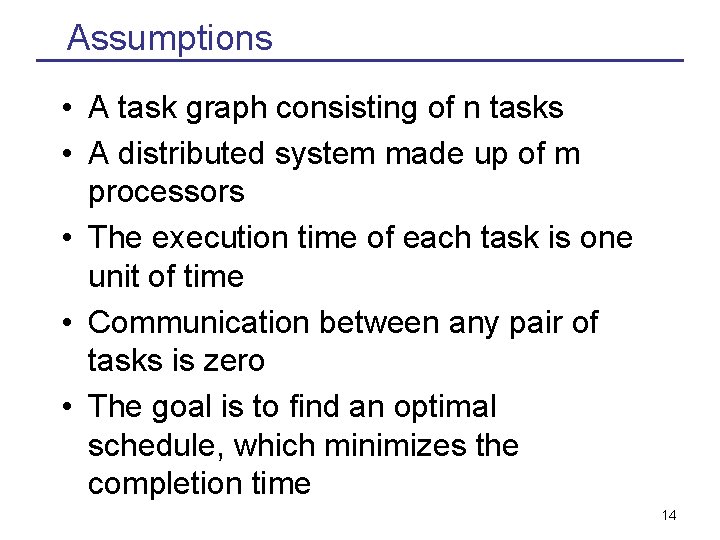

Assumptions
• A task graph consisting of n tasks
• A distributed system made up of m processors
• The execution time of each task is one unit of time
• The communication delay between any pair of tasks is zero
• The goal is to find an optimal schedule, that is, one that minimizes the completion time

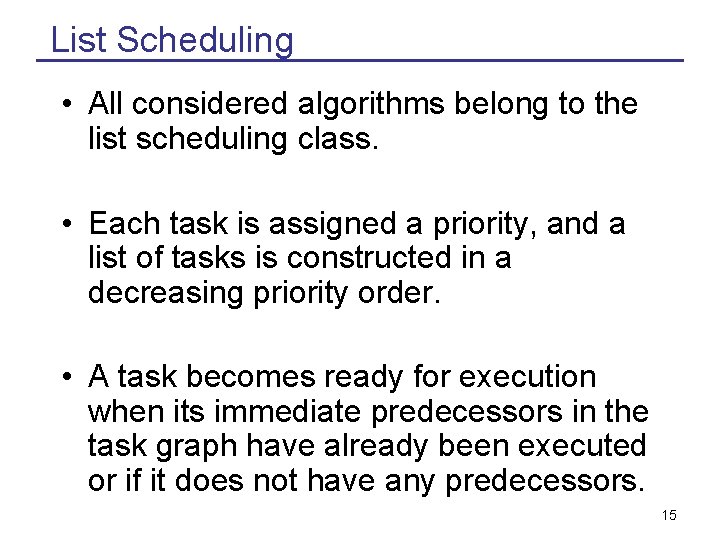

List Scheduling
• All considered algorithms belong to the list scheduling class.
• Each task is assigned a priority, and a list of tasks is constructed in decreasing priority order.
• A task becomes ready for execution when all its immediate predecessors in the task graph have been executed, or if it has no predecessors.

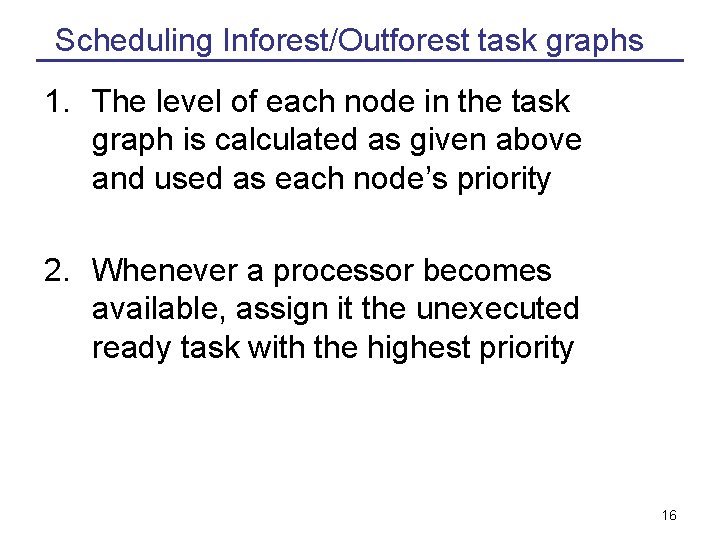

Scheduling In-Forest/Out-Forest task graphs
1. The level of each node in the task graph is calculated as given above and used as each node's priority
2. Whenever a processor becomes available, assign it the unexecuted ready task with the highest priority
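The two steps can be sketched as follows. Since the level definition lives in a figure that is not reproduced in the text, this sketch assumes the common definition: the level of a node is the number of nodes on the longest path from it to an exit node (so exit nodes have level 1). The graph `succ`, the function names, and the alphabetical tie-breaking are illustrative assumptions; tasks take one time unit and communication is free, as in the stated assumptions.

```python
def levels(succ):
    """Assumed level definition: nodes on the longest path to an exit node."""
    memo = {}

    def level(v):
        if v not in memo:
            memo[v] = 1 + max((level(w) for w in succ[v]), default=0)
        return memo[v]

    return {v: level(v) for v in succ}


def list_schedule(succ, priority, m):
    """Unit-time list scheduling on m processors; returns tasks per time slot."""
    waiting = {v: 0 for v in succ}          # unfinished immediate predecessors
    for v in succ:
        for w in succ[v]:
            waiting[w] += 1
    ready = [v for v in succ if waiting[v] == 0]
    slots = []
    while ready:
        # Highest priority first; ties broken by name to stay deterministic.
        ready.sort(key=lambda v: (-priority[v], v))
        running, ready = ready[:m], ready[m:]
        slots.append(running)
        for v in running:                   # tasks finish at the end of the slot
            for w in succ[v]:
                waiting[w] -= 1
                if waiting[w] == 0:
                    ready.append(w)
    return slots


# Hypothetical in-forest: every node has at most one immediate successor.
succ = {"A": ["D"], "B": ["D"], "C": ["E"], "D": ["F"], "E": ["F"], "F": []}
schedule = list_schedule(succ, levels(succ), m=2)
print(schedule)
```

With two processors the sketch needs four time slots for this graph, because three level-3 tasks cannot all run in the first slot.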

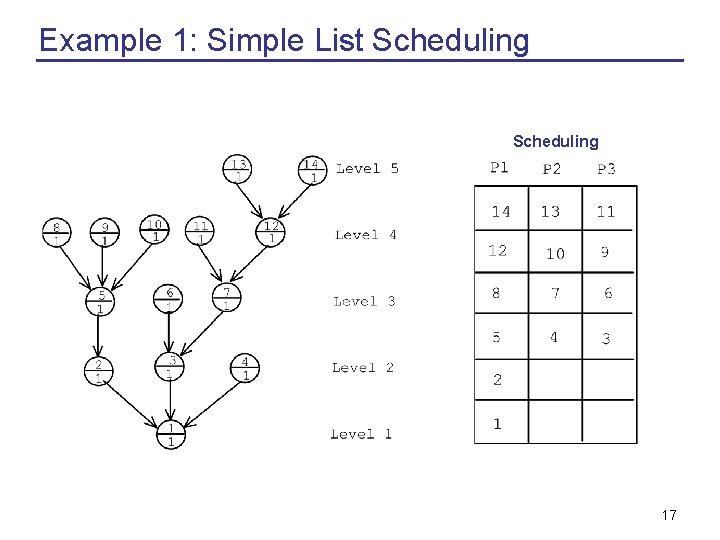

Example 1: Simple List Scheduling

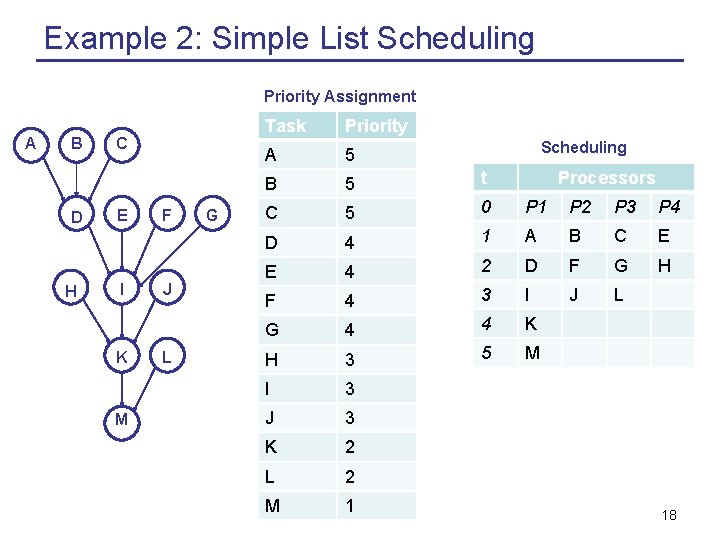

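Example 2: Simple List Scheduling
[Figure: task graph with tasks A through M.]

Priority Assignment

| Task | A | B | C | D | E | F | G | H | I | J | K | L | M |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Priority | 5 | 5 | 5 | 4 | 4 | 4 | 4 | 3 | 3 | 3 | 2 | 2 | 1 |

Scheduling (processors P1 to P4; tasks listed in the order they appear across the processors)

| Time | Tasks |
|---|---|
| 1 | A, B, C, E |
| 2 | D, F, G, H |
| 3 | I, J, L |
| 4 | K |
| 5 | M |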

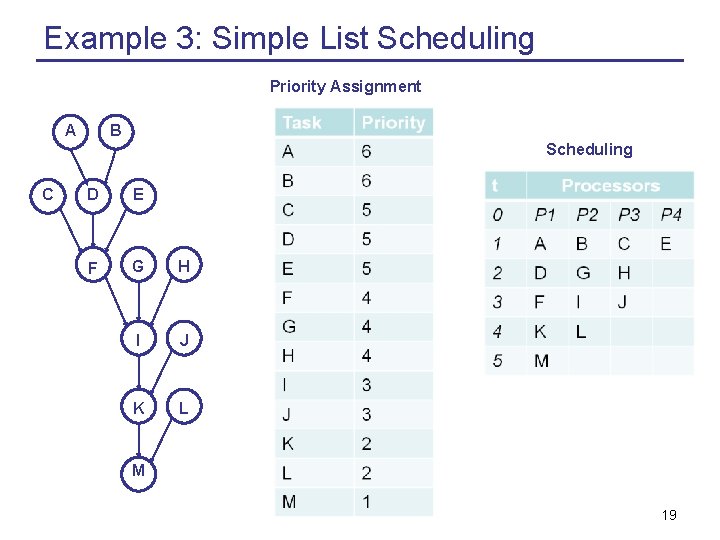

Example 3: Simple List Scheduling
[Figure: task graph with tasks A through M, with blank Priority Assignment and Scheduling panels.]

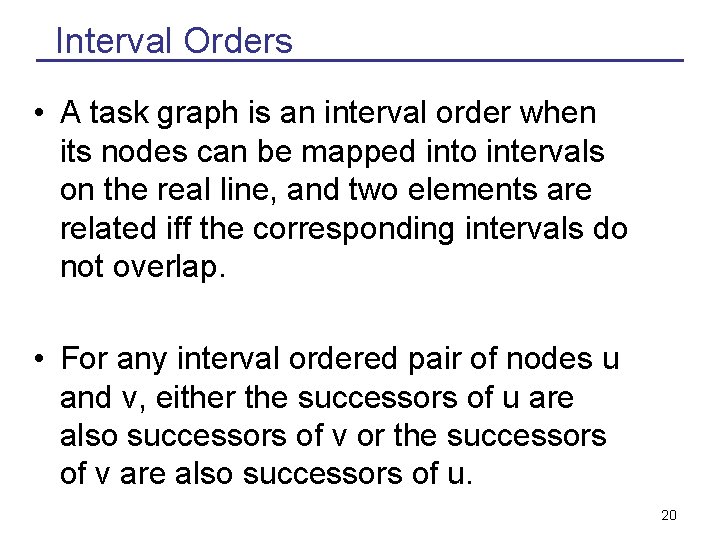

Interval Orders
• A task graph is an interval order when its nodes can be mapped into intervals on the real line, and two elements are related iff the corresponding intervals do not overlap.
• For any interval-ordered pair of nodes u and v, either the successors of u are also successors of v or the successors of v are also successors of u.
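The nesting property in the second bullet gives a simple test. The sketch below assumes "successors" means all successors (immediate or not) and that the graph is given as a dictionary `succ` of immediate successors; both example graphs and the function names are hypothetical.

```python
def all_successors(succ):
    """Map each node to the set of all nodes reachable from it."""
    memo = {}

    def reach(v):
        if v not in memo:
            result = set()
            for w in succ[v]:
                result.add(w)
                result |= reach(w)
            memo[v] = result
        return memo[v]

    return {v: reach(v) for v in succ}


def is_interval_order(succ):
    """True if the successor sets of all nodes are nested (form a chain)."""
    sets = sorted(all_successors(succ).values(), key=len)
    # Sorted by size, a family is nested iff each set is contained in the next.
    return all(a <= b for a, b in zip(sets, sets[1:]))


# Hypothetical graphs.
nested = {"A": ["C", "D"], "B": ["D"], "C": [], "D": []}
two_chains = {"A": ["C"], "B": ["D"], "C": [], "D": []}
print(is_interval_order(nested), is_interval_order(two_chains))
```

The second graph fails because the successors of A and of B are disjoint and non-empty: two independent chains cannot be drawn as intervals that respect the order.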

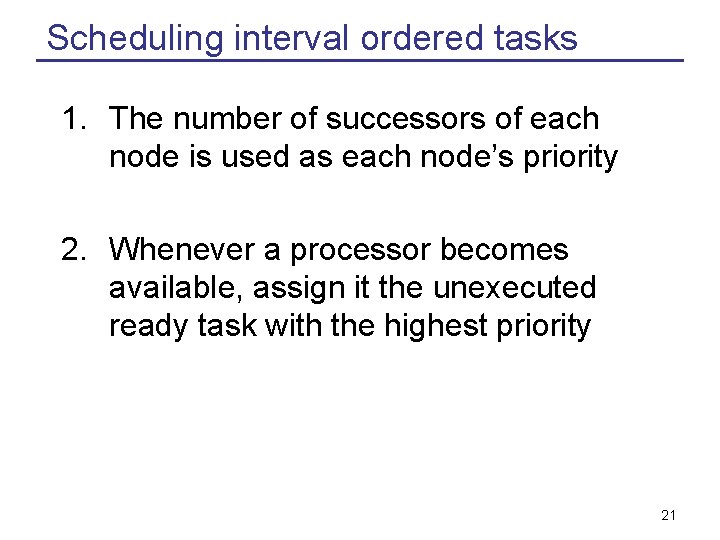

Scheduling interval ordered tasks
1. The number of successors of each node is used as each node's priority
2. Whenever a processor becomes available, assign it the unexecuted ready task with the highest priority
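A minimal sketch of these two steps follows. It assumes the priority counts all successors of a node, not only the immediate ones, and it keeps the earlier assumptions of unit execution time and zero communication delay. The graph `succ`, the function names, and the alphabetical tie-breaking are hypothetical choices made for the illustration.

```python
def successor_counts(succ):
    """Priority of a node: how many nodes are reachable from it."""
    memo = {}

    def reach(v):
        if v not in memo:
            memo[v] = set(succ[v]).union(*(reach(w) for w in succ[v]))
        return memo[v]

    return {v: len(reach(v)) for v in succ}


def schedule_unit_tasks(succ, priority, m):
    """Assign ready tasks to m processors, highest priority first."""
    waiting = {v: 0 for v in succ}          # unfinished immediate predecessors
    for v in succ:
        for w in succ[v]:
            waiting[w] += 1
    ready = [v for v in succ if waiting[v] == 0]
    slots = []
    while ready:
        ready.sort(key=lambda v: (-priority[v], v))   # ties broken by name
        running, ready = ready[:m], ready[m:]
        slots.append(running)
        for v in running:
            for w in succ[v]:
                waiting[w] -= 1
                if waiting[w] == 0:
                    ready.append(w)
    return slots


# Hypothetical interval-ordered graph (successor sets are nested).
succ = {"A": ["C", "D", "E"], "B": ["D", "E"], "C": ["F"],
        "D": ["F"], "E": [], "F": []}
priority = successor_counts(succ)
slots = schedule_unit_tasks(succ, priority, m=2)
print(priority, slots)
```

Six unit-time tasks on two processors cannot finish in fewer than three slots, so the three-slot schedule produced here is optimal for this small graph.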

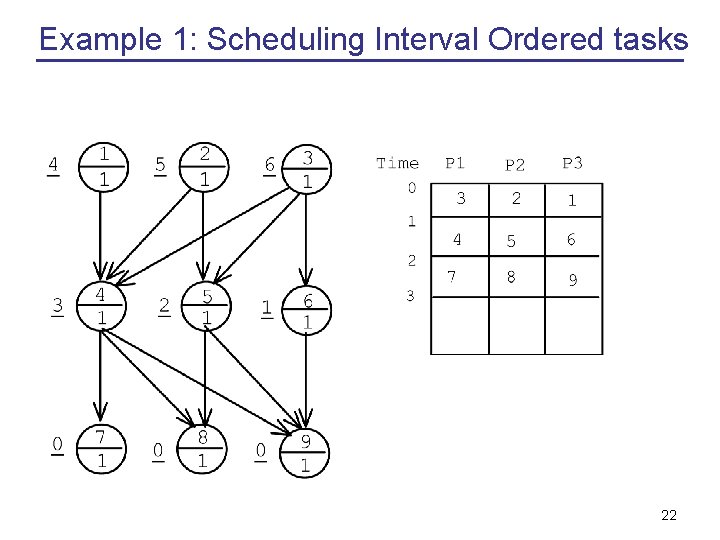

Example 1: Scheduling Interval Ordered tasks

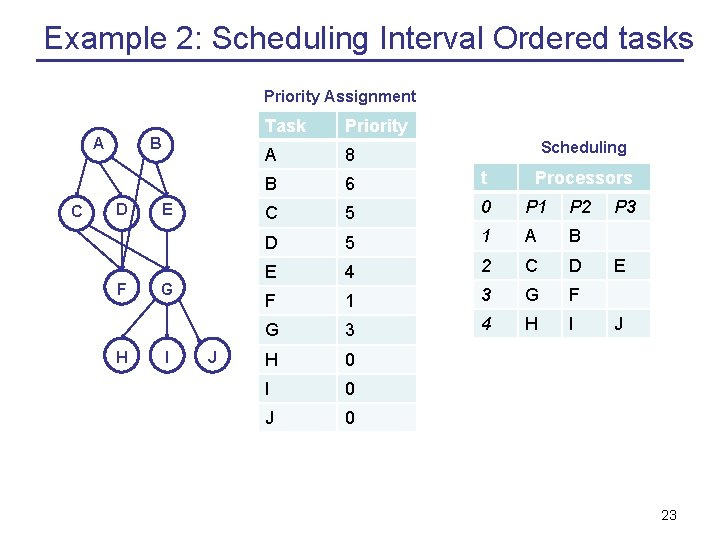

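Example 2: Scheduling Interval Ordered tasks
[Figure: task graph with tasks A through J.]

Priority Assignment

| Task | A | B | C | D | E | F | G | H | I | J |
|---|---|---|---|---|---|---|---|---|---|---|
| Priority | 8 | 6 | 5 | 5 | 4 | 1 | 3 | 0 | 0 | 0 |

Scheduling (processors P1 to P3)

| Time | P1 | P2 |
|---|---|---|
| 1 | A | B |
| 2 | C | D |
| 3 | G | F |
| 4 | H | I |

P3 executes E and J; their time slots are not recoverable from the slide text.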

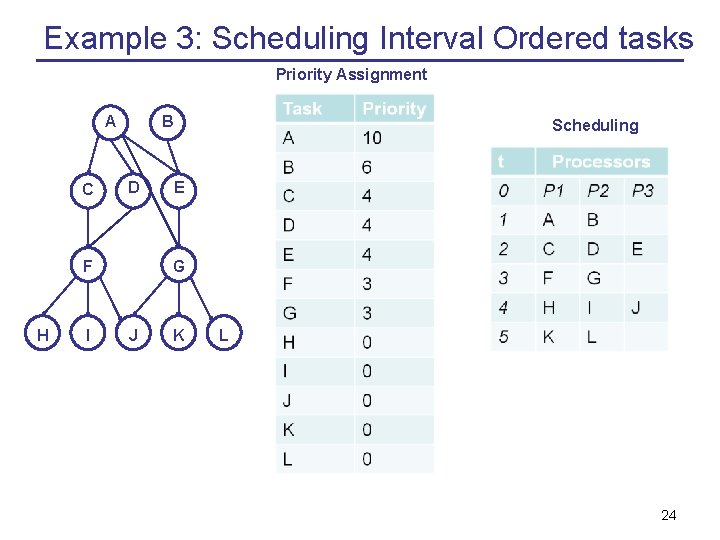

Example 3: Scheduling Interval Ordered tasks
[Figure: task graph with tasks A through L, with blank Priority Assignment and Scheduling panels.]

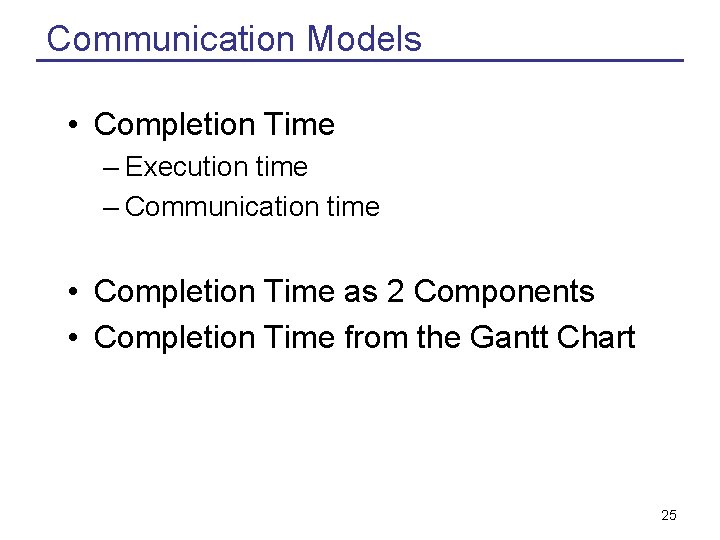

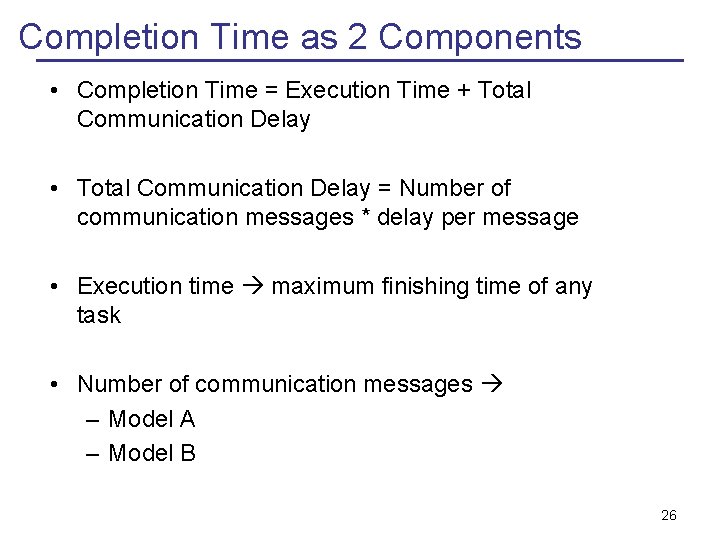

Communication Models
• Completion Time
– Execution time
– Communication time
• Completion Time as 2 Components
• Completion Time from the Gantt Chart

Completion Time as 2 Components
• Completion Time = Execution Time + Total Communication Delay
• Total Communication Delay = Number of communication messages * delay per message
• Execution time = maximum finishing time of any task
• Number of communication messages
– Model A
– Model B

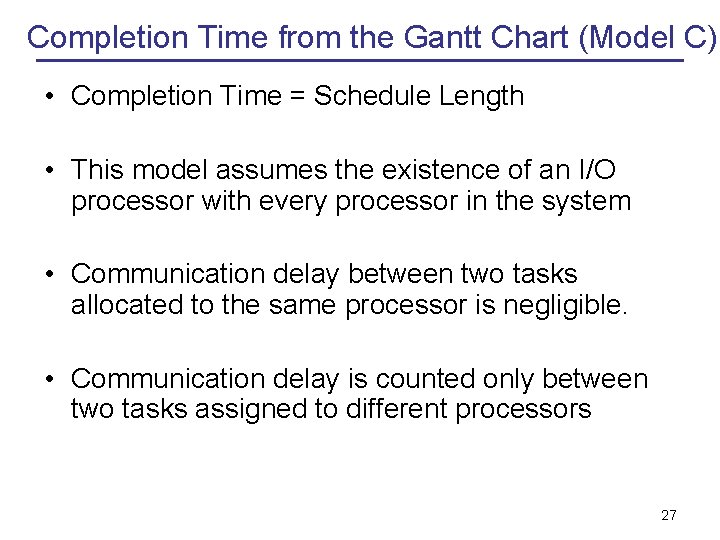

Completion Time from the Gantt Chart (Model C)
• Completion Time = Schedule Length
• This model assumes the existence of an I/O processor with every processor in the system
• Communication delay between two tasks allocated to the same processor is negligible
• Communication delay is counted only between two tasks assigned to different processors
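Under Model C the completion time is read off the Gantt chart, which can be simulated directly. The sketch below is one possible reading of the model, assuming unit execution times and a fixed task order per processor; the task graph `pred`, the `assignment`, the unit delays, and the function name are all hypothetical.

```python
def schedule_length(assignment, pred, delay):
    """Schedule length when delay applies only across different processors.

    assignment: processor -> tasks in execution order
    pred:       task -> list of immediate predecessors
    delay:      (u, v) -> communication delay on edge u -> v
    """
    proc_of = {t: p for p, tasks in assignment.items() for t in tasks}
    finish = {}
    free_at = {p: 0 for p in assignment}    # time each processor becomes idle
    next_idx = {p: 0 for p in assignment}   # next task to place per processor
    remaining = len(proc_of)
    while remaining:
        progressed = False
        for p, tasks in assignment.items():
            if next_idx[p] == len(tasks):
                continue
            t = tasks[next_idx[p]]
            if all(u in finish for u in pred.get(t, [])):
                start = free_at[p]
                for u in pred.get(t, []):
                    # Same-processor communication is treated as free.
                    d = 0 if proc_of[u] == p else delay[(u, t)]
                    start = max(start, finish[u] + d)
                finish[t] = start + 1       # unit execution time
                free_at[p] = finish[t]
                next_idx[p] += 1
                remaining -= 1
                progressed = True
        if not progressed:
            raise ValueError("task order conflicts with the precedence constraints")
    return max(finish.values())


# Hypothetical task graph and assignment (edges assumed for illustration).
pred = {"B": ["A"], "C": ["A"], "D": ["B"], "E": ["A"]}
delay = {("A", "B"): 1, ("A", "C"): 1, ("B", "D"): 1, ("A", "E"): 1}
assignment = {"P1": ["A", "B", "D"], "P2": ["C", "E"]}
length = schedule_length(assignment, pred, delay)
print(length)
```

In this assumed instance P2 sits idle until A's result arrives, so the schedule is one unit longer than the three units of pure execution on P1.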

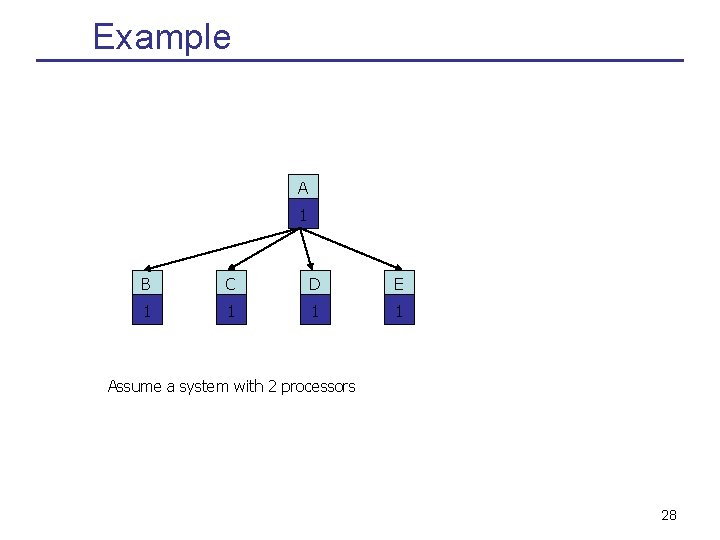

Example
[Figure: task graph with tasks A through E; the edge labels shown are all 1.]
Assume a system with 2 processors

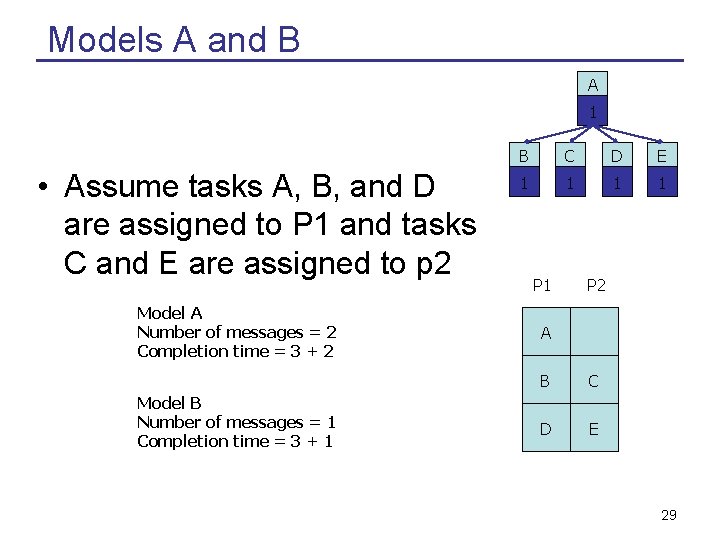

Models A and B
• Assume tasks A, B, and D are assigned to P1 and tasks C and E are assigned to P2
• Model A: Number of messages = 2; Completion time = 3 + 2 = 5
• Model B: Number of messages = 1; Completion time = 3 + 1 = 4
[Figure: the task graph with tasks A through E and its assignment to P1 and P2.]
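The message counts can be reproduced with a short sketch. The slide's own definitions of the two models sit in a figure, so this code assumes the usual ones: Model A counts every edge whose endpoints run on different processors, and Model B counts each (processor, task) pair where the processor does not run the task but runs at least one of its immediate successors. The edge list is also an assumption, chosen so that the counts match the numbers on the slide.

```python
def messages_model_a(edges, proc_of):
    """Assumed Model A: one message per edge that crosses processors."""
    return sum(1 for u, v in edges if proc_of[u] != proc_of[v])


def messages_model_b(edges, proc_of):
    """Assumed Model B: one message per (receiving processor, sending task) pair."""
    return len({(proc_of[v], u) for u, v in edges if proc_of[u] != proc_of[v]})


def completion_time(execution_time, messages, delay_per_message):
    """Completion time = execution time + messages * delay per message."""
    return execution_time + messages * delay_per_message


# Hypothetical edges for the five-task example (assumed, not from the slide).
edges = [("A", "B"), ("A", "C"), ("B", "D"), ("A", "E")]
proc_of = {"A": "P1", "B": "P1", "D": "P1", "C": "P2", "E": "P2"}

n_a = messages_model_a(edges, proc_of)
n_b = messages_model_b(edges, proc_of)
print(n_a, completion_time(3, n_a, 1))
print(n_b, completion_time(3, n_b, 1))
```

Model B never counts more messages than Model A, because several edges from one task to the same remote processor collapse into a single message.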

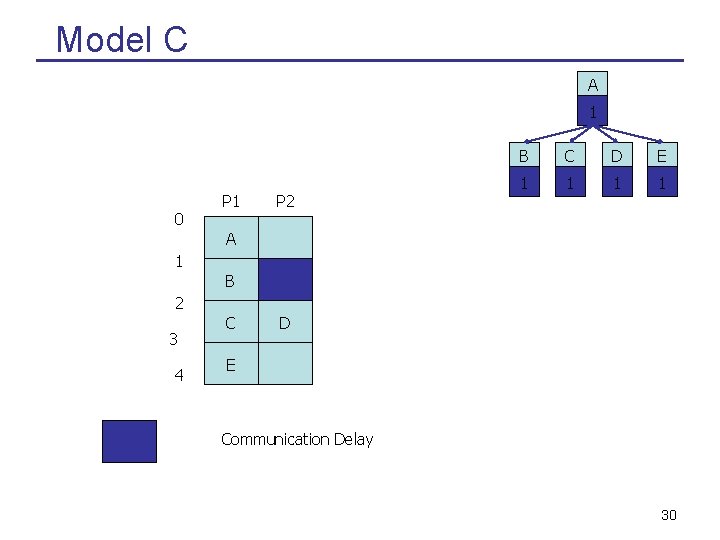

Model C
[Figure: the task graph with tasks A through E and a Gantt chart on P1 and P2 over times 0 to 4, with the communication delay marked.]

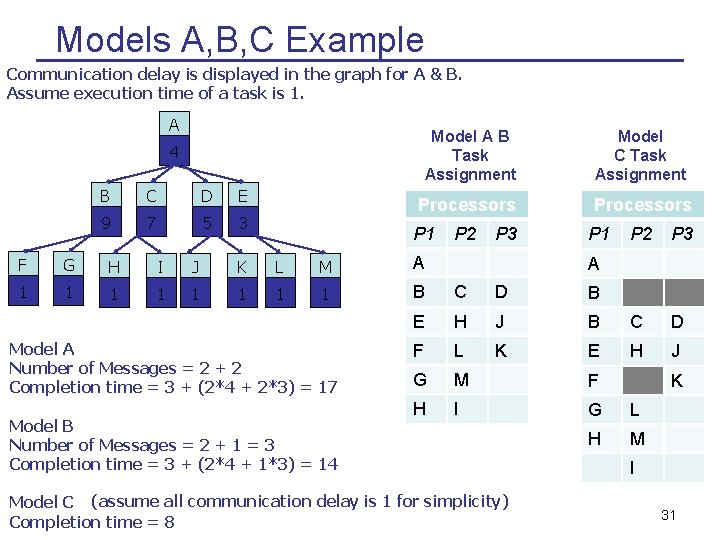

Models A, B, C Example
Communication delay is displayed in the graph for Models A and B. Assume the execution time of a task is 1.
[Figure: task graph with tasks A through M and edge communication delays, with one task assignment for Models A and B and one for Model C on processors P1, P2, P3.]
• Model A: Number of messages = 2 + 2 = 4; Completion time = 3 + (2*4 + 2*3) = 17
• Model B: Number of messages = 2 + 1 = 3; Completion time = 3 + (2*4 + 1*3) = 14
• Model C (assume all communication delay is 1 for simplicity): Completion time = 8

Heuristics
A heuristic produces an answer in less than exponential time, but does not guarantee an optimal solution.
• Communication delay versus parallelism
• Clustering
• Duplication

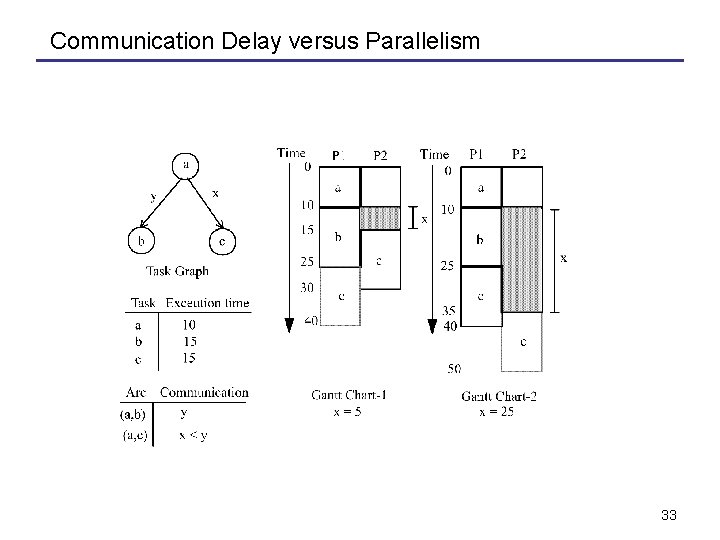

Communication Delay versus Parallelism

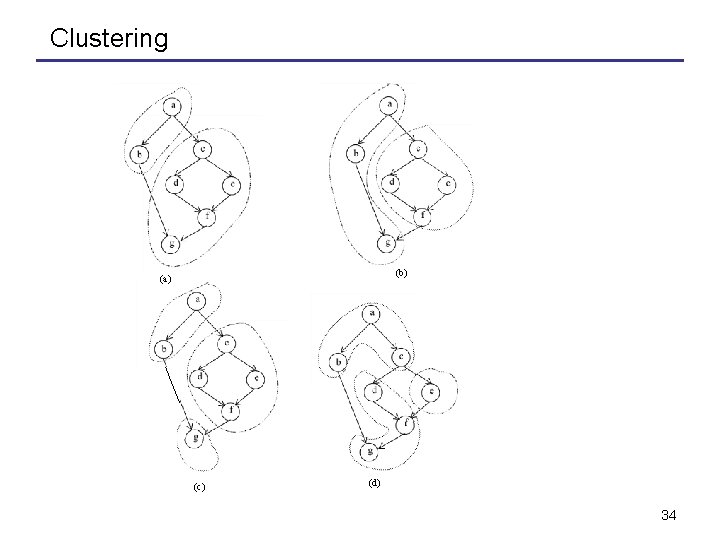

Clustering
[Figure: four panels, (a) to (d), showing clusterings of a task graph with nodes a through g.]

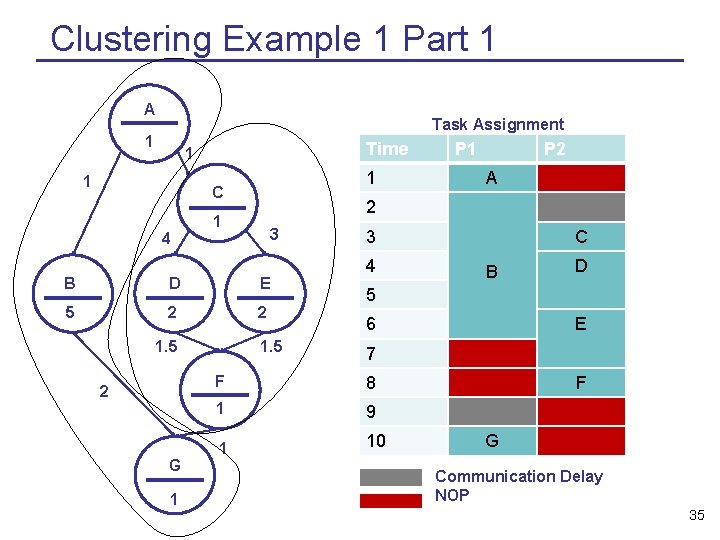

Clustering Example 1 Part 1
[Figure: task graph with tasks A through G, a task assignment, and the resulting Gantt chart on P1 and P2, with communication delay and NOP slots marked.]

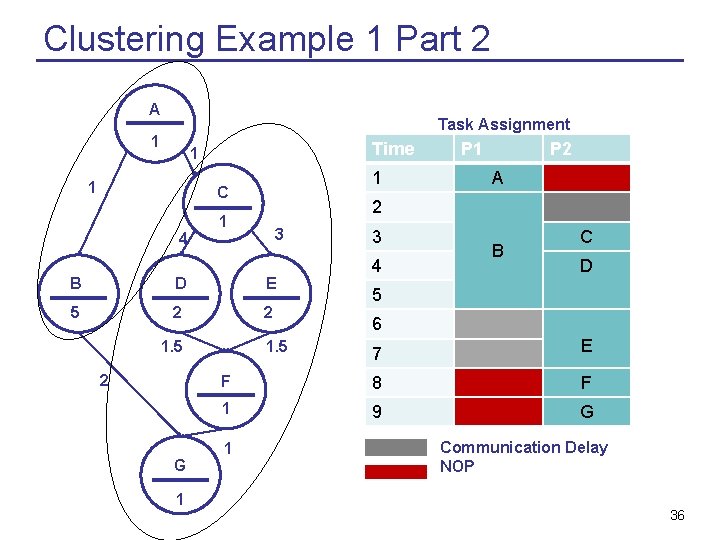

Clustering Example 1 Part 2
[Figure: the same task graph with tasks A through G, a second task assignment, and the resulting Gantt chart on P1 and P2, with communication delay and NOP slots marked.]

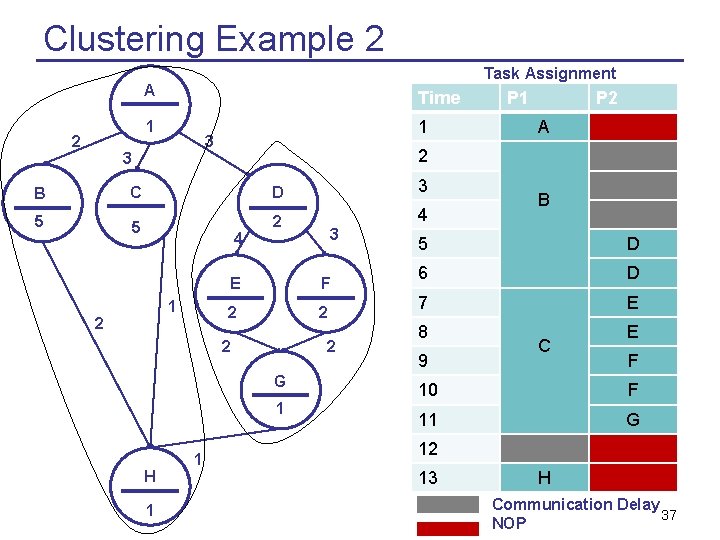

Clustering Example 2
[Figure: task graph with tasks A through H, a task assignment, and the resulting Gantt chart on P1 and P2, with communication delay and NOP slots marked.]

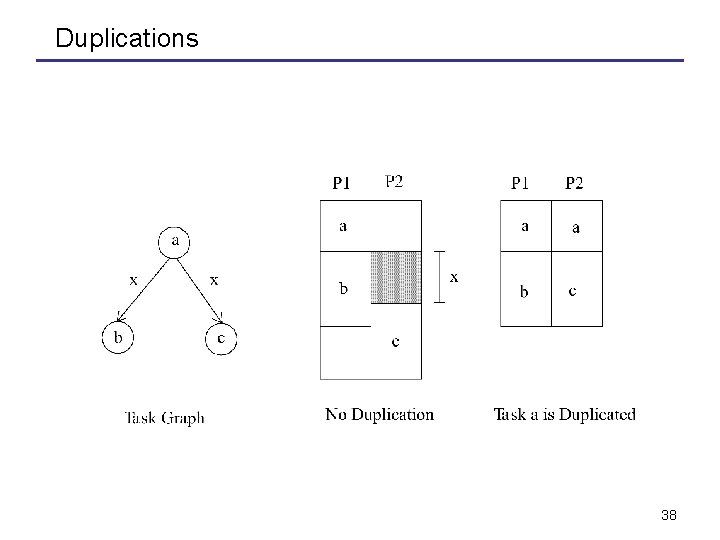

Duplications

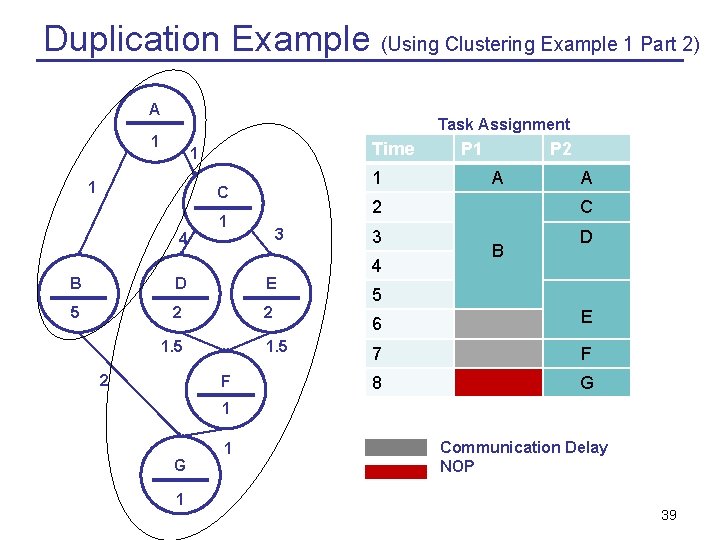

Duplication Example (Using Clustering Example 1 Part 2)
[Figure: task graph with tasks A through G, a task assignment in which task A is duplicated on P1 and P2, and the resulting Gantt chart, with communication delay and NOP slots marked.]

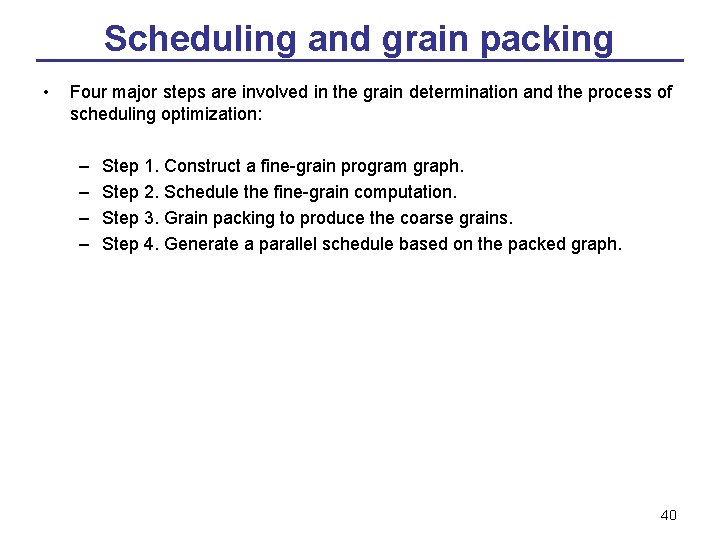

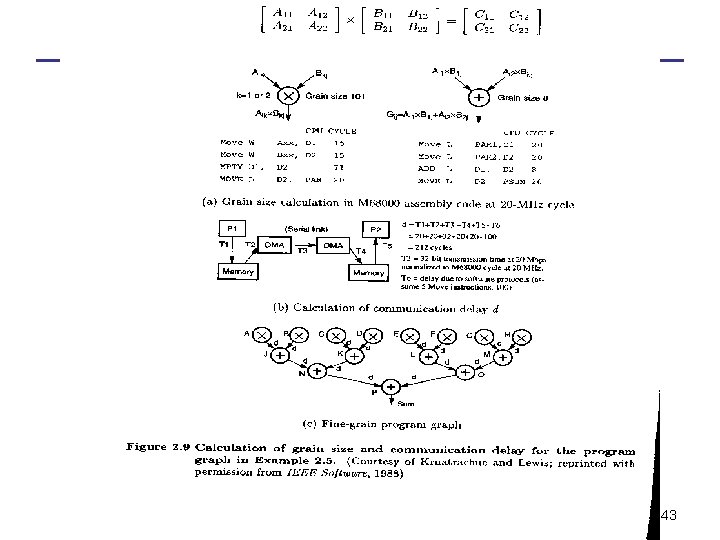

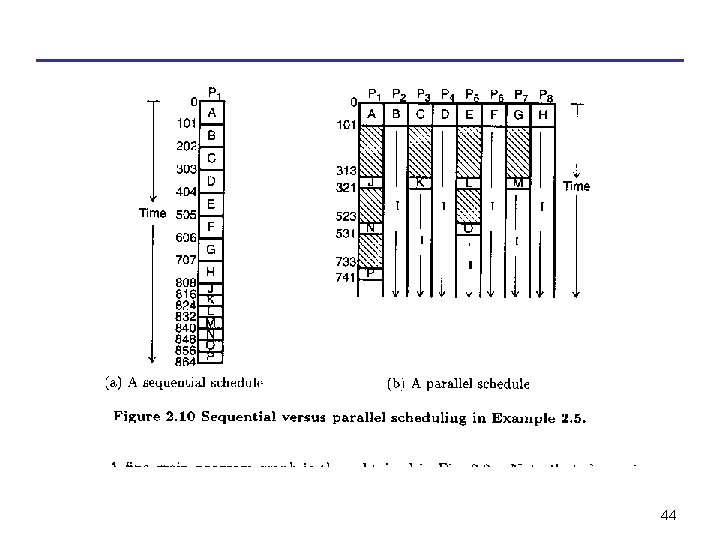

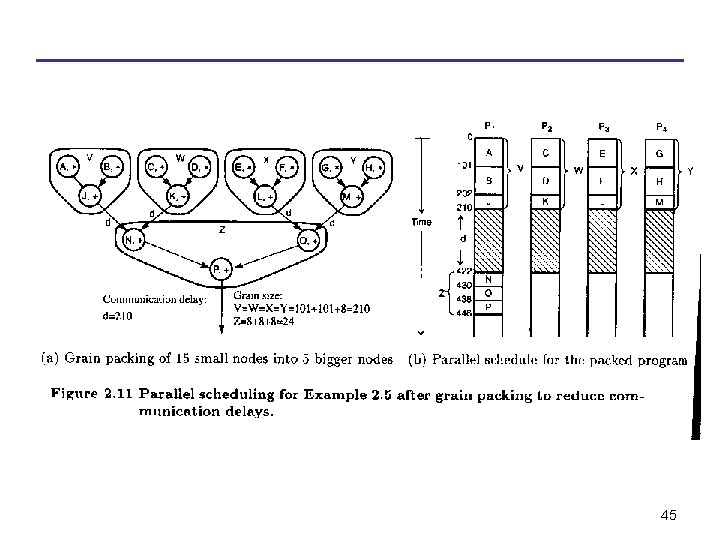

Scheduling and grain packing
• Four major steps are involved in grain determination and in the process of scheduling optimization:
– Step 1. Construct a fine-grain program graph.
– Step 2. Schedule the fine-grain computation.
– Step 3. Grain packing to produce the coarse grains.
– Step 4. Generate a parallel schedule based on the packed graph.

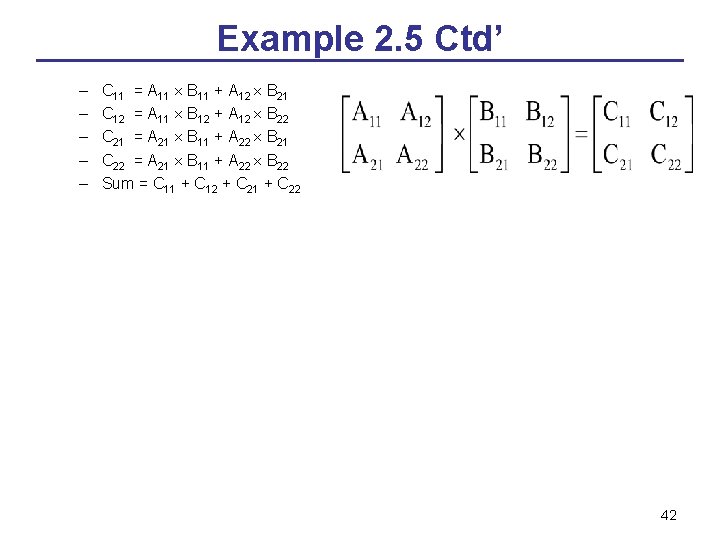

Program decomposition for static multiprocessor scheduling
• Two 2 x 2 matrices A and B are multiplied to compute the sum of the four elements in the resulting product matrix C = A x B. There are eight multiplications and seven additions to be performed in this program, as written below:

Example 2.5 (cont'd)
– $C_{11} = A_{11} B_{11} + A_{12} B_{21}$
– $C_{12} = A_{11} B_{12} + A_{12} B_{22}$
– $C_{21} = A_{21} B_{11} + A_{22} B_{21}$
– $C_{22} = A_{21} B_{12} + A_{22} B_{22}$
– $\text{Sum} = C_{11} + C_{12} + C_{21} + C_{22}$
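The operation count can be checked with a short sketch that performs the computation one fine-grain operation at a time. The function name and the sample matrices are hypothetical; each multiplication and addition in the code corresponds to one candidate node of a fine-grain program graph.

```python
def sum_of_product(A, B):
    """Sum of the elements of C = A x B for 2 x 2 matrices, counting operations."""
    mults = adds = 0
    C = [[0, 0], [0, 0]]
    for i in range(2):
        for j in range(2):
            p1 = A[i][0] * B[0][j]      # first product of C[i][j]
            p2 = A[i][1] * B[1][j]      # second product of C[i][j]
            C[i][j] = p1 + p2
            mults += 2
            adds += 1
    total = C[0][0] + C[0][1] + C[1][0] + C[1][1]
    adds += 3                           # three additions combine four elements
    return total, mults, adds


# Hypothetical input matrices.
A = [[1, 2], [3, 4]]
B = [[5, 6], [7, 8]]
total, mults, adds = sum_of_product(A, B)
print(total, mults, adds)
```

The eight products are mutually independent, so they could all run in parallel; the seven additions form a reduction that depends on them.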

