SCAN A Dynamic Scalable and Efficient Content Distribution

SCAN: A Dynamic, Scalable, and Efficient Content Distribution Network Yan Chen, Randy H. Katz, John D. Kubiatowicz {yanchen, randy, kubitron}@CS. Berkeley. EDU EECS Department UC Berkeley

Outlines • • Motivation Goal and Challenges Previous Work SCAN Architecture and Components Evaluation Methodology Results Conclusions

Motivation Scenario: World Cup 2002

Goal and Challenges Provide content distribution to clients with good latency and staleness, while retaining efficient and balanced resource consumption of the underlying infrastructure • Dynamic choice of number and location of replicas – Clients’ Qo. S constraints: latency, staleness – Servers’ capacity constraints • Efficient resource consumption – Small delay, bandwidth consumption for replica update – Small replica management cost • Scalability: millions of objects, clients and servers • No global network topology knowledge

Previous Work • Replica Placement – Research communities: optimal static replica placement • Assume clients’ distributions, access patterns & IP topology • No consideration for clients’ Qo. S or servers’ capacity constraints – CDN operators: un-cooperative, ad hoc placement • Centralized CDN name server cannot record replica locations – place many more than necessary (ICNP ’ 02) • Update Multicast – No inter-domain IP multicast – Most application-level multicast (ALM) unscalable • Split root as common solution, suffers consistency overhead

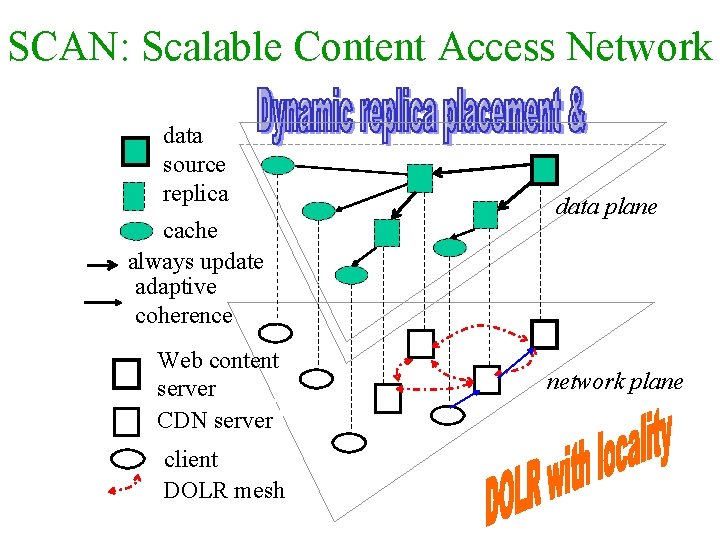

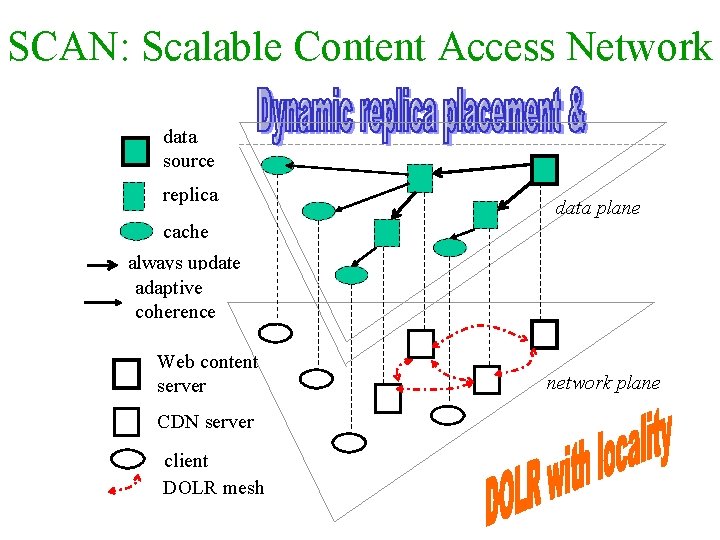

SCAN: Scalable Content Access Network data source replica cache always update adaptive coherence Web content server CDN server client DOLR mesh data plane network plane

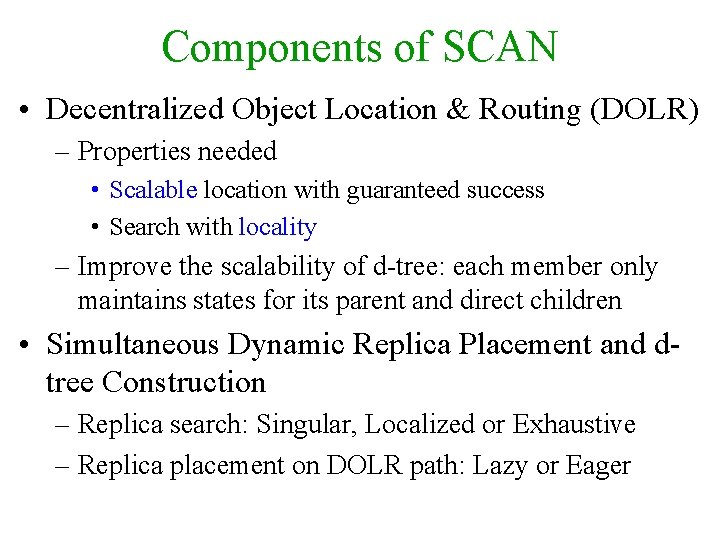

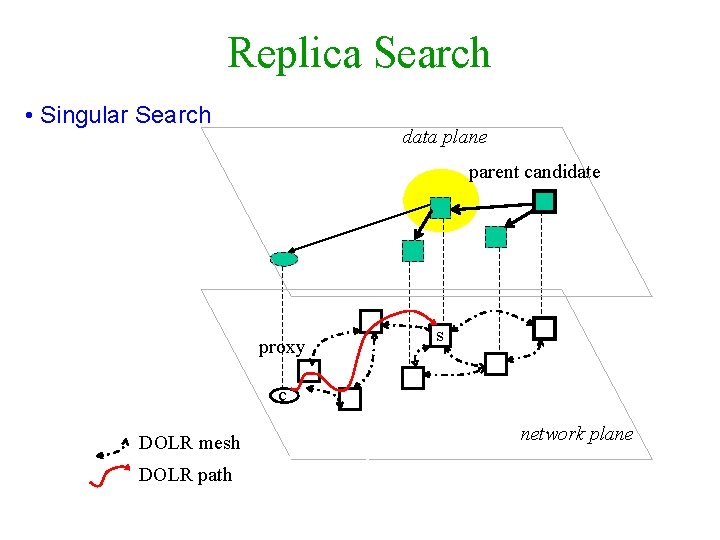

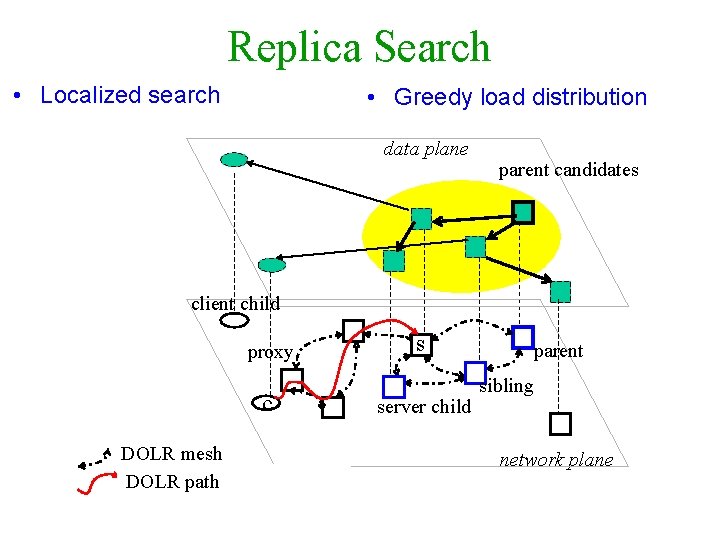

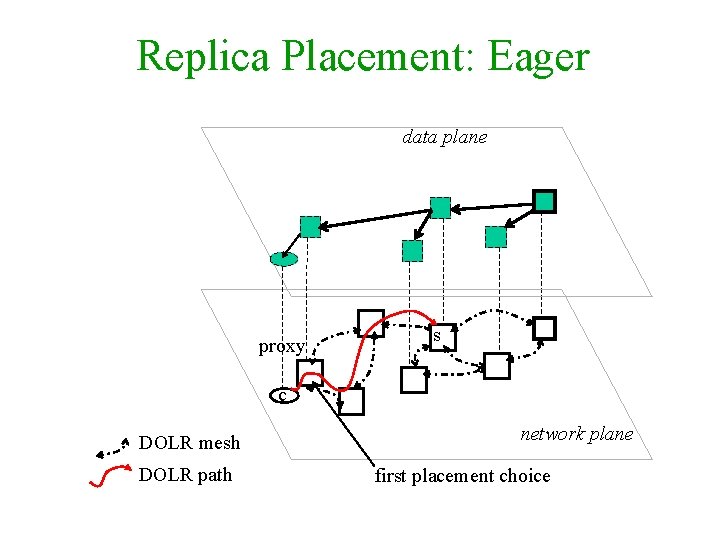

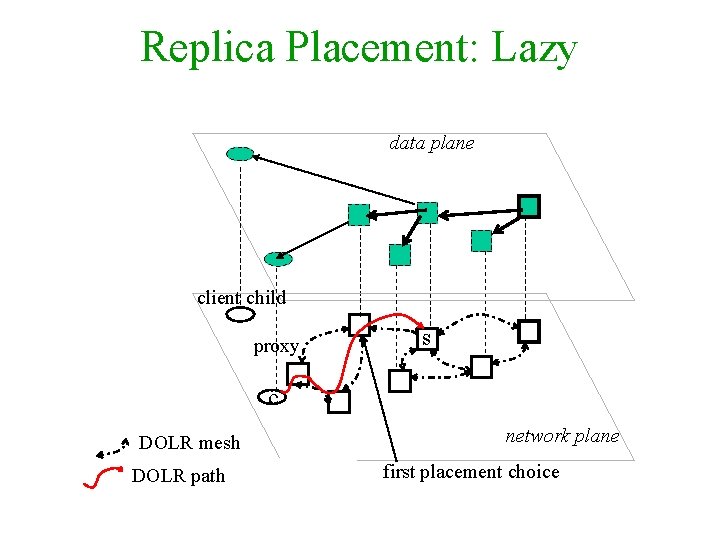

Components of SCAN • Decentralized Object Location & Routing (DOLR) – Properties needed • Scalable location with guaranteed success • Search with locality – Improve the scalability of d-tree: each member only maintains states for its parent and direct children • Simultaneous Dynamic Replica Placement and dtree Construction – Replica search: Singular, Localized or Exhaustive – Replica placement on DOLR path: Lazy or Eager

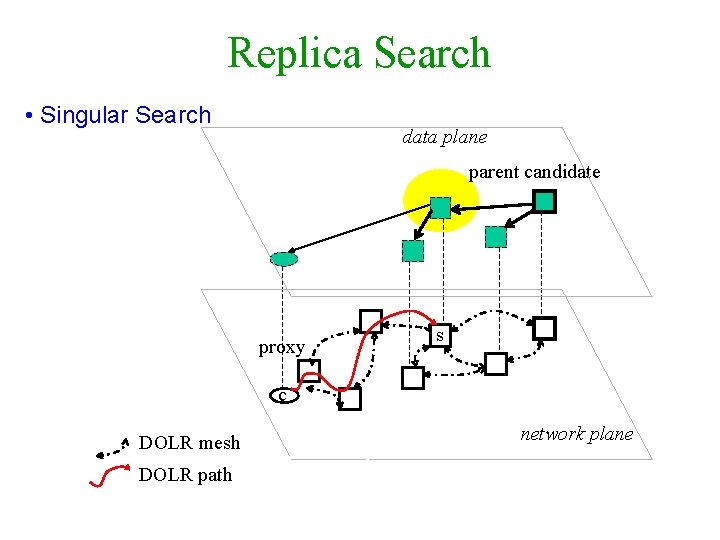

Replica Search • Singular Search data plane parent candidate proxy s c DOLR mesh DOLR path network plane

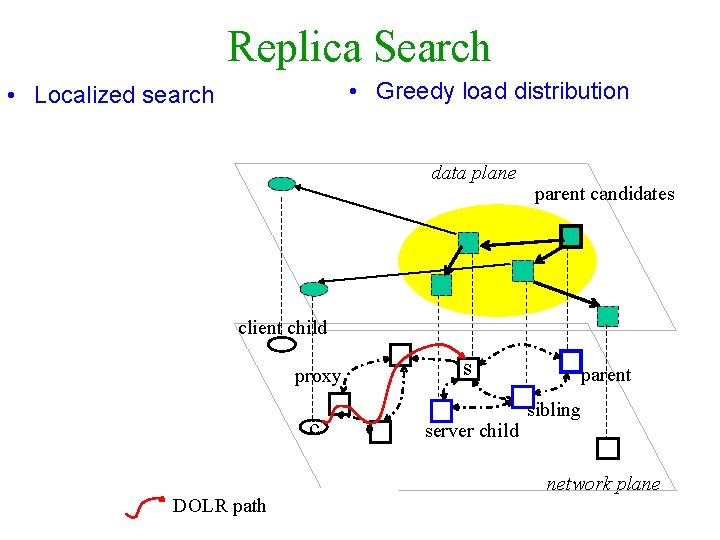

Replica Search • Localized search • Greedy load distribution data plane parent candidates client child proxy c DOLR mesh DOLR path s server child parent sibling network plane

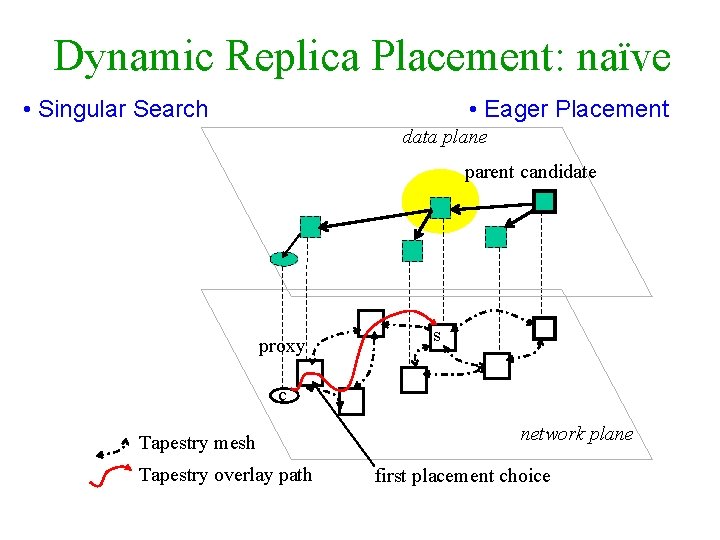

Replica Placement: Eager data plane proxy s c DOLR mesh DOLR path network plane first placement choice

Replica Placement: Lazy data plane client child proxy s c DOLR mesh DOLR path network plane first placement choice

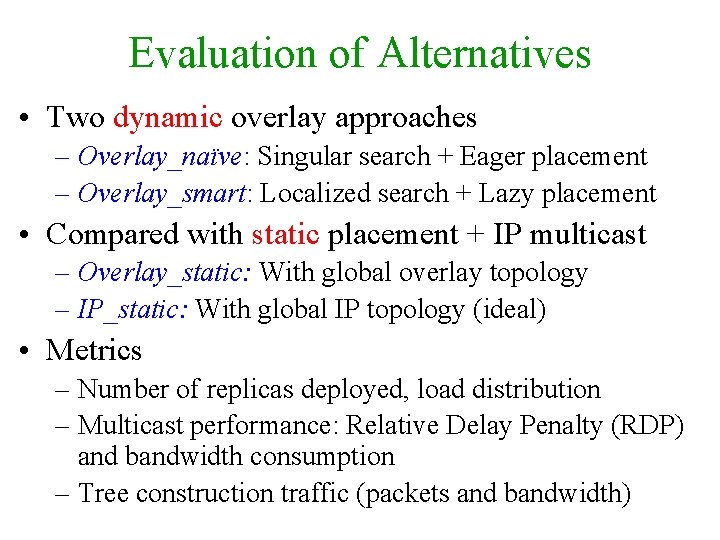

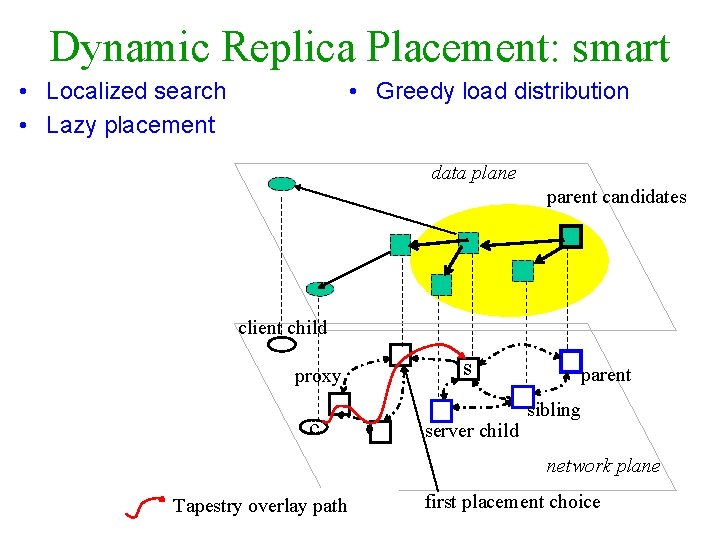

Evaluation of Alternatives • Two dynamic overlay approaches – Overlay_naïve: Singular search + Eager placement – Overlay_smart: Localized search + Lazy placement • Compared with static placement + IP multicast – Overlay_static: With global overlay topology – IP_static: With global IP topology (ideal) • Metrics – Number of replicas deployed, load distribution – Multicast performance: Relative Delay Penalty (RDP) and bandwidth consumption – Tree construction traffic (packets and bandwidth)

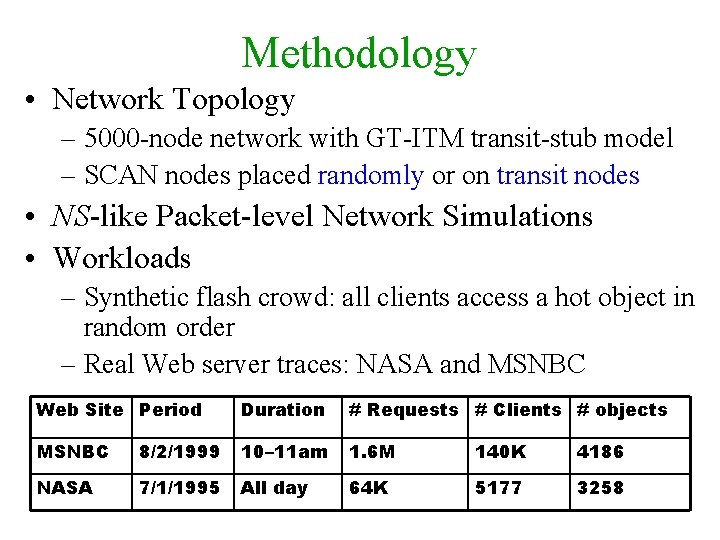

Methodology • Network Topology – 5000 -node network with GT-ITM transit-stub model – SCAN nodes placed randomly or on transit nodes • NS-like Packet-level Network Simulations • Workloads – Synthetic flash crowd: all clients access a hot object in random order – Real Web server traces: NASA and MSNBC Web Site Period Duration # Requests # Clients # objects MSNBC 8/2/1999 10– 11 am 1. 6 M 140 K 4186 NASA 7/1/1995 All day 64 K 5177 3258

Methodology: Sensitivity Analysis • • Various Client/Server Ratio Various Server Density Various Latency & Capacity Constraints Various Network Topologies – Average over 5 topologies with different setup • All Have Similar Trend of Results – Overlay_smart has close-to-optimal (IP_static) number of replicas, load distribution, multicast performance with reasonable amount of tree construction traffic

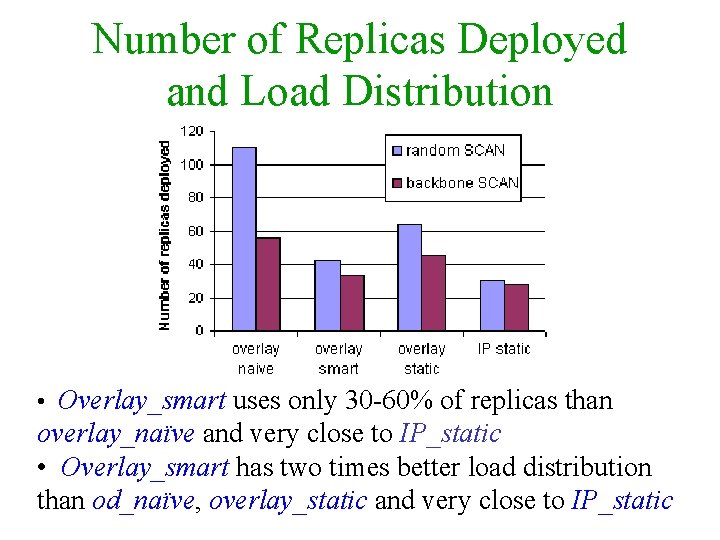

Number of Replicas Deployed and Load Distribution • Overlay_smart uses only 30 -60% of replicas than overlay_naïve and very close to IP_static • Overlay_smart has two times better load distribution than od_naïve, overlay_static and very close to IP_static

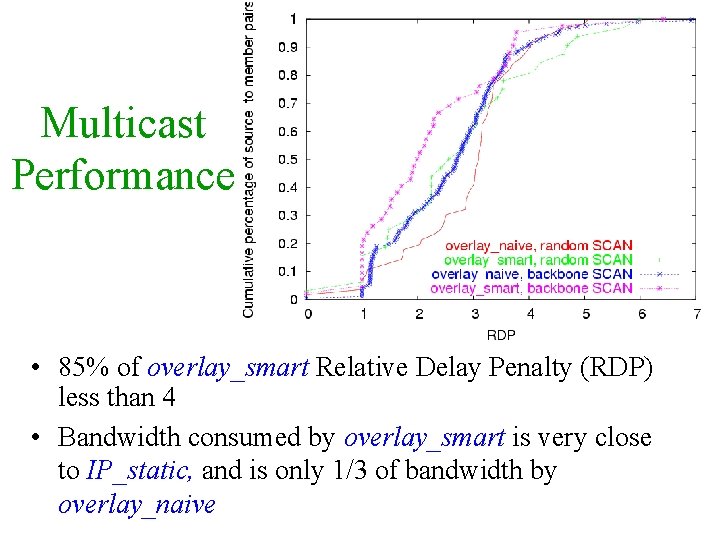

Multicast Performance • 85% of overlay_smart Relative Delay Penalty (RDP) less than 4 • Bandwidth consumed by overlay_smart is very close to IP_static, and is only 1/3 of bandwidth by overlay_naive

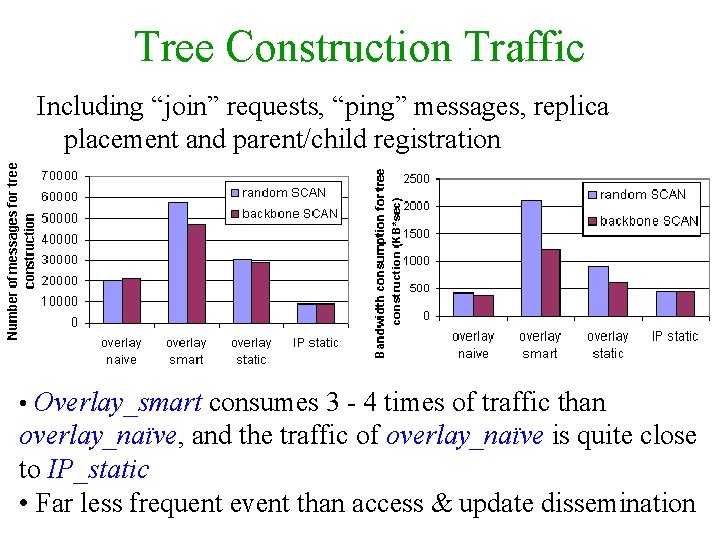

Tree Construction Traffic Including “join” requests, “ping” messages, replica placement and parent/child registration • Overlay_smart consumes 3 - 4 times of traffic than overlay_naïve, and the traffic of overlay_naïve is quite close to IP_static • Far less frequent event than access & update dissemination

Conclusions • P 2 P networks can be used to construct CDNs • SCAN: Scalable Content Access Network with good Qo. S, efficiency and load balancing – Simultaneous dynamic replica placement & d-tree construction – Leverage DOLR to improve scalability and locality • In particular, overlay_smart recommended – Localized search + Lazy placement – Close to optimal number of replicas, good load distribution, low multicast delay and bandwidth penalty at the price of reasonable construction traffic

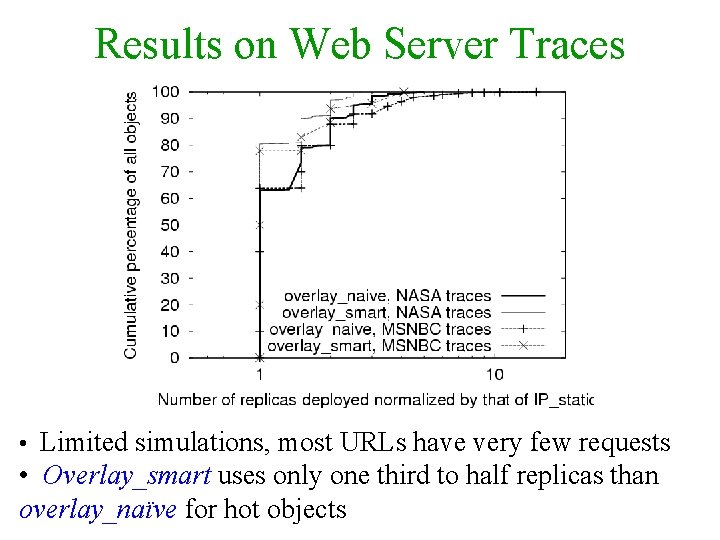

Results on Web Server Traces • Limited simulations, most URLs have very few requests • Overlay_smart uses only one third to half replicas than overlay_naïve for hot objects

SCAN: Scalable Content Access Network data source replica data plane cache always update adaptive coherence Web content server CDN server client DOLR mesh network plane

Replica Search • Singular Search data plane parent candidate proxy s c DOLR mesh DOLR path network plane

Replica Search • Greedy load distribution • Localized search data plane parent candidates client child proxy c s server child parent sibling network plane DOLR path

Dynamic Replica Placement: naïve • Singular Search • Eager Placement data plane parent candidate proxy s c Tapestry mesh Tapestry overlay path network plane first placement choice

Dynamic Replica Placement: smart • Localized search • Lazy placement • Greedy load distribution data plane parent candidates client child proxy c s server child parent sibling network plane Tapestry overlay path first placement choice

- Slides: 24