Scalla
Andrew Hanushevsky
Stanford Linear Accelerator Center, Stanford University
8-December-06
http://xrootd.slac.stanford.edu

Outline
- Introduction
  - History
  - Design points
- Architecture
- Data serving
  - xrootd
- Clustering
  - olbd
- SRM Access
- Conclusion

What is Scalla?
- Scalla: Structured Cluster Architecture for Low Latency Access
- Low Latency Access to data via xrootd servers
  - POSIX-style byte-level random access
  - By default, arbitrary data organized as files
  - Hierarchical directory-like name space
  - Protocol includes high performance features
- Structured Clustering provided by olbd servers
  - Exponentially scalable and self organizing

Brief History
- 1997 – Objectivity, Inc. collaboration
  - Design & development to scale Objectivity/DB
  - First attempt to use a commercial DB for physics data
  - Very successful but problematic
- 2001 – BaBar decides to use the ROOT framework
  - Collaboration with INFN Padova & SLAC
  - Design & develop high performance data access
  - Work based on what we learned with Objectivity
- 2003 – First deployment of the xrootd system at SLAC
- 2005 – Collaboration extended
  - ROOT collaboration & Alice LHC experiment, CERN
  - CNAF, Bologna, It; Cornell University; FZK, De; IN2P3, Fr; INFN, Padova, It; RAL, UK; SLAC are current production deployment sites

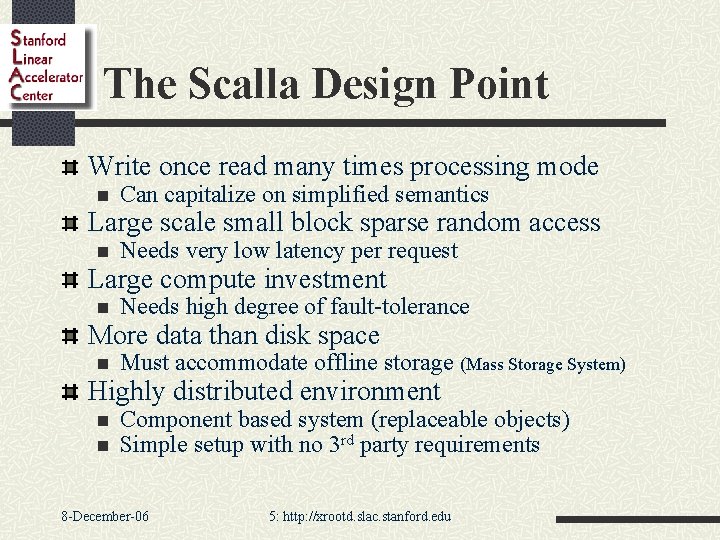

The Scalla Design Point
- Write-once, read-many processing mode
  - Can capitalize on simplified semantics
- Large-scale, small-block, sparse random access
  - Needs very low latency per request
- Large compute investment
  - Needs a high degree of fault tolerance
- More data than disk space
  - Must accommodate offline storage (Mass Storage System)
- Highly distributed environment
  - Component-based system (replaceable objects)
  - Simple setup with no 3rd-party requirements

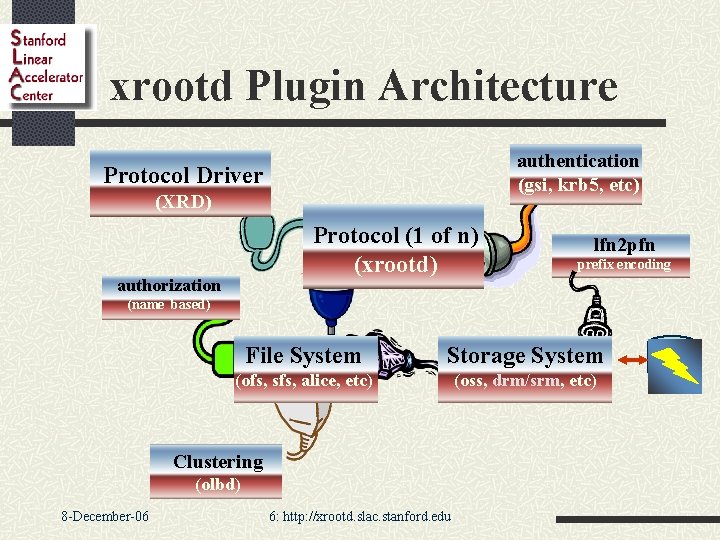

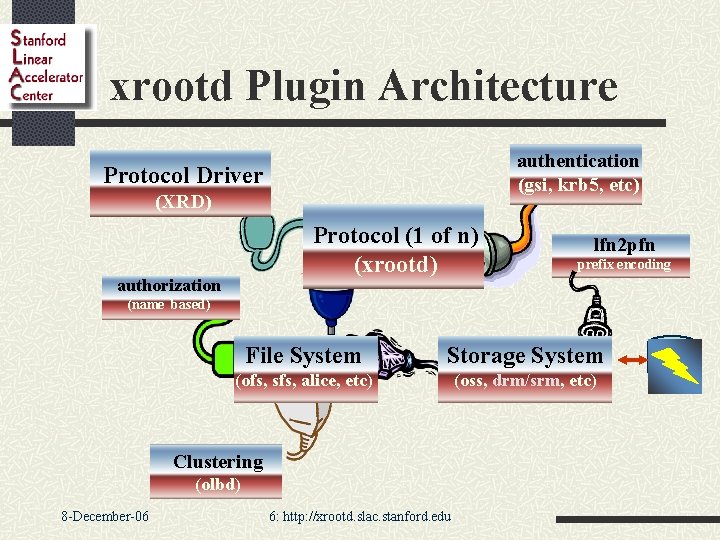

xrootd Plugin Architecture
- Protocol Driver (XRD)
- Protocol, 1 of n (xrootd)
- Authentication (gsi, krb5, etc.)
- Authorization (name based)
- lfn2pfn prefix encoding
- File System (ofs, sfs, alice, etc.)
- Storage System (oss, drm/srm, etc.)
- Clustering (olbd)
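The lfn2pfn layer is only described as name-based prefix encoding. A minimal sketch, assuming the simplest form (prepend a site-local root to the logical name the client asked for); `lfn2pfn` and `local_root` are hypothetical names, not the actual xrootd plugin interface.

```python
import posixpath


def lfn2pfn(lfn: str, local_root: str) -> str:
    """Hypothetical name-based mapping from a logical file name (what the
    client asks for) to a physical file name (where the storage layer keeps it)."""
    if not lfn.startswith("/"):
        raise ValueError(f"logical file name must be absolute: {lfn!r}")
    # normpath collapses '.', '..' and repeated slashes. For an absolute path,
    # a '..' that would climb above '/' is discarded, so after re-rooting the
    # result stays under local_root.
    relative = posixpath.normpath(lfn).lstrip("/")
    return posixpath.join(local_root, relative)


if __name__ == "__main__":
    print(lfn2pfn("/store/run1/file.root", "/data/xrd"))  # /data/xrd/store/run1/file.root
```

Keeping the mapping purely name based means it needs no lookup table, which fits the "simple setup" design point.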

Making the Server Perform I
- Protocol is a key component in performance
- Compact & efficient protocol
  - Minimal request/response overhead (24/8 bytes)
  - Minimal encoding/decoding (network-ordered binary)
- High degree of server-side flexibility
  - Parallel requests on a single client stream
  - Request & response reordering
  - Dynamic transfer size selection
- Rich set of operations
  - Allows hints for improved performance
    - Pre-read, prepare, client access & processing hints
    - Especially important for accessing offline storage
- Integrated peer-to-peer clustering
  - Inherent scaling and fault tolerance
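The 24/8-byte figures are the fixed request and response header sizes. The sketch below packs headers of those sizes in network byte order with Python's `struct` module; the field split is assumed from the XRootD protocol specification and should be checked against it, and `pack_request`/`match_responses` are hypothetical helpers. It also shows why an echoed stream id makes parallel requests and response reordering cheap.

```python
import struct

# Assumed header layouts; ">" = network (big-endian) byte order, no padding.
#   request : streamid[2] | requestid u16 | parms[16] | dlen i32  (24 bytes)
#   response: streamid[2] | status u16    | dlen i32              (8 bytes)
REQ_HDR = struct.Struct(">2sH16si")
RSP_HDR = struct.Struct(">2sHi")


def pack_request(streamid: int, requestid: int, parms: bytes = b"", payload: bytes = b"") -> bytes:
    """Build one request: fixed 24-byte header followed by dlen payload bytes."""
    if len(parms) > 16:
        raise ValueError("request parameters are limited to 16 bytes")
    # "16s" pads short parms with zero bytes.
    header = REQ_HDR.pack(streamid.to_bytes(2, "big"), requestid, parms, len(payload))
    return header + payload


def match_responses(pending: dict, responses: list) -> dict:
    """Pair responses with outstanding requests by stream id.

    Every response echoes the stream id of its request, so a client can keep
    several requests in flight on one connection and accept the answers in
    whatever order the server sends them.
    """
    results = {}
    for raw in responses:
        sid, status, dlen = RSP_HDR.unpack_from(raw)
        body = raw[RSP_HDR.size:RSP_HDR.size + dlen]
        results[pending.pop(sid)] = (status, body)
    return results
```

For example, two reads issued on stream ids 1 and 2 can be answered 2-then-1 and still land with the right caller.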

Making the Server Perform II
- Short code paths critical
- Massively threaded design
  - Avoids synchronization bottlenecks
  - Adapts well to next-generation multi-core chips
- Internal wormhole mechanisms
  - Minimizes code paths in a multi-layered design
  - Does not flatten the overall architecture
- Use the most efficient OS-specific system interfaces
  - Dynamic and compile-time selection
  - Dynamic: aio_read() vs read()
  - Compile-time: /dev/poll or kqueue() vs poll() or select()
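Python's standard `selectors` module makes the same kind of choice as the compile-time selection above: `selectors.DefaultSelector` is bound to the most efficient readiness mechanism the platform offers (kqueue, epoll, /dev/poll, poll or select). This is an analogy, not xrootd's code; `make_poller` and `wait_readable` are hypothetical helper names.

```python
import selectors
import socket


def make_poller() -> selectors.BaseSelector:
    """Return the most efficient readiness-notification mechanism available."""
    # DefaultSelector is fixed when the selectors module is imported.
    return selectors.DefaultSelector()


def wait_readable(poller: selectors.BaseSelector, sock: socket.socket, timeout: float = 1.0) -> list:
    """Return [sock] if it becomes readable within timeout, else []."""
    poller.register(sock, selectors.EVENT_READ)
    try:
        events = poller.select(timeout)
        return [key.fileobj for key, mask in events if mask & selectors.EVENT_READ]
    finally:
        poller.unregister(sock)


if __name__ == "__main__":
    a, b = socket.socketpair()
    with make_poller() as poller:
        print("using", type(poller).__name__)
        b.sendall(b"ping")
        print(wait_readable(poller, a) == [a], a.recv(4))
    a.close()
    b.close()
```

Callers only see `BaseSelector`, so the fastest interface is picked once without changing any calling code.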

Making the Server Perform III
- Intelligent memory management
  - Minimize cross-thread shared objects
    - Avoids thrashing the processor cache
  - Maximize object re-use
    - Less fragmentation of the free-space heap
    - Avoids a major serialization bottleneck (malloc)
  - Load-adaptive I/O buffer management
    - Minimize server growth to avoid paging
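The three memory rules above can be illustrated with a per-thread buffer pool. A minimal sketch, assuming fixed-size buffers and a simple cap on idle spares; `BufferPool` and `thread_pool` are hypothetical, and in Python this only shows the structure, not the malloc savings a C++ server gets.

```python
import threading


class BufferPool:
    """Hypothetical free-list pool of fixed-size I/O buffers.

    Buffers are recycled instead of freshly allocated for every request, and
    at most `max_idle` spares are kept so an idle server shrinks back.
    """

    def __init__(self, size: int, max_idle: int):
        self.size = size
        self.max_idle = max_idle
        self.allocated = 0      # buffers ever created by this pool
        self._free = []         # idle buffers ready for reuse

    def get(self) -> bytearray:
        if self._free:
            return self._free.pop()    # reuse; contents are stale, caller overwrites
        self.allocated += 1
        return bytearray(self.size)

    def put(self, buf: bytearray) -> None:
        if len(self._free) < self.max_idle:
            self._free.append(buf)
        # otherwise drop it and let Python reclaim the memory

    def trim(self, keep: int) -> None:
        """Release spare buffers when load drops, keeping at most `keep`."""
        del self._free[keep:]

    def idle(self) -> int:
        return len(self._free)


_local = threading.local()


def thread_pool(size: int = 64 * 1024, max_idle: int = 8) -> BufferPool:
    """One pool per thread: buffers are never shared across threads, so no lock is needed."""
    pool = getattr(_local, "pool", None)
    if pool is None:
        pool = _local.pool = BufferPool(size, max_idle)
    return pool
```

Giving each thread its own pool trades a little extra memory for zero cross-thread locking on the hot path.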

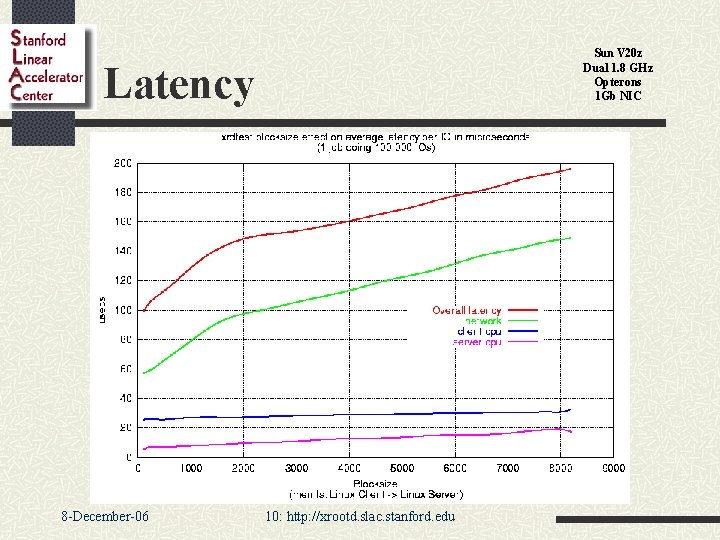

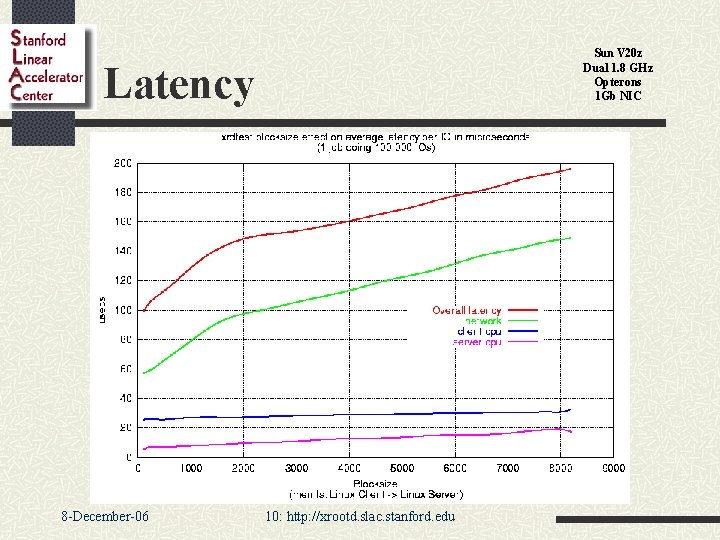

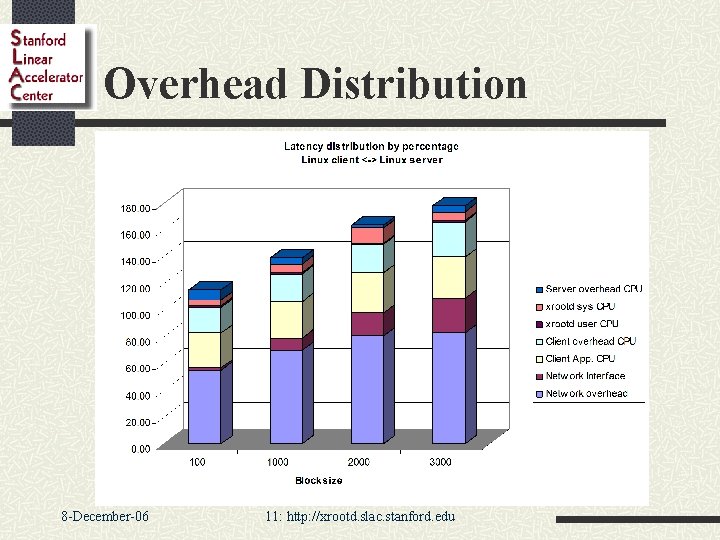

Latency (Sun V20z, dual 1.8 GHz Opterons, 1 Gb NIC)

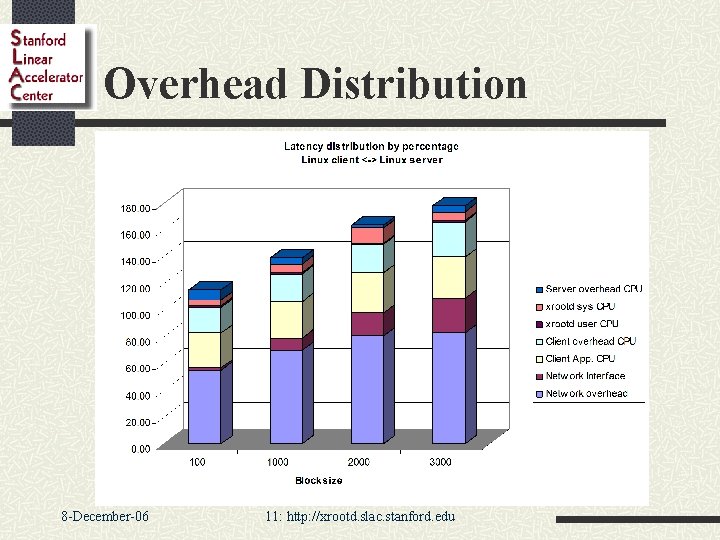

Overhead Distribution

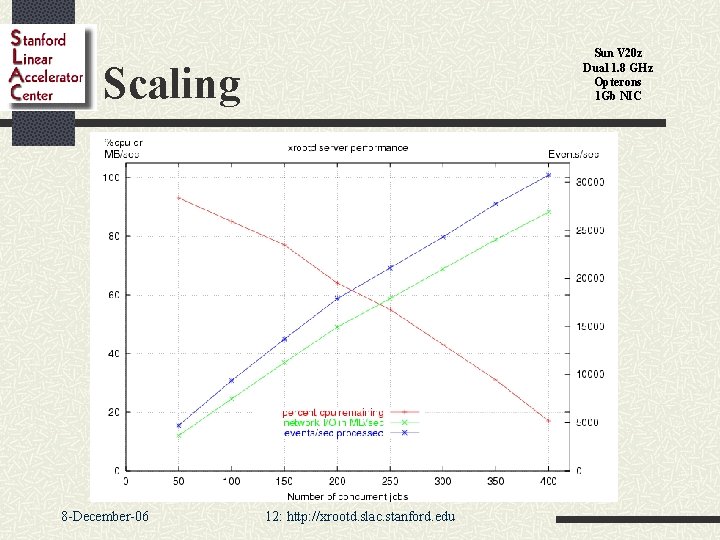

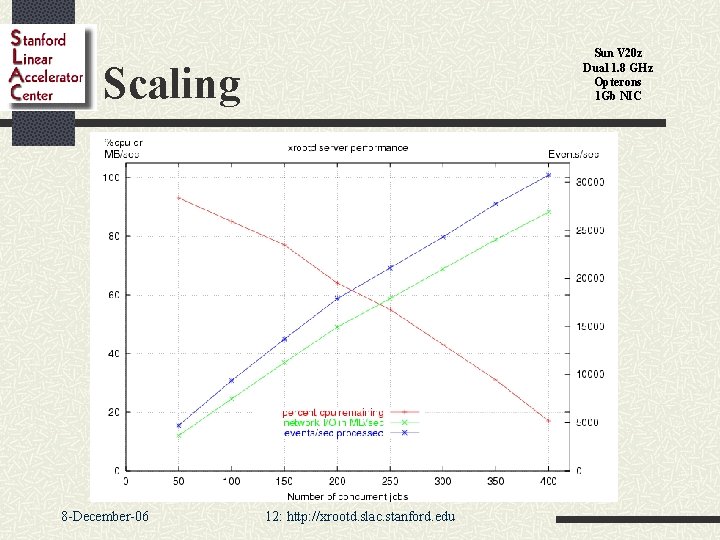

Scaling (Sun V20z, dual 1.8 GHz Opterons, 1 Gb NIC)

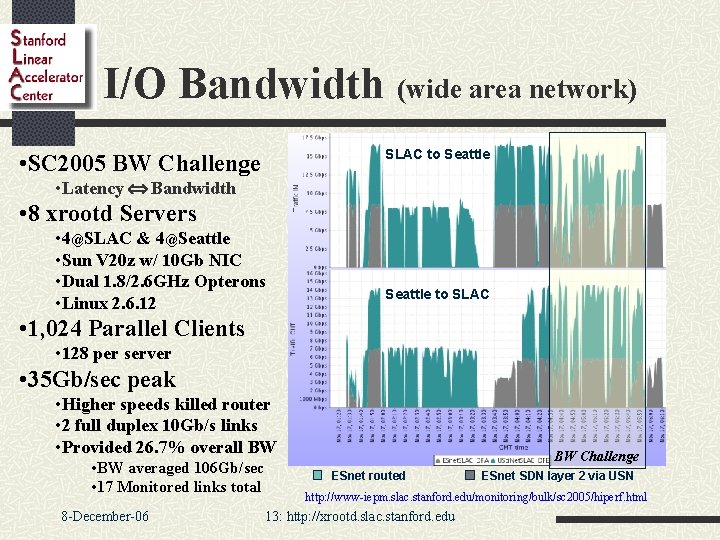

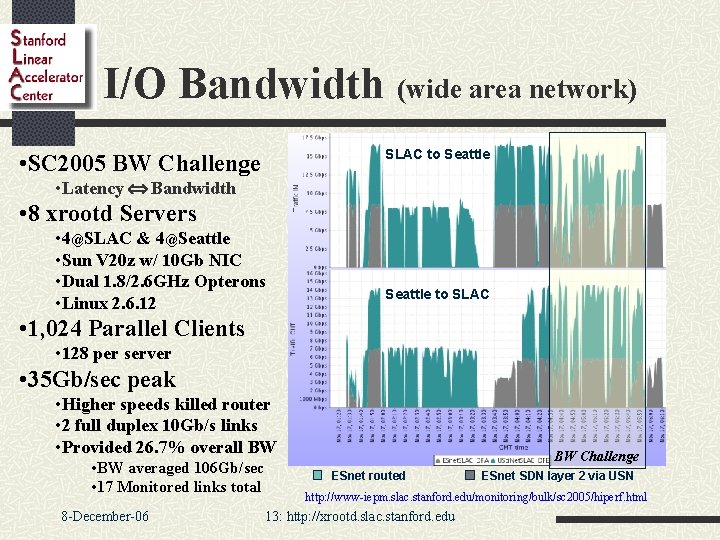

I/O Bandwidth (wide area network)
- SC2005 BW Challenge
  - Latency ⇔ Bandwidth
- 8 xrootd servers
  - 4 @ SLAC & 4 @ Seattle
  - Sun V20z w/ 10 Gb NIC
  - Dual 1.8/2.6 GHz Opterons
  - Linux 2.6.12
- 1,024 parallel clients
  - 128 per server
- 35 Gb/sec peak
  - Higher speeds killed router
- 2 full-duplex 10 Gb/s links
- Provided 26.7% of overall BW
- BW averaged 106 Gb/sec
- 17 monitored links total
(Figure labels: SLAC to Seattle; Seattle to SLAC; BW Challenge; ESnet routed; ESnet SDN layer 2 via USN.)
http://www-iepm.slac.stanford.edu/monitoring/bulk/sc2005/hiperf.html

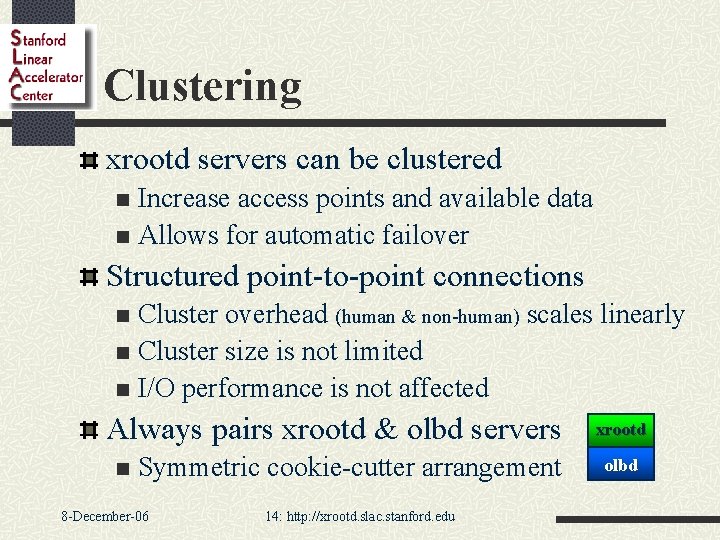

Clustering
- xrootd servers can be clustered
  - Increase access points and available data
  - Allows for automatic failover
- Structured point-to-point connections
  - Cluster overhead (human & non-human) scales linearly
  - Cluster size is not limited
  - I/O performance is not affected
- Always pairs xrootd & olbd servers
  - Symmetric cookie-cutter arrangement

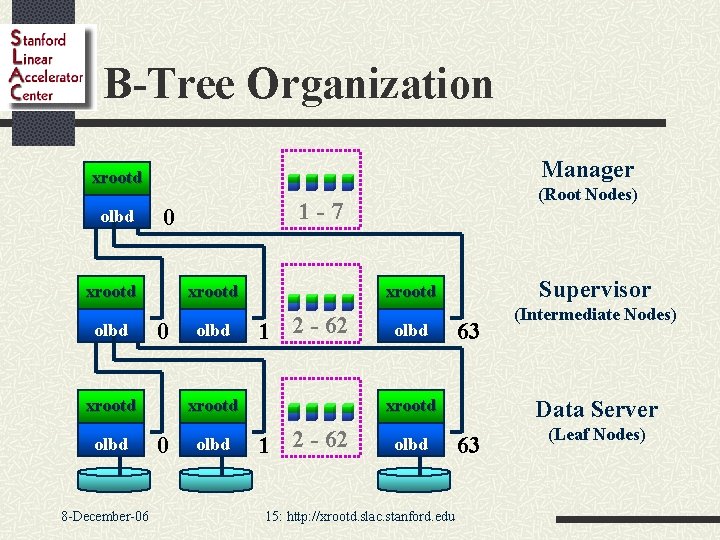

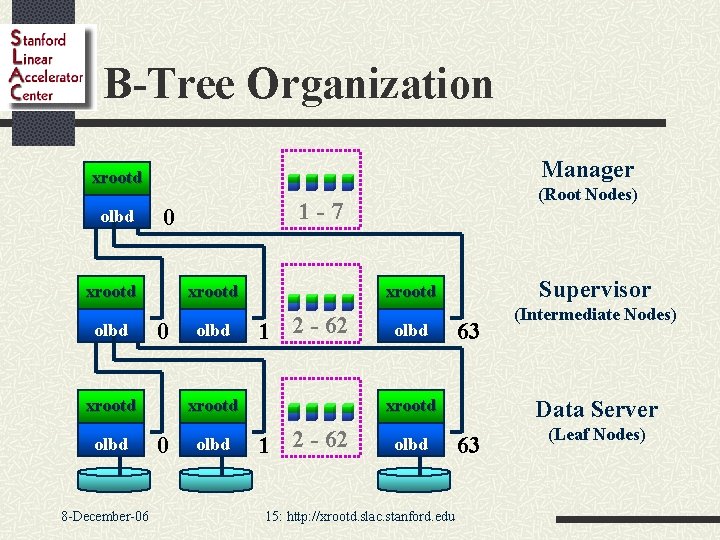

B-Tree Organization
(Figure: a three-level tree of xrootd/olbd pairs. Manager = root nodes; Supervisor = intermediate nodes; Data Server = leaf nodes. Node labels within each cell run from 0 to 63.)

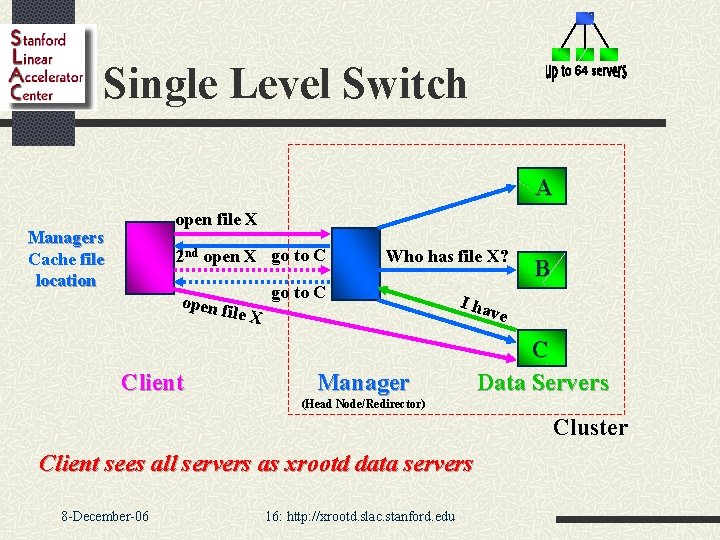

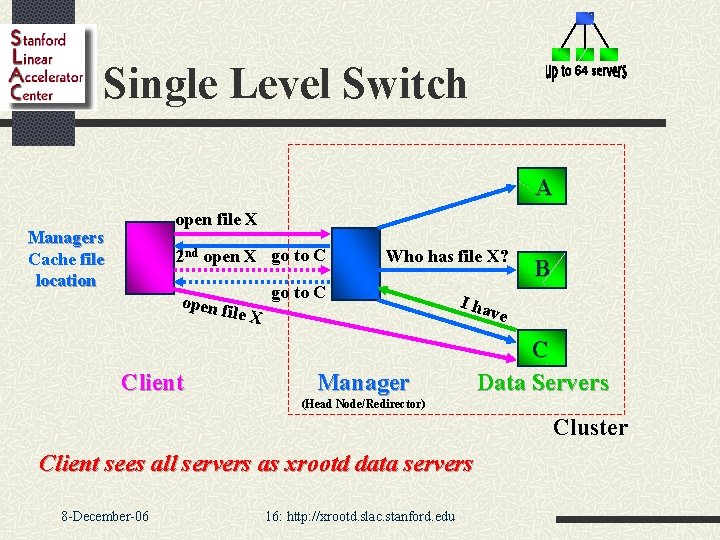

Single Level Switch
(Figure: a client, a manager (head node/redirector), and data servers A, B, C forming the cluster.)
1. Client to manager: open file X
2. Manager to data servers: Who has file X?
3. Server C to manager: I have
4. Manager to client: go to C
5. Client to C: open file X
- Managers cache file location: a 2nd open of X is told "go to C" directly
- Client sees all servers as xrootd data servers
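The redirect-plus-cache flow above can be modeled in a few lines. A minimal sketch, assuming the manager holds no data and picks the first server that answers; `Redirector` and its fields are hypothetical, and stale cache entries (a file removed from C) are not handled.

```python
class Redirector:
    """Hypothetical single-level manager: it holds no data, it only tells
    clients which data server to open a file on."""

    def __init__(self, servers: dict):
        self.servers = servers    # server name -> set of files it holds
        self.cache = {}           # file -> server that answered "I have"
        self.queries = 0          # number of "Who has file X?" broadcasts

    def open(self, path: str) -> str:
        if path in self.cache:
            return self.cache[path]    # 2nd open: answered from the location cache
        self.queries += 1
        # Ask every data server; only servers that have the file respond.
        holders = [name for name, files in self.servers.items() if path in files]
        if not holders:
            raise FileNotFoundError(path)
        self.cache[path] = holders[0]
        return holders[0]


if __name__ == "__main__":
    r = Redirector({"A": {"/y"}, "B": set(), "C": {"/store/X"}})
    print(r.open("/store/X"), r.open("/store/X"), r.queries)  # C C 1
```

After the redirect the client talks to C directly, so the manager never sits in the data path.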

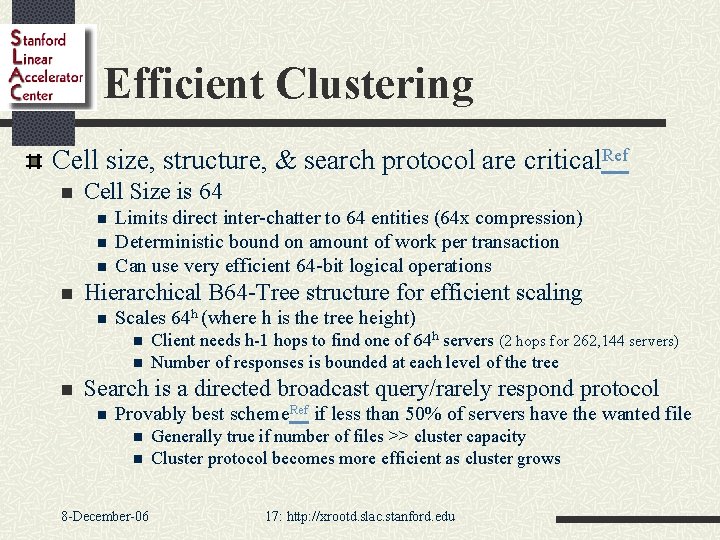

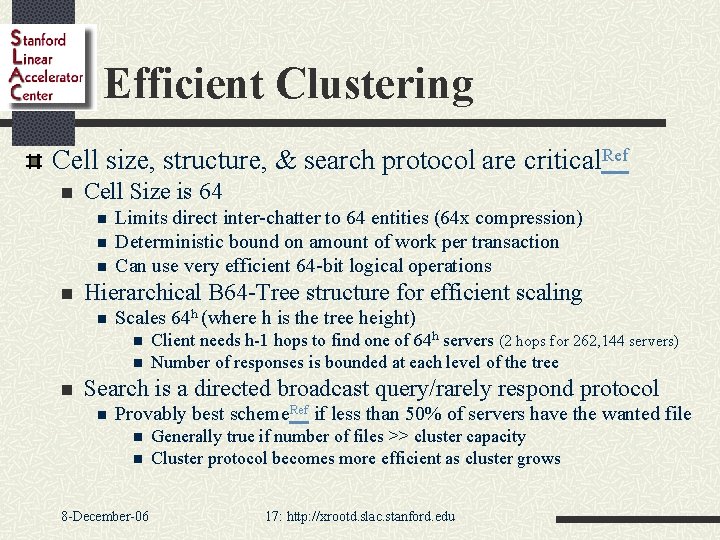

Efficient Clustering
- Cell size, structure, & search protocol are critical (Ref.)
- Cell size is 64
  - Limits direct inter-chatter to 64 entities (64x compression)
  - Deterministic bound on amount of work per transaction
  - Can use very efficient 64-bit logical operations
- Hierarchical B64-tree structure for efficient scaling
  - Scales as 64^h (where h is the tree height)
  - Client needs h-1 hops to find one of 64^h servers (2 hops for 262,144 servers)
  - Number of responses is bounded at each level of the tree
- Search is a directed broadcast query/rarely respond protocol
  - Provably best scheme (Ref.) if less than 50% of servers have the wanted file
    - Generally true if number of files >> cluster capacity
  - Cluster protocol becomes more efficient as cluster grows
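The slide's arithmetic, plus the kind of 64-bit set operation a 64-member cell permits, can be sketched as follows. The masks `online`, `have_file`, and `overloaded` are hypothetical bookkeeping; the slide does not describe how olbd actually tracks its cell.

```python
CELL = 64                      # members per cell, from the slide
CELL_MASK = (1 << CELL) - 1    # keep results within one 64-bit word


def capacity(height: int) -> int:
    """Servers addressable by a B64-tree of height h: 64**h."""
    return CELL ** height


def hops(height: int) -> int:
    """Hops a client needs, per the slide's h - 1 rule."""
    return height - 1


def candidates(online: int, have_file: int, overloaded: int) -> list:
    """Select eligible members of one cell with a few 64-bit logical operations.

    Bit i of each mask describes cell member i.
    """
    eligible = online & have_file & ~overloaded & CELL_MASK
    return [i for i in range(CELL) if (eligible >> i) & 1]


if __name__ == "__main__":
    print(capacity(3), hops(3))                    # 262144 2
    print(candidates(0b1111, 0b1010, 0b1000))      # [1]
```

Whatever the cluster size, one cell's decision stays a handful of AND/NOT operations on a single word, which is the deterministic per-transaction bound the slide refers to.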

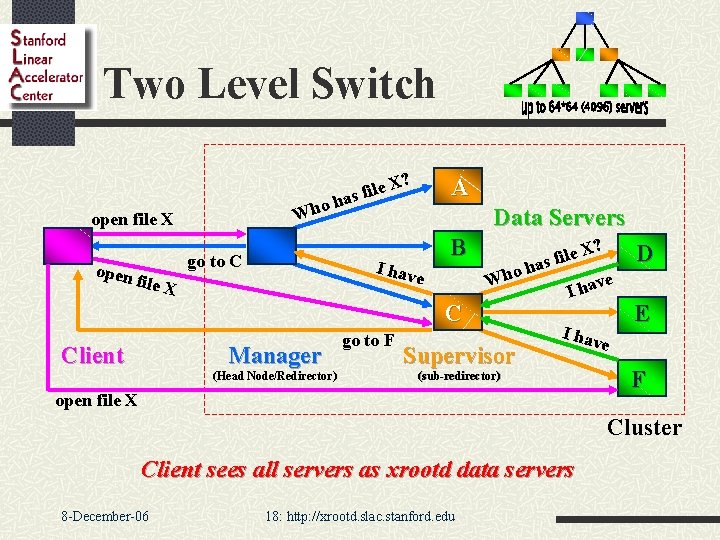

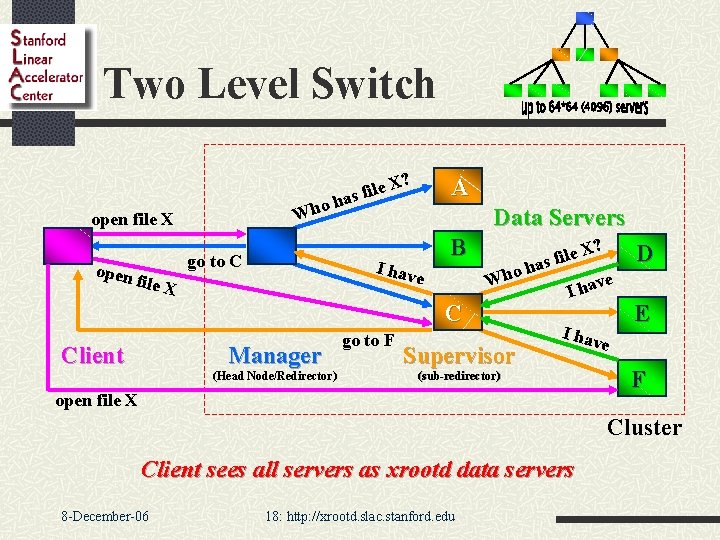

Two Level Switch
(Figure: a client; a manager (head node/redirector); data servers A and B; C acting as a supervisor (sub-redirector) over data servers D, E, F.)
1. Client to manager: open file X
2. Manager to A, B, C: Who has file X?
3. C to manager: I have file X
4. Manager to client: go to C
5. Client to C: open file X
6. C to D, E, F: Who has file X?
7. F to C: I have file X
8. C to client: go to F
9. Client to F: open file X
- Client sees all servers as xrootd data servers
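The same idea extends down the tree: each redirector forwards the client one level closer to the data. A minimal sketch, assuming a redirector answers "I have" when anything below it holds the file and that the first responder wins; `Node` and `open_file` are hypothetical, and real olbd selection among responders is not modeled.

```python
class Node:
    """Hypothetical tree node: a redirector (manager or supervisor) has
    children; a data server has files."""

    def __init__(self, name, children=(), files=()):
        self.name = name
        self.children = list(children)
        self.files = set(files)

    def has(self, path):
        # A redirector answers "I have" if anything below it has the file.
        return path in self.files or any(c.has(path) for c in self.children)


def open_file(root, path):
    """Follow redirects from the head node down to a data server.

    Returns the chain of nodes the client contacted, in order.
    """
    chain = [root.name]
    node = root
    while node.children:
        nxt = next((c for c in node.children if c.has(path)), None)
        if nxt is None:
            raise FileNotFoundError(path)
        node = nxt
        chain.append(node.name)
    if path not in node.files:
        raise FileNotFoundError(path)
    return chain
```

For the cluster in the figure, opening X contacts the manager, then C, then F, matching the two "go to" redirects.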

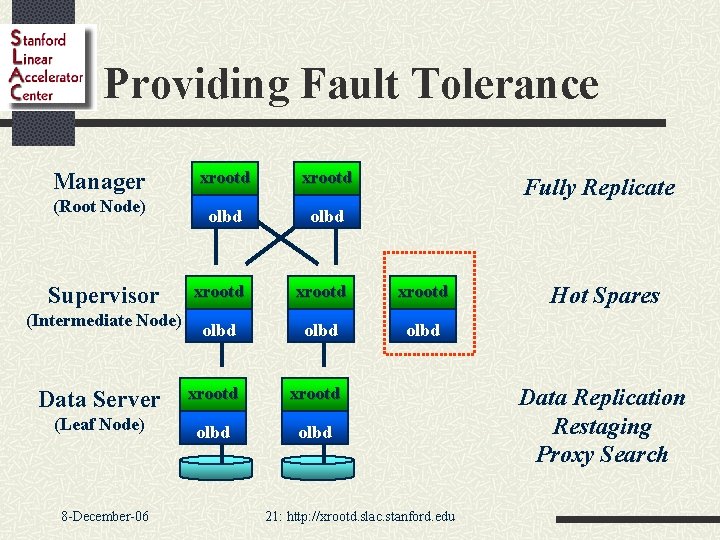

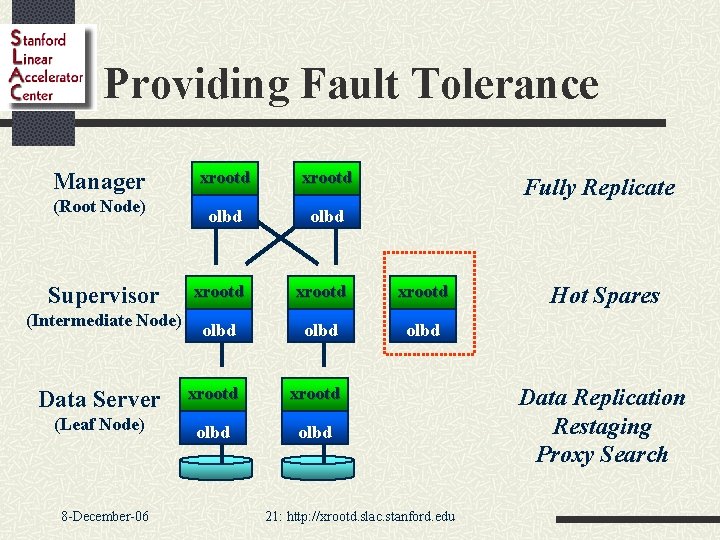

Providing Fault Tolerance
(Figure: a manager (root node), supervisor (intermediate node), and data server (leaf node), each an xrootd/olbd pair.)
- Managers: fully replicate
- Supervisors: hot spares
- Data servers: data replication, restaging, proxy search

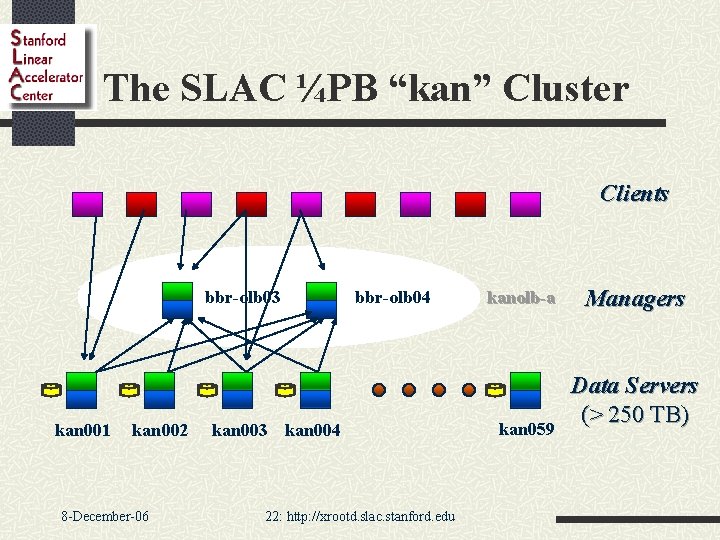

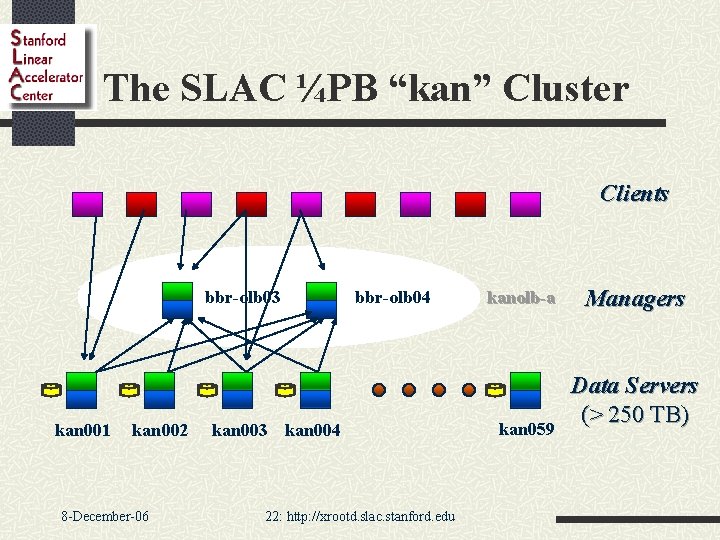

The SLAC ¼PB “kan” Cluster
(Figure labels: Clients; Managers bbr-olb03, bbr-olb04, kanolb-a; Data Servers kan001, kan002, kan003, kan004, …, kan059, holding > 250 TB.)

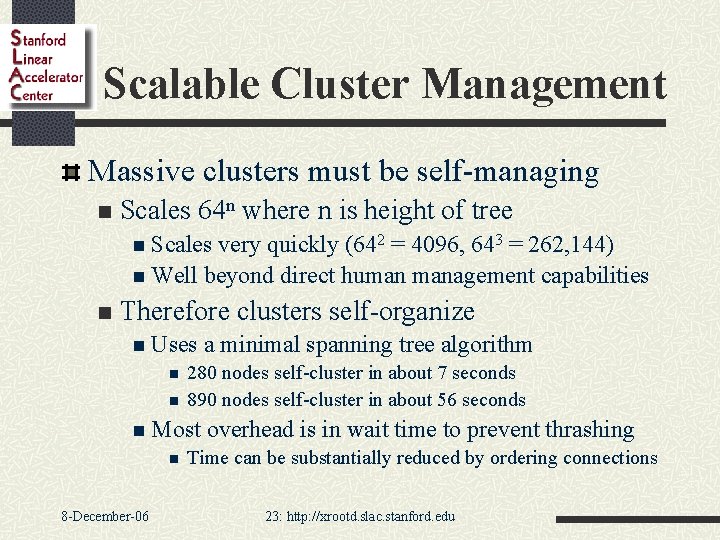

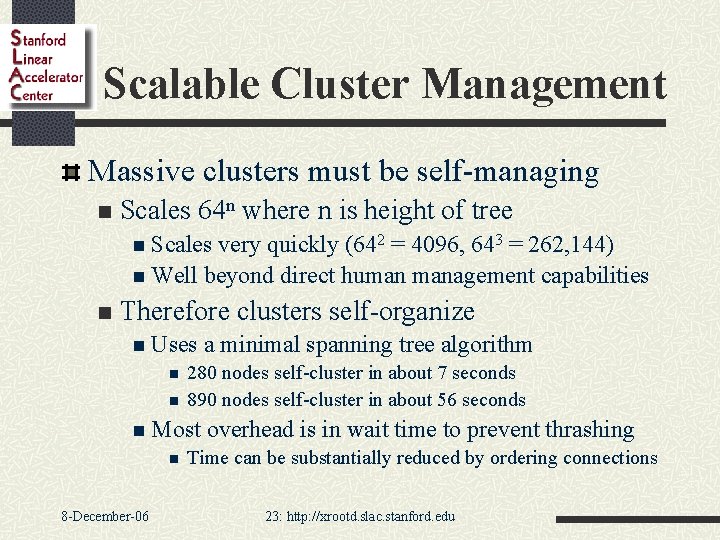

Scalable Cluster Management
- Massive clusters must be self-managing
  - Scales as 64^n, where n is the height of the tree
  - Scales very quickly (64^2 = 4,096; 64^3 = 262,144)
  - Well beyond direct human management capabilities
- Therefore clusters self-organize
  - Uses a minimal spanning tree algorithm
  - 280 nodes self-cluster in about 7 seconds
  - 890 nodes self-cluster in about 56 seconds
- Most overhead is in wait time to prevent thrashing
  - Time can be substantially reduced by ordering connections
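Counting the redirectors needed at each level shows how quickly 64-way fan-out grows. This sketch assumes every manager or supervisor is filled to 64 subordinates; `tree_shape` is a hypothetical sizing helper and does not model the minimal-spanning-tree self-organization that olbd uses.

```python
import math

CELL = 64


def tree_shape(n_servers: int, cell: int = CELL) -> list:
    """Nodes needed at each level, data servers first, when every redirector
    (manager or supervisor) fans out to at most `cell` subordinates."""
    if n_servers < 1:
        raise ValueError("need at least one data server")
    levels = [n_servers]
    while levels[-1] > 1:
        levels.append(math.ceil(levels[-1] / cell))
    return levels


if __name__ == "__main__":
    for n in (280, 890, 4096, 262144):
        print(n, tree_shape(n))
```

Treating the slide's 280 and 890 nodes as if they were all data servers, this gives one manager over 5 and 14 supervisors respectively, while 262,144 data servers need only three redirector levels (4,096, 64, and 1).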

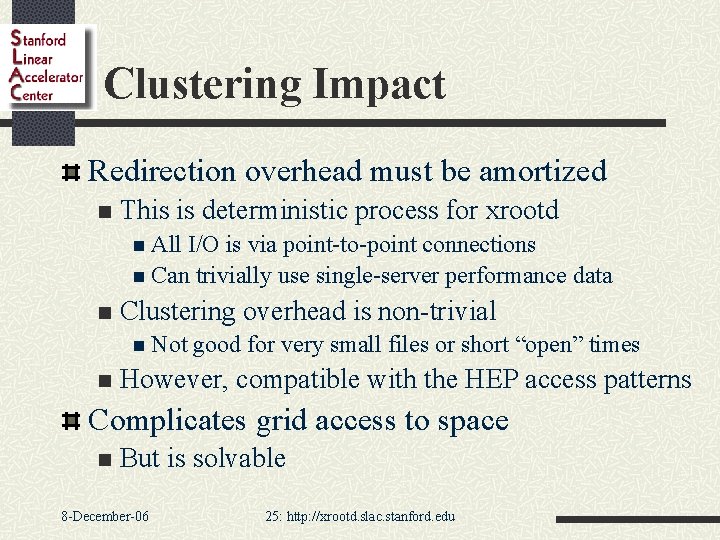

Clustering Impact
- Redirection overhead must be amortized
  - This is a deterministic process for xrootd
  - All I/O is via point-to-point connections
  - Can trivially use single-server performance data
- Clustering overhead is non-trivial
  - Not good for very small files or short “open” times
  - However, compatible with HEP access patterns
- Complicates grid access to space
  - But is solvable
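A back-of-the-envelope model of the amortization, assuming one redirection of cost $t_{\mathrm{redir}}$ per open followed by $N$ requests, each served point-to-point by the data server at single-server latency $t_{\mathrm{req}}$ (the symbols are introduced here, not taken from the slide):

```latex
\bar{t} \;=\; t_{\mathrm{req}} + \frac{t_{\mathrm{redir}}}{N},
\qquad
f_{\mathrm{redir}} \;=\; \frac{t_{\mathrm{redir}}}{t_{\mathrm{redir}} + N\,t_{\mathrm{req}}}
```

As $N$ grows, $\bar{t}$ approaches the single-server latency and the redirection share $f_{\mathrm{redir}}$ goes to zero. For a very small file read once ($N = 1$), the redirection cost is paid in full, which is why short opens suffer.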

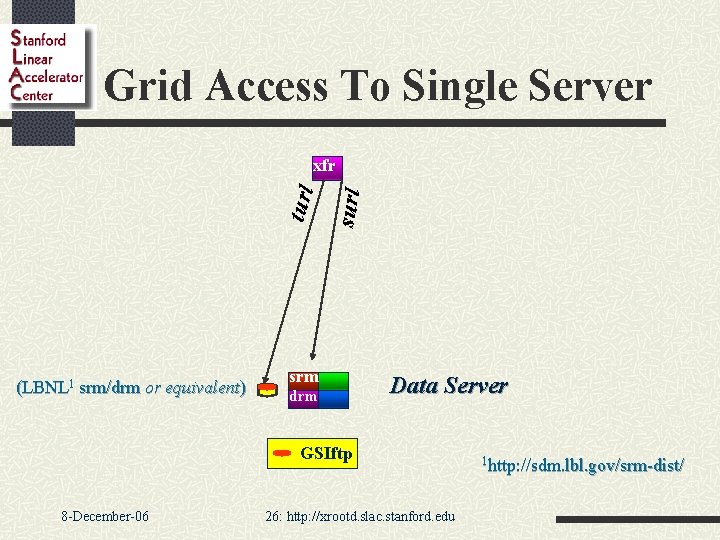

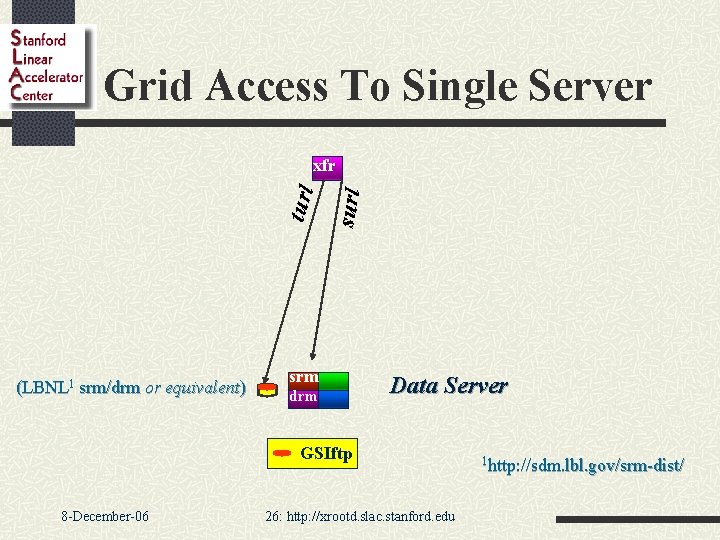

Grid Access To Single Server
(Figure: an srm/drm (LBNL srm/drm[1] or equivalent) takes a request by surl and returns a turl; the transfer (xfr) runs over GSIftp to the data server.)
[1] http://sdm.lbl.gov/srm-dist/

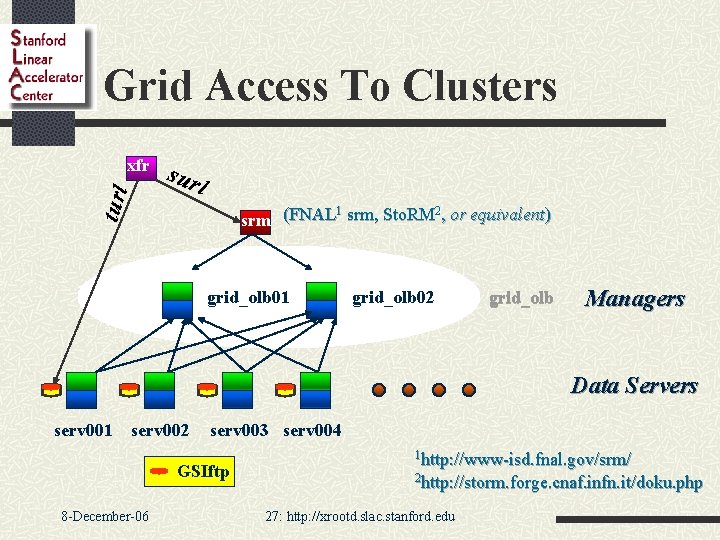

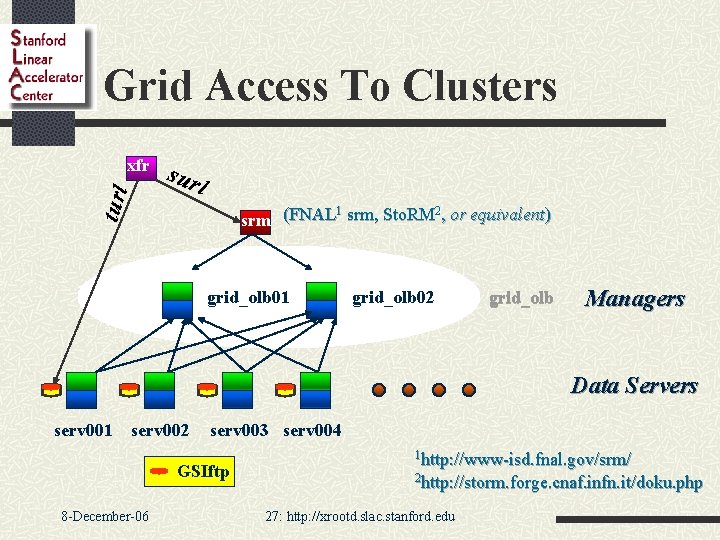

Grid Access To Clusters
(Figure: an srm (FNAL srm[1], StoRM[2], or equivalent) takes a request by surl and returns a turl; the transfer (xfr) runs over GSIftp; managers grid_olb01, grid_olb02, grid_olb; data servers serv001, serv002, serv003, serv004.)
[1] http://www-isd.fnal.gov/srm/
[2] http://storm.forge.cnaf.infn.it/doku.php

Grid Access to Managed Space
- LCG DPM
  - Includes SRM and Disk Pool Manager
  - xrootd integration completed
- Castor 2
  - Includes SRM and Disk/Tape Manager
  - xrootd integration nearing completion
- MPS
  - Part of standard distribution
  - Makes sense when used with external SRM
    - FNAL SRM integration ongoing but problematic
    - StoRM integration looks much more promising

Conclusion
- High performance data access systems are achievable
  - The devil is in the details
  - Proper protocol, semantics, and engineering are critical
- High performance and clustering are synergetic
  - Allows unique performance, usability, scalability, and recoverability characteristics
- SRM access for grid compatibility is ongoing
  - Several solutions (none perfect)
  - Some packaging issues remain

Acknowledgements
- Software collaborators
  - INFN/Padova: Fabrizio Furano (client-side), Alvise Dorigo
  - Root: Fons Rademakers, Gerri Ganis (security), Bertrand Bellenot (windows)
  - Alice: Derek Feichtinger, Guenter Kickinger
  - STAR/BNL: Pavel Jakl
  - Cornell: Gregory Sharp
  - SLAC: Jacek Becla, Tofigh Azemoon, Wilko Kroeger, Bill Weeks
  - BaBar: Pete Elmer (packaging)
- Operational collaborators
  - BNL, CNAF, FZK, INFN, IN2P3, RAL, SLAC
- Funding
  - US Department of Energy
    - Contract DE-AC02-76SF00515 with Stanford University