Scalable training of L1-regularized log-linear models

Galen Andrew (Joint work with Jianfeng Gao)
ICML, 2007

Minimizing regularized loss
• Many parametric ML models are trained by minimizing a function of the form $f(w) = \ell(w) + r(w)$
• $\ell(w)$ is a loss function quantifying “fit to the data”
  – Negative log-likelihood of training data
  – Distance from decision boundary of incorrect examples
• If zero is a reasonable “default” parameter value, we can use $r(w) = C \|w\|$, where $\|\cdot\|$ is a norm, penalizing large vectors, and C is a constant
Galen Andrew, Microsoft Research
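As a concrete instance of "loss plus C times a norm", here is a minimal sketch assuming a binary logistic model with labels in {-1, +1} and the L1 norm as the penalty. The function names and the tiny data set are illustrative only and do not come from the talk.

```python
import math

def logistic_loss(w, examples):
    """Negative log-likelihood of labels y in {-1, +1} under a logistic model."""
    total = 0.0
    for x, y in examples:
        margin = y * sum(wi * xi for wi, xi in zip(w, x))
        total += math.log1p(math.exp(-margin))
    return total

def l1_norm(w):
    """Sum of absolute values of the parameters."""
    return sum(abs(wi) for wi in w)

def regularized_objective(w, examples, C):
    """Loss plus C times the L1 norm of the parameter vector."""
    return logistic_loss(w, examples) + C * l1_norm(w)

# Hypothetical toy data: two examples with two features each.
examples = [([1.0, 0.0], 1), ([0.0, 1.0], -1)]
value_at_zero = regularized_objective([0.0, 0.0], examples, C=1.0)
```

At w = 0 the penalty vanishes and each example contributes log 2 to the loss, which is why zero acts as the "default" the regularizer pulls toward.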

Types of norms
• A norm precisely defines “size” of a vector
Figure panels: contours of L2-norm in 2D; contours of L1-norm in 2D

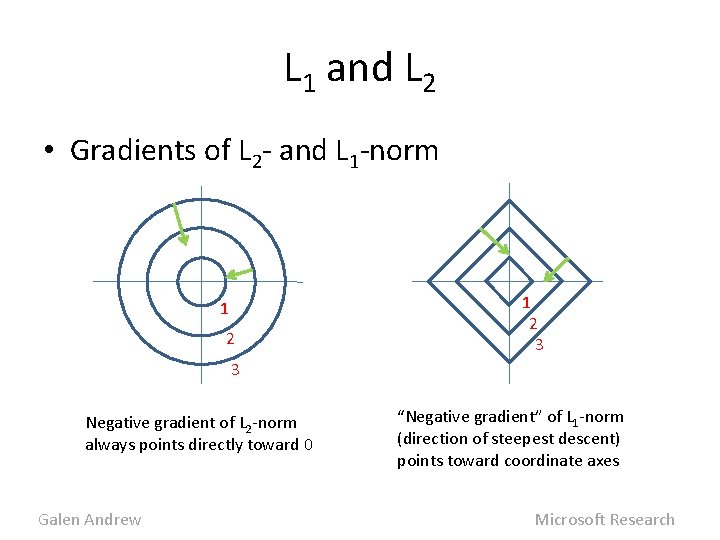

L1 and L2
• Gradients of L2- and L1-norm
  – Negative gradient of L2-norm always points directly toward 0
  – “Negative gradient” of L1-norm (direction of steepest descent) points toward coordinate axes
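The difference between the two gradients can be checked numerically. This is a small sketch with made-up helper names, valid only where the gradients exist (w nonzero for L2, every coordinate nonzero for L1).

```python
import math

def l2_norm_grad(w):
    """Gradient of the L2 norm at w != 0: w divided by its length."""
    length = math.sqrt(sum(wi * wi for wi in w))
    return [wi / length for wi in w]

def l1_norm_grad(w):
    """Gradient of the L1 norm where all coordinates are nonzero: sign of each coordinate."""
    return [1.0 if wi > 0 else -1.0 for wi in w]

w = [3.0, 0.5]
g2 = l2_norm_grad(w)  # proportional to w, so -g2 points straight at the origin
g1 = l1_norm_grad(w)  # same magnitude in every coordinate
```

Because the L1 gradient pushes every coordinate equally hard, following its negative drives the small coordinate (0.5) to zero well before the large one, landing on a coordinate axis. The L2 gradient shrinks both coordinates proportionally, so neither reaches zero first.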

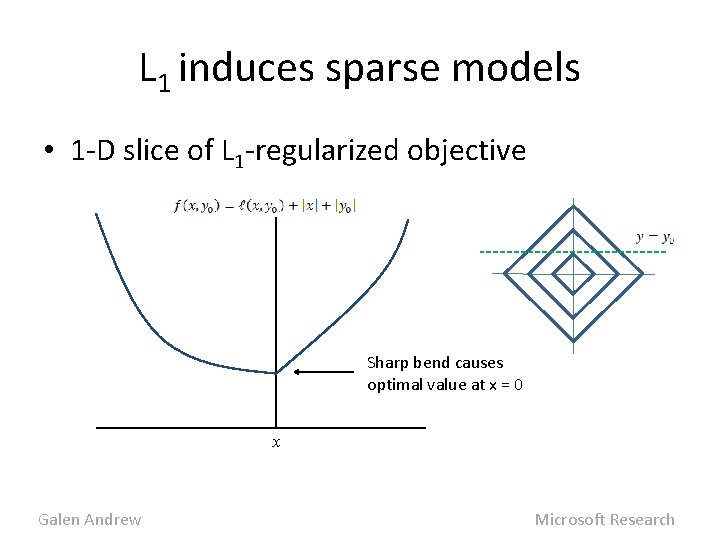

L1 induces sparse models
• 1-D slice of L1-regularized objective
  – Sharp bend causes optimal value at x = 0
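To see the sharp bend at work, take a 1-D quadratic loss 0.5 * (x - a)^2 as a stand-in for the slice (an assumption made for illustration; the slide's plot is of the actual objective). Its L1-regularized minimizer has the well-known closed form called soft-thresholding, and it is exactly zero whenever |a| <= C.

```python
def objective(x, a, C):
    """1-D L1-regularized objective with an assumed quadratic loss."""
    return 0.5 * (x - a) ** 2 + C * abs(x)

def soft_threshold(a, C):
    """Minimizer of objective(x, a, C) over x."""
    if a > C:
        return a - C
    if a < -C:
        return a + C
    return 0.0

x_sparse = soft_threshold(0.3, 1.0)  # loss minimum near zero: snaps to exactly 0
x_shrunk = soft_threshold(2.0, 0.5)  # loss minimum far from zero: shrunk by C
```

With an L2 penalty C * x^2 in place of C * |x|, the minimizer would be a / (1 + 2C), which is small but never exactly zero for a != 0.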

L1 induces sparse models
• At global optimum, many parameters have value exactly zero
  – L2 would give small, nonzero values
• Thus L1 does continuous feature selection
  – More interpretable, computationally manageable models
• C parameter tunes sparsity/accuracy tradeoff
• In our experiments, only 1.5% of features remain

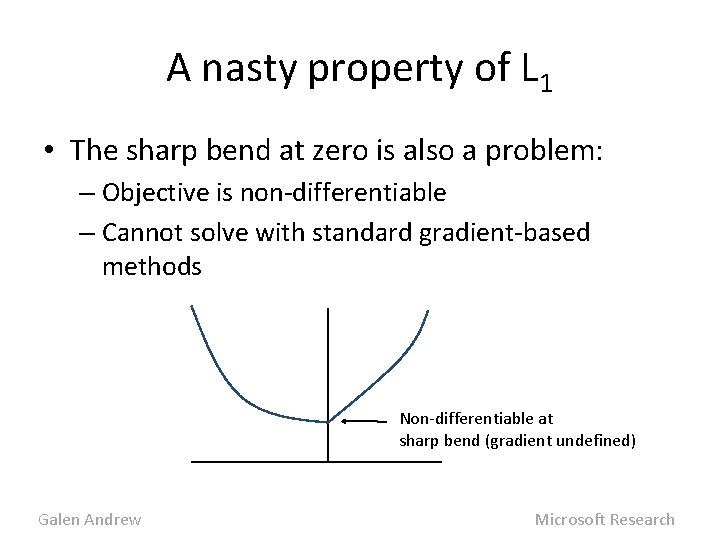

A nasty property of L1
• The sharp bend at zero is also a problem:
  – Objective is non-differentiable
  – Cannot solve with standard gradient-based methods
Figure annotation: non-differentiable at sharp bend (gradient undefined)

Digression: Newton’s method
• To optimize a function f:
  1. Form 2nd-order Taylor expansion around $x_0$: $f(x) \approx f(x_0) + \nabla f(x_0)^\top (x - x_0) + \tfrac{1}{2} (x - x_0)^\top H (x - x_0)$
  2. Jump to minimum: $x_{new} = x_0 - H^{-1} \nabla f(x_0)$ (Actually, line search in direction of $x_{new}$)
  3. Repeat
• Sort of an ideal.
  – In practice, H is too large
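The three steps can be sketched in one dimension, where the Hessian is just the second derivative. This sketch takes the full Newton step each time and omits the line search the slide mentions. The test function exp(x) - 2x and all names are illustrative assumptions.

```python
import math

def newton_minimize(grad, hess, x0, tol=1e-10, max_iter=50):
    """Repeatedly jump to the minimum of the local quadratic model (1-D, no line search)."""
    x = x0
    for _ in range(max_iter):
        g = grad(x)
        if abs(g) < tol:
            break
        x = x - g / hess(x)  # 1-D form of x - H^{-1} * gradient
    return x

# f(x) = exp(x) - 2x is convex with its minimum at x = ln 2.
x_star = newton_minimize(
    grad=lambda x: math.exp(x) - 2.0,
    hess=lambda x: math.exp(x),
    x0=0.0,
)
```

In n dimensions the division becomes a linear solve against an n-by-n matrix, which is the cost that motivates the limited-memory approximation.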

Limited-Memory Quasi-Newton
• Approximate $H^{-1}$ with a low-rank matrix built from changes to gradient in recent iterations
  – Approximate $H^{-1}$ and not H, so no need to invert the matrix or solve linear system!
• Most popular L-M Q-N method: L-BFGS
  – Storage and computation are O(# vars)
  – Very good theoretical convergence properties
  – Empirically, best method for training large-scale log-linear models with L2 (Malouf ’02, Minka ’03)
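For reference, the usual way L-BFGS applies its inverse-Hessian approximation is the textbook two-loop recursion, sketched below in plain Python. This is the standard formulation, not code from the authors' implementation. The names `s_list` and `y_list` (recent parameter and gradient differences, oldest first) are assumptions.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def lbfgs_direction(grad, s_list, y_list):
    """Return -(approximate inverse Hessian) * grad via the two-loop recursion.

    s_list[k] is a recent change in parameters and y_list[k] the matching change
    in gradient, oldest first. Each pair is assumed to satisfy dot(s, y) > 0.
    """
    q = list(grad)
    coeffs = []  # filled newest pair first
    for s, y in zip(reversed(s_list), reversed(y_list)):
        rho = 1.0 / dot(y, s)
        alpha = rho * dot(s, q)
        q = [qi - alpha * yi for qi, yi in zip(q, y)]
        coeffs.append((rho, alpha))
    # Initial scaling from the most recent pair (identity if there is no history).
    gamma = dot(s_list[-1], y_list[-1]) / dot(y_list[-1], y_list[-1]) if s_list else 1.0
    r = [gamma * qi for qi in q]
    for (rho, alpha), s, y in zip(reversed(coeffs), s_list, y_list):
        beta = rho * dot(y, r)
        r = [ri + (alpha - beta) * si for ri, si in zip(r, s)]
    return [-ri for ri in r]

# 1-D check on f(x) = 2x^2 (Hessian 4): a step s = 1 changes the gradient by y = 4.
direction = lbfgs_direction([8.0], s_list=[[1.0]], y_list=[[4.0]])
```

Only vector dot products and updates appear, so with a fixed number of stored pairs the cost per call is linear in the number of variables, and no matrix is ever formed or inverted.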

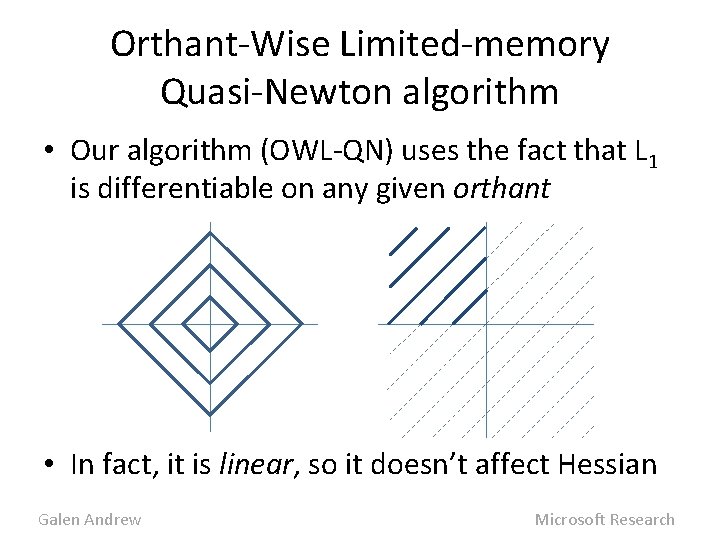

Orthant-Wise Limited-memory Quasi-Newton algorithm
• Our algorithm (OWL-QN) uses the fact that L1 is differentiable on any given orthant
• In fact, it is linear, so it doesn’t affect Hessian

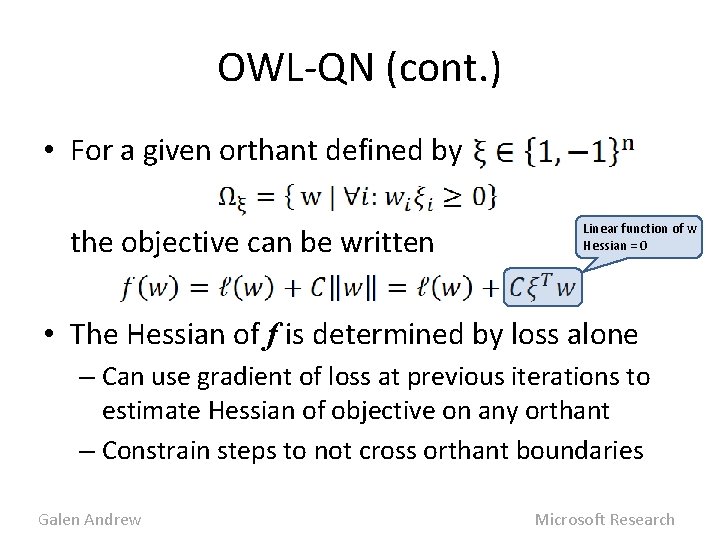

OWL-QN (cont.)
• For a given orthant defined by a sign vector $\xi \in \{-1, 1\}^n$, the objective can be written $f(w) = \ell(w) + C \xi^\top w$, where $\ell$ is the loss
  – $C \xi^\top w$ is a linear function of w, so its Hessian = 0
• The Hessian of f is determined by loss alone
  – Can use gradient of loss at previous iterations to estimate Hessian of objective on any orthant
  – Constrain steps to not cross orthant boundaries
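The claim that the Hessian depends on the loss alone follows in two lines. The derivation below writes the orthant's sign pattern as $\xi$ and the loss as $\ell$; both symbols are introduced here for illustration.

```latex
\begin{align*}
\|w\|_1 &= \sum_i |w_i| = \sum_i \xi_i w_i = \xi^\top w
  && \text{for every } w \text{ in the orthant with signs } \xi, \\
\nabla f(w) &= \nabla \ell(w) + C\,\xi,
  \qquad \nabla^2 f(w) = \nabla^2 \ell(w)
  && \text{for every } w \text{ in the interior of that orthant.}
\end{align*}
```

Since the second derivative of the penalty vanishes inside every orthant, curvature information gathered from loss gradients in one orthant remains valid after the iterate moves to another.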

OWL-QN (cont.)
1. Choose an orthant
2. Find Quasi-Newton quadratic approximation to objective on orthant
3. Jump to minimum of quadratic (Actually, line search in direction of minimum)
4. Project back onto orthant
5. Repeat steps 1-4 until convergence

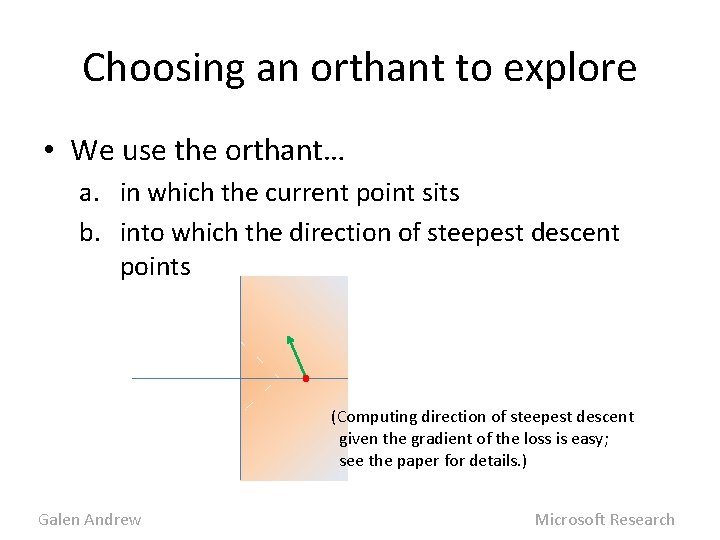

Choosing an orthant to explore
• We use the orthant…
  a. in which the current point sits
  b. into which the direction of steepest descent points
(Computing direction of steepest descent given the gradient of the loss is easy; see the paper for details.)
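One way to make rules (a) and (b) concrete is sketched below. It assumes the steepest-descent direction is obtained from one-sided partial derivatives of the regularized objective (often called a pseudo-gradient); the slide defers the exact construction to the paper, so treat the details and all function names here as assumptions.

```python
def sign(v):
    """Return -1, 0, or +1."""
    return (v > 0) - (v < 0)

def pseudo_gradient(w, loss_grad, C):
    """Per coordinate: the ordinary partial derivative where w_i != 0; at w_i == 0,
    whichever one-sided derivative indicates descent, or 0 if neither does."""
    pg = []
    for wi, gi in zip(w, loss_grad):
        if wi != 0:
            pg.append(gi + C * sign(wi))
        elif gi + C < 0:   # right derivative negative: increasing w_i lowers f
            pg.append(gi + C)
        elif gi - C > 0:   # left derivative positive: decreasing w_i lowers f
            pg.append(gi - C)
        else:              # zero is locally optimal for this coordinate
            pg.append(0.0)
    return pg

def choose_orthant(w, pg):
    """Rule (a) for nonzero coordinates, rule (b) for coordinates sitting at zero."""
    return [sign(wi) if wi != 0 else sign(-pgi) for wi, pgi in zip(w, pg)]

# Hypothetical point and loss gradient.
w = [1.0, 0.0, 0.0, -2.0]
loss_grad = [0.5, -3.0, 0.4, 1.0]
pg = pseudo_gradient(w, loss_grad, C=1.0)
orthant = choose_orthant(w, pg)
```

A 0 entry in the result means the coordinate is held at zero for this iteration, which matches the expanded notion of "orthant" mentioned in the notes later in the talk.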

Toy example
• One iteration of OWL-QN:
  – Find vector of steepest descent
  – Choose orthant
  – Find L-BFGS quadratic approximation
  – Jump to minimum
  – Project back onto orthant
  – Update Hessian approximation using gradient of loss alone
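The loop structure can be tried on a toy problem. The sketch below is deliberately simplified and is not OWL-QN itself: it uses the plain steepest-descent direction in place of the L-BFGS quadratic model, and a simple "any decrease" backtracking test in place of the paper's line search. The quadratic loss, the starting point, and all names are assumptions chosen so the exact answer is known.

```python
def sign(v):
    return (v > 0) - (v < 0)

def project(w, orthant):
    """Set to zero every coordinate whose sign disagrees with the chosen orthant."""
    return [wi if sign(wi) == oi else 0.0 for wi, oi in zip(w, orthant)]

def minimize_l1(loss, loss_grad, w, C, max_iter=100):
    f = lambda v: loss(v) + C * sum(abs(vi) for vi in v)
    for _ in range(max_iter):
        # Steepest-descent direction of the regularized objective.
        d = []
        for wi, gi in zip(w, loss_grad(w)):
            if wi != 0:
                d.append(-(gi + C * sign(wi)))
            elif gi + C < 0:
                d.append(-(gi + C))
            elif gi - C > 0:
                d.append(-(gi - C))
            else:
                d.append(0.0)
        if all(di == 0 for di in d):
            break  # no descent direction: optimum reached
        # Choose the orthant: current signs, or the descent direction's sign at zero.
        orthant = [sign(wi) if wi != 0 else sign(di) for wi, di in zip(w, d)]
        # Backtracking search along d, projecting each trial point onto the orthant.
        step, improved = 1.0, False
        while step > 1e-12:
            cand = project([wi + step * di for wi, di in zip(w, d)], orthant)
            if f(cand) < f(w):
                improved = True
                break
            step *= 0.5
        if not improved:
            break
        w = cand
    return w

# Toy loss 0.5 * ||w - a||^2, whose L1-regularized optimum is known in closed form.
a = [2.0, 0.3, -1.5]
loss = lambda w: 0.5 * sum((wi - ai) ** 2 for wi, ai in zip(w, a))
loss_grad = lambda w: [wi - ai for wi, ai in zip(w, a)]
result = minimize_l1(loss, loss_grad, [-1.0, 0.0, 0.0], C=0.5)
```

Starting from a negative first coordinate, the first iteration projects it to zero and the second moves it positive, so the sign change takes two iterations because no single step may cross an orthant boundary.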

Notes
• Variables are added to or removed from the model as orthant boundaries are hit
• A variable can change signs in two iterations (first projected to zero, then moved into the opposite orthant)
• Glossing over some details:
  – Line search with projection at each iteration
  – Convenient to expand notion of “orthant” to constrain some variables at zero
  – See paper for complete details
• In paper we prove convergence to optimum

Experiments
• We ran experiments with the parse re-ranking model of Charniak & Johnson (2005)
  – Start with a set of candidate parses for each sentence (produced by a baseline parser)
  – Train a log-linear model to select the correct one
• Model uses ~1.2M features of a parse
• Train on Sections 2-19 of the Penn Treebank (PTB) (36K sentences with 50 parses each)
• Fit C to maximize F-measure on Sections 20-21 (4K sentences)

Training methods compared
• Compared OWL-QN with three other methods
  – Kazama & Tsujii (2003) paired variable formulation for L1 implemented with AlgLib’s L-BFGS-B
  – L2 with our own implementation of L-BFGS (on which OWL-QN is based)
  – L2 with AlgLib’s implementation of L-BFGS
• K&T turns L1 into differentiable problem with bound constraints and twice the variables
  – Similar to Goodman’s 2004 method, but with L-BFGS-B instead of GIS
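The paired variable idea can be written out as follows. This is the standard way to remove an absolute value by splitting each variable into nonnegative parts, stated here in generic notation ($\ell$ for the loss, $w^+$ and $w^-$ for the two halves) that is assumed rather than taken from the slides.

```latex
\begin{align*}
\min_{w^+,\, w^-} \quad & \ell(w^+ - w^-) + C \sum_i \left( w_i^+ + w_i^- \right) \\
\text{subject to} \quad & w_i^+ \ge 0, \quad w_i^- \ge 0 \quad \text{for all } i,
\end{align*}
```

with the model parameters recovered as $w = w^+ - w^-$. The objective is now differentiable everywhere on the feasible set, at the price of doubling the number of variables and needing a bound-constrained optimizer such as L-BFGS-B.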

Comparison Methodology
• For each problem (L1 and L2)
  – Run both algorithms until value nearly constant
  – Report time to reach within 1% of best value
• We also report number of function evaluations
  – Implementation independent comparison
  – Function evaluation dominates runtime
• Results reported with chosen value of C
• L-BFGS memory parameter = 5 for all runs
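The "time to reach within 1% of best value" metric can be computed from a training trace as sketched below. The sketch assumes "within 1%" means a relative gap to the lowest objective value observed in that run, which the slide does not spell out. The function name and trace values are made up.

```python
def time_to_within(values, times, tol=0.01):
    """Return the first recorded time at which the objective is within a relative
    gap `tol` of the best (lowest) value in the trace, or None for an empty trace."""
    best = min(values)
    for value, t in zip(values, times):
        if value - best <= tol * abs(best):
            return t
    return None

# Hypothetical trace: objective value after each function evaluation, and elapsed seconds.
values = [100.0, 60.0, 50.4, 50.1, 50.0]
times = [0, 10, 20, 30, 40]
reached_at = time_to_within(values, times)
```

Counting trace entries up to the same point gives the number of function evaluations, the implementation-independent figure reported alongside CPU time.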

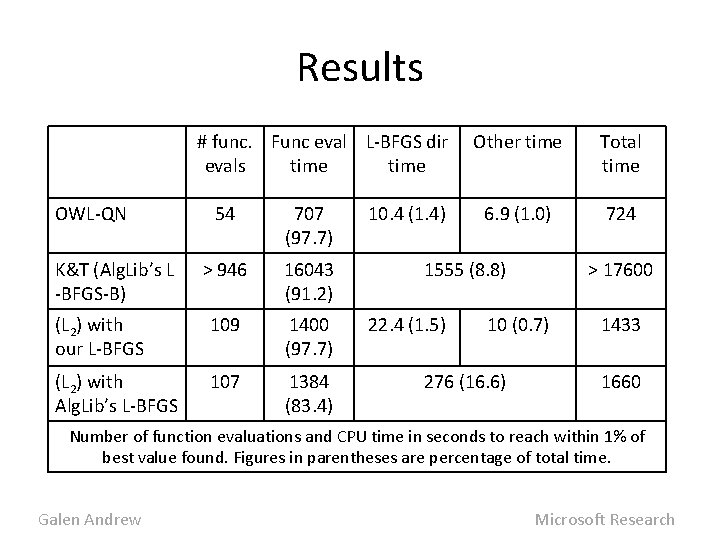

Results

| Method | # func. evals | Func eval time | L-BFGS dir time | Other time | Total time |
|---|---|---|---|---|---|
| OWL-QN | 54 | 707 (97.7) | 10.4 (1.4) | 6.9 (1.0) | 724 |
| K&T (AlgLib’s L-BFGS-B) | > 946 | 16043 (91.2) | 1555 (8.8) | | > 17600 |
| (L2) with our L-BFGS | 109 | 1400 (97.7) | 22.4 (1.5) | 10 (0.7) | 1433 |
| (L2) with AlgLib’s L-BFGS | 107 | 1384 (83.4) | 276 (16.6) | | 1660 |

Number of function evaluations and CPU time in seconds to reach within 1% of best value found. Figures in parentheses are percentage of total time. For the two AlgLib rows, a single figure covers all time outside function evaluation.

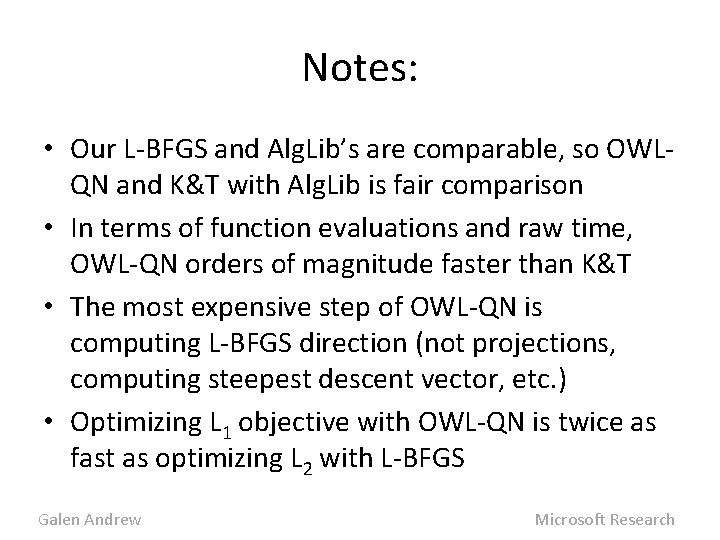

Notes:
• Our L-BFGS and AlgLib’s are comparable, so comparing OWL-QN with K&T using AlgLib is a fair comparison
• In terms of function evaluations and raw time, OWL-QN is more than an order of magnitude faster than K&T (54 vs. > 946 evaluations; 724 vs. > 17600 seconds)
• Apart from function evaluation, the most expensive step of OWL-QN is computing L-BFGS direction (not projections, computing steepest descent vector, etc.)
• Optimizing L1 objective with OWL-QN is twice as fast as optimizing L2 with L-BFGS
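The speedups quoted in these notes can be recomputed from the reported totals. The snippet below does only that arithmetic; the dictionary keys are shorthand labels, and the K&T entries are the lower bounds given in the results, so the K&T ratios are lower bounds too.

```python
# Totals from the results slide: CPU seconds and function evaluations.
total_time = {"OWL-QN": 724, "K&T": 17600, "L2 ours": 1433, "L2 AlgLib": 1660}
func_evals = {"OWL-QN": 54, "K&T": 946, "L2 ours": 109, "L2 AlgLib": 107}

time_ratio_kt = total_time["K&T"] / total_time["OWL-QN"]       # at least ~24x
eval_ratio_kt = func_evals["K&T"] / func_evals["OWL-QN"]       # at least ~17x
time_ratio_l2 = total_time["L2 ours"] / total_time["OWL-QN"]   # ~2x
eval_ratio_l2 = func_evals["L2 ours"] / func_evals["OWL-QN"]   # ~2x
```

The two L2 ratios agree closely, which is what one would expect when function evaluation dominates the runtime.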

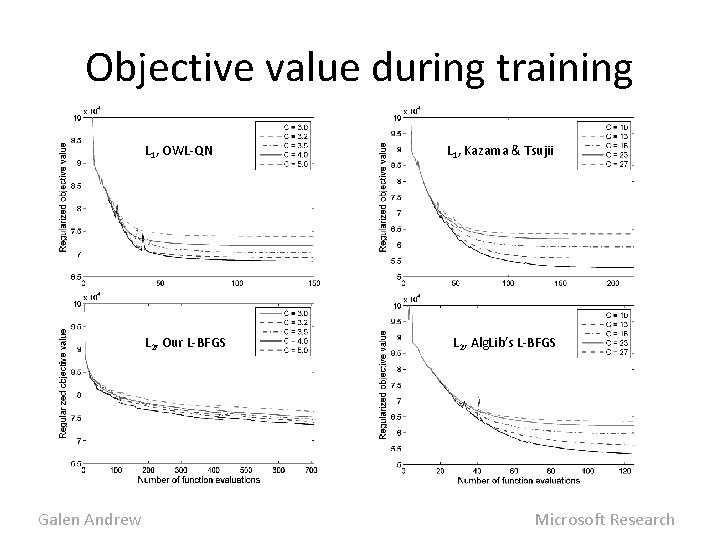

Objective value during training
Plot legend: L1, OWL-QN; L1, Kazama & Tsujii; L2, our L-BFGS; L2, AlgLib’s L-BFGS

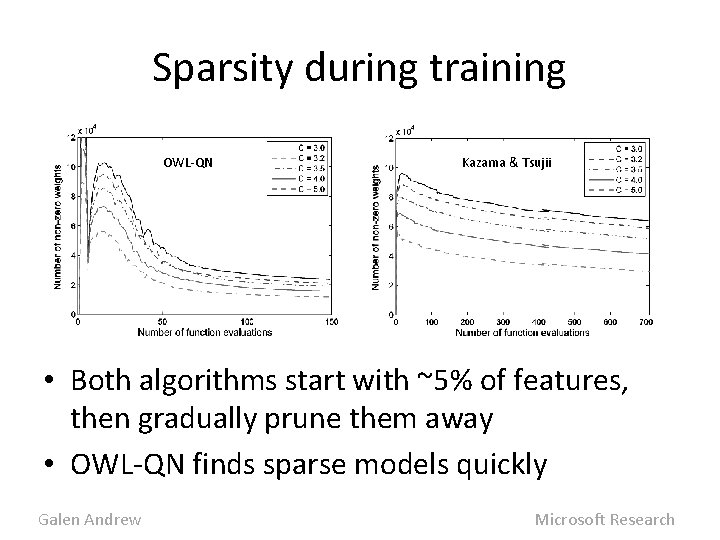

Sparsity during training
Plot legend: OWL-QN; Kazama & Tsujii
• Both algorithms start with ~5% of features, then gradually prune them away
• OWL-QN finds sparse models quickly

Extensions
• For ACL paper, ran on 3 very different log-linear NLP models with up to 8M features
  – CMM sequence model for POS tagging
  – Reranking log-linear model for LM adaptation
  – Semi-CRF for Chinese word segmentation
• Can use any smooth convex loss
  – We’ve also tried least-squares (LASSO regression)
• A small change allows non-convex loss
  – Only local minimum guaranteed
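Swapping in a different smooth convex loss only requires a function value and a gradient. As an illustration, here is a minimal least-squares loss of the kind that, combined with an L1 penalty, gives LASSO regression. The function names and data are hypothetical and are not the interface of the released software.

```python
def residuals(w, X, y):
    """Prediction errors X w - y for a list-of-rows design matrix X."""
    return [sum(wi * xi for wi, xi in zip(w, row)) - yi for row, yi in zip(X, y)]

def least_squares_loss(w, X, y):
    """0.5 * ||X w - y||^2."""
    return 0.5 * sum(r * r for r in residuals(w, X, y))

def least_squares_grad(w, X, y):
    """Gradient of the loss: X^T (X w - y)."""
    res = residuals(w, X, y)
    return [sum(r * row[j] for r, row in zip(res, X)) for j in range(len(w))]

# Hypothetical data generated exactly by w = [1, 2].
X = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
y = [1.0, 2.0, 3.0]
loss_at_zero = least_squares_loss([0.0, 0.0], X, y)
grad_at_zero = least_squares_grad([0.0, 0.0], X, y)
```

The L1 term never appears in these two functions; an orthant-wise optimizer handles the penalty separately, which is what makes the loss pluggable.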

Software download
• We’ve released C++ OWL-QN source
  – User can specify arbitrary convex smooth loss
• Also included are standalone trainers for L1 logistic regression and least-squares (LASSO)
• Please visit my webpage for download
  – (Find with search engine of your choice)

Thanks!

Summary
• L1 regularization produces sparse, interpretable models that generalize well
• Orthant-Wise Limited-memory Quasi-Newton, based on L-BFGS, efficiently minimizes L1-regularized loss with millions of variables
• Faster even than L2 regularization in our experiments
• Source code available for download
