Scalable Application Layer Multicast Suman Banerjee Bobby Bhattacharjee

Scalable Application Layer Multicast. Suman Banerjee, Bobby Bhattacharjee, Christopher Kommareddy. ACM SIGCOMM Computer Communication Review, Proceedings of the 2002 Conference on Applications, Technologies, Architectures, and Protocols for Computer Communications, Vol. 32, No. 4, Aug 2002.

Outline

- Introduction
- Hierarchical Membership
- Protocol Description
- Simulation Results
- Experimental Results

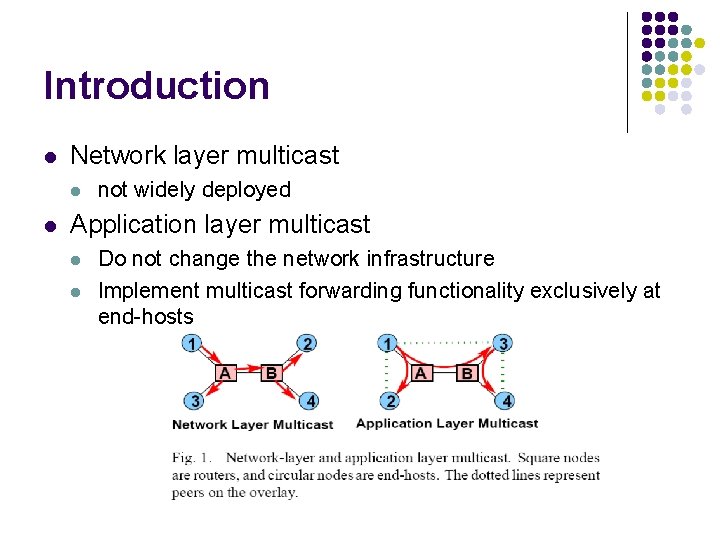

Introduction

- Network layer multicast
  - Not widely deployed
- Application layer multicast
  - Does not change the network infrastructure
  - Implements multicast forwarding functionality exclusively at end-hosts

Introduction

- NICE: "NICE is the Internet Cooperative Environment"
- NICE is designed to
  - Support large receiver sets
  - Keep control overhead small
  - Build low-latency distribution trees

Hierarchical Membership

- Clients are assigned to different layers
- Each layer is partitioned into a set of clusters of size between k and 3k - 1, where k is a constant parameter
- All hosts belong to the lowest layer, L0
- In each cluster, the host with the smallest maximum distance to all other hosts in the cluster is chosen as the leader
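The leader rule above can be sketched as follows. This is a minimal illustration, not the authors' implementation: the function name `choose_leader`, the host names, and the one-dimensional positions used as a distance metric are all assumptions made for the example.

```python
def choose_leader(members, dist):
    """Return the member whose largest distance to any other member is smallest."""
    def worst_distance(h):
        return max((dist(h, other) for other in members if other != h), default=0.0)
    return min(members, key=worst_distance)

# Hypothetical hosts placed on a line; distance is the absolute difference.
positions = {"A": 0.0, "B": 4.0, "C": 5.0, "D": 10.0}

def line_distance(a, b):
    return abs(positions[a] - positions[b])

leader = choose_leader(list(positions), line_distance)
print(leader)  # C: its worst distance is 5, versus 6 for B and 10 for A and D
```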

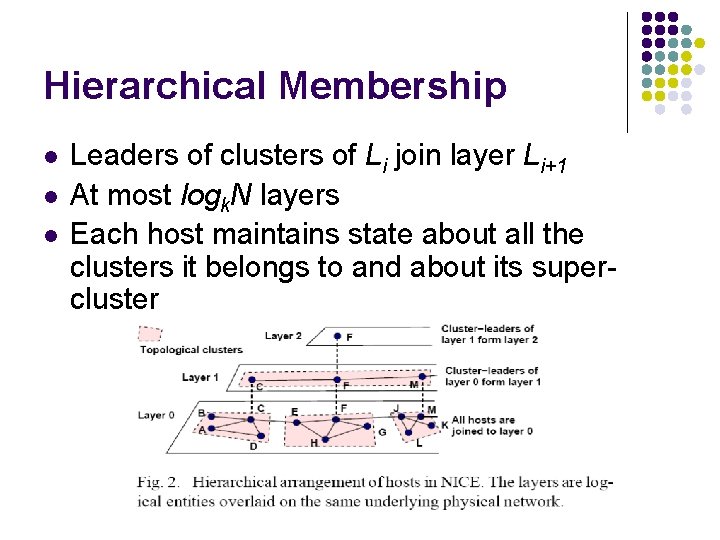

Hierarchical Membership

- Leaders of the clusters in layer Li join layer Li+1
- At most log_k N layers
- Each host maintains state about all the clusters it belongs to and about its super-cluster
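To make the layer count concrete, here is a small sketch that builds the layers bottom-up. It ignores distances entirely: hosts are grouped in list order and the first host of each cluster stands in for the leader, both of which are simplifying assumptions. The helper names `partition` and `build_layers` are hypothetical.

```python
def partition(hosts, k):
    """Group hosts into consecutive clusters of size k; fold a short tail into the last one."""
    clusters = [hosts[i:i + k] for i in range(0, len(hosts), k)]
    if len(clusters) > 1 and len(clusters[-1]) < k:
        tail = clusters.pop()
        clusters[-1].extend(tail)  # resulting size is below 2k, so within 3k - 1
    return clusters

def build_layers(hosts, k):
    """Return the list of layers; layers[i] holds the hosts present in layer Li."""
    layers = [list(hosts)]
    while True:
        clusters = partition(layers[-1], k)
        if len(clusters) <= 1:
            break  # the highest layer consists of a single cluster
        # Placeholder leader choice: the first member of each cluster.
        layers.append([cluster[0] for cluster in clusters])
    return layers

layers = build_layers(list(range(27)), 3)
print([len(layer) for layer in layers])  # [27, 9, 3]
```

With N = 27 and k = 3 the sketch produces three layers, which matches log_3 27 = 3.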

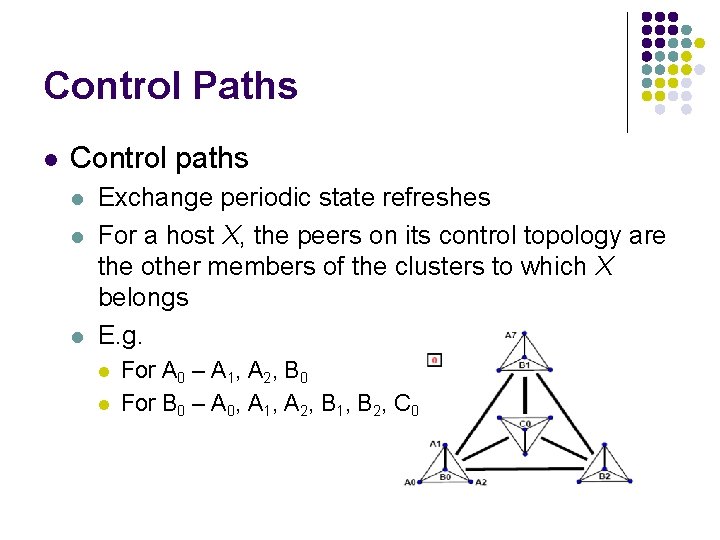

Control Paths

- Control paths
  - Exchange periodic state refreshes
  - For a host X, the peers on its control topology are the other members of the clusters to which X belongs
- E.g.
  - For A0: A1, A2, B0
  - For B0: A0, A1, A2, B1, B2, C0
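The peer rule can be checked against the example on this slide. The two cluster memberships below are an assumption inferred from the listed peers (the figure itself is not available in the text), and `control_peers` is a hypothetical helper name.

```python
def control_peers(host, clusters):
    """Return every other member of every cluster that contains the host."""
    peers = set()
    for cluster in clusters:
        if host in cluster:
            peers |= set(cluster) - {host}
    return sorted(peers)

# Assumed memberships: B0 leads a layer-0 cluster and also sits in a layer-1 cluster.
clusters = [
    ["A0", "A1", "A2", "B0"],  # layer 0
    ["B0", "B1", "B2", "C0"],  # layer 1
]

print(control_peers("A0", clusters))
print(control_peers("B0", clusters))
```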

Data Paths

- Data paths
  - Source-specific tree
  - Built by running the following forwarding algorithm

Data Paths

- Host h receives data from host p
  - h forwards the received packets to all clusters that h belongs to, except the cluster that contains p
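The forwarding rule above can be simulated on a small hierarchy. This is only a sketch under assumed data: the cluster layout and host names are invented, and packets are modelled as queue entries rather than network messages.

```python
from collections import Counter, deque

def multicast(source, clusters):
    """Simulate the forwarding rule and count how many copies each host receives."""
    copies = Counter()
    pending = deque([(source, None)])  # (host holding the packet, peer it came from)
    while pending:
        h, p = pending.popleft()
        for cluster in clusters:
            # Forward into every cluster of h except the one the packet arrived from.
            if h in cluster and p not in cluster:
                for peer in cluster:
                    if peer != h:
                        copies[peer] += 1
                        pending.append((peer, h))
    return copies

# Hypothetical two-layer hierarchy: B0, B1, B2 lead the layer-0 clusters.
clusters = [
    ["A0", "A1", "A2", "B0"],
    ["A3", "A4", "B1"],
    ["A5", "A6", "B2"],
    ["B0", "B1", "B2"],  # layer 1
]

copies = multicast("A3", clusters)
print(sorted(copies.items()))
```

In this layout every host other than the source receives exactly one copy, whichever host is the source, which is what makes the resulting structure a source-specific tree.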

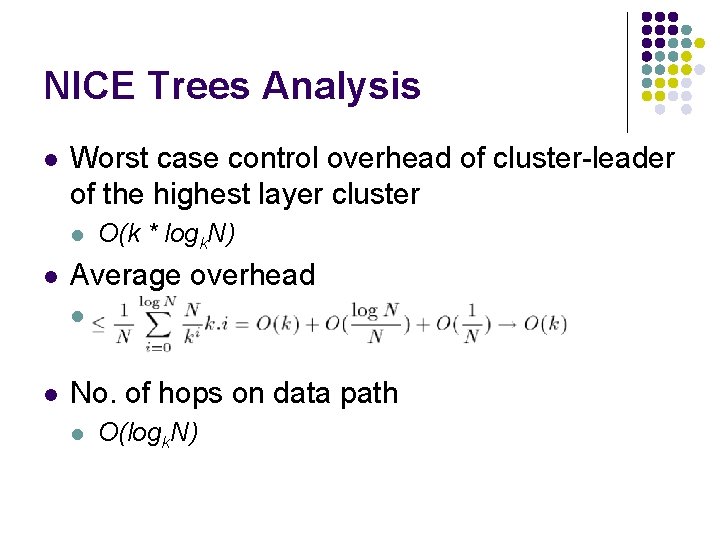

NICE Trees Analysis

- Worst-case control overhead, at the cluster leader of the highest-layer cluster
  - O(k log_k N)
- Average overhead
- Number of hops on the data path
  - O(log_k N)
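The worst-case bound can be motivated by a rough count, assuming (as on the Hierarchical Membership slides) at most log_k N layers and at most 3k - 1 members per cluster. The leader of the highest-layer cluster belongs to one cluster in every layer, so its number of control peers is bounded by:

$$
\underbrace{\log_k N}_{\text{layers}} \times \underbrace{(3k - 2)}_{\text{peers per cluster}} = O(k \log_k N)
$$

For example, with k = 3 and N = 27 this gives at most 3 × 7 = 21 peers.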

Protocol Description

- Assumptions
  - All hosts know the "Rendezvous Point" (RP) host
  - RP is always the leader of the single cluster in the highest layer
  - RP interacts with other hosts on the control path, but not on the data path

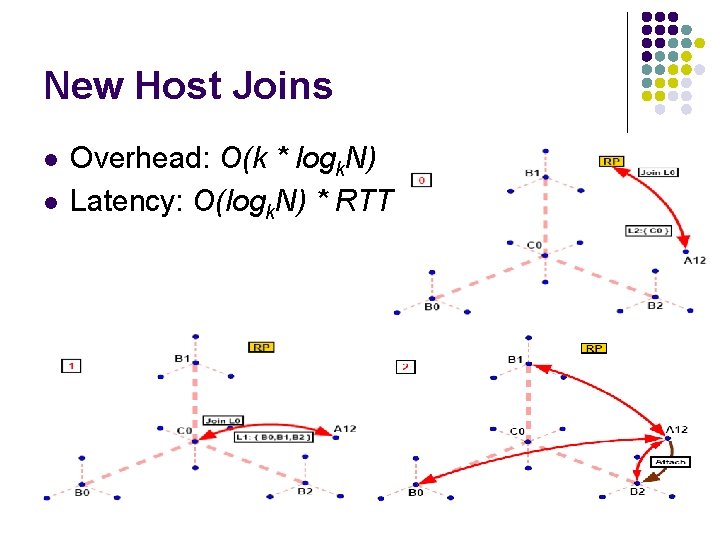

New Host Joins

- Join procedure
  - Contact RP to get the members of the cluster in the highest layer
  - Loop until layer 0 is reached
    - Query the members of the returned cluster and find the closest one, X
    - Get the members of the child cluster of X

New Host Joins

- Overhead: O(k log_k N)
- Latency: O(log_k N) RTTs
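The join descent can be sketched as a loop over layers. Everything concrete here is an assumption for illustration: the function name `find_join_cluster`, the `led_cluster` lookup table (standing in for the query a real host would send to X), the host names, and the positions on a line used as distances.

```python
def find_join_cluster(new_pos, top_cluster, top_layer, led_cluster, dist):
    """Walk down from the highest layer; return the layer-0 cluster to join and the probe count."""
    cluster, layer, probes = top_cluster, top_layer, 0
    while layer > 0:
        probes += len(cluster)  # one probe per member of the returned cluster
        closest = min(cluster, key=lambda member: dist(new_pos, member))
        layer -= 1
        cluster = led_cluster[(closest, layer)]  # the child cluster led by the closest member
    return cluster, probes

# Hypothetical hosts on a line; B0, B1, B2 lead the layer-0 clusters.
pos = {"A0": 5, "A1": 15, "B0": 10, "A2": 45, "A3": 55, "B1": 50,
       "A4": 85, "A5": 95, "B2": 90}
led_cluster = {
    ("B0", 0): ["A0", "A1", "B0"],
    ("B1", 0): ["A2", "A3", "B1"],
    ("B2", 0): ["A4", "A5", "B2"],
}

def dist_to(new_pos, member):
    return abs(new_pos - pos[member])

target, probes = find_join_cluster(52, ["B0", "B1", "B2"], 1, led_cluster, dist_to)
print(target, probes)
```

Each layer costs one round of probes to a cluster of O(k) members, which is consistent with the O(k log_k N) overhead and O(log_k N) round trips stated above.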

New Host Joins

- The cluster leader may change as members join or leave
- On a change in leadership of a cluster C in layer Lj
  - The current leader of C removes itself from all layers above Lj
  - Each affected layer chooses a new leader
  - The new leaders join their super-clusters
    - If the state of the super-cluster is not locally available, contact RP

Cluster Maintenance and Refinement

- The cluster leader periodically checks the size of its cluster in layer Li
  - If the cluster size exceeds the 3k - 1 limit
    - Split the cluster into two equal-sized clusters such that the larger of the two cluster radii is minimized
  - If the cluster size is under k
    - The leader finds the closest host in layer Li+1 and merges its cluster with that host's cluster
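The split criterion can be illustrated with a brute-force search. The slides do not say how the split is computed, so this exhaustive enumeration is purely an assumption for illustration and is only practical for small clusters. The radius is taken here as the smallest worst-case distance from any member to the rest, which is also an assumed definition.

```python
from itertools import combinations

def radius(cluster, dist):
    """Smallest worst-case distance from a candidate center to the cluster's members."""
    return min(max(dist(center, other) for other in cluster) for center in cluster)

def split_cluster(cluster, dist):
    """Try every equal-sized split and keep the one with the smallest larger radius."""
    half = len(cluster) // 2
    best = None
    for part in combinations(cluster, half):
        first = list(part)
        second = [h for h in cluster if h not in part]
        cost = max(radius(first, dist), radius(second, dist))
        if best is None or cost < best[0]:
            best = (cost, first, second)
    return best[1], best[2]

# Hypothetical oversized cluster (k = 2, so the limit 3k - 1 is 5) on a line.
pos = {"h0": 0, "h1": 1, "h2": 2, "h3": 10, "h4": 11, "h5": 12}

def line_distance(a, b):
    return abs(pos[a] - pos[b])

first, second = split_cluster(list(pos), line_distance)
print(first, second)
```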

Cluster Maintenance and Refinement

- Each member, H, in any layer Li periodically probes all members of its super-cluster to identify the closest one
- If a host, J, is found that is closer to H than H's current leader, H moves to the cluster led by J
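A minimal sketch of this refinement check follows, assuming the members of the super-cluster are the leaders of the neighbouring layer-Li clusters. The function name `refine`, the host names, and the line positions are hypothetical.

```python
def refine(host, current_leader, supercluster, dist):
    """Return the leader the host should be attached to after probing its super-cluster."""
    closest = min(supercluster, key=lambda leader: dist(host, leader))
    if dist(host, closest) < dist(host, current_leader):
        return closest  # a strictly closer leader exists, so switch clusters
    return current_leader

# Hypothetical positions on a line: H currently sits under leader J0.
pos = {"H": 20, "J0": 0, "J1": 18, "J2": 60}

def line_distance(a, b):
    return abs(pos[a] - pos[b])

new_leader = refine("H", "J0", ["J0", "J1", "J2"], line_distance)
print(new_leader)  # J1
```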

Host Departure and Leader Selection

- Node H leaves
  - Graceful leave
    - H sends a leave message to all clusters it belongs to
  - Ungraceful leave
    - Other hosts detect the departure when they stop receiving H's periodic refreshes
- If H is a leader
  - Each remaining member, J, selects a new leader independently
  - Conflicts between multiple leaders are resolved through the exchange of refreshes
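Detecting an ungraceful leave amounts to a timeout on the periodic refreshes. The sketch below assumes each host records the time of the last refresh heard from every peer; the timeout value, the timestamps, and the name `detect_departed` are illustrative assumptions, not values from the slides.

```python
def detect_departed(last_refresh, now, timeout):
    """Return peers whose last refresh is older than the timeout."""
    return sorted(h for h, seen in last_refresh.items() if now - seen > timeout)

# Hypothetical timestamps in seconds; H has been silent for 25 s.
last_refresh = {"A": 100.0, "B": 93.0, "H": 80.0}
departed = detect_departed(last_refresh, now=105.0, timeout=15.0)
print(departed)  # ['H']
```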

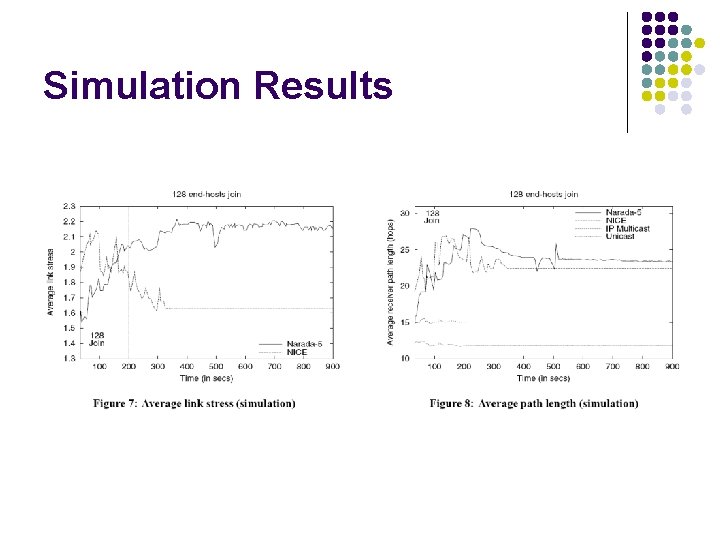

Simulation Results

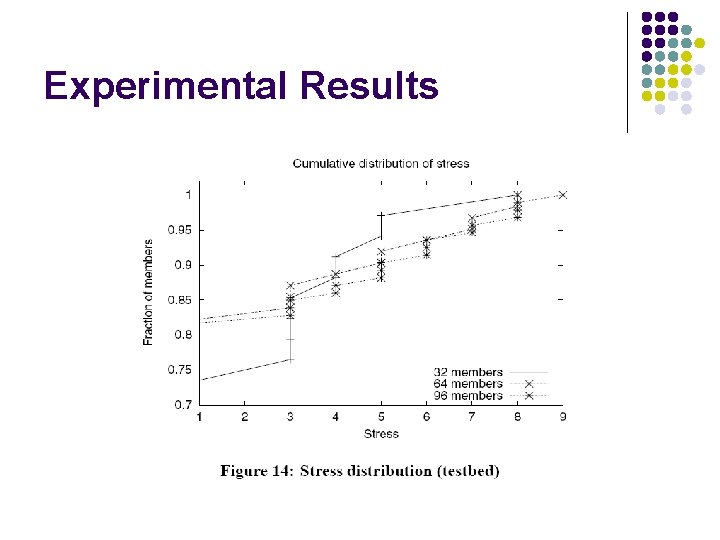

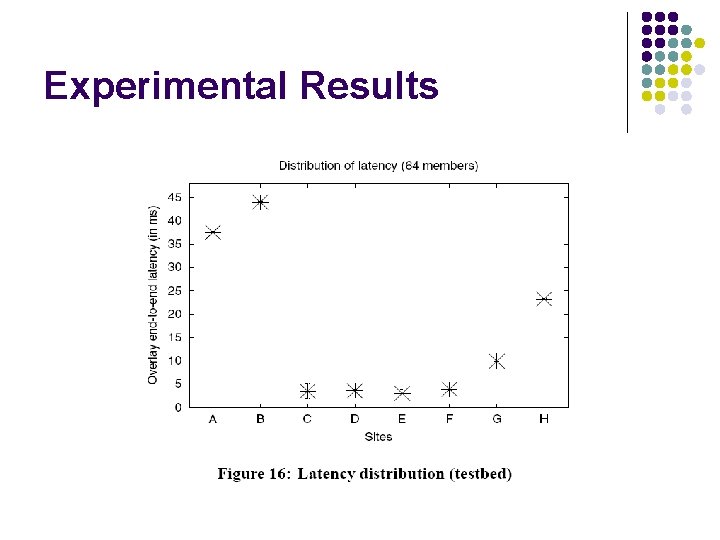

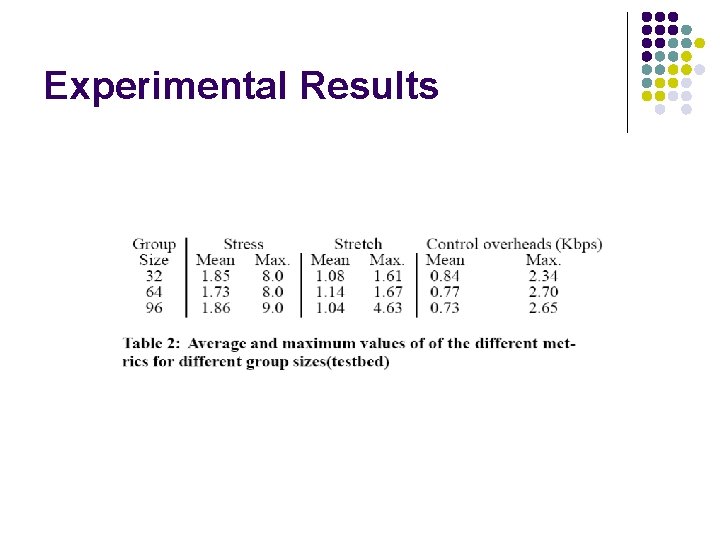

Experimental Results

Conclusions

- Proposed a new application layer multicast protocol
  - Low control overhead
  - Low link stress
