
SAMPLING DEAD BLOCK PREDICTION FOR LAST-LEVEL CACHES

Samira Khan, Yingying Tian, Daniel A. Jiménez

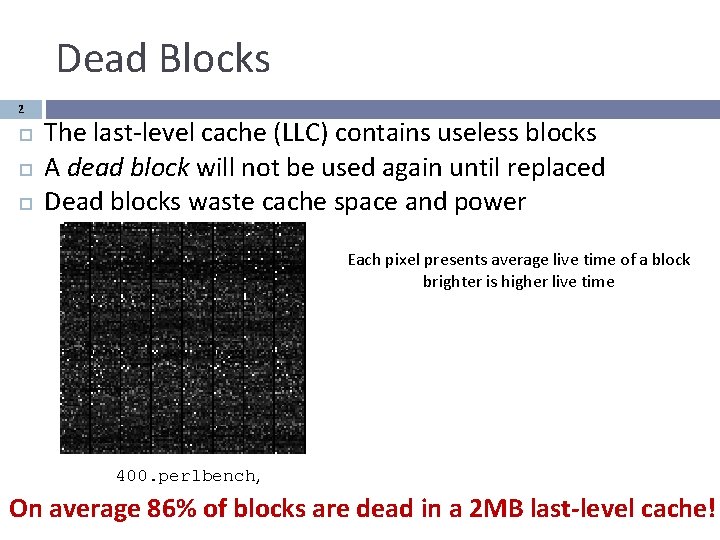

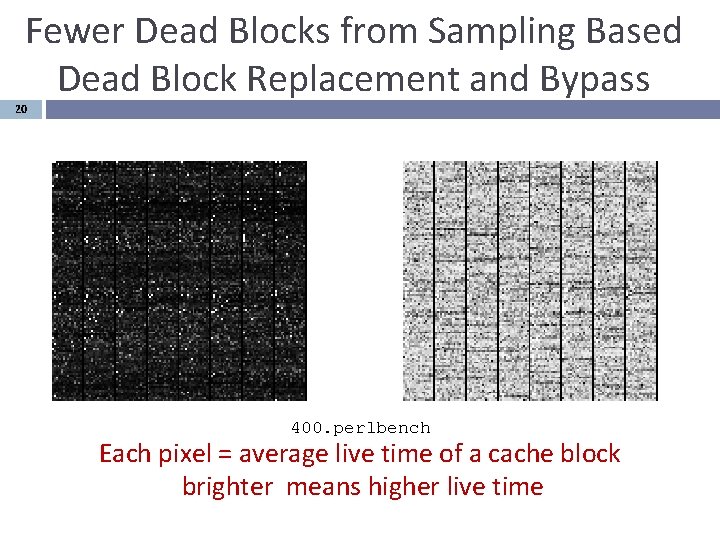

Dead Blocks

- The last-level cache (LLC) contains useless blocks.
- A dead block will not be referenced again before it is evicted.
- Dead blocks waste cache space and power.

[Figure: 400.perlbench; each pixel represents the average live time of a block, and brighter means higher live time.]

On average, 86% of blocks are dead in a 2 MB last-level cache!

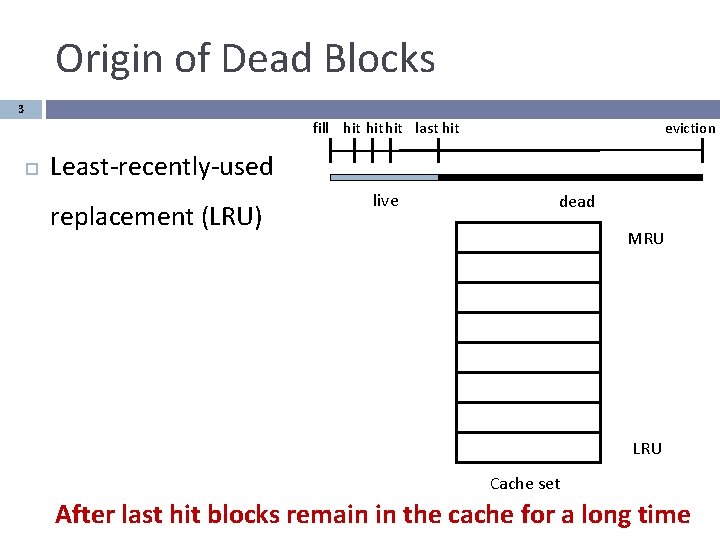

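Origin of Dead Blocks

[Figure: lifetime of a block in an LRU-managed cache set: fill, hit, hit, last hit, eviction. The block is live from the fill until its last hit and dead from the last hit until its eviction, while it drifts from the MRU to the LRU position.]

Under least-recently-used (LRU) replacement, blocks remain in the cache for a long time after their last hit.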
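The live/dead lifetime above can be reproduced with a minimal sketch of a single LRU-managed set. It assumes time is counted in accesses to the set rather than in cycles, and `lru_dead_times` is a hypothetical helper: for every evicted block it reports how long the block was live (fill to last hit) and how long it then sat dead (last hit to eviction).

```python
from collections import OrderedDict


def lru_dead_times(trace, ways):
    """Simulate one LRU-managed cache set (hypothetical helper).

    Returns a list of (block, live_time, dead_time) for every evicted block,
    where live_time = last access - fill and dead_time = eviction - last access.
    Time is the index of the access in `trace` (an assumption, not cycles).
    """
    # block -> (fill_time, last_access_time); the last item is the MRU block
    stack = OrderedDict()
    report = []
    for t, block in enumerate(trace):
        if block in stack:
            fill, _ = stack.pop(block)
            stack[block] = (fill, t)  # hit: move the block to MRU
            continue
        if len(stack) == ways:
            # miss in a full set: evict the LRU block and record its lifetime
            victim, (fill, last) = stack.popitem(last=False)
            report.append((victim, last - fill, t - last))
        stack[block] = (t, t)  # fill the new block at MRU
    return report
```

With 4 ways and the trace a, a, b, c, d, e, block a is live for 1 access and then dead for 4 more before e evicts it: `lru_dead_times(list("aabcde"), 4)` gives `[("a", 1, 4)]`, the long tail after the last hit that the slide describes.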

Dead Block Predictors

Dead block predictors identify dead blocks. Problems with current predictors:

- They consume significant state.
- They update the predictor on every access.
- They depend on the LRU replacement policy.
- They do not work well in the last-level cache, because the L1 and L2 caches filter out the temporal locality.

Goal: a dead block predictor that uses far less state and works well for the LLC.

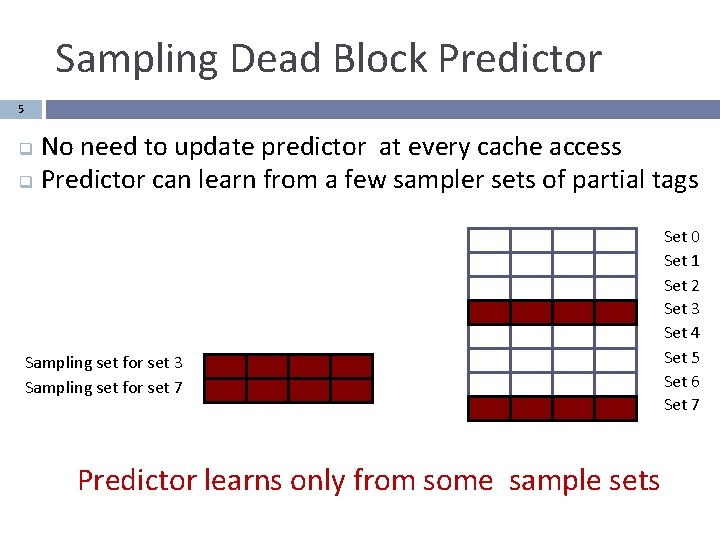

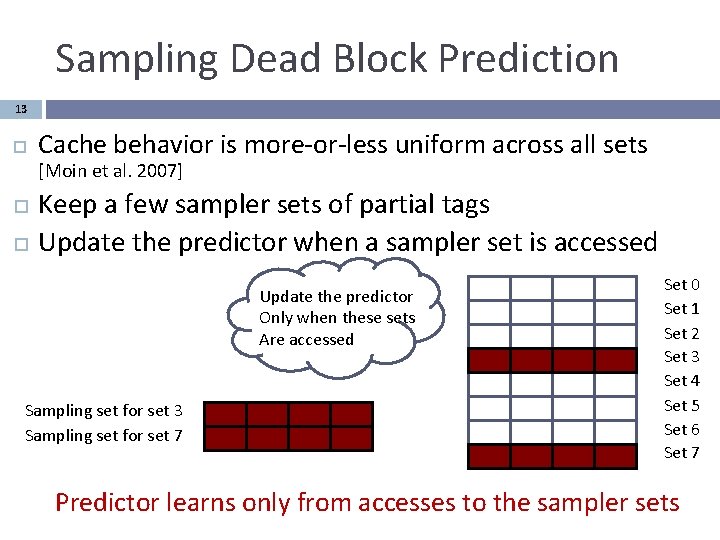

Sampling Dead Block Predictor

- There is no need to update the predictor on every cache access.
- The predictor can learn from a few sampler sets of partial tags.

[Figure: an eight-set cache (sets 0 to 7) with sampler sets for set 3 and set 7; the predictor learns only from these sample sets.]

Contribution

Prediction using sampling:

- The predictor learns from only a few sample sets.
- Sampled sets do not need to reflect real sets.

Decoupled replacement policy:

- The cache can use a different replacement policy than the sampler.

Skewed predictor

Results:

- Speedup of 5.9% for single-thread workloads.
- Weighted speedup of 12.5% for multi-core workloads.
- The sampling predictor consumes little power.

Outline: Introduction, Background, Sampling Predictor, Methodology, Results, Conclusion


Dead Block Predictors

- Trace based [Lai & Falsafi 2001]: predicts the last touch based on the sequence of instruction PCs.
- Time based [Hu et al. 2002]: predicts a block dead after a certain number of cycles.
- Counting based [Kharbutli & Solihin 2008]: predicts a block dead after a certain number of accesses.
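To make the counting-based idea concrete, here is a deliberately simplified sketch, not the published predictor: it assumes the predictor is keyed only by block address, remembers how many accesses the block received during its previous stay in the cache, and predicts it dead once the current stay reaches that count. The class `CountingPredictor` and its methods are hypothetical; the predictors of Kharbutli & Solihin may differ in detail (for example, in how the table is indexed and how confidence is tracked).

```python
class CountingPredictor:
    """Simplified counting-based dead block predictor (hypothetical sketch).

    Assumption: state is keyed by block address only. A block is predicted
    dead once its access count in the current generation reaches the count
    it reached in its previous generation.
    """

    def __init__(self):
        self.learned = {}  # block address -> accesses seen in previous generation
        self.count = {}    # block address -> accesses seen in current generation

    def on_fill(self, block):
        self.count[block] = 1  # the filling access counts as the first access

    def on_hit(self, block):
        self.count[block] += 1

    def on_evict(self, block):
        # Learn how many accesses this generation actually received.
        self.learned[block] = self.count.pop(block)

    def is_dead(self, block):
        threshold = self.learned.get(block)
        return threshold is not None and self.count.get(block, 0) >= threshold
```

A block filled and hit twice (3 accesses) before eviction is predicted dead on its next stay only after its third access.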

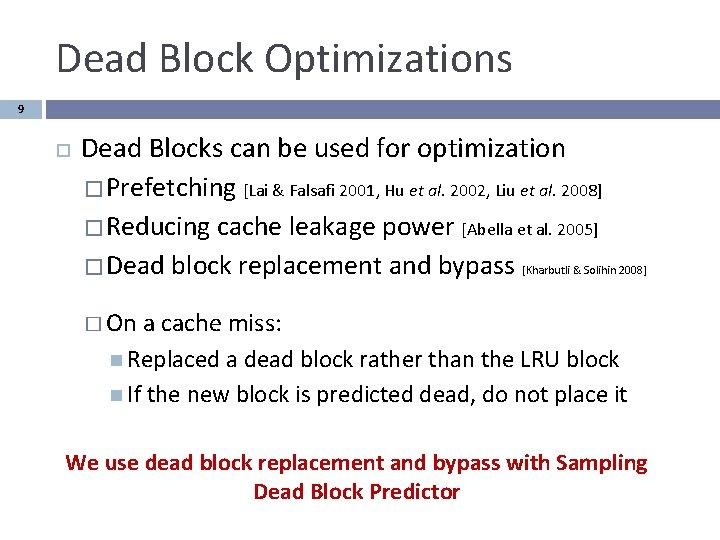

Dead Block Optimizations

Dead blocks can be used for optimization:

- Prefetching [Lai & Falsafi 2001, Hu et al. 2002, Liu et al. 2008]
- Reducing cache leakage power [Abella et al. 2005]
- Dead block replacement and bypass [Kharbutli & Solihin 2008]: on a cache miss, replace a dead block rather than the LRU block; if the new block is predicted dead, do not place it in the cache (bypass).

We use dead block replacement and bypass with the Sampling Dead Block Predictor.
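The replacement-and-bypass rule can be sketched as a victim-selection function for a full set. This is a minimal sketch under stated assumptions: `choose_victim` is a hypothetical helper, the set is given as a list ordered from MRU to LRU, and when several blocks are predicted dead it picks the one closest to the LRU position (the slide only says "a dead block", so that tie-break is an assumption).

```python
def choose_victim(set_blocks, predicted_dead, new_block_dead):
    """Dead block replacement and bypass for a full set (hypothetical helper).

    set_blocks: resident block tags ordered from MRU (index 0) to LRU (last).
    predicted_dead: tags the predictor currently marks as dead.
    new_block_dead: the prediction for the incoming block.
    Returns None to bypass the incoming block, otherwise the tag to evict.
    """
    if new_block_dead:
        return None  # bypass: never place a block that is predicted dead
    for tag in reversed(set_blocks):  # scan from LRU toward MRU (assumed tie-break)
        if tag in predicted_dead:
            return tag  # replace a dead block rather than the LRU block
    return set_blocks[-1]  # no dead block: fall back to plain LRU
```

For a set a, b, c, d (MRU to LRU) with b predicted dead, the miss evicts b instead of the LRU block d.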


Reference Trace Predictor [Lai & Falsafi 2001]

- Predicts the last touch based on the sequence of instructions that access a block.
- Encoding: truncated addition of instruction PCs, called the signature.
- The predictor table is indexed by a hash of the signature and holds 2-bit saturating counters.
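The signature and counter mechanics can be sketched as follows. The widths and the hash are assumptions chosen for illustration, not values from the slides: a 15-bit signature (`SIG_BITS`), a 4096-entry table (`TABLE_BITS = 12`), a hypothetical XOR-fold `table_index`, and a dead threshold of 2 on each 2-bit counter.

```python
SIG_BITS = 15      # assumed signature width
TABLE_BITS = 12    # assumed: 4096-entry predictor table
COUNTER_MAX = 3    # 2-bit saturating counter holds 0..3
THRESHOLD = 2      # assumed: predict dead when the counter is >= 2


def update_signature(signature, pc):
    """Truncated addition: add the PC and keep only the low SIG_BITS bits."""
    return (signature + pc) & ((1 << SIG_BITS) - 1)


def table_index(signature):
    """Hypothetical hash: XOR-fold the signature down to TABLE_BITS bits."""
    return (signature ^ (signature >> TABLE_BITS)) & ((1 << TABLE_BITS) - 1)


class TracePredictor:
    def __init__(self):
        self.counters = [0] * (1 << TABLE_BITS)

    def predict_dead(self, signature):
        return self.counters[table_index(signature)] >= THRESHOLD

    def train(self, signature, dead):
        i = table_index(signature)
        if dead:  # saturate upward toward "dead"
            self.counters[i] = min(self.counters[i] + 1, COUNTER_MAX)
        else:     # saturate downward toward "live"
            self.counters[i] = max(self.counters[i] - 1, 0)
```

Truncation means the signature wraps around: adding 1 to `0x7FFF` yields 0 with a 15-bit signature.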


Reference Trace Predictor [Lai & Falsafi 2001]: Predictor Update

[Figure: an example PC sequence (PC i: ld a, PC j: ld b, PC k: st c, PC l: ld a) applied to an eight-set cache. A miss in set 2 replaces a block, and the predictor is updated with the evicted block as dead; hits in sets 4 and 7 update the predictor as live.]

The predictor learns from every access to the cache.
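The "learn from every access" behavior can be sketched by keeping a signature with each resident block. On a hit, the block's old signature evidently was not its last touch, so it is trained live; on an eviction, the final signature was the last touch, so it is trained dead. `TraceTrainedCache` is a hypothetical class, the 15-bit signature is an assumption, and a dictionary stands in for the hashed counter table.

```python
class TraceTrainedCache:
    """Hypothetical sketch of reference-trace training on every access."""

    SIG_MASK = (1 << 15) - 1  # assumed 15-bit truncated signature

    def __init__(self):
        self.block_sig = {}  # resident block address -> current signature
        self.counter = {}    # signature -> 2-bit saturating counter (0..3)

    def _train(self, sig, dead):
        c = self.counter.get(sig, 0)
        self.counter[sig] = min(c + 1, 3) if dead else max(c - 1, 0)

    def access(self, block, pc):
        if block in self.block_sig:
            # Hit: the old signature did not end the block's life -> live.
            self._train(self.block_sig[block], dead=False)
            self.block_sig[block] = (self.block_sig[block] + pc) & self.SIG_MASK
        else:
            # Miss: the block is filled and its signature starts at this PC.
            self.block_sig[block] = pc & self.SIG_MASK

    def evict(self, block):
        # The final signature was the last touch -> dead.
        self._train(self.block_sig.pop(block), dead=True)
```

Because `access` trains on every hit and `evict` on every eviction, the table is updated on every cache access, which is the cost the sampling predictor removes.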


Sampling Dead Block Prediction

- Cache behavior is more or less uniform across all sets [Moin et al. 2007].
- Keep a few sampler sets of partial tags.
- Update the predictor only when a sampler set is accessed.

[Figure: an eight-set cache (sets 0 to 7) with sampler sets for set 3 and set 7; the predictor learns only from accesses to the sampler sets.]

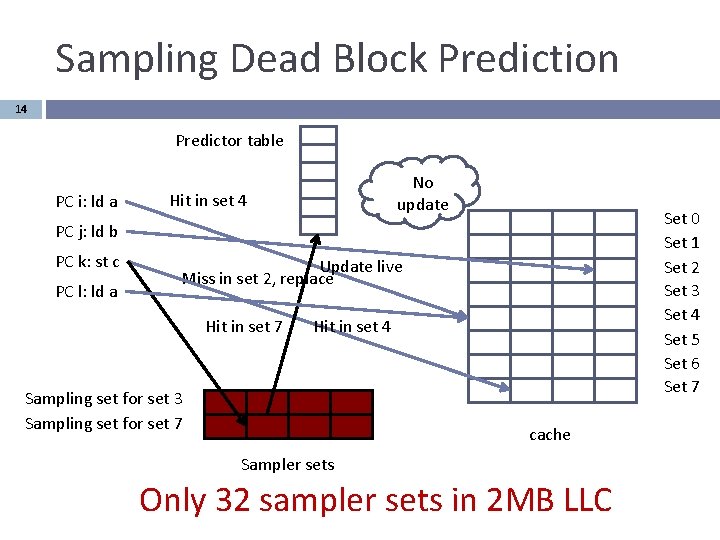

Sampling Dead Block Prediction: Predictor Update

[Figure: the PC sequence PC i: ld a, PC j: ld b, PC k: st c, PC l: ld a applied to the cache and to its sampler sets for sets 3 and 7. Accesses to sets without a sampler set, such as the miss in set 2 and the hit in set 4, cause no predictor update; the hit in set 7 updates the predictor as live.]

Only 32 sampler sets are used in a 2 MB LLC.
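A minimal sketch of the sampler follows. From the slides it takes 32 sampler sets for a 2 MB LLC, a 12-way sampler, and a predictor indexed by the PC. Everything else is an assumption: 64-byte blocks (so 2048 sets, one sampled every 64 sets), 15-bit partial tags, a 4096-entry table of 2-bit counters with a dead threshold of 2, and a modulo "hash" of the PC. Each sampler entry remembers the PC of the block's last access; a sampler hit trains that PC live, and a sampler LRU eviction trains it dead.

```python
from collections import OrderedDict

NUM_SETS = 2048           # assumed: 2 MB, 16-way, 64-byte blocks
SAMPLE_EVERY = 64         # assumed spacing: 2048 / 64 = 32 sampler sets
SAMPLER_WAYS = 12         # reduced-associativity sampler
TAG_MASK = (1 << 15) - 1  # assumed 15-bit partial tags
TABLE_SIZE = 4096         # assumed predictor table size


class SamplingPredictor:
    def __init__(self):
        self.counters = [0] * TABLE_SIZE  # 2-bit saturating counters
        # One LRU-ordered sampler set per sampled cache set: partial tag -> last PC.
        self.sampler = {s: OrderedDict() for s in range(0, NUM_SETS, SAMPLE_EVERY)}

    def _train(self, pc, dead):
        i = pc % TABLE_SIZE  # hypothetical hash of the PC
        c = self.counters[i]
        self.counters[i] = min(c + 1, 3) if dead else max(c - 1, 0)

    def predict_dead(self, pc):
        return self.counters[pc % TABLE_SIZE] >= 2

    def access(self, set_index, tag, pc):
        s = self.sampler.get(set_index)
        if s is None:
            return  # not a sampled set: the predictor is not updated
        ptag = tag & TAG_MASK
        if ptag in s:
            self._train(s.pop(ptag), dead=False)  # previous PC was not the last touch
        elif len(s) == SAMPLER_WAYS:
            _, victim_pc = s.popitem(last=False)  # sampler LRU eviction
            self._train(victim_pc, dead=True)     # victim's last PC was the last touch
        s[ptag] = pc  # (re)insert at MRU with the new last-access PC
```

Every cache access can still call `predict_dead`, but only accesses that land in one of the 32 sampled sets change predictor state.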

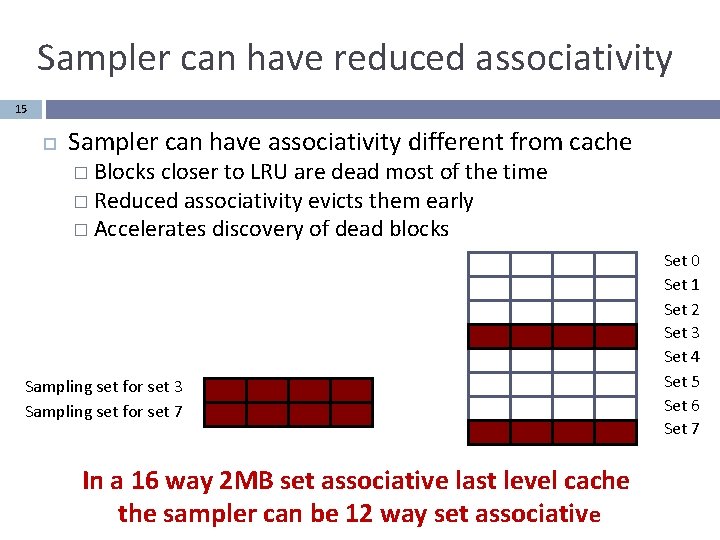

Sampler Can Have Reduced Associativity

- The sampler can have an associativity different from that of the cache.
- Blocks close to the LRU position are dead most of the time.
- Reduced associativity evicts them early, which accelerates the discovery of dead blocks.

For a 16-way set-associative 2 MB last-level cache, the sampler can be 12-way set associative.

[Figure: an eight-set cache (sets 0 to 7) with sampler sets for set 3 and set 7.]
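The effect of reduced associativity can be checked with a small experiment: run the same access stream through a 16-way and a 12-way LRU set and see when the first block is evicted. `first_eviction` is a hypothetical helper, and the trace (one block followed by 16 distinct blocks) is made up for illustration.

```python
from collections import OrderedDict


def first_eviction(trace, ways):
    """Return (time, block) of the first LRU eviction in a `ways`-way set, or None."""
    stack = OrderedDict()  # keys in LRU-to-MRU order
    for t, block in enumerate(trace):
        if block in stack:
            stack.move_to_end(block)  # hit: promote to MRU
        else:
            if len(stack) == ways:
                victim, _ = stack.popitem(last=False)  # evict the LRU block
                return t, victim
            stack[block] = None
    return None


trace = ["a"] + [f"b{i}" for i in range(16)]
```

Here `first_eviction(trace, 12)` returns `(12, "a")` while `first_eviction(trace, 16)` returns `(16, "a")`, so the 12-way sampler reports block a as dead four accesses earlier. The trade-off is that a block reused at an LRU stack distance between 12 and 16 hits in the 16-way cache but is evicted from the 12-way sampler.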

Sampler Decouples Replacement Policy

- The predictor learns from the LRU policy in the sampler.
- The cache can deploy a cheap replacement policy, e.g. random replacement.
- This saves the state and power needed for the LRU policy.

[Figure: the sampler sets for set 3 and set 7 use LRU replacement, while cache sets 0 to 7 use random replacement.]
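Because the predictor learns from LRU in the sampler, the cache itself needs no recency state. The sketch below assumes each cache block stores a one-bit dead prediction; `random_victim` is a hypothetical helper that prefers a predicted-dead way (the lowest-numbered one, an arbitrary choice) and otherwise picks a way at random.

```python
import random


def random_victim(ways, dead_bits, rng):
    """Pick a victim way in a randomly replaced cache set (hypothetical helper).

    dead_bits[w] is the assumed one-bit dead prediction for the block in way w.
    No LRU state is consulted: a predicted-dead block is preferred, and
    otherwise a way is chosen uniformly at random.
    """
    dead_ways = [w for w in range(ways) if dead_bits[w]]
    if dead_ways:
        return dead_ways[0]
    return rng.randrange(ways)
```

Passing an explicit `random.Random` instance keeps simulations reproducible, e.g. `random_victim(16, bits, random.Random(0))`.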

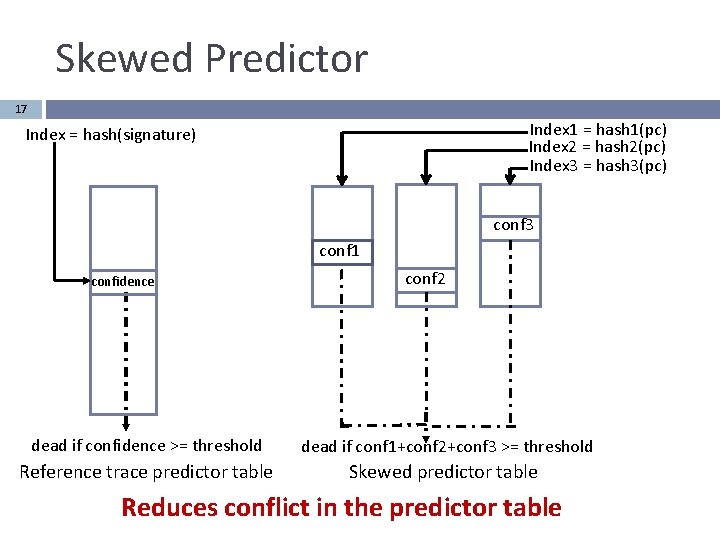

Skewed Predictor

[Figure: comparison of two predictor tables. Reference trace predictor table: index = hash(signature); a block is predicted dead if confidence >= threshold. Skewed predictor table: index1 = hash1(pc), index2 = hash2(pc), index3 = hash3(pc), yielding confidences conf1, conf2, conf3; a block is predicted dead if conf1 + conf2 + conf3 >= threshold.]

The skewed predictor reduces conflicts in the predictor table.
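The skewed rule "dead if conf1 + conf2 + conf3 >= threshold" can be sketched with three tables of 2-bit counters, each indexed by a different hash of the PC. The table size, the threshold of 8, and the three hash functions are assumptions for illustration; the point is that two PCs that collide in one table are unlikely to collide in all three.

```python
TABLE_SIZE = 4096  # assumed entries per table
THRESHOLD = 8      # assumed; the sum of three 2-bit counters ranges over 0..9


def _hashes(pc):
    """Three hypothetical, different hash functions of the PC."""
    return (
        pc % TABLE_SIZE,
        ((pc >> 4) ^ pc) % TABLE_SIZE,
        ((pc * 2654435761) >> 12) % TABLE_SIZE,
    )


class SkewedPredictor:
    def __init__(self):
        self.tables = [[0] * TABLE_SIZE for _ in range(3)]

    def confidence(self, pc):
        # conf1 + conf2 + conf3, one counter read from each table
        return sum(t[i] for t, i in zip(self.tables, _hashes(pc)))

    def predict_dead(self, pc):
        return self.confidence(pc) >= THRESHOLD

    def train(self, pc, dead):
        # Every table's counter for this PC moves in the same direction.
        for t, i in zip(self.tables, _hashes(pc)):
            t[i] = min(t[i] + 1, 3) if dead else max(t[i] - 1, 0)
```

After two dead trainings the summed confidence is 6, below the assumed threshold; a third raises it to 9 and the PC is predicted dead.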



Methodology

- CMP$im cycle-accurate simulator [Jaleel et al. 2008]
- 2 MB per core, 16-way set-associative shared LLC
- 32 KB I+D L1, 256 KB L2
- 200-cycle DRAM access time
- 19 memory-intensive SPEC CPU 2006 benchmarks
- 10 mixes of SPEC CPU 2006 for 4 cores
- Power numbers are from CACTI 5.3 [Shyamkumar et al. 2008]

Fewer Dead Blocks from Sampling-Based Dead Block Replacement and Bypass

[Figure: 400.perlbench; each pixel represents the average live time of a cache block, and brighter means higher live time.]

Space Overhead

[Figure: storage overhead in KB (0 to 120), broken down into sampler, predictor storage, and per-line metadata, for the reference trace DBP, the counting-based DBP, and the sampling DBP.]

Sampling dead block prediction uses a 3 KB predictor table, one bit per cache line, and a 6.75 KB sampler tag array.
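Two of the storage figures can be reproduced with simple arithmetic. The configuration is an assumption, not stated on the slide: three tables of 4096 two-bit counters (the skewed organization), which gives exactly 3 KB, and 64-byte blocks, which gives 32768 lines in a 2 MB cache and therefore 4 KB for one bit per line. The helper names are hypothetical; the 6.75 KB sampler figure is not derived here.

```python
BITS_PER_KB = 8 * 1024


def predictor_table_kb(tables, entries, bits_per_counter):
    """Storage of the predictor tables in KB."""
    return tables * entries * bits_per_counter / BITS_PER_KB


def per_line_metadata_kb(cache_bytes, block_bytes, bits_per_line):
    """Storage of per-cache-line metadata (e.g. a dead bit) in KB."""
    return (cache_bytes // block_bytes) * bits_per_line / BITS_PER_KB
```

Under these assumptions, `predictor_table_kb(3, 4096, 2)` is 3.0 and `per_line_metadata_kb(2 * 1024 * 1024, 64, 1)` is 4.0.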

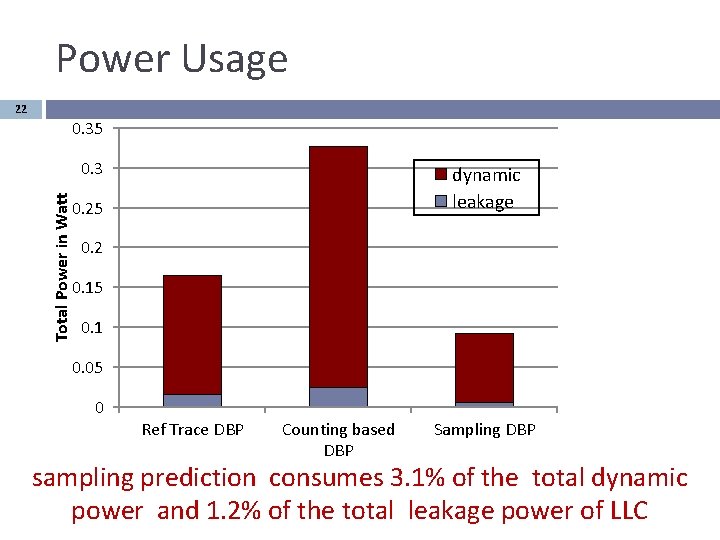

Power Usage

[Figure: total power in watts (0 to 0.35), split into dynamic and leakage power, for the reference trace DBP, the counting-based DBP, and the sampling DBP.]

Sampling prediction consumes 3.1% of the total dynamic power and 1.2% of the total leakage power of the LLC.

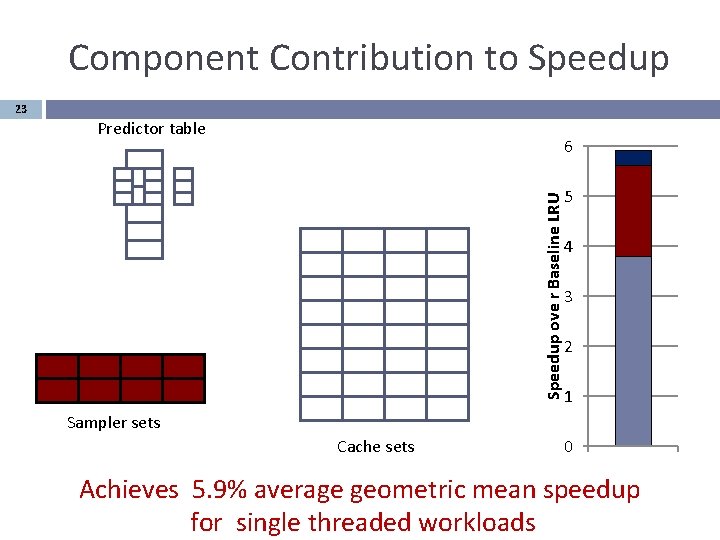

Component Contribution to Speedup

[Figure: speedup over the baseline LRU in percent (0 to 6), with contributions labeled predictor table, sampler sets, and cache sets.]

The sampling predictor achieves a 5.9% average (geometric mean) speedup for single-threaded workloads.

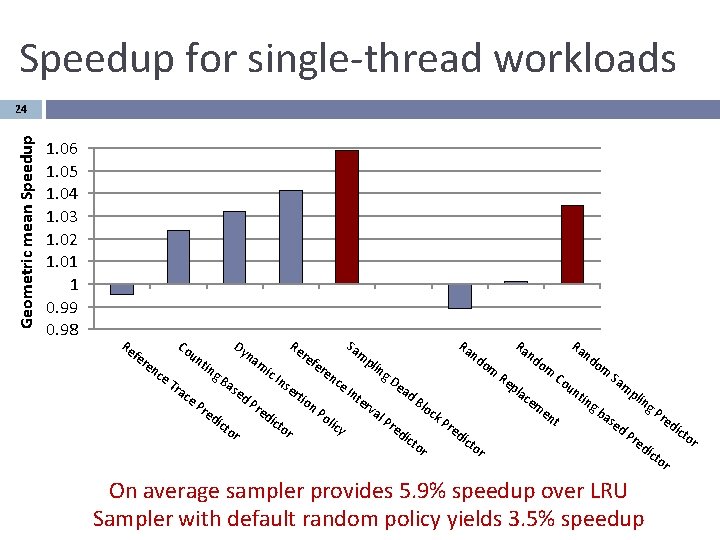

Speedup for Single-Thread Workloads

[Figure: geometric mean speedup over LRU (y-axis 0.98 to 1.06) for the reference trace predictor, counting-based predictor, dynamic insertion policy, re-reference interval prediction, random replacement, random-replacement variants of the dead block predictors, and the sampling predictor.]

On average, the sampling predictor provides a 5.9% speedup over LRU. With random replacement as the cache's default policy, the sampling predictor yields a 3.5% speedup.
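The per-workload results above are summarized with a geometric mean of speedups. A minimal sketch, assuming speedup is measured as IPC with the technique divided by IPC under the LRU baseline; `geomean_speedup` is a hypothetical helper built on the standard library's `statistics.geometric_mean` (Python 3.8+).

```python
from statistics import geometric_mean


def geomean_speedup(ipc_new, ipc_baseline):
    """Geometric mean of per-benchmark speedups, each = IPC_new / IPC_baseline."""
    return geometric_mean([n / b for n, b in zip(ipc_new, ipc_baseline)])
```

The geometric mean is the usual choice for ratios: a 2x speedup on one benchmark and a 2x slowdown on another average to 1.0, whereas an arithmetic mean would report 1.25.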

Normalized Weighted Speedup for Multi-Core Workloads

[Figure: geometric mean normalized weighted speedup (y-axis 0.98 to 1.14) for the reference trace predictor, counting-based predictor, dynamic insertion policy, re-reference interval prediction, random replacement, random-replacement variants of the dead block predictors, and the sampling predictor.]

On average, the sampling predictor provides a 12.5% benefit over LRU. With random replacement as the default policy, it yields a 7% benefit.
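For the multi-core mixes, a sketch of normalized weighted speedup under the common definition (assumed here, since the slide does not define it): weighted speedup is the sum over cores of IPC in the shared run divided by IPC when the program runs alone, and the technique's weighted speedup is then divided by that of the LRU baseline. The function names and the IPC values in the usage note are hypothetical.

```python
def weighted_speedup(ipc_shared, ipc_alone):
    """Sum over cores of IPC_shared / IPC_alone."""
    return sum(s / a for s, a in zip(ipc_shared, ipc_alone))


def normalized_weighted_speedup(ipc_technique, ipc_lru, ipc_alone):
    """Weighted speedup of a technique relative to the LRU baseline."""
    return weighted_speedup(ipc_technique, ipc_alone) / weighted_speedup(ipc_lru, ipc_alone)
```

For a 4-core mix with every program at IPC 1.0 alone, 0.5 under LRU, and 0.6 with the technique, the normalized weighted speedup is 2.4 / 2.0 = 1.2.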

Conclusion

Sampling:

- Consumes less power
- Reduces storage overhead
- Decouples the replacement policy

Dead block replacement and bypass with sampling achieves a geometric mean speedup of 5.9% for single-threaded workloads and a 12.5% weighted speedup for multi-core workloads.

Thank you
