Rough Sets

Basic Concepts of Rough Sets

- Information/Decision Systems (Tables)
- Indiscernibility
- Set Approximation
- Reducts and Core

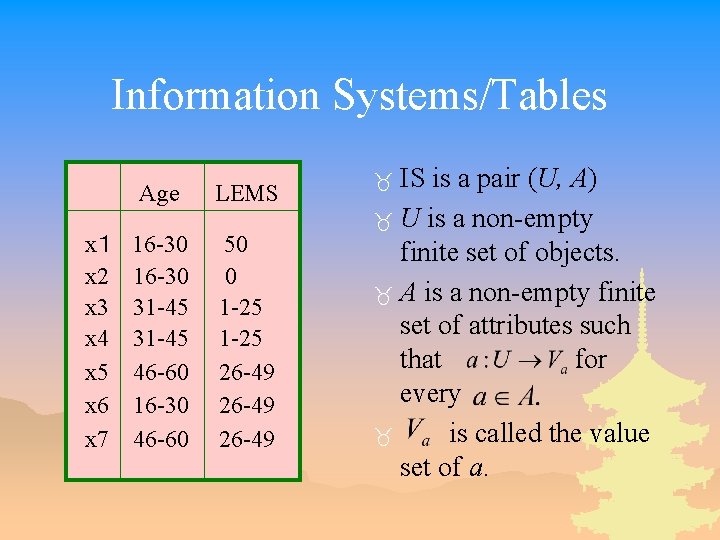

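Information Systems/Tables

| | Age | LEMS |
|---|---|---|
| x1 | 16-30 | 50 |
| x2 | 16-30 | 0 |
| x3 | 31-45 | 1-25 |
| x4 | 31-45 | 1-25 |
| x5 | 46-60 | 26-49 |
| x6 | 16-30 | 26-49 |
| x7 | 46-60 | 26-49 |

An information system IS is a pair $(U, A)$.

- $U$ is a non-empty finite set of objects.
- $A$ is a non-empty finite set of attributes such that $a : U \to V_a$ for every $a \in A$.
- $V_a$ is called the value set of $a$.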

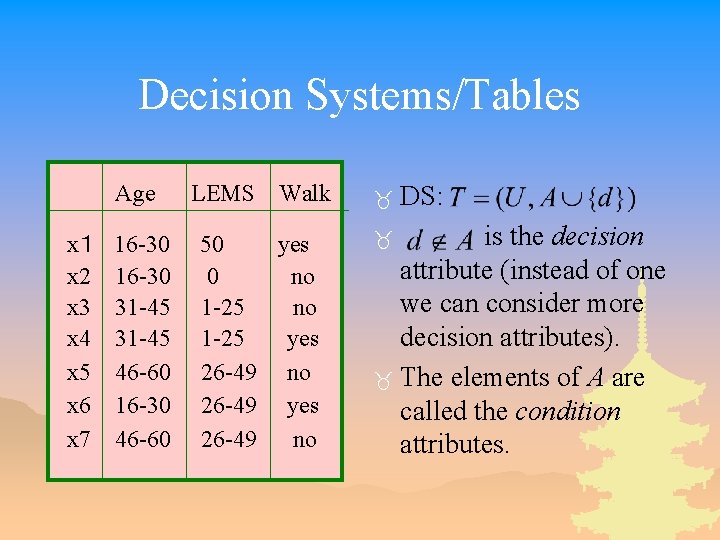

Decision Systems/Tables

| | Age | LEMS | Walk |
|---|---|---|---|
| x1 | 16-30 | 50 | yes |
| x2 | 16-30 | 0 | no |
| x3 | 31-45 | 1-25 | no |
| x4 | 31-45 | 1-25 | yes |
| x5 | 46-60 | 26-49 | no |
| x6 | 16-30 | 26-49 | yes |
| x7 | 46-60 | 26-49 | no |

- DS: $T = (U, A \cup \{d\})$, where $d \notin A$ is the decision attribute (instead of one we can consider more decision attributes).
- The elements of $A$ are called the condition attributes.

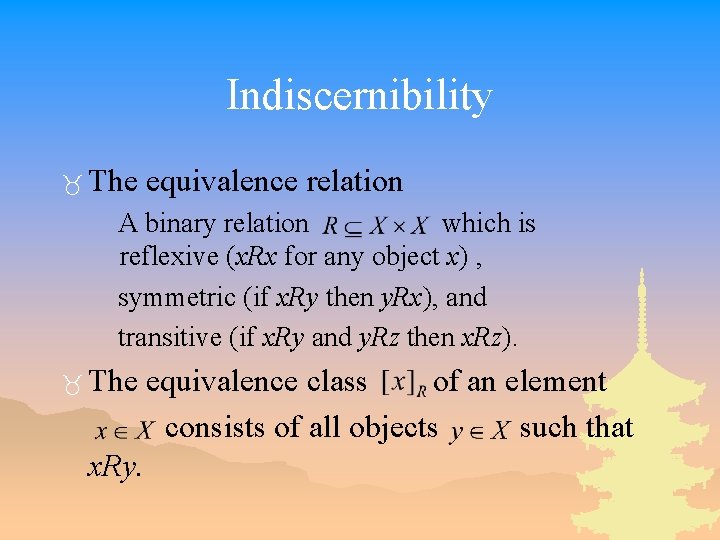

Indiscernibility

- The equivalence relation: a binary relation $R \subseteq X \times X$ which is reflexive ($xRx$ for any object $x$), symmetric (if $xRy$ then $yRx$), and transitive (if $xRy$ and $yRz$ then $xRz$).
- The equivalence class $[x]_R$ of an element $x \in X$ consists of all objects $y \in X$ such that $xRy$.

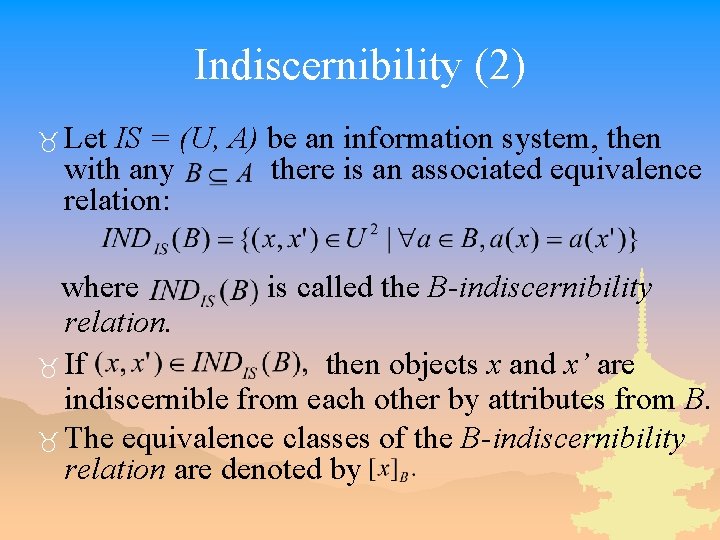

Indiscernibility (2)

- Let $IS = (U, A)$ be an information system. Then with any $B \subseteq A$ there is an associated equivalence relation
  $$IND_{IS}(B) = \{(x, x') \in U^2 \mid \forall a \in B,\ a(x) = a(x')\},$$
  where $IND_{IS}(B)$ is called the B-indiscernibility relation.
- If $(x, x') \in IND_{IS}(B)$, then objects $x$ and $x'$ are indiscernible from each other by attributes from $B$.
- The equivalence classes of the B-indiscernibility relation are denoted by $[x]_B$.

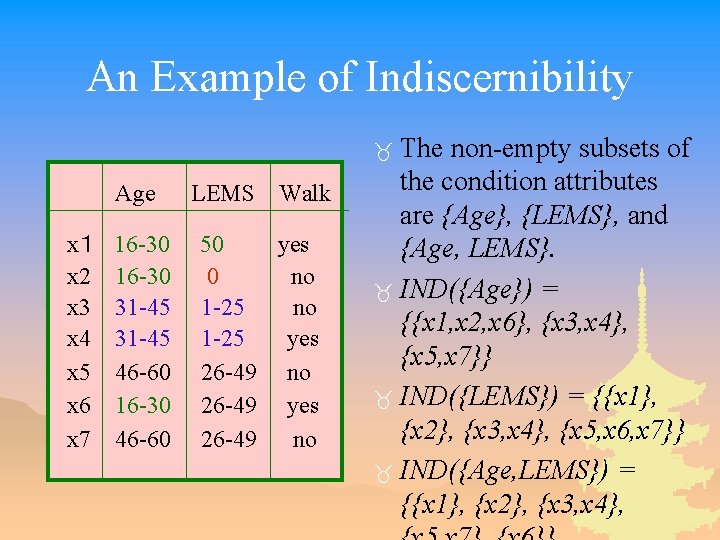

An Example of Indiscernibility

The non-empty subsets of the condition attributes are {Age}, {LEMS}, and {Age, LEMS}.

- IND({Age}) = {{x1, x2, x6}, {x3, x4}, {x5, x7}}
- IND({LEMS}) = {{x1}, {x2}, {x3, x4}, {x5, x6, x7}}
- IND({Age, LEMS}) = {{x1}, {x2}, {x3, x4}, {x5, x7}, {x6}}
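The three partitions in this example can be recomputed mechanically. The following is a minimal sketch, assuming the Age and LEMS values read from the example's table; the names `TABLE` and `ind_classes` are illustrative and not part of the slides.

```python
from collections import defaultdict

# Condition attributes of the example table (values as read from the slides).
TABLE = {
    "x1": {"Age": "16-30", "LEMS": "50"},
    "x2": {"Age": "16-30", "LEMS": "0"},
    "x3": {"Age": "31-45", "LEMS": "1-25"},
    "x4": {"Age": "31-45", "LEMS": "1-25"},
    "x5": {"Age": "46-60", "LEMS": "26-49"},
    "x6": {"Age": "16-30", "LEMS": "26-49"},
    "x7": {"Age": "46-60", "LEMS": "26-49"},
}


def ind_classes(table, attrs):
    """Return the equivalence classes of IND(attrs) as a set of frozensets."""
    groups = defaultdict(set)
    for obj, row in table.items():
        # Objects with the same value vector on attrs are indiscernible.
        key = tuple(row[a] for a in attrs)
        groups[key].add(obj)
    return {frozenset(g) for g in groups.values()}


for attrs in (["Age"], ["LEMS"], ["Age", "LEMS"]):
    classes = sorted(sorted(c) for c in ind_classes(TABLE, attrs))
    print(attrs, classes)
```

Grouping by the tuple of attribute values is one simple way to realise the relation; any hashable key built from the values of B would work equally well.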

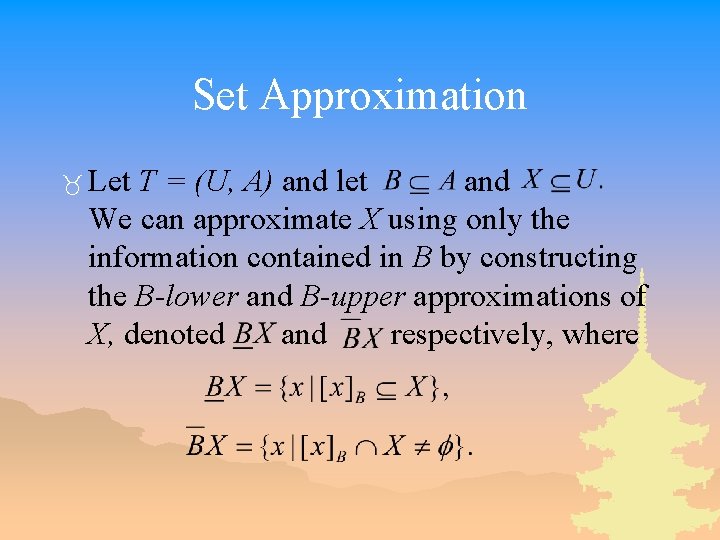

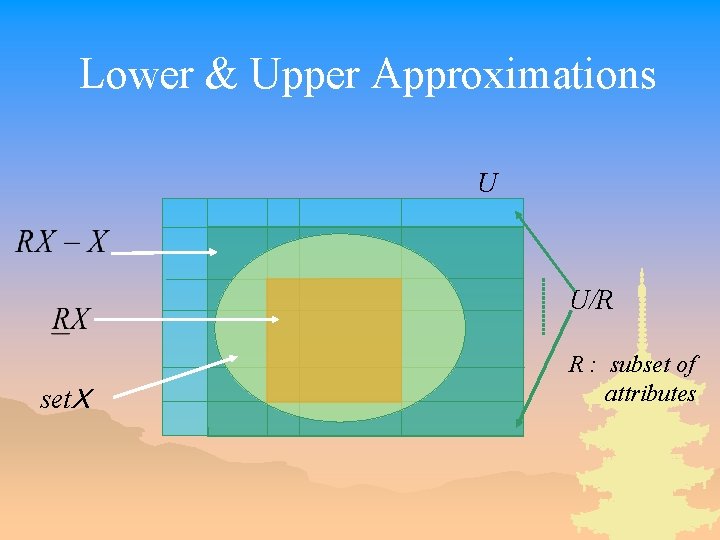

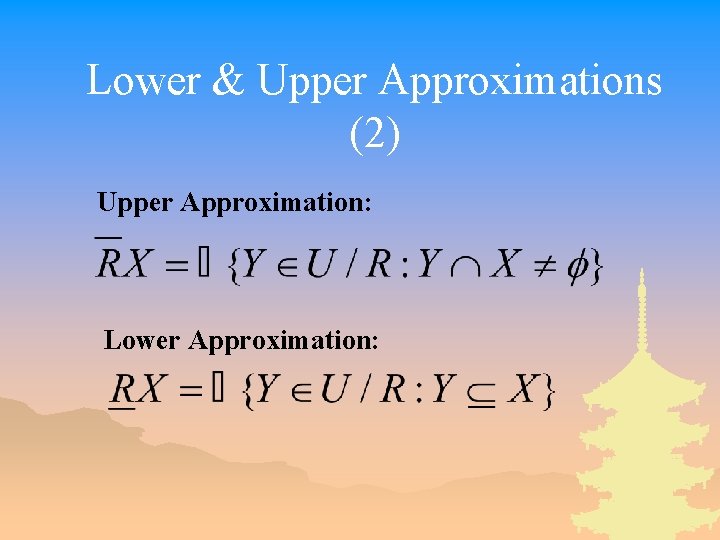

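Set Approximation

- Let $T = (U, A)$ and let $B \subseteq A$ and $X \subseteq U$. We can approximate $X$ using only the information contained in $B$ by constructing the B-lower and B-upper approximations of $X$, denoted $\underline{B}X$ and $\overline{B}X$ respectively, where
  $$\underline{B}X = \{x \mid [x]_B \subseteq X\}, \qquad \overline{B}X = \{x \mid [x]_B \cap X \neq \emptyset\}.$$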

Set Approximation (2)

- The B-boundary region of $X$, $BN_B(X) = \overline{B}X - \underline{B}X$, consists of those objects that we cannot decisively classify into $X$ in $B$.
- A set is said to be rough if its boundary region is non-empty, otherwise the set is crisp.

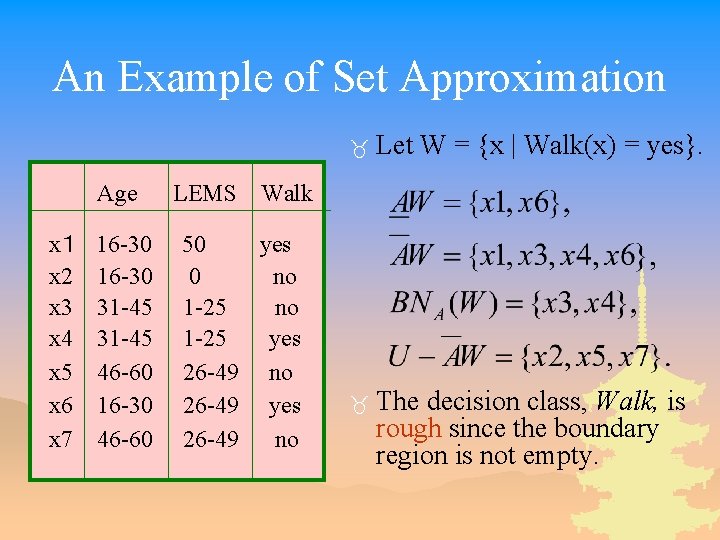

An Example of Set Approximation

- Let W = {x | Walk(x) = yes}. Then $\underline{A}W = \{x_1, x_6\}$, $\overline{A}W = \{x_1, x_3, x_4, x_6\}$, $BN_A(W) = \{x_3, x_4\}$, and $U - \overline{A}W = \{x_2, x_5, x_7\}$.
- The decision class, Walk, is rough since the boundary region is not empty.

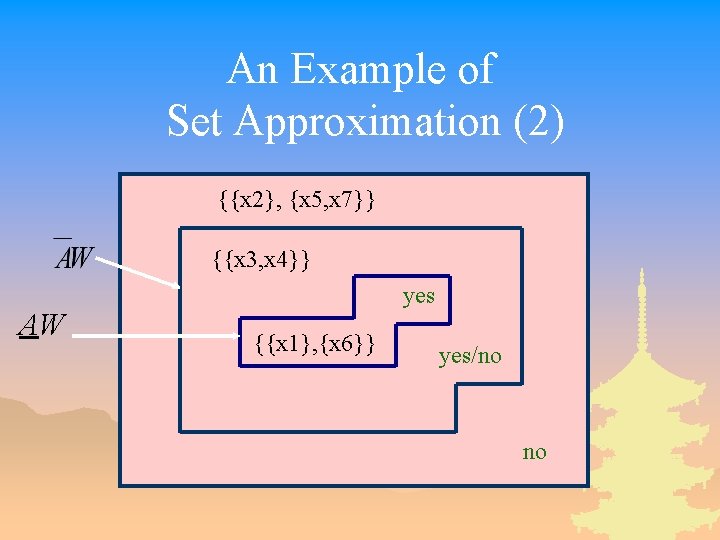

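An Example of Set Approximation (2)

[Figure: the equivalence classes of A, grouped by how they relate to W]

- yes ($\underline{A}W$): {{x1}, {x6}}
- yes/no (boundary): {{x3, x4}}
- no: {{x2}, {x5, x7}}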
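The regions in this example follow directly from the definitions of the lower and upper approximations. A minimal sketch, assuming the Walk table as read from the slides (the flattened text does not show which of x3 and x4 walks, so x3 = no and x4 = yes is an assumption; the result is the same either way). The names `ROWS`, `partition` and `approximations` are illustrative.

```python
from collections import defaultdict

# (Age, LEMS, Walk) per object; the x3/x4 Walk values are an assumption.
ROWS = {
    "x1": ("16-30", "50", "yes"),
    "x2": ("16-30", "0", "no"),
    "x3": ("31-45", "1-25", "no"),
    "x4": ("31-45", "1-25", "yes"),
    "x5": ("46-60", "26-49", "no"),
    "x6": ("16-30", "26-49", "yes"),
    "x7": ("46-60", "26-49", "no"),
}


def partition(rows, idx):
    """Equivalence classes of the indiscernibility relation on columns idx."""
    groups = defaultdict(set)
    for obj, row in rows.items():
        groups[tuple(row[i] for i in idx)].add(obj)
    return list(groups.values())


def approximations(rows, idx, target):
    """Return (lower, upper) approximations of target w.r.t. columns idx."""
    lower, upper = set(), set()
    for cls in partition(rows, idx):
        if cls <= target:   # class entirely inside the target set
            lower |= cls
        if cls & target:    # class overlaps the target set
            upper |= cls
    return lower, upper


W = {obj for obj, row in ROWS.items() if row[2] == "yes"}
lower, upper = approximations(ROWS, (0, 1), W)
boundary = upper - lower
outside = set(ROWS) - upper
print(sorted(lower), sorted(upper), sorted(boundary), sorted(outside))
```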

Lower & Upper Approximations

[Figure: the universe U, its partition U/R, and the set X drawn over the partition; R is a subset of attributes.]

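Lower & Upper Approximations (2)

Upper Approximation: $\overline{R}X = \bigcup \{Y \in U/R : Y \cap X \neq \emptyset\}$

Lower Approximation: $\underline{R}X = \bigcup \{Y \in U/R : Y \subseteq X\}$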

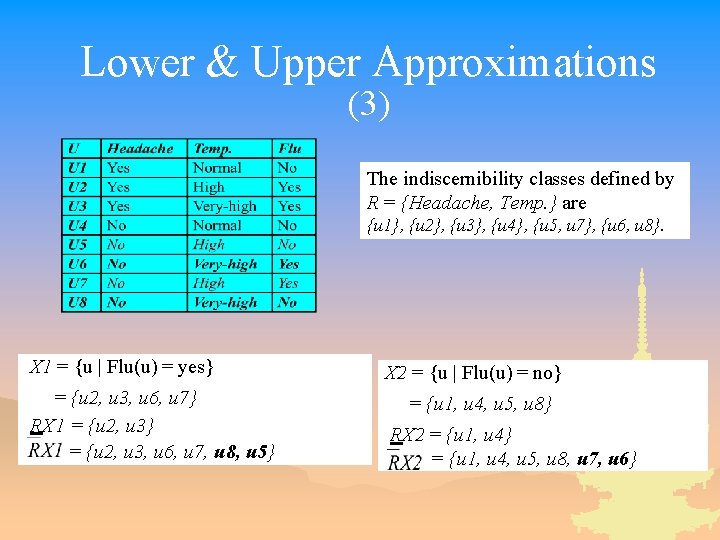

Lower & Upper Approximations (3)

The indiscernibility classes defined by R = {Headache, Temp.} are {u1}, {u2}, {u3}, {u4}, {u5, u7}, {u6, u8}.

- X1 = {u | Flu(u) = yes} = {u2, u3, u6, u7}
  - $\underline{R}X_1$ = {u2, u3}
  - $\overline{R}X_1$ = {u2, u3, u6, u7, u8, u5}
- X2 = {u | Flu(u) = no} = {u1, u4, u5, u8}
  - $\underline{R}X_2$ = {u1, u4}
  - $\overline{R}X_2$ = {u1, u4, u5, u8, u7, u6}

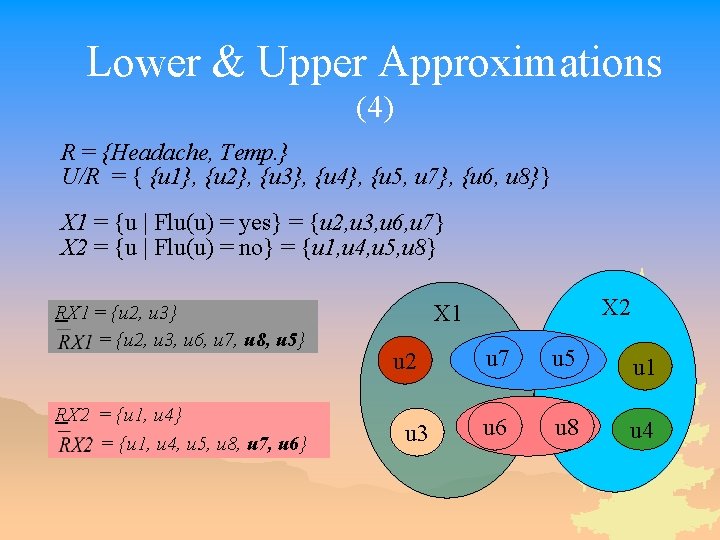

Lower & Upper Approximations (4)

[Figure: the classes of U/R for R = {Headache, Temp.} drawn against X1 and X2. The classes {u2} and {u3} lie inside X1, {u1} and {u4} lie inside X2, and {u5, u7} and {u6, u8} straddle the border between X1 and X2.]
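When the partition U/R is already known, as in the Flu example, the two approximations reduce to unions over its classes. A minimal sketch using only the classes and sets stated in the example; the function names are illustrative.

```python
# Classes of U/R for R = {Headache, Temp.} and the two decision sets,
# exactly as listed in the Flu example.
U_R = [{"u1"}, {"u2"}, {"u3"}, {"u4"}, {"u5", "u7"}, {"u6", "u8"}]
X1 = {"u2", "u3", "u6", "u7"}  # Flu = yes
X2 = {"u1", "u4", "u5", "u8"}  # Flu = no


def lower_approx(classes, x):
    """Union of the classes that are fully contained in x."""
    return set().union(*(c for c in classes if c <= x))


def upper_approx(classes, x):
    """Union of the classes that intersect x."""
    return set().union(*(c for c in classes if c & x))


for name, x in (("X1", X1), ("X2", X2)):
    print(name, sorted(lower_approx(U_R, x)), sorted(upper_approx(U_R, x)))
```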

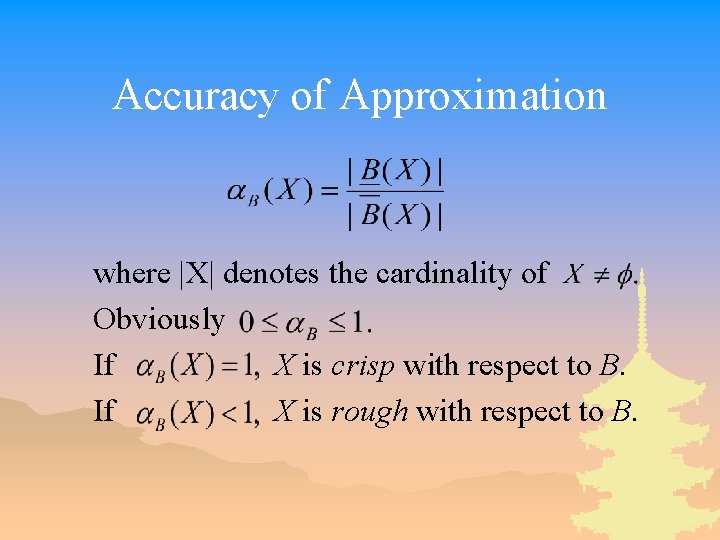

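Accuracy of Approximation

$$\alpha_B(X) = \frac{|\underline{B}X|}{|\overline{B}X|}$$

where $|X|$ denotes the cardinality of $X \neq \emptyset$. Obviously $0 \le \alpha_B \le 1$.

- If $\alpha_B(X) = 1$, $X$ is crisp with respect to $B$.
- If $\alpha_B(X) < 1$, $X$ is rough with respect to $B$.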
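As a worked illustration, assuming the accuracy is the ratio of the sizes of the lower and upper approximations, the two earlier examples (the Walk class W and the Flu class X1) give:

```latex
\alpha_A(W) = \frac{|\underline{A}W|}{|\overline{A}W|}
            = \frac{|\{x_1, x_6\}|}{|\{x_1, x_3, x_4, x_6\}|}
            = \frac{2}{4} = 0.5
\qquad
\alpha_R(X_1) = \frac{|\{u_2, u_3\}|}{|\{u_2, u_3, u_5, u_6, u_7, u_8\}|}
              = \frac{2}{6} \approx 0.33
```

Both values are below 1, which agrees with both sets having a non-empty boundary region.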

Issues in the Decision Table

- The same or indiscernible objects may be represented several times.
- Some of the attributes may be superfluous (redundant). That is, their removal cannot worsen the classification.

Reducts

- Keep only those attributes that preserve the indiscernibility relation and, consequently, set approximation.
- There are usually several such subsets of attributes, and those which are minimal are called reducts.

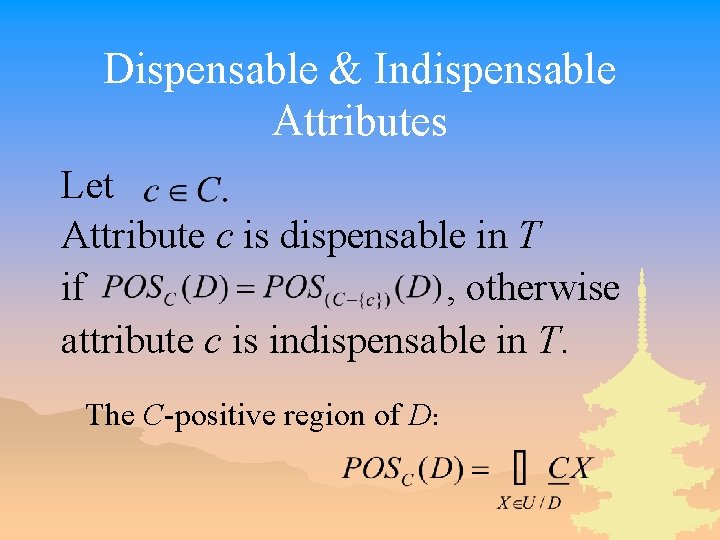

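Dispensable & Indispensable Attributes

Let $c \in C$. Attribute $c$ is dispensable in $T$ if $POS_C(D) = POS_{C - \{c\}}(D)$; otherwise attribute $c$ is indispensable in $T$.

The C-positive region of $D$:

$$POS_C(D) = \bigcup_{X \in U/D} \underline{C}X$$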

Independent

- $T = (U, C, D)$ is independent if all $c \in C$ are indispensable in $T$.

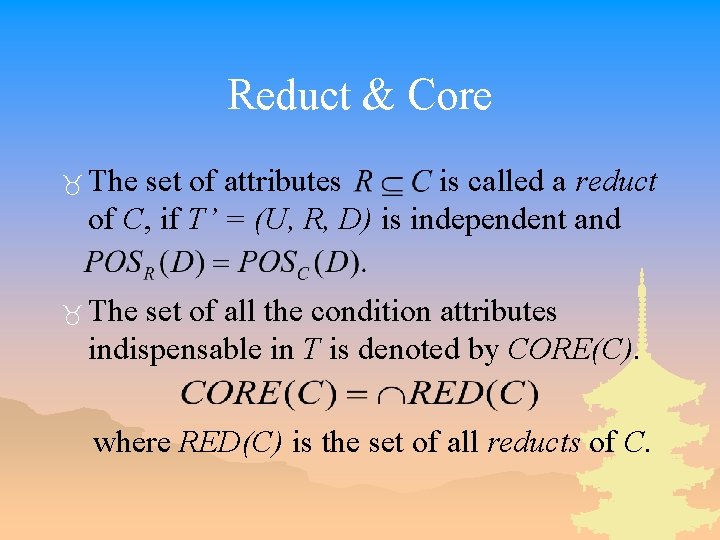

Reduct & Core

- The set of attributes $R \subseteq C$ is called a reduct of $C$ if $T' = (U, R, D)$ is independent and $POS_R(D) = POS_C(D)$.
- The set of all the condition attributes indispensable in $T$ is denoted by $CORE(C)$:
  $$CORE(C) = \bigcap RED(C),$$
  where $RED(C)$ is the set of all reducts of $C$.
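These definitions can be tried out on the Walk decision table from the earlier slides. The sketch below is a brute-force illustration, not the method of the slides: it assumes the positive region is the union of condition classes with a single decision value, and it treats a reduct as a minimal attribute subset that preserves the positive region. The x3/x4 Walk values are an assumption (the outcome does not depend on them), and all names are illustrative.

```python
from collections import defaultdict
from itertools import combinations

ROWS = {
    "x1": {"Age": "16-30", "LEMS": "50", "Walk": "yes"},
    "x2": {"Age": "16-30", "LEMS": "0", "Walk": "no"},
    "x3": {"Age": "31-45", "LEMS": "1-25", "Walk": "no"},   # assumed
    "x4": {"Age": "31-45", "LEMS": "1-25", "Walk": "yes"},  # assumed
    "x5": {"Age": "46-60", "LEMS": "26-49", "Walk": "no"},
    "x6": {"Age": "16-30", "LEMS": "26-49", "Walk": "yes"},
    "x7": {"Age": "46-60", "LEMS": "26-49", "Walk": "no"},
}
COND = ("Age", "LEMS")


def positive_region(rows, cond, dec="Walk"):
    """Union of the cond-classes whose objects all share one decision value."""
    groups = defaultdict(set)
    for obj, row in rows.items():
        groups[tuple(row[a] for a in cond)].add(obj)
    pos = set()
    for cls in groups.values():
        if len({rows[o][dec] for o in cls}) == 1:
            pos |= cls
    return pos


def reducts(rows, cond, dec="Walk"):
    """Minimal subsets of cond that keep the positive region unchanged."""
    full = positive_region(rows, cond, dec)
    found = []
    for k in range(1, len(cond) + 1):
        for subset in combinations(cond, k):
            s = set(subset)
            # Skip supersets of reducts already found: they are not minimal.
            if positive_region(rows, subset, dec) == full and not any(r <= s for r in found):
                found.append(s)
    return found


RED = reducts(ROWS, COND)
CORE = set.intersection(*RED)
print(sorted(positive_region(ROWS, COND)), RED, CORE)
```

For this table both Age and LEMS are indispensable, so the only reduct is the full set of condition attributes and it coincides with the core.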

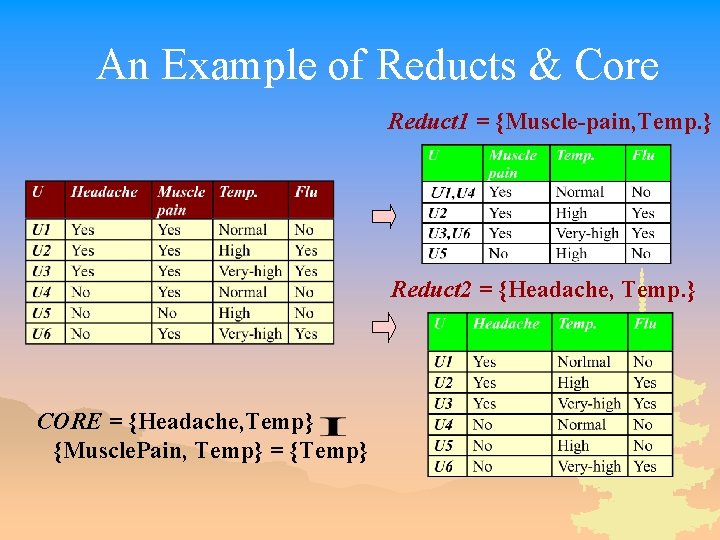

An Example of Reducts & Core

- Reduct 1 = {Muscle-pain, Temp.}
- Reduct 2 = {Headache, Temp.}
- CORE = {Headache, Temp.} ∩ {Muscle-pain, Temp.} = {Temp.}

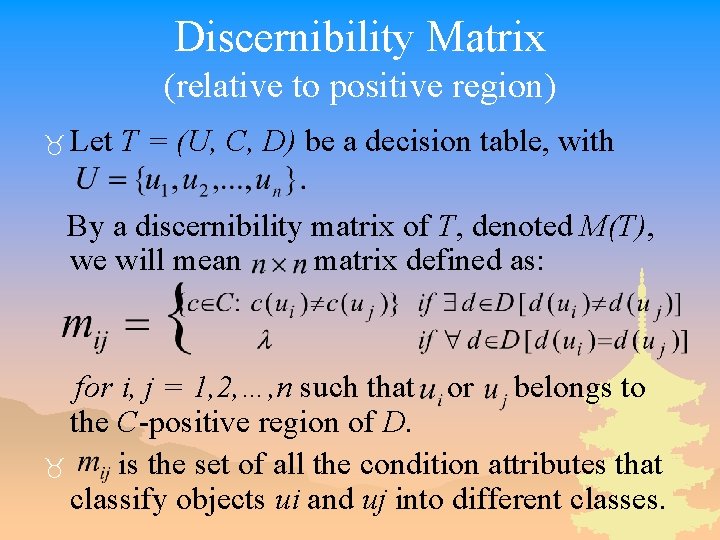

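Discernibility Matrix (relative to positive region)

- Let $T = (U, C, D)$ be a decision table, with $U = \{u_1, u_2, \dots, u_n\}$. By a discernibility matrix of T, denoted M(T), we will mean the $n \times n$ matrix defined as
  $$m_{ij} = \begin{cases} \{c \in C : c(u_i) \neq c(u_j)\} & \text{if } \exists d \in D\ [d(u_i) \neq d(u_j)] \\ \lambda & \text{if } \forall d \in D\ [d(u_i) = d(u_j)] \end{cases}$$
  for $i, j = 1, 2, \dots, n$ such that $u_i$ or $u_j$ belongs to the C-positive region of D.
- $m_{ij}$ is the set of all the condition attributes that classify objects $u_i$ and $u_j$ into different classes.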

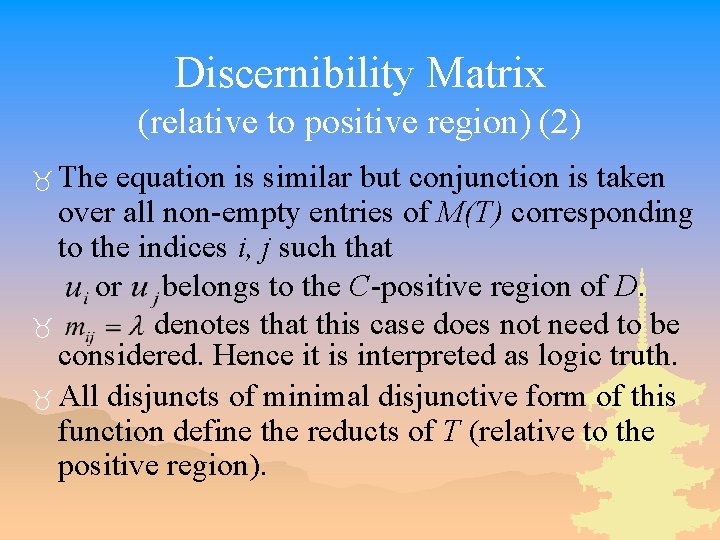

Discernibility Matrix (relative to positive region) (2)

- The equation is similar, but the conjunction is taken over all non-empty entries of M(T) corresponding to the indices i, j such that $u_i$ or $u_j$ belongs to the C-positive region of D.
- $m_{ij} = \lambda$ denotes that this case does not need to be considered. Hence it is interpreted as logic truth.
- All disjuncts of the minimal disjunctive form of this function define the reducts of T (relative to the positive region).

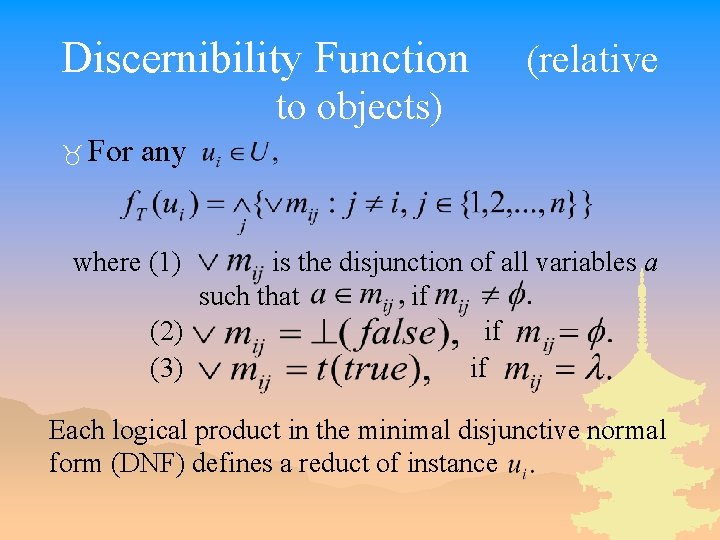

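Discernibility Function (relative to objects)

- For any $u_i \in U$,
  $$f_T(u_i) = \bigwedge_j \left\{ \bigvee m_{ij} : j \neq i,\ j \in \{1, 2, \dots, n\} \right\}$$
  where
  1. $\bigvee m_{ij}$ is the disjunction of all variables $a$ such that $a \in m_{ij}$, if $m_{ij} \neq \emptyset$;
  2. $\bigvee m_{ij} = \bot$ (false), if $m_{ij} = \emptyset$;
  3. $\bigvee m_{ij} = \top$ (true), if $m_{ij} = \lambda$.

Each logical product in the minimal disjunctive normal form (DNF) defines a reduct of instance $u_i$.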

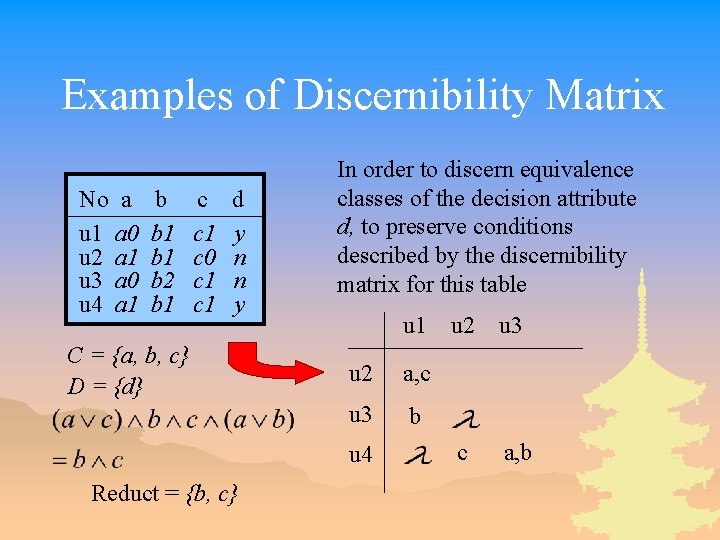

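Examples of Discernibility Matrix

| No | a | b | c | d |
|---|---|---|---|---|
| u1 | a0 | b1 | c1 | y |
| u2 | a1 | b1 | c0 | n |
| u3 | a0 | b2 | c1 | n |
| u4 | a1 | b1 | c1 | y |

C = {a, b, c}, D = {d}

To discern the equivalence classes of the decision attribute d, the conditions described by the discernibility matrix for this table must be preserved (λ marks pairs with the same decision, which need not be discerned):

| | u1 | u2 | u3 |
|---|---|---|---|
| u2 | a, c | | |
| u3 | b | λ | |
| u4 | λ | c | a, b |

$(a \vee c) \wedge b \wedge c \wedge (a \vee b) = b \wedge c$

Reduct = {b, c}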
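The matrix and the reduct for this four-object table can be checked with a short script. This is a sketch under two assumptions: the table values are as read from the slide, and the reducts are found by brute-force search for minimal attribute sets that intersect every matrix entry (equivalent to minimising the discernibility function, but not necessarily how the slides compute it). All names are illustrative.

```python
from itertools import combinations

TABLE = {
    "u1": {"a": "a0", "b": "b1", "c": "c1", "d": "y"},
    "u2": {"a": "a1", "b": "b1", "c": "c0", "d": "n"},
    "u3": {"a": "a0", "b": "b2", "c": "c1", "d": "n"},
    "u4": {"a": "a1", "b": "b1", "c": "c1", "d": "y"},
}
COND = ("a", "b", "c")


def discernibility_matrix(table, cond, dec="d"):
    """Map each pair with different decisions to the attributes that differ.

    Pairs with the same decision (the lambda entries) are simply omitted.
    """
    matrix = {}
    for ui, uj in combinations(table, 2):
        if table[ui][dec] != table[uj][dec]:
            matrix[(ui, uj)] = {c for c in cond if table[ui][c] != table[uj][c]}
    return matrix


def minimal_hitting_sets(clauses, universe):
    """Minimal attribute sets that share an attribute with every clause."""
    found = []
    for k in range(1, len(universe) + 1):
        for cand in combinations(universe, k):
            s = set(cand)
            if all(s & cl for cl in clauses) and not any(f <= s for f in found):
                found.append(s)
    return found


M = discernibility_matrix(TABLE, COND)
REDUCTS = minimal_hitting_sets(list(M.values()), COND)
print(M)
print(REDUCTS)
```

The exhaustive search is exponential in the number of attributes, so it is only meant for small illustrative tables like this one.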

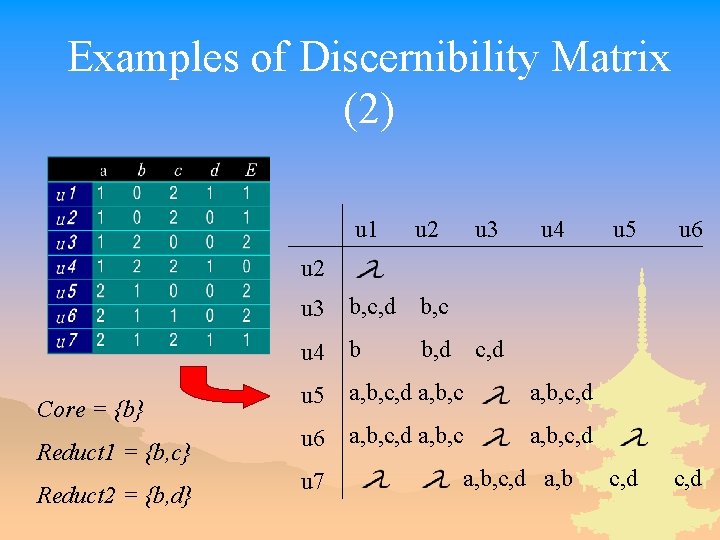

Examples of Discernibility Matrix (2)

[Table: discernibility matrix for objects u1 to u7 over the condition attributes a, b, c, d.]

- Core = {b}
- Reduct 1 = {b, c}
- Reduct 2 = {b, d}

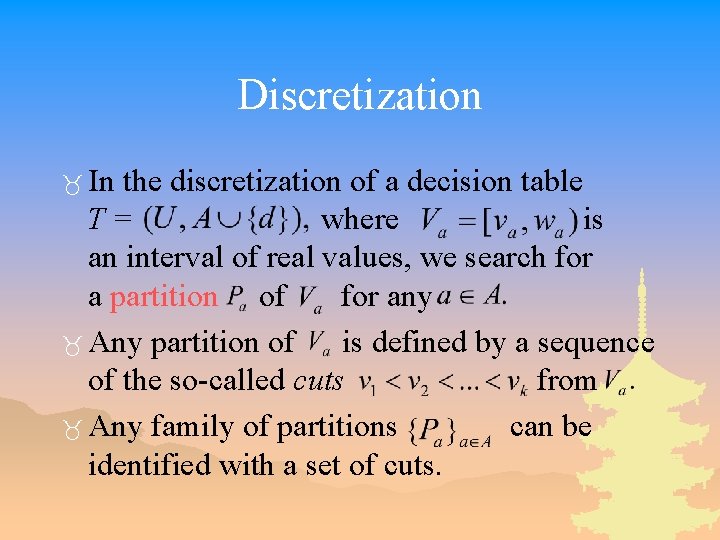

Discretization

- In the discretization of a decision table $T = (U, A \cup \{d\})$, where $V_a = [v_a, w_a)$ is an interval of real values, we search for a partition $P_a$ of $V_a$ for any $a \in A$.
- Any partition of $V_a$ is defined by a sequence of the so-called cuts $v_1 < v_2 < \dots < v_k$ from $V_a$.
- Any family of partitions $\{P_a\}_{a \in A}$ can be identified with a set of cuts.

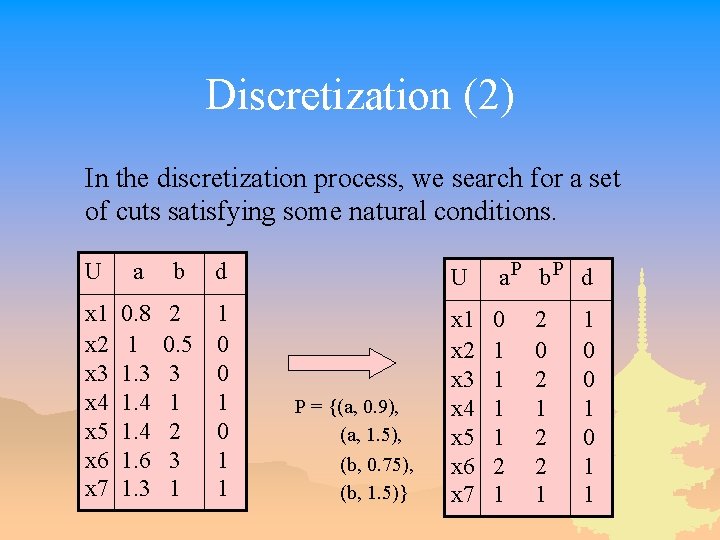

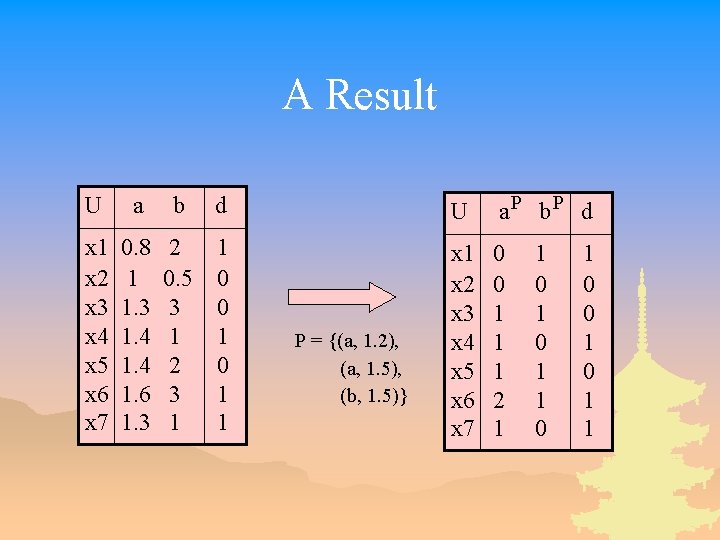

Discretization (2)

In the discretization process, we search for a set of cuts satisfying some natural conditions.

P = {(a, 0.9), (a, 1.5), (b, 0.75), (b, 1.5)}

| U | a | b | d | $a^P$ | $b^P$ |
|---|---|---|---|---|---|
| x1 | 0.8 | 2 | 1 | 0 | 2 |
| x2 | 1 | 0.5 | 0 | 1 | 0 |
| x3 | 1.3 | 3 | 0 | 1 | 2 |
| x4 | 1.4 | 1 | 1 | 1 | 1 |
| x5 | 1.4 | 2 | 0 | 1 | 2 |
| x6 | 1.6 | 3 | 1 | 2 | 2 |
| x7 | 1.3 | 1 | 1 | 1 | 1 |
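Applying a set of cuts amounts to replacing each value by the index of the interval it falls into. A minimal sketch, assuming the sample table as read from the slides and half-open intervals (a value equal to a cut goes to the upper interval); `DATA`, `CUTS` and `discretize` are illustrative names.

```python
from bisect import bisect_right

# (a, b, d) per object, as read from the sample table.
DATA = {
    "x1": (0.8, 2.0, 1),
    "x2": (1.0, 0.5, 0),
    "x3": (1.3, 3.0, 0),
    "x4": (1.4, 1.0, 1),
    "x5": (1.4, 2.0, 0),
    "x6": (1.6, 3.0, 1),
    "x7": (1.3, 1.0, 1),
}
# P = {(a, 0.9), (a, 1.5), (b, 0.75), (b, 1.5)}, grouped by attribute and sorted.
CUTS = {"a": [0.9, 1.5], "b": [0.75, 1.5]}


def discretize(value, cuts):
    """Index of the interval containing value, given sorted cut points."""
    return bisect_right(cuts, value)


DISCRETIZED = {
    obj: (discretize(a, CUTS["a"]), discretize(b, CUTS["b"]), d)
    for obj, (a, b, d) in DATA.items()
}
for obj, row in DISCRETIZED.items():
    print(obj, row)
```

After discretization x3 and x5 become indiscernible, as do x4 and x7, but each pair shares the same decision, so the table stays consistent.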

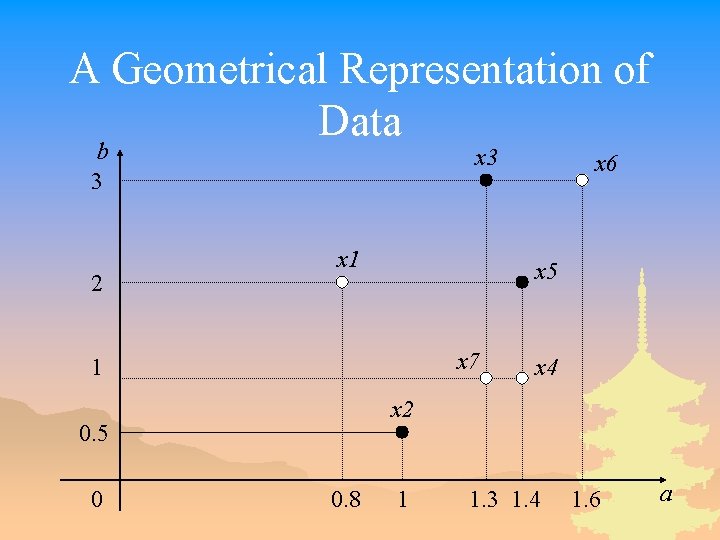

A Geometrical Representation of Data

[Figure: objects x1 to x7 plotted in the (a, b) plane; a-axis marked at 0.8, 1, 1.3, 1.4, 1.6 and b-axis marked at 0.5, 1, 2, 3.]

A Geometrical Representation of Data and Cuts

[Figure: the same plot of x1 to x7 in the (a, b) plane, with the cuts drawn in.]

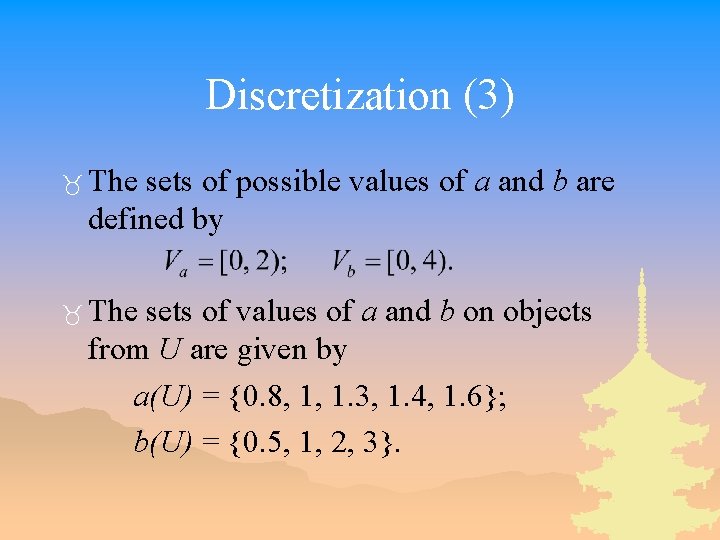

Discretization (3)

- The sets of possible values of a and b are defined by $V_a = [0, 2)$; $V_b = [0, 4)$.
- The sets of values of a and b on objects from U are given by a(U) = {0.8, 1, 1.3, 1.4, 1.6}; b(U) = {0.5, 1, 2, 3}.

Discretization Based on RSBR (4)

- The discretization process returns a partition of the value sets of condition attributes into intervals.

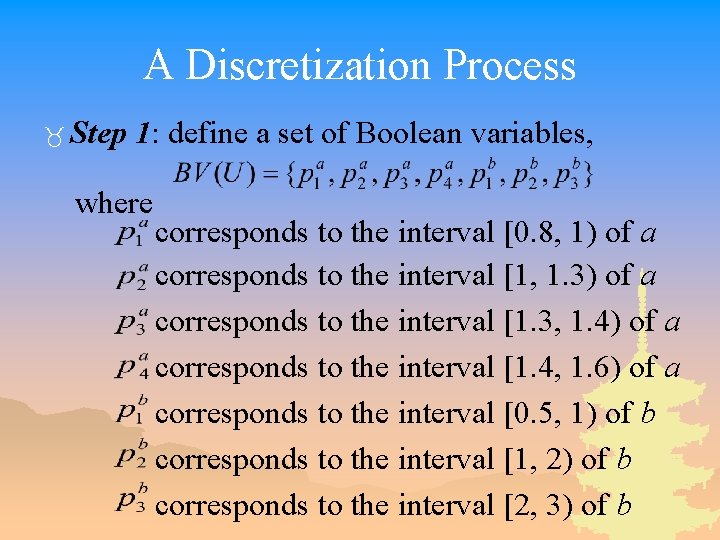

A Discretization Process

- Step 1: define a set of Boolean variables, $BV(U) = \{p^a_1, p^a_2, p^a_3, p^a_4, p^b_1, p^b_2, p^b_3\}$, where
  - $p^a_1$ corresponds to the interval [0.8, 1) of a
  - $p^a_2$ corresponds to the interval [1, 1.3) of a
  - $p^a_3$ corresponds to the interval [1.3, 1.4) of a
  - $p^a_4$ corresponds to the interval [1.4, 1.6) of a
  - $p^b_1$ corresponds to the interval [0.5, 1) of b
  - $p^b_2$ corresponds to the interval [1, 2) of b
  - $p^b_3$ corresponds to the interval [2, 3) of b

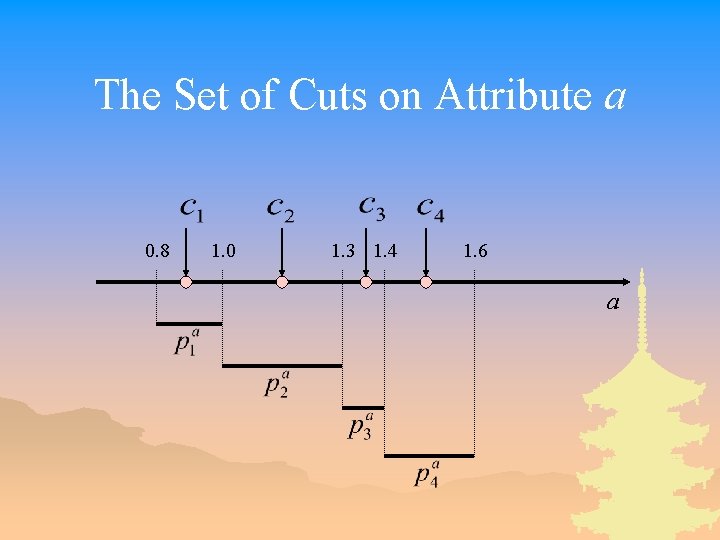

The Set of Cuts on Attribute a

[Figure: the a-axis marked at 0.8, 1.0, 1.3, 1.4, 1.6, with the candidate cuts between consecutive values.]

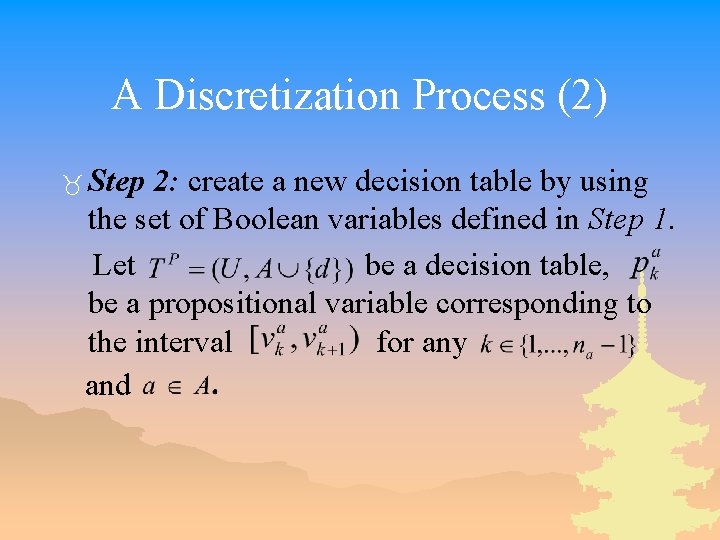

A Discretization Process (2)

- Step 2: create a new decision table by using the set of Boolean variables defined in Step 1. In the new decision table, let $p^a_k$ be a propositional variable corresponding to the interval $[v^a_k, v^a_{k+1})$ for any $k \in \{1, \dots, n_a - 1\}$ and $a \in A$.

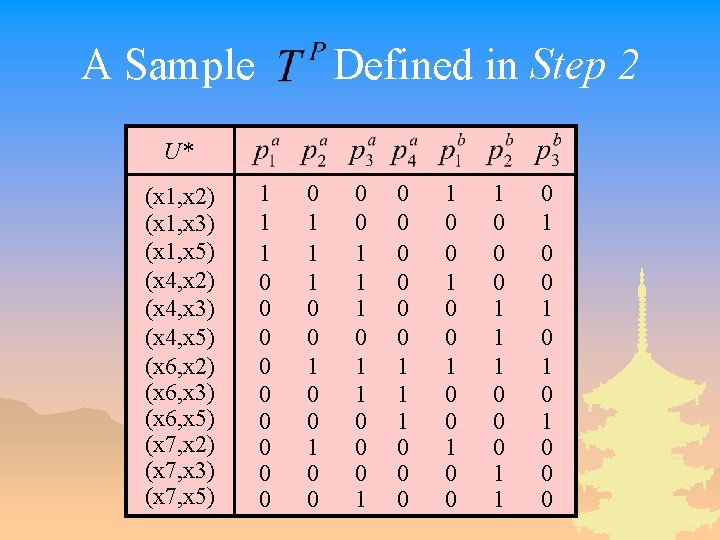

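A Sample Defined in Step 2

| U* | $p^a_1$ | $p^a_2$ | $p^a_3$ | $p^a_4$ | $p^b_1$ | $p^b_2$ | $p^b_3$ |
|---|---|---|---|---|---|---|---|
| (x1, x2) | 1 | 0 | 0 | 0 | 1 | 1 | 0 |
| (x1, x3) | 1 | 1 | 0 | 0 | 0 | 0 | 1 |
| (x1, x5) | 1 | 1 | 1 | 0 | 0 | 0 | 0 |
| (x4, x2) | 0 | 1 | 1 | 0 | 1 | 0 | 0 |
| (x4, x3) | 0 | 0 | 1 | 0 | 0 | 1 | 1 |
| (x4, x5) | 0 | 0 | 0 | 0 | 0 | 1 | 0 |
| (x6, x2) | 0 | 1 | 1 | 1 | 1 | 1 | 1 |
| (x6, x3) | 0 | 0 | 1 | 1 | 0 | 0 | 0 |
| (x6, x5) | 0 | 0 | 0 | 1 | 0 | 0 | 1 |
| (x7, x2) | 0 | 1 | 0 | 0 | 1 | 0 | 0 |
| (x7, x3) | 0 | 0 | 0 | 0 | 0 | 1 | 1 |
| (x7, x5) | 0 | 0 | 1 | 0 | 0 | 1 | 0 |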

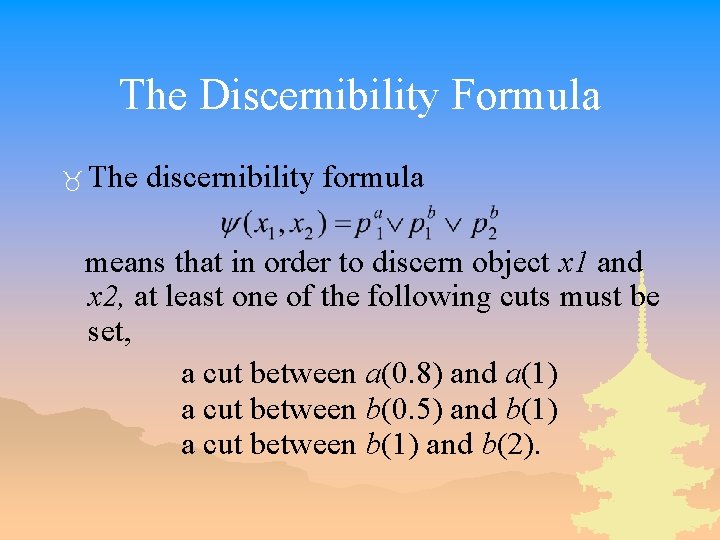

The Discernibility Formula

- The discernibility formula $\psi(x_1, x_2) = p^a_1 \vee p^b_1 \vee p^b_2$ means that in order to discern objects x1 and x2, at least one of the following cuts must be set:
  - a cut between a(0.8) and a(1)
  - a cut between b(0.5) and b(1)
  - a cut between b(1) and b(2)
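The same reasoning can be applied to every pair of objects from different decision classes. The sketch below derives, for each such pair, the set of Boolean variables whose interval lies between the two objects on some attribute. It assumes the sample table as read from the slides and writes the variable $p^a_1$ as the string `"p1a"`; all function and variable names are illustrative.

```python
# (a, b, d) per object, as read from the sample table.
DATA = {
    "x1": (0.8, 2.0, 1),
    "x2": (1.0, 0.5, 0),
    "x3": (1.3, 3.0, 0),
    "x4": (1.4, 1.0, 1),
    "x5": (1.4, 2.0, 0),
    "x6": (1.6, 3.0, 1),
    "x7": (1.3, 1.0, 1),
}


def interval_vars(idx, name):
    """One variable per interval between consecutive observed values."""
    vs = sorted({row[idx] for row in DATA.values()})
    return {f"p{k}{name}": pair for k, pair in enumerate(zip(vs, vs[1:]), start=1)}


VARS = {0: interval_vars(0, "a"), 1: interval_vars(1, "b")}


def psi(xi, xj):
    """Variables whose interval separates xi from xj on attribute a or b."""
    chosen = set()
    for idx, table in VARS.items():
        lo, hi = sorted((DATA[xi][idx], DATA[xj][idx]))
        chosen |= {v for v, (v_lo, v_hi) in table.items() if lo <= v_lo and v_hi <= hi}
    return chosen


ONES = [x for x, row in DATA.items() if row[2] == 1]
ZEROS = [x for x, row in DATA.items() if row[2] == 0]
FORMULAS = {(xi, xj): psi(xi, xj) for xi in ONES for xj in ZEROS}
for pair, variables in FORMULAS.items():
    print(pair, sorted(variables))
```

Each printed set is read as a disjunction: setting any one of the listed cuts is enough to discern that pair.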

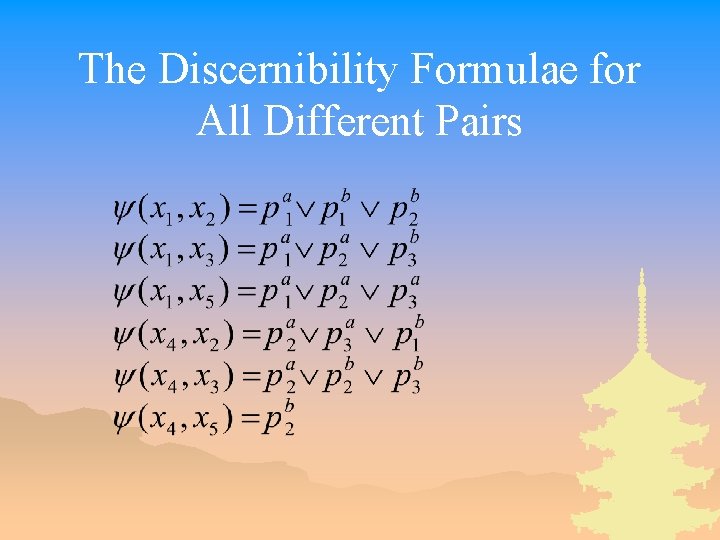

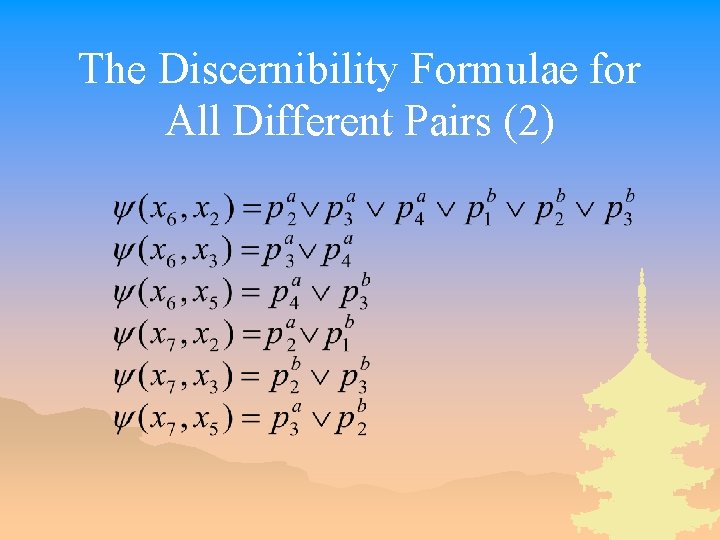

The Discernibility Formulae for All Different Pairs

The Discernibility Formulae for All Different Pairs (2)

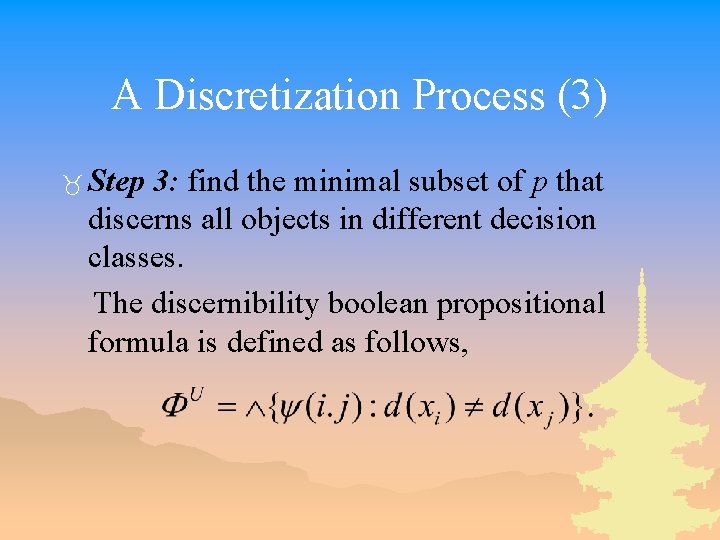

A Discretization Process (3)

- Step 3: find the minimal subset of p that discerns all objects in different decision classes. The discernibility Boolean propositional formula is defined as follows:
  $$\Phi^U = \bigwedge \{\psi(i, j) : d(x_i) \neq d(x_j)\}$$

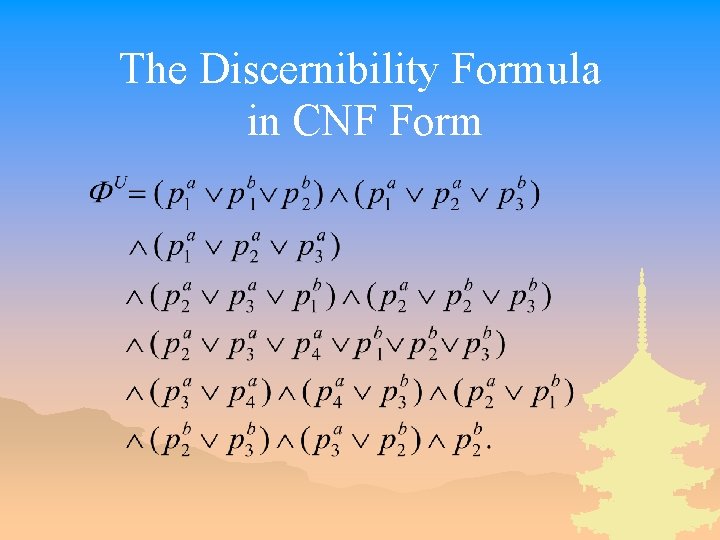

The Discernibility Formula in CNF Form

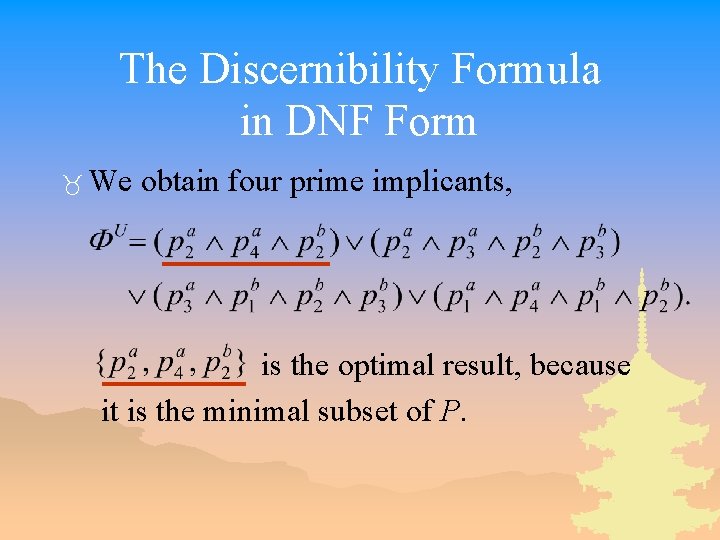

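The Discernibility Formula in DNF Form

- We obtain four prime implicants:
  $$\Phi^U = (p^a_2 \wedge p^a_4 \wedge p^b_2) \vee (p^a_2 \wedge p^a_3 \wedge p^b_2 \wedge p^b_3) \vee (p^a_3 \wedge p^b_1 \wedge p^b_2 \wedge p^b_3) \vee (p^a_1 \wedge p^a_4 \wedge p^b_1 \wedge p^b_2)$$
- $\{p^a_2, p^a_4, p^b_2\}$ is the optimal result, because it is the minimal subset of P.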
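For a formula that is a conjunction of disjunctions of positive variables, the prime implicants are exactly the minimal sets of variables that intersect every clause. The sketch below uses that fact with a brute-force search; it is an illustration under that assumption, not the procedure of the slides. It recomputes the clauses from the sample table as read from the slides and writes $p^a_2$ as `"p2a"`; all names are illustrative.

```python
from itertools import combinations

# (a, b, d) per object, as read from the sample table.
DATA = {
    "x1": (0.8, 2.0, 1), "x2": (1.0, 0.5, 0), "x3": (1.3, 3.0, 0),
    "x4": (1.4, 1.0, 1), "x5": (1.4, 2.0, 0), "x6": (1.6, 3.0, 1),
    "x7": (1.3, 1.0, 1),
}


def interval_vars(idx, name):
    """One variable per interval between consecutive observed values."""
    vs = sorted({row[idx] for row in DATA.values()})
    return {f"p{k}{name}": pair for k, pair in enumerate(zip(vs, vs[1:]), start=1)}


VARS = {0: interval_vars(0, "a"), 1: interval_vars(1, "b")}


def psi(xi, xj):
    """Variables whose interval separates xi from xj on attribute a or b."""
    chosen = set()
    for idx, table in VARS.items():
        lo, hi = sorted((DATA[xi][idx], DATA[xj][idx]))
        chosen |= {v for v, (v_lo, v_hi) in table.items() if lo <= v_lo and v_hi <= hi}
    return chosen


# One clause per pair of objects with different decisions.
CLAUSES = [psi(xi, xj) for xi, xj in combinations(DATA, 2) if DATA[xi][2] != DATA[xj][2]]
ALL_VARS = sorted(set().union(*CLAUSES))


def prime_implicants(clauses, variables):
    """Minimal variable sets that intersect every clause (smallest first)."""
    found = []
    for k in range(1, len(variables) + 1):
        for cand in combinations(variables, k):
            s = set(cand)
            if all(s & c for c in clauses) and not any(f <= s for f in found):
                found.append(s)
    return found


IMPLICANTS = prime_implicants(CLAUSES, ALL_VARS)
BEST = min(IMPLICANTS, key=len)
print(IMPLICANTS)
print(sorted(BEST))
```

The smallest implicant selects one interval per cut, which matches the three cuts chosen in the result.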

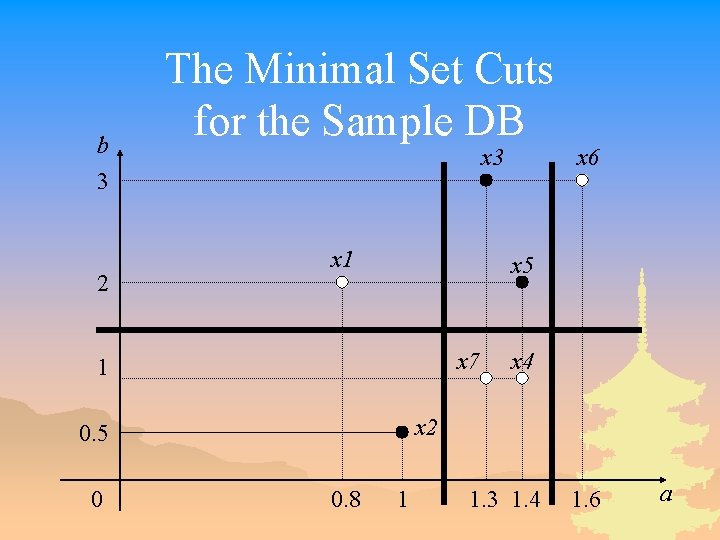

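The Minimal Set Cuts for the Sample DB

[Figure: objects x1 to x7 plotted in the (a, b) plane with the minimal set of cuts drawn in; a-axis marked at 0.8, 1, 1.3, 1.4, 1.6 and b-axis marked at 0.5, 1, 2, 3.]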

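A Result

P = {(a, 1.2), (a, 1.5), (b, 1.5)}

| U | a | b | d | $a^P$ | $b^P$ |
|---|---|---|---|---|---|
| x1 | 0.8 | 2 | 1 | 0 | 1 |
| x2 | 1 | 0.5 | 0 | 0 | 0 |
| x3 | 1.3 | 3 | 0 | 1 | 1 |
| x4 | 1.4 | 1 | 1 | 1 | 0 |
| x5 | 1.4 | 2 | 0 | 1 | 1 |
| x6 | 1.6 | 3 | 1 | 2 | 1 |
| x7 | 1.3 | 1 | 1 | 1 | 0 |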

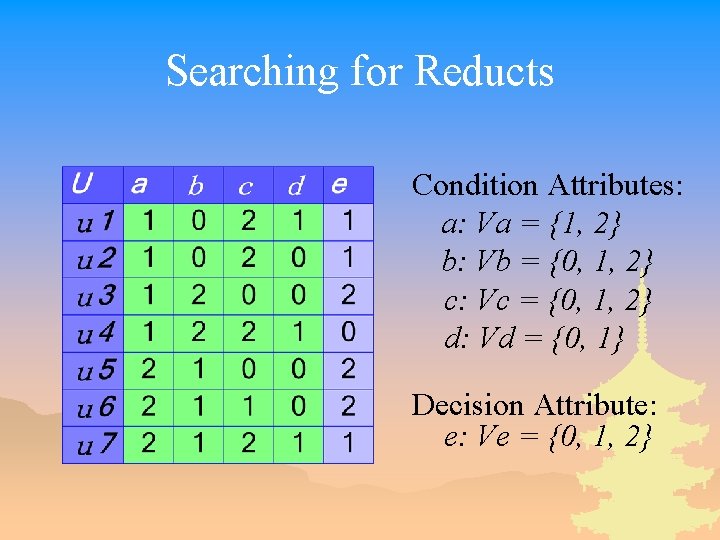

Searching for Reducts

Condition attributes:

- a: Va = {1, 2}
- b: Vb = {0, 1, 2}
- c: Vc = {0, 1, 2}
- d: Vd = {0, 1}

Decision attribute:

- e: Ve = {0, 1, 2}

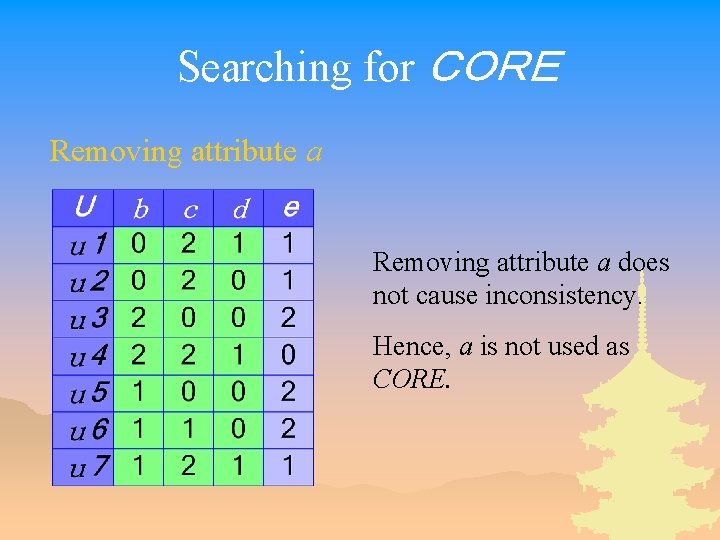

Searching for CORE

Removing attribute a does not cause inconsistency. Hence, a is not part of the CORE.

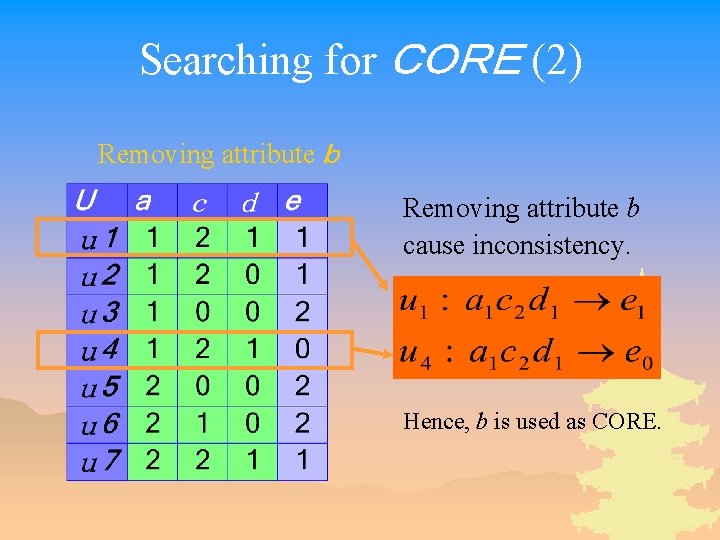

Searching for CORE (2)

Removing attribute b causes inconsistency. Hence, b is part of the CORE.

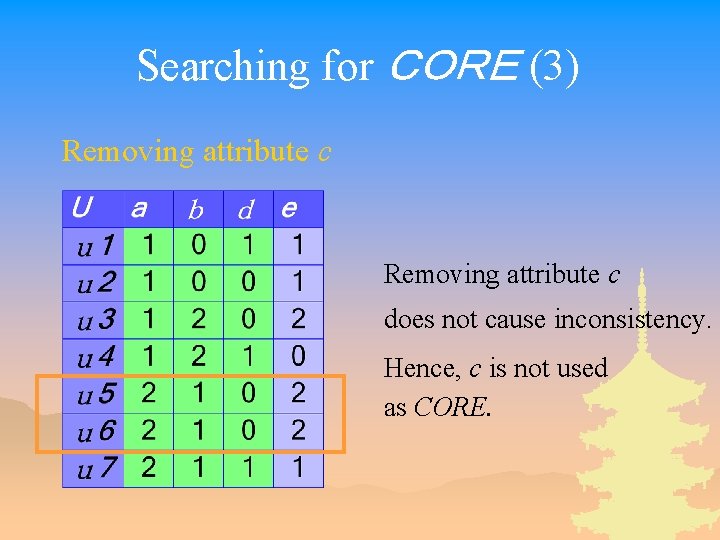

Searching for CORE (3)

Removing attribute c does not cause inconsistency. Hence, c is not part of the CORE.

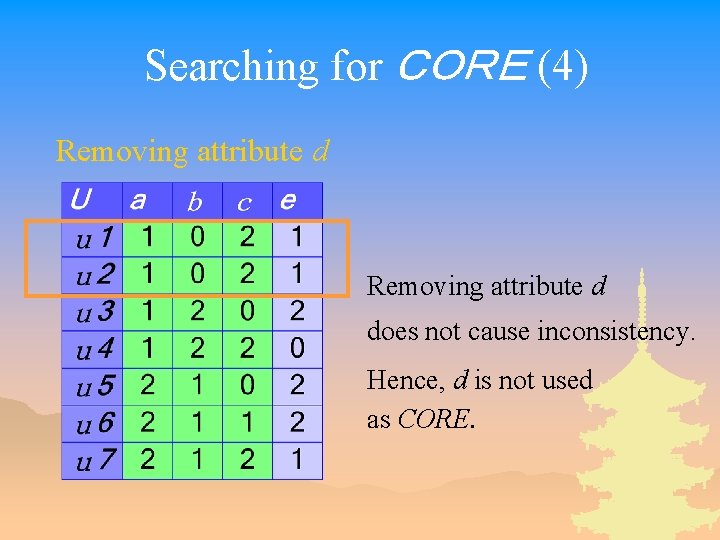

Searching for CORE (4)

Removing attribute d does not cause inconsistency. Hence, d is not part of the CORE.

Searching for CORE (5)

Attribute b is the unique indispensable attribute.

- CORE(C) = {b}
- Initial subset R = {b}
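The removal test used on these slides can be sketched in a few lines. The table for this example is only available as an image, so the sketch below reuses the four-object table from the discernibility-matrix example instead; its core is therefore {b, c}, not the {b} found here. It assumes a consistent table, for which an attribute is indispensable exactly when removing it makes two objects agree on all remaining conditions while differing on the decision. All names are illustrative.

```python
# Four-object table from the discernibility-matrix example (not the table
# of this slide, which is not reproduced in the text).
TABLE = {
    "u1": {"a": "a0", "b": "b1", "c": "c1", "d": "y"},
    "u2": {"a": "a1", "b": "b1", "c": "c0", "d": "n"},
    "u3": {"a": "a0", "b": "b2", "c": "c1", "d": "n"},
    "u4": {"a": "a1", "b": "b1", "c": "c1", "d": "y"},
}
COND = ("a", "b", "c")


def is_consistent(table, attrs, dec="d"):
    """True if equal values on attrs always imply an equal decision."""
    seen = {}
    for row in table.values():
        key = tuple(row[a] for a in attrs)
        # setdefault stores the first decision seen for this key.
        if seen.setdefault(key, row[dec]) != row[dec]:
            return False
    return True


def core(table, cond, dec="d"):
    """Attributes whose removal makes the table inconsistent."""
    return {
        c for c in cond
        if not is_consistent(table, [x for x in cond if x != c], dec)
    }


CORE = core(TABLE, COND)
print(sorted(CORE))
```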

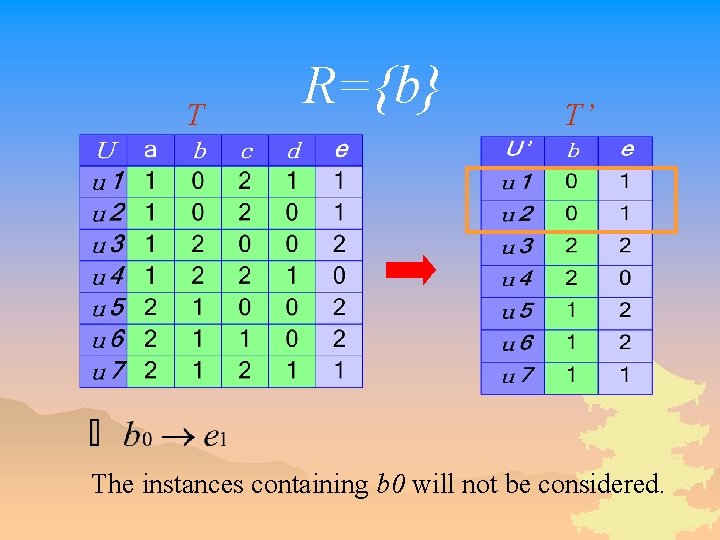

[Figure: table T is reduced to T' using R = {b}.]

The instances containing b0 will not be considered.

Attribute Evaluation Criteria

- Select the attributes that cause the number of consistent instances to increase faster, to obtain a subset of attributes that is as small as possible.
- Select the attribute that has the smaller number of different values, to guarantee that the number of instances covered by a rule is as large as possible.

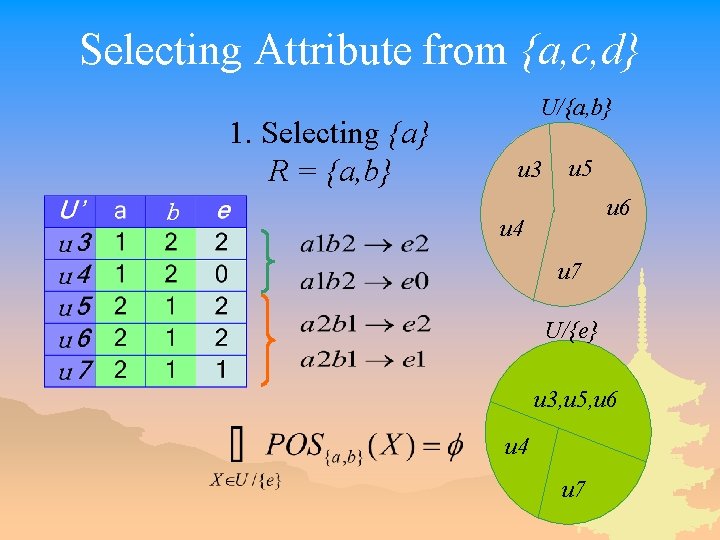

Selecting Attribute from {a, c, d}

1. Selecting {a}: R = {a, b}

[Figure: the partition U/{a, b} of the remaining objects u3, u4, u5, u6, u7, compared with U/{e} = {{u3, u5, u6}, {u4}, {u7}}.]

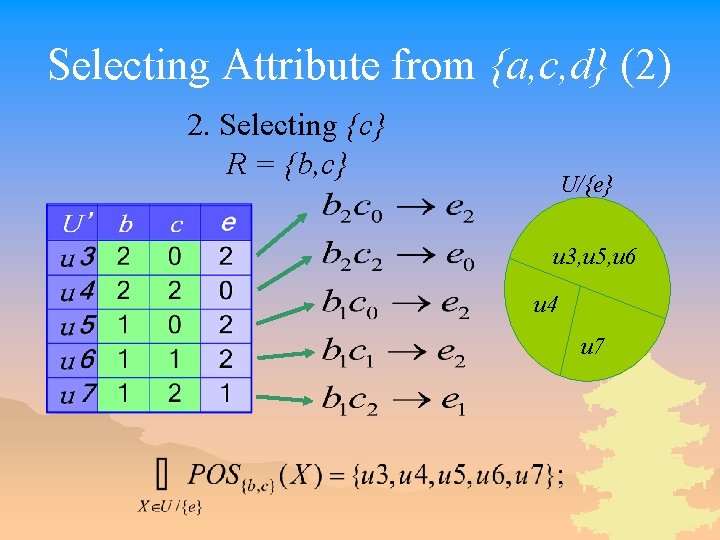

Selecting Attribute from {a, c, d} (2)

2. Selecting {c}: R = {b, c}

[Figure: the partition U/{b, c} compared with U/{e} = {{u3, u5, u6}, {u4}, {u7}}.]

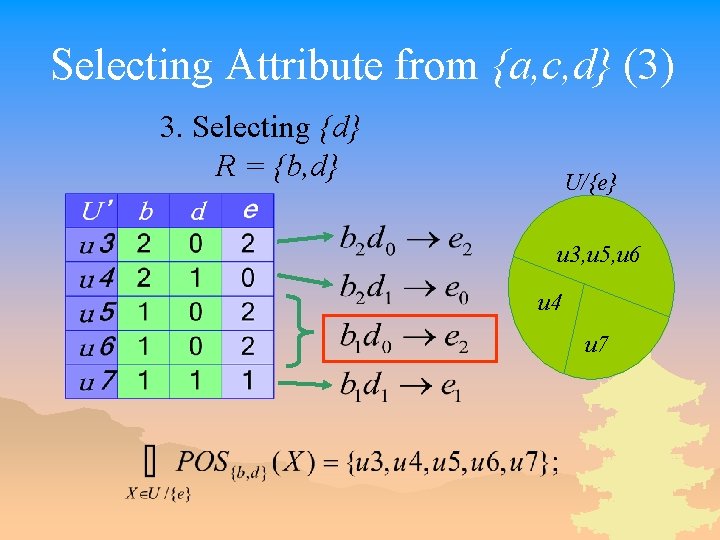

Selecting Attribute from {a, c, d} (3)

3. Selecting {d}: R = {b, d}

[Figure: the partition U/{b, d} compared with U/{e} = {{u3, u5, u6}, {u4}, {u7}}.]

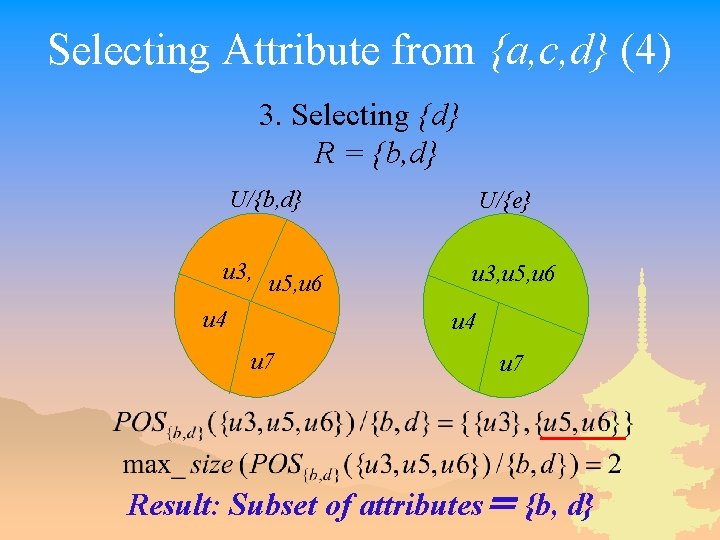

Selecting Attribute from {a, c, d} (4)

3. Selecting {d}: R = {b, d}

[Figure: the partition U/{b, d} compared with U/{e} = {{u3, u5, u6}, {u4}, {u7}}.]

Result: subset of attributes = {b, d}
