Rootkit-based Attacks and Defenses: Past, Present and Future

Rootkit-based Attacks and Defenses: Past, Present and Future
Vinod Ganapathy, Rutgers University (vinodg@cs.rutgers.edu)
Joint work with Liviu Iftode, Arati Baliga, Jeffrey Bickford (Rutgers), Andrés Lagar-Cavilla and Alex Varshavsky (AT&T Research)

What are rootkits? Rootkits = stealthy malware
• Tools used by attackers to conceal their presence on a compromised system
• Typically installed after the attacker has obtained root privileges
• Stealth is achieved by hiding the accompanying malicious user-level programs

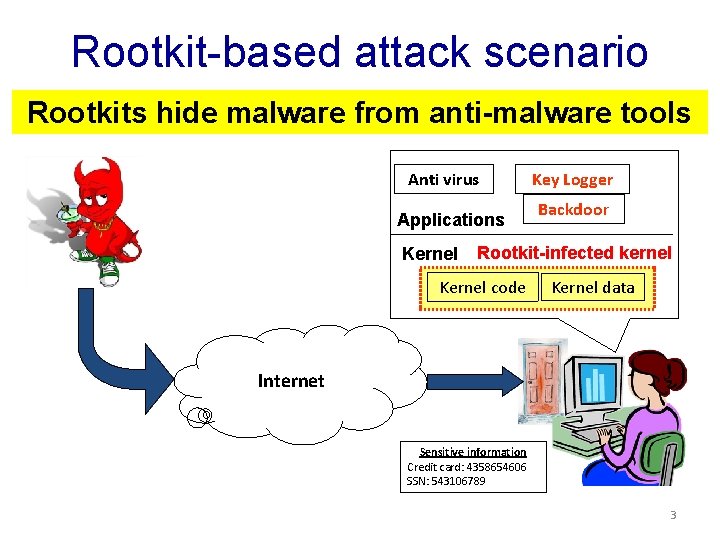

Rootkit-based attack scenario: rootkits hide malware from anti-malware tools
[Figure: a rootkit-infected kernel (kernel code and kernel data) hides a key logger and a backdoor from user-level anti-virus applications, while sensitive information such as credit card numbers and SSNs leaks to the attacker over the Internet.]

Significance of the problem
• Microsoft reported that 7% of all infections on client machines were caused by rootkits (2010).
• Rootkits are the vehicle of choice for botnet-based attacks, e.g., Torpig and Storm.
  – They allow bot-masters to retain long-term control.
• A number of high-profile cases involved rootkits:
  – Stuxnet (2010), Sony BMG (2005), Greek wiretapping scandal (2004/5)

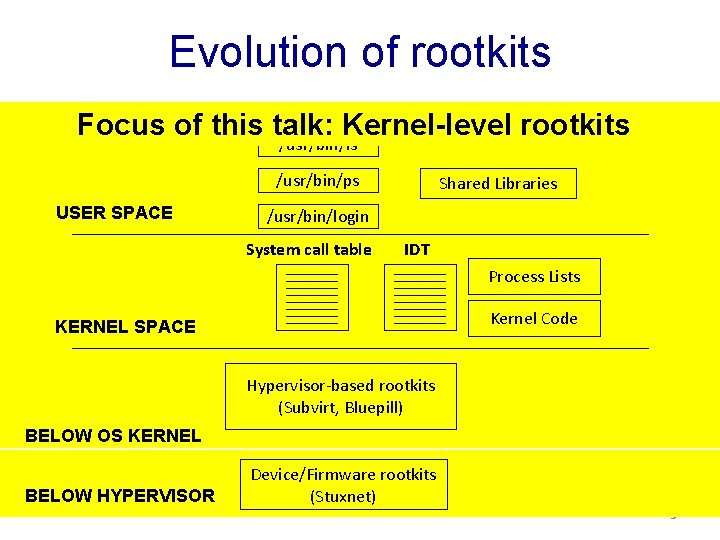

Evolution of rootkits
[Figure: the layers rootkits have targeted over time. User space: system binaries (/usr/bin/ls, /usr/bin/ps, /usr/bin/login) and shared libraries. Kernel space: system call table, IDT, process lists, kernel code (focus of this talk: kernel-level rootkits). Below the OS kernel: hypervisor-based rootkits (SubVirt, Blue Pill). Below the hypervisor: device/firmware rootkits (Stuxnet).]

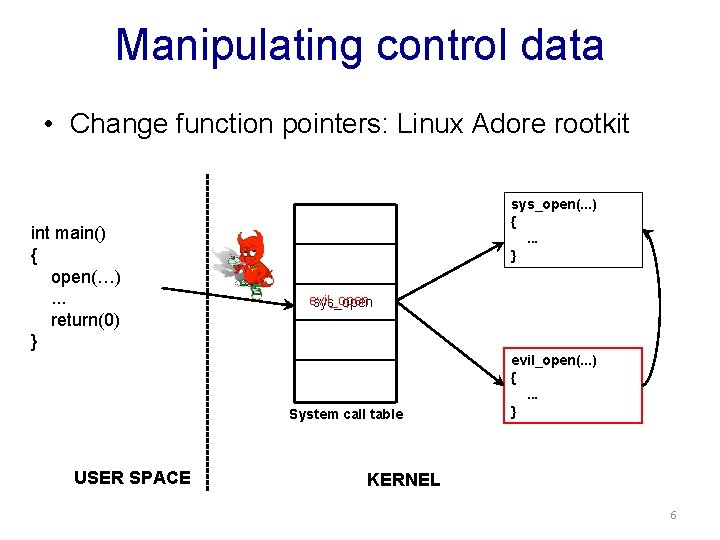

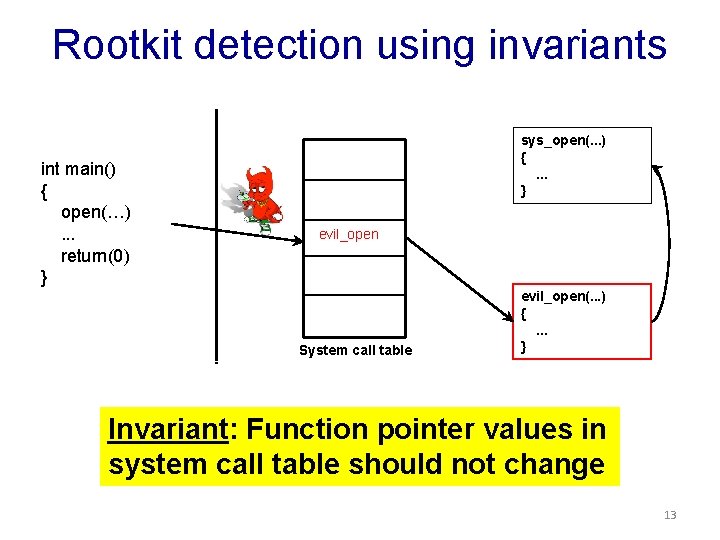

Manipulating control data
• Change function pointers: Linux Adore rootkit
[Figure: a user-space program calls open(…); the system call table entry that pointed to sys_open(…) in the kernel has been overwritten to point to the rootkit's evil_open(…).]

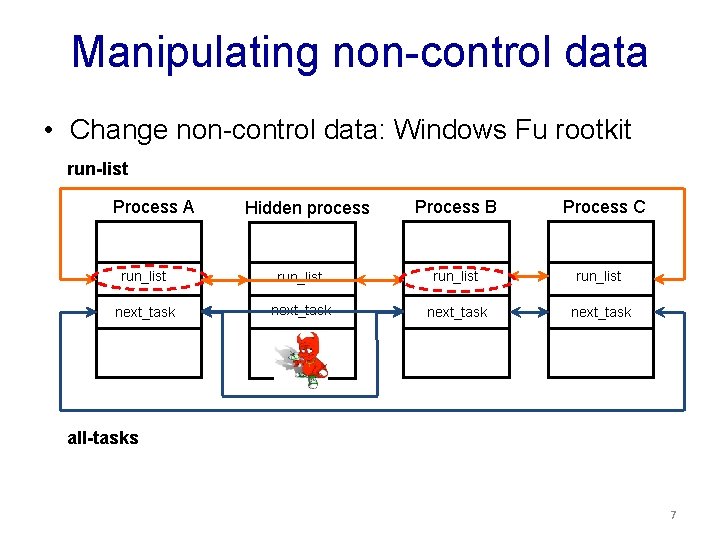

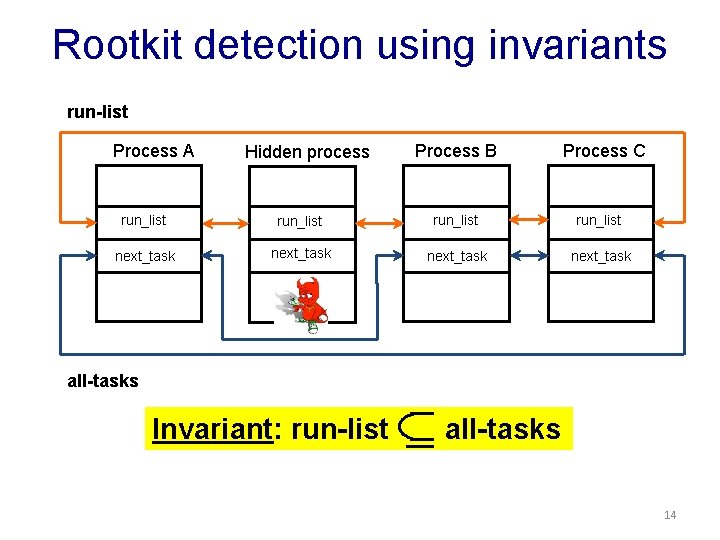

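Manipulating non-control data
• Change non-control data: Windows Fu rootkit
[Figure: processes A, B and C and a hidden process, linked on the run-list (run_list) and on the all-tasks list (next_task); the hidden process has been unlinked from all-tasks but remains on the run-list.]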

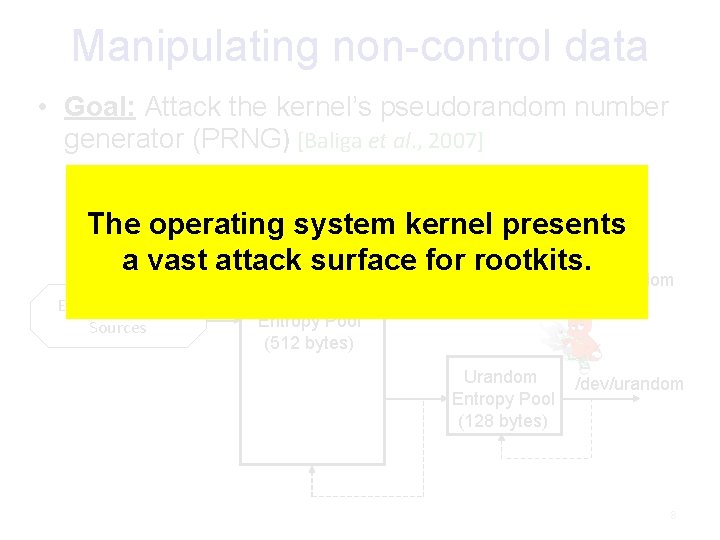

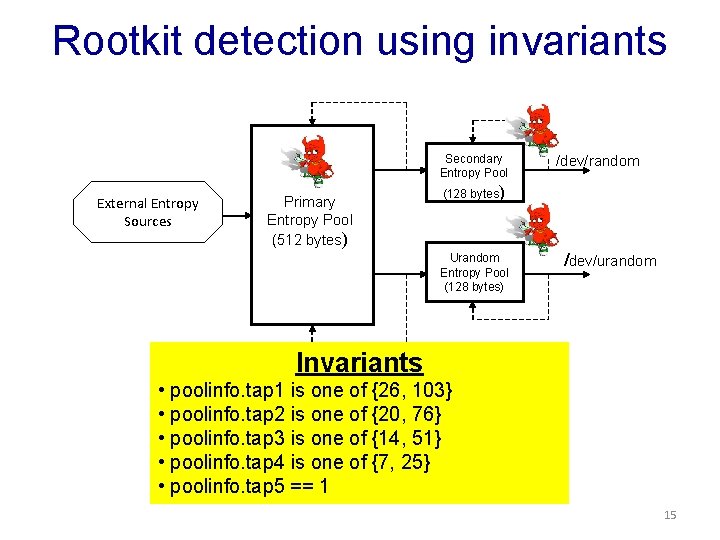

Manipulating non-control data
• Goal: attack the kernel's pseudorandom number generator (PRNG) [Baliga et al., 2007]
The operating system kernel presents a vast attack surface for rootkits.
[Figure: external entropy sources feed the primary entropy pool (512 bytes), which feeds the secondary entropy pool (128 bytes, read through /dev/random) and the urandom entropy pool (128 bytes, read through /dev/urandom).]

Detecting rootkits: Main idea
• Observation: rootkits operate by maliciously modifying kernel data structures
  – Modify function pointers to hijack control flow
  – Modify process lists to hide malicious processes
  – Modify polynomials to corrupt the output of the PRNG
• Approach: continuously monitor the integrity of kernel data structures

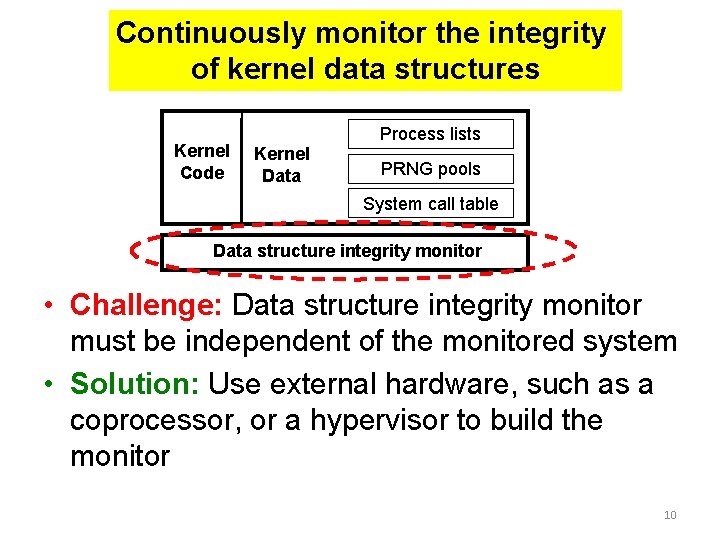

Continuously monitor the integrity of kernel data structures
[Figure: a data structure integrity monitor observes the kernel's code and data, including process lists, PRNG pools and the system call table.]
• Challenge: the data structure integrity monitor must be independent of the monitored system
• Solution: use external hardware, such as a coprocessor, or a hypervisor to build the monitor

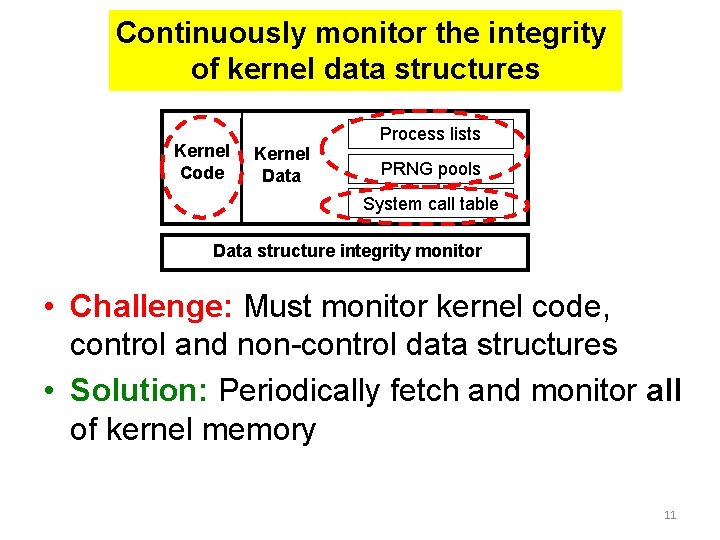

Continuously monitor the integrity of kernel data structures
• Challenge: the monitor must cover kernel code as well as control and non-control data structures
• Solution: periodically fetch and monitor all of kernel memory

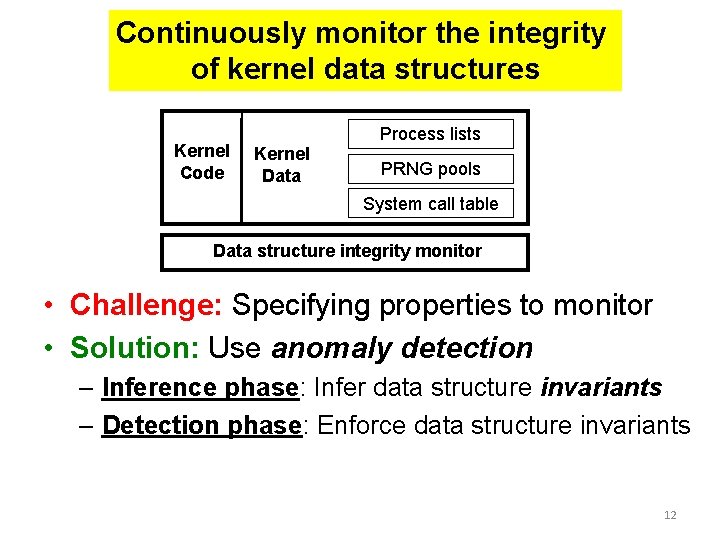

Continuously monitor the integrity of kernel data structures
• Challenge: specifying the properties to monitor
• Solution: use anomaly detection
  – Inference phase: infer data structure invariants
  – Detection phase: enforce data structure invariants

Rootkit detection using invariants
[Figure: the Adore-style hook, in which the system call table entry for open is redirected from sys_open(…) to evil_open(…).]
Invariant: function pointer values in the system call table should not change
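To make this invariant concrete, here is a minimal Python sketch that models the system call table as a dictionary of handler functions. The names `sys_open`, `evil_open`, `check_invariant` and the `/hidden` path prefix are hypothetical; object identity stands in for the function pointer value that a real monitor would read out of kernel memory.

```python
from typing import Callable, Dict, List

Handler = Callable[[str], str]


def sys_open(path: str) -> str:
    """Stand-in for the legitimate kernel handler (hypothetical)."""
    return f"opened {path}"


def evil_open(path: str) -> str:
    """Stand-in for an Adore-style hook: hide some files, forward the rest."""
    if path.startswith("/hidden"):
        return "ENOENT"
    return sys_open(path)


def check_invariant(baseline: Dict[str, Handler], table: Dict[str, Handler]) -> List[str]:
    """Return the syscall names whose entry no longer holds the baseline 'pointer'."""
    return sorted(name for name, fn in baseline.items() if table.get(name) is not fn)


table: Dict[str, Handler] = {"open": sys_open}
baseline = dict(table)  # inference phase: record the entries of a clean kernel

print(check_invariant(baseline, table))  # []
table["open"] = evil_open  # the rootkit overwrites the function pointer
print(check_invariant(baseline, table))  # ['open']
```

Because the check compares entry values rather than analysing what the hook does, any redirection of the entry is flagged.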

Rootkit detection using invariants
[Figure: the hidden process appears on the run-list but not on the all-tasks list.]
Invariant: run-list ⊆ all-tasks
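The Python sketch below models a Fu-style unlinking attack on a Linux-like all-tasks list and the subset check that exposes it. The `Task` class, its fields and the helper names are hypothetical simplifications: a real monitor reconstructs both lists from raw kernel memory, whereas here the run-list is just a Python list of task objects.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Set


@dataclass(eq=False)
class Task:
    """A toy task_struct (hypothetical): a pid plus the all-tasks links."""
    pid: int
    next_task: Optional["Task"] = field(default=None, repr=False)
    prev_task: Optional["Task"] = field(default=None, repr=False)


def link_all_tasks(tasks: List[Task]) -> Task:
    """Chain tasks into a circular doubly linked all-tasks list; return its head."""
    for i, t in enumerate(tasks):
        t.next_task = tasks[(i + 1) % len(tasks)]
        t.prev_task = tasks[i - 1]
    return tasks[0]


def walk(head: Task) -> List[int]:
    """Enumerate pids the way a tool like ps would, by following next_task."""
    pids, t = [], head
    while True:
        pids.append(t.pid)
        t = t.next_task
        if t is head:
            return pids


def unlink(t: Task) -> None:
    """DKOM-style hiding: splice t out of all-tasks; the scheduler still runs it."""
    t.prev_task.next_task = t.next_task
    t.next_task.prev_task = t.prev_task


def hidden_pids(run_list: List[Task], head: Task) -> Set[int]:
    """Check the invariant run-list ⊆ all-tasks; return the pids that violate it."""
    return {t.pid for t in run_list} - set(walk(head))


tasks = [Task(100), Task(666), Task(200), Task(300)]
head = link_all_tasks(tasks)
run_list = list(tasks)  # all four tasks are runnable
print(hidden_pids(run_list, head))  # set()
unlink(tasks[1])  # hide pid 666 (this sketch never unlinks the head)
print(walk(head))  # [100, 200, 300]
print(hidden_pids(run_list, head))  # {666}
```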

Rootkit detection using invariants
[Figure: the PRNG's primary (512-byte), secondary (128-byte, /dev/random) and urandom (128-byte, /dev/urandom) entropy pools, fed by external entropy sources.]
Invariants:
• poolinfo.tap1 is one of {26, 103}
• poolinfo.tap2 is one of {20, 76}
• poolinfo.tap3 is one of {14, 51}
• poolinfo.tap4 is one of {7, 25}
• poolinfo.tap5 == 1
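A minimal Python sketch of how an enforcer could check these particular invariants against one reconstructed `struct poolinfo`. The field names and allowed values follow the slide; the snapshot values, including the "zeroed taps" attack, are hypothetical.

```python
from typing import Dict, List, Optional, Set

# Invariants from the slide: each poolinfo field must take one of the listed values.
POOLINFO_INVARIANTS: Dict[str, Set[int]] = {
    "tap1": {26, 103},
    "tap2": {20, 76},
    "tap3": {14, 51},
    "tap4": {7, 25},
    "tap5": {1},  # "tap5 == 1" expressed as a one-element set
}


def violated_taps(poolinfo: Dict[str, int]) -> List[str]:
    """Return the poolinfo fields whose current value breaks an invariant."""
    violations = []
    for name, allowed in POOLINFO_INVARIANTS.items():
        value: Optional[int] = poolinfo.get(name)  # None if the field is missing
        if value not in allowed:
            violations.append(name)
    return violations


# Hypothetical snapshots: a clean one, and one where a rootkit zeroed the taps.
clean = {"tap1": 103, "tap2": 76, "tap3": 51, "tap4": 25, "tap5": 1}
tampered = {"tap1": 0, "tap2": 0, "tap3": 0, "tap4": 0, "tap5": 1}
print(violated_taps(clean))     # []
print(violated_taps(tampered))  # ['tap1', 'tap2', 'tap3', 'tap4']
```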

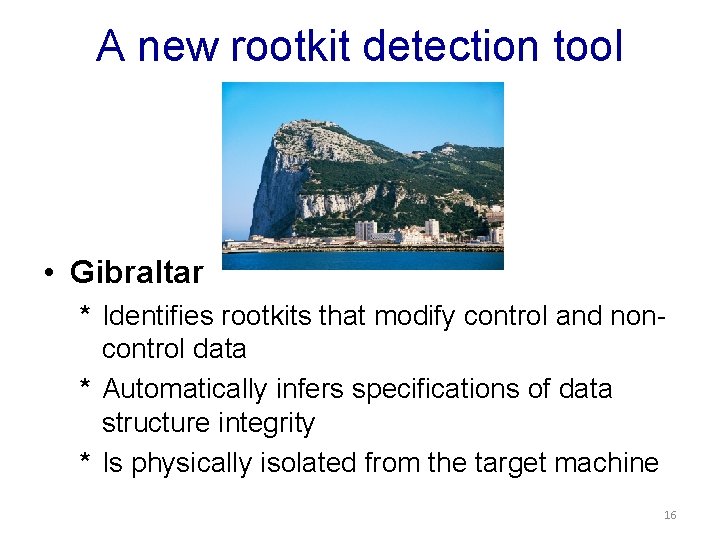

A new rootkit detection tool: Gibraltar
• Identifies rootkits that modify control and non-control data
• Automatically infers specifications of data structure integrity
• Is physically isolated from the target machine

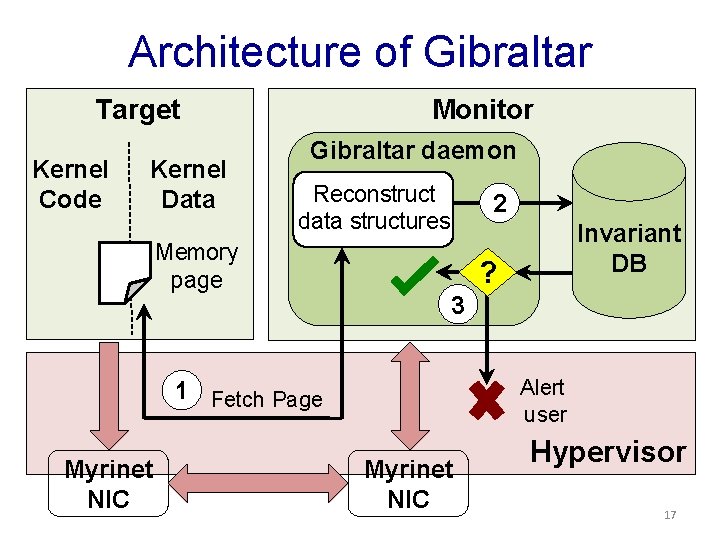

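Architecture of Gibraltar
[Figure: the target machine (kernel code, kernel data) and a separate monitor machine are connected through Myrinet NICs. The Gibraltar daemon on the monitor (1) fetches a memory page from the target, (2) reconstructs data structures and checks them against the invariant DB, and (3) alerts the user if an invariant is violated. Alternatively, the monitor can run on a hypervisor.]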

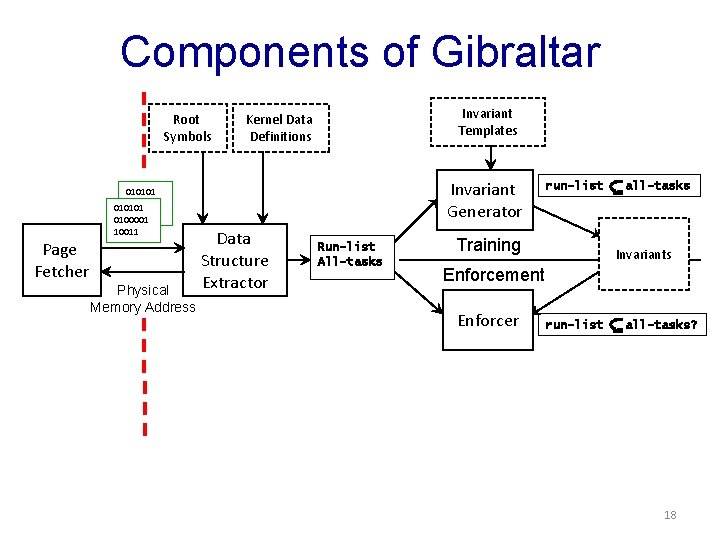

Components of Gibraltar
[Figure: the page fetcher reads target memory pages by physical address; the data structure extractor uses root symbols and kernel data definitions to rebuild structures such as the run-list and the all-tasks list; during training, the invariant generator applies invariant templates to these structures to produce invariants (e.g., run-list ⊆ all-tasks); during enforcement, the enforcer checks whether they still hold.]

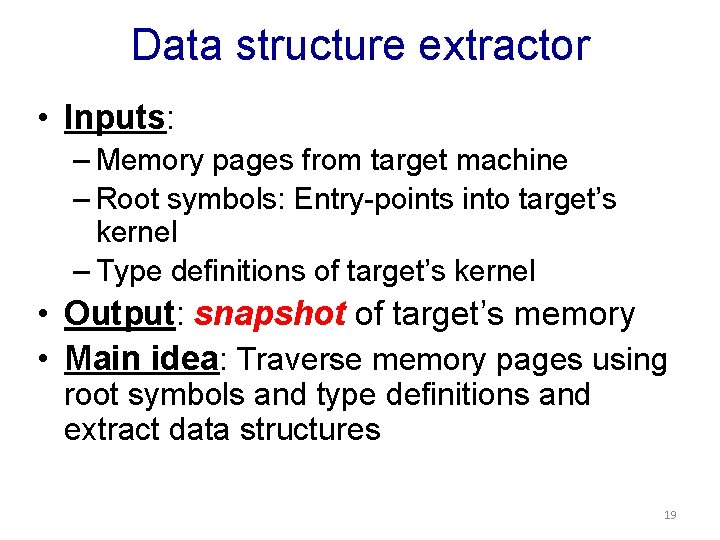

Data structure extractor
• Inputs:
  – Memory pages from the target machine
  – Root symbols: entry points into the target's kernel
  – Type definitions of the target's kernel
• Output: a snapshot of the target's memory
• Main idea: traverse memory pages using root symbols and type definitions, and extract data structures
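The traversal can be sketched in Python as a breadth-first walk over a flat memory image. Everything here is a simplifying assumption: addresses index directly into the byte buffer (no virtual-to-physical translation), each type definition is a hypothetical list of `(field, offset, struct format, pointee type)` entries, and `init_task` serves as an example root symbol.

```python
import struct
from collections import deque
from typing import Deque, Dict, List, Optional, Tuple

# type name -> [(field name, byte offset, struct format, pointee type or None)]
TypeDefs = Dict[str, List[Tuple[str, int, str, Optional[str]]]]
Snapshot = Dict[int, Tuple[str, Dict[str, int]]]

TYPES: TypeDefs = {
    # A toy task: 32-bit pid followed by a 32-bit next_task pointer (hypothetical layout).
    "task": [("pid", 0, "<I", None), ("next_task", 4, "<I", "task")],
}


def extract(memory: bytes, roots: Dict[str, Tuple[int, str]], types: TypeDefs) -> Snapshot:
    """Follow pointers from the root symbols; return {address: (type, fields)}."""
    snapshot: Snapshot = {}
    queue: Deque[Tuple[int, str]] = deque(roots.values())
    while queue:
        addr, tname = queue.popleft()
        if addr == 0 or addr in snapshot:  # NULL pointer or already visited
            continue
        fields: Dict[str, int] = {}
        for fname, offset, fmt, pointee in types[tname]:
            (value,) = struct.unpack_from(fmt, memory, addr + offset)
            fields[fname] = value
            if pointee is not None:
                queue.append((value, pointee))  # visit the pointed-to object later
        snapshot[addr] = (tname, fields)
    return snapshot


# Hypothetical 64-byte memory image holding a two-element circular task list.
mem = bytearray(64)
struct.pack_into("<II", mem, 16, 1, 32)  # task at 16: pid 1, next_task -> 32
struct.pack_into("<II", mem, 32, 2, 16)  # task at 32: pid 2, next_task -> 16
snap = extract(bytes(mem), {"init_task": (16, "task")}, TYPES)
for addr, (tname, fields) in sorted(snap.items()):
    print(hex(addr), tname, fields)
```

Recording visited addresses is what keeps the walk finite on circular kernel lists.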

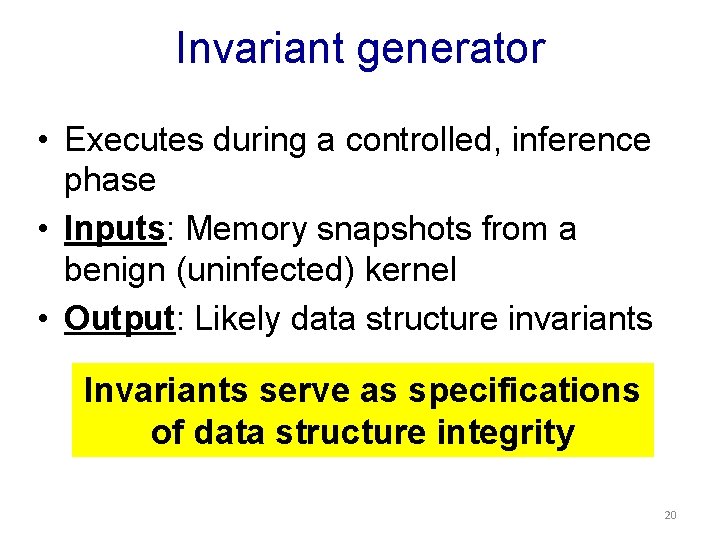

Invariant generator
• Executes during a controlled inference phase
• Inputs: memory snapshots from a benign (uninfected) kernel
• Output: likely data structure invariants
Invariants serve as specifications of data structure integrity.


Invariant generator
• Used an off-the-shelf tool: Daikon [Ernst et al., 2000]
• Daikon observes the execution of user-space programs and hypothesizes likely invariants
• We adapted Daikon to reason about snapshots:
  – Obtain snapshots at different times during training
  – Hypothesize likely invariants across snapshots
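This is not Daikon. It is a hypothetical Python sketch of just two Daikon-style invariant templates (a constant, and a small "one of" set) applied across snapshots instead of program points. The limit of three distinct values, the variable names and the training data are assumptions, and Daikon's statistical confidence checks are omitted.

```python
from typing import Dict, List, Set

Snapshot = Dict[str, int]  # flattened variable path -> value, e.g. "poolinfo.tap5"


def infer_invariants(snapshots: List[Snapshot], max_values: int = 3) -> Dict[str, Set[int]]:
    """Hypothesize 'x == c' / 'x is one of {...}' invariants across snapshots.

    A variable gets an invariant only if it appears in every snapshot and takes
    at most max_values distinct values; a one-element set means 'x == c'.
    """
    if not snapshots:
        return {}
    common = set(snapshots[0]).intersection(*snapshots[1:])
    invariants: Dict[str, Set[int]] = {}
    for var in sorted(common):
        values = {snap[var] for snap in snapshots}
        if len(values) <= max_values:
            invariants[var] = values
    return invariants


# Hypothetical training snapshots taken at different times on a benign kernel.
training = [
    {"poolinfo.tap1": 103, "poolinfo.tap5": 1, "jiffies": 1000},
    {"poolinfo.tap1": 26, "poolinfo.tap5": 1, "jiffies": 2000},
    {"poolinfo.tap1": 103, "poolinfo.tap5": 1, "jiffies": 3000},
    {"poolinfo.tap1": 26, "poolinfo.tap5": 1, "jiffies": 4000},
]
for var, values in infer_invariants(training).items():
    if len(values) == 1:
        print(f"{var} == {next(iter(values))}")
    else:
        print(f"{var} is one of {sorted(values)}")
```

The ever-changing counter yields no invariant, which is the behaviour one wants: only values that stay stable across training become specifications.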

Invariant enforcer
• Observes and enforces invariants on the target's execution
• Inputs:
  – Invariants inferred during training
  – Memory pages from the target
• Algorithm:
  – Extract snapshots of the target's data structures
  – Enforce invariants
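The enforcement loop can be sketched in Python as follows, assuming invariants are stored as "variable must take one of these values" sets and that snapshots arrive already extracted. The function names, the `alert` callback and the `max_threads` value of 4096 are hypothetical.

```python
from typing import Callable, Dict, Iterable, List, Optional, Set, Tuple

Invariants = Dict[str, Set[int]]  # variable -> allowed values
Violation = Tuple[str, Optional[int], Set[int]]


def enforce(invariants: Invariants, snapshot: Dict[str, int]) -> List[Violation]:
    """Check one snapshot; return (variable, observed, allowed) for each violation."""
    violations: List[Violation] = []
    for var in sorted(invariants):
        observed = snapshot.get(var)  # None if the structure could not be reconstructed
        if observed not in invariants[var]:
            violations.append((var, observed, invariants[var]))
    return violations


def run_enforcer(invariants: Invariants,
                 snapshots: Iterable[Dict[str, int]],
                 alert: Callable[[int, Violation], None]) -> int:
    """Enforce invariants on successive snapshots; return the number of alerts raised."""
    alerts = 0
    for round_no, snapshot in enumerate(snapshots):
        for violation in enforce(invariants, snapshot):
            alert(round_no, violation)
            alerts += 1
    return alerts


# Hypothetical data: the second snapshot shows max_threads lowered,
# as an "intrinsic DOS" rootkit might do.
invariants = {"max_threads": {4096}, "poolinfo.tap5": {1}}
snapshots = [
    {"max_threads": 4096, "poolinfo.tap5": 1},
    {"max_threads": 1, "poolinfo.tap5": 1},
]
log: List[Tuple[int, str]] = []
count = run_enforcer(invariants, snapshots, lambda r, v: log.append((r, v[0])))
print(count, log)
```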

Experimental evaluation
① How effective is Gibraltar at detecting rootkits, i.e., what is the false negative rate?
② What is the quality of the automatically generated invariants, i.e., what is the false positive rate?

Experimental setup
• Implemented on an Intel Xeon 2.80 GHz machine with 1 GB of memory, running Linux 2.4.20
• Fetched memory pages using a Myrinet PCI card
  – We also have a Xen-based implementation.
• Obtained invariants by training the system on several benign workloads

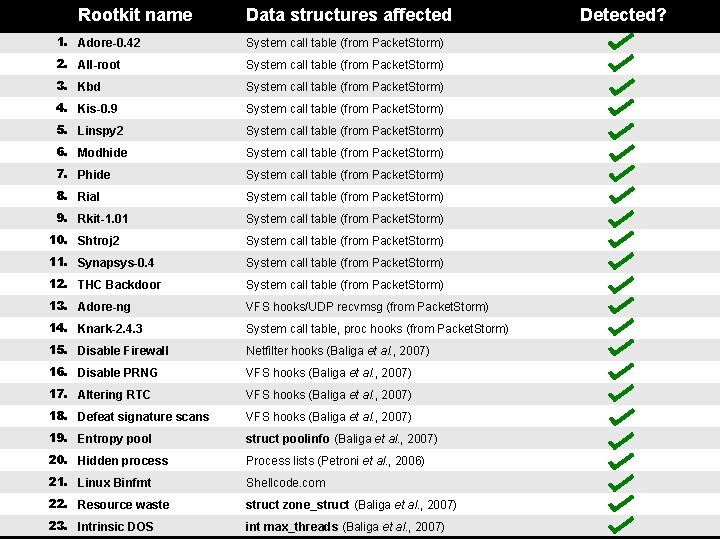

① False negative evaluation
• Conducted experiments with 23 Linux rootkits:
  – 14 rootkits from PacketStorm
  – 9 advanced rootkits discussed in the literature
• All rootkits modify kernel control and non-control data
• Installed the rootkits one at a time and tested whether Gibraltar detected each infection

| # | Rootkit name | Data structures affected | Source |
|---|---|---|---|
| 1 | Adore-0.42 | System call table | PacketStorm |
| 2 | All-root | System call table | PacketStorm |
| 3 | Kbd | System call table | PacketStorm |
| 4 | Kis-0.9 | System call table | PacketStorm |
| 5 | Linspy2 | System call table | PacketStorm |
| 6 | Modhide | System call table | PacketStorm |
| 7 | Phide | System call table | PacketStorm |
| 8 | Rial | System call table | PacketStorm |
| 9 | Rkit-1.01 | System call table | PacketStorm |
| 10 | Shtroj2 | System call table | PacketStorm |
| 11 | Synapsys-0.4 | System call table | PacketStorm |
| 12 | THC Backdoor | System call table | PacketStorm |
| 13 | Adore-ng | VFS hooks, UDP recvmsg | PacketStorm |
| 14 | Knark-2.4.3 | System call table, proc hooks | PacketStorm |
| 15 | Disable Firewall | Netfilter hooks | Baliga et al., 2007 |
| 16 | Disable PRNG | VFS hooks | Baliga et al., 2007 |
| 17 | Altering RTC | VFS hooks | Baliga et al., 2007 |
| 18 | Defeat signature scans | VFS hooks | Baliga et al., 2007 |
| 19 | Entropy pool | struct poolinfo | Baliga et al., 2007 |
| 20 | Hidden process | Process lists | Petroni et al., 2006 |
| 21 | Linux Binfmt | | Shellcode.com |
| 22 | Resource waste | struct zone_struct | Baliga et al., 2007 |
| 23 | Intrinsic DOS | int max_threads | Baliga et al., 2007 |

November 30, 2009

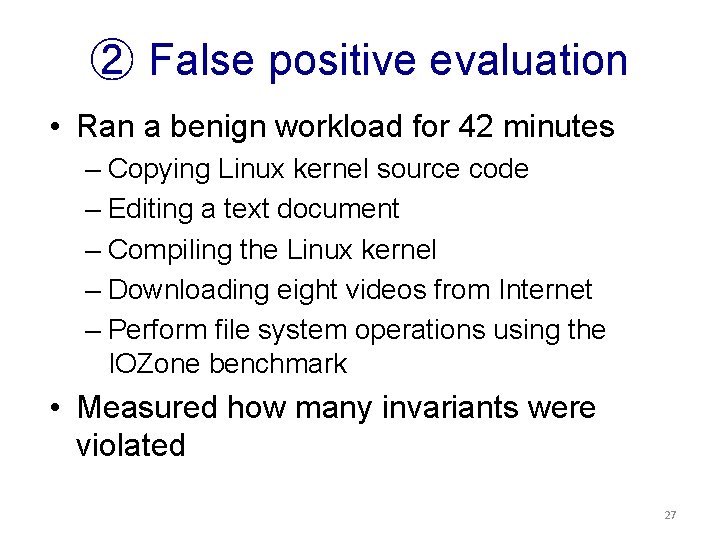

② False positive evaluation
• Ran a benign workload for 42 minutes:
  – Copying the Linux kernel source code
  – Editing a text document
  – Compiling the Linux kernel
  – Downloading eight videos from the Internet
  – Performing file system operations using the IOzone benchmark
• Measured how many invariants were violated

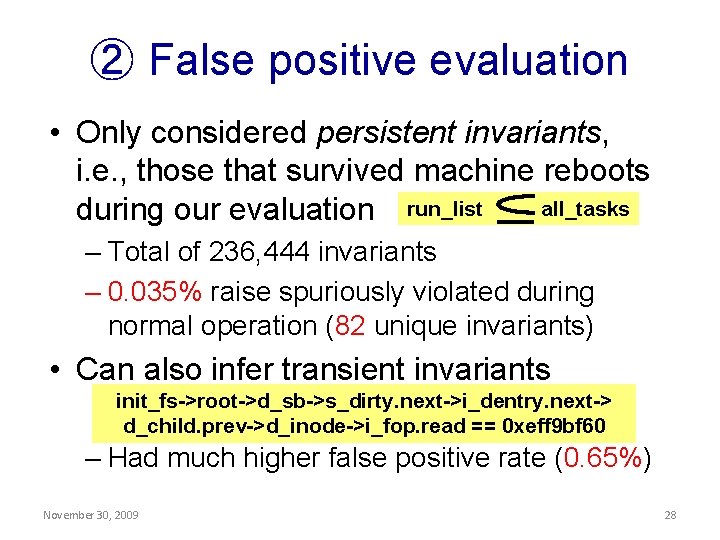

② False positive evaluation
• Only considered persistent invariants, i.e., those that survived machine reboots during our evaluation
  – Total of 236,444 invariants
  – 0.035% were spuriously violated during normal operation (82 unique invariants)
• Can also infer transient invariants, e.g.,
  init_fs->root->d_sb->s_dirty.next->i_dentry.next->d_child.prev->d_inode->i_fop.read == 0xeff9bf60
  – These had a much higher false positive rate (0.65%)
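As a quick arithmetic check, assuming the 82 unique invariants are exactly the violated ones behind the quoted percentage, the persistent-invariant false positive rate follows from the two totals on the slide:

```python
# Figures from the slide: 236,444 persistent invariants, 82 spuriously violated.
total_invariants = 236_444
spurious = 82

rate_percent = 100 * spurious / total_invariants
print(f"{rate_percent:.3f}%")  # 0.035%
```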

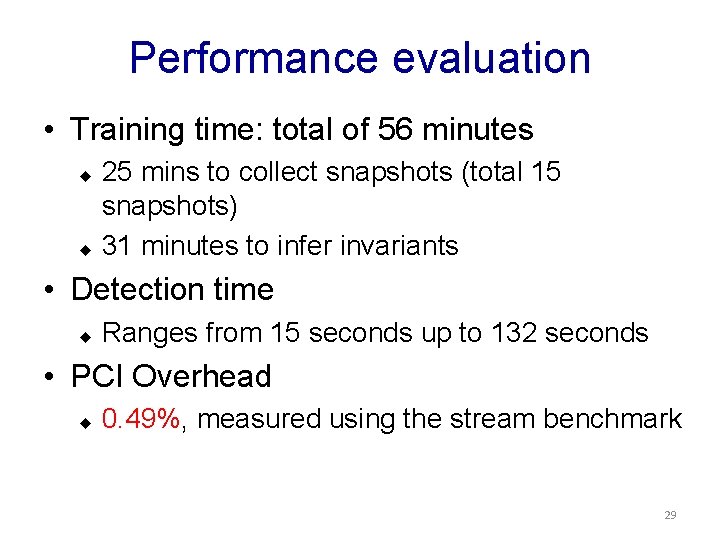

Performance evaluation
• Training time: 56 minutes in total
  – 25 minutes to collect snapshots (15 snapshots in total)
  – 31 minutes to infer invariants
• Detection time: ranges from 15 seconds to 132 seconds
• PCI overhead: 0.49%, measured using the STREAM benchmark

Part 1: The past and present. Detecting Kernel-Level Rootkits using Data Structure Invariants
Part 2: The future. Security versus Energy Tradeoffs for Host-based Mobile Rootkit Detection

The rise of mobile malware
• Mobile devices are increasingly ubiquitous:
  – They store personal and contextual information.
  – They are used for sensitive tasks, e.g., online banking.
• Mobile malware has immense potential to cause societal damage.
• Kaspersky Labs report (2009):
  – 106 types of mobile malware
  – 514 variants
• Prediction: we have only seen the tip of the iceberg.

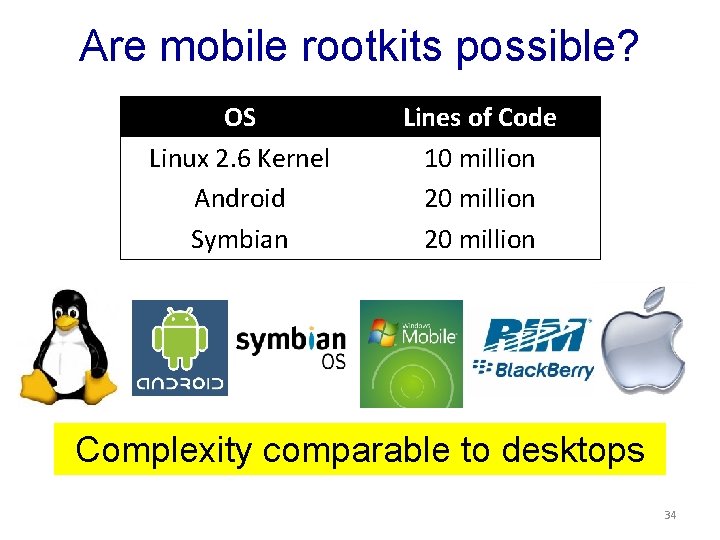

Are mobile rootkits possible?

| OS | Lines of code |
|---|---|
| Linux 2.6 kernel (Android) | 10 million |
| Symbian | 20 million |

Complexity comparable to desktops.

The threat of mobile rootkits
• Several recent reports of mobile malware gaining root access
• iPhone:
  – iKee.A, iKee.B (2009)
  – Exploited jailbroken iPhones via SSH
• Android:
  – GingerMaster, DroidDeluxe, DroidKungFu (2011)
  – Apps that perform root exploits against Android

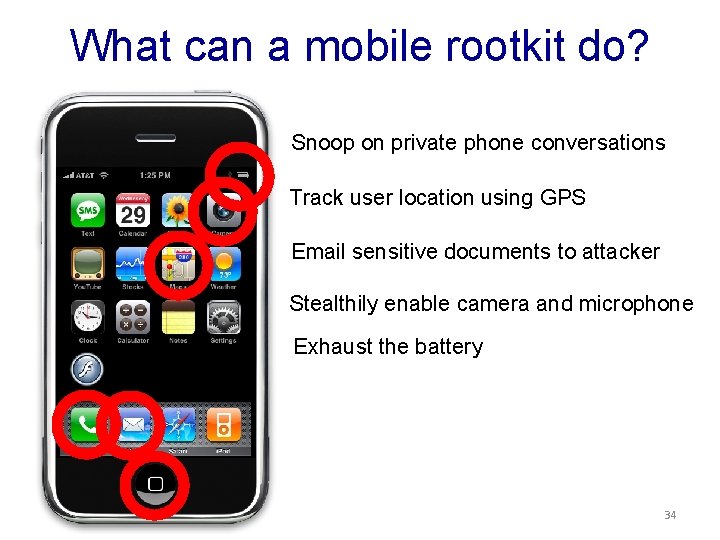

What can a mobile rootkit do?
• Snoop on private phone conversations
• Track the user's location using GPS
• Email sensitive documents to the attacker
• Stealthily enable the camera and microphone
• Exhaust the battery

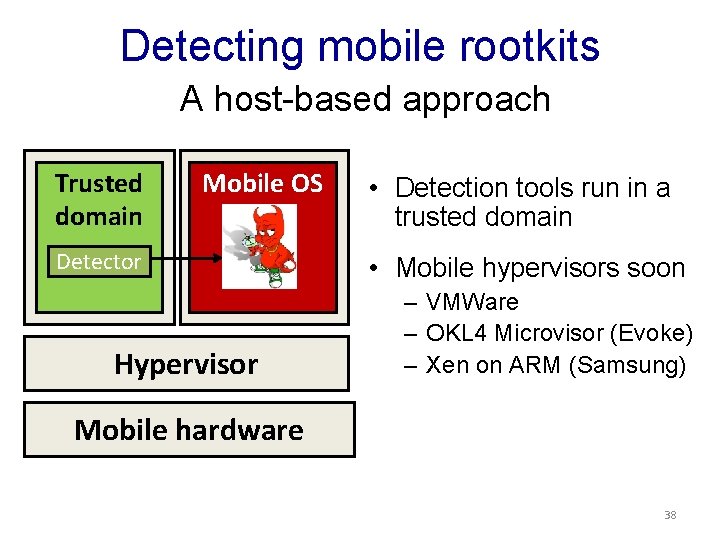

Detecting mobile rootkits: a host-based approach
[Figure: the mobile OS and a detector in a trusted domain run side by side on a hypervisor above the mobile hardware.]
• Detection tools run in a trusted domain
• Mobile hypervisors are coming soon:
  – VMware
  – OKL4 Microvisor (Evoke)
  – Xen on ARM (Samsung)

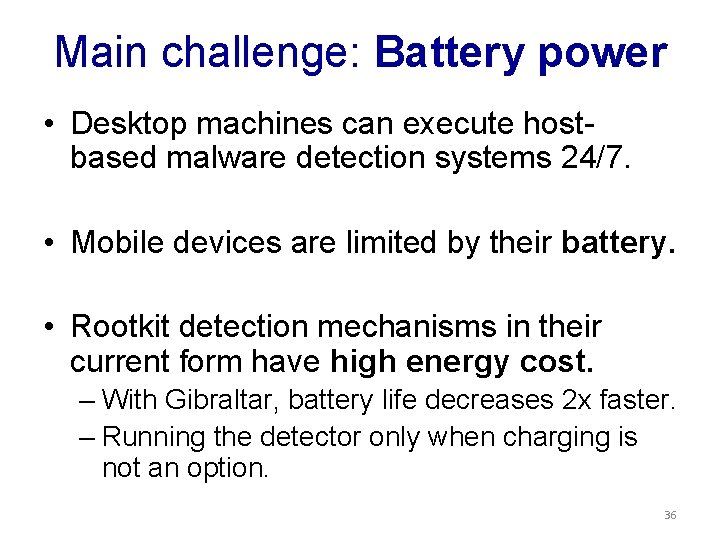

Main challenge: battery power
• Desktop machines can run host-based malware detection systems 24/7.
• Mobile devices are limited by their battery.
• Rootkit detection mechanisms in their current form have a high energy cost.
  – With Gibraltar, the battery drains 2x faster.
  – Running the detector only while charging is not an option.

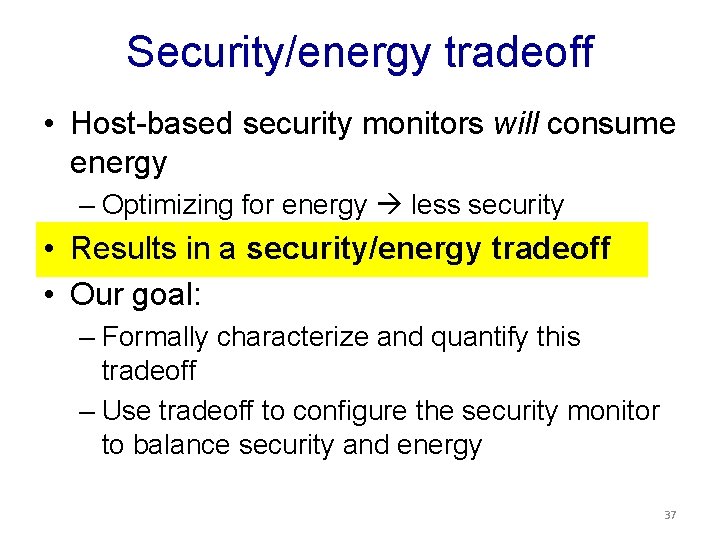

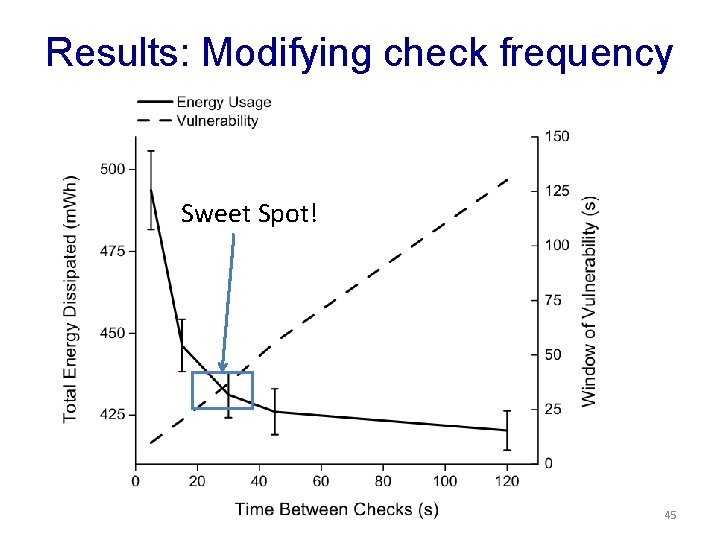

Security/energy tradeoff
• Host-based security monitors will consume energy
  – Optimizing for energy means less security
• This results in a security/energy tradeoff
• Our goal:
  – Formally characterize and quantify this tradeoff
  – Use the tradeoff to configure the security monitor to balance security and energy

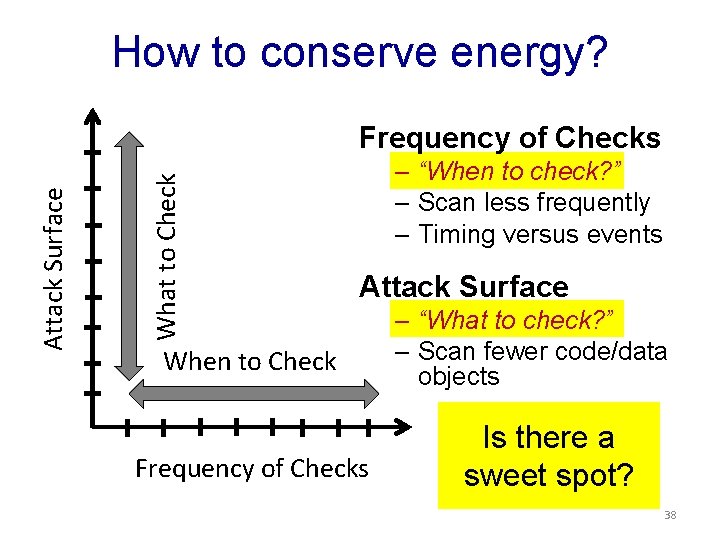

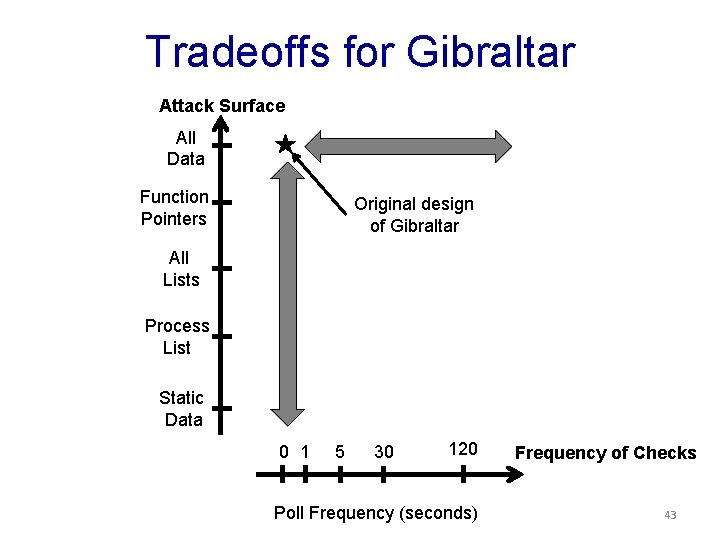

How to conserve energy?
[Figure: a two-dimensional design space, with the attack surface ("what to check") on one axis and the frequency of checks ("when to check") on the other.]
• Frequency of checks: "When to check?"
  – Scan less frequently
  – Timing versus events
• Attack surface: "What to check?"
  – Scan fewer code/data objects
Is there a sweet spot?

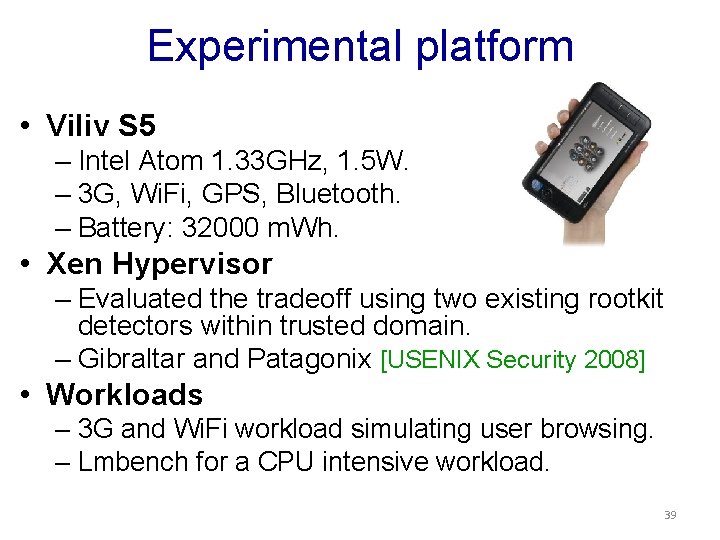

Experimental platform
• Viliv S5
  – Intel Atom 1.33 GHz, 1.5 W
  – 3G, WiFi, GPS, Bluetooth
  – Battery: 32,000 mWh
• Xen hypervisor
  – Evaluated the tradeoff using two existing rootkit detectors within the trusted domain
  – Gibraltar and Patagonix [USENIX Security 2008]
• Workloads
  – 3G and WiFi workloads simulating user browsing
  – LMbench for a CPU-intensive workload

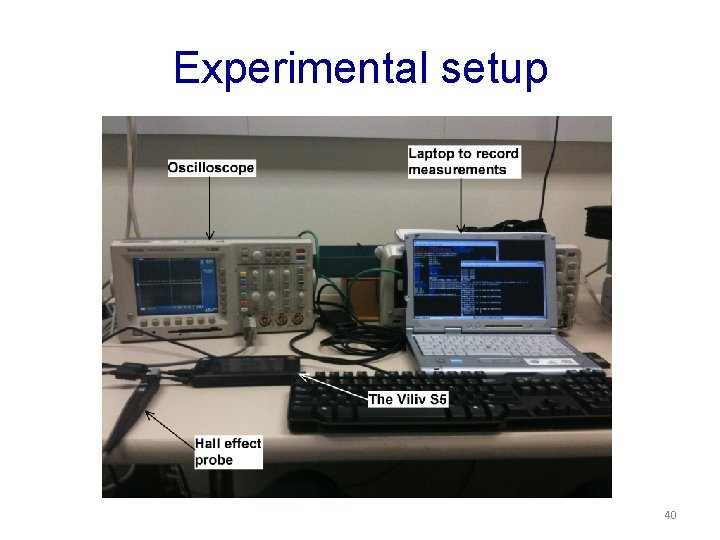

Experimental setup

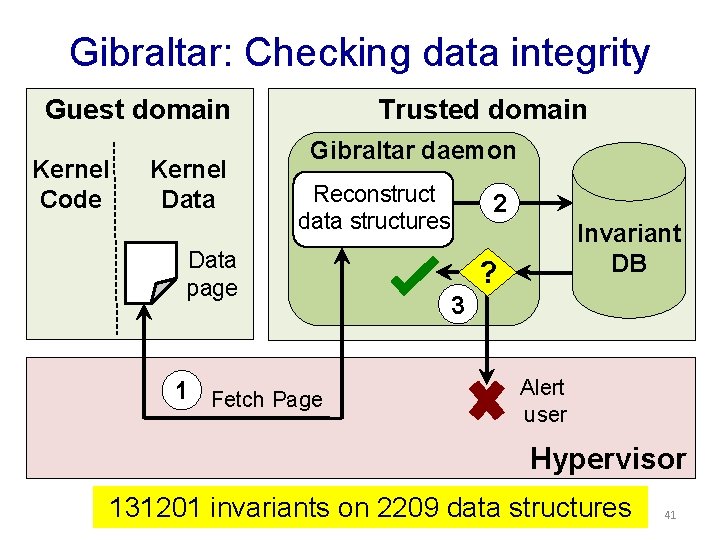

Gibraltar: Checking data integrity
[Figure: the Gibraltar daemon in the trusted domain (1) fetches data pages of the guest domain's kernel through the hypervisor, (2) reconstructs data structures and checks them against the invariant DB, and (3) alerts the user on a violation.]
131,201 invariants on 2,209 data structures

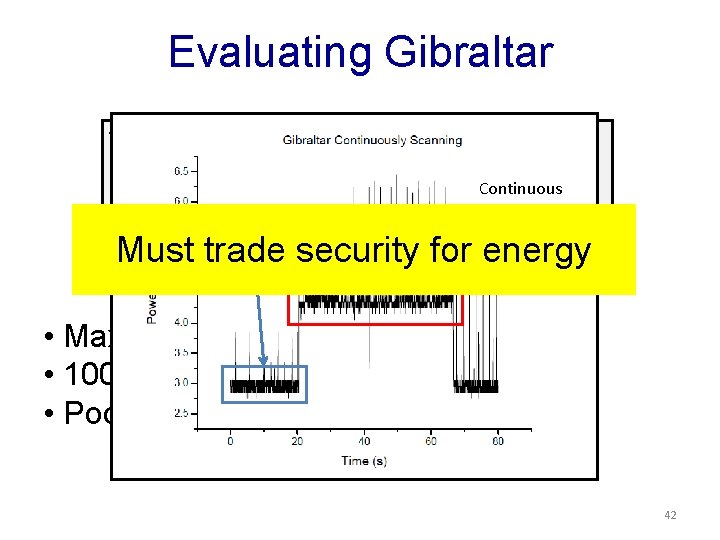

Evaluating Gibraltar
Continuous check: while (1) { for all kernel data structures { get current value; check against invariant } }
• Maximum security
• 100% CPU usage
• Poor energy efficiency
Must trade security for energy.

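Tradeoffs for Gibraltar
[Figure: design space for Gibraltar. Attack surface axis, from smallest to largest: static data, process list, all lists, function pointers, all data. Frequency-of-checks axis: poll interval of 0, 1, 5, 30 and 120 seconds. The original design of Gibraltar is marked on the plot.]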

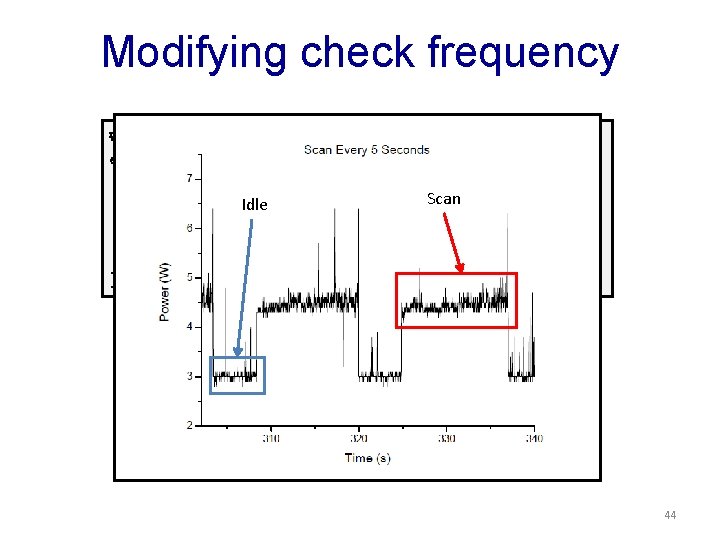

Modifying check frequency
while (1) { every "x" seconds { for all kernel data structures { get current value; check against invariant } } }
The monitor now alternates between scanning and idling.
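A minimal Python sketch of the "every x seconds" loop, with the sleep function injected so it can run instantly. `poll`, `busy_fraction` and the 1-second scan duration are hypothetical, and the busy-fraction formula is a simple model that ignores wake-up costs; it does, however, capture why x = 0 means 100% CPU usage and why a larger x saves energy at the price of a longer window in which a modification goes unnoticed.

```python
import time
from typing import Callable, List


def poll(scan: Callable[[], List[str]], interval_s: float, rounds: int,
         sleep: Callable[[float], None] = time.sleep) -> List[List[str]]:
    """Run scan() once per round, idling interval_s seconds between rounds.

    interval_s = 0 degenerates to the original continuous loop.
    """
    results = []
    for i in range(rounds):
        results.append(scan())
        if i < rounds - 1 and interval_s > 0:
            sleep(interval_s)  # idle period: this is where energy is saved
    return results


def busy_fraction(scan_s: float, interval_s: float) -> float:
    """Fraction of time spent scanning if each scan takes scan_s seconds (simple model)."""
    return scan_s / (scan_s + interval_s)


slept: List[float] = []
poll(lambda: [], interval_s=30, rounds=3, sleep=slept.append)
print(slept)                           # [30, 30]
print(round(busy_fraction(1, 30), 3))  # 0.032
```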

Results: Modifying check frequency
Sweet spot!

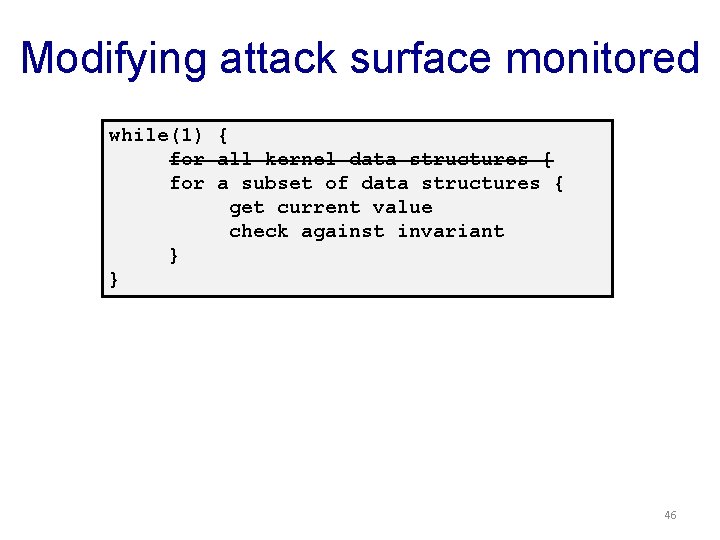

Modifying attack surface monitored
while (1) { for a subset of kernel data structures { get current value; check against invariant } }
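The subset selection can be sketched in Python by tagging each monitored structure with an attack-surface category. The catalogue and its category assignments are hypothetical (the deck does not say how each structure was classified); the categories loosely follow the axis of the Gibraltar tradeoff plot. The example also shows the cost of the tradeoff: a structure outside the chosen surface is never checked.

```python
from typing import Callable, Dict, Iterable, List

# Hypothetical catalogue: monitored structure -> attack-surface category.
CATALOGUE: Dict[str, str] = {
    "sys_call_table": "function_pointers",
    "run_list": "process_list",
    "all_tasks": "process_list",
    "module_list": "lists",
    "poolinfo": "static_data",
    "zone_struct": "other_data",
}


def select_surface(catalogue: Dict[str, str], categories: Iterable[str]) -> List[str]:
    """Return the structures whose category belongs to the chosen attack surface."""
    wanted = set(categories)
    return sorted(name for name, cat in catalogue.items() if cat in wanted)


def scan_subset(selected: List[str], check: Callable[[str], bool]) -> List[str]:
    """Check only the selected structures; return those that fail their invariants."""
    return [name for name in selected if not check(name)]


subset = select_surface(CATALOGUE, {"static_data", "process_list", "lists", "function_pointers"})
print(subset)  # ['all_tasks', 'module_list', 'poolinfo', 'run_list', 'sys_call_table']
# A tampered zone_struct (category "other_data") now goes unnoticed:
print(scan_subset(subset, lambda name: name != "zone_struct"))  # []
```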


Results: Modifying attack surface
Monitoring only a subset of data structures still covers 96% of rootkits! [Petroni et al., CCS '07]

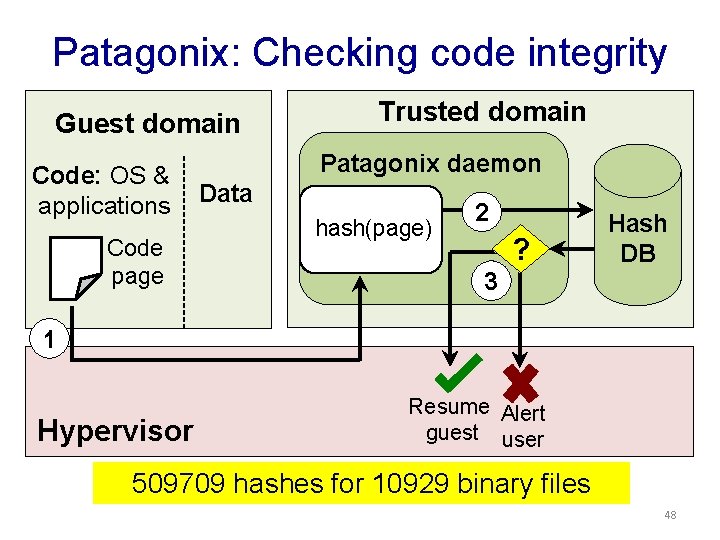

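Patagonix: Checking code integrity
[Figure: (1) when the guest domain executes a code page of the OS or an application, the hypervisor hands the page to the Patagonix daemon in the trusted domain; (2) the daemon computes hash(page) and looks it up in the hash DB; (3) it then either resumes the guest or alerts the user.]
509,709 hashes for 10,929 binary files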
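A minimal Python sketch of the lookup, assuming SHA-256 page hashes and 4 KiB pages. Patagonix's actual hash function is not stated here, and this sketch ignores relocated code entirely, which a real implementation must handle; `build_hash_db` and `on_code_page_executed` are hypothetical names.

```python
import hashlib
from typing import Iterable, Set

PAGE_SIZE = 4096  # assumed page size


def page_hash(page: bytes) -> str:
    return hashlib.sha256(page).hexdigest()


def build_hash_db(binaries: Iterable[bytes]) -> Set[str]:
    """Hash every page-sized chunk of each trusted binary, zero-padding the last one."""
    db: Set[str] = set()
    for image in binaries:
        for offset in range(0, len(image), PAGE_SIZE):
            chunk = image[offset:offset + PAGE_SIZE]
            db.add(page_hash(chunk.ljust(PAGE_SIZE, b"\0")))
    return db


def on_code_page_executed(page: bytes, db: Set[str]) -> bool:
    """Called before the guest executes a page: True = resume, False = alert."""
    return page_hash(page) in db


trusted_binary = b"\x90" * 5000     # hypothetical binary spanning two pages
db = build_hash_db([trusted_binary])
first_page = trusted_binary[:PAGE_SIZE]
patched = b"\xcc" + first_page[1:]  # one byte modified by a rootkit
print(len(db), on_code_page_executed(first_page, db), on_code_page_executed(patched, db))
```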

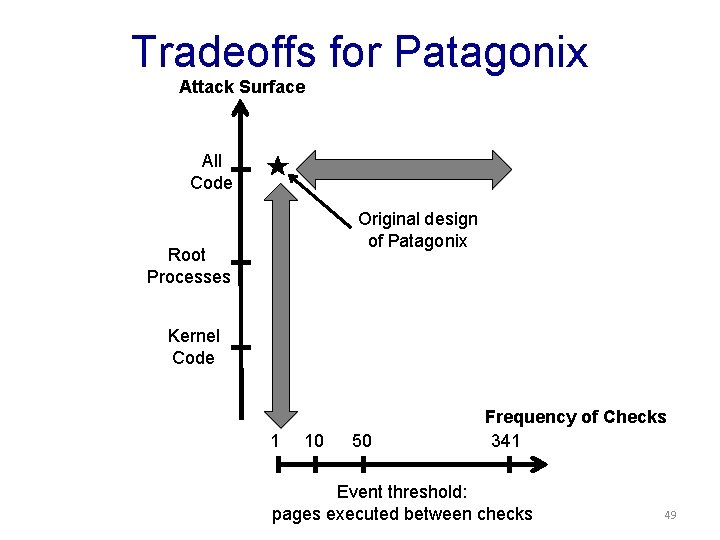

Tradeoffs for Patagonix
[Figure: design space for Patagonix. Attack surface axis: kernel code, root processes, all code. Frequency-of-checks axis: event threshold of 1, 10, 50 or 341 pages executed between checks. The original design of Patagonix is marked on the plot.]
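An event-driven check frequency can be sketched in Python as a batching trigger that runs the check once every N executed pages. `EventTrigger` is hypothetical; unlike a hypervisor that traps a page before it runs, this sketch checks pages after the fact, which is exactly the security that a threshold above 1 gives up in exchange for fewer checks.

```python
from typing import Callable, List


class EventTrigger:
    """Batch page-execution events and run check() every `threshold` pages.

    threshold = 1 checks each page as it executes; larger thresholds let
    pages run unchecked until the batch fills, in exchange for fewer checks.
    """

    def __init__(self, threshold: int, check: Callable[[List[bytes]], None]) -> None:
        if threshold < 1:
            raise ValueError("threshold must be at least 1")
        self.threshold = threshold
        self.check = check
        self.pending: List[bytes] = []

    def page_executed(self, page: bytes) -> None:
        self.pending.append(page)
        if len(self.pending) >= self.threshold:
            batch, self.pending = self.pending, []
            self.check(batch)


batches: List[List[bytes]] = []
trigger = EventTrigger(10, batches.append)
for i in range(25):
    trigger.page_executed(bytes([i]))
print(len(batches), len(trigger.pending))  # 2 5
```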

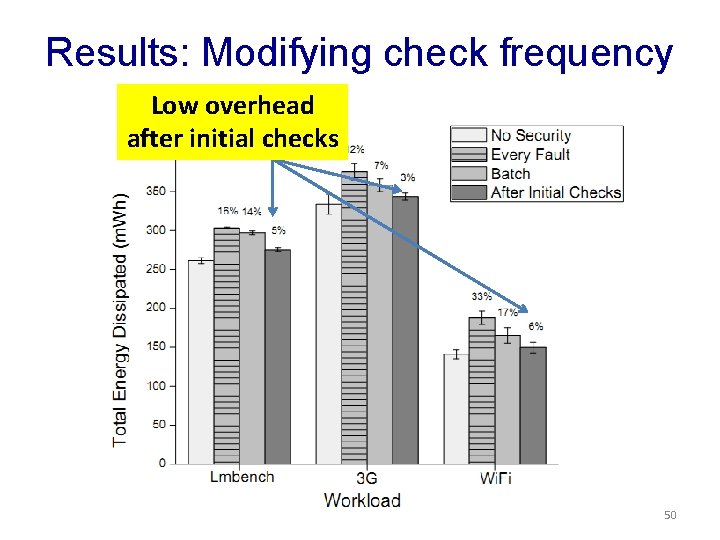

Results: Modifying check frequency
Low overhead after the initial checks

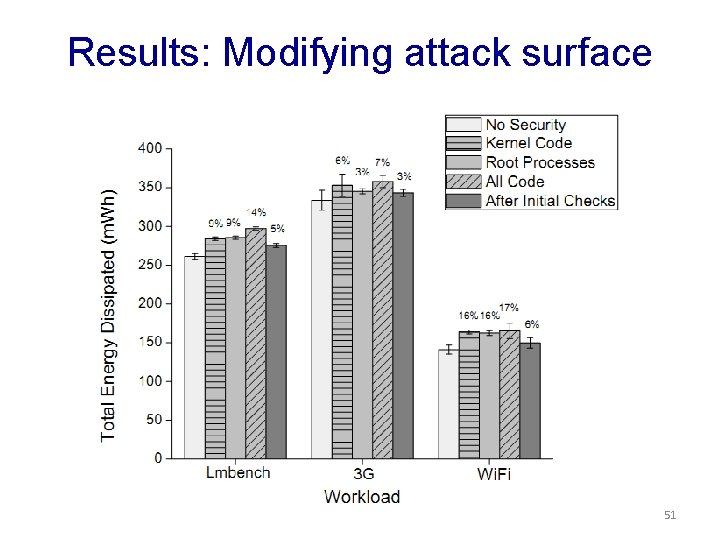

Results: Modifying attack surface

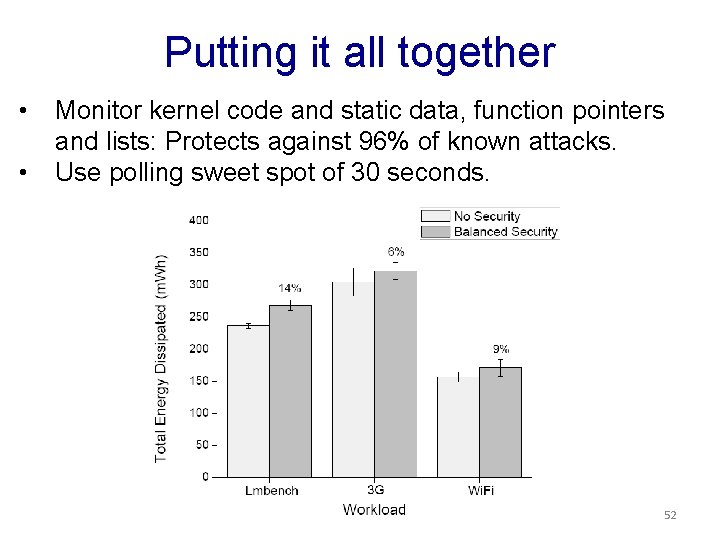

Putting it all together
• Monitor kernel code and static data, function pointers and lists: protects against 96% of known attacks.
• Use the polling sweet spot of 30 seconds.

Thank you!
Vinod Ganapathy (vinodg@cs.rutgers.edu)
References:
• Gibraltar: ACSAC 2008, IEEE TDSC 2011
• Mobile rootkits: HotMobile 2011, MobiSys 2011

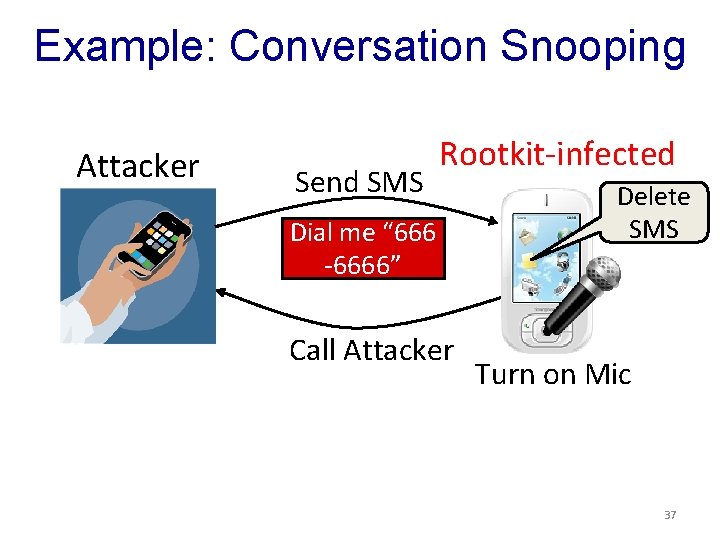

Example: Conversation snooping
[Figure: the attacker sends an SMS ("Dial me 666-6666") to the rootkit-infected phone; the rootkit deletes the SMS, turns on the microphone and calls the attacker.]

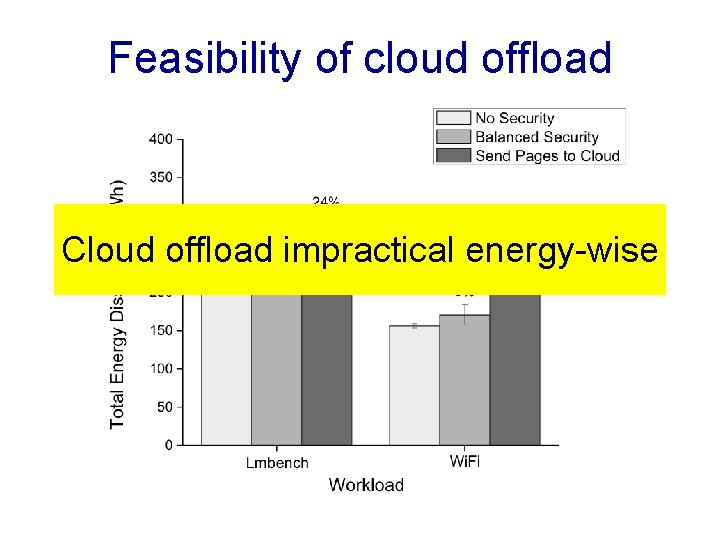

Feasibility of cloud offload
Cloud offload is impractical energy-wise.
