
Rocks Clusters
SUN HPC Consortium, November 2004
Federico D. Sacerdoti
Advanced CyberInfrastructure Group, San Diego Supercomputer Center

Outline
• Rocks Identity
• Rocks Mission
• Why Rocks
• Rocks Design
• Rocks Technologies, Services, Capabilities
• Rockstar

Copyright © 2004 F. Sacerdoti, M. Katz, G. Bruno, P. Papadopoulos, UC Regents

Rocks Identity
• System to build and manage Linux clusters
  • General Linux maintenance system for N nodes
    • Desktops too
  • Happens to be good for clusters
• Free
• Mature
• High performance
  • Designed for scientific workloads

Rocks Mission
• Make clusters easy (Papadopoulos, 00)
• Most cluster projects assume a sysadmin will help build the cluster.
• Build a cluster without assuming CS knowledge
  • Simple idea, complex ramifications
    • Automatic configuration of all components and services
    • ~30 services on frontend, ~10 services on compute nodes
  • Clusters for scientists
• Results in a very robust system that is insulated from human mistakes

Why Rocks
• Easiest way to build a Rockstar-class machine with SGE ready out of the box
• More supported architectures
  • Pentium, Athlon, Opteron, Nocona, Itanium
• More happy users
  • 280 registered clusters, 700-member support list
  • HPCwire Readers' Choice Awards 2004
• More configured HPC software: 15 optional extensions (rolls) and counting
• Unmatched release quality

Why Rocks
• Big projects use Rocks
  • BIRN (20 clusters)
  • GEON (20 clusters)
  • NBCR (6 clusters)
• Supports different clustering toolkits
  • Rocks Standard (Red Hat HPC)
  • SCE
  • SCore (Single Process Space)
  • OpenMosix (Single Process Space: on the way)

Rocks Design
• Uses Red Hat's intelligent installer
  • Leverages Red Hat's ability to discover & configure hardware
  • Everyone tries system imaging at first
    • Who has homogeneous hardware?
    • If so, whose cluster stays that way?
• Description-based install: Kickstart
  • Like Jumpstart
• Contains a viable operating system
  • No need to "pre-configure" an OS

Rocks Design
• No special "Rocksified" package structure. Can install any RPM.
• Where Linux core packages come from:
  • Red Hat Advanced Workstation (from SRPMS)
    • Enterprise Linux 3

Rocks Leap of Faith
• Install is the primitive operation for upgrade and patch
  • Seems wrong at first
    • Why must you reinstall the whole thing?
  • Actually right: debugging a Linux system is fruitless at this scale. Reinstall enforces stability.
  • Primary user has no sysadmin to help troubleshoot
• Rocks install is scalable and fast: 15 min for entire cluster
  • Post-script work done in parallel by compute nodes.
• Power admins may use up2date or yum for patches.
  • Patches reach compute nodes by reinstall

Rocks Technology

Cluster Integration with Rocks
1. Build a frontend node
   1. Insert CDs: Base, HPC, Kernel, optional Rolls
   2. Answer install screens: network, timezone, password
2. Build compute nodes
   1. Run insert-ethers on frontend (dhcpd listener)
   2. PXE boot compute nodes in name order
3. Start computing
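
The compute-node step can be pictured as a listener that hands out names in the order new machines first ask for an address, which is why boot order matters. The sketch below is a simulation only, not the insert-ethers implementation: the function `assign_names`, the `compute-<rack>-<rank>` name pattern, and the MAC addresses are assumptions made for illustration.

```python
from typing import Dict, Iterable


def assign_names(mac_requests: Iterable[str], rack: int = 0,
                 prefix: str = "compute") -> Dict[str, str]:
    """Map each newly seen MAC address to a name, in first-seen order."""
    names: Dict[str, str] = {}
    for mac in mac_requests:
        mac = mac.lower()
        if mac in names:
            continue  # a node that repeats its DHCP request keeps its name
        names[mac] = f"{prefix}-{rack}-{len(names)}"
    return names


# Hypothetical DHCP requests, listed in the order the nodes were powered on.
boot_order = [
    "00:11:22:33:44:01",
    "00:11:22:33:44:02",
    "00:11:22:33:44:01",  # retry from the first node
    "00:11:22:33:44:03",
]
names = assign_names(boot_order)
for mac, name in names.items():
    print(mac, "->", name)
```

Booting the nodes top to bottom in a rack would then give names that match physical position, under the naming assumption above.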

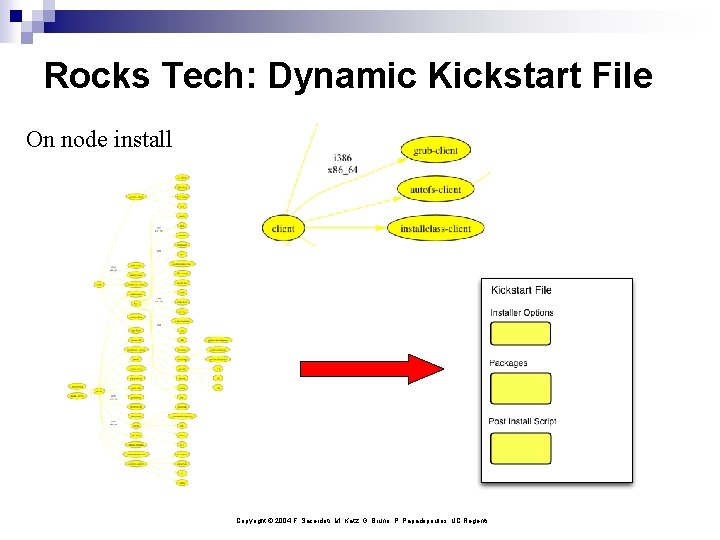

Rocks Tech: Dynamic Kickstart File
On node install
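
One way to picture a kickstart file generated at install time is as a walk over a graph of configuration nodes, starting from the kind of machine being installed. The sketch below assumes that model; the graph contents, package names, and the helpers `walk` and `kickstart_for` are hypothetical and are not taken from the Rocks source.

```python
from typing import Dict, List

# Hypothetical graph: an edge means "this node also includes that node".
EDGES: Dict[str, List[str]] = {
    "frontend": ["base", "dhcp-server"],
    "compute": ["base"],
    "base": ["ssh"],
}

# Hypothetical package lists attached to each graph node.
PACKAGES: Dict[str, List[str]] = {
    "base": ["coreutils", "rpm"],
    "ssh": ["openssh-clients"],
    "dhcp-server": ["dhcp"],
}


def walk(root: str, edges: Dict[str, List[str]]) -> List[str]:
    """Return every node reachable from root, depth first, each once."""
    order: List[str] = []

    def visit(node: str) -> None:
        if node in order:
            return
        order.append(node)
        for child in edges.get(node, []):
            visit(child)

    visit(root)
    return order


def kickstart_for(appliance: str) -> str:
    """Assemble a %packages section from the nodes the appliance reaches."""
    lines = ["%packages"]
    for node in walk(appliance, EDGES):
        lines.extend(PACKAGES.get(node, []))
    return "\n".join(lines)


print(kickstart_for("compute"))
```

Because the file is built per request, a frontend and a compute node can share the same base description while differing only in the extra nodes they reach.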

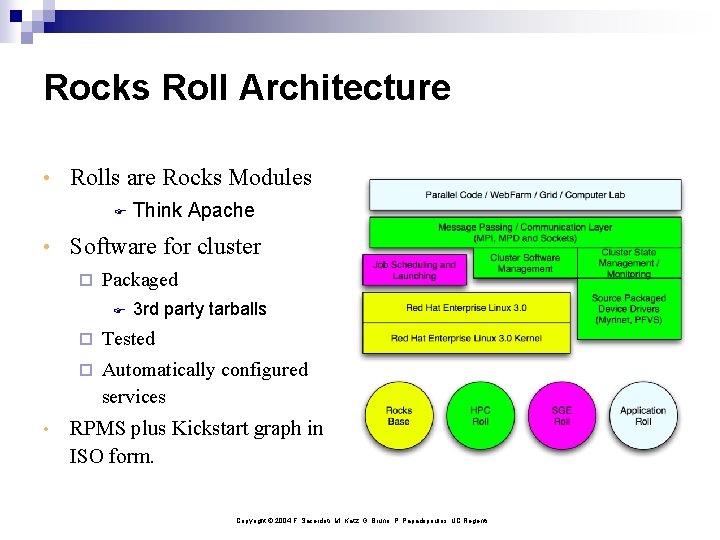

Rocks Roll Architecture
• Rolls are Rocks modules
  • Think Apache
• Software for cluster
  • Packaged
    • 3rd-party tarballs
  • Tested
  • Automatically configured services
• RPMS plus Kickstart graph in ISO form.

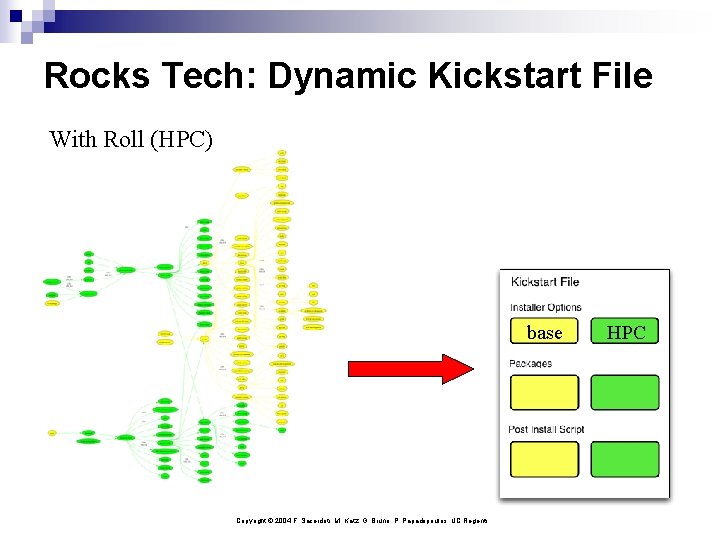

Rocks Tech: Dynamic Kickstart File
With Roll (HPC)
[Figure labels: base, HPC]
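
If a roll ships its own piece of the kickstart graph, adding the roll amounts to merging its edges into the base graph so that existing appliances reach the new nodes. The sketch below illustrates that idea under stated assumptions: the edge dictionaries, the node names `mpi` and `mpi-docs`, and the functions `merge_roll` and `reachable` are all hypothetical.

```python
from typing import Dict, List

Graph = Dict[str, List[str]]


def merge_roll(base: Graph, roll: Graph) -> Graph:
    """Return a new graph holding the base edges plus the roll's edges."""
    merged: Graph = {node: list(children) for node, children in base.items()}
    for node, children in roll.items():
        existing = merged.setdefault(node, [])
        for child in children:
            if child not in existing:
                existing.append(child)
    return merged


def reachable(root: str, graph: Graph) -> List[str]:
    """List the nodes an appliance pulls in, in first-visit order."""
    order: List[str] = []
    stack = [root]
    while stack:
        node = stack.pop()
        if node in order:
            continue
        order.append(node)
        # Push children reversed so they are visited in listed order.
        stack.extend(reversed(graph.get(node, [])))
    return order


base_graph: Graph = {"compute": ["base"], "frontend": ["base"]}
hpc_roll: Graph = {"compute": ["mpi"], "frontend": ["mpi", "mpi-docs"]}

with_hpc = merge_roll(base_graph, hpc_roll)
print(reachable("compute", base_graph))
print(reachable("compute", with_hpc))
```

The base graph is left untouched, so the same installer can serve nodes with or without the roll.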

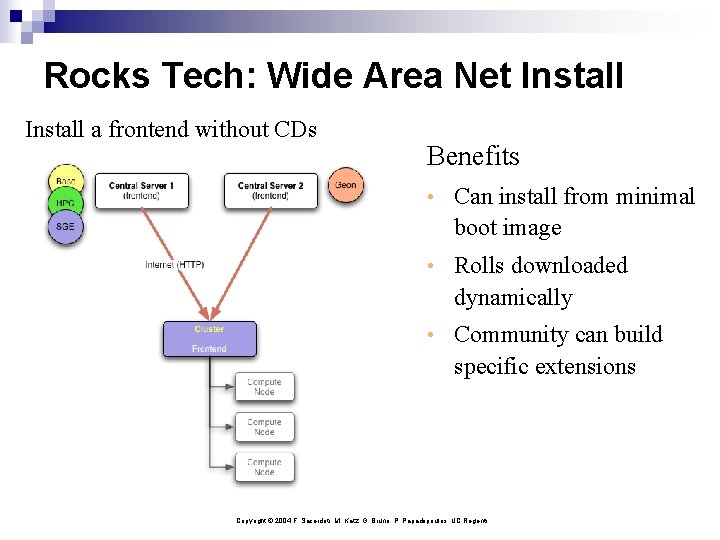

Rocks Tech: Wide Area Net
Install a frontend without CDs
Benefits
• Can install from minimal boot image
• Rolls downloaded dynamically
• Community can build specific extensions

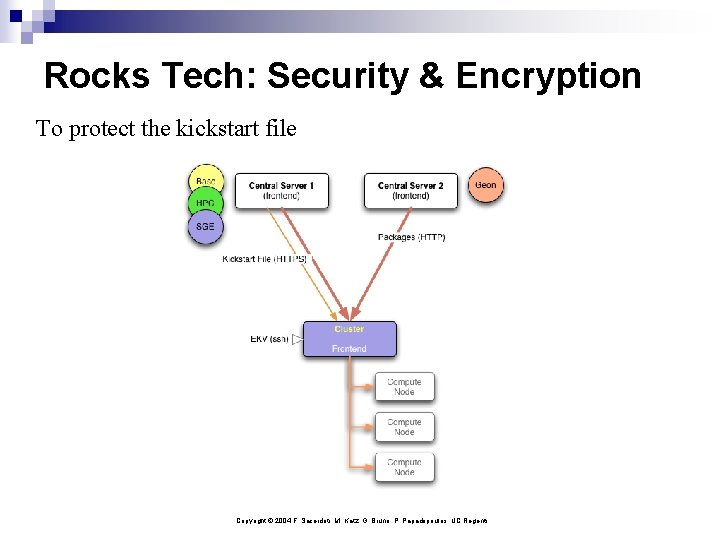

Rocks Tech: Security & Encryption
To protect the kickstart file

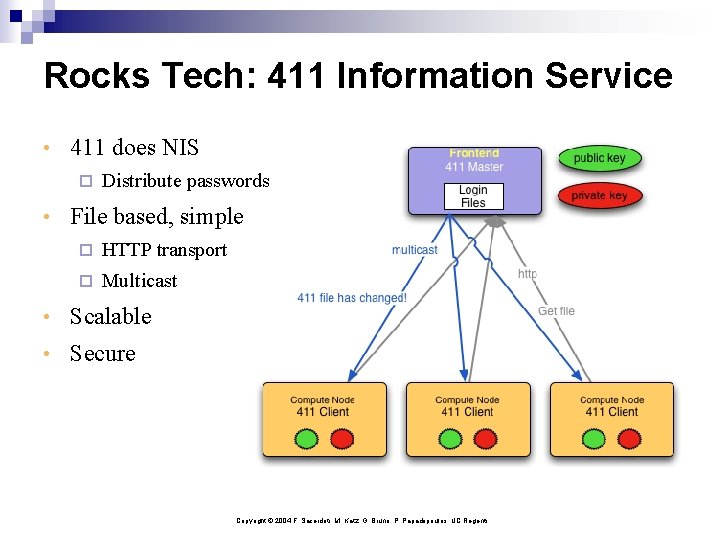

Rocks Tech: 411 Information Service
• 411 does NIS
  • Distribute passwords
• File based, simple
  • HTTP transport
  • Multicast
• Scalable
• Secure
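
One plausible reading of "file based, HTTP transport, multicast" is that a change notice goes out to all nodes at once and each node then pulls the changed file itself. The sketch below simulates that pattern in memory and is not the 411 implementation: the `Master` and `Node` classes are hypothetical, a method call stands in for the multicast alert, another stands in for the HTTP fetch, and encryption is left out entirely.

```python
import hashlib
from typing import Dict, List


def digest(content: bytes) -> str:
    """Fingerprint used to decide whether a local copy is stale."""
    return hashlib.sha256(content).hexdigest()


class Master:
    """Holds the authoritative copy of each file."""

    def __init__(self) -> None:
        self.files: Dict[str, bytes] = {}
        self.nodes: List["Node"] = []

    def publish(self, path: str, content: bytes) -> None:
        self.files[path] = content
        for node in self.nodes:  # stands in for one multicast alert
            node.on_alert(path, digest(content))

    def fetch(self, path: str) -> bytes:  # stands in for an HTTP GET
        return self.files[path]


class Node:
    """Pulls a file only when the alert shows its copy is out of date."""

    def __init__(self, master: Master) -> None:
        self.master = master
        self.local: Dict[str, bytes] = {}
        self.fetches = 0
        master.nodes.append(self)

    def on_alert(self, path: str, new_digest: str) -> None:
        current = self.local.get(path)
        if current is not None and digest(current) == new_digest:
            return  # already up to date, no transfer needed
        self.local[path] = self.master.fetch(path)
        self.fetches += 1


master = Master()
node = Node(master)
master.publish("/etc/passwd", b"alice:x:500:500::/home/alice:/bin/bash\n")
master.publish("/etc/passwd", b"alice:x:500:500::/home/alice:/bin/bash\n")
print(node.fetches)
```

In this sketch the alert is small and the file transfer is a pull, so the master's cost per change stays low as nodes are added.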

Rocks Services

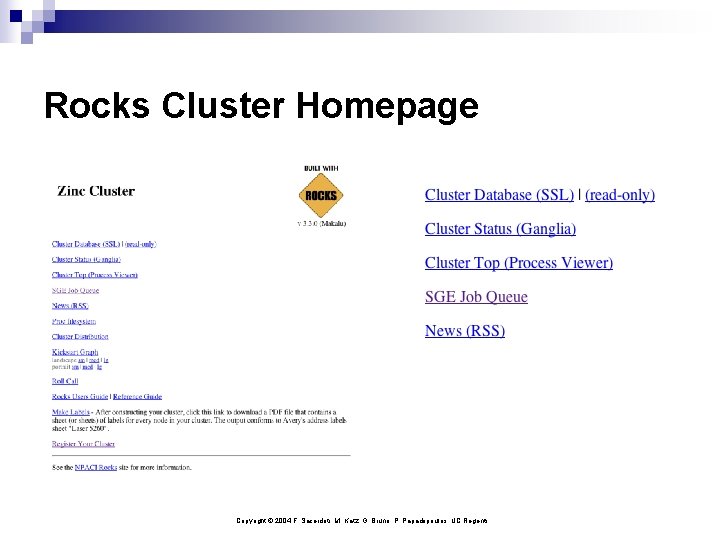

Rocks Cluster Homepage

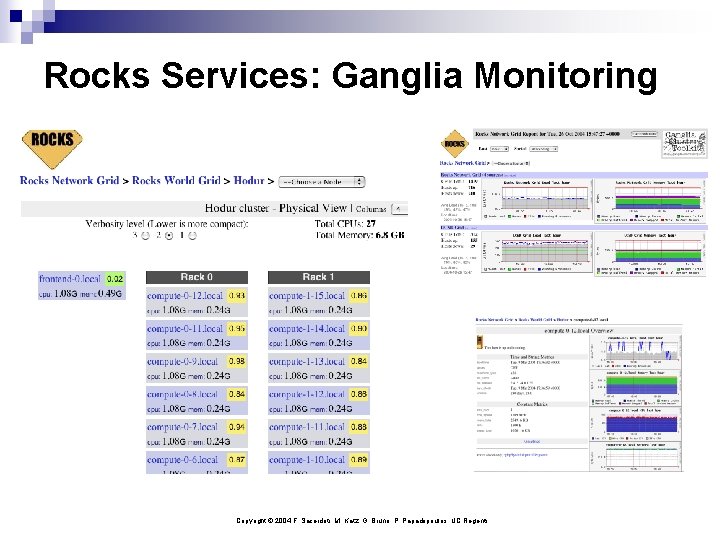

Rocks Services: Ganglia Monitoring

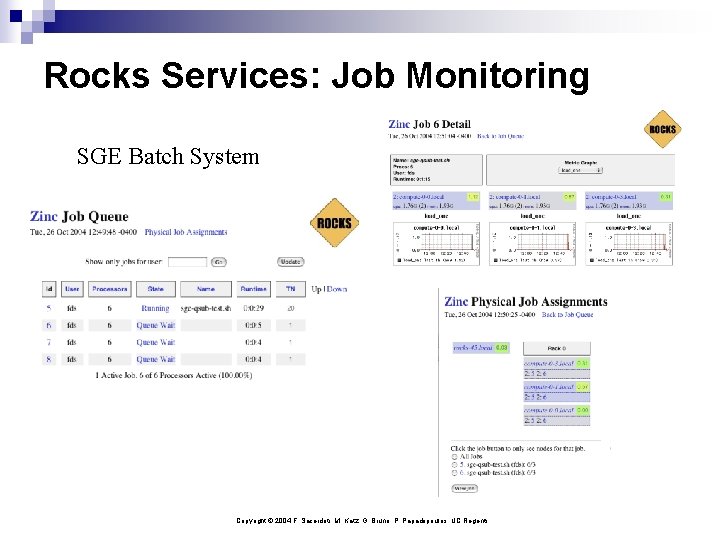

Rocks Services: Job Monitoring
SGE Batch System

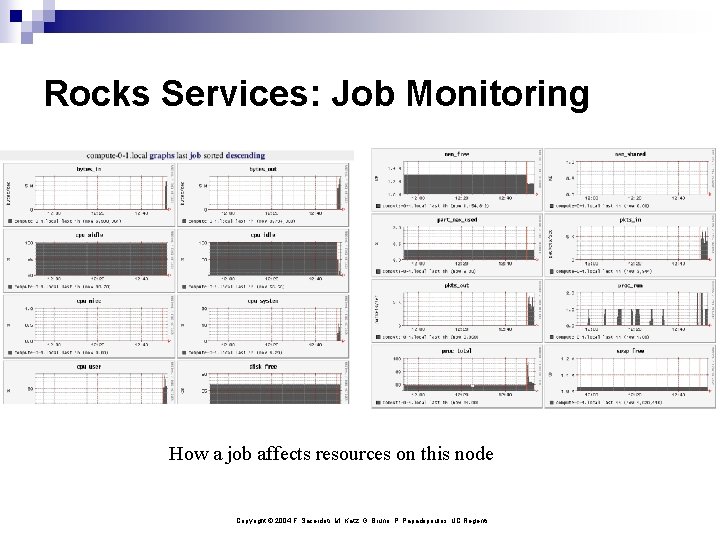

Rocks Services: Job Monitoring
How a job affects resources on this node

Rocks Services: Configured, Ready
• Grid (Globus, from NMI)
• Condor (NMI)
  • Globus GRAM
• SGE
  • Globus GRAM
• MPD parallel job launcher (Argonne)
  • MPICH 1, 2
• Intel compiler set
• PVFS

Rocks Capabilities

High Performance Interconnect Support
• Myrinet
  • All major versions, GM 2
  • Automatic configuration and support in Rocks since first release
• InfiniBand
  • Via collaboration with AMD & Infinicon
    • IB
    • IPoIB

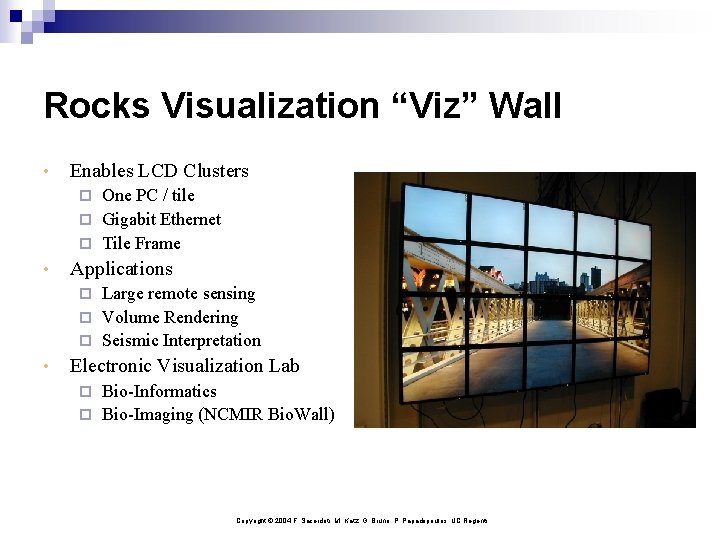

Rocks Visualization "Viz" Wall
• Enables LCD clusters
  • One PC / tile
  • Gigabit Ethernet
  • Tile frame
• Applications
  • Large remote sensing
  • Volume rendering
  • Seismic interpretation
• Electronic Visualization Lab
  • Bio-Informatics
  • Bio-Imaging (NCMIR BioWall)

Rockstar

Rockstar Cluster
• Collaboration between SDSC and SUN
• 129 nodes: Sun V60x (dual P4 Xeon)
  • Gigabit Ethernet networking (copper)
  • Top 500 list positions: 201, 433
• Built on showroom floor of Supercomputing Conference 2003
  • Racked, wired, installed: 2 hrs total
  • Running apps through SGE

Building of Rockstar

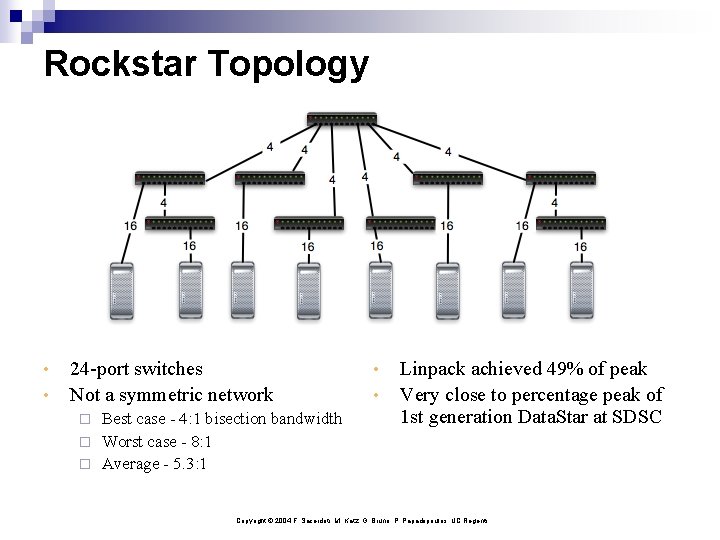

Rockstar Topology
• 24-port switches
• Not a symmetric network
  • Best case: 4:1 bisection bandwidth
  • Worst case: 8:1
  • Average: 5.3:1
• Linpack achieved 49% of peak
• Very close to percentage of peak of 1st-generation DataStar at SDSC
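
A ratio such as 4:1 is commonly read as oversubscription: the bandwidth of the ports facing nodes divided by the bandwidth of the uplinks leaving the switch. The sketch below works through that arithmetic for equal-speed links. The port splits are hypothetical values chosen only because they fit a 24-port switch and give ratios in the range quoted above; they are not the documented Rockstar wiring, and `oversubscription` is an illustrative helper.

```python
from fractions import Fraction


def oversubscription(node_ports: int, uplinks: int) -> Fraction:
    """Ratio of node-facing ports to uplink ports, assuming equal link speed."""
    if node_ports <= 0 or uplinks <= 0:
        raise ValueError("port counts must be positive")
    return Fraction(node_ports, uplinks)


# Hypothetical splits: 16 node ports per switch with 4, 3, or 2 uplinks.
for uplinks in (4, 3, 2):
    ratio = oversubscription(16, uplinks)
    print(f"16 node ports, {uplinks} uplinks -> {float(ratio):.1f}:1")
```

Fewer uplinks per switch raise the ratio, which is one way an asymmetric network ends up with different best and worst cases.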

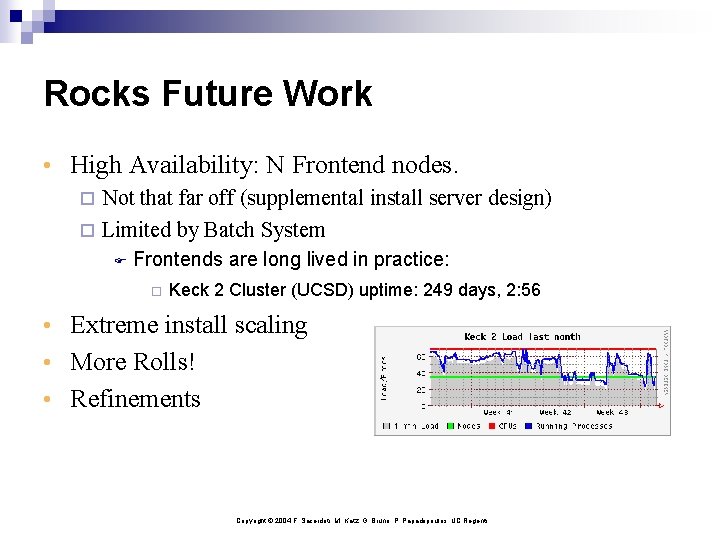

Rocks Future Work
• High availability: N frontend nodes.
  • Not that far off (supplemental install server design)
  • Limited by batch system
  • Frontends are long-lived in practice:
    • Keck 2 Cluster (UCSD) uptime: 249 days, 2:56
• Extreme install scaling
• More Rolls!
• Refinements

www.rocksclusters.org
• Rocks mailing list
  • https://lists.sdsc.edu/mailman/listinfo.cgi/npaci-rocks-discussion
• Rocks Cluster Register
  • http://www.rocksclusters.org/rocks-register
• Core: {fds, bruno, mjk, phil}@sdsc.edu
