Robust Semantics, Information Extraction, and Information Retrieval CS 4705

Problems with Syntax-Driven Semantics • Syntactic structures often don’t fit semantic structures very well – Important semantic elements are often distributed very differently in trees for sentences that mean ‘the same’: I like soup. Soup is what I like. – Parse trees contain many structural elements not clearly important to making semantic distinctions – Syntax-driven semantic representations are sometimes pretty verbose V --> serves

Semantic Grammars • Alternative to modifying syntactic grammars to deal with semantics too • Define grammars specifically in terms of the semantic information we want to extract – Domain specific: rules correspond directly to entities and activities in the domain – I want to go from Boston to Baltimore on Thursday, September 24th – Greeting --> {Hello|Hi|Um…} – TripRequest --> Need-spec travel-verb from City to City on Date
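A minimal sketch of how the trip-request rule above could be run as a pattern matcher. The word lists, the city list, and the function name `parse_trip_request` are illustrative assumptions, not part of the original grammar; a real semantic grammar would cover far more phrasings.

```python
import re

# Hypothetical mini-lexicon for illustration only.
NEED_SPEC = r"(?:I want to|I need to|I would like to)"
TRAVEL_VERB = r"(?:go|fly|travel)"
CITY = r"(Boston|Baltimore|Denver)"
DATE = (
    r"((?:Monday|Tuesday|Wednesday|Thursday|Friday|Saturday|Sunday)"
    r"(?:, \w+ \d{1,2}(?:st|nd|rd|th)?)?)"
)

# TripRequest --> Need-spec travel-verb from City to City on Date
TRIP_REQUEST = re.compile(
    rf"{NEED_SPEC} {TRAVEL_VERB} from {CITY} to {CITY} on {DATE}",
    re.IGNORECASE,
)


def parse_trip_request(utterance):
    """Return the filled slots for a trip request, or None if the rule does not match."""
    match = TRIP_REQUEST.search(utterance)
    if match is None:
        return None
    origin, destination, date = match.groups()
    return {"from_city": origin, "to_city": destination, "date": date}


print(parse_trip_request(
    "I want to go from Boston to Baltimore on Thursday, September 24th"
))
print(parse_trip_request("I want to talk to a person."))
```

The second call returns `None`: an utterance outside the grammar yields no analysis at all, which is the brittleness that makes coverage a concern for this approach.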

Predicting User Input • Rely on knowledge of task and (sometimes) constraints on what the user can do – Can handle very sophisticated phenomena – I want to go to Boston on Thursday. I want to leave from there on Friday for Baltimore. – TripRequest --> Need-spec travel-verb from City on Date for City – Dialogue postulate maps filler for ‘from-city’ to prespecified to-city

Priming User Input • Users will tend to use the vocabulary they hear from the system: lexical entrainment (Clark & Brennan ’96) – Reference to objects: the scary M&M man – Re-use of system prompt vocabulary/syntax: Please tell me where you would like to leave/depart from. Where would you like to leave/depart from? • Explicit training vs. implicit training • Training the user vs. retraining the system

Drawbacks of Semantic Grammars • Lack of generality – A new one for each application – Large cost in development time • Can be very large, depending on how much coverage you want them to have • If users go outside the grammar, things may break disastrously: I want to leave from my house at 10 a.m. I want to talk to a person.

Information Retrieval • How related to NLP? – Operates on language (speech or text) – Does it use linguistic information? • Stemming • Bag-of-words approach • Very simple analyses – Does it make use of document formatting? • Headlines, punctuation, captions • Collection: a set of documents • Term: a word or phrase • Query: a set of terms

But…what is a term? • Stop list • Stemming • Homonymy, polysemy, synonymy
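As a rough illustration of the first two bullets, the sketch below turns raw text into terms by applying a stop list and a crude suffix stripper. The stop list and the `crude_stem` rules are toy assumptions standing in for a real stemmer; they do nothing about homonymy, polysemy, or synonymy.

```python
import re

# Tiny illustrative stop list; real systems use much longer ones.
STOP_WORDS = {"a", "an", "the", "of", "to", "in", "is", "and", "what", "i"}


def crude_stem(word):
    """Strip a few common English suffixes (a toy stand-in for a real stemmer)."""
    for suffix in ("ing", "ed", "es", "s"):
        # Keep at least three characters so short words are left alone.
        if word.endswith(suffix) and len(word) - len(suffix) >= 3:
            return word[: -len(suffix)]
    return word


def terms(text):
    """Lowercase, tokenize on letters, drop stop words, and stem what remains."""
    tokens = re.findall(r"[a-z]+", text.lower())
    return [crude_stem(token) for token in tokens if token not in STOP_WORDS]


print(terms("The bombing, the bombed, the bombs"))
```

All three surface forms collapse to the same term, which is the point of stemming: a query containing one form can match documents containing another.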

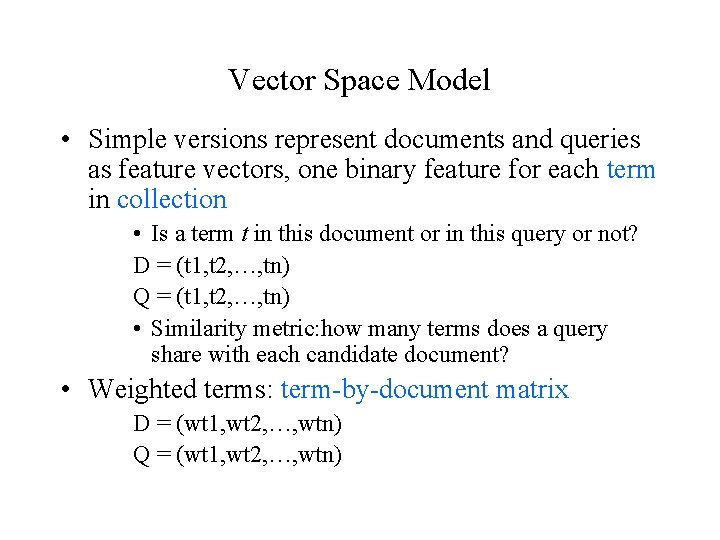

Vector Space Model • Simple versions represent documents and queries as feature vectors, one binary feature for each term in collection • Is a term t in this document or in this query or not? $D = (t_1, t_2, \ldots, t_n)$ $Q = (t_1, t_2, \ldots, t_n)$ • Similarity metric: how many terms does a query share with each candidate document? • Weighted terms: term-by-document matrix $D = (w_{t_1}, w_{t_2}, \ldots, w_{t_n})$ $Q = (w_{t_1}, w_{t_2}, \ldots, w_{t_n})$
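The binary version of the model can be sketched in a few lines. The vocabulary and the helper names `binary_vector` and `shared_term_count` are made up for illustration.

```python
def binary_vector(terms_present, vocabulary):
    """One 0/1 feature per vocabulary term: is the term in this text or not?"""
    present = set(terms_present)
    return [1 if term in present else 0 for term in vocabulary]


def shared_term_count(query_vec, doc_vec):
    """Similarity for binary vectors: the number of terms both texts contain."""
    return sum(q * d for q, d in zip(query_vec, doc_vec))


vocabulary = ["baltimore", "boston", "like", "soup"]
doc = binary_vector(["like", "soup"], vocabulary)
query = binary_vector(["soup", "boston"], vocabulary)
print(doc, query, shared_term_count(query, doc))
```

Replacing the 0/1 entries with real-valued weights gives the weighted form without changing the comparison code.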

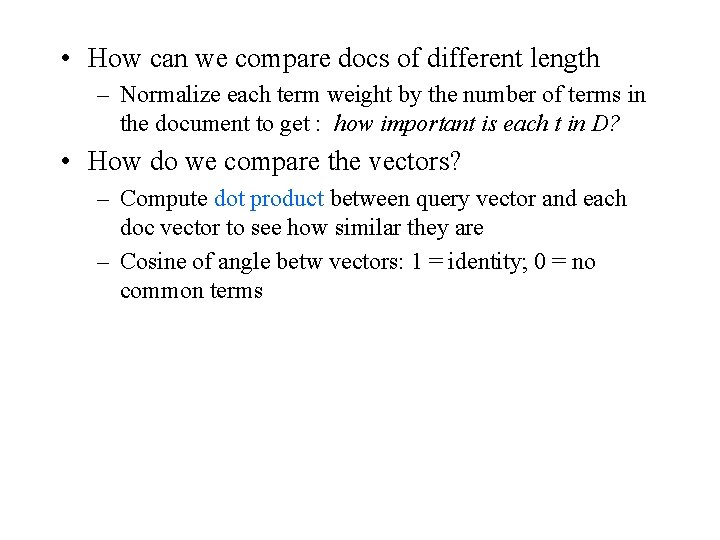

• How can we compare docs of different length? – Normalize each term weight by the number of terms in the document: how important is each t in D? • How do we compare the vectors? – Compute dot product between query vector and each doc vector to see how similar they are – Cosine of angle between vectors: 1 = identity; 0 = no common terms
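A small sketch of the cosine comparison, assuming non-negative term weights held in plain Python lists. Dividing the dot product by both vector lengths is what makes documents of different length comparable.

```python
import math


def cosine(u, v):
    """Cosine of the angle between two equal-length weight vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        return 0.0  # an empty vector shares no terms with anything
    return dot / (norm_u * norm_v)


# Same term proportions, different lengths: cosine is (about) 1.
print(cosine([1, 2, 0], [2, 4, 0]))
# No terms in common: cosine is 0.
print(cosine([1, 0], [0, 1]))
```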

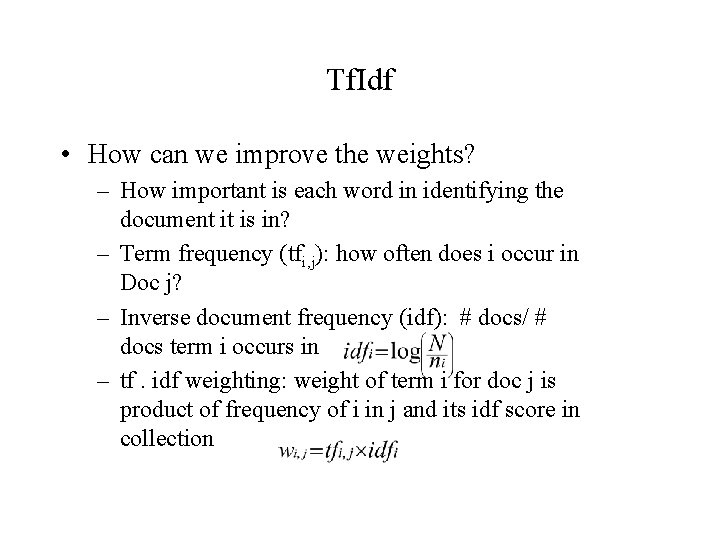

tf.idf • How can we improve the weights? – How important is each word in identifying the document it is in? – Term frequency ($tf_{i,j}$): how often does i occur in Doc j? – Inverse document frequency (idf): # docs / # docs term i occurs in – tf.idf weighting: weight of term i for doc j is product of frequency of i in j and its idf score in collection
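The weighting above can be sketched as follows, taking idf as the plain ratio given on the slide. The `log_idf` flag is an added assumption: many systems take the logarithm of that ratio so that a term occurring in every document gets weight 0.

```python
import math
from collections import Counter


def tf_idf(docs, log_idf=False):
    """docs: list of token lists. Returns one {term: weight} dict per document."""
    n_docs = len(docs)
    doc_freq = Counter()
    for doc in docs:
        doc_freq.update(set(doc))  # count each term once per document
    weighted = []
    for doc in docs:
        term_freq = Counter(doc)
        row = {}
        for term, tf in term_freq.items():
            idf = n_docs / doc_freq[term]  # docs / docs containing the term
            if log_idf:
                idf = math.log(idf)
            row[term] = tf * idf
        weighted.append(row)
    return weighted


docs = [["soup", "soup", "like"], ["like", "boston"]]
print(tf_idf(docs))
print(tf_idf(docs, log_idf=True))
```

In the toy collection, "soup" is frequent in the first document and absent from the second, so it receives the highest weight there, while "like" occurs everywhere and contributes little.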

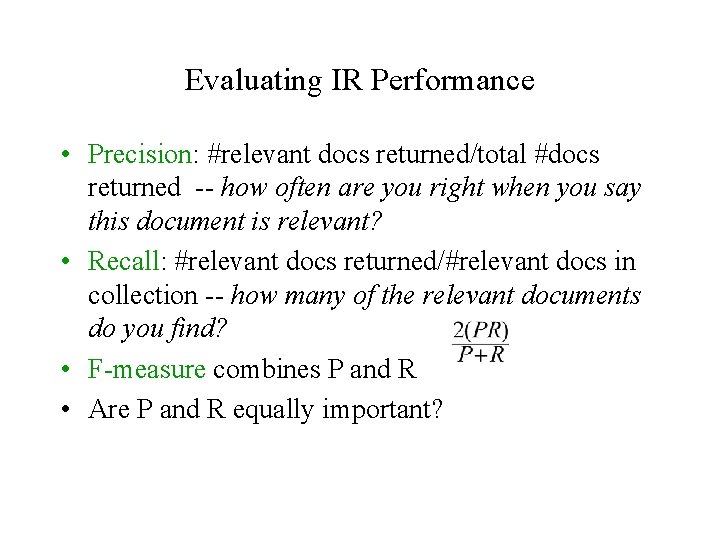

Evaluating IR Performance • Precision: #relevant docs returned/total #docs returned -- how often are you right when you say this document is relevant? • Recall: #relevant docs returned/#relevant docs in collection -- how many of the relevant documents do you find? • F-measure combines P and R • Are P and R equally important?
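These measures are easy to compute from two sets of document IDs. The sketch below uses the standard F-beta form, where the `beta` parameter (an addition here, defaulting to 1) controls whether recall or precision counts for more; the document IDs are made up.

```python
def precision_recall_f(returned, relevant, beta=1.0):
    """Precision, recall, and F-beta for one query, given sets of document IDs."""
    returned, relevant = set(returned), set(relevant)
    hits = len(returned & relevant)
    precision = hits / len(returned) if returned else 0.0
    recall = hits / len(relevant) if relevant else 0.0
    if precision == 0.0 and recall == 0.0:
        return precision, recall, 0.0
    b2 = beta ** 2
    f = (1 + b2) * precision * recall / (b2 * precision + recall)
    return precision, recall, f


p, r, f1 = precision_recall_f({"d1", "d2", "d3", "d4"}, {"d1", "d2", "d5"})
print(p, r, f1)
```

With `beta` greater than 1 recall is weighted more heavily, and with `beta` less than 1 precision is, which is one way to answer whether the two are equally important for a given task.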

Improving Queries • Relevance feedback: users rate retrieved docs • Query expansion: many techniques – add top N docs retrieved to query and resubmit expanded query – WordNet • Term clustering: cluster rows of terms in term-by-document matrix to produce synonyms and add to query

IR Tasks • Ad hoc retrieval: ‘normal’ IR • Routing/categorization: assign new doc to one of predefined set of categories • Clustering: divide a collection into N clusters • Segmentation: segment text into coherent chunks • Summarization: compress a text by extracting summary items or eliminating less relevant items • Question-answering: find a span of text (within some window) containing the answer to a question

Information Extraction • A ‘robust’ semantic method • Idea: ‘extract’ particular types of information from arbitrary text or transcribed speech • Examples: – Named entities: people, places, organizations, times, dates • <Organization>MIPS</Organization> Vice President <Person>John Hime</Person> – MUC evaluations • Domains: Medical texts, broadcast news (terrorist reports), …

Appropriate where Semantic Grammars and Syntactic Parsers are not • Appropriate where information needs are very specific and specifiable in advance – Question answering systems, gisting of news or mail… – Job ads, financial information, terrorist attacks • Input too complex and far-ranging to build semantic grammars • But full-blown syntactic parsers are impractical – Too much ambiguity for arbitrary text – 50 parses or none at all – Too slow for real-time applications

Information Extraction Techniques • Often use a set of simple templates or frames with slots to be filled in from input text – Ignore everything else – My number is 212-555-1212. – The inventor of the wiggleswort was Capt. John T. Hart. – The king died in March of 1932. • Context (neighboring words, capitalization, punctuation) provides cues to help fill in the appropriate slots • How to do better than everyone else?
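A minimal sketch of template filling for two of the example sentences, using regular expressions as the context cues. The slot names and the two patterns are illustrative assumptions; anything the patterns do not match is simply ignored.

```python
import re

MONTHS = (
    "January|February|March|April|May|June|July|"
    "August|September|October|November|December"
)

# Digits in a 3-3-4 layout, tolerating stray spaces around the hyphens.
PHONE = re.compile(r"\b(\d{3})\s*-\s*(\d{3})\s*-\s*(\d{4})\b")
# "The <noun> died in <Month> of <year>"
DEATH = re.compile(rf"\b[Tt]he (\w+) died in ({MONTHS}) of (\d{{4}})\b")


def fill_slots(text):
    """Fill whichever template slots the text supports; ignore everything else."""
    slots = {}
    match = PHONE.search(text)
    if match:
        slots["phone_number"] = "-".join(match.groups())
    match = DEATH.search(text)
    if match:
        slots["deceased"], slots["month"], slots["year"] = match.groups()
    return slots


print(fill_slots("My number is 212-555-1212."))
print(fill_slots("The king died in March of 1932."))
```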

The IE Process • Given a corpus and a target set of items to be extracted: – Clean up the corpus – Tokenize it – Do some hand labeling of target items – Extract some simple features • POS tags • Phrase chunks … – Do some machine learning to associate features with target items, or derive this association by intuition – Use e.g. FSTs, simple or cascaded, to iteratively annotate the input, eventually identifying the slot fillers

Domain-Specific IE from the Web (Patwardhan & Riloff ’06) • The Problem: – IE systems typically domain-specific – a new extraction procedure for every task – Supervised learning depends on hand annotation for training • Goals: – Acquire domain-specific texts automatically on the Web – Identify domain-specific IE patterns automatically • Approach:

– Start with a set of seed IE patterns learned from a hand-labeled corpus – Use these to identify relevant documents on the web – Find new seed patterns in the retrieved documents

MUC-4 IE Task • Corpus: – 1700 news stories about terrorist events in Latin America – Answer keys about information that should be extracted • Problems: – All upper case – 50% of texts irrelevant – Stories may describe multiple events • Best results: – 50-70% precision and recall with hand-built components – 41-44% recall and 49-51% precision with automatically generated templates

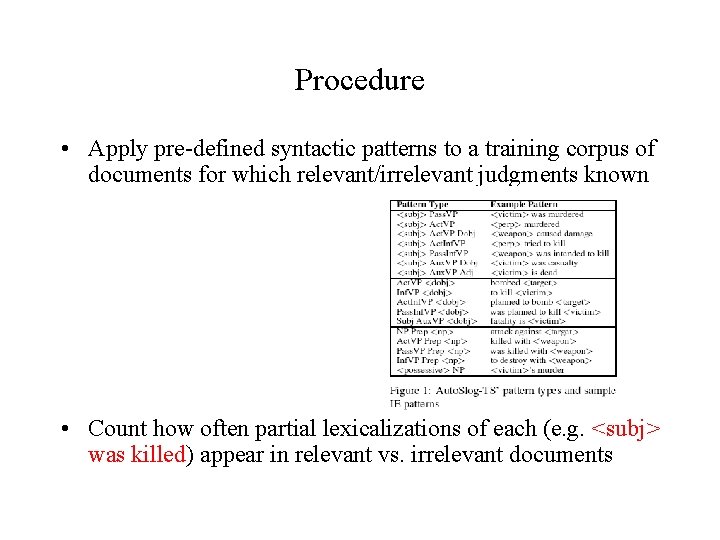

Procedure • Apply pre-defined syntactic patterns to a training corpus of documents for which relevant/irrelevant judgments are known • Count how often partial lexicalizations of each (e.g. <subj> was killed) appear in relevant vs. irrelevant documents

• Rank patterns based on association with domain (frequency in domain documents vs. non-domain documents) • Manually review patterns and assign thematic roles to those deemed useful – From 40K+ patterns down to 291 • Now find similar web documents
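The counting and ranking steps can be sketched as below. The score used here, the share of a pattern's documents that are relevant, is a simplifying assumption chosen for illustration and is not claimed to be the paper's ranking function; the example patterns other than `<subj> was killed` are invented.

```python
from collections import Counter


def rank_patterns(relevant_docs, irrelevant_docs):
    """Each doc is a list of pattern strings already extracted from it.

    Returns (pattern, score) pairs, best first, where score is the fraction
    of the documents containing the pattern that are relevant.
    """
    rel = Counter(p for doc in relevant_docs for p in set(doc))
    irr = Counter(p for doc in irrelevant_docs for p in set(doc))
    scores = {p: rel[p] / (rel[p] + irr[p]) for p in set(rel) | set(irr)}
    # Break ties by raw relevant frequency, then alphabetically.
    return sorted(scores.items(), key=lambda kv: (-kv[1], -rel[kv[0]], kv[0]))


relevant = [["<subj> was killed", "<subj> said"], ["<subj> was killed"]]
irrelevant = [["<subj> said"], ["<subj> said", "<subj> was elected"]]
for pattern, score in rank_patterns(relevant, irrelevant):
    print(f"{score:.2f}  {pattern}")
```

The ranked list is only a starting point: in the procedure above a person still reviews the top patterns and assigns thematic roles.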

Domain Corpus Creation • Create IR queries by crossing names of 5 terrorist organizations (e.g. Al Qaeda, IRA) with 16 terrorist activities (e.g. assassinated, bombed, hijacked, wounded): 80 queries – Restricted to CNN, English documents – Eliminated TV transcripts – Yield from 2 runs: 6,182 documents – Cleaned corpus: 5,618 documents

Learning Domain-Specific Patterns • Hypothesis: new extraction patterns co-occurring with seed patterns from training corpus will also be associated with terrorism • Generate all extraction patterns in CNN corpus (147,712) • Compute correlation of each with seed patterns based on frequency of co-occurrence in same sentence – keep those occurring more often than chance with some seed • Rank new patterns by their seed correlations
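One common way to test "more often than chance" is pointwise mutual information (PMI) over sentence co-occurrence, sketched below. Treating PMI as the correlation measure is an assumption made for illustration; the function name and the toy patterns other than `<subj> was killed` are invented.

```python
import math
from collections import Counter


def rank_by_seed_pmi(sentences, seeds):
    """sentences: one collection of pattern strings per sentence.

    Keeps candidate patterns whose PMI with some seed is positive (they
    co-occur more often than chance) and ranks them by their best PMI.
    """
    seeds = set(seeds)
    n = len(sentences)
    count = Counter()
    joint = Counter()
    for patterns in sentences:
        patterns = set(patterns)
        count.update(patterns)
        for seed in patterns & seeds:
            for candidate in patterns - seeds:
                joint[(candidate, seed)] += 1
    best = {}
    for (candidate, seed), c in joint.items():
        # PMI = log2( P(candidate, seed) / (P(candidate) * P(seed)) )
        pmi = math.log2(c * n / (count[candidate] * count[seed]))
        if pmi > 0:
            best[candidate] = max(best.get(candidate, 0.0), pmi)
    return sorted(best.items(), key=lambda kv: (-kv[1], kv[0]))


sentences = [
    ["<subj> was killed", "exploded in <np>"],
    ["<subj> was killed", "exploded in <np>"],
    ["said <np>"],
    ["said <np>", "<subj> was killed"],
]
print(rank_by_seed_pmi(sentences, {"<subj> was killed"}))
```

In the toy data, `said <np>` co-occurs with the seed less often than chance would predict and is dropped, while `exploded in <np>` is kept.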

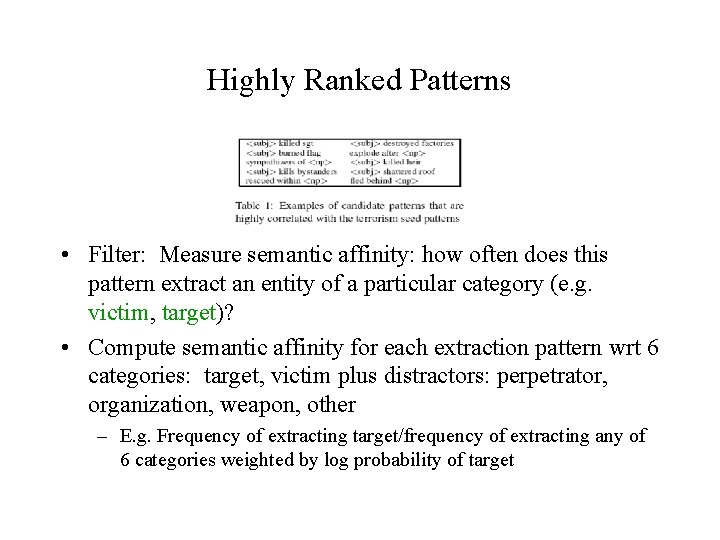

Highly Ranked Patterns • Filter: Measure semantic affinity: how often does this pattern extract an entity of a particular category (e.g. victim, target)? • Compute semantic affinity for each extraction pattern with respect to 6 categories: target, victim, plus distractors: perpetrator, organization, weapon, other – E.g. frequency of extracting target / frequency of extracting any of 6 categories, weighted by log probability of target
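One possible reading of this score, sketched under a stated assumption: the proportion of a pattern's extractions that fall in the category, multiplied by the base-2 log of the raw count for that category. The slide's wording leaves the exact log weight open, so the weight, the function name, and the counts below are all illustrative.

```python
import math


def semantic_affinity(category_counts, category):
    """category_counts: {category: number of extractions by this pattern}.

    Assumed form: (f_cat / f_all_categories) * log2(f_cat).
    """
    total = sum(category_counts.values())
    freq = category_counts.get(category, 0)
    if total == 0 or freq == 0:
        return 0.0
    return (freq / total) * math.log2(freq)


# Hypothetical counts for a single pattern.
counts = {"target": 16, "victim": 2, "perpetrator": 2}
print(semantic_affinity(counts, "target"))
print(semantic_affinity(counts, "victim"))
```

Under this reading a pattern scores highly only if it extracts the category both often and consistently, which is what a filter of this kind needs.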

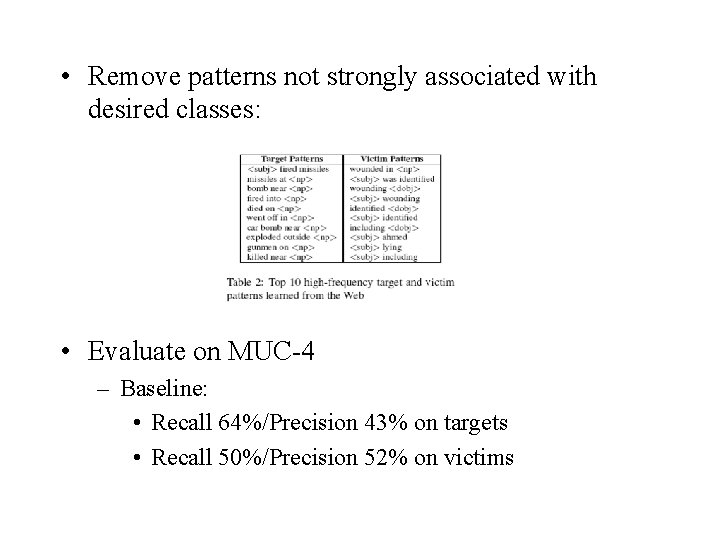

• Remove patterns not strongly associated with desired classes: • Evaluate on MUC-4 – Baseline: • Recall 64%/Precision 43% on targets • Recall 50%/Precision 52% on victims

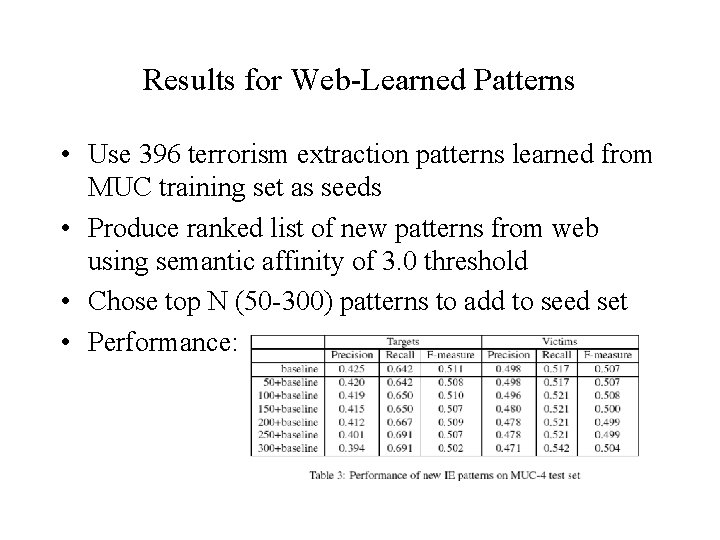

Results for Web-Learned Patterns • Use 396 terrorism extraction patterns learned from MUC training set as seeds • Produce ranked list of new patterns from web using a semantic affinity threshold of 3.0 • Choose top N (50-300) patterns to add to seed set • Performance:

Combining IR and IE for QA • Information extraction: AQUA

Summary • Many approaches to ‘robust’ semantic analysis – Semantic grammars targeting particular domains Utterance --> Yes/No Reply --> Yes-Reply | No-Reply Yes-Reply --> {yes, yeah, right, ok, “you bet”, …} – Information extraction techniques targeting specific tasks • Extracting information about terrorist events from news – Information retrieval techniques --> more like NLP

- Slides: 30