Robust PCA in Stata

Vincenzo Verardi (vverardi@fundp.ac.be)
FUNDP (Namur) and ULB (Brussels), Belgium
FNRS Associate Researcher

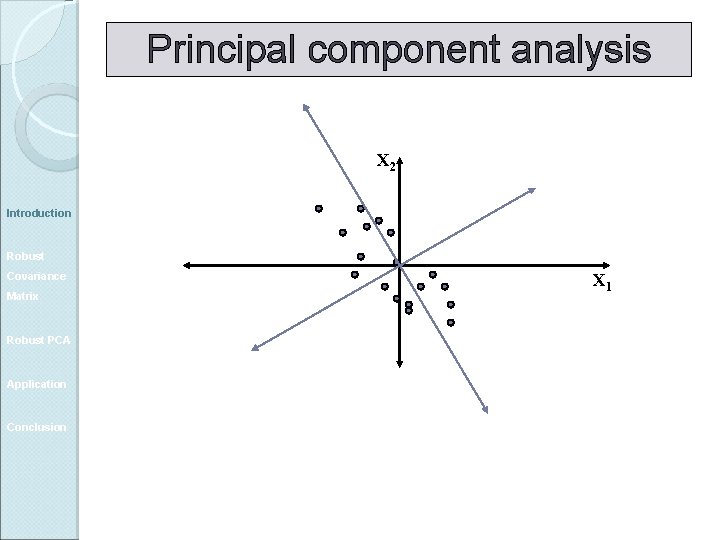

Principal component analysis

Outline: Introduction, Robust Covariance Matrix, Robust PCA, Application, Conclusion.

PCA transforms a set of correlated variables into a smaller set of uncorrelated variables (principal components). For p random variables X1, …, Xp, the goal of PCA is to construct a new set of p axes in the directions of greatest variability.

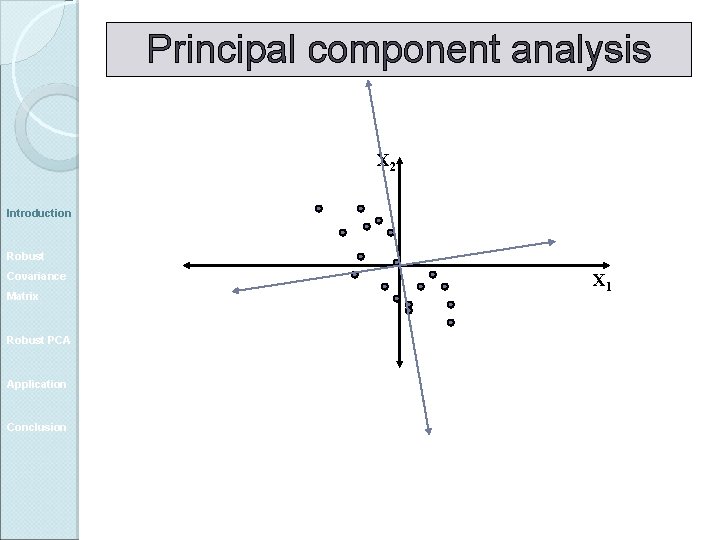

Principal component analysis (figure with axes X1 and X2)

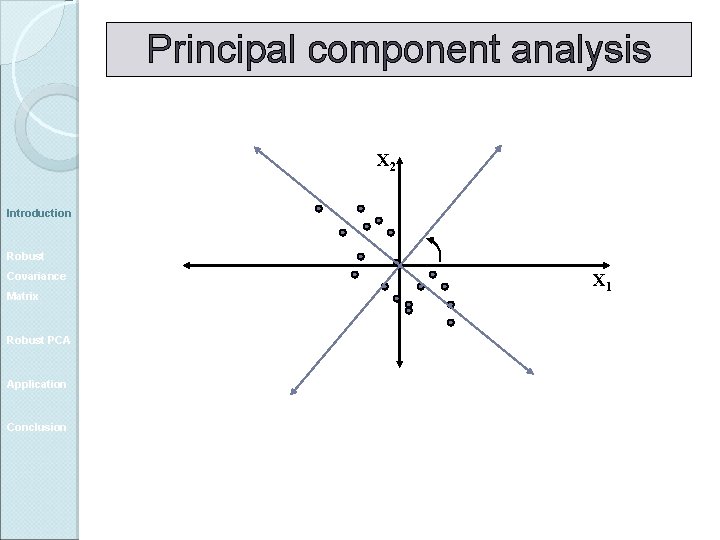


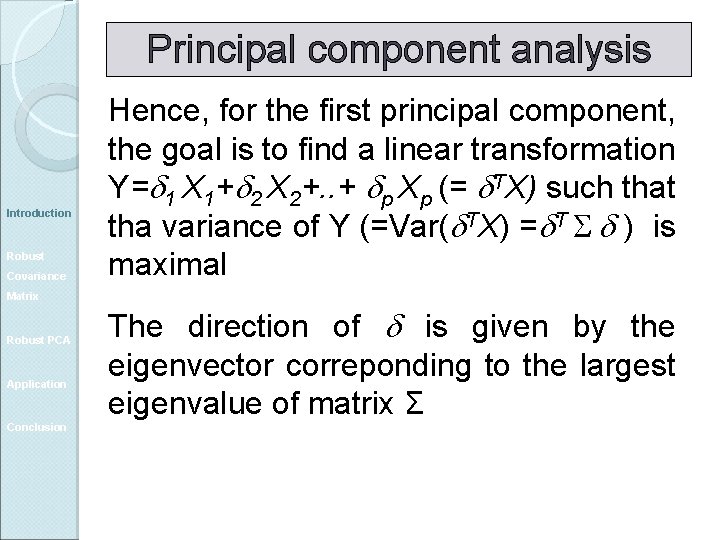

Principal component analysis

Hence, for the first principal component, the goal is to find a linear transformation $Y = \alpha_1 X_1 + \alpha_2 X_2 + \dots + \alpha_p X_p$ $(= \alpha^T X)$ such that the variance of $Y$, $\operatorname{Var}(\alpha^T X) = \alpha^T \Sigma \alpha$, is maximal.

The direction of $\alpha$ is given by the eigenvector corresponding to the largest eigenvalue of the matrix $\Sigma$.
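The eigenvector result follows from a standard constrained maximization. The sketch below assumes the weight vector (written $\alpha$ here, a notational assumption) is normalized to unit length, a constraint the slide leaves implicit; without it the variance could be made arbitrarily large by rescaling the weights.

```latex
\begin{aligned}
&\max_{\alpha}\ \alpha^{T}\Sigma\alpha \quad \text{subject to} \quad \alpha^{T}\alpha = 1 \\
&\mathcal{L}(\alpha,\lambda) = \alpha^{T}\Sigma\alpha - \lambda\,(\alpha^{T}\alpha - 1) \\
&\frac{\partial \mathcal{L}}{\partial \alpha} = 2\Sigma\alpha - 2\lambda\alpha = 0
  \ \Longrightarrow\ \Sigma\alpha = \lambda\alpha \\
&\operatorname{Var}(Y) = \alpha^{T}\Sigma\alpha = \lambda\,\alpha^{T}\alpha = \lambda
\end{aligned}
```

Any stationary point is therefore an eigenvector of $\Sigma$, and the variance it attains equals its eigenvalue, so the maximum is reached at the eigenvector with the largest eigenvalue.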

Classical PCA

The second vector (orthogonal to the first) is the one with the second highest variance. It corresponds to the eigenvector associated with the second largest eigenvalue.

And so on …

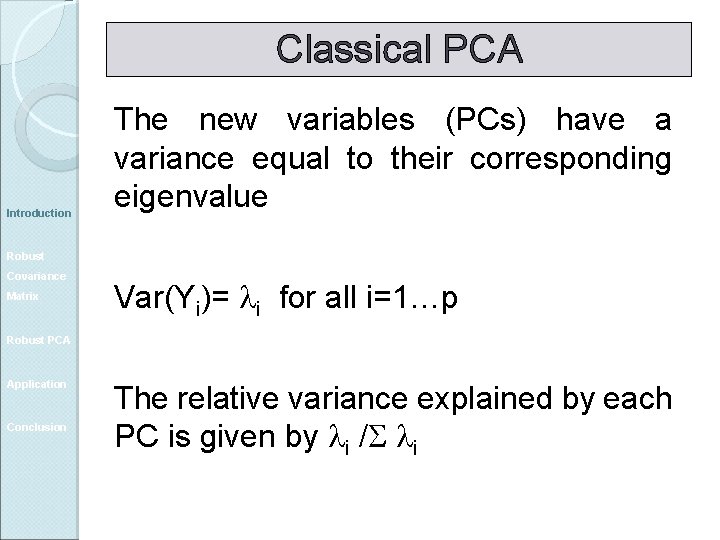

Classical PCA

The new variables (PCs) have a variance equal to their corresponding eigenvalue: $\operatorname{Var}(Y_i) = \lambda_i$ for all $i = 1, \dots, p$.

The relative variance explained by each PC is given by $\lambda_i / \sum_{j=1}^{p} \lambda_j$.
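A small numerical check of these two statements, as a minimal Python sketch rather than Stata output. It assumes two standardized variables with a hypothetical correlation of 0.7, and uses the closed-form eigen-decomposition of a symmetric 2 x 2 matrix; the function names are illustrative only.

```python
import math

def eigen_sym_2x2(a, b, c):
    """Eigenvalues (largest first) and unit eigenvectors of [[a, b], [b, c]]."""
    if b == 0:
        raise ValueError("variables are already uncorrelated; the axes are the PCs")
    mean = (a + c) / 2.0
    radius = math.hypot((a - c) / 2.0, b)
    pairs = []
    for lam in (mean + radius, mean - radius):
        v0, v1 = b, lam - a              # solves (a - lam) * v0 + b * v1 = 0
        norm = math.hypot(v0, v1)
        pairs.append((lam, (v0 / norm, v1 / norm)))
    return pairs

def quad_form(v, a, b, c):
    """Variance of v0*X1 + v1*X2 when Cov(X) = [[a, b], [b, c]]."""
    return a * v[0] ** 2 + 2.0 * b * v[0] * v[1] + c * v[1] ** 2

a, b, c = 1.0, 0.7, 1.0                  # hypothetical correlation matrix
pcs = eigen_sym_2x2(a, b, c)
total = sum(lam for lam, _ in pcs)
for i, (lam, v) in enumerate(pcs, start=1):
    var_y = quad_form(v, a, b, c)
    print(f"PC{i}: eigenvalue={lam:.2f} variance={var_y:.2f} share={lam / total:.2f}")
```

In this example the eigenvalues are 1.7 and 0.3, each equal to the variance of its component, so the first component carries 85% of the total variance.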

Number of PC

How many PCs should be considered?

- A sufficient number of PCs to have a cumulative variance explained of at least 60-70% of the total.
- Kaiser criterion: keep PCs with eigenvalues > 1.
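Both rules are easy to apply once the eigenvalues are known. The following Python sketch uses hypothetical eigenvalues for five standardized variables (so they sum to 5); the function name and the 60% default are illustrative choices. The Kaiser cut-off of 1 presumes a PCA on the correlation matrix, where the average eigenvalue is 1.

```python
def n_components(eigenvalues, min_share=0.60):
    """Return (count by Kaiser criterion, count by cumulative-share rule)."""
    ordered = sorted(eigenvalues, reverse=True)
    total = sum(ordered)
    kaiser = sum(1 for lam in ordered if lam > 1.0)
    cumulative = 0.0
    by_share = len(ordered)
    for k, lam in enumerate(ordered, start=1):
        cumulative += lam
        if cumulative / total >= min_share:
            by_share = k                 # first k PCs reach the target share
            break
    return kaiser, by_share

eigs = [2.4, 1.3, 0.7, 0.4, 0.2]         # hypothetical eigenvalues
print(n_components(eigs))                # Kaiser rule and 60% rule
print(n_components(eigs, min_share=0.90))
```

The two rules need not agree: a stricter cumulative-share target keeps more components than the Kaiser criterion does on the same eigenvalues.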

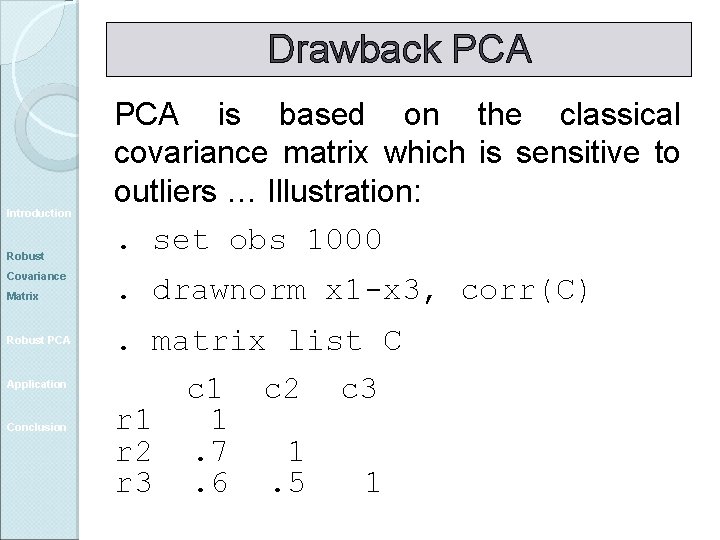

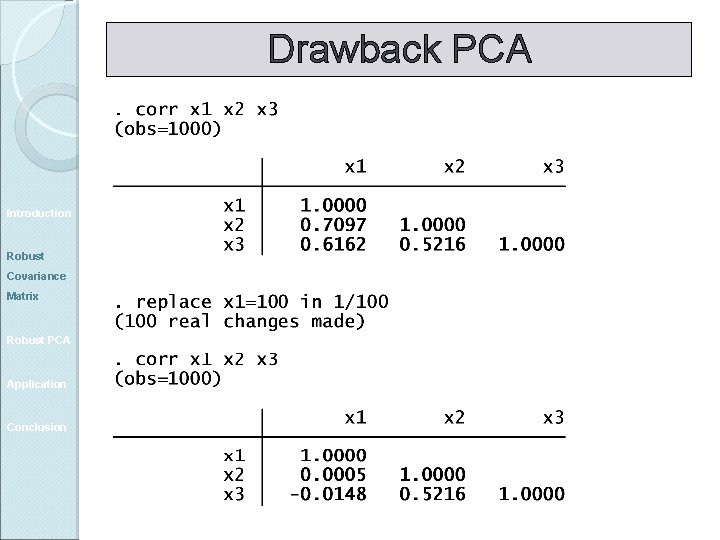

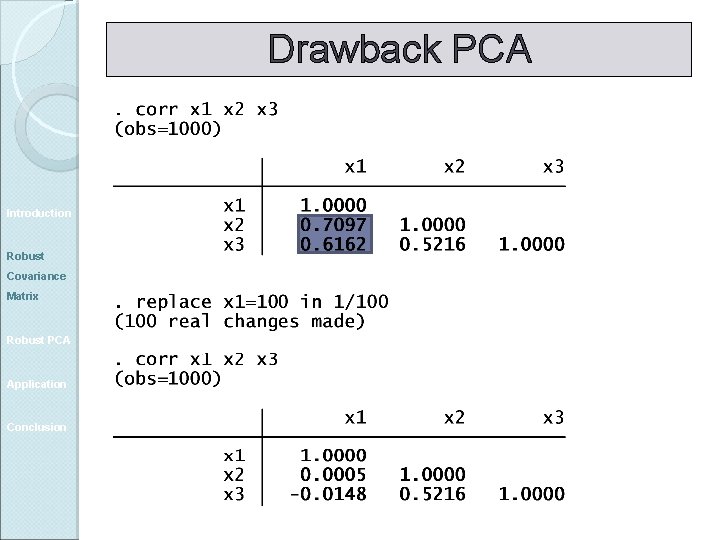

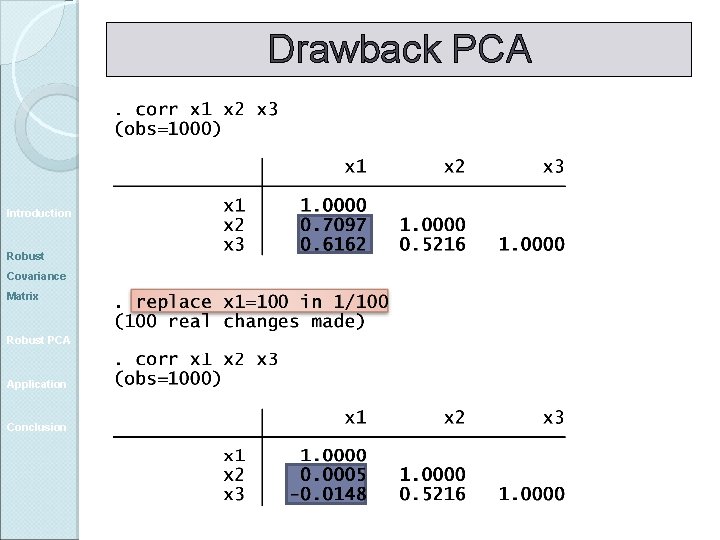

Drawback PCA

PCA is based on the classical covariance matrix, which is sensitive to outliers. Illustration:

    . * C is defined here from the values listed below (the definition is not shown on the slide)
    . matrix C = (1, .7, .6 \ .7, 1, .5 \ .6, .5, 1)
    . set obs 1000
    . drawnorm x1-x3, corr(C)
    . matrix list C

            c1   c2   c3
       r1    1
       r2   .7    1
       r3   .6   .5    1


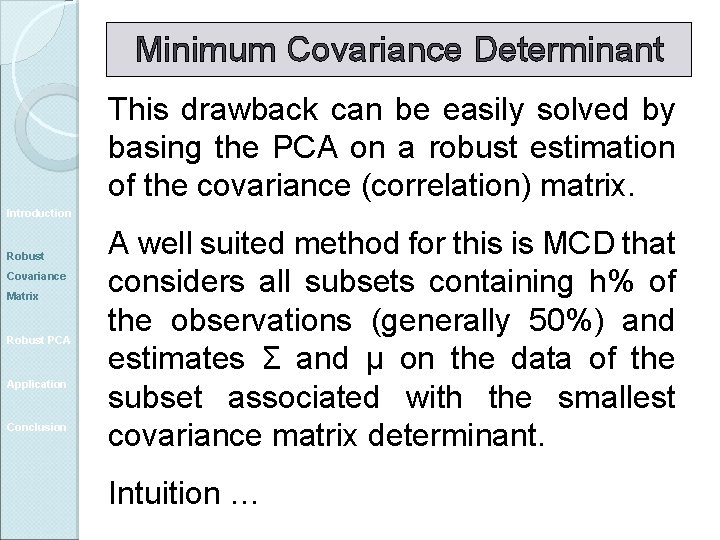

Minimum Covariance Determinant

This drawback can be easily solved by basing the PCA on a robust estimate of the covariance (correlation) matrix.

A well-suited method for this is MCD, which considers all subsets containing h% of the observations (generally 50%) and estimates Σ and µ on the data of the subset associated with the smallest covariance matrix determinant.

Intuition …

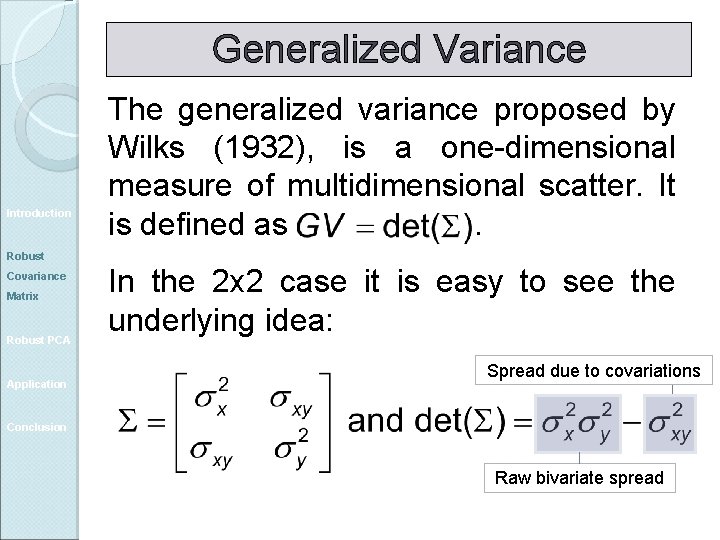

Generalized Variance

The generalized variance, proposed by Wilks (1932), is a one-dimensional measure of multidimensional scatter. It is defined as $GV = \det(\Sigma)$.

In the 2 x 2 case it is easy to see the underlying idea: $\det(\Sigma) = \sigma_x^2 \sigma_y^2 - \sigma_{xy}^2$, where $\sigma_x^2 \sigma_y^2$ is the raw bivariate spread and $\sigma_{xy}^2$ is the spread due to covariations.
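For a 2 x 2 covariance matrix the determinant is the product of the two variances minus the squared covariance. The Python sketch below evaluates it for hypothetical unit-variance data at three covariance levels, to show how stronger covariation shrinks the generalized variance while the raw spread stays fixed.

```python
def generalized_variance_2x2(var_x, var_y, cov_xy):
    """Determinant of [[var_x, cov_xy], [cov_xy, var_y]]."""
    raw_spread = var_x * var_y           # raw bivariate spread
    covariation = cov_xy ** 2            # spread due to covariations
    return raw_spread - covariation

for cov_xy in (0.0, 0.5, 0.9):           # hypothetical covariances
    print(cov_xy, generalized_variance_2x2(1.0, 1.0, cov_xy))
```

A tight, strongly correlated cloud therefore has a small determinant, which is why MCD looks for the subset whose covariance matrix has the smallest one.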

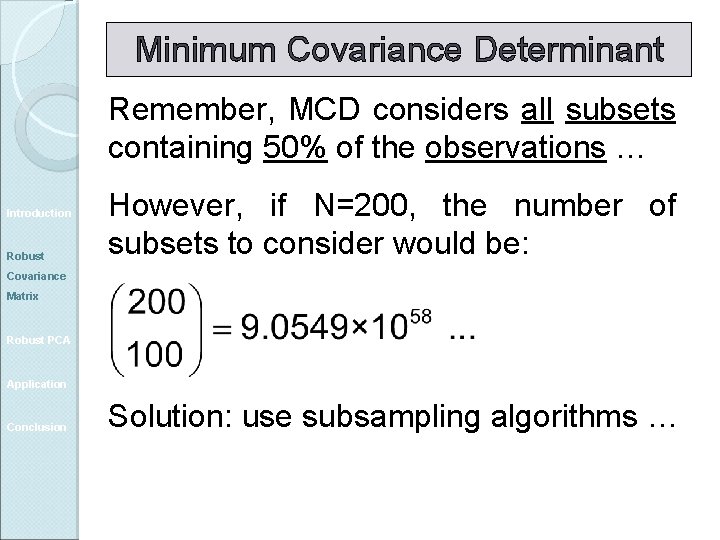

Minimum Covariance Determinant

Remember, MCD considers all subsets containing 50% of the observations …

However, if N=200, the number of subsets to consider would be $\binom{200}{100} \approx 9.05 \times 10^{58}$.

Solution: use subsampling algorithms …
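The number of half-samples is a binomial coefficient, which can be evaluated exactly. A short Python check (requires Python 3.8 or later for `math.comb`):

```python
import math

n_obs = 200
h = n_obs // 2                           # subsets holding 50% of the observations
n_subsets = math.comb(n_obs, h)          # exact integer count
print(f"{n_subsets:.3e}")
```

The count is of order 10^58, far beyond what exhaustive enumeration can handle, hence the need for subsampling.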

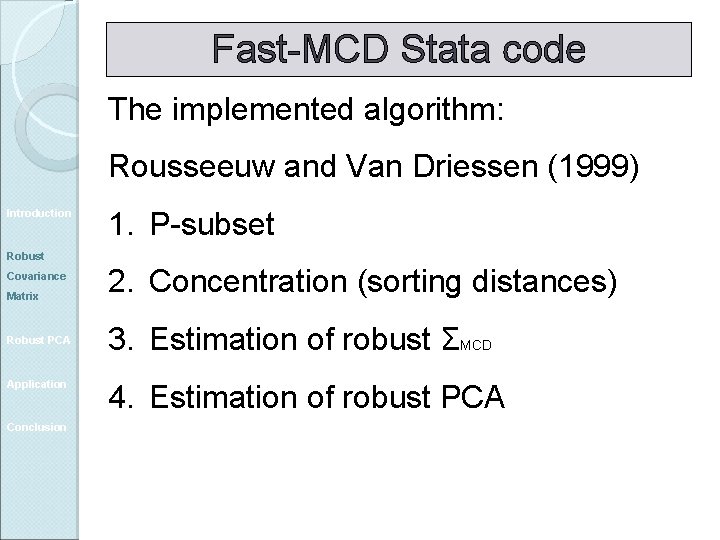

Fast-MCD Stata code

The implemented algorithm: Rousseeuw and Van Driessen (1999)

1. P-subset
2. Concentration (sorting distances)
3. Estimation of robust $\Sigma_{MCD}$
4. Estimation of robust PCA

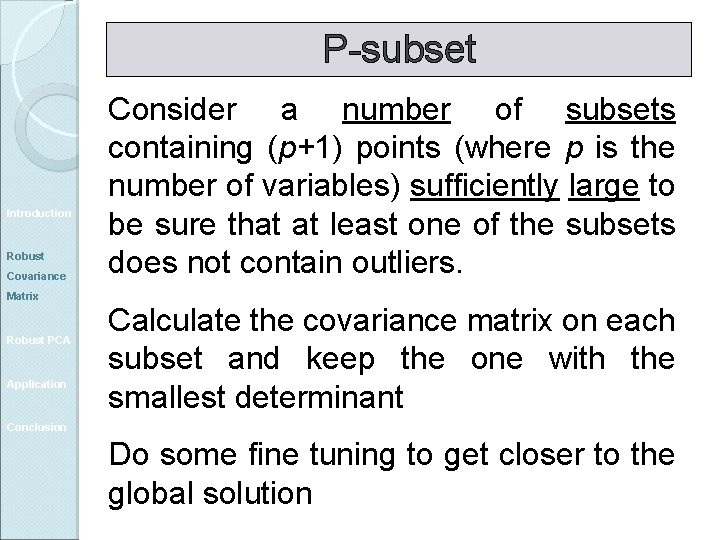

P-subset

Consider a number of subsets containing (p+1) points (where p is the number of variables) sufficiently large to be sure that at least one of the subsets does not contain outliers.

Calculate the covariance matrix on each subset and keep the one with the smallest determinant.

Do some fine tuning to get closer to the global solution.

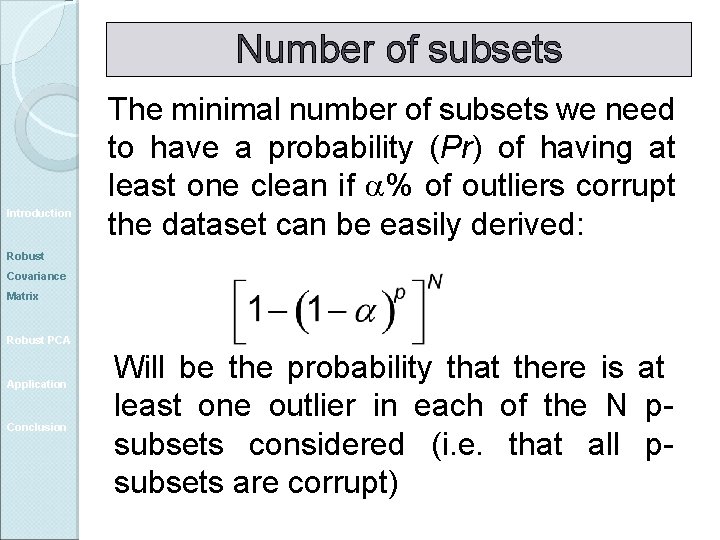

Number of subsets

The minimal number of subsets N needed to have a probability Pr of drawing at least one clean subset, when a fraction $\alpha$ of outliers corrupts the dataset, can be easily derived.

Contamination: $\alpha$

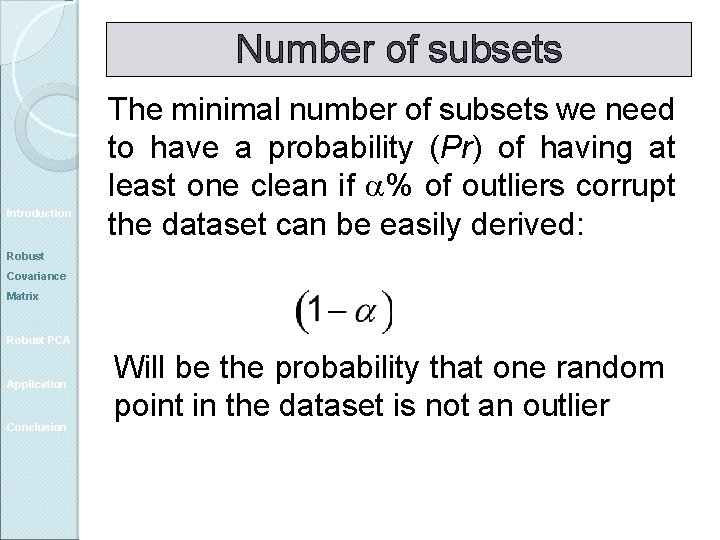

Number of subsets

$(1-\alpha)$, with $\alpha$ the fraction of outliers, will be the probability that one random point in the dataset is not an outlier.

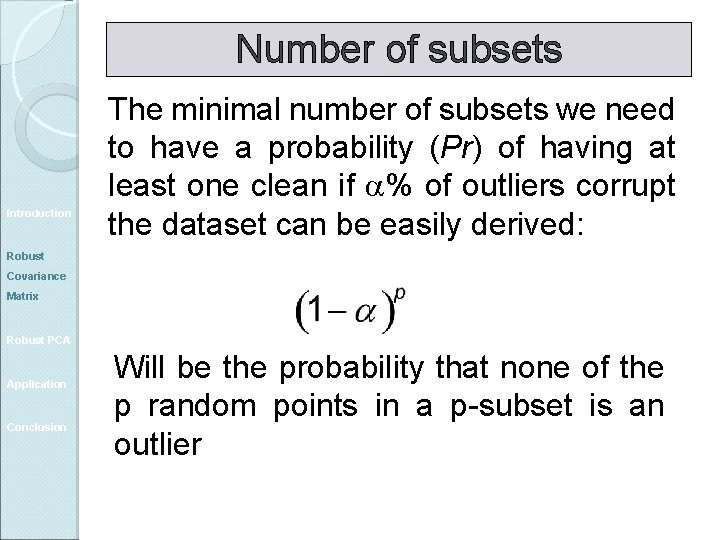

Number of subsets

$(1-\alpha)^p$, with $\alpha$ the fraction of outliers, will be the probability that none of the p random points in a p-subset is an outlier.

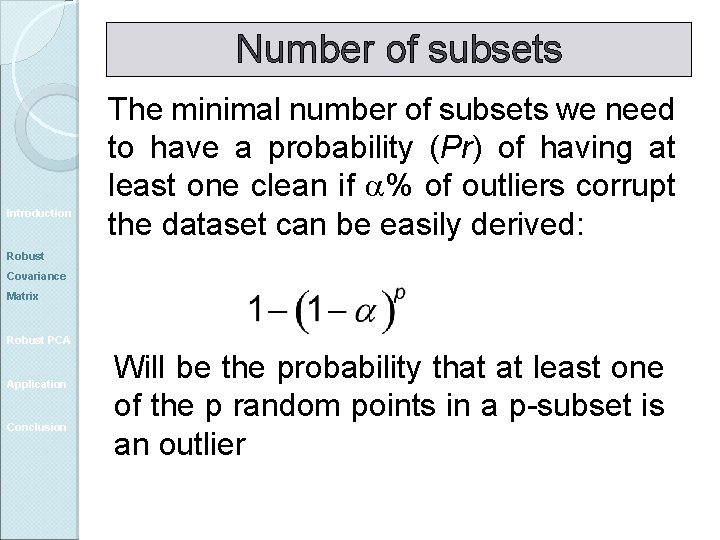

Number of subsets

$1-(1-\alpha)^p$, with $\alpha$ the fraction of outliers, will be the probability that at least one of the p random points in a p-subset is an outlier.

Number of subsets

$[1-(1-\alpha)^p]^N$, with $\alpha$ the fraction of outliers, will be the probability that there is at least one outlier in each of the N p-subsets considered (i.e. that all p-subsets are corrupt).

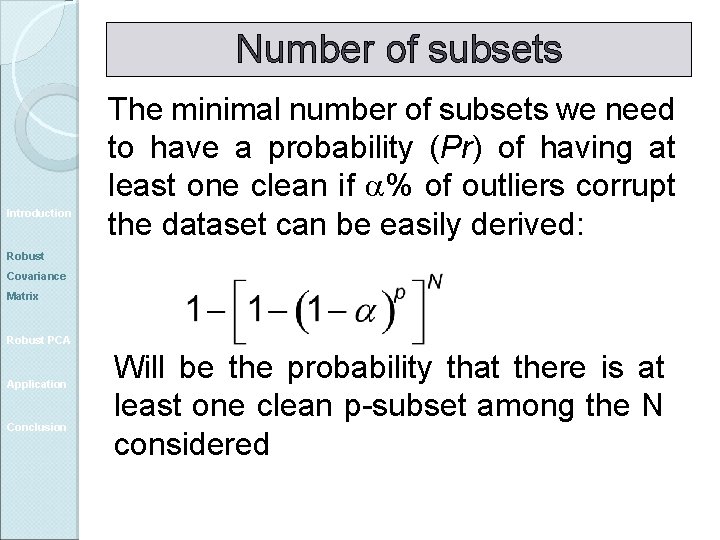

Number of subsets

$Pr = 1-[1-(1-\alpha)^p]^N$, with $\alpha$ the fraction of outliers, will be the probability that there is at least one clean p-subset among the N considered.

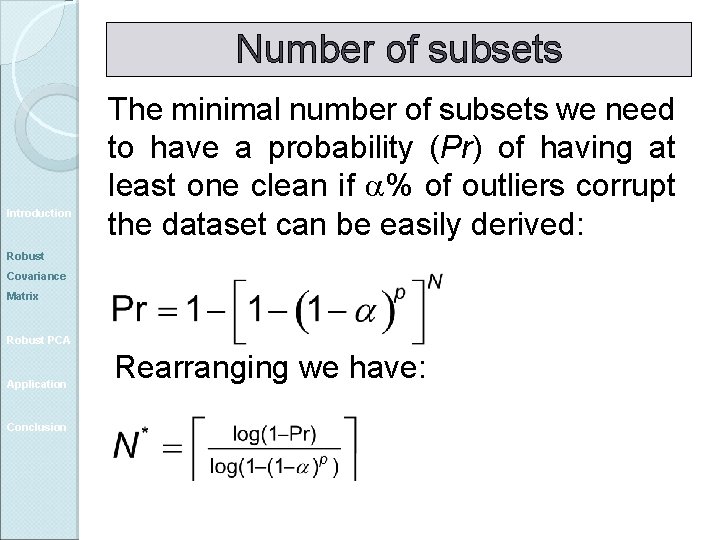

Number of subsets

Rearranging $Pr = 1-[1-(1-\alpha)^p]^N$, with $\alpha$ the fraction of outliers, we have:

$N = \dfrac{\log(1-Pr)}{\log[1-(1-\alpha)^p]}$
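The rearranged expression, N = log(1 - Pr) / log(1 - (1 - α)^p), can be evaluated directly. The Python sketch below rounds up to the next integer; the function name, the target probability of 0.99 and p = 5 are illustrative assumptions, and α denotes the fraction of outliers. It follows the derivation in using p points per subset; if subsets hold p+1 points, as on the P-subset slide, pass p+1 instead.

```python
import math

def min_subsets(prob, contamination, p):
    """Smallest N such that 1 - (1 - (1 - contamination)**p)**N >= prob.

    Requires 0 < prob < 1 and 0 < contamination < 1.
    """
    clean = (1.0 - contamination) ** p   # P(a given p-subset is clean)
    return math.ceil(math.log(1.0 - prob) / math.log(1.0 - clean))

for a in (0.1, 0.2, 0.3, 0.4, 0.5):      # hypothetical contamination levels
    print(a, min_subsets(0.99, a, p=5))
```

The required number of subsets grows quickly with both the contamination level and the number of variables, but stays tiny compared with the number of all possible half-samples.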

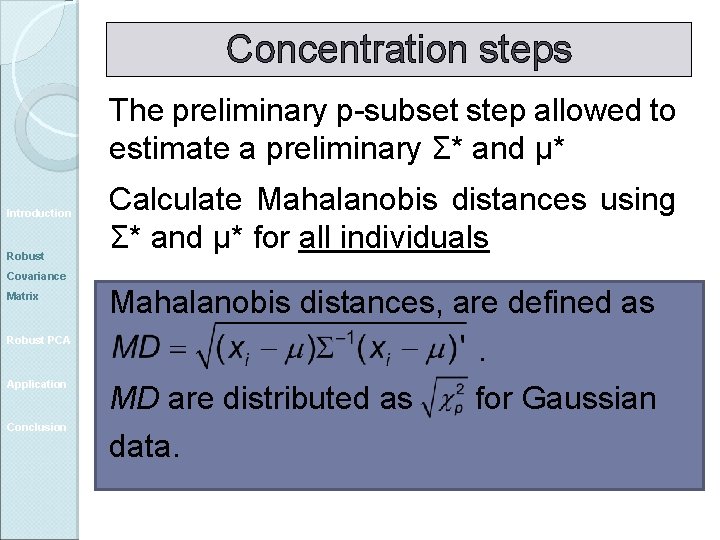

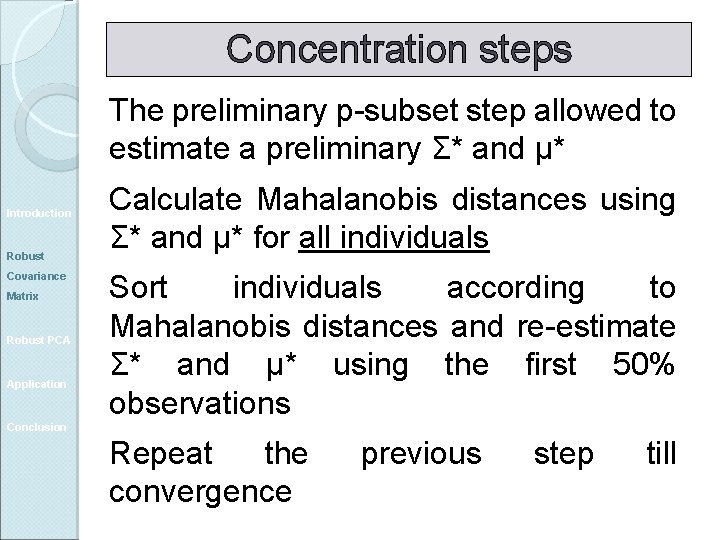

Concentration steps

The preliminary p-subset step yields preliminary estimates Σ* and μ*.

Calculate Mahalanobis distances using Σ* and μ* for all individuals.

Mahalanobis distances are defined as $MD_i = \sqrt{(x_i - \mu)^T \Sigma^{-1} (x_i - \mu)}$. MD are distributed as $\sqrt{\chi^2_p}$ for Gaussian data.

Concentration steps

Sort individuals according to their Mahalanobis distances and re-estimate Σ* and μ* using the first 50% of the observations.

Repeat the previous step until convergence.
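The concentration step can be sketched in a few lines of Python for the bivariate case. This is an illustration of the idea, not the code of the Stata `mcd` command: it assumes two variables (so the 2 x 2 inverse is written out by hand), takes the starting subset as given (in practice it would come from the p-subset step and must not be collinear), and ranks points by squared Mahalanobis distance, which gives the same ordering as the distance itself. All function names and the simulated data are hypothetical.

```python
import random

def mean_cov(points):
    """Mean and covariance (divisor n - 1) of a list of (x, y) pairs."""
    n = len(points)
    mx = sum(x for x, _ in points) / n
    my = sum(y for _, y in points) / n
    sxx = sum((x - mx) ** 2 for x, _ in points) / (n - 1)
    syy = sum((y - my) ** 2 for _, y in points) / (n - 1)
    sxy = sum((x - mx) * (y - my) for x, y in points) / (n - 1)
    return (mx, my), (sxx, sxy, syy)

def det(cov):
    """Determinant of the 2 x 2 covariance matrix [[sxx, sxy], [sxy, syy]]."""
    sxx, sxy, syy = cov
    return sxx * syy - sxy ** 2

def md2(point, mu, cov):
    """Squared Mahalanobis distance of one point from mu under cov."""
    sxx, sxy, syy = cov
    dx, dy = point[0] - mu[0], point[1] - mu[1]
    return (syy * dx * dx - 2.0 * sxy * dx * dy + sxx * dy * dy) / det(cov)

def concentrate(points, start, h, max_iter=100):
    """Repeat C-steps from the index list `start` until the h-subset is stable."""
    subset = sorted(start)
    for _ in range(max_iter):
        mu, cov = mean_cov([points[i] for i in subset])
        ranked = sorted(range(len(points)), key=lambda i: md2(points[i], mu, cov))
        new_subset = sorted(ranked[:h])  # keep the h closest observations
        if new_subset == subset:
            break
        subset = new_subset
    mu, cov = mean_cov([points[i] for i in subset])
    return subset, mu, cov

# Hypothetical data: 80 clean points and 20 shifted outliers (indices 80-99).
rng = random.Random(1)
points = [(rng.gauss(0, 1), rng.gauss(0, 1)) for _ in range(80)]
points += [(rng.gauss(8, 0.5), rng.gauss(8, 0.5)) for _ in range(20)]

subset, mu, cov = concentrate(points, start=[0, 1, 2], h=50)
print("outliers kept:", sum(i >= 80 for i in subset))
print("robust centre:", mu, "determinant:", det(cov))
```

Each pass re-estimates the centre and scatter from the half of the data currently closest to them, so observations that start far away are progressively left out of the estimates.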

Robust Σ in Stata

In Stata, Hadi's method is available to estimate a robust covariance matrix.

Unfortunately, it is not very robust. The reason is simple: it relies on a non-robust preliminary estimate of the covariance matrix.

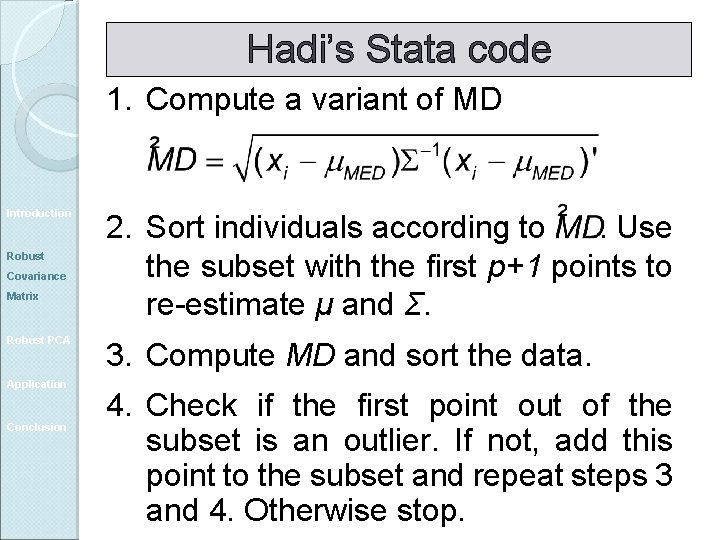

Hadi's Stata code

1. Compute a variant of MD.
2. Sort individuals according to this distance. Use the subset with the first p+1 points to re-estimate μ and Σ.
3. Compute MD and sort the data.
4. Check if the first point out of the subset is an outlier. If not, add this point to the subset and repeat steps 3 and 4. Otherwise stop.

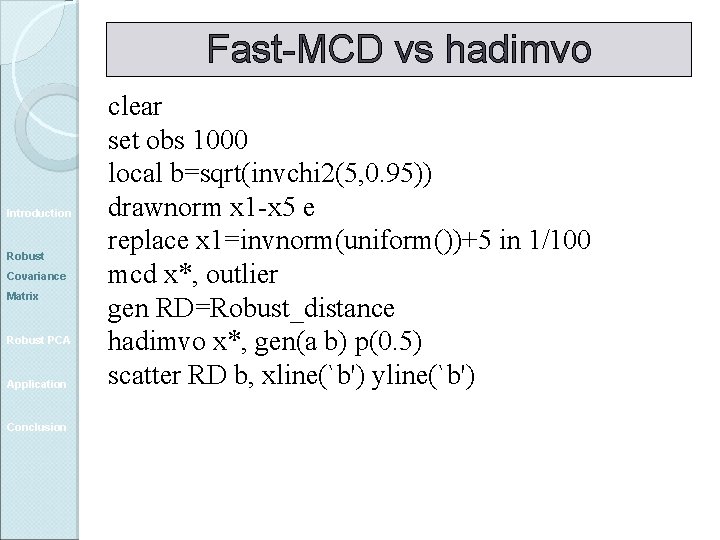

Fast-MCD vs hadimvo

    clear
    set obs 1000
    * cutoff for the distances: square root of the 95th percentile of a chi-squared with 5 d.f.
    local b=sqrt(invchi2(5, 0.95))
    drawnorm x1-x5 e
    * contaminate the first 100 observations by shifting x1
    replace x1=invnorm(uniform())+5 in 1/100
    * robust distances from Fast-MCD
    mcd x*, outlier
    gen RD=Robust_distance
    * Hadi's outlier flag (a) and distance (b)
    hadimvo x*, gen(a b) p(0.5)
    scatter RD b, xline(`b') yline(`b')

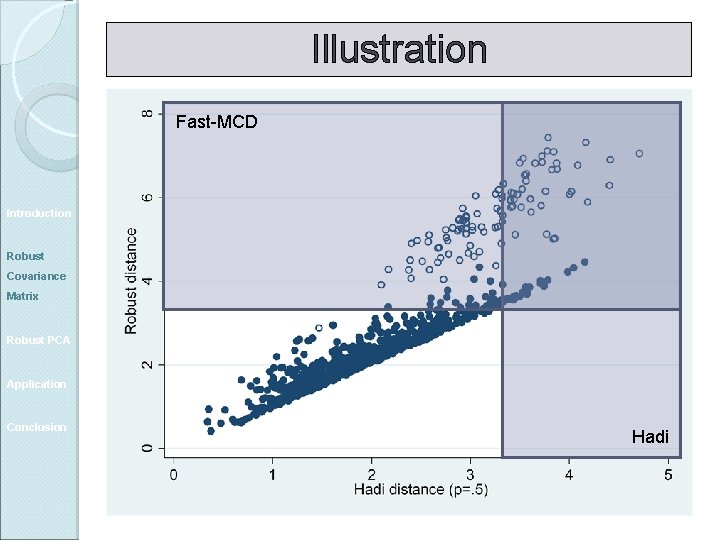

Illustration (figure: Fast-MCD distances plotted against Hadi distances)

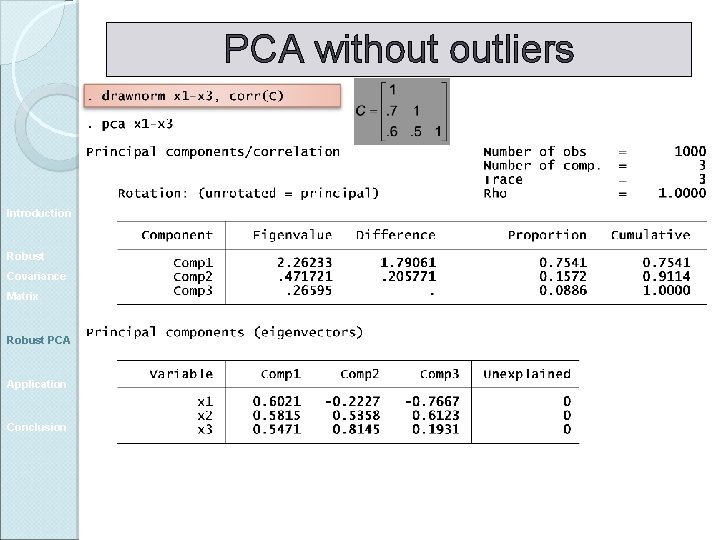

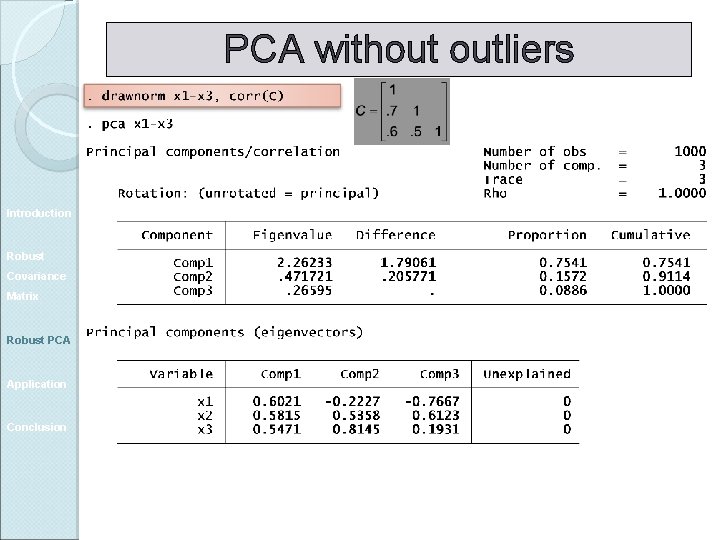

PCA without outliers

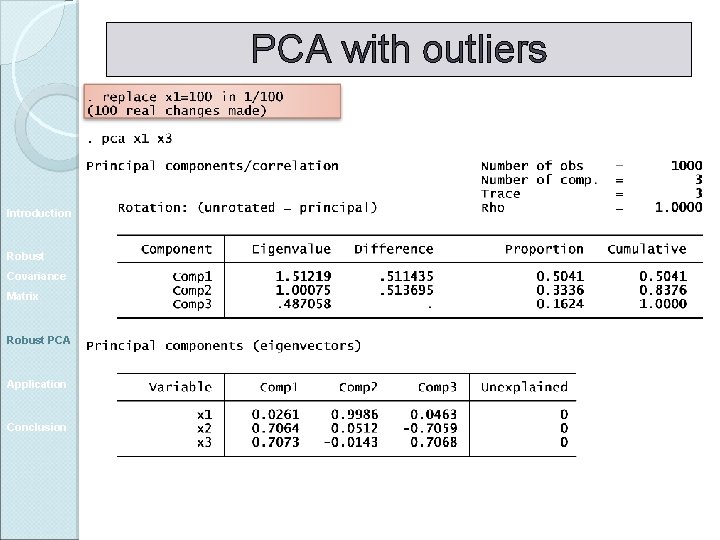

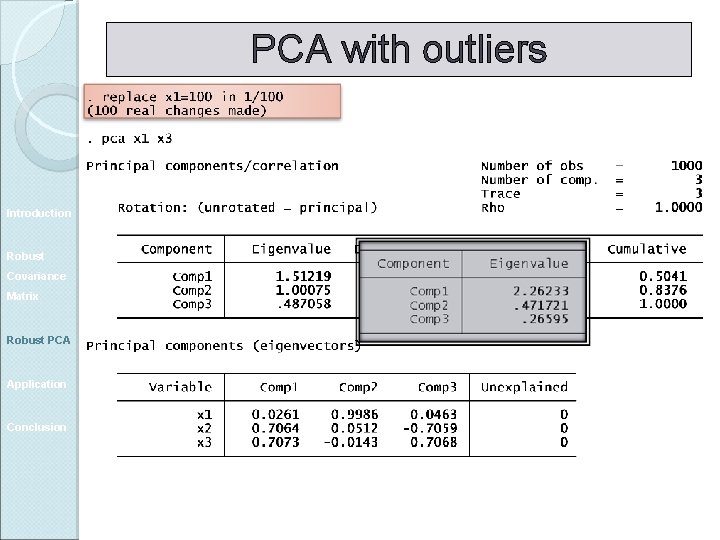

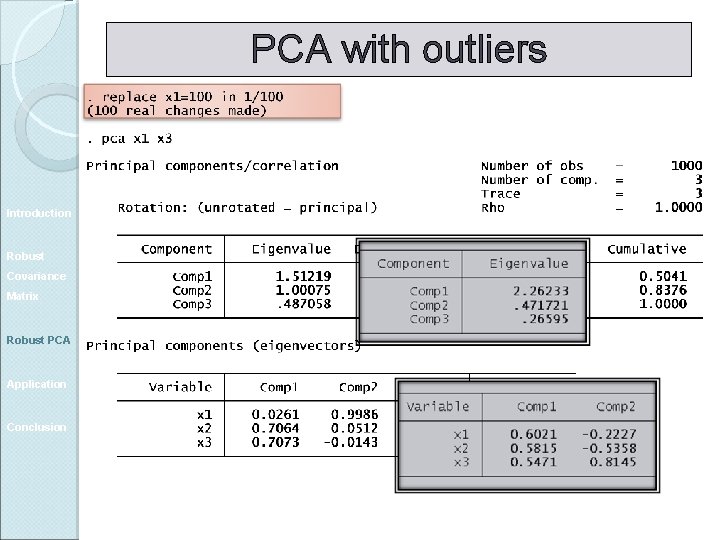

PCA with outliers


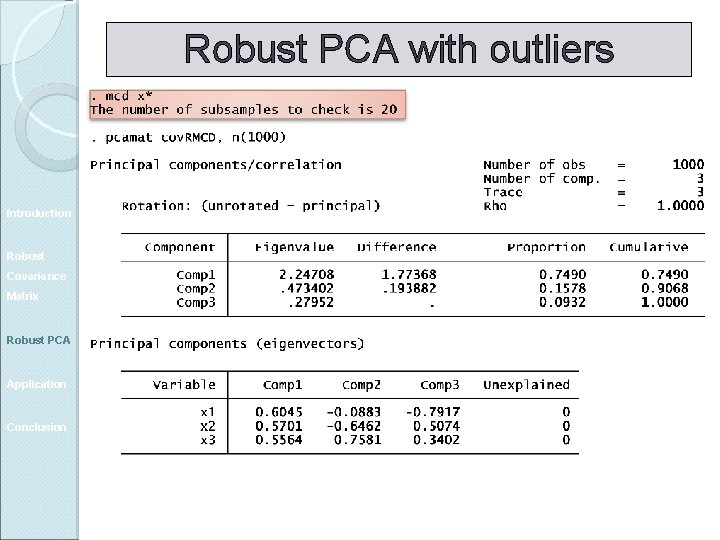

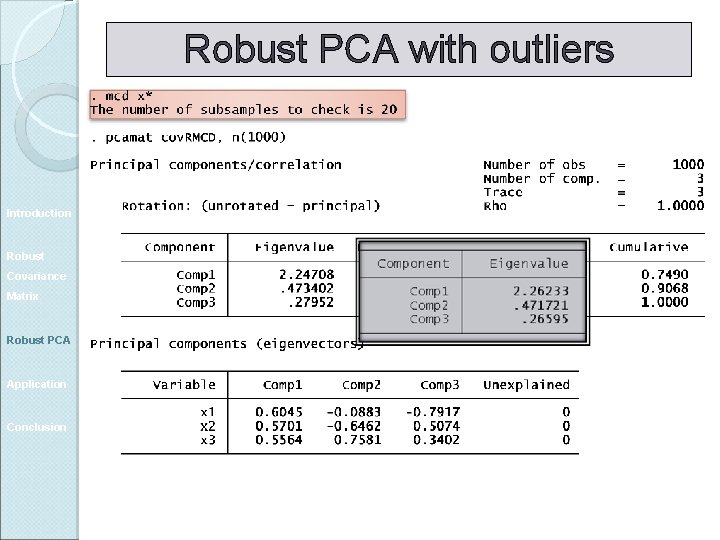

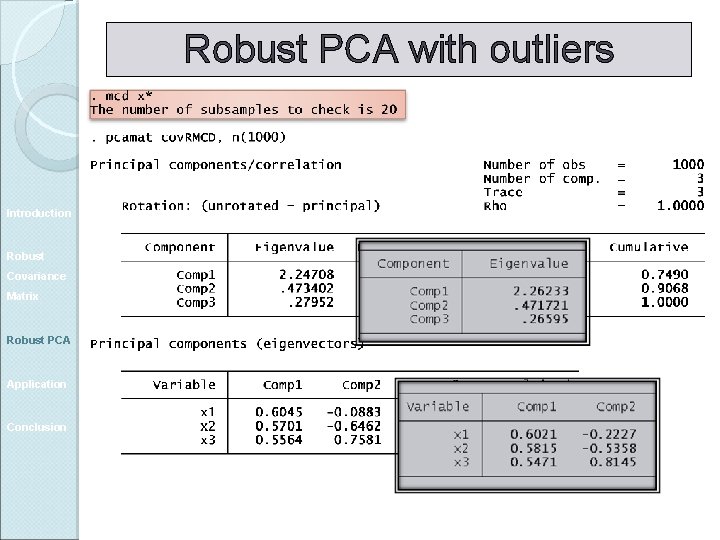

Robust PCA with outliers


Application: rankings of Universities

QUESTION: Can a single indicator accurately sum up research excellence?

GOAL: Determine the underlying factors measured by the variables used in the Shanghai ranking.

Principal component analysis

ARWU variables

- Alumni: alumni recipients of the Nobel Prize or the Fields Medal;
- Award: current faculty Nobel laureates and Fields Medal winners;
- HiCi: highly cited researchers;
- N&S: articles published in Nature and Science;
- PUB: articles in the Science Citation Index-expanded and the Social Science Citation Index.

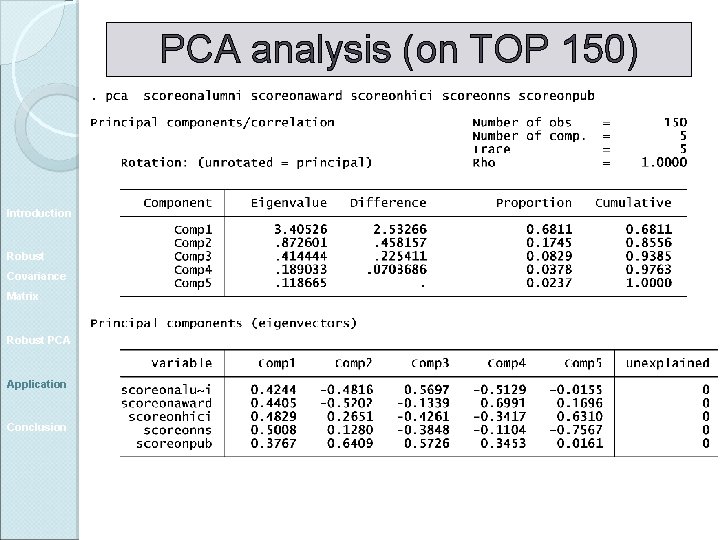

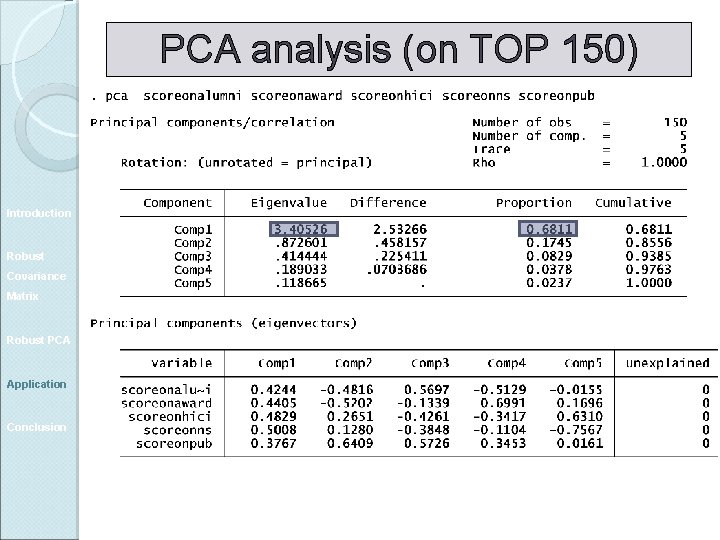

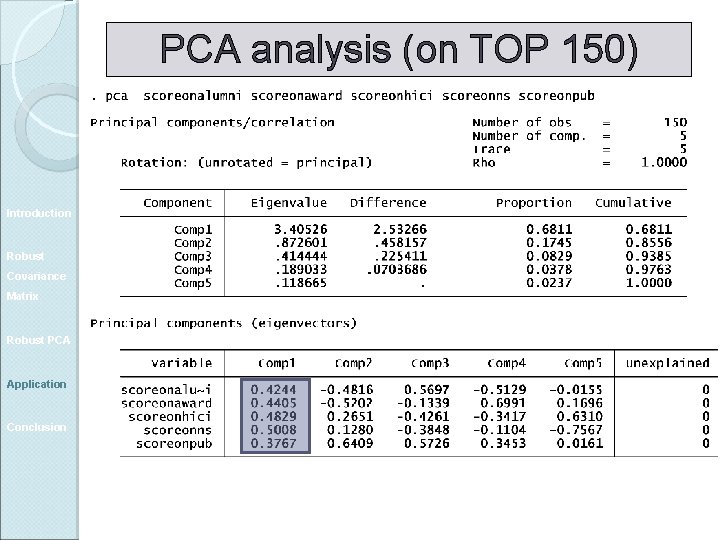

PCA analysis (on TOP 150)


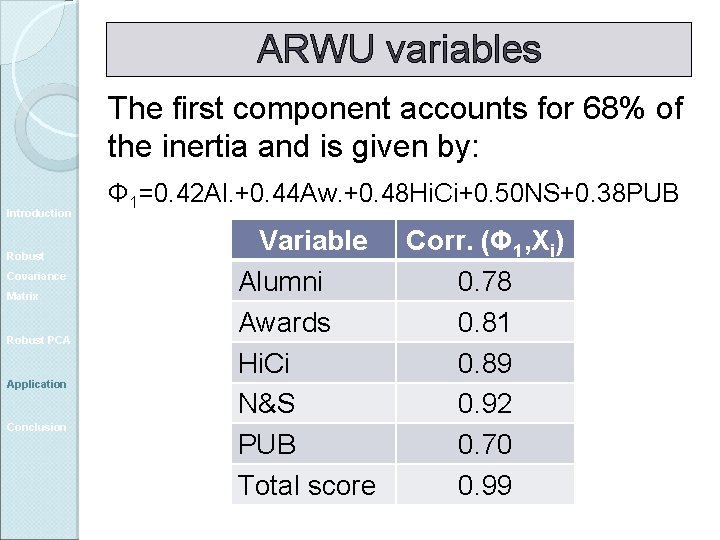

ARWU variables

The first component accounts for 68% of the inertia and is given by:

Φ1 = 0.42 Al. + 0.44 Aw. + 0.48 HiCi + 0.50 NS + 0.38 PUB

| Variable | Alumni | Awards | HiCi | N&S | PUB | Total score |
|---|---|---|---|---|---|---|
| Corr.(Φ1, Xi) | 0.78 | 0.81 | 0.89 | 0.92 | 0.70 | 0.99 |
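The loadings and the correlations reported on this slide can be cross-checked against each other. The Python sketch below assumes the PCA was run on the correlation matrix of the five variables (the slide does not say so explicitly), in which case the eigenvalues sum to 5 and the correlation between a component and a variable equals the loading times the square root of the eigenvalue.

```python
import math

loadings = {"Alumni": 0.42, "Awards": 0.44, "HiCi": 0.48, "N&S": 0.50, "PUB": 0.38}
reported = {"Alumni": 0.78, "Awards": 0.81, "HiCi": 0.89, "N&S": 0.92, "PUB": 0.70}

share = 0.68                             # inertia explained by the first component
eigenvalue = share * len(loadings)       # assumption: eigenvalues sum to p = 5
implied = {k: w * math.sqrt(eigenvalue) for k, w in loadings.items()}

for k in loadings:
    print(f"{k:7s} implied={implied[k]:.2f} reported={reported[k]:.2f}")
```

Under that assumption the implied correlations agree with the reported ones to within about 0.01, which is what rounding of the loadings and of the 68% share would produce.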

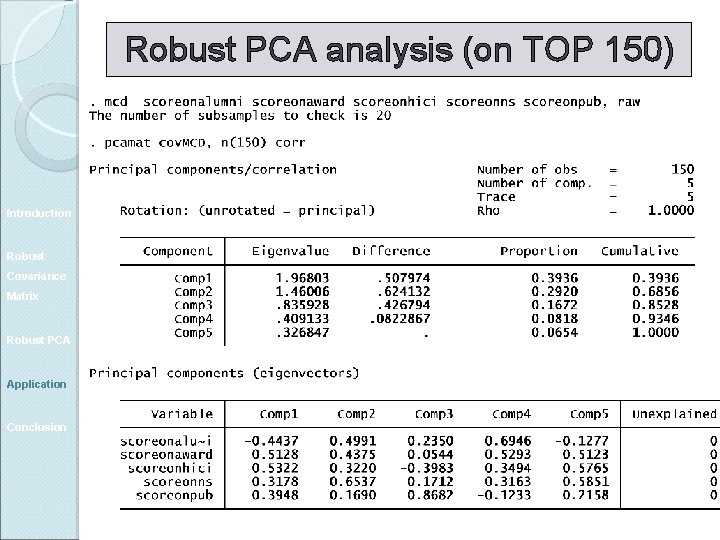

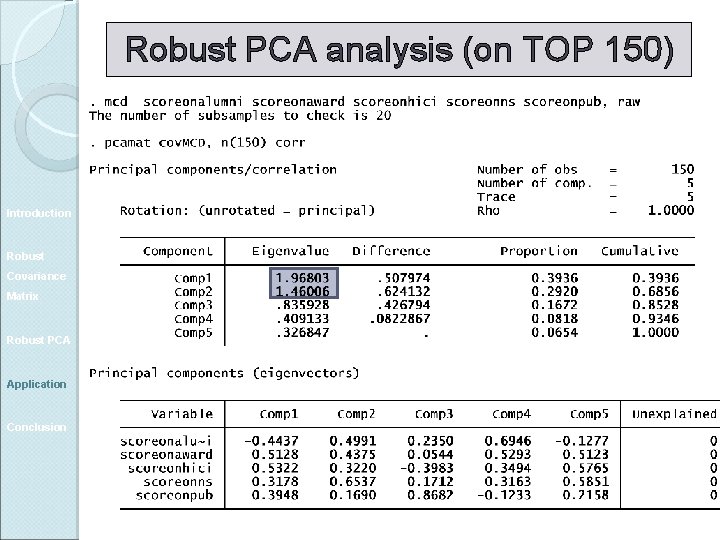

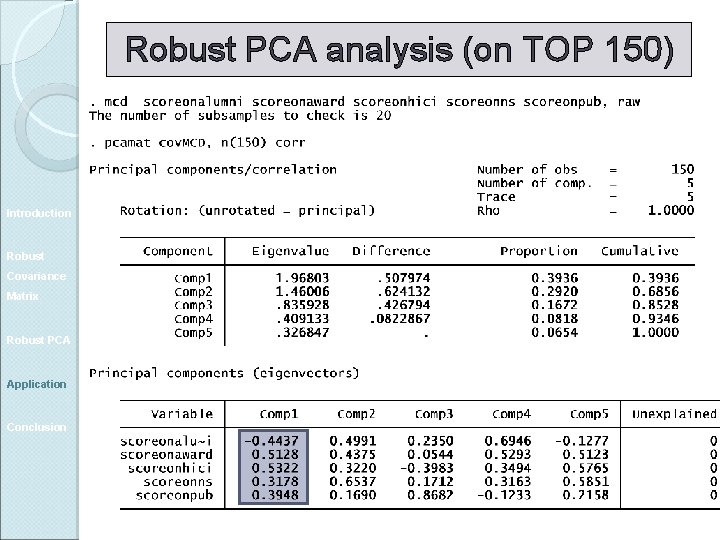

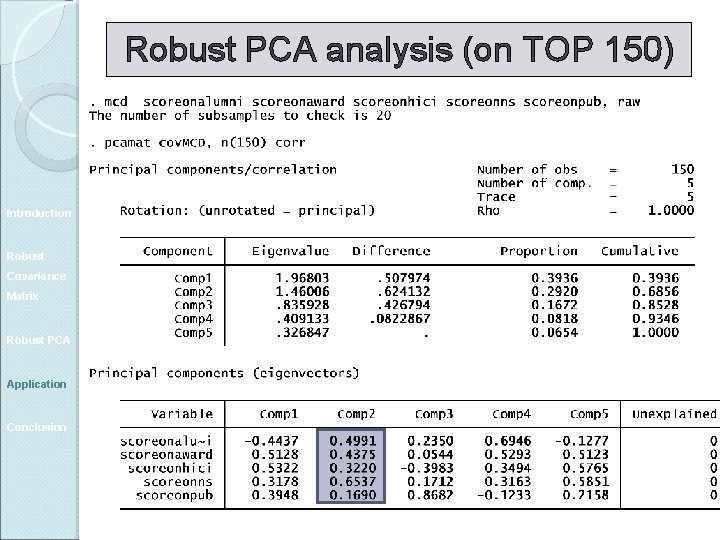

Robust PCA analysis (on TOP 150)


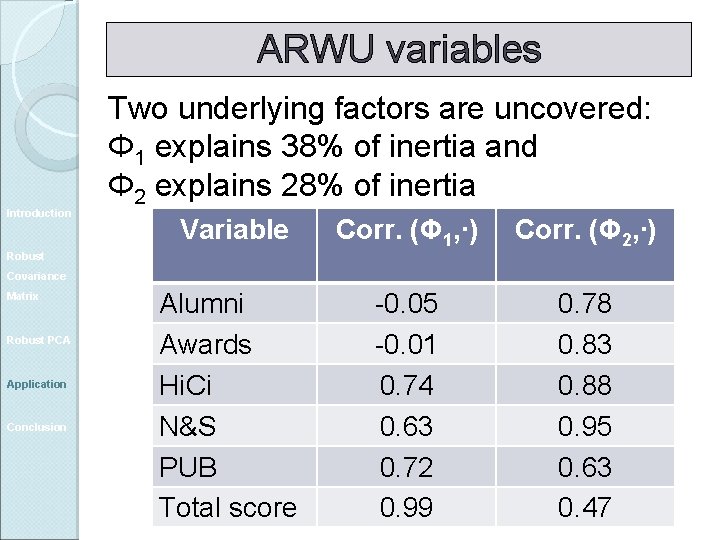

ARWU variables

Two underlying factors are uncovered: Φ1 explains 38% of inertia and Φ2 explains 28% of inertia.

Variable: Alumni, Awards, HiCi, N&S, PUB, Total score

Corr.(Φ1, ∙) and Corr.(Φ2, ∙): -0.05, -0.01, 0.74, 0.63, 0.72, 0.99, 0.78, 0.83, 0.88, 0.95, 0.63, 0.47

Conclusion

Classical PCA can be heavily distorted by the presence of outliers.

A robustified version of PCA can be obtained either by relying on a robust covariance matrix or by removing multivariate outliers identified through a robust identification method.
