Robust Nonparametric Regression by Controlling Sparsity Gonzalo Mateos

Gonzalo Mateos and Georgios B. Giannakis, ECE Department, University of Minnesota

Acknowledgments: NSF grants no. CCF-0830480, 1016605, EECS-0824007, 1002180

May 24, 2011

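Nonparametric regression
- Given $\mathbf{x}$, function estimation allows predicting $y = f(\mathbf{x})$
  - Estimate the unknown $f$ from a training data set $\mathcal{T} := \{(y_i, \mathbf{x}_i)\}_{i=1}^{N}$
- If one trusts the data more than any parametric model
  - Then go nonparametric: $f$ lives in a (possibly $\infty$-dimensional) space $\mathcal{H}$ of "smooth" functions
- Ill-posed problem
  - Workaround: regularization [Tikhonov '77], [Wahba '90]: $\hat f = \arg\min_{f \in \mathcal{H}} \sum_{i=1}^{N} [y_i - f(\mathbf{x}_i)]^2 + \mu \|f\|_{\mathcal{H}}^2$
  - $\mathcal{H}$: RKHS with reproducing kernel $K(\cdot,\cdot)$ and norm $\|\cdot\|_{\mathcal{H}}$
- Our focus
  - Nonparametric regression robust against outliers
  - Robustness by controlling sparsity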
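
As a minimal sketch of the regularized estimator above, the snippet solves it in closed form via the representer theorem (kernel ridge regression). The Gaussian kernel, its width, the value of $\mu$, and the toy target $\sin(2x)$ are illustrative assumptions, not choices taken from the slides.

```python
import numpy as np

def gaussian_kernel(A, B, width=0.5):
    """Assumed Gaussian (RBF) kernel: K[i, j] = exp(-||a_i - b_j||^2 / (2 width^2))."""
    sq = np.sum(A**2, axis=1)[:, None] + np.sum(B**2, axis=1)[None, :] - 2.0 * A @ B.T
    return np.exp(-np.maximum(sq, 0.0) / (2.0 * width**2))

def fit_rkhs_ls(X, y, mu, width=0.5):
    """min_f sum_i (y_i - f(x_i))^2 + mu ||f||_H^2.

    By the representer theorem, f(x) = sum_i beta_i K(x, x_i) with
    beta = (K + mu I)^{-1} y.
    """
    K = gaussian_kernel(X, X, width)
    beta = np.linalg.solve(K + mu * np.eye(len(y)), y)
    return lambda X_new: gaussian_kernel(X_new, X, width) @ beta

rng = np.random.default_rng(0)
X = rng.uniform(0.0, 3.0, size=(60, 1))
y = np.sin(2.0 * X[:, 0]) + 0.05 * rng.standard_normal(60)

f_hat = fit_rkhs_ls(X, y, mu=1e-2)
X_test = np.linspace(0.2, 2.8, 5)[:, None]
max_err = np.max(np.abs(f_hat(X_test) - np.sin(2.0 * X_test[:, 0])))
print(f"max test error: {max_err:.3f}")
```

With clean data this works well; a single gross outlier in `y` would drag `f_hat` toward it, which is what the rest of the deck addresses.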

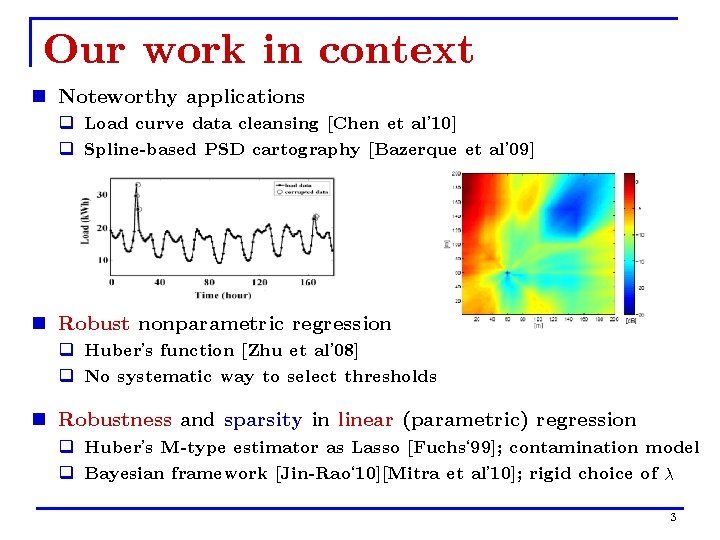

Our work in context
- Noteworthy applications
  - Load curve data cleansing [Chen et al '10]
  - Spline-based PSD cartography [Bazerque et al '09]
- Robust nonparametric regression
  - Huber's function [Zhu et al '08]
  - No systematic way to select thresholds
- Robustness and sparsity in linear (parametric) regression
  - Huber's M-type estimator as Lasso [Fuchs '99]; contamination model
  - Bayesian framework [Jin-Rao '10], [Mitra et al '10]; rigid choice of

![Slide 4: Variational LTS](http://slidetodoc.com/presentation_image_h2/c993aa5e4785a498a3a8613e8837f5a0/image-4.jpg)

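Variational LTS
- Least-trimmed squares (LTS) regression [Rousseeuw '87]
- Variational (V)LTS counterpart:
  (VLTS) $\hat f_{\text{LTS}} := \arg\min_{f \in \mathcal{H}} \sum_{i=1}^{s} r_{[i]}^2(f) + \mu \|f\|_{\mathcal{H}}^2$
  - $r_{[i]}^2(f)$ is the $i$-th order statistic among the squared residuals $r_1^2(f), \ldots, r_N^2(f)$, with $r_i(f) := y_i - f(\mathbf{x}_i)$
  - The $N - s$ largest residuals are discarded
- Q: How should we go about minimizing (VLTS)? (VLTS) is nonconvex; do minimizer(s) exist?
- A: Try all $\binom{N}{s}$ subsamples of size $s$, solve, and pick the best
  - Simple, but intractable beyond small problems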
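
The exhaustive answer above can be sketched for a toy problem. Because $\min_f \min_{|S| = s}$ equals $\min_{|S| = s} \min_f$, VLTS is solved by fitting every size-$s$ subsample and keeping the cheapest fit; for a fixed subsample the inner problem is kernel ridge regression with optimal value $\mu\,\mathbf{y}_S^\top(\mathbf{K}_S + \mu\mathbf{I})^{-1}\mathbf{y}_S$. The Gaussian kernel, $\mu = 0.1$, the data, and the two outlier positions are assumptions for illustration only; here $N = 10$, $s = 8$ gives $\binom{10}{8} = 45$ fits.

```python
import itertools
import numpy as np

def gaussian_kernel(A, B, width=0.5):
    """Assumed Gaussian kernel K[i, j] = exp(-||a_i - b_j||^2 / (2 width^2))."""
    sq = np.sum(A**2, axis=1)[:, None] + np.sum(B**2, axis=1)[None, :] - 2.0 * A @ B.T
    return np.exp(-np.maximum(sq, 0.0) / (2.0 * width**2))

def fit_cost(K, y, mu):
    """Optimal value of min_f sum_i (y_i - f(x_i))^2 + mu ||f||_H^2 on the given points,
    which equals mu * y^T (K + mu I)^{-1} y."""
    return mu * y @ np.linalg.solve(K + mu * np.eye(len(y)), y)

def vlts_brute_force(X, y, s, mu, width=0.5):
    """Exhaustive VLTS: fit every size-s subsample and keep the cheapest one."""
    K = gaussian_kernel(X, X, width)
    best_cost, best_idx = np.inf, None
    for subset in itertools.combinations(range(len(y)), s):
        idx = np.array(subset)
        cost = fit_cost(K[np.ix_(idx, idx)], y[idx], mu)
        if cost < best_cost:
            best_cost, best_idx = cost, idx
    return best_cost, best_idx

rng = np.random.default_rng(0)
X = np.linspace(0.0, 3.0, 10)[:, None]
y = np.sin(2.0 * X[:, 0]) + 0.05 * rng.standard_normal(10)
y[3] += 6.0   # two gross (made-up) outliers
y[7] -= 6.0

cost, kept = vlts_brute_force(X, y, s=8, mu=0.1)
discarded = sorted(set(range(10)) - set(kept.tolist()))
print(discarded)
```

The number of fits grows as $\binom{N}{s}$, so this is only viable for very small $N$.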

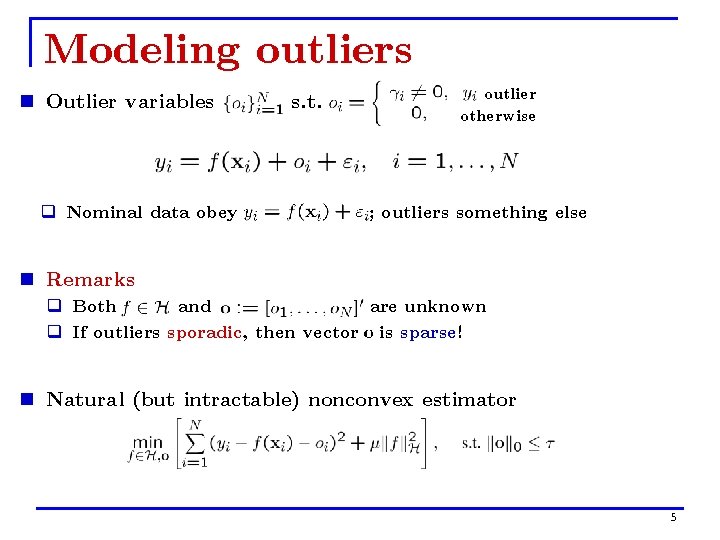

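Modeling outliers
- Outlier variables $o_i$, $i = 1, \ldots, N$, s.t. $o_i \neq 0$ if $(y_i, \mathbf{x}_i)$ is an outlier, and $o_i = 0$ otherwise
- Nominal data obey $y_i = f(\mathbf{x}_i) + \varepsilon_i$; outliers something else
- Regression model: $y_i = f(\mathbf{x}_i) + o_i + \varepsilon_i$, $i = 1, \ldots, N$
- Remarks
  - Both $f$ and $\mathbf{o} := [o_1, \ldots, o_N]^\top$ are unknown
  - If outliers are sporadic, then the vector $\mathbf{o}$ is sparse!
- Natural (but intractable) nonconvex estimator:
  $\min_{f \in \mathcal{H}, \mathbf{o} \in \mathbb{R}^N} \sum_{i=1}^{N} [y_i - f(\mathbf{x}_i) - o_i]^2 + \mu \|f\|_{\mathcal{H}}^2$ s.t. $\|\mathbf{o}\|_0 \le \tau$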

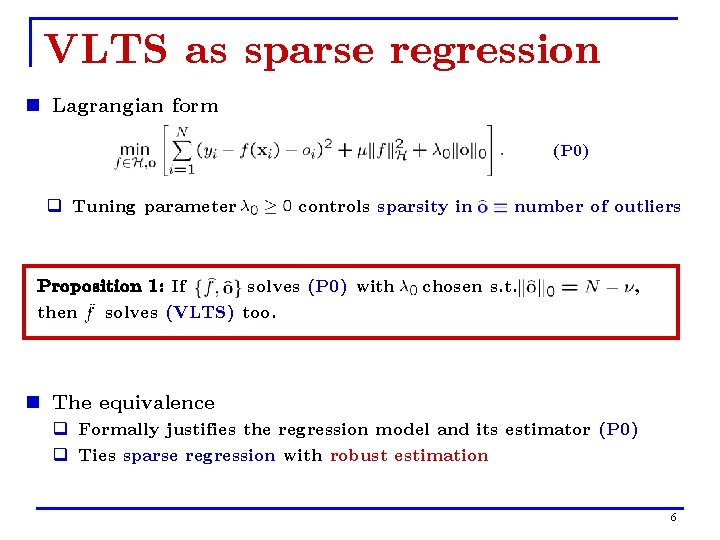

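VLTS as sparse regression
- Lagrangian form:
  (P0) $\min_{f \in \mathcal{H}, \mathbf{o} \in \mathbb{R}^N} \sum_{i=1}^{N} [y_i - f(\mathbf{x}_i) - o_i]^2 + \mu \|f\|_{\mathcal{H}}^2 + \lambda_0 \|\mathbf{o}\|_0$
  - Tuning parameter $\lambda_0$ controls the sparsity in $\hat{\mathbf{o}}$, i.e., the number of outliers
- Proposition 1: If $\{\hat f, \hat{\mathbf{o}}\}$ solves (P0) with $\lambda_0$ chosen s.t. $\|\hat{\mathbf{o}}\|_0 = N - s$, then $\hat f_{\text{LTS}} = \hat f$ solves (VLTS) too.
- The equivalence
  - Formally justifies the regression model and its estimator (P0)
  - Ties sparse regression with robust estimation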
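
To make the role of $\lambda_0$ concrete: for a fixed $f$, the $\mathbf{o}$-part of (P0) decouples per datum. Keeping $o_i = 0$ costs $r_i^2$, while $o_i = r_i$ costs $\lambda_0$, so the minimizer hard-thresholds the residuals at $\sqrt{\lambda_0}$. This sketch only illustrates that $\mathbf{o}$-step for a given $f$ (it does not solve (P0) jointly), and the residual values are made up.

```python
import numpy as np

def o_step_l0(residuals, lam0):
    """Minimize sum_i (r_i - o_i)^2 + lam0 * ||o||_0 over o for fixed residuals r_i = y_i - f(x_i).

    Each coordinate is decoupled: o_i = r_i if r_i^2 > lam0, else o_i = 0 (hard thresholding).
    """
    r = np.asarray(residuals, dtype=float)
    return np.where(r**2 > lam0, r, 0.0)

r = np.array([0.1, -0.3, 2.0, 0.05, -3.0, 0.2])   # made-up residuals
support = {lam0: np.flatnonzero(o_step_l0(r, lam0)).tolist() for lam0 in (0.02, 1.0, 5.0)}
print(support)   # larger lam0 -> fewer data flagged as outliers
```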

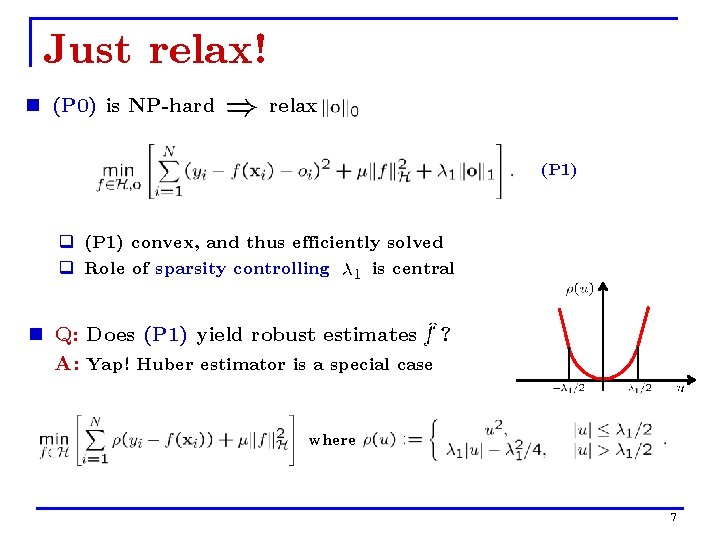

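Just relax!
- (P0) is NP-hard → relax $\|\mathbf{o}\|_0$ to $\|\mathbf{o}\|_1$:
  (P1) $\min_{f \in \mathcal{H}, \mathbf{o} \in \mathbb{R}^N} \sum_{i=1}^{N} [y_i - f(\mathbf{x}_i) - o_i]^2 + \mu \|f\|_{\mathcal{H}}^2 + \lambda_1 \|\mathbf{o}\|_1$
  - (P1) is convex, and thus efficiently solved
  - The role of the sparsity-controlling $\lambda_1$ is central
- Q: Does (P1) yield robust estimates $\hat f$?
- A: Yes! Huber's estimator is a special case: minimizing (P1) over $\mathbf{o}$ leaves $\sum_{i=1}^{N} \rho_c(y_i - f(\mathbf{x}_i)) + \mu \|f\|_{\mathcal{H}}^2$, where $\rho_c(u) = u^2$ for $|u| \le c$, $\rho_c(u) = 2c|u| - c^2$ otherwise, and $c = \lambda_1/2$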
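
A quick numerical check of the Huber connection, assuming only the scalar problem $\min_o (r - o)^2 + \lambda_1 |o|$: its minimizer is the soft-thresholded residual $o = \mathcal{S}(r, \lambda_1/2)$, and its optimal value matches $\rho_c(r)$ with $c = \lambda_1/2$. The value $\lambda_1 = 2$ and the brute-force grid are arbitrary choices for the check.

```python
import numpy as np

def soft_threshold(z, gamma):
    """S(z, gamma) = sign(z) * max(|z| - gamma, 0), entrywise."""
    return np.sign(z) * np.maximum(np.abs(z) - gamma, 0.0)

def huber(u, c):
    """Huber loss rho_c(u): u^2 for |u| <= c, 2c|u| - c^2 otherwise."""
    u = np.abs(u)
    return np.where(u <= c, u**2, 2.0 * c * u - c**2)

def min_over_o(r, lam1):
    """Closed-form value of min_o (r - o)^2 + lam1 |o|, attained at o = S(r, lam1 / 2)."""
    o = soft_threshold(r, lam1 / 2.0)
    return (r - o) ** 2 + lam1 * np.abs(o)

lam1 = 2.0
r = np.linspace(-4.0, 4.0, 81)
closed_form = min_over_o(r, lam1)

# Brute-force minimization over a fine grid of o values, as an independent check.
o_grid = np.linspace(-10.0, 10.0, 200001)
brute = np.array([np.min((ri - o_grid) ** 2 + lam1 * np.abs(o_grid)) for ri in r])

print(np.allclose(closed_form, huber(r, lam1 / 2.0)))
print(np.max(np.abs(brute - closed_form)))
```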

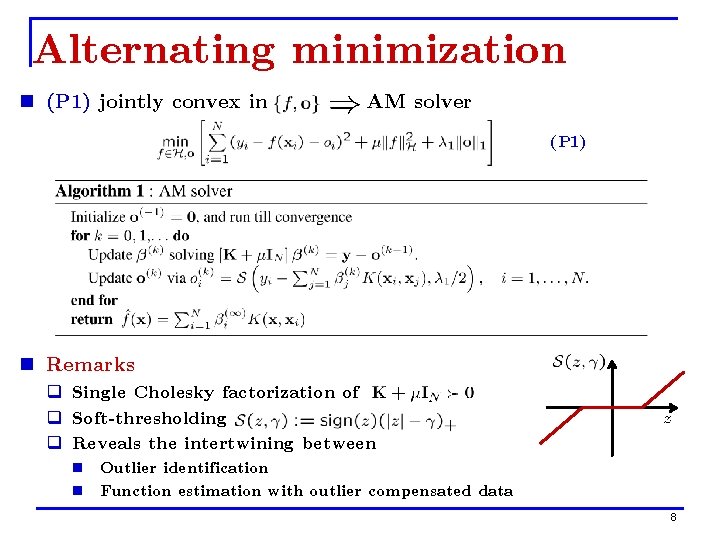

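Alternating minimization
- (P1) is jointly convex in $\{f, \mathbf{o}\}$ → AM solver; for $k = 1, 2, \ldots$:
  - $f^{(k)} = \arg\min_{f \in \mathcal{H}} \sum_{i=1}^{N} [y_i - o_i^{(k-1)} - f(\mathbf{x}_i)]^2 + \mu \|f\|_{\mathcal{H}}^2$, i.e., $f^{(k)}(\mathbf{x}) = \sum_{i=1}^{N} \beta_i^{(k)} K(\mathbf{x}, \mathbf{x}_i)$ with $\boldsymbol{\beta}^{(k)} = (\mathbf{K} + \mu \mathbf{I})^{-1}(\mathbf{y} - \mathbf{o}^{(k-1)})$
  - $o_i^{(k)} = \mathcal{S}(y_i - f^{(k)}(\mathbf{x}_i), \lambda_1/2)$, $i = 1, \ldots, N$
- Remarks
  - Single Cholesky factorization of $\mathbf{K} + \mu \mathbf{I}$, where $[\mathbf{K}]_{ij} := K(\mathbf{x}_i, \mathbf{x}_j)$
  - Soft-thresholding: $\mathcal{S}(z, \gamma) := \text{sign}(z)(|z| - \gamma)_+$
  - Reveals the intertwining between
    - Outlier identification
    - Function estimation with outlier-compensated data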
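
The AM iterations above can be sketched in a few lines of NumPy. This version factors $\mathbf{K} + \mu\mathbf{I}$ once and precomputes the smoother $(\mathbf{K} + \mu\mathbf{I})^{-1}\mathbf{K}$, so each $f$-update is a single matrix-vector product. The Gaussian kernel, $\mu = 0.1$, $\lambda_1 = 2$ (soft threshold 1), the stopping rule, and the synthetic data with three outliers are all assumptions for illustration.

```python
import numpy as np

def gaussian_kernel(A, B, width=0.5):
    """Assumed Gaussian kernel K[i, j] = exp(-||a_i - b_j||^2 / (2 width^2))."""
    sq = np.sum(A**2, axis=1)[:, None] + np.sum(B**2, axis=1)[None, :] - 2.0 * A @ B.T
    return np.exp(-np.maximum(sq, 0.0) / (2.0 * width**2))

def soft_threshold(z, gamma):
    """S(z, gamma) = sign(z) * max(|z| - gamma, 0), entrywise."""
    return np.sign(z) * np.maximum(np.abs(z) - gamma, 0.0)

def am_solver(K, y, mu, lam1, max_iter=1000, tol=1e-10):
    """Alternating minimization for (P1) with f(x) = sum_i beta_i K(x, x_i)."""
    N = len(y)
    # Factor K + mu I once and reuse the factor (np.linalg.solve does not exploit
    # its triangular structure; scipy.linalg.cho_solve would).
    L = np.linalg.cholesky(K + mu * np.eye(N))
    solve = lambda b: np.linalg.solve(L.T, np.linalg.solve(L, b))
    S = solve(K)                                      # smoother (K + mu I)^{-1} K
    o = np.zeros(N)
    for _ in range(max_iter):
        f_vals = S @ (y - o)                          # f-update on outlier-compensated data
        o_new = soft_threshold(y - f_vals, lam1 / 2)  # o-update: soft-threshold residuals
        done = np.max(np.abs(o_new - o)) < tol
        o = o_new
        if done:
            break
    beta = solve(y - o)                               # f(x) = sum_i beta_i K(x, x_i)
    return beta, o

rng = np.random.default_rng(1)
X = np.linspace(0.0, 3.0, 50)[:, None]
y = np.sin(2.0 * X[:, 0]) + 0.1 * rng.standard_normal(50)
outliers = np.array([5, 20, 40])
y[outliers] += np.array([5.0, -5.0, 5.0])

K = gaussian_kernel(X, X, width=0.5)
beta, o_hat = am_solver(K, y, mu=0.1, lam1=2.0)
print(np.flatnonzero(o_hat))
```

The nonzero entries of `o_hat` flag the data treated as outliers, while `beta` defines the fit to the compensated data `y - o_hat`.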

![Slide 9: Lassoing outliers](http://slidetodoc.com/presentation_image_h2/c993aa5e4785a498a3a8613e8837f5a0/image-9.jpg)

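Lassoing outliers
- Alternative to AM
- Proposition 2: Minimizers $\{\hat f, \hat{\mathbf{o}}\}$ of (P1) are fully determined by $\hat{\mathbf{o}}$, as $\hat f(\mathbf{x}) = \sum_{i=1}^{N} \hat\beta_i K(\mathbf{x}, \mathbf{x}_i)$ with $\hat{\boldsymbol\beta} = (\mathbf{K} + \mu\mathbf{I})^{-1}(\mathbf{y} - \hat{\mathbf{o}})$, and $\hat{\mathbf{o}}$ solves the Lasso [Tibshirani '94]
  $\hat{\mathbf{o}} = \arg\min_{\mathbf{o}} \|\mathbf{X}_\mu \mathbf{y} - \mathbf{X}_\mu \mathbf{o}\|_2^2 + \lambda_1 \|\mathbf{o}\|_1$, with $\mathbf{X}_\mu^\top \mathbf{X}_\mu = \mu(\mathbf{K} + \mu\mathbf{I})^{-1}$
- Enables effective methods to select $\lambda_1$
  - Lasso solvers return the entire robustification path (RP)
- Cross-validation (CV) fails with multiple outliers [Hampel '86]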
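
A numerical check of Proposition 2 in the form stated above: for any fixed $\mathbf{o}$, minimizing the (P1) cost over $f$ leaves exactly the Lasso cost. Building $\mathbf{X}_\mu$ from the eigendecomposition $\mathbf{K} = \mathbf{U}\,\text{diag}(\mathbf{d})\,\mathbf{U}^\top$ is one choice among many (any square root of $\mu(\mathbf{K} + \mu\mathbf{I})^{-1}$ works); the kernel, data, $\mu$, and $\lambda_1$ are assumptions.

```python
import numpy as np

def gaussian_kernel(A, B, width=0.5):
    """Assumed Gaussian kernel K[i, j] = exp(-||a_i - b_j||^2 / (2 width^2))."""
    sq = np.sum(A**2, axis=1)[:, None] + np.sum(B**2, axis=1)[None, :] - 2.0 * A @ B.T
    return np.exp(-np.maximum(sq, 0.0) / (2.0 * width**2))

def lasso_design(K, mu):
    """One valid X_mu: X_mu = diag(sqrt(mu / (d + mu))) U^T, so X_mu^T X_mu = mu (K + mu I)^{-1}."""
    d, U = np.linalg.eigh(K)
    return np.sqrt(mu / (d + mu))[:, None] * U.T

def p1_cost_profiled(K, y, o, mu, lam1):
    """(P1) cost at fixed o after minimizing over f (beta = (K + mu I)^{-1} (y - o))."""
    beta = np.linalg.solve(K + mu * np.eye(len(y)), y - o)
    resid = y - o - K @ beta
    return resid @ resid + mu * beta @ K @ beta + lam1 * np.sum(np.abs(o))

def lasso_cost(X_mu, y, o, lam1):
    """Lasso cost ||X_mu y - X_mu o||_2^2 + lam1 ||o||_1."""
    r = X_mu @ (y - o)
    return r @ r + lam1 * np.sum(np.abs(o))

rng = np.random.default_rng(2)
N, mu, lam1 = 30, 0.1, 2.0
X = rng.uniform(0.0, 3.0, size=(N, 1))
y = np.sin(2.0 * X[:, 0]) + 0.1 * rng.standard_normal(N)
K = gaussian_kernel(X, X)
X_mu = lasso_design(K, mu)

gaps = []
for _ in range(3):
    o = rng.standard_normal(N) * (rng.uniform(size=N) < 0.2)   # arbitrary sparse o
    gaps.append(abs(p1_cost_profiled(K, y, o, mu, lam1) - lasso_cost(X_mu, y, o, lam1)))
print(max(gaps))
```

Since the two costs agree for every $\mathbf{o}$, any Lasso solver applied to $(\mathbf{X}_\mu, \mathbf{X}_\mu\mathbf{y})$ yields $\hat{\mathbf{o}}$, and $\hat f$ follows from one linear solve.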

![Slide 10: Robustification paths](http://slidetodoc.com/presentation_image_h2/c993aa5e4785a498a3a8613e8837f5a0/image-10.jpg)

Robustification paths
- LARS returns the whole RP [Efron '03]
  - Same cost as a single LS fit
- Lasso path of solutions is piecewise linear
- Lasso is simple in the scalar case
  - Coordinate descent is fast! [Friedman '07]
  - Exploits warm starts and sparsity
  - Other solvers: SpaRSA [Wright et al '09], SPAMS [Mairal et al '10]
- Leverage these solvers over a 2-D grid
  - Consider a grid of $\mu$ values; for each $\mu$, consider a grid of $\lambda_1$ values

Figure: robustification path (y-axis: coefficients).
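
A minimal coordinate-descent sketch of the robustification path for one value of $\mu$, warm-starting each solve at the previous $\lambda_1$. It is a plain textbook Lasso coordinate descent, not LARS, SpaRSA, or SPAMS. Above $\lambda_{\max} = 2\|\mathbf{X}_\mu^\top \mathbf{X}_\mu \mathbf{y}\|_\infty$ all $\hat o_i = 0$; the kernel, data, outlier positions, and the 20-point grid are assumptions.

```python
import numpy as np

def gaussian_kernel(A, B, width=0.5):
    """Assumed Gaussian kernel K[i, j] = exp(-||a_i - b_j||^2 / (2 width^2))."""
    sq = np.sum(A**2, axis=1)[:, None] + np.sum(B**2, axis=1)[None, :] - 2.0 * A @ B.T
    return np.exp(-np.maximum(sq, 0.0) / (2.0 * width**2))

def soft_threshold(z, gamma):
    return np.sign(z) * np.maximum(np.abs(z) - gamma, 0.0)

def lasso_cd(A, b, lam, o0, max_sweeps=500, tol=1e-9):
    """Coordinate descent for min_o ||b - A o||_2^2 + lam ||o||_1, warm-started at o0."""
    o = o0.copy()
    r = b - A @ o
    col_sq = np.sum(A**2, axis=0)
    for _ in range(max_sweeps):
        max_change = 0.0
        for j in range(A.shape[1]):
            rho = A[:, j] @ r + col_sq[j] * o[j]      # correlation with partial residual
            o_j = soft_threshold(rho, lam / 2.0) / col_sq[j]
            if o_j != o[j]:
                r += A[:, j] * (o[j] - o_j)           # keep r = b - A o up to date
                max_change = max(max_change, abs(o_j - o[j]))
                o[j] = o_j
        if max_change < tol:
            break
    return o

def robustification_path(A, b, lams):
    """Solve the Lasso on a decreasing lambda grid, warm-starting each solve."""
    o = np.zeros(A.shape[1])
    path = []
    for lam in lams:
        o = lasso_cd(A, b, lam, o)
        path.append(o.copy())
    return np.array(path)

rng = np.random.default_rng(1)
N, mu = 40, 0.1
X = np.linspace(0.0, 3.0, N)[:, None]
y = np.sin(2.0 * X[:, 0]) + 0.1 * rng.standard_normal(N)
y[[5, 18, 31]] += np.array([5.0, -5.0, 5.0])           # made-up outliers

K = gaussian_kernel(X, X)
d, U = np.linalg.eigh(K)
A = np.sqrt(mu / (d + mu))[:, None] * U.T               # X_mu^T X_mu = mu (K + mu I)^{-1}
b = A @ y

lam_max = 2.0 * np.max(np.abs(A.T @ b))
lams = lam_max * np.logspace(0, -1.5, 20)
path = robustification_path(A, b, lams)
nnz = np.count_nonzero(path, axis=1)
first = np.argmax(nnz >= 3)
print(nnz)
print(np.flatnonzero(path[first]))
```

Reading `nnz` along the grid shows how many data are flagged as outliers for each $\lambda_1$, which is exactly what the selection rules on the next slide exploit.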

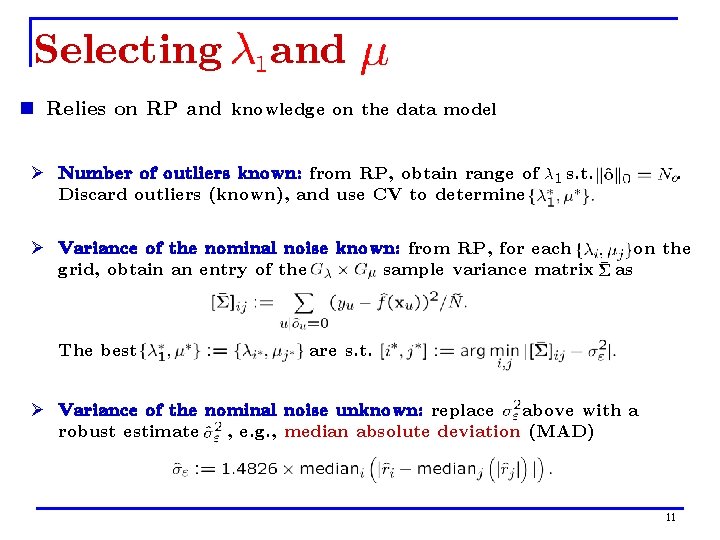

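Selecting $\lambda_1$ and $\mu$
- Relies on the RP and on knowledge about the data model
- Number of outliers known: from the RP, obtain the range of $\lambda_1$ s.t. $\|\hat{\mathbf{o}}\|_0$ equals the number of outliers
  - Discard the (known) outliers, and use CV to determine $\mu$
- Variance of the nominal noise $\sigma^2$ known: from the RP, for each $(\mu, \lambda_1)$ on the grid, obtain an entry of the sample variance matrix $\hat{\boldsymbol\Sigma}$ as the sample variance $\hat\sigma^2$ of the residuals $y_i - \hat f(\mathbf{x}_i)$ over the data with $\hat o_i = 0$
  - The best $(\mu, \lambda_1)$ are s.t. $\hat\sigma^2$ is closest to $\sigma^2$
- Variance of the nominal noise unknown: replace $\sigma^2$ above with a robust estimate, e.g., one based on the median absolute deviation (MAD)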
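
The variance-based rule can be sketched as below, assuming the inlier sample variance is $\frac{1}{|\{i: \hat o_i = 0\}|}\sum_{i: \hat o_i = 0}(y_i - \hat f(\mathbf{x}_i))^2$ (one natural reading of the slide, not necessarily the authors' exact formula). The `fits` dictionary holds made-up numbers standing in for grid points read off the RPs; when $\sigma^2$ is unknown, `mad_sigma(residuals) ** 2` can replace it.

```python
import numpy as np

def inlier_variance(y, f_vals, o_hat):
    """Sample variance of residuals y_i - f(x_i) over data flagged as inliers (o_i == 0)."""
    inliers = o_hat == 0
    r = y[inliers] - f_vals[inliers]
    return np.mean(r**2)

def mad_sigma(r):
    """Robust scale estimate 1.4826 * median(|r - median(r)|), consistent for Gaussian noise."""
    r = np.asarray(r, dtype=float)
    return 1.4826 * np.median(np.abs(r - np.median(r)))

def select_mu_lambda(fits, y, sigma2):
    """Pick the (mu, lam1) grid point whose inlier variance is closest to sigma2.

    fits: dict mapping (mu, lam1) -> (f_vals, o_hat).
    """
    return min(fits, key=lambda key: abs(inlier_variance(y, *fits[key]) - sigma2))

y = np.array([0.0, 1.0, 2.0, 10.0])
fits = {   # made-up fits: (mu, lam1) -> (f_vals, o_hat)
    (0.1, 1.0): (y - np.array([0.1, -0.1, 0.1, 8.0]), np.array([0.0, 0.0, 0.0, 7.5])),
    (0.1, 5.0): (y - np.array([0.5, -0.5, 0.5, 8.0]), np.array([0.0, 0.0, 0.0, 5.5])),
    (1.0, 1.0): (y - np.array([0.1, -0.1, 0.1, 5.0]), np.zeros(4)),
}
best_known = select_mu_lambda(fits, y, sigma2=0.2)
sigma_hat = mad_sigma([1.0, 2.0, 3.0, 4.0, 100.0])
print(best_known, sigma_hat)
```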

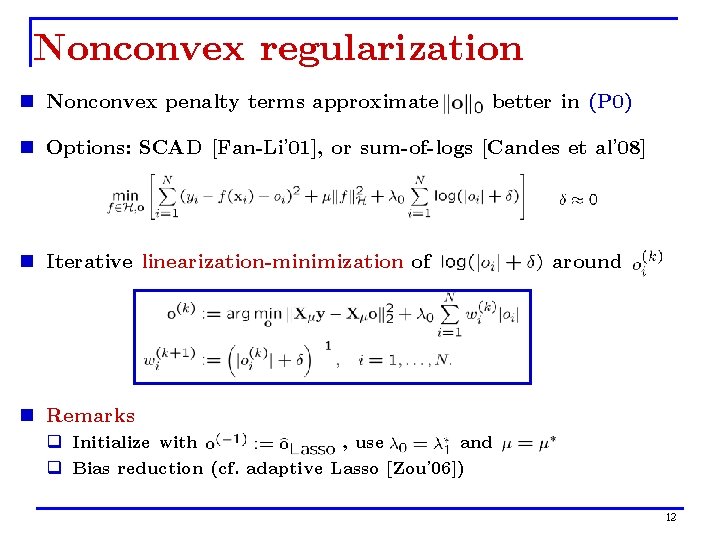

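Nonconvex regularization
- Nonconvex penalty terms approximate $\|\mathbf{o}\|_0$ in (P0) better than $\|\mathbf{o}\|_1$
- Options: SCAD [Fan-Li '01], or sum-of-logs $\sum_{i=1}^{N} \log(|o_i| + \delta)$, $\delta > 0$ [Candes et al '08]
- Iterative linearization-minimization of $\sum_{i} \log(|o_i| + \delta)$ around $\mathbf{o}^{(k-1)}$: a weighted-$\ell_1$ version of (P1) with weights $w_i^{(k)} = (|o_i^{(k-1)}| + \delta)^{-1}$
- Remarks
  - Initialize with the (P1) solution $\hat{\mathbf{o}}$, and use the $\mu$ and $\lambda_1$ values selected for (P1)
  - Bias reduction (cf. adaptive Lasso [Zou '06])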
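
A sketch of the reweighted refinement, assuming the linearized penalty $\lambda_0\sum_i w_i |o_i|$ with $\lambda_0$ set equal to the (P1) value of $\lambda_1$, $\delta = 0.1$, and three reweighting passes (all hypothetical choices). Each pass reuses AM with per-datum soft thresholds $\lambda_0 w_i / 2$; large outliers get small weights, so their shrinkage (the bias in $\hat{\mathbf{o}}$) drops. Kernel and data are assumptions.

```python
import numpy as np

def gaussian_kernel(A, B, width=0.5):
    """Assumed Gaussian kernel K[i, j] = exp(-||a_i - b_j||^2 / (2 width^2))."""
    sq = np.sum(A**2, axis=1)[:, None] + np.sum(B**2, axis=1)[None, :] - 2.0 * A @ B.T
    return np.exp(-np.maximum(sq, 0.0) / (2.0 * width**2))

def soft_threshold(z, gamma):
    return np.sign(z) * np.maximum(np.abs(z) - gamma, 0.0)

def weighted_am(K, y, mu, thresholds, o0, max_iter=2000, tol=1e-10):
    """AM for sum_i (y_i - f(x_i) - o_i)^2 + mu ||f||^2 + sum_i lam_i |o_i|, thresholds = lam_i / 2."""
    S = np.linalg.solve(K + mu * np.eye(len(y)), K)   # smoother (K + mu I)^{-1} K
    o = o0.copy()
    for _ in range(max_iter):
        o_new = soft_threshold(y - S @ (y - o), thresholds)
        done = np.max(np.abs(o_new - o)) < tol
        o = o_new
        if done:
            break
    return o

rng = np.random.default_rng(1)
N = 50
X = np.linspace(0.0, 3.0, N)[:, None]
y = np.sin(2.0 * X[:, 0]) + 0.1 * rng.standard_normal(N)
o_true = np.zeros(N)
o_true[[5, 20, 40]] = [5.0, -5.0, 5.0]                 # made-up outliers
y += o_true

mu, lam, delta = 0.1, 2.0, 0.1
K = gaussian_kernel(X, X)

o_p1 = weighted_am(K, y, mu, np.full(N, lam / 2), np.zeros(N))   # (P1): plain l1
o_ref = o_p1.copy()
for _ in range(3):   # linearize sum_i log(|o_i| + delta) around the current iterate
    w = 1.0 / (np.abs(o_ref) + delta)
    o_ref = weighted_am(K, y, mu, lam * w / 2, o_ref)

idx = np.flatnonzero(o_true)
err_p1 = np.abs(o_p1[idx] - o_true[idx]).mean()
err_ref = np.abs(o_ref[idx] - o_true[idx]).mean()
print(err_p1, err_ref)
```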

![Slide 13: Robust thin-plate splines](http://slidetodoc.com/presentation_image_h2/c993aa5e4785a498a3a8613e8837f5a0/image-13.jpg)

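Robust thin-plate splines
- Specialize to thin-plate splines [Duchon '77], [Wahba '80]
- Smoothing penalty $J[f] := \int_{\mathbb{R}^2} \left[ \left(\frac{\partial^2 f}{\partial x_1^2}\right)^2 + 2\left(\frac{\partial^2 f}{\partial x_1 \partial x_2}\right)^2 + \left(\frac{\partial^2 f}{\partial x_2^2}\right)^2 \right] d\mathbf{x}$ is only a seminorm in $\mathcal{H}$
- Solution:
  - Radial basis function $K(\rho) := \rho^2 \log \rho$: $f(\mathbf{x}) = \sum_{i=1}^{N} \beta_i K(\|\mathbf{x} - \mathbf{x}_i\|_2) + \alpha_0 + \boldsymbol\alpha_1^\top \mathbf{x}$
  - Augment with a member of the nullspace of $J$ (the affine term $\alpha_0 + \boldsymbol\alpha_1^\top \mathbf{x}$)
  - Given $\mathbf{o}$, the unknowns $\{\boldsymbol\beta, \alpha_0, \boldsymbol\alpha_1\}$ are found in closed form
- Still, Proposition 2 holds for an appropriate $\mathbf{X}_\mu$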
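
For fixed $\mathbf{o}$, the closed-form step can be sketched with the standard smoothing thin-plate spline system $(\mathbf{K} + \mu\mathbf{I})\boldsymbol\beta + \mathbf{T}\boldsymbol\alpha = \mathbf{y} - \mathbf{o}$, $\mathbf{T}^\top\boldsymbol\beta = \mathbf{0}$, where $\mathbf{T} = [\mathbf{1}, \mathbf{x}_i^\top]$ spans the affine nullspace of $J$. Here $\mu$ absorbs the constant factor usually attached to $\rho^2\log\rho$; the points and the affine test surface are assumptions. An affine surface should be reproduced exactly with $\boldsymbol\beta = \mathbf{0}$.

```python
import numpy as np

def tps_kernel(rho):
    """Thin-plate radial basis K(rho) = rho^2 log(rho), with K(0) = 0."""
    safe = np.where(rho > 0, rho, 1.0)
    return rho**2 * np.log(safe)

def fit_tps(X, z, mu):
    """Smoothing thin-plate spline on outlier-compensated data z = y - o (X is N x 2).

    Solves (K + mu I) beta + T alpha = z and T^T beta = 0, with T = [1, x_i^T].
    """
    N = X.shape[0]
    rho = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=2)
    K = tps_kernel(rho)
    T = np.hstack([np.ones((N, 1)), X])
    A = np.block([[K + mu * np.eye(N), T], [T.T, np.zeros((3, 3))]])
    sol = np.linalg.solve(A, np.concatenate([z, np.zeros(3)]))
    return sol[:N], sol[N:]   # beta, alpha = (alpha_0, alpha_1)

rng = np.random.default_rng(3)
X = rng.uniform(0.0, 1.0, size=(20, 2))
z = 1.0 + 2.0 * X[:, 0] - 3.0 * X[:, 1]   # an affine surface lies in the nullspace of J
beta, alpha = fit_tps(X, z, mu=0.1)
print(np.round(alpha, 6))
```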

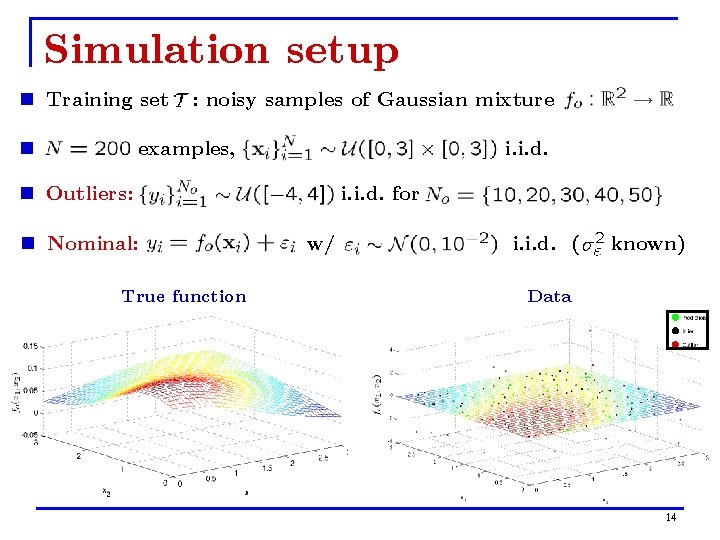

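Simulation setup
- Training set $\mathcal{T}_{\text{train}}$: $N$ noisy samples of a Gaussian mixture
  - Examples $\mathbf{x}_i$ drawn i.i.d.
- Outliers: $y_i$ drawn i.i.d.
- Nominal: $y_i = f(\mathbf{x}_i) + \varepsilon_i$, w/ $\varepsilon_i$ i.i.d. with variance $\sigma^2$ ($\sigma^2$ known)

Figure panels: True function; Data.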

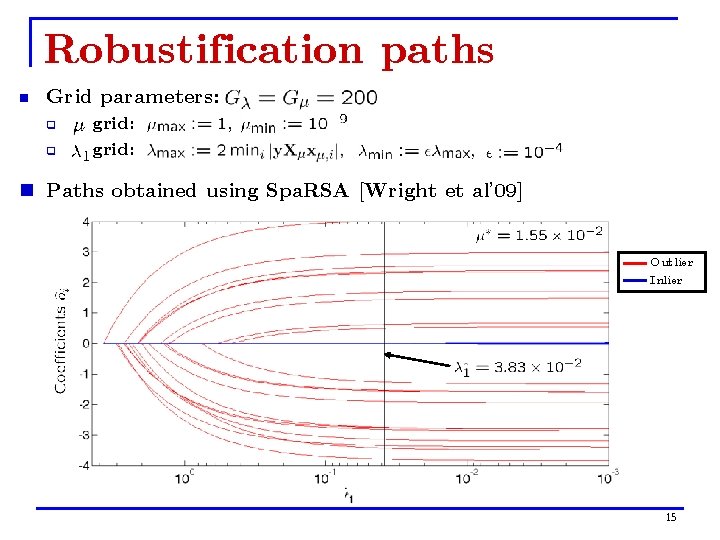

Robustification paths
- Grid parameters: a grid of $\mu$ values and a grid of $\lambda_1$ values
- Paths obtained using SpaRSA [Wright et al '09]

Figure legend: Outlier; Inlier.

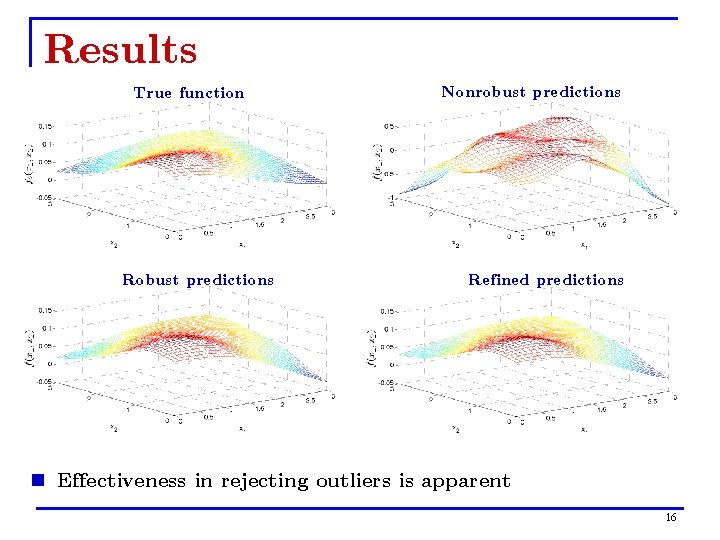

Results

Figure panels: True function; Robust predictions; Nonrobust predictions; Refined predictions.
- Effectiveness in rejecting outliers is apparent

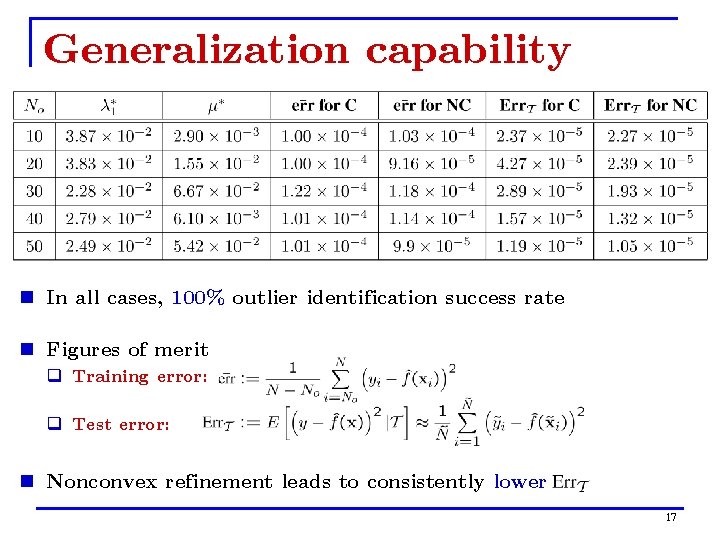

Generalization capability
- In all cases, 100% outlier identification success rate
- Figures of merit
  - Training error
  - Test error
- Nonconvex refinement leads to consistently lower test error

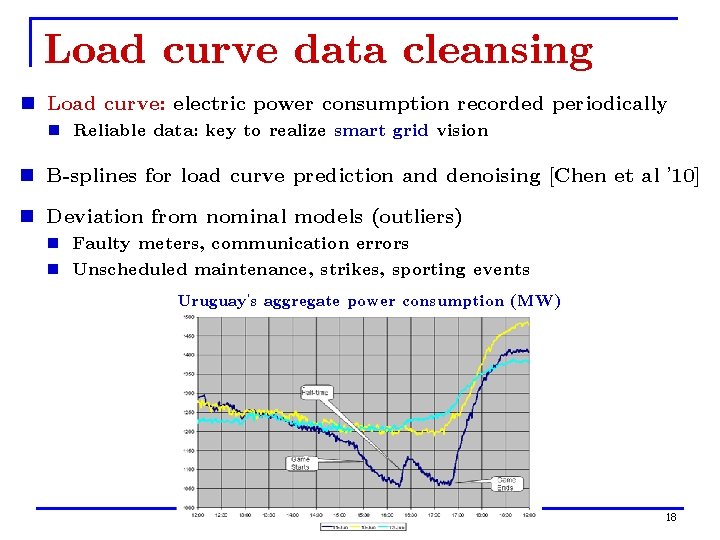

Load curve data cleansing
- Load curve: electric power consumption recorded periodically
- Reliable data: key to realizing the smart grid vision
- B-splines for load curve prediction and denoising [Chen et al '10]
- Deviations from nominal models (outliers) arise from
  - Faulty meters, communication errors
  - Unscheduled maintenance, strikes, sporting events

Figure: Uruguay's aggregate power consumption (MW).

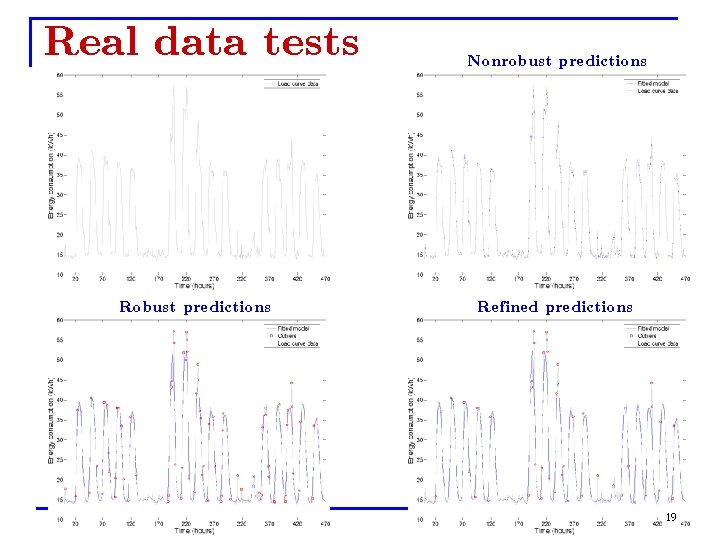

Real data tests

Figure panels: Robust predictions; Nonrobust predictions; Refined predictions.

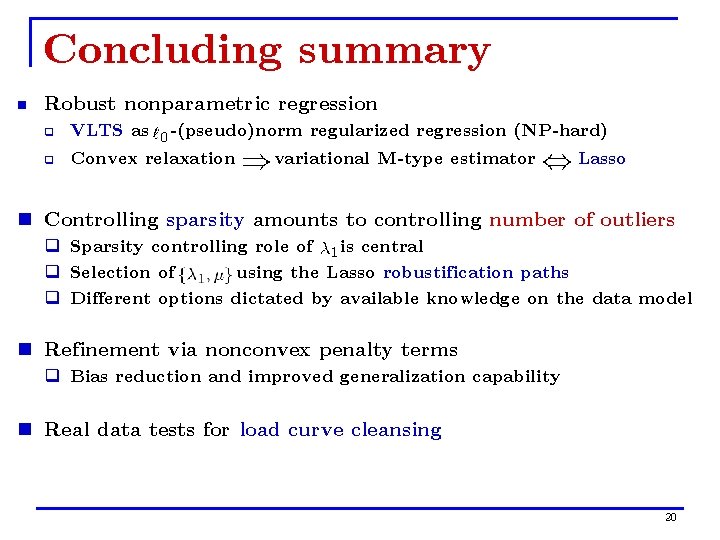

Concluding summary
- Robust nonparametric regression
  - VLTS as $\ell_0$-(pseudo)norm regularized regression (NP-hard)
  - Convex relaxation → variational M-type estimator → Lasso
- Controlling sparsity amounts to controlling the number of outliers
  - The sparsity-controlling role of $\lambda_1$ is central
  - Selection of $\lambda_1$ using the Lasso robustification paths
  - Different options dictated by the available knowledge on the data model
- Refinement via nonconvex penalty terms
  - Bias reduction and improved generalization capability
- Real data tests for load curve cleansing
