Robust inference of biological Bayesian networks Masoud Rostami

Masoud Rostami and Kartik Mohanram
Department of Electrical and Computer Engineering, Rice University, Houston, TX
Laboratory for Sub-100 nm Design

Outline
- Regulatory networks
- Inference techniques, Bayesian networks
- Quantization techniques
- Improving quantization by bootstrapping
- Results on SOS network
- Conclusions

Gene regulatory networks
- Cells are controlled by gene regulatory networks
- Microarrays show gene expression
  - Relative expression of genes over a period of time
- Reverse engineering to find the underlying network
  - May be used for drug discovery
- Pros
  - Large amount of data in public repositories
- Cons
  - Data-point scarcity
  - High levels of noise

Network inference
- Several inference techniques, each with a different model
  - Bayesian networks
  - Dynamic Bayesian networks
  - Neural networks
  - Clustering
  - Boolean networks
- Open questions: accuracy, stability, and overhead
- No consensus on the best technique
- Bayesian networks have a solid mathematical foundation

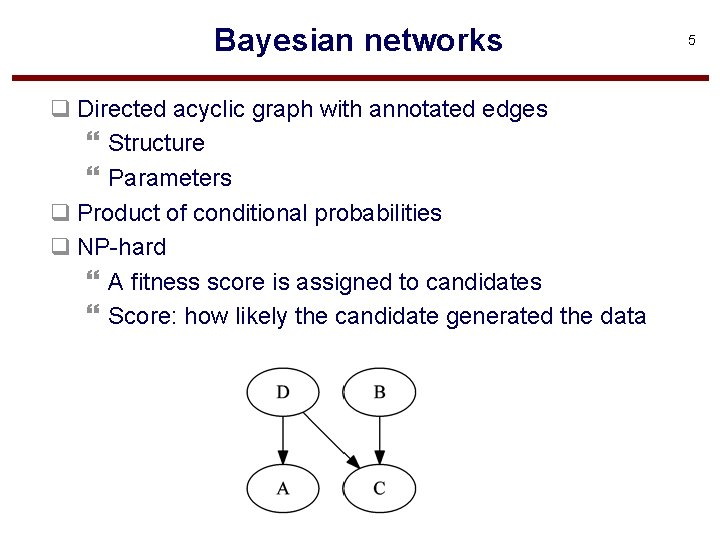

Bayesian networks
- Directed acyclic graph with annotated edges
  - Structure
  - Parameters
- Joint distribution is a product of conditional probabilities
- Finding the best-scoring structure is NP-hard
  - A fitness score is assigned to candidates
  - Score: how likely the candidate generated the data
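The "product of conditional probabilities" can be written out as the standard Bayesian network factorization. The notation is an assumption made here, not taken from the slides: $X_1, \dots, X_n$ are the genes and $\mathrm{Pa}(X_i)$ is the set of parents of $X_i$ in the directed acyclic graph.

```latex
P(X_1, \dots, X_n) \;=\; \prod_{i=1}^{n} P\bigl(X_i \mid \mathrm{Pa}(X_i)\bigr)
```

The structure determines each parent set $\mathrm{Pa}(X_i)$, and the parameters are the conditional probability tables on the right-hand side.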

Bayesian networks
- Heuristics to find the best score
  - Simulated annealing
  - Hill-climbing
  - Evolutionary algorithms
- No notion of time steps
- Needs discrete data
  - At most ternary
  - Due to scarce data
- How to quantize data?

Quantization
- Should the data be smoothed first (to remove spikes)?
- Mean?
- Median? (quantile quantization)
  - More robust to outliers
- (max+min)/2? (interval quantization)
- …
- Can we extract as much information as possible?
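The threshold choices listed above can be compared on a toy series. This is a minimal sketch for binary quantization only; the function names `threshold` and `quantize` and the example values are hypothetical, not taken from the slides.

```python
from statistics import mean, median


def threshold(values, method="median"):
    """Return a binarization threshold for one gene's expression series."""
    if method == "mean":
        return mean(values)
    if method == "median":    # quantile quantization
        return median(values)
    if method == "interval":  # interval quantization: (max + min) / 2
        return (max(values) + min(values)) / 2
    raise ValueError(f"unknown method: {method}")


def quantize(values, method="median"):
    """Map each sample to 1 if it lies above the threshold, else 0."""
    t = threshold(values, method)
    return [1 if v > t else 0 for v in values]


# Hypothetical series with a single outlier spike at the end.
expression = [0.1, 0.2, 0.3, 0.4, 5.0]
for m in ("mean", "median", "interval"):
    print(m, quantize(expression, m))
```

In this toy series the spike pulls the mean and interval thresholds upward, so only the spike itself is labeled 1, while the median threshold still splits the remaining samples.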

![An example: method of quantization impacts the inferred network (GDS1303, GEO)](http://slidetodoc.com/presentation_image/92e22128c085ddbb4011d753324f6872/image-8.jpg)

An example
- Method of quantization impacts the inferred network

[1] GDS1303[ACCN], GEO database

Time-series
- Each sample is dependent on its neighbors
- Gene expression samples are dependent
  - Data does have some structure (it’s a waveform)
- Common quantization removes this information

Better inference
- Artificial ways to increase the number of samples
- Represent each sample n times
- Each copy takes ‘0’ or ‘1’ according to the probability
- Example: 10 copies, p(‘1’) = 0.20
  - 2 times ‘1’, 8 times ‘0’
- Adds computational overhead
- How to quantify the probability?
  - Use correlation information
  - Noise model?
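The replication step can be sketched as follows, assuming the probability of ‘1’ is already known for each sample. The function name `replicate` and the choice to round to the nearest whole copy are assumptions for illustration.

```python
def replicate(probabilities, n=10):
    """Expand each sample into n binary copies.

    For a sample with probability p of being '1', round(p * n) copies
    are 1 and the rest are 0. Python rounds exact .5 ties to the nearest
    even integer, so p = 0.25 with n = 10 yields 2 ones.
    """
    expanded = []
    for p in probabilities:
        ones = round(p * n)
        expanded.extend([1] * ones + [0] * (n - ones))
    return expanded


# The slide's example: 10 copies with p('1') = 0.20 gives two 1s, eight 0s.
print(replicate([0.20], n=10))  # [1, 1, 0, 0, 0, 0, 0, 0, 0, 0]
```

The expanded series is n times longer than the original, which is where the computational overhead comes from.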

Time-series bootstrapping
- Bootstrapping generates artificial data from the original
- Artificial data is used to assess the accuracy
- Time-series bootstrapping preserves data structure

[1] B. Efron and R. Tibshirani, “An Introduction to the Bootstrap”, chapter 8
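One common way to bootstrap a time series while keeping neighboring samples together is the moving-block bootstrap. The slides do not say which scheme was used, so the sketch below is an assumption, and `moving_block_bootstrap` and `block_len` are hypothetical names.

```python
import random


def moving_block_bootstrap(series, block_len, rng=None):
    """Resample a series by concatenating randomly chosen contiguous blocks.

    Samples inside a block keep their original order, so short-range
    correlation between neighbors survives the resampling.
    """
    rng = rng or random.Random()
    n = len(series)
    if not 1 <= block_len <= n:
        raise ValueError("block_len must be between 1 and len(series)")
    resampled = []
    while len(resampled) < n:
        start = rng.randrange(n - block_len + 1)  # random block start
        resampled.extend(series[start:start + block_len])
    return resampled[:n]  # trim the last block to the original length


# Hypothetical series of 50 time samples, resampled in blocks of 5.
series = list(range(50))
print(moving_block_bootstrap(series, block_len=5, rng=random.Random(0)))
```

With `block_len=1` this reduces to the ordinary bootstrap, which treats samples as independent and discards the time structure.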

Probability of ‘0’ and ‘1’
- Find the threshold for each bootstrapped sample
- This gives a distribution of the quantization threshold
- Go back and quantize the original data with each threshold in the new set
- The consensus gives the probability
- Benefits:
  - Correlation information between samples preserved
  - No need for a noise model
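The consensus step can be sketched as below, assuming a set of thresholds has already been collected from the bootstrapped samples. The name `probability_of_one` and the threshold values are made up for illustration.

```python
def probability_of_one(series, thresholds):
    """For each sample, return the fraction of thresholds it exceeds.

    Each threshold gives one binary vote (1 if the sample is above it);
    the average vote is the sample's probability of being '1'.
    """
    return [sum(v > t for t in thresholds) / len(thresholds) for v in series]


# Hypothetical expression values and bootstrapped thresholds.
series = [0.1, 0.5, 0.9]
thresholds = [0.3, 0.4, 0.6, 0.7, 0.45]
print(probability_of_one(series, thresholds))  # [0.0, 0.6, 1.0]
```

Samples far from every threshold get a probability of 0 or 1, while samples near the bulk of the threshold distribution get an intermediate value.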

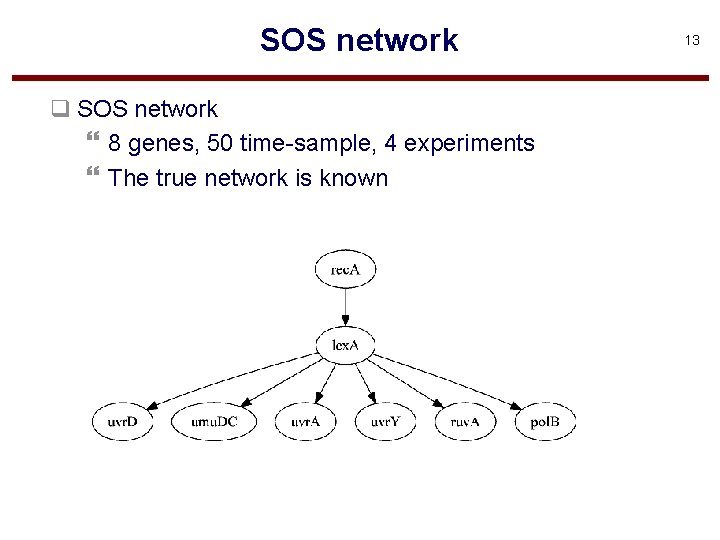

SOS network
- SOS network
  - 8 genes, 50 time samples, 4 experiments
  - The true network is known

Figure: gene expression of polB, experiment 1, SOS network (horizontal axis: time)

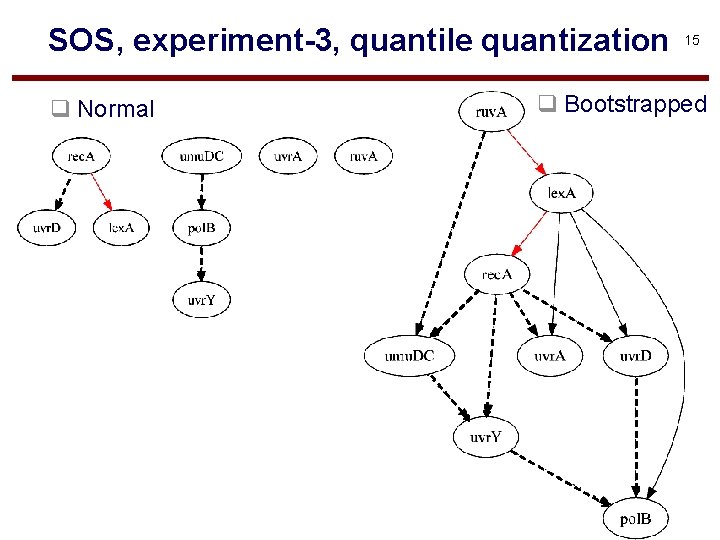

SOS, experiment 3, quantile quantization
- Normal
- Bootstrapped

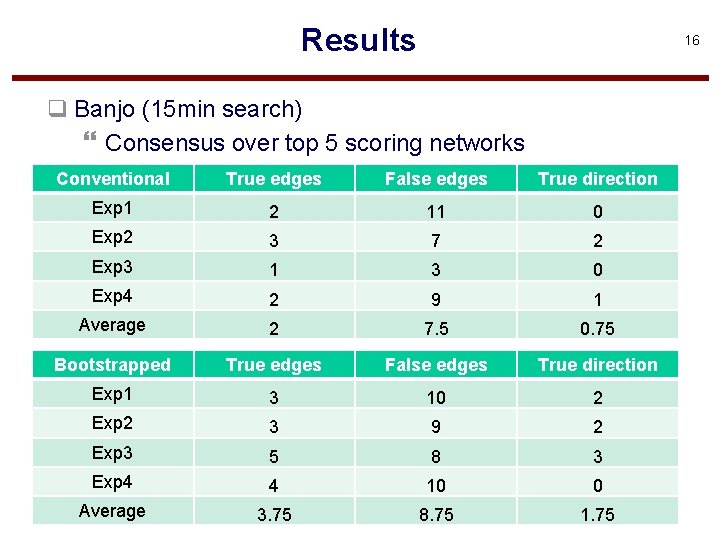

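Results
- Banjo (15 min search)
  - Consensus over top 5 scoring networks

Conventional

| Experiment | True edges | False edges | True direction |
|------------|------------|-------------|----------------|
| Exp 1      | 2          | 11          | 0              |
| Exp 2      | 3          | 7           | 2              |
| Exp 3      | 1          | 3           | 0              |
| Exp 4      | 2          | 9           | 1              |
| Average    | 2          | 7.5         | 0.75           |

Bootstrapped

| Experiment | True edges | False edges | True direction |
|------------|------------|-------------|----------------|
| Exp 1      | 3          | 10          | 2              |
| Exp 2      | 3          | 9           | 2              |
| Exp 3      | 5          | 8           | 3              |
| Exp 4      | 4          | 10          | 0              |
| Average    | 3.75       | 8.75        | 1.75           |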

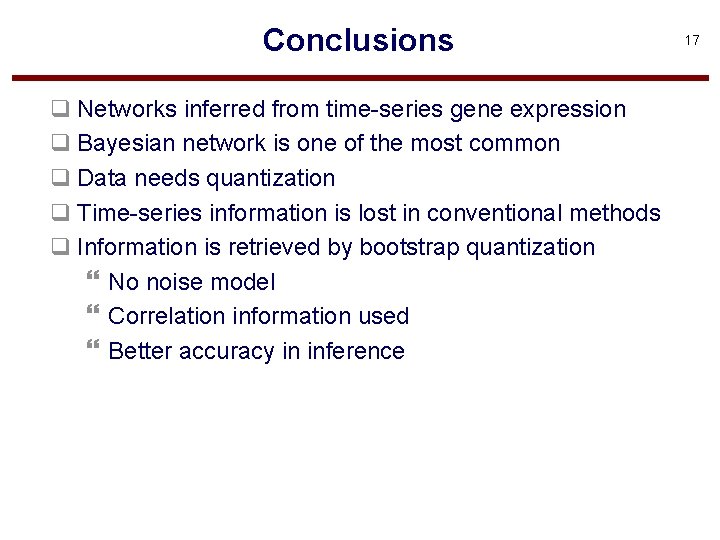

Conclusions
- Networks are inferred from time-series gene expression data
- Bayesian networks are one of the most common models
- Data needs quantization
- Time-series information is lost in conventional quantization methods
- Information is retrieved by bootstrap quantization
  - No noise model
  - Correlation information used
  - Better accuracy in inference
