Revisiting Kirchhoff Migration on GPUs (2015 Rice Oil & Gas HPC Workshop)

- Slides: 26

Revisiting Kirchhoff Migration on GPUs

2015 Rice Oil & Gas HPC Workshop

- Rajesh Gandham, Rice University & Hess Corporation (intern)
- Thomas Cullison, Hess Corporation
- Scott Morton, Hess Corporation

Seismic Experiment (image source: http://www.chevron.pl/images/timeline/rs.Img.Seismic.Imaging1.jpg)

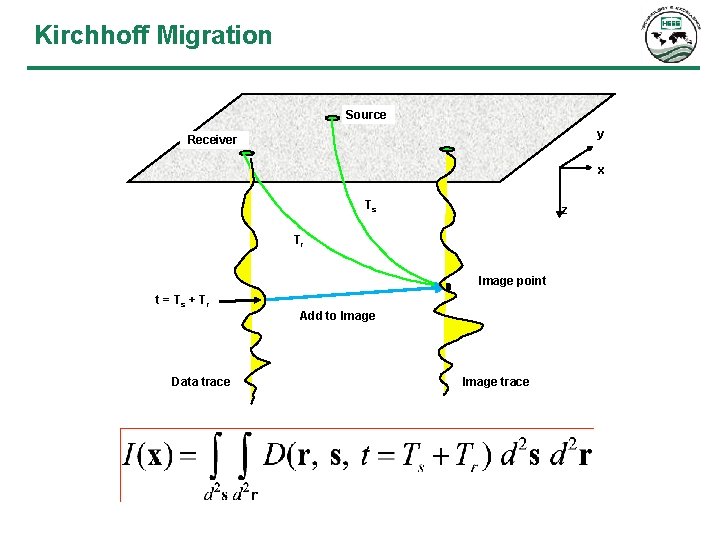

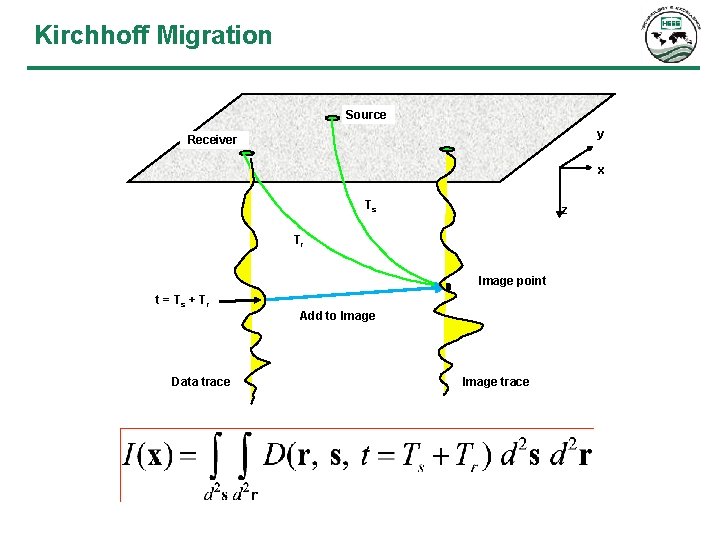

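Kirchhoff Migration

- Diagram labels: source, receiver, image point; axes x, y, z
- Travel times: $T_s$ (source to image point) and $T_r$ (image point to receiver)
- Total travel time: $t = T_s + T_r$
- The data trace value at time $t$ is added to the image trace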
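The summation on this slide can be sketched in a few lines of Python. This is a minimal illustration, not the production kernel: the function names are hypothetical, and travel times come from a straight-ray, constant-velocity formula, whereas the slides describe pre-computed coarse travel-time tables.

```python
import math

def travel_time(surface_xy, image_xyz, velocity):
    """Straight-ray travel time in a constant-velocity medium (assumption)."""
    sx, sy = surface_xy
    ix, iy, iz = image_xyz
    return math.sqrt((ix - sx) ** 2 + (iy - sy) ** 2 + iz ** 2) / velocity

def migrate_trace(image, image_points, trace, dt, source_xy, receiver_xy, velocity):
    """Add one data trace into the image using t = Ts + Tr for each image point."""
    for i, point in enumerate(image_points):
        ts = travel_time(source_xy, point, velocity)    # source -> image point
        tr = travel_time(receiver_xy, point, velocity)  # image point -> receiver
        sample = int(round((ts + tr) / dt))             # nearest data sample
        if 0 <= sample < len(trace):
            image[i] += trace[sample]
    return image
```

Each (trace, image point) pair in the loop is one "migration contribution", the unit used in the performance plots later in the deck.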

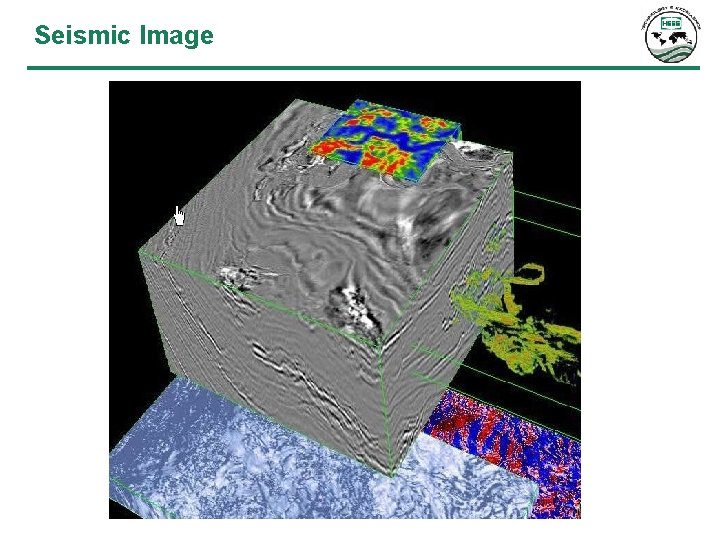

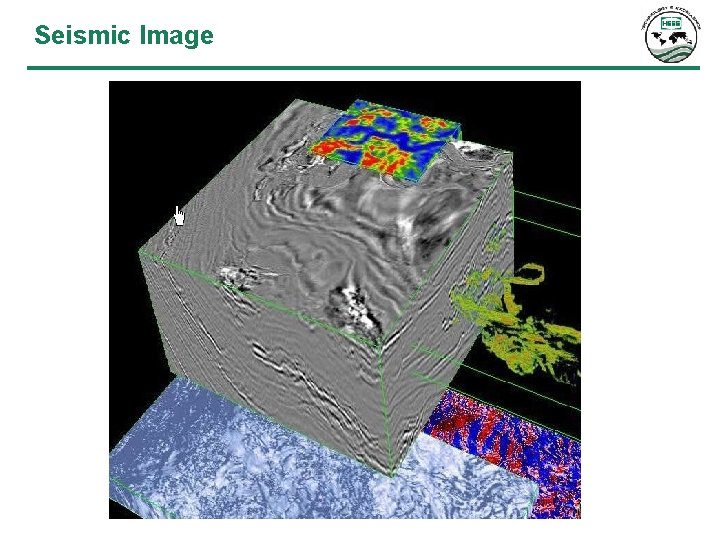

Seismic Image

Project Goals

- Hardware portability
- General image gathers
- Improve migration performance

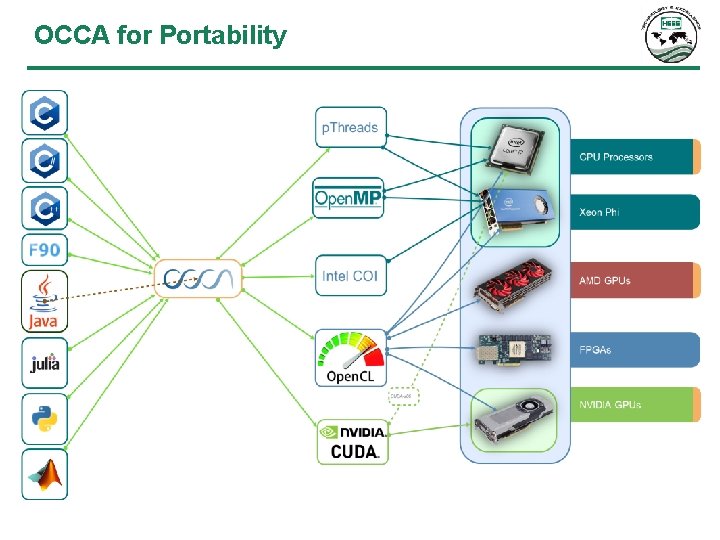

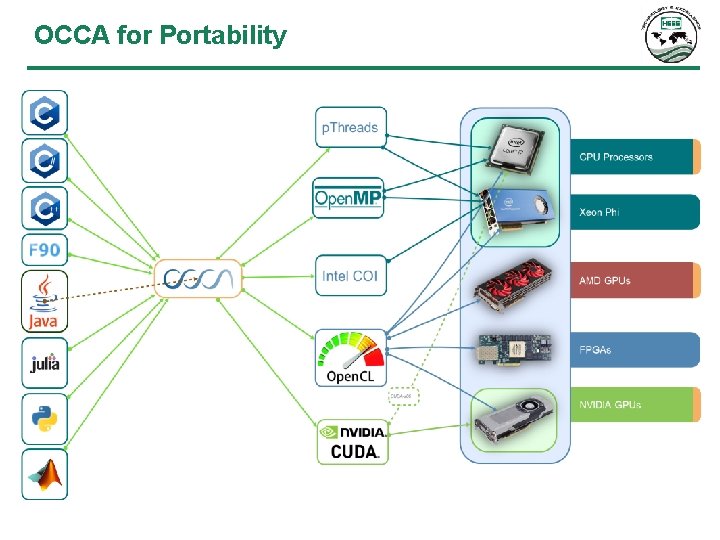

OCCA for Portability

Portability Results

- Ported and tested production kernel from CUDA to OCCA in ~3 weeks
- Tested and verified kernel results on CPU and GPU
- Tested production migration on GPUs
- Performance
  - Greater kernel performance because of runtime compilation
  - Kernels still need some tuning for best performance on various architectures
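The slides do not say how CPU and GPU results were compared. One common approach, sketched here as an assumption, is a relative-error check rather than bitwise equality, since different backends may sum floating-point contributions in different orders. The function name is hypothetical.

```python
def max_relative_error(reference, candidate):
    """Largest absolute difference, scaled by the largest reference magnitude."""
    scale = max(abs(v) for v in reference) or 1.0  # avoid dividing by zero
    return max(abs(r - c) for r, c in zip(reference, candidate)) / scale
```

A verification run would then accept the port if the error stays below a chosen tolerance for single-precision images.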

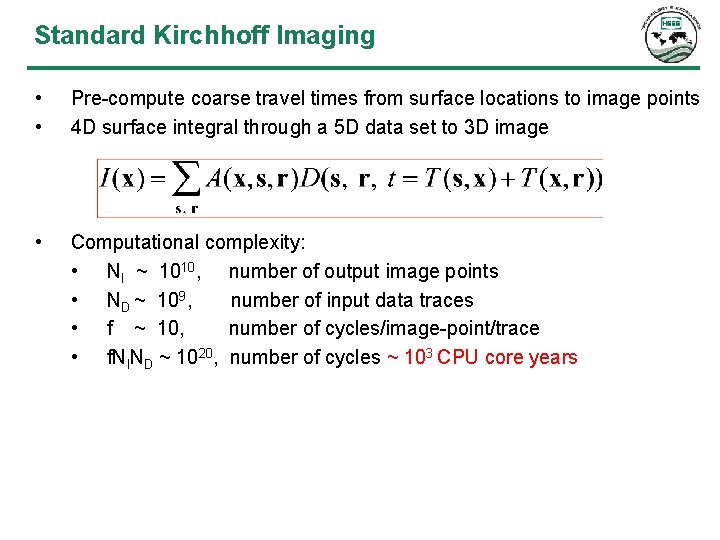

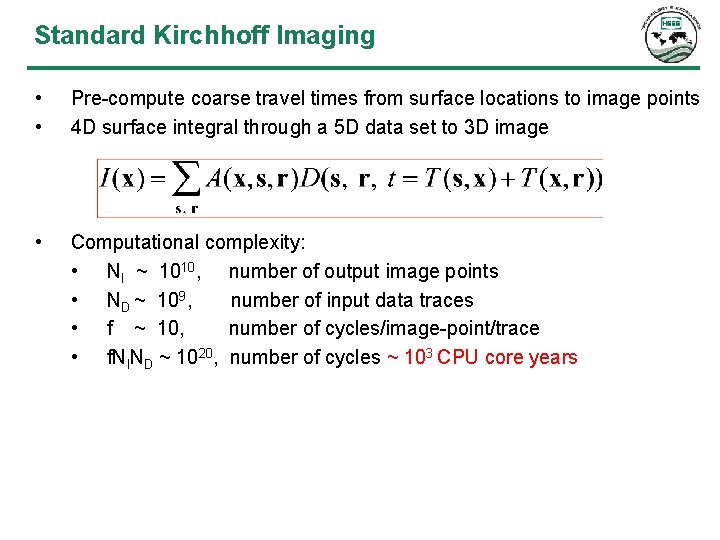

Standard Kirchhoff Imaging

- Pre-compute coarse travel times from surface locations to image points
- 4D surface integral through a 5D data set to a 3D image
- Computational complexity:
  - $N_I \sim 10^{10}$, number of output image points
  - $N_D \sim 10^{9}$, number of input data traces
  - $f \sim 10$, number of cycles/image-point/trace
  - $f N_I N_D \sim 10^{20}$, number of cycles $\sim 10^{3}$ CPU core years
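The core-year figure follows from the cycle count once a clock rate is chosen. The sketch below assumes a 3 GHz core, which is not stated on the slide; the function name is also hypothetical.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 3600

def core_years(n_image_points, n_traces, cycles_per_contribution, clock_hz):
    """Convert f * N_I * N_D cycles into core years at a given clock rate."""
    total_cycles = cycles_per_contribution * n_image_points * n_traces
    return total_cycles / clock_hz / SECONDS_PER_YEAR

# Slide values: N_I ~ 1e10, N_D ~ 1e9, f ~ 10; the 3 GHz clock is an assumption.
estimate = core_years(1e10, 1e9, 10, 3e9)
```

With these inputs the estimate lands on the order of $10^{3}$ core years, matching the slide.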

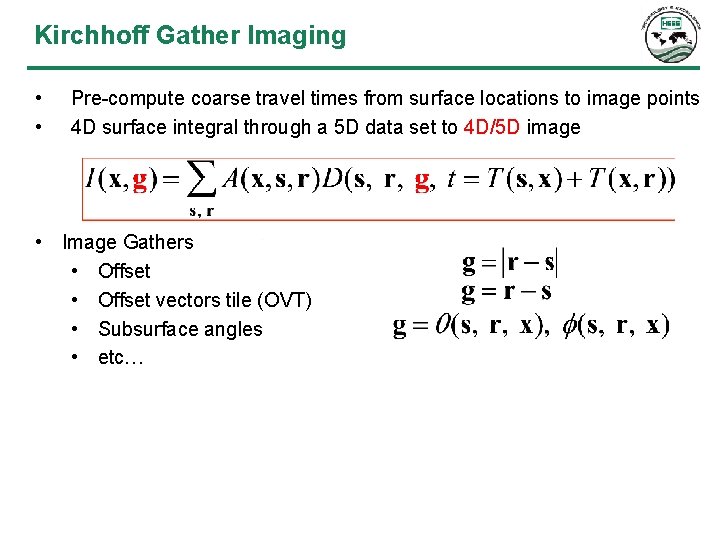

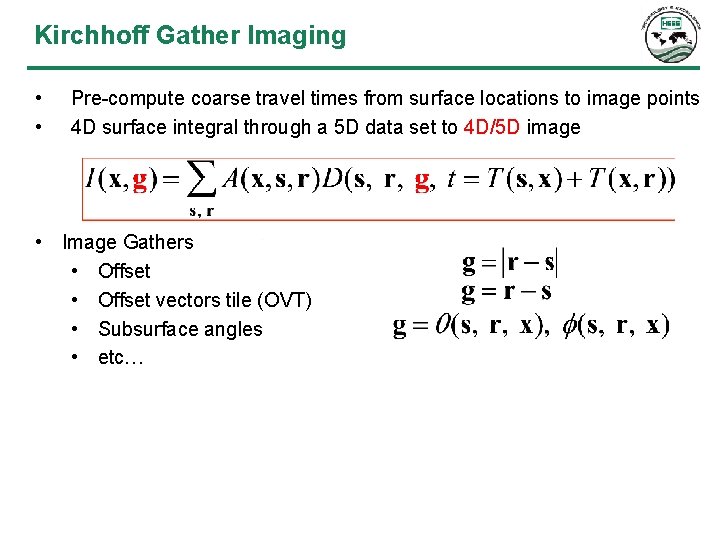

Kirchhoff Gather Imaging

- Pre-compute coarse travel times from surface locations to image points
- 4D surface integral through a 5D data set to a 4D/5D image
- Image gathers
  - Offset vector tiles (OVT)
  - Subsurface angles
  - etc.
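A gather adds one or more axes to the image, so each contribution must be routed to a bin. As a hedged illustration, the sketch below uses the simplest case, a scalar source-receiver offset bin, rather than the OVT or angle gathers listed above. The function names and the uniform bin width are assumptions.

```python
import math

def offset_bin(source_xy, receiver_xy, bin_width, n_bins):
    """Return the offset-gather bin index for a trace, or None if out of range."""
    offset = math.hypot(receiver_xy[0] - source_xy[0],
                        receiver_xy[1] - source_xy[1])
    index = int(offset // bin_width)
    return index if index < n_bins else None

def accumulate(gathers, bin_index, point_index, value):
    """Add a migration contribution to gathers[bin][image point]."""
    if bin_index is not None:
        gathers[bin_index][point_index] += value
```

The image then has shape (bins, image points) instead of (image points), which is why the output grows from 3D to 4D or 5D.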

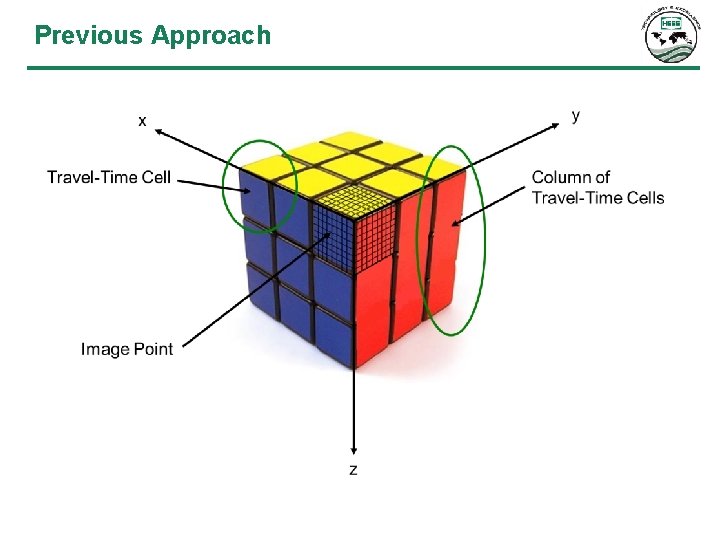

Previous Approach

- Define tasks that can be run in parallel
- Task should be small enough to fit on a GPU
- Copying data to and from the GPU is expensive
- Global memory access can be a bottleneck

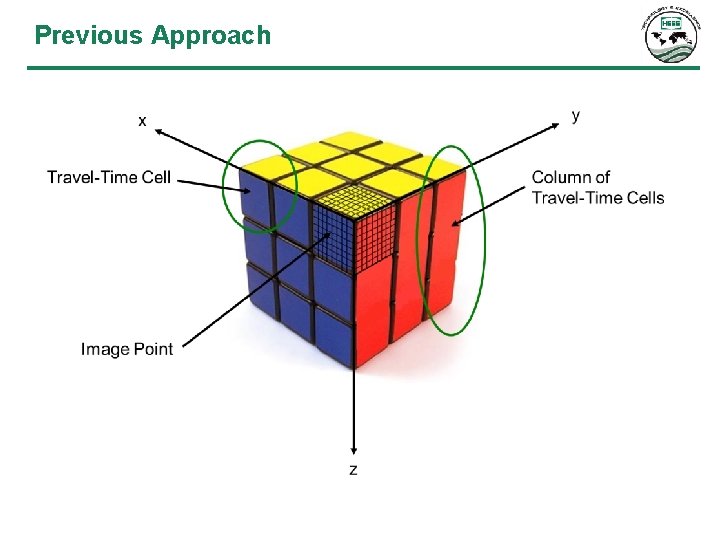

Previous Approach

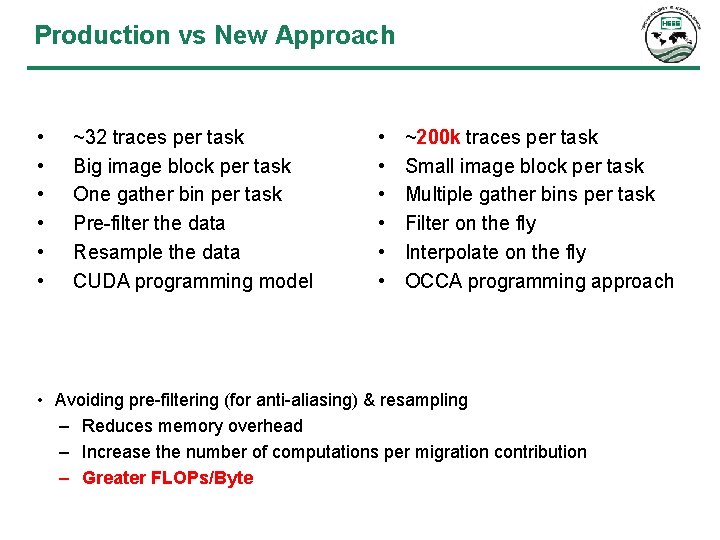

Previous Approach Overview

- ~32 traces per task
- Big image block per task
- One gather bin per task
- Pre-filter the data
- Resample the data
- CUDA programming model

New Approach for Performance

- Define tasks that can be run in parallel
- Task should be small enough to fit on a GPU
- Copying data to and from the GPU is expensive
- Global memory access can be a bottleneck
- Improve FLOPs/load
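One rough way to reason about work per unit of data moved is to count migration contributions per byte for a single task. The model below is a deliberately crude assumption: it counts only trace data and one read plus one write of the image block, and it ignores travel-time tables and caching. The function name and the 4-byte value size are hypothetical.

```python
def contributions_per_byte(n_traces, samples_per_trace, n_image_points,
                           bytes_per_value=4):
    """Migration contributions per byte moved for one task (crude model)."""
    contributions = n_traces * n_image_points
    trace_bytes = n_traces * samples_per_trace * bytes_per_value
    image_bytes = 2 * n_image_points * bytes_per_value  # one read + one write
    return contributions / (trace_bytes + image_bytes)
```

In this model the ratio depends on both the number of traces and the image block size per task, which are the two parameters the deck goes on to vary.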

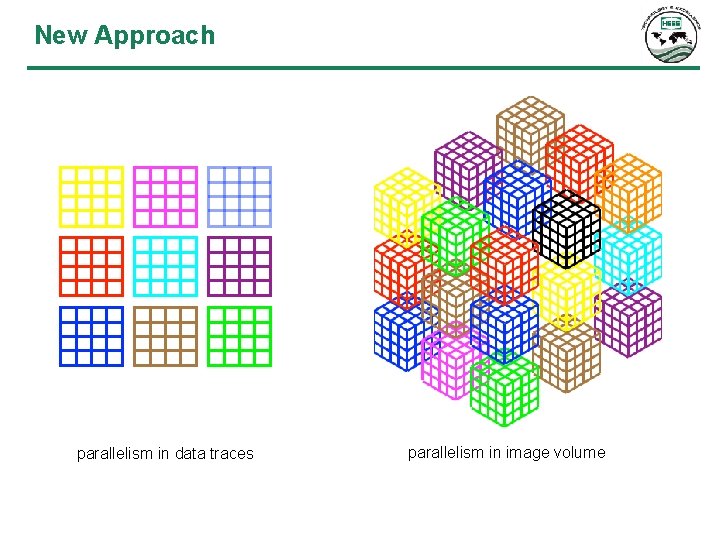

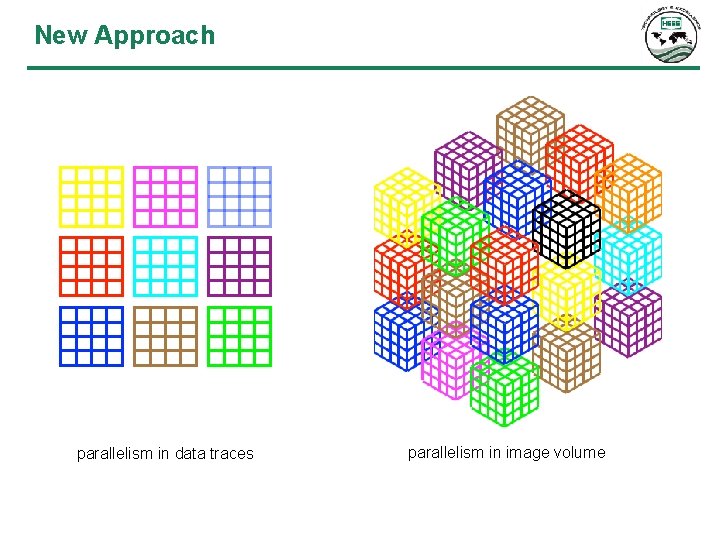

New Approach

- Parallelism in data traces
- Parallelism in image volume

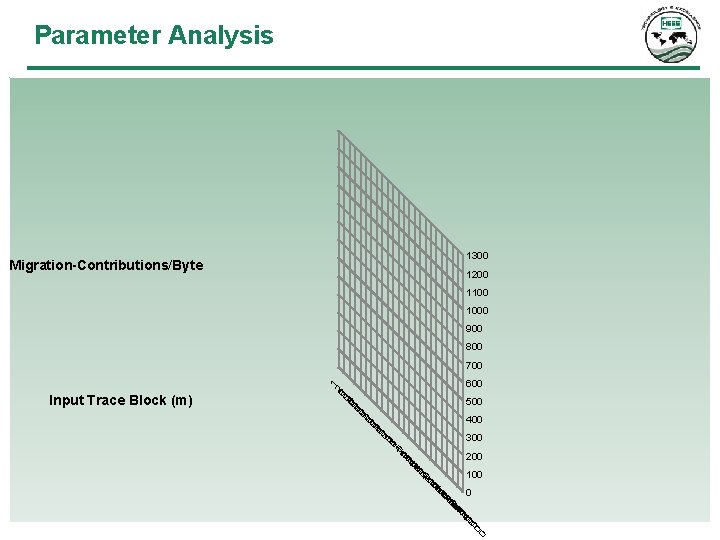

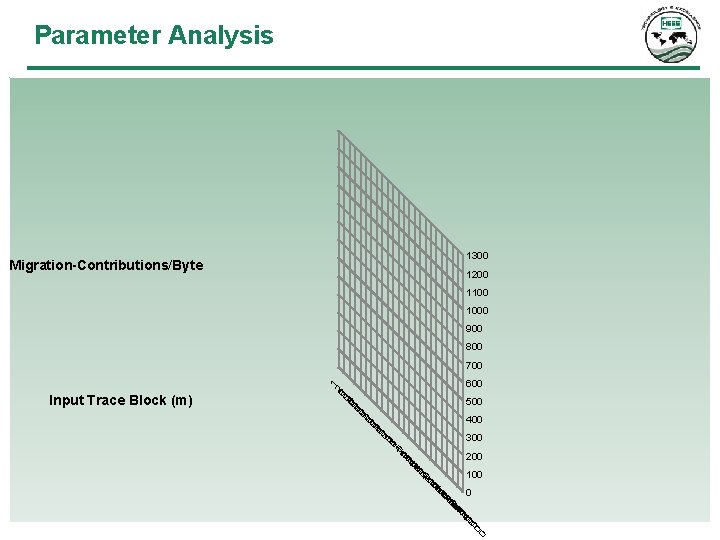

Parameter Analysis (plot: migration contributions per byte versus input trace block size in meters)

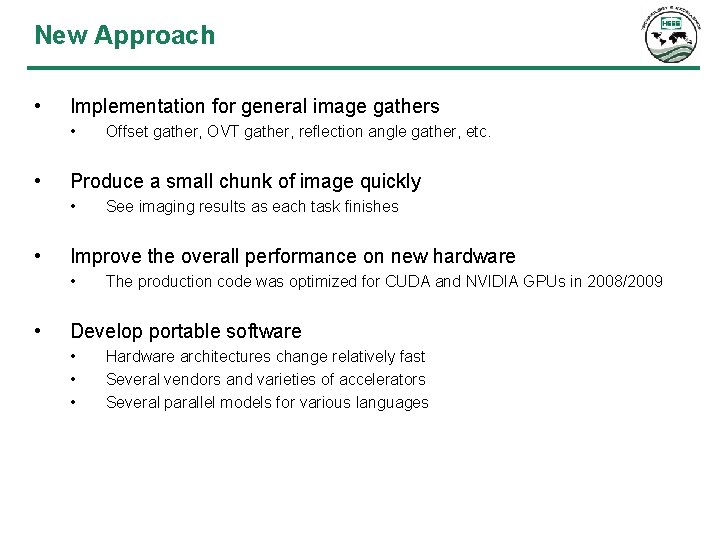

New Approach

- Implementation for general image gathers
  - Offset gather, OVT gather, reflection angle gather, etc.
- Produce a small chunk of image quickly
  - See imaging results as each task finishes
- Improve the overall performance on new hardware
  - The production code was optimized for CUDA and NVIDIA GPUs in 2008/2009
- Develop portable software
  - Hardware architectures change relatively fast
  - Several vendors and varieties of accelerators
  - Several parallel models for various languages

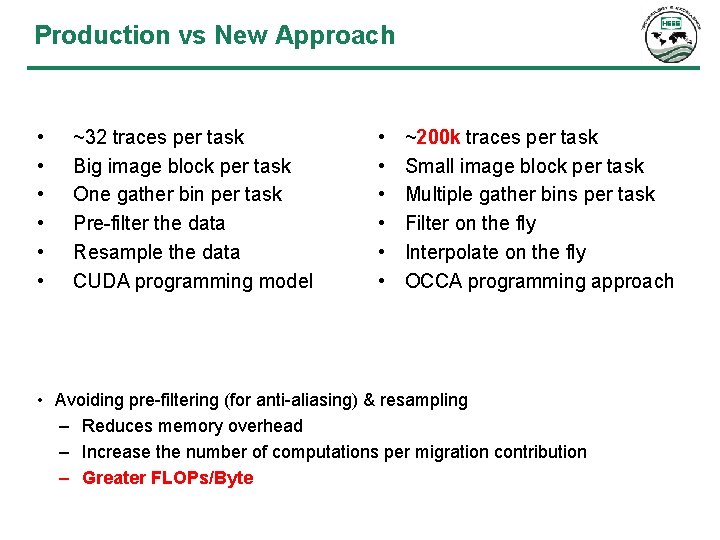

Production vs New Approach

| Production approach | New approach |
| --- | --- |
| ~32 traces per task | ~200k traces per task |
| Big image block per task | Small image block per task |
| One gather bin per task | Multiple gather bins per task |
| Pre-filter the data | Filter on the fly |
| Resample the data | Interpolate on the fly |
| CUDA programming model | OCCA programming approach |

- Avoiding pre-filtering (for anti-aliasing) and resampling
  - Reduces memory overhead
  - Increases the number of computations per migration contribution
  - Greater FLOPs/byte
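"Interpolate on the fly" means the kernel reads the original trace samples and computes the value at the exact travel time itself, instead of reading a pre-resampled copy. The slides do not name the interpolation scheme, so the sketch below assumes linear interpolation between the two neighbouring samples. The function name is hypothetical.

```python
def sample_trace(trace, t, dt):
    """Linearly interpolate a trace at time t; return 0.0 outside the trace."""
    position = t / dt          # fractional sample index
    i = int(position)
    if position < 0 or i + 1 >= len(trace):
        return 0.0             # no neighbouring pair of samples available
    frac = position - i
    return (1.0 - frac) * trace[i] + frac * trace[i + 1]
```

This trades a few extra arithmetic operations per contribution for a smaller memory footprint, in line with the FLOPs/byte point above.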

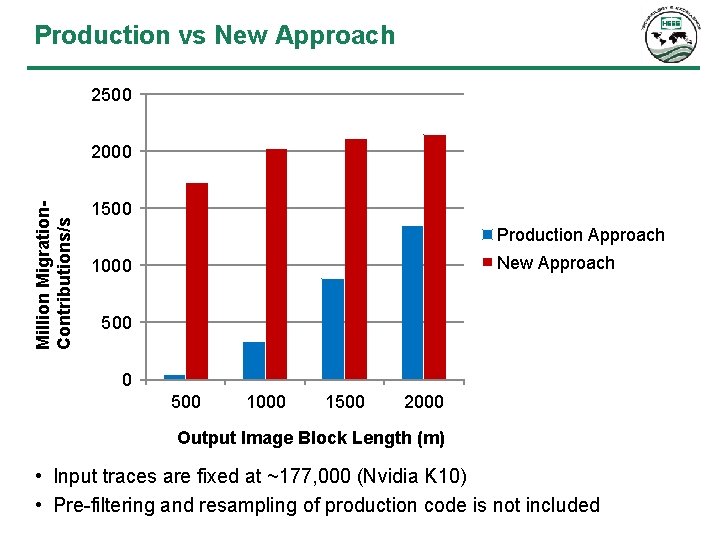

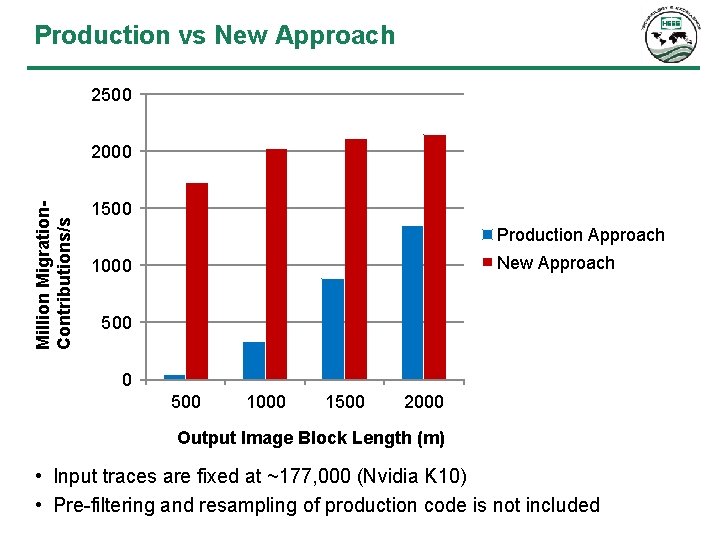

Production vs New Approach (plot: million migration contributions per second versus output image block length in meters, for the production approach and the new approach)

- Input traces are fixed at ~177,000 (NVIDIA K10)
- Pre-filtering and resampling of the production code are not included

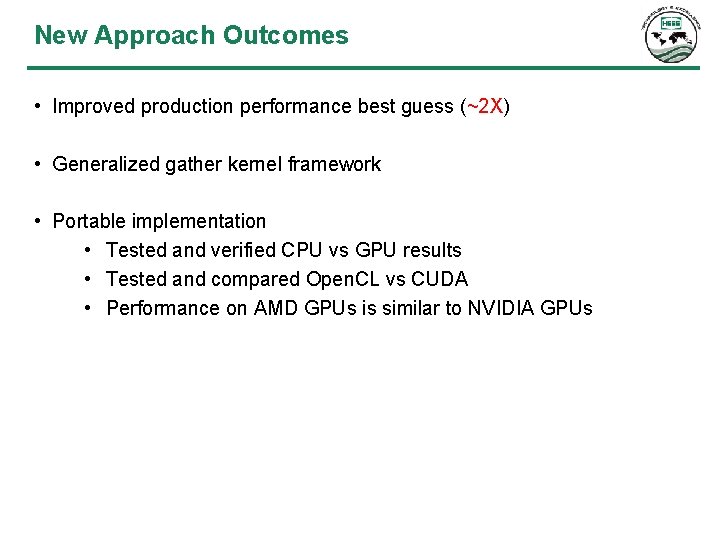

New Approach Outcomes

- Improved performance over production (best guess: ~2x)
- Generalized gather kernel framework
- Portable implementation
  - Tested and verified CPU vs GPU results
  - Tested and compared OpenCL vs CUDA
  - Performance on AMD GPUs is similar to NVIDIA GPUs

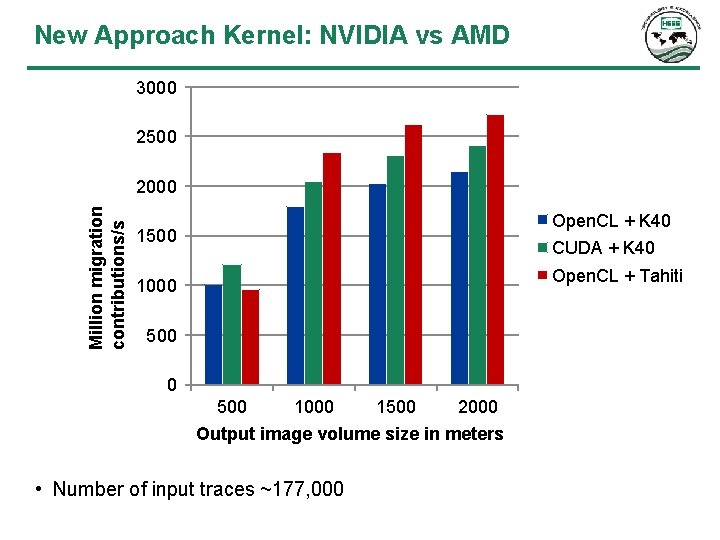

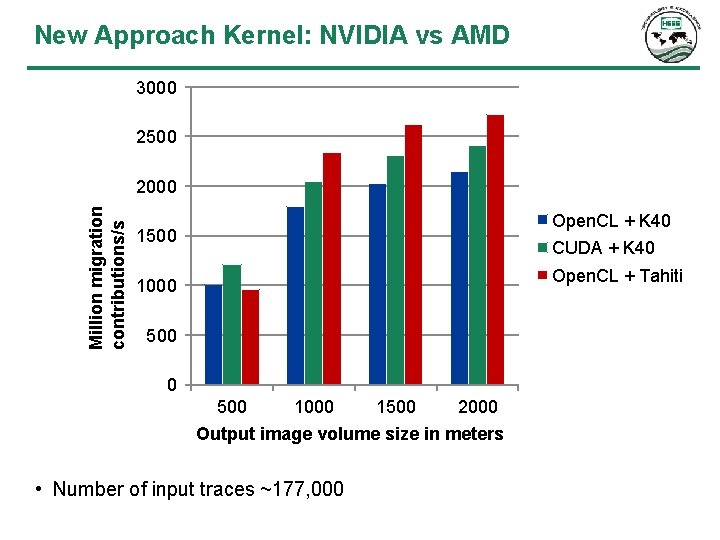

New Approach Kernel: NVIDIA vs AMD (plot: million migration contributions per second versus output image volume size in meters; series: OpenCL + K40, CUDA + K40, OpenCL + Tahiti)

- Number of input traces: ~177,000

Project Goals Review

- ✓ Hardware portability
- ✓ General image gathers
- ✓ Improve migration performance

Future Work

- Finish integration of the new kernel into production
- More testing on various accelerators
- Explore using mixed-architecture migrations

Acknowledgements

- Hess Corporation
- CAAM @ Rice University
- Tim Warburton
- David Medina