Review Paul Heckbert Computer Science Department Carnegie Mellon

Review Paul Heckbert Computer Science Department Carnegie Mellon University 12 Dec. 2000 15 -859 B - Introduction to Scientific Computing 1

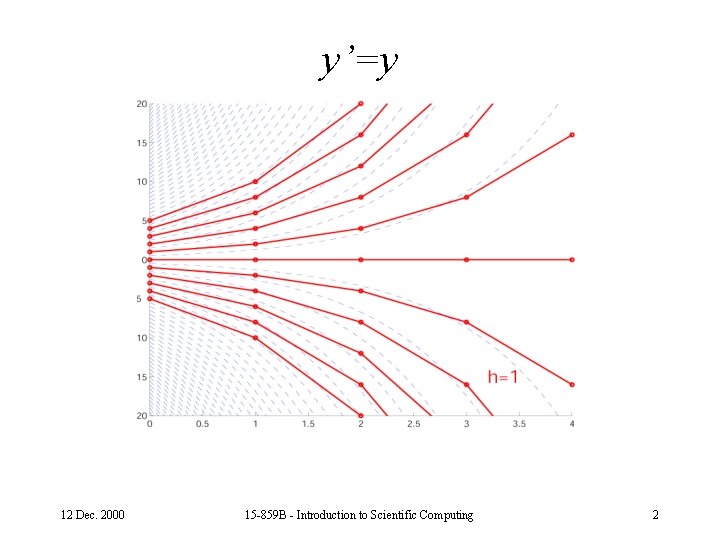

y’=y 12 Dec. 2000 15 -859 B - Introduction to Scientific Computing 2

y’=-y, stable but slow solution 12 Dec. 2000 15 -859 B - Introduction to Scientific Computing 3

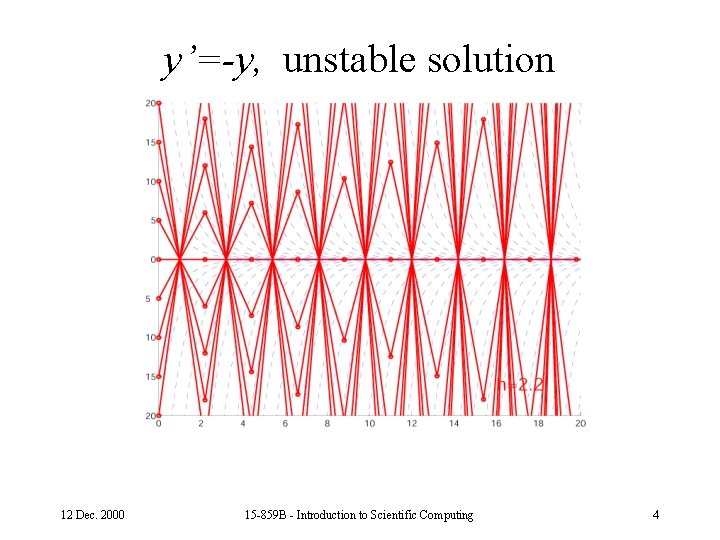

y’=-y, unstable solution 12 Dec. 2000 15 -859 B - Introduction to Scientific Computing 4

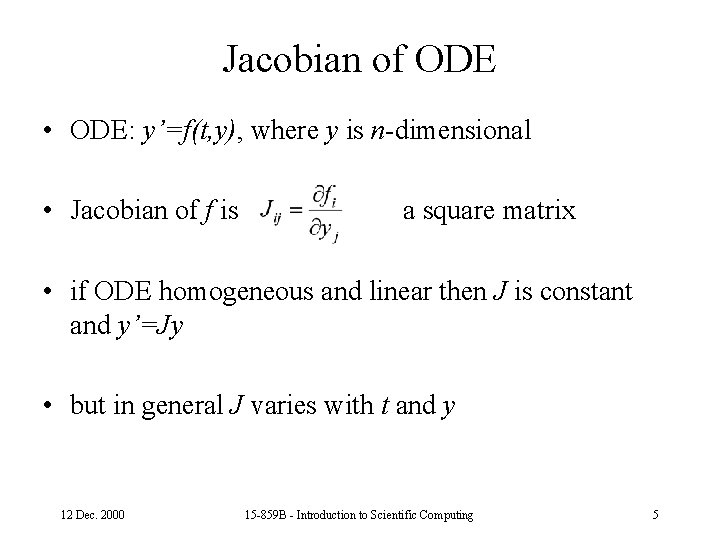

Jacobian of ODE • ODE: y’=f(t, y), where y is n-dimensional • Jacobian of f is a square matrix • if ODE homogeneous and linear then J is constant and y’=Jy • but in general J varies with t and y 12 Dec. 2000 15 -859 B - Introduction to Scientific Computing 5

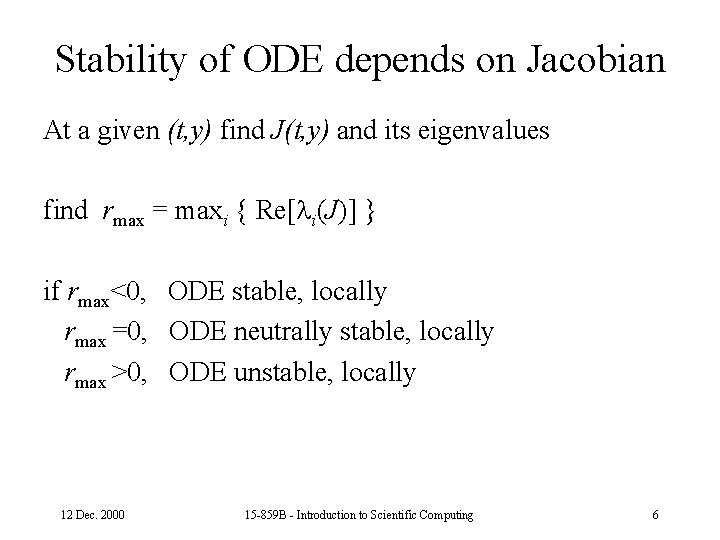

Stability of ODE depends on Jacobian At a given (t, y) find J(t, y) and its eigenvalues find rmax = maxi { Re[ i(J)] } if rmax<0, ODE stable, locally rmax =0, ODE neutrally stable, locally rmax >0, ODE unstable, locally 12 Dec. 2000 15 -859 B - Introduction to Scientific Computing 6

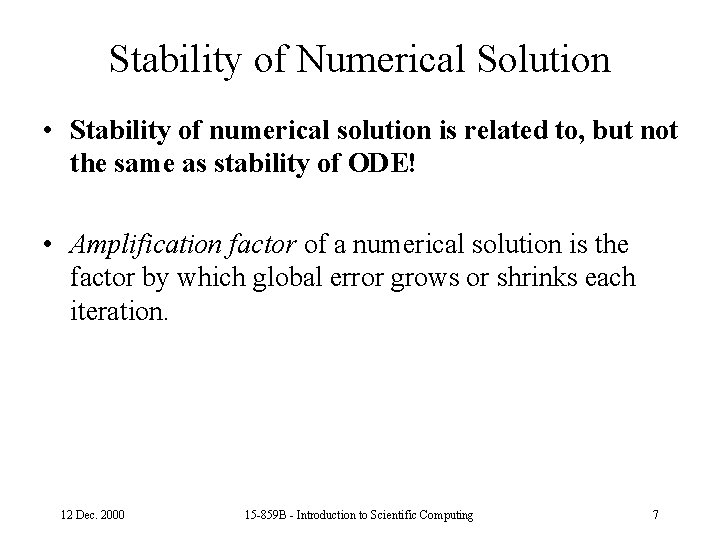

Stability of Numerical Solution • Stability of numerical solution is related to, but not the same as stability of ODE! • Amplification factor of a numerical solution is the factor by which global error grows or shrinks each iteration. 12 Dec. 2000 15 -859 B - Introduction to Scientific Computing 7

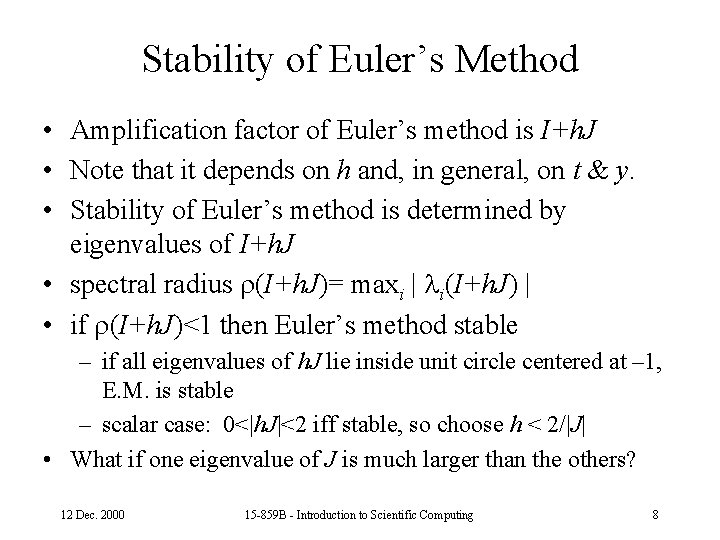

Stability of Euler’s Method • Amplification factor of Euler’s method is I+h. J • Note that it depends on h and, in general, on t & y. • Stability of Euler’s method is determined by eigenvalues of I+h. J • spectral radius (I+h. J)= maxi | i(I+h. J) | • if (I+h. J)<1 then Euler’s method stable – if all eigenvalues of h. J lie inside unit circle centered at – 1, E. M. is stable – scalar case: 0<|h. J|<2 iff stable, so choose h < 2/|J| • What if one eigenvalue of J is much larger than the others? 12 Dec. 2000 15 -859 B - Introduction to Scientific Computing 8

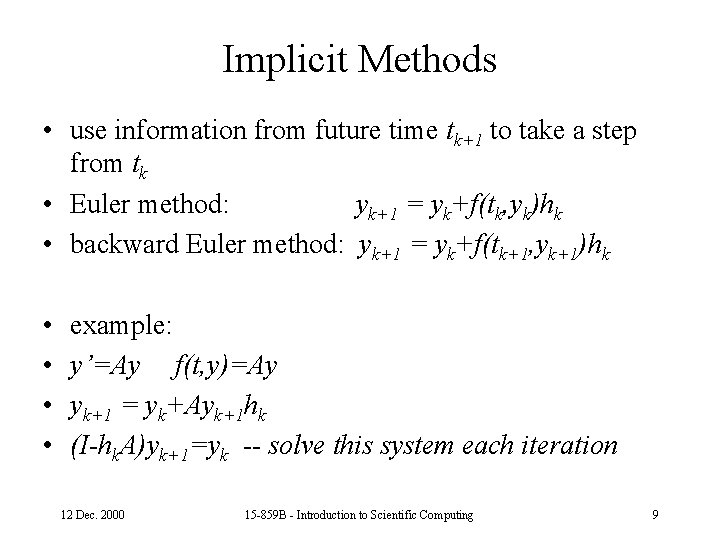

Implicit Methods • use information from future time tk+1 to take a step from tk • Euler method: yk+1 = yk+f(tk, yk)hk • backward Euler method: yk+1 = yk+f(tk+1, yk+1)hk • • example: y’=Ay f(t, y)=Ay yk+1 = yk+Ayk+1 hk (I-hk. A)yk+1=yk -- solve this system each iteration 12 Dec. 2000 15 -859 B - Introduction to Scientific Computing 9

Stability of Backward Euler’s Method • Amplification factor of B. E. M. is (I-h. J)-1 • B. E. M. is stable independent of h (unconditionally stable) as long as rmax<0, i. e. as long as ODE is stable • Implicit methods such as this permit bigger steps to be taken (larger h) 12 Dec. 2000 15 -859 B - Introduction to Scientific Computing 10

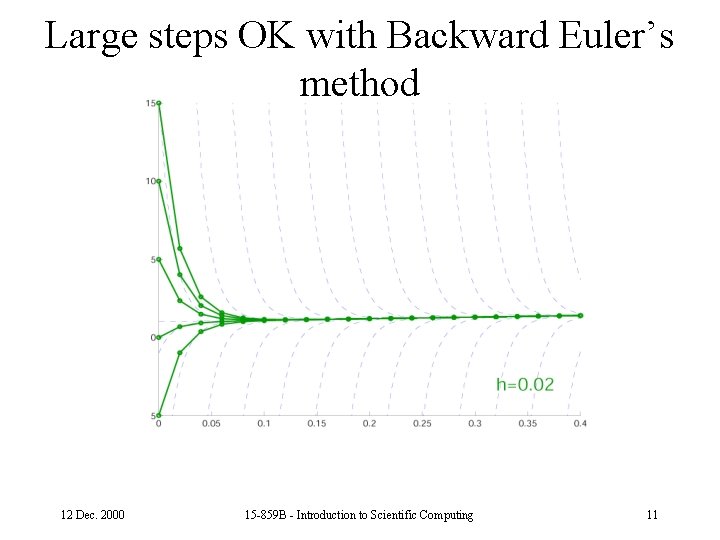

Large steps OK with Backward Euler’s method 12 Dec. 2000 15 -859 B - Introduction to Scientific Computing 11

Popular IVP Solution Methods • • Euler’s method, 1 st order backward Euler’s method, 1 st order trapezoid method (a. k. a. 2 nd order Adams-Moulton) 4 th order Runge-Kutta • If a method is pth order accurate then its global error is O(hp) 12 Dec. 2000 15 -859 B - Introduction to Scientific Computing 12

Solving Boundary Value Problems • Shooting Method – transform BVP into IVP and root-finding • Finite Difference Method – transform BVP into system of equations (linear? ) • Finite Element Method – choice of basis: linear, quadratic, . . . – collocation method • residual is zero at selected points – Galerkin method • residual function is orthogonal to each basis function 12 Dec. 2000 15 -859 B - Introduction to Scientific Computing 13

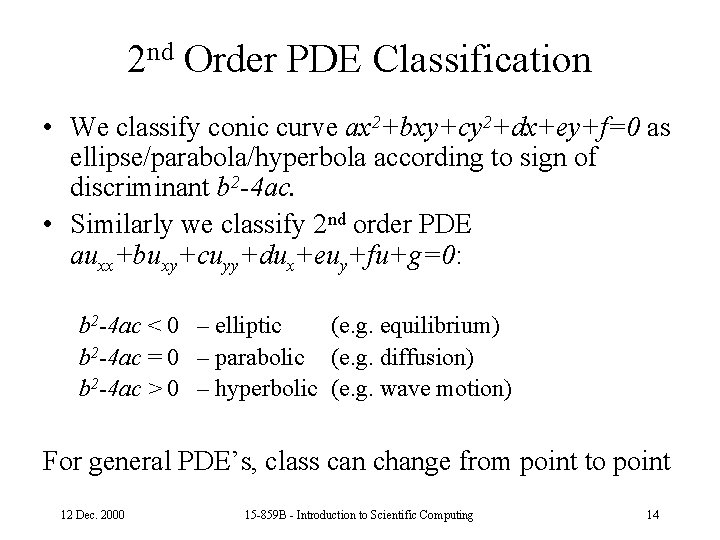

2 nd Order PDE Classification • We classify conic curve ax 2+bxy+cy 2+dx+ey+f=0 as ellipse/parabola/hyperbola according to sign of discriminant b 2 -4 ac. • Similarly we classify 2 nd order PDE auxx+buxy+cuyy+dux+euy+fu+g=0: b 2 -4 ac < 0 – elliptic (e. g. equilibrium) b 2 -4 ac = 0 – parabolic (e. g. diffusion) b 2 -4 ac > 0 – hyperbolic (e. g. wave motion) For general PDE’s, class can change from point to point 12 Dec. 2000 15 -859 B - Introduction to Scientific Computing 14

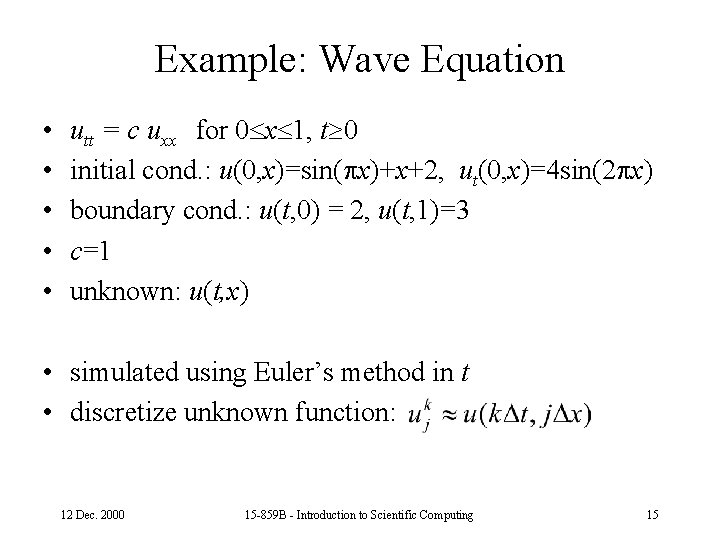

Example: Wave Equation • • • utt = c uxx for 0 x 1, t 0 initial cond. : u(0, x)=sin( x)+x+2, ut(0, x)=4 sin(2 x) boundary cond. : u(t, 0) = 2, u(t, 1)=3 c=1 unknown: u(t, x) • simulated using Euler’s method in t • discretize unknown function: 12 Dec. 2000 15 -859 B - Introduction to Scientific Computing 15

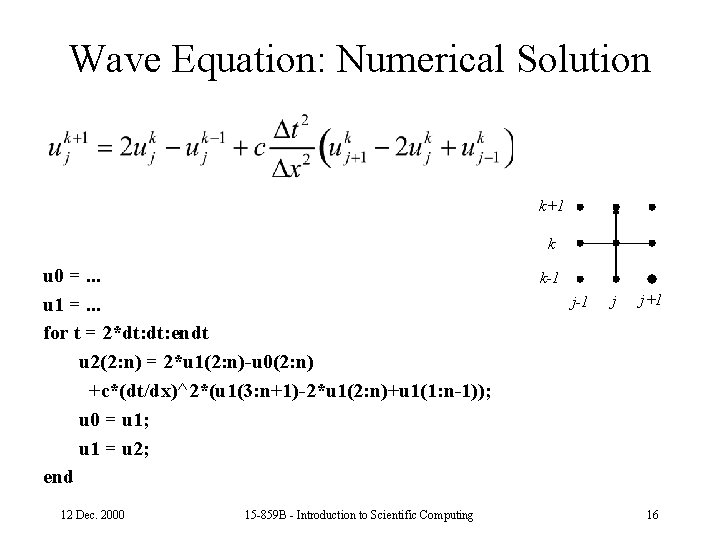

Wave Equation: Numerical Solution k+1 k u 0 =. . . u 1 =. . . for t = 2*dt: endt u 2(2: n) = 2*u 1(2: n)-u 0(2: n) +c*(dt/dx)^2*(u 1(3: n+1)-2*u 1(2: n)+u 1(1: n-1)); u 0 = u 1; u 1 = u 2; end 12 Dec. 2000 15 -859 B - Introduction to Scientific Computing k-1 j j+1 16

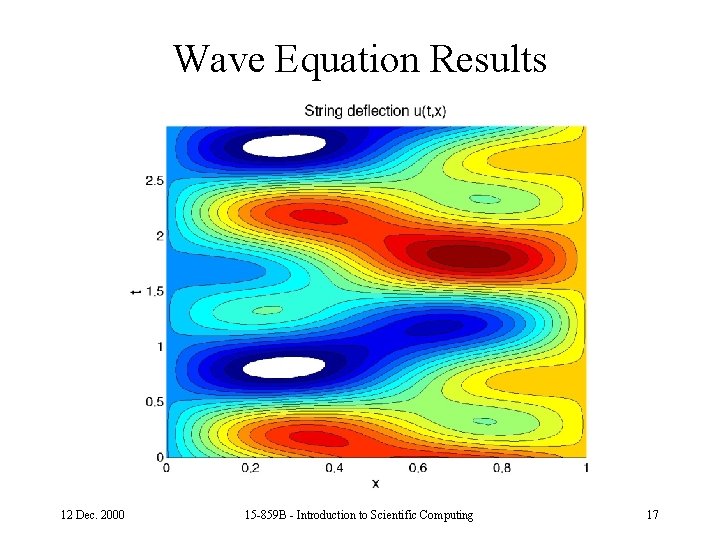

Wave Equation Results 12 Dec. 2000 15 -859 B - Introduction to Scientific Computing 17

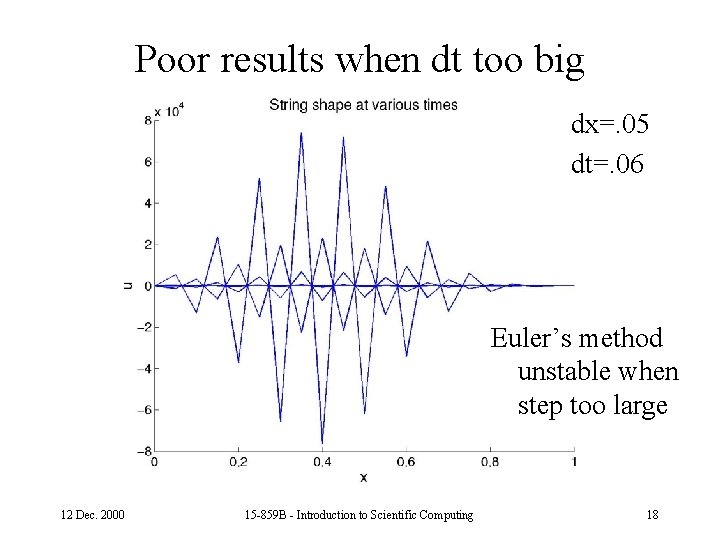

Poor results when dt too big dx=. 05 dt=. 06 Euler’s method unstable when step too large 12 Dec. 2000 15 -859 B - Introduction to Scientific Computing 18

PDE Solution Methods • Discretize in space, transform into system of IVP’s • Discretize in space and time, finite difference method. • Discretize in space and time, finite element method. – Latter methods yield sparse systems. • Sometimes the geometry and boundary conditions are simple (e. g. rectangular grid); • Sometimes they’re not (need mesh of triangles). 12 Dec. 2000 15 -859 B - Introduction to Scientific Computing 19

PDE’s and Sparse Systems • A system of equations is sparse when there are few nonzero coefficients, e. g. O(n) nonzeros in an nxn matrix. • Partial Differential Equations generally yield sparse systems of equations. • Integral Equations generally yield dense (non-sparse) systems of equations. • Sparse systems come from other sources besides PDE’s. 12 Dec. 2000 15 -859 B - Introduction to Scientific Computing 20

Example: PDE Yielding Sparse System • Laplace’s Equation in 2 -D: 2 u = uxx +uyy = 0 – domain is unit square [0, 1]2 – value of function u(x, y) specified on boundary – solve for u(x, y) in interior 12 Dec. 2000 15 -859 B - Introduction to Scientific Computing 21

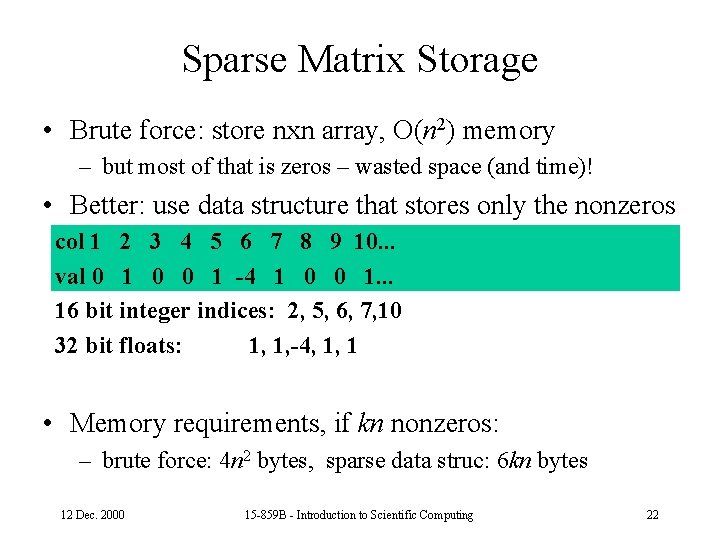

Sparse Matrix Storage • Brute force: store nxn array, O(n 2) memory – but most of that is zeros – wasted space (and time)! • Better: use data structure that stores only the nonzeros col 1 2 3 4 5 6 7 8 9 10. . . val 0 1 0 0 1 -4 1 0 0 1. . . 16 bit integer indices: 2, 5, 6, 7, 10 32 bit floats: 1, 1, -4, 1, 1 • Memory requirements, if kn nonzeros: – brute force: 4 n 2 bytes, sparse data struc: 6 kn bytes 12 Dec. 2000 15 -859 B - Introduction to Scientific Computing 22

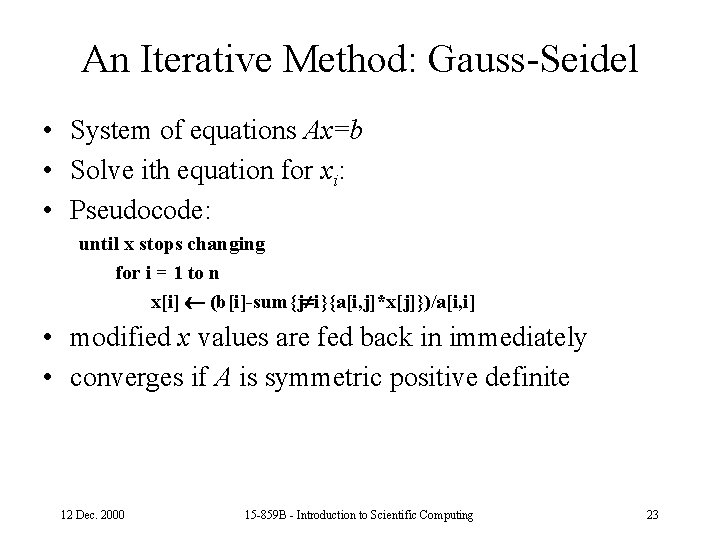

An Iterative Method: Gauss-Seidel • System of equations Ax=b • Solve ith equation for xi: • Pseudocode: until x stops changing for i = 1 to n x[i] (b[i]-sum{j i}{a[i, j]*x[j]})/a[i, i] • modified x values are fed back in immediately • converges if A is symmetric positive definite 12 Dec. 2000 15 -859 B - Introduction to Scientific Computing 23

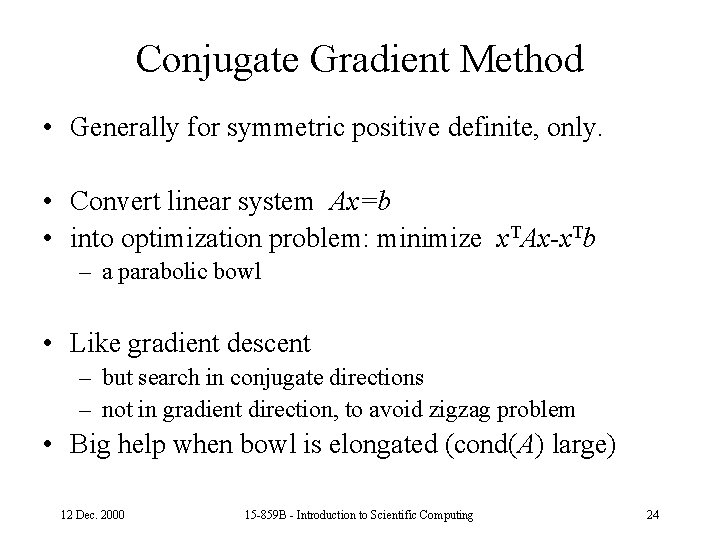

Conjugate Gradient Method • Generally for symmetric positive definite, only. • Convert linear system Ax=b • into optimization problem: minimize x. TAx-x. Tb – a parabolic bowl • Like gradient descent – but search in conjugate directions – not in gradient direction, to avoid zigzag problem • Big help when bowl is elongated (cond(A) large) 12 Dec. 2000 15 -859 B - Introduction to Scientific Computing 24

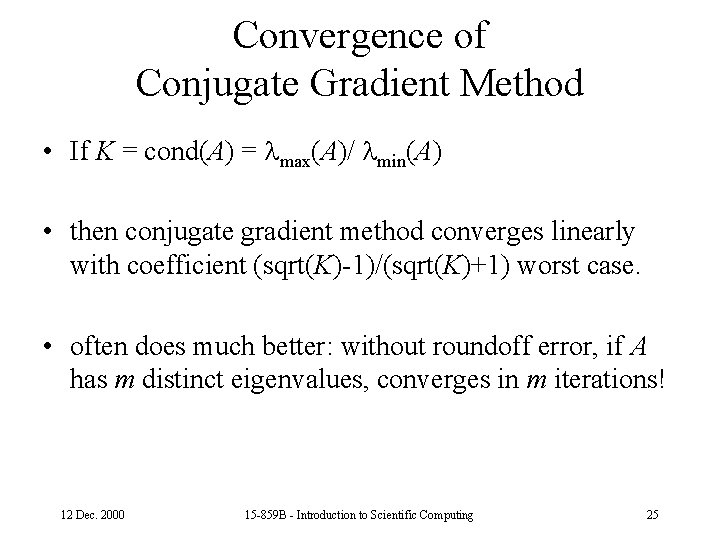

Convergence of Conjugate Gradient Method • If K = cond(A) = max(A)/ min(A) • then conjugate gradient method converges linearly with coefficient (sqrt(K)-1)/(sqrt(K)+1) worst case. • often does much better: without roundoff error, if A has m distinct eigenvalues, converges in m iterations! 12 Dec. 2000 15 -859 B - Introduction to Scientific Computing 25

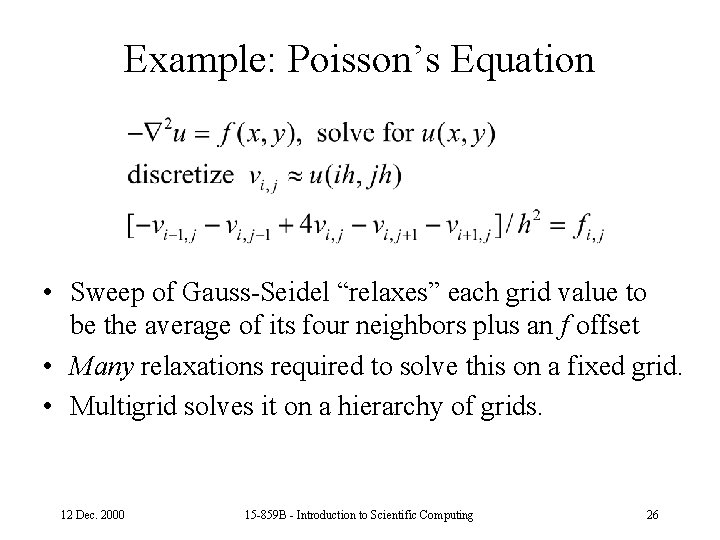

Example: Poisson’s Equation • Sweep of Gauss-Seidel “relaxes” each grid value to be the average of its four neighbors plus an f offset • Many relaxations required to solve this on a fixed grid. • Multigrid solves it on a hierarchy of grids. 12 Dec. 2000 15 -859 B - Introduction to Scientific Computing 26

Elements of Multigrid Method • relax on a given grid a few times • coarsen (restrict) a grid • refine (interpolate) a grid 12 Dec. 2000 15 -859 B - Introduction to Scientific Computing 27

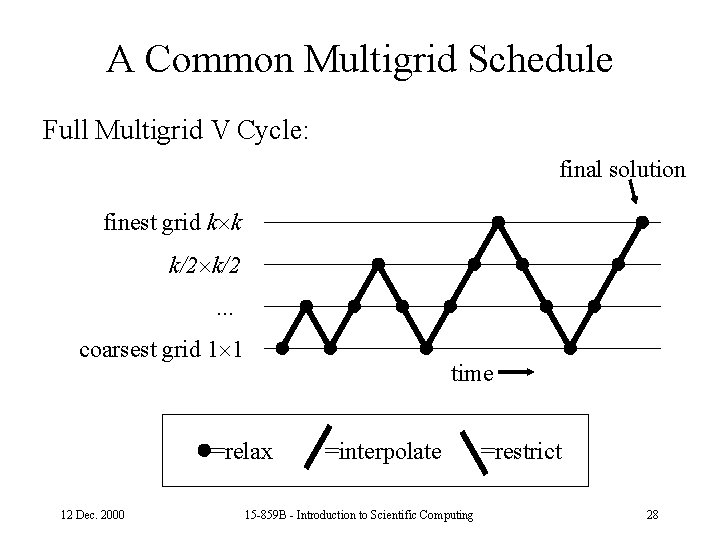

A Common Multigrid Schedule Full Multigrid V Cycle: final solution finest grid k k k/2. . . coarsest grid 1 1 time =relax 12 Dec. 2000 =interpolate 15 -859 B - Introduction to Scientific Computing =restrict 28

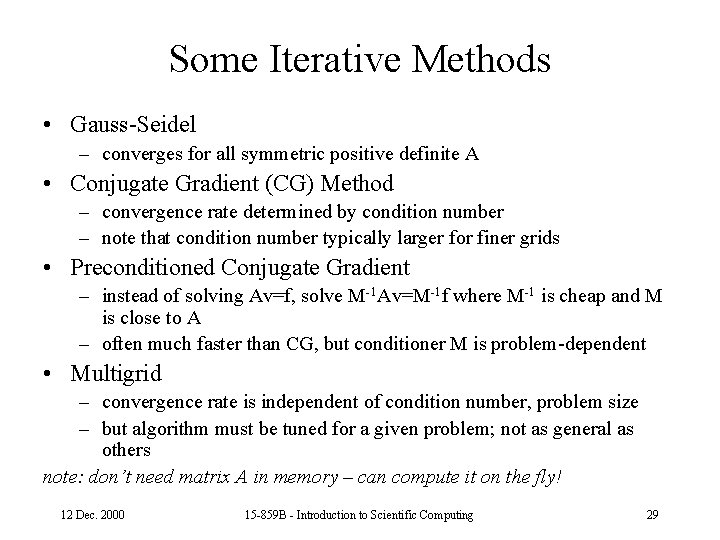

Some Iterative Methods • Gauss-Seidel – converges for all symmetric positive definite A • Conjugate Gradient (CG) Method – convergence rate determined by condition number – note that condition number typically larger for finer grids • Preconditioned Conjugate Gradient – instead of solving Av=f, solve M-1 Av=M-1 f where M-1 is cheap and M is close to A – often much faster than CG, but conditioner M is problem-dependent • Multigrid – convergence rate is independent of condition number, problem size – but algorithm must be tuned for a given problem; not as general as others note: don’t need matrix A in memory – can compute it on the fly! 12 Dec. 2000 15 -859 B - Introduction to Scientific Computing 29

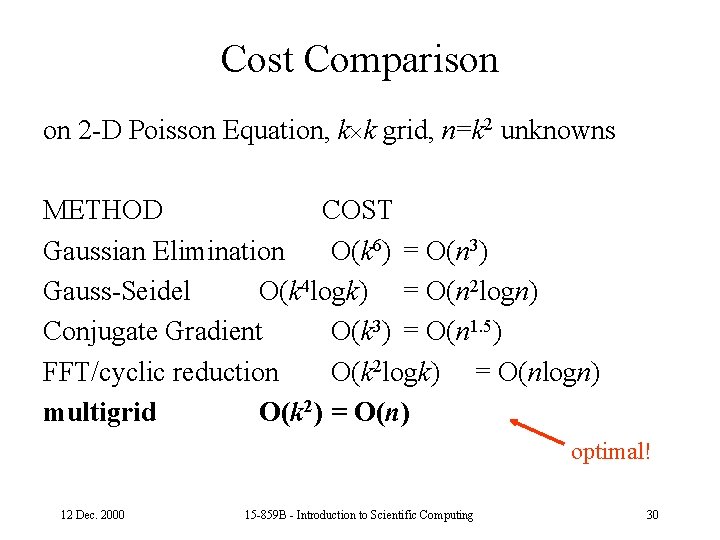

Cost Comparison on 2 -D Poisson Equation, k k grid, n=k 2 unknowns METHOD COST Gaussian Elimination O(k 6) = O(n 3) Gauss-Seidel O(k 4 logk) = O(n 2 logn) Conjugate Gradient O(k 3) = O(n 1. 5) FFT/cyclic reduction O(k 2 logk) = O(nlogn) multigrid O(k 2) = O(n) optimal! 12 Dec. 2000 15 -859 B - Introduction to Scientific Computing 30

Function Representations • sequence of samples (time domain) – finite difference method • pyramid (hierarchical) • polynomial • sinusoids of various frequency (frequency domain) – Fourier series • piecewise polynomials (finite support) – finite element method, splines • wavelet (hierarchical, finite support) – (time/frequency domain) 12 Dec. 2000 15 -859 B - Introduction to Scientific Computing 31

What Are Wavelets? In general, a family of representations using: • hierarchical (nested) basis functions • finite (“compact”) support • basis functions often orthogonal • fast transforms, often linear-time 12 Dec. 2000 15 -859 B - Introduction to Scientific Computing 32

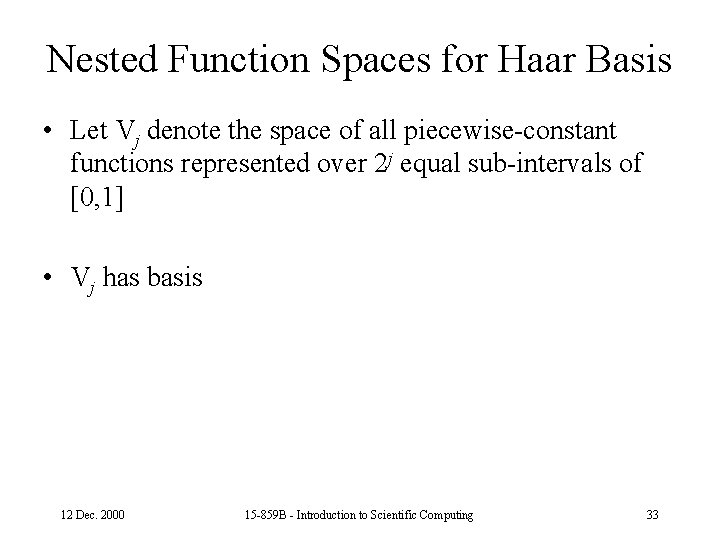

Nested Function Spaces for Haar Basis • Let Vj denote the space of all piecewise-constant functions represented over 2 j equal sub-intervals of [0, 1] • Vj has basis 12 Dec. 2000 15 -859 B - Introduction to Scientific Computing 33

Function Representations – Desirable Properties • generality – approximate anything well – discontinuities, nonperiodicity, . . . • adaptable to application – audio, pictures, flow field, terrain data, . . . • compact – approximate function with few coefficients – facilitates compression, storage, transmission • fast to compute with – differential/integral operators are sparse in this basis – Convert n-sample function to representation in O(nlogn) or O(n) time 12 Dec. 2000 15 -859 B - Introduction to Scientific Computing 34

Time-Frequency Analysis • For many applications, you want to analyze a function in both time and frequency • Analogous to a musical score • Fourier transforms give you frequency information, smearing time. • Samples of a function give you temporal information, smearing frequency. • Note: substitute “space” for “time” for pictures. 12 Dec. 2000 15 -859 B - Introduction to Scientific Computing 35

Comparison to Fourier Analysis • Fourier analysis – Basis is global – Sinusoids with frequencies in arithmetic progression • Short-time Fourier Transform (& Gabor filters) – Basis is local – Sinusoid times Gaussian – Fixed-width Gaussian “window” • Wavelet – Basis is local – Frequencies in geometric progression – Basis has constant shape independent of scale 12 Dec. 2000 15 -859 B - Introduction to Scientific Computing 36

- Slides: 36