Review in Computerized Peer Assessment Dr Phil Davies

- Slides: 48

Review in Computerized Peer Assessment

Dr Phil Davies, Department of Computing, Division of Computing & Mathematical Sciences, FAT, University of Glamorgan

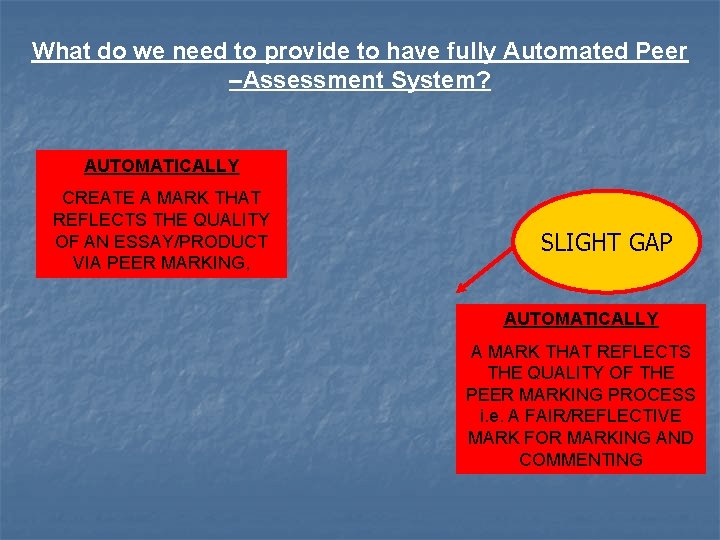

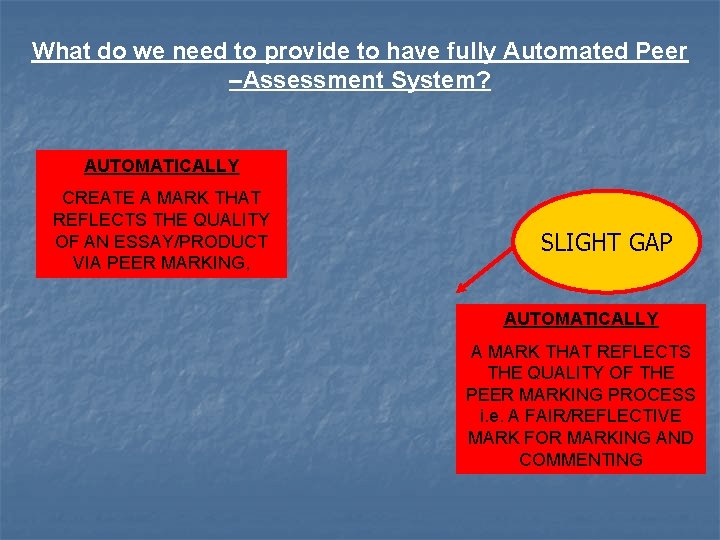

What do we need to provide to have a fully Automated Peer-Assessment System?

- AUTOMATICALLY CREATE A MARK THAT REFLECTS THE QUALITY OF AN ESSAY/PRODUCT VIA PEER MARKING
- (SLIGHT GAP)
- AUTOMATICALLY CREATE A MARK THAT REFLECTS THE QUALITY OF THE PEER MARKING PROCESS, i.e. A FAIR/REFLECTIVE MARK FOR MARKING AND COMMENTING

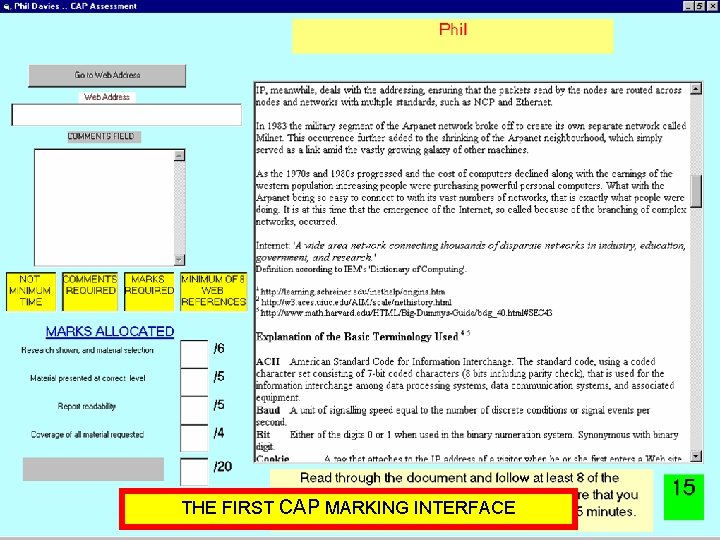

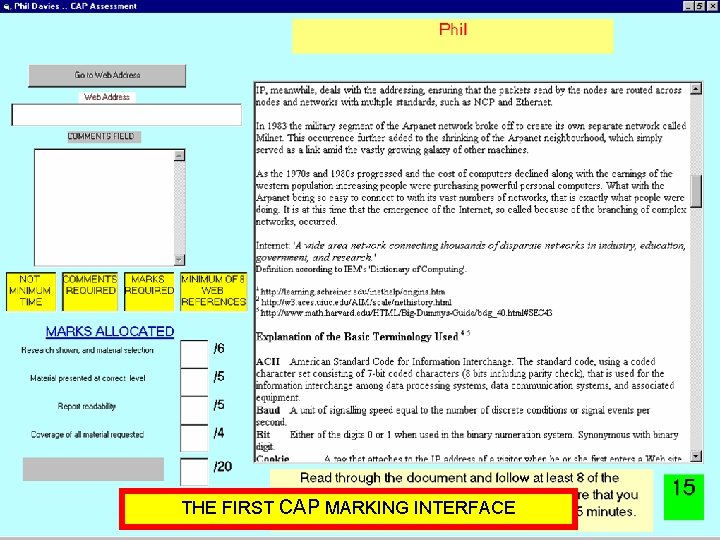

THE FIRST CAP MARKING INTERFACE

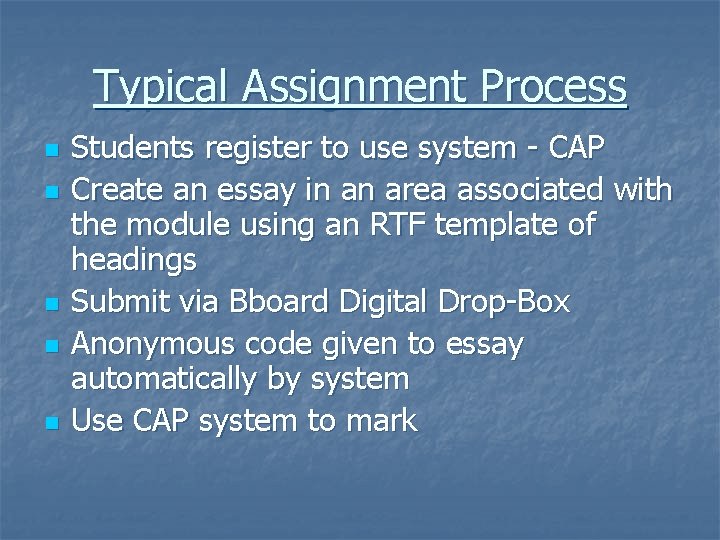

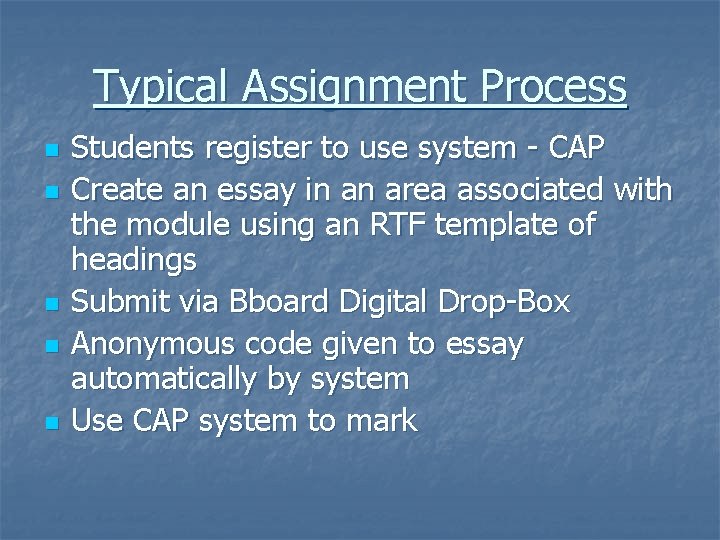

Typical Assignment Process

- Students register to use system - CAP
- Create an essay in an area associated with the module using an RTF template of headings
- Submit via Bboard Digital Drop-Box
- Anonymous code given to essay automatically by system
- Use CAP system to mark

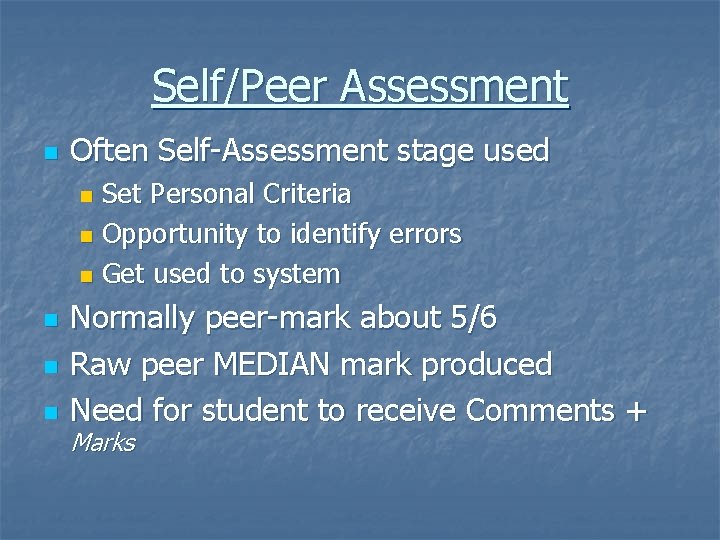

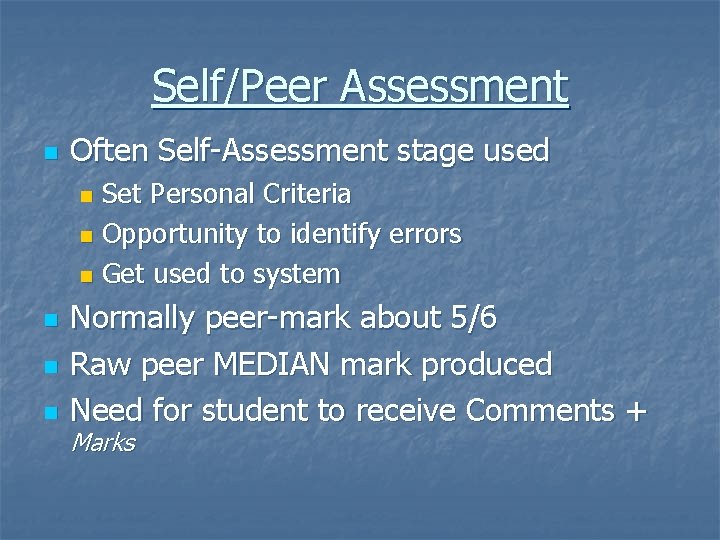

Self/Peer Assessment

- Often Self-Assessment stage used
  - Set Personal Criteria
  - Opportunity to identify errors
  - Get used to system
- Normally peer-mark about 5/6
- Raw peer MEDIAN mark produced
- Need for student to receive Comments + Marks

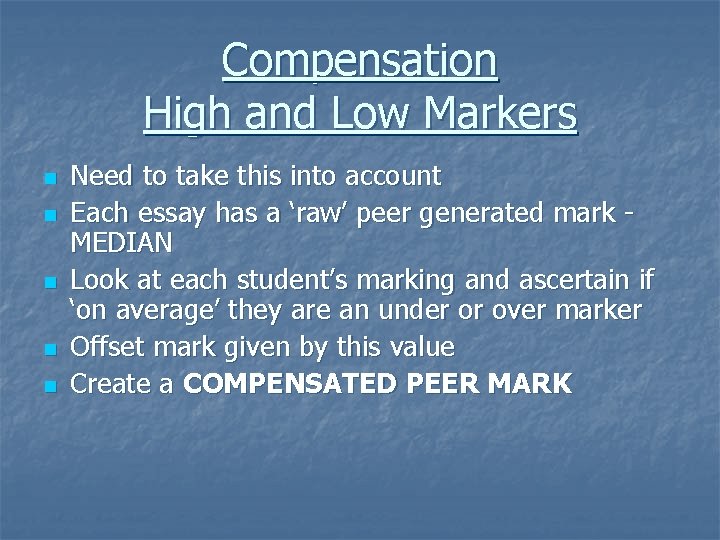

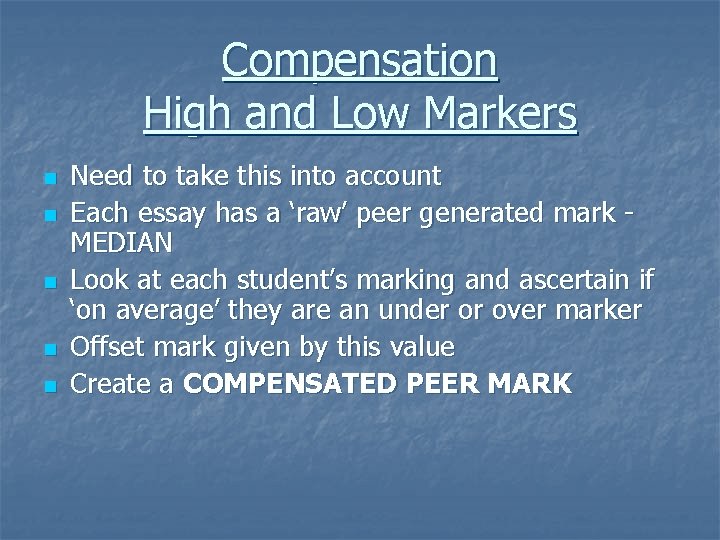

Compensation High and Low Markers

- Need to take this into account
- Each essay has a ‘raw’ peer generated mark (MEDIAN)
- Look at each student’s marking and ascertain if ‘on average’ they are an under or over marker
- Offset mark given by this value
- Create a COMPENSATED PEER MARK
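The compensation steps can be sketched in a few lines of Python. This is only an illustrative reading of the slide, not the CAP implementation: it assumes a marker's bias is the average gap between their marks and the raw peer medians, and that the compensated mark is the median of the bias-corrected marks. The marker names, essay codes and marks are made up.

```python
from statistics import mean, median

# Hypothetical data: marks[marker][essay] = mark given (percent).
marks = {
    "A": {"e1": 60, "e2": 70},
    "B": {"e1": 50, "e2": 60},
    "C": {"e1": 55, "e2": 62},
}

def marks_per_essay(marks, offsets=None):
    """Group marks by essay, optionally subtracting each marker's offset."""
    grouped = {}
    for marker, given in marks.items():
        offset = offsets[marker] if offsets else 0
        for essay, mark in given.items():
            grouped.setdefault(essay, []).append(mark - offset)
    return grouped

def raw_peer_marks(marks):
    """Raw peer mark per essay: the median of all marks it received."""
    return {e: median(v) for e, v in marks_per_essay(marks).items()}

def marker_biases(marks, raw):
    """Average over-marking (+) or under-marking (-) per marker (assumed definition)."""
    return {
        marker: mean([mark - raw[essay] for essay, mark in given.items()])
        for marker, given in marks.items()
    }

def compensated_peer_marks(marks, biases):
    """Median of the marks after removing each marker's average bias (assumed definition)."""
    return {e: median(v) for e, v in marks_per_essay(marks, biases).items()}

raw = raw_peer_marks(marks)
biases = marker_biases(marks, raw)
compensated = compensated_peer_marks(marks, biases)
```

With this toy data marker A is an over-marker and marker B an under-marker, so their marks are pulled towards each other before the median is taken again.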

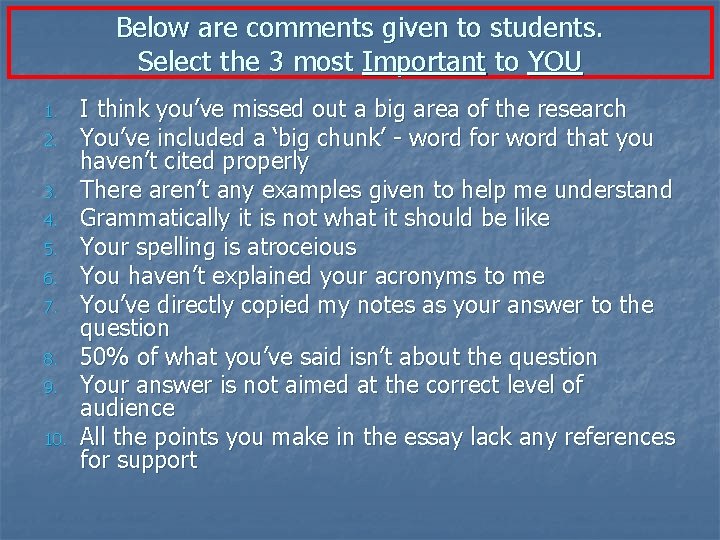

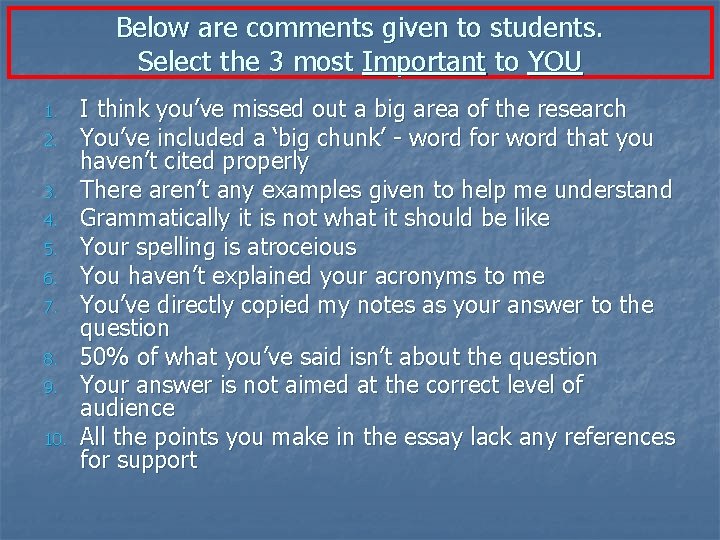

Below are comments given to students. Select the 3 most Important to YOU

1. I think you’ve missed out a big area of the research
2. You’ve included a ‘big chunk’ - word for word that you haven’t cited properly
3. There aren’t any examples given to help me understand
4. Grammatically it is not what it should be like
5. Your spelling is atroceious
6. You haven’t explained your acronyms to me
7. You’ve directly copied my notes as your answer to the question
8. 50% of what you’ve said isn’t about the question
9. Your answer is not aimed at the correct level of audience
10. All the points you make in the essay lack any references for support

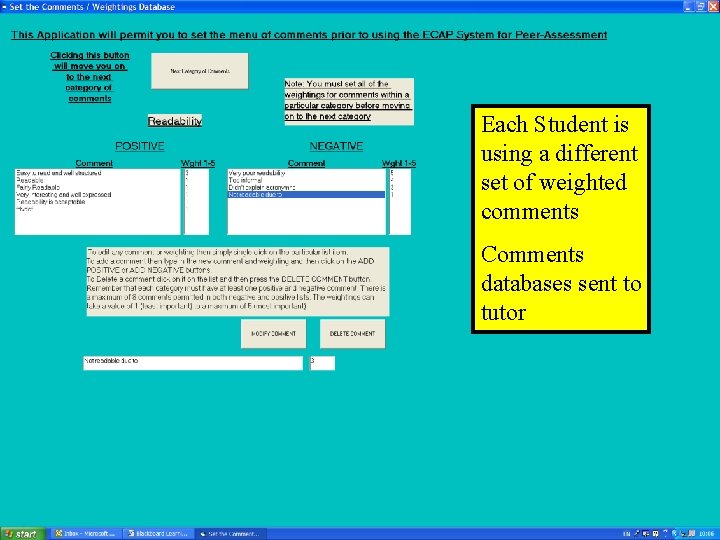

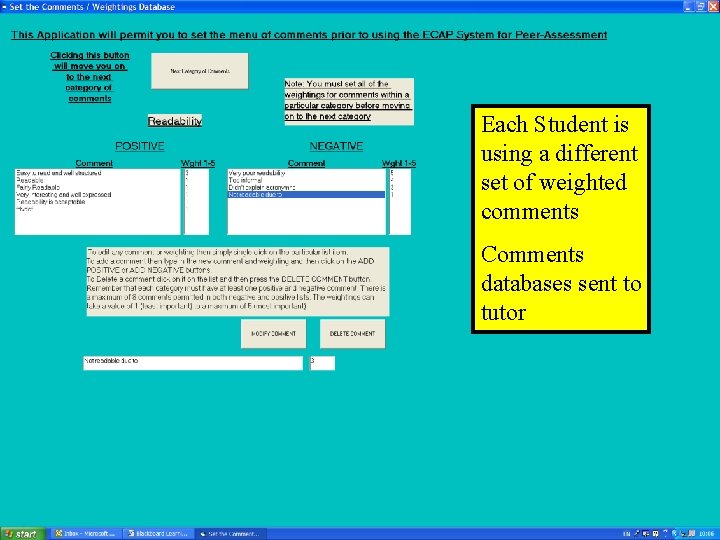

Each Student is using a different set of weighted comments

Comments databases sent to tutor

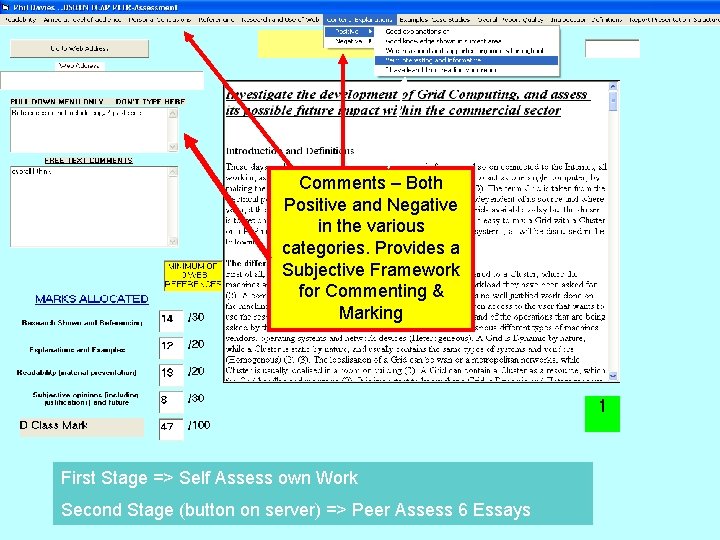

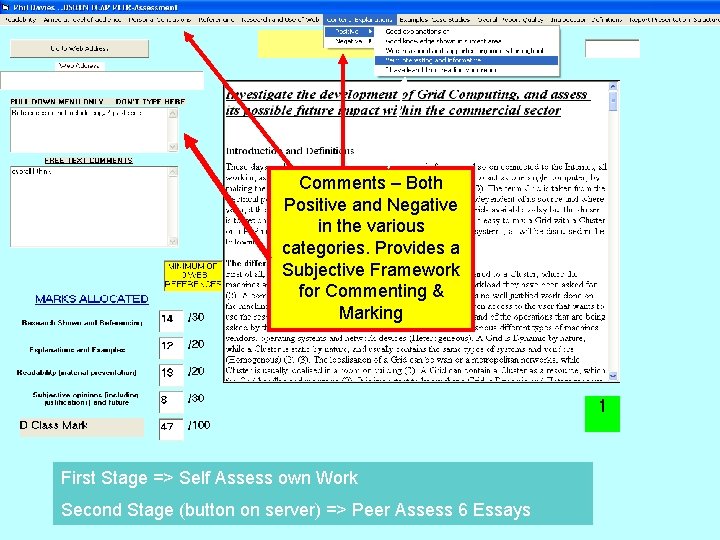

Comments: Both Positive and Negative in the various categories. Provides a Subjective Framework for Commenting & Marking

- First Stage => Self Assess own Work
- Second Stage (button on server) => Peer Assess 6 Essays

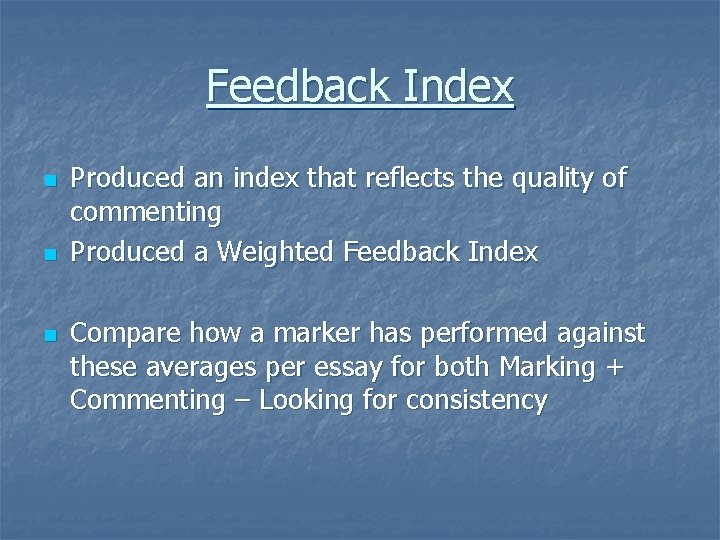

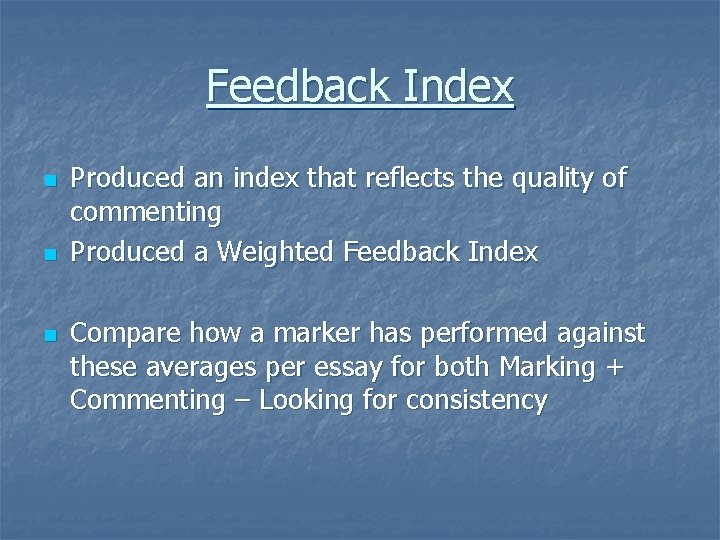

Feedback Index

- Produced an index that reflects the quality of commenting
- Produced a Weighted Feedback Index
- Compare how a marker has performed against these averages per essay for both Marking + Commenting - Looking for consistency

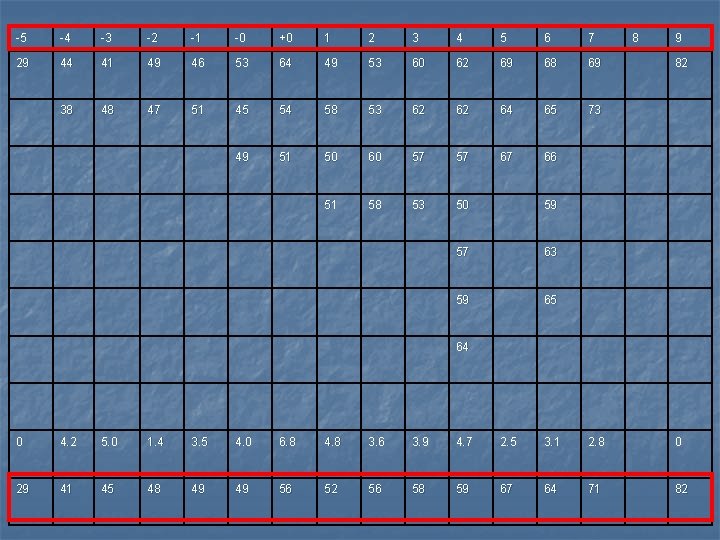

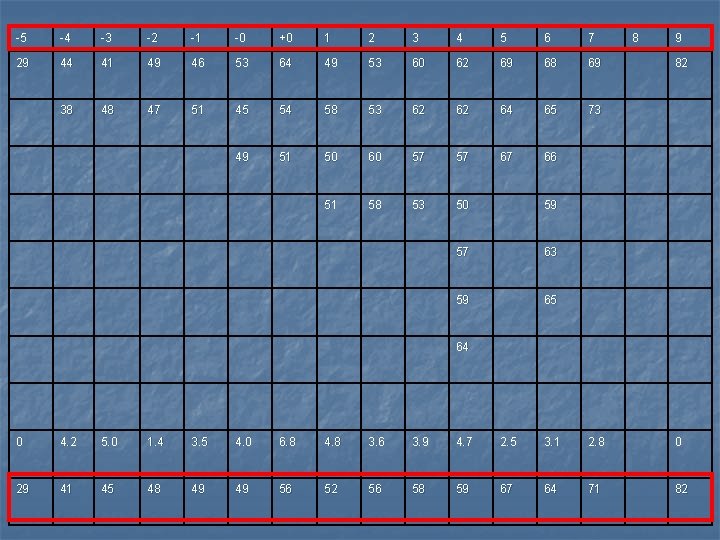

[Table with columns -5 to +9; cell values not recoverable from the extracted text]

The Review Element

- Originally in Communications within CAP marking process, it requires the owner of the file to ‘ask’ questions of the marker
- Emphasis ‘should’ be on the marker
- Marker does NOT see comments of other markers who’ve marked the essays that they have marked
- Marker does not really get to reflect on their own marking - get a reflective 2nd chance
- I’ve avoided this in past -> get it right first time

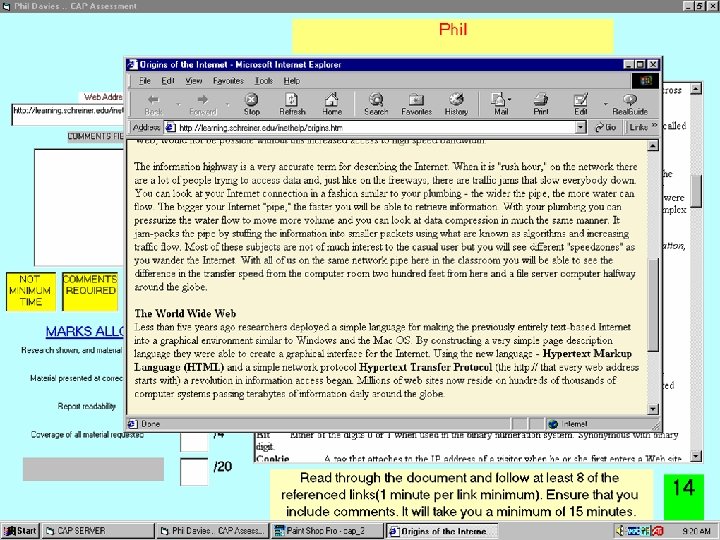

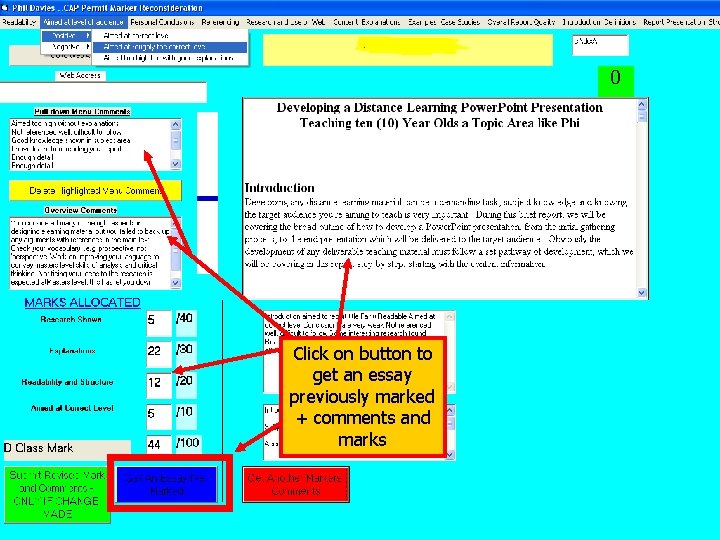

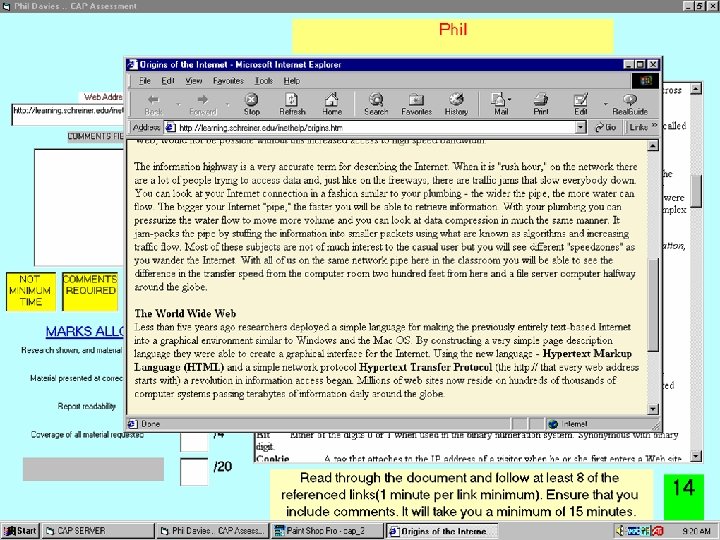

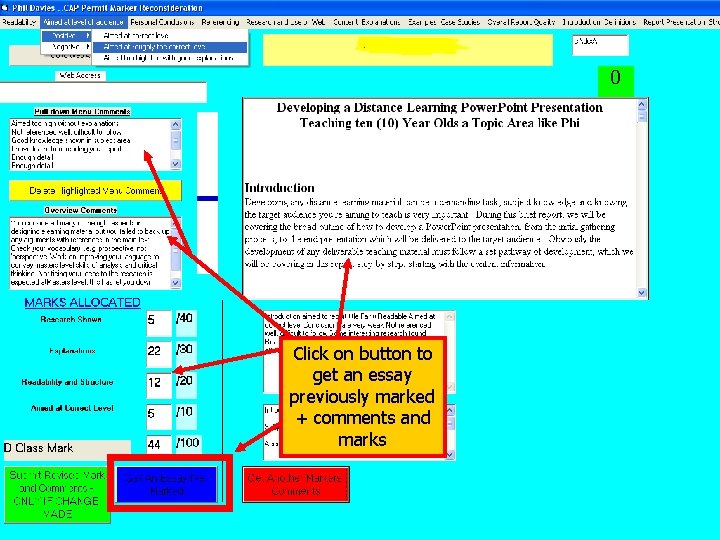

Click on button to get an essay previously marked + comments and marks

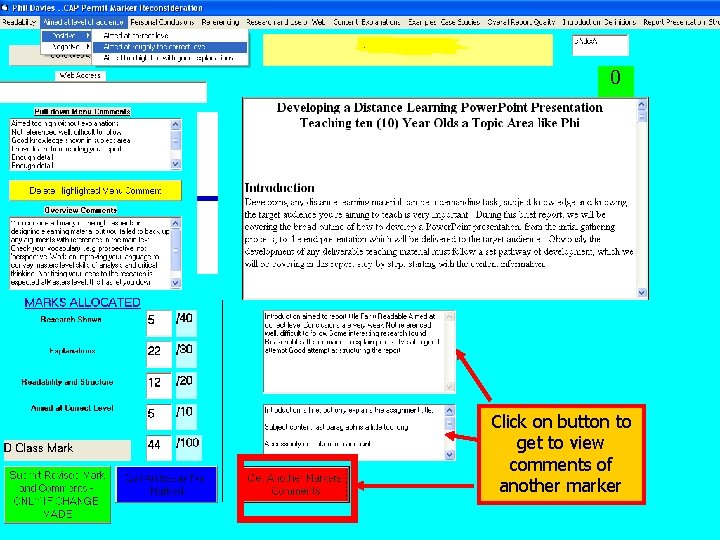

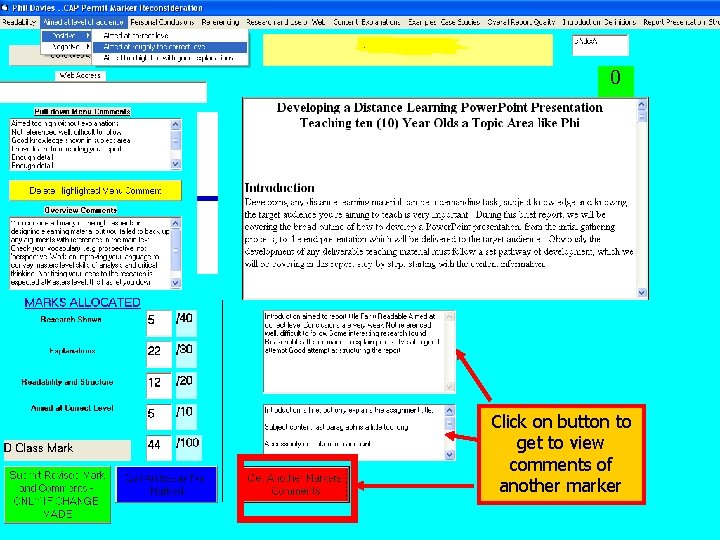

Click on button to get to view comments of another marker

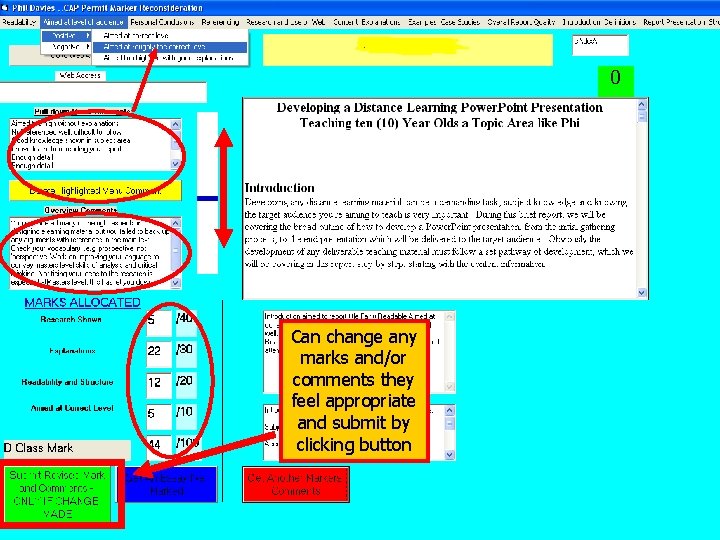

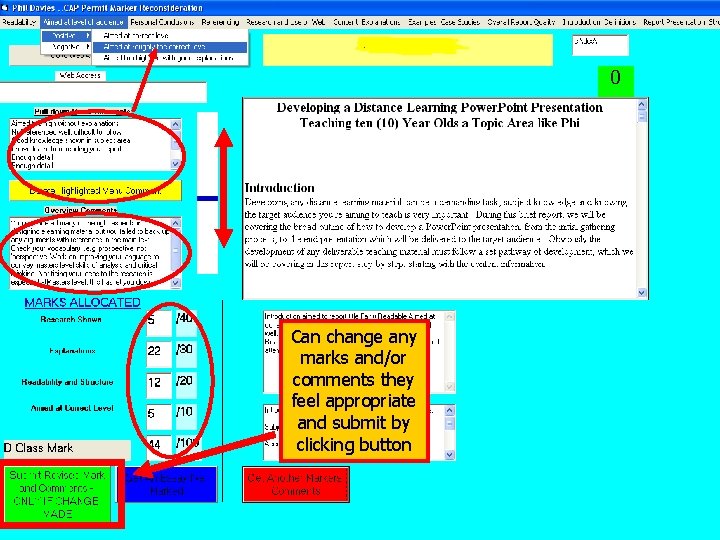

Can change any marks and/or comments they feel appropriate and submit by clicking button

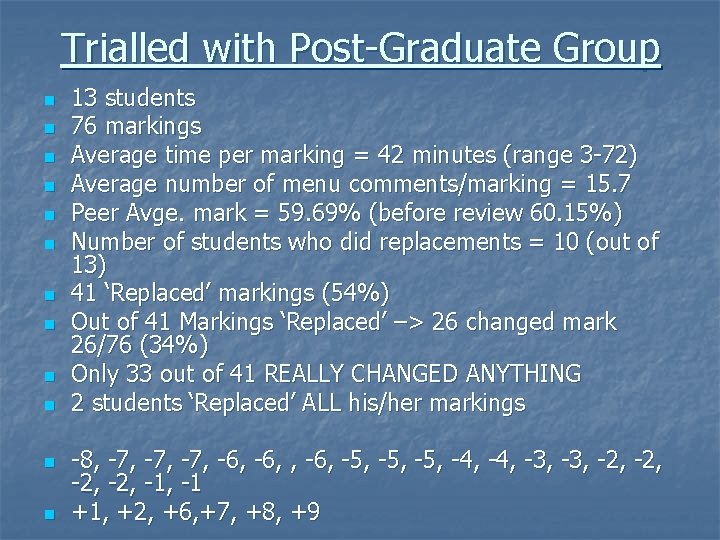

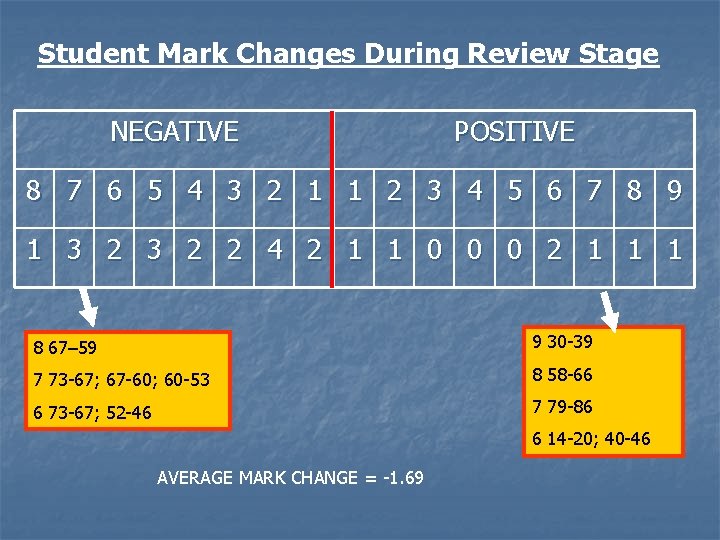

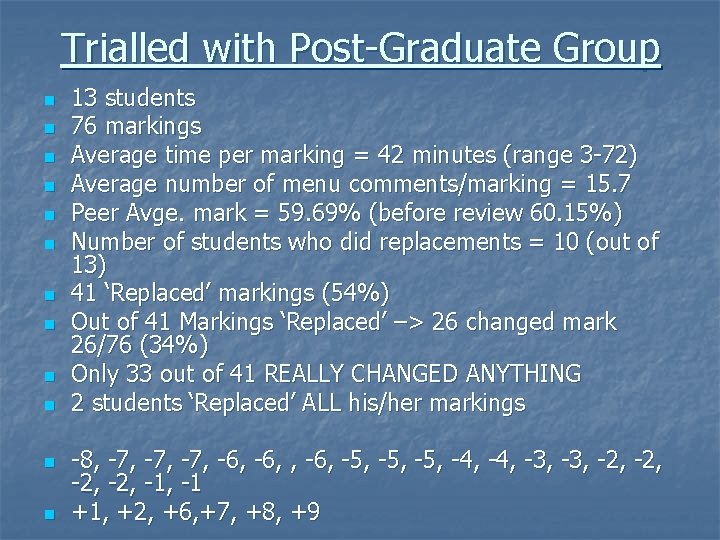

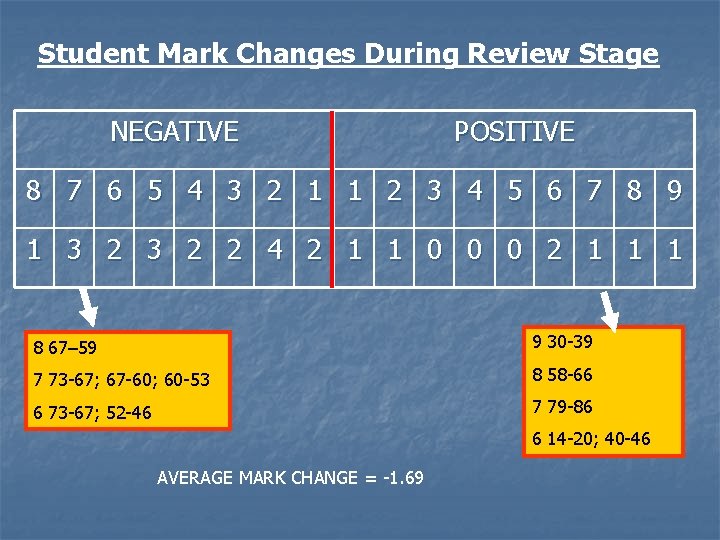

Trialled with Post-Graduate Group

- 13 students
- 76 markings
- Average time per marking = 42 minutes (range 3-72)
- Average number of menu comments/marking = 15.7
- Peer Avge. mark = 59.69% (before review 60.15%)
- Number of students who did replacements = 10 (out of 13)
- 41 ‘Replaced’ markings (54%)
- Out of 41 Markings ‘Replaced’ -> 26 changed mark, 26/76 (34%)
- Only 33 out of 41 REALLY CHANGED ANYTHING
- 2 students ‘Replaced’ ALL their markings

-8, -7, -7, -6, -5, -5, -4, -3, -2, -2, -1, +1, +2, +6, +7, +8, +9

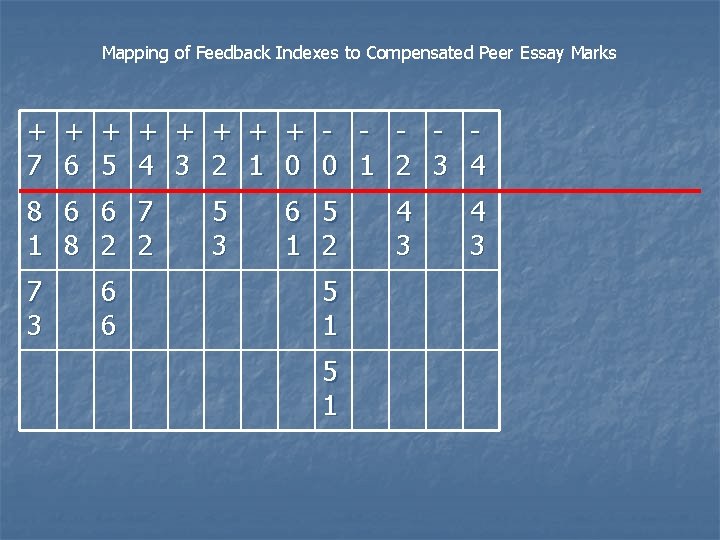

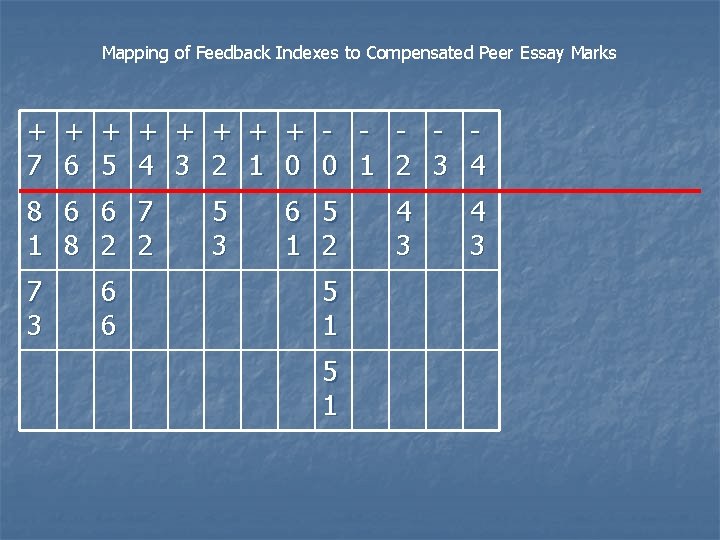

Mapping of Feedback Indexes to Compensated Peer Essay Marks

[Chart: axis and data values not recoverable from the extracted text]

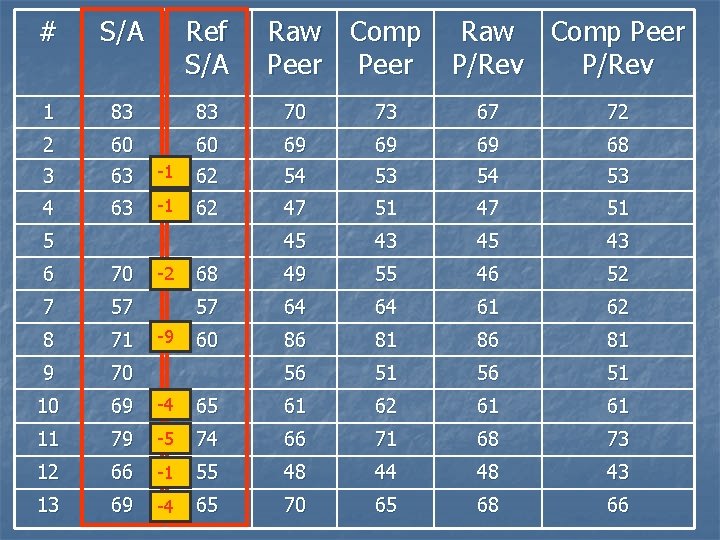

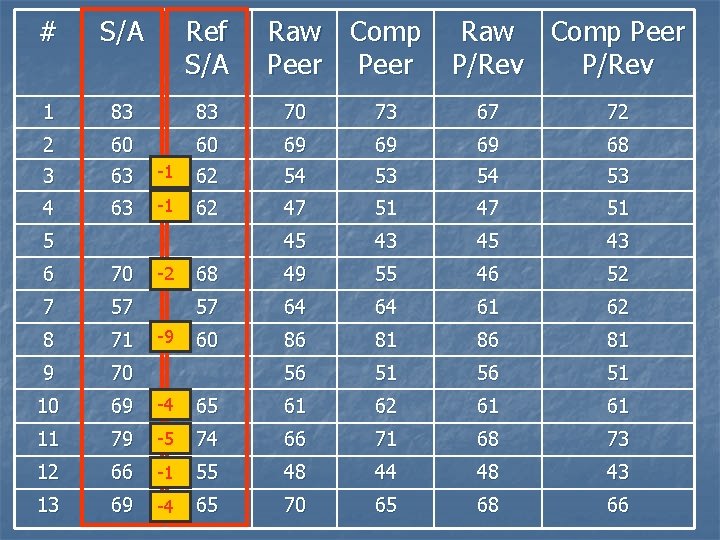

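| # | S/A | Change | Ref S/A | Raw Peer | Comp Peer | Raw P/Rev | Comp P/Rev |
|---|-----|--------|---------|----------|-----------|-----------|------------|
| 1 | 83 | | 83 | 70 | 73 | 67 | 72 |
| 2 | 60 | | 60 | 69 | 69 | 69 | 68 |
| 3 | 63 | -1 | 62 | 54 | 53 | | |
| 4 | 63 | -1 | 62 | 47 | 51 | | |
| 5 | | | | 45 | 43 | | |
| 6 | 70 | | 68 | 49 | 55 | 46 | 52 |
| 7 | 57 | | 57 | 64 | 64 | 61 | 62 |
| 8 | 71 | | 60 | 86 | 81 | | |
| 9 | 70 | | | 56 | 51 | | |
| 10 | 69 | -4 | 65 | 61 | 62 | 61 | 61 |
| 11 | 79 | -5 | 74 | 66 | 71 | 68 | 73 |
| 12 | 66 | -1 | 55 | 48 | 44 | 48 | 43 |
| 13 | 69 | -4 | 65 | 70 | 65 | 68 | 66 |

Two further values from the change column, -2 and -9, appear without a row.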

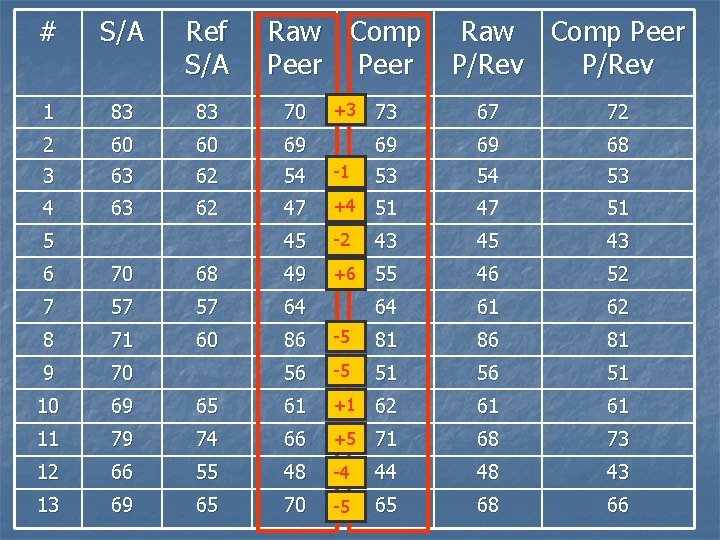

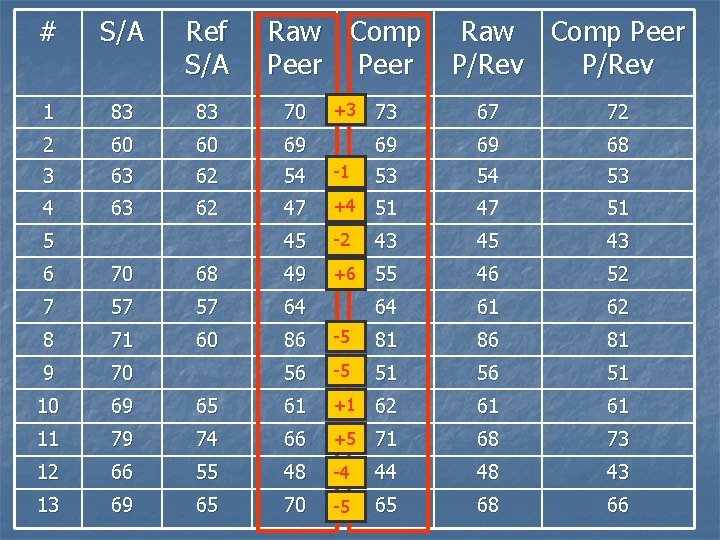

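| # | S/A | Ref S/A | Raw Peer | Offset | Comp Peer | Raw P/Rev | Comp P/Rev |
|---|-----|---------|----------|--------|-----------|-----------|------------|
| 1 | 83 | 83 | 70 | +3 | 73 | 67 | 72 |
| 2 | 60 | 60 | 69 | | 69 | 69 | 68 |
| 3 | 63 | 62 | 54 | -1 | 53 | 54 | 53 |
| 4 | 63 | 62 | 47 | +4 | 51 | 47 | 51 |
| 5 | | | 45 | -2 | 43 | 45 | 43 |
| 6 | 70 | 68 | 49 | +6 | 55 | 46 | 52 |
| 7 | 57 | 57 | 64 | | 64 | 61 | 62 |
| 8 | 71 | 60 | 86 | -5 | 81 | 86 | 81 |
| 9 | 70 | | 56 | -5 | 51 | 56 | 51 |
| 10 | 69 | 65 | 61 | +1 | 62 | 61 | 61 |
| 11 | 79 | 74 | 66 | +5 | 71 | 68 | 73 |
| 12 | 66 | 55 | 48 | -4 | 44 | 48 | 43 |
| 13 | 69 | 65 | 70 | -5 | 65 | 68 | 66 |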

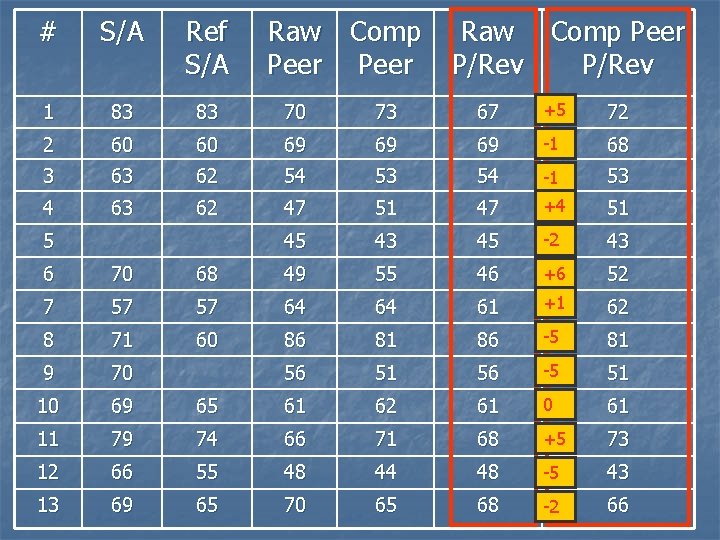

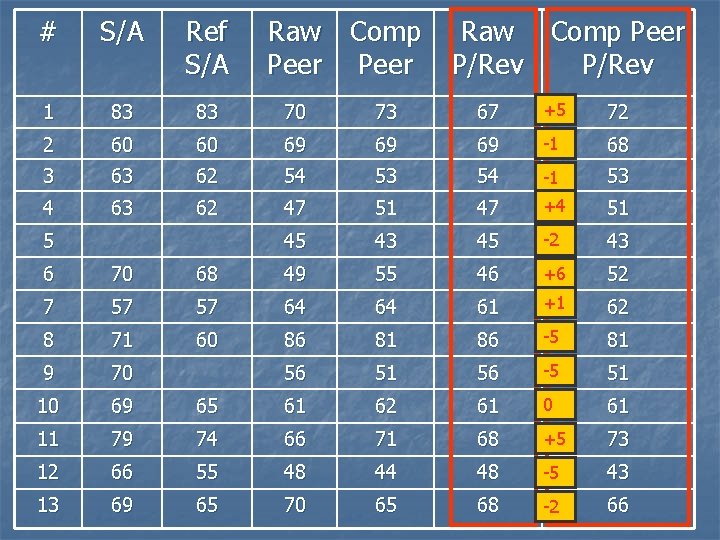

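| # | S/A | Ref S/A | Raw Peer | Comp Peer | Raw P/Rev | Offset | Comp P/Rev |
|---|-----|---------|----------|-----------|-----------|--------|------------|
| 1 | 83 | 83 | 70 | 73 | 67 | +5 | 72 |
| 2 | 60 | 60 | 69 | 69 | 69 | -1 | 68 |
| 3 | 63 | 62 | 54 | 53 | 54 | -1 | 53 |
| 4 | 63 | 62 | 47 | 51 | 47 | +4 | 51 |
| 5 | | | 45 | 43 | 45 | -2 | 43 |
| 6 | 70 | 68 | 49 | 55 | 46 | +6 | 52 |
| 7 | 57 | 57 | 64 | 64 | 61 | +1 | 62 |
| 8 | 71 | 60 | 86 | 81 | 86 | -5 | 81 |
| 9 | 70 | | 56 | 51 | 56 | -5 | 51 |
| 10 | 69 | 65 | 61 | 62 | 61 | 0 | 61 |
| 11 | 79 | 74 | 66 | 71 | 68 | +5 | 73 |
| 12 | 66 | 55 | 48 | 44 | 48 | -5 | 43 |
| 13 | 69 | 65 | 70 | 65 | 68 | -2 | 66 |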

Student Mark Changes During Review Stage

[Histogram of mark changes, sizes 8 to 1 NEGATIVE and 1 to 9 POSITIVE; bar counts not recoverable from the extracted text]

- NEGATIVE examples: 8: 67 -> 59; 7: 73 -> 67, 67 -> 60, 60 -> 53; 6: 73 -> 67, 52 -> 46
- POSITIVE examples: 9: 30 -> 39; 8: 58 -> 66; 7: 79 -> 86; 6: 14 -> 20, 40 -> 46
- AVERAGE MARK CHANGE = -1.69

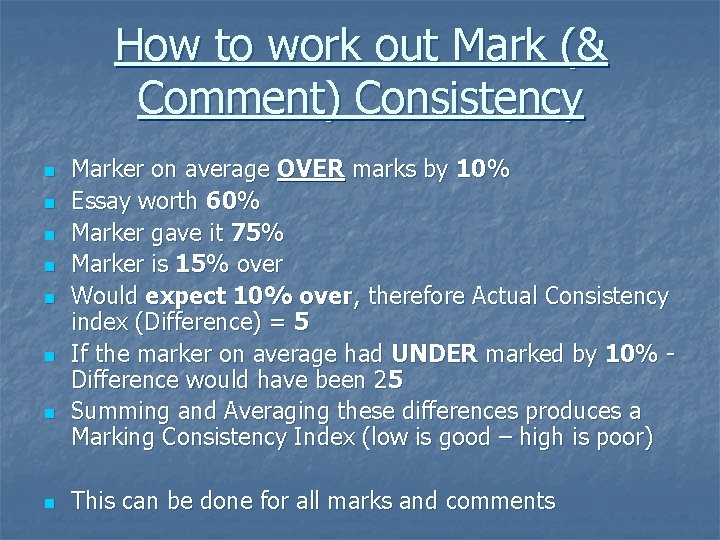

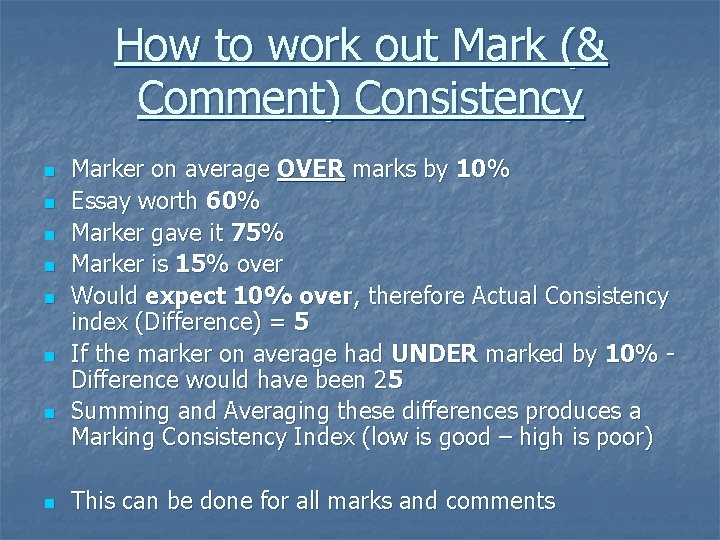

How to work out Mark (& Comment) Consistency

- Marker on average OVER marks by 10%
- Essay worth 60%
- Marker gave it 75%
- Marker is 15% over
- Would expect 10% over, therefore Actual Consistency index (Difference) = 5
- If the marker on average had UNDER marked by 10%, Difference would have been 25
- Summing and Averaging these differences produces a Marking Consistency Index (low is good, high is poor)
- This can be done for all marks and comments
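The worked example on this slide can be reproduced with a short Python sketch. It assumes the difference is taken as an absolute value (the slide only says low is good) and that the essay's worth is the reference mark to compare against; the function names and the three-essay data set are hypothetical.

```python
def consistency_difference(mark_given, essay_worth, average_bias):
    """How far one marking strays from what the marker's usual bias predicts."""
    actual_bias = mark_given - essay_worth
    return abs(actual_bias - average_bias)

def marking_consistency_index(marks_given, essay_worths):
    """Average of the per-essay differences for one marker (low is good)."""
    biases = [g - w for g, w in zip(marks_given, essay_worths)]
    average_bias = sum(biases) / len(biases)
    differences = [abs(b - average_bias) for b in biases]
    return sum(differences) / len(differences)

# Slide example: essay worth 60%, marker gave 75%.
over_marker = consistency_difference(75, 60, average_bias=10)    # expected 10% over
under_marker = consistency_difference(75, 60, average_bias=-10)  # expected 10% under

# Hypothetical marker who marked three essays.
index = marking_consistency_index([75, 65, 50], [60, 60, 40])
```

The same two functions would apply to feedback indexes in place of marks, which is how a commenting consistency figure could be produced.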

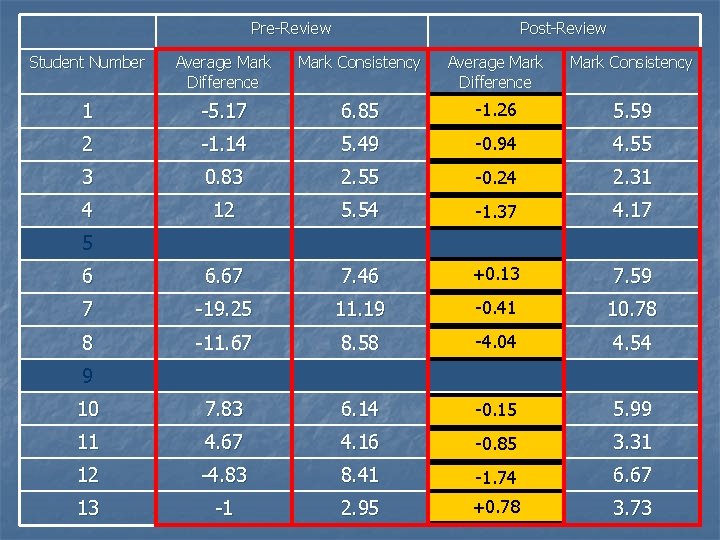

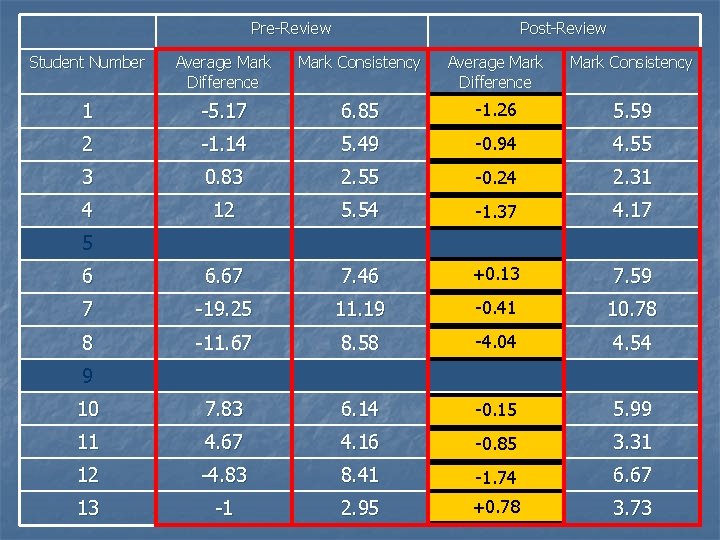

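| Student Number | Pre-Review Average Mark Difference | Pre-Review Mark Consistency | Change in Consistency | Post-Review Average Mark Difference | Post-Review Mark Consistency |
|---|---|---|---|---|---|
| 1 | -5.17 | 6.85 | -1.26 | -6.0 | 5.59 |
| 2 | -1.14 | 5.49 | -0.94 | 0.14 | 4.55 |
| 3 | 0.83 | 2.55 | -0.24 | 0.5 | 2.31 |
| 4 | 12 | 5.54 | -1.37 | 11.14 | 4.17 |
| 5 | | | | | |
| 6 | 6.67 | 7.46 | +0.13 | 7 | 7.59 |
| 7 | -19.25 | 11.19 | -0.41 | -18.25 | 10.78 |
| 8 | -11.67 | 8.58 | -4.04 | -9.83 | 4.54 |
| 9 | | | | | |
| 10 | 7.83 | 6.14 | -0.15 | 8 | 5.99 |
| 11 | 4.67 | 4.16 | -0.85 | 2.17 | 3.31 |
| 12 | -4.83 | 8.41 | -1.74 | -3.67 | 6.67 |
| 13 | -1 | 2.95 | +0.78 | 0.83 | 3.73 |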

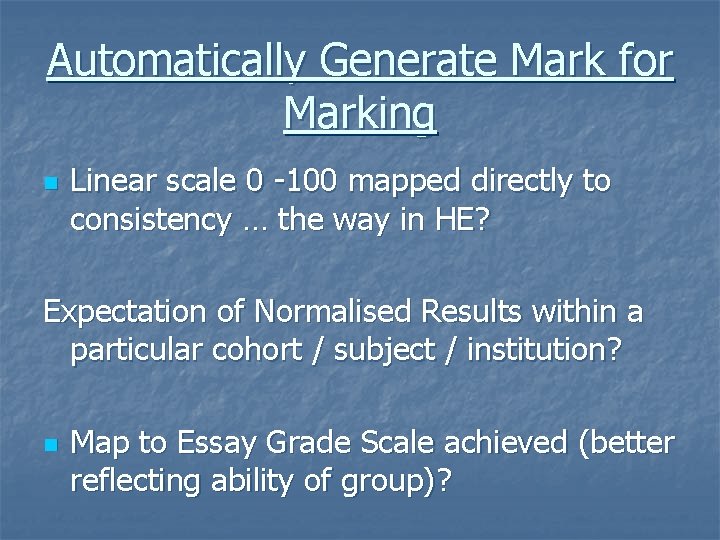

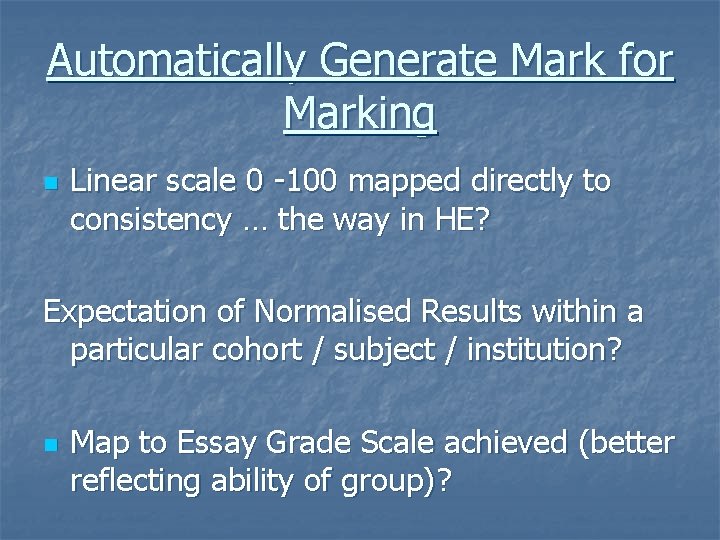

Automatically Generate Mark for Marking

- Linear scale 0-100 mapped directly to consistency … the way in HE?
- Expectation of Normalised Results within a particular cohort / subject / institution?
- Map to Essay Grade Scale achieved (better reflecting ability of group)?

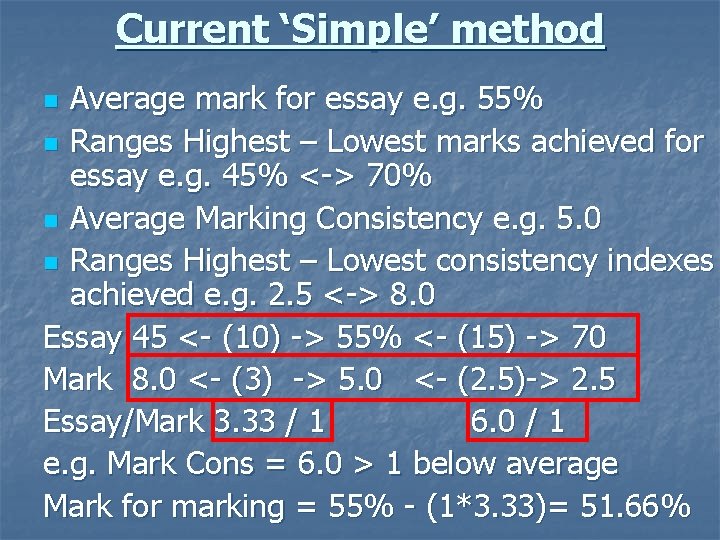

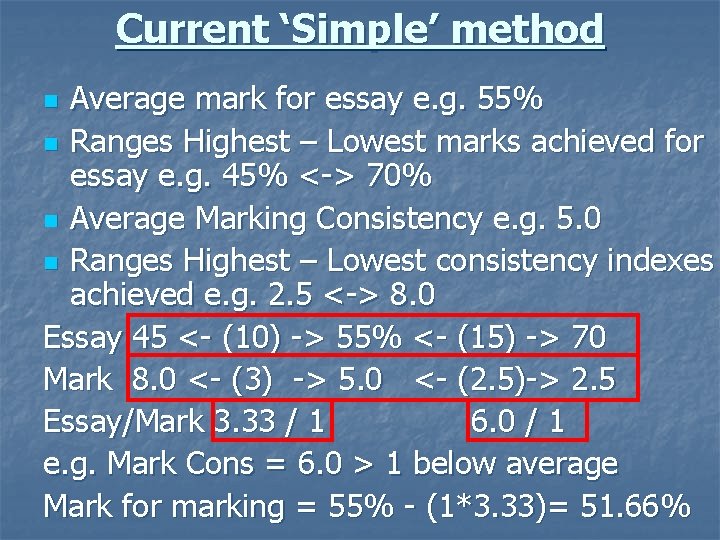

Current ‘Simple’ method

- Average mark for essay e.g. 55%
- Ranges: Highest - Lowest marks achieved for essay e.g. 45% <-> 70%
- Average Marking Consistency e.g. 5.0
- Ranges: Highest - Lowest consistency indexes achieved e.g. 2.5 <-> 8.0

| | Lowest | | Average | | Highest |
|---|---|---|---|---|---|
| Essay | 45 | <- (10) -> | 55% | <- (15) -> | 70 |
| Mark | 8.0 | <- (3) -> | 5.0 | <- (2.5) -> | 2.5 |
| Essay/Mark | | 3.33 / 1 | | 6.0 / 1 | |

e.g. Mark Cons = 6.0, which is 1 below average, so Mark for marking = 55% - (1 * 3.33) = 51.67%
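The ‘simple’ method reads as a two-segment linear mapping from consistency index to essay mark scale, and can be sketched in Python as below. The function and parameter names are hypothetical, and the sketch assumes the worst, average and best consistency values are all different (otherwise a segment has zero width).

```python
def mark_for_marking(consistency, avg_cons, best_cons, worst_cons,
                     avg_mark, high_mark, low_mark):
    """Map a consistency index onto the essay mark range (low index = better)."""
    if consistency >= avg_cons:
        # Worse than average: scale by the lower half of the essay range.
        marks_per_unit = (avg_mark - low_mark) / (worst_cons - avg_cons)
        return avg_mark - (consistency - avg_cons) * marks_per_unit
    # Better than average: scale by the upper half of the essay range.
    marks_per_unit = (high_mark - avg_mark) / (avg_cons - best_cons)
    return avg_mark + (avg_cons - consistency) * marks_per_unit

# Figures from the slide: essays 45 <-> 55 <-> 70, consistency 8.0 <-> 5.0 <-> 2.5.
slide = dict(avg_cons=5.0, best_cons=2.5, worst_cons=8.0,
             avg_mark=55.0, high_mark=70.0, low_mark=45.0)

example = mark_for_marking(6.0, **slide)   # 1 below average consistency
best = mark_for_marking(2.5, **slide)      # most consistent marker
worst = mark_for_marking(8.0, **slide)     # least consistent marker
```

Using separate slopes on each side of the average keeps the most and least consistent markers pinned to the highest and lowest essay marks, which matches the 3.33 / 1 and 6.0 / 1 ratios on the slide.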

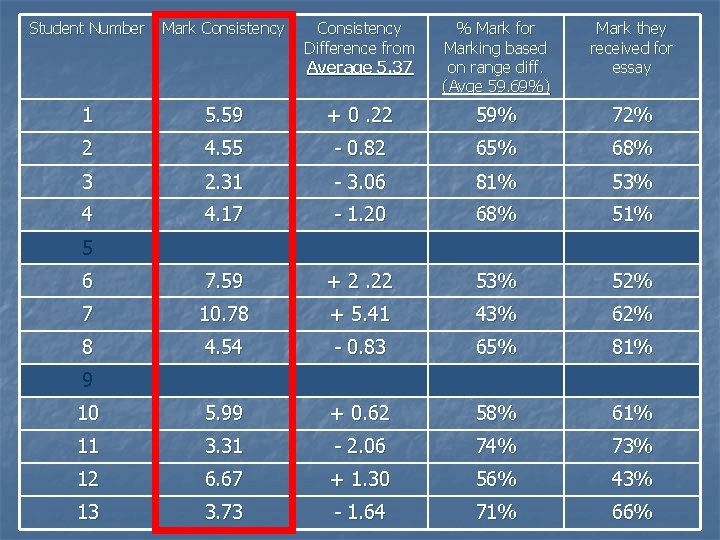

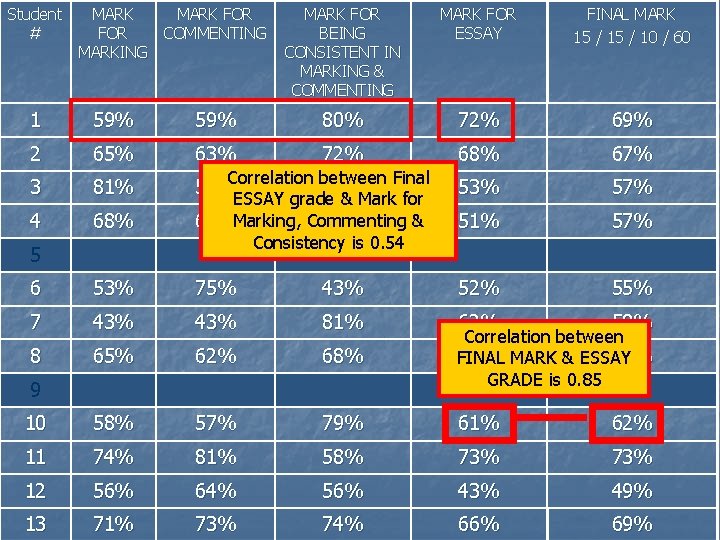

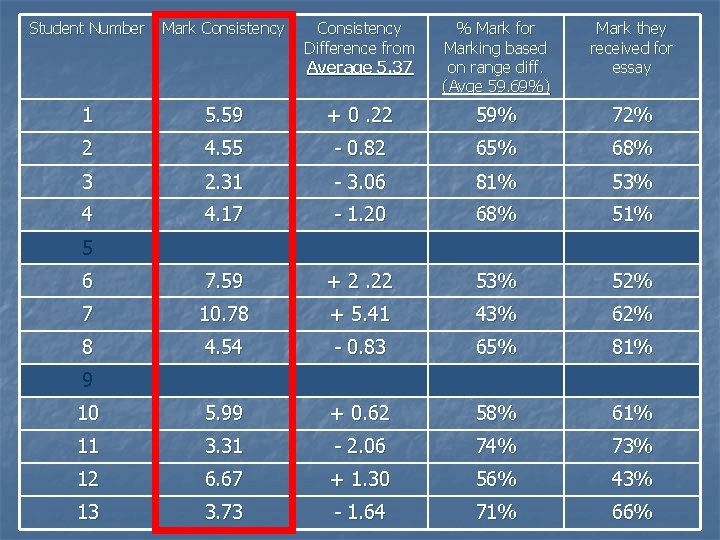

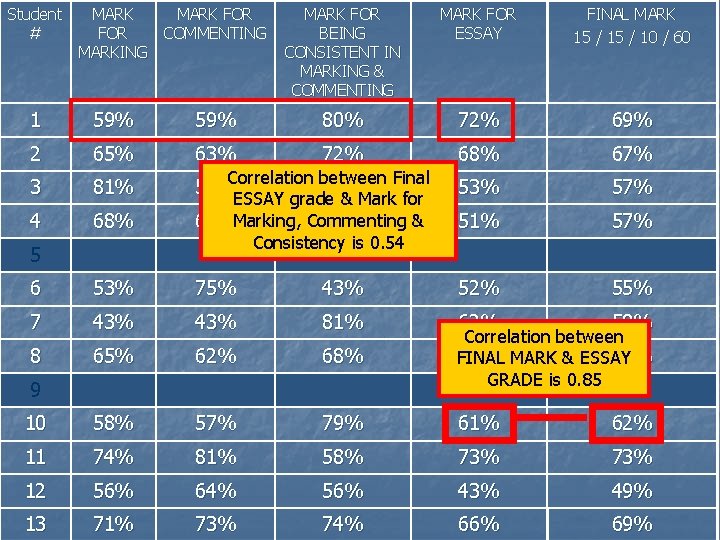

| Student Number | Mark Consistency | Difference from Average (5.37) | % Mark for Marking based on range diff. (Avge 59.69%) | Mark they received for essay |
|---|---|---|---|---|
| 1 | 5.59 | +0.22 | 59% | 72% |
| 2 | 4.55 | -0.82 | 65% | 68% |
| 3 | 2.31 | -3.06 | 81% | 53% |
| 4 | 4.17 | -1.20 | 68% | 51% |
| 5 | | | | |
| 6 | 7.59 | +2.22 | 53% | 52% |
| 7 | 10.78 | +5.41 | 43% | 62% |
| 8 | 4.54 | -0.83 | 65% | 81% |
| 9 | | | | |
| 10 | 5.99 | +0.62 | 58% | 61% |
| 11 | 3.31 | -2.06 | 74% | 73% |
| 12 | 6.67 | +1.30 | 56% | 43% |
| 13 | 3.73 | -1.64 | 71% | 66% |

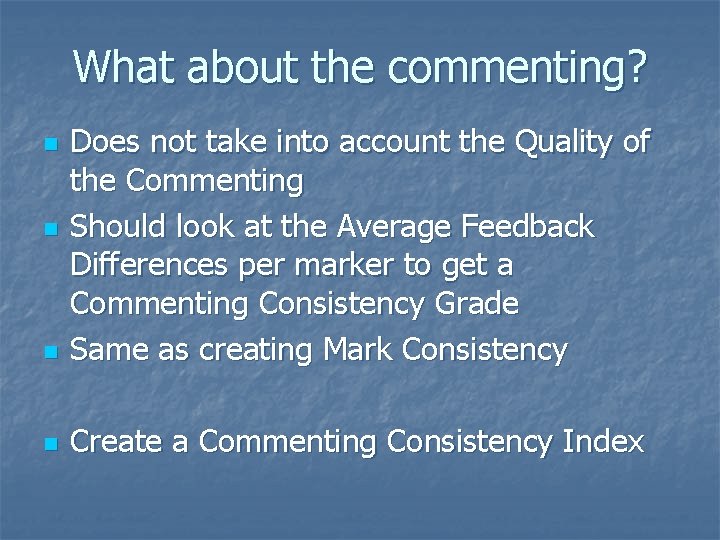

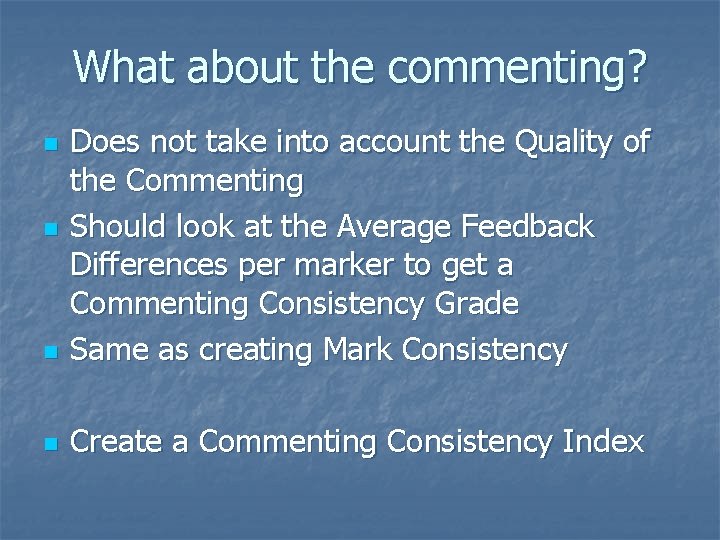

What about the commenting?

- Does not take into account the Quality of the Commenting
- Should look at the Average Feedback Differences per marker to get a Commenting Consistency Grade
- Same as creating Mark Consistency
- Create a Commenting Consistency Index

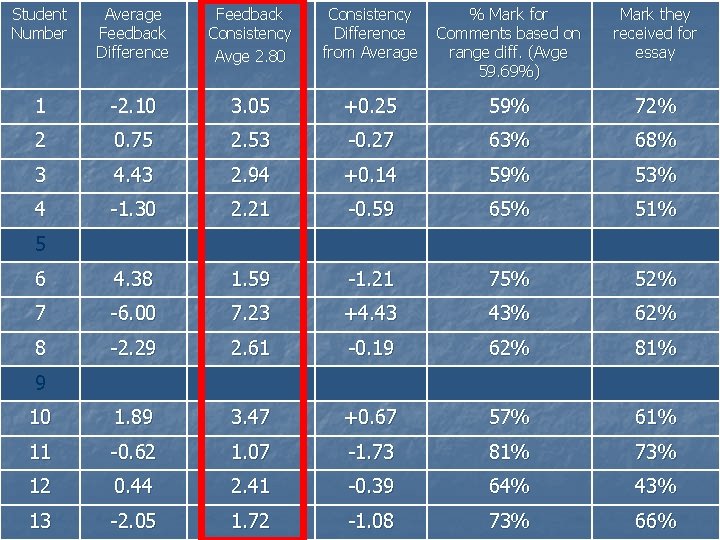

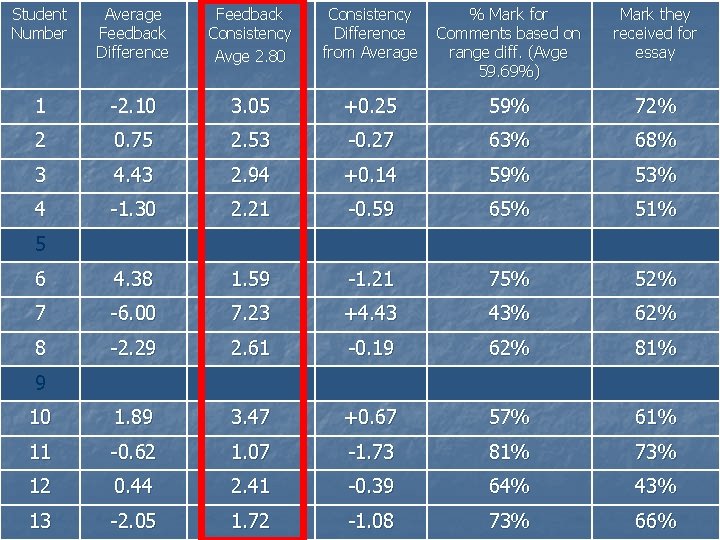

| Student Number | Average Feedback Difference | Feedback Consistency (Avge 2.80) | Consistency Difference from Average | % Mark for Comments based on range diff. (Avge 59.69%) | Mark they received for essay |
|---|---|---|---|---|---|
| 1 | -2.10 | 3.05 | +0.25 | 59% | 72% |
| 2 | 0.75 | 2.53 | -0.27 | 63% | 68% |
| 3 | 4.43 | 2.94 | +0.14 | 59% | 53% |
| 4 | -1.30 | 2.21 | -0.59 | 65% | 51% |
| 5 | | | | | |
| 6 | 4.38 | 1.59 | -1.21 | 75% | 52% |
| 7 | -6.00 | 7.23 | +4.43 | 43% | 62% |
| 8 | -2.29 | 2.61 | -0.19 | 62% | 81% |
| 9 | | | | | |
| 10 | 1.89 | 3.47 | +0.67 | 57% | 61% |
| 11 | -0.62 | 1.07 | -1.73 | 81% | 73% |
| 12 | 0.44 | 2.41 | -0.39 | 64% | 43% |
| 13 | -2.05 | 1.72 | -1.08 | 73% | 66% |

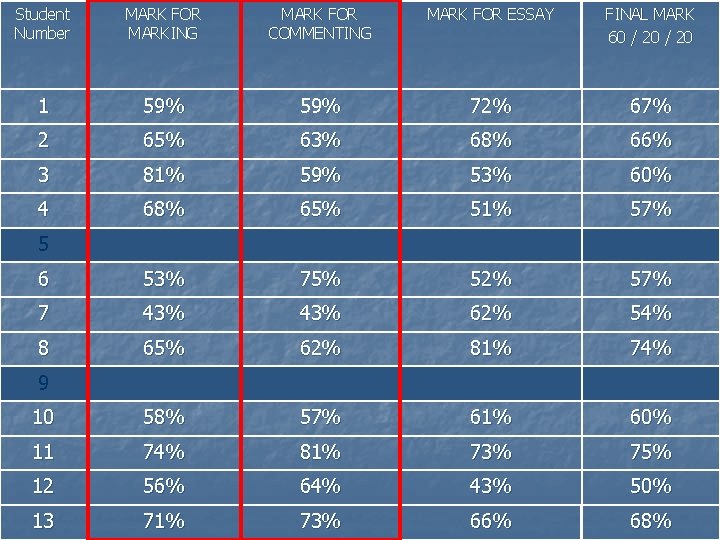

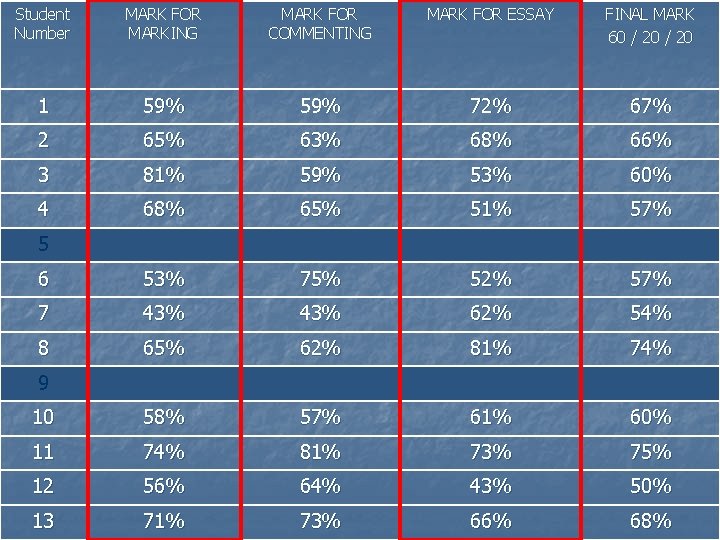

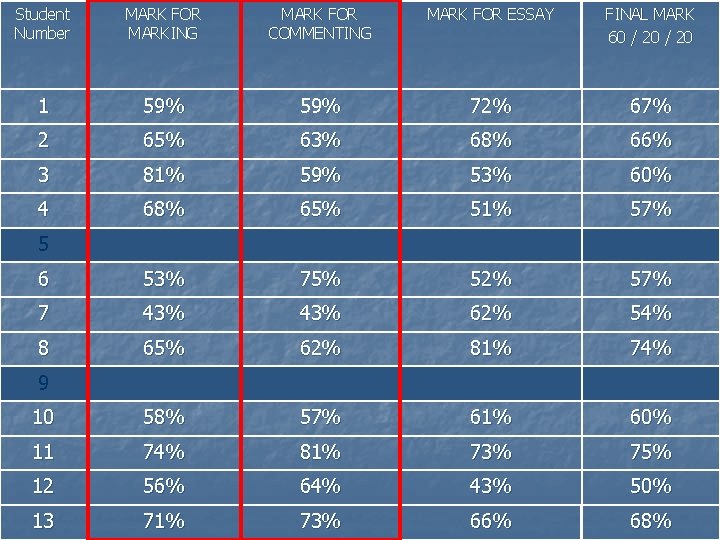

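| Student Number | MARK FOR MARKING | MARK FOR COMMENTING | MARK FOR ESSAY | FINAL MARK 60 / 20 |
|---|---|---|---|---|
| 1 | 59% | 59% | 72% | 67% |
| 2 | 65% | 63% | 68% | 66% |
| 3 | 81% | 59% | 53% | 60% |
| 4 | 68% | 65% | 51% | 57% |
| 5 | | | | |
| 6 | 53% | 75% | 52% | 57% |
| 7 | 43% | 43% | 62% | 54% |
| 8 | 65% | 62% | 81% | 74% |
| 9 | | | | |
| 10 | 58% | 57% | 61% | 60% |
| 11 | 74% | 81% | 73% | 75% |
| 12 | 56% | 64% | 43% | 50% |
| 13 | 71% | 73% | 66% | 68% |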

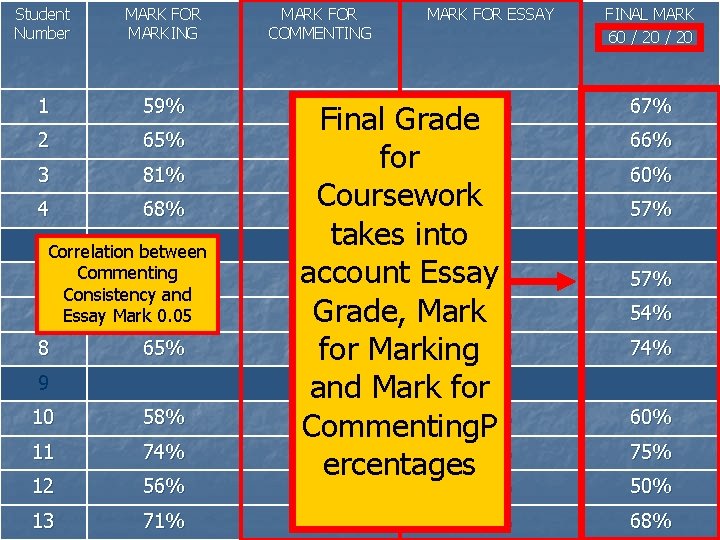

Callouts on the final-mark table:

- Final Grade for Coursework takes into account Essay Grade, Mark for Marking and Mark for Commenting.
- Correlation between Commenting Consistency and Essay Mark: 0.05

Split in Marks 60 / 20

- Is it reasonable?
- Higher Order skills of Marking: worth more?
- If we’re judging marking process on consistency, then should be rewarded for showing consistency within marking AND commenting
- Revised split 60 / 15 / 10
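A weighted final mark under either split can be sketched as follows. The slides do not spell out every weight, so the values here are an assumption inferred from the figures in the final-mark tables: 60 essay / 20 marking / 20 commenting for the original split, and 60 essay / 15 marking / 15 commenting / 10 consistency for the revised one. The function name is hypothetical; the marks are those shown for student 2.

```python
def final_mark(marks, weights):
    """Weighted average of component marks; weights are normalised by their sum."""
    total_weight = sum(weights.values())
    return sum(marks[name] * weight for name, weight in weights.items()) / total_weight

# Assumed weights, inferred from the tables rather than stated on the slide.
ORIGINAL_SPLIT = {"essay": 60, "marking": 20, "commenting": 20}
REVISED_SPLIT = {"essay": 60, "marking": 15, "commenting": 15, "consistency": 10}

# Component marks for student 2.
student_2 = {"essay": 68, "marking": 65, "commenting": 63, "consistency": 72}

original = final_mark(student_2, ORIGINAL_SPLIT)
revised = final_mark(student_2, REVISED_SPLIT)
```

Rounded to whole percentages these give 66% and 67%, which agree with the final marks listed for student 2 under each split.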

| Student # | MARK FOR MARKING | MARK FOR COMMENTING | MARK FOR BEING CONSISTENT IN MARKING & COMMENTING | MARK FOR ESSAY | FINAL MARK 15 / 10 / 60 |
|---|---|---|---|---|---|
| 1 | 59% | 59% | 80% | 72% | 69% |
| 2 | 65% | 63% | 72% | 68% | 67% |
| 3 | 81% | 59% | 43% | 53% | 57% |
| 4 | 68% | 65% | 68% | 51% | 57% |
| 5 | | | | | |
| 6 | 53% | 75% | 43% | 52% | 55% |
| 7 | 43% | 43% | 81% | 62% | 58% |
| 8 | 65% | 62% | 68% | 81% | 75% |
| 9 | | | | | |
| 10 | 58% | 57% | 79% | 61% | 62% |
| 11 | 74% | 81% | 58% | 73% | 73% |
| 12 | 56% | 64% | 56% | 43% | 49% |
| 13 | 71% | 73% | 74% | 66% | 69% |

- Correlation between Final ESSAY grade & Mark for Marking, Commenting & Consistency is 0.54
- Correlation between FINAL MARK & ESSAY GRADE is 0.85

IS IT WORTH THE HASSLE??

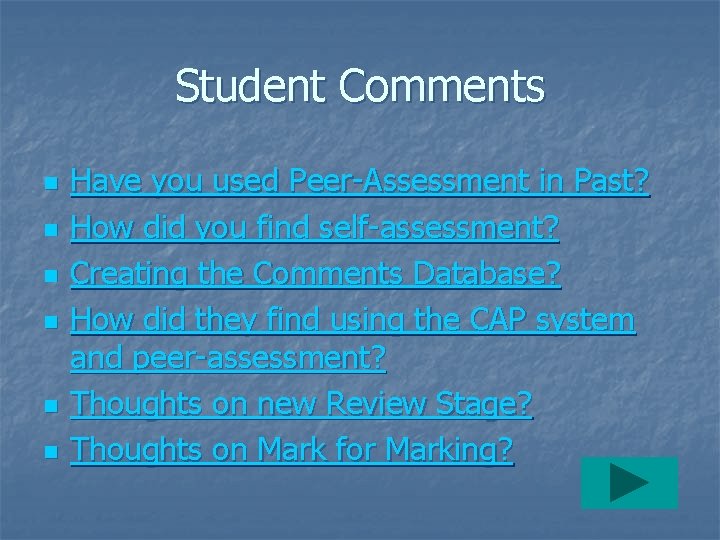

Student Comments

- Have you used Peer-Assessment in Past?
- How did you find self-assessment?
- Creating the Comments Database?
- How did they find using the CAP system and peer-assessment?
- Thoughts on new Review Stage?
- Thoughts on Mark for Marking?

Two Main Points to Consider

- How do we assess the time required to perform the marking task?
- What split of the marks between creation & marking?

Definition Student or Lecturer Comments

Contact Information

- Email: pdavies@glam.ac.uk
- Phone: 01443 482247
- Dr Phil Davies, J317, Department of Computing & Mathematical Sciences, Faculty of Advanced Technology, University of Glamorgan

THE END

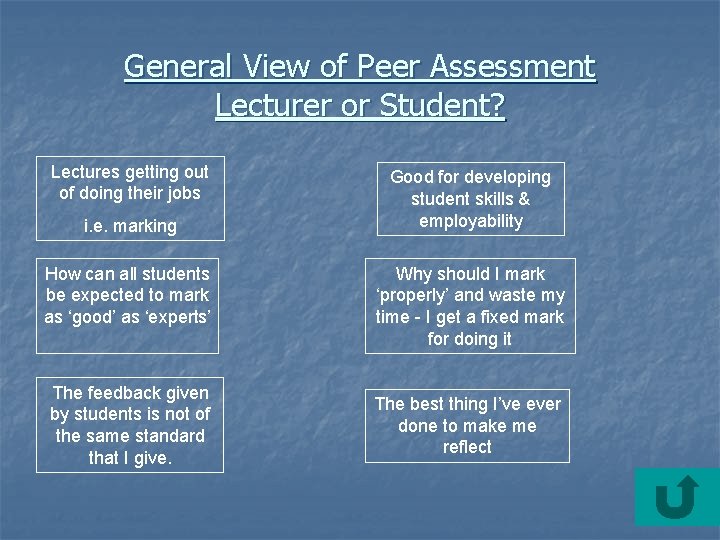

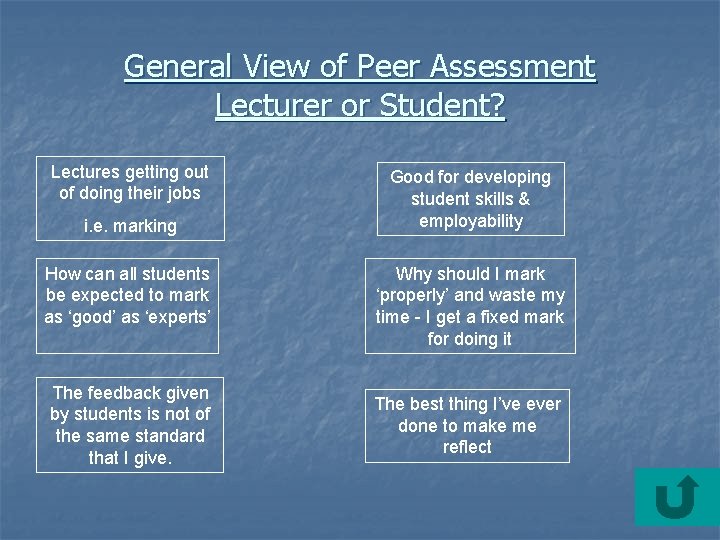

General View of Peer Assessment

Lecturer or Student?

- Lecturers getting out of doing their jobs i.e. marking
- How can all students be expected to mark as ‘good’ as ‘experts’?
- The feedback given by students is not of the same standard that I give.
- Good for developing student skills & employability
- Why should I mark ‘properly’ and waste my time - I get a fixed mark for doing it
- The best thing I’ve ever done to make me reflect

Defining Peer-Assessment

- In describing the teacher... A tall b******, so he was. A tall thin, mean b******, with a baldy head like a light bulb. He’d make us mark each other’s work, then for every wrong mark we got, we’d get a thump. That way – he paused – ‘we were implicated in each other’s pain’

McCarthy’s Bar (Pete McCarthy, 2000, page 68)

Student Comments

Used Peer-Assessment in past?

- None to any real degree
- A couple for staff development type activities

Student Comments

How did you feel about performing Self-assessment?

- Very Difficult.
- Helped to promote critical thinking ready for peer-assessment stage.
- Made me think about how I was going to assess others

Student Comments

Creating Comments Database?

- Very difficult - not knowing what comments they’d need
- Weighting really helped me create criteria ready for marking
- Could have helped to do dummy marking

Student Comments

How did they find using the CAP system and peer-assessment?

- Very positive & interesting
- Very time consuming
- Would do it better next time
- Important to maintain anonymity
- Interesting & complex - thought more about the assessment process
- Really helped student development

Student Comments

Thoughts on new Review Stage

- Liked 2nd chance to review own marks
- Gained experience going through process
- Didn’t really take much note of peers’ comments
- Liked to see that others felt the same about an essay

Student Comments

Thoughts on Mark for Marking

- Good rewarded appropriately
- Difficult to fully understand
- Let owner of essay provide mark for marking