
Retrieval System Interfaces LBSC 878 Douglas W. Oard and Dagobert Soergel Week 5, March 1, 1999

Agenda
• Taylor’s information need model
• Query formulation
• Query representation
• Focus area queries

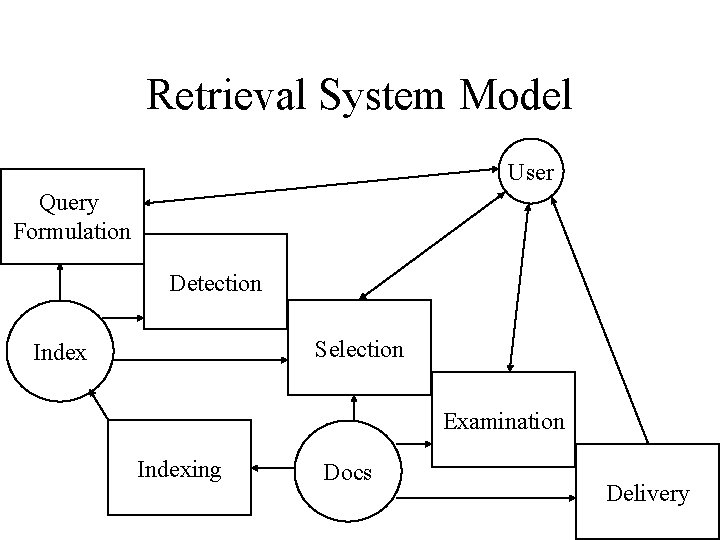

Retrieval System Model
[Diagram labels: User, Query Formulation, Detection, Selection, Index, Examination, Indexing, Docs, Delivery]

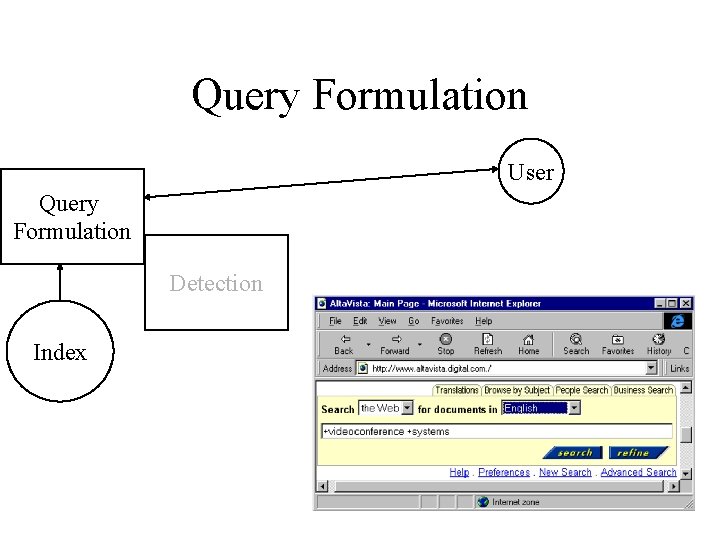

Query Formulation
[Diagram labels: User, Query Formulation, Detection, Index]

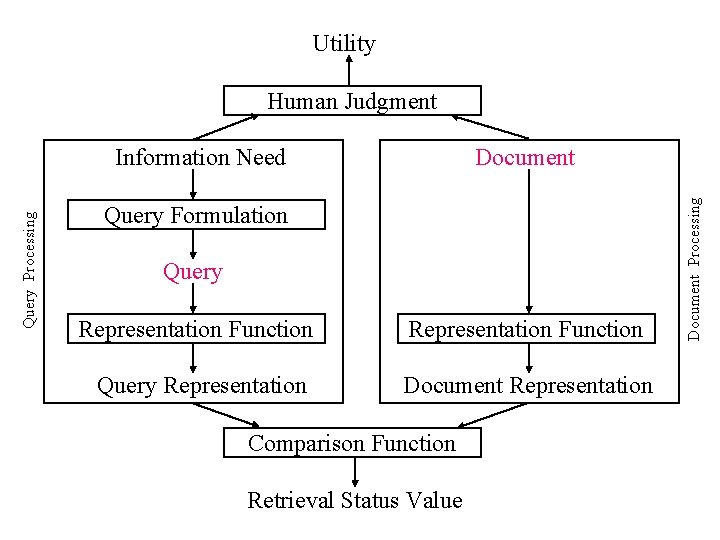

[Diagram labels: Information Need, Query Formulation, Query, Query Processing, Representation Function, Query Representation, Document, Document Processing, Representation Function, Document Representation, Comparison Function, Retrieval Status Value, Human Judgment, Utility]

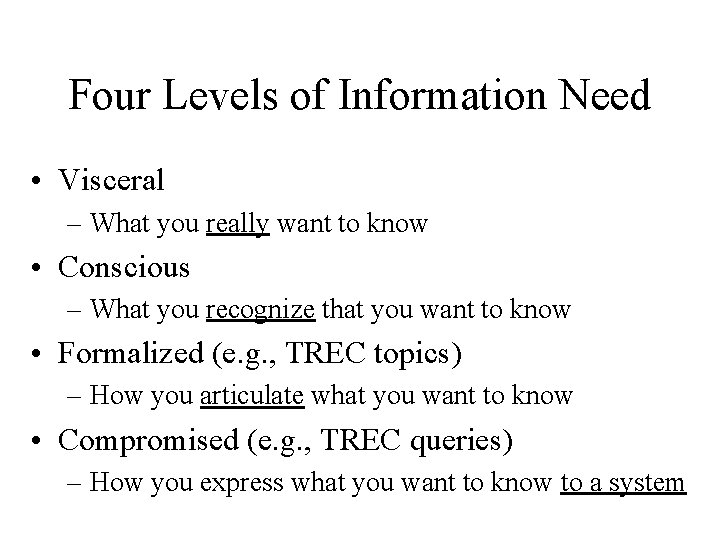

Four Levels of Information Need
• Visceral
  – What you really want to know
• Conscious
  – What you recognize that you want to know
• Formalized (e.g., TREC topics)
  – How you articulate what you want to know
• Compromised (e.g., TREC queries)
  – How you express what you want to know to a system

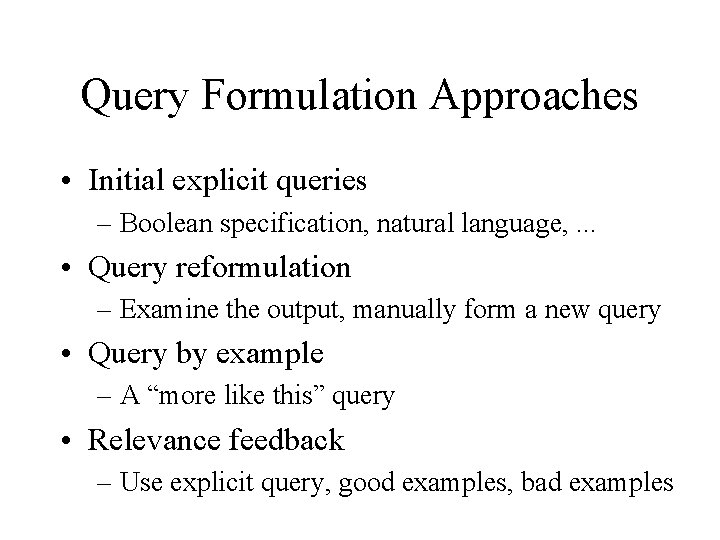

Query Formulation Approaches
• Initial explicit queries
  – Boolean specification, natural language, ...
• Query reformulation
  – Examine the output, manually form a new query
• Query by example
  – A “more like this” query
• Relevance feedback
  – Use explicit query, good examples, bad examples

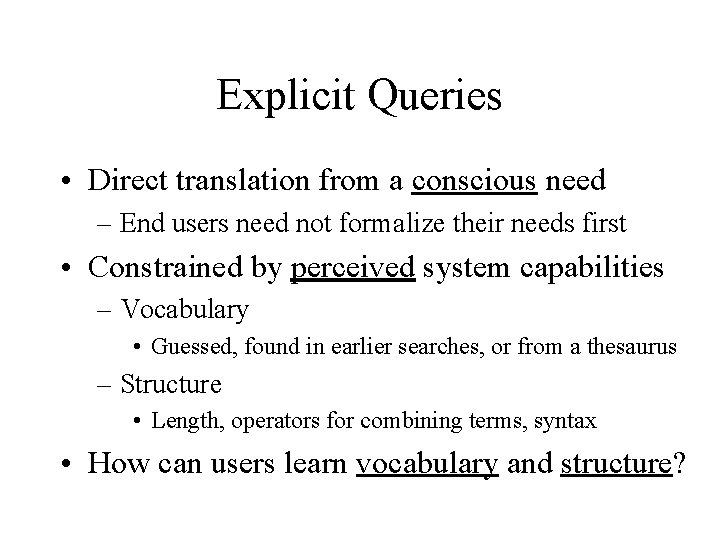

Explicit Queries
• Direct translation from a conscious need
  – End users need not formalize their needs first
• Constrained by perceived system capabilities
  – Vocabulary
    • Guessed, found in earlier searches, or from a thesaurus
  – Structure
    • Length, operators for combining terms, syntax
• How can users learn vocabulary and structure?

The Short Query Problem
• For ranked retrieval, long queries are helpful
  – Early TREC queries filled a page
• Search engine logs show mostly short queries
  – Averaging just over 2 words per query
  – Very few use “advanced” query interfaces
  – Almost nobody reads “help” screens
• Why don’t people do what’s good for them?
  – And what can we do about it?

Good Query Interfaces
• Provide a large text entry area
  – Encourages longer queries
• Provide examples
  – e.g., “For good pizza, type +Chicago +"deep dish"”
    • Examples related to the last query are particularly good
• Offer lists of related terms
  – From a thesaurus or some term similarity measure
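A term similarity measure for the related-terms list can be sketched as follows. This is only an illustration under stated assumptions: the slides do not name a measure, so Jaccard overlap of the documents each term occurs in is used here, and the function name `related_terms` and the toy corpus are hypothetical.

```python
from collections import defaultdict

def related_terms(docs, term, top_n=3):
    """Rank other terms by Jaccard overlap of the documents they occur in."""
    # Build a tiny inverted index: term -> set of document ids.
    postings = defaultdict(set)
    for doc_id, text in enumerate(docs):
        for word in text.lower().split():
            postings[word].add(doc_id)

    target = postings.get(term, set())
    scores = {}
    for other, ids in postings.items():
        if other == term or not target:
            continue
        overlap = len(target & ids)
        if overlap:
            scores[other] = overlap / len(target | ids)

    # Highest similarity first; ties broken alphabetically for stable output.
    return sorted(scores, key=lambda t: (-scores[t], t))[:top_n]

# Hypothetical toy corpus, not taken from the slides.
docs = [
    "chicago deep dish pizza",
    "chicago pizza restaurants",
    "new york thin crust pizza",
    "chicago weather forecast",
]
print(related_terms(docs, "chicago"))
```

An interface could show the returned terms next to the query box so the user can add them to a short query.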

Iterative Query Reformulation
• Pose an explicit query
  – Based on the conscious information need
• Examine the retrieval results
  – To learn about vocabulary and structure
  – To adjust the conscious information need
• Repeat the process

Poor Support for Reformulation
• Some systems use obscure ranking methods
  – Unpredictable effects of adding or deleting terms
• Some provide counterintuitive statistics
  – “clis”:
    • AltaVista says 3,882 docs match the query
  – “clis library”:
    • AltaVista says 27,025 docs match the query!
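The slide does not say why the count grew when a term was added. One explanation consistent with the numbers, assumed here for illustration only, is that a multi-term query matched documents containing any of its terms rather than all of them. The sketch below contrasts the two interpretations on a hypothetical four-document collection; `count_matches` is an illustrative name, not a real search engine API.

```python
def count_matches(docs, terms, mode="and"):
    """Count documents matching all terms ("and") or any term ("or")."""
    combine = all if mode == "and" else any
    return sum(1 for words in docs if combine(t in words for t in terms))

# Hypothetical collection: each document is the set of words it contains.
docs = [
    {"clis", "library"},
    {"clis", "maryland"},
    {"library", "books"},
    {"library", "catalog"},
]

print(count_matches(docs, ["clis"], mode="and"))             # one-term query
print(count_matches(docs, ["clis", "library"], mode="and"))  # count can only shrink
print(count_matches(docs, ["clis", "library"], mode="or"))   # count can grow
```

Under the conjunctive reading, adding a term can never increase the match count, which is what most users expect. Under the disjunctive reading it can, which would make the reported statistic hard to use for reformulation.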

Query By Example
• Use heuristics to construct a good query
  – Stevens uses examples to build Boolean queries
• Think of it as a machine learning problem
  – Given some examples, find more like them
• Learning requires an “inductive bias”
  – Which features to select?
  – How to interpret them to make predictions?

A Query-by-Example Strategy
• Select which documents to use as examples
  – Some may be more informative than others
• Select which terms to use as features
  – e.g., rare terms are more selective than common ones
• Decide how to interpret each term’s evidence
  – e.g., frequent terms may deserve more weight
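The last two steps of the strategy can be sketched with a tf·idf weighting, where rarity in the collection (idf) drives term selection and frequency in the examples (tf) drives the weight. This is one common choice assumed for illustration; the slides do not prescribe a formula, and the function name, the smoothing, and the toy collection are hypothetical.

```python
import math
from collections import Counter

def query_from_examples(examples, collection, num_terms=3):
    """Return the top (term, weight) pairs from the example documents."""
    n_docs = len(collection)

    # Document frequency: in how many collection documents each term occurs.
    doc_freq = Counter()
    for text in collection:
        doc_freq.update(set(text.lower().split()))

    # Term frequency summed over the chosen example documents.
    term_freq = Counter()
    for text in examples:
        term_freq.update(text.lower().split())

    weights = {}
    for term, tf in term_freq.items():
        df = doc_freq.get(term, 0)
        idf = math.log((n_docs + 1) / (df + 1))  # smoothed; rare terms score higher
        weights[term] = tf * idf

    # Highest weight first; ties broken alphabetically for stable output.
    ranked = sorted(weights.items(), key=lambda kv: (-kv[1], kv[0]))
    return ranked[:num_terms]

# Hypothetical toy collection; the user marks two documents as examples.
collection = [
    "the boolean query model",
    "the vector space model",
    "the rocchio feedback method",
    "the weather in chicago",
]
examples = collection[1:3]
for term, weight in query_from_examples(examples, collection):
    print(term, round(weight, 3))
```

In this toy run "the" occurs in every document, so its idf and weight are zero and it never enters the query even though it is the most frequent term in the examples.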

Relevance Feedback
• Three sources of evidence
  – An explicit query
  – Examples of desirable documents
  – Examples of undesirable documents
• Rocchio is used for “vector space” retrieval
  – A simple linear combination of three vectors
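The linear combination can be sketched as follows, using the usual textbook form of Rocchio: the new query is alpha times the original query, plus beta times the centroid of the desirable examples, minus gamma times the centroid of the undesirable ones. The coefficient values and the clipping of negative weights to zero are illustrative assumptions, not values given in the slides.

```python
def rocchio(query, relevant, nonrelevant, alpha=1.0, beta=0.5, gamma=0.25):
    """Combine a query vector with centroids of good and bad example vectors."""
    def centroid(vectors):
        if not vectors:
            return [0.0] * len(query)
        return [sum(column) / len(vectors) for column in zip(*vectors)]

    pos = centroid(relevant)
    neg = centroid(nonrelevant)
    # Assumption: negative term weights are clipped to zero, a common convention.
    return [
        max(0.0, alpha * q + beta * p - gamma * n)
        for q, p, n in zip(query, pos, neg)
    ]

# Hypothetical three-term vocabulary; vectors hold one weight per term.
query = [1.0, 0.0, 0.0]
relevant = [[1.0, 1.0, 0.0], [1.0, 0.0, 0.0]]
nonrelevant = [[0.0, 0.0, 1.0]]
print(rocchio(query, relevant, nonrelevant))
```

The second term was absent from the original query but gains weight because it appears in a desirable example, which is how feedback expands a short query.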

Alternate Query Modalities
• Graphical queries
  – Used with clustering for relevance feedback
  – Can help users visualize simple Boolean queries
• Spoken queries
  – Used for telephone and hands-free applications
  – Some error correction method must be included
• Handwritten queries
  – Used in some “palmtop” computers
  – Fairly effective if some form of shorthand is used

Query Representation
• Rule-based approach
  – Transform query into a set of selection rules
    • Commonly used for Boolean queries
• Feature-based approach
  – Extract features for comparison with documents
• Goal is compatible inputs for “comparison function”
  – Stemming needs to be done the same way!
• Document and query features need not be identical
  – But the comparison function will have more work to do
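The point about stemming can be illustrated with a feature-based sketch in which queries and documents pass through the same feature extractor before comparison. Everything here is an assumption for illustration: `crude_stem` is a deliberately naive suffix stripper (not Porter or any real stemmer), and cosine similarity stands in for the comparison function.

```python
import math
from collections import Counter

def crude_stem(word):
    """Naive suffix stripping, for illustration only."""
    for suffix in ("ing", "es", "s"):
        if word.endswith(suffix) and len(word) > len(suffix) + 2:
            return word[: -len(suffix)]
    return word

def features(text):
    """Shared feature extractor for both queries and documents."""
    return Counter(crude_stem(w) for w in text.lower().split())

def cosine(a, b):
    """Comparison function: cosine of two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0

doc = features("indexing documents")

# Same processing on both sides: the query and document features line up.
print(cosine(features("index document"), doc))

# Query left unstemmed: the raw terms no longer match the stemmed document.
print(cosine(Counter("indexing documents".split()), doc))
```

When the two sides are processed differently, a simple comparison function like this one finds no overlap, so the mismatch would have to be handled inside the comparison itself.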
