Retrieval Models I Boolean, Vector Space, Probabilistic

What is a retrieval model?
• An idealization or abstraction of an actual process (retrieval)
  – results in a measure of similarity between query and document
• May describe the computational process
  – e.g. how documents are ranked
  – note that an inverted file is an implementation, not a model
• May attempt to describe the human process
  – e.g. the information need, search strategy, etc.
• Retrieval variables:
  – queries, documents, terms, relevance judgements, users, information needs
• Has an explicit or implicit definition of relevance
Allan, Ballesteros, Croft, and/or Turtle

Mathematical models
• Study the properties of the process
• Draw conclusions or make predictions
  – Conclusions derived depend on whether the model is a good approximation of the actual situation
• Statistical models represent repetitive processes
  – predict frequencies of interesting events
  – use probability as the fundamental tool

Exact Match Retrieval
• Retrieving documents that satisfy a Boolean expression constitutes the Boolean exact match retrieval model
  – query specifies precise retrieval criteria
  – every document either matches or fails to match query
  – result is a set of documents (no order)
• Advantages:
  – efficient
  – predictable, easy to explain
  – structured queries
  – work well when you know exactly what docs you want

Exact-match Retrieval Model
• Disadvantages:
  – query formulation difficult for most users
  – difficulty increases with collection size (why?)
  – indexing vocabulary same as query vocabulary
  – acceptable precision generally means unacceptable recall
  – ranking models are consistently better
• Best-match or ranking models are now more common
  – we’ll see these later

Boolean retrieval
• Most common exact-match model
  – queries: logic expressions with doc features as operands
  – retrieved documents are generally not ranked
  – query formulation difficult for novice users
• Boolean queries
  – Used by the Boolean retrieval model and in other models
  – Boolean query ≠ Boolean model
• “Pure” Boolean operators: AND, OR, and NOT
• Most systems have proximity operators
• Most systems support simple regular expressions as search terms to match spelling variants
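
As a minimal sketch of how the pure Boolean operators map onto set operations, the example below builds a tiny inverted index and evaluates AND, OR, and NOT. The toy documents, their ids, and the helper name `build_index` are illustrative assumptions, not part of the slides.

```python
from collections import defaultdict

# Toy collection (made up for illustration).
docs = {
    1: "information retrieval with boolean queries",
    2: "vector space retrieval and ranking",
    3: "boolean logic for database queries",
}

def build_index(docs):
    """Map each term to the set of ids of the documents that contain it."""
    index = defaultdict(set)
    for doc_id, text in docs.items():
        for term in text.split():
            index[term].add(doc_id)
    return index

index = build_index(docs)
all_ids = set(docs)

# boolean AND retrieval: intersection of the two posting sets
and_result = index["boolean"] & index["retrieval"]
# boolean OR retrieval: union of the two posting sets
or_result = index["boolean"] | index["retrieval"]
# retrieval AND NOT boolean: intersect with the complement
not_result = index["retrieval"] & (all_ids - index["boolean"])

print(and_result, or_result, not_result)
```

Each result is an unordered set, which matches the exact-match property that a document either satisfies the query or does not.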

WESTLAW: Large commercial system
• Serves legal and professional market
  – legal materials (court opinions, statutes, regulations, …)
  – news (newspapers, magazines, journals, …)
  – financial (stock quotes, SEC materials, financial analyses, …)
• Total collection size: 5-7 terabytes
• 700,000 users
  – Claim 47% of legal searchers
  – 120K daily on-line users (54% of on-line users)
• In operation since 1974 (51 countries as of 2002)
• Best-match (and free text queries) added in 1992

WESTLAW query language features
• Boolean operators
• Proximity operators
  – Phrases: “West Publishing”
  – Word proximity: West /5 Publishing
  – Same sentence: Massachusetts /s technology
  – Same paragraph: “information retrieval” /p exact-match
• Restrictions
  – e.g. DATE(AFTER 1992 & BEFORE 1995)
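
A word-proximity operator such as `West /5 Publishing` can be approximated by comparing token positions. The sketch below assumes that `/n` means "within n word positions, in either order" and uses whitespace tokenization; the exact counting rules of the real system are not given in the slides, and the function name `within` is hypothetical.

```python
def within(tokens, a, b, n):
    """True if some occurrence of a and some occurrence of b
    are at most n word positions apart (order ignored)."""
    pos_a = [i for i, t in enumerate(tokens) if t == a]
    pos_b = [i for i, t in enumerate(tokens) if t == b]
    return any(abs(i - j) <= n for i in pos_a for j in pos_b)

# Made-up sentence for illustration.
tokens = "west is a legal publishing company".split()

near = within(tokens, "west", "publishing", 5)  # positions 0 and 4
far = within(tokens, "west", "company", 3)      # positions 0 and 5
print(near, far)
```

Sentence and paragraph operators (`/s`, `/p`) would follow the same pattern with sentence or paragraph ids in place of word positions.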

WESTLAW query language features
• Term expansion
  – Universal characters (THOM*SON)
  – Truncation (THOM!)
  – Automatic expansion of plurals, possessives
• Document structure (fields)
  – query expression restricted to named document component (title, abstract)
  – title(“inference network”) & cites(Croft) & date(after 1990)
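
One way to picture term expansion is as a translation from the query pattern to a regular expression that is matched against the index vocabulary. The sketch below assumes that `*` stands for exactly one character and that `!` stands for any number of trailing characters; the vocabulary and the helper names `to_regex` and `expand` are made up for illustration.

```python
import re

def to_regex(pattern):
    """Translate a query term with * and ! into a compiled regex."""
    parts = []
    for ch in pattern:
        if ch == "*":
            parts.append(r"\w")    # assumed: exactly one character
        elif ch == "!":
            parts.append(r"\w*")   # assumed: zero or more characters
        else:
            parts.append(re.escape(ch))
    return re.compile("".join(parts), re.IGNORECASE)

def expand(pattern, vocabulary):
    """Return the vocabulary terms that the pattern expands to."""
    rx = to_regex(pattern)
    return [w for w in vocabulary if rx.fullmatch(w)]

# Hypothetical index vocabulary.
vocab = ["thomson", "thompson", "thomas", "thomason",
         "limit", "limited", "limitation", "statute"]

print(expand("THOM*SON", vocab))
print(expand("LIMIT!", vocab))
```

The expanded terms would then be combined with an implicit OR before the Boolean query is evaluated.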

Features to Note about Queries
• Queries are developed incrementally
  – add query components until a reasonable number of documents is retrieved (“query by numbers”)
  – implicit relevance feedback
• Queries are complex
  – proximity operators used very frequently
  – implicit OR for synonyms
  – /NOT is rare
• Queries are long (average 9-10 words)
  – not typical Internet queries (1-2 words)

WESTLAW Query Processing
• Stop words
  – indexing stopwords (one)
  – query stopwords (about 50); stopwords retained in some contexts
• Thesaurus to aid user expansion of queries
• Document display
  – sort order specified by user (e.g. date)
  – query term highlighting

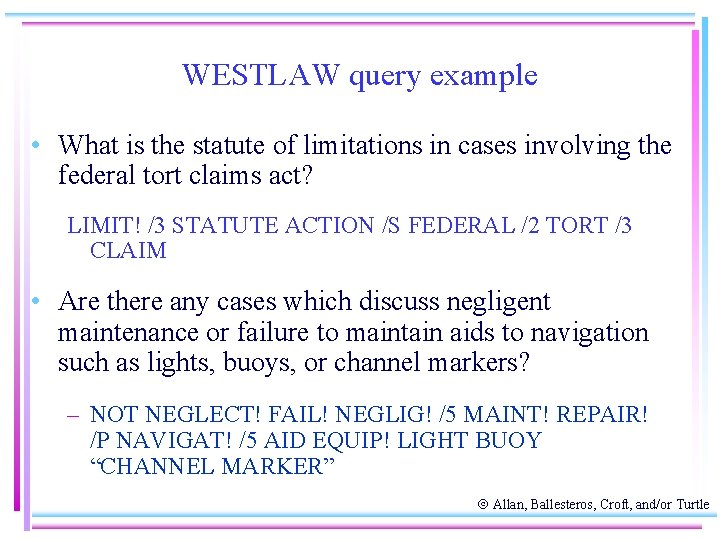

WESTLAW query example
• What is the statute of limitations in cases involving the federal tort claims act?
  – LIMIT! /3 STATUTE ACTION /S FEDERAL /2 TORT /3 CLAIM
• Are there any cases which discuss negligent maintenance or failure to maintain aids to navigation such as lights, buoys, or channel markers?
  – NOT NEGLECT! FAIL! NEGLIG! /5 MAINT! REPAIR! /P NAVIGAT! /5 AID EQUIP! LIGHT BUOY “CHANNEL MARKER”

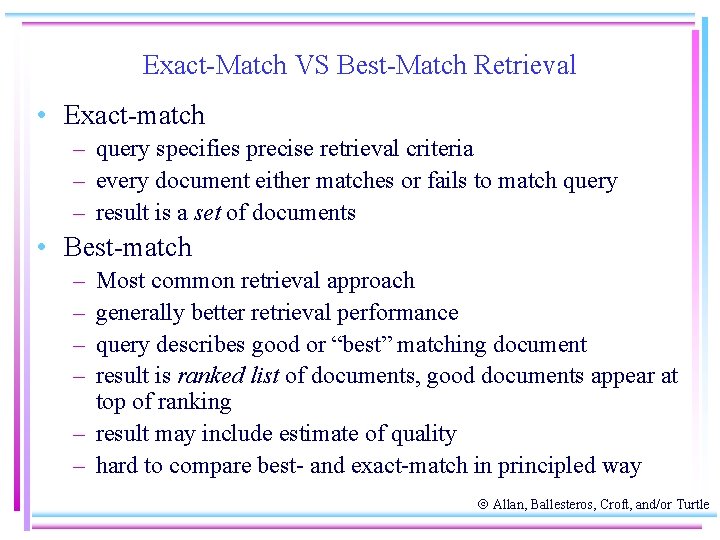

Exact-Match vs. Best-Match Retrieval
• Exact-match
  – query specifies precise retrieval criteria
  – every document either matches or fails to match query
  – result is a set of documents
• Best-match
  – most common retrieval approach
  – generally better retrieval performance
  – query describes good or “best” matching document
  – result is a ranked list of documents; good documents appear at the top of the ranking
  – result may include estimate of quality
  – hard to compare best- and exact-match in a principled way

Best-Match Retrieval
• Advantages of best match:
  – Significantly more effective than exact match
  – Uncertainty is a better model than certainty
  – Easier to use (supports full text queries)
  – Similar efficiency (based on inverted file implementations)

Best Match Retrieval
• Disadvantages:
  – More difficult to convey an appropriate cognitive model (“control”)
  – Full text is not natural language understanding (no “magic”)
  – Efficiency is always less than exact match (cannot reject documents early)
• Boolean or structured queries can be part of a best-match retrieval model

Why did commercial services ignore best-match (for 20 years)?
• Inertia/installed software base
• “Cultural” differences
• Not clear tests on small collections would scale
• Operating costs
• Training
• Few convincing user studies
• Risk

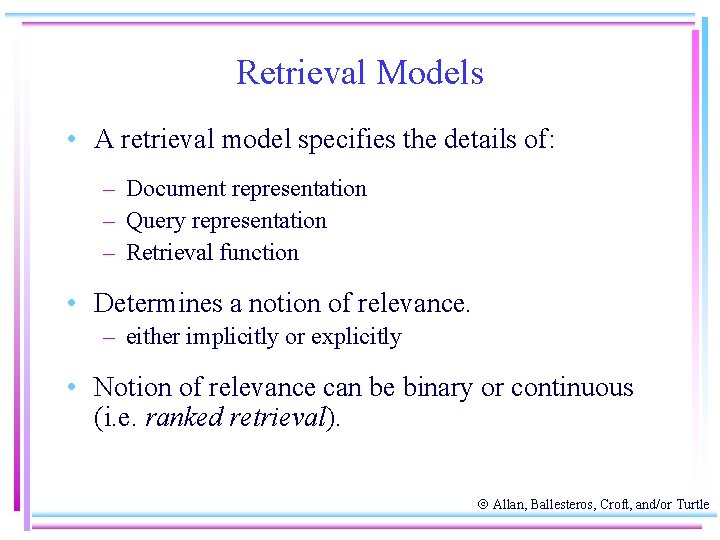

Retrieval Models
• A retrieval model specifies the details of:
  – Document representation
  – Query representation
  – Retrieval function
• Determines a notion of relevance, either implicitly or explicitly.
• Notion of relevance can be binary or continuous (i.e. ranked retrieval).

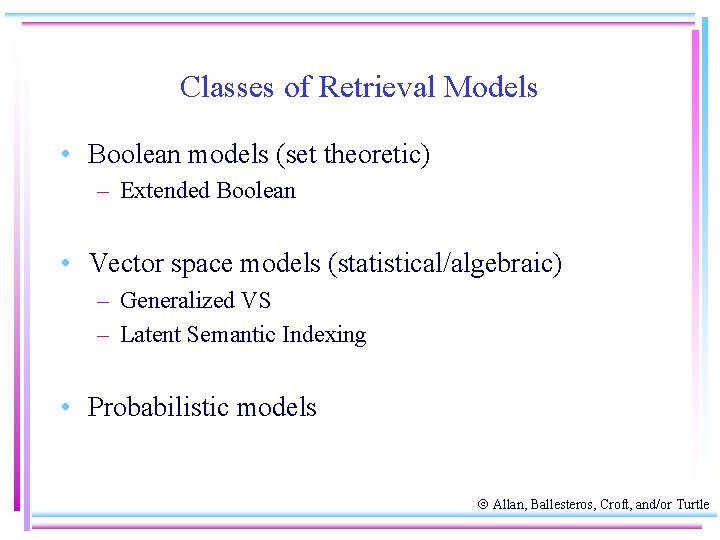

Classes of Retrieval Models
• Boolean models (set theoretic)
  – Extended Boolean
• Vector space models (statistical/algebraic)
  – Generalized VS
  – Latent Semantic Indexing
• Probabilistic models

Other Model Dimensions
• Logical View of Documents
  – Index terms
  – Full text
  – Full text + Structure (e.g. hypertext)
• User Task
  – Retrieval
  – Browsing

Retrieval Tasks
• Ad hoc retrieval: Fixed document corpus, varied queries.
• Filtering: Fixed query, continuous document stream.
  – User profile: A model of relatively static preferences.
  – Binary decision of relevant/not-relevant.
• Routing: Same as filtering, but continuously supplies ranked lists rather than binary filtering.

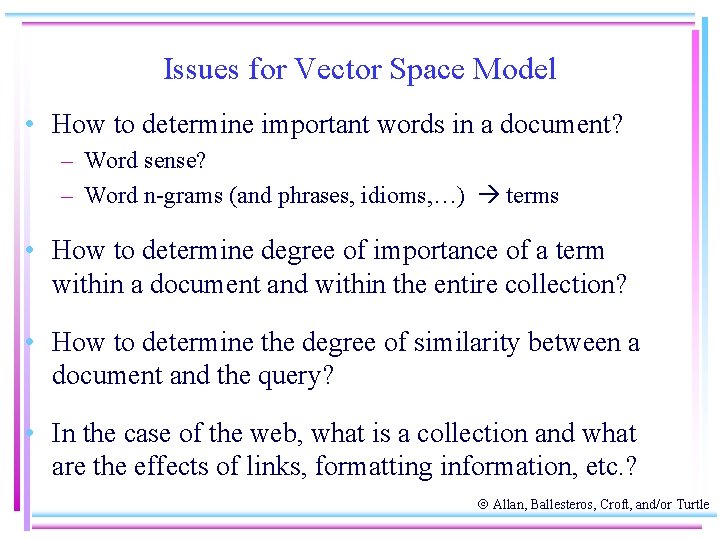

Issues for Vector Space Model
• How to determine important words in a document?
  – Word sense?
  – Word n-grams (and phrases, idioms, …) as terms
• How to determine the degree of importance of a term within a document and within the entire collection?
• How to determine the degree of similarity between a document and the query?
• In the case of the web, what is a collection and what are the effects of links, formatting information, etc.?

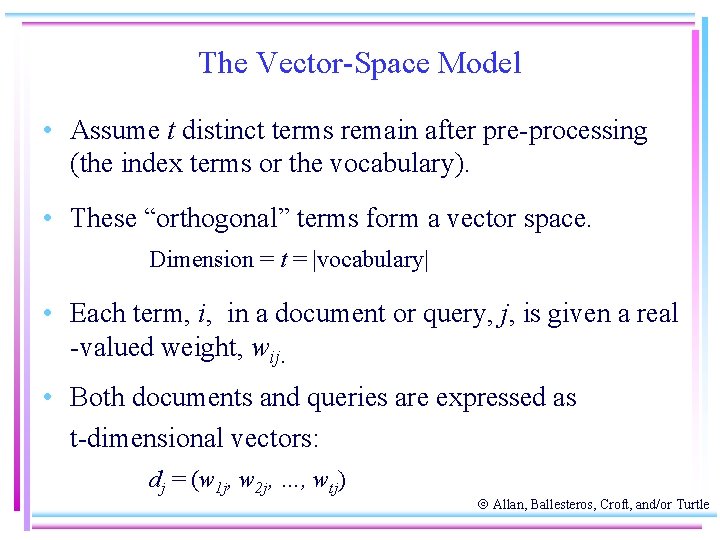

The Vector-Space Model
• Assume t distinct terms remain after pre-processing (the index terms or the vocabulary).
• These “orthogonal” terms form a vector space. Dimension = t = |vocabulary|
• Each term, i, in a document or query, j, is given a real-valued weight, $w_{ij}$.
• Both documents and queries are expressed as t-dimensional vectors: $d_j = (w_{1j}, w_{2j}, \dots, w_{tj})$

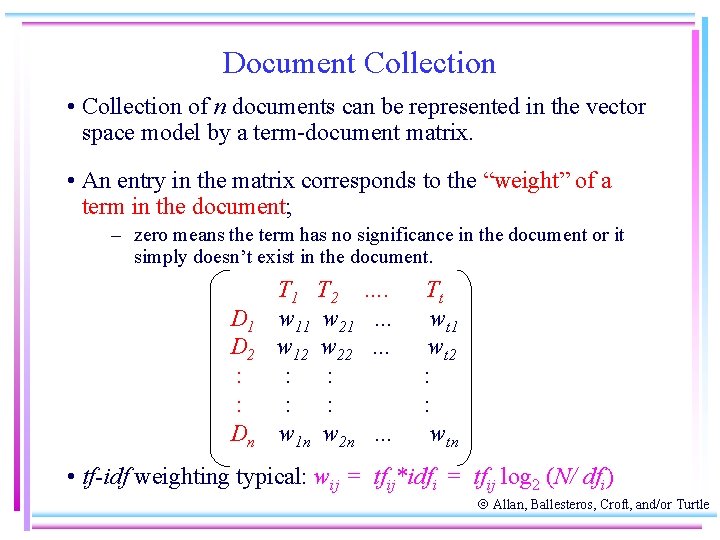

Document Collection
• A collection of n documents can be represented in the vector space model by a term-document matrix.
• An entry in the matrix corresponds to the “weight” of a term in the document;
  – zero means the term has no significance in the document or it simply doesn’t exist in the document.

$$
\begin{array}{c|cccc}
 & T_1 & T_2 & \cdots & T_t \\ \hline
D_1 & w_{11} & w_{21} & \cdots & w_{t1} \\
D_2 & w_{12} & w_{22} & \cdots & w_{t2} \\
\vdots & \vdots & \vdots & & \vdots \\
D_n & w_{1n} & w_{2n} & \cdots & w_{tn}
\end{array}
$$

• tf-idf weighting is typical: $w_{ij} = tf_{ij} \cdot idf_i = tf_{ij} \log_2(N / df_i)$
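
To make the matrix layout concrete, the sketch below builds a small term-document matrix with one row per document and one column per term, using raw term counts as the weights. The three toy documents are made up, and raw counts stand in for whatever weighting scheme is actually chosen.

```python
from collections import Counter

# Toy collection (illustrative only).
docs = ["new york times", "new york post", "los angeles times"]

# Vocabulary: the t distinct terms, in a fixed (sorted) column order.
vocab = sorted({term for d in docs for term in d.split()})

# One row per document, one column per term; entry = raw term count.
matrix = []
for d in docs:
    counts = Counter(d.split())
    matrix.append([counts[term] for term in vocab])

print(vocab)
for row in matrix:
    print(row)
```

A zero entry means the term does not occur in that document; most entries are zero in a realistic collection, which is why sparse representations are normally used.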

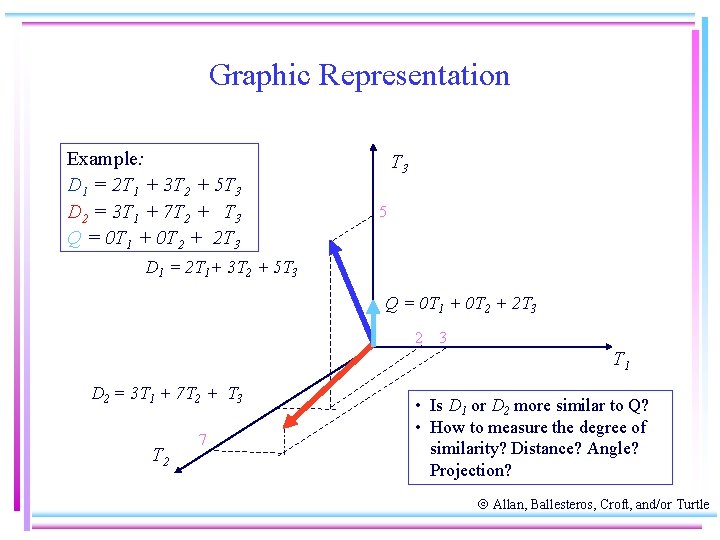

Graphic Representation
Example:
$D_1 = 2T_1 + 3T_2 + 5T_3$
$D_2 = 3T_1 + 7T_2 + T_3$
$Q = 0T_1 + 0T_2 + 2T_3$
[Figure: $D_1$, $D_2$, and $Q$ drawn as vectors on the axes $T_1$, $T_2$, $T_3$]
• Is $D_1$ or $D_2$ more similar to $Q$?
• How to measure the degree of similarity? Distance? Angle? Projection?

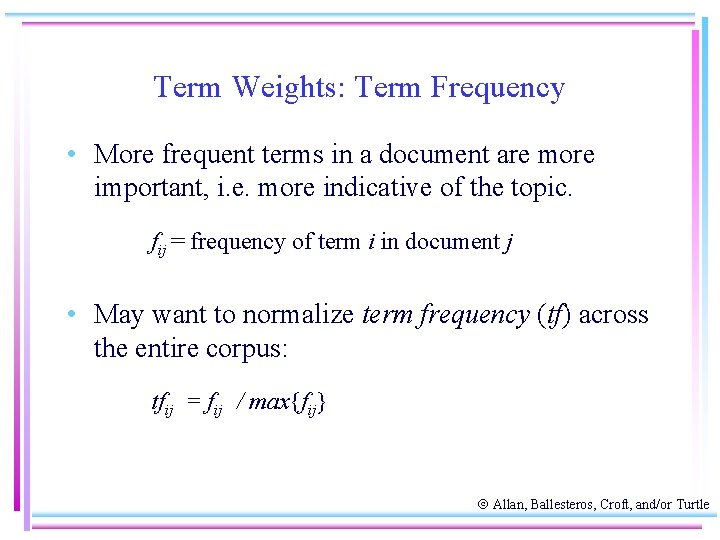

Term Weights: Term Frequency
• More frequent terms in a document are more important, i.e. more indicative of the topic.
  $f_{ij}$ = frequency of term i in document j
• May want to normalize term frequency (tf) across the entire corpus:
  $tf_{ij} = f_{ij} / \max\{f_{ij}\}$
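
The slide's formula does not say which index the maximum ranges over. The sketch below assumes the common convention that the maximum is taken over the terms of the same document, so the most frequent term in each document gets tf = 1. The function name `normalized_tf` and the token list are hypothetical.

```python
from collections import Counter

def normalized_tf(tokens):
    """tf = raw count divided by the count of the most frequent
    term in the same document (assumed convention)."""
    counts = Counter(tokens)
    if not counts:
        return {}
    max_count = max(counts.values())
    return {term: c / max_count for term, c in counts.items()}

# Made-up document: a occurs 3 times, b twice, c once.
tf = normalized_tf("a b a c a b".split())
print(tf)
```

Normalizing this way keeps long documents from dominating purely because they repeat every term more often.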

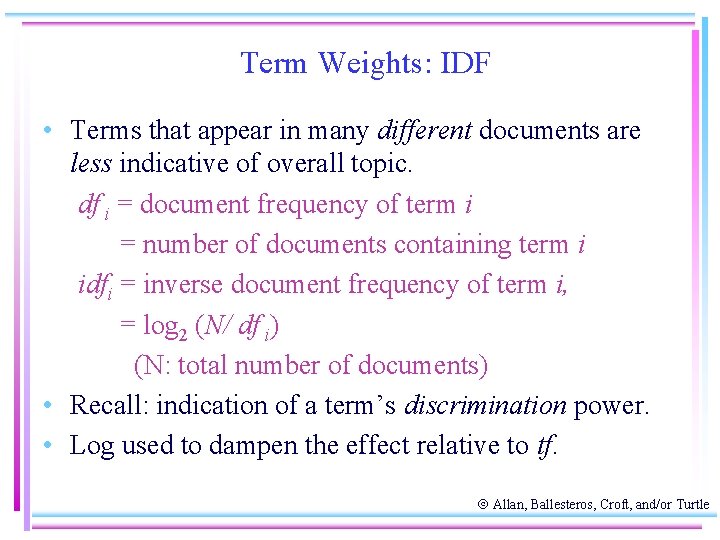

Term Weights: IDF
• Terms that appear in many different documents are less indicative of overall topic.
  $df_i$ = document frequency of term i = number of documents containing term i
  $idf_i$ = inverse document frequency of term i = $\log_2(N / df_i)$ (N: total number of documents)
• Recall: indication of a term’s discrimination power.
• Log used to dampen the effect relative to tf.
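
A few worked values show how the logarithm dampens idf. The sketch below uses a hypothetical collection of N = 1024 documents so that the base-2 logs come out as round numbers; the helper names `idf` and `tf_idf` are illustrative.

```python
import math

def idf(df, n_docs):
    """Inverse document frequency with log base 2, as on the slide."""
    return math.log2(n_docs / df)

def tf_idf(tf, df, n_docs):
    """Combined weight w = tf * idf."""
    return tf * idf(df, n_docs)

N = 1024  # hypothetical collection size

rare = idf(1, N)        # term in a single document
common = idf(512, N)    # term in half of the documents
everywhere = idf(N, N)  # term in every document

print(rare, common, everywhere)
print(tf_idf(0.5, 1, N))
```

A term that occurs in every document gets weight zero, and a 512-fold difference in document frequency changes idf only from 1 to 10.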

Query Vector
• The query vector is typically treated as a document and also tf-idf weighted.
• An alternative is for the user to supply weights for the given query terms.

Similarity Measure
• A similarity measure is a function that computes the degree of similarity between two vectors.
• Using a similarity measure between the query and each document:
  – It is possible to rank the retrieved documents in the order of presumed relevance.
  – It is possible to enforce a certain threshold so that the size of the retrieved set can be controlled.

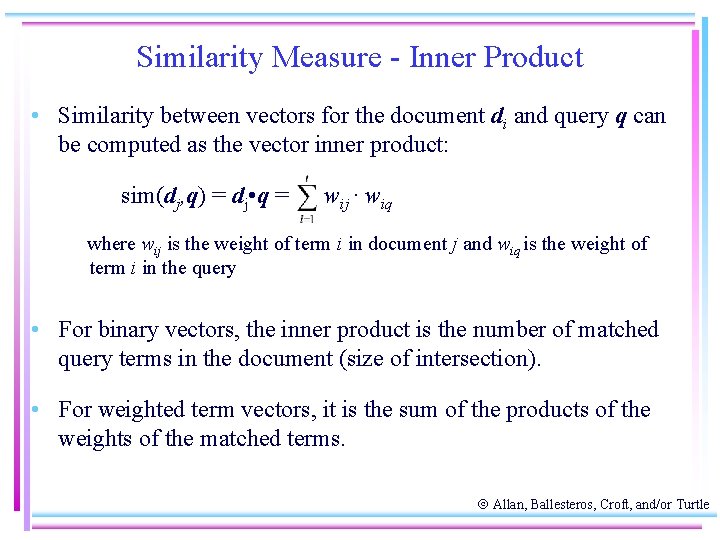

Similarity Measure - Inner Product
• Similarity between the vectors for document $d_j$ and query $q$ can be computed as the vector inner product:
  $sim(d_j, q) = d_j \cdot q = \sum_{i=1}^{t} w_{ij} \, w_{iq}$
  where $w_{ij}$ is the weight of term i in document j and $w_{iq}$ is the weight of term i in the query
• For binary vectors, the inner product is the number of matched query terms in the document (size of intersection).
• For weighted term vectors, it is the sum of the products of the weights of the matched terms.

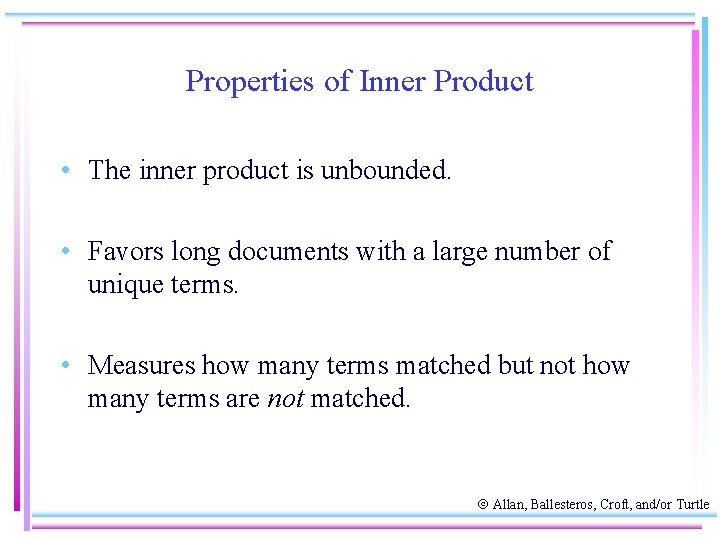

Properties of Inner Product
• The inner product is unbounded.
• Favors long documents with a large number of unique terms.
• Measures how many terms matched but not how many terms are not matched.

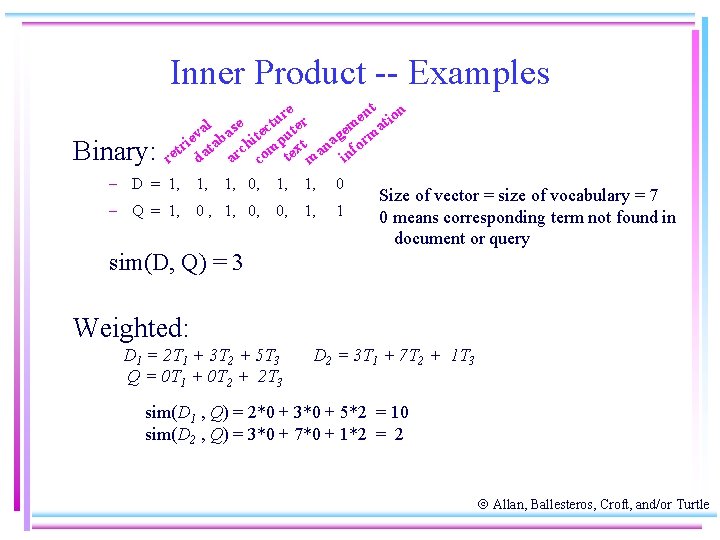

Inner Product -- Examples
• Binary:
  – Vocabulary: retrieval, database, architecture, computer, text, management, information
  – D = 1, 1, 1, 0, 1, 1, 0
  – Q = 1, 0, 1, 0, 0, 1, 1
  – Size of vector = size of vocabulary = 7; 0 means the corresponding term is not found in the document or query
  – sim(D, Q) = 3
• Weighted:
  – $D_1 = 2T_1 + 3T_2 + 5T_3$
  – $D_2 = 3T_1 + 7T_2 + 1T_3$
  – $Q = 0T_1 + 0T_2 + 2T_3$
  – $sim(D_1, Q) = 2 \cdot 0 + 3 \cdot 0 + 5 \cdot 2 = 10$
  – $sim(D_2, Q) = 3 \cdot 0 + 7 \cdot 0 + 1 \cdot 2 = 2$
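
The slide's numbers can be checked with a few lines of code. This is a minimal sketch using plain Python lists as dense vectors; the function name `inner_product` is an illustrative choice.

```python
def inner_product(d, q):
    """Sum of the products of corresponding term weights."""
    return sum(wd * wq for wd, wq in zip(d, q))

# Binary example from the slide (7-term vocabulary).
D = [1, 1, 1, 0, 1, 1, 0]
Q_bin = [1, 0, 1, 0, 0, 1, 1]
binary_sim = inner_product(D, Q_bin)

# Weighted example from the slide.
D1 = [2, 3, 5]
D2 = [3, 7, 1]
Q = [0, 0, 2]
sim_d1 = inner_product(D1, Q)
sim_d2 = inner_product(D2, Q)

print(binary_sim, sim_d1, sim_d2)
```

For the binary vectors the result counts the positions where both vectors hold a 1, which is the size of the term intersection.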

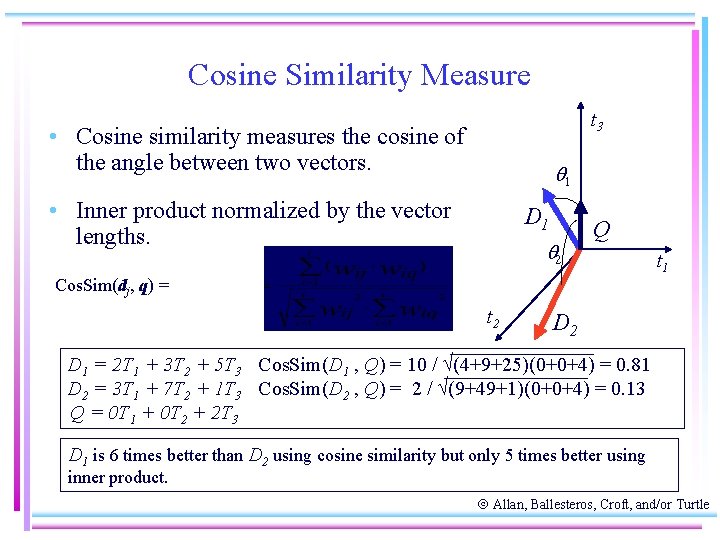

Cosine Similarity Measure
• Cosine similarity measures the cosine of the angle between two vectors.
• Inner product normalized by the vector lengths:
  $CosSim(d_j, q) = \dfrac{d_j \cdot q}{|d_j| \, |q|} = \dfrac{\sum_{i=1}^{t} w_{ij} \, w_{iq}}{\sqrt{\sum_{i=1}^{t} w_{ij}^2} \, \sqrt{\sum_{i=1}^{t} w_{iq}^2}}$
[Figure: document and query vectors on the axes $t_1$, $t_2$, $t_3$, with the angles between them]
$D_1 = 2T_1 + 3T_2 + 5T_3$: $CosSim(D_1, Q) = 10 / \sqrt{(4+9+25)(0+0+4)} = 0.81$
$D_2 = 3T_1 + 7T_2 + 1T_3$: $CosSim(D_2, Q) = 2 / \sqrt{(9+49+1)(0+0+4)} = 0.13$
$Q = 0T_1 + 0T_2 + 2T_3$
$D_1$ is 6 times better than $D_2$ using cosine similarity but only 5 times better using inner product.
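
The two cosine values on the slide can be reproduced directly, and the same code shows that scaling a document vector leaves its cosine score unchanged. This is a minimal sketch with dense lists; the name `cos_sim` and the doubled vector are illustrative.

```python
import math

def cos_sim(d, q):
    """Inner product divided by the product of the vector lengths."""
    dot = sum(a * b for a, b in zip(d, q))
    norm = math.sqrt(sum(a * a for a in d)) * math.sqrt(sum(b * b for b in q))
    return dot / norm if norm else 0.0

D1 = [2, 3, 5]
D2 = [3, 7, 1]
Q = [0, 0, 2]

score_d1 = cos_sim(D1, Q)
score_d2 = cos_sim(D2, Q)
print(round(score_d1, 2), round(score_d2, 2))

# Doubling every weight (a "longer" document) does not change the angle.
D1_doubled = [2 * w for w in D1]
print(math.isclose(cos_sim(D1_doubled, Q), score_d1))
```

Length normalization is what removes the inner product's bias toward long documents.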

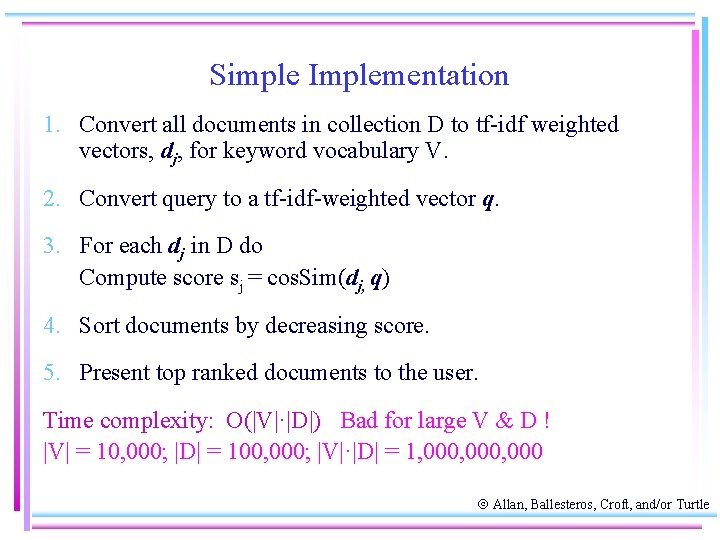

Simple Implementation
1. Convert all documents in collection D to tf-idf weighted vectors, $d_j$, for keyword vocabulary V.
2. Convert the query to a tf-idf weighted vector q.
3. For each $d_j$ in D, compute the score $s_j = CosSim(d_j, q)$.
4. Sort documents by decreasing score.
5. Present the top-ranked documents to the user.
Time complexity: O(|V|·|D|). Bad for large V and D!
|V| = 10,000; |D| = 100,000; |V|·|D| = 1,000,000,000
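
The five steps can be sketched end to end as follows. This is one possible reading, not the authors' code: it assumes whitespace tokenization, tf normalized by the most frequent term in each document, idf = log2(N/df), and sparse dictionaries instead of dense |V|-length vectors. All names and the toy documents are hypothetical.

```python
import math
from collections import Counter

def weight(tokens, idf):
    """tf-idf vector as a sparse dict; terms without an idf are dropped."""
    counts = Counter(t for t in tokens if t in idf)
    if not counts:
        return {}
    max_f = max(counts.values())
    return {t: (f / max_f) * idf[t] for t, f in counts.items()}

def tf_idf_vectors(docs):
    """Step 1: convert every document to a tf-idf weighted vector."""
    tokenized = [d.lower().split() for d in docs]
    n = len(tokenized)
    df = Counter(t for toks in tokenized for t in set(toks))
    idf = {t: math.log2(n / df_t) for t, df_t in df.items()}
    return idf, [weight(toks, idf) for toks in tokenized]

def cos_sim(u, v):
    """Cosine similarity of two sparse vectors."""
    dot = sum(w * v.get(t, 0.0) for t, w in u.items())
    norm = (math.sqrt(sum(w * w for w in u.values()))
            * math.sqrt(sum(w * w for w in v.values())))
    return dot / norm if norm else 0.0

def rank(query, docs, top_k=3):
    idf, vectors = tf_idf_vectors(docs)
    q = weight(query.lower().split(), idf)                        # step 2
    scored = [(cos_sim(d, q), j) for j, d in enumerate(vectors)]  # step 3
    scored.sort(key=lambda s: (-s[0], s[1]))                      # step 4
    return scored[:top_k]                                         # step 5

docs = [
    "boolean retrieval uses exact match",
    "vector space retrieval ranks documents",
    "probabilistic models estimate relevance",
]
results = rank("vector space ranking", docs)
print(results)
```

The loop in step 3 still visits every document, so the cost grows with the collection size; the sparse dictionaries only avoid touching the zero entries of each vector.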

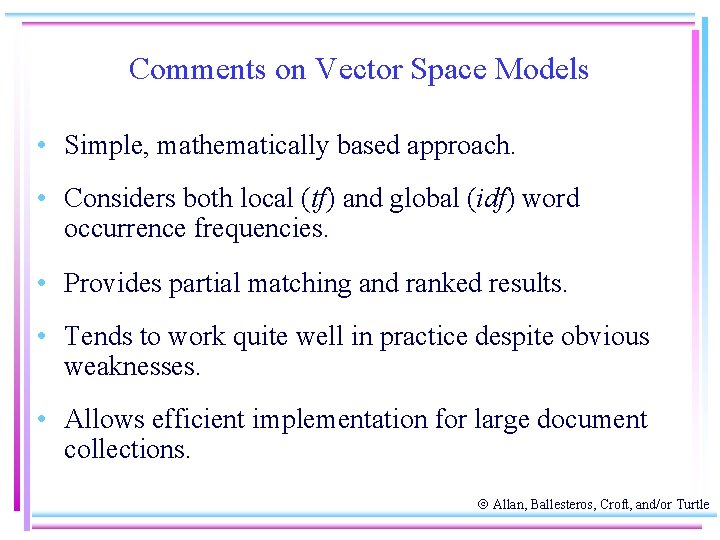

Comments on Vector Space Models
• Simple, mathematically based approach.
• Considers both local (tf) and global (idf) word occurrence frequencies.
• Provides partial matching and ranked results.
• Tends to work quite well in practice despite obvious weaknesses.
• Allows efficient implementation for large document collections.

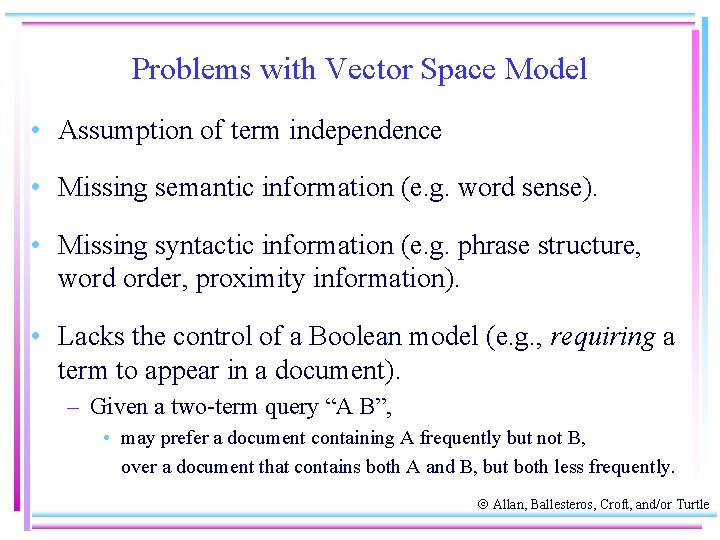

Problems with Vector Space Model
• Assumption of term independence.
• Missing semantic information (e.g. word sense).
• Missing syntactic information (e.g. phrase structure, word order, proximity information).
• Lacks the control of a Boolean model (e.g., requiring a term to appear in a document).
  – Given a two-term query “A B”, the model may prefer a document containing A frequently but not B over a document that contains both A and B, but both less frequently.
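
The last point can be reproduced with a small numeric example. The weights below are made up: the vocabulary is (A, B, C), the query weights A and B equally, one document mentions A often but never B, and the other mentions both A and B once. Cosine similarity is used as the ranking function.

```python
import math

def cos_sim(d, q):
    """Cosine similarity of two dense vectors."""
    dot = sum(a * b for a, b in zip(d, q))
    norm = math.sqrt(sum(a * a for a in d)) * math.sqrt(sum(b * b for b in q))
    return dot / norm if norm else 0.0

# Term order: A, B, C (illustrative weights, not from the slides).
query = [1, 1, 0]
only_a = [10, 0, 5]  # A frequent, B absent
both = [1, 1, 5]     # A and B present, each once

score_only_a = cos_sim(only_a, query)
score_both = cos_sim(both, query)
print(round(score_only_a, 3), round(score_both, 3))

def matches_and(doc):
    """Boolean 'A AND B': both terms must have a nonzero weight."""
    return doc[0] > 0 and doc[1] > 0

print(matches_and(only_a), matches_and(both))
```

The vector space ranking puts the document without B first, while the Boolean query A AND B would accept only the document that contains both terms.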
