Resource Management with Deep Reinforcement Learning Hongzi Mao

- Slides: 22

Resource Management with Deep Reinforcement Learning
Hongzi Mao, Mohammad Alizadeh, Ishai Menache, Srikanth Kandula

Resource management is ubiquitous
• Cluster scheduling
• Video streaming
• Virtual machine placement
• Congestion control
• Internet telephony
• …

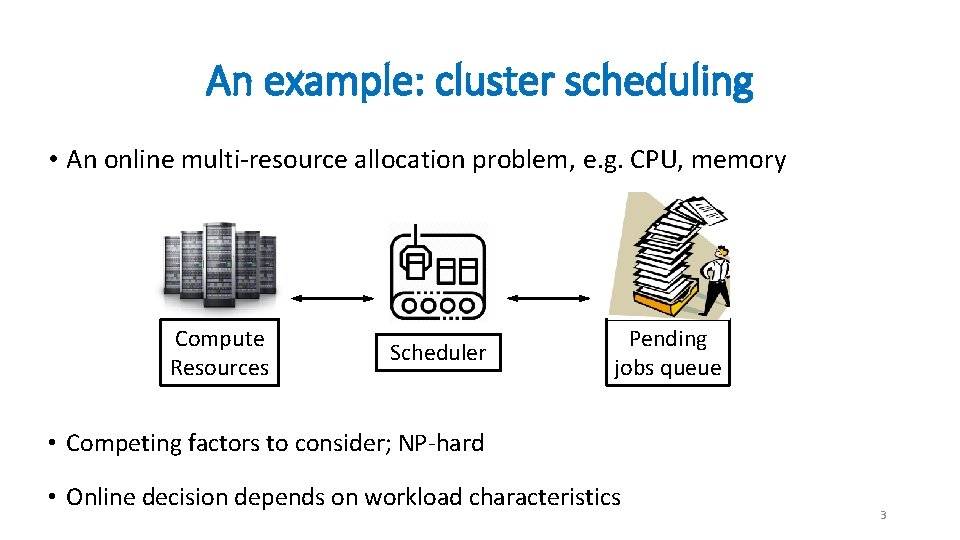

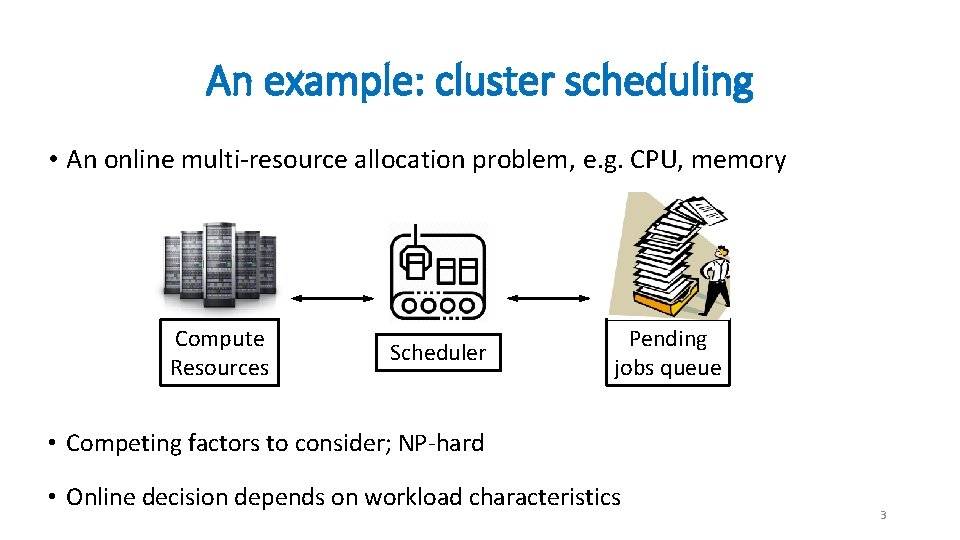

An example: cluster scheduling
• An online multi-resource allocation problem, e.g. CPU, memory
• Competing factors to consider; NP-hard
• Online decision depends on workload characteristics
[Diagram labels: pending jobs queue, scheduler, compute resources]

What people do today
1. Assume a simple model for the system
2. Come up with some clever heuristics
3. Painstakingly test and tune the heuristics in practice
Is there a way around human-generated heuristics?

Can systems learn to manage resources on their own?

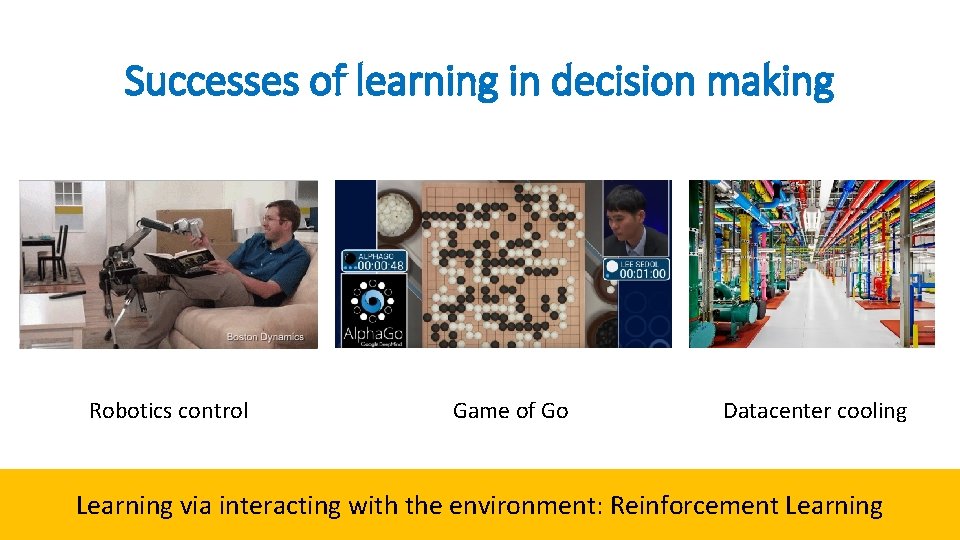

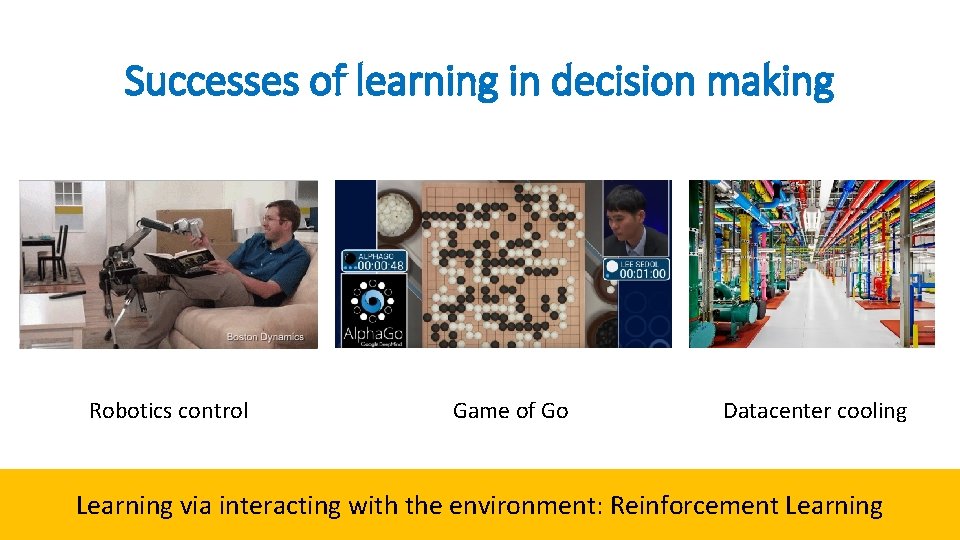

Successes of learning in decision making
• Robotics control
• Game of Go
• Datacenter cooling
Learning via interacting with the environment: Reinforcement Learning

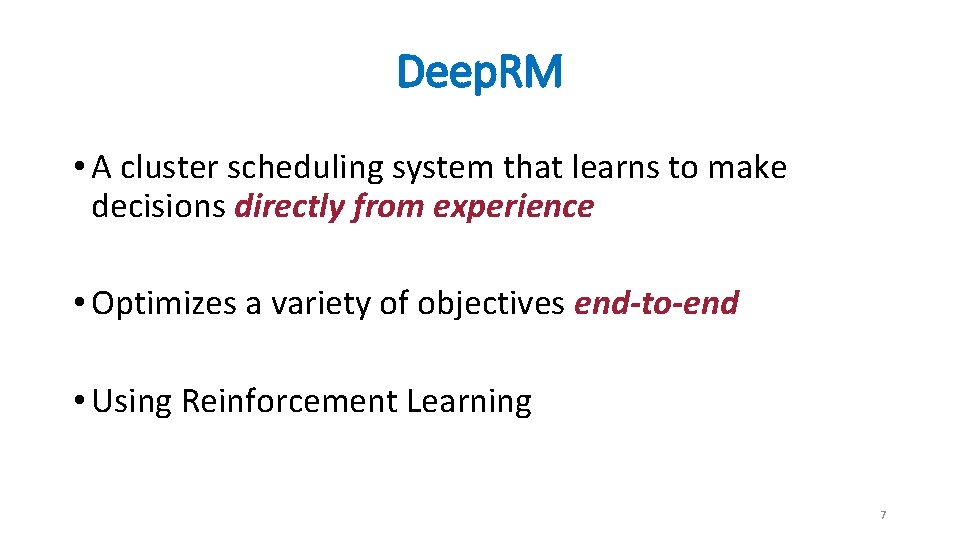

DeepRM
• A cluster scheduling system that learns to make decisions directly from experience
• Optimizes a variety of objectives end-to-end
• Using Reinforcement Learning

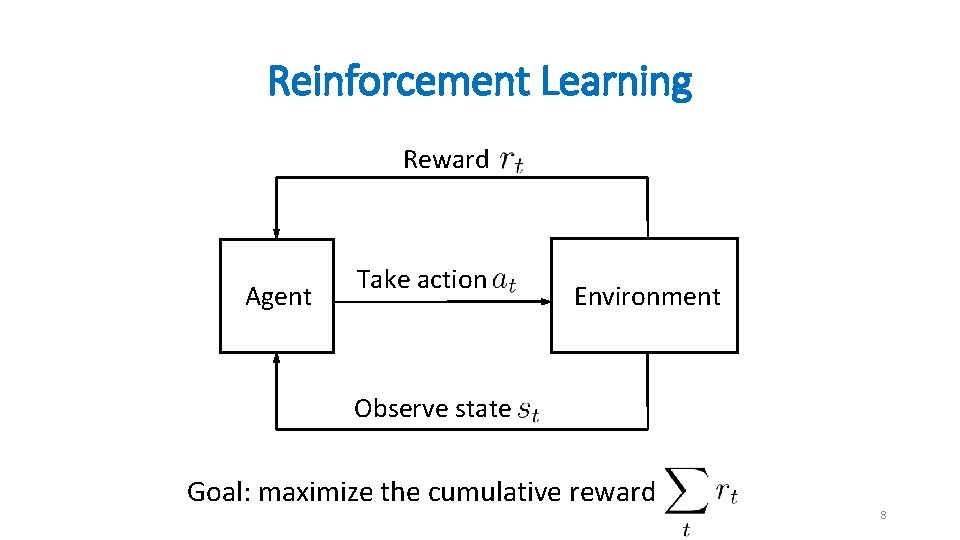

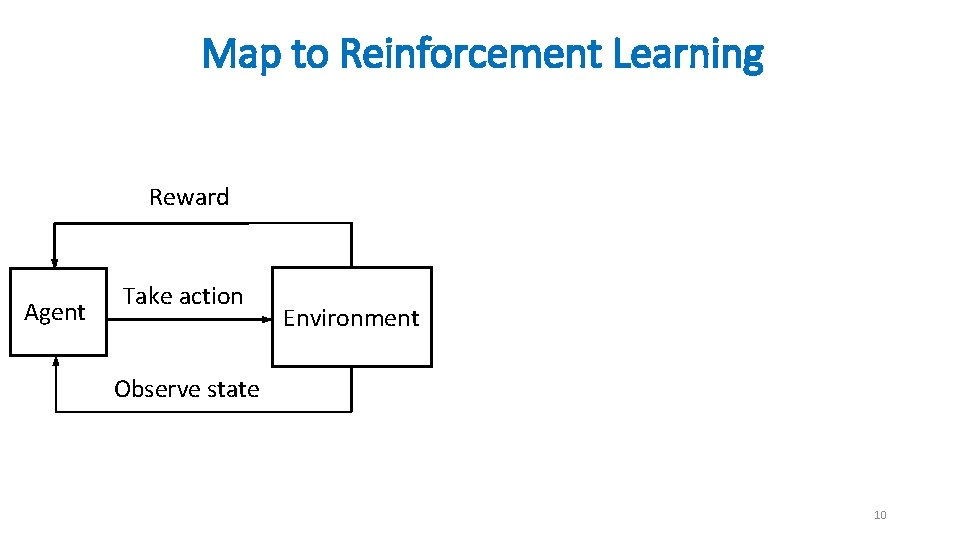

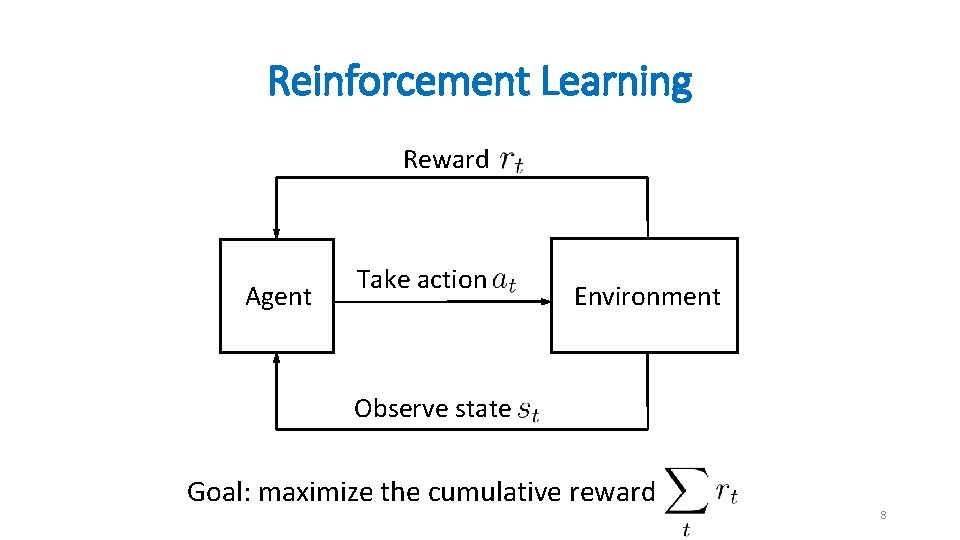

Reinforcement Learning
[Diagram: the agent observes the state of the environment, takes an action, and receives a reward]
Goal: maximize the cumulative reward
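The observe/act/reward loop can be sketched in a few lines. This is only an illustration: `ParityEnv`, its `reset`/`step` methods, and the two policies are hypothetical names made up for this example, not part of DeepRM.

```python
class ParityEnv:
    """Hypothetical toy environment: reward 1 when the action matches the state's parity."""

    def __init__(self, horizon=6):
        self.horizon = horizon
        self.state = 0

    def reset(self):
        self.state = 0
        return self.state

    def step(self, action):
        # The reward depends on the state the agent observed and the action it took.
        reward = 1.0 if action == self.state % 2 else 0.0
        self.state += 1
        done = self.state >= self.horizon
        return self.state, reward, done


def run_episode(env, policy):
    """Run one episode and return the cumulative (undiscounted) reward."""
    state = env.reset()
    total, done = 0.0, False
    while not done:
        action = policy(state)                  # agent takes an action
        state, reward, done = env.step(action)  # environment returns state and reward
        total += reward
    return total


good = run_episode(ParityEnv(), lambda s: s % 2)  # always matches the parity
bad = run_episode(ParityEnv(), lambda s: 0)       # always plays action 0
print(good, bad)
```

The agent's goal is to find the policy with the larger cumulative reward; here the first policy collects a reward at every step and the second only on even states.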

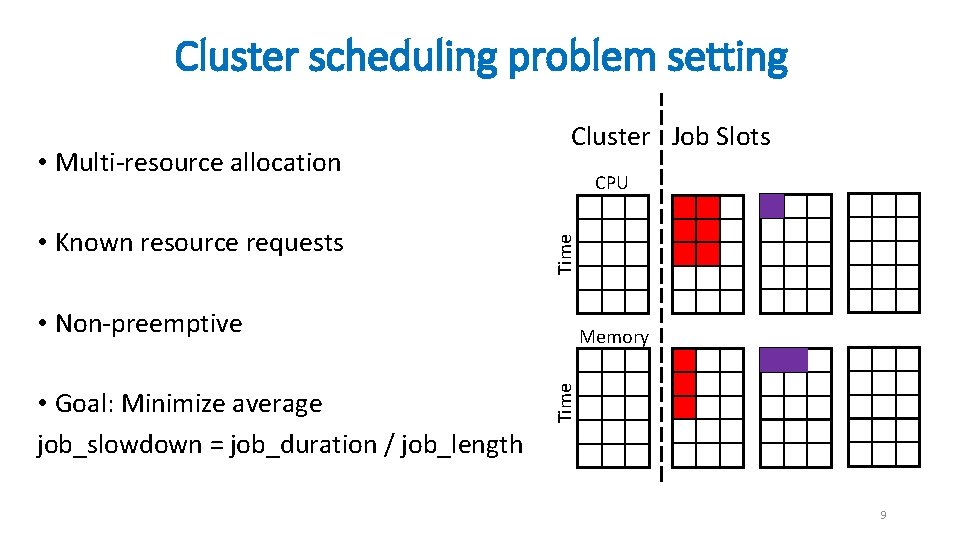

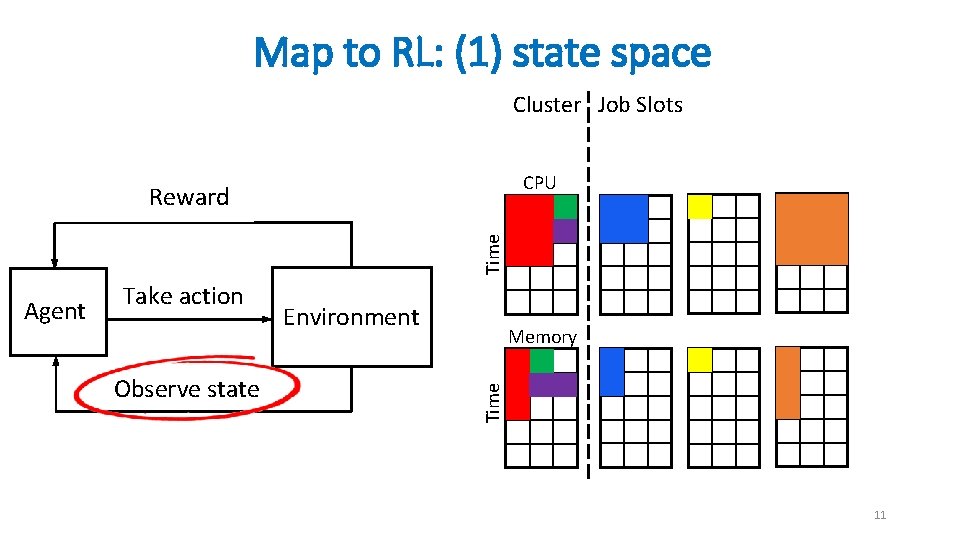

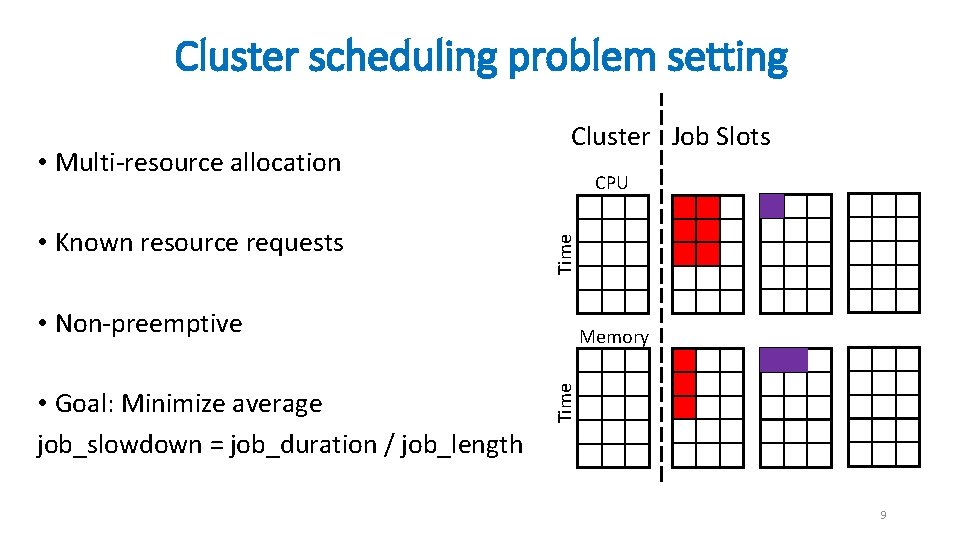

Cluster scheduling problem setting
• Known resource requests
• Multi-resource allocation
• Non-preemptive
• Goal: minimize average job_slowdown = job_duration / job_length
[Diagram: cluster CPU and memory usage over time, next to the job slots]

Map to Reinforcement Learning
[Diagram: the agent takes an action; the environment returns the observed state and a reward]

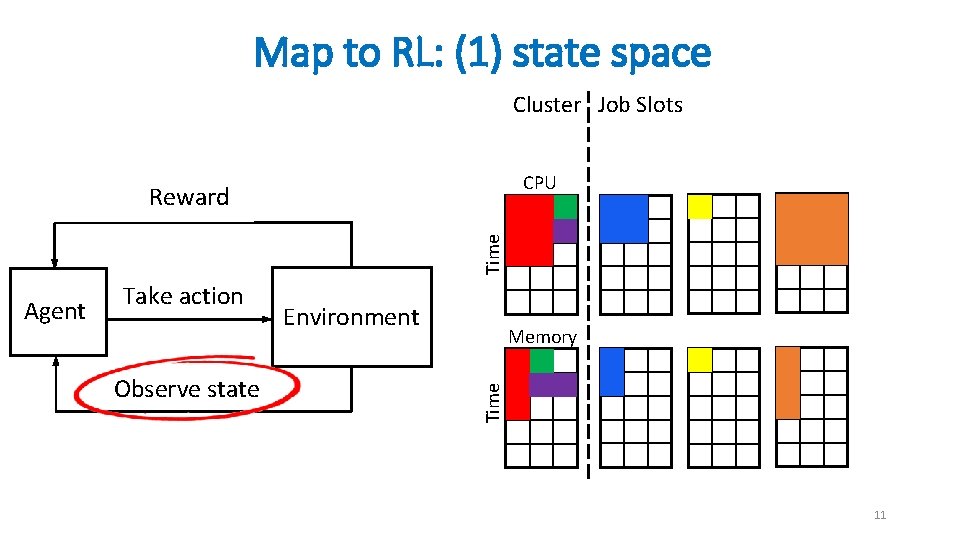

Map to RL: (1) state space
[Diagram: the observed state is the cluster's CPU and memory usage over time, together with the jobs waiting in the job slots]

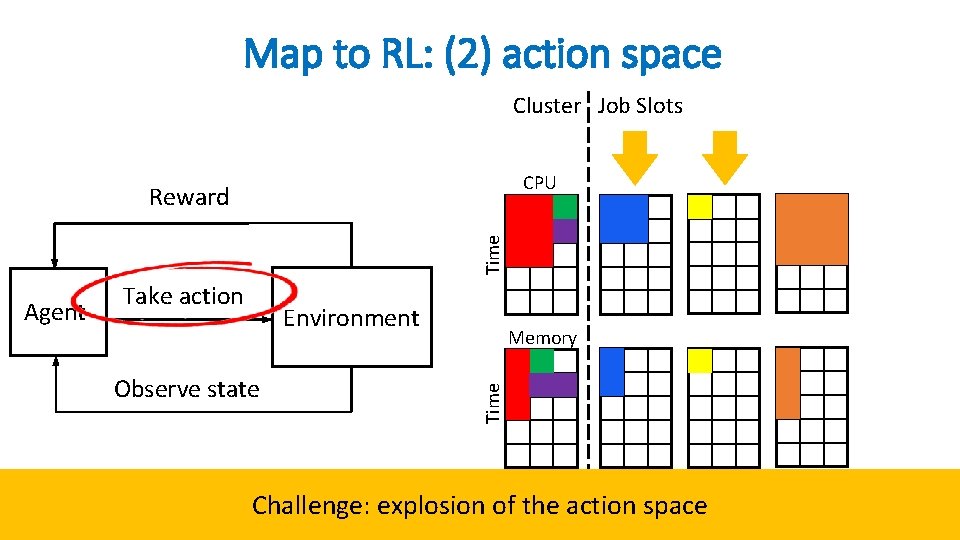

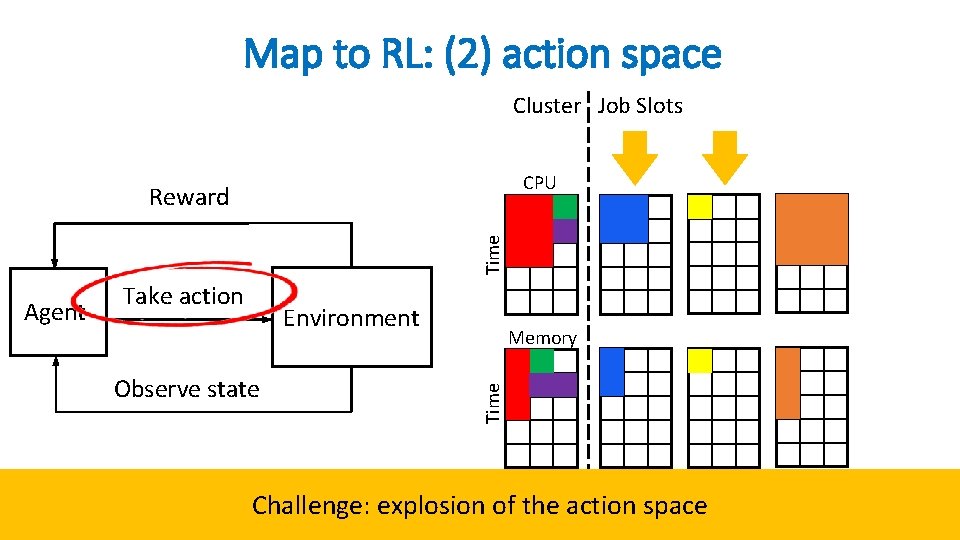

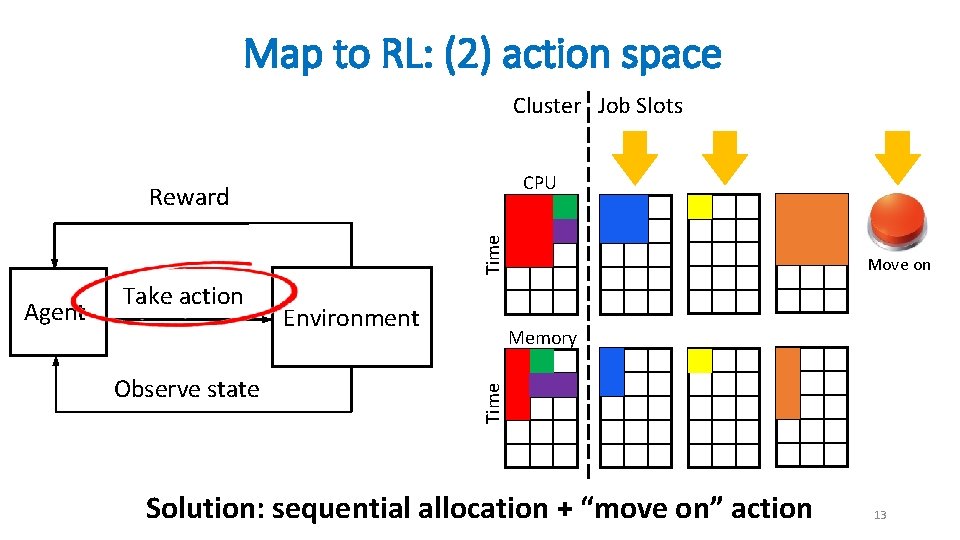

Map to RL: (2) action space
[Diagram: the agent's action acts on the job slots and the cluster's CPU and memory timelines]
Challenge: explosion of the action space

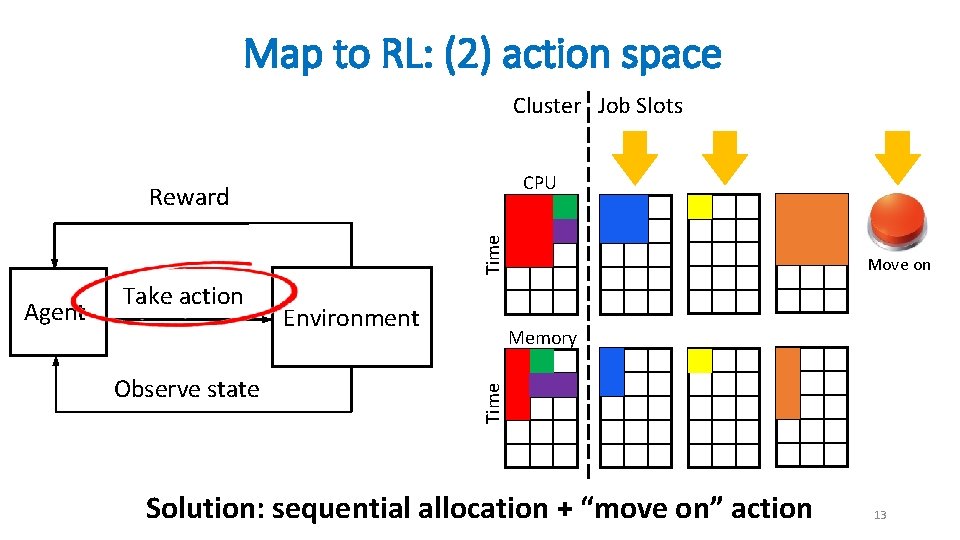

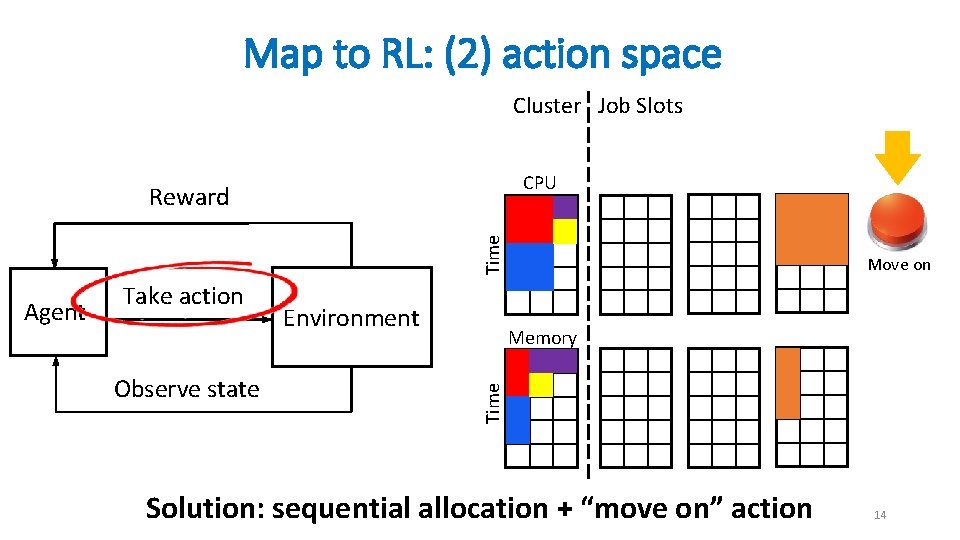

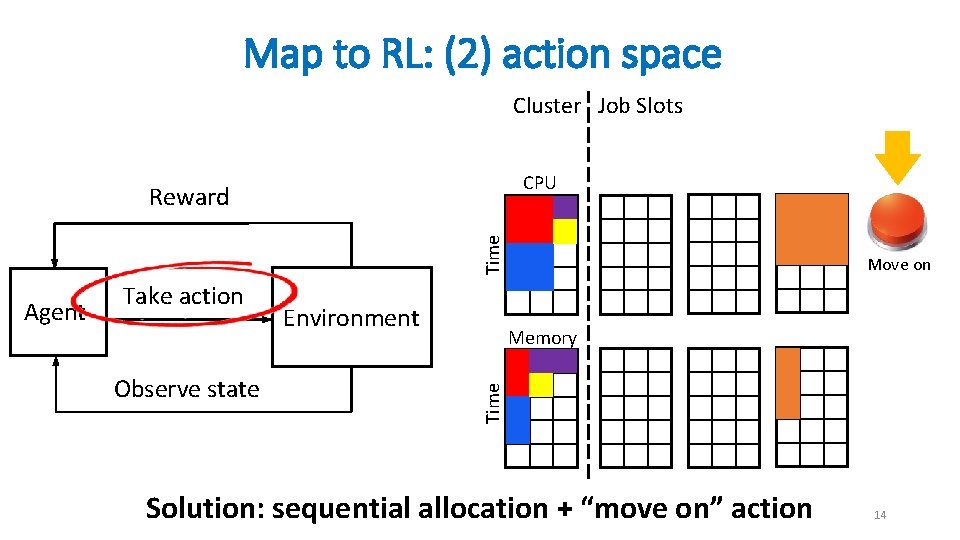

Map to RL: (2) action space
[Diagram: same agent/environment loop, with an added "move on" action]
Solution: sequential allocation + “move on” action
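A minimal sketch of sequential allocation, under simplifying assumptions: a single resource dimension, and a hand-written `smallest_fit` rule standing in for the learned policy. All names here (`MOVE_ON`, `schedule_timestep`, `smallest_fit`) are hypothetical. Picking any subset of M slots in one shot would need 2^M actions; picking one slot at a time, plus a "move on" action, needs only M + 1.

```python
MOVE_ON = -1  # hypothetical encoding of the "move on" action


def schedule_timestep(slots, free, choose):
    """Repeatedly ask the policy for one slot until it moves on or picks an invalid slot.

    slots:  resource demand per job slot (None marks an empty slot)
    free:   free capacity of the single resource in this sketch
    choose: policy mapping (slots, free) to a slot index or MOVE_ON
    """
    slots = list(slots)  # work on a copy
    scheduled = []
    while True:
        action = choose(slots, free)
        if action == MOVE_ON:
            break
        invalid = (
            not 0 <= action < len(slots)
            or slots[action] is None
            or slots[action] > free
        )
        if invalid:
            break  # treat an invalid pick like "move on"
        free -= slots[action]
        slots[action] = None
        scheduled.append(action)
    return scheduled, free


def smallest_fit(slots, free):
    """Stand-in policy: pick the smallest job that still fits, else move on."""
    fits = [(d, i) for i, d in enumerate(slots) if d is not None and d <= free]
    return min(fits)[1] if fits else MOVE_ON


picked, free_left = schedule_timestep([3, 1, 2], free=4, choose=smallest_fit)
n_joint_actions = 2 ** 3      # choose any subset of 3 slots at once
n_sequential_actions = 3 + 1  # choose one slot, or move on
print(picked, free_left, n_joint_actions, n_sequential_actions)
```

Time advances only after the loop ends, so several jobs can still be scheduled in the same time step even though each individual decision selects at most one.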


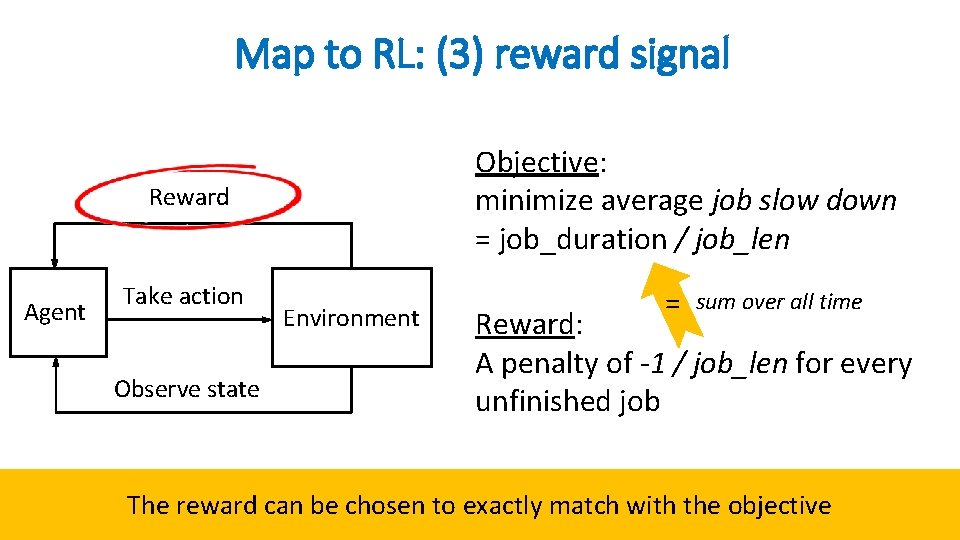

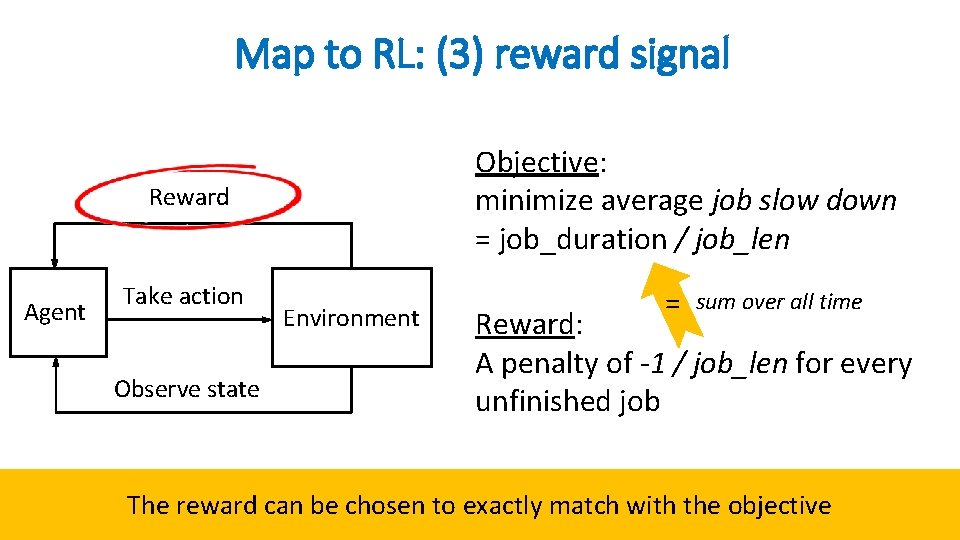

Map to RL: (3) reward signal
Objective: minimize average job slowdown = job_duration / job_len
Reward: at every time step, a penalty of -1 / job_len for every unfinished job
Summed over all time steps, the reward equals the negative total job slowdown, so the reward can be chosen to match the objective exactly.
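The match between reward and objective can be checked numerically. The sketch below assumes discrete time steps, no discounting, and that job_duration means the time from a job's arrival to its completion; the job list is made-up example data.

```python
def episode_return(jobs, horizon):
    """Sum the per-step penalties: -1/length for every job that has arrived but not finished.

    jobs: list of (arrival, finish, length) tuples in time steps.
    """
    total = 0.0
    for t in range(horizon):
        total += sum(
            -1.0 / length
            for arrival, finish, length in jobs
            if arrival <= t < finish
        )
    return total


# Made-up jobs: (arrival step, finish step, job length)
jobs = [(0, 4, 2), (0, 3, 3), (1, 6, 1)]

ret = episode_return(jobs, horizon=6)
total_slowdown = sum((finish - arrival) / length for arrival, finish, length in jobs)
print(ret, total_slowdown)
```

Each job contributes -1/length for every step it spends in the system, i.e. -(duration / length) in total, which is exactly its negative slowdown. For a fixed set of jobs, maximizing the return therefore minimizes the average slowdown.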

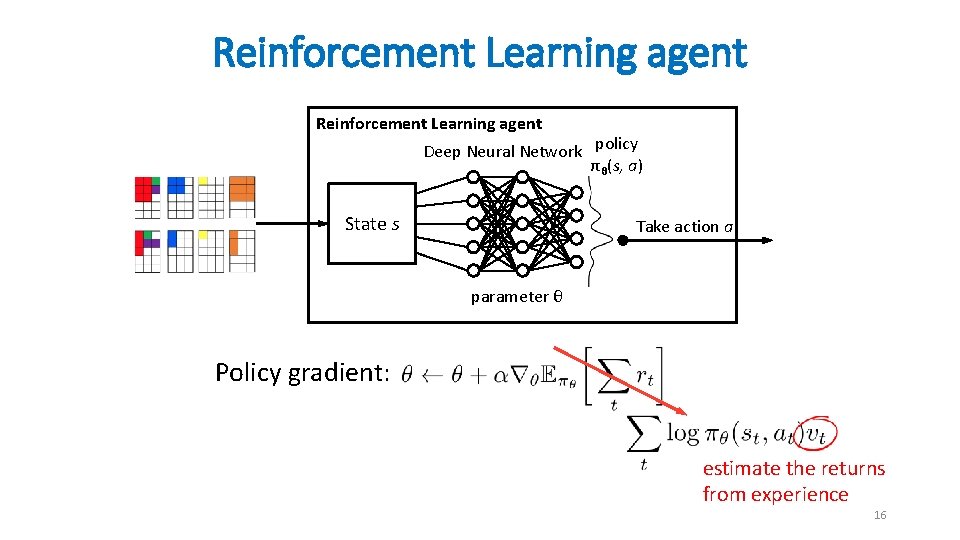

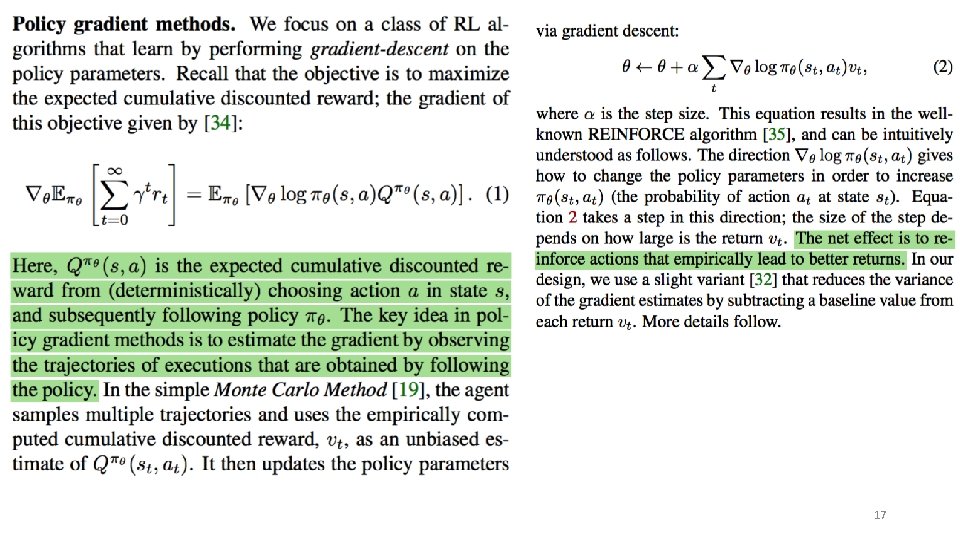

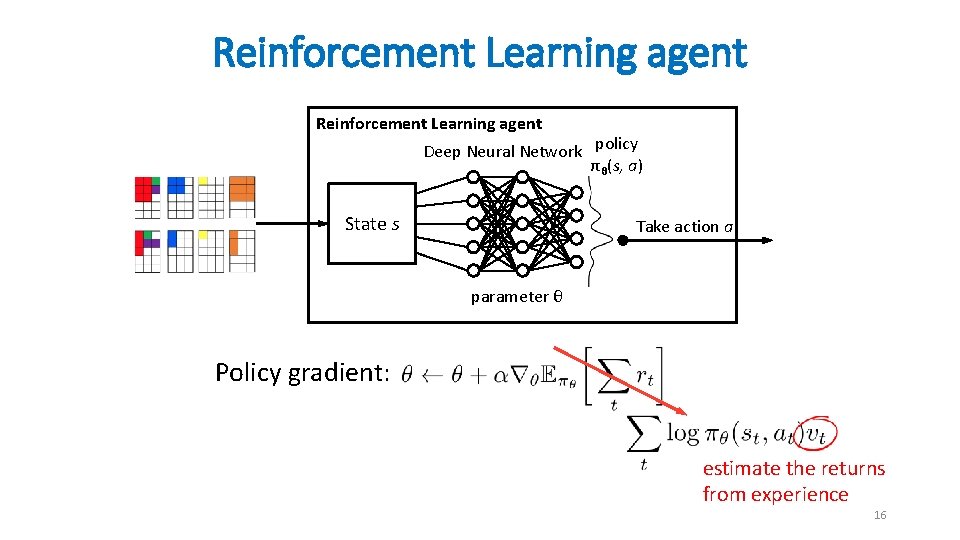

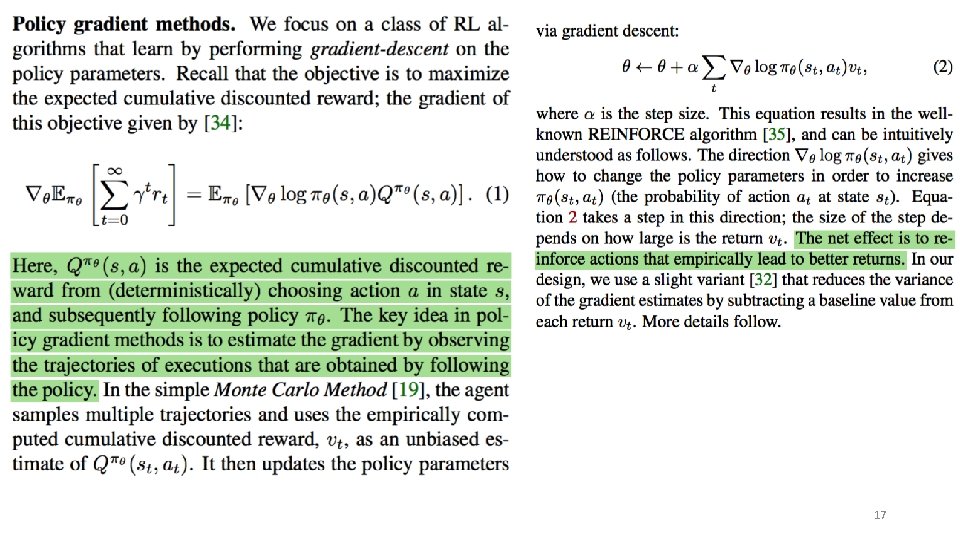

Reinforcement Learning agent
Policy πθ(s, a): a deep neural network with parameters θ that observes state s and takes action a
Policy gradient: estimate the returns from experience
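To make the policy-gradient idea concrete, here is a minimal REINFORCE-style sketch. It is an illustration under stated assumptions, not DeepRM's implementation: a tabular softmax policy stands in for the deep neural network, the two-state toy task is hypothetical, and the per-time-step batch-average baseline is a common variance-reduction choice assumed here.

```python
import math
import random


def softmax(logits):
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    z = sum(exps)
    return [e / z for e in exps]


def run_episode(theta, rng, horizon=4):
    """Hypothetical toy task: state is t % 2; reward 1 when the action equals the state."""
    traj = []
    for t in range(horizon):
        s = t % 2
        probs = softmax(theta[s])
        a = 0 if rng.random() < probs[0] else 1  # sample from the policy
        r = 1.0 if a == s else 0.0
        traj.append((s, a, r))
    return traj


def returns(traj):
    """Return-to-go at each step: sum of rewards from that step to the end."""
    g, out = 0.0, []
    for _, _, r in reversed(traj):
        g += r
        out.append(g)
    return out[::-1]


def train(iterations=300, batch=8, lr=0.1, seed=0):
    rng = random.Random(seed)
    theta = [[0.0, 0.0], [0.0, 0.0]]  # theta[state][action] are the policy logits
    for _ in range(iterations):
        trajs = [run_episode(theta, rng) for _ in range(batch)]
        rets = [returns(tr) for tr in trajs]
        horizon = len(trajs[0])
        # Baseline: average return at each time step across the batch.
        baseline = [sum(r[t] for r in rets) / batch for t in range(horizon)]
        grad = [[0.0, 0.0], [0.0, 0.0]]
        for tr, ret in zip(trajs, rets):
            for t, (s, a, _) in enumerate(tr):
                probs = softmax(theta[s])
                adv = ret[t] - baseline[t]
                for k in range(2):
                    # d/d theta[s][k] of log softmax(theta[s])[a]
                    grad[s][k] += adv * ((1.0 if k == a else 0.0) - probs[k])
        for s in range(2):
            for k in range(2):
                theta[s][k] += lr * grad[s][k] / batch  # gradient ascent on return
    return theta


theta = train()
p_state0 = softmax(theta[0])
p_state1 = softmax(theta[1])
print(p_state0, p_state1)
```

The returns are estimated purely from sampled episodes; actions followed by higher-than-average returns become more likely, and no model of the environment is needed.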


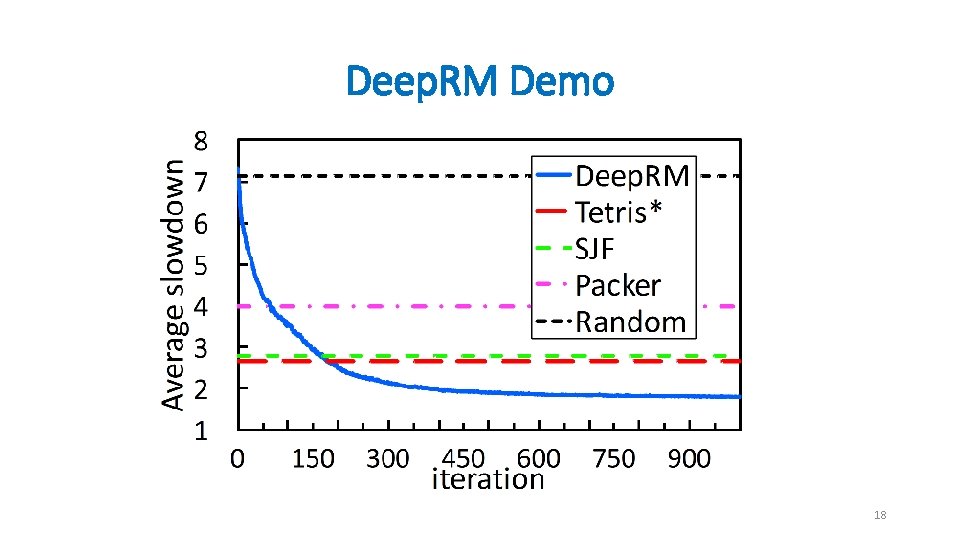

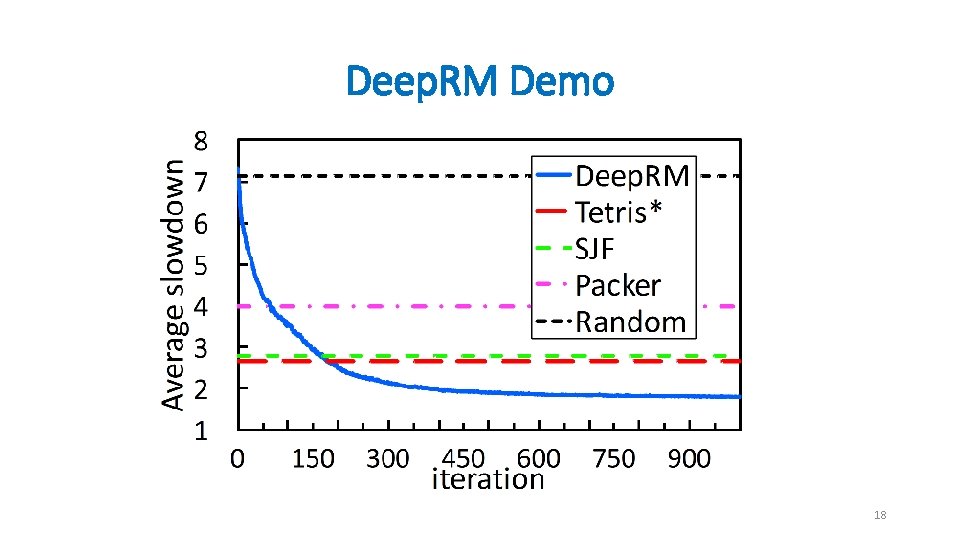

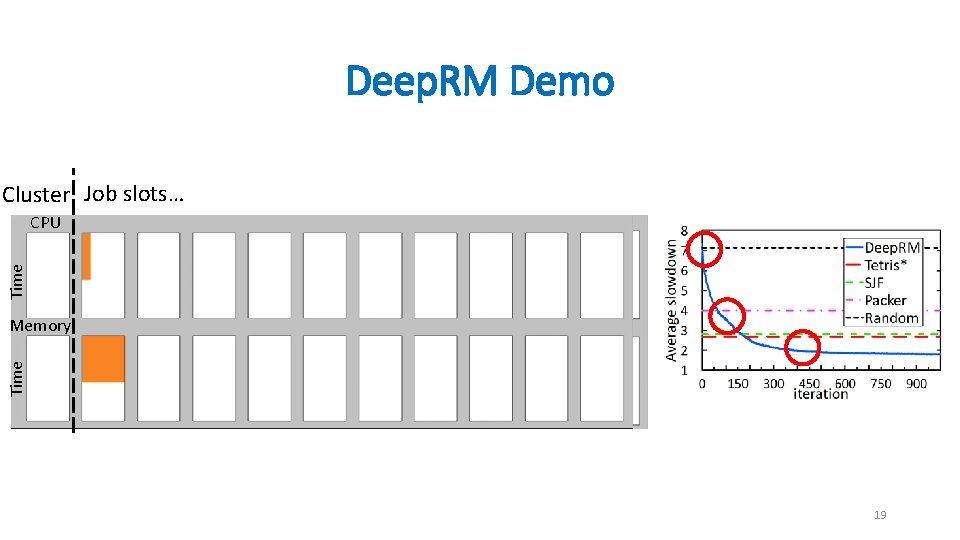

DeepRM Demo

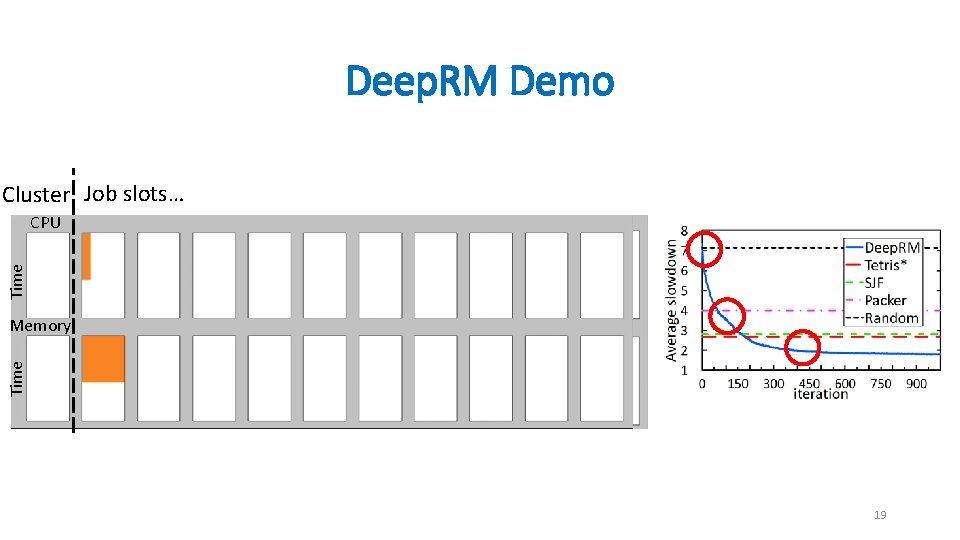

DeepRM Demo
[Demo screen labels: cluster CPU and memory over time; job slots…]

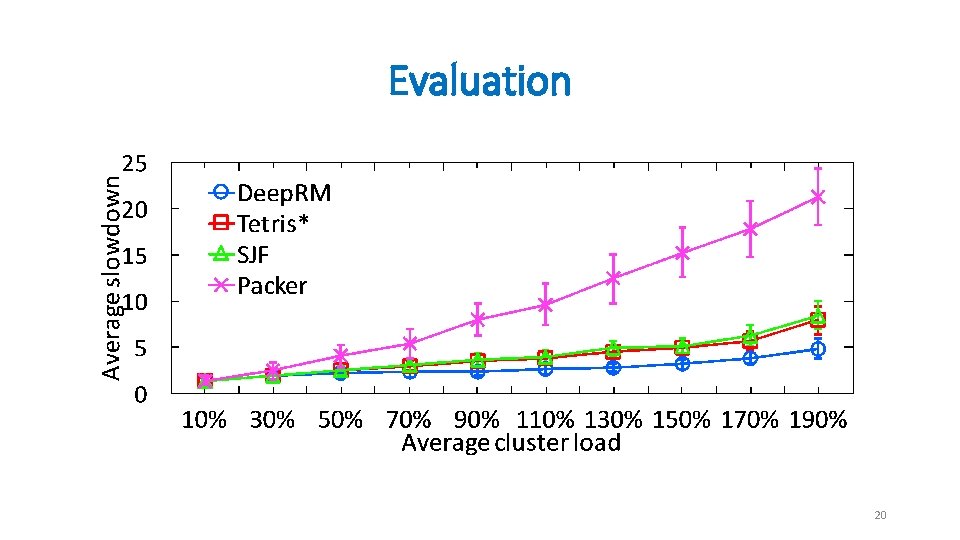

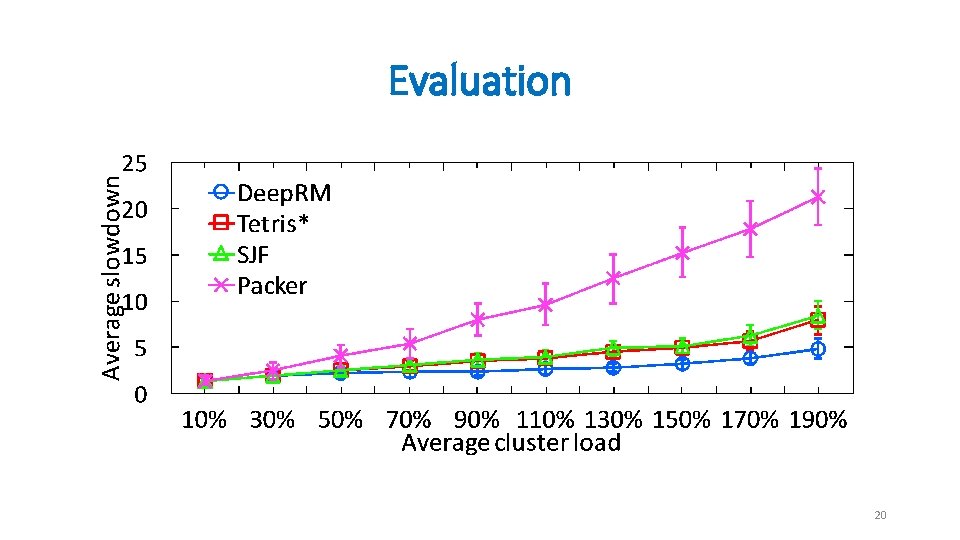

Evaluation

Where does the gain come from?
• DeepRM is actually non-work-conserving
• Recall that scheduling is non-preemptive
• DeepRM holds big jobs to make room for future small jobs
DeepRM learns this strategy purely from experience
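A small worked example shows why holding a big job can pay off when scheduling is non-preemptive. The numbers are made up for illustration, and the sketch assumes a single machine that runs one job at a time, with slowdown measured from arrival to completion.

```python
def average_slowdown(jobs, start_times):
    """jobs: name -> (arrival, length); start_times: name -> step at which the job starts."""
    total = 0.0
    for name, (arrival, length) in jobs.items():
        finish = start_times[name] + length
        total += (finish - arrival) / length
    return total / len(jobs)


# A big job arrives at t=0; a small job arrives one step later.
jobs = {"big": (0, 10), "small": (1, 1)}

# Work-conserving: start the big job immediately; the small job waits until t=10.
work_conserving = average_slowdown(jobs, {"big": 0, "small": 10})

# Non-work-conserving: leave the machine idle at t=0, run the small job at t=1,
# then start the big job at t=2.
holding = average_slowdown(jobs, {"big": 2, "small": 1})

print(work_conserving, holding)
```

In the work-conserving schedule the small job's slowdown is 10 and the average is 5.5; holding the big job costs it only a slowdown of 1.2 and brings the average down to 1.1. A scheduler cannot know the small job is coming, but a policy trained on a workload where small jobs arrive often can learn that waiting is worthwhile.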

Conclusions
• Learning system for cluster scheduling
• DeepRM’s algorithms are applicable to other problems
• Challenges:
  • How do humans interpret the policy?
  • Does it work in non-stationary scenarios?
  • Safety issues: what if it goes wrong?
  • …