Resilient Overlay Networks
David Andersen, Hari Balakrishnan, Frank Kaashoek and Robert Morris, MIT Laboratory for Computer Science
http://nms.lcs.mit.edu/ron/
Rohit Kulkarni, University of Southern California, CSCI 558L: Fall 2004

Outline
- Introduction
- What is RON?
- Design Goals
- RON design
- Evaluation
- Related Work
- Future Work
- Conclusion

Introduction
- Current organization of the Internet
  - Independently operating ASes peer together
  - Detailed routing information only within an AS
  - Shared routing information filtered using BGP provides policy enforcement and scalability
- Problems with this organization
  - Reduced fault-tolerance of e2e communication
  - Fault recovery takes many minutes
  - Vulnerable to router and link faults, configuration errors...

![Introduction [2]: Other studies](http://slidetodoc.com/presentation_image_h2/f47d5478e7567418161f09fa5415a0bd/image-4.jpg)

Introduction [2]: Other studies
- Studies highlighting problems
  - “Delayed routing convergence”: inter-domain routers take tens of minutes to reach a consistent view after a fault
  - “e2e routing behavior”: routing faults prevent Internet hosts from communicating up to 3.3% of the time, averaged over a long period
  - “e2e WAN availability”: 5% of all detected failures last more than 10,000 secs (about 2 hrs 45 mins)
- Studies trying to solve problems
  - “Multi-homing”: addressing issues with active connections. Still no quick fault recovery
  - “Detour”: path selection in the Internet is sub-optimal w.r.t. latency, loss rate, throughput. Showed benefits of indirect routing
- No wide-area system that provides quick failure-recovery

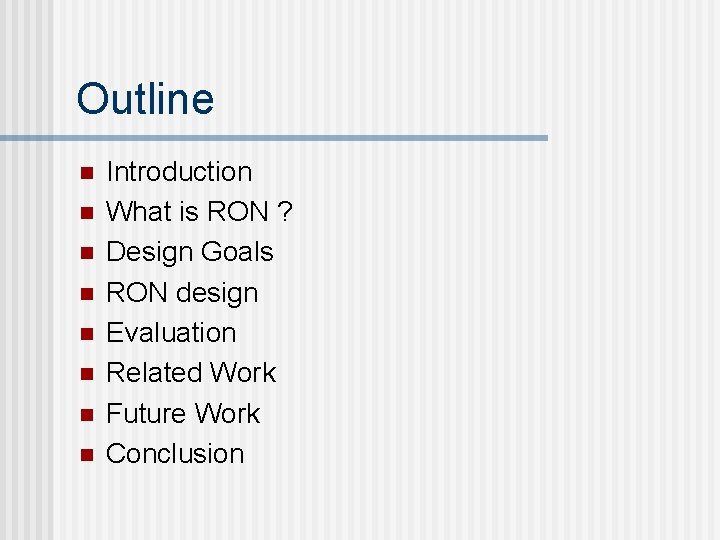

What is RON?
- Resilient: “to recover readily from adversity”
- Overlay Network: “an isolated virtual network deployed over an existing physical network”

Figure taken from X-bone project [2]

![What is RON? [2]](http://slidetodoc.com/presentation_image_h2/f47d5478e7567418161f09fa5415a0bd/image-6.jpg)

What is RON? [2]
- “RON is an architecture that allows distributed Internet applications to detect and recover from path outages and periods of degraded performance within several seconds”
- An application layer overlay on top of existing Internet
- RON nodes monitor Internet paths among themselves
  - Functioning
  - Quality
- Route packets directly over internet or using other RON nodes
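The choice between the direct Internet path and a path through another RON node can be sketched as below. This is only an illustration under stated assumptions: the `best_path` function, the `cost` table keyed by node pairs, and the use of summed latency as the cost are all hypothetical, and a link missing from the table is taken to be down.

```python
from typing import Dict, List, Optional, Tuple

# Hypothetical cost table: cost[(a, b)] is the measured latency (ms) of the
# virtual link a -> b. A missing entry means the link is considered down.
Cost = Dict[Tuple[str, str], float]


def best_path(src: str, dst: str, nodes: List[str], cost: Cost) -> Optional[List[str]]:
    """Return the cheapest path that uses at most one intermediate node."""
    best: Optional[List[str]] = None
    best_cost = float("inf")

    # Candidate 1: the direct Internet path.
    if (src, dst) in cost:
        best, best_cost = [src, dst], cost[(src, dst)]

    # Candidates 2..n: paths through exactly one other RON node.
    for mid in nodes:
        if mid in (src, dst):
            continue
        if (src, mid) in cost and (mid, dst) in cost:
            total = cost[(src, mid)] + cost[(mid, dst)]
            if total < best_cost:
                best, best_cost = [src, mid, dst], total

    return best
```

With the direct link absent from `cost`, the function falls back to whichever intermediate node gives the lowest combined cost, and returns `None` when no path exists.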

Design Goals
- Goal 1: Fast failure detection and recovery
  - Detection by using aggressive probing
  - Recovery by forwarding via intermediate RON nodes
- Goal 2: Integrate routing & path selection w/ applications
  - Application-specific notions of failure
  - Application-specific metrics in path selection
- Goal 3: Framework for policy routing
  - Fine-grained policies aimed at users or hosts
  - E.g. e2e per-user rate control, forwarding rate controls based on packet classification
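Probe-based failure detection (Goal 1) might look like the sketch below. It is not the RON implementation: the function name `first_outage_index` and the threshold of four consecutive lost probes are assumptions chosen for the example.

```python
from typing import Optional, Sequence


def first_outage_index(probe_ok: Sequence[bool], threshold: int = 4) -> Optional[int]:
    """Return the index of the probe at which an outage would be declared.

    probe_ok[i] is True if probe i was answered. The default threshold of
    4 consecutive losses is a hypothetical value, not taken from the slides.
    """
    consecutive_losses = 0
    for i, ok in enumerate(probe_ok):
        # Any answered probe resets the loss streak.
        consecutive_losses = 0 if ok else consecutive_losses + 1
        if consecutive_losses >= threshold:
            return i
    return None
```

A lower threshold detects outages sooner but is more likely to mistake isolated packet loss for a failure.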

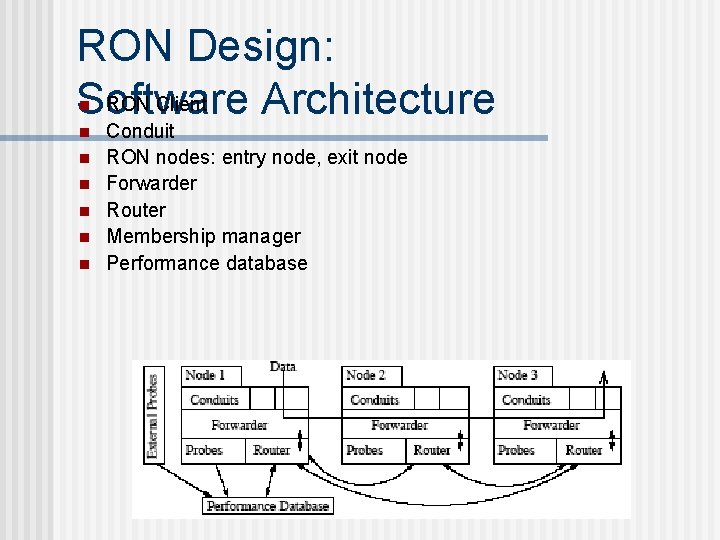

RON Design: RON Client Software Architecture
- Conduit
- RON nodes: entry node, exit node
- Forwarder
- Router
- Membership manager
- Performance database

![Design [2]: Routing and Path Selection](http://slidetodoc.com/presentation_image_h2/f47d5478e7567418161f09fa5415a0bd/image-9.jpg)

Design [2]: Routing and Path Selection
- Link-state propagation
  - Link-state routing protocol
  - Routing protocol is itself a RON client with its own packet type
- Path evaluation and selection
  - Outage detection using active probing
  - Routing metrics
    - Latency-minimizer: uses EWMA of round-trip latency samples w/ parameter 0.9
    - Loss-minimizer: uses avg of last k (100) probe samples
    - TCP throughput-optimizer: combines latency and loss rate metrics using simplified TCP throughput equation
- Performance database
  - Detailed performance information
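The three routing metrics can be sketched per virtual link as follows. Several details here are assumptions rather than statements from the slides: the 0.9 weight is applied to the running average (not the new sample), the simplified throughput score is taken to be sqrt(1.5) / (rtt * sqrt(p)), and the `min_loss` floor is a hypothetical guard against division by zero. The class and method names are illustrative.

```python
import math
from collections import deque
from typing import Optional


class LinkStats:
    """Per-link probe statistics feeding the three routing metrics."""

    def __init__(self, alpha: float = 0.9, window: int = 100) -> None:
        self.alpha = alpha                      # EWMA weight on the old average
        self.latency: Optional[float] = None    # smoothed round-trip time, seconds
        self.probes = deque(maxlen=window)      # True = probe answered

    def record(self, rtt: Optional[float]) -> None:
        """Record one probe; rtt is in seconds, or None if the probe was lost."""
        self.probes.append(rtt is not None)
        if rtt is None:
            return
        if self.latency is None:
            self.latency = rtt
        else:
            self.latency = self.alpha * self.latency + (1 - self.alpha) * rtt

    def loss_rate(self) -> float:
        """Fraction of lost probes among the last `window` probes."""
        if not self.probes:
            return 0.0
        return self.probes.count(False) / len(self.probes)

    def throughput_score(self, min_loss: float = 0.02) -> float:
        """Simplified TCP throughput score; higher is better."""
        if self.latency is None or self.latency <= 0:
            return 0.0
        p = max(self.loss_rate(), min_loss)     # hypothetical floor on loss rate
        return math.sqrt(1.5) / (self.latency * math.sqrt(p))
```

A latency-minimizing router would compare `latency` across candidate paths, a loss-minimizing one would compare `loss_rate()`, and a throughput-optimizing one would compare `throughput_score()`.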

![Design [3]: Policy routing](http://slidetodoc.com/presentation_image_h2/f47d5478e7567418161f09fa5415a0bd/image-10.jpg)

Design [3]: Policy routing
- Allows users to define types of traffic allowed on network links
- Classification
  - Data classifier module
  - Incoming (via conduit) packets get policy tag
  - Tag identifies set of routing tables to be used
- Routing table formation
  - Policy identifies which virtual links to use
  - Separate set of routing tables for each policy
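The tag-then-lookup flow above can be sketched as below. Everything named here is hypothetical: the node names, the two policy tags, the `I2_NODES` set, and the rule that only traffic between two Internet-2 hosts may use Internet-2 links are illustrative assumptions, not the deck's actual policy definitions.

```python
from typing import Callable, Dict, Tuple

Link = Tuple[str, str]

# Hypothetical set of nodes connected to Internet-2.
I2_NODES = {"univ-a", "univ-b"}


def is_i2_link(link: Link) -> bool:
    """A virtual link counts as Internet-2 if both endpoints are I2 nodes."""
    return link[0] in I2_NODES and link[1] in I2_NODES


# Each policy tag maps to a predicate saying which virtual links it may use.
POLICIES: Dict[str, Callable[[Link], bool]] = {
    "research": lambda link: True,
    "commercial": lambda link: not is_i2_link(link),
}


def build_tables(links: Dict[Link, float]) -> Dict[str, Dict[Link, float]]:
    """Form one routing table per policy, holding only the permitted links."""
    return {
        tag: {link: c for link, c in links.items() if allowed(link)}
        for tag, allowed in POLICIES.items()
    }


def classify(packet: Dict[str, str]) -> str:
    """Data classifier: assign a policy tag to an incoming packet."""
    if packet["src"] in I2_NODES and packet["dst"] in I2_NODES:
        return "research"
    return "commercial"


def table_for(packet: Dict[str, str], tables: Dict[str, Dict[Link, float]]) -> Dict[Link, float]:
    """The tag selects which routing table the forwarder consults."""
    return tables[classify(packet)]
```

Keeping a separate table per policy means path selection itself does not need to know about policies; it simply runs over the links present in the selected table.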

![Design [4]: Data forwarding](http://slidetodoc.com/presentation_image_h2/f47d5478e7567418161f09fa5415a0bd/image-11.jpg)

Design [4]: Data forwarding
- The RON packet header
- The forwarding control and data paths

Evaluation: Methodology
- Wide-area RON deployed at several Internet sites
  - ISP, US Univs (I-2), Euro Univs, broadband home users, .coms
  - Internet-2 (I-2) policy for more Internet-like measurements
- Measurements using probe packets, throughput samples
- 2 datasets
  - RON1: 12 nodes, 132 paths
    - 2.6 million samples, 64 hrs trace in March 2001
  - RON2: 16 nodes, 240 paths
    - 3.5 million samples, 85 hrs trace in May 2001
- Time-averaged samples averaged over 30 mins duration
- Most RON hosts were: Celeron/733, 256 MB RAM, 9 GB HDD, FreeBSD
  - No host was a processing bottleneck
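The path counts follow from the node counts if every ordered (source, destination) pair is treated as a separate path, which is an assumption consistent with the numbers on the slide. A quick check, with a hypothetical helper name:

```python
def directed_paths(nodes: int) -> int:
    """Number of ordered (source, destination) pairs among `nodes` hosts."""
    return nodes * (nodes - 1)


for name, n in (("RON1", 12), ("RON2", 16)):
    print(name, directed_paths(n))  # RON1 132, then RON2 240
```

The quadratic growth in monitored paths is also why the size of a single RON has to stay limited.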

![Evaluation [2]: Overcoming path outages](http://slidetodoc.com/presentation_image_h2/f47d5478e7567418161f09fa5415a0bd/image-13.jpg)

Evaluation [2]: Overcoming path outages
- Outage data for RON1
- Packet loss rate averaged over 30-min intervals for direct Internet paths vs. RON paths for RON1
- Outage data for RON2

![Evaluation [3]: Improving loss rates](http://slidetodoc.com/presentation_image_h2/f47d5478e7567418161f09fa5415a0bd/image-14.jpg)

Evaluation [3]: Improving loss rates
- CDF of improvement in loss rate achieved by RON1. Samples are averaged over 30 mins

![Evaluation [4]: Improving latency](http://slidetodoc.com/presentation_image_h2/f47d5478e7567418161f09fa5415a0bd/image-15.jpg)

Evaluation [4]: Improving latency
- 5-minute avg latencies over direct Internet path and over RON, as CDF

![Evaluation [5]: Improving throughput](http://slidetodoc.com/presentation_image_h2/f47d5478e7567418161f09fa5415a0bd/image-16.jpg)

Evaluation [5]: Improving throughput
- CDF of the ratio of throughput achieved via RON to that achieved directly via the Internet

![Evaluation [6]: Route Stability](http://slidetodoc.com/presentation_image_h2/f47d5478e7567418161f09fa5415a0bd/image-17.jpg)

Evaluation [6]: Route Stability
- Figure: number of path changes and run-lengths of routing persistence for different hysteresis values
- Link-state routing table snapshots every 14 seconds. Total 5616 snapshots
- RON’s path selection algorithms run on the link-state trace
- This shows hysteresis is needed for route stability
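The effect of hysteresis on a replayed trace can be illustrated with the sketch below. The switching rule (move to a new path only if it beats the current one by more than a fraction `hysteresis`), the function name, and the snapshot format are assumptions for illustration, not the evaluation's actual procedure.

```python
from typing import Dict, List, Optional


def count_path_changes(trace: List[Dict[str, float]], hysteresis: float) -> int:
    """Replay cost snapshots and count how often the selected path changes.

    Each snapshot maps a path name to its cost (lower is better).
    """
    current: Optional[str] = None
    changes = 0
    for snapshot in trace:
        best = min(snapshot, key=snapshot.get)
        if current is None:
            current = best                  # initial choice is not a change
        elif current not in snapshot:
            current = best                  # current path disappeared: must switch
            changes += 1
        elif best != current and snapshot[best] < snapshot[current] * (1 - hysteresis):
            current = best                  # improvement exceeds the hysteresis margin
            changes += 1
    return changes
```

On a trace where two paths trade places by a percent or two in each snapshot, a hysteresis of 0 switches on every snapshot, while a 5% margin keeps the route fixed.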

![Evaluation [7]: Major results](http://slidetodoc.com/presentation_image_h2/f47d5478e7567418161f09fa5415a0bd/image-18.jpg)

Evaluation [7]: Major results
- RON was able to successfully detect and recover from 100% (in RON1) and 60% (in RON2) of all complete outages and all periods of sustained high loss rates of 30% or more
- RON takes 18 seconds, on average, to route around a failure and can do so in the face of a flooding attack
- RON successfully routed around bad throughput failures, doubling TCP throughput in 5% of all samples
- In 5% of the samples, RON reduced the loss probability by 0.05 or more
- Single-hop route indirection captured the majority of benefits in our RON deployment, for both outage recovery and latency optimization

Related Work
- X-Bone
  - Generic framework for speedy deployment of IP-based overlay networks
  - Management functions & mechanisms to insert packets into overlay
  - No fault-tolerant operation: no outage detection
  - No application-controlled path selection
- Detour
  - Showed benefits of indirect routing
  - Kernel-level system: not closely tied to application
  - Focus not on quick failure-recovery for preventing disruptions
  - No experimental results analysis from a real-world deployment

Future Work
- Preventing misuse of established RON
  - Cryptographic authentication and access control
- Mechanisms to detect misbehaving RON peers
  - Just at administrative level not enough
- Scalability/widespread deployment
  - Keep size of RON within limits (50-100)
  - Have co-existence of many RONs
    - Their interactions
    - Routing stability

Conclusion
- RON can greatly improve reliability of Internet packet delivery by detecting and recovering from outages and path failures more quickly (18 secs) than BGP-4 (several mins)
- Can overcome performance failures, improving loss rates, latency, throughput
- Forwarding packets via at most one intermediate RON node is sufficient
- Claims of RON confirmed from experiments
- Good platform for resilient distributed Internet application development

References
- “Resilient Overlay Networks”, D. Andersen, H. Balakrishnan, M. Kaashoek, R. Morris, Proc. 18th ACM SOSP, Banff, Canada, October 2001.
- “Detour: a Case for Informed Internet Routing and Transport”, S. Savage, T. Anderson, A. Aggarwal, D. Becker, N. Cardwell, A. Collins, E. Hoffman, J. Snell, A. Vahdat, G. Voelker, and J. Zahorjan, IEEE Micro, 19(1): 50-59, January 1999.
- “Dynamic Internet Overlay Deployment and Management Using the X-Bone”, J. Touch, Computer Networks, July 2001, pp. 117-135.
- “The Case for Resilient Overlay Networks”, D. Andersen, H. Balakrishnan, M. Kaashoek, and R. Morris, Proc. HotOS VIII, Schloss Elmau, Germany, May 2001.
- “End-to-End Routing Behavior in the Internet”, V. Paxson, In Proc. ACM SIGCOMM (Stanford, CA, August 1996).
- “Delayed Internet Routing Convergence”, C. Labovitz, A. Ahuja, A. Bose, F. Jahanian, In Proc. ACM SIGCOMM (Stockholm, Sweden, September 2000), pp. 175-187.
- “Modeling TCP Throughput: A simple model and its empirical validation”, J. Padhye, V. Firoiu, D. Towsley, J. Kurose, In Proc. ACM SIGCOMM (Vancouver, Canada, September 1998), pp. 303-323.
- “End-to-End WAN service availability”, B. Chandra, M. Dahlin, L. Gao, A. Nayate, In Proc. 3rd USITS (San Francisco, CA, 2001), pp. 97-108.
