
RESEARCH DESIGN 7

CONTENTS Research Design Variables Sampling Between/Within Anomalies Designed Analysis

RESEARCH DESIGN Contents • Research Process • An Example Study • Type I & II errors • Design to minimize uncertainty

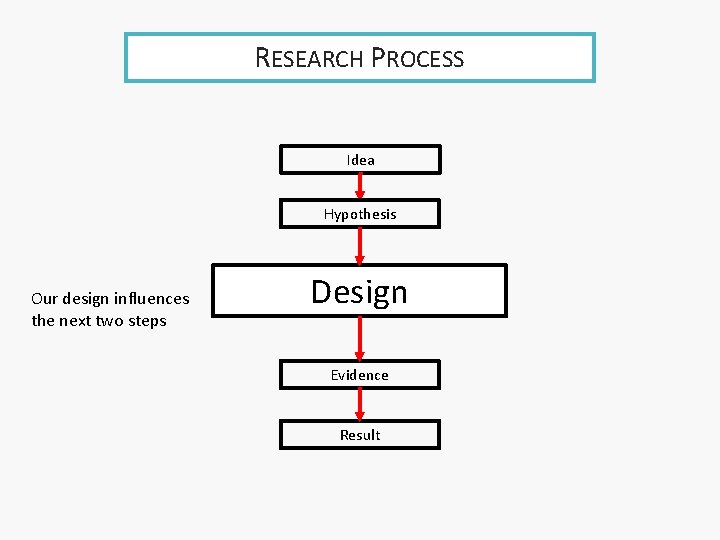

RESEARCH PROCESS Idea → Hypothesis → Design → Evidence → Result • Our design influences the next two steps

RECAP • We are interested in the population • we can only take a sample ‣ our sample is an uncertain guide to the population • Uncertainty has two fundamental factors: ‣ quality of evidence ‣ quantity of independent evidence

RECAP • We can estimate the degree of uncertainty • we can also: ‣ reject the null hypothesis or ‣ fail to reject the null hypothesis • but this does not eradicate the uncertainty

RECAP • Because the uncertainty remains we may be in error: ‣ if we reject H0, we may be making a Type I error (false positive) ‣ if we fail to reject H0, we may be making a Type II error (false negative)

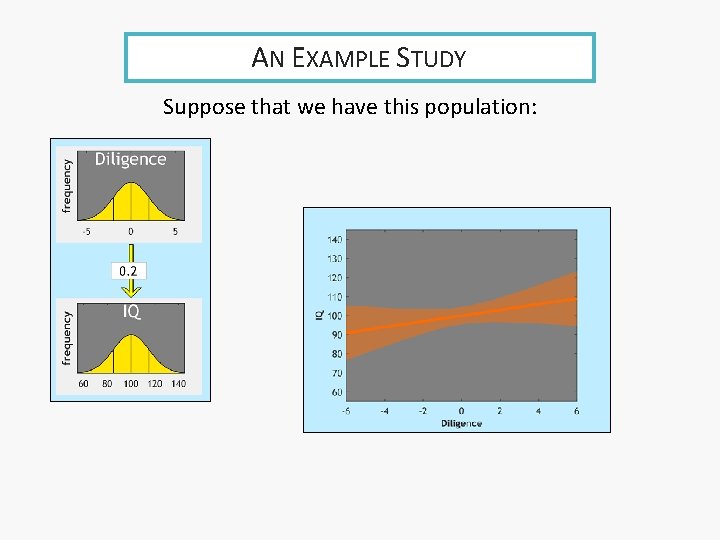

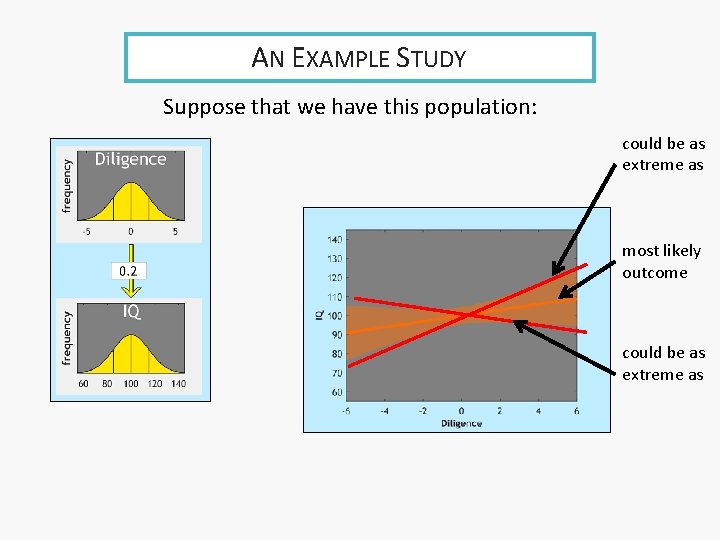

AN EXAMPLE STUDY Suppose that we have this population:

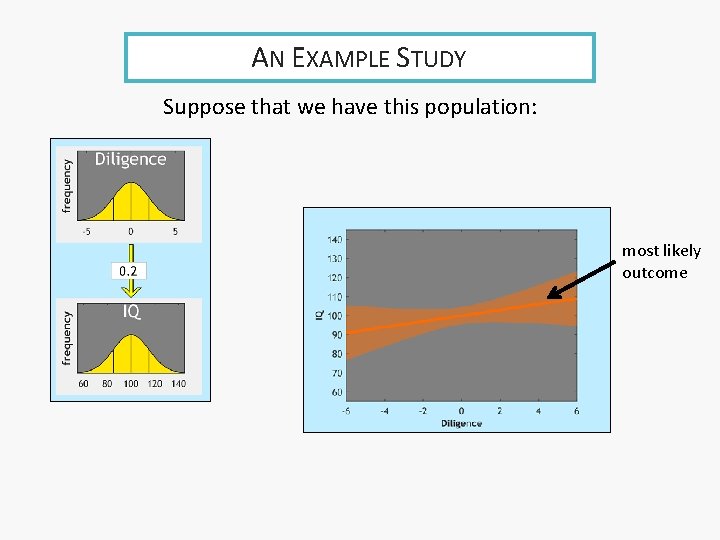


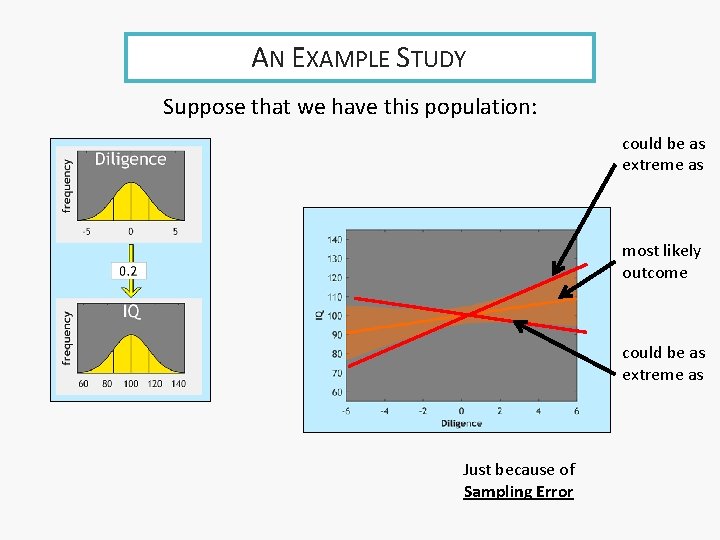

AN EXAMPLE STUDY Suppose that we have this population: • most likely outcome • but a sample could be as extreme as the cases shown on either side • just because of Sampling Error

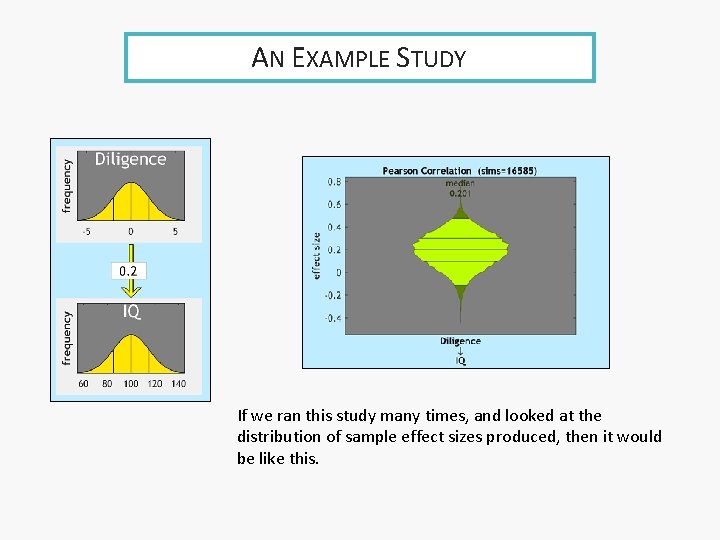

AN EXAMPLE STUDY If we ran this study many times, and looked at the distribution of sample effect sizes produced, then it would be like this.

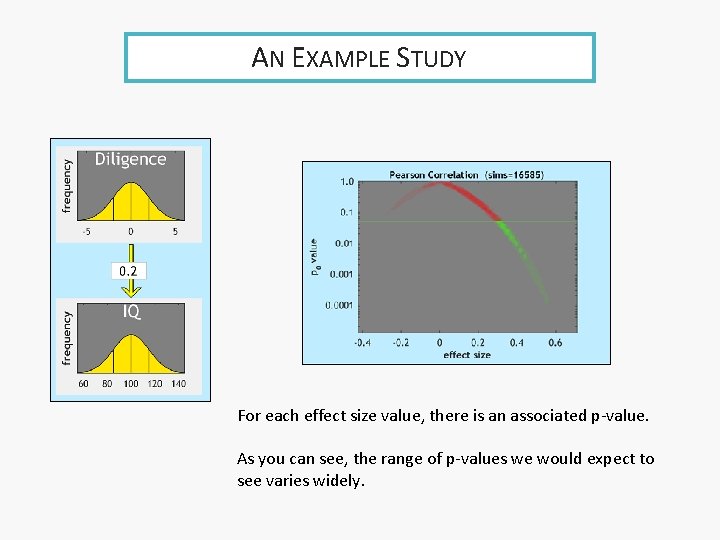

AN EXAMPLE STUDY For each effect size value, there is an associated p-value. As you can see, the range of p-values we would expect to see varies widely.

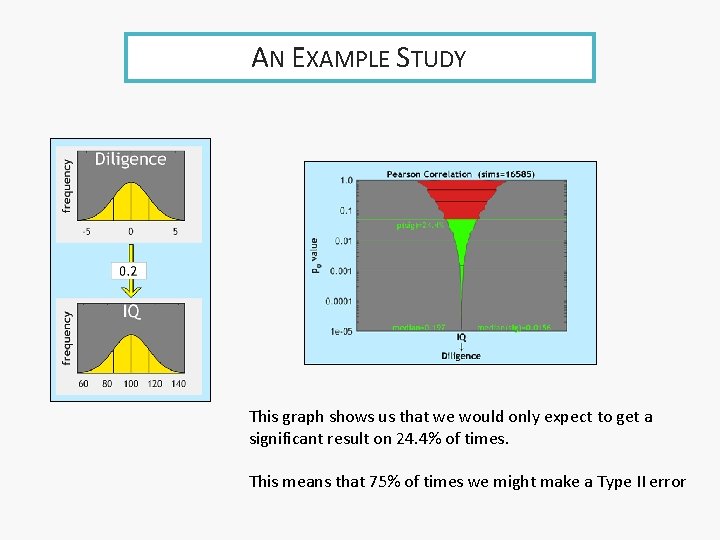

AN EXAMPLE STUDY This graph shows us that we would only expect to get a significant result 24.4% of the time. This means that about 75% of the time we would make a Type II error.

THE NULL HYPOTHESIS Given the null hypothesis (r = 0.0), this graph shows us that we would expect to get a significant result 5% of the time. This means that 5% of the time we would make a Type I error.

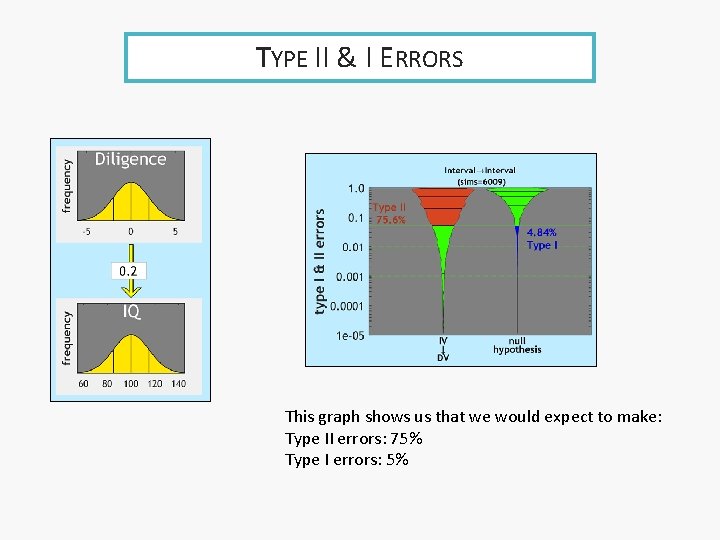

TYPE II & I ERRORS This graph shows us that we would expect to make: Type II errors: 75% Type I errors: 5%
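The 5% Type I figure can be checked with a small simulation. This is only a sketch under stated assumptions: the sample size of 42, the normally distributed variables, and the Fisher z approximation used for the test are choices made here for illustration, not details given on the slides.

```python
import math
import random
from statistics import NormalDist


def pearson_r(xs, ys):
    """Plain Pearson correlation, written out to avoid extra dependencies."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)


def is_significant(r, n, alpha=0.05):
    """Two-tailed test of r against zero (Fisher z approximation, an assumption)."""
    z = math.atanh(r) * math.sqrt(n - 3)
    p = 2 * (1 - NormalDist().cdf(abs(z)))
    return p < alpha


def type_i_rate(n=42, runs=2000, seed=1):
    """Share of samples from a null population (r = 0) that reach p < alpha."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(runs):
        xs = [rng.gauss(0, 1) for _ in range(n)]
        ys = [rng.gauss(0, 1) for _ in range(n)]  # independent of xs, so H0 is true
        hits += is_significant(pearson_r(xs, ys), n)
    return hits / runs


rate = type_i_rate()
print(f"Simulated Type I error rate: {rate:.3f}")
```

Every significant result in this simulation is a false positive, because the population effect is zero by construction. The rate should land near the chosen alpha whatever the sample size.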

DESIGN TO MINIMIZE UNCERTAINTY • Any result using a sample to study a population has uncertainty ‣ standard error ‣ confidence interval ‣ p value • We design our study ‣ to make the uncertainty as small as possible

LAZINESS PRINCIPLE • The laziness principle: design your research well, so you have to do less work • We need to reduce uncertainty as much as possible • Good design = ‣ quality of evidence: good choice of variables ‣ quantity of evidence: appropriate sampling & participants

VARIABLES Contents • Choice of variables • Measurement Error • Choice of Variable Type

CHOICE OF VARIABLES • What variables we measure • How we measure them • What variable type we apply

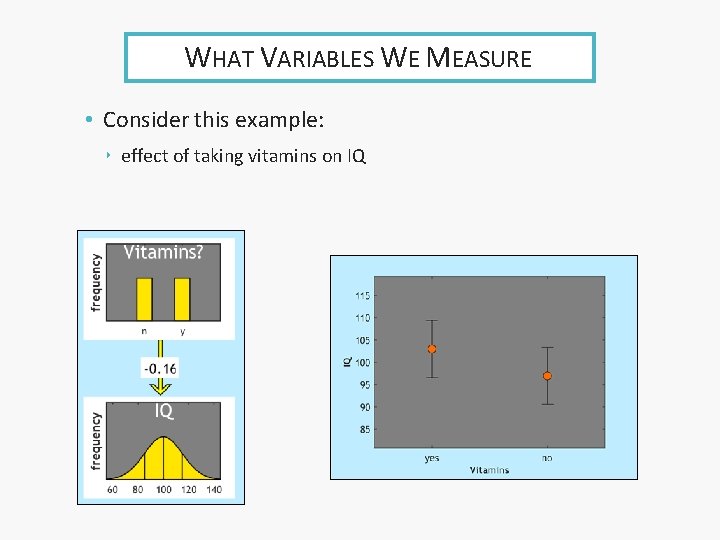

WHAT VARIABLES WE MEASURE • Consider this example: ‣ effect of taking vitamins on IQ

WHAT VARIABLES WE MEASURE • The issue we need to think about here is whether any relationship is real • or is it a spurious consequence of something else • it might be that a third variable – diligence – affects both taking vitamins and IQ ‣ making them appear to be directly related

UNCERTAINTY • Choose variables carefully!

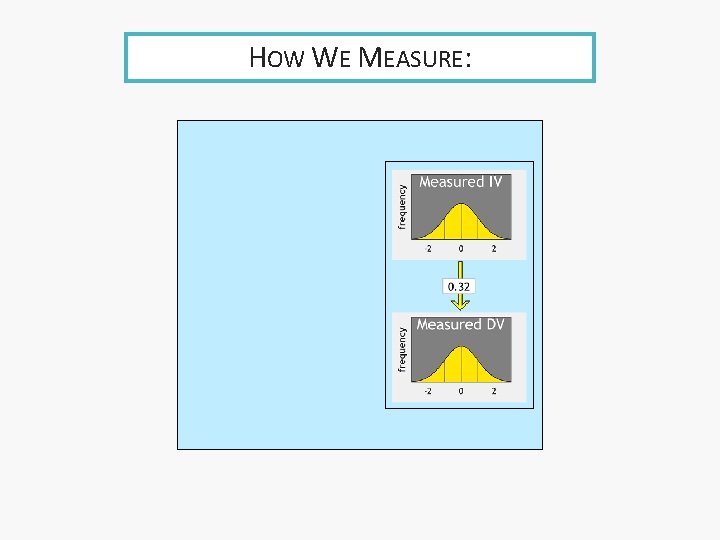

HOW WE MEASURE:

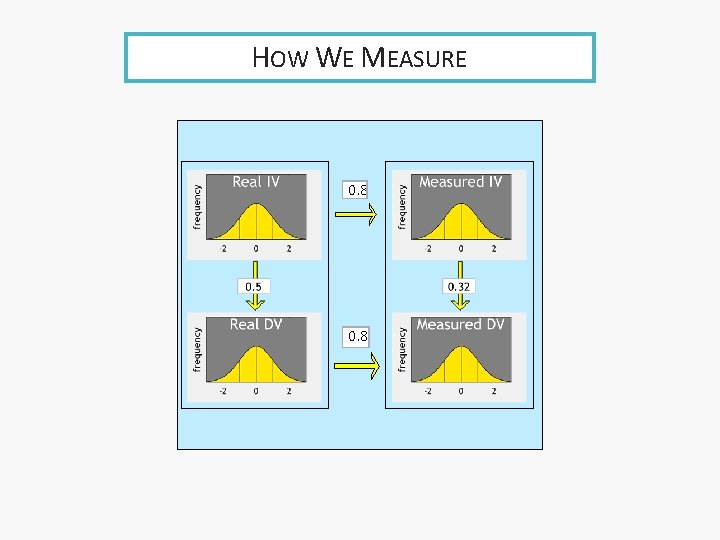

HOW WE MEASURE 0.8

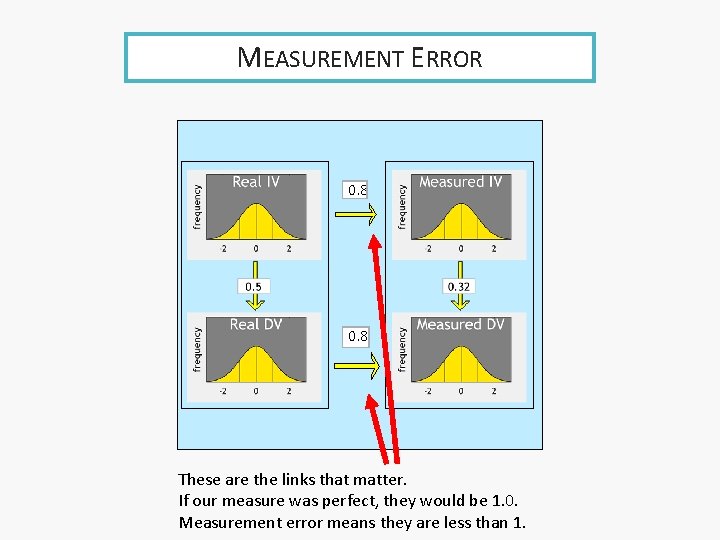

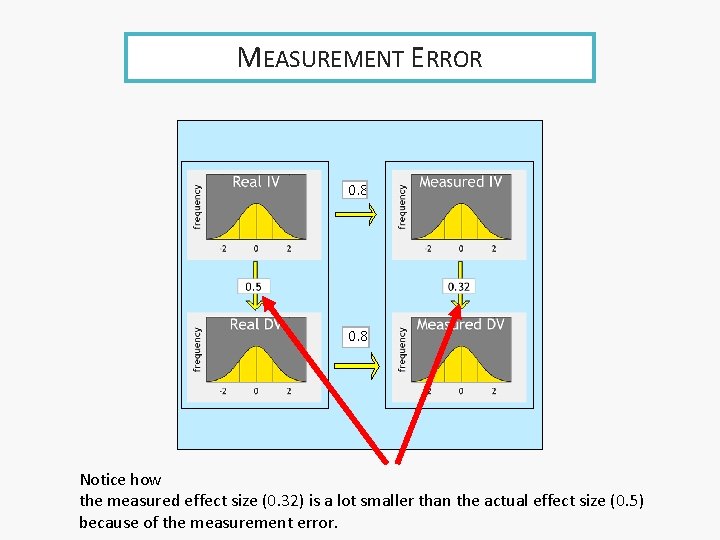

MEASUREMENT ERROR 0.8 These are the links that matter. If our measure was perfect, they would be 1.0. Measurement error means they are less than 1.

MEASUREMENT ERROR 0.8 Notice how the measured effect size (0.32) is a lot smaller than the actual effect size (0.5) because of the measurement error.
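The arithmetic behind the 0.32 can be written out as a short sketch. It assumes the measured effect is the product of the three links in the chain (IV measure, actual effect, DV measure), which is what the numbers on the slide imply; the function name is made up for illustration.

```python
def measured_effect(actual_effect, link_iv, link_dv):
    """Effect size seen in the data when both variables are measured imperfectly.

    link_iv and link_dv are the correlations between each measure and the
    thing it is meant to measure (1.0 would be a perfect measure).
    """
    return link_iv * actual_effect * link_dv


observed = measured_effect(actual_effect=0.5, link_iv=0.8, link_dv=0.8)
print(round(observed, 2))  # 0.32

# With perfect measures the actual effect size is recovered unchanged.
print(measured_effect(actual_effect=0.5, link_iv=1.0, link_dv=1.0))  # 0.5
```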

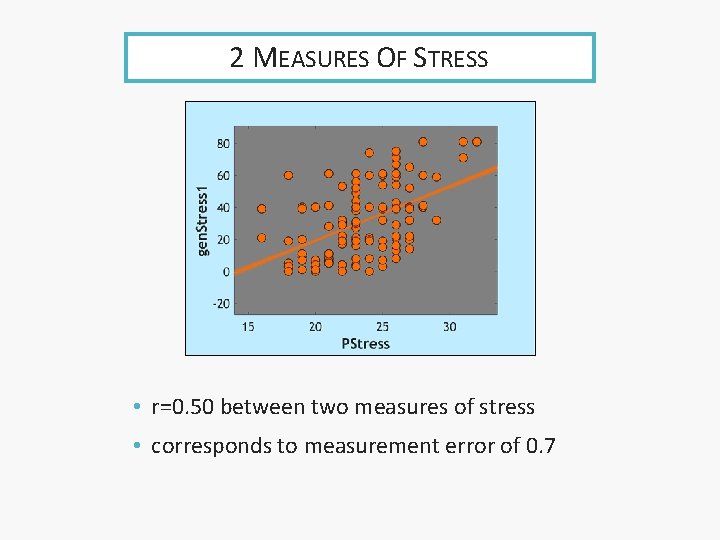

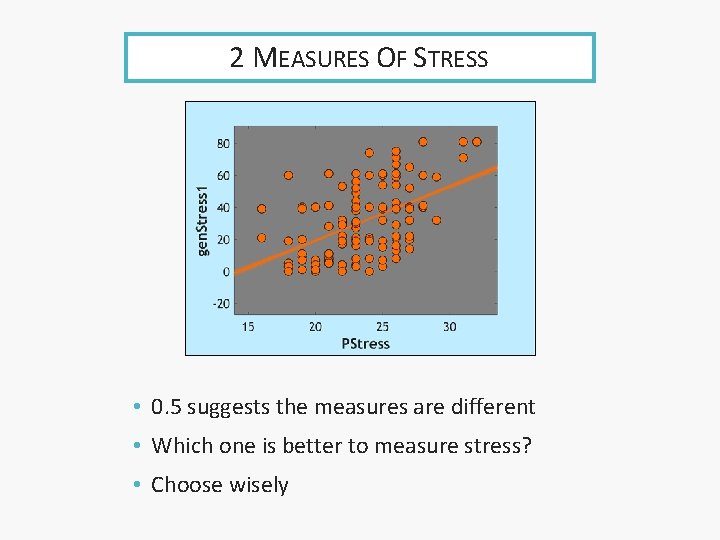

2 MEASURES OF STRESS • r = 0.50 between two measures of stress • if both measures are equally good, this corresponds to each having a link of about 0.7 with actual stress (0.7 × 0.7 ≈ 0.5)

2 MEASURES OF STRESS • 0. 5 suggests the measures are different • Which one is better to measure stress? • Choose wisely

CHOICE OF VARIABLE TYPE • Choice of variable type can influence 2 things: ‣ how much information you capture ‣ whether you can use the ordering in the data

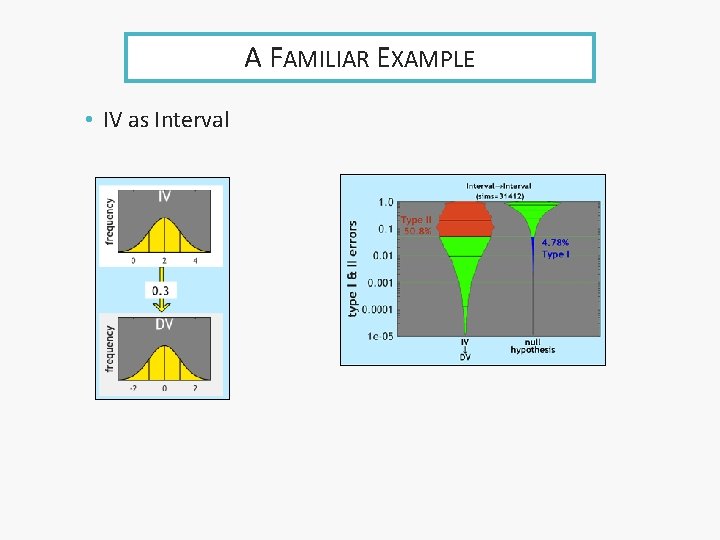

A FAMILIAR EXAMPLE • IV as Interval

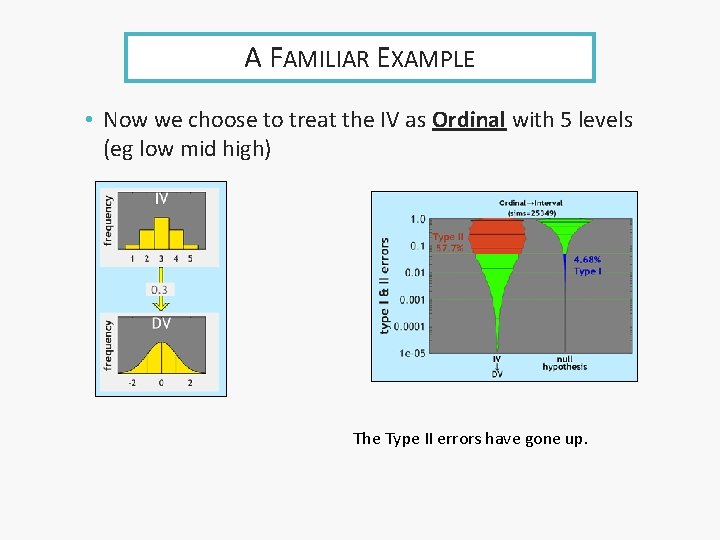

A FAMILIAR EXAMPLE • Now we choose to treat the IV as Ordinal with 5 levels (e.g. low, mid, high) • The Type II errors have gone up.

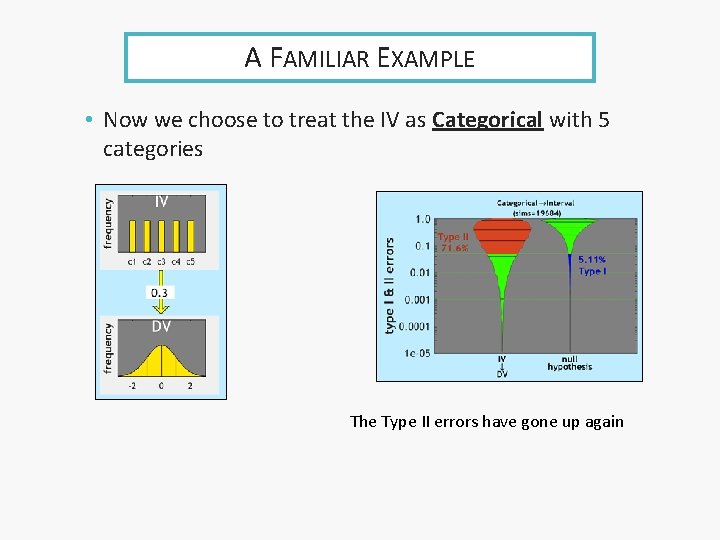

A FAMILIAR EXAMPLE • Now we choose to treat the IV as Categorical with 5 categories The Type II errors have gone up again

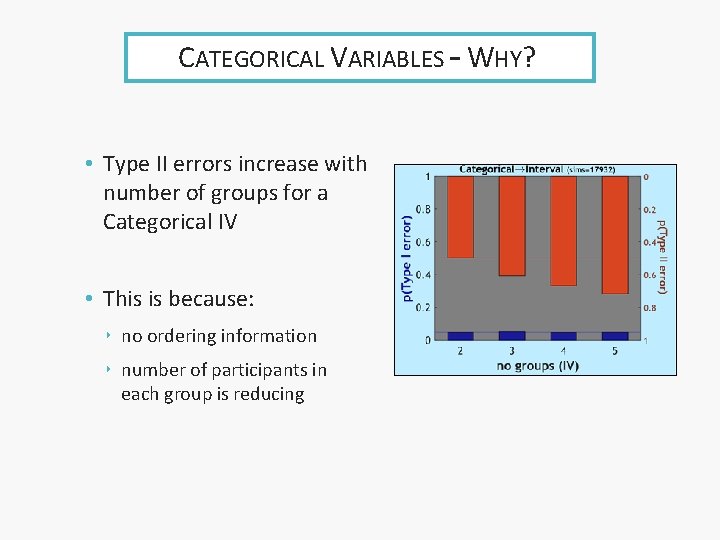

CATEGORICAL VARIABLES – WHY? • Type II errors increase with number of groups for a Categorical IV • This is because: ‣ no ordering information ‣ number of participants in each group is reducing

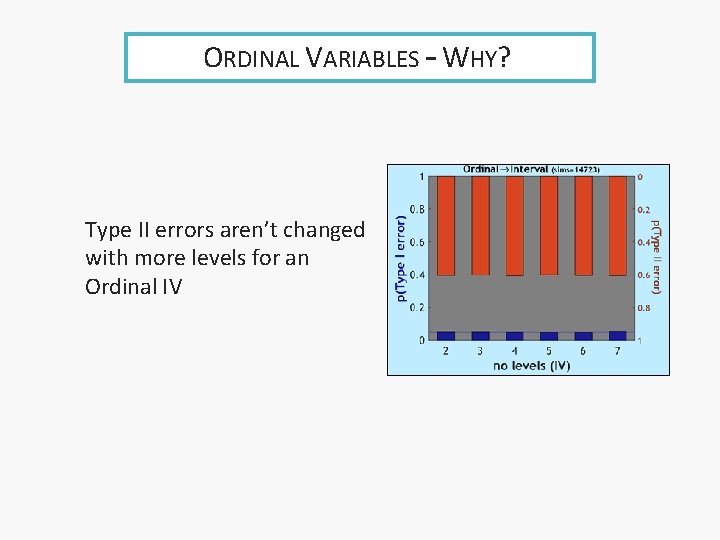

ORDINAL VARIABLES – WHY? Type II errors aren’t changed with more levels for an Ordinal IV

ERRORS AND VARIABLES • The choices we make influence Type II errors ‣ but not Type I errors, which remain fixed at 5%

RESEARCH DESIGN: VARIABLES • The research design is chosen to minimize Type II errors. • Choose variables thoughtfully • Choose strong ways of measuring variables • Use Interval measures wherever possible • These are all about the quality of the information we will get

SAMPLING Contents • Design & Sampling • Independent information • Sampling Method • Sample Size

DESIGN & SAMPLING • Goal: minimize uncertainty • Maximize quality ‣ choice of variables ‣ measurement of variables ‣ variable type • Maximize quantity ‣ sampling method & size ‣ participant allocation: between/within ‣ avoid issues

INDEPENDENT INFORMATION • Information comes from participants • How independent are the participants? ‣ Recruitment method • How much information? ‣ Number of participants

SAMPLE PARTICIPANTS • A sample needs to represent a population ‣ populations are usually full of very different people • Sample participants should be as different, or as independent, as they are in the population ‣ e.g. if they are all from the same families or the same school, or all studying psychology, they are likely to be too similar ‣ they are not independent of each other

SAMPLING TO REPRESENT • A sample needs to be representative of the population ‣ represent = contain all the important features of the population ‣ including its variability/diversity • How to guarantee this? ‣ random sampling ‣ stratified sampling

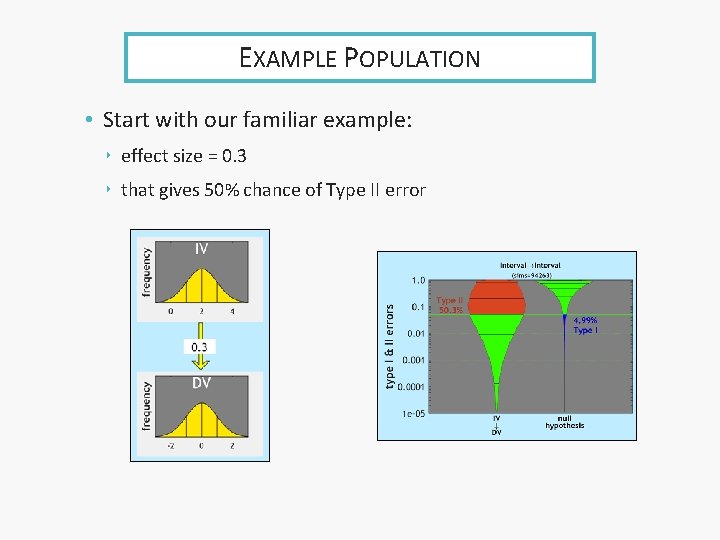

EXAMPLE POPULATION • Start with our familiar example: ‣ effect size = 0.3 ‣ that gives 50% chance of Type II error

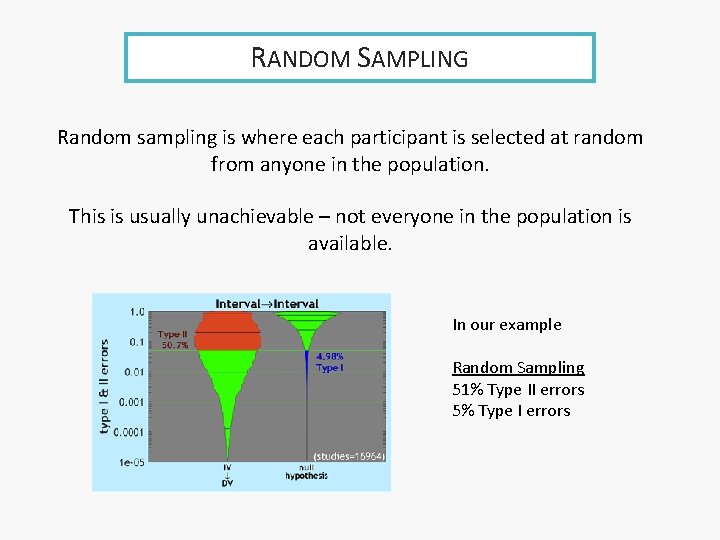

RANDOM SAMPLING Random sampling is where each participant is selected at random from anyone in the population. This is usually unachievable: not everyone in the population is available. In our example, Random Sampling gives: • 51% Type II errors • 5% Type I errors

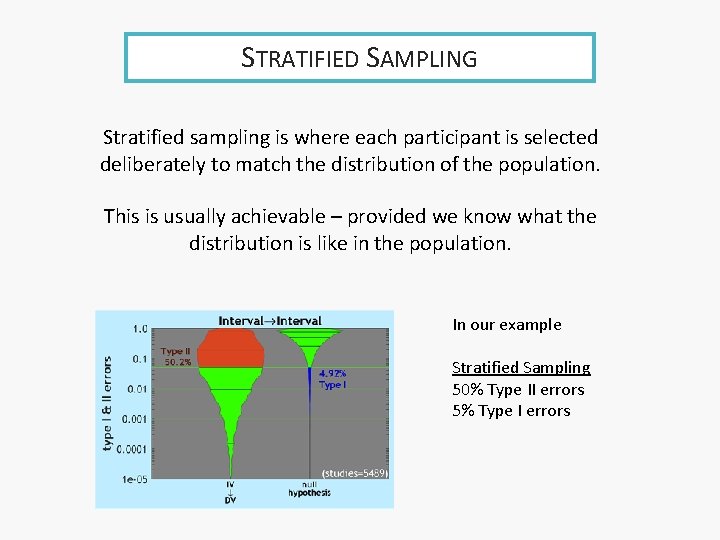

STRATIFIED SAMPLING Stratified sampling is where each participant is selected deliberately to match the distribution of the population. This is usually achievable, provided we know what the distribution is like in the population. In our example, Stratified Sampling gives: • 50% Type II errors • 5% Type I errors

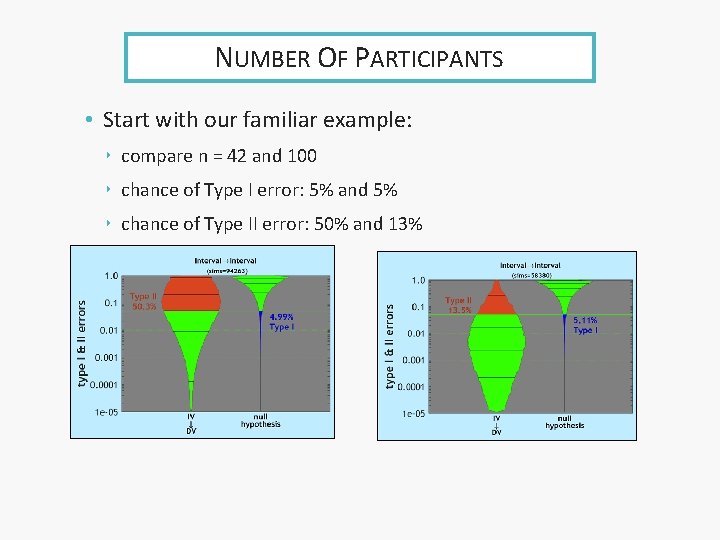

NUMBER OF PARTICIPANTS • Start with our familiar example: ‣ compare n = 42 and 100 ‣ chance of Type I error: 5% and 5% ‣ chance of Type II error: 50% and 13%

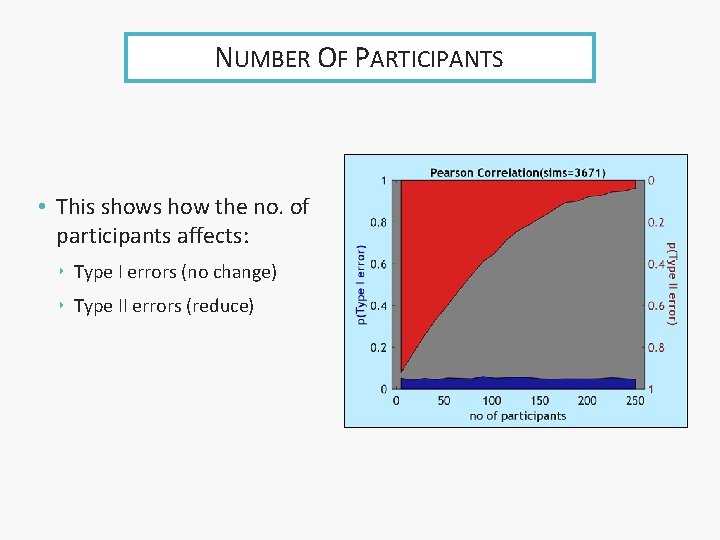

NUMBER OF PARTICIPANTS • This shows how the no. of participants affects: ‣ Type I errors (no change) ‣ Type II errors (reduce)
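A rough check of the n = 42 versus n = 100 comparison, assuming the effect is a correlation of r = 0.3 (the effect size of the familiar example) and using the Fisher z approximation. The slides do not say how their percentages were computed, so this is a sketch rather than a reproduction of their method.

```python
import math
from statistics import NormalDist


def power(r, n, alpha=0.05):
    """Approximate chance of p < alpha for a population correlation r.

    Uses the Fisher z transform: atanh(sample r) is roughly normal with
    standard error 1 / sqrt(n - 3).
    """
    nd = NormalDist()
    z_effect = math.atanh(r) * math.sqrt(n - 3)
    z_crit = nd.inv_cdf(1 - alpha / 2)
    # Add both tails: significant in the true direction or, rarely, the wrong one.
    return (1 - nd.cdf(z_crit - z_effect)) + nd.cdf(-z_crit - z_effect)


for n in (42, 100):
    type_ii = 1 - power(0.3, n)
    print(f"n = {n:3d}: Type II error chance = {type_ii:.0%}, Type I = 5% by design")
```

Under these assumptions the Type II chance comes out at roughly 51% for n = 42 and 14% for n = 100, close to the figures quoted for the example. The Type I rate does not move because it is fixed by the choice of alpha, not by the sample size.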

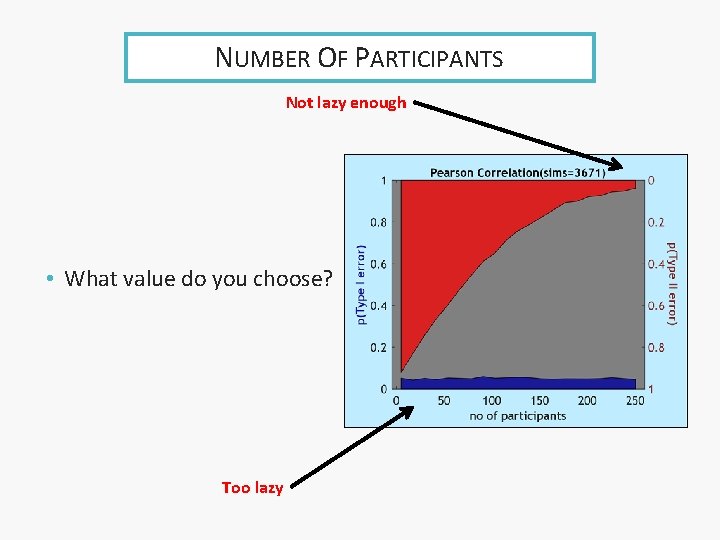

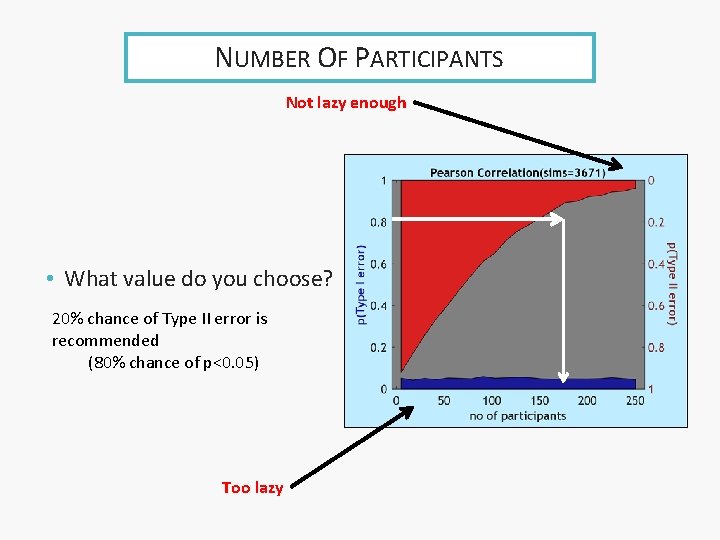


NUMBER OF PARTICIPANTS Not lazy enough • What value do you choose? 20% chance of Type II error is recommended (80% chance of p < 0.05) Too lazy

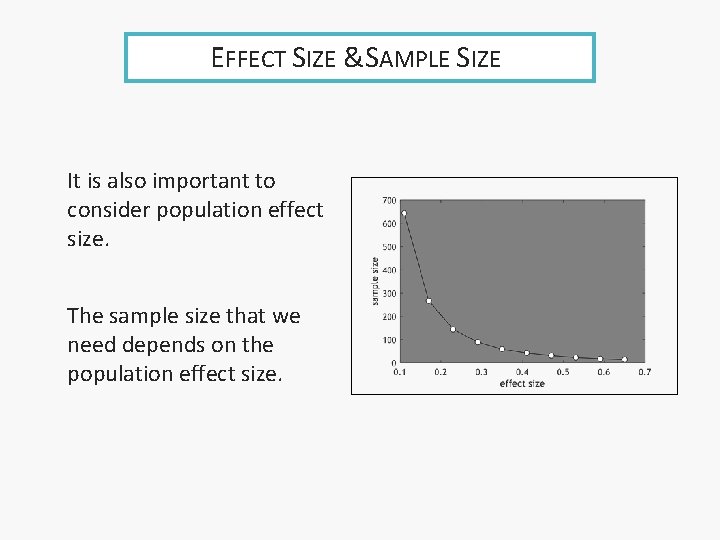

EFFECT SIZE & SAMPLE SIZE It is also important to consider population effect size. The sample size that we need depends on the population effect size.
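To see how strongly the required sample size depends on the population effect size, here is a minimal sketch that searches for the smallest n giving an 80% chance of p < 0.05. It assumes a correlation effect size and the Fisher z approximation; the effect sizes tried (0.1, 0.3, 0.5) are illustrative choices.

```python
import math
from statistics import NormalDist


def power(r, n, alpha=0.05):
    """Approximate chance of p < alpha for population correlation r (Fisher z)."""
    nd = NormalDist()
    z_effect = math.atanh(r) * math.sqrt(n - 3)
    z_crit = nd.inv_cdf(1 - alpha / 2)
    return (1 - nd.cdf(z_crit - z_effect)) + nd.cdf(-z_crit - z_effect)


def required_n(r, target_power=0.80, alpha=0.05):
    """Smallest sample size whose approximate power reaches the target."""
    n = 4  # Fisher z needs n > 3
    while power(r, n, alpha) < target_power:
        n += 1
    return n


for r in (0.1, 0.3, 0.5):
    print(f"population effect size {r}: about {required_n(r)} participants")
```

Smaller effects need far more participants to reach the same 20% Type II error chance, so a guess at the population effect size is needed before choosing n.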

BETWEEN/WITHIN Contents • Experimental Variable • Allocation of Participants • Order Effects

EXPERIMENTAL VARIABLES • Sometimes we can make a variable (IV) ‣ such as some treatment/intervention vs control • We do this as a way of examining causation ‣ if we fully cause the experimental variable ‣ by random allocation ‣ then any effect it has on a DV must be caused by it • We must adhere to the random allocation ‣ anyone in the treatment group but refusing or not adhering to treatment stays in that group ‣ this is called Intention to Treat Analysis

ALLOCATION OF PARTICIPANTS • When we have an experimental IV (categorical) we may have a choice. • We can either ‣ split participants between the groups ‣ ask everybody to be in all groups • We talk of ‣ between design: split participants; the comparison we make is between different participants ‣ within design: everyone does everything; the comparison is within the same participants


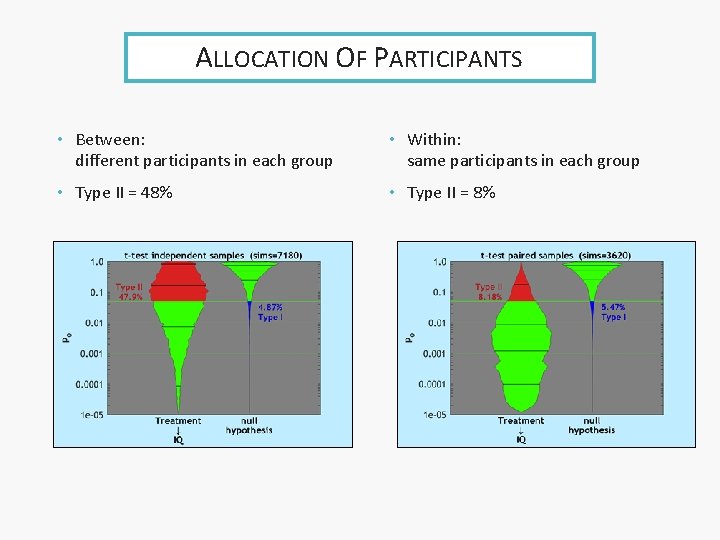

ALLOCATION OF PARTICIPANTS • Between: different participants in each group ‣ Type II = 48% • Within: same participants in each group ‣ Type II = 8%

ORDER EFFECTS • Order effects are an issue in within-groups designs • If the participants do a task in multiple conditions, they may change their behaviour as they become familiar with the task • They may also become tired, bored, or disengaged • Counterbalancing is the solution ‣ have different participants do the conditions in different orders ‣ if you can
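One way counterbalancing might be set up is sketched below, assuming full counterbalancing (every possible order of the conditions is used equally often). The participant labels and condition names are hypothetical.

```python
from itertools import permutations


def counterbalance(participants, conditions):
    """Assign each participant one order of the conditions, cycling through
    all possible orders so that each order is used about equally often."""
    orders = list(permutations(conditions))
    return {p: orders[i % len(orders)] for i, p in enumerate(participants)}


# Hypothetical example: 6 participants, 3 conditions (so 6 possible orders).
participants = [f"P{i}" for i in range(1, 7)]
schedule = counterbalance(participants, ["A", "B", "C"])

for person, order in schedule.items():
    print(person, "->", " then ".join(order))
```

With 3 conditions there are 6 orders, so the number of participants should ideally be a multiple of 6. The number of orders grows quickly with more conditions, which is one reason the slide adds "if you can".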

DATA ANOMALIES Contents • Opportunity Sampling • Non-independence • Outliers

A LITTLE FURTHER... • There are various ways in which recruiting participants can go wrong • Let’s look at three common anomalies

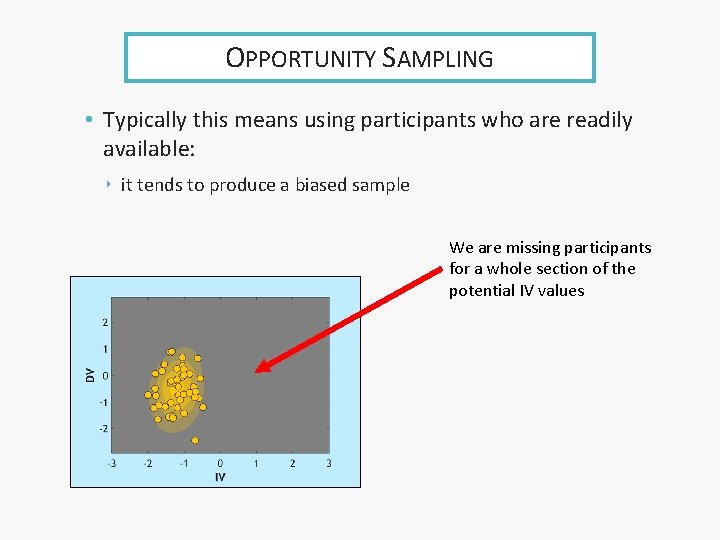

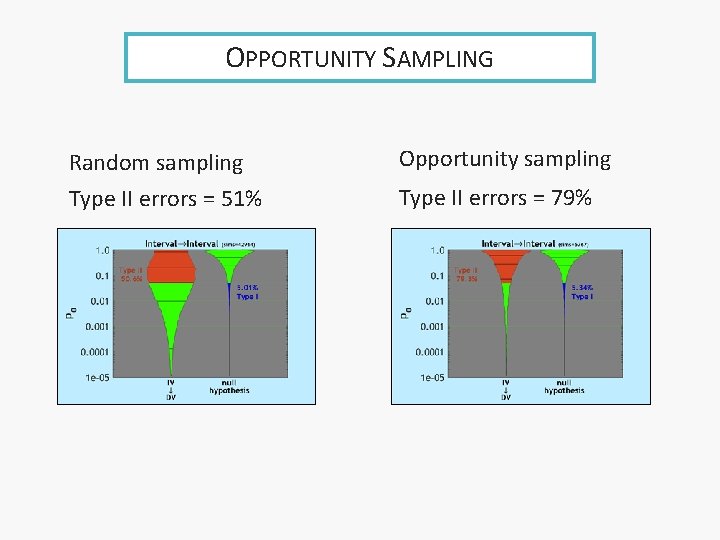

OPPORTUNITY SAMPLING • Typically this means using participants who are readily available: ‣ it tends to produce a biased sample • We are missing participants for a whole section of the potential IV values

OPPORTUNITY SAMPLING • Random sampling: Type II errors = 51% • Opportunity sampling: Type II errors = 79%

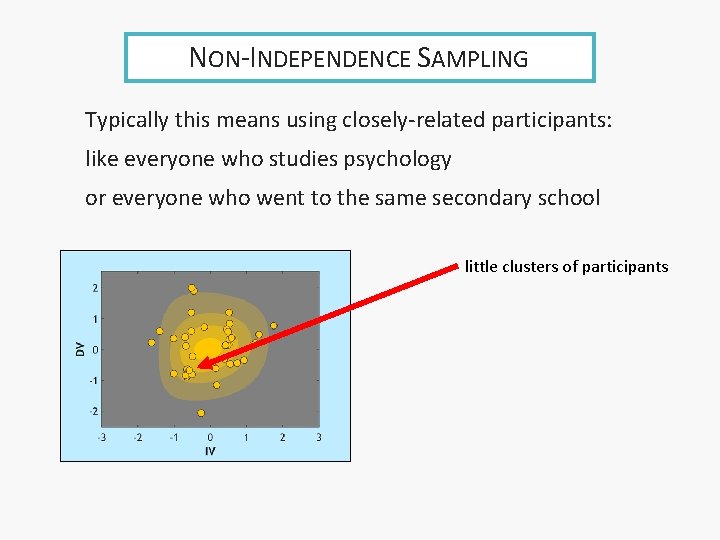

NON-INDEPENDENCE SAMPLING • Typically this means using closely-related participants: ‣ like everyone who studies psychology ‣ or everyone who went to the same secondary school • This produces little clusters of participants

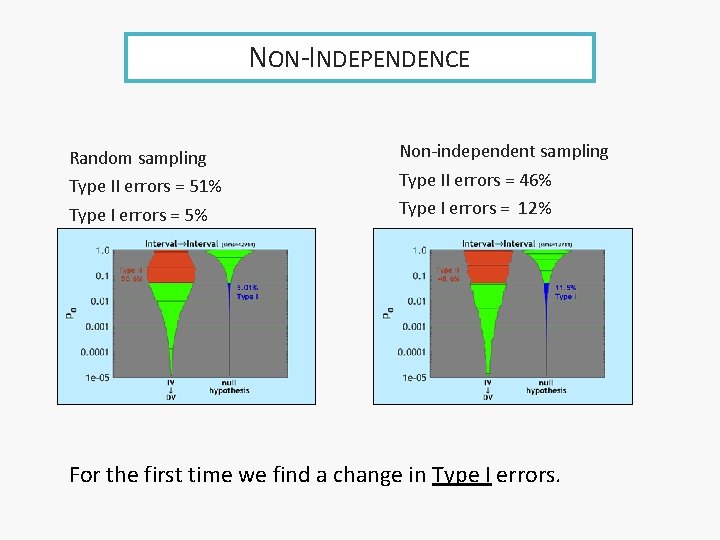

NON-INDEPENDENCE • Random sampling ‣ Type II errors = 51% ‣ Type I errors = 5% • Non-independent sampling ‣ Type II errors = 46% ‣ Type I errors = 12% • For the first time we find a change in Type I errors.
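The rise in Type I errors can be illustrated with a simulation in which the null hypothesis is true but participants come in clusters. Everything specific here is an assumption made for the sketch: 14 clusters of 3 participants, a within-cluster similarity of 0.6 on each variable, and a Fisher z test that treats all 42 participants as independent.

```python
import math
import random
from statistics import NormalDist


def pearson_r(xs, ys):
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)


def rejects_null(xs, ys, alpha=0.05):
    """Two-tailed Fisher z test that wrongly treats every participant as independent."""
    z = math.atanh(pearson_r(xs, ys)) * math.sqrt(len(xs) - 3)
    return 2 * (1 - NormalDist().cdf(abs(z))) < alpha


def clustered_sample(rng, n_clusters, cluster_size, similarity):
    """Null population (no x-y relationship), but cluster members resemble each other."""
    shared, own = math.sqrt(similarity), math.sqrt(1 - similarity)
    xs, ys = [], []
    for _ in range(n_clusters):
        cx, cy = rng.gauss(0, 1), rng.gauss(0, 1)  # cluster effects, unrelated to each other
        for _ in range(cluster_size):
            xs.append(shared * cx + own * rng.gauss(0, 1))
            ys.append(shared * cy + own * rng.gauss(0, 1))
    return xs, ys


def type_i_rate(similarity, runs=2000, seed=1):
    rng = random.Random(seed)
    hits = sum(rejects_null(*clustered_sample(rng, 14, 3, similarity)) for _ in range(runs))
    return hits / runs


independent = type_i_rate(similarity=0.0)
clustered = type_i_rate(similarity=0.6)
print(f"independent participants: {independent:.3f}")
print(f"clustered participants:   {clustered:.3f}")
```

The clustered sample holds less independent information than its headcount suggests, so a test that assumes 42 independent participants is overconfident and produces false positives more often than alpha allows.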

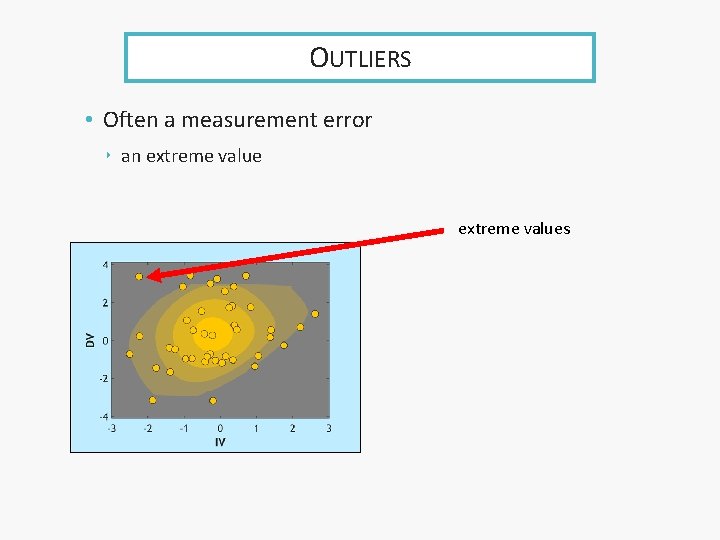

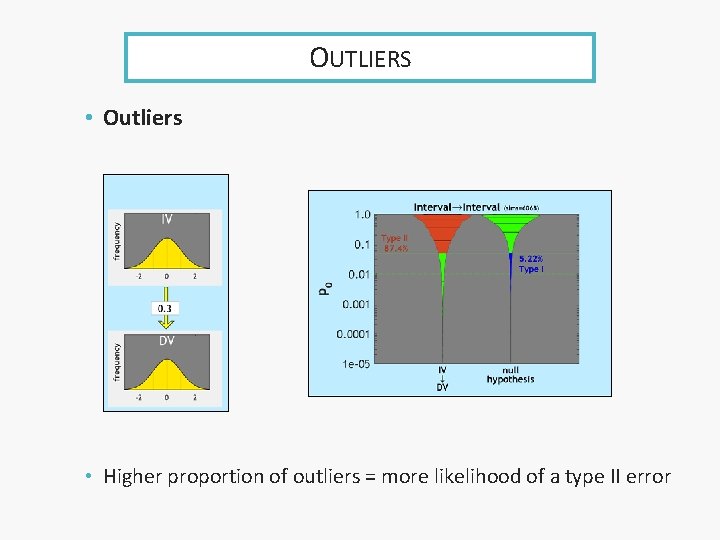

OUTLIERS • Often a measurement error ‣ an extreme value

OUTLIERS • Higher proportion of outliers = more likelihood of a Type II error

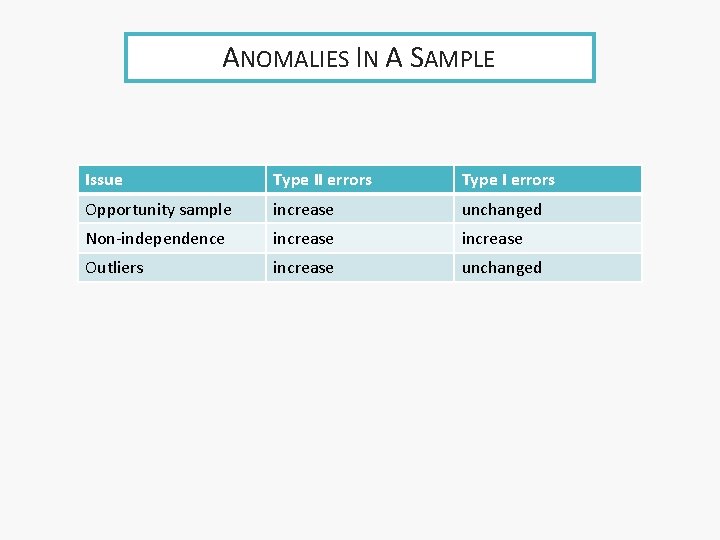

ANOMALIES IN A SAMPLE

| Issue | Type II errors | Type I errors |
| --- | --- | --- |
| Opportunity sample | increase | unchanged |
| Non-independence | not increased (46% vs 51% in the example) | increase |
| Outliers | increase | unchanged |

DESIGNED ANALYSIS Contents • Multiple tests & Type I errors • Bonferroni • Post-hoc tests • Contrasts

A BIT OF PREPARATION • Go back to the stupidity of having both the t-test and the 1-way ANOVA in the Cat Int situation (Categorical IV, Interval DV): ‣ IV has 2 categories: use t-test ‣ IV has more than 2: use ANOVA • First we can acknowledge that the 1-way ANOVA could handle both situations, and it is mere habit that we don’t do that • But then let’s look more closely at the situation with more than 2 categories

1-WAY ANOVA • 3 categories: a, b, c • This potentially involves 3 pairwise comparisons: ‣ a vs b ‣ a vs c ‣ b vs c • So we could do 3 t-tests here, but…

BUT • 3 t-tests means higher chance of making a Type I error • Chances of not making a Type I error at all: ‣ not making a Type I error for a vs b (95%) ‣ and ‣ not making a Type I error for a vs c (95%) ‣ and ‣ not making a Type I error for b vs c (95%)

AND • when we combine probabilities by "and" ‣ we multiply the probabilities • so total chance of not making a Type I error ‣ 95% × 95% × 95% ‣ = 86% • so total chance of making a Type I error ‣ = 100% − 86% = 14% ‣ not 5%
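The same calculation for any number of tests, as a short sketch. Multiplying the probabilities assumes the tests are independent of each other, which is the simplification the slide makes; the function name is made up for illustration.

```python
def familywise_error(alpha, n_tests):
    """Chance of at least one Type I error across n_tests independent tests."""
    chance_of_no_error = (1 - alpha) ** n_tests
    return 1 - chance_of_no_error


print(round(familywise_error(0.05, 3), 3))  # 0.143, i.e. about 14%

# The problem grows quickly with the number of pairwise comparisons.
for n_tests in (1, 3, 6, 10):
    print(n_tests, "tests:", f"{familywise_error(0.05, n_tests):.0%}")
```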

WELL? • this is very bad

BONFERRONI • So we make an adjustment. ‣ we set alpha (normally 0.05) to something lower so that we keep our overall chance of making a Type I error at 5% • This is called the Bonferroni correction ‣ well not quite, because as usual statistics is upside down ‣ we need to talk about SPSS

SPSS & POST HOC TESTS • When you do post-hoc tests in SPSS, you can ask for a Bonferroni correction ‣ in which case, SPSS adjusts the p-value not the alpha level ‣ sigh
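The two ways of applying the correction lead to the same decisions, as this sketch shows. It assumes the usual Bonferroni rule (divide alpha by the number of tests, or equivalently multiply each p-value by the number of tests and cap it at 1); the three p-values are invented for illustration.

```python
def bonferroni_alpha(alpha, n_tests):
    """Adjust the threshold: compare each raw p-value with alpha / n_tests."""
    return alpha / n_tests


def bonferroni_p(p, n_tests):
    """Adjust the p-value instead: multiply by n_tests, capped at 1."""
    return min(1.0, p * n_tests)


# Hypothetical raw p-values for the three pairwise comparisons.
raw_p = {"a vs b": 0.012, "a vs c": 0.030, "b vs c": 0.200}
alpha, n_tests = 0.05, len(raw_p)

decisions = {}
for label, p in raw_p.items():
    by_alpha = p < bonferroni_alpha(alpha, n_tests)  # lowered alpha, raw p
    by_p = bonferroni_p(p, n_tests) < alpha          # usual alpha, adjusted p
    decisions[label] = (by_alpha, by_p)
    print(f"{label}: raw p = {p}, adjusted p = {bonferroni_p(p, n_tests):.3f}, "
          f"significant = {by_alpha}")
```

When reading output that reports adjusted p-values, compare them with the ordinary 0.05, not with the lowered alpha; applying both adjustments would correct twice.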

THE PROPER WAY TO PROCEED • The 1-way ANOVA is just one test ‣ called an omnibus test ‣ and it tells us there is something going on somewhere ‣ but not where • That is enough to license us to look more closely ‣ now we can do lots of separate t-tests because we know there is something to find ‣ these do not increase the chance of a Type I error because they are justified by the omnibus test ‣ to make this plain, we call these subsequent tests post-hoc tests

THE PROPER WAY TO PROCEED • Or…

GO BACK TO T-TEST • With the t-test: ‣ if the omnibus test rejects the null hypothesis ‣ we can see without doing further tests what the effect is ‣ there is only one difference in means • The omnibus test simultaneously considers two possibilities: ‣ a > b ‣ b > a ‣ and tells us if either is strong • This test is called “2-tailed”

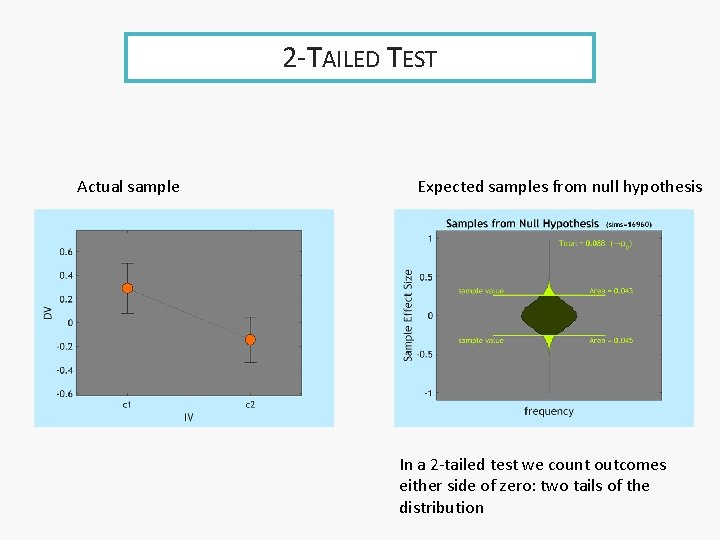

2-TAILED TEST • Actual sample • Expected samples from null hypothesis • In a 2-tailed test we count outcomes either side of zero: two tails of the distribution

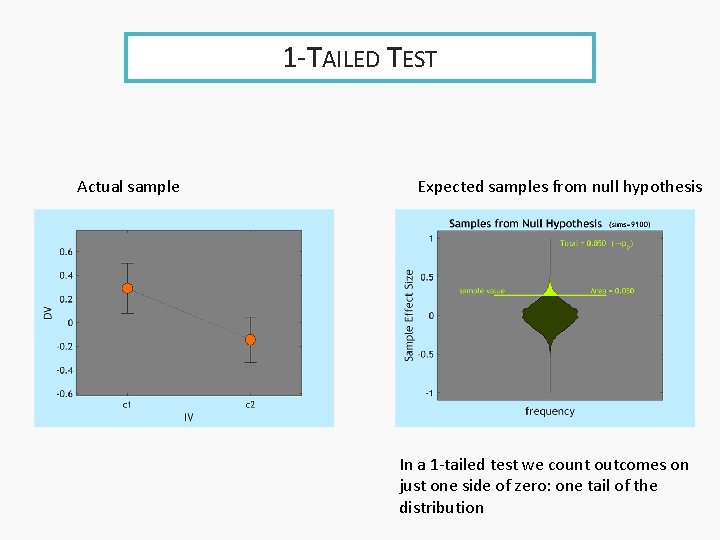

1-TAILED TEST • Actual sample • Expected samples from null hypothesis • In a 1-tailed test we count outcomes on just one side of zero: one tail of the distribution

2-TAILED & 1-TAILED • The omnibus test in a t-test is called 2-tailed ‣ because it simultaneously assesses both tails (ends) of the sampling distribution for the null hypothesis ‣ either of the 2 possible departures from the null hypothesis • If we have good cause to be interested in only one direction of effect ‣ we can use a 1-tailed test ‣ the p-value is halved (which is very useful) • A t-test is restricted to a case where ‣ there are only 2 directions ‣ which correspond to the two tails of the sampling distribution for the null hypothesis

THE 1-TAILED WARNING • There is a big warning needed here. • We must decide 1-tailed or 2-tailed ‣ before we look at the data ‣ and must have a good reason • Or we will double our Type I error rate
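A small sketch of the halving and of the warning. It works with a z statistic and the normal distribution for simplicity (an assumption; a t-test would use the t distribution, but the logic is the same), and the value z = 1.80 is invented for illustration.

```python
from statistics import NormalDist

nd = NormalDist()


def p_two_tailed(z):
    """Count outcomes at least this extreme on either side of zero."""
    return 2 * (1 - nd.cdf(abs(z)))


def p_one_tailed(z, predicted_positive=True):
    """Count outcomes at least this extreme in the predicted direction only."""
    return 1 - nd.cdf(z) if predicted_positive else nd.cdf(z)


z = 1.80  # hypothetical test statistic, in the predicted (positive) direction
print(f"2-tailed p = {p_two_tailed(z):.3f}")
print(f"1-tailed p = {p_one_tailed(z):.3f}")

# If the effect comes out in the opposite direction, the 1-tailed p is large.
print(f"1-tailed p, wrong direction = {p_one_tailed(-z):.3f}")
```

The halving only applies when the direction was fixed in advance. Choosing the tail after seeing which way the data went amounts to testing both tails at 5% each, a 10% chance of a Type I error overall.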

1-WAY ANOVA • The logic of a 1-way ANOVA means that there are multiple different directions the effect can go ‣ 3 groups means 6 different directions • In a sense the omnibus test is a 6-tailed test • How do we get the benefit of a directional hypothesis?

CONTRASTS • The equivalent to 1 -tailed is called a Contrast effect. • In a contrast we decide the direction of the effect, before looking at the data ‣ a>b>c or ‣ b>a>c or whatever • We then use that direction in our test (and ignore all the other possible directions)

CONTRAST (ORDINAL) • The contrast: ‣ a>b>c ‣ or whatever • This converts the Categorical IV into an Ordinal IV ‣ and the contrast test does a Spearman Correlation

CONTRAST (INTERVAL) • We can be more specific: ‣ a = c × 3.1 ‣ b = c × 1.4 ‣ or whatever • This converts the Categorical IV into an Interval IV ‣ and the contrast test does a Pearson Correlation
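A minimal sketch of the interval contrast idea: each group label is replaced by its predicted value, and the DV is then correlated with those values. The weights follow the example above (a = 3.1, b = 1.4, c = 1.0 in units of c); the DV scores are invented for illustration, and real contrast procedures in statistics packages may differ in detail.

```python
import math


def pearson_r(xs, ys):
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)


# Contrast decided before looking at the data: predicted size of each group's mean.
weights = {"a": 3.1, "b": 1.4, "c": 1.0}

# Hypothetical DV scores, three participants per group.
scores = {
    "a": [3.0, 3.2, 3.1],
    "b": [1.3, 1.5, 1.4],
    "c": [0.9, 1.1, 1.0],
}

# Replace each participant's group label by its weight: the IV is now Interval.
iv = [weights[group] for group, values in scores.items() for _ in values]
dv = [value for values in scores.values() for value in values]

r = pearson_r(iv, dv)
print(f"contrast correlation r = {r:.3f}")
```

A large r means the group means follow the predicted pattern. Because only that one pattern is tested, the other possible directions of effect are ignored, which is the analogue of a 1-tailed test.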

- Slides: 84