Reproducible Science
Gordon Watts (University of Washington/Seattle), @SeattleGordon
2017-09-22, Center for Modeling Complex Interactions
G. WATTS (UW/SEATTLE)

Outline
• Introduction and Motivation
• Work at UW eScience Center
• Work from DASPOS
• Work from CERN
• Funding Agencies
• Conclusions & Links

Nullius in Verba
“take no one’s word for it”
The Royal Society’s motto (founded 1660)

The Royal Society
Robert Boyle
• Gatherings where you would see the experiments reproduced
• Documentation standards so you could imagine being in the room
  • Provides evidence the claim is true
  • Check for fraud and error
  • A “springboard for progress” by enabling replication
The knowledge and the techniques used to gain that knowledge

Is this science today?

• 19 pages of text and plots
• 1 title page
• 4 pages of references
• 13 pages of author list
For a search at the world’s largest experiment

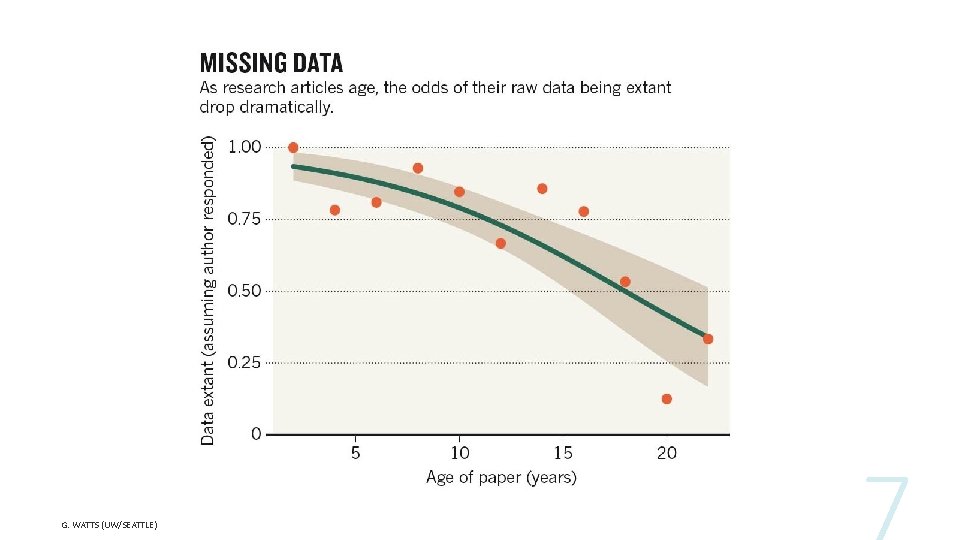


• Big Science
• Big Datasets
• Complexity of modern analysis software
• Page limits on articles

Can we do better?
We might not be able to build a second Large Hadron Collider.
Perhaps some of the same tools that enable Big Science/Big Datasets can be used to address this.

This is a many-year effort!
We have not yet codified best practices for this new environment as Boyle did.

UW eScience Center

https://gordonwatts.github.io/ros-roadshow
An adaptation of the standard eScience Road Show (Workshops for Students)

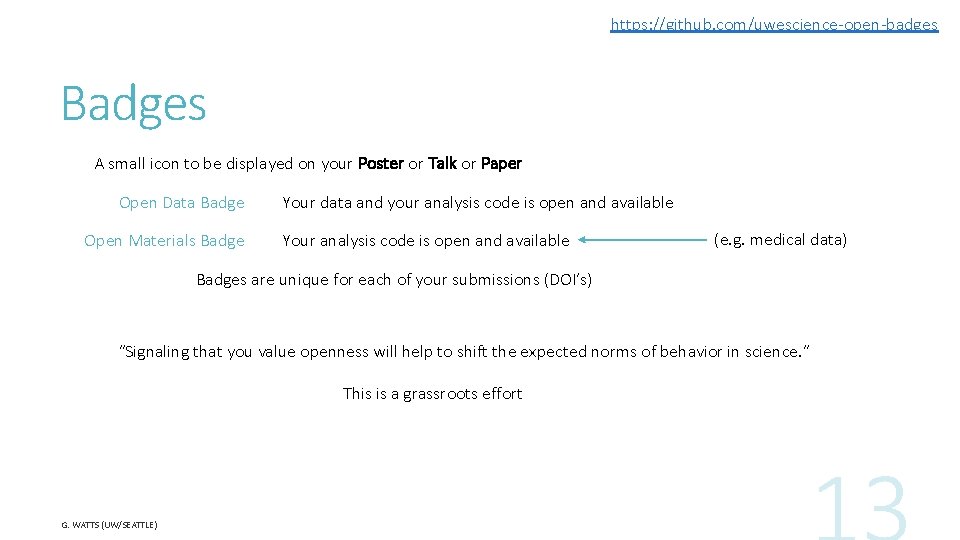

Badges
https://github.com/uwescience-open-badges
• A small icon to be displayed on your poster, talk, or paper
• Open Data Badge: your data and your analysis code are open and available
• Open Materials Badge: your analysis code is open and available (e.g. for medical data)
• Badges are unique for each of your submissions (DOIs)
• “Signaling that you value openness will help to shift the expected norms of behavior in science.”
• This is a grassroots effort

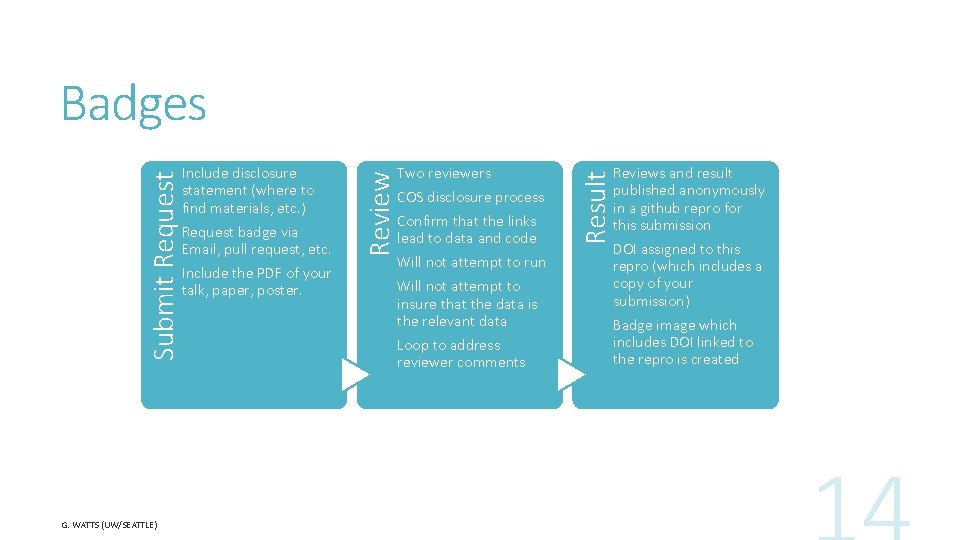

Request Badges
Submit Request
• Request badge via email, pull request, etc.
• Include the PDF of your talk, paper, or poster
• Include disclosure statement (where to find materials, etc.)
Review
• Two reviewers
• COS disclosure process
• Confirm that the links lead to data and code
• Will not attempt to run
• Will not attempt to ensure that the data is the relevant data
• Loop to address reviewer comments
Result
• Reviews and result published anonymously in a GitHub repository for this submission
• DOI assigned to this repository (which includes a copy of your submission)
• Badge image which includes DOI linked to the repository is created

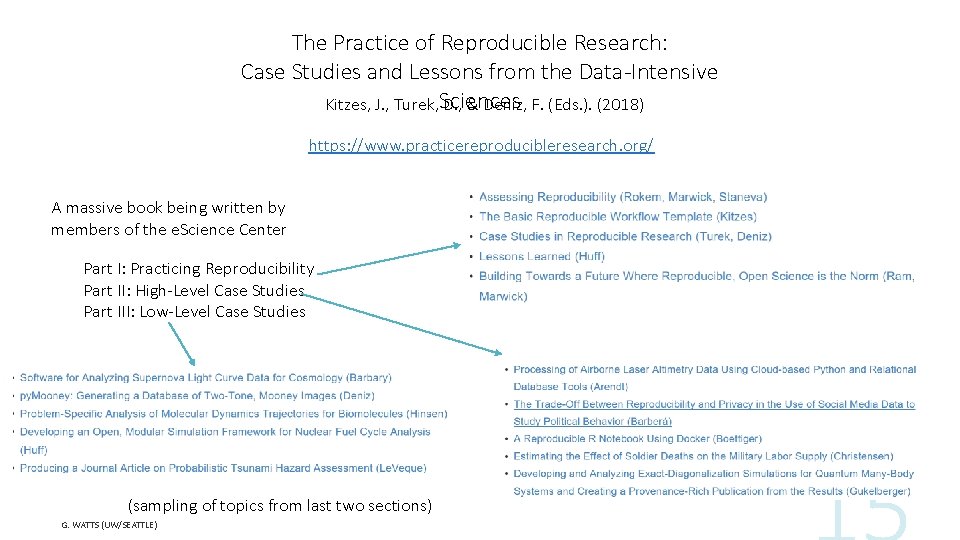

The Practice of Reproducible Research: Case Studies and Lessons from the Data-Intensive Sciences
Kitzes, J., Turek, D., & Deniz, F. (Eds.). (2018)
https://www.practicereproducibleresearch.org/
A massive book being written by members of the eScience Center
• Part I: Practicing Reproducibility
• Part II: High-Level Case Studies
• Part III: Low-Level Case Studies
(sampling of topics from last two sections)

DASPOS

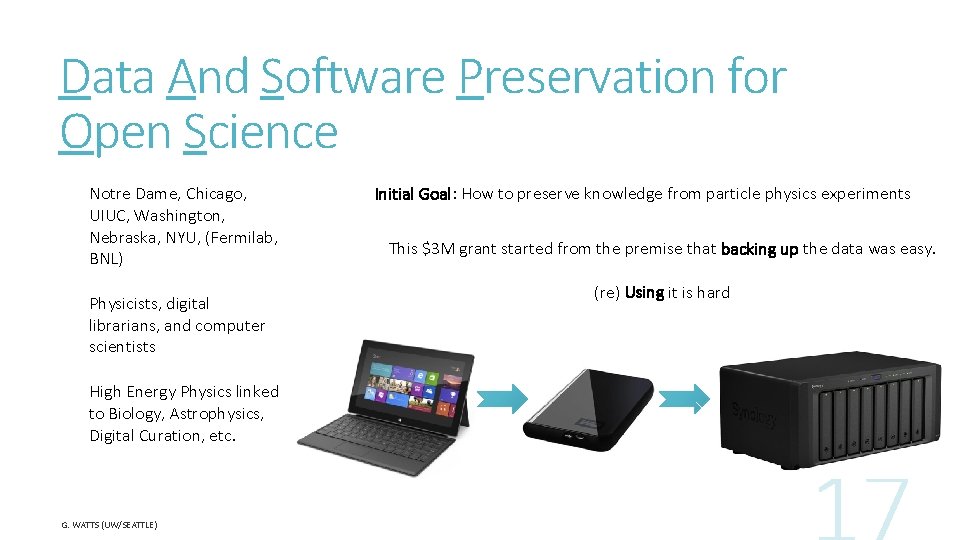

Data And Software Preservation for Open Science
• Notre Dame, Chicago, UIUC, Washington, Nebraska, NYU, (Fermilab, BNL)
• Physicists, digital librarians, and computer scientists
• High Energy Physics linked to Biology, Astrophysics, Digital Curation, etc.
• Initial goal: how to preserve knowledge from particle physics experiments
This $3M grant started from the premise that backing up the data was easy. (Re)using it is hard.

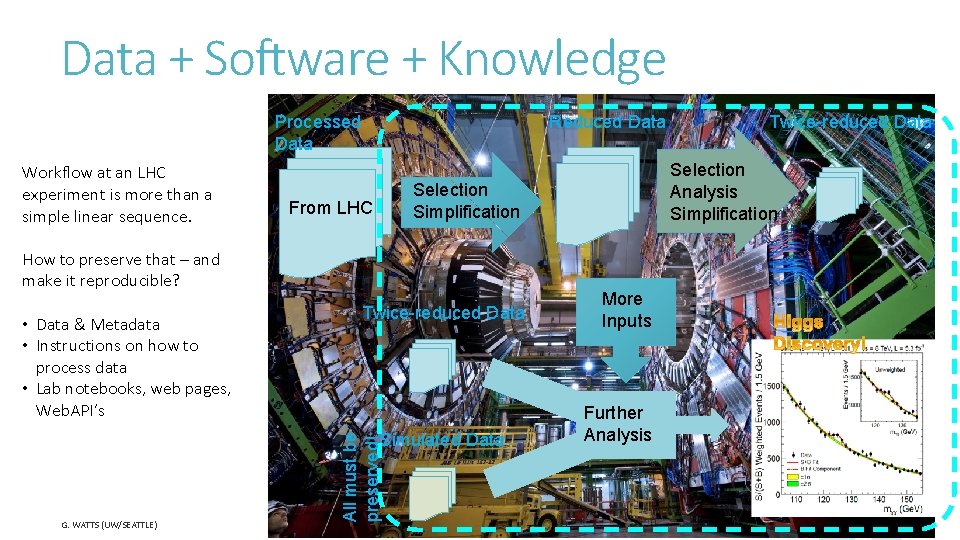

Data + Software + Knowledge
Workflow at an LHC experiment is more than a simple linear sequence.
How to preserve that, and make it reproducible?
• Data & Metadata
• Instructions on how to process data
• Lab notebooks, web pages, WebAPIs
All must be preserved!
[Diagram labels: From LHC, Processed Data, Reduced Data, Twice-reduced Data, Simulated Data, More Inputs, Further Analysis; steps: Selection, Simplification, Analysis]

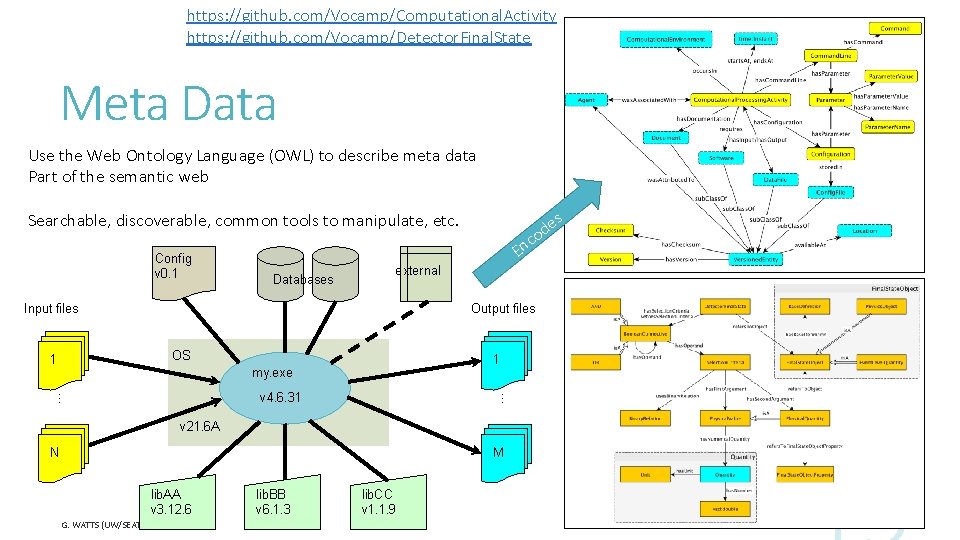

Meta Data
https://github.com/Vocamp/ComputationalActivity
https://github.com/Vocamp/DetectorFinalState
• Use the Web Ontology Language (OWL) to describe meta data
• Part of the semantic web
• Searchable, discoverable, common tools to manipulate, etc.
[Diagram: my.exe with Config v0.1, external databases, input files, output files, OS, and libraries libAA v3.12.6, libBB v6.1.3, libCC v1.1.9; further version labels in the figure: v4.6.31, v21.6]
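The kind of record the diagram describes can be sketched in a few lines. This is only an illustration: it uses a plain Python dict serialized to JSON rather than OWL, and the function names and field names (`describe_activity`, `config_version`, and so on) are hypothetical, not taken from the Vocamp ontologies.

```python
import hashlib
import json
import platform
import sys
from pathlib import Path


def file_sha256(path):
    """Return the SHA-256 hex digest of a file's contents."""
    return hashlib.sha256(Path(path).read_bytes()).hexdigest()


def describe_activity(executable, config_version, inputs, outputs, libraries):
    """Build a plain-dict description of one computational activity.

    All field names are hypothetical; a real system would map them
    onto an agreed vocabulary such as an OWL ontology.
    """
    return {
        "executable": executable,
        "config_version": config_version,
        "os": platform.system(),
        "python": sys.version.split()[0],
        # Library name -> version string, as supplied by the caller.
        "libraries": dict(libraries),
        # Input path -> content hash, so a later run can check it used the same data.
        "inputs": {str(p): file_sha256(p) for p in inputs},
        "outputs": [str(p) for p in outputs],
    }


def to_json(record):
    """Serialize the record with sorted keys so two records are easy to diff."""
    return json.dumps(record, indent=2, sort_keys=True)
```

Hashing the inputs, rather than only naming them, is a design choice: file names are easy to reuse, contents are not.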

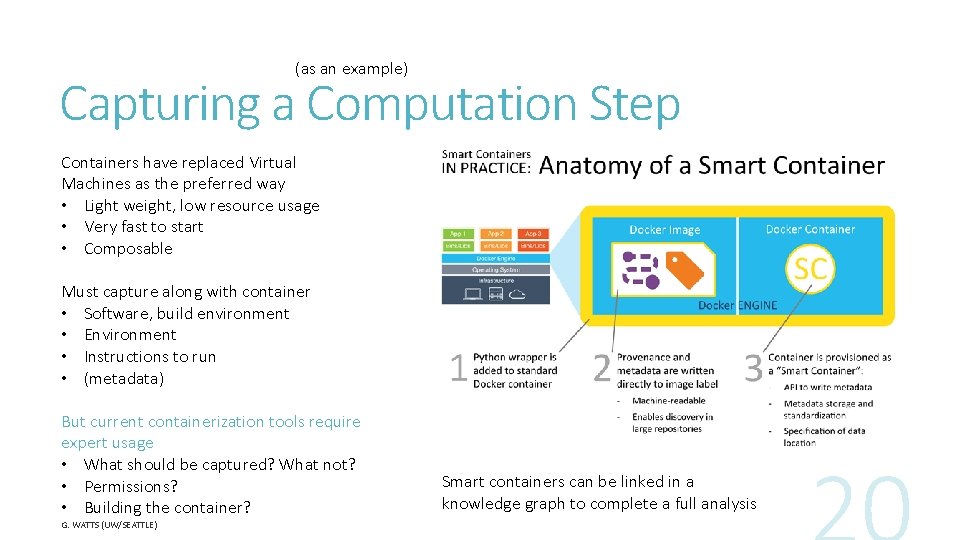

Capturing a Computation Step (as an example)
Containers have replaced Virtual Machines as the preferred way
• Lightweight, low resource usage
• Very fast to start
• Composable
Must capture along with container
• Software, build environment
• Environment
• Instructions to run
• (metadata)
But current containerization tools require expert usage
• What should be captured? What not?
• Permissions?
• Building the container?
Smart containers can be linked in a knowledge graph to complete a full analysis
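One way to make "what should be captured?" concrete is a checklist that travels with the container. The sketch below is an assumption-laden illustration: the `StepCapture` class, its field names, and the example image name are hypothetical and do not correspond to any DASPOS tool.

```python
from dataclasses import dataclass, field, fields


@dataclass
class StepCapture:
    """What travels with one containerized computation step (hypothetical schema)."""
    container_image: str = ""
    software: str = ""            # e.g. source revision of the analysis code
    build_environment: str = ""   # e.g. compiler and build-tool versions
    environment: dict = field(default_factory=dict)  # runtime environment variables
    run_instructions: str = ""    # command line that executes the step
    metadata: dict = field(default_factory=dict)


def missing_items(capture):
    """Return the names of the fields that are still empty."""
    return [f.name for f in fields(capture) if not getattr(capture, f.name)]


# Example: only the image and the run command have been recorded so far.
step = StepCapture(
    container_image="example/selection:1.0",  # hypothetical image name
    run_instructions="./my.exe --config config.json",
)
print(missing_items(step))
```

A check like this does not decide what is worth capturing; it only makes the gaps visible before the step is archived.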

Work at CERN
Putting this together

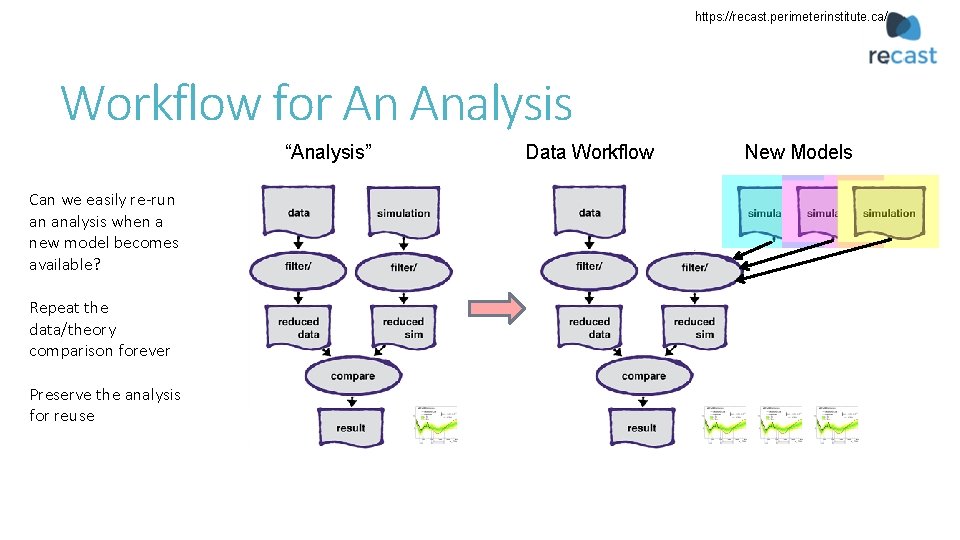

Workflow for an Analysis
https://recast.perimeterinstitute.ca/
• Can we easily re-run an analysis when a new model becomes available?
• Repeat the data/theory comparison forever
• Preserve the analysis for reuse
[Diagram labels: Data, New Models, Workflow, “Analysis”]

Wouldn’t it be nice if you had a tool that:
• automatically captured provenance and processing details for everything you did
• could manage the bookkeeping for thousands of analysis jobs
• provided a way of saving snapshots of work
• could tell you “what did I do last week?”
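A minimal sketch of the first and last wishes, assuming each analysis step is a Python function. The decorator, the in-memory log, and the `select_events` example step are all hypothetical; a real tool would persist the log and capture far more (code version, environment, input files).

```python
import functools
import hashlib
import time

PROVENANCE_LOG = []  # in-memory only; a real tool would write this to disk


def record_provenance(func):
    """Log the name, arguments, and a hash of the result of every call."""
    @functools.wraps(func)
    def wrapper(*args, **kwargs):
        started = time.time()
        result = func(*args, **kwargs)
        PROVENANCE_LOG.append({
            "step": func.__name__,
            "args": repr(args),
            "kwargs": repr(kwargs),
            # Hash of the printed result: enough to notice a change, not to restore it.
            "result_sha256": hashlib.sha256(repr(result).encode()).hexdigest(),
            "started": started,
            "duration_s": time.time() - started,
        })
        return result
    return wrapper


def steps_since(timestamp):
    """Answer "what did I do since ...?" from the log."""
    return [entry["step"] for entry in PROVENANCE_LOG if entry["started"] >= timestamp]


@record_provenance
def select_events(events, threshold):
    """Hypothetical analysis step: keep values above a threshold."""
    return [e for e in events if e > threshold]


selected = select_events([1, 5, 9], threshold=4)
one_week_ago = time.time() - 7 * 24 * 3600
print(steps_since(one_week_ago))
```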

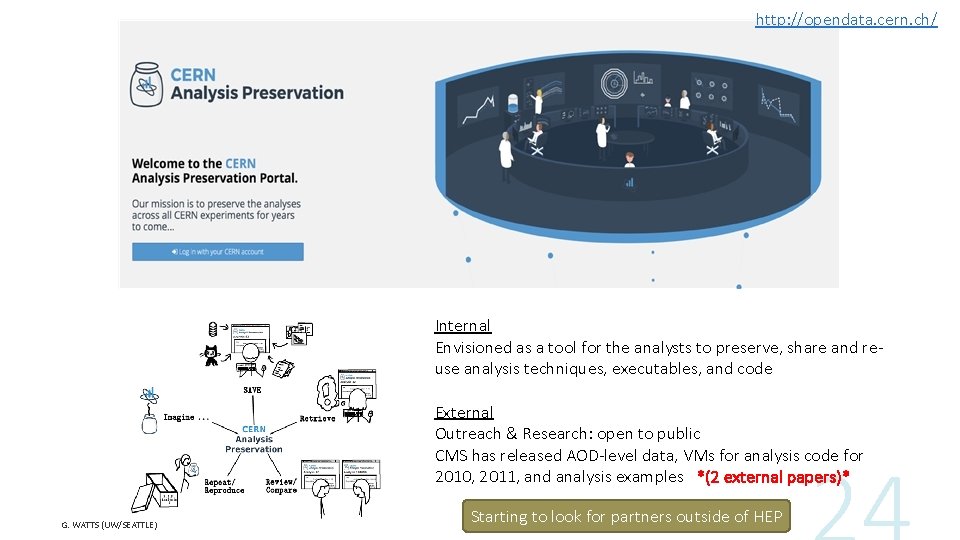

http://opendata.cern.ch/
Internal
• Envisioned as a tool for the analysts to preserve, share and reuse analysis techniques, executables, and code
External
• Outreach & Research: open to public
• CMS has released AOD-level data, VMs for analysis code for 2010, 2011, and analysis examples *(2 external papers)*
Starting to look for partners outside of HEP

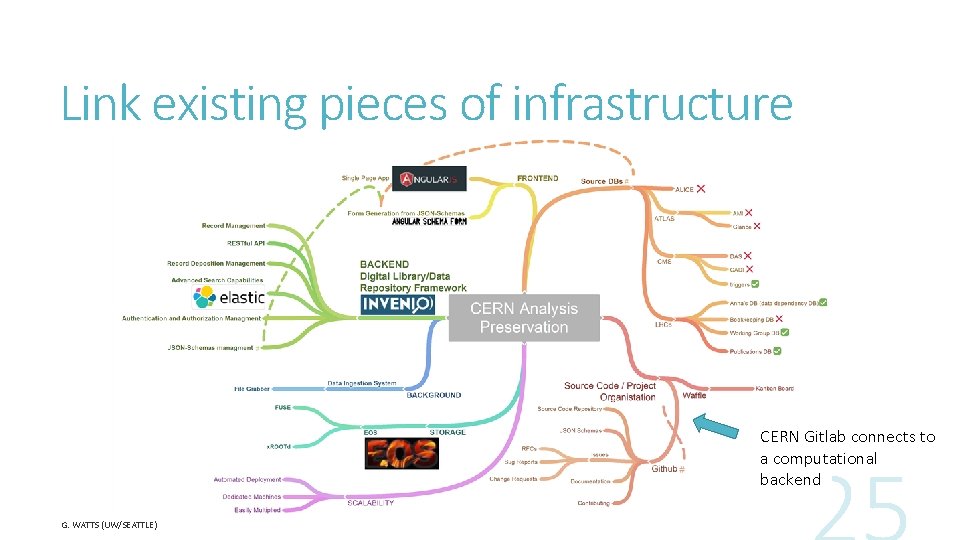

Link existing pieces of infrastructure
CERN GitLab connects to a computational backend

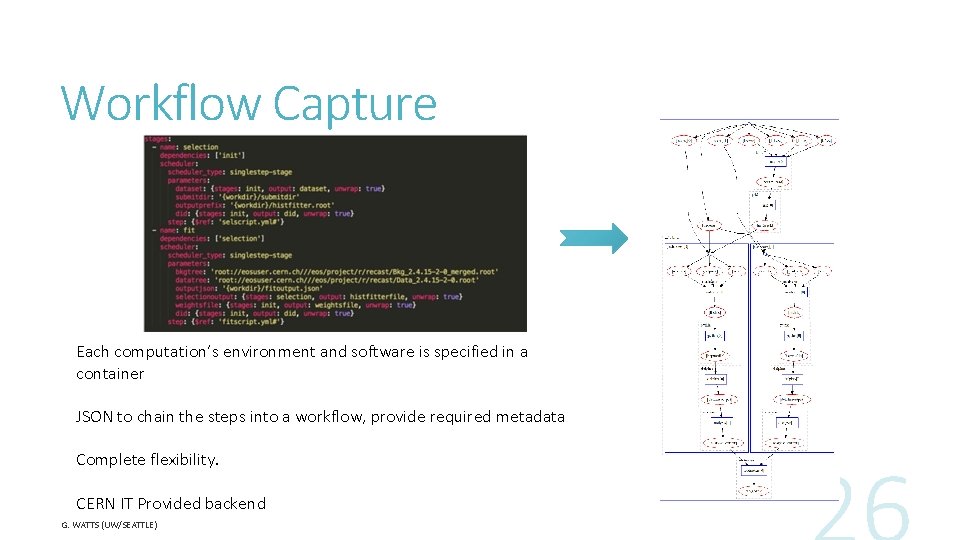

Workflow Capture
• Each computation’s environment and software are specified in a container
• JSON to chain the steps into a workflow and provide required metadata
• Complete flexibility
• CERN IT provided backend
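To illustrate how JSON can chain containerized steps, here is a small sketch. The schema (`steps`, `image`, `needs`) and the image names are invented for this example and are not the format used by the CERN tools; the step names borrow the Selection / Simplification / Analysis labels used earlier in the talk.

```python
import json
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical workflow description: each step names its container image
# and the steps whose output it needs.
WORKFLOW_JSON = """
{
  "steps": {
    "selection":      {"image": "example/selection:1.0", "needs": []},
    "simplification": {"image": "example/simplify:1.0",  "needs": ["selection"]},
    "analysis":       {"image": "example/analysis:1.0",  "needs": ["simplification"]}
  }
}
"""


def execution_order(workflow):
    """Return step names in an order that respects every "needs" entry.

    Raises graphlib.CycleError if the steps depend on each other in a loop.
    """
    graph = {name: set(step["needs"]) for name, step in workflow["steps"].items()}
    return list(TopologicalSorter(graph).static_order())


workflow = json.loads(WORKFLOW_JSON)
order = execution_order(workflow)
for name in order:
    print(name, "->", workflow["steps"][name]["image"])
```

Keeping the dependency information in data rather than in a driver script is what lets a backend re-run or re-order the steps without reading the analysis code.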

Funding Agencies

Policy Questions
mpsopendata.crc.nd.edu
• What data should be saved?
• What else should be saved besides the data to enable re-use?
• Where should it be stored?
• Who pays for the storage? Federal grants typically run 3 years; after this the money is spent.
• Who pays to allow the public to access the data? Network and storage infrastructure isn’t free.
Funding agencies are developing policies and need guidance
• NSF (and DOE) want to update their Data Management Plan guidelines
• Looking for input from the communities they serve

Policy Responses
Community exercise to provide feedback to NSF on knowledge preservation questions from Physical Sciences (input from APS, ACS, surveys, workshops, etc.)
Final Report just issued, some conclusions:
• Data and other digital artifacts upon which publications are based should be made publicly available in a digital, machine-readable format, and persistently linked to those relevant publications.
• Different disciplines have a wide variety of current practices and expectations for appropriate levels of the sharing of data and other scientific results. A discipline-specific policy discussion will be required to determine an appropriate level of preservation and re-use.
• The provision of public access to data entails costs in infrastructure and human effort, and some types of data may be impractical to archive, annotate, and share. Cost-benefit analyses should be conducted in order to set the level of expectations for the researcher, his or her institution, and the funding agency.
• Creating incentives toward the sharing of data is the primary way to accomplish broad adoption of open access to data as the norm.
Exploring and understanding these ingredients are the next steps for the science disciplines

Conclusions
Lots of personal and career benefits to using Open Science and Reproducible techniques in our daily work.
Common Techniques
• Favor composable techniques
• Command line: all things scriptable and controllable from a common script
• As many non-proprietary tools as possible
Tools
• Good tools are already there: Git/GitHub, Containers and VMs, Notebooks, Web Tools
• Tools are all almost certainly going to be domain specific
Next Big Task
• Improve the tools!
• Do not hesitate to publish and share new tools
• This is where the frontier of this effort currently exists
Get Started!

References
• Kitzes, J., Turek, D., & Deniz, F. (Eds.). (2018). The Practice of Reproducible Research: Case Studies and Lessons from the Data-Intensive Sciences. Oakland, CA: University of California Press.
• Mike Hildreth (Notre Dame), “Data, Software, and Knowledge Preservation of Scientific Results”, ACAT 2017 (Seattle). https://indico.cern.ch/event/567550/contributions/2656689/
• UW eScience Center: http://escience.washington.edu/
• The reproducibility working group: http://uwescience.github.io/reproducible/
• DASPOS: http://daspos.org/
eScience slides contain direct links as well
