Reproducibility in Research: MeMo meeting, 9 Jul 2020

Reproducibility in Research. MeMo meeting, 9 Jul 2020. Johnny Lau & Stef Meliss

Aim: to cover skills, tools and best practices for research reproducibility
Reproducibility, Metadata, Open Science
• Revisit why the replicability ‘crisis’ is important
• Metadata and why it matters
• The Open Science efforts out there
Presentation adapted from Ted Laderas (2019): https://laderast.github.io/PHE427/week7.2/#1

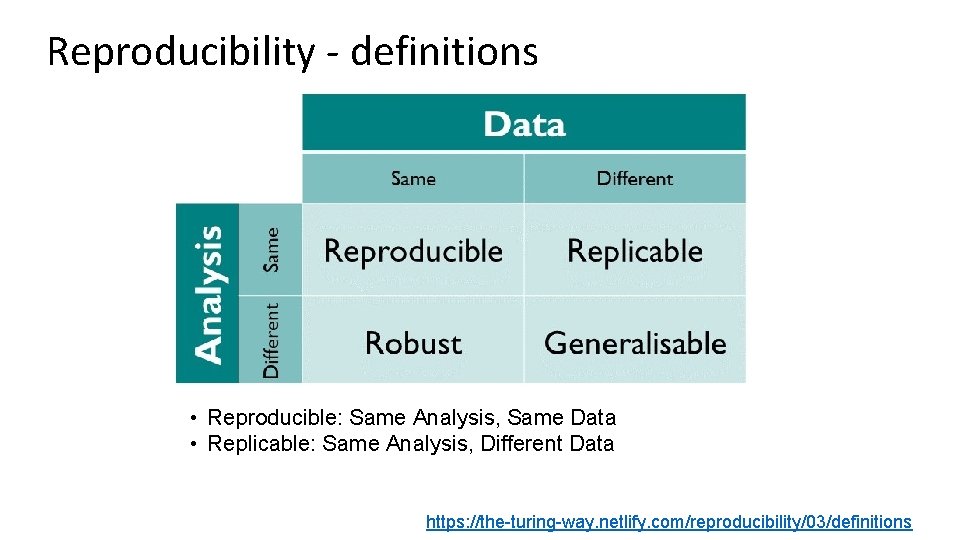

Reproducibility - definitions
• Reproducible: Same Analysis, Same Data
• Replicable: Same Analysis, Different Data
https://the-turing-way.netlify.com/reproducibility/03/definitions

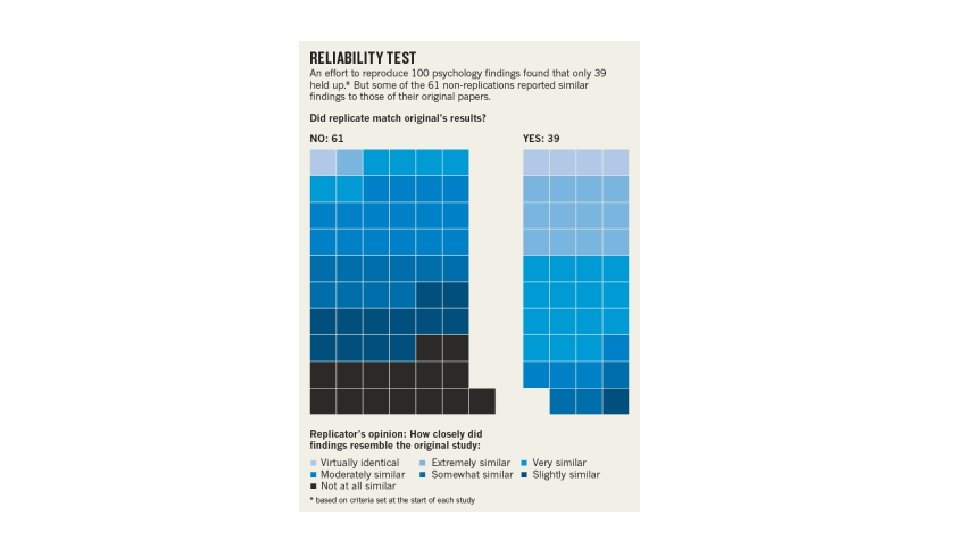

Replication Crisis?
• Some key scientific findings cannot be replicated by other labs
• Survey of ~1500 scientists:
  • More than 70% had tried and failed to reproduce another scientist's experiments
  • About 50% agreed there is a significant ‘crisis’ of reproducibility
• Reproducibility project: findings for only 39 out of 100 psychological studies could be replicated
https://www.nature.com/news/1-500-scientists-lift-the-lid-on-reproducibility-1.19970
https://www.apa.org/monitor/2015/10/share-reproducibility

Example: Marshmallow Test

Marshmallow Test Study Findings
• One marshmallow now, but two marshmallows if you wait
• Walter Mischel: self-control/delayed gratification leads to better outcomes in life
• Followed up with participants 18 years later
• Found increased "cognitive and academic competence" among the two-marshmallow kids
• Findings were used to sell "grit" as a fix in education

Replicating the Marshmallow Test
• Study has been difficult to replicate
• Is it really just measuring socioeconomic status?
• Well-off children tend to get better education
• "Conceptual Replication Study": self-control is not associated with better outcomes

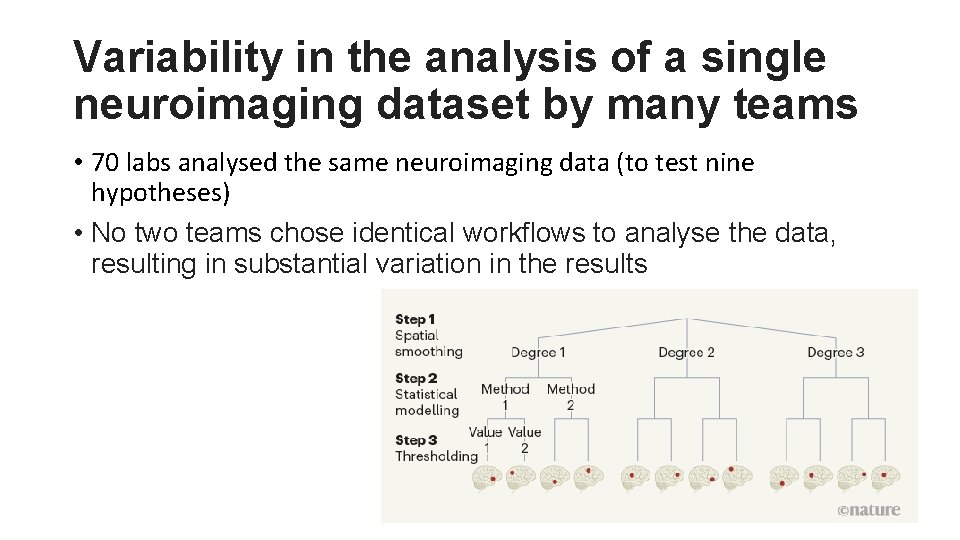

Variability in the analysis of a single neuroimaging dataset by many teams
• 70 labs analysed the same neuroimaging data (to test nine hypotheses)
• No two teams chose identical workflows to analyse the data, resulting in substantial variation in the results

Why do we have the replication crisis?
Cultural:
• Pressure to publish in prestigious journals
• Requires 'impactful' results to get published
• Bias against negative results
Statistical:
• Ioannidis: studies rely on questionable research practices
• Sample sizes are too small to justify the conclusions
• p-hacking: running too many statistical tests and only reporting the good ones
Experimental:
• Not enough detail to rerun the experiment and analysis
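To see why p-hacking inflates false findings, here is a minimal sketch of the arithmetic. It assumes the tests are independent and that every null hypothesis is true; the function name `familywise_error_rate` is made up for this illustration.

```python
def familywise_error_rate(n_tests: int, alpha: float = 0.05) -> float:
    """Chance of at least one false positive across n_tests independent
    tests when every null hypothesis is true (illustrative assumption)."""
    if n_tests < 0:
        raise ValueError("n_tests must be non-negative")
    # P(no false positive in one test) = 1 - alpha, so across n tests
    # P(at least one false positive) = 1 - (1 - alpha) ** n
    return 1.0 - (1.0 - alpha) ** n_tests


for n in (1, 5, 20):
    print(f"{n:>2} tests at alpha = .05: {familywise_error_rate(n):.2f}")
```

Under these assumptions, running 20 tests and reporting only the "good ones" gives roughly a 64% chance of at least one spurious significant result.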

Spurious Correlations and False Findings

What are some solutions to the crisis?
• Better statistical practices
• Pre-registration of analyses (e.g. https://osf.io/u5a3t/wiki/home/)
• Larger sample sizes (Psychological Science Accelerator https://psysciacc.org/)
• More stringent cutoffs for statistics
• Better education about reproducibility practices (e.g. The Turing Way)
• Metadata and Open Science Practices
• Provide enough details about the experiment
• More transparency about how the experiment was conducted

What is Metadata?
• "Data about data"
• “Metadata is structured information that describes, explains, locates, or otherwise makes it easier to retrieve, use, or manage an information resource” (NISO)
• Working definition: Information that lets us utilize a dataset effectively

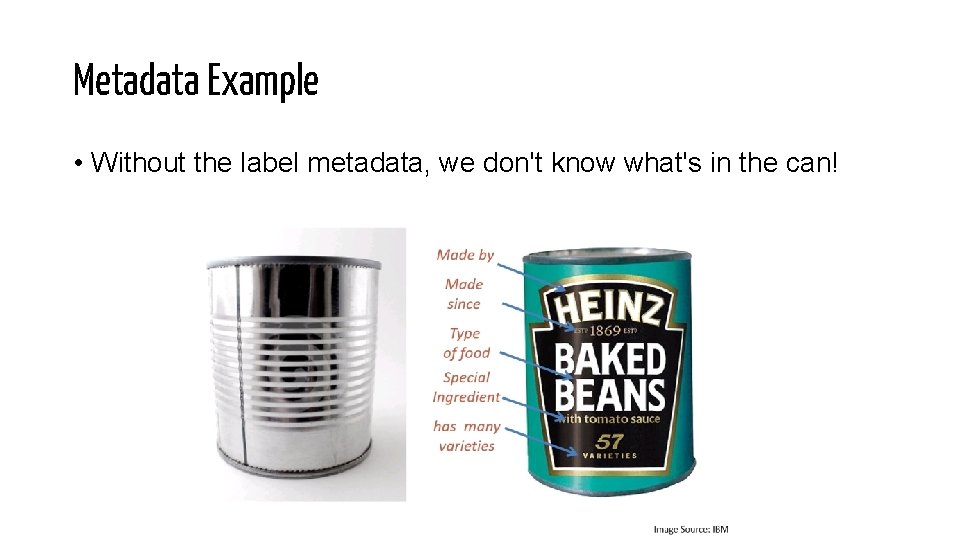

Metadata Example
• Without the label metadata, we don't know what's in the can!

What Kinds of Experimental Metadata Are There?
• Date experiment was done
• Time a measurement was made
• Number of repeated measurements
• Who conducted the experiment
• Dosage of treatment
• How many subjects were in study
• How many subjects dropped out of study
• Experimental design
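One way to keep this kind of metadata with a dataset is a small machine-readable "sidecar" file. The sketch below is only an illustration: the class name, field names and every value are placeholders invented for this example, not taken from any real study or standard.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class ExperimentMetadata:
    """Hypothetical record covering the kinds of metadata listed above."""
    experiment_date: str           # date experiment was done (ISO 8601)
    measurement_time: str          # time a measurement was made
    n_repeated_measurements: int   # number of repeated measurements
    experimenter: str              # who conducted the experiment
    treatment_dosage: str          # dosage of treatment, with units
    n_subjects_enrolled: int       # how many subjects were in the study
    n_subjects_dropped_out: int    # how many subjects dropped out
    experimental_design: str       # e.g. between- or within-subjects

    def n_subjects_completed(self) -> int:
        return self.n_subjects_enrolled - self.n_subjects_dropped_out


# Placeholder values for illustration only.
record = ExperimentMetadata(
    experiment_date="2020-01-01",
    measurement_time="10:30",
    n_repeated_measurements=3,
    experimenter="Researcher A",
    treatment_dosage="10 mg",
    n_subjects_enrolled=40,
    n_subjects_dropped_out=4,
    experimental_design="between-subjects",
)

# Serialise to JSON so the record can be saved next to the data file.
sidecar = json.dumps(asdict(record), indent=2)
print(sidecar)
```

Plain JSON is used here because it is readable by both people and software.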

Reporting guidelines/analysis workflows
• COBIDAS: a checklist to help with more thorough and transparent methods and result reporting in neuroimaging
• WORCS: A Workflow for Open Reproducible Code in Science
• An example of a re-executable neuroimaging publication

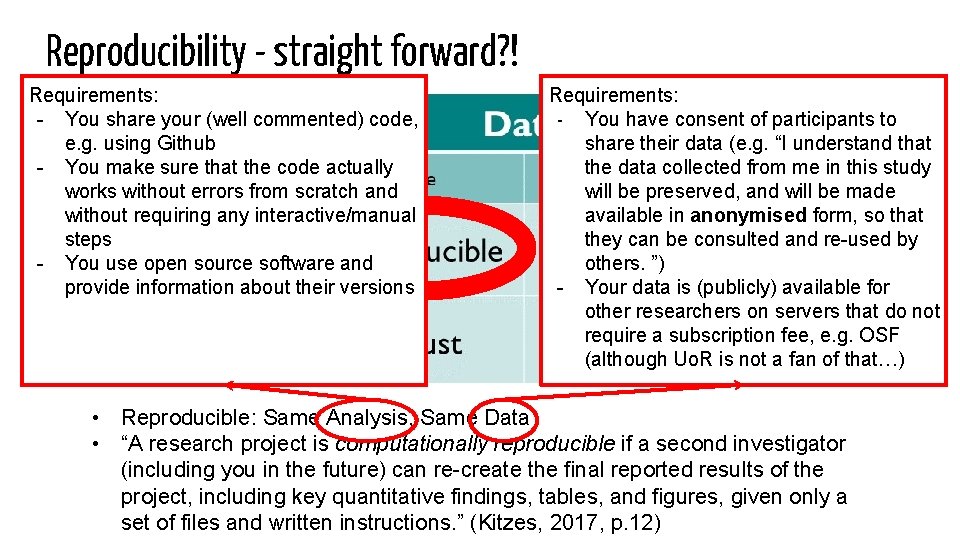

Reproducibility - straightforward?!
Requirements (same analysis):
- You share your (well-commented) code, e.g. using GitHub
- You make sure that the code actually runs from scratch without errors and without requiring any interactive/manual steps
- You use open source software and provide information about the versions used
Requirements (same data):
- You have the consent of participants to share their data (e.g. “I understand that the data collected from me in this study will be preserved, and will be made available in anonymised form, so that they can be consulted and re-used by others.”)
- Your data are (publicly) available to other researchers on servers that do not require a subscription fee, e.g. OSF (although UoR is not a fan of that…)
• Reproducible: Same Analysis, Same Data
• “A research project is computationally reproducible if a second investigator (including you in the future) can re-create the final reported results of the project, including key quantitative findings, tables, and figures, given only a set of files and written instructions.” (Kitzes, 2017, p. 12)

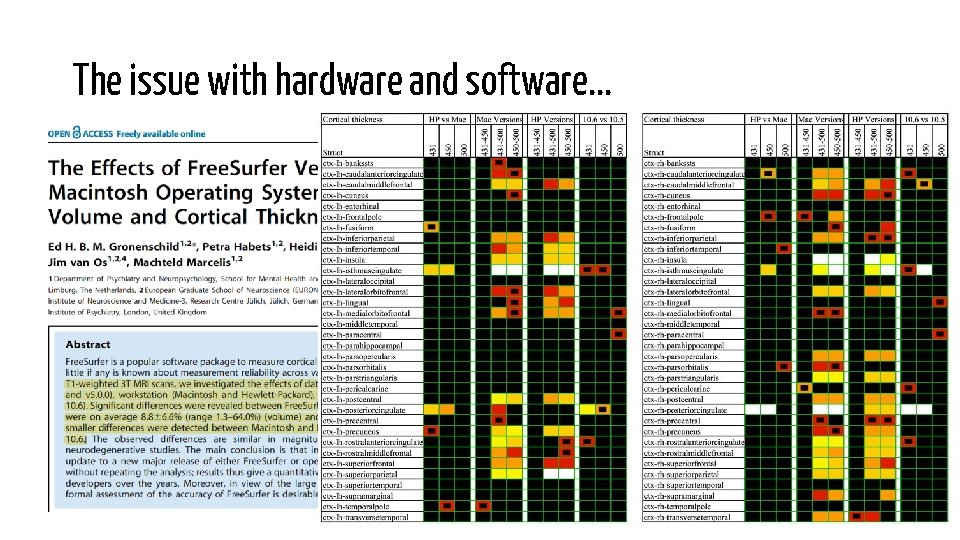

The issue with hardware and software...

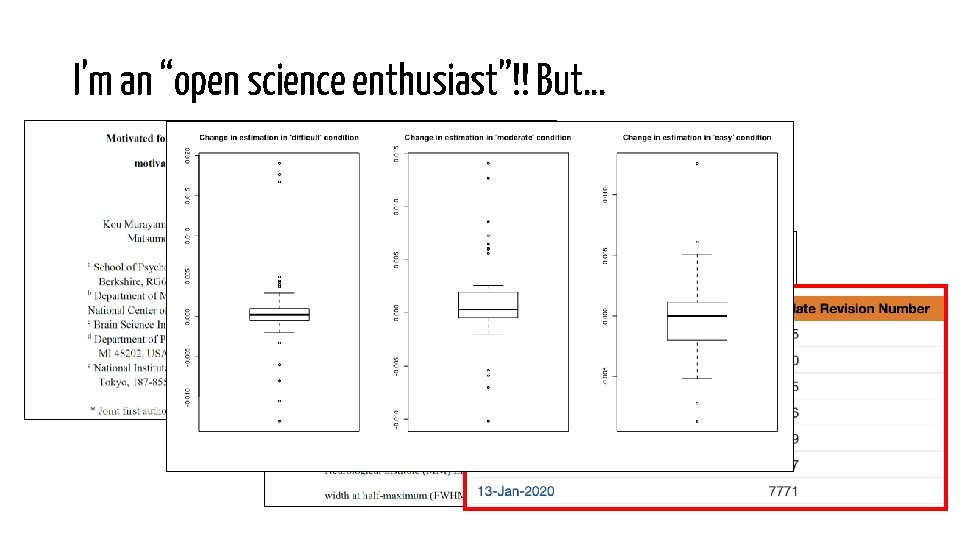

I’m an “open science enthusiast”!! But…

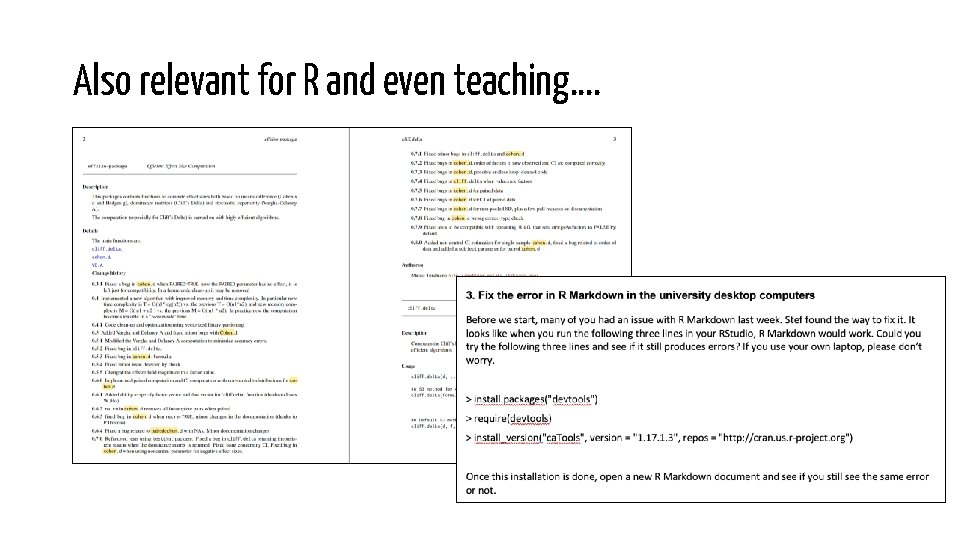

Also relevant for R and even teaching….

![image 21](http://slidetodoc.com/presentation_image_h/875a364ffbdfb9dcb0c94980a196e17d/image-21.jpg)

specificity noun [U] (BEING EXACT): the quality of being clear and exact
Be as detailed as you can when describing the software (and hardware) used to analyse your data
• Software + versions
• Dependencies / packages / libraries + versions
• Hint: R package references can be found by calling citation("packagename")
https://dictionary.cambridge.org/dictionary/english/specificity
https://memegenerator.net/instance/63320951/office-space-boss-yeah-im-going-to-need-you-to-be-more-specific
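For Python-based analyses, a rough counterpart to the R hint is to record the interpreter and package versions programmatically. This is a minimal sketch using only the standard library; the helper name `describe_environment` and the package names passed to it are placeholders for whatever your analysis actually imports.

```python
import platform
from importlib import metadata


def describe_environment(packages):
    """Collect interpreter, OS and package versions for a methods section."""
    report = {
        "python": platform.python_version(),
        "implementation": platform.python_implementation(),
        "os": platform.platform(),
    }
    for name in packages:
        try:
            report[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            # Say so explicitly rather than silently skipping the package.
            report[name] = "not installed"
    return report


# Placeholder package list; replace with the packages your analysis uses.
env = describe_environment(["numpy", "pandas"])
for key, value in env.items():
    print(f"{key}: {value}")
```

Saving this output alongside the results gives readers the "software + versions" and "dependencies + versions" details in one place.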

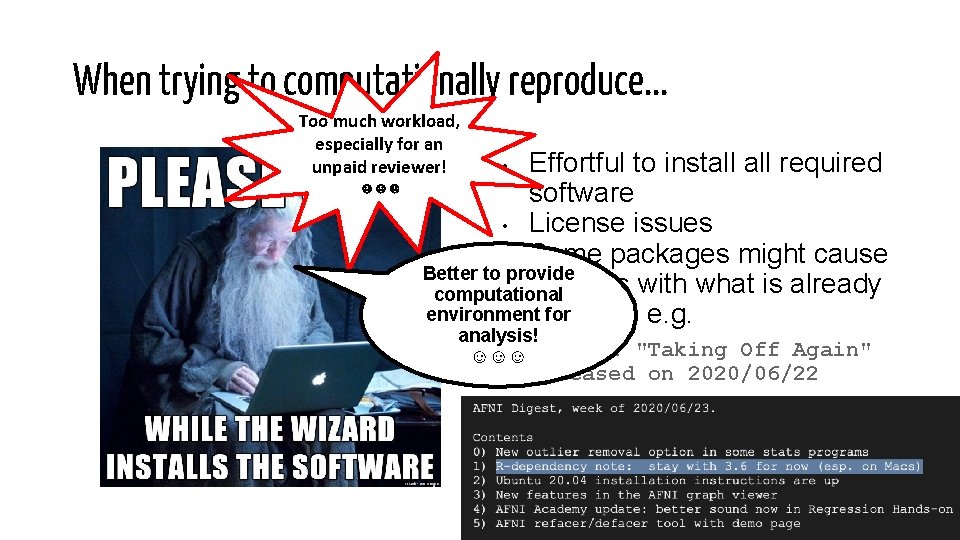

When trying to computationally reproduce...
Too much workload, especially for an unpaid reviewer! ☹☹☹
• Effortful to install required software
• License issues
• Some packages might cause conflicts with what is already installed, e.g. R 4.0.2 "Taking Off Again" released on 2020/06/22
Better to provide a computational environment for the analysis! ☺☺☺

![image 23](http://slidetodoc.com/presentation_image_h/875a364ffbdfb9dcb0c94980a196e17d/image-23.jpg)

Docker container ≈ Complete (software) environment ≈ “Program” + dependencies (incl. OS)
“A [Docker] container is a standard unit of software that packages up code and all its dependencies so the application runs quickly and reliably from one computing environment to another.”
→ software behaves exactly the same everywhere (yayay)
https://www.docker.com/resources/what-container
http://lukas-snoek.com/docker-and-binder-workshop/

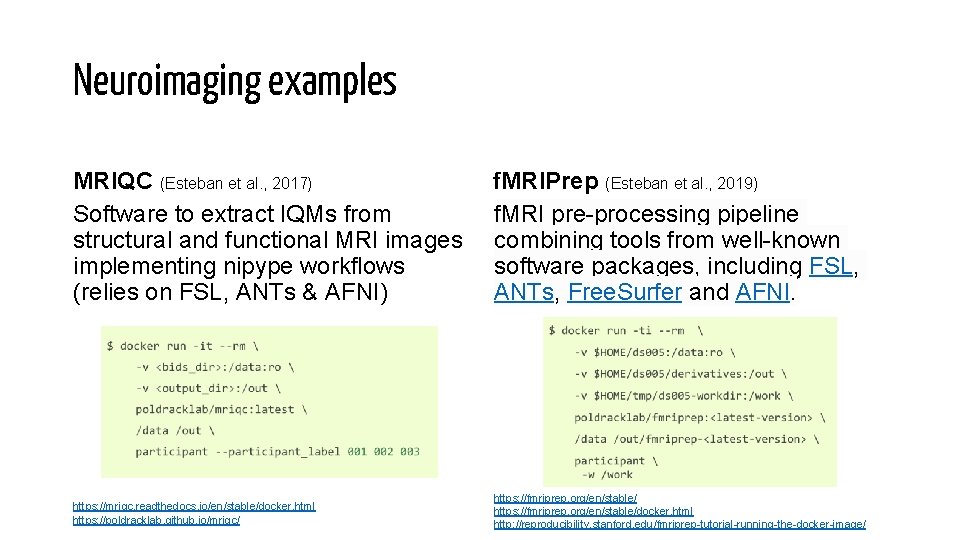

Neuroimaging examples
• MRIQC (Esteban et al., 2017): software to extract image quality metrics (IQMs) from structural and functional MRI images, implementing nipype workflows (relies on FSL, ANTs & AFNI)
  https://mriqc.readthedocs.io/en/stable/docker.html
  https://poldracklab.github.io/mriqc/
• fMRIPrep (Esteban et al., 2019): fMRI pre-processing pipeline combining tools from well-known software packages, including FSL, ANTs, FreeSurfer and AFNI
  https://fmriprep.org/en/stable/docker.html
  http://reproducibility.stanford.edu/fmriprep-tutorial-running-the-docker-image/

Binder
“Binder allows you to create custom computing environments that can be shared and used by many remote users.”
How it works (in a nutshell): Code from a public GitHub repository (containing e.g. Python or R code) is used to build a Docker image of the repository containing all dependencies. This Docker image is then hosted on a free server at mybinder.org, which provides a Jupyter or RStudio server to run the code on interactively.
- R adaptation based on the holepunch package: https://github.com/karthik/holepunch
- Step-by-step instructions at https://github.com/karthik/binder-test
- Great tutorial (~11 min): https://www.youtube.com/watch?v=wSkheV-Uqq4&t=432s
https://mybinder.readthedocs.io/en/latest/
https://github.com/alan-turing-institute/the-turing-way/blob/master/workshops/boost-research-reproducibility-binder/workshop-presentations/zero-to-binder-r.md

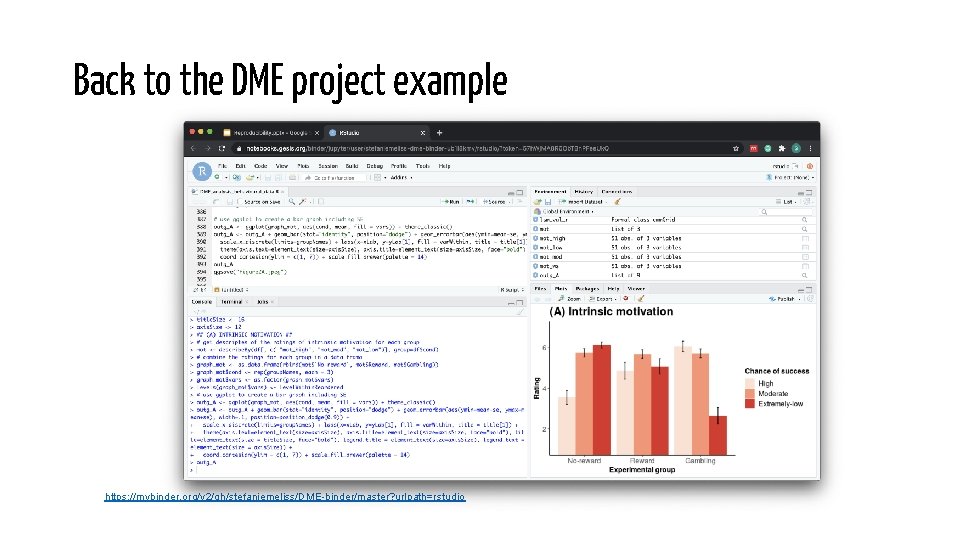

Back to the DME project example
https://mybinder.org/v2/gh/stefaniemeliss/DME-binder/master?urlpath=rstudio
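The link on this slide follows the mybinder.org pattern for GitHub repositories: owner, repository and branch in the path, plus an optional `urlpath` query parameter that opens RStudio instead of the default Jupyter interface. As a small sketch, such a link could be assembled like this; the helper `binder_url` is a made-up name for illustration and not part of any Binder package.

```python
from urllib.parse import quote, urlencode


def binder_url(owner, repo, ref="master", urlpath=None):
    """Build a mybinder.org launch link for a public GitHub repository."""
    base = (
        "https://mybinder.org/v2/gh/"
        f"{quote(owner, safe='')}/{quote(repo, safe='')}/{quote(ref, safe='')}"
    )
    if urlpath:
        # e.g. urlpath="rstudio" opens an RStudio session after launch
        return f"{base}?{urlencode({'urlpath': urlpath})}"
    return base


url = binder_url("stefaniemeliss", "DME-binder", "master", urlpath="rstudio")
print(url)
```

Putting a link like this in a README or paper lets readers launch the analysis environment without installing anything locally.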

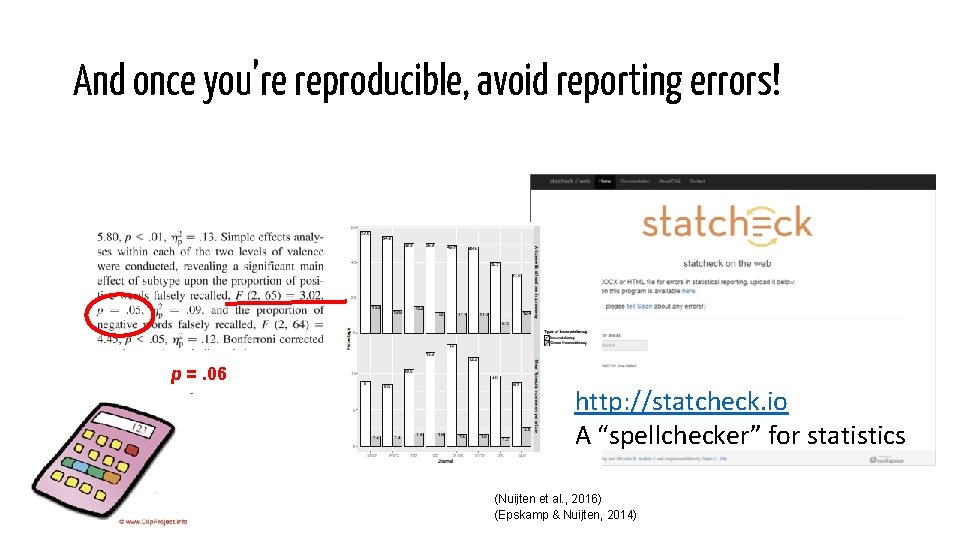

And once you’re reproducible, avoid reporting errors!
p = .06
http://statcheck.io
A “spellchecker” for statistics (Nuijten et al., 2016) (Epskamp & Nuijten, 2014)
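The idea behind such a "spellchecker" is to recompute the p-value from the reported test statistic and compare it with the reported p-value. The sketch below is a deliberately simplified illustration for two-sided z-tests only, using the standard library. It is not statcheck's actual algorithm, and the function names and example numbers are hypothetical.

```python
import math


def two_sided_p_from_z(z: float) -> float:
    """Two-sided p-value for a standard normal test statistic."""
    # For Z ~ N(0, 1): P(|Z| >= |z|) = erfc(|z| / sqrt(2))
    return math.erfc(abs(z) / math.sqrt(2))


def is_consistent(z: float, reported_p: float, decimals: int = 2) -> bool:
    """True if the recomputed p-value matches the reported one after
    rounding both to the same number of decimals (simplified check)."""
    recomputed = two_sided_p_from_z(z)
    return f"{recomputed:.{decimals}f}" == f"{reported_p:.{decimals}f}"


# Hypothetical reported results, for illustration only.
print(is_consistent(z=1.88, reported_p=0.06))  # recomputed p is about .06
print(is_consistent(z=1.88, reported_p=0.04))  # does not match the statistic
```

A real checker also has to parse results from text, handle t, F, r and chi-square tests, and allow for rounding of the test statistic itself.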
