
Reproducibility: A Funder and Data Science Perspective
Philip E. Bourne, Ph.D., FACMI
University of Virginia
Thanks to Valerie Florence, NIH, for some slides
http://www.slideshare.net/pebourne
peb6a@virginia.edu
NetSci Preworkshop 2017
June 19, 2017

Who Am I Representing And What Is My Bias?
• I am presenting my views, not necessarily those of NIH
• Now leading an institutional data science initiative
• Total data parasite
• Unnatural interest in scholarly communication
• Co-founder and founding EIC of PLOS Computational Biology – OA advocate
• Prior co-Director of the Protein Data Bank
• Amateur student researcher in scholarly communication

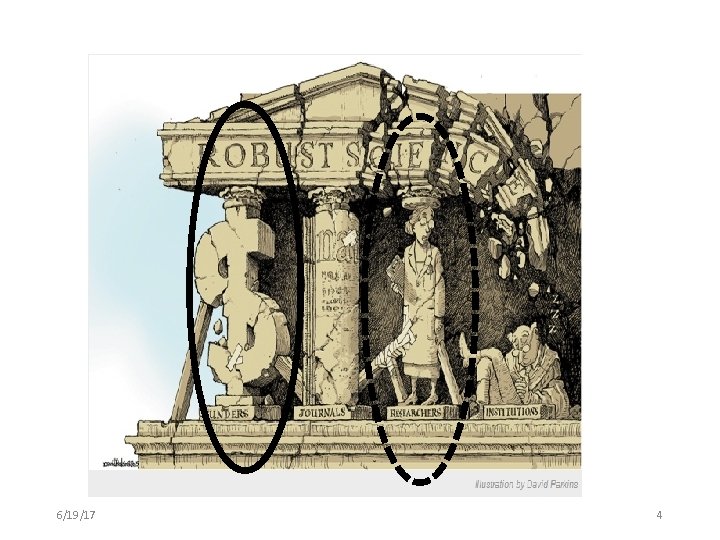

Reproducibility is the responsibility of all stakeholders …


Let's start with researchers …

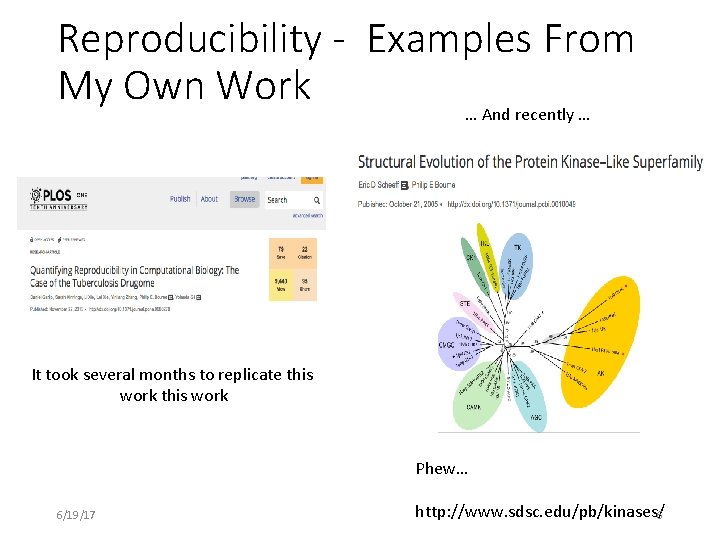

Reproducibility - Examples From My Own Work …
And recently … It took several months to replicate this work. Phew …
http://www.sdsc.edu/pb/kinases/

Beyond value to myself (and even the emphasis is not enough), there is too little incentive to make my work reproducible by others …

Tools Fix This Problem, Right?
• Extracted all PMC papers with associated Jupyter notebooks available
• Approx. 100
• Took a random sample of 25
• Only 1 ran out of the box
• Several ran with minor modification
• Others lacked libraries, sufficient details to run, etc.
It takes more than tools … It takes incentives …
Daniel Mietchen, 2017, personal communication
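One of the failure modes listed on this slide, notebooks that "lacked libraries", can be detected before a notebook is ever executed. The following is a minimal sketch only, and not the procedure used in the survey cited above: it reads a notebook's JSON, collects the top-level modules its code cells import, and reports those that cannot be found in the current environment. The function name `missing_imports`, the example notebook, and the package name `surely_not_installed_pkg_xyz` are all hypothetical, chosen for illustration.

```python
import ast
import importlib.util
import json


def missing_imports(notebook_json: str) -> list:
    """Return the top-level modules imported by a notebook's code cells
    that cannot be located in the current Python environment."""
    nb = json.loads(notebook_json)
    modules = set()
    for cell in nb.get("cells", []):
        if cell.get("cell_type") != "code":
            continue
        source = cell.get("source", "")
        if isinstance(source, list):  # notebooks may store source as a list of lines
            source = "".join(source)
        # Drop IPython magics and shell escapes, which are not valid Python.
        lines = [ln for ln in source.splitlines()
                 if not ln.lstrip().startswith(("%", "!"))]
        try:
            tree = ast.parse("\n".join(lines))
        except SyntaxError:
            continue  # skip cells that still do not parse as plain Python
        for node in ast.walk(tree):
            if isinstance(node, ast.Import):
                modules.update(alias.name.split(".")[0] for alias in node.names)
            elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
                modules.add(node.module.split(".")[0])

    def is_available(name: str) -> bool:
        try:
            return importlib.util.find_spec(name) is not None
        except (ImportError, ValueError):
            return False

    return sorted(m for m in modules if not is_available(m))


# Hypothetical two-cell notebook used only to exercise the function.
example = json.dumps({
    "cells": [
        {"cell_type": "markdown", "source": ["# Analysis"]},
        {"cell_type": "code",
         "source": ["%matplotlib inline\n",
                    "import json\n",
                    "import surely_not_installed_pkg_xyz\n"]},
    ]
})
print(missing_imports(example))
```

A check like this only covers missing libraries. It says nothing about missing data, undocumented parameters, or version drift, which is consistent with the slide's point that tools alone are not enough.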

Funders and publishers are the major levers … What are funders doing? Consider the NIH …


NIH Special Focus Area
https://www.nih.gov/research-training/rigor-reproducibility

Outcomes – General …

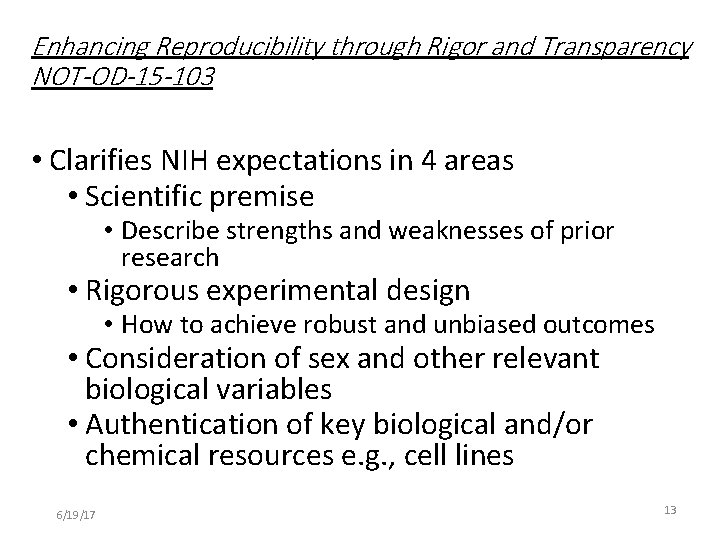

Enhancing Reproducibility through Rigor and Transparency (NOT-OD-15-103)
• Clarifies NIH expectations in 4 areas
• Scientific premise
  – Describe strengths and weaknesses of prior research
• Rigorous experimental design
  – How to achieve robust and unbiased outcomes
• Consideration of sex and other relevant biological variables
• Authentication of key biological and/or chemical resources, e.g., cell lines

Outcomes – Network Based …

Experiment in Moving from Pipes to Platforms
Sangeet Paul Choudary https://www.slideshare.net/sanguit

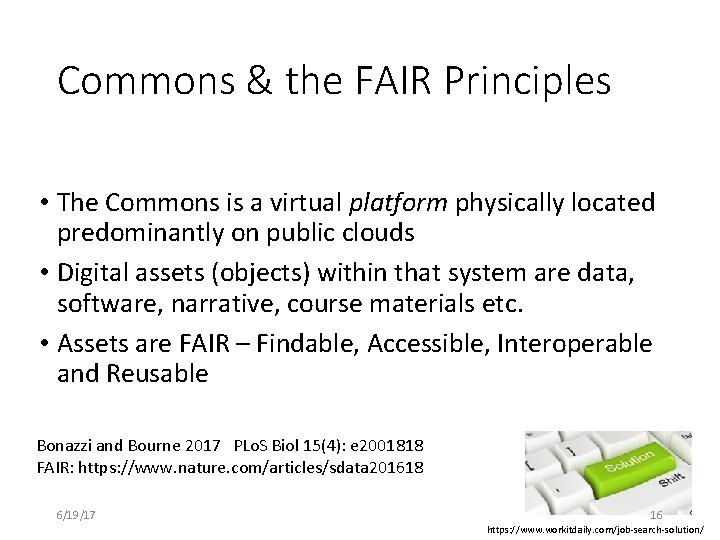

Commons & the FAIR Principles
• The Commons is a virtual platform physically located predominantly on public clouds
• Digital assets (objects) within that system are data, software, narrative, course materials, etc.
• Assets are FAIR – Findable, Accessible, Interoperable and Reusable
Bonazzi and Bourne 2017 PLoS Biol 15(4): e2001818
FAIR: https://www.nature.com/articles/sdata201618
https://www.workitdaily.com/job-search-solution/

Just announced …
https://commonfund.nih.gov/sites/default/files/RM-17-026_CommonsPilotPhase.pdf
Bonazzi and Bourne 2017
FAIR: https://www.nature.com/articles/sdata201618

Current Data Commons Pilots

Commons Platform Pilots
• Explore feasibility of the Commons Platform
• Facilitate collaboration and interoperability

Cloud Credit Model
• Provide access to cloud via credits to populate the Commons
• Connecting credits to NIH Grants

Reference Data Sets
• Making large and/or high value NIH funded data sets and tools accessible in the cloud

Resource Search & Index
• Developing Data & Software Indexing methods
• Leveraging BD2K efforts bioCADDIE et al.
• Collaborating with external groups

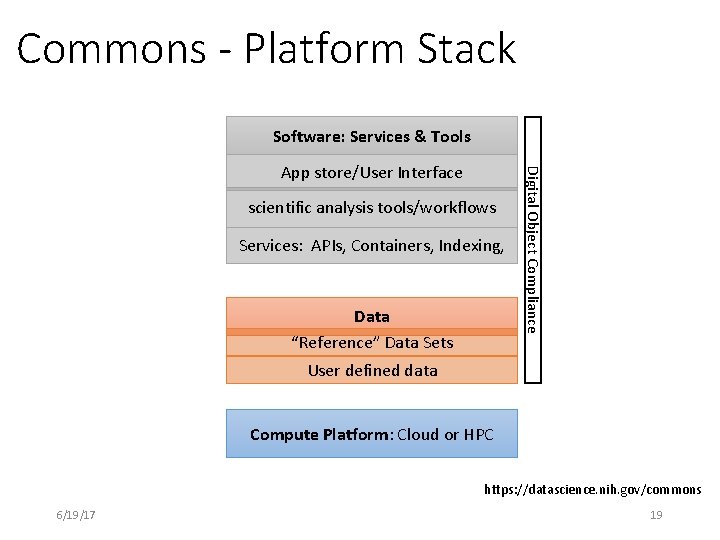

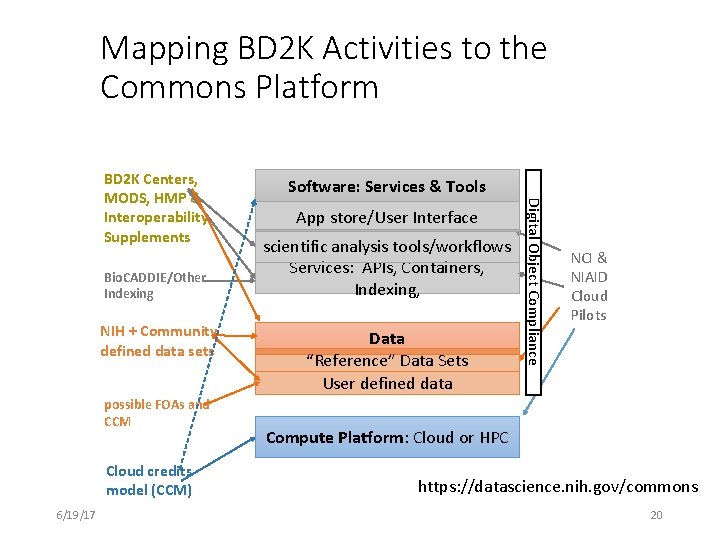

Commons - Platform Stack
• App store/User Interface
• Software: Services & Tools (scientific analysis tools/workflows)
• Services: APIs, Containers, Indexing
• Data: “Reference” Data Sets, User defined data
• Digital Object Compliance
• Compute Platform: Cloud or HPC
https://datascience.nih.gov/commons

Mapping BD2K Activities to the Commons Platform
Activities mapped onto the platform stack:
• BioCADDIE/Other Indexing
• NIH + Community defined data sets
• Possible FOAs and CCM
• Cloud credits model (CCM)
• BD2K Centers, MODS, HMP & Interoperability Supplements
• NCI & NIAID Cloud Pilots
Platform stack: App store/User Interface; Software: Services & Tools (scientific analysis tools/workflows); Services: APIs, Containers, Indexing; Data: “Reference” Data Sets, User defined data; Digital Object Compliance; Compute Platform: Cloud or HPC
https://datascience.nih.gov/commons

Overarching Questions
• Is the Commons a step towards improved reproducibility?
• Is the Commons approach at odds with other approaches? If not, how best to coordinate?
• Do the pilots enable a full evaluation for a larger scale implementation?
• How best to evaluate the success of the pilots?

Other Questions
• Is a mix of cloud vendors appropriate?
• How to balance the overall metrics of success?
  – Reproducibility
  – Cost saving
  – Efficiency – centralized data vs distributed
  – New science
  – User satisfaction
  – Data integration and reuse – how to measure?
  – Data security
• What are the weaknesses?

Thank You

Acknowledgements
• Vivien Bonazzi, Jennie Larkin, Michelle Dunn, Mark Guyer, Allen Dearry, Sonynka Ngosso, Tonya Scott, Lisa Dunneback, Vivek Navale (CIT/ADDS)
• NLM/NCBI: Patricia Brennan, Mike Huerta, George Komatsoulis
• NHGRI: Eric Green, Valentina di Francesco
• NIGMS: Jon Lorsch, Susan Gregurick, Peter Lyster
• CIT: Andrea Norris
• NIH Common Fund: Jim Anderson, Betsy Wilder, Leslie Derr
• NCI Cloud Pilots/GDC: Warren Kibbe, Tony Kerlavage, Tanja Davidsen
• Commons Reference Data Set Working Group: Weiniu Gan (HL), Ajay Pillai (HG), Elaine Ayres (BITRIS), Sean Davis (NCI), Vinay Pai (NIBIB), Maria Giovanni (AI), Leslie Derr (CF), Claire Schulkey (AI)
• RIWG Core Team: Ron Margolis (DK), Ian Fore (NCI), Alison Yao (AI), Claire Schulkey (AI), Eric Choi (AI)
• OSP: Dina Paltoo
