Reminders
Starting today and every Tuesday before c
- Weekly updates on Slack
- Format:
  Team: Name 1, Name 2
  Last week: a brief summary of what you did last week, and some challenges you might be facing in the project.
  This week: a brief summary of what you are planning to do this week and how you are going to overcome the challenges from last week.
- Scribe for today

Serially Equivalent + Scalable Parallel Machine Learning
Dimitris Papailiopoulos

Today
- Serial Equivalence
- Beyond Hogwild!
- How much asynchrony is possible?
- Open Problems

Today: Single Machine, Multi-core

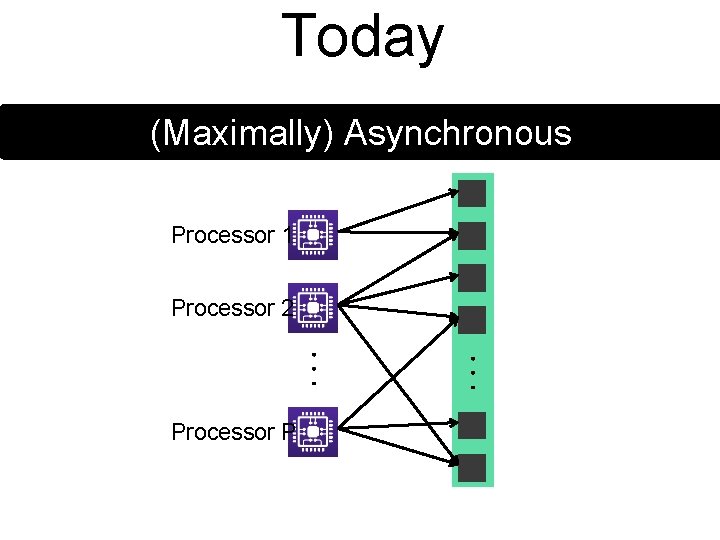

Today: (Maximally) Asynchronous
(Figure: Processor 1, Processor 2, …, Processor P)

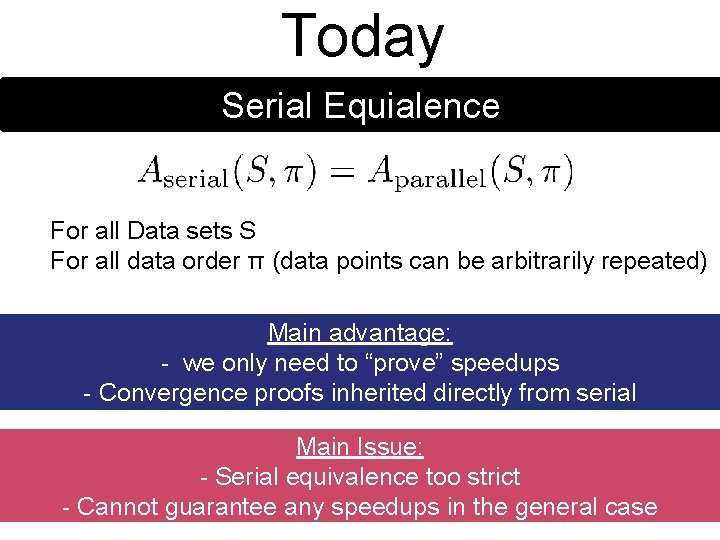

Today: Serial Equivalence
For all data sets S and for all data orders π (data points can be arbitrarily repeated), the parallel execution produces the same output as the serial one.
Main advantage:
- We only need to “prove” speedups.
- Convergence proofs are inherited directly from the serial algorithm.
Main issue:
- Serial equivalence is too strict.
- We cannot guarantee any speedups in the general case.

The Stochastic Updates Meta-algorithm

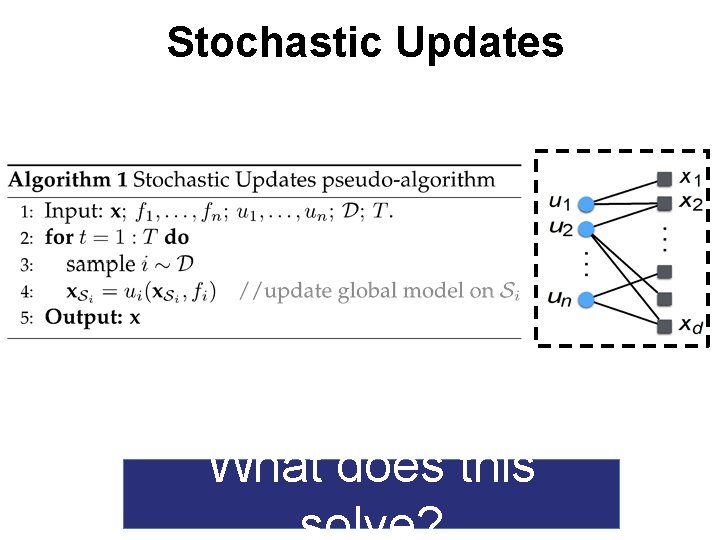

Stochastic Updates What does this

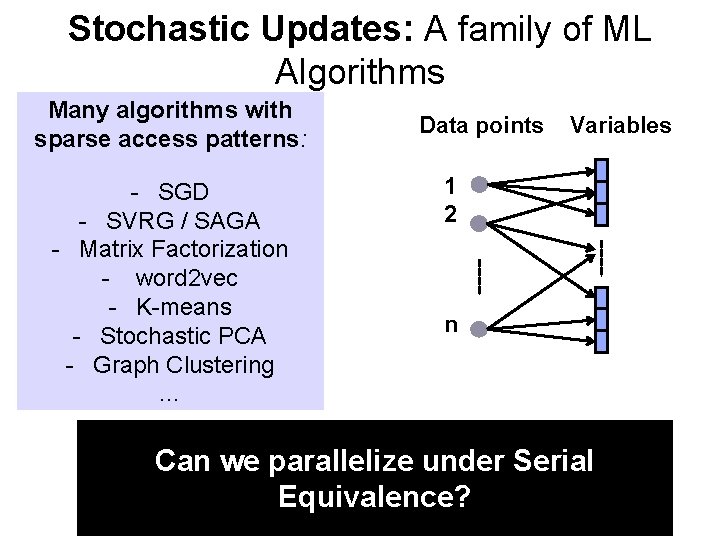

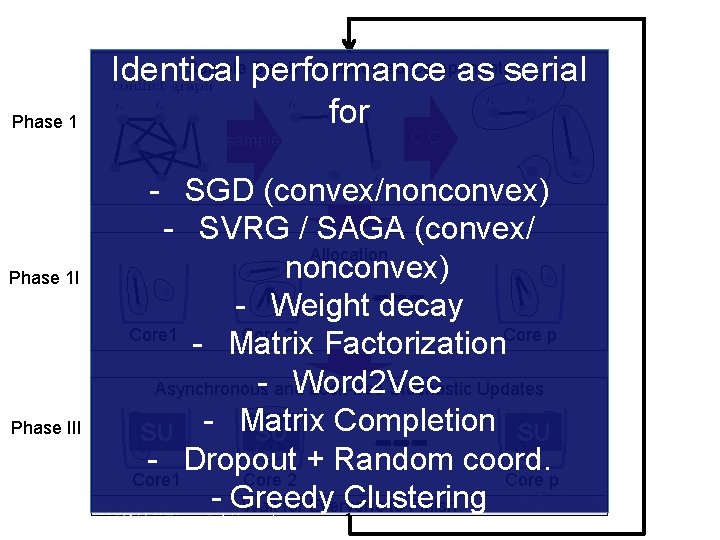

Stochastic Updates: A Family of ML Algorithms
Many algorithms with sparse access patterns:
- SGD
- SVRG / SAGA
- Matrix Factorization
- word2vec
- K-means
- Stochastic PCA
- Graph Clustering
- …
(Figure: bipartite graph between data points 1, 2, …, n and the variables they access)
Can we parallelize under Serial Equivalence?
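The meta-algorithm itself appears only as figures. A minimal serial sketch, assuming the common form "sample a data point, read the variables it touches, write back only those variables", instantiated with SGD on sparse least squares (our choice of example; all function and argument names are hypothetical):

```python
import random

def stochastic_updates(supports, values, targets, x, num_steps, step_size=0.1, seed=0):
    """Serial Stochastic Updates loop (hypothetical instance: SGD for sparse least squares).

    supports[i]: variable indices touched by data point i
    values[i]:   the matching nonzero feature values a_i
    targets[i]:  the label b_i
    """
    rng = random.Random(seed)
    n = len(supports)
    for _ in range(num_steps):
        i = rng.randrange(n)                                 # 1) sample a data point
        idx, a = supports[i], values[i]
        pred = sum(aj * x[j] for j, aj in zip(idx, a))       # 2) read only its variables
        err = pred - targets[i]
        for j, aj in zip(idx, a):                            # 3) write only its variables
            x[j] -= step_size * err * aj
    return x
```

Each step reads and writes only the coordinates in `supports[i]`; that sparsity is what lets updates with disjoint supports run in parallel without conflicts.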

A graph view of Conflicts in Parallel Updates

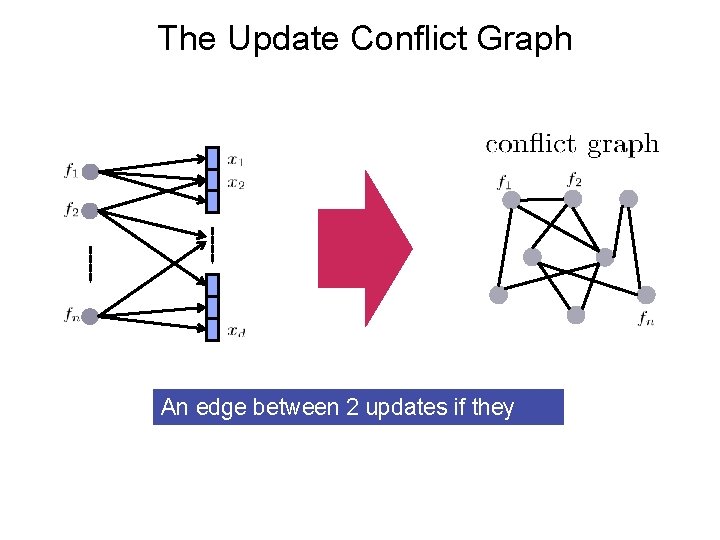

The Update Conflict Graph
An edge between two updates if they overlap, i.e., if they access at least one common variable.
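A sketch of building the update conflict graph from the supports (the variables each update touches), using a hypothetical list-of-lists representation. Grouping updates by variable avoids comparing every pair of updates, but a variable shared by many updates still produces quadratically many edges:

```python
from itertools import combinations

def conflict_graph(supports):
    """Adjacency sets: updates u and v conflict iff their supports share a variable."""
    users_of = {}                                   # variable -> updates that touch it
    for i, idx in enumerate(supports):
        for j in set(idx):                          # set() avoids self-loops on repeated indices
            users_of.setdefault(j, []).append(i)
    adj = {i: set() for i in range(len(supports))}
    for users in users_of.values():
        for u, v in combinations(users, 2):         # every pair sharing this variable conflicts
            adj[u].add(v)
            adj[v].add(u)
    return adj
```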

![The Theorem [Krivelevich ’14]](http://slidetodoc.com/presentation_image/63e26c77d63dcbb44761fe9e741c63b3/image-12.jpg)

![The Theorem [Krivelevich ’14]: the induced subgraph shatters](http://slidetodoc.com/presentation_image/63e26c77d63dcbb44761fe9e741c63b3/image-13.jpg)

![The Theorem [Krivelevich ’14]: connected components of the sample](http://slidetodoc.com/presentation_image/63e26c77d63dcbb44761fe9e741c63b3/image-14.jpg)

The Theorem [Krivelevich ’14]
Lemma: Let the graph have n vertices and max degree Δ. Sample fewer than B = (1 − ε)n/Δ vertices (with or without replacement). Then the induced subgraph shatters: the largest connected component has size O(log n / ε²), with high probability.
Even if the graph was a single huge conflict component!
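A quick empirical illustration of the lemma, under assumptions we chose: a Δ-regular ring lattice (which is one single connected component), Δ = 10, ε = 0.5, and B = (1 − ε)n/Δ vertices sampled without replacement. The helper names are hypothetical:

```python
import random
from collections import deque

def ring_lattice(n, delta):
    """Delta-regular ring (delta even): v is adjacent to delta/2 nearest vertices on each side."""
    half = delta // 2
    return [[(v + s) % n for s in range(-half, half + 1) if s != 0] for v in range(n)]

def largest_component(adj, sampled):
    """Size of the largest connected component of the subgraph induced by `sampled` (BFS)."""
    sampled = set(sampled)
    seen, best = set(), 0
    for s in sampled:
        if s in seen:
            continue
        seen.add(s)
        queue, size = deque([s]), 0
        while queue:
            u = queue.popleft()
            size += 1
            for w in adj[u]:
                if w in sampled and w not in seen:
                    seen.add(w)
                    queue.append(w)
        best = max(best, size)
    return best

n, delta, eps = 100_000, 10, 0.5
adj = ring_lattice(n, delta)
batch = int((1 - eps) * n / delta)                  # B = (1 - eps) n / Delta
sampled = random.Random(1).sample(range(n), batch)  # without replacement
print(batch, largest_component(adj, sampled))
```

The full lattice is connected, yet the largest component of the sample should stay far below the batch size of 5000.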

Building a Parallelization Framework out of a Single Theorem

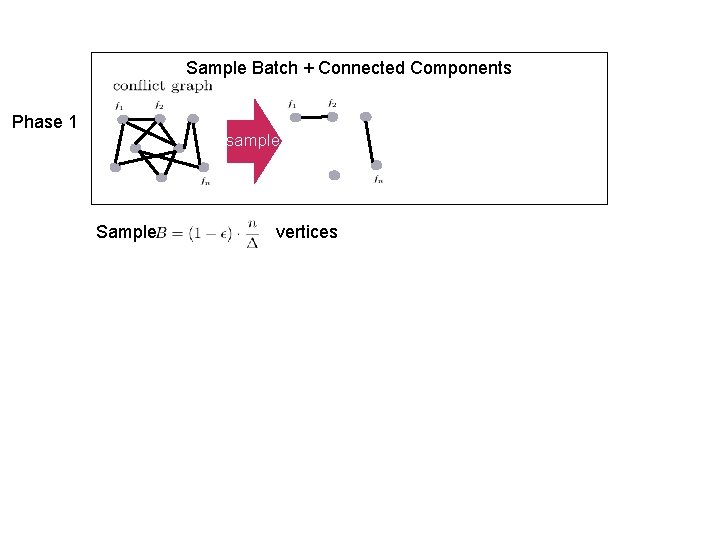

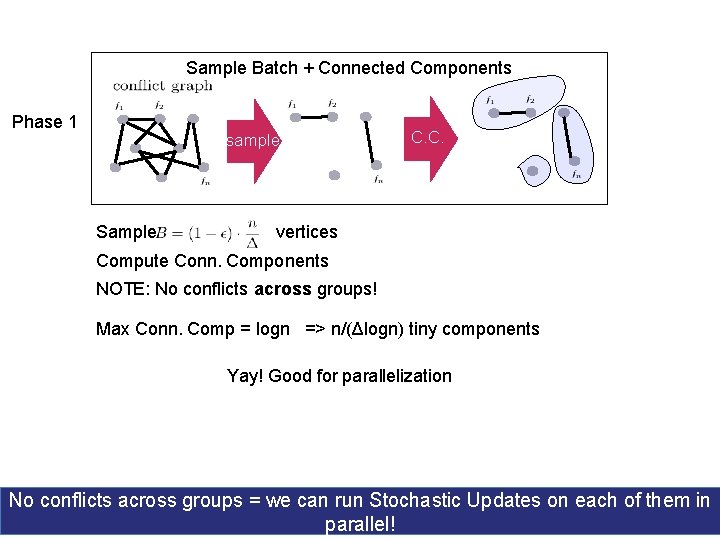

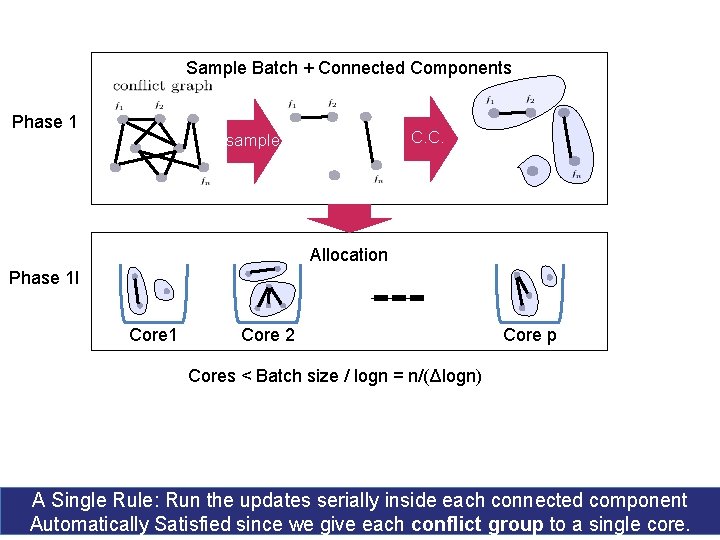

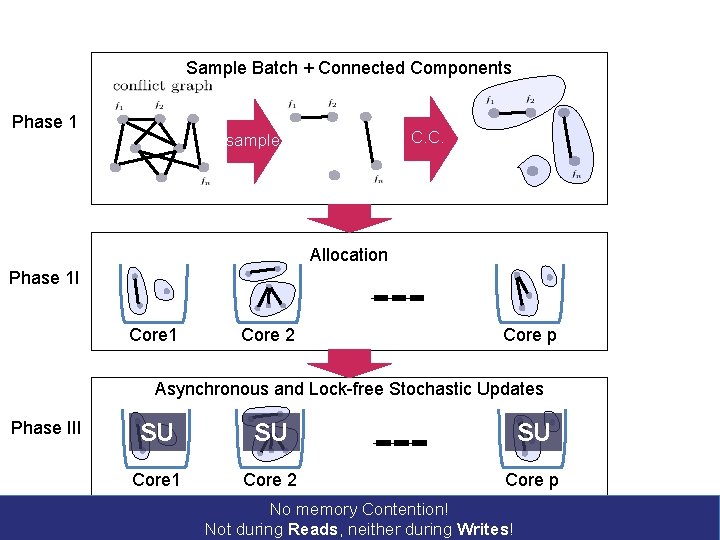

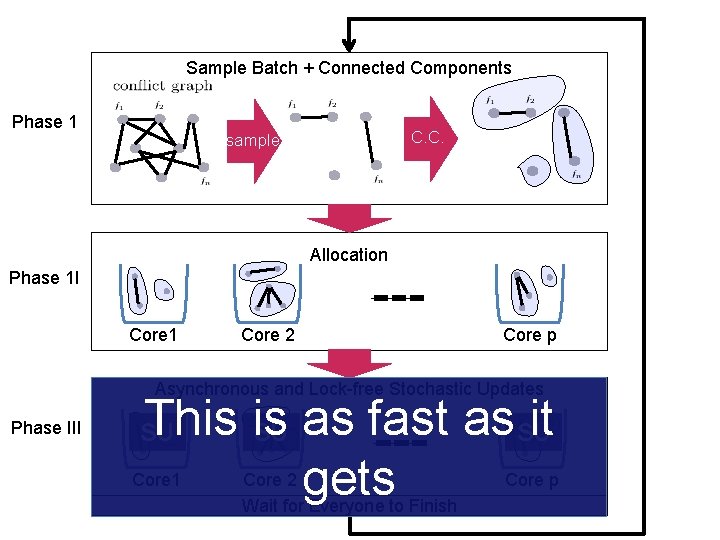

Sample Batch + Connected Components Phase 1 sample Sample vertices

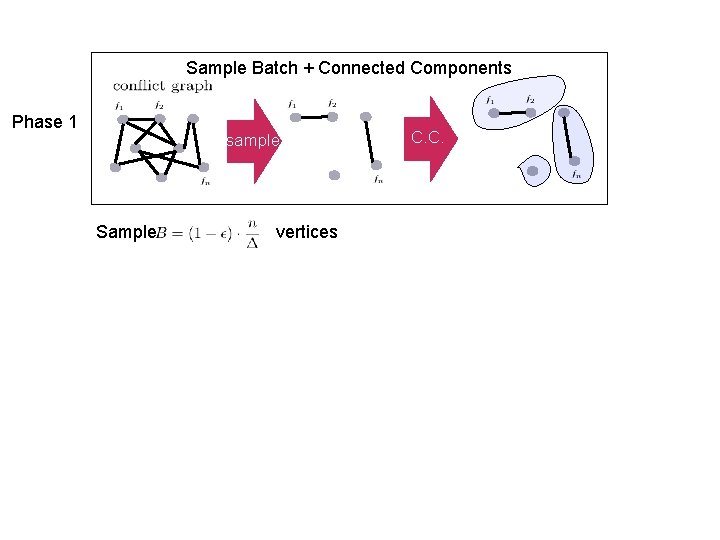

Sample Batch + Connected Components Phase 1 sample Sample vertices C. C.

Sample Batch + Connected Components Phase 1 sample Sample C. C. vertices Compute Conn. Components NOTE: No conflicts across groups! Max Conn. Comp = logn => n/(Δlogn) tiny components Yay! Good for parallelization No conflicts across groups = we can run Stochastic Updates on each of them in parallel!
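A sketch of Phase I, assuming each update is given by its support (a list of variable indices). Instead of materializing the conflict graph, it merges updates that share a variable with a union-find structure; union-find is a standard technique we substitute here, not necessarily what CYCLADES uses. Names are hypothetical:

```python
import random

def sample_batch_components(supports, batch_size, seed=0):
    """Sample a batch of updates and group them into conflict components via union-find."""
    rng = random.Random(seed)
    batch = rng.sample(range(len(supports)), batch_size)   # sampled without replacement
    parent = {i: i for i in batch}

    def find(i):
        while parent[i] != i:
            parent[i] = parent[parent[i]]                   # path halving
            i = parent[i]
        return i

    owner = {}                                              # variable -> first sampled update touching it
    for i in batch:
        for j in supports[i]:
            if j in owner:
                ri, rj = find(i), find(owner[j])
                if ri != rj:
                    parent[ri] = rj                         # shared variable: merge the two groups
            else:
                owner[j] = i
    groups = {}
    for i in batch:                                         # keeps each group in sampled order
        groups.setdefault(find(i), []).append(i)
    return list(groups.values())
```

Each returned group lists its updates in sampled order, which is the order the "run serially inside each component" rule needs.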

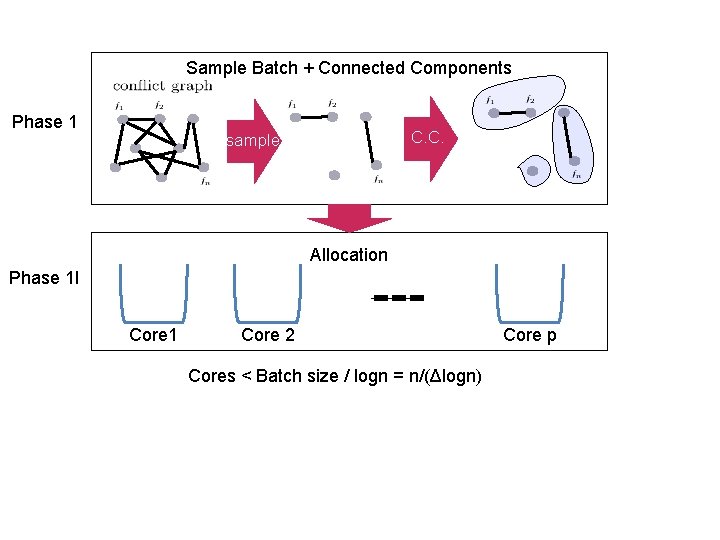

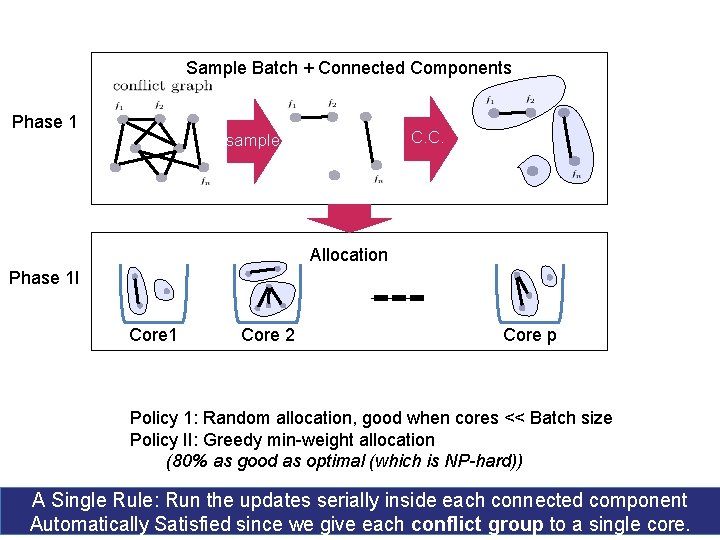

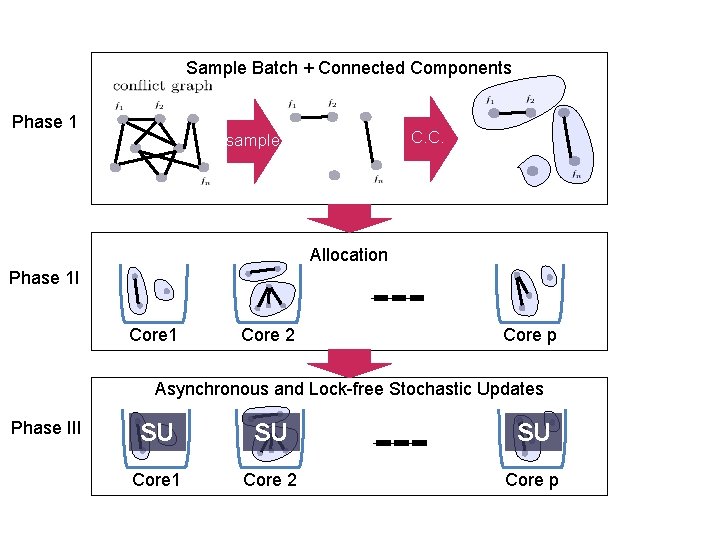

Phase II: Allocation
Assign the connected components to cores 1, 2, …, p, with #cores < batch size / log n = n/(Δ log n).
A single rule: run the updates serially inside each connected component.
This is automatically satisfied, since we give each conflict group to a single core.
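Plugging numbers into these sizes, a back-of-the-envelope helper (constants inside the O(·) dropped, natural log assumed, function name hypothetical):

```python
import math

def cyclades_budget(n, delta, eps):
    """Batch size B = (1 - eps) n / Delta and the core budget B / log n."""
    batch = (1 - eps) * n / delta
    max_cores = batch / math.log(n)
    return batch, max_cores
```

For n = 10⁶ updates, Δ = 10 and ε = 0.5 this gives a batch of 50,000 and a budget of roughly 3,600 cores.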

Policy I: Random allocation, good when #cores ≪ batch size.
Policy II: Greedy min-weight allocation (80% as good as the optimal allocation, which is NP-hard to compute).
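One possible reading of Policy II, as a hedged sketch: process components from largest to smallest and give each to the currently least-loaded core, taking a component's weight to be its number of updates. Both the largest-first order and the weight are our assumptions, and the sketch makes no claim about the 80% figure:

```python
import heapq

def greedy_allocate(components, num_cores):
    """Assign each component (a list of update ids) to the currently lightest core."""
    heap = [(0, core) for core in range(num_cores)]        # (current load, core id)
    heapq.heapify(heap)
    assignment = [[] for _ in range(num_cores)]
    for comp in sorted(components, key=len, reverse=True):  # largest components first
        load, core = heapq.heappop(heap)                    # lightest core so far
        assignment[core].append(comp)                       # whole component to one core
        heapq.heappush(heap, (load + len(comp), core))
    return assignment
```

Because every component goes to exactly one core, the "run serially inside each component" rule holds by construction.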

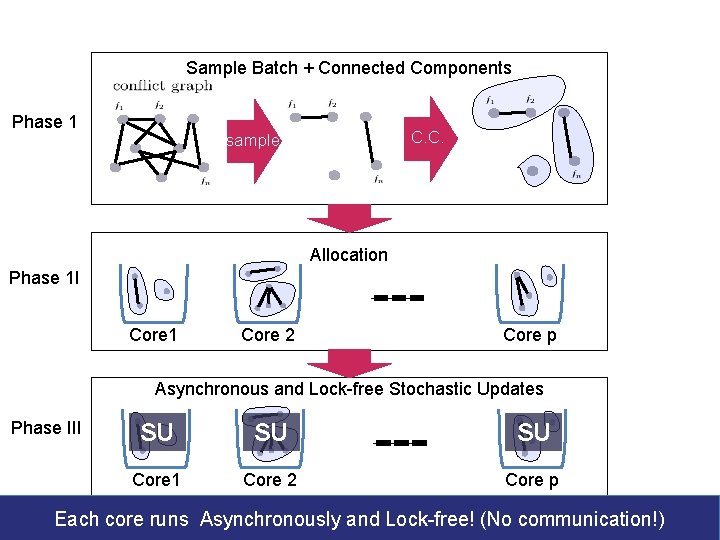

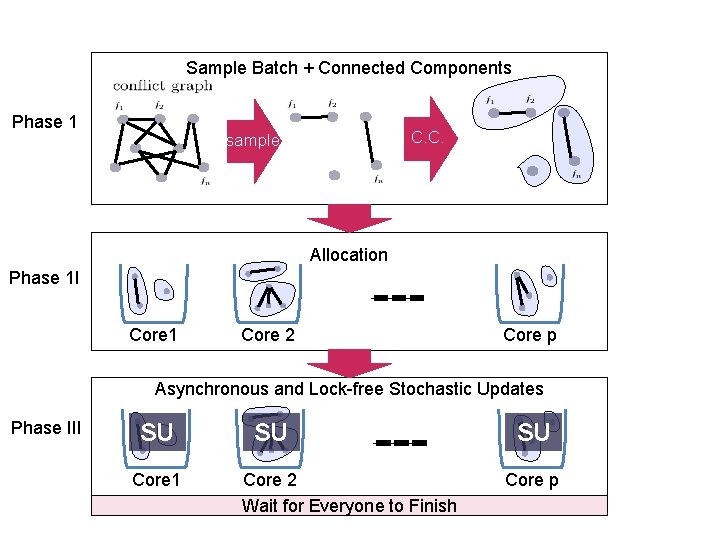

Phase III: Asynchronous and Lock-free Stochastic Updates
Each core (1, 2, …, p) runs Stochastic Updates (SU) on its components asynchronously and lock-free! (No communication!)
No memory contention! Neither during reads nor during writes!

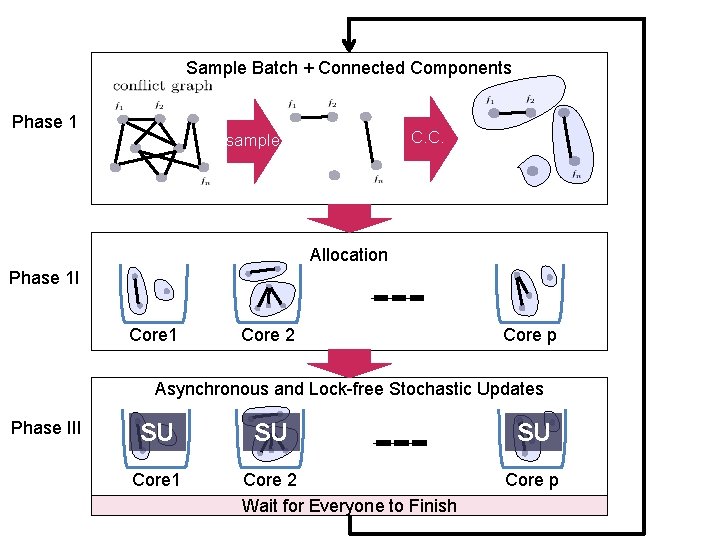

Phase III ends with a barrier: wait for everyone to finish.
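A sketch of Phase III with Python threads, reusing a least-squares update as the stochastic update (an assumption). No locks are taken: components share no variables, so no two threads touch the same entry of `x`. CPython's GIL means this shows the structure, not the speedup. Function names are hypothetical:

```python
from concurrent.futures import ThreadPoolExecutor

def sgd_update(x, idx, a, b, step):
    """One least-squares stochastic update; touches only x[j] for j in idx."""
    err = sum(aj * x[j] for j, aj in zip(idx, a)) - b
    for j, aj in zip(idx, a):
        x[j] -= step * err * aj

def run_core(x, comps, data, step):
    for comp in comps:                  # updates inside a component run serially, in order
        for i in comp:
            idx, a, b = data[i]
            sgd_update(x, idx, a, b, step)

def phase_three(x, assignment, data, step=0.1):
    """assignment[c] is the list of components given to core c; no locks are used."""
    with ThreadPoolExecutor(max_workers=len(assignment)) as pool:
        futures = [pool.submit(run_core, x, comps, data, step) for comps in assignment]
        for f in futures:
            f.result()                  # wait for everyone to finish (and surface errors)
    return x
```

Since cross-component updates touch disjoint variables, the result is bit-for-bit the same as a serial run that keeps each component's internal order.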

CYCLADES guarantees Serial Equivalence. But does it guarantee speedups?

Sample Batch + Connected Components Phase 1 C. C. sample Allocation Phase 1 I Core 1 Core 2 Core p Asynchronous and Lock-free Stochastic Updates Phase III This is it SU as fast as SU gets SU Core 1 Core 2 Wait for Everyone to Finish Core p

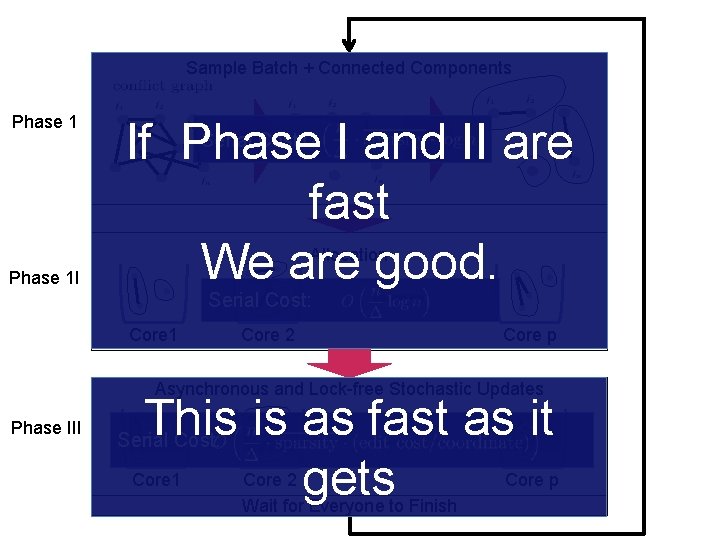

Sample Batch + Connected Components Phase 1 If Phase I and II are fast We are good. C. C. sample Serial Cost: Allocation Phase 1 I Serial Cost: Core 1 Core 2 Core p Asynchronous and Lock-free Stochastic Updates Phase III This is it SU as fast as SU gets SUCost: Serial Core 1 Core 2 Wait for Everyone to Finish Core p

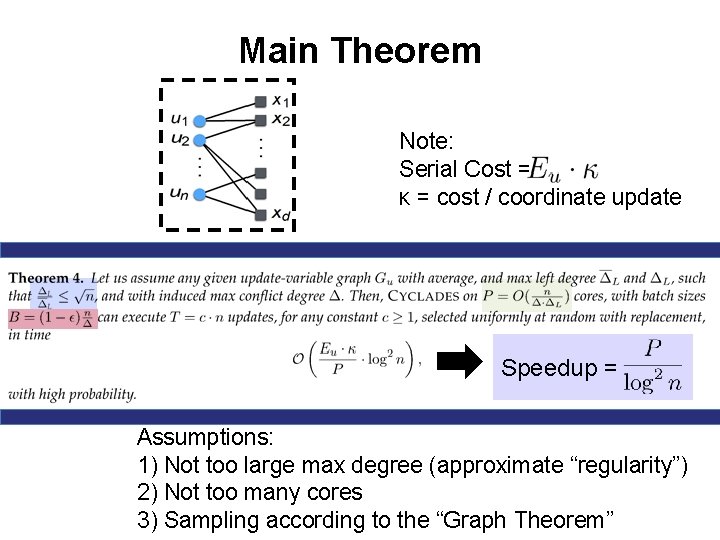

Main Theorem
Speedup = …
Note: in the serial cost, κ = cost per coordinate update.
Assumptions:
1) Not too large max degree (approximate “regularity”)
2) Not too many cores
3) Sampling according to the “Graph Theorem”

Performance identical to serial for:
- SGD (convex/nonconvex)
- SVRG / SAGA (convex/nonconvex)
- Weight decay
- Matrix Factorization
- Word2Vec
- Matrix Completion
- Dropout + Random coord.
- Greedy Clustering

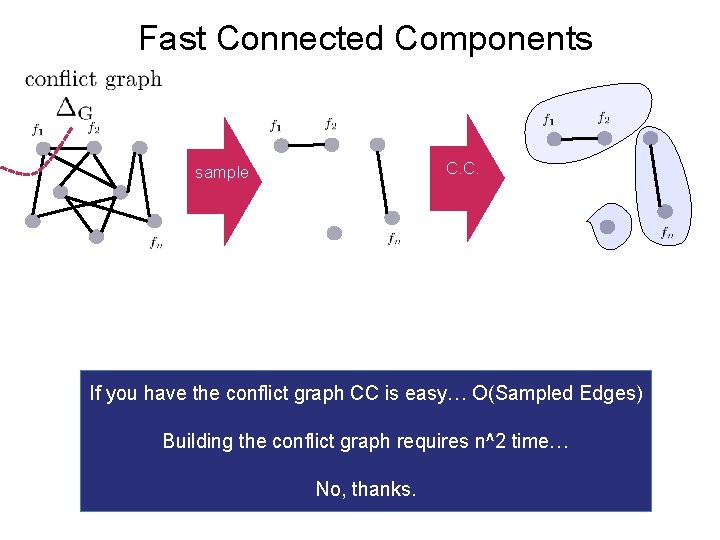

Fast Connected Components

If you have the conflict graph, computing connected components is easy: O(#sampled edges).
But building the conflict graph requires n² time… No, thanks.

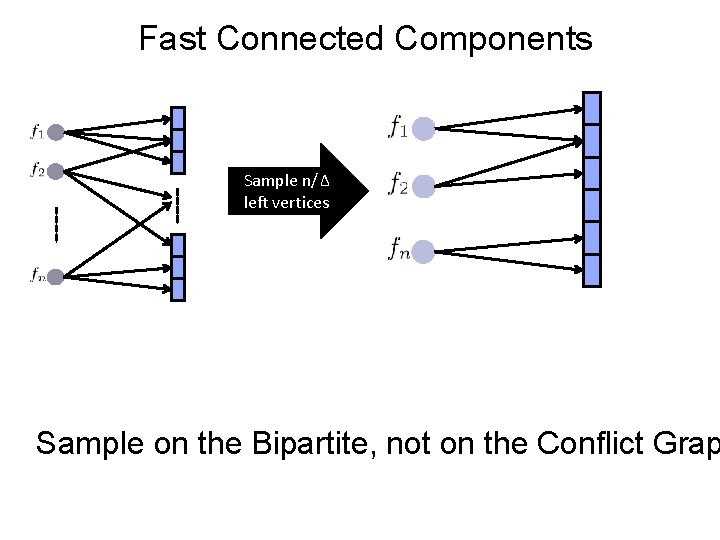

Sample n/Δ left vertices (the updates).
Sample on the bipartite graph, not on the conflict graph.

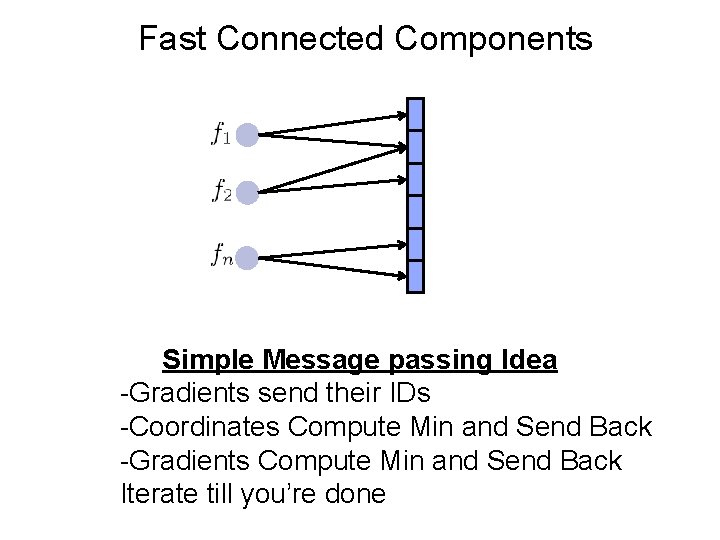

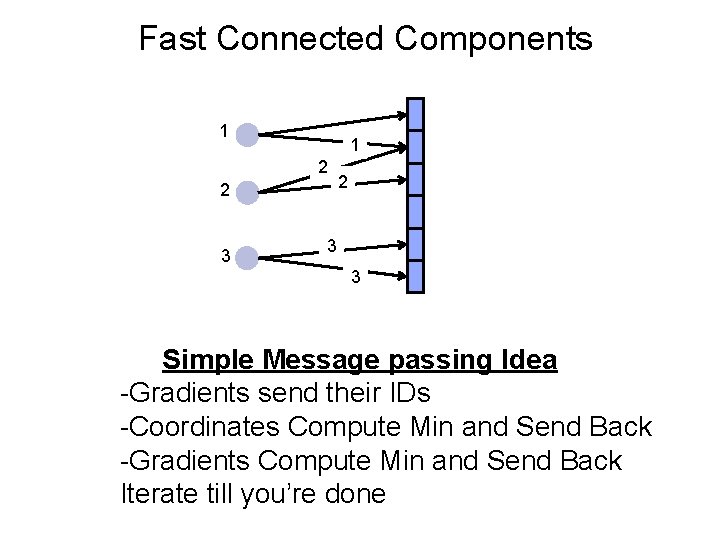

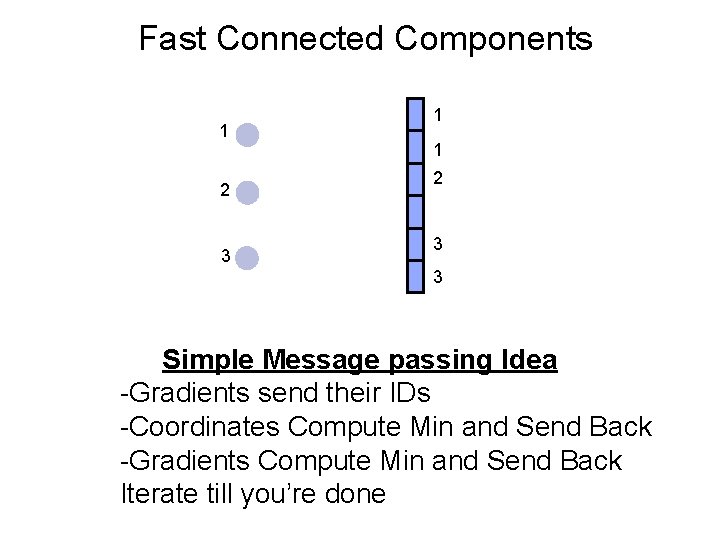

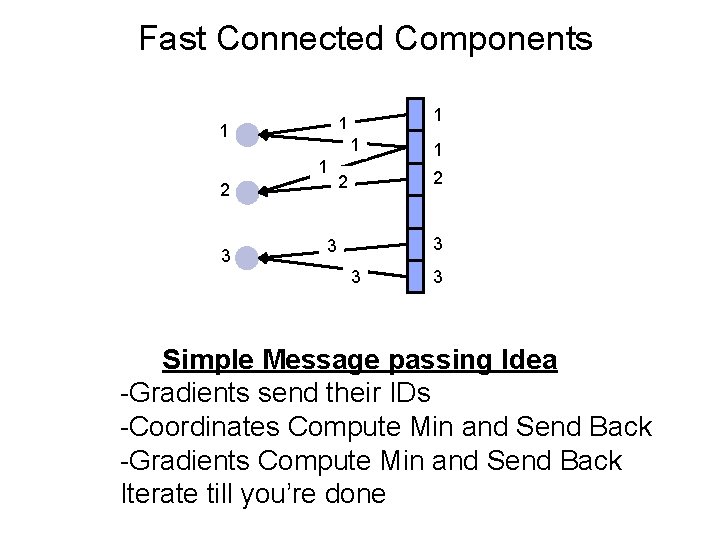

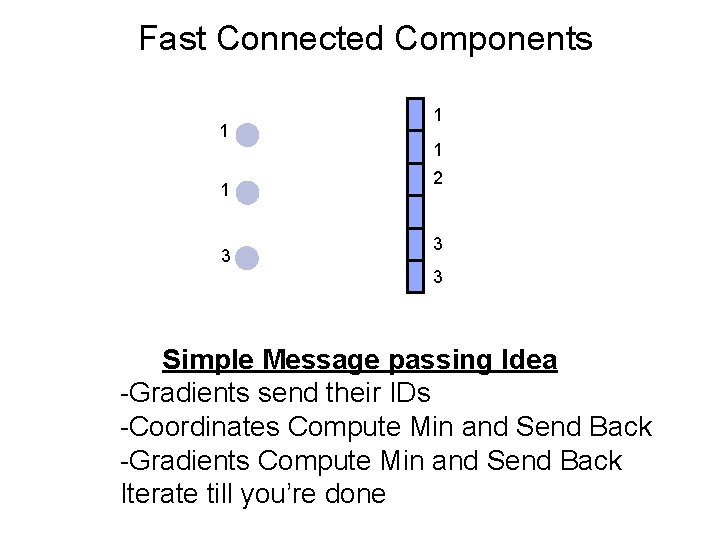

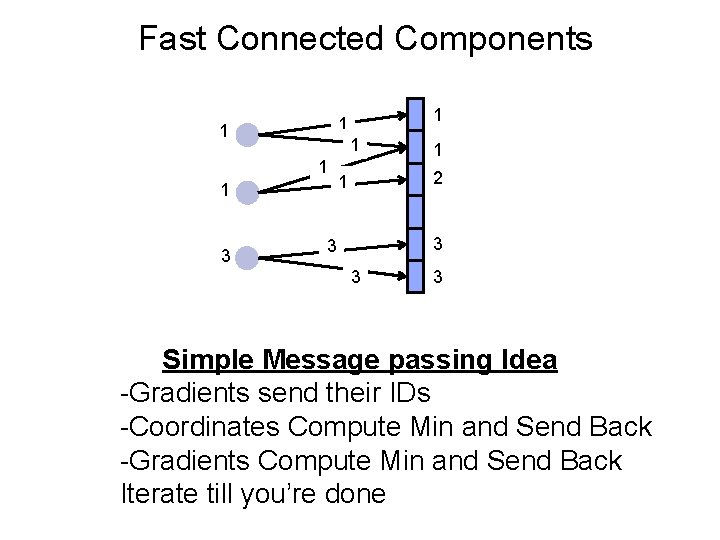

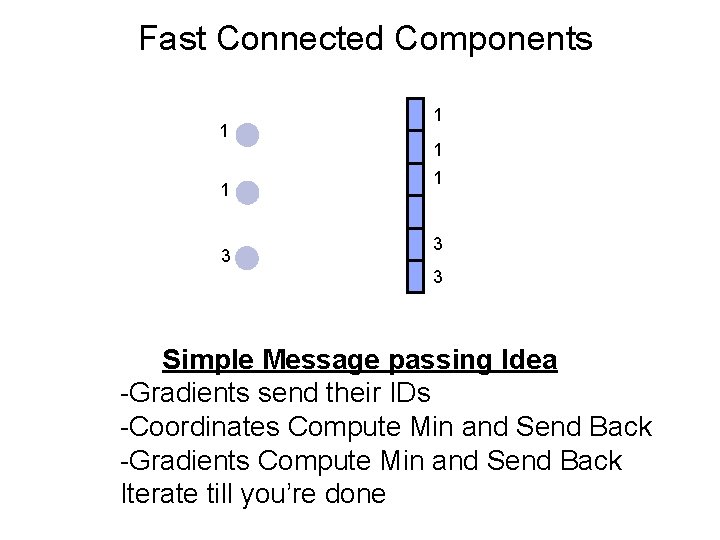

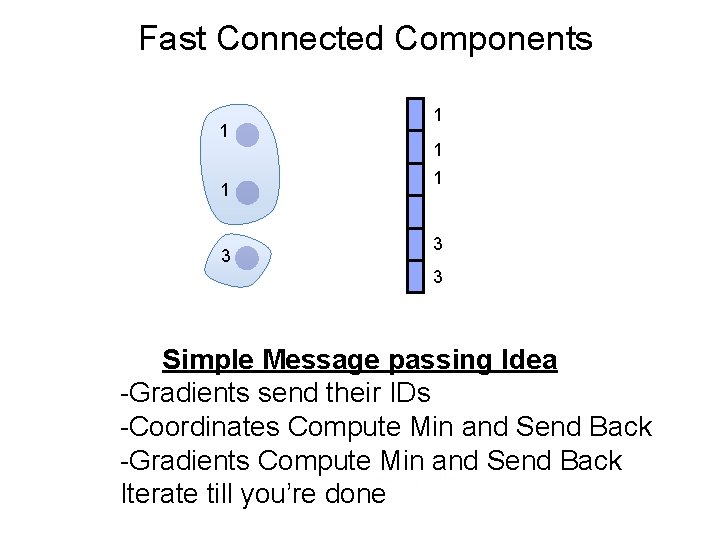

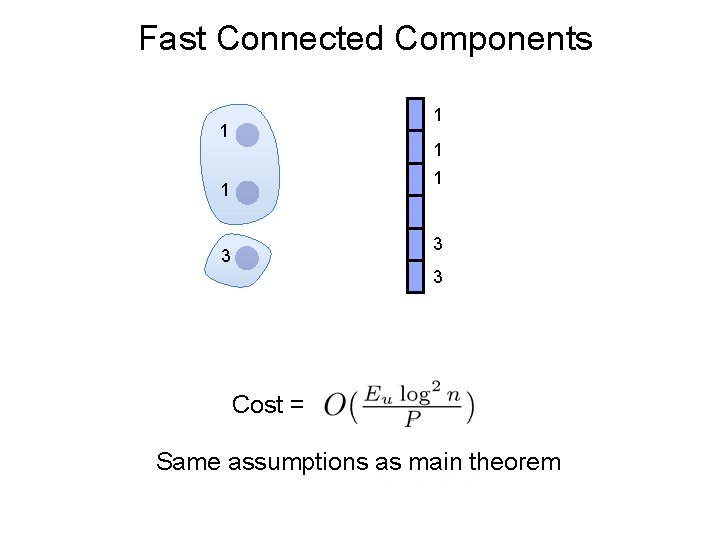

Simple message-passing idea:
- Gradients send their IDs.
- Coordinates compute the min and send it back.
- Gradients compute the min and send it back.
- Iterate till you’re done.
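The message-passing idea as a sequential sketch over the bipartite graph of sampled updates (gradients) and the coordinates they touch. Each round is one gradient-to-coordinate-to-gradient exchange of minimum IDs; the representation and names are hypothetical:

```python
def bipartite_components(supports):
    """Min-label propagation; supports[i] lists the coordinates touched by sampled update i.

    Returns one label per update; equal labels mean the same connected component.
    """
    label = list(range(len(supports)))       # gradients start with their own IDs
    changed = True
    while changed:                           # iterate till you're done
        changed = False
        var_min = {}
        for i, idx in enumerate(supports):   # gradients send IDs; coordinates keep the min
            for j in idx:
                if j not in var_min or label[i] < var_min[j]:
                    var_min[j] = label[i]
        for i, idx in enumerate(supports):   # coordinates send back; gradients keep the min
            new = min([label[i]] + [var_min[j] for j in idx])
            if new < label[i]:
                label[i] = new
                changed = True
    return label
```

Labels only decrease, and at the fixed point every coordinate's updates share one label, so each component ends up labeled by its smallest update ID.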

Cost = … under the same assumptions as the main theorem.

Proof of “The Theorem”

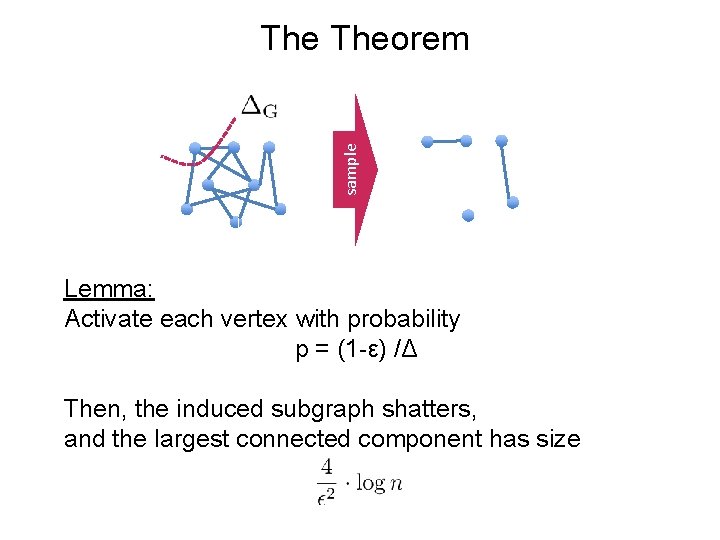

Theorem
Lemma: Activate each vertex with probability p = (1 − ε)/Δ. Then the induced subgraph shatters, and the largest connected component has size O(log n / ε²), with high probability.

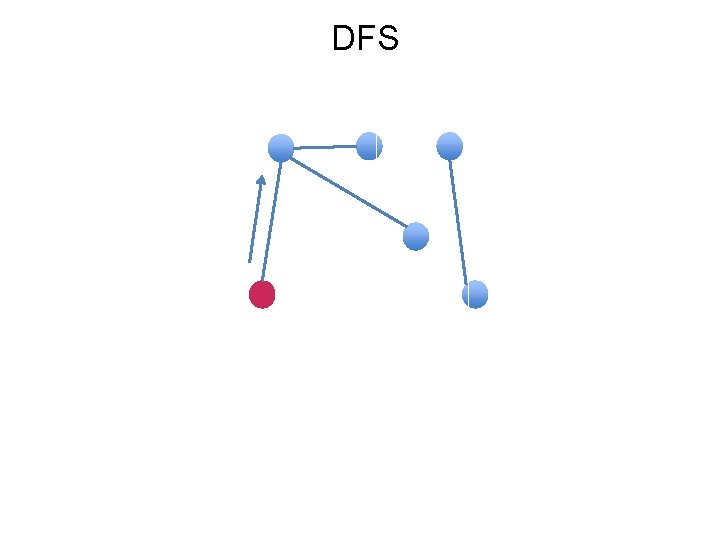

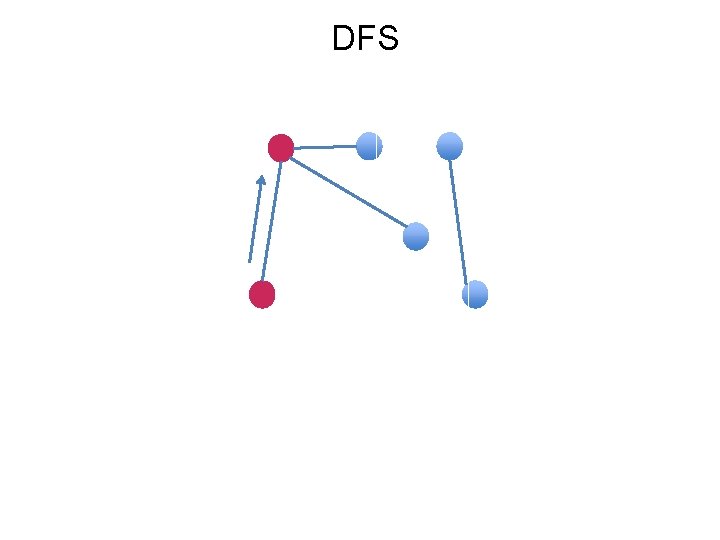

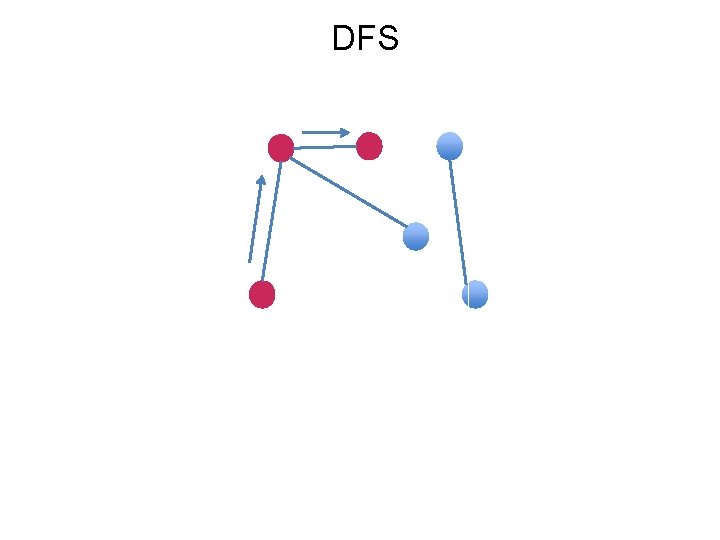

DFS 101

Probabilistic DFS

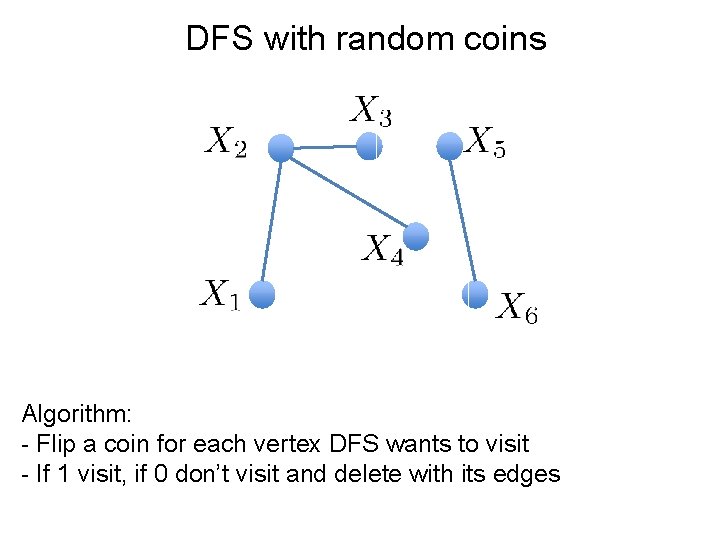

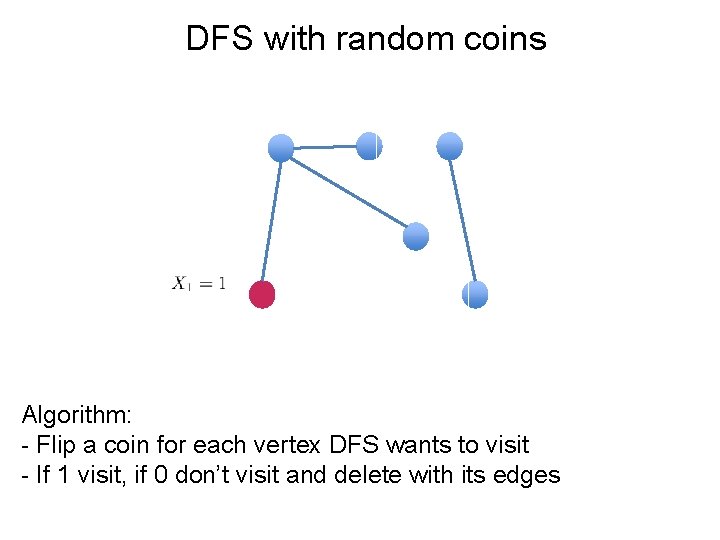

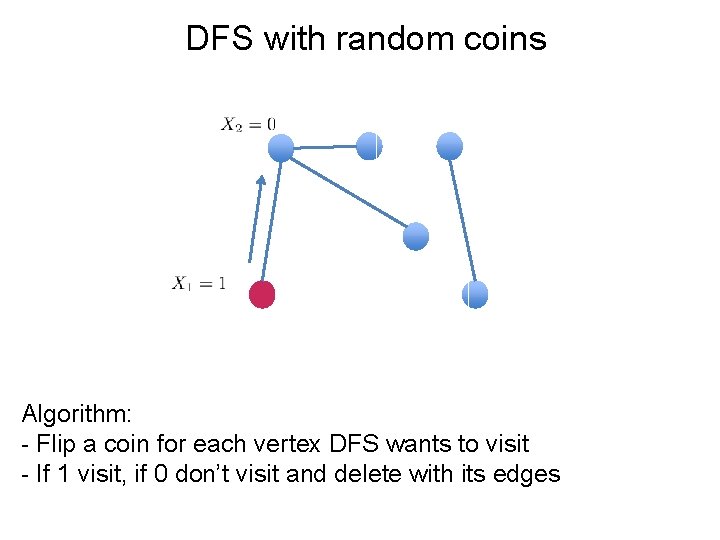

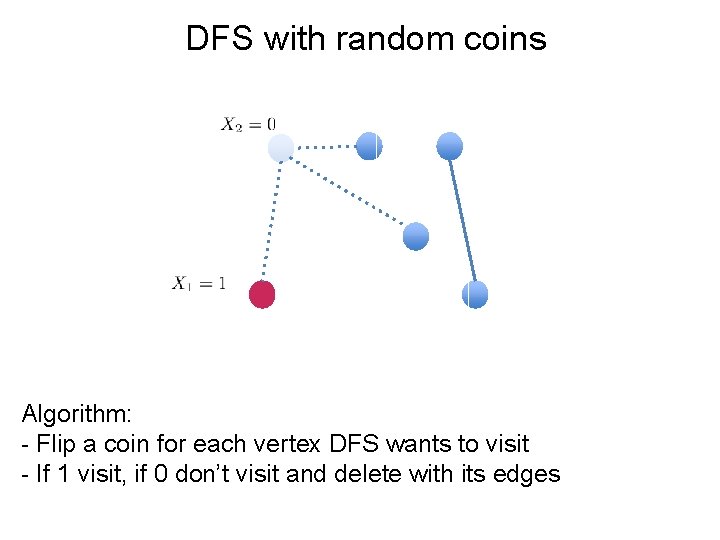

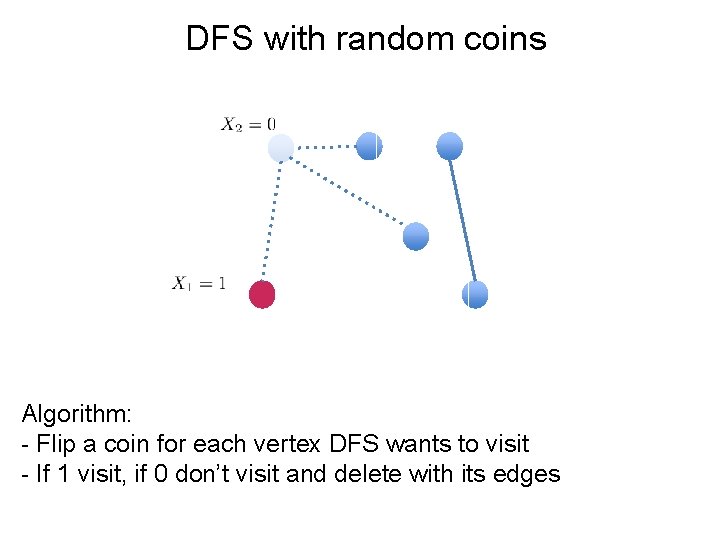

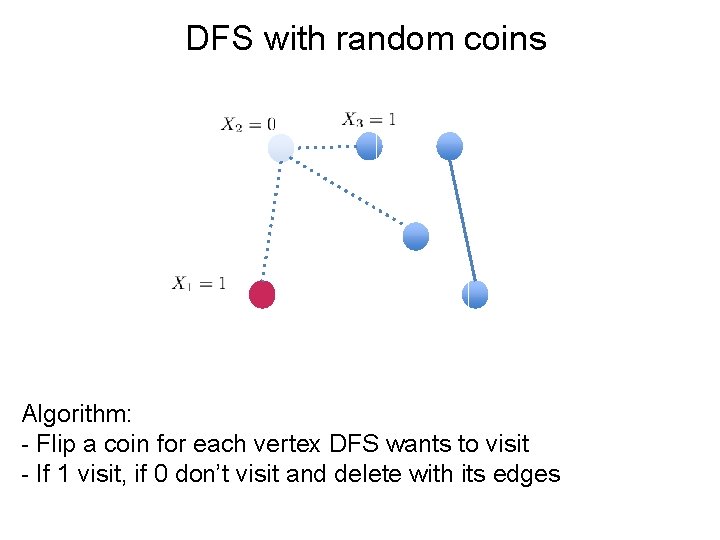

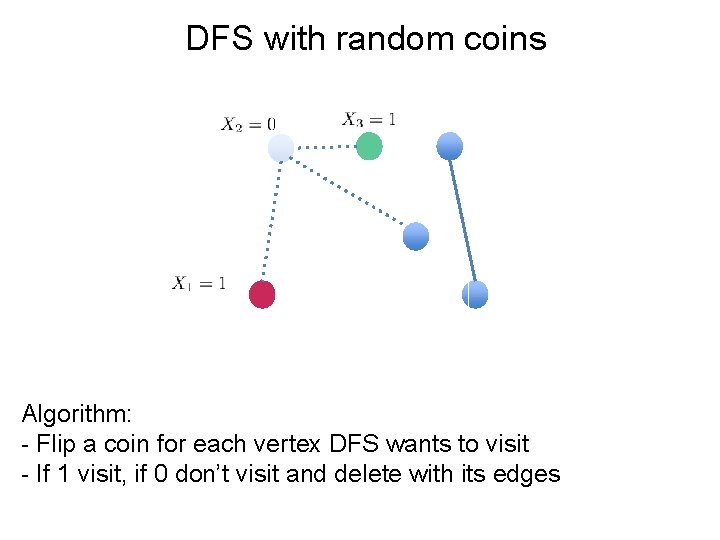

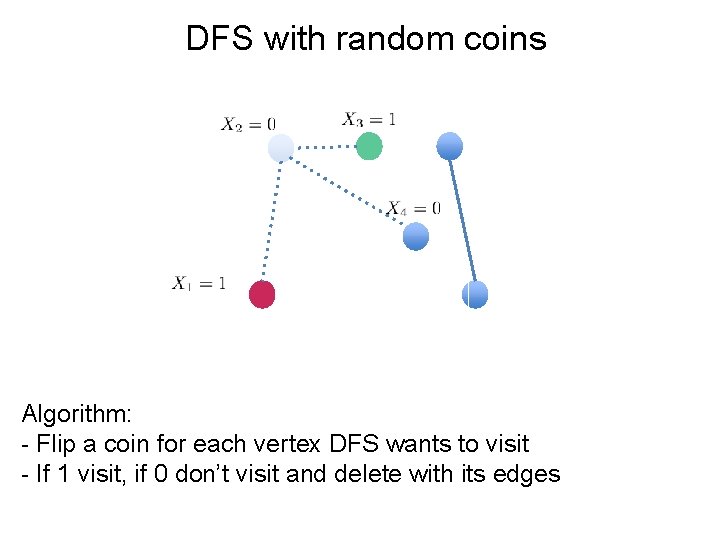

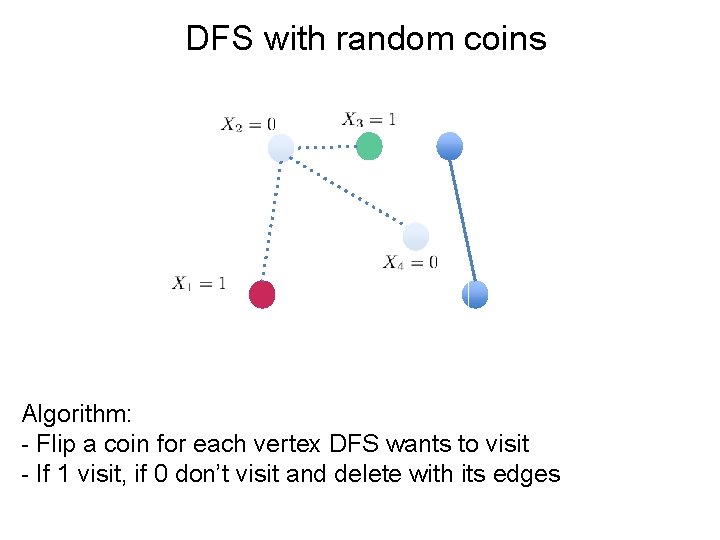

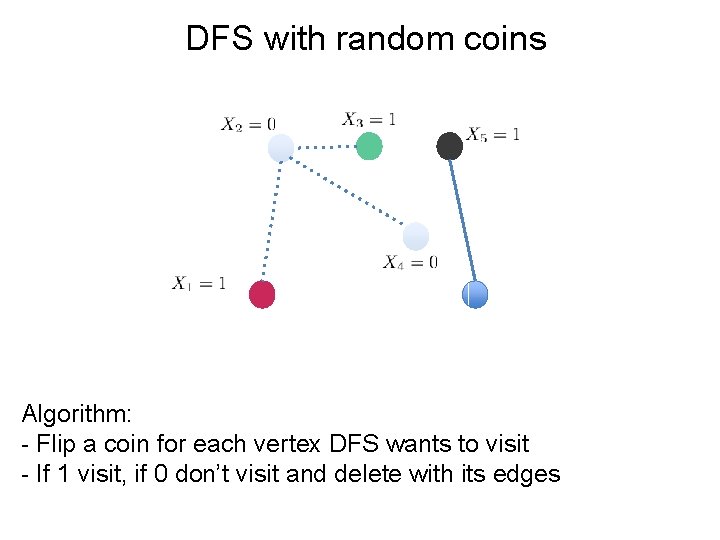

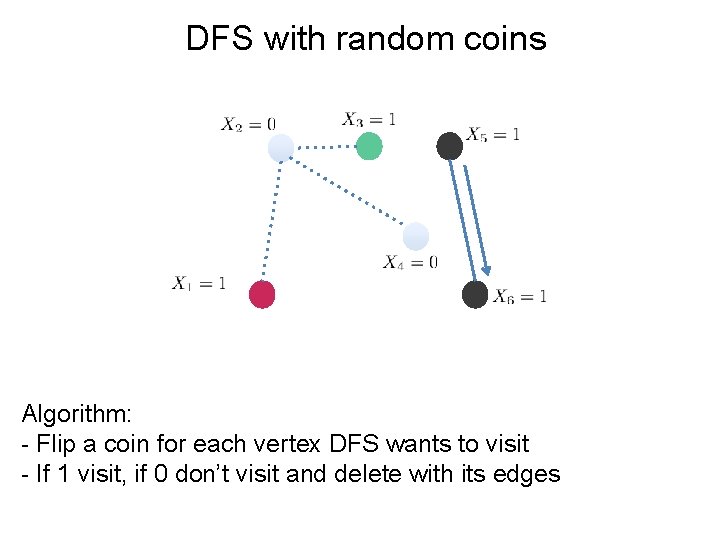

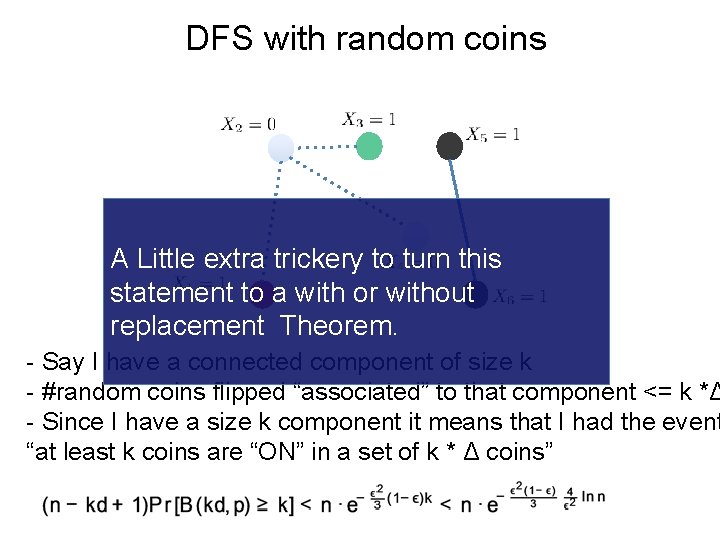

DFS with random coins
Algorithm:
- Flip a coin for each vertex DFS wants to visit.
- If 1, visit it; if 0, don’t visit it, and delete it with its edges.
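A sketch of DFS with random coins, assuming an adjacency-list graph and an iterative (stack-based) DFS. Each vertex's coin is flipped once, the first time it is reached, so the kept vertices form an independent p-sample and the returned components are exactly the connected components of the subgraph they induce. Names are hypothetical:

```python
import random

def dfs_with_coins(adj, p, seed=0):
    """Explore adj, flipping a coin the first time DFS wants to visit a vertex.

    Heads (prob p): keep and visit it. Tails: delete it for good.
    Returns (components of the kept vertices, set of kept vertices).
    """
    rng = random.Random(seed)
    state = {}                                  # vertex -> True (kept) / False (deleted)
    components = []
    for root in range(len(adj)):
        if root in state:
            continue
        state[root] = rng.random() < p
        if not state[root]:
            continue
        comp, stack = [root], [root]
        while stack:
            u = stack.pop()
            for w in adj[u]:
                if w not in state:              # each coin is flipped lazily, exactly once
                    state[w] = rng.random() < p
                    if state[w]:
                        comp.append(w)
                        stack.append(w)
        components.append(comp)
    return components, {v for v, kept in state.items() if kept}
```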

DFS with random coins
A little extra trickery turns this statement into a with- or without-replacement theorem:
- Say I have a connected component of size k.
- The number of random coins flipped “associated” with that component is ≤ kΔ.
- Since I have a size-k component, the event “at least k coins are ON in a set of kΔ coins” occurred.
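The last bullet reduces the theorem to a binomial tail. Assuming the standard multiplicative Chernoff bound Pr[X ≥ (1+δ)μ] ≤ exp(−δ²μ/(2+δ)) (our choice of tool; the slides do not name one) and a union bound over the at most n starting positions of a window of kΔ coins in the coin sequence (one coin per vertex), a sketch of the calculation:

```latex
X \sim \mathrm{Bin}\!\left(k\Delta,\ \tfrac{1-\varepsilon}{\Delta}\right), \qquad
\mu = \mathbb{E}[X] = k(1-\varepsilon), \qquad
k = (1+\delta)\mu \ \text{ with } \ \delta = \tfrac{\varepsilon}{1-\varepsilon}

\Pr[X \ge k] = \Pr[X \ge (1+\delta)\mu]
  \le \exp\!\left(-\frac{\delta^{2}\mu}{2+\delta}\right)
  = \exp\!\left(-\frac{\varepsilon^{2} k}{2-\varepsilon}\right)
  \le \exp\!\left(-\frac{\varepsilon^{2} k}{2}\right)

\Pr[\exists\ \text{component of size} \ge k]
  \le n \exp\!\left(-\frac{\varepsilon^{2} k}{2}\right) = n^{-c}
  \quad \text{for } k = \frac{2(c+1)\ln n}{\varepsilon^{2}}
```

So, with high probability, every component has size O(log n / ε²).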

Is Max. Degree really an issue?

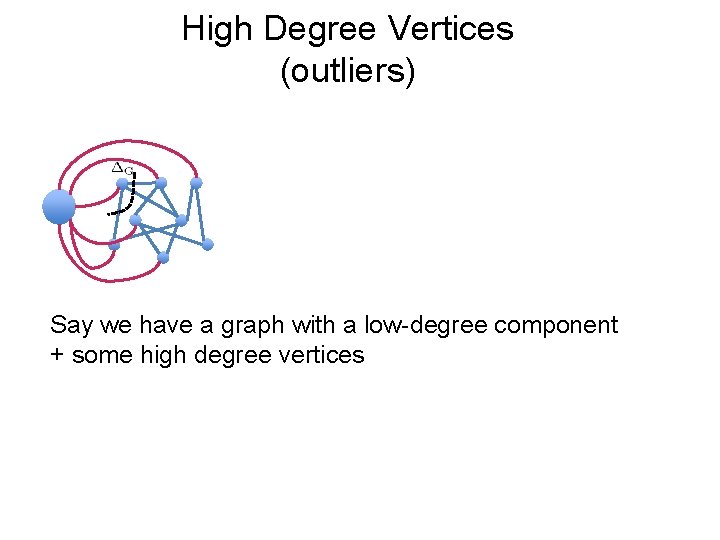

High-Degree Vertices (Outliers)
Say we have a graph with a low-degree component plus some high-degree vertices.

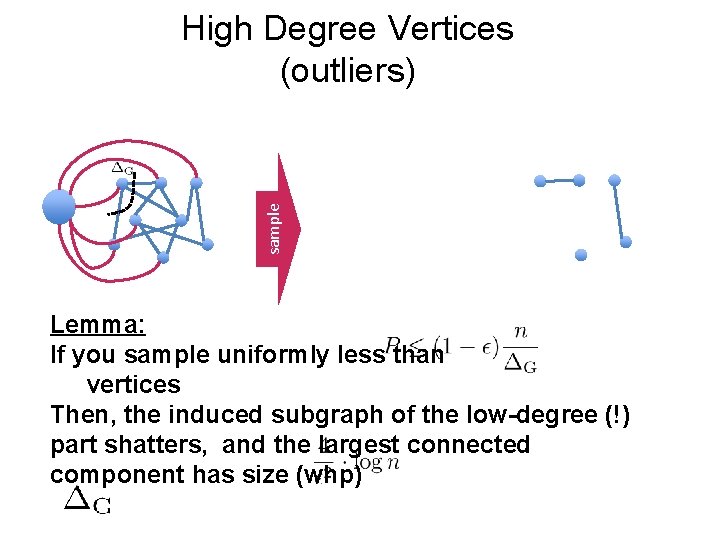

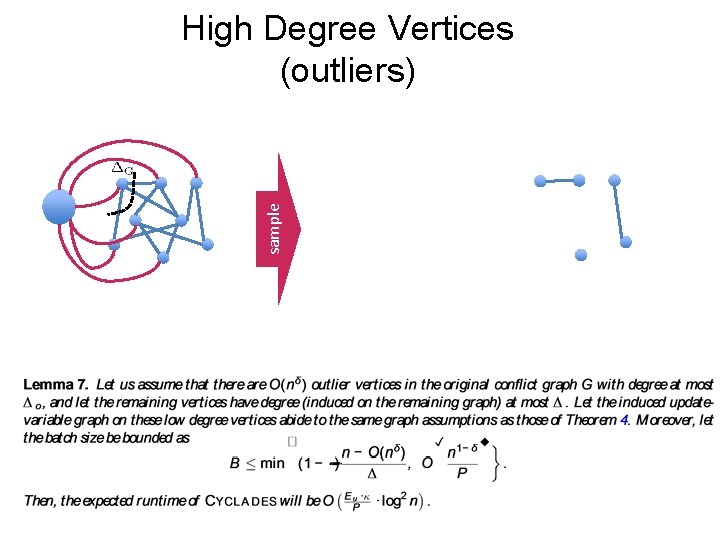

High-Degree Vertices (Outliers)
Lemma: If you sample uniformly fewer than … vertices, then the induced subgraph of the low-degree (!) part shatters, and the largest connected component has size … (whp).

Experiments

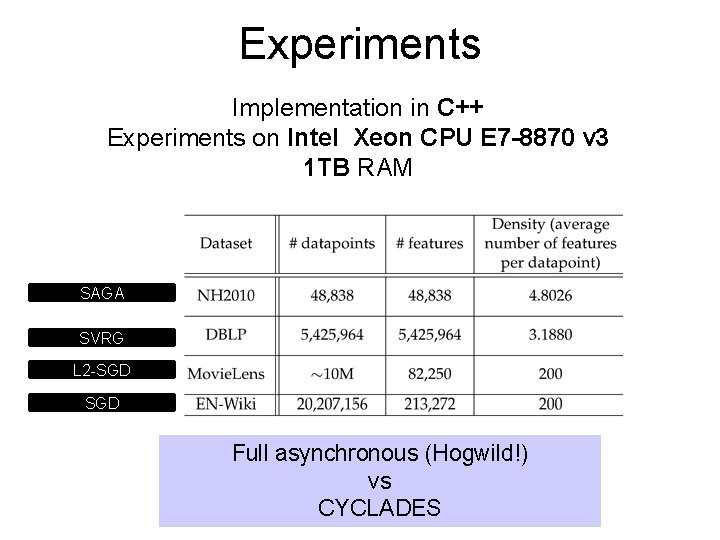

Experiments
- Implementation in C++
- Experiments on Intel Xeon CPU E7-8870 v3, 1 TB RAM
- SAGA, SVRG, L2-SGD
- Fully asynchronous (Hogwild!) vs. CYCLADES

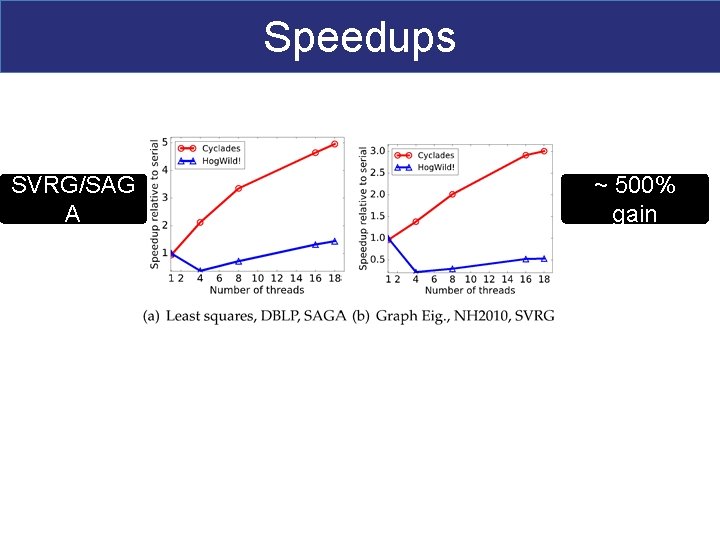

Speedups
- SVRG/SAGA: ~500% gain
- SGD: ~30% gain

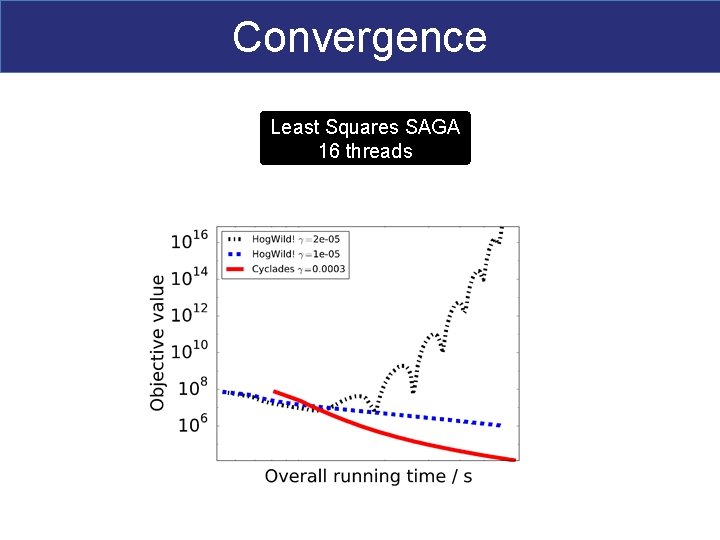

Convergence: Least Squares, SAGA, 16 threads

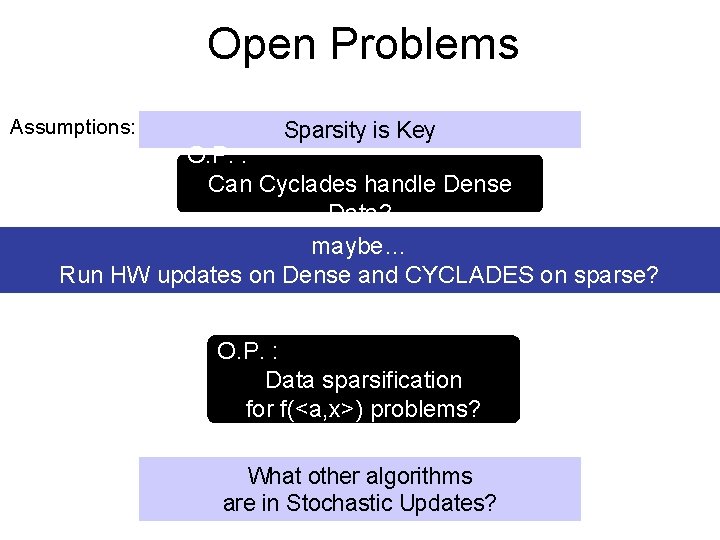

Open Problems
Assumptions: Sparsity is key.
O.P.: Can CYCLADES handle dense data? Maybe… Run Hogwild! (HW) updates on dense data and CYCLADES on sparse data?
O.P.: Data sparsification for f(⟨a, x⟩) problems?
What other algorithms are in Stochastic Updates?

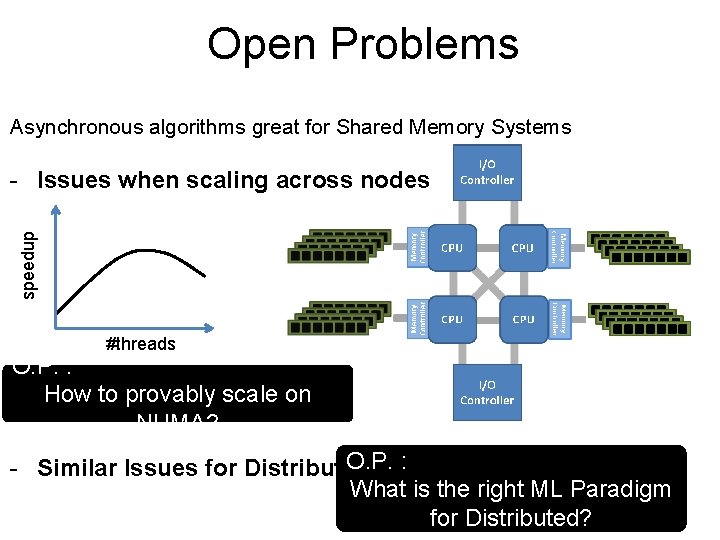

Open Problems
Asynchronous algorithms are great for shared-memory systems.
- Issues when scaling across nodes (figure: speedup vs. #threads)
O.P.: How to provably scale on NUMA?
O.P.: Similar issues for distributed systems: what is the right ML paradigm for distributed?

CYCLADES: a framework for parallel sparse ML algorithms
- Lock-free + (maximally) asynchronous
- No conflicts
- Serializable
- Black-box analysis

Next Time
- Communication Bottlenecks
- Compressed Gradients
- Quantization
