Reliable Datagram Sockets and InfiniBand
Hanan Hit
NoCOUG Staff 2010

Agenda
• InfiniBand Basics
• What is RDS (Reliable Datagram Sockets)?
• Advantages of RDS over InfiniBand
• Architecture Overview
• TPC-H over 11g Benchmark
• InfiniBand vs. 10GE

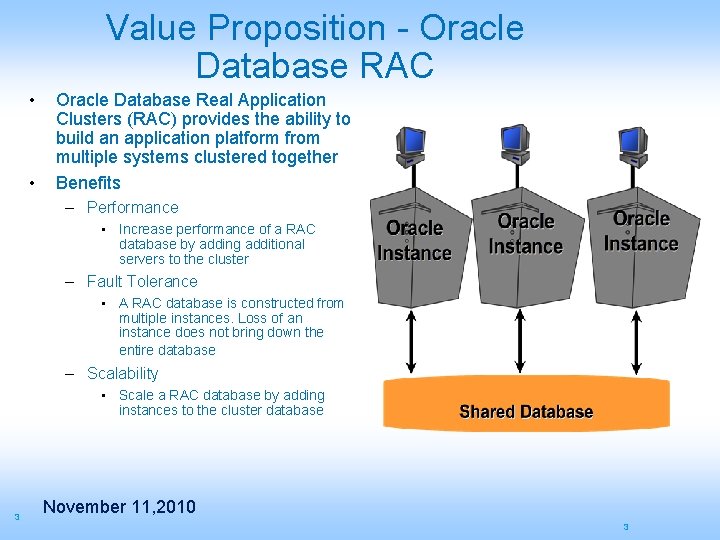

Value Proposition - Oracle Database RAC
• Oracle Database Real Application Clusters (RAC) provides the ability to build an application platform from multiple systems clustered together
• Benefits
  – Performance: increase performance of a RAC database by adding additional servers to the cluster
  – Fault Tolerance: a RAC database is constructed from multiple instances. Loss of an instance does not bring down the entire database
  – Scalability: scale a RAC database by adding instances to the cluster database
November 11, 2010

Some Facts
• High-end database applications in the OLTP category range in size from 10-20 TB with 2-10k IOPS.
• High-end DW applications fall into the 20-40 TB category, with an I/O bandwidth requirement of around 4-8 GB per second.
• Two-socket x86_64 servers currently seem to offer the best price.
• The major limitations of these servers are the limited number of slots available for external I/O cards and the CPU cost of processing I/O in conventional kernel-based I/O mechanisms.
• The main challenge in building cluster databases that run on multiple servers is the ability to provide low-cost, balanced I/O bandwidth.
• Conventional Fibre Channel based storage arrays, with their expensive plumbing, do not scale well enough to create the balance in which these DB servers could be optimally utilized.

IBA/Reliable Datagram Sockets (RDS) Protocol
What is IBA
InfiniBand Architecture (IBA) is an industry-standard, channel-based, switched-fabric, high-speed interconnect architecture with low latency and high throughput. The InfiniBand architecture specification defines a connection between processor nodes and high-performance I/O nodes such as storage devices.
What is RDS
• A low-overhead, low-latency, high-bandwidth, ultra-reliable, supportable Inter-Process Communication (IPC) protocol and transport system
• Matches Oracle's existing IPC models for RAC communication
  – Optimized for transfers from 200 bytes to 8 MB
• Based on the Socket API
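Because RDS is exposed through the ordinary socket API, opening an RDS endpoint looks much like opening a UDP socket. The following is a minimal sketch, assuming a Linux host and Python's standard `socket` module, which defines `AF_RDS` on Linux builds. The address `127.0.0.1` and port `4000` are hypothetical placeholders, and the helper name `open_rds_socket` is made up for illustration. On hosts without RDS support the function simply returns `None`.

```python
import socket


def open_rds_socket(local_ip, port):
    """Return a bound RDS socket, or None if RDS is unavailable on this host."""
    family = getattr(socket, "AF_RDS", None)  # only defined on Linux builds
    if family is None:
        return None
    try:
        # RDS is a reliable, message-oriented transport: SOCK_SEQPACKET.
        sock = socket.socket(family, socket.SOCK_SEQPACKET, 0)
    except OSError:
        return None  # e.g. kernel RDS support is not loaded
    try:
        # An RDS socket must be bound to a local address before use.
        sock.bind((local_ip, port))
    except OSError:
        sock.close()
        return None
    return sock


sock = open_rds_socket("127.0.0.1", 4000)  # hypothetical address and port
if sock is None:
    print("RDS is not available on this host")
else:
    # A datagram would be sent with sock.sendto(payload, (peer_ip, peer_port)).
    print("RDS socket bound")
    sock.close()
```

No connection setup appears in the application code: the sender names the destination on each send, which fits the datagram-style IPC model described above.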

Reliable Datagram Sockets (RDS) Protocol
• Leverages InfiniBand's built-in high availability and load balancing features
  – Port failover on the same HCA
  – HCA failover on the same system
  – Automatic load balancing
• Open source on OpenFabrics / OFED
  http://www.openfabrics.org/downloads/OFED/ofed-1.4/OFED-1.4-docs/

Advantages of RDS over InfiniBand
• Lowering data center TCO requires efficient fabrics
• Oracle RAC 11g will scale for database-intensive applications only with the proper high-speed protocol and an efficient interconnect
• RDS over 10GE
  – 10 Gbps is not enough to feed multi-core server I/O needs
  – Each core may require > 3 Gbps
  – Packets can be lost and require retransmission
  – Statistics are not an accurate indication of throughput
  – Efficiency is much lower than reported
• RDS over InfiniBand
  – The network efficiency is always 100%
  – 40 Gbps today
  – Uses InfiniBand delivery capabilities that offload end-to-end checking to the InfiniBand fabric
  – Integrated in the Linux kernel
  – More tools will be ported to support RDS, e.g. netstat
• Shows significant real-world application performance boost
  – Decision Support Systems
  – Mixed batch/OLTP workloads
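The bandwidth argument on this slide is simple arithmetic. A minimal sketch, taking the slide's "> 3 Gbps per core" as a lower bound on demand and ignoring protocol overhead (both simplifying assumptions), estimates how many cores a single link can feed:

```python
def cores_fed(link_gbps: float, per_core_gbps: float = 3.0) -> int:
    """Upper bound on the cores one link can feed at the given per-core demand."""
    return int(link_gbps // per_core_gbps)


for name, gbps in (("10GE", 10), ("InfiniBand 40 Gbps", 40)):
    print(f"{name}: at most {cores_fed(gbps)} cores")
```

Under these assumptions a 10 Gbps link covers about 3 cores and a 40 Gbps link about 13.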

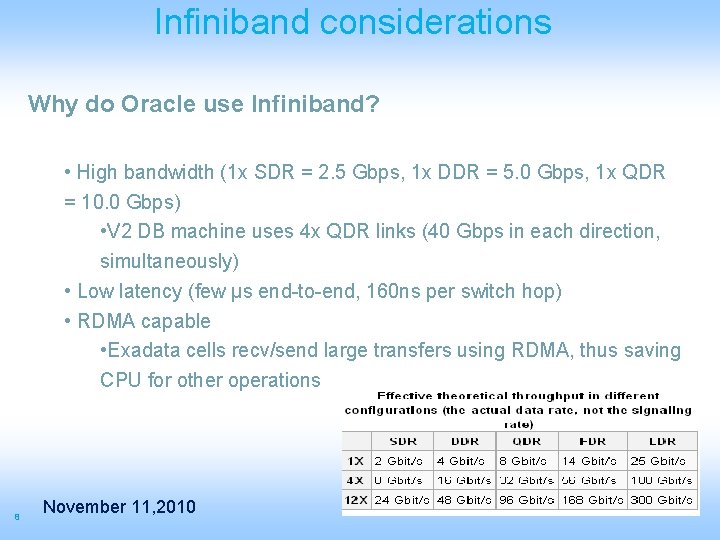

InfiniBand considerations
Why does Oracle use InfiniBand?
• High bandwidth (1x SDR = 2.5 Gbps, 1x DDR = 5.0 Gbps, 1x QDR = 10.0 Gbps)
  – V2 DB machine uses 4x QDR links (40 Gbps in each direction, simultaneously)
• Low latency (a few µs end-to-end, 160 ns per switch hop)
• RDMA capable
  – Exadata cells receive/send large transfers using RDMA, thus saving CPU for other operations
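The link speeds quoted above follow from the per-lane rate multiplied by the lane count. The sketch below works through that arithmetic. The 8b/10b encoding factor is not on the slide; it is added here as an assumption about SDR/DDR/QDR links to show the usable data rate next to the signaling rate.

```python
# Per-lane signaling rates in Gbps, as listed on the slide.
LANE_GBPS = {"SDR": 2.5, "DDR": 5.0, "QDR": 10.0}

# Assumption (not from the slides): SDR/DDR/QDR links use 8b/10b encoding,
# so 8 of every 10 signaled bits carry data.
ENCODING_EFFICIENCY = 8 / 10


def link_rate_gbps(lanes: int, generation: str) -> float:
    """Signaling rate of a link in one direction."""
    return lanes * LANE_GBPS[generation]


def data_rate_gbps(lanes: int, generation: str) -> float:
    """Signaling rate reduced by the assumed 8b/10b encoding overhead."""
    return link_rate_gbps(lanes, generation) * ENCODING_EFFICIENCY


print(link_rate_gbps(4, "QDR"))  # 4x QDR signaling rate, per direction
print(data_rate_gbps(4, "QDR"))  # data rate under the 8b/10b assumption
```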

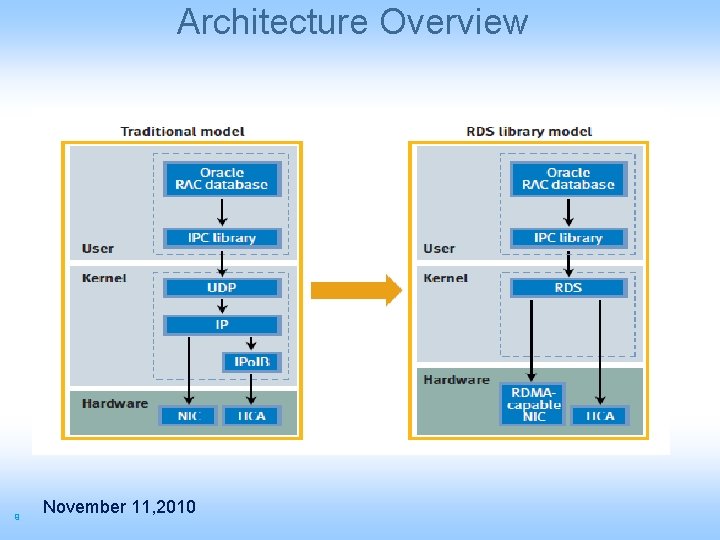

Architecture Overview

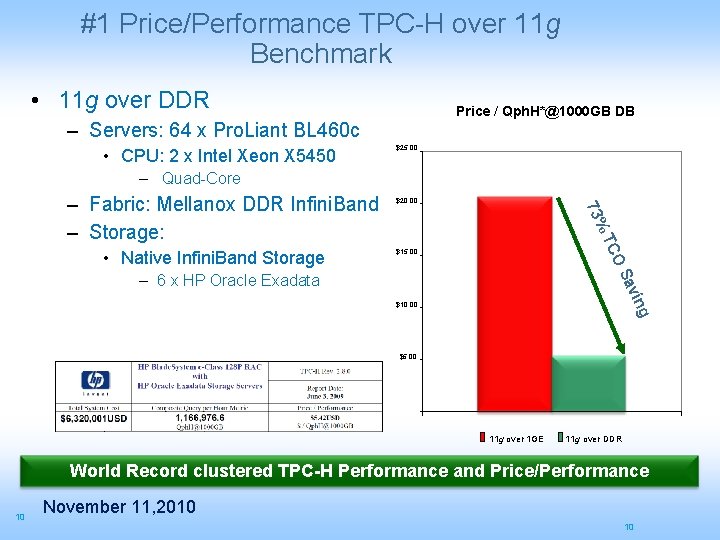

#1 Price/Performance TPC-H over 11g Benchmark
• 11g over DDR
  – Servers: 64 x ProLiant BL460c
    • CPU: 2 x Intel Xeon X5450 Quad-Core
  – Fabric: Mellanox DDR InfiniBand
  – Storage: Native InfiniBand Storage
    • 6 x HP Oracle Exadata
Chart: Price / QphH*@1000GB DB, 11g over 1GE vs. 11g over DDR (axis $5.00 to $25.00), 73% TCO saving
World Record clustered TPC-H Performance and Price/Performance

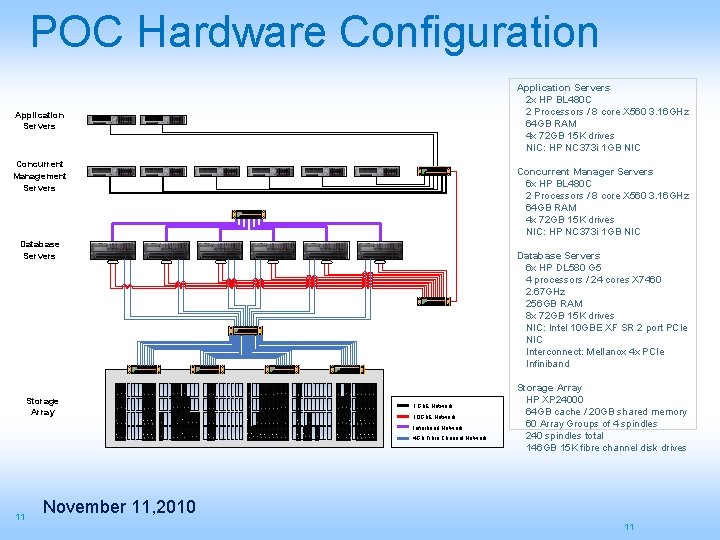

POC Hardware Configuration
• Application Servers: 2 x HP BL480C
  – 2 processors / 8 cores, X560 3.16 GHz
  – 64 GB RAM
  – 4 x 72 GB 15K drives
  – NIC: HP NC373i 1 Gb NIC
• Concurrent Manager Servers: 6 x HP BL480C
  – 2 processors / 8 cores, X560 3.16 GHz
  – 64 GB RAM
  – 4 x 72 GB 15K drives
  – NIC: HP NC373i 1 Gb NIC
• Database Servers: 6 x HP DL580 G5
  – 4 processors / 24 cores, X7460 2.67 GHz
  – 256 GB RAM
  – 8 x 72 GB 15K drives
  – NIC: Intel 10GbE XF SR 2-port PCIe NIC
  – Interconnect: Mellanox 4x PCIe InfiniBand
• Storage Array: HP XP24000
  – 64 GB cache / 20 GB shared memory
  – 60 array groups of 4 spindles, 240 spindles total
  – 146 GB 15K Fibre Channel disk drives
• Networks: 1 GbE, 10 GbE, InfiniBand, 4 Gb Fibre Channel

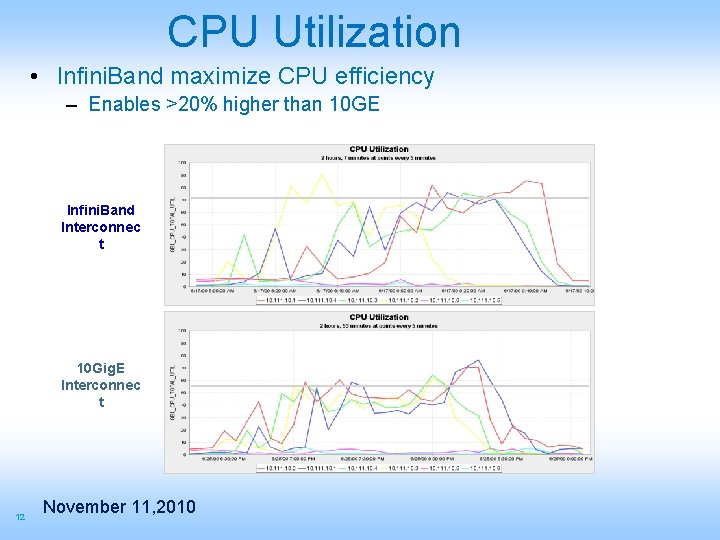

CPU Utilization
• InfiniBand maximizes CPU efficiency
  – Enables >20% higher CPU efficiency than 10GE
Chart panels: InfiniBand Interconnect, 10 GigE Interconnect

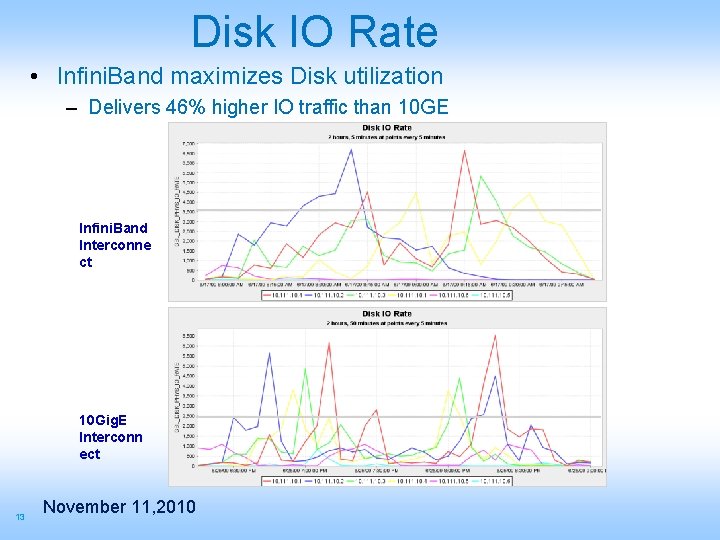

Disk IO Rate
• InfiniBand maximizes disk utilization
  – Delivers 46% higher IO traffic than 10GE
Chart panels: InfiniBand Interconnect, 10 GigE Interconnect

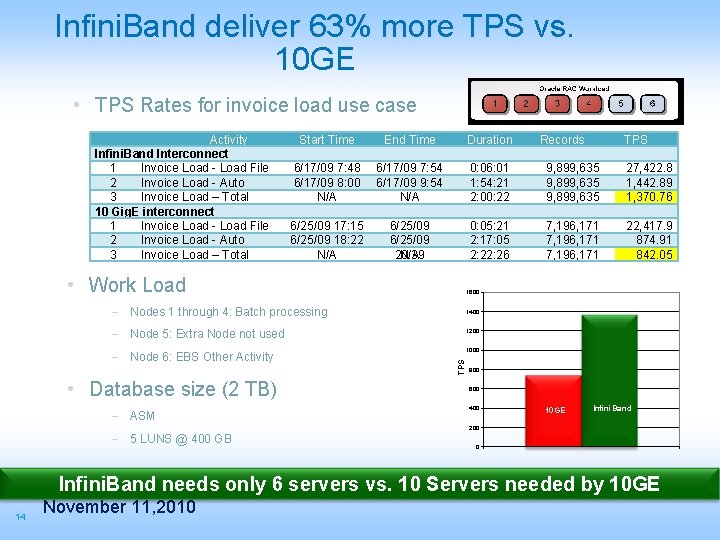

InfiniBand delivers 63% more TPS vs. 10GE
• TPS rates for invoice load use case

| Interconnect | Activity | Start Time | End Time | Duration | Records | TPS |
|---|---|---|---|---|---|---|
| InfiniBand | 1. Invoice Load - Load File | 6/17/09 7:48 | 6/17/09 7:54 | 0:06:01 | 9,899,635 | 27,422.8 |
| InfiniBand | 2. Invoice Load - Auto | 6/17/09 8:00 | 6/17/09 9:54 | 1:54:21 | 9,899,635 | 1,442.89 |
| InfiniBand | 3. Invoice Load - Total | N/A | N/A | 2:00:22 | 9,899,635 | 1,370.76 |
| 10 GigE | 1. Invoice Load - Load File | 6/25/09 17:15 | 6/25/09 17:20 | 0:05:21 | 7,196,171 | 22,417.9 |
| 10 GigE | 2. Invoice Load - Auto | 6/25/09 18:22 | 6/25/09 20:39 | 2:17:05 | 7,196,171 | 874.91 |
| 10 GigE | 3. Invoice Load - Total | N/A | N/A | 2:22:26 | 7,196,171 | 842.05 |

• Work Load
  – Nodes 1 through 4: Batch processing
  – Node 5: Extra node not used
  – Node 6: EBS other activity
• Database size (2 TB)
  – ASM
  – 5 LUNs @ 400 GB
Chart: TPS (0 to 1600), 10GE vs. InfiniBand
InfiniBand needs only 6 servers vs. 10 servers needed by 10GE
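The headline figure can be checked from the record counts and phase durations on this slide: TPS is records divided by elapsed seconds, and the total run is the sum of the Load File and Auto phases. A minimal sketch of that calculation (the helper names are made up for illustration):

```python
def to_seconds(hms: str) -> int:
    """Convert an H:MM:SS duration string to seconds."""
    hours, minutes, seconds = (int(part) for part in hms.split(":"))
    return hours * 3600 + minutes * 60 + seconds


def tps(records: int, *durations: str) -> float:
    """Records per second over the combined duration of the given phases."""
    return records / sum(to_seconds(d) for d in durations)


# Record counts and phase durations as given on the slide.
ib_total = tps(9_899_635, "0:06:01", "1:54:21")
ge_total = tps(7_196_171, "0:05:21", "2:17:05")
gain = ib_total / ge_total - 1

print(f"InfiniBand {ib_total:.2f} TPS, 10GE {ge_total:.2f} TPS, gain {gain:.0%}")
# prints "InfiniBand 1370.76 TPS, 10GE 842.05 TPS, gain 63%"
```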

Sun Oracle Database Machine
• Clustering is the architecture of the future
  – Highest performance, lowest cost, redundant, incrementally scalable
• The Sun Oracle Database Machine, based on 40 Gb/s InfiniBand, delivers a complete clustering architecture for all data management needs

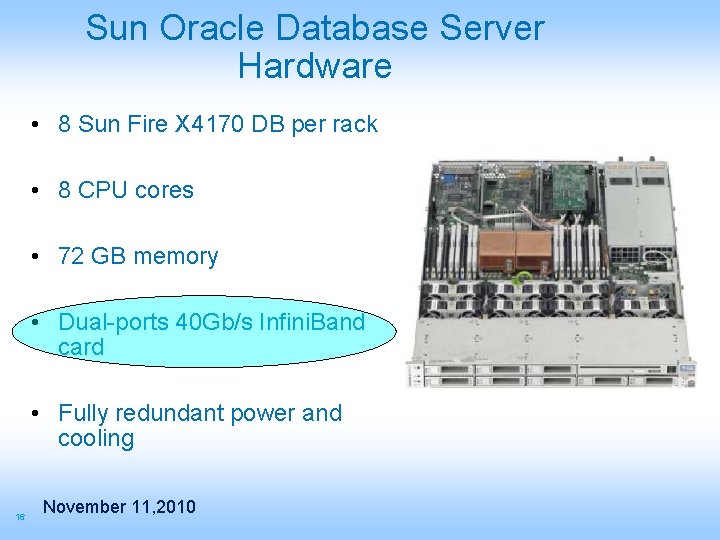

Sun Oracle Database Server Hardware
• 8 Sun Fire X4170 DB servers per rack
• 8 CPU cores
• 72 GB memory
• Dual-port 40 Gb/s InfiniBand card
• Fully redundant power and cooling

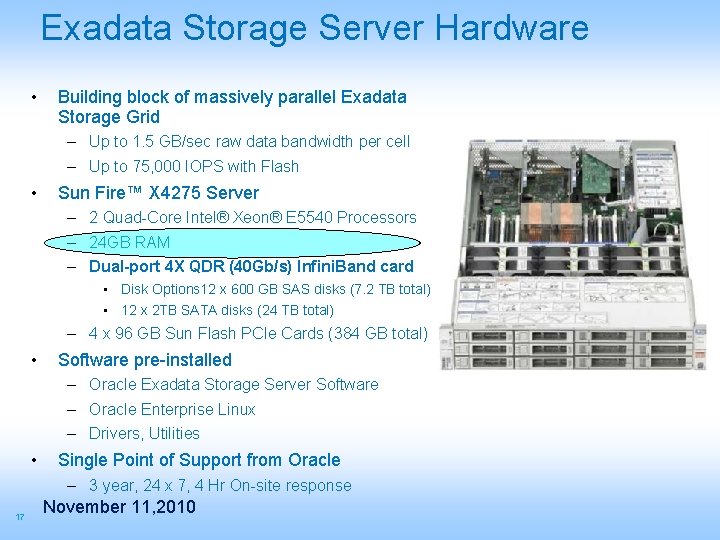

Exadata Storage Server Hardware
• Building block of massively parallel Exadata Storage Grid
  – Up to 1.5 GB/sec raw data bandwidth per cell
  – Up to 75,000 IOPS with Flash
• Sun Fire™ X4275 Server
  – 2 Quad-Core Intel® Xeon® E5540 Processors
  – 24 GB RAM
  – Dual-port 4X QDR (40 Gb/s) InfiniBand card
  – Disk options
    • 12 x 600 GB SAS disks (7.2 TB total)
    • 12 x 2 TB SATA disks (24 TB total)
  – 4 x 96 GB Sun Flash PCIe Cards (384 GB total)
• Software pre-installed
  – Oracle Exadata Storage Server Software
  – Oracle Enterprise Linux
  – Drivers, Utilities
• Single Point of Support from Oracle
  – 3 year, 24 x 7, 4 Hr On-site response

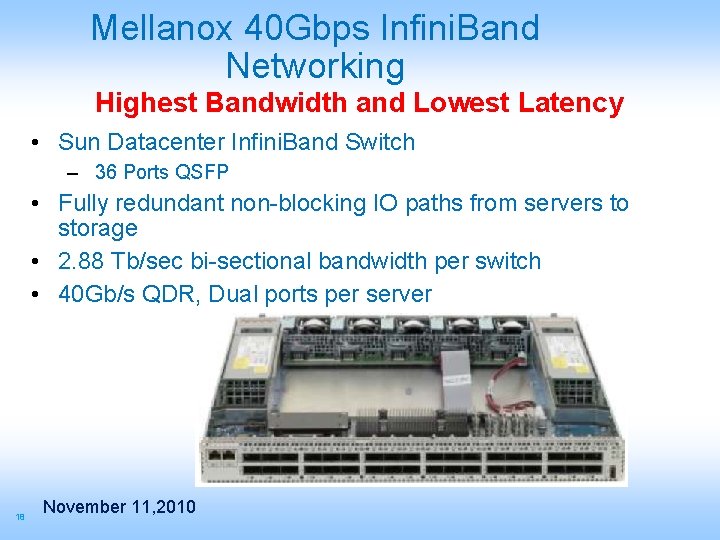

Mellanox 40 Gbps InfiniBand Networking
Highest Bandwidth and Lowest Latency
• Sun Datacenter InfiniBand Switch
  – 36 ports QSFP
• Fully redundant non-blocking I/O paths from servers to storage
• 2.88 Tb/sec bisectional bandwidth per switch
• 40 Gb/s QDR, dual ports per server
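The 2.88 Tb/sec figure is consistent with the port count and link speed on this slide. The sketch below assumes the number is the sum of all 36 port rates counted in both directions; the slide does not show its derivation, so treat that as an assumption.

```python
PORTS = 36       # QSFP ports per switch (from the slide)
PORT_GBPS = 40   # 4x QDR rate per port, per direction (from the slide)
DIRECTIONS = 2   # assumption: the figure counts both directions


def aggregate_tbps(ports: int, port_gbps: float, directions: int = 2) -> float:
    """Aggregate switch bandwidth in Tb/s under the stated assumption."""
    return ports * port_gbps * directions / 1000


print(aggregate_tbps(PORTS, PORT_GBPS, DIRECTIONS))  # 36 * 40 * 2 / 1000
```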

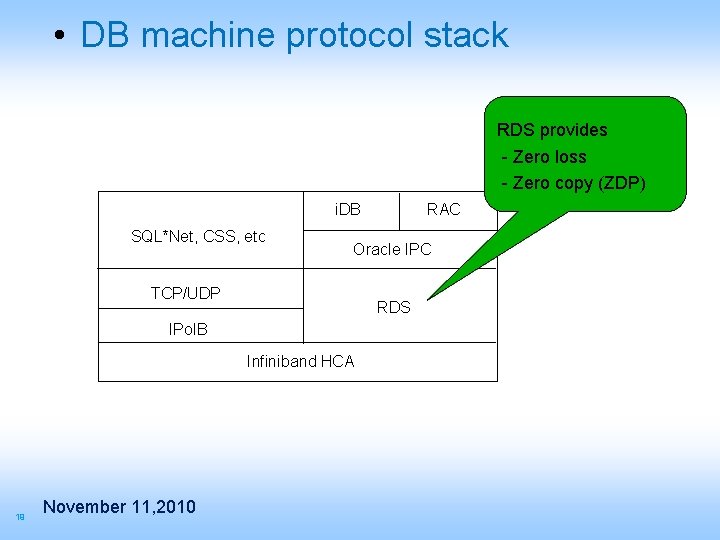

DB machine protocol stack
• RDS provides
  – Zero loss
  – Zero copy (ZDP)
Stack diagram (top to bottom): iDB, RAC and SQL*Net, CSS, etc; Oracle IPC and TCP/UDP; RDS and IPoIB; InfiniBand HCA

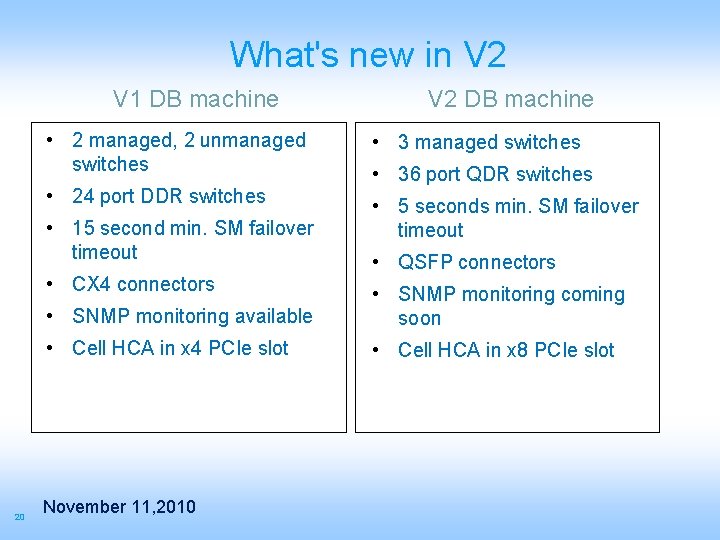

What's new in V2

| | V1 DB machine | V2 DB machine |
|---|---|---|
| Switches | 2 managed, 2 unmanaged switches | 3 managed switches |
| Switch type | 24 port DDR switches | 36 port QDR switches |
| SM failover | 15 second min. SM failover timeout | 5 seconds min. SM failover timeout |
| Connectors | CX4 connectors | QSFP connectors |
| SNMP | SNMP monitoring available | SNMP monitoring coming soon |
| Cell HCA | Cell HCA in x4 PCIe slot | Cell HCA in x8 PCIe slot |

InfiniBand Monitoring
• SNMP alerts on Sun IB switches are coming
• EM support for IB fabric coming
  – Voltaire EM plugin available (at an extra cost)
• In the meantime, customers can and should monitor using
  – IB commands from host
  – Switch CLI to monitor various switch components
• Self monitoring exists
  – Exadata cell software monitors its own IB ports
  – Bonding driver monitors local port failures
  – SM monitors all port failures on the fabric
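As an illustration of host-side monitoring, the following sketch reports the state of each local InfiniBand port. It does not invoke any IB command. Instead it assumes the Linux RDMA stack's sysfs layout, `/sys/class/infiniband/<hca>/ports/<n>/state`, whose content looks like `4: ACTIVE`. The function names are made up for illustration, and on a host without InfiniBand hardware the result is simply empty.

```python
from pathlib import Path


def ib_port_states(sysfs_root="/sys/class/infiniband"):
    """Map "hca/port" to its state string, e.g. {"mlx4_0/1": "ACTIVE"}."""
    root = Path(sysfs_root)
    states = {}
    if not root.is_dir():
        return states  # no InfiniBand devices visible on this host
    for state_file in sorted(root.glob("*/ports/*/state")):
        hca = state_file.parts[-4]
        port = state_file.parts[-2]
        text = state_file.read_text().strip()  # assumed form: "4: ACTIVE"
        states[f"{hca}/{port}"] = text.split(":", 1)[-1].strip()
    return states


def down_ports(states):
    """Return the ports whose state is anything other than ACTIVE."""
    return [name for name, state in states.items() if state != "ACTIVE"]


if __name__ == "__main__":
    current = ib_port_states()
    print(current or "no InfiniBand ports found")
    print("ports needing attention:", down_ports(current))
```

A check like this could run periodically from cron until SNMP alerting is available on the switches.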

Scale Performance and Capacity
• Scalable
  – Scales to 8 rack database machine by just adding wires
    • More with external InfiniBand switches
  – Scales to hundreds of storage servers
    • Multi-petabyte databases
• Redundant and Fault Tolerant
  – Failure of any component is tolerated
  – Data is mirrored across storage servers

Competitive Advantage
“…everybody is using Ethernet, we are using InfiniBand, 40 Gb/s InfiniBand”
Larry Ellison, keynote at Oracle OpenWorld introducing Exadata-2 (Sun Oracle DB machine), October 14, 2009, San Francisco
