Reliability, Validity, & Scaling

Reliability
• Repeatedly measure unchanged things.
• Do you get the same measurements?
• Charles Spearman, Classical Measurement Theory.
• If the instrument is perfectly reliable, the correlation between true scores and measurements is +1.
• r < 1 because of random error.
• Random error is symmetrically distributed about 0.

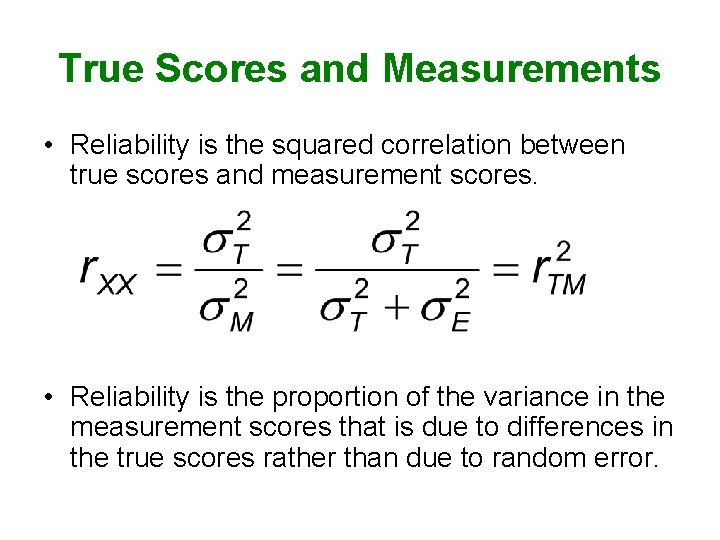

True Scores and Measurements
• Reliability is the squared correlation between true scores and measurement scores.
• Reliability is the proportion of the variance in the measurement scores that is due to differences in the true scores rather than to random error.

• Systematic error is not random: the instrument measures something else in addition to the construct of interest.
• Reliability cannot be known, but it can be estimated.
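In a simulation, unlike real data, the true scores are known, so the two definitions of reliability can be checked against each other. The sketch below is only an illustration: the distributions, the sample size, and the names `true_scores` and `measured` are assumptions, not part of the original slides.

```python
import random
from statistics import mean, pvariance

def pearson_r(x, y):
    """Pearson correlation between two equal-length sequences."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5

random.seed(0)  # fixed seed so the sketch is repeatable
n = 20000

# Hypothetical true scores (SD = 10) plus random error centered on 0 (SD = 5).
true_scores = [random.gauss(50, 10) for _ in range(n)]
measured = [t + random.gauss(0, 5) for t in true_scores]

# Definition 1: squared correlation between true scores and measurements.
r_squared = pearson_r(true_scores, measured) ** 2

# Definition 2: share of measurement variance due to true-score differences.
variance_ratio = pvariance(true_scores) / pvariance(measured)

# Theoretical value for these assumed SDs: 100 / (100 + 25) = 0.8
expected = 10**2 / (10**2 + 5**2)

print(round(r_squared, 3), round(variance_ratio, 3), expected)
```

Both estimates should land close to the theoretical value, differing only by sampling error.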

Test-Retest Reliability
• Measure subjects at two points in time.
• Correlate (r) the two sets of measurements.
• An r of .7 is OK for research instruments.
• It needs to be higher for practical applications and important decisions.
• M and SD usually should not vary much from Time 1 to Time 2.
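A minimal sketch of the test-retest check, using made-up scores for five subjects (the data and variable names are hypothetical). It correlates the two sets of measurements and prints M and SD for each occasion so they can be compared.

```python
from statistics import mean, stdev

def pearson_r(x, y):
    """Pearson correlation between two equal-length sequences."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5

# Hypothetical scores for the same five subjects at two points in time.
time1 = [10, 12, 14, 16, 18]
time2 = [11, 12, 15, 15, 19]

retest_r = pearson_r(time1, time2)

print(f"test-retest r = {retest_r:.3f}")
print(f"Time 1: M = {mean(time1):.2f}, SD = {stdev(time1):.2f}")
print(f"Time 2: M = {mean(time2):.2f}, SD = {stdev(time2):.2f}")
```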

Alternate/Parallel Forms
• Estimate reliability with r between forms.
• M and SD should be the same for both forms.
• The pattern of correlations with other variables should be the same for both forms.

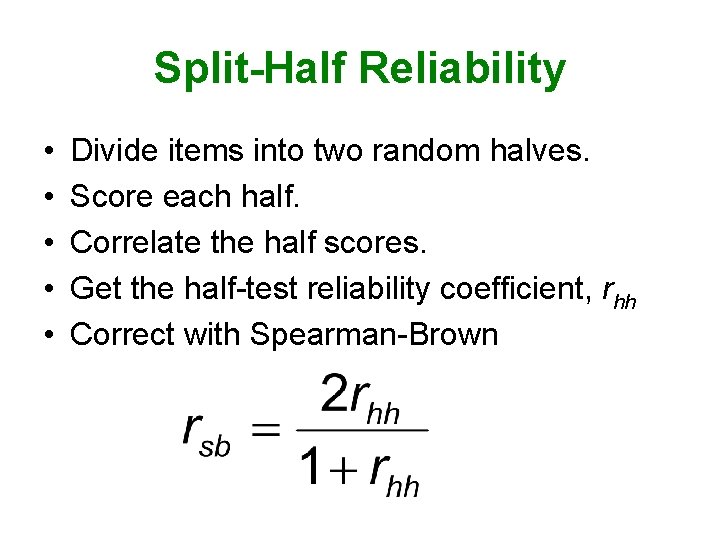

Split-Half Reliability
• Divide items into two random halves.
• Score each half.
• Correlate the half scores.
• Get the half-test reliability coefficient, r_hh.
• Correct with Spearman-Brown.
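The Spearman-Brown correction steps the half-test correlation up to the reliability expected for the full-length test: r_sb = 2r / (1 + r). The sketch below writes it in its general form for a test lengthened by a factor k; the function name and the example value of .60 are illustrative assumptions.

```python
def spearman_brown(r, k=2.0):
    """Predicted reliability when a test is lengthened by a factor k.

    With k = 2 this is the split-half correction 2r / (1 + r).
    """
    return k * r / (1.0 + (k - 1.0) * r)

half_test_r = 0.60                         # hypothetical half-test correlation
corrected_r = spearman_brown(half_test_r)  # 1.2 / 1.6, about 0.75

print(round(corrected_r, 3))
```

The corrected coefficient is always at least as large as a positive half-test correlation, because each half contains only half of the items.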

Cronbach’s Coefficient Alpha
• The obtained value of r_sb depends on how you split the items into halves.
• Find r_sb for all possible pairs of split halves.
• Compute the mean of these.
• But you don’t really compute it this way.
• This is a lower bound for the true reliability.
• That is, it underestimates true reliability.
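In practice alpha is usually computed from variances rather than from split halves: alpha = k/(k - 1) × (1 - sum of item variances / variance of total scores), where k is the number of items. A minimal sketch follows, with a small made-up data set (rows are respondents, columns are items); the function name and data are assumptions for illustration.

```python
from statistics import variance

def cronbach_alpha(rows):
    """Coefficient alpha for a list of respondents' item-score lists."""
    k = len(rows[0])                                   # number of items
    item_vars = [variance([row[i] for row in rows]) for i in range(k)]
    total_var = variance([sum(row) for row in rows])   # variance of total scores
    return (k / (k - 1)) * (1 - sum(item_vars) / total_var)

# Hypothetical responses: 5 respondents x 3 items.
scores = [
    [1, 2, 1],
    [2, 2, 3],
    [3, 4, 3],
    [4, 4, 5],
    [5, 5, 5],
]

alpha = cronbach_alpha(scores)
print(round(alpha, 3))
```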

Maximized Lambda 4
• This is the best estimator of reliability.
• Compute r_sb for all possible pairs of split halves.
• The largest r_sb = the estimated reliability.
• With more than a few items, this is unreasonably tedious.
• But there are ways to estimate it.
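For a handful of items the search over splits can be done by brute force. The sketch below follows the procedure as described here: it computes the Spearman-Brown corrected coefficient for every split into two equal halves and keeps the largest. Guttman's lambda 4 is usually written as 4 × cov(A, B) / var(total), which matches the Spearman-Brown value only when the two halves have equal variances, so treat this as an approximation. The data and function names are hypothetical, and the sketch assumes an even number of items.

```python
from itertools import combinations
from statistics import mean

def pearson_r(x, y):
    """Pearson correlation between two equal-length sequences."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5

def split_half_coefficients(rows):
    """Spearman-Brown corrected r for every split into two equal halves."""
    k = len(rows[0])
    if k % 2:
        raise ValueError("this sketch assumes an even number of items")
    results = {}
    # Keep item 0 in the first half so each split is counted only once.
    for rest in combinations(range(1, k), k // 2 - 1):
        half_a = (0,) + rest
        half_b = tuple(i for i in range(k) if i not in half_a)
        a = [sum(row[i] for i in half_a) for row in rows]
        b = [sum(row[i] for i in half_b) for row in rows]
        r_hh = pearson_r(a, b)
        results[(half_a, half_b)] = 2 * r_hh / (1 + r_hh)
    return results

# Hypothetical responses: 6 respondents x 4 items.
scores = [
    [4, 5, 4, 5],
    [2, 1, 2, 2],
    [3, 3, 4, 3],
    [5, 4, 5, 5],
    [1, 2, 1, 2],
    [3, 4, 2, 3],
]

coefficients = split_half_coefficients(scores)
best_split = max(coefficients, key=coefficients.get)
max_r_sb = coefficients[best_split]
mean_r_sb = mean(list(coefficients.values()))

print(best_split, round(max_r_sb, 3), round(mean_r_sb, 3))
```

The number of splits grows very quickly with the number of items (4 items give 3 splits, 10 items give 126, 20 items give 92,378), which is why exhaustive search is only practical for short scales.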

Construct Validity
• To what extent are we really measuring/manipulating the construct of interest?
• Face Validity – do others agree that it sounds valid?

Content Validity
• Detail the population of things (behaviors, attitudes, etc.) that are of interest.
• Consider your operationalization of the construct (the details of how you propose to measure it) as a sample of that population.
• Is your sample representative of the population? Ask experts.

Criterion-Related Validity
• Established by demonstrating that your operationalization has the expected pattern of correlations with other variables.
• Concurrent Validity – demonstrate the expected correlation with other variables measured at the same time.
• Predictive Validity – demonstrate the expected correlation with other variables measured later in time.

• Convergent Validity – demonstrate the expected correlation with measures of other constructs.
• Discriminant Validity – demonstrate the expected lack of correlation with measures of other constructs.

Scaling
• Scaling = construction of instruments for measuring abstract constructs.
• I shall discuss the creation of a Likert scale, my favorite type of scale.

Likert Scales
• Define the Concept
• Generate Potential Items
  – About 100 statements.
  – On some, agreement indicates being high on the measured attribute.
  – On others, agreement indicates being low on the measured attribute.

Likert Response Scale
• Use a multi-point response scale like this:
1. People should make certain that their actions never intentionally harm others even to a small degree.
Strongly Disagree Neutral Agree Strongly Agree

Evaluate the Potential Items
• Get judges to evaluate each item on a 5-point scale:
  – 1: Agreement = very low on attribute
  – 2: Agreement = low on attribute
  – 3: Agreement tells you nothing
  – 4: Agreement = high on attribute
  – 5: Agreement = very high on attribute
• Select items with very high or very low means and little variability among the judges.
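The selection rule can be sketched as a simple filter on each item's mean and standard deviation across judges. The cutoffs below (`low_cut`, `high_cut`, `max_sd`) are arbitrary illustrative assumptions, not recommended values, and the ratings are made up.

```python
from statistics import mean, stdev

def screen_items(ratings, low_cut=2.0, high_cut=4.0, max_sd=1.0):
    """Keep items whose judge ratings are extreme on average and consistent."""
    kept = []
    for item, judged in ratings.items():
        m, sd = mean(judged), stdev(judged)
        extreme = m <= low_cut or m >= high_cut   # very low or very high mean
        if extreme and sd <= max_sd:              # and judges largely agree
            kept.append(item)
    return kept

# Hypothetical ratings from four judges on the 1-5 scale.
ratings = {
    "item_a": [5, 5, 4, 5],   # high mean, judges agree
    "item_b": [3, 3, 2, 4],   # agreement tells you little
    "item_c": [1, 1, 2, 1],   # low mean, judges agree
    "item_d": [5, 1, 5, 5],   # high mean, but judges disagree
}

selected = screen_items(ratings)
print(selected)
```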

Alternate Method of Item Evaluation
• Ask some judges to respond to the items in the way they think someone high in the attribute would respond.
• Ask other judges to respond as would one low in the attribute.
• Prefer items that best discriminate between these two groups.
• Also ask judges to identify items that are unclear or confusing.

Pilot Test the Items
• Administer to a sample of persons from the population of interest.
• Conduct an item analysis (more on this later).
• Prefer items which have high item-total correlations.
• Consider conducting a factor analysis (more on this later).

Administer the Final Scale
• On each item, the response that indicates the least amount of the attribute is scored as 1.
• The response that indicates the next least amount is scored as 2, and so on.
• Respondent’s total score = sum of item scores or mean of item scores.
• Dealing with nonresponses on some items.
• Reflecting items (reverse scoring).
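A minimal scoring sketch covering reverse scoring and nonresponses. On an n-point scale a reflected item is scored as (n + 1) minus the response. Scoring a respondent as the mean of the items actually answered is one common way to handle skipped items; it is an assumption here, not necessarily the approach the slides intend. Names and data are hypothetical.

```python
def score_respondent(responses, reverse_keyed, n_points=5):
    """Mean item score after reflecting reverse-keyed items.

    responses: list of ratings (1..n_points), or None for a skipped item.
    reverse_keyed: set of 0-based item positions to reflect.
    """
    scored = []
    for position, answer in enumerate(responses):
        if answer is None:
            continue                            # skip nonresponses
        if position in reverse_keyed:
            answer = (n_points + 1) - answer    # on a 5-point scale: 1<->5, 2<->4
        scored.append(answer)
    if not scored:
        return None                             # nothing answered, no score
    return sum(scored) / len(scored)

# Second item is reverse keyed; third item was skipped.
example = score_respondent([5, 1, None, 4], reverse_keyed={1})
print(round(example, 3))
```

Using the mean rather than the sum keeps scores comparable across respondents who skipped different numbers of items.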

Item Analysis
• You believe the scale is unidimensional.
• Each item measures the same thing.
• Item scores should be well correlated.
• Evaluate this belief with an item analysis.
  – Is the scale internally consistent?
  – If so, it is also reliable.
  – Are there items that do not correlate well with the others?
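One way to look for items that do not correlate well with the others is the corrected item-total correlation: each item is correlated with the sum of the remaining items, so the item does not inflate its own correlation. The sketch below uses made-up data in which the fourth item is mostly noise; the function names and data are assumptions.

```python
from statistics import mean

def pearson_r(x, y):
    """Pearson correlation between two equal-length sequences."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5

def corrected_item_total(rows):
    """Correlate each item with the sum of all the other items."""
    k = len(rows[0])
    result = []
    for i in range(k):
        item = [row[i] for row in rows]
        rest = [sum(row) - row[i] for row in rows]   # total without item i
        result.append(pearson_r(item, rest))
    return result

# Hypothetical responses: 5 respondents x 4 items.
scores = [
    [1, 2, 1, 3],
    [2, 2, 3, 1],
    [3, 4, 3, 4],
    [4, 4, 5, 2],
    [5, 5, 5, 3],
]

r_it = corrected_item_total(scores)
weakest = min(range(len(r_it)), key=lambda i: r_it[i])

print([round(r, 3) for r in r_it], "weakest item index:", weakest)
```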

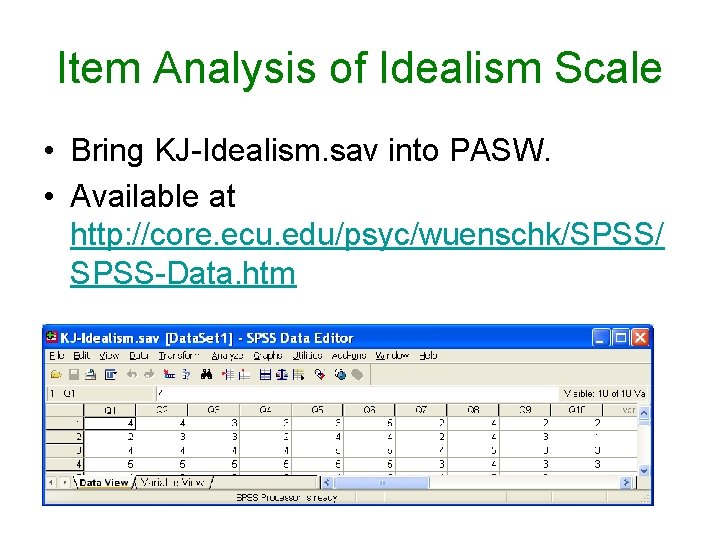

Item Analysis of Idealism Scale
• Bring KJ-Idealism.sav into PASW.
• Available at http://core.ecu.edu/psyc/wuenschk/SPSS/SPSS-Data.htm

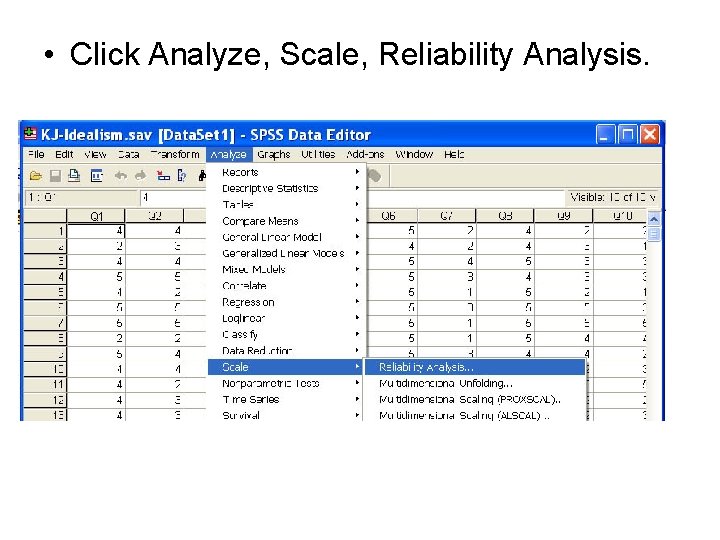

• Click Analyze, Scale, Reliability Analysis.

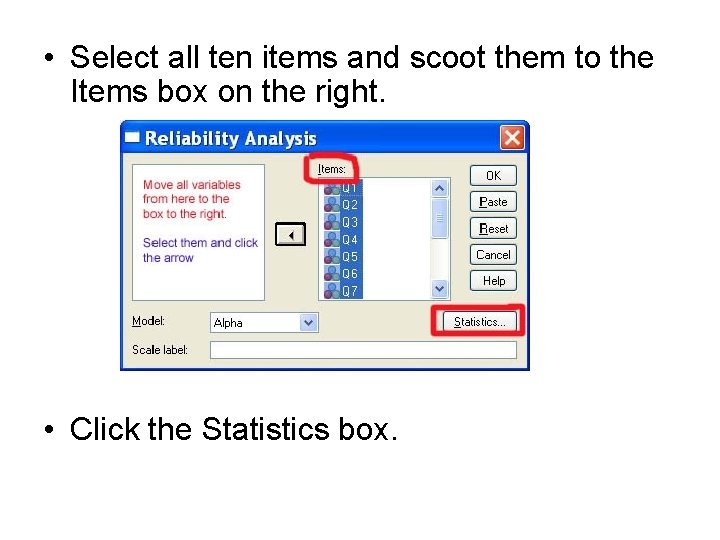

• Select all ten items and scoot them to the Items box on the right.
• Click the Statistics box.

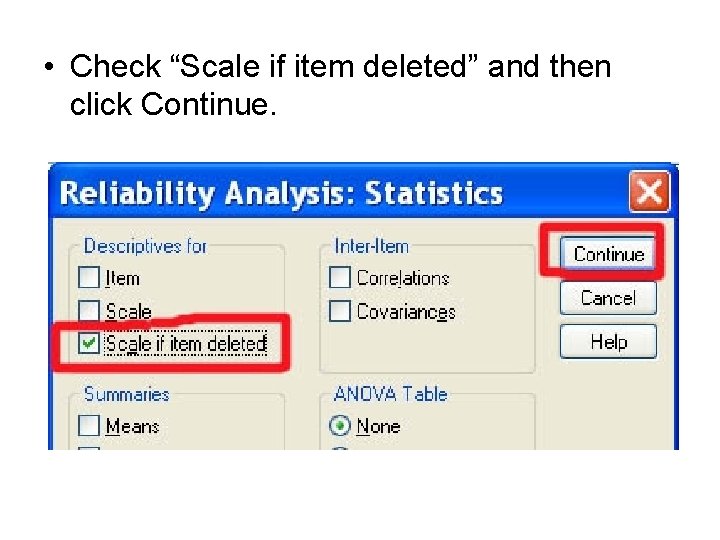

• Check “Scale if item deleted” and then click Continue.

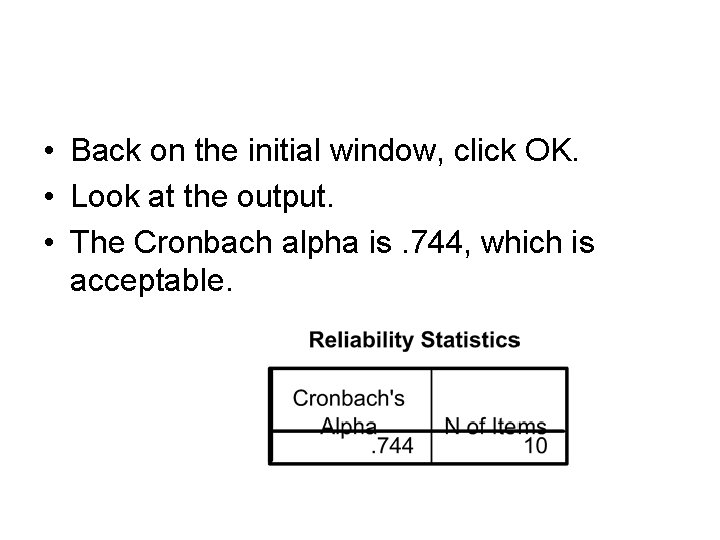

• Back on the initial window, click OK.
• Look at the output.
• Cronbach’s alpha is .744, which is acceptable.

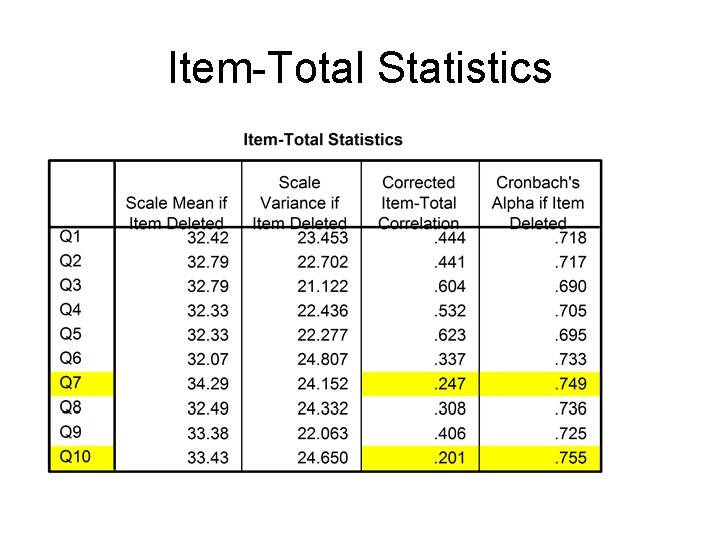

Item-Total Statistics

Troublesome Items
• Items 7 and 10 are troublesome.
• Deleting them would increase alpha.
• But not by much, so I retained them.
• Item 7 stats are especially distressing: “Deciding whether or not to perform an act by balancing the positive consequences of the act against the negative consequences of the act is immoral.”
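The idea behind an alpha-if-item-deleted column can be sketched by recomputing alpha with each item left out in turn. This is an illustration on made-up data, not a reproduction of the PASW output or of the Idealism scale results; function names and data are assumptions.

```python
from statistics import variance

def cronbach_alpha(rows):
    """Coefficient alpha for a list of respondents' item-score lists."""
    k = len(rows[0])
    item_vars = [variance([row[i] for row in rows]) for i in range(k)]
    total_var = variance([sum(row) for row in rows])
    return (k / (k - 1)) * (1 - sum(item_vars) / total_var)

def alpha_if_item_deleted(rows):
    """Alpha recomputed with each item dropped, one at a time."""
    k = len(rows[0])
    return [
        cronbach_alpha([[v for j, v in enumerate(row) if j != i] for row in rows])
        for i in range(k)
    ]

# Hypothetical responses: 5 respondents x 4 items (fourth item is mostly noise).
scores = [
    [1, 2, 1, 3],
    [2, 2, 3, 1],
    [3, 4, 3, 4],
    [4, 4, 5, 2],
    [5, 5, 5, 3],
]

alpha_full = cronbach_alpha(scores)
if_deleted = alpha_if_item_deleted(scores)

print(round(alpha_full, 3), [round(a, 3) for a in if_deleted])
```

An item is a candidate for revision or removal when dropping it raises alpha above the full-scale value, as the fourth item does in this made-up example.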

What Next?
• I should attempt to rewrite item 7 to make it clearer that it applies to ethical decisions, not to other cost-benefit analyses.
• But this is not my scale,
• And who has the time?

Scale Might Not Be Unidimensional
• If the items are measuring two or more different things, alpha may well be low.
• You need to split the scale into two or more subscales.
• Factor analysis can be helpful here (but no promises).
