Reliability, Availability, and Serviceability (RAS) for High-Performance Computing

Presented by Stephen L. Scott and Christian Engelmann, Computer Science Research Group, Computer Science and Mathematics Division

Research and development goals

- Provide high-level RAS capabilities for current terascale and next-generation petascale high-performance computing (HPC) systems
- Eliminate many of the numerous single points of failure and control in today’s HPC systems
- Develop techniques to enable HPC systems to run computational jobs 24/7
- Develop proof-of-concept prototypes and production-type RAS solutions

MOLAR: Adaptive runtime support for high-end computing operating and runtime systems

- Addresses the challenges for operating and runtime systems to run large applications efficiently on future ultrascale high-end computers
- Part of the Forum to Address Scalable Technology for Runtime and Operating Systems (FAST-OS)
- MOLAR is a collaborative research effort (www.fastos.org/molar)

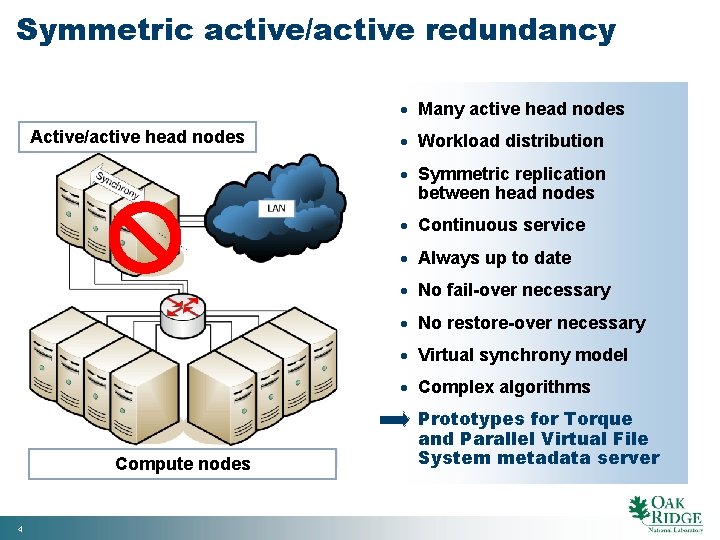

Symmetric active/active redundancy

- Many active head nodes
- Workload distribution
- Symmetric replication between head nodes
- Continuous service
- Always up to date
- No fail-over necessary
- No restore-over necessary
- Virtual synchrony model
- Complex algorithms
- Prototypes for Torque and Parallel Virtual File System metadata server

Figure labels: active/active head nodes, compute nodes.

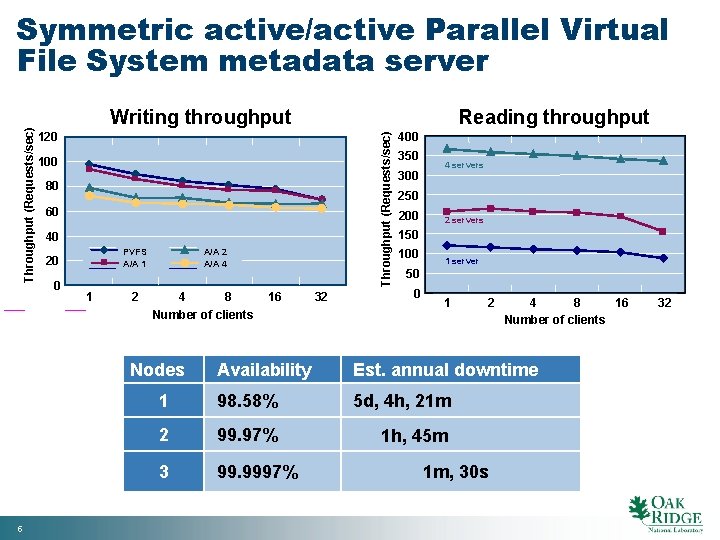

Symmetric active/active Parallel Virtual File System metadata server

Figure: two panels, writing throughput and reading throughput, each plotting throughput (requests/sec) against number of clients (1 to 32). Legend labels: PVFS, A/A 1, A/A 4 (writing panel); 1 server, 2 servers, 4 servers (reading panel).

| Nodes | Availability | Est. annual downtime |
|---|---|---|
| 1 | 98.58% | 5 d, 4 h, 21 m |
| 2 | 99.97% | 1 h, 45 m |
| 3 | 99.9997% | 1 m, 30 s |
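The availability figures on this slide are consistent with a simple redundancy model in which the service is down only when every head node is down at the same time. The sketch below works through that arithmetic. The independence of node failures, the 365-day year, and the function names are assumptions made for illustration; the slides do not state how the numbers were derived.

```python
HOURS_PER_YEAR = 365 * 24  # assumption: non-leap year


def service_availability(node_availability: float, nodes: int) -> float:
    """Availability of a service that fails only if all nodes are down at once.

    Assumes node failures are independent (an assumption, not from the slides).
    """
    if nodes < 1:
        raise ValueError("at least one node is required")
    return 1.0 - (1.0 - node_availability) ** nodes


def annual_downtime_hours(availability: float) -> float:
    """Expected downtime per year, in hours, for a given availability."""
    return (1.0 - availability) * HOURS_PER_YEAR


if __name__ == "__main__":
    single_node = 0.9858  # single-node availability taken from the slide
    for n in (1, 2, 3):
        a = service_availability(single_node, n)
        print(f"{n} node(s): availability {a:.6%}, "
              f"downtime {annual_downtime_hours(a):.3f} h/year")
```

Under these assumptions the results land close to the tabulated values (about 124 hours for one node, under two hours for two, about a minute and a half for three); small differences are presumably due to rounding of the single-node availability.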

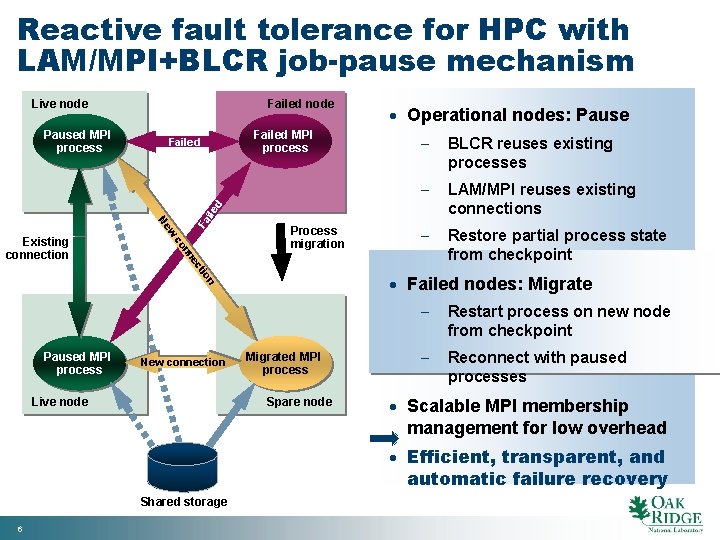

Reactive fault tolerance for HPC with LAM/MPI+BLCR job-pause mechanism

- Operational nodes: pause
  - BLCR reuses existing processes
  - LAM/MPI reuses existing connections
  - Restore partial process state from checkpoint
- Failed nodes: migrate
  - Restart process on new node from checkpoint
  - Reconnect with paused processes
- Scalable MPI membership management for low overhead
- Efficient, transparent, and automatic failure recovery

Figure labels: live node, failed node, spare node, paused MPI process, failed MPI process, migrated MPI process, existing connection, new connection, failed connection, process migration, shared storage.
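The pause-and-migrate flow on this slide can be illustrated with a small, purely in-memory simulation. It does not call LAM/MPI or BLCR; the `MpiProcess` class, the `job_pause_recover` function, and the state names are hypothetical and exist only to show the order of steps: pause the surviving processes in place, restart the lost ones on a spare node, then resume.

```python
from dataclasses import dataclass


@dataclass
class MpiProcess:
    """Hypothetical stand-in for one MPI rank; not a LAM/MPI or BLCR type."""
    rank: int
    node: str
    state: str = "running"  # running | paused | failed


def job_pause_recover(processes, failed_nodes, spare_nodes):
    """Simulate job pause: returns a log of the actions taken, in order."""
    spares = list(spare_nodes)
    replacement = {}  # failed node -> spare node chosen for it
    actions = []

    # Step 1: survivors are paused and rolled back in place; their
    # processes and connections are kept rather than torn down.
    for p in processes:
        if p.node in failed_nodes:
            p.state = "failed"
        else:
            p.state = "paused"
            actions.append(f"pause rank {p.rank} on {p.node}")

    # Step 2: processes from failed nodes restart on a spare node
    # from the checkpoint and reconnect with the paused processes.
    for p in processes:
        if p.state == "failed":
            if p.node not in replacement:
                if not spares:
                    raise RuntimeError("no spare node available")
                replacement[p.node] = spares.pop(0)
            target = replacement[p.node]
            actions.append(f"migrate rank {p.rank}: {p.node} -> {target}")
            p.node, p.state = target, "paused"

    # Step 3: every rank resumes from the same checkpoint.
    for p in processes:
        p.state = "running"
    actions.append("resume all ranks")
    return actions


if __name__ == "__main__":
    job = [MpiProcess(0, "n0"), MpiProcess(1, "n1"), MpiProcess(2, "n2")]
    for line in job_pause_recover(job, {"n1"}, ["spare0"]):
        print(line)
```

The point of the sketch is the contrast with a full job restart: only the ranks on failed nodes change location, while every other rank keeps its node.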

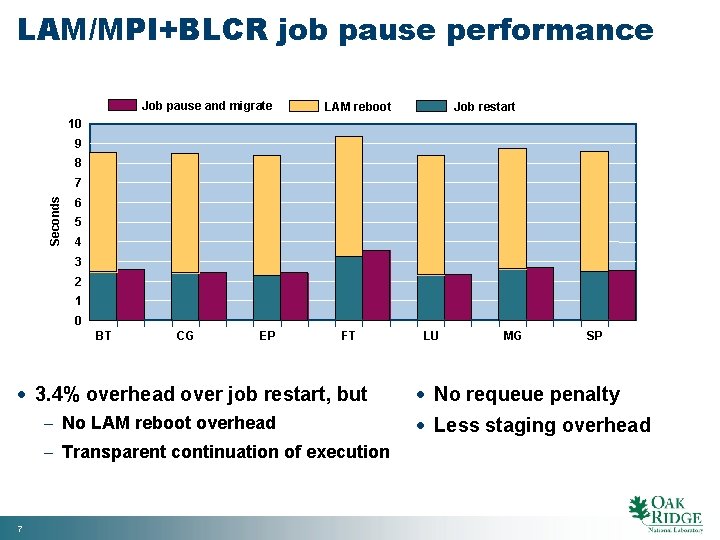

LAM/MPI+BLCR job pause performance

Figure: bar chart of time in seconds (0 to 10) for the benchmarks BT, CG, EP, FT, LU, MG, and SP. Legend: job pause and migrate, LAM reboot, job restart.

- 3.4% overhead over job restart, but
  - No LAM reboot overhead
  - Transparent continuation of execution
  - No requeue penalty
  - Less staging overhead

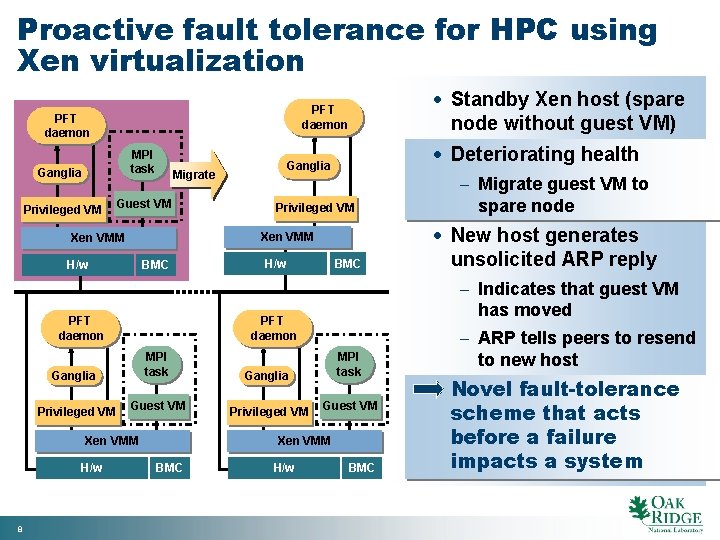

Proactive fault tolerance for HPC using Xen virtualization

- Standby Xen host (spare node without guest VM)
- Deteriorating health
  - Migrate guest VM to spare node
- New host generates unsolicited ARP reply
  - Indicates that guest VM has moved
  - ARP tells peers to resend to new host
- Novel fault-tolerance scheme that acts before a failure impacts a system

Figure labels (per node): PFT daemon, Ganglia, MPI task, privileged VM, guest VM, Xen VMM, H/w, BMC.
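The decision step of such a scheme (notice deteriorating health, then move the guest VM to a standby host before the node fails) can be sketched as a small planning function. Everything here is hypothetical: the slides do not say which health metric or policy the PFT daemon uses, so the single numeric reading per host, the fixed threshold, and the name `plan_migrations` are assumptions. The function only produces a plan; it does not invoke Xen.

```python
def plan_migrations(health, standby_hosts, threshold):
    """Pair unhealthy hosts with standby hosts, worst reading first.

    health: dict mapping host name -> sensor reading (higher is worse;
            for example a temperature, which is an assumption).
    standby_hosts: hosts without a guest VM, in order of preference.
    threshold: reading at or above which a host counts as deteriorating.
    Returns a list of (source_host, target_host) pairs.
    """
    free = list(standby_hosts)
    plan = []
    # Handle the worst readings first so the scarcest resource
    # (standby hosts) goes to the nodes most likely to fail.
    for host, reading in sorted(health.items(),
                                key=lambda kv: kv[1], reverse=True):
        if reading < threshold or not free:
            break
        plan.append((host, free.pop(0)))
    return plan


if __name__ == "__main__":
    readings = {"node-a": 60, "node-b": 85, "node-c": 90}
    print(plan_migrations(readings, ["standby-1"], threshold=80))
```

With a single standby host only the worst node is migrated; a host left without a target would fall back to whatever reactive mechanism is in place.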

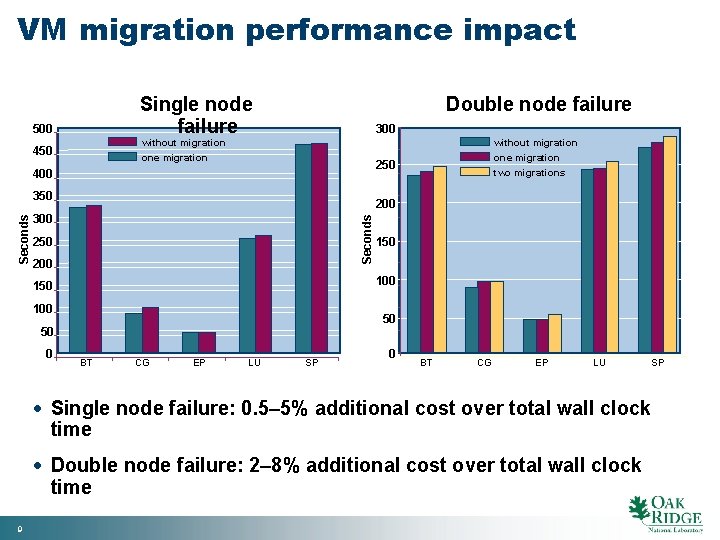

VM migration performance impact

Figure: two bar charts of wall clock time in seconds for the benchmarks BT, CG, EP, LU, and SP. Single node failure panel: without migration, one migration. Double node failure panel: without migration, one migration, two migrations.

- Single node failure: 0.5–5% additional cost over total wall clock time
- Double node failure: 2–8% additional cost over total wall clock time

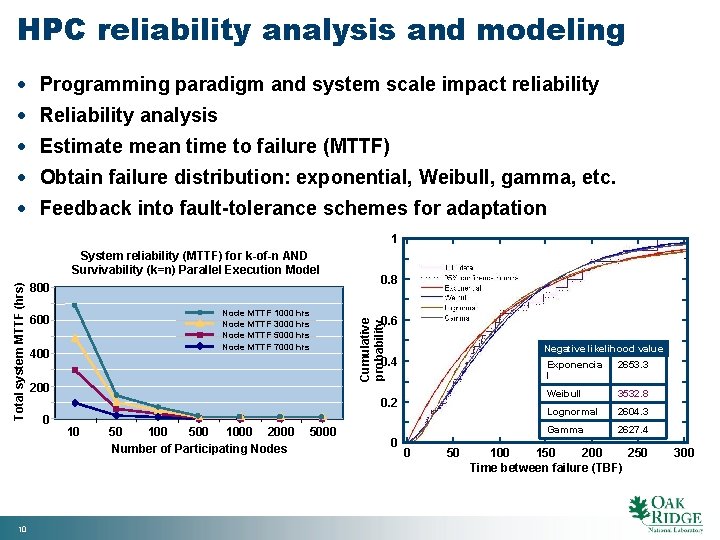

HPC reliability analysis and modeling

- Programming paradigm and system scale impact reliability
- Reliability analysis
  - Estimate mean time to failure (MTTF)
  - Obtain failure distribution: exponential, Weibull, gamma, etc.
  - Feedback into fault-tolerance schemes for adaptation

Figure: system reliability (MTTF) for k-of-n AND survivability (k=n) parallel execution model. Total system MTTF (hrs) plotted against number of participating nodes (10 to 5000) for node MTTF of 1000, 3000, 5000, and 7000 hrs.

Figure: cumulative probability plotted against time between failure (TBF), with fitted distributions:

| Distribution | Negative likelihood value |
|---|---|
| Exponential | 2653.3 |
| Weibull | 3532.8 |
| Lognormal | 2604.3 |
| Gamma | 2627.4 |
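The effect of system scale on MTTF in the k=n case (every participating node must survive) can be worked through with a standard textbook model. The sketch assumes independent nodes with exponentially distributed times to failure, in which case the first failure among n nodes arrives n times sooner on average than on a single node. That model and the function name are assumptions for illustration; the slides do not state which model produced their plot.

```python
def system_mttf_all_nodes_required(node_mttf_hours: float, nodes: int) -> float:
    """System MTTF when the job fails as soon as any one node fails (k = n).

    Assumes independent, exponentially distributed node lifetimes, so the
    time to the first failure among n nodes has mean node_mttf / n.
    """
    if nodes < 1:
        raise ValueError("at least one node is required")
    return node_mttf_hours / nodes


if __name__ == "__main__":
    node_counts = (10, 50, 100, 500, 1000, 2000, 5000)  # x-axis values from the slide
    for node_mttf in (1000, 3000, 5000, 7000):          # series from the slide
        row = ", ".join(
            f"{n}: {system_mttf_all_nodes_required(node_mttf, n):.1f}"
            for n in node_counts
        )
        print(f"node MTTF {node_mttf} h -> system MTTF (h) by node count: {row}")
```

Under this model a job spanning thousands of nodes sees a system MTTF of only a few hours even when individual nodes last thousands of hours, which is why the failure distribution is fed back into the fault-tolerance schemes.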

Contacts regarding RAS research

- Stephen L. Scott, Computer Science Research Group, Computer Science and Mathematics Division, (865) 574-3144, scottsl@ornl
- Christian Engelmann, Computer Science Research Group, Computer Science and Mathematics Division, (865) 574-3132, engelmannc@ornl

Scott_RAS_SC07

- Slides: 11